1. Introduction

High-rate dynamic systems are engineering systems subjected to high-amplitude dynamic events, often higher than 100 g (g-force), and over very short durations, typically under 100 ms. Enabling closed-loop feedback capabilities for high-rate systems, such as hypersonic vehicles, advanced weaponry, and airbag deployment systems, is driven by the need to ensure continuous operations and safety. Such capabilities require high-rate system identification and state estimation, defined as high-rate structural health monitoring (HRSHM), through algorithms capable of sub-millisecond decisions using sensor measurements [1]. However, the development of HRSHM algorithms is a difficult task, because the dynamics of high-rate systems is uniquely characterized by (1) large uncertainties in the external loads, (2) high levels of non-stationarity and heavy disturbances, and (3) unmodeled dynamics generated from changes in the system configurations [2].

There have been recent research efforts in constructing algorithms for HRSHM by integrating some levels of physical knowledge about the system of interest, in particular on an experimental setup constructed to reproduce high-rate dynamics that consists, among other features, of a cantilever beam equipped with a moving cart acting as a sliding boundary condition [3]. Using experimental data acquired from an accelerometer located under the beam, Joyce et al. [3] presented a sliding mode observer to track the position of the moving cart through the online identification of the fundamental frequency. Downey et al. [4] proposed to track the cart location using a real-time model-matching approach of the fundamental frequency extracted using a Fourier transform, and experimentally demonstrated the promise of the algorithm on the test setup. Yan et al. [5] developed a model reference adaptive system algorithm consisting of a sliding mode observer used in updating a reduced-order physical representation of the dynamic system, and numerically showed the sub-millisecond capabilities in tracking the cart location.

While these algorithms showed great promise, the dominating dynamics of the experimental setup was relatively simple, where the position of the cart could be mapped linearly to the beam’s first fundamental frequency. However, high-rate systems in field applications are rarely that simple, and experimental data is difficult and expensive to acquire. It follows that one must assume low levels of physical knowledge in designing HRSHM algorithms. A solution is to leverage data-based techniques, which may also be beneficial in increasing the computational efficiency of the algorithms [6]. To cope with the challenges of limited training data, high non-stationarities, and high uncertainties, a desirable HRSHM algorithm is one capable of adaptive behavior, ideally in real time [2].

Among data-driven algorithms, neural network-based models have been successfully applied to model complex nonlinear dynamic systems across many fields [7,8], including electricity demand prediction [9], biology [10], autonomous vehicles [11,12], and structural health monitoring [13,14,15]. Among these models, recurrent neural networks (RNNs) are of interest to the HRSHM problem due to their temporal dynamic characteristics, where they are capable of recognizing sequential patterns by selectively processing information [16]. Long short-term memory (LSTM) networks are a specialized type of RNN capable of capturing long-term temporal dependencies [17], and have thus been successfully applied to modeling time series measurements [18,19,20,21], including modeling multivariate time series [22,23] and reconstructing attractors [24]. Some work on LSTM focused on the problem of prediction for non-stationary systems. For instance, Guen and Thome [19] introduced a new loss function based on both temporal errors and distortion of future predicted trajectories to enforce learning in a highly non-stationary environment. Cui et al. [20] used multi-layer bidirectional LSTMs to better capture spatial features of traffic data for large scale traffic network prediction. Hua et al. [25] introduced random connections in the LSTM architecture to better cope with non-stationarity in the problem of traffic and mobility prediction. Yeo and Melnyk [26] introduced a probabilistic framework for predicting noisy time series with RNNs.

The architectures proposed in the existing methods necessitate the use of large-sized networks, which require relatively long computing times. This limits the application of these methods to HRSHM. It is also noted that leveraging data-based algorithms for HRSHM requires a certain level of on-the-edge learning due to the complex dynamics under consideration and the limited availability of training data. The adaptive algorithm must be capable of rapid convergence to ensure adequate performance while guaranteeing fast computing to empower real-time applicability. The introductory paper on HRSHM [2] discussed the important conflict between convergence speed and computing time, where convergence speed generally increases with the algorithm’s complexity while computing speed decreases.

A viable approach to improving both convergence speed and computing time is to incorporate physical knowledge into data-based architectures, a process also known as physics-informed machine learning [27]. Such knowledge integration can be done, for example, in the form of accompanying logic rules [28], algebraic equations [29], and mechanistic models [30,31]. By incorporating physical knowledge, the algorithm can be designed to converge more efficiently, therefore preserving a leaner or less complex architecture, and thus favoring faster computing. Of interest, the authors have proposed in [32] a purely on-the-edge learning wavelet neural network that exhibited good convergence properties by varying its input space as a function of the extracted local dynamic characteristics of the time series. This information on the time series data structure was based on Takens’ embedding theorem [33], which constituted the physical information fed to the wavelet network. The objective was to demonstrate that a machine learning algorithm could learn a non-stationary representation without pre-training. While successful, the architecture of the algorithm itself would not converge because of the constantly changing input space, and the computing time required to extract the local dynamic characteristics was too long for HRSHM applications.

The objective of this paper is to investigate the performance of a physics-informed deep learning method in predicting sensor measurements enabling HRSHM, inspired by the authors’ prior work in [32]. Instead of a time-varying input space, the algorithm uses an ensemble of RNNs, each using a different delay vector to represent distinct local data structures in the dynamics. The novelty lies in the incorporation of physics in the algorithm, which stems from the pre-analysis of available training data to extract the appropriate delay vector characteristics for each RNN after the identification of the time series data structure through principal component analysis (PCA). This allows the network to extract local features in the time series in order to provide improved multi-step ahead prediction performance capabilities. The physics-informed deep learning method shows improved performance over a conventional grid search method. Short-sequence LSTMs are used to improve computing speed, and transfer learning [34] is used to adapt the representation to the target domain.

The rest of the paper is organized as follows. Section 2 provides the algorithm used for prediction. Section 3 describes the proposed input space construction method. Section 4 presents the validation method including the experimental test used to collect high-rate data and performance metrics. Section 5 and Section 6 present the validation results and a discussion about their implications, respectively. Section 7 concludes the paper.

2. Deep Learning Architecture

This section presents the background on the algorithm used to conduct step ahead predictions of non-stationary time series measurements

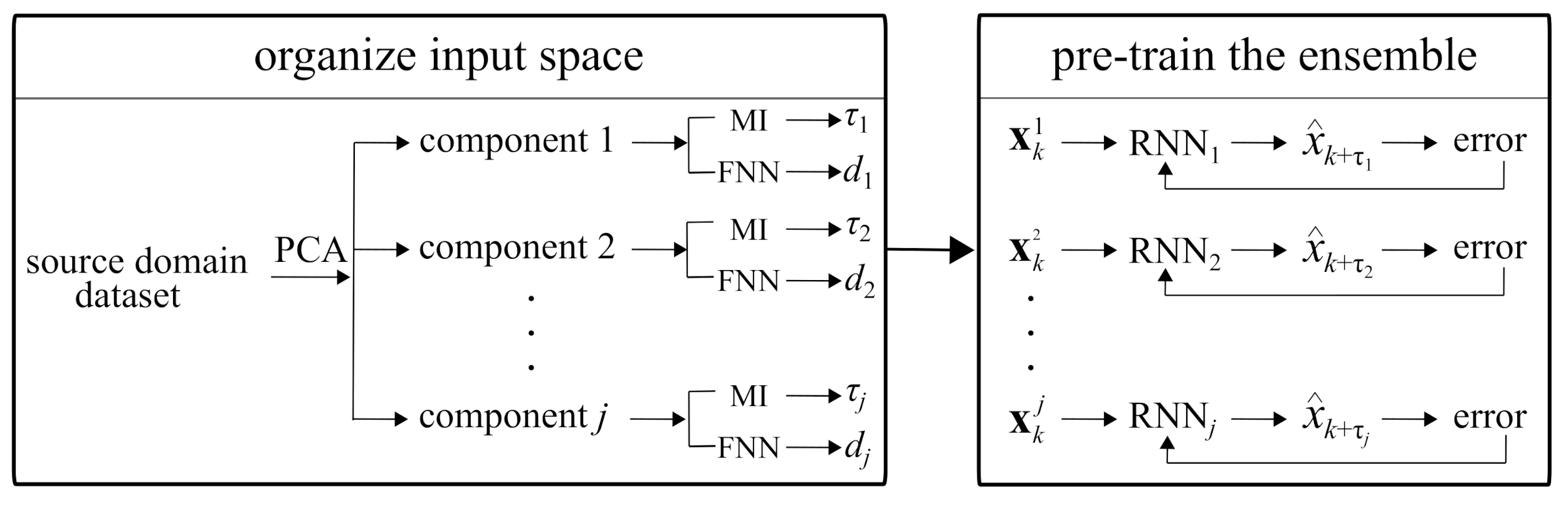

x while sensor measurements are being acquired. The algorithm, shown in

Figure 1, consists of (1) a multi-rate sampler, shown in

Figure 1 (left), and (2) an ensemble of

j LSTM cells arranged in parallel, joined through an attention layer, to conduct the prediction

, shown in

Figure 1 (right) as making a one-step ahead prediction at step

k,

. Short-sequence LSTMs are used to accelerate computing. A particularity of the algorithm is that the multi-rate sampler is used on part of the acquired sensor measurements

to represent unique dynamic characteristics. More precisely, it samples a different sequence for each LSTM

i (

) using a different time delay

embedded in a vector of dimension

with the delay vector

written

where

is a positive integer. Note that in Equation (1),

is organized such that each individual one-step ahead prediction

is temporally consistent. The choice for

and

d in the multi-rate sampler is based on physics and constitutes the novelty of the proposed algorithm. It will be described in the next section.
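The multi-rate sampling step can be sketched in Python as follows. This is a minimal illustration, not the authors' implementation: the function names `delay_vector` and `multi_rate_sample`, the variable names `tau` (time delay) and `d` (dimension), and the convention that every delay vector ends at the most recent sample k (so that all cells predict the same next step) are assumptions, and the exact indexing of Equation (1) may differ.

```python
from typing import List, Sequence


def delay_vector(x: Sequence[float], k: int, tau: int, d: int) -> List[float]:
    """Return [x[k-(d-1)*tau], ..., x[k-tau], x[k]], oldest sample first."""
    if tau < 1 or d < 1:
        raise ValueError("tau and d must be positive integers")
    start = k - (d - 1) * tau
    if start < 0 or k >= len(x):
        raise IndexError("not enough samples for this (tau, d) at step k")
    return [x[start + m * tau] for m in range(d)]


def multi_rate_sample(
    x: Sequence[float], k: int, taus: Sequence[int], d: int
) -> List[List[float]]:
    """Build one delay vector per LSTM cell; all of them end at sample k."""
    return [delay_vector(x, k, tau, d) for tau in taus]


signal = list(range(20))  # stand-in for acquired sensor measurements
inputs = multi_rate_sample(signal, k=12, taus=[1, 2, 4], d=4)
```

Each entry of `inputs` covers a different time span of the same signal, which is how a larger delay exposes slower local dynamics to its cell while a smaller delay exposes faster ones.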

The use of input spaces of different time resolutions allows capturing different local dynamics that can be fed into different LSTM cells to extract multi-resolution dynamics features. The role of the attention layer and linear neuron is to combine these extracted features to model the dynamics of the system. Each individual LSTM cell uses the delay vector to recursively update its hidden state. The hidden state at time step k represents a feature vector used in conducting the prediction, and is computed from the previous hidden state and the current delay vector through r, the updating function of an LSTM cell, with the initial hidden state commonly initialized at zero [16]. The recursive update process of an RNN with LSTM cells is illustrated in Figure 2a, and the internal architecture of a single LSTM cell in Figure 2b. LSTMs are defined by Equations (3)–(8) [17], which involve the hyperbolic tangent and logistic sigmoid functions, the input and output weights, the bias vector b associated with the gate in subscript, and an element-wise multiplication ⊙. Both weights and biases are shared through all time steps. These LSTM cells use internal gating functions (Equations (3)–(8)) to augment memory capabilities. Three gates, consisting of the input gate, forget gate, and output gate, modulate the flow of information inside the cell by assigning a value in the range of 0 to 1 to write the input to the internal memory (input-gate term in Equation (7)), reset the memory (forget-gate term in Equation (7)), or read from it (Equation (8)). Gate values close to zero are less relevant for prediction purposes than those with values close to one.
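For readers who want to see the gating mechanism in executable form, the following NumPy sketch implements one recursive update of a standard LSTM cell. It assumes the common textbook formulation; the parameter names `W`, `U`, `b`, the gate keys, and the toy dimensions are illustrative choices and are not taken from the paper.

```python
import numpy as np


def sigmoid(z):
    """Logistic sigmoid, mapping any real value into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))


def lstm_step(x_k, h_prev, c_prev, W, U, b):
    """One recursive update of a standard LSTM cell.

    W, U and b are dicts keyed by 'i', 'f', 'o', 'g' (input, forget and
    output gates, and candidate memory). The same parameters are reused
    at every time step.
    """
    i = sigmoid(W["i"] @ x_k + U["i"] @ h_prev + b["i"])  # input gate
    f = sigmoid(W["f"] @ x_k + U["f"] @ h_prev + b["f"])  # forget gate
    o = sigmoid(W["o"] @ x_k + U["o"] @ h_prev + b["o"])  # output gate
    g = np.tanh(W["g"] @ x_k + U["g"] @ h_prev + b["g"])  # candidate memory
    c = f * c_prev + i * g   # reset part of the old memory, write the new input
    h = o * np.tanh(c)       # read from the memory to form the hidden state
    return h, c


rng = np.random.default_rng(0)
n_in, n_hid = 1, 3
W = {key: rng.normal(size=(n_hid, n_in)) for key in "ifog"}
U = {key: rng.normal(size=(n_hid, n_hid)) for key in "ifog"}
b = {key: np.zeros(n_hid) for key in "ifog"}

h, c = np.zeros(n_hid), np.zeros(n_hid)   # hidden state initialized at zero
for sample in [0.2, -0.1, 0.4, 0.3]:      # one short delay vector, fed in order
    h, c = lstm_step(np.array([sample]), h, c, W, U, b)
```

After the loop, `h` plays the role of the feature vector extracted by a single cell from its short input sequence.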

The one-step ahead prediction is based on a linear combination of the features extracted by the LSTM cells in the ensemble. The combination of features is conducted in two steps. First, an attention layer determines dominant features by assigning attention weights to the LSTM outputs. Second, a linear neuron combines the scaled features to produce the one-step ahead prediction. This architecture can also be used to predict multiple steps ahead. The idea is to iteratively execute the one-step ahead prediction q times to predict a q-step trajectory. For the ith LSTM cell in the ensemble, the moving window of the multi-rate sampler continues to provide inputs from the measured time series up to the most recent measurement. For prediction steps beyond the first, the predicted measurements are appended to the actual measurements to construct the input space.
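The iterative multi-step procedure described above can be illustrated with a short Python sketch. The function `predict_q_steps` and the placeholder predictor `linear_extrapolation` are hypothetical names introduced for illustration; the placeholder merely stands in for the LSTM ensemble, attention layer, and linear neuron, whose details are not reproduced here.

```python
from typing import Callable, List, Sequence


def predict_q_steps(
    measured: Sequence[float],
    one_step: Callable[[Sequence[float]], float],
    q: int,
) -> List[float]:
    """Apply a one-step-ahead predictor q times, feeding predictions back."""
    history = list(measured)        # actual measurements up to the current step
    trajectory = []
    for _ in range(q):
        x_next = one_step(history)  # uses measurements plus earlier predictions
        trajectory.append(x_next)
        history.append(x_next)      # prediction becomes part of the next input
    return trajectory


def linear_extrapolation(history: Sequence[float]) -> float:
    """Placeholder predictor standing in for the trained ensemble."""
    return 2.0 * history[-1] - history[-2]


trajectory = predict_q_steps([0.0, 1.0, 2.0], linear_extrapolation, q=3)
```

Because each predicted value is fed back as an input, errors can compound with the horizon, which is one reason the quality of the extracted features matters more for long horizons than for one-step predictions.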

4. Validation Methodology

The proposed deep learning algorithm is validated on experimental data obtained from a series of drop tower tests. In what follows, the experimental test setup is described, and the performance metrics defined.

4.1. Experimental Setup

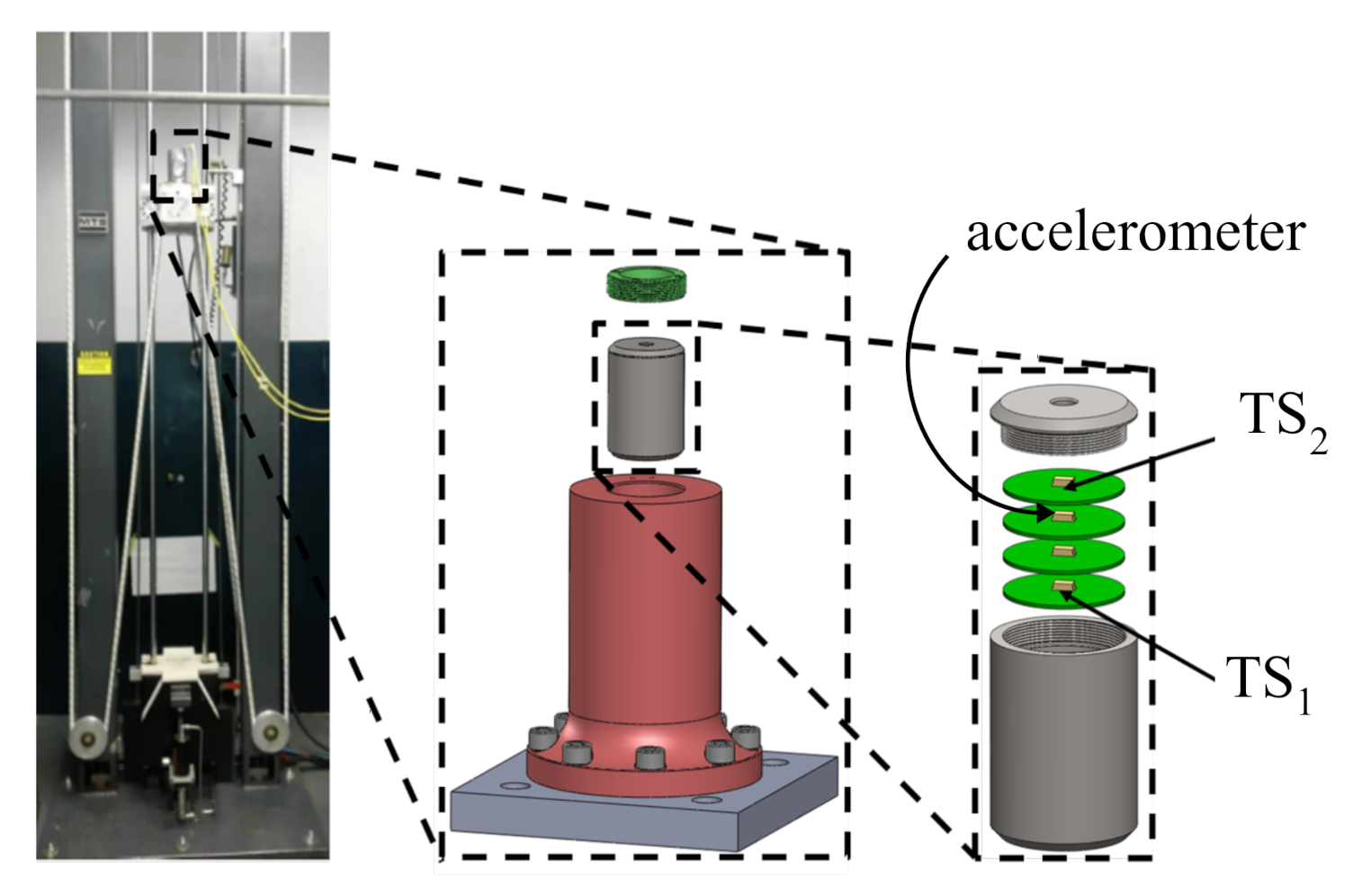

The proposed HRSHM algorithm is validated on high-rate dynamic datasets obtained experimentally from accelerated drop tower tests. The experimental configuration is illustrated in Figure 4 and described in detail in [32]. Briefly, four circuit boards, each equipped with an accelerometer capable of measuring up to 120,000 g (or 120 kilo-g), were placed inside a canister with the electronics secured using a potting material. The canister was dropped five consecutive times and deceleration responses of the circuit boards were recorded with a sampling rate of 1 MHz (i.e., a sampling period of 1 μs).

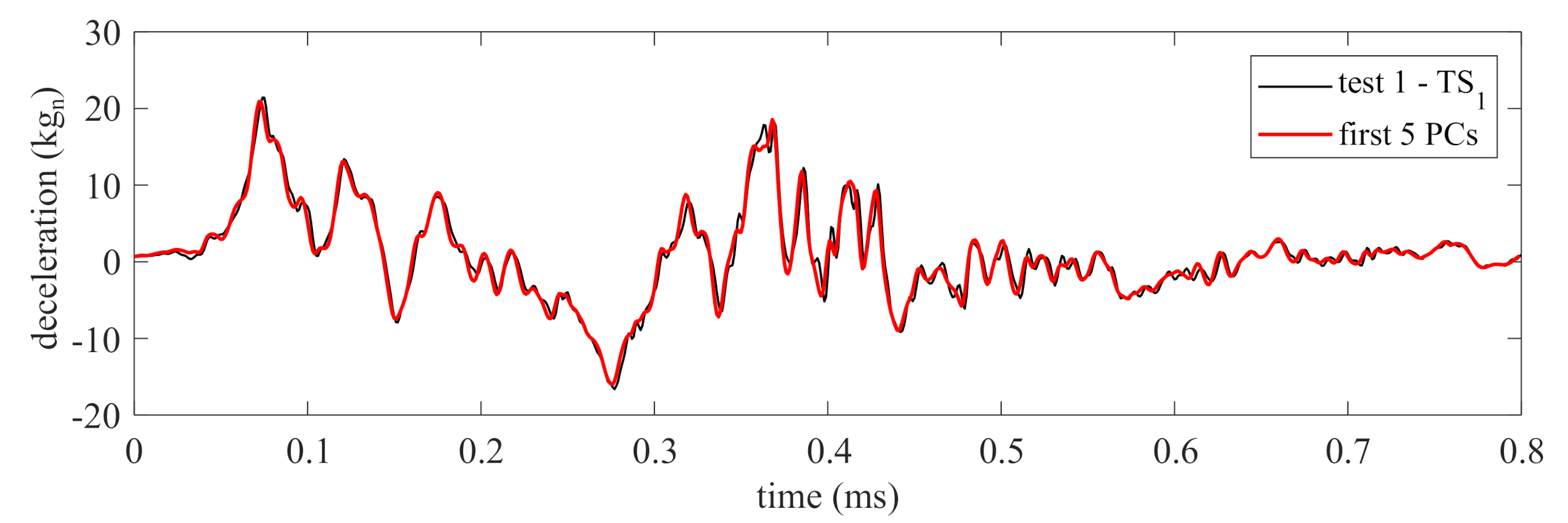

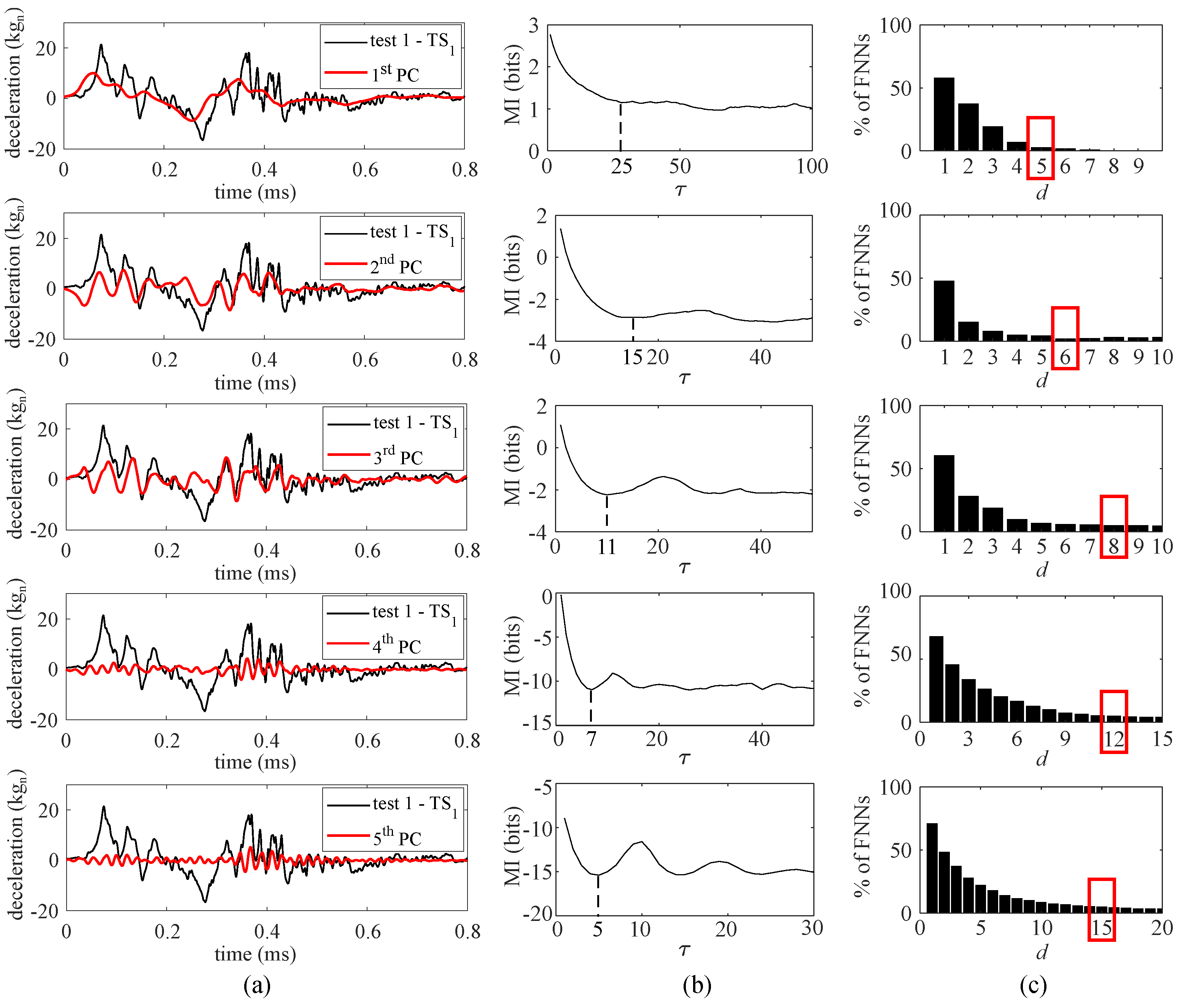

Figure 5a,b plot the recorded time series TS1 and TS2 obtained from accelerometers 1 and 2, respectively, through five consecutive tests. From these time series plots, one can observe the following high-rate dynamic characteristics: (1) the magnitude of deceleration is high, in the kilo-g range; (2) the excitation occurs during a very short time frame, under 1 ms; (3) the dynamics exhibits high levels of non-stationarities; and (4) the dynamic response is altered after each test, likely attributable to the whipping of cables and/or damage in the potting material housing the electronics.

In this work, the acceleration time series TS1 and TS2 are used for the algorithm validation. In particular, it is assumed that only test 1 from TS1 is available for training (source domain), and is therefore used to construct the input space. The target domain consists of tests 1–5 from TS2, used to conduct real-time prediction while sensor data is being acquired.

4.2. Performance Metrics

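Four performance metrics are defined to evaluate the proposed algorithm. The first two metrics are the mean absolute error (MAE) and root mean square error (RMSE) of the prediction, defined as

$$\mathrm{MAE} = \frac{1}{n}\sum_{k=1}^{n} \left| x_k - \hat{x}_k \right|, \qquad \mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{k=1}^{n} \left( x_k - \hat{x}_k \right)^2}$$

where $x_k$ and $\hat{x}_k$ are the measured and predicted values at step k, and n is the number of samples.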
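As a quick numerical illustration, the two error metrics can be computed with a few lines of Python. The function names and the toy data below are illustrative only.

```python
import math
from typing import Sequence


def mae(measured: Sequence[float], predicted: Sequence[float]) -> float:
    """Mean absolute error over n paired samples."""
    n = len(measured)
    return sum(abs(x - x_hat) for x, x_hat in zip(measured, predicted)) / n


def rmse(measured: Sequence[float], predicted: Sequence[float]) -> float:
    """Root mean square error over n paired samples."""
    n = len(measured)
    return math.sqrt(
        sum((x - x_hat) ** 2 for x, x_hat in zip(measured, predicted)) / n
    )


measured = [0.0, 1.0, 2.0, 3.0]
predicted = [0.0, 1.0, 2.0, 5.0]   # a single error of 2 on the last sample
mae_value = mae(measured, predicted)
rmse_value = rmse(measured, predicted)
```

In this toy case the MAE is 0.5 while the RMSE is 1.0, showing that the RMSE penalizes a single large error more heavily than the MAE does.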

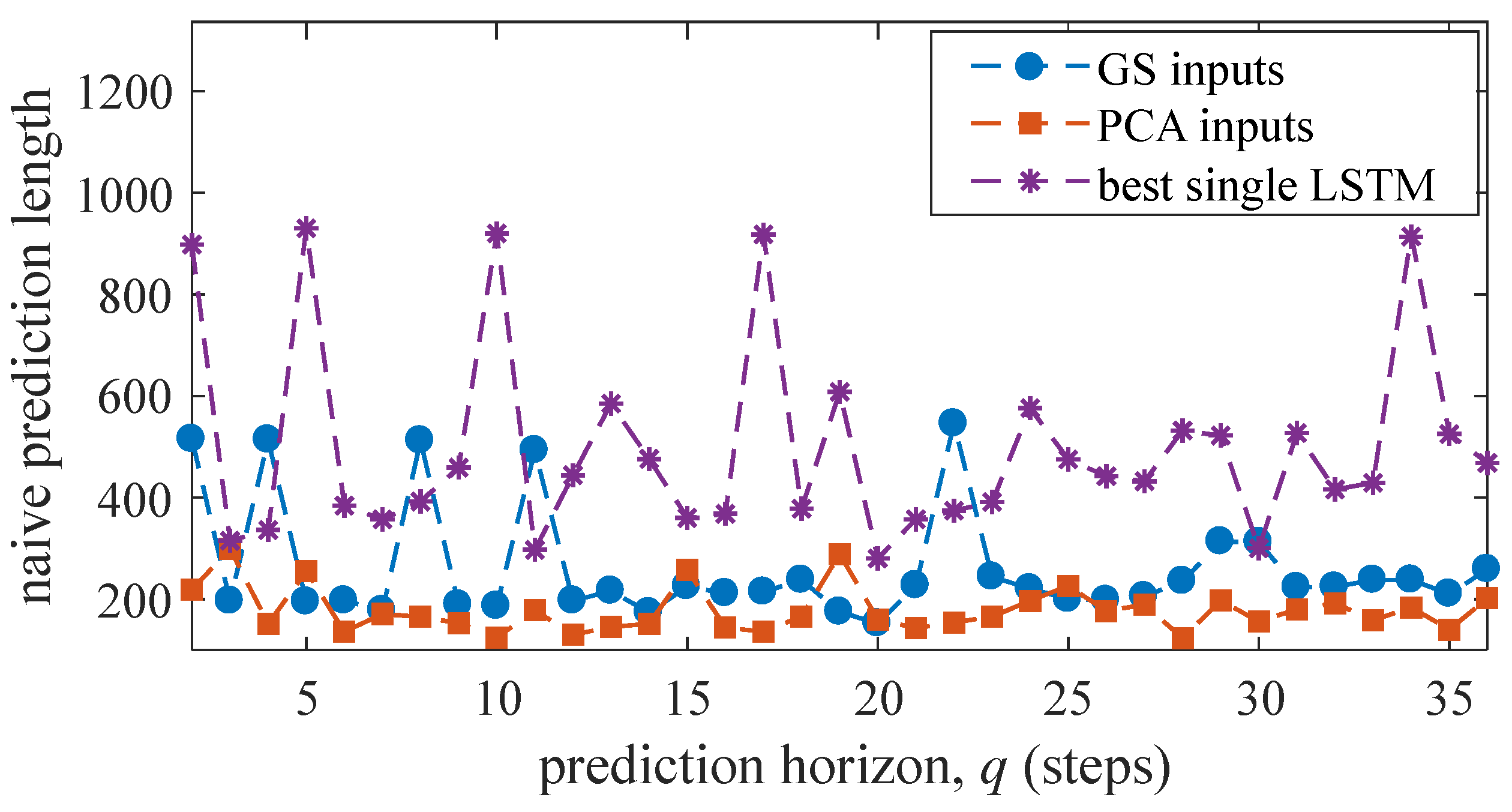

The third metric is the naive prediction length, where a naive prediction is defined as the predicted value of a future step being equal to that of the immediate previous step. This behavior manifests itself as a flat horizontal line in a time series plot. To detect a naive prediction, a moving window of length q equal to that of the prediction horizon is moved along both the real and predicted time series, and the standard deviations are computed. If the standard deviation of the prediction is less than a threshold, arbitrarily set to 50% of the standard deviation of the original time series, the prediction values within the window are reported as naive. The total length of naive prediction windows, in terms of number of prediction steps, over the length of the time series forms the naive metric.
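A possible implementation of this naive-prediction detector is sketched below. Several details are assumptions made for illustration: the window is advanced one step at a time, the population standard deviation is used, and a step that falls inside several naive windows is counted only once. The function name `naive_length` is hypothetical.

```python
from statistics import pstdev
from typing import Sequence


def naive_length(
    measured: Sequence[float],
    predicted: Sequence[float],
    q: int,
    ratio: float = 0.5,
) -> int:
    """Count prediction steps that fall inside at least one naive window."""
    n = min(len(measured), len(predicted))
    flagged = [False] * n
    for start in range(n - q + 1):
        real_std = pstdev(measured[start:start + q])
        pred_std = pstdev(predicted[start:start + q])
        if pred_std < ratio * real_std:      # prediction is too flat here
            for idx in range(start, start + q):
                flagged[idx] = True
    return sum(flagged)


measured = [0.0, 1.0, 0.0, 1.0, 0.0, 1.0, 0.0, 1.0]
predicted = [0.0, 1.0, 0.0, 1.0, 0.5, 0.5, 0.5, 0.5]  # goes flat halfway
naive_steps = naive_length(measured, predicted, q=4)
```

Dividing the returned count by the length of the time series would give a normalized version of the metric.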

The fourth metric is based on dynamic time warping (DTW) [42]. DTW searches for local similarities between two sequences (i.e., between the real and predicted time series) by compressing or stretching them. The DTW metric for two sequences is obtained by sliding a window of length l over both sequences to construct a global distance matrix, where each local element is the distance between the ith point in the first sequence and the jth point in the second sequence within the kth non-overlapping window. The global distance matrix is created by appending the local matrices diagonally. A warping path is obtained by starting at the first element of the distance matrix, and moving to the right, down, or diagonally by following the minimum elements. The DTW value is taken as the sum of the elements on the warping path, where a smaller DTW value indicates greater similarity between the two sequences. The metric is applied here to measure the similarity between the features extracted by the LSTMs and the sensor measurements.
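The windowed DTW computation can be sketched as follows. Note the assumptions: this sketch uses the classic dynamic-programming (cumulative cost) formulation of DTW inside each non-overlapping window and sums the window costs, which may differ in detail from the path-following procedure described above, and the point-to-point distance is taken as the absolute difference. The function names are hypothetical.

```python
from typing import Sequence


def dtw_window(a: Sequence[float], b: Sequence[float]) -> float:
    """Classic DTW cost between two short sequences (absolute difference)."""
    inf = float("inf")
    n, m = len(a), len(b)
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            dist = abs(a[i - 1] - b[j - 1])
            # Extend the cheapest of the three admissible predecessor cells.
            cost[i][j] = dist + min(
                cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1]
            )
    return cost[n][m]


def windowed_dtw(a: Sequence[float], b: Sequence[float], l: int) -> float:
    """Sum DTW costs over non-overlapping windows of length l."""
    n = min(len(a), len(b))
    return sum(dtw_window(a[s:s + l], b[s:s + l]) for s in range(0, n, l))


a = [0.0, 1.0, 2.0, 3.0, 2.0, 1.0]
b = [0.0, 0.0, 1.0, 2.0, 3.0, 2.0]   # same shape as a, delayed by one sample
dtw_value = windowed_dtw(a, b, l=3)
```

Restricting the warping to windows keeps the cost of each evaluation bounded by the window length rather than by the full length of the time series.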

For all four performance metrics, smaller values indicate better performance of the algorithm and are thus desirable. In the numerical study that follows, the HRSHM algorithm that incorporates physical knowledge (“PCA inputs”) is compared against the so-called grid-search (“GS inputs”) method, where the time delays are selected based on a visual inspection of the topology of the phase-space of the source domain and d is optimized to obtain the best performance for one-step ahead predictions. Note that there are other methods available for selecting the hyper-parameters of a neural network, including Bayesian [43,44] and evolutionary optimization [45,46] techniques. Nevertheless, given the small number of hyper-parameters inherent to our algorithm, the “GS inputs” method is deemed appropriate for comparison. Results are also benchmarked against the one-step ahead prediction errors (MAE and RMSE) by the purely on-the-edge learning algorithm presented in [32] that was numerically simulated on the same dataset.

Here, the “GS inputs” method is expected to perform better in short-term predictions given the pre-optimization of the input space based on the one-step ahead prediction performance, while the “PCA inputs” method is expected to perform better in longer-term predictions given its capability to extract physics-informed features. Numerical simulations were performed in Python 3 using self-developed code. Although the LSTM cells in the ensemble are designed to run in parallel, parallel computing was not implemented in the code used here.

6. Discussion

The presented deep learning algorithm was constructed for HRSHM applications, with the promise that the incorporation of physics could enhance feature extraction performance, thus yielding better predictions. The physical information stemmed from the PCA that extracted data structure from the training data, followed by the extraction of essential dynamics characteristics (

,

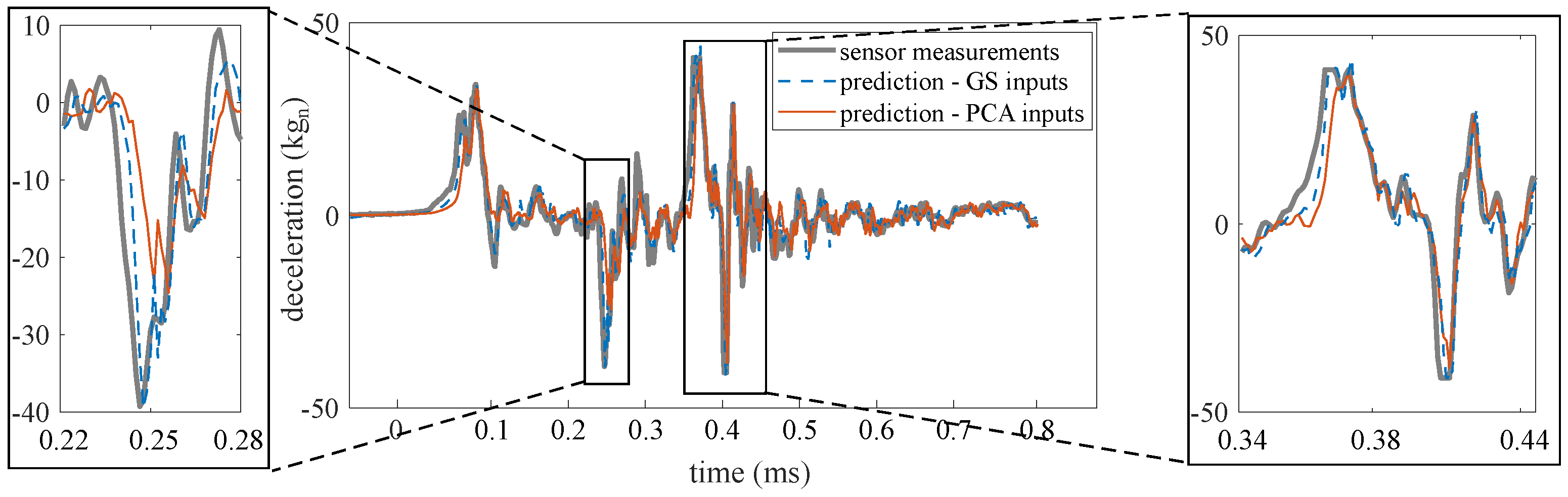

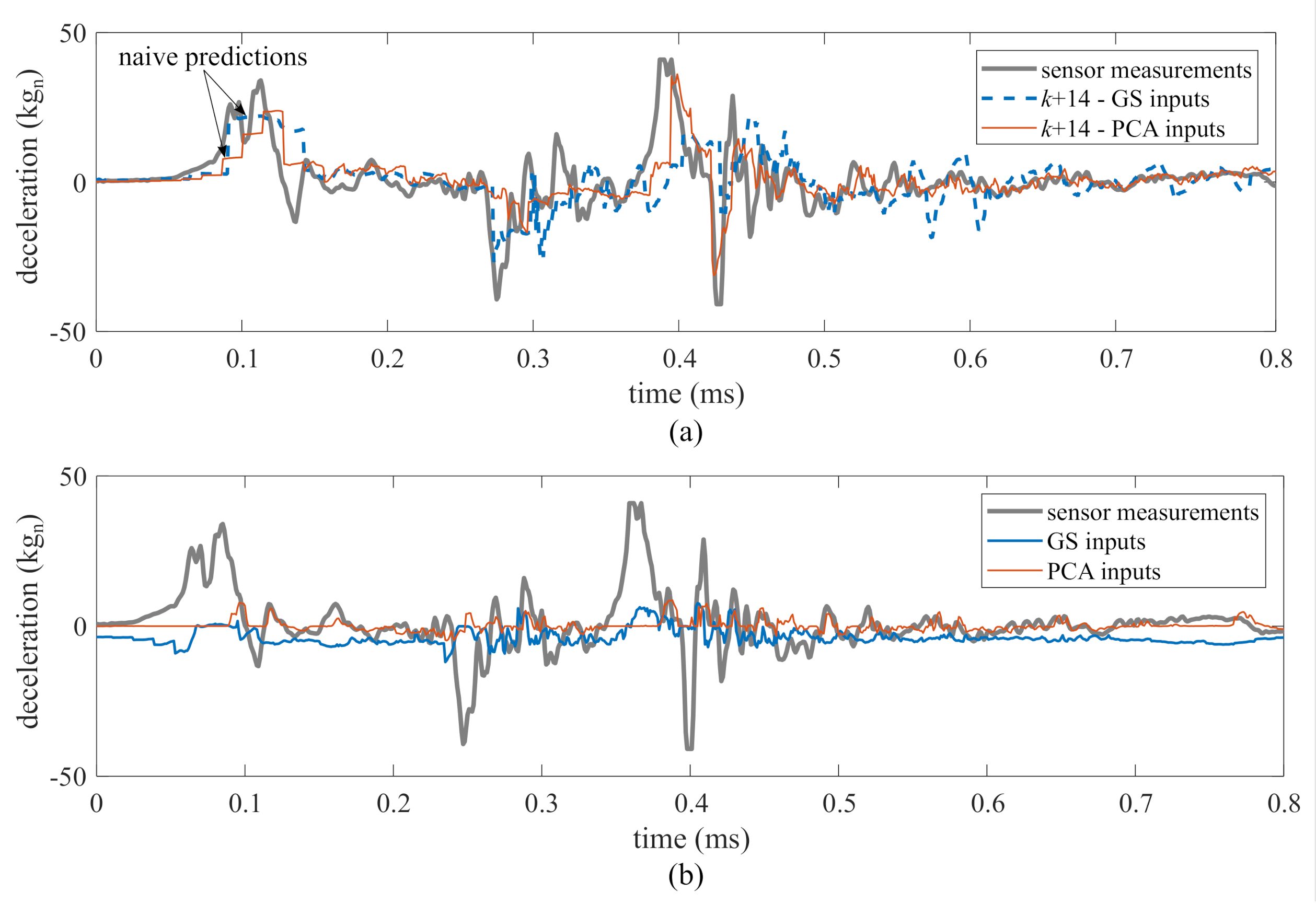

d) through the multi-rate sampler. Results showed that the proposed “PCA inputs” method did extract better features when compared against the “GS inputs” method, because (1) as exhibited in

Figure 9b, the selected hidden state under “PCA inputs” followed changes in the time series significantly more closely; (2) the naive component (

Figure 11) of “PCA inputs” was stable through all of the prediction horizons; (3) the DTW metric (

Figure 12) showed a level of similarity between the sequence extracted by each LSTM and the source domain over all prediction horizon, which was not the case for “GS inputs”; (4) there was a net improvement in step ahead prediction capabilities (

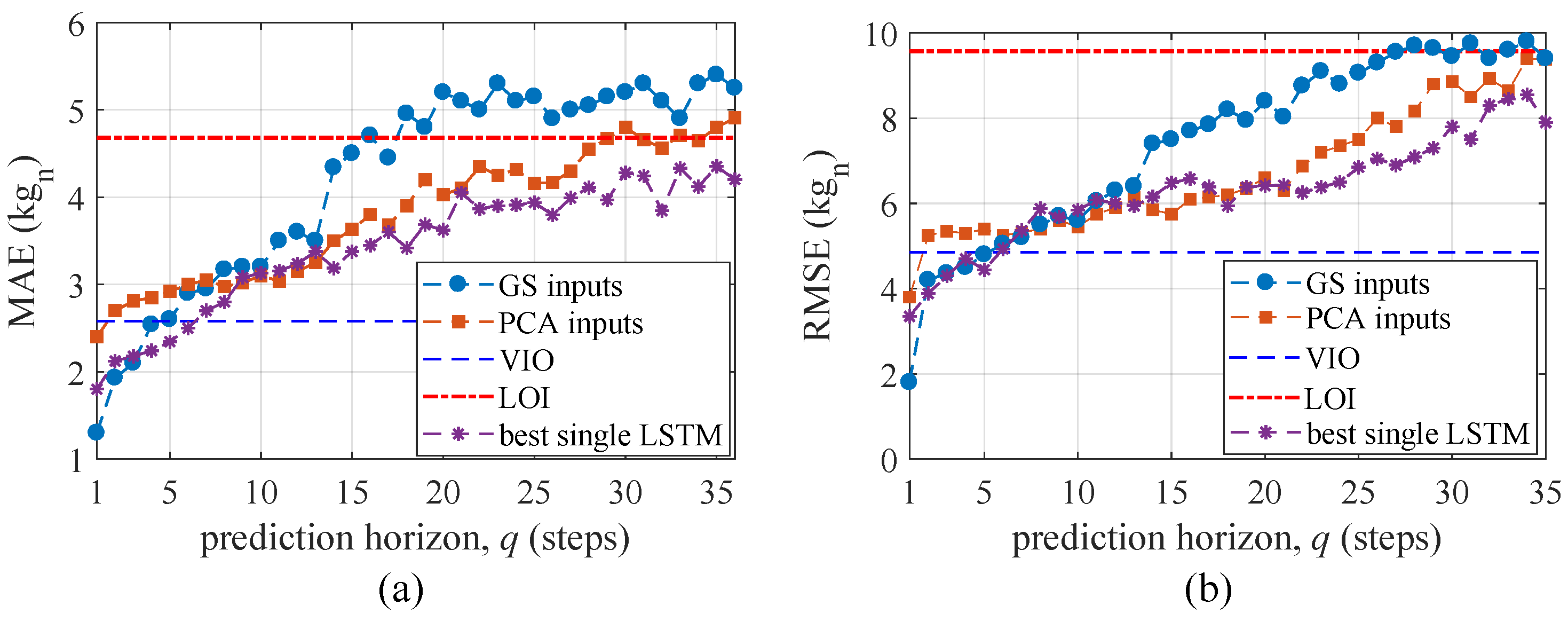

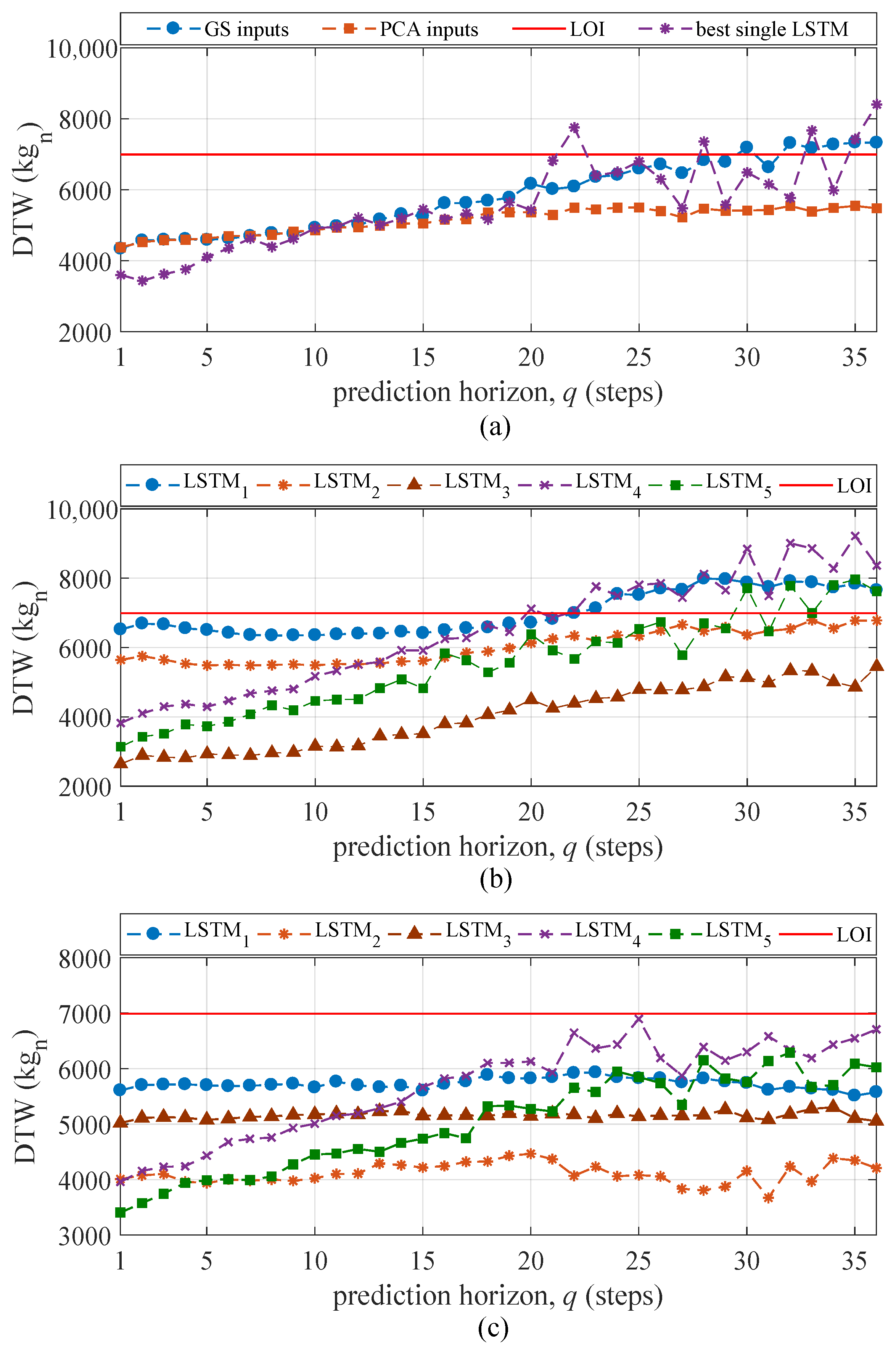

Figure 10), with “PCA inputs” surpassing the LOI for up to 29 steps ahead under the MAE metric and 35 steps ahead under the RMSE metric, compared against “GS input” surpassing the LOI for up to 15 and 27 steps ahead under the MAE and RMSE metrics, respectively; and (5) the use of an ensemble is critical in extracting useful temporal features compared to using a (hypothetical) best single LSTM under each prediction horizon. It follows that, with this particular data set, the incorporation of physics through the multi-sampler that aims at extracting essential dynamics out of different temporal characteristics obtained from PCA was a suitable approach and yielded important improvement in predictive performance.

In terms of HRSHM applicability, it is important for the computing time of the algorithm to remain under the prediction horizon for real-time implementation. The presented algorithm, coded in Python, had an average computing time of 25 μs. This would govern what should be the minimum data sampling interval, which here was 1 μs in the given dataset. It must be remarked, however, that the execution of the feature extractors was not conducted in parallel in the code, and that such parallel implementation combined with the use of more efficient coding techniques is expected to significantly decrease computing time. This is also true for implementations of the algorithm on hardware, such as on a field-programmable gate array (FPGA) or micro-controller. It should also be noted that while LSTM networks were used in this paper to validate the multi-rate sampling method, other time-series modeling algorithms could have been considered. An example is the gated recurrent unit (GRU) network that has exhibited promise in recent literature [47]. The study of different time-series modeling algorithms is left for future work.

It is also important to note that step ahead predictions are difficult to use directly in a decision-making process, and that these predictions would need to be combined with other algorithms to empower the feedback system at the expense of additional computing time. One of the most straightforward applications of step ahead predictions to HRSHM could be the computation of a binary damaged/undamaged state by comparing drifts in prediction errors, or by simply leveraging step ahead predictions in state estimation algorithms to augment the available computing time for real-time applications. More advanced implementations may use the step ahead prediction in parallel with an adaptive physical representation to obtain actionable information based on physics. This could be, for example, the extraction of local stiffness values in order to be capable of localizing and quantifying damage. Along with appropriate prognostic and remaining useful life models, this could constitute a powerful tool in making high-rate real-time decisions.

7. Conclusions

In this work, a deep learning algorithm for real-time prediction of high-rate sensor data was presented. The algorithm consisted of an ensemble of short-sequence long short-term memory (LSTM) cells that are concurrently trained. The main novelty was the use of a multi-rate sampler designed to individually select the input space of each LSTM based on local dynamics extracted using the embedding theorem, therefore providing improved step-ahead prediction accuracy and longer prediction horizons by extracting more representative time series features. This construction of the input space was conducted by first decomposing the signal using principal component analysis (PCA), and second selecting the individual delay vectors that extracted the essential dynamics of each principal component based on the embedding theorem.

The performance of the algorithm was evaluated on experimental high-rate data gathered from accelerated drop tower tests, consisting of time series measurements acquired from two accelerometers over five consecutive tests. The data from one sensor over a single test (TS1, test 1) was used to construct the input space and pre-train feature extractors. The data from the second sensor was used as the target domain to evaluate predictive capabilities. Performance was assessed in terms of mean absolute error (MAE), root mean square error (RMSE), and naivety of the prediction, as well as feature-signal similarities quantified through dynamic time warping (DTW). Performance of the proposed method (“PCA inputs”) was benchmarked against a more heuristic selection of the input space tailored to one-step ahead performance, termed grid search inputs (“GS inputs”).

Results from the numerical simulations showed that, on this particular data set, the “PCA inputs” method outperformed the “GS inputs” method for prediction horizons beyond 6 steps in terms of MAE and RMSE. The “PCA inputs” also showed stability in terms of prediction naivety over the entire 36 steps-ahead prediction horizon. A study of the DTW metric showed superior performance of the “PCA inputs” in terms of extracting features that shared similarities with the sensor measurements. The enhanced predictive performance of the “PCA inputs” method can be attributed to this capability of extracting useful features from the highly non-stationary time series. The average computing time per discrete time step during the online prediction task was 25 μs, below the maximum useful prediction horizon of 29 steps, equivalent to 29 μs, under the MAE metric, thus demonstrating the promise of the algorithm for high-rate structural health monitoring (HRSHM) applications. Moreover, the comparison of the ensemble of LSTMs with a single LSTM showed the stability of the ensemble in terms of naive behavior of the predictions. It should be remarked that the computing speed of the algorithm could be improved by enabling parallel processing of the LSTM cells, and through hardware implementation.

Overall, this study shows the promise of a data-based technique for quickly learning a non-stationary time series and conducting predictions based on limited training datasets. While its performance could differ if applied to different time series dynamics, it was shown that it is possible to conduct adequate predictions of complex dynamics in the sub-millisecond range. It should also be noted that, to fully empower HRSHM, such predictive capability must be mapped to decisions through the extraction of actionable information. Future research is also required to investigate domain adaptation methods for situations involving source and target domains that differ significantly.