The More, the Better? Improving VR Firefighting Training System with Realistic Firefighter Tools as Controllers

Abstract

1. Introduction

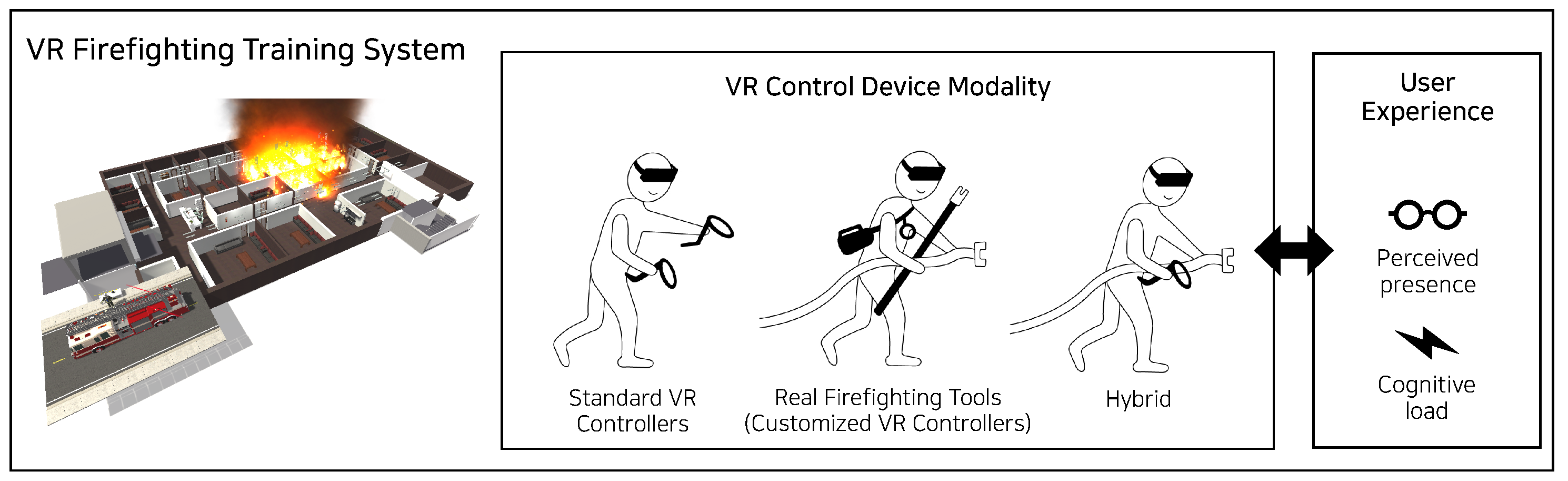

- We developed a VR firefighting training system that integrates real firefighting tools with the standard VR controller to give users an immersive training experience.

- We analyzed the relationship between the VR controller and human factor constructs (i.e., perceived presence and cognitive load) at different levels of tool modality (i.e., standard VR controllers only, real tools only, and hybrid).

- We present a strategic plan for the use of VR controllers to help enhance the user experience and achieve VR training goals.

2. Related Work

2.1. VR Simulation for Training

2.2. Customized VR Controller Development

2.3. User Experience in VR

2.3.1. Perceived Presence

2.3.2. Cognitive Load

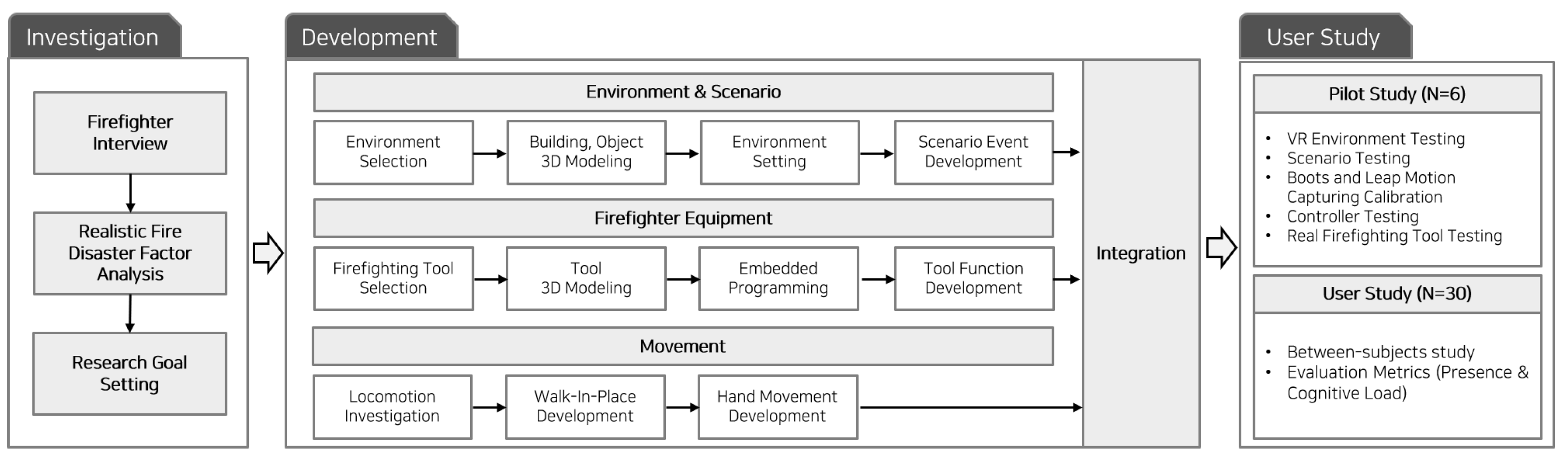

3. Study Procedure

- H1: Using one real tool and one standard VR controller will result in a similar degree of perceived presence as using four real tools.

- H2: Using one real tool and one standard VR controller will result in a similar degree of cognitive load as using two standard VR controllers.

4. Development

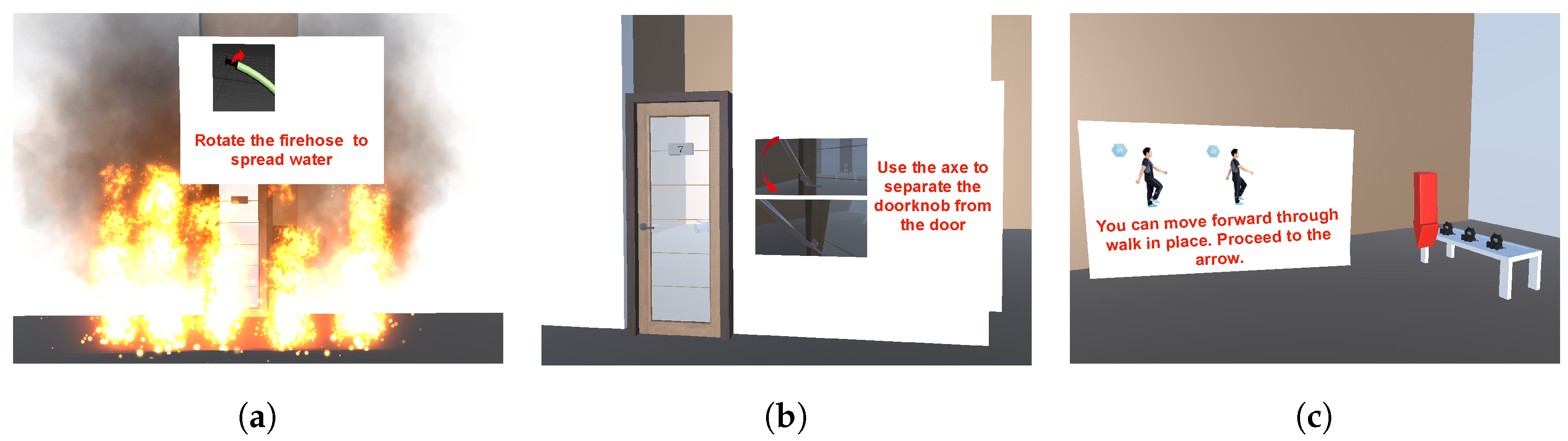

4.1. VR Environment and Scenario

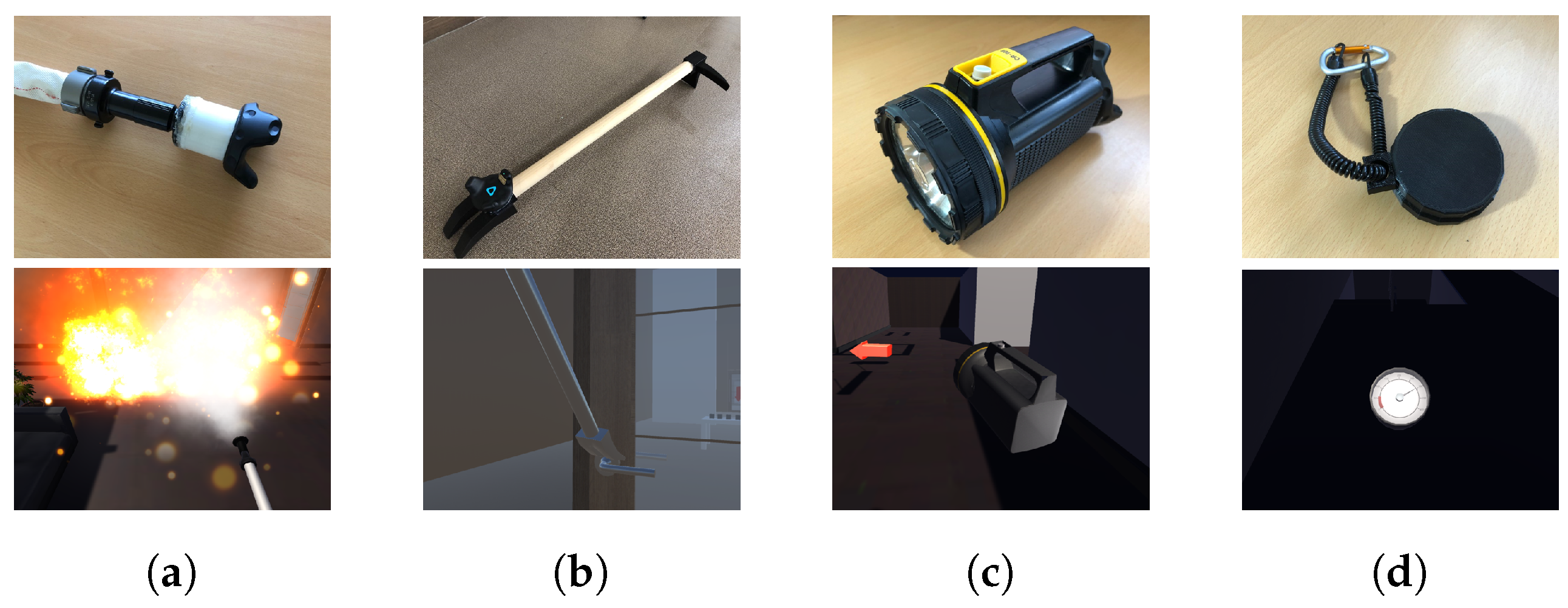

4.2. Firefighting Tools

4.2.1. Fire Hose

4.2.2. Fire Axe

4.2.3. Flashlight

4.2.4. Air Pressure Gauge

4.3. Movement

4.3.1. Hand Control

4.3.2. Locomotion

4.4. Integration

5. Pilot Study: Validation of VR Controller

5.1. Study Procedure

5.2. Results

6. User Study: VR Control Device Modality

6.1. Background and Hypotheses

- Standard controllers condition (control): two standard VR controllers (one for tool selection and the other for tool operation).

- Real tools condition (experimental #1): four real firefighting tools.

- Hybrid condition (experimental #2): one real tool and one standard VR controller.
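The three conditions above can be written down as a small data structure, which may help when assigning participants or labeling survey responses. This is only an illustrative sketch: the names `Device`, `Condition`, `CONDITIONS`, and `summarize` are hypothetical and do not come from the authors' system; only the device counts and the control/experimental roles are taken from the list above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Device(Enum):
    """Kinds of control device a participant can hold (hypothetical naming)."""
    STANDARD_CONTROLLER = "standard VR controller"
    REAL_TOOL = "real firefighting tool"


@dataclass(frozen=True)
class Condition:
    """One level of the tool-modality factor described in the list above."""
    name: str
    role: str                     # "control" or "experimental"
    devices: Tuple[Device, ...]   # devices available in this condition

    def count(self, device: Device) -> int:
        # Number of devices of the given kind in this condition.
        return sum(1 for d in self.devices if d is device)


# Device counts follow the three bullets above; identifiers are assumptions.
CONDITIONS = (
    Condition("standard", "control", (Device.STANDARD_CONTROLLER,) * 2),
    Condition("real_tools", "experimental", (Device.REAL_TOOL,) * 4),
    Condition("hybrid", "experimental",
              (Device.REAL_TOOL, Device.STANDARD_CONTROLLER)),
)


def summarize(cond: Condition) -> str:
    # Human-readable one-line description of a condition.
    return (f"{cond.name} ({cond.role}): "
            f"{cond.count(Device.REAL_TOOL)} real tool(s), "
            f"{cond.count(Device.STANDARD_CONTROLLER)} standard controller(s)")


if __name__ == "__main__":
    for cond in CONDITIONS:
        print(summarize(cond))
```

Keeping the conditions immutable (`frozen=True`) is a design choice for this sketch, so that a condition label cannot be altered by accident during analysis.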

6.2. User Study Design

6.3. Measurement of Human Factor Constructs

6.3.1. Perceived Presence

6.3.2. Cognitive Load

6.4. Results

6.4.1. Survey Responses

6.4.2. Interviews

7. Discussion

7.1. Summary of the User Studies and Implications

7.1.1. Designing VR Systems for Beyond Being There

7.1.2. Hybrid VR Control Device Modality

7.2. Limitations and Future Work

8. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Conflicts of Interest

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Jeon, S.; Paik, S.; Yang, U.; Shih, P.C.; Han, K. The More, the Better? Improving VR Firefighting Training System with Realistic Firefighter Tools as Controllers. Sensors 2021, 21, 7193. https://doi.org/10.3390/s21217193
