Daily Human Activity Recognition Using Non-Intrusive Sensors

Abstract

1. Introduction

2. Overview of Related Work

3. Analysis of the System

3.1. Components and Tools

3.1.1. CASAS Dataset

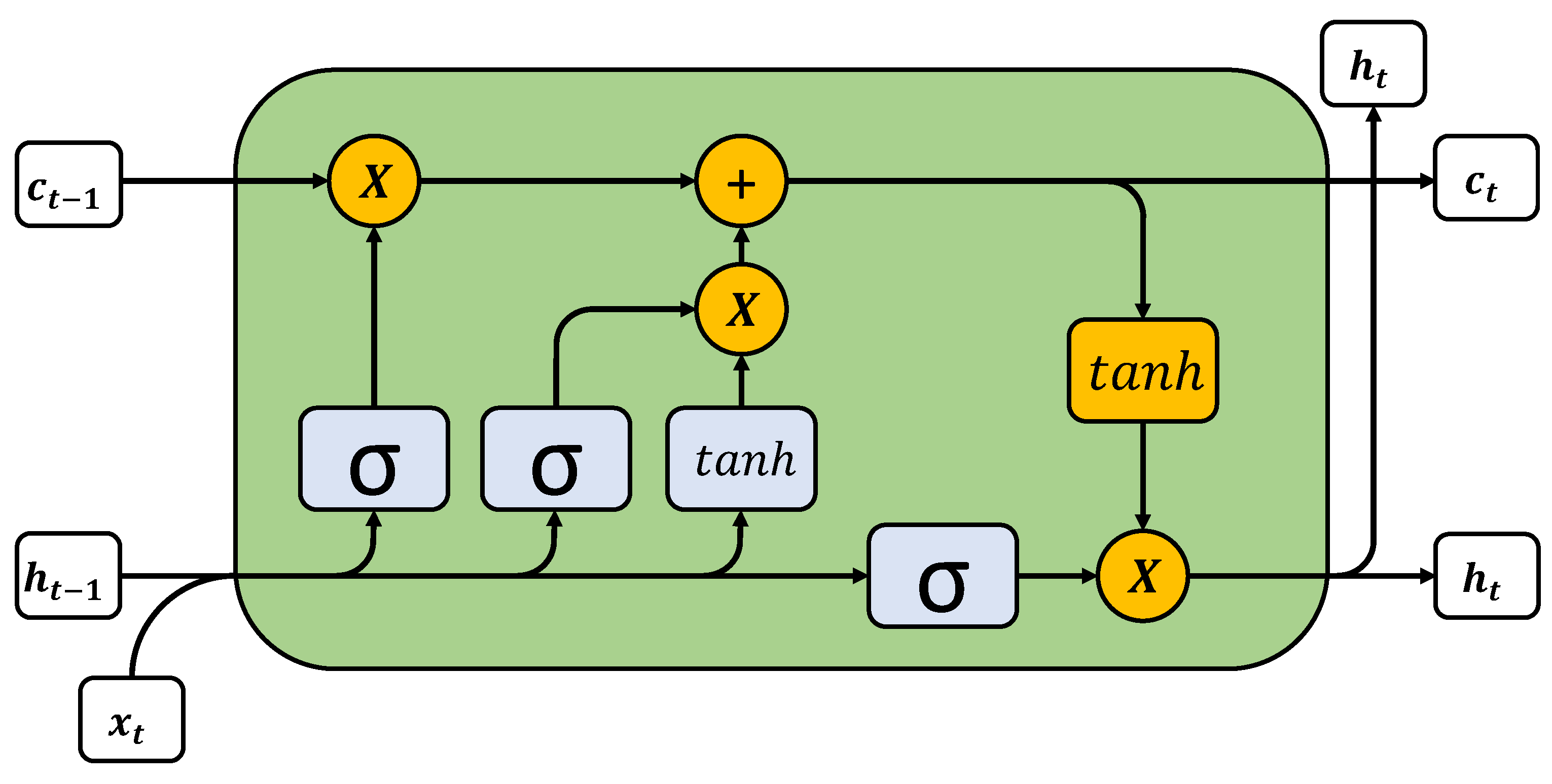

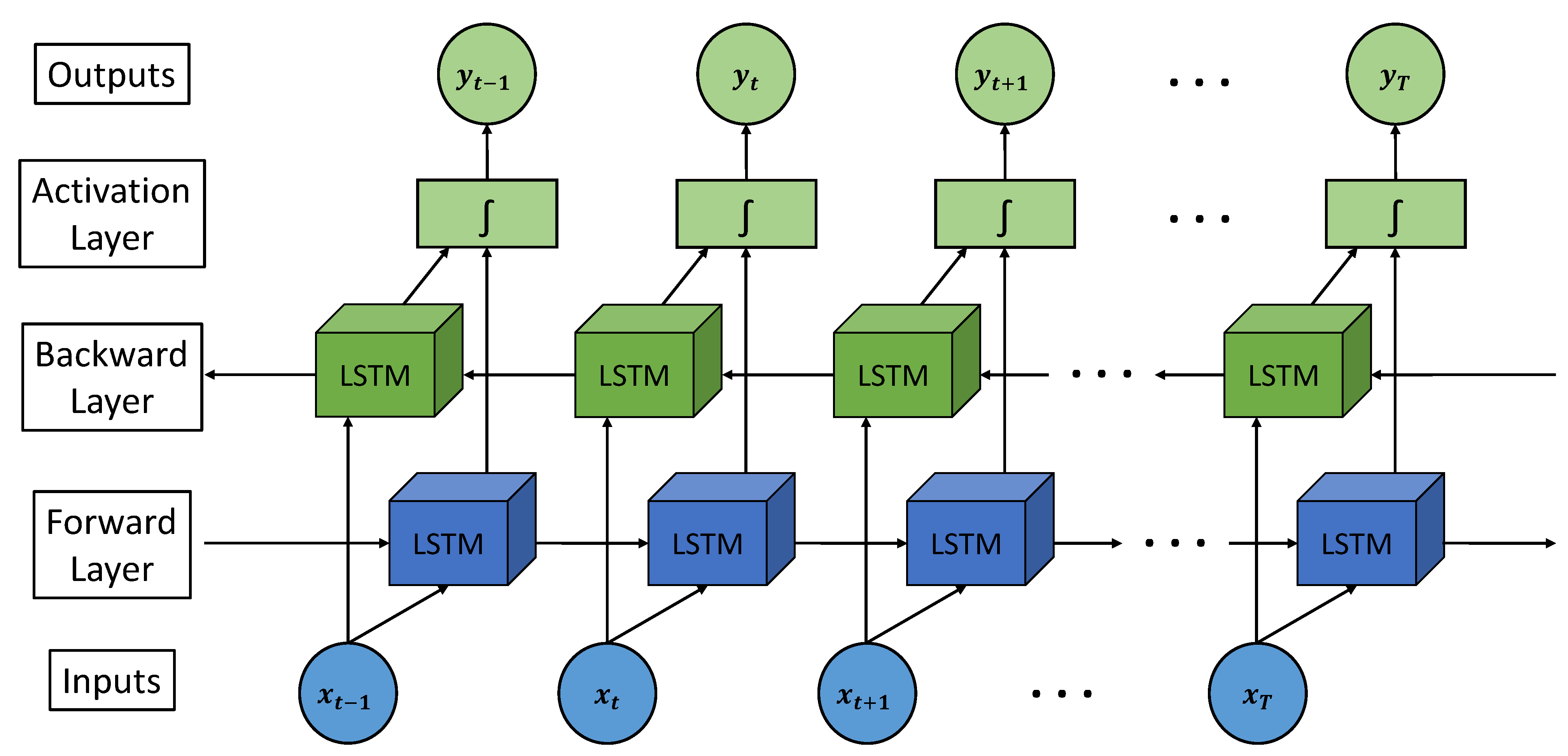

3.1.2. Neural Network—LSTM Model

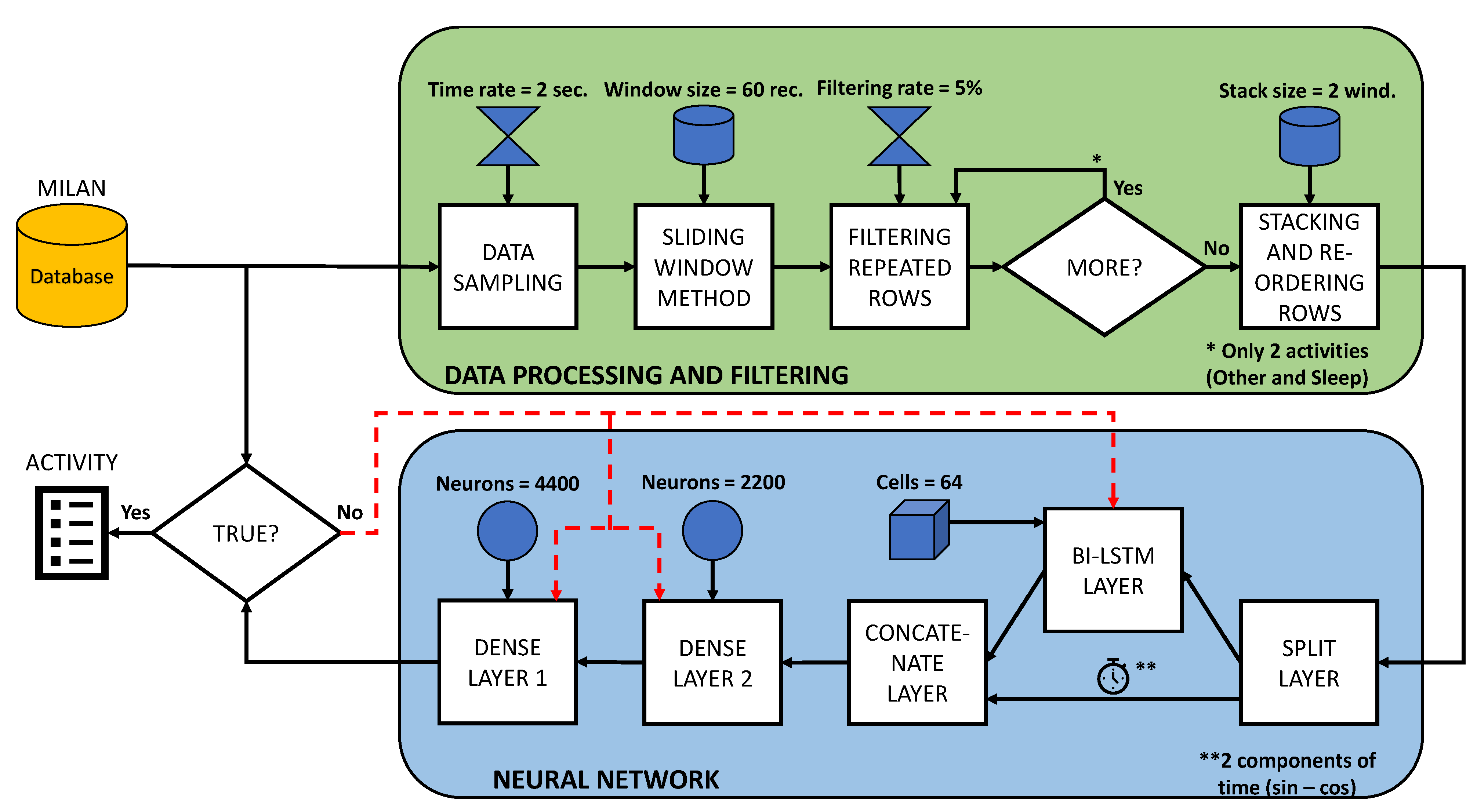

3.2. Data Processing and Filtering

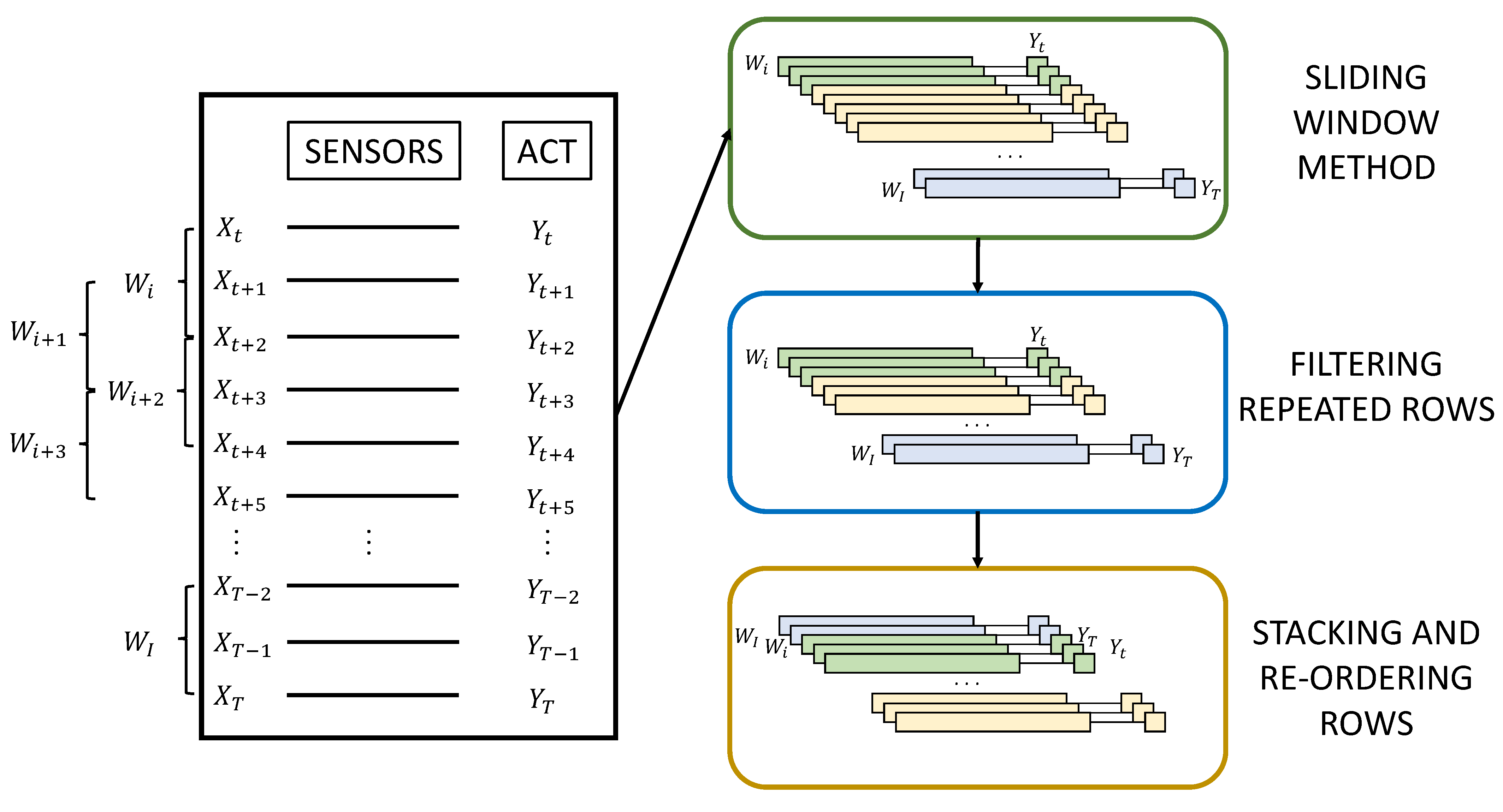

- Sliding window method: To make the data more consistent, a technique called ‘sliding window’ is used. The sliding window technique consists of collecting the most recent past records from the sensors to obtain a given output. That is to say, for an instant $T$ at which an output $y_T$ is sought, the sensor records $x_{T-W+1}, \dots, x_{T-1}, x_T$ are used, $W$ being the size of the window. Because consecutive windows overlap, each sensor record appears in several samples, which increases the repeatability of the data; in addition, the network is strengthened by the sequential pattern provided by the sensor events.

- Filtering repeated rows: This method reduces the weight of long-duration activities in the training data. When an activity spans more than a certain number of consecutive records, a filtering percentage is applied, so that the model is not trained on unbalanced data and does not give more importance to activities with longer durations. In this way, a percentage of the intermediate records of the activity is eliminated, while the records at the beginning and end of the activity are kept.

- Stacking and re-ordering rows: The data windows are first grouped into blocks of consecutive windows, which maintains the sequential order of the records within each block; the blocks are then randomly re-ordered to avoid overfitting.
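The three preprocessing steps above can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the authors' code: the function names (`sliding_windows`, `filter_long_runs`, `shuffle_blocks`) and parameters (`max_run`, `drop_fraction`, `block_size`) are hypothetical, and the filter assumes that the eliminated intermediate records form a single central chunk of each long run, so that the first and last records of the activity survive.

```python
import random
from typing import Any, List, Tuple


def sliding_windows(features: List[Any], labels: List[str],
                    w: int) -> Tuple[List[Any], List[str]]:
    """Pair every instant T >= w - 1 with its w most recent records and the label y_T."""
    windows, targets = [], []
    for t in range(w - 1, len(features)):
        windows.append(features[t - w + 1 : t + 1])  # records x_{T-w+1} ... x_T
        targets.append(labels[t])
    return windows, targets


def filter_long_runs(items: List[Any], labels: List[str], max_run: int,
                     drop_fraction: float) -> Tuple[List[Any], List[str]]:
    """Thin out runs of identical labels longer than max_run (hypothetical policy).

    A fraction drop_fraction of each long run is removed from its middle, so the
    samples at the beginning and end of the activity are kept.
    """
    kept_items, kept_labels = [], []
    start = 0
    while start < len(labels):
        end = start
        while end < len(labels) and labels[end] == labels[start]:
            end += 1  # extend the run of identical consecutive labels
        run = list(range(start, end))
        if len(run) > max_run:
            n_drop = int(len(run) * drop_fraction)
            head = (len(run) - n_drop) // 2
            tail = len(run) - n_drop - head
            run = run[:head] + run[len(run) - tail:]  # drop the central chunk
        for i in run:
            kept_items.append(items[i])
            kept_labels.append(labels[i])
        start = end
    return kept_items, kept_labels


def shuffle_blocks(items: List[Any], labels: List[str], block_size: int,
                   seed: int = 0) -> Tuple[List[Any], List[str]]:
    """Group consecutive samples into blocks, then shuffle the blocks, not the samples."""
    blocks = [list(zip(items[i:i + block_size], labels[i:i + block_size]))
              for i in range(0, len(items), block_size)]
    random.Random(seed).shuffle(blocks)
    flat = [pair for block in blocks for pair in block]
    return [item for item, _ in flat], [label for _, label in flat]


# Toy usage: ten one-feature records, a long "Sleep" run followed by "Read".
features = [[float(i)] for i in range(10)]
labels = ["Sleep"] * 6 + ["Read"] * 4
X, y = sliding_windows(features, labels, w=3)
X, y = filter_long_runs(X, y, max_run=3, drop_fraction=0.5)
X, y = shuffle_blocks(X, y, block_size=2)
```

Shuffling whole blocks rather than individual windows keeps neighbouring windows together, which is what preserves the sequential order within each block while still randomising the order in which the network sees the data.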

3.3. Neural Network Architecture

- Split Layer: The first layer of the model is a split layer, which separates the two time components from the rest of the sensor information. The reason is that it is more appropriate to train the LSTMs on the sensor information alone than on all the data mixed together: the sensor events carry more sequential information than the time components, whose values form equidistant sequential patterns.

- Bidirectional LSTM: The bidirectional LSTM layers are the main part of the prediction model. By processing each window both forwards and backwards in time, they provide the neural network with the sequential information contained in the activation of the sensors. To make the network more robust against overfitting, and thus improve its generalisation, L2 regularisation [45] has been used. The regularisation is defined through the cost function:

  $$C = C_0 + \frac{\lambda}{2n} \sum_{w} w^2 \qquad (9)$$

  Expression (9) quantifies the regularised cost of the LSTM. The term $C_0$ refers to the original cost, while $\lambda$ is the regularisation parameter, which sets the weight of the regularisation term relative to $C_0$. The term $n$ corresponds to the size of the training dataset, and $w$ ranges over all the parameters (weights) of the network.

- Dropout: Dropout layers are included to reduce overfitting [46]. They randomly and temporarily disable the connections between the outputs of the previous layer and the inputs of the subsequent layer. During training, a new random set of connections is deactivated at each training step while the given dropout rate is maintained; at inference time, all connections are active.

- Batch normalisation: Batch normalisation is applied to reduce the so-called internal covariate shift, that is, the change in the distribution of each layer's inputs caused by the updating of the parameters of the preceding layers during training [47]. To counter it, the batch normalisation layer normalises the activations of each mini-batch to zero mean and unit variance and then applies a learnable scale and shift, so that the inputs to the subsequent layers keep approximately the same distribution throughout training.

- Concatenate layer: This layer merges the two time components of each sliding data window back together with the sensor information processed by the bidirectional LSTM layers, so that both feed the final processing step.

- Dense layer: This is the last processing step, which takes into account both the sensor information processed by the bidirectional LSTM stage and the time of analysis. An architecture based on two fully-connected dense layers has been proposed for this system, whose distribution of neurons follows a distribution of and , where N is the total number of neurons.
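The layer sequence described above can be sketched with the Keras functional API. This is a minimal sketch, not the authors' implementation: the number of LSTM units, the dropout rate, the L2 coefficient and the sizes of the two dense layers are placeholder values, and the split assumes (hypothetically) that the two time components are the last two features of each record, with the time of the current instant taken from the last step of the window. A softmax output layer over the activity classes is also assumed. Keras' `regularizers.l2(l2)` adds `l2 * sum(w**2)` to the loss, so when the regularised cost is written as $C = C_0 + \frac{\lambda}{2n}\sum_w w^2$, the Keras coefficient corresponds to $\lambda/(2n)$.

```python
import numpy as np
from tensorflow import keras

layers, regularizers = keras.layers, keras.regularizers


def build_model(window_size, n_features, n_classes, lstm_units=64,
                dropout_rate=0.3, l2_coef=1e-4, dense_units=(64, 32)):
    """Hypothetical sketch of the split / BiLSTM / concatenate / dense architecture."""
    inputs = keras.Input(shape=(window_size, n_features))

    # Split layer (assumed layout): the last two features of every record are the
    # time components; the remaining features come from the sensors.
    sensors = layers.Lambda(lambda x: x[:, :, :-2], name="sensor_part")(inputs)
    time_now = layers.Lambda(lambda x: x[:, -1, -2:], name="time_part")(inputs)

    # Two bidirectional LSTM blocks with L2-regularised kernels (Keras adds
    # l2_coef * sum(w**2) to the loss), each followed by dropout and batch norm.
    x = layers.Bidirectional(layers.LSTM(
        lstm_units, return_sequences=True,
        kernel_regularizer=regularizers.l2(l2_coef)))(sensors)
    x = layers.Dropout(dropout_rate)(x)
    x = layers.BatchNormalization()(x)
    x = layers.Bidirectional(layers.LSTM(
        lstm_units, kernel_regularizer=regularizers.l2(l2_coef)))(x)
    x = layers.Dropout(dropout_rate)(x)
    x = layers.BatchNormalization()(x)

    # Concatenate layer: merge the time components back with the LSTM features.
    x = layers.Concatenate()([x, time_now])

    # Two fully connected layers (placeholder sizes) and an assumed softmax output.
    for units in dense_units:
        x = layers.Dense(units, activation="relu")(x)
    outputs = layers.Dense(n_classes, activation="softmax")(x)
    return keras.Model(inputs, outputs)


# Example: 16 classes (15 activities plus Other); 10-record windows with 6 features
# each (4 placeholder sensor features plus the 2 assumed time components).
model = build_model(window_size=10, n_features=6, n_classes=16)
probs = model(np.zeros((4, 10, 6), dtype="float32"), training=False)
```

Keeping the time components out of the LSTM branch and re-injecting them only before the dense layers mirrors the split/concatenate design described above; a model with two separate `keras.Input` tensors would be an equivalent alternative to the `Lambda` split.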

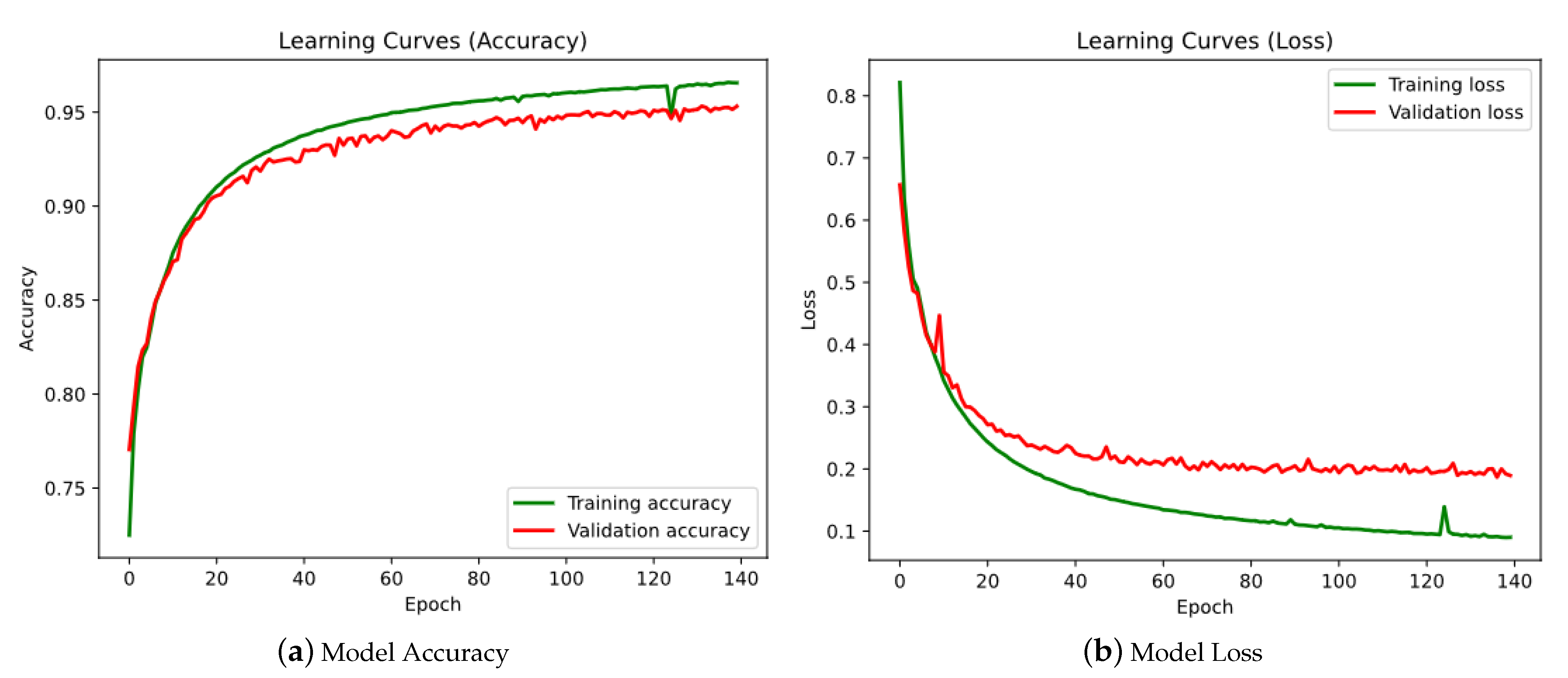

4. Experiments and Discussion

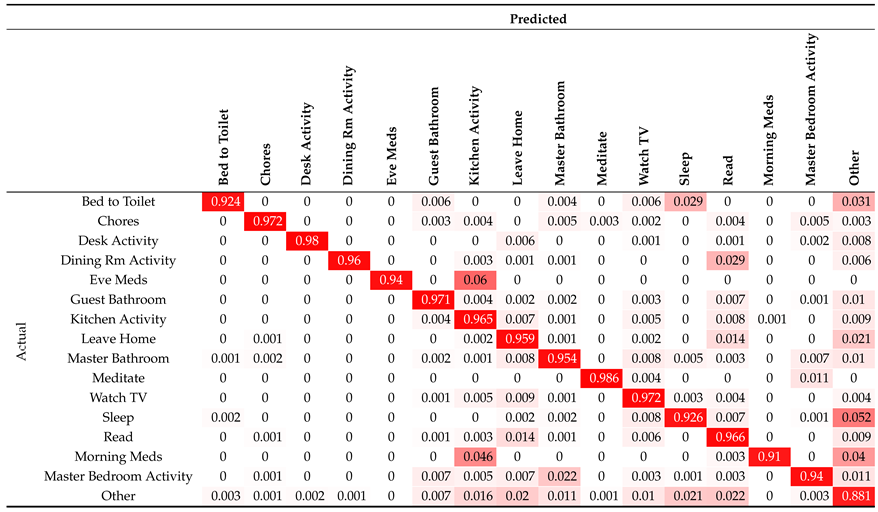

- Failure to detect taking medication: This failure occurs in both the activity and the activity, and both are confused with . This is because the box where the medicines are stored is in the kitchen, and although this box has a contact sensor, the medicines may have been handled during the day in ways the sensor cannot register, such as leaving them out of the box or leaving the box door open.

- Confusion between and : This problem can occur because the two rooms are close to each other, so the motion sensors can register similar patterns for both activities. Furthermore, the activity is short-lived and therefore less well represented in the data than the activity (which has a duration of many hours).

- Confusion between and : The activity takes place exclusively in that room; it therefore falls within the area covered by .

- Tendency to be confused with : The activity is the dummy activity generated to label periods in which the user does nothing. For this reason, the neural network may fail during the transition between periods with no activity and the start of a new activity. Specifically, the highest failure rate in this respect falls on the activity, as it is the activity that most closely resembles in terms of duration.

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- [1] Mehr, H.D.; Polat, H.; Cetin, A. Resident activity recognition in smart homes by using artificial neural networks. In Proceedings of the 2016 4th International Istanbul Smart Grid Congress and Fair (ICSG), Istanbul, Turkey, 20–21 April 2016; pp. 1–5.

- [2] Teoh, C.C.; Tan, C.E. A neural network approach towards reinforcing smart home security. In Proceedings of the 8th Asia-Pacific Symposium on Information and Telecommunication Technologies, Sarawak, Malaysia, 15–18 June 2010; pp. 1–5.

- [3] Vrigkas, M.; Nikou, C.; Kakadiaris, I.A. A review of human activity recognition methods. Front. Robot. AI 2015, 2, 28.

- [4] Ann, O.C.; Theng, L.B. Human activity recognition: A review. In Proceedings of the 2014 IEEE International Conference on Control System, Computing and Engineering (ICCSCE 2014), Penang, Malaysia, 28–30 November 2014; pp. 389–393.

- [5] Xia, K.; Huang, J.; Wang, H. LSTM-CNN architecture for human activity recognition. IEEE Access 2020, 8, 56855–56866.

- [6] Wiseman, Y. Take a picture of your tire! In Proceedings of the 2010 IEEE International Conference on Vehicular Electronics and Safety, Qingdao, China, 15–17 July 2010; pp. 151–156.

- [7] Ahmed, N.; Rafiq, J.I.; Islam, M.R. Enhanced human activity recognition based on smartphone sensor data using hybrid feature selection model. Sensors 2020, 20, 317.

- [8] D’Sa, A.G.; Prasad, B. A survey on vision based activity recognition, its applications and challenges. In Proceedings of the 2019 Second International Conference on Advanced Computational and Communication Paradigms (ICACCP), Gangtok, India, 25–28 February 2019; pp. 1–8.

- [9] Jegham, I.; Khalifa, A.B.; Alouani, I.; Mahjoub, M.A. Vision-based human action recognition: An overview and real world challenges. Forensic Sci. Int. Digit. Investig. 2020, 32, 200901.

- [10] Lara, O.D.; Labrador, M.A. A survey on human activity recognition using wearable sensors. IEEE Commun. Surv. Tutor. 2012, 15, 1192–1209.

- [11] Jia, Y. Diatetic and exercise therapy against diabetes mellitus. In Proceedings of the 2009 Second International Conference on Intelligent Networks and Intelligent Systems, Tianjin, China, 1–3 November 2009; pp. 693–696.

- [12] Yin, J.; Yang, Q.; Pan, J.J. Sensor-based abnormal human-activity detection. IEEE Trans. Knowl. Data Eng. 2008, 20, 1082–1090.

- [13] Cook, D.J.; Schmitter-Edgecombe, M. Assessing the quality of activities in a smart environment. Methods Inf. Med. 2009, 48, 480.

- [14] Wilson, D.H.; Atkeson, C. Simultaneous tracking and activity recognition (STAR) using many anonymous, binary sensors. In International Conference on Pervasive Computing; Springer: Berlin/Heidelberg, Germany, 2005; pp. 62–79.

- [15] Tapia, E.M.; Intille, S.S.; Larson, K. Activity recognition in the home using simple and ubiquitous sensors. In International Conference on Pervasive Computing; Springer: Berlin/Heidelberg, Germany, 2004; pp. 158–175.

- [16] Intille, S.S.; Larson, K.; Beaudin, J.; Nawyn, J.; Tapia, E.M.; Kaushik, P. A living laboratory for the design and evaluation of ubiquitous computing technologies. In Proceedings of the CHI’05 Extended Abstracts on Human Factors in Computing Systems, Portland, OR, USA, 2–7 April 2005; pp. 1941–1944.

- [17] Alemdar, H.; Ertan, H.; Incel, O.D.; Ersoy, C. ARAS human activity datasets in multiple homes with multiple residents. In Proceedings of the 2013 7th International Conference on Pervasive Computing Technologies for Healthcare and Workshops, Venice, Italy, 5–8 May 2013; pp. 232–235.

- [18] Tran, S.N.; Zhang, Q. Towards multi-resident activity monitoring with smarter safer home platform. In Smart Assisted Living; Springer: Berlin/Heidelberg, Germany, 2020; pp. 249–267.

- [19] Van Kasteren, T.; Noulas, A.; Englebienne, G.; Kröse, B. Accurate activity recognition in a home setting. In Proceedings of the 10th International Conference on Ubiquitous Computing, Seoul, Korea, 21–24 September 2008; pp. 1–9.

- [20] Köckemann, U.; Alirezaie, M.; Renoux, J.; Tsiftes, N.; Ahmed, M.U.; Morberg, D.; Lindén, M.; Loutfi, A. Open-source data collection and data sets for activity recognition in smart homes. Sensors 2020, 20, 879.

- [21] Gallissot, M.; Caelen, J.; Bonnefond, N.; Meillon, B.; Pons, S. Using the Multicom Domus Dataset. Ph.D. Thesis, LIG, Saint-Martin-d’Hères, France, 2011.

- [22] Cook, D.; Schmitter-Edgecombe, M.; Crandall, A.; Sanders, C.; Thomas, B. Collecting and disseminating smart home sensor data in the CASAS project. In Proceedings of the CHI Workshop on Developing Shared Home Behavior Datasets to Advance HCI and Ubiquitous Computing Research, Boston, MA, USA, 4–9 April 2009; pp. 1–7.

- [23] Meng, Z.; Zhang, M.; Guo, C.; Fan, Q.; Zhang, H.; Gao, N.; Zhang, Z. Recent progress in sensing and computing techniques for human activity recognition and motion analysis. Electronics 2020, 9, 1357.

- [24] Moreira, B.S.; Perkusich, A.; Luiz, S.O. An Acoustic Sensing Gesture Recognition System Design Based on a Hidden Markov Model. Sensors 2020, 20, 4803.

- [25] Huang, P.; Li, Y.; Lv, X.; Chen, W.; Liu, S. Recognition of Common Non-Normal Walking Actions Based on Relief-F Feature Selection and Relief-Bagging-SVM. Sensors 2020, 20, 1447.

- [26] Wang, J.; Chen, Y.; Hao, S.; Peng, X.; Hu, L. Deep learning for sensor-based activity recognition: A survey. Pattern Recognit. Lett. 2019, 119, 3–11.

- [27] Bengio, Y. Deep learning of representations: Looking forward. In International Conference on Statistical Language and Speech Processing; Springer: Berlin/Heidelberg, Germany, 2013; pp. 1–37.

- [28] Zhao, Z.; Chen, W.; Wu, X.; Chen, P.C.; Liu, J. LSTM network: A deep learning approach for short-term traffic forecast. IET Intell. Transp. Syst. 2017, 11, 68–75.

- [29] Du, Y.; Lim, Y.; Tan, Y. Activity Prediction using LSTM in Smart Home. In Proceedings of the 2019 IEEE 8th Global Conference on Consumer Electronics (GCCE), Osaka, Japan, 15–18 October 2019; pp. 918–919.

- [30] Farrag, T.A.; Elattar, E.E. Optimized Deep Stacked Long Short-Term Memory Network for Long-Term Load Forecasting. IEEE Access 2021, 9, 68511–68522.

- [31] Sagheer, A.; Kotb, M. Time series forecasting of petroleum production using deep LSTM recurrent networks. Neurocomputing 2019, 323, 203–213.

- [32] Ronald, M.; Poulose, A.; Han, D.S. iSPLInception: An Inception-ResNet Deep Learning Architecture for Human Activity Recognition. IEEE Access 2021, 9, 68985–69001.

- [33] Chen, Z.; Jiang, C.; Xiang, S.; Ding, J.; Wu, M.; Li, X. Smartphone sensor-based human activity recognition using feature fusion and maximum full a posteriori. IEEE Trans. Instrum. Meas. 2019, 69, 3992–4001.

- [34] Anguita, D.; Ghio, A.; Oneto, L.; Parra, X.; Reyes-Ortiz, J.L. A Public Domain Dataset for Human Activity Recognition Using Smartphones. In Proceedings of the ESANN 2013 Proceedings, European Symposium on Artificial Neural Networks, Computational Intelligence and Machine Learning, Bruges, Belgium, 24–26 April 2013; Volume 3, p. 3.

- [35] Steven Eyobu, O.; Han, D.S. Feature representation and data augmentation for human activity classification based on wearable IMU sensor data using a deep LSTM neural network. Sensors 2018, 18, 2892.

- [36] Liciotti, D.; Bernardini, M.; Romeo, L.; Frontoni, E. A sequential deep learning application for recognising human activities in smart homes. Neurocomputing 2020, 396, 501–513.

- [37] Fang, H.; He, L. BP neural network for human activity recognition in smart home. In Proceedings of the 2012 International Conference on Computer Science and Service System, Wrocław, Poland, 9–12 September 2012; pp. 1034–1037.

- [38] Cook, D.J.; Crandall, A.S.; Thomas, B.L.; Krishnan, N.C. CASAS: A smart home in a box. Computer 2012, 46, 62–69.

- [39] Rashidi, P.; Cook, D.J. Keeping the resident in the loop: Adapting the smart home to the user. IEEE Trans. Syst. Man Cybern. Part A Syst. Hum. 2009, 39, 949–959.

- [40] Fahad, L.G.; Tahir, S.F. Activity recognition and anomaly detection in smart homes. Neurocomputing 2021, 423, 362–372.

- [41] Kuchaiev, O.; Ginsburg, B. Factorization tricks for LSTM networks. arXiv 2017, arXiv:1703.10722.

- [42] Salman, A.G.; Heryadi, Y.; Abdurahman, E.; Suparta, W. Single layer & multi-layer long short-term memory (LSTM) model with intermediate variables for weather forecasting. Procedia Comput. Sci. 2018, 135, 89–98.

- [43] Huang, Z.; Xu, W.; Yu, K. Bidirectional LSTM-CRF models for sequence tagging. arXiv 2015, arXiv:1508.01991.

- [44] Wöllmer, M.; Eyben, F.; Graves, A.; Schuller, B.; Rigoll, G. Bidirectional LSTM networks for context-sensitive keyword detection in a cognitive virtual agent framework. Cogn. Comput. 2010, 2, 180–190.

- [45] Wang, J.; Cao, Z. Chinese text sentiment analysis using LSTM network based on L2 and Nadam. In Proceedings of the 2017 IEEE 17th International Conference on Communication Technology (ICCT), Chengdu, China, 27–30 October 2017; pp. 1891–1895.

- [46] Srivastava, N.; Hinton, G.; Krizhevsky, A.; Sutskever, I.; Salakhutdinov, R. Dropout: A simple way to prevent neural networks from overfitting. J. Mach. Learn. Res. 2014, 15, 1929–1958.

- [47] Laurent, C.; Pereyra, G.; Brakel, P.; Zhang, Y.; Bengio, Y. Batch normalized recurrent neural networks. In Proceedings of the 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Shanghai, China, 20–25 March 2016; pp. 2657–2661.

- [48] Singh, D.; Merdivan, E.; Psychoula, I.; Kropf, J.; Hanke, S.; Geist, M.; Holzinger, A. Human activity recognition using recurrent neural networks. In International Cross-Domain Conference for Machine Learning and Knowledge Extraction; Springer: Berlin/Heidelberg, Germany, 2017; pp. 267–274.

- [49] Van Kasteren, T.L.M.; Englebienne, G.; Kröse, B.J.A. Human Activity Recognition from Wireless Sensor Network Data: Benchmark and Software. In Activity Recognition in Pervasive Intelligent Environments; Chen, L., Nugent, C.D., Biswas, J., Hoey, J., Eds.; Atlantis Press: Paris, France, 2011; pp. 165–186.

- [50] Anguita, D.; Ghio, A.; Oneto, L.; Parra, X.; Reyes-Ortiz, J.L. Human activity recognition on smartphones using a multiclass hardware-friendly support vector machine. In International Workshop on Ambient Assisted Living; Springer: Berlin/Heidelberg, Germany, 2012; pp. 216–223.

- [51] Saeedi, R.; Norgaard, S.; Gebremedhin, A.H. A closed-loop deep learning architecture for robust activity recognition using wearable sensors. In Proceedings of the 2017 IEEE International Conference on Big Data (Big Data), Boston, MA, USA, 11–14 December 2017; pp. 473–479.

| Type of Sensors | Time Range | No. of Activities | Activities (Instances) |
|---|---|---|---|
| Motion (M), Door (D), Temperature (T) | 16 October 2009–6 January 2010 | 15 | Bed to Toilet (89), Desk Activity (54), Chores (23), Dining Room Activity (22), Evening Medication (19), Read (314), Kitchen Activity (554), Guest Bathroom (330), Leave Home (214), Master Bathroom (306), Sleep (96), Master Bedroom Activity (117), Meditate (17), Morning Medication (41) and Watch TV (114) |

| Timestamp | Sensor | State | Activity |
|---|---|---|---|
| 2009-11-19 08:47:38.000019 | M017 | ON | Guest Bathroom begin |
| 2009-11-19 08:47:38.000021 | M015 | OFF | |
| 2009-11-19 08:47:40.000041 | M011 | OFF | |
| 2009-11-19 08:47:40.000089 | M018 | ON | |
| ⋮ | ⋮ | ⋮ | |
| 2009-11-19 08:49:02.000086 | M018 | ON | |
| 2009-11-19 08:49:08.000076 | M017 | ON | Guest Bathroom end |
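In the sample log above, only the first and last events of an activity carry its annotation. A minimal sketch of how such a log could be turned into one labelled row per event is shown below; it assumes whitespace-separated fields laid out as in the table (the raw CASAS files may differ in detail), and the helper name `label_events` is hypothetical. Events outside any annotated activity receive the label `Other`, the class that appears in the results table.

```python
from typing import List, Optional, Tuple

Row = Tuple[str, str, str, str]  # (timestamp, sensor, state, activity label)


def label_events(lines: List[str], default: str = "Other") -> List[Row]:
    """Propagate 'begin'/'end' annotations to every event in between (hypothetical helper)."""
    rows: List[Row] = []
    current: Optional[str] = None  # activity currently in progress, if any
    for line in lines:
        parts = line.split()
        if len(parts) < 4:
            continue  # skip blank or malformed lines
        date, time, sensor, state = parts[:4]
        annotation = parts[4:]
        marker = annotation[-1] if annotation else None
        name = " ".join(annotation[:-1]) if annotation else None
        if marker == "begin":
            current = name
        if marker in ("begin", "end"):
            label = name
        else:
            label = current if current is not None else default
        rows.append((f"{date} {time}", sensor, state, label))
        if marker == "end":
            current = None  # the activity is over; later events fall back to default
    return rows


log = [
    "2009-11-19 08:47:38.000019 M017 ON Guest Bathroom begin",
    "2009-11-19 08:47:38.000021 M015 OFF",
    "2009-11-19 08:49:08.000076 M017 ON Guest Bathroom end",
]
for row in label_events(log):
    print(row)
```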

| Activity | Precision | Recall | F1-Score |
|---|---|---|---|
| Bed to Toilet | 0.93 | 0.92 | 0.93 |
| Chores | 0.95 | 0.97 | 0.96 |
| Desk Activity | 0.98 | 0.98 | 0.98 |
| Dining Rm Activity | 0.97 | 0.96 | 0.96 |
| Eve Meds | 0.90 | 0.94 | 0.92 |
| Guest Bathroom | 0.93 | 0.97 | 0.95 |
| Kitchen Activity | 0.97 | 0.97 | 0.97 |
| Leave Home | 0.93 | 0.96 | 0.94 |
| Master Bathroom | 0.96 | 0.95 | 0.96 |
| Meditate | 0.95 | 0.99 | 0.97 |
| Watch TV | 0.97 | 0.97 | 0.97 |
| Sleep | 0.90 | 0.93 | 0.91 |
| Read | 0.97 | 0.97 | 0.97 |
| Morning Meds | 0.92 | 0.91 | 0.91 |
| Master Bedroom Activity | 0.96 | 0.94 | 0.95 |
| Other | 0.90 | 0.88 | 0.89 |
| Accuracy | 0.95 | | |
| Macro avg | 0.94 | 0.95 | 0.95 |
| Weighted avg | 0.95 | 0.95 | 0.95 |
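The summary rows of the table above can be cross-checked from the per-class rows. The sketch below copies the per-class values from the table, recomputes the macro averages as unweighted means over the 16 classes, and checks that each reported F1-score agrees with the harmonic mean $2PR/(P+R)$ of its precision $P$ and recall $R$ up to two-decimal rounding. The weighted averages cannot be recomputed this way because the per-class supports are not given in the table.

```python
# Per-class (precision, recall, F1) values copied from the results table.
REPORT = {
    "Bed to Toilet": (0.93, 0.92, 0.93),
    "Chores": (0.95, 0.97, 0.96),
    "Desk Activity": (0.98, 0.98, 0.98),
    "Dining Rm Activity": (0.97, 0.96, 0.96),
    "Eve Meds": (0.90, 0.94, 0.92),
    "Guest Bathroom": (0.93, 0.97, 0.95),
    "Kitchen Activity": (0.97, 0.97, 0.97),
    "Leave Home": (0.93, 0.96, 0.94),
    "Master Bathroom": (0.96, 0.95, 0.96),
    "Meditate": (0.95, 0.99, 0.97),
    "Watch TV": (0.97, 0.97, 0.97),
    "Sleep": (0.90, 0.93, 0.91),
    "Read": (0.97, 0.97, 0.97),
    "Morning Meds": (0.92, 0.91, 0.91),
    "Master Bedroom Activity": (0.96, 0.94, 0.95),
    "Other": (0.90, 0.88, 0.89),
}


def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


def macro_average(report):
    """Unweighted mean of each metric over all classes, rounded to two decimals."""
    n = len(report)
    return tuple(round(sum(row[i] for row in report.values()) / n, 2) for i in range(3))


# Largest gap between the reported F1 and 2PR/(P+R) recomputed from rounded P and R.
max_gap = max(abs(f1_score(p, r) - f) for p, r, f in REPORT.values())
print(macro_average(REPORT))  # (0.94, 0.95, 0.95), matching the "Macro avg" row
print(round(max_gap, 3))
```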


| Paper | Dataset | Success Rate | Activities | Sensors | Method |
|---|---|---|---|---|---|
| [48] | Own development [49] | 89.80% | 10 | Non-intrusive | Recurrent neural network |
| | | 85.70% | 13 | | |
| | | 64.22% | 16 | | |
| [50] | Own development [50] | 89.30% | 6 | Smartphone | SVM algorithm |
| [51] | OPPORTUNITY | 70.00% | 17 | Wearables | CNN-LSTM neural network |
| [32] | UCI [34] | 95.09% | 6 | Smartphone | iSPLInception |
| | OPPORTUNITY | 88.14% | 17 | Wearables | |
| | Daphnet | 93.52% | 2 | Wearables | |
| | PAMAP2 | 89.09% | 11 | Wearables | |
| [33] | UCI [34] | 98.85% | 6 | Smartphone | DL-MFAP |
| [35] | UCI [34] | 88.14% | 6 | Smartphone | Deep LSTM Network |
| [36] | Milan (CASAS) | 94.12% | 16 | Non-intrusive | Bidirectional LSTM |
| Proposed | Milan (CASAS) | 95.42% | 16 | Non-intrusive | Proposed architecture |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Ramos, R.G.; Domingo, J.D.; Zalama, E.; Gómez-García-Bermejo, J. Daily Human Activity Recognition Using Non-Intrusive Sensors. Sensors 2021, 21, 5270. https://doi.org/10.3390/s21165270

Ramos RG, Domingo JD, Zalama E, Gómez-García-Bermejo J. Daily Human Activity Recognition Using Non-Intrusive Sensors. Sensors. 2021; 21(16):5270. https://doi.org/10.3390/s21165270

Chicago/Turabian style: Ramos, Raúl Gómez, Jaime Duque Domingo, Eduardo Zalama, and Jaime Gómez-García-Bermejo. 2021. "Daily Human Activity Recognition Using Non-Intrusive Sensors" Sensors 21, no. 16: 5270. https://doi.org/10.3390/s21165270

APA style: Ramos, R. G., Domingo, J. D., Zalama, E., & Gómez-García-Bermejo, J. (2021). Daily Human Activity Recognition Using Non-Intrusive Sensors. Sensors, 21(16), 5270. https://doi.org/10.3390/s21165270