A Systematic Review for Cognitive State-Based QoE/UX Evaluation

Abstract

1. Introduction

2. Background

2.1. Mental and Cognitive States

2.2. Physiological and Behavioral Data

- based on perception or behavior, including data from elements of human expression such as facial expressions, intonation and voice modulation, body movements, and contextual information;

- physiological, arising from subconscious responses of the human body (e.g., heartbeat, blood pressure, and brain activity) that are governed by the central nervous system, the neuroendocrine system, and the autonomic nervous system;

- subjective, consisting of individuals’ self-reports of how they perceive their own state; these depend less on technology than the previous two types.

- Electroencephalogram (EEG), a signal reflecting the electrical activity of the brain, is recorded by electrodes attached to the scalp, commonly positioned according to the international 10–20 system [24]. Its power is usually described in terms of five rhythms defined by frequency range: delta (δ), below 4 Hz; theta (θ), around 5 Hz; alpha (α), around 10 Hz; beta (β), around 20 Hz; and gamma (γ), usually above 30 Hz.

- Electrocardiogram (ECG), a signal reflecting the electrical activity generated by the heart muscle, is recorded by electrodes placed on the chest and, depending on the application, on the limbs [24]. Each beat produces five characteristic waves (P, Q, R, S, and T), from which heart rate and rhythm can be determined.

- Galvanic skin response (GSR), also known as electrodermal activity (EDA), measures the electrical resistance of the skin between two electrodes placed on the distal phalanges of the index and middle fingers; this resistance varies with the body’s sweating, decreasing as sweat gland activity increases [25].
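To make the signal descriptions above concrete, the following is a minimal Python sketch, not taken from any reviewed study, of three basic quantities: EEG power per frequency band, mean heart rate from ECG R-peak times, and skin conductance from GSR resistance. The band edges are an assumption (a common convention consistent with the approximate frequencies given above), the function names are hypothetical, and a plain discrete Fourier transform is used only to keep the example dependency-free; practical pipelines would normally apply filtering and artifact removal first and then use an FFT-based estimator such as Welch’s method (e.g., `scipy.signal.welch`).

```python
import math

# Assumed EEG band edges in Hz (a common convention; the text gives only
# approximate frequencies, and published studies vary).
BANDS = {
    "delta": (0.5, 4.0),
    "theta": (4.0, 8.0),
    "alpha": (8.0, 13.0),
    "beta": (13.0, 30.0),
    "gamma": (30.0, 45.0),
}


def band_powers(samples: list[float], fs: float) -> dict[str, float]:
    """Sum periodogram power (relative units) per band for one EEG channel sampled at fs Hz."""
    n = len(samples)
    mean = sum(samples) / n
    x = [s - mean for s in samples]  # remove the DC offset
    powers = {name: 0.0 for name in BANDS}
    # Evaluate the discrete Fourier transform only at bins that fall inside a band.
    for k in range(1, n // 2 + 1):
        f = k * fs / n
        band = next((b for b, (lo, hi) in BANDS.items() if lo <= f < hi), None)
        if band is None:
            continue
        re = sum(v * math.cos(2 * math.pi * k * i / n) for i, v in enumerate(x))
        im = -sum(v * math.sin(2 * math.pi * k * i / n) for i, v in enumerate(x))
        powers[band] += (re * re + im * im) / (n * n)
    return powers


def heart_rate_bpm(r_peak_times_s: list[float]) -> float:
    """Mean heart rate from ECG R-peak timestamps (seconds) via the R-R intervals."""
    rr = [b - a for a, b in zip(r_peak_times_s, r_peak_times_s[1:])]
    return 60.0 / (sum(rr) / len(rr))


def skin_conductance_us(resistance_ohm: float) -> float:
    """Convert skin resistance in ohms to conductance in microsiemens."""
    return 1e6 / resistance_ohm


if __name__ == "__main__":
    fs = 256.0
    eeg = [math.sin(2 * math.pi * 10 * i / fs) for i in range(1024)]  # synthetic 10 Hz rhythm
    p = band_powers(eeg, fs)
    print(max(p, key=p.get))                      # → alpha
    print(heart_rate_bpm([0.0, 1.0, 2.0, 3.0]))   # → 60.0
    print(skin_conductance_us(100_000.0))         # → 10.0
```

Reporting conductance rather than resistance is a common choice because conductance rises with sweat gland activity, so larger values read directly as stronger electrodermal responses.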

3. Materials and Methods

3.1. Eligibility Criteria

- papers outside the QoE/UX context;

- papers recognizing only emotions of the traditional circumplex model of affect [33];

- papers involving only signal data outside the research scope (fNIRS, fMRI, pupillometry, facial expressions, etc.);

- papers whose experiments involved only participants diagnosed with a disorder (e.g., autism spectrum disorder).

3.2. Search Strategy

- cognitive states AND data AND machine learning AND user experience;

- cognitive states AND data AND user experience;

- cognitive states AND user experience;

- cognitive states AND data AND machine learning.
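The four search strings above combine keyword groups whose members are listed in the keyword table (Groups/Keywords). The review does not spell out how each group was expanded into a database query, so the Python sketch below assumes the usual Boolean convention: keywords within a group are joined with OR, groups are joined with AND, and every keyword is quoted as a phrase. The names `KEYWORD_GROUPS`, `COMBINATIONS`, and `build_query` are illustrative, and the exact syntax accepted by each digital library will differ.

```python
# Keyword groups as listed in the review's keyword table.
KEYWORD_GROUPS = {
    "cognitive states": ["cognitive states", "cognitive state"],
    "data": ["physiological", "EEG", "GSR", "ECG", "eye tracking", "sensor", "multimodal"],
    "machine learning": ["machine learning", "deep learning"],
    "user experience": ["user experience", "UX", "QoE"],
}

# The four group combinations used as search strings.
COMBINATIONS = [
    ["cognitive states", "data", "machine learning", "user experience"],
    ["cognitive states", "data", "user experience"],
    ["cognitive states", "user experience"],
    ["cognitive states", "data", "machine learning"],
]


def group_clause(group: str) -> str:
    """OR together the quoted keywords of one group (assumed expansion)."""
    terms = [f'"{term}"' for term in KEYWORD_GROUPS[group]]
    return "(" + " OR ".join(terms) + ")"


def build_query(groups: list[str]) -> str:
    """AND together the clauses of the selected groups."""
    return " AND ".join(group_clause(g) for g in groups)


if __name__ == "__main__":
    for combo in COMBINATIONS:
        print(build_query(combo))
```

For example, the third combination expands to `("cognitive states" OR "cognitive state") AND ("user experience" OR "UX" OR "QoE")`.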

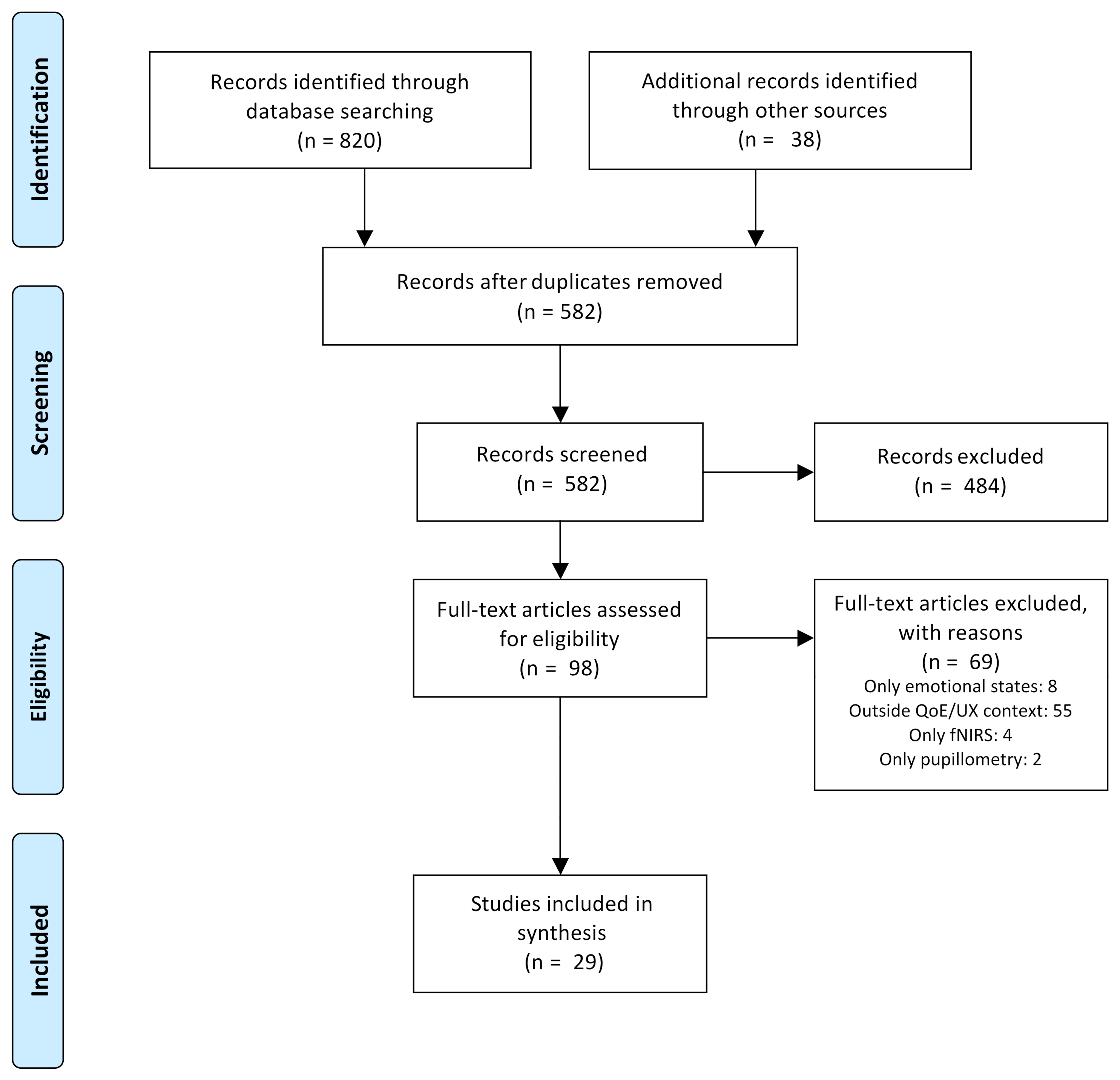

3.3. Study Selection

3.4. Data Extraction

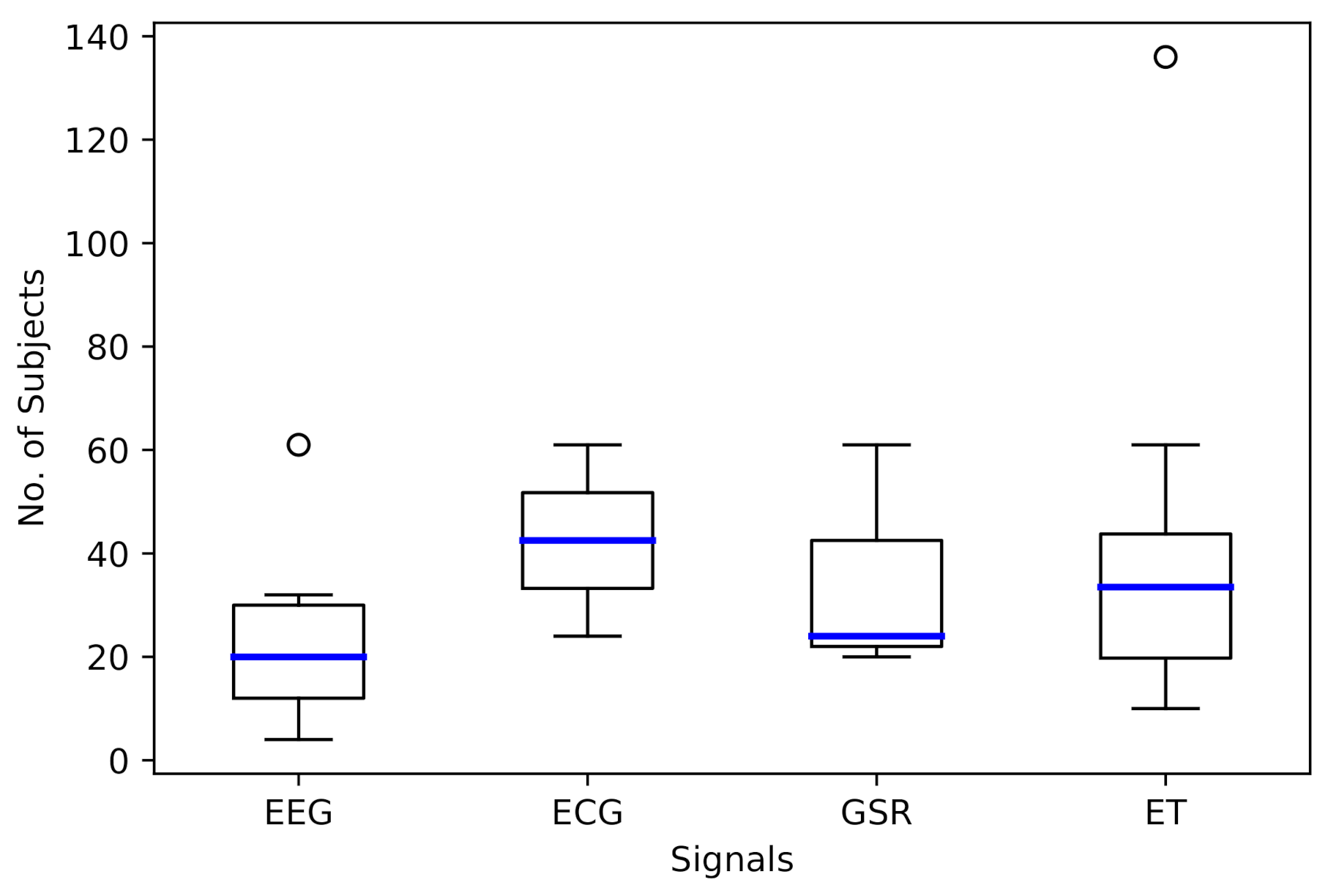

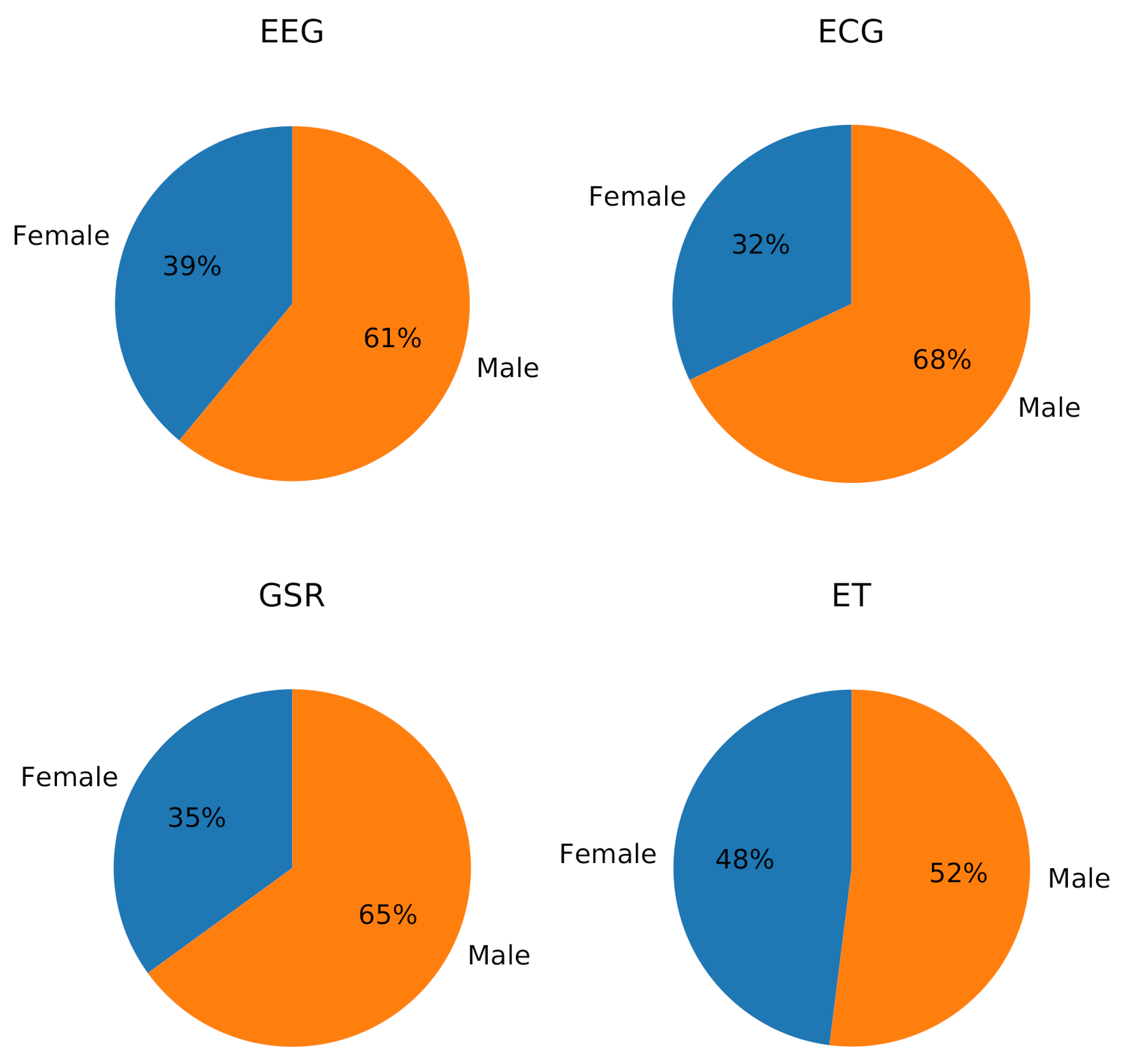

4. Results

4.1. Classification of Cognitive States

4.1.1. Classification Models

4.1.2. Stimulus

4.2. QoE/UX Evaluation Architectures

4.3. Correlations with Cognitive States and QoE/UX Metrics

| Ref. | Year | Objective | No. of Subjects (Female/Male) | Stimulus | Data |
|---|---|---|---|---|---|
| [46] | 2014 | Correlations between frontal alpha EEG asymmetry, experience, and task difficulty | 20 (10F/10M) | Mobile application tasks | Self-report; EEG |
| [48] | 2014 | Correlations between GSR and task performance metrics | 20 (10F/10M) | Mobile application tasks | Self-report; GSR, blood volume pulse, heart rate, EEG, and respiration |
| [50] | 2014 | Correlations between quality perception, brain activity, and ET metrics | 19 (11F/8M) | Videos | EEG and ET (with pupillometry) |
| [51] | 2015 | QoE evaluation | 32 (5F/27M) | Online game | Self-report; EEG |
| [52] | 2015 | EEG power analysis across cognitively different tasks | 30 (20F/10M) | Two-Picture cognitive task and video game | EEG, screen, and frontal videos |
| [53] | 2015 | Flow state analysis based on engagement and arousal indices | 30 (20F/10M) | Video game | EEG, screen, and frontal videos |
| [54] | 2016 | Sleepiness analysis | 12 (3F/9M) and 24 (8F/16M) | Videos | 1st study: self-report, EEG, electrooculogram (EOG); 2nd study: self-report, EEG, GSR, ECG, and electromyogram (EMG) |
| [55] | 2017 | Cognitive load, product sorting, and users’ goal analysis | 21 (10F/11M) | Online shopping tasks | EEG |
| [47] | 2017 | Correlations between ET metrics, acceptance, and perception | 10 (7F/3M) | Database creation assistant | Self-report; ET (with pupillometry), clicks, and screen video |
| [56] | 2018 | Visual attention and task performance analysis | 38 (not reported) | Online shopping tasks | ET |
| [57] | 2019 | Analysis of attitude towards a website considering visual attention, cognitive load, product type, and arithmetic complexity | 38 (17F/21M) | Online shopping tasks | Self-report; ET (with pupillometry) |
| [49] | 2019 | Usability evaluation | 30 (15F/15M) | Website tasks | Self-report; screen and frontal videos, mouse and keyboard usage logs, EEG |
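Most studies in the table above relate a physiological or eye-tracking measure to self-reported or task-performance metrics. The table does not state which coefficient each study used, so the following Python sketch simply shows two common choices: Pearson’s r for linear association, and Spearman’s ρ (Pearson’s r computed on ranks, with tied values given their average rank), which is often preferred for ordinal questionnaire scores. The function names are hypothetical, and significance testing is omitted.

```python
import math


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson product-moment correlation between two equal-length samples."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length, at least 2")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return sxy / math.sqrt(sxx * syy)


def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks; tied values share the mean of the ranks they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next sorted value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1
        for pos in range(start, end + 1):
            ranks[order[pos]] = shared
        start = end + 1
    return ranks


def spearman_rho(x: list[float], y: list[float]) -> float:
    """Spearman rank correlation: Pearson's r computed on average ranks."""
    return pearson_r(average_ranks(x), average_ranks(y))
```

For a monotonic but non-linear relation such as `x = [1, 2, 3, 4]` and `y = [1, 4, 9, 16]`, `spearman_rho` returns 1.0 while `pearson_r` is slightly below 1, which illustrates why the choice of coefficient should be reported alongside the result.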

4.4. Other Related Research

5. Discussion

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ADASYN | Adaptive Synthetic Sampling Approach for Imbalanced Learning |
| ECG | Electrocardiogram |
| EDA | Electrodermal Activity |
| EEG | Electroencephalogram |
| EMG | Electromyogram |
| EOG | Electrooculogram |
| ERP | Event-Related Potential |
| ET | Eye Tracking |
| GAN | Generative Adversarial Net |
| GSR | Galvanic Skin Response |
| kNN | k-Nearest Neighbors |
| LDA | Linear Discriminant Analysis |
| MLP | Multilayer Perceptron |
| PPG | Photoplethysmography |
| PRISMA | Preferred Reporting Items for Systematic Reviews and Meta-Analyses |
| QoE | Quality of Experience |
| QoS | Quality of Service |
| QUX | Quality of User Experience |
| RF | Random Forest |
| SAM | Self-Assessment Manikin |
| SMOTE | Synthetic Minority Over-sampling Technique |
| SUS | System Usability Scale |
| SVM | Support Vector Machine |
| TRL | Technology Readiness Level |
| UX | User Experience |

References

1. International Organization for Standardization. Ergonomics of Human-System Interaction—Part 210: Human-Centred Design for Interactive Systems; Standard No. 9241-210:2010; ISO: Geneva, Switzerland, 2010.

2. Raake, A.; Egger, S. Quality and Quality of Experience. In Quality of Experience: Advanced Concepts, Applications and Methods; Möller, S., Raake, A., Eds.; Springer: Cham, Switzerland, 2014; Chapter 2; pp. 11–33.

3. Wechsung, I.; De Moor, K. Quality of Experience Versus User Experience. In Quality of Experience: Advanced Concepts, Applications and Methods; Möller, S., Raake, A., Eds.; Springer: Cham, Switzerland, 2014; Chapter 3; pp. 35–54.

4. Brooke, J. SUS: A quick and dirty usability scale. In Usability Evaluation in Industry; Jordan, P.W., Thomas, B., McClelland, I.L., Weerdmeester, B., Eds.; CRC Press: London, UK, 1996; Chapter 21; pp. 189–194.

5. Bradley, M.M.; Lang, P.J. Measuring emotion: The self-assessment manikin and the semantic differential. J. Behav. Ther. Exp. Psychiatry 1994, 25, 49–59.

6. Hammer, F.; Egger-Lampl, S.; Möller, S. Quality-of-user-experience: A position paper. Qual. User Exp. 2018, 3, 9.

7. van de Laar, B.; Gürkök, H.; Bos, D.P.O.; Nijboer, F.; Nijholt, A. Brain-Computer Interfaces and User Experience Evaluation. In Towards Practical Brain-Computer Interfaces: Bridging the Gap from Research to Real-World Applications; Allison, B.Z., Dunne, S., Leeb, R., Millán, J.D.R., Nijholt, A., Eds.; Springer: Berlin/Heidelberg, Germany, 2013; pp. 223–237.

8. Law, E.L.; Van Schaik, P. Modelling user experience—An agenda for research and practice. Interact. Comput. 2010, 22, 313–322.

9. Law, E.L.C.; van Schaik, P.; Roto, V. Attitudes towards user experience (UX) measurement. Int. J. Hum.-Comput. Stud. 2014, 72, 526–541.

10. Reiter, U.; Brunnström, K.; De Moor, K.; Larabi, M.C.; Pereira, M.; Pinheiro, A.; You, J.; Zgank, A. Factors Influencing Quality of Experience. In Quality of Experience: Advanced Concepts, Applications and Methods; Möller, S., Raake, A., Eds.; Springer International Publishing: Cham, Switzerland, 2014; pp. 55–72.

11. Bonomi, M.; Battisti, F.; Boato, G.; Barreda-Ángeles, M.; Carli, M.; Le Callet, P. Contactless approach for heart rate estimation for QoE assessment. Signal Process. Image Commun. 2019, 78, 223–235.

12. Matthews, O.; Davies, A.; Vigo, M.; Harper, S. Unobtrusive arousal detection on the web using pupillary response. Int. J. Hum.-Comput. Stud. 2020, 136, 102361.

13. Mesfin, G.; Hussain, N.; Covaci, A.; Ghinea, G. Using Eye Tracking and Heart-Rate Activity to Examine Crossmodal Correspondences QoE in Mulsemedia. ACM Trans. Multimed. Comput. Commun. Appl. 2019, 15.

14. Lasa, G.; Justel, D.; Retegi, A. Eyeface: A new multimethod tool to evaluate the perception of conceptual user experiences. Comput. Hum. Behav. 2015, 52, 359–363.

15. Zheng, W.L.; Lu, B.L. A multimodal approach to estimating vigilance using EEG and forehead EOG. J. Neural Eng. 2017, 14.

16. Han, S.; Kim, J.; Lee, S. Recognition of Pilot’s Cognitive States based on Combination of Physiological Signals. In 2019 7th International Winter Conference on Brain-Computer Interface (BCI); IEEE: Gangwon, Korea, 2019; pp. 1–5.

17. Aricò, P.; Borghini, G.; Di Flumeri, G.; Colosimo, A.; Bonelli, S.; Golfetti, A.; Pozzi, S.; Imbert, J.P.; Granger, G.; Benhacene, R.; et al. Adaptive automation triggered by EEG-based mental workload index: A passive brain-computer interface application in realistic air traffic control environment. Front. Hum. Neurosci. 2016, 10.

18. Salzman, C.D.; Fusi, S. Emotion, cognition, and mental state representation in amygdala and prefrontal cortex. Annu. Rev. Neurosci. 2010, 33, 173–202.

19. Robinson, M.D.; Watkins, E.R.; Harmon-Jones, E. Cognition and Emotion: An Introduction. In Handbook of Cognition and Emotion; Robinson, M.D., Watkins, E.R., Harmon-Jones, E., Eds.; The Guilford Press: New York, NY, USA, 2013; Chapter 1; pp. 3–16.

20. Pessoa, L. On the relationship between emotion and cognition. Nat. Rev. Neurosci. 2008, 9, 148–158.

21. Vidulich, M.A.; Tsang, P.S. Mental workload and situation awareness. In Handbook of Human Factors and Ergonomics, 4th ed.; Salvendy, G., Ed.; John Wiley & Sons, Inc.: Hoboken, NJ, USA, 2012; Chapter 8; pp. 243–273.

22. Huang, M.X.; Li, J.; Ngai, G.; Leong, H.V. StressClick: Sensing Stress from Gaze-Click Patterns. In Proceedings of the 24th ACM International Conference on Multimedia; Association for Computing Machinery: New York, NY, USA, 2016; pp. 1395–1404.

23. Cernea, D.; Kerren, A. A survey of technologies on the rise for emotion-enhanced interaction. J. Vis. Lang. Comput. 2015, 31, 70–86.

24. Naït-Ali, A.; Karasinski, P. Biosignals: Acquisition and General Properties. In Advanced Biosignal Processing; Naït-Ali, A., Ed.; Springer: Berlin/Heidelberg, Germany, 2009; pp. 1–13.

25. Koelstra, S.; Mühl, C.; Soleymani, M.; Lee, J.S.; Yazdani, A.; Ebrahimi, T.; Pun, T.; Nijholt, A.; Patras, I. DEAP: A Database for Emotion Analysis Using Physiological Signals. IEEE Trans. Affect. Comput. 2012, 3, 18–31.

26. Bulling, A.; Ward, J.A.; Gellersen, H.; Tröster, G. Eye Movement Analysis for Activity Recognition Using Electrooculography. IEEE Trans. Pattern Anal. Mach. Intell. 2011, 33, 741–753.

27. Hansen, D.W.; Ji, Q. In the Eye of the Beholder: A Survey of Models for Eyes and Gaze. IEEE Trans. Pattern Anal. Mach. Intell. 2010, 32, 478–500.

28. Lim, Y.; Gardi, A.; Pongsakornsathien, N.; Sabatini, R.; Ezer, N.; Kistan, T. Experimental characterisation of eye-tracking sensors for adaptive human-machine systems. Measurement 2019, 140, 151–160.

29. Schall, A.J.; Romano Bergstrom, J. Introduction to Eye Tracking. In Eye Tracking in User Experience Design; Romano Bergstrom, J., Schall, A.J., Eds.; Morgan Kaufmann: Boston, MA, USA, 2014; Chapter 1; pp. 3–26.

30. Onorati, F.; Barbieri, R.; Mauri, M.; Russo, V.; Mainardi, L. Characterization of affective states by pupillary dynamics and autonomic correlates. Front. Neuroeng. 2013, 6, 9.

31. Kitchenham, B.; Charters, S. Guidelines for Performing Systematic Literature Reviews in Software Engineering; EBSE Technical Report No. EBSE-2007-01; Keele University and University of Durham Joint Report; BibSonomy: Kassel, Germany, 2007.

32. Moher, D.; Liberati, A.; Tetzlaff, J.; Altman, D.G.; The PRISMA Group. Preferred Reporting Items for Systematic Reviews and Meta-Analyses: The PRISMA Statement. PLoS Med. 2009, 6, e1000097.

33. Posner, J.; Russell, J.A.; Peterson, B.S. The circumplex model of affect: An integrative approach to affective neuroscience, cognitive development, and psychopathology. Dev. Psychopathol. 2005, 17, 715–734.

34. Jimenez-Molina, A.; Retamal, C.; Lira, H. Using Psychophysiological Sensors to Assess Mental Workload During Web Browsing. Sensors 2018, 18, 458.

35. Mathur, A.; Lane, N.D.; Kawsar, F. Engagement-Aware Computing: Modelling User Engagement from Mobile Contexts. In Proceedings of the 2016 ACM International Joint Conference on Pervasive and Ubiquitous Computing; Association for Computing Machinery: New York, NY, USA, 2016; pp. 622–633.

36. Frey, J.; Daniel, M.; Castet, J.; Hachet, M.; Lotte, F. Framework for Electroencephalography-Based Evaluation of User Experience. In Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems; Association for Computing Machinery: New York, NY, USA, 2016; pp. 2283–2294.

37. Salminen, J.; Nagpal, M.; Kwak, H.; An, J.; Jung, S.G.; Jansen, B.J. Confusion Prediction from Eye-Tracking Data: Experiments with Machine Learning. In Proceedings of the 9th International Conference on Information Systems and Technologies (ICIST 2019); ACM Press: New York, NY, USA, 2019; pp. 1–9.

38. Chawla, N.V.; Bowyer, K.W.; Hall, L.O.; Kegelmeyer, W.P. SMOTE: Synthetic minority over-sampling technique. J. Artif. Intell. Res. 2002, 16, 321–357.

39. Lallé, S.; Conati, C.; Carenini, G. Predicting Confusion in Information Visualization from Eye Tracking and Interaction Data. In Proceedings of the Twenty-Fifth International Joint Conference on Artificial Intelligence (IJCAI ’2016); AAAI Press: New York, NY, USA, 2016; pp. 2529–2535.

40. Libert, A.; Van Hulle, M.M. Predicting Premature Video Skipping and Viewer Interest from EEG Recordings. Entropy 2019, 21, 1014.

41. Hussain, J.; Khan, W.A.; Hur, T.; Bilal, H.S.M.; Bang, J.; Ul Hassan, A.; Afzal, M.; Lee, S. A multimodal deep log-based user experience (UX) platform for UX evaluation. Sensors 2018, 18, 1622.

42. Courtemanche, F.; Léger, P.M.; Dufresne, A.; Fredette, M.; Labonté-Lemoyne, É.; Sénécal, S. Physiological heatmaps: A tool for visualizing users’ emotional reactions. Multimed. Tools Appl. 2018, 77, 11547–11574.

43. Georges, V.; Courtemanche, F.; Sénécal, S.; Baccino, T.; Fredette, M.; Léger, P.M. UX heatmaps: Mapping user experience on visual interfaces. In Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems (CHI ’16); Association for Computing Machinery: New York, NY, USA, 2016; pp. 4850–4860.

44. Georges, V.; Courtemanche, F.; Sénécal, S.; Léger, P.M.; Nacke, L.; Pourchon, R. The adoption of physiological measures as an evaluation tool in UX. In HCI in Business, Government and Organizations. Interacting with Information Systems; Nah, F.F.H., Tan, C.H., Eds.; Springer International Publishing: Cham, Switzerland, 2017; pp. 90–98.

45. Léger, P.M.; Courtemanche, F.; Fredette, M.; Sénécal, S. A Cloud-Based Lab Management and Analytics Software for Triangulated Human-Centered Research. In Information Systems and Neuroscience; Davis, F.D., Riedl, R., vom Brocke, J., Léger, P.M., Randolph, A.B., Eds.; Springer International Publishing: Cham, Switzerland, 2019; pp. 93–99.

46. Chai, J.; Ge, Y.; Liu, Y.; Li, W.; Zhou, L.; Yao, L.; Sun, X. Application of frontal EEG asymmetry to user experience research. In Engineering Psychology and Cognitive Ergonomics (EPCE 2014); Lecture Notes in Computer Science; Harris, D., Ed.; Springer International Publishing: Cham, Switzerland, 2014; Volume 8532, pp. 234–243.

47. Tzafilkou, K.; Protogeros, N. Diagnosing user perception and acceptance using eye tracking in web-based end-user development. Comput. Hum. Behav. 2017, 72, 23–37.

48. Yao, L.; Liu, Y.; Li, W.; Zhou, L.; Ge, Y.; Chai, J.; Sun, X. Using physiological measures to evaluate user experience of mobile applications. In Engineering Psychology and Cognitive Ergonomics (EPCE 2014); Lecture Notes in Computer Science; Harris, D., Ed.; Springer International Publishing: Cham, Switzerland, 2014; Volume 8532, pp. 301–310.

49. Federici, S.; Mele, M.L.; Bracalenti, M.; Buttafuoco, A.; Lanzilotti, R.; Desolda, G. Bio-behavioral and Self-Report User Experience Evaluation of a Usability Assessment Platform (UTAssistant). In Proceedings of the 14th International Joint Conference on Computer Vision, Imaging and Computer Graphics Theory and Applications (VISIGRAPP 2019), Prague, Czech Republic, 25–27 February 2019.

50. Arndt, S.; Radun, J.; Antons, J.N.; Möller, S. Using eye-tracking and correlates of brain activity to predict quality scores. In Proceedings of the 2014 Sixth International Workshop on Quality of Multimedia Experience (QoMEX), Singapore, 18–20 September 2014; pp. 281–285.

51. Beyer, J.; Varbelow, R.; Antons, J.N.; Möller, S. Using electroencephalography and subjective self-assessment to measure the influence of quality variations in cloud gaming. In Proceedings of the 2015 Seventh International Workshop on Quality of Multimedia Experience (QoMEX), Costa Navarino, Greece, 26–29 May 2015; pp. 1–6.

52. McMahan, T.; Parberry, I.; Parsons, T.D. Modality specific assessment of video game player’s experience using the Emotiv. Entertain. Comput. 2015, 7, 1–6.

53. McMahan, T.; Parberry, I.; Parsons, T.D. Evaluating Player Task Engagement and Arousal Using Electroencephalography. Procedia Manuf. 2015, 3, 2303–2310.

54. Arndt, S.; Antons, J.N.; Schleicher, R.; Möller, S. Using electroencephalography to analyze sleepiness due to low-quality audiovisual stimuli. Signal Process. Image Commun. 2016, 42, 120–129.

55. Mirhoseini, S.; Leger, P.M.; Senecal, S. Investigating the Effect of Product Sorting and Users’ Goal on Cognitive load. In SIGHCI 2017 Proceedings; SIGHCI: Singapore, 2017; p. 3.

56. Juanéda, C.; Sénécal, S.; Léger, P.M. Product Web Page Design: A Psychophysiological Investigation of the Influence of Product Similarity, Visual Proximity on Attention and Performance. In Proceedings of the HCIBGO 2018: HCI in Business, Government, and Organizations; Nah, F.F.H., Xiao, B.S., Eds.; Springer International Publishing: Cham, Switzerland, 2018; pp. 327–337.

57. Desrochers, C.; Léger, P.M.; Fredette, M.; Mirhoseini, S.; Sénécal, S. The arithmetic complexity of online grocery shopping: The moderating role of product pictures. Ind. Manag. Data Syst. 2019, 119, 1206–1222.

58. Engelke, U.; Darcy, D.P.; Mulliken, G.H.; Bosse, S.; Martini, M.G.; Arndt, S.; Antons, J.N.; Chan, K.Y.; Ramzan, N.; Brunnström, K. Psychophysiology-Based QoE Assessment: A Survey. IEEE J. Sel. Top. Signal Process. 2016, 11, 6–21.

59. Asan, O.; Yang, Y. Using Eye Trackers for Usability Evaluation of Health Information Technology: A Systematic Literature Review. JMIR Hum. Factors 2015, 2, e5.

60. Arndt, S.; Brunnström, K.; Cheng, E.; Engelke, U.; Möller, S.; Antons, J.N. Review on using physiology in quality of experience. Electron. Imaging 2016, 2016, 1–9.

61. Salgado, D.P.; Martins, F.R.; Rodrigues, T.B.; Keighrey, C.; Flynn, R.; Naves, E.L.M.; Murray, N. A QoE Assessment Method Based on EDA, Heart Rate and EEG of a Virtual Reality Assistive Technology System. In Proceedings of the 9th ACM Multimedia Systems Conference; Association for Computing Machinery: New York, NY, USA, 2018; pp. 517–520.

62. Baig, M.Z.; Kavakli, M. A Survey on Psycho-Physiological Analysis & Measurement Methods in Multimodal Systems. Multimodal Technol. Interact. 2019, 3, 37.

63. Du, L.H.; Liu, W.; Zheng, W.L.; Lu, B.L. Detecting driving fatigue with multimodal deep learning. In Proceedings of the International IEEE/EMBS Conference on Neural Engineering, NER, Shanghai, China, 25–28 May 2017; pp. 74–77.

64. Li, F.; Zhang, G.; Wang, W.; Xu, R.; Schnell, T.; Wen, J.; McKenzie, F.; Li, J. Deep Models for Engagement Assessment with Scarce Label Information. IEEE Trans. Hum. Mach. Syst. 2017, 47, 598–605.

65. Qayyum, A.; Faye, I.; Malik, A.S.; Mazher, M. Assessment of Cognitive Load using Multimedia Learning and Resting States with Deep Learning Perspective. In Proceedings of the 2018 IEEE-EMBS Conference on Biomedical Engineering and Sciences (IECBES), Sarawak, Malaysia, 3–6 December 2018; pp. 600–605.

66. Siddharth, S.; Jung, T.P.; Sejnowski, T.J. Utilizing Deep Learning Towards Multi-modal Bio-sensing and Vision-based Affective Computing. IEEE Trans. Affect. Comput. 2019.

67. LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

68. He, H.; Bai, Y.; Garcia, E.A.; Li, S. ADASYN: Adaptive synthetic sampling approach for imbalanced learning. In Proceedings of the 2008 IEEE International Joint Conference on Neural Networks (IEEE World Congress on Computational Intelligence), Hong Kong, China, 1–8 June 2008; pp. 1322–1328.

69. Goodfellow, I.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Courville, A.; Bengio, Y. Generative adversarial nets. In Proceedings of the 27th International Conference on Neural Information Processing Systems—Volume 2 (NIPS’14); MIT Press: Cambridge, MA, USA, 2014; pp. 2672–2680.

70. Nikolaidis, K.; Kristiansen, S.; Goebel, V.; Plagemann, T.; Liestøl, K.; Kankanhalli, M. Augmenting Physiological Time Series Data: A Case Study for Sleep Apnea Detection. In Machine Learning and Knowledge Discovery in Databases; Brefeld, U., Fromont, E., Hotho, A., Knobbe, A., Maathuis, M., Robardet, C., Eds.; Springer International Publishing: Cham, Switzerland, 2020; pp. 376–399.

71. Fahimi, F.; Zhang, Z.; Goh, W.B.; Ang, K.K.; Guan, C. Towards EEG Generation Using GANs for BCI Applications. In Proceedings of the 2019 IEEE EMBS International Conference on Biomedical & Health Informatics (BHI), Chicago, IL, USA, 19–22 May 2019; pp. 1–4.

72. Zeagler, C. Where to Wear It: Functional, Technical, and Social Considerations in on-Body Location for Wearable Technology 20 Years of Designing for Wearability. In Proceedings of the 2017 ACM International Symposium on Wearable Computers; Association for Computing Machinery: New York, NY, USA, 2017; pp. 150–157.

73. Erins, M.; Minejeva, O.; Kivlenieks, R.; Lauznis, J. Feasibility study of Physiological Parameter Registration Sensors for Non-Intrusive Human Fatigue Detection System. In Proceedings of the 18th International Scientific Conference Engineering for Rural Development, Jelgava, Latvia, 22–24 May 2019; pp. 827–832.

74. Charlton, S.G. Measurement of Cognitive States in Test and Evaluation. In Handbook of Human Factors Testing and Evaluation, 2nd ed.; Charlton, S.G., O’Brien, T.G., Eds.; Lawrence Erlbaum Associates, Inc.: Mahwah, NJ, USA, 2002; Chapter 6; pp. 97–126.

75. Lohani, M.; Payne, B.R.; Strayer, D.L. A Review of Psychophysiological Measures to Assess Cognitive States in Real-World Driving. Front. Hum. Neurosci. 2019, 13, 57.

76. Momin, A.; Bhattacharya, S.; Sanyal, S.; Chakraborty, P. Visual Attention, Mental Stress and Gender: A Study Using Physiological Signals. IEEE Access 2020, 8, 165973–165988.

77. Mahesh, B.; Prassler, E.; Hassan, T.; Garbas, J.U. Requirements for a Reference Dataset for Multimodal Human Stress Detection. In Proceedings of the 2019 IEEE International Conference on Pervasive Computing and Communications Workshops, PerCom Workshops 2019, Kyoto, Japan, 11–15 March 2019; pp. 492–498.

| Groups | Keywords |
|---|---|
| Cognitive states | cognitive states, cognitive state |
| Data | physiological, EEG, GSR, ECG, eye tracking, sensor, multimodal |
| Machine learning | machine learning, deep learning |
| User experience | user experience, UX, QoE |

| Ref. | Year | Cognitive States | Best-Performing Models | No. of Subjects (Female/Male) | Stimulus | Data |
|---|---|---|---|---|---|---|
| [39] | 2016 | Confusion | RF, sensitivity 0.61, specificity 0.926 | 136 (75F/61M) | Data visualization software | Self-report, ET (with pupillometry), clicks |
| [36] | 2016 | Mental workload, attention | LDA, accuracy 92% (mental workload) and 86% (attention) | 12 (3F/9M) | Virtual maze game | Self-report, EEG, keyboard, and touch behavior |
| [22] | 2016 | Mental stress | RF, click-level user-dependent F1-score 0.66; logistic classifier, session-level user-independent F1-score 0.79 | 20 (7F/13M) | Arithmetic questions software | ET (from video), clicks |
| [35] | 2016 | Engagement | SVM, F1-score 0.82 | 10 (3F/7M), 10 (3F/7M), and 130 (34F/96M) | Cell phone usage | 1st and 2nd studies: EEG and usage logs; 3rd study: usage logs, context, and demographic data |
| [34] | 2018 | Mental workload | MLP, accuracy 93.7% | 61 (19F/42M) | Website browsing | EDA, photoplethysmography (PPG), temperature, ECG, EEG, ET (with pupillometry) |
| [37] | 2019 | Confusion | RF, accuracy 72.6–99.1% | 29 (14F/15M) | Personal data sheets | ET, age, gender |
| [40] | 2019 | Engagement (as a basis for interest detection) | kNN (k-Nearest Neighbors), average accuracy 80.3% | 4 (2F/2M) | Videos | Self-report, EEG |
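The classification table mixes sensitivity/specificity, F1-score, and accuracy, which are not directly comparable, particularly when classes are imbalanced (the problem targeted by oversampling techniques such as SMOTE and ADASYN, listed among the abbreviations). The Python sketch below derives each metric from the counts of a binary confusion matrix; the class name `ConfusionCounts` and the counts in the usage example are hypothetical, chosen only to show that accuracy can remain high while sensitivity is modest.

```python
from dataclasses import dataclass


def _ratio(num: int, den: int) -> float:
    """Safe division: return NaN when the denominator is zero."""
    return num / den if den else float("nan")


@dataclass(frozen=True)
class ConfusionCounts:
    tp: int  # positive instances predicted positive
    fp: int  # negative instances predicted positive
    tn: int  # negative instances predicted negative
    fn: int  # positive instances predicted negative

    def sensitivity(self) -> float:  # recall, true positive rate
        return _ratio(self.tp, self.tp + self.fn)

    def specificity(self) -> float:  # true negative rate
        return _ratio(self.tn, self.tn + self.fp)

    def precision(self) -> float:
        return _ratio(self.tp, self.tp + self.fp)

    def accuracy(self) -> float:
        return _ratio(self.tp + self.tn, self.tp + self.fp + self.tn + self.fn)

    def f1(self) -> float:
        # Harmonic mean of precision and recall, written as 2TP / (2TP + FP + FN).
        return _ratio(2 * self.tp, 2 * self.tp + self.fp + self.fn)


if __name__ == "__main__":
    c = ConfusionCounts(tp=8, fp=2, tn=85, fn=5)  # hypothetical, imbalanced counts
    print(f"accuracy={c.accuracy():.2f} sensitivity={c.sensitivity():.2f} "
          f"specificity={c.specificity():.2f} F1={c.f1():.2f}")
    # → accuracy=0.93 sensitivity=0.62 specificity=0.98 F1=0.70
```

In this example only 13 of 100 instances are positive, so a classifier that misses 5 of them still reaches 93% accuracy; reporting sensitivity and specificity (or F1) alongside accuracy makes such cases visible.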

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Bañuelos-Lozoya, E.; González-Serna, G.; González-Franco, N.; Fragoso-Diaz, O.; Castro-Sánchez, N. A Systematic Review for Cognitive State-Based QoE/UX Evaluation. Sensors 2021, 21, 3439. https://doi.org/10.3390/s21103439

Bañuelos-Lozoya E, González-Serna G, González-Franco N, Fragoso-Diaz O, Castro-Sánchez N. A Systematic Review for Cognitive State-Based QoE/UX Evaluation. Sensors. 2021; 21(10):3439. https://doi.org/10.3390/s21103439

Chicago/Turabian Style: Bañuelos-Lozoya, Edgar, Gabriel González-Serna, Nimrod González-Franco, Olivia Fragoso-Diaz, and Noé Castro-Sánchez. 2021. "A Systematic Review for Cognitive State-Based QoE/UX Evaluation" Sensors 21, no. 10: 3439. https://doi.org/10.3390/s21103439

APA Style: Bañuelos-Lozoya, E., González-Serna, G., González-Franco, N., Fragoso-Diaz, O., & Castro-Sánchez, N. (2021). A Systematic Review for Cognitive State-Based QoE/UX Evaluation. Sensors, 21(10), 3439. https://doi.org/10.3390/s21103439