Moment-to-Moment Continuous Attention Fluctuation Monitoring through Consumer-Grade EEG Device

Abstract

1. Introduction

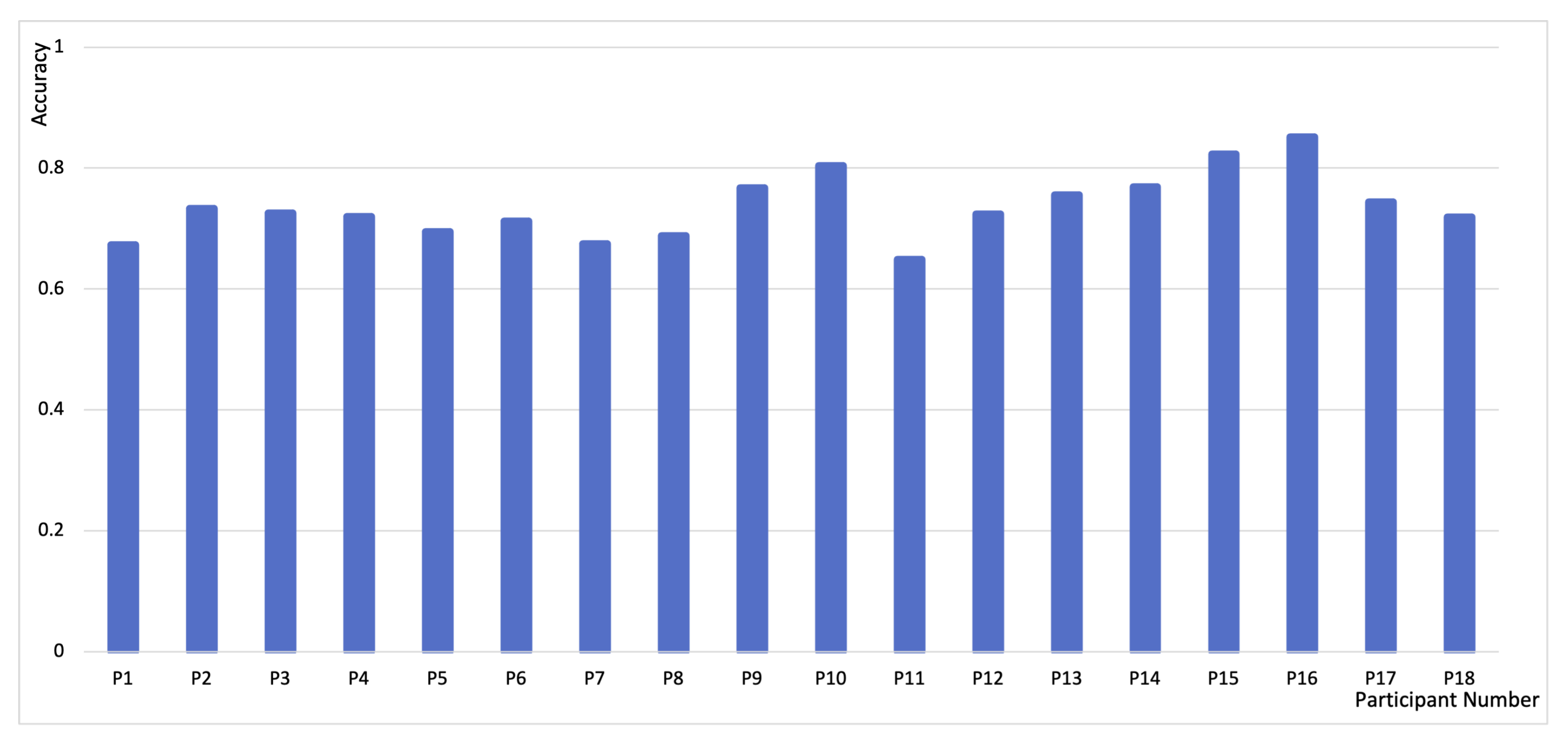

- We applied gradCPT, a well-established method in psychological research, to collect continuous attention fluctuation labels. Based on gradCPT, we developed a new technique for measuring continuous attention with consumer-grade EEG devices and achieved 73.49% accuracy in detecting moment-to-moment attention fluctuation at the sub-second scale.

- We empirically validated our technique in a video learning scenario; the results suggest that predicting learners’ continuous attention fluctuation while they watch lecture videos is feasible.

- We discussed both research and application implications of measuring continuous attention fluctuation using EEG for future studies.

2. Related Work

2.1. Attention State Classification

2.2. EEG-Based Attention Research

3. Methods

3.1. Experiment

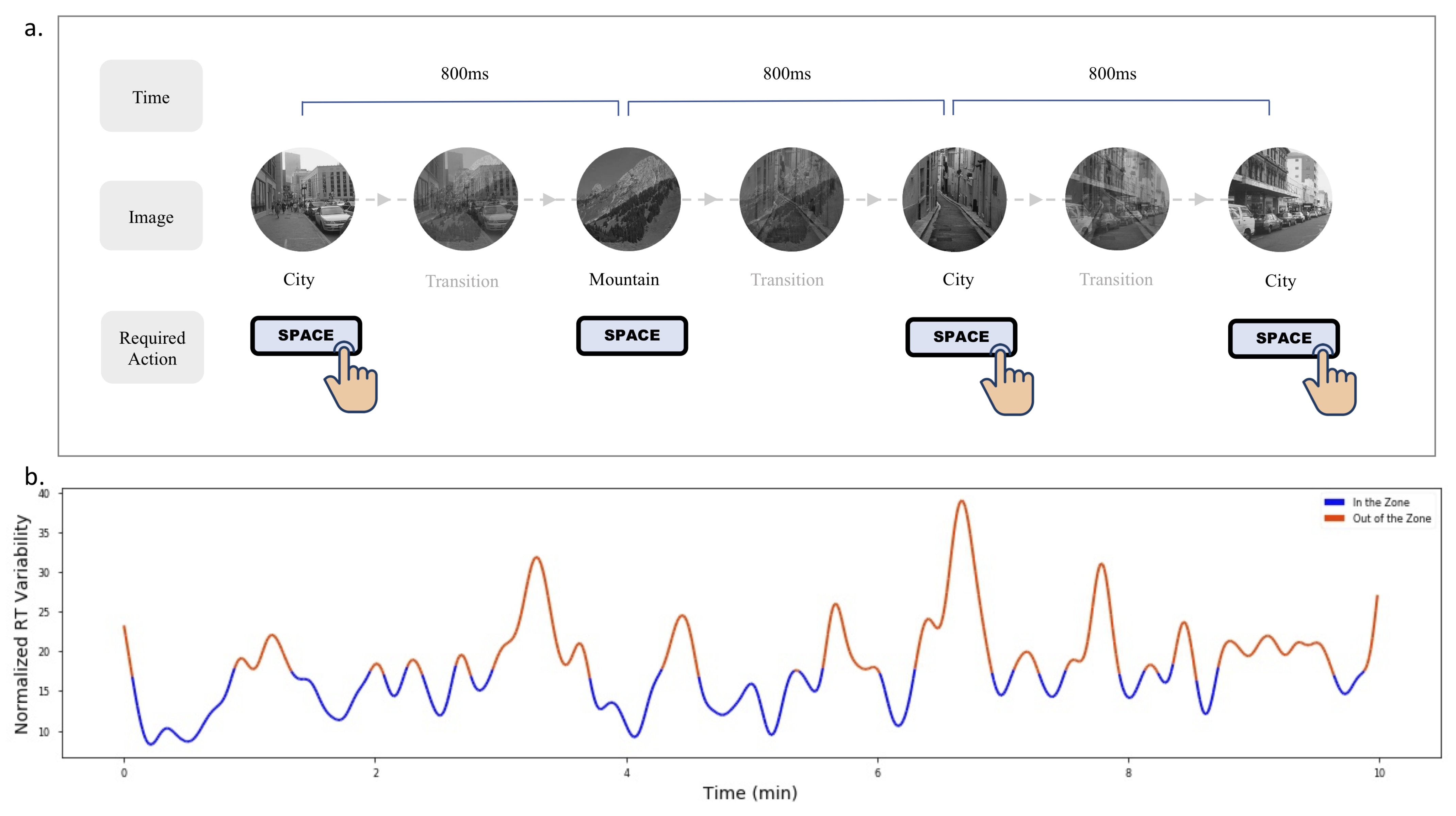

3.1.1. Attention Labeling by the gradCPT

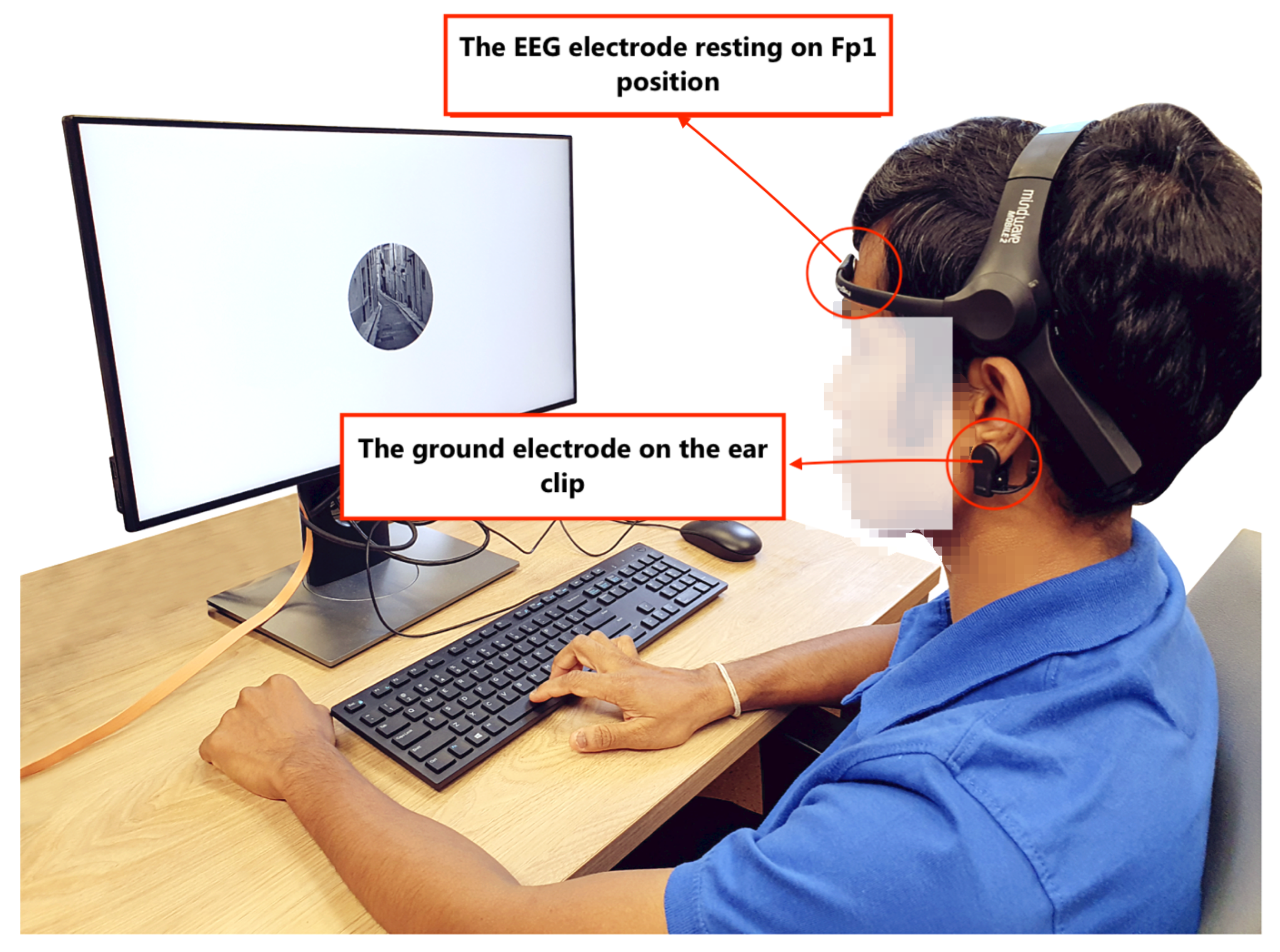

3.1.2. Setup

3.1.3. Participants and Procedure

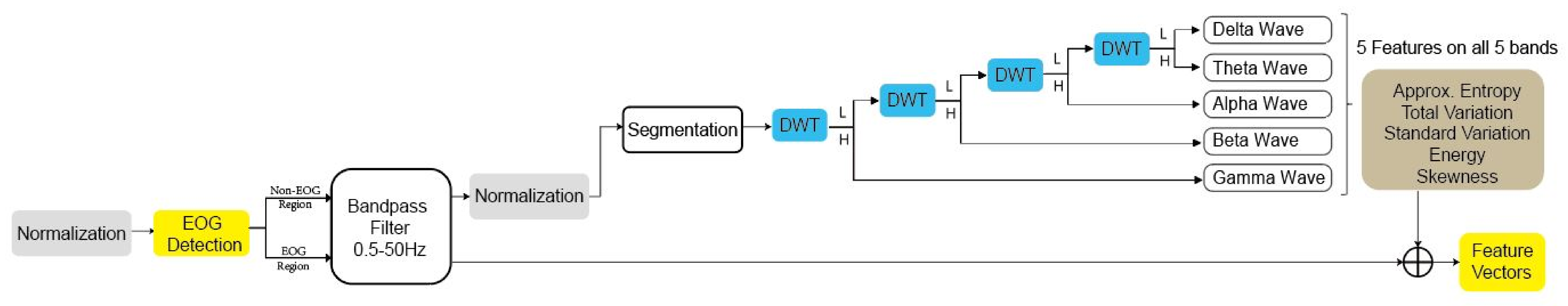

3.2. Preprocessing

3.2.1. Normalization

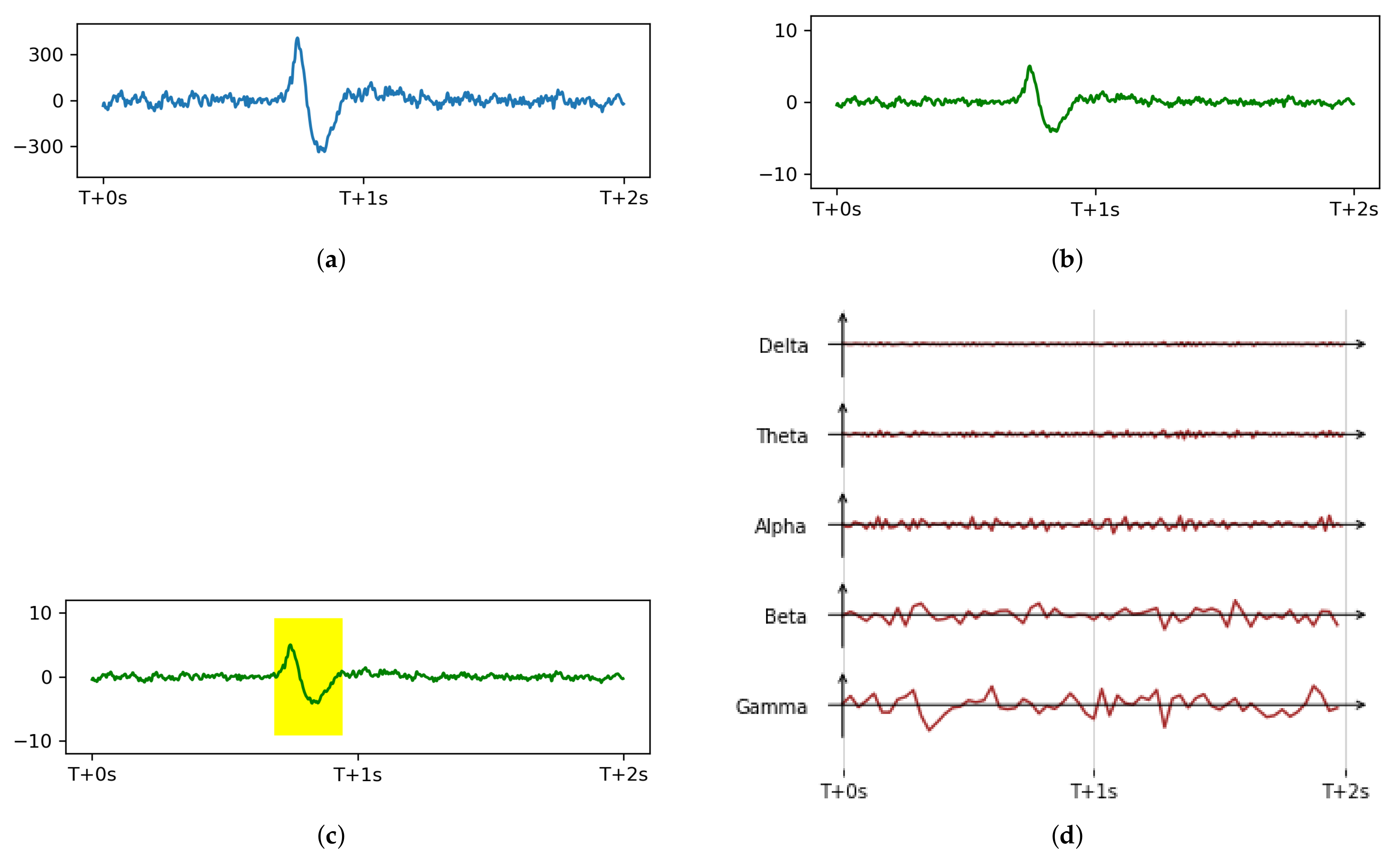

3.2.2. Artifacts Removal

3.2.3. Bandpass Filtering

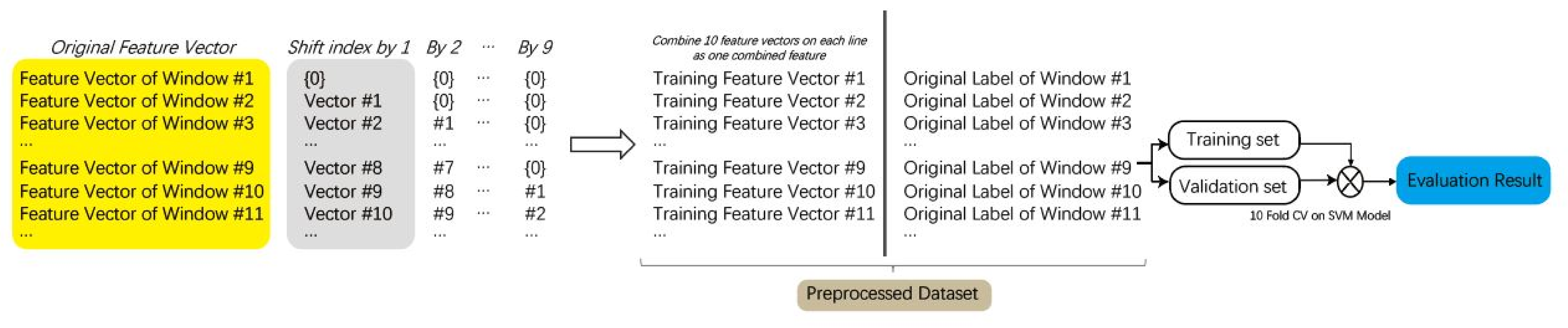

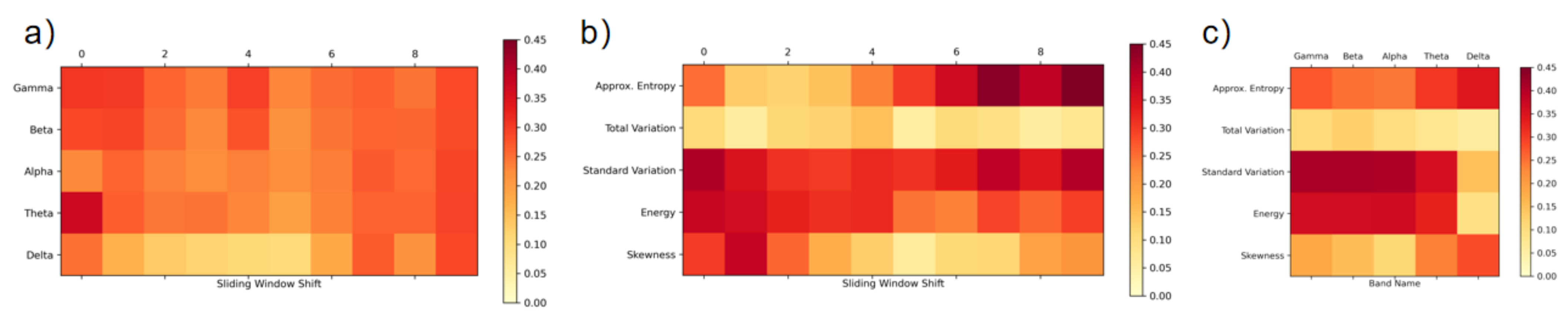

3.3. Feature Extraction

3.4. Classifier

4. Classification Result and Discussion

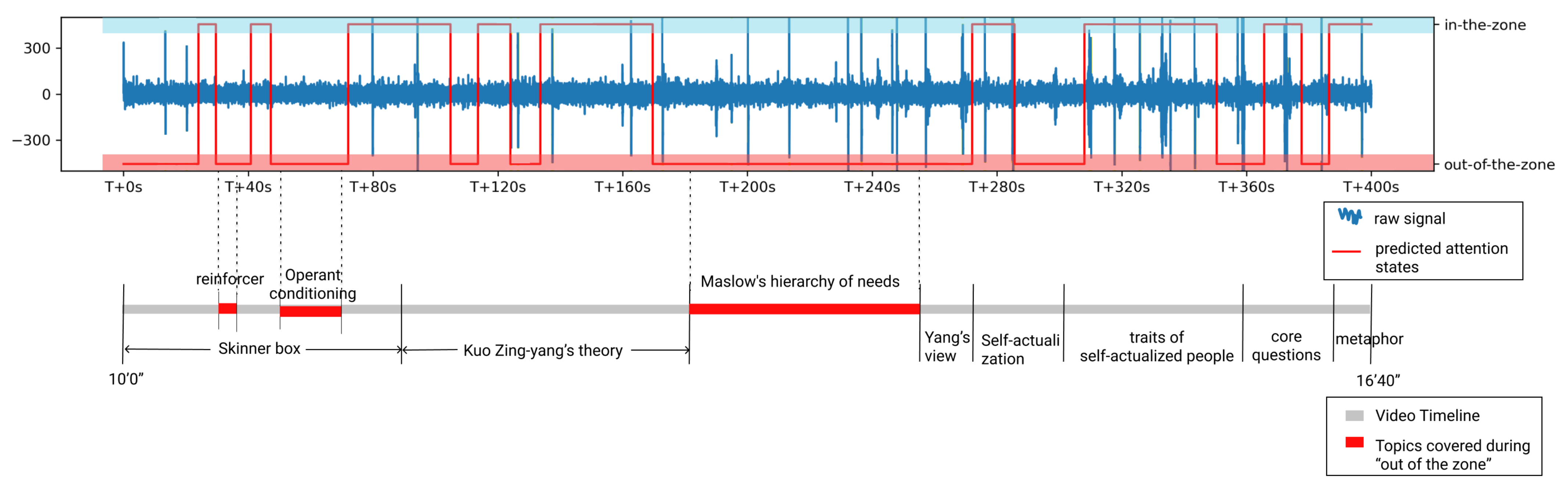

5. Validation Study—Detecting Attention Fluctuation in Video Learning

- How does our model’s prediction compare to the thought probe in measuring the learner’s attention state?

- What can continuous attention monitoring reveal about the learner’s attention state? What are its implications for future designs?

5.1. Thought Probe and Video Material Design

5.2. Participants and Procedure

6. Results and Discussion

6.1. Prediction vs. Thought Probe Result

6.2. Discussion

6.2.1. Comparison with Previous Studies

6.2.2. Implication for Attention-Aware System in Video Learning

7. Overall Discussion

8. Challenges, Limitations and Future Work

9. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| CNN | Convolutional neural network |
| CPT | Continuous performance test |
| CV | Cross-validation |
| DWT | Discrete wavelet transform |
| EDA | Electrodermal activity |
| EEG | Electroencephalography |
| EMG | Electromyogram |
| EOG | Electrooculogram |
| ER | Error rate |
| fMRI | Functional magnetic resonance imaging |
| gradCPT | Gradual onset CPT |
| iEEG | Intracranial electroencephalography |
| kNN | k-nearest neighbor |
| LSTM | Long short-term memory |
| PPG | Photoplethysmogram |
| RT | Response time |
| RTV | Response time variability |
| SART | Sustained-attention-to-response task |
| SVM | Support vector machine |
| VTC | Variance time course |

References

- Esterman, M.; Rothlein, D. Models of sustained attention. Curr. Opin. Psychol. 2019, 29, 174–180. [Google Scholar] [CrossRef]

- Fortenbaugh, F.C.; DeGutis, J.; Esterman, M. Recent theoretical, neural, and clinical advances in sustained attention research. Ann. N. Y. Acad. Sci. 2017, 1396, 70. [Google Scholar] [CrossRef]

- Horvitz, E.; Kadie, C.; Paek, T.; Hovel, D. Models of attention in computing and communication: From principles to applications. Commun. ACM 2003, 46, 52–59. [Google Scholar] [CrossRef]

- Roda, C.; Thomas, J. Attention aware systems: Theories, applications, and research agenda. Comput. Hum. Behav. 2006, 22, 557–587. [Google Scholar] [CrossRef]

- Anderson, C.; Hübener, I.; Seipp, A.K.; Ohly, S.; David, K.; Pejovic, V. A survey of attention management systems in ubiquitous computing environments. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2018, 2, 1–27. [Google Scholar] [CrossRef]

- Zhao, Y.; Lofi, C.; Hauff, C. Scalable mind-wandering detection for MOOCs: A webcam-based approach. In Proceedings of the European Conference on Technology Enhanced Learning, Tallinn, Estonia, 12–15 September 2017; Springer: New York, NY, USA, 2017; pp. 330–344. [Google Scholar]

- Hutt, S.; Mills, C.; White, S.; Donnelly, P.J.; D’Mello, S.K. The Eyes Have It: Gaze-Based Detection of Mind Wandering during Learning with an Intelligent Tutoring System. In Proceedings of the International Conference on Educational Data Mining (EDM), Raleigh, NC, USA, 29 June–2 July 2016. [Google Scholar]

- Hutt, S.; Krasich, K.; Mills, C.; Bosch, N.; White, S.; Brockmole, J.R.; D’Mello, S.K. Automated gaze-based mind wandering detection during computerized learning in classrooms. User Model. User Adapt. Interact. 2019, 29, 821–867. [Google Scholar] [CrossRef]

- Bixler, R.; D’Mello, S. Automatic gaze-based user-independent detection of mind wandering during computerized reading. User Model. User Adapt. Interact. 2016, 26, 33–68. [Google Scholar] [CrossRef]

- Visuri, A.; van Berkel, N. Attention computing: Overview of mobile sensing applied to measuring attention. In Proceedings of the 2019 ACM International Joint Conference on Pervasive and Ubiquitous Computing and 2019 ACM International Symposium on Wearable Computers, London, UK, 11–13 September 2019; pp. 1079–1082. [Google Scholar]

- Xiao, X.; Wang, J. Undertanding and detecting divided attention in mobile mooc learning. In Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems, Denver, CO, USA, 6–11 May 2017; pp. 2411–2415. [Google Scholar]

- Abdelrahman, Y.; Khan, A.A.; Newn, J.; Velloso, E.; Safwat, S.A.; Bailey, J.; Bulling, A.; Vetere, F.; Schmidt, A. Classifying Attention Types with Thermal Imaging and Eye Tracking. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2019, 3, 1–27. [Google Scholar] [CrossRef]

- Mackworth, N.H. The breakdown of vigilance during prolonged visual search. Q. J. Exp. Psychol. 1948, 1, 6–21. [Google Scholar] [CrossRef]

- Rosvold, H.E.; Mirsky, A.F.; Sarason, I.; Bransome, E.D., Jr.; Beck, L.H. A continuous performance test of brain damage. J. Consult. Psychol. 1956, 20, 343. [Google Scholar] [CrossRef]

- Robertson, I.H.; Manly, T.; Andrade, J.; Baddeley, B.T.; Yiend, J. ‘Oops!’: Performance correlates of everyday attentional failures in traumatic brain injured and normal subjects. Neuropsychologia 1997, 35, 747–758. [Google Scholar] [CrossRef]

- Conners, C. Continuous Performance Test II Technical Guide and Software Manual; Multi-Health Systems: Toronto, ON, Canada, 2000. [Google Scholar]

- Bastian, M.; Sackur, J. Mind wandering at the fingertips: Automatic parsing of subjective states based on response time variability. Front. Psychol. 2013, 4, 573. [Google Scholar] [CrossRef] [PubMed]

- Helton, W.S.; Kern, R.P.; Walker, D.R. Conscious thought and the sustained attention to response task. Conscious. Cogn. 2009, 18, 600–607. [Google Scholar] [CrossRef]

- Helton, W.S.; Russell, P.N. Feature absence–presence and two theories of lapses of sustained attention. Psychol. Res. 2011, 75, 384–392. [Google Scholar] [CrossRef] [PubMed]

- Cheyne, J.A.; Solman, G.J.; Carriere, J.S.; Smilek, D. Anatomy of an error: A bidirectional state model of task engagement/disengagement and attention-related errors. Cognition 2009, 111, 98–113. [Google Scholar] [CrossRef] [PubMed]

- Yantis, S.; Jonides, J. Abrupt visual onsets and selective attention: Evidence from visual search. J. Exp. Psychol. Hum. Percept. Perform. 1984, 10, 601. [Google Scholar] [CrossRef] [PubMed]

- Rosenberg, M.; Noonan, S.; DeGutis, J.; Esterman, M. Sustaining visual attention in the face of distraction: A novel gradual-onset continuous performance task. Atten. Percept. Psychophys. 2013, 75, 426–439. [Google Scholar] [CrossRef] [PubMed]

- Rosenberg, M.D.; Finn, E.S.; Constable, R.T.; Chun, M.M. Predicting moment-to-moment attentional state. Neuroimage 2015, 114, 249–256. [Google Scholar] [CrossRef] [PubMed]

- Unsworth, N.; Robison, M.K. Pupillary correlates of lapses of sustained attention. Cogn. Affect. Behav. Neurosci. 2016, 16, 601–615. [Google Scholar] [CrossRef]

- Jin, C.Y.; Borst, J.P.; van Vugt, M.K. Predicting task-general mind-wandering with EEG. Cogn. Affect. Behav. Neurosci. 2019, 19, 1059–1073. [Google Scholar] [CrossRef]

- Huang, M.X.; Li, J.; Ngai, G.; Leong, H.V.; Bulling, A. Moment-to-Moment Detection of Internal Thought during Video Viewing from Eye Vergence Behavior. In Proceedings of the 27th ACM International Conference on Multimedia, Nice, France, 21–25 October 2019; pp. 2254–2262. [Google Scholar]

- Brishtel, I.; Khan, A.A.; Schmidt, T.; Dingler, T.; Ishimaru, S.; Dengel, A. Mind Wandering in a Multimodal Reading Setting: Behavior Analysis & Automatic Detection Using Eye-Tracking and an EDA Sensor. Sensors 2020, 20, 2546. [Google Scholar]

- Wang, L. Attention decrease detection based on video analysis in e-learning. In Transactions on Edutainment XIV; Springer: New York, NY, USA, 2018; pp. 166–179. [Google Scholar]

- Zhao, Y.; Görne, L.; Yuen, I.M.; Cao, D.; Sullman, M.; Auger, D.; Lv, C.; Wang, H.; Matthias, R.; Skrypchuk, L.; et al. An orientation sensor-based head tracking system for driver behaviour monitoring. Sensors 2017, 17, 2692. [Google Scholar] [CrossRef]

- Li, J.; Ngai, G.; Leong, H.V.; Chan, S.C. Multimodal human attention detection for reading from facial expression, eye gaze, and mouse dynamics. ACM Sigapp Appl. Comput. Rev. 2016, 16, 37–49. [Google Scholar] [CrossRef]

- Mills, C.; D’Mello, S. Toward a Real-Time (Day) Dreamcatcher: Sensor-Free Detection of Mind Wandering during Online Reading. In Proceedings of the International Conference on Educational Data Mining (EDM), Madrid, Spain, 26–29 June 2015. [Google Scholar]

- Zaletelj, J. Estimation of students’ attention in the classroom from kinect features. In Proceedings of the 10th International Symposium on Image and Signal Processing and Analysis, Ljubljana, Slovenia, 18–20 September 2017; pp. 220–224. [Google Scholar]

- D’Mello, S.K. Giving eyesight to the blind: Towards attention-aware AIED. Int. J. Artif. Intell. Educ. 2016, 26, 645–659. [Google Scholar] [CrossRef]

- Di Lascio, E.; Gashi, S.; Santini, S. Unobtrusive assessment of students’ emotional engagement during lectures using electrodermal activity sensors. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2018, 2, 1–21. [Google Scholar] [CrossRef]

- Nunez, P.L.; Srinivasan, R. A theoretical basis for standing and traveling brain waves measured with human EEG with implications for an integrated consciousness. Clin. Neurophysiol. 2006, 117, 2424–2435. [Google Scholar] [CrossRef]

- Ko, L.W.; Komarov, O.; Hairston, W.D.; Jung, T.P.; Lin, C.T. Sustained attention in real classroom settings: An EEG study. Front. Hum. Neurosci. 2017, 11, 388. [Google Scholar] [CrossRef]

- Behzadnia, A.; Ghoshuni, M.; Chermahini, S. EEG activities and the sustained attention performance. Neurophysiology 2017, 49, 226–233. [Google Scholar] [CrossRef]

- Zeid, S. Assessment of vigilance using EEG source localization. In Proceedings of the 2nd International Conference on Educational Neuroscience, Berlin, Germany, 10–12 June 2017. [Google Scholar]

- Wang, Y.K.; Jung, T.P.; Lin, C.T. EEG-based attention tracking during distracted driving. IEEE Trans. Neural Syst. Rehabil. Eng. 2015, 23, 1085–1094. [Google Scholar] [CrossRef]

- Vortmann, L.M.; Kroll, F.; Putze, F. EEG-based classification of internally-and externally-directed attention in an augmented reality paradigm. Front. Hum. Neurosci. 2019, 13, 348. [Google Scholar] [CrossRef]

- Di Flumeri, G.; De Crescenzio, F.; Berberian, B.; Ohneiser, O.; Kramer, J.; Aricò, P.; Borghini, G.; Babiloni, F.; Bagassi, S.; Piastra, S. Brain–computer interface-based adaptive automation to prevent out-of-the-loop phenomenon in air traffic controllers dealing with highly automated systems. Front. Hum. Neurosci. 2019, 13, 296. [Google Scholar] [CrossRef]

- Chen, C.M.; Wang, J.Y.; Yu, C.M. Assessing the attention levels of students by using a novel attention aware system based on brainwave signals. Br. J. Educ. Technol. 2017, 48, 348–369. [Google Scholar] [CrossRef]

- Sebastiani, M.; Di Flumeri, G.; Aricò, P.; Sciaraffa, N.; Babiloni, F.; Borghini, G. Neurophysiological vigilance characterisation and assessment: Laboratory and realistic validations involving professional air traffic controllers. Brain Sci. 2020, 10, 48. [Google Scholar] [CrossRef] [PubMed]

- Esterman, M.; Noonan, S.K.; Rosenberg, M.; DeGutis, J. In the zone or zoning out? Tracking behavioral and neural fluctuations during sustained attention. Cereb. Cortex 2013, 23, 2712–2723. [Google Scholar] [CrossRef] [PubMed]

- Duncan, J. Attention, intelligence, and the frontal lobes. In The Cognitive Neurosciences; Gazzaniga, M.S., Ed.; The MIT Press: Cambridge, MA, USA, 1995. [Google Scholar]

- Ni, D.; Wang, S.; Liu, G. The EEG-based attention analysis in multimedia m-learning. Comput. Math. Methods Med. 2020, 2020. [Google Scholar] [CrossRef]

- Chen, C.M.; Huang, S.H. Web-based reading annotation system with an attention-based self-regulated learning mechanism for promoting reading performance. Br. J. Educ. Technol. 2014, 45, 959–980. [Google Scholar] [CrossRef]

- Fong, S.S.M.; Tsang, W.W.; Cheng, Y.T.; Ki, W.; Ada, W.W.; Macfarlane, D.J. Single-channel electroencephalographic recording in children with developmental coordination disorder: Validity and influence of eye blink artifacts. J. Nov. Physiother. 2015, 5, 1000270. [Google Scholar] [CrossRef]

- Maskeliunas, R.; Damasevicius, R.; Martisius, I.; Vasiljevas, M. Consumer-grade EEG devices: Are they usable for control tasks? PeerJ 2016, 4, e1746. [Google Scholar] [CrossRef] [PubMed]

- Abo-Zahhad, M.; Ahmed, S.M.; Abbas, S.N. A novel biometric approach for human identification and verification using eye blinking signal. IEEE Signal Process. Lett. 2014, 22, 876–880. [Google Scholar] [CrossRef]

- Rebolledo-Mendez, G.; Dunwell, I.; Martínez-Mirón, E.A.; Vargas-Cerdán, M.D.; De Freitas, S.; Liarokapis, F.; García-Gaona, A.R. Assessing neurosky’s usability to detect attention levels in an assessment exercise. In Proceedings of the International Conference on Human-Computer Interaction, San Diego, CA, USA, 19–24 July 2009; Springer: New York, NY, USA, 2009; pp. 149–158. [Google Scholar]

- Johnstone, S.J.; Blackman, R.; Bruggemann, J.M. EEG from a single-channel dry-sensor recording device. Clin. Eeg Neurosci. 2012, 43, 112–120. [Google Scholar] [CrossRef]

- Rieiro, H.; Diaz-Piedra, C.; Morales, J.M.; Catena, A.; Romero, S.; Roca-Gonzalez, J.; Fuentes, L.J.; Di Stasi, L.L. Validation of electroencephalographic recordings obtained with a consumer-grade, single dry electrode, low-cost device: A comparative study. Sensors 2019, 19, 2808. [Google Scholar] [CrossRef]

- Kucyi, A.; Esterman, M.; Riley, C.S.; Valera, E.M. Spontaneous default network activity reflects behavioral variability independent of mind-wandering. Proc. Natl. Acad. Sci. USA 2016, 113, 13899–13904. [Google Scholar] [CrossRef] [PubMed]

- Kucyi, A.; Daitch, A.; Raccah, O.; Zhao, B.; Zhang, C.; Esterman, M.; Zeineh, M.; Halpern, C.H.; Zhang, K.; Zhang, J.; et al. Electrophysiological dynamics of antagonistic brain networks reflect attentional fluctuations. Nat. Commun. 2020, 11, 1–14. [Google Scholar] [CrossRef]

- Molina-Cantero, A.J.; Guerrero-Cubero, J.; Gómez-González, I.M.; Merino-Monge, M.; Silva-Silva, J.I. Characterizing computer access using a one-channel EEG wireless sensor. Sensors 2017, 17, 1525. [Google Scholar] [CrossRef]

- Jiang, S.; Li, Z.; Zhou, P.; Li, M. Memento: An emotion-driven lifelogging system with wearables. ACM Trans. Sens. Netw. (TOSN) 2019, 15, 1–23. [Google Scholar] [CrossRef]

- Elsayed, N.; Zaghloul, Z.S.; Bayoumi, M. Brain computer interface: EEG signal preprocessing issues and solutions. Int. J. Comput. Appl. 2017, 169, 975–8887. [Google Scholar] [CrossRef]

- Kim, S.P. Preprocessing of eeg. In Computational EEG Analysis; Springer: New York, NY, USA, 2018; pp. 15–33. [Google Scholar]

- Zhu, T.; Luo, W.; Yu, F. Convolution-and attention-based neural network for automated sleep stage classification. Int. J. Environ. Res. Public Health 2020, 17, 4152. [Google Scholar] [CrossRef]

- Zhang, X.; Yao, L.; Zhang, D.; Wang, X.; Sheng, Q.Z.; Gu, T. Multi-person brain activity recognition via comprehensive EEG signal analysis. In Proceedings of the 14th EAI International Conference on Mobile and Ubiquitous Systems: Computing, Networking and Services, Melbourne, Australia, 7–10 November 2017; pp. 28–37. [Google Scholar]

- Jiang, X.; Bian, G.B.; Tian, Z. Removal of artifacts from EEG signals: A review. Sensors 2019, 19, 987. [Google Scholar] [CrossRef]

- Szafir, D.; Mutlu, B. Pay attention! Designing adaptive agents that monitor and improve user engagement. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Austin, TX, USA, 5–10 May 2012; pp. 11–20. [Google Scholar]

- De Pascalis, V.; Cacace, I. Pain perception, obstructive imagery and phase-ordered gamma oscillations. Int. J. Psychophysiol. 2005, 56, 157–169. [Google Scholar] [CrossRef]

- Lutz, A.; Greischar, L.L.; Rawlings, N.B.; Ricard, M.; Davidson, R.J. Long-term meditators self-induce high-amplitude gamma synchrony during mental practice. Proc. Natl. Acad. Sci. USA 2004, 101, 16369–16373. [Google Scholar] [CrossRef]

- Chavez, M.; Grosselin, F.; Bussalb, A.; Fallani, F.D.V.; Navarro-Sune, X. Surrogate-based artifact removal from single-channel EEG. IEEE Trans. Neural Syst. Rehabil. Eng. 2018, 26, 540–550. [Google Scholar] [CrossRef]

- Jung, T.P.; Makeig, S.; Westerfield, M.; Townsend, J.; Courchesne, E.; Sejnowski, T.J. Removal of eye activity artifacts from visual event-related potentials in normal and clinical subjects. Clin. Neurophysiol. 2000, 111, 1745–1758. [Google Scholar] [CrossRef]

- Zhang, H.; Zhao, M.; Wei, C.; Mantini, D.; Li, Z.; Liu, Q. EEGdenoiseNet: A benchmark dataset for deep learning solutions of EEG denoising. arXiv 2020, arXiv:2009.11662. [Google Scholar]

- Sha’abani, M.; Fuad, N.; Jamal, N.; Ismail, M. kNN and SVM classification for EEG: A review. In Proceedings of the 5th International Conference on Electrical, Control & Computer Engineering (Pahang), Kuantan, Malaysia, 29 July 2019; pp. 555–565. [Google Scholar]

- Craik, A.; He, Y.; Contreras-Vidal, J.L. Deep learning for electroencephalogram (EEG) classification tasks: A review. J. Neural Eng. 2019, 16, 031001. [Google Scholar] [CrossRef]

- Vabalas, A.; Gowen, E.; Poliakoff, E.; Casson, A.J. Machine learning algorithm validation with a limited sample size. PLoS ONE 2019, 14, e0224365. [Google Scholar] [CrossRef] [PubMed]

- Smith, L.B.; Colunga, E.; Yoshida, H. Knowledge as process: Contextually cued attention and early word learning. Cogn. Sci. 2010, 34, 1287–1314. [Google Scholar] [CrossRef]

- Weinstein, Y. Mind-wandering, how do I measure thee with probes? Let me count the ways. Behav. Res. Methods 2018, 50, 642–661. [Google Scholar] [CrossRef]

- Schooler, J.W. Re-representing consciousness: Dissociations between experience and meta-consciousness. Trends Cogn. Sci. 2002, 6, 339–344. [Google Scholar] [CrossRef]

- Smallwood, J.; McSpadden, M.; Schooler, J.W. When attention matters: The curious incident of the wandering mind. Mem. Cogn. 2008, 36, 1144–1150. [Google Scholar] [CrossRef] [PubMed]

- Schooler, J.W.; Reichle, E.D.; Halpern, D.V. Zoning out while reading: Evidence for dissociations between experience and metaconsciousness. Think. Seeing Vis. Metacogn. Adults Child. 2004, 203, 1942–1947. [Google Scholar]

- Robison, M.K.; Miller, A.L.; Unsworth, N. Examining the effects of probe frequency, response options, and framing within the thought-probe method. Behav. Res. Methods 2019, 51, 398–408. [Google Scholar] [CrossRef]

- Deng, Y.Q.; Li, S.; Tang, Y.Y. The relationship between wandering mind, depression and mindfulness. Mindfulness 2014, 5, 124–128. [Google Scholar] [CrossRef]

- Szpunar, K.K.; Khan, N.Y.; Schacter, D.L. Interpolated memory tests reduce mind wandering and improve learning of online lectures. Proc. Natl. Acad. Sci. USA 2013, 110, 6313–6317. [Google Scholar] [CrossRef]

- Pham, P.; Wang, J. AttentiveLearner: Improving mobile MOOC learning via implicit heart rate tracking. In Proceedings of the International Conference on Artificial Intelligence in Education, Chicago, IL, USA, 25–29 June 2015; Springer: New York, NY, USA, 2015; pp. 367–376. [Google Scholar]

- Stawarczyk, D.; Majerus, S.; Maj, M.; Van der Linden, M.; D’Argembeau, A. Mind-wandering: Phenomenology and function as assessed with a novel experience sampling method. Acta Psychol. 2011, 136, 370–381. [Google Scholar] [CrossRef]

- McVay, J.C.; Kane, M.J. Why does working memory capacity predict variation in reading comprehension? On the influence of mind wandering and executive attention. J. Exp. Psychol. Gen. 2012, 141, 302. [Google Scholar] [CrossRef] [PubMed]

- D’Mello, S.K.; Mills, C.; Bixler, R.; Bosch, N. Zone out No More: Mitigating Mind Wandering during Computerized Reading. In Proceedings of the International Conference on Educational Data Mining (EDM), Wuhan, China, 25–28 June 2017. [Google Scholar]

- Hutt, S.; Hardey, J.; Bixler, R.; Stewart, A.; Risko, E.; D’Mello, S.K. Gaze-Based Detection of Mind Wandering during Lecture Viewing. In Proceedings of the International Conference on Educational Data Mining (EDM), Wuhan, China, 25–28 June 2017. [Google Scholar]

- Szafir, D.; Mutlu, B. ARTFul: Adaptive review technology for flipped learning. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Paris, France, 27 April–2 May 2013; pp. 1001–1010. [Google Scholar]

- Zhang, S.; Meng, X.; Liu, C.; Zhao, S.; Sehgal, V.; Fjeld, M. ScaffoMapping: Assisting concept mapping for video learners. In Proceedings of the IFIP Conference on Human-Computer Interaction, Bombay, India, 14–18 September 2019; pp. 314–328. [Google Scholar]

- Padilla, M.L.; Wood, R.A.; Hale, L.A.; Knight, R.T. Lapses in a prefrontal-extrastriate preparatory attention network predict mistakes. J. Cogn. Neurosci. 2006, 18, 1477–1487. [Google Scholar] [CrossRef] [PubMed]

- Christoff, K.; Gordon, A.M.; Smallwood, J.; Smith, R.; Schooler, J.W. Experience sampling during fMRI reveals default network and executive system contributions to mind wandering. Proc. Natl. Acad. Sci. USA 2009, 106, 8719–8724. [Google Scholar] [CrossRef]

- Rodrigue, M.; Son, J.; Giesbrecht, B.; Turk, M.; Höllerer, T. Spatio-temporal detection of divided attention in reading applications using EEG and eye tracking. In Proceedings of the 20th International Conference on Intelligent User Interfaces, Atlanta, GA, USA, 29 March–1 April 2015; pp. 121–125. [Google Scholar]

- Bixler, R.; D’Mello, S. Automatic gaze-based detection of mind wandering with metacognitive awareness. In Proceedings of the International Conference on User Modeling, Adaptation, and Personalization, Dublin, Ireland, 29 June–3 July 2015; Springer: New York, NY, USA, 2015; pp. 31–43. [Google Scholar]

- Dudley, C.; Jones, S.L. Fitbit for the Mind? An Exploratory Study of ‘Cognitive Personal Informatics’. In Proceedings of the Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems, Montreal, QC, Canada, 21–26 April 2018; pp. 1–6. [Google Scholar]

- Maffei, A.; Angrilli, A. Spontaneous eye blink rate: An index of dopaminergic component of sustained attention and fatigue. Int. J. Psychophysiol. 2018, 123, 58–63. [Google Scholar] [CrossRef]

- Waersted, M.; Westgaard, R. Attention-related muscle activity in different body regions during VDU work with minimal physical activity. Ergonomics 1996, 39, 661–676. [Google Scholar] [CrossRef]

- Torrey, L.; Shavlik, J. Transfer learning. In Handbook of Research on Machine Learning Applications and Trends: Algorithms, Methods, and Techniques; IGI Global: Hershey, PA, USA, 2010; pp. 242–264. [Google Scholar]

- Elsayed, M.; Badawy, A.; Mahmuddin, M.; Elfouly, T.; Mohamed, A.; Abualsaud, K. FPGA implementation of DWT EEG data compression for wireless body sensor networks. In Proceedings of the 2016 IEEE Conference on Wireless Sensors (ICWiSE), Langkawi, Malaysia, 10–12 October 2016; pp. 21–25. [Google Scholar]

| Sensors | Attention States | Attention State Labeling Method | Time Scale of Ground Truth | Classifier | Result |
|---|---|---|---|---|---|
| Thermal image and eye tracking [12] | Sustained attention; Alternating attention; Selective attention; Divided attention | Controlled tasks | 3 min each task | Logistic Regression | 75.7% AUC score for user-independent condition-independent; 87% AUC score for user-independent condition independent; 77.4% AUC score for user-dependent |
| EDA [34] | Engaged; Not engaged | Self-report questionnaires | 45 min each questionnaire (after a lecture) | SVM | 0.60 for accuracy |
| PPG [11] | Full attention (FA); Low internal divided attention (LIDA); High internal divided attention (HIDA); External divided attention (EDA) | Designed tasks based on the combination of internal and external distractions | 8 min each task | RBF-SVM classifiers | 50% for FA vs. EDA vs. LIDA vs. HIDA; 72.2% for FA vs. EDA; 75.0% for FA vs. LIDA; 83.3% for FA vs. HIDA |

| Feature | Description |
|---|---|
| Approx. entropy | Approximate entropy of the signal |
| Total variation | Sum of gradients in the signal |
| Standard deviation | Standard deviation of the signal |
| Energy | Sum of squares of the signal |
| Skewness | Sample skewness of the signal |
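
The five features listed above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function names are hypothetical, "sum of gradients" is read here as the sum of absolute successive differences, skewness is computed in its biased (population) form, and the approximate-entropy parameters (embedding dimension `m = 2`, tolerance `r = 0.2` times the standard deviation) are conventional defaults assumed for illustration, not values taken from the paper.

```python
import math
from statistics import fmean, pstdev


def approximate_entropy(x, m=2, r_factor=0.2):
    """Approximate entropy ApEn(m, r), self-matches included.
    m and r_factor are assumed defaults, not values from the paper."""
    x = list(x)
    n = len(x)
    r = r_factor * pstdev(x)  # tolerance scaled by the signal's spread

    def phi(dim):
        # All overlapping templates of length `dim`.
        templates = [x[i:i + dim] for i in range(n - dim + 1)]
        total = 0.0
        for a in templates:
            # Count templates within Chebyshev distance r of `a`.
            matches = sum(
                1 for b in templates
                if max(abs(u - v) for u, v in zip(a, b)) <= r
            )
            total += math.log(matches / len(templates))
        return total / len(templates)

    return phi(m) - phi(m + 1)


def total_variation(x):
    """Sum of absolute differences between successive samples (assumed reading)."""
    x = list(x)
    return sum(abs(b - a) for a, b in zip(x, x[1:]))


def energy(x):
    """Sum of squares of the signal."""
    return sum(v * v for v in x)


def skewness(x):
    """Biased sample skewness: mean of cubed z-scores."""
    x = list(x)
    mu, sd = fmean(x), pstdev(x)
    if sd == 0:
        return 0.0  # a constant signal has no asymmetry
    return fmean([((v - mu) / sd) ** 3 for v in x])


def extract_features(x):
    """Return the five features for one (hypothetical) EEG window."""
    x = list(x)
    return {
        "approx_entropy": approximate_entropy(x),
        "total_variation": total_variation(x),
        "std": pstdev(x),
        "energy": energy(x),
        "skewness": skewness(x),
    }
```

In a pipeline like the one outlined in Section 3, a function such as `extract_features` would presumably be called once per preprocessed window, with the resulting dictionaries stacked into a feature matrix for the classifier.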

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zhang, S.; Yan, Z.; Sapkota, S.; Zhao, S.; Ooi, W.T. Moment-to-Moment Continuous Attention Fluctuation Monitoring through Consumer-Grade EEG Device. Sensors 2021, 21, 3419. https://doi.org/10.3390/s21103419
