Visibility Enhancement and Fog Detection: Solutions Presented in Recent Scientific Papers with Potential for Application to Mobile Systems

Abstract

1. Introduction

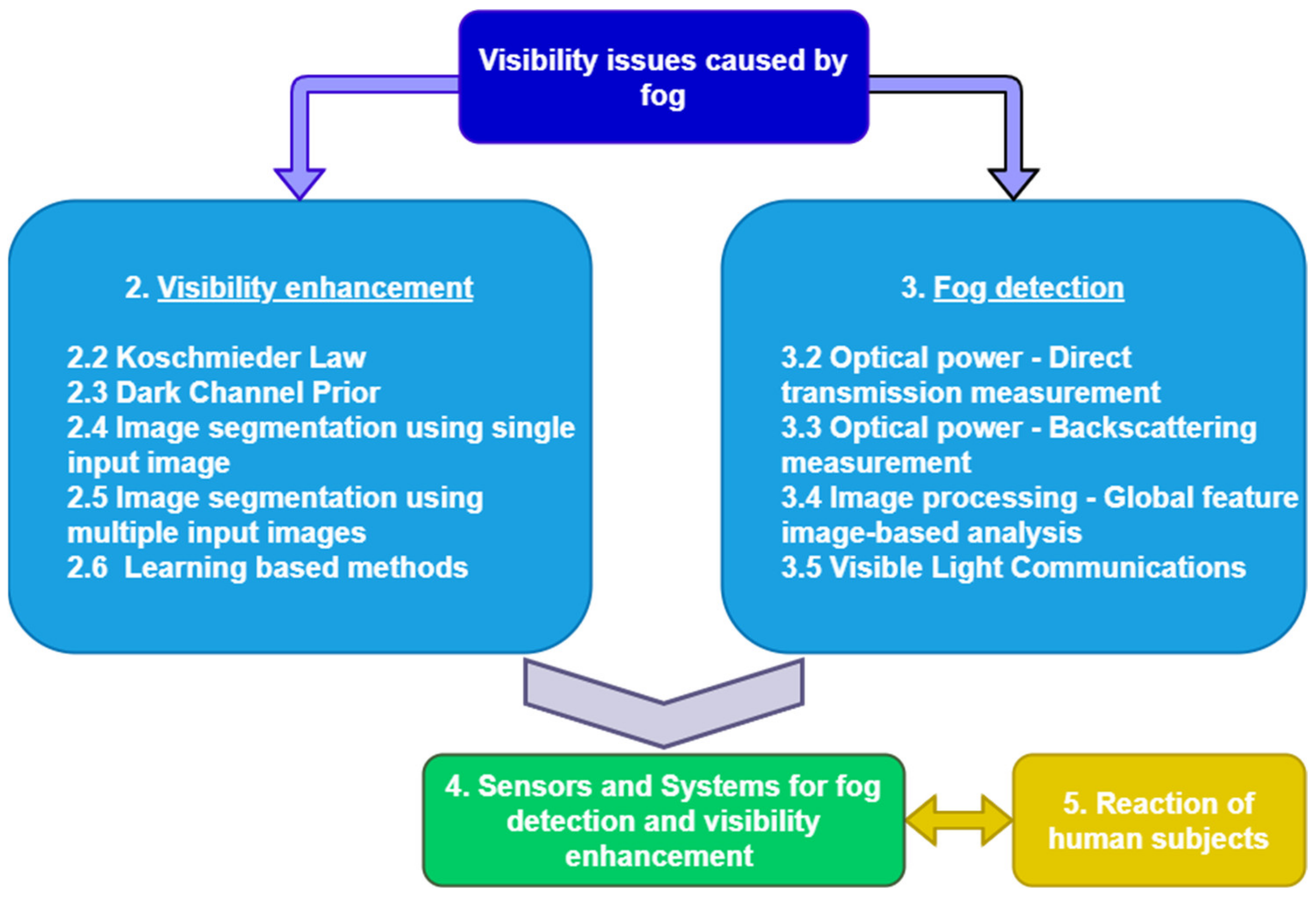

2. Visibility Enhancement Methods

2.1. Basic Theoretical Aspects

2.1.1. Koschmieder Law

2.1.2. Dark Channel Prior

2.2. Methods Based on Koschmieder Law

- The first term of the equation, $R_t v_r$, represents the distance traveled during the safety time margin (including the reaction time of the driver), and the second term is the braking distance. This is a generic formula that does not take into account the mass of the vehicle or the performance of its braking and tire systems.

- $R_t$ is a time interval that includes the reaction time of the driver and several seconds before a possible accident may occur.

- $g$ is the gravitational acceleration, 9.8 m/s².

- $f$ is the friction coefficient. For wet asphalt, we use a coefficient equal to 0.35.

- $v_r$ denotes the recommended driving speed.
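The quantities defined in the list above can be combined in a short numerical sketch. The equation itself is not reproduced in this text, so the code assumes that the braking term takes the standard form v_r²/(2·g·f), which gives a total distance of R_t·v_r + v_r²/(2·g·f). The function names and the example inputs (50 m of visibility, a 2 s time margin) are hypothetical and chosen only for illustration.

```python
import math

G = 9.8        # gravitational acceleration in m/s^2, as given in the list above
F_WET = 0.35   # friction coefficient for wet asphalt, as given in the list above


def stopping_distance(v_r, r_t, f=F_WET, g=G):
    """Distance (m) covered during the time margin r_t plus the braking distance.

    Assumes the braking term has the standard form v_r^2 / (2 * g * f).
    """
    return r_t * v_r + v_r ** 2 / (2.0 * g * f)


def recommended_speed(d, r_t, f=F_WET, g=G):
    """Largest speed (m/s) whose stopping distance does not exceed d.

    Positive root of v^2 + 2*g*f*r_t*v - 2*g*f*d = 0.
    """
    gf = g * f
    return -gf * r_t + math.sqrt((gf * r_t) ** 2 + 2.0 * gf * d)


if __name__ == "__main__":
    visibility_m = 50.0   # hypothetical visibility distance
    margin_s = 2.0        # hypothetical safety time margin
    v = recommended_speed(visibility_m, margin_s)
    print(f"recommended speed: {v:.2f} m/s ({v * 3.6:.1f} km/h)")
```

Inverting the distance formula in this way treats the estimated visibility distance as the space available for stopping; like the formula itself, it ignores vehicle mass and the state of the braking and tire systems.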

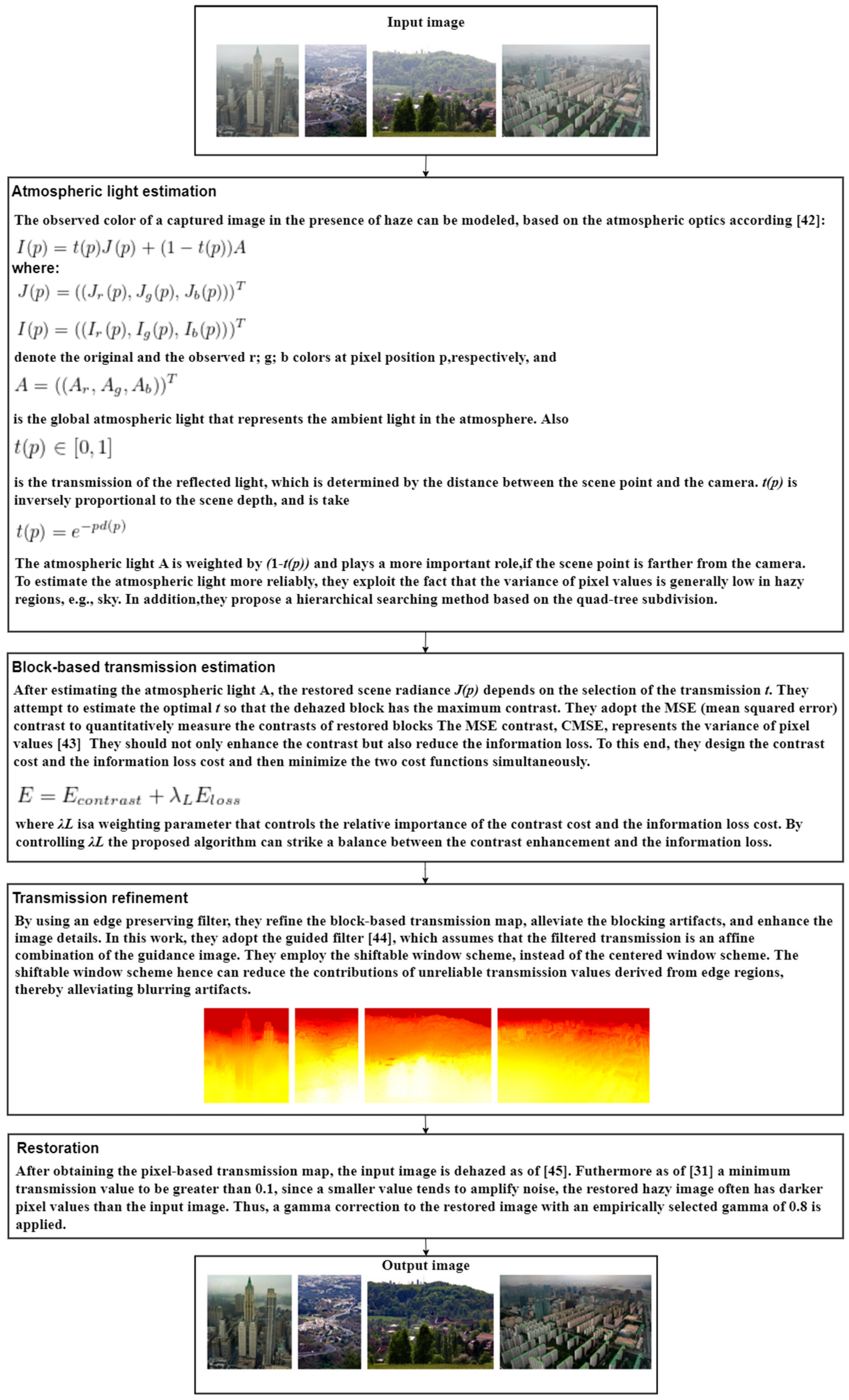

2.3. Methods Based on Dark Channel Prior

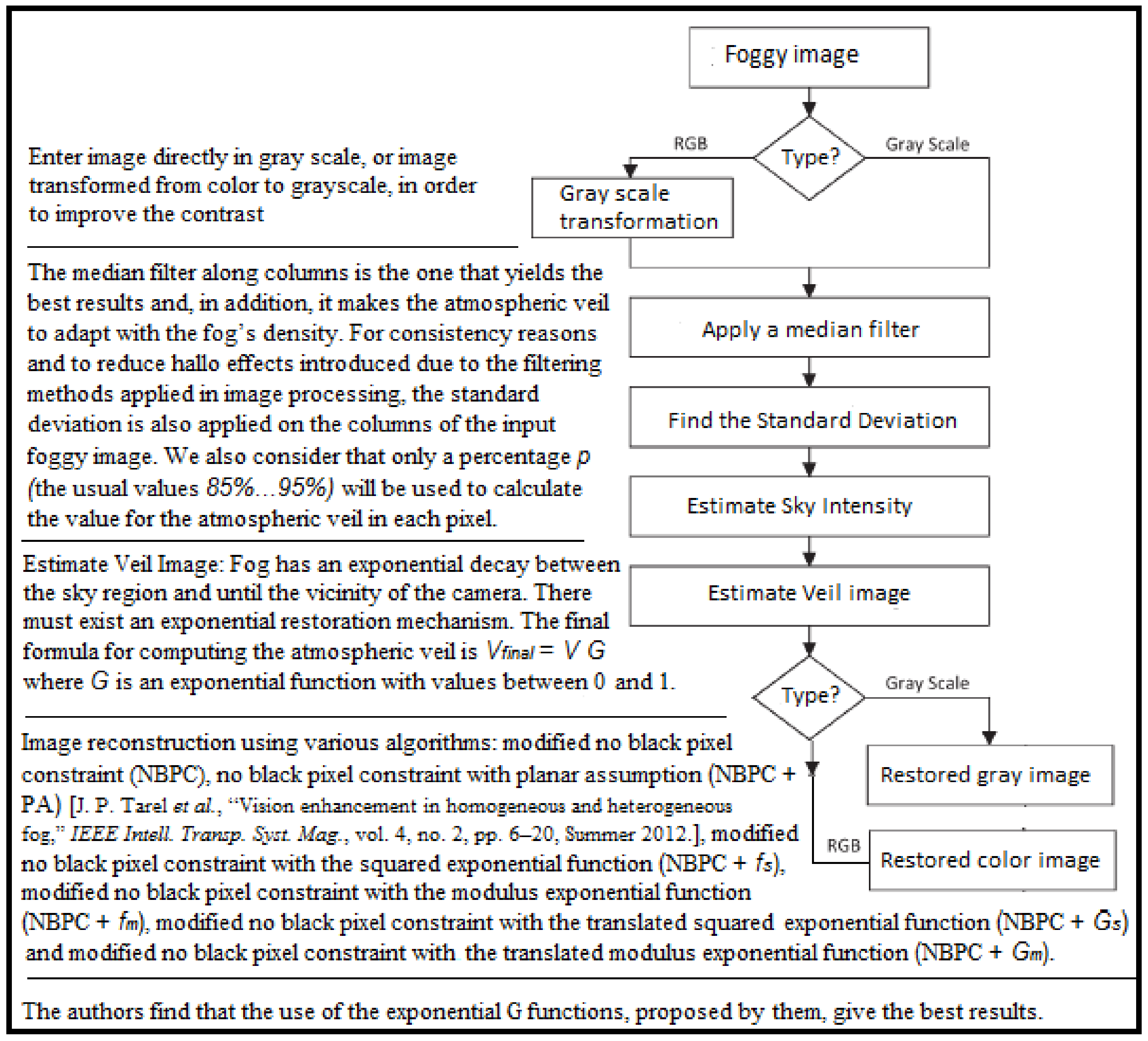

2.4. Image Segmentation Using Single Input Image

2.5. Image Segmentation Using Multiple Input Images

2.6. Learning-Based Methods

3. Fog Detection and Visibility Estimation Methods

3.1. Basic Theoretical Aspects

3.1.1. Rayleigh Scattering

3.1.2. Mie Scattering

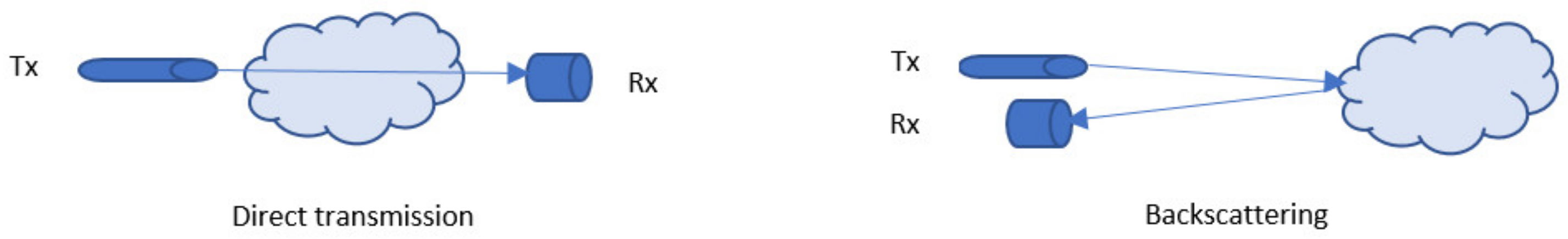

3.2. Optical Power: Direct Transmission Measurement

3.3. Optical Power: Backscattering Measurement

3.4. Image Processing: Global Feature Image-Based Analysis

3.5. Visible Light Communications

4. Sensors and Systems for Fog Detection and Visibility Enhancement

4.1. Principles and Methods

4.2. Onboard Sensors and Systems

4.3. External Sensors and Systems

5. Reaction of Human Subjects

6. Conclusions

7. Observations and Future Research

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Conflicts of Interest

References

- U.S. Department of Transportation. Traffic Safety Facts—Critical Reasons for Crashes Investigated in the National Motor Vehicle Crash Causation Survey; National Highway Traffic Safety Administration (NHTSA): Washington, DC, USA, 2015.

- OSRAM Automotive. Available online: https://www.osram.com/am/specials/trends-in-automotive-lighting/index.jsp (accessed on 30 June 2020).

- The Car Connection. 12 October 2018. Available online: https://www.thecarconnection.com/news/1119327_u-s-to-allow-brighter-self-dimming-headlights-on-new-cars (accessed on 30 June 2020).

- Aubert, D.; Boucher, V.; Bremond, R.; Charbonnier, P.; Cord, A.; Dumont, E.; Foucher, P.; Fournela, F.; Greffier, F.; Gruyer, D.; et al. Digital Imaging for Assessing and Improving Highway Visibility; Transport Research Arena: Paris, France, 2014. [Google Scholar]

- Rajagopalan, A.N.; Chellappa, R. (Eds.) Motion Deblurring Algorithms and Systems; Cambridge University Press: Cambridge, UK, 2014. [Google Scholar]

- Palvanov, A.; Giyenko, A.; Cho, Y.I. Development of Visibility Expectation System Based on Machine Learning. In Computer Information Systems and Industrial Management; Springer: Berlin/Heidelberg, Germany, 2018; pp. 140–153. [Google Scholar]

- Yang, L.; Muresan, R.; Al-Dweik, A.; Hadjileontiadis, L.J. Image-Based Visibility Estimation Algorithm for Intelligent Transportation Systems. IEEE Access 2018, 6, 76728–76740. [Google Scholar] [CrossRef]

- Ioan, S.; Razvan-Catalin, M.; Florin, A. System for Visibility Distance Estimation in Fog Conditions based on Light Sources and Visual Acuity. In Proceedings of the 2016 IEEE International Conference on Automation, Quality and Testing, Robotics (AQTR), Cluj-Napoca, Romania, 19–21 May 2016. [Google Scholar]

- Ovseník, Ľ.; Turán, J.; Mišenčík, P.; Bitó, J.; Csurgai-Horváth, L. Fog density measuring system. Acta Electrotech. Inf. 2012, 12, 67–71. [Google Scholar] [CrossRef]

- Gruyer, D.; Cord, A.; Belaroussi, R. Vehicle detection and tracking by collaborative fusion between laser scanner and camera. In Proceedings of the 2013 IEEE/RSJ International Conference on Intelligent Robots and Systems, Tokyo, Japan, 3–7 November 2013; pp. 5207–5214. [Google Scholar]

- Gruyer, D.; Cord, A.; Belaroussi, R. Target-to-track collaborative association combining a laser scanner and a camera. In Proceedings of the 16th International IEEE Conference on Intelligent Transportation Systems (ITSC 2013), The Hague, The Netherlands, 6–9 October 2013. [Google Scholar]

- Dannheim, C.; Icking, C.; Mäder, M.; Sallis, P. Weather Detection in Vehicles by Means of Camera and LIDAR Systems. In Proceedings of the 2014 Sixth International Conference on Computational Intelligence, Communication Systems and Networks, Tetova, Macedonia, 27–29 May 2014. [Google Scholar]

- Chaurasia, S.; Gohil, B.S. Detection of Day Time Fog over India Using INSAT-3D Data. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2015, 8, 4524–4530. [Google Scholar] [CrossRef]

- Levinson, J.; Askeland, J.; Becker, J.; Dolson, J.; Held, D.; Kammel, S.; Kolter, J.Z.; Langer, D.; Pink, O.; Pratt, V.; et al. Towards fully autonomous driving: Systems and algorithms. In Proceedings of the 2011 IEEE Intelligent Vehicles Symposium (IV), Baden-Baden, Germany, 5–9 June 2011; pp. 163–168. [Google Scholar]

- Jegham, I.; Khalifa, A.B. Pedestrian Detection in Poor Weather Conditions Using Moving Camera. In Proceedings of the IEEE/ACS 14th International Conference on Computer Systems and Applications (AICCSA), Hammamet, Tunisia, 30 October–3 November 2017. [Google Scholar]

- Dai, X.; Yuan, X.; Zhang, J.; Zhang, L. Improving the performance of vehicle detection system in bad weathers. In Proceedings of the 2016 IEEE Advanced Information Management, Communicates, Electronic and Automation Control Conference (IMCEC), Xi’an, China, 3–5 October 2016. [Google Scholar]

- Miclea, R.-C.; Silea, I.; Sandru, F. Digital Sunshade Using Head-up Display. In Advances in Intelligent Systems and Computing; Springer: Cham, Switzerland, 2017; Volume 633, pp. 3–11. [Google Scholar]

- Tarel, J.-P.; Hautiere, N. Fast visibility restoration from a single color or gray level image. In Proceedings of the 2009 IEEE 12th International Conference on Computer Vision, Kyoto, Japan, 27 September–4 October 2009; pp. 2201–2208. [Google Scholar]

- Narasimhan, S.G.; Nayar, S.K. Contrast restoration of weather degraded images. IEEE Trans. Pattern Anal. Mach. Intell. 2003, 25, 713–724. [Google Scholar] [CrossRef]

- Hautiere, N.; Labayrade, R.; Aubert, D. Real-Time Disparity Contrast Combination for Onboard Estimation of the Visibility Distance. IEEE Trans. Intell. Transp. Syst. 2006, 7, 201–212. [Google Scholar] [CrossRef]

- Hautière, N.; Tarel, J.-P.; Lavenant, J.; Aubert, D. Automatic fog detection and estimation of visibility distance through use of an onboard camera. Mach. Vis. Appl. 2006, 17, 8–20. [Google Scholar] [CrossRef]

- Hautière, N.; Tarel, J.P.; Aubert, D. Towards Fog-Free In-Vehicle Vision Systems through Contrast Restoration. In Proceedings of the 2007 IEEE Conference on Computer Vision and Pattern Recognition, Minneapolis, MN, USA, 18–23 June 2007. [Google Scholar]

- Tarel, J.-P.; Hautiere, N.; Caraffa, L.; Cord, A.; Halmaoui, H.; Gruyer, D. Vision Enhancement in Homogeneous and Heterogeneous Fog. IEEE Intell. Transp. Syst. Mag. 2012, 4, 6–20. [Google Scholar] [CrossRef]

- Hautière, N.; Tarel, J.P.; Halmaoui, H.; Brémond, R.; Aubert, D. Enhanced fog detection and free-space segmentation for car navigation. Mach. Vis. Appl. 2014, 25, 667–679. [Google Scholar] [CrossRef]

- Negru, M.; Nedevschi, S. Image based fog detection and visibility estimation for driving assistance systems. In Proceedings of the 2013 IEEE 9th International Conference on Intelligent Computer Communication and Processing (ICCP), Cluj-Napoca, Romania, 5–7 September 2013; pp. 163–168. [Google Scholar]

- Negru, M.; Nedevschi, S. Assisting Navigation in Homogenous Fog. In Proceedings of the 2014 International Conference on Computer Vision Theory and Applications (VISAPP), Lisbon, Portugal, 5–8 January 2014. [Google Scholar]

- Negru, M.; Nedevschi, S.; Peter, R.I. Exponential Contrast Restoration in Fog Conditions for Driving Assistance. IEEE Trans. Intell. Transp. Syst. 2015, 16, 2257–2268. [Google Scholar] [CrossRef]

- Abbaspour, M.J.; Yazdi, M.; Masnadi-Shirazi, M. A new fast method for foggy image enhancement. In Proceedings of the 2016 24th Iranian Conference on Electrical Engineering (ICEE), Shiraz, Iran, 10–12 May 2016. [Google Scholar]

- Liao, Y.Y.; Tai, S.C.; Lin, J.S.; Liu, P.J. Degradation of turbid images based on the adaptive logarithmic algorithm. Comput. Math. Appl. 2012, 64, 1259–1269. [Google Scholar] [CrossRef] [Green Version]

- Halmaoui, H.; Joulan, K.; Hautière, N.; Cord, A.; Brémond, R. Quantitative model of the driver’s reaction time during daytime fog—Application to a head up display-based advanced driver assistance system. IET Intell. Transp. Syst. 2015, 9, 375–381. [Google Scholar] [CrossRef]

- He, K.; Sun, J.; Tang, X. Single Image Haze Removal Using Dark Channel Prior. IEEE Trans. Pattern Anal. Mach. Intell. 2011, 33, 2341–2353. [Google Scholar] [CrossRef]

- Yeh, C.H.; Kang, L.W.; Lin, C.Y.; Lin, C.Y. Efficient image/video dehazing through haze density analysis based on pixel-based dark channel prior. In Proceedings of the 2012 International Conference on Information Security and Intelligent Control, Yunlin, Taiwan, 14–16 August 2012. [Google Scholar]

- Yeh, C.H.; Kang, L.W.; Lee, M.S.; Lin, C.Y. Haze Effect Removal from Image via Haze Density estimation in Optical Model. Opt. Express 2013, 21, 27127–27141. [Google Scholar] [CrossRef]

- Tan, R.T. Visibility in bad weather from a single image. In Proceedings of the 2008 IEEE Conference on Computer Vision and Pattern Recognition, Anchorage, AK, USA, 23–28 June 2008; pp. 1–8. [Google Scholar]

- Fattal, R. Single image dehazing. ACM Trans. Graph. 2008, 27, 1–9. [Google Scholar] [CrossRef]

- Huang, S.-C.; Chen, B.-H.; Wang, W.-J. Visibility Restoration of Single Hazy Images Captured in Real-World Weather Conditions. IEEE Trans. Circuits Syst. Video Technol. 2014, 24, 1814–1824. [Google Scholar] [CrossRef]

- Wang, Z.; Feng, Y. Fast single haze image enhancement. Comput. Electr. Eng. 2014, 40, 785–795. [Google Scholar] [CrossRef]

- Zhang, Y.-Q.; Ding, Y.; Xiao, J.-S.; Liu, J.; Guo, Z. Visibility enhancement using an image filtering approach. EURASIP J. Adv. Signal Process. 2012, 2012, 220. [Google Scholar] [CrossRef]

- Tarel, J.-P.; Hautiere, N.; Cord, A.; Gruyer, D.; Halmaoui, H. Improved visibility of road scene images under heterogeneous fog. In Proceedings of the 2010 IEEE Intelligent Vehicles Symposium, La Jolla, CA, USA, 21–24 June 2010. [Google Scholar]

- Wang, R.; Yang, X. A fast method of foggy image enhancement. In Proceedings of the 2012 International Conference on Measurement, Information and Control, Harbin, China, 18–20 May 2012. [Google Scholar]

- Kim, J.-H.; Jang, W.-D.; Sim, J.-Y.; Kim, C.-S. Optimized contrast enhancement for real-time image and video dehazing. J. Vis. Commun. Image Represent. 2013, 24, 410–425. [Google Scholar] [CrossRef]

- Narasimhan, S.G.; Nayar, S.K. Vision and the atmosphere. Int. J. Comput. Vis. 2002, 48, 233–254. [Google Scholar] [CrossRef]

- Peli, E. Contrast in complex images. J. Opt. Soc. Am. A 1990, 7, 2032–2040. [Google Scholar] [CrossRef]

- He, K.; Sun, J.; Tang, X. Guided image filtering. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 1397–1409. [Google Scholar] [CrossRef]

- Gonzalez, R.C.; Woods, R.E. Digital Image Processing, 3rd ed.; Prentice-Hall: Hoboken, NJ, USA, 2007. [Google Scholar]

- Su, C.; Wang, W.; Zhang, X.; Jin, L. Dehazing with Offset Correction and a Weighted Residual Map. Electronics 2020, 9, 1419. [Google Scholar] [CrossRef]

- Wu, X.; Wang, K.; Li, Y.; Liu, K.; Huang, B. Accelerating Haze Removal Algorithm Using CUDA. Remote Sens. 2020, 13, 85. [Google Scholar] [CrossRef]

- Ngo, D.; Lee, S.; Nguyen, Q.H.; Ngo, T.M.; Lee, G.D.; Kang, B. Single Image Haze Removal from Image Enhancement Perspective for Real-Time Vision-Based Systems. Sensors 2020, 20, 5170. [Google Scholar] [CrossRef] [PubMed]

- He, R.; Guo, X.; Shi, Z. SIDE—A Unified Framework for Simultaneously Dehazing and Enhancement of Nighttime Hazy Images. Sensors 2020, 20, 5300. [Google Scholar] [CrossRef] [PubMed]

- Zhu, Q.; Mai, J.; Song, Z.; Wu, D.; Wang, J.; Wang, L. Mean shift-based single image dehazing with re-refined transmission map. In Proceedings of the 2014 IEEE International Conference on Systems, Man, and Cybernetics (SMC), San Diego, CA, USA, 5–8 October 2014. [Google Scholar]

- Das, D.; Roy, K.; Basak, S.; Chaudhury, S.S. Visibility Enhancement in a Foggy Road Along with Road Boundary Detection. In Proceedings of the Blockchain Technology and Innovations in Business Processes, New Delhi, India, 8 October 2015; pp. 125–135. [Google Scholar]

- Yuan, H.; Liu, C.; Guo, Z.; Sun, Z. A Region-Wised Medium Transmission Based Image Dehazing Method. IEEE Access 2017, 5, 1735–1742. [Google Scholar] [CrossRef]

- Zhu, Y.-B.; Liu, J.-M.; Hao, Y.-G. An single image dehazing algorithm using sky detection and segmentation. In Proceedings of the 2014 7th International Congress on Image and Signal Processing, Hainan, China, 20–23 December 2014; pp. 248–252. [Google Scholar]

- Gangodkar, D.; Kumar, P.; Mittal, A. Robust Segmentation of Moving Vehicles under Complex Outdoor Conditions. IEEE Trans. Intell. Transp. Syst. 2012, 13, 1738–1752. [Google Scholar] [CrossRef]

- Yuan, Z.; Xie, X.; Hu, J.; Zhang, Y.; Yao, D. An Effective Method for Fog-degraded Traffic Image Enhancement. In Proceedings of the 2014 IEEE International Conference on Service Operations and Logistics, and Informatics, Qingdao, China, 8–10 October 2014. [Google Scholar]

- Wu, B.-F.; Juang, J.-H. Adaptive Vehicle Detector Approach for Complex Environments. IEEE Trans. Intell. Transp. Syst. 2012, 13, 817–827. [Google Scholar] [CrossRef]

- Cireşan, D.; Meier, U.; Masci, J.; Schmidhuber, J. Multi-column deep neural network for traffic sign classification. Neural Netw. 2012, 32, 333–338. [Google Scholar] [CrossRef]

- Hussain, F.; Jeong, J. Visibility Enhancement of Scene Images Degraded by Foggy Weather Conditions with Deep Neural Networks. J. Sens. 2015, 2016, 1–9. [Google Scholar] [CrossRef]

- Singh, G.; Singh, A. Object Detection in Fog Degraded Images. Int. J. Comput. Sci. Inf. Secur. 2018, 16, 174–182. [Google Scholar]

- Cho, Y.I.; Palvanov, A. A New Machine Learning Algorithm for Weather Visibility and Food Recognition. J. Robot. Netw. Artif. Life 2019, 6, 12. [Google Scholar] [CrossRef]

- Hu, A.; Xie, Z.; Xu, Y.; Xie, M.; Wu, L.; Qiu, Q. Unsupervised Haze Removal for High-Resolution Optical Remote-Sensing Images Based on Improved Generative Adversarial Networks. Remote Sens. 2020, 12, 4162. [Google Scholar] [CrossRef]

- Ha, E.; Shin, J.; Paik, J. Gated Dehazing Network via Least Square Adversarial Learning. Sensors 2020, 20, 6311. [Google Scholar] [CrossRef] [PubMed]

- Chen, J.; Wu, C.; Chen, H.; Cheng, P. Unsupervised Dark-Channel Attention-Guided CycleGAN for Single-Image Dehazing. Sensors 2020, 20, 6000. [Google Scholar] [CrossRef]

- Ngo, D.; Lee, S.; Lee, G.-D.; Kang, B.; Ngo, D. Single-Image Visibility Restoration: A Machine Learning Approach and Its 4K-Capable Hardware Accelerator. Sensors 2020, 20, 5795. [Google Scholar] [CrossRef] [PubMed]

- Feng, M.; Yu, T.; Jing, M.; Yang, G. Learning a Convolutional Autoencoder for Nighttime Image Dehazing. Information 2020, 11, 424. [Google Scholar] [CrossRef]

- Middleton, W.E.K.; Twersky, V. Vision through the Atmosphere. Phys. Today 1954, 7, 21. [Google Scholar] [CrossRef]

- McCartney, E.J.; Hall, F.F. Optics of the Atmosphere: Scattering by Molecules and Particles. Phys. Today 1977, 30, 76–77. [Google Scholar] [CrossRef]

- Surjikov, S.T. Mie Scattering. Int. J. Med. Mushrooms 2011. Available online: https://www.thermopedia.com/jp/content/956/ (accessed on 10 May 2021). [CrossRef]

- Pesek, J.; Fiser, O. Automatically low clouds or fog detection, based on two visibility meters and FSO. In Proceedings of the 2013 Conference on Microwave Techniques (COMITE), Pardubice, Czech Republic, 17–18 April 2013; pp. 83–85. [Google Scholar]

- Brazda, V.; Fiser, O.; Rejfek, L. Development of system for measuring visibility along the free space optical link using digital camera. In Proceedings of the 2014 24th International Conference Radioelektronika, Bratislava, Slovakia, 15–16 April 2014; pp. 1–4. [Google Scholar]

- Brazda, V.; Fiser, O. Estimation of fog drop size distribution based on meteorological measurement. In Proceedings of the 2015 Conference on Microwave Techniques (COMITE), Pardubice, Czech Republic, 23–24 April 2008; pp. 1–4. [Google Scholar]

- Ovseník, Ľ.; Turán, J.; Tatarko, M.; Turan, M.; Vásárhelyi, J. Fog sensor system: Design and measurement. In Proceedings of the 13th International Carpathian Control Conference (ICCC), High Tatras, Slovakia, 28–31 May 2012; pp. 529–532. [Google Scholar]

- Sallis, P.; Dannheim, C.; Icking, C.; Maeder, M. Air Pollution and Fog Detection through Vehicular Sensors. In Proceedings of the 2014 8th Asia Modelling Symposium, Taipei, Taiwan, 23–25 September 2014; pp. 181–186. [Google Scholar]

- Kim, Y.-H.; Moon, S.-H.; Yoon, Y. Detection of Precipitation and Fog Using Machine Learning on Backscatter Data from Lidar Ceilometer. Appl. Sci. 2020, 10, 6452. [Google Scholar] [CrossRef]

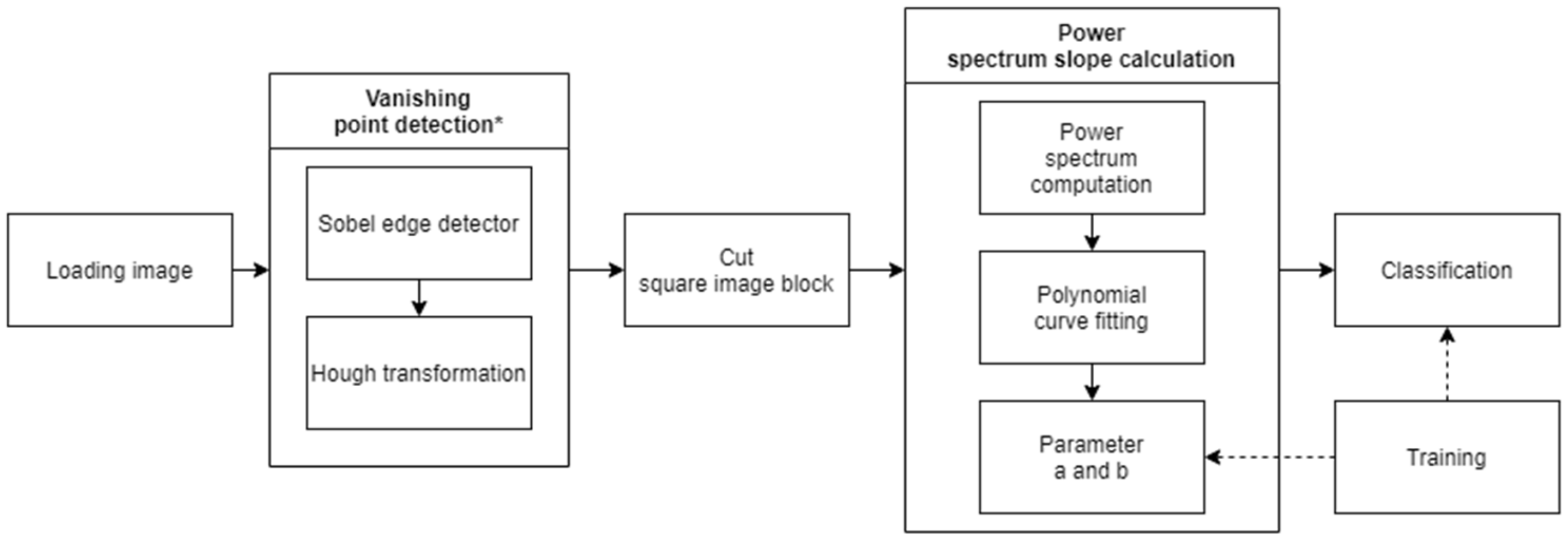

- Pavlic, M.; Belzner, H.; Rigoll, G.; Ilic, S. Image based fog detection in vehicles. In Proceedings of the 2012 IEEE Intelligent Vehicles Symposium, Madrid, Spain, 3–7 June 2012; pp. 1132–1137. [Google Scholar]

- Pavlic, M.; Rigoll, G.; Ilic, S. Classification of images in fog and fog-free scenes for use in vehicles. In Proceedings of the 2013 IEEE Intelligent Vehicles Symposium (IV), Gold Coast City, Australia, 23–26 June 2013. [Google Scholar]

- Spinneker, R.; Koch, C.; Park, S.B.; Yoon, J.J. Fast Fog Detection for Camera Based Advanced Driver Assistance Systems. In Proceedings of the 17th International IEEE Conference on Intelligent Transportation Systems (ITSC), The Hague, The Netherlands, 24–26 September 2014. [Google Scholar]

- Asery, R.; Sunkaria, R.K.; Sharma, L.D.; Kumar, A. Fog detection using GLCM based features and SVM. In Proceedings of the 2016 Conference on Advances in Signal Processing (CASP), Pune, India, 9–11 June 2016; pp. 72–76. [Google Scholar]

- Zhang, D.; Sullivan, T.; O’Connor, N.E.; Gillespie, R.; Regan, F. Coastal fog detection using visual sensing. In Proceedings of the OCEANS 2015, Genova, Italy, 18–21 May 2015. [Google Scholar]

- Alami, S.; Ezzine, A.; Elhassouni, F. Local Fog Detection Based on Saturation and RGB-Correlation. In Proceedings of the 2016 13th International Conference on Computer Graphics, Imaging and Visualization (CGiV), Beni Mellal, Morocco, 29 March–1 April 2016; pp. 1–5. [Google Scholar]

- Gallen, R.; Cord, A.; Hautière, N.; Aubert, D. Method and Device for Detecting Fog at Night. Versailles. France Patent WO 2 012 042 171 A2, 5 April 2012. [Google Scholar]

- Gallen, R.; Cord, A.; Hautière, N.; Dumont, É.; Aubert, D. Night time visibility analysis and estimation method in the presence of dense fog. IEEE Trans. Intell. Transp. Syst. 2015, 16, 310–320. [Google Scholar] [CrossRef]

- Pagani, G.A.; Noteboom, J.W.; Wauben, W. Deep Neural Network Approach for Automatic Fog Detection. In Proceedings of the CIMO TECO, Amsterdam, The Netherlands, 8–16 October 2018. [Google Scholar]

- Li, S.; Fu, H.; Lo, W.-L. Meteorological Visibility Evaluation on Webcam Weather Image Using Deep Learning Features. Int. J. Comput. Theory Eng. 2017, 9, 455–461. [Google Scholar] [CrossRef]

- Chaabani, H.; Kamoun, F.; Bargaoui, H.; Outay, F.; Yasar, A.-U.-H. A Neural network approach to visibility range estimation under foggy weather conditions. Procedia Comput. Sci. 2017, 113, 466–471. [Google Scholar] [CrossRef]

- Meng, G.; Wang, Y.; Duan, J.; Xiang, S.; Pan, C. Efficient image dehazing with boundary constraint and contextual regularization. In Proceedings of the 2013 IEEE International Conference on Computer Vision, Sydney, Australia, 1–8 December 2013. [Google Scholar]

- Cai, B.; Xu, X.; Jia, K.; Qing, C.; Tao, D. DehazeNet: An End-to-End System for Single Image Haze Removal. IEEE Trans. Image Process. 2016, 25, 5187–5198. [Google Scholar] [CrossRef]

- Berman, D.; Treibitz, T.; Avidan, S. Non-local Image Dehazing. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 1674–1682. [Google Scholar]

- Martínez-Domingo, M.Á.; Valero, E.M.; Nieves, J.L.; Molina-Fuentes, P.J.; Romero, J.; Hernández-Andrés, J. Single Image Dehazing Algorithm Analysis with Hyperspectral Images in the Visible Range. Sensors 2020, 20, 6690. [Google Scholar] [CrossRef]

- Hautiere, N.; Tarel, J.P.; Aubert, D.; Dumont, E. Blind Contrast Enhancement Assessment by Gradient Ratioing at Visible Edges. Image Anal. Stereol. 2008, 27, 87–95. [Google Scholar] [CrossRef]

- Luzón-González, R.; Nieves, J.L.; Romero, J. Recovering of weather degraded images based on RGB response ratio constancy. Appl. Opt. 2015, 54, B222–B231. [Google Scholar] [CrossRef]

- Zhang, M.; Ren, J. Driving and image enhancement for CCD sensing image system. In Proceedings of the 2010 3rd International Conference on Computer Science and Information Technology, Chengdu, China, 9–11 July 2010. [Google Scholar]

- Wang, Z.; Bovik, A.C.; Sheikh, H.R.; Simoncelli, E.P. Image quality assessment: From error visibility to structural similarity. IEEE Trans. Image Process. 2004, 13, 600–612. [Google Scholar] [CrossRef]

- Memedi, A.; Dressler, F. Vehicular Visible Light Communications: A Survey. IEEE Commun. Surv. Tutor. 2021, 23, 161–181. [Google Scholar] [CrossRef]

- Eso, E.; Burton, A.; Hassan, N.B.; Abadi, M.M.; Ghassemlooy, Z.; Zvanovec, S. Experimental Investigation of the Effects of Fog on Optical Camera-based VLC for a Vehicular Environment. In Proceedings of the 2019 15th International Conference on Telecommunications (ConTEL), Graz, Austria, 3–5 July 2019; pp. 1–5. [Google Scholar]

- Tian, X.; Miao, Z.; Han, X.; Lu, F. Sea Fog Attenuation Analysis of White-LED Light Sources for Maritime VLC. In Proceedings of the 2019 IEEE International Conference on Computational Electromagnetics (ICCEM), Shanghai, China, 20–22 March 2019; pp. 1–3. [Google Scholar]

- Elamassie, M.; Karbalayghareh, M.; Miramirkhani, F.; Kizilirmak, R.C.; Uysal, M. Effect of Fog and Rain on the Performance of Vehicular Visible Light Communications. In Proceedings of the 2018 IEEE 87th Vehicular Technology Conference (VTC Spring), Porto, Portugal, 3–6 June 2018; pp. 1–6. [Google Scholar]

- Matus, V.; Eso, E.; Teli, S.R.; Perez-Jimenez, R.; Zvanovec, S. Experimentally Derived Feasibility of Optical Camera Communications under Turbulence and Fog Conditions. Sensors 2020, 20, 757. [Google Scholar] [CrossRef]

- Gopalan, R.; Hong, T.; Shneier, M.; Chellappa, R. A Learning Approach towards Detection and Tracking of Lane Markings. IEEE Trans. Intell. Transp. Syst. 2012, 13, 1088–1098. [Google Scholar] [CrossRef]

- Joshy, N.; Jose, D. Improved detection and tracking of lane marking using hough transform. IJCSMC 2014, 3, 507–513. [Google Scholar]

- Liu, Z.; He, Y.; Wang, C.; Song, R. Analysis of the Influence of Foggy Weather Environment on the Detection Effect of Machine Vision Obstacles. Sensors 2020, 20, 349. [Google Scholar] [CrossRef]

- Kim, B.K.; Sumi, Y. Vision-Based Safety-Related Sensors in Low Visibility by Fog. Sensors 2020, 20, 2812. [Google Scholar] [CrossRef]

- Gallen, R.; Hautiere, N.; Cord, A.; Glaser, S. Supporting Drivers in Keeping Safe Speed in Adverse Weather Conditions by Mitigating the Risk Level. IEEE Trans. Intell. Transp. Syst. 2013, 14, 1558–1571. [Google Scholar] [CrossRef]

- Coloma, J.F.; García, M.; Wang, Y.; Monzón, A. Green Eco-Driving Effects in Non-Congested Cities. Sustainability 2018, 10, 28. [Google Scholar] [CrossRef]

- Li, Y.; Hoogeboom, P.; Russchenberg, H. Radar observations and modeling of fog at 35 GHz. In Proceedings of the 8th European Conference on Antennas and Propagation (EuCAP 2014), The Hague, The Netherlands, 6–11 April 2014; pp. 1053–1057. [Google Scholar]

- Liang, X.; Huang, Z.; Lu, L.; Tao, Z.; Yang, B.; Li, Y. Deep Learning Method on Target Echo Signal Recognition for Obscurant Penetrating Lidar Detection in Degraded Visual Environments. Sensors 2020, 20, 3424. [Google Scholar] [CrossRef] [PubMed]

- Miclea, R.-C.; Dughir, C.; Alexa, F.; Sandru, F.; Silea, A. Laser and LIDAR in A System for Visibility Distance Estimation in Fog Conditions. Sensors 2020, 20, 6322. [Google Scholar] [CrossRef] [PubMed]

- Miclea, R.-C.; Silea, I. Visibility Detection in Foggy Environment. In Proceedings of the 2015 20th International Conference on Control Systems and Computer Science, Bucharest, Romania, 27–29 May 2015; pp. 959–964. [Google Scholar]

- Li, L.; Zhang, H.; Zhao, C.; Ding, X. Radiation fog detection and warning system of highway based on wireless sensor networks. In Proceedings of the 2014 IEEE 7th Joint International Information Technology and Artificial Intelligence Conference, Chongqing, China, 20–21 December 2014; pp. 148–152. [Google Scholar]

- Tóth, J.; Ovseník, Ľ.; Turán, J. Free Space Optics—Monitoring Setup for Experimental Link. Carpathian J. Electron. Comput. Eng. 2015, 8, 27–30. [Google Scholar]

- Tsubaki, K. Measurements of fine particle size using image processing of a laser diffraction image. Jpn. J. Appl. Phys. 2016, 55, 08RE08. [Google Scholar] [CrossRef]

- Kumar, T.S.; Pavya, S. Segmentation of visual images under complex outdoor conditions. In Proceedings of the 2014 International Conference on Communication and Signal Processing, Chennai, India, 3–5 April 2014; pp. 100–104. [Google Scholar]

- Han, Y.; Hu, D. Multispectral Fusion Approach for Traffic Target Detection in Bad Weather. Algorithms 2020, 13, 271. [Google Scholar] [CrossRef]

- Ibrahim, M.R.; Haworth, J.; Cheng, T. WeatherNet: Recognising Weather and Visual Conditions from Street-Level Images Using Deep Residual Learning. ISPRS Int. J. Geo-Inf. 2019, 8, 549. [Google Scholar] [CrossRef]

- Qin, H.; Qin, H. Image-Based Dedicated Methods of Night Traffic Visibility Estimation. Appl. Sci. 2020, 10, 440. [Google Scholar] [CrossRef]

- Weston, M.; Temimi, M. Application of a Nighttime Fog Detection Method Using SEVIRI Over an Arid Environment. Remote Sens. 2020, 12, 2281. [Google Scholar] [CrossRef]

- Han, J.-H.; Suh, M.-S.; Yu, H.-Y.; Roh, N.-Y. Development of Fog Detection Algorithm Using GK2A/AMI and Ground Data. Remote Sens. 2020, 12, 3181. [Google Scholar] [CrossRef]

- Landwehr, K.; Brendel, E.; Hecht, H. Luminance and contrast in visual perception of time to collision. Vis. Res. 2013, 89, 18–23. [Google Scholar] [CrossRef] [PubMed]

- Razvan-Catalin, M.; Ioan, S.; Ciprian, D. Experimental Model for validation of anti-fog technologies. In Proceedings of the ITEMA 2017 Recent Advances in Information Technology, Tourism, Economics, Management and Agriculture, Budapest, Hungary, 26 October 2017; pp. 914–922. [Google Scholar]

| Method | Type of Method/Operations | Advantages over Base Solution | Results |
|---|---|---|---|
| Yeh et al. [32] | Addition of two priors: pixel-based dark channel prior and pixel-based bright channel prior | Lower computational complexity | Outperforms or is comparable to the reference implementation |
| Yeh et al. [33] | Addition of two priors: pixel-based dark channel prior and pixel-based bright channel prior | Lower computational complexity | Outperforms or is comparable to the reference implementation |
| Tan [34] | Markov random fields (MRFs) | Does not require the geometrical information of the input image, nor any user interactions | No comparison to reference made |
| Fattal [35] | Surface shading model, color estimation | Provides transmission estimates | No comparison to reference made |
| Huang et al. [36] | Depth estimation module, color analysis module, and visibility restoration | Quality of results increased | Outperforms reference implementation |

| Metric | Dark Channel Prior | Tarel | Meng | DehazeNet | Berman |
|---|---|---|---|---|---|
| e Descriptor | 2 | 5 | 1 | 4 | 3 |
| Gray Mean Gradient | 1 | 4 | 2 | 5 | 3 |
| Standard Deviation | 1 | 5 | 4 | 3 | 2 |
| Entropy | 1 | 5 | 4 | 2 | 3 |
| Peak Signal to Noise Ratio | 5 | 3 | 2 | 1 | 4 |
| Structural Similarity Index Measure | 5 | 2 | 4 | 1 | 3 |

| Survey | Dark Channel Prior | Tarel | Meng | DehazeNet | Berman |
|---|---|---|---|---|---|
| Similarity to haze-free image | 4 | 5 | 1 | 2 | 3 |
| Increase in visibility of the objects | 2 | 5 | 3 | 4 | 1 |

| Traffic Elements | Traffic Situations | Possible Events That Shall Be Analyzed from VLC Perspective and the Influence of Weather Factors (Rain, Fog, Smog, Snow) |
|---|---|---|
| Infrastructure | Accidents | Unexpected, produce traffic jams by blocking road lanes |
| Infrastructure | Road junctions | Poorly marked, can contain obstacles that reduce the visibility |
| Infrastructure | Traffic lights | Faulty functioning, intermittent functioning, not functioning |
| Infrastructure | Traffic signs | Not functioning, there can be obstacles that reduce visibility |
| Vehicles in a junction | Head to Head | Faulty signaling |
| Vehicles in a junction | Head to Tail/Tail to Head | Safety distance is not kept, headlights or rear lights are not working |
| Vehicles in a junction | Left side | Can contain obstacles (such as vegetation) that reduce the visibility, traffic rules are not respected because blinkers are not used |
| Vehicles in a junction | Right side | Can contain obstacles (such as vegetation) that reduce the visibility, traffic rules are not respected because blinkers are not used |
| Parked vehicles | Parking slots | Moving backwards, sometimes simultaneously with other cars |
| Parked vehicles | Roadside parking | Leaving the parking spot |
| Parked vehicles | Stationary vehicles | In forbidden areas, no warning lights, near junctions or crosswalks |
| Pedestrians | Jaywalking | Areas with low visibility and no warning lights |
| Pedestrians | Exiting vehicle | Areas with high traffic load, getting out of the car without ensuring that there are safe circumstances |

Maximum achievable distance for a reliable transmission, by weather condition:

| Pulse Amplitude Modulation Size | Clear | Rain | Fog, V = 50 m | Fog, V = 10 m |
|---|---|---|---|---|
| 2-PAM | 72.21 | 69.13 | 52.85 | 26.93 |
| 8-PAM | 53.23 | 50.98 | 39.17 | 19.98 |
| 32-PAM | 38.73 | 37.11 | 28.71 | 14.66 |
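To make the effect of weather on the figures above easier to compare across modulation sizes, the following sketch expresses each entry as a percentage loss of range relative to the clear-weather value. The numbers are copied from the table (in the table's own units); the dictionary layout and the function name `range_loss_percent` are illustrative assumptions and are not taken from the cited work.

```python
# Maximum achievable distances copied from the table above (units as in the table).
MAX_DISTANCE = {
    "2-PAM": {"Clear": 72.21, "Rain": 69.13, "Fog, V = 50 m": 52.85, "Fog, V = 10 m": 26.93},
    "8-PAM": {"Clear": 53.23, "Rain": 50.98, "Fog, V = 50 m": 39.17, "Fog, V = 10 m": 19.98},
    "32-PAM": {"Clear": 38.73, "Rain": 37.11, "Fog, V = 50 m": 28.71, "Fog, V = 10 m": 14.66},
}


def range_loss_percent(row):
    """Percentage of the clear-weather range lost under each other condition."""
    clear = row["Clear"]
    return {
        condition: 100.0 * (clear - distance) / clear
        for condition, distance in row.items()
        if condition != "Clear"
    }


if __name__ == "__main__":
    for scheme, row in MAX_DISTANCE.items():
        losses = range_loss_percent(row)
        summary = ", ".join(f"{cond}: {loss:.1f}%" for cond, loss in losses.items())
        print(f"{scheme}: {summary}")
```

Normalizing by the clear-weather distance separates the influence of the weather condition from the influence of the modulation size, which the raw distances mix together.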

| Equipment | Components | Communication Link | Roles and Functions |
|---|---|---|---|
| Sensor Terminal | Visibility sensor/fog sensor, wireless sensor network terminal | Wireless sensor network | Collects data from the environment and sends them to the local controller station |
| Local Controller Station | 3G module, satellite module | | Processes information from the detector and alerts when pre-defined thresholds are reached |
| Remote Station | | 3G and satellite links | Informs drivers about the visibility conditions in a specific area |

| Method Group | Method | Computation Complexity | Availability on Vehicles | Data Processing Speed | Day/Night Use | Real-Time Use | Result Distribution | Reliability | Link to Visual Accuracy |
|---|---|---|---|---|---|---|---|---|---|
| Image dehazing | Koschmieder’s law [22,23,24,25,26,27,28,29,30] | Medium/High | Partial (camera) | Medium | Daytime only | Yes | Local for 1 user | No (not for all inputs) | Yes |
| | Dark channel prior [31,32,33,34,35,36,37,38,39,40,41,42,43,44,45,46,47,48] | High | Partial (camera) | Medium | Daytime only | Yes | Local for 1 user | No (not for all inputs) | Yes |
| | Dark channel prior integrated in SIDE [49] | High | Partial (camera) | Medium | Both | Yes | Local for 1 user | Yes | Yes |
| | Image segmentation using single input image [50,51,52,53] | High | Partial (camera) | Low | Daytime only | No | Local for 1 user | No | Yes |
| | Image segmentation using multiple input images [54,55,56] | High | Partial (camera) | Medium | Daytime only | Yes (notify drivers) | Local for many users (highways) | No (not for all cases) | Yes |
| | Learning-based methods I [57,58,59,60] | High | Partial (camera) | Medium | Daytime only | No | Local for many users (highways) | Depends on the training data | No |
| | Learning-based methods II [61] | High | No | Medium | Daytime only | No | Large area | Depends on the training data | Yes |
| | Learning-based methods III [62,63] | High | Partial (camera) | Medium | Daytime only | No | Local for 1 user | Depends on the training data | Yes |
| | Learning-based methods IV [64] | High | Partial (camera + extra hardware) | High | Daytime only | Yes | Local for 1 user | Depends on the training data | Yes |
| | Learning-based methods V [65] | High | Partial (camera) | High | Both | Yes | Local for 1 user | Depends on the training data | Yes |
| Fog detection and visibility estimation | Direct transmission measurement [8,69,70,71] | Low | No | High | Both | Yes | Local for many users (highways) | Yes | No (still needs to be proven) |
| | Backscattering measurement I [9,10,11,12,72,73] | Low | Partial (LIDAR) | High | Both | Yes | Local for 1 or many users | Yes | No (still needs to be proven) |
| | Backscattering measurement II [74] | Medium | No | Medium | Both | Yes | Local for 1 or many users | No | Yes |
| | Global feature image-based analysis [75,76,77,78,79,80,81,82,83,84,85] | Medium | Partial (camera) | Low | Both | No | Local for 1 user | No | Yes |
| Sensors and systems | Camera + LIDAR [12] | High | Partial (high-end vehicles) | High | Both | Yes | Local for 1 or many users | Yes | Yes |
| | Learning-based methods + LIDAR [106] | High | Partial (LIDAR) | Medium | Both | Yes | Local for 1 user | Depends on the training data | Yes |
| | Radar [80] | Medium | Partial (high-end vehicles) | High | Both | Yes | Local for 1 or many users | No (needs to be proven in complex scenarios) | Yes |
| | Highway static system (laser) [108] | Medium | No (static system) | Medium | Both | Yes | Local (can be extended to a larger area) | Yes | No (still needs to be proven) |
| | Motion detection static system [112] | Medium | No (static system) | Medium | Daytime only | Yes | Local for 1 or many users | No (not for all cases) | Yes |
| | Camera-based static system [113,114,115] | High | No (static system) | Medium | Both | Yes | Local for 1 or many users | Depends on the training data | Yes |
| | Satellite-based system I [116] | High | No (satellite-based system) | Medium | Nighttime only | Yes | Large area | Yes | Yes |
| | Satellite-based system II [117] | High | No (satellite-based system) | Medium | Both | Yes | Large area | Yes | Yes |
| | Wireless sensor network [109] | High | No (static system) | Medium | Both | Yes | Large area | No (not tested in real conditions) | No |
| | Visibility meter (camera) [69,70] | Medium | - | Medium | Daytime only | No | Local for many users (highways) | No (not tested in real conditions) | No |
| | Fog sensor (LWC, particle surface, visibility) [71] | Medium | No (PVM-100) | Medium | Both | - | Local for many users (highways) | No (error rate ~20%) | No |
| | Fog sensor (density, temperature, humidity) [9,72] | Medium | No | Low | Both | No | Local for many users (highways) | No | No |
| | Fog sensor (particle size: laser and camera) [107,110] | High | Partial (high-end vehicles) | High | Daytime only | No | Local for many users (highways) | No | No |
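A comparison of this kind lends itself to simple filtering when shortlisting candidate systems. The sketch below is only an illustration: it encodes a handful of sensor and system rows from the table above, with qualifiers in parentheses dropped, and the class `SystemRow`, the list `ROWS`, and the function `shortlist` are hypothetical names introduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemRow:
    """One row of the comparison table, reduced to three criteria."""
    name: str
    day_night: str   # "Both", "Day", or "Night", as in the table
    real_time: str   # "Yes" or "No"
    reliable: str    # "Yes" or "No" (parenthetical qualifiers omitted)


# A subset of rows copied from the table above (illustrative, not exhaustive).
ROWS = [
    SystemRow("Camera + LIDAR [12]", "Both", "Yes", "Yes"),
    SystemRow("Radar [80]", "Both", "Yes", "No"),
    SystemRow("Highway static system (laser) [108]", "Both", "Yes", "Yes"),
    SystemRow("Motion detection static system [112]", "Day", "Yes", "No"),
    SystemRow("Satellite-based system I [116]", "Night", "Yes", "Yes"),
    SystemRow("Satellite-based system II [117]", "Both", "Yes", "Yes"),
    SystemRow("Wireless sensor network [109]", "Both", "Yes", "No"),
]


def shortlist(rows):
    """Names of rows usable day and night, in real time, and rated reliable."""
    return [
        row.name
        for row in rows
        if row.day_night == "Both"
        and row.real_time == "Yes"
        and row.reliable == "Yes"
    ]


if __name__ == "__main__":
    for name in shortlist(ROWS):
        print(name)
```

The three criteria used here are an arbitrary choice for the example; other columns of the table, such as availability on vehicles or result distribution, could be added to the record and the filter in the same way.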

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Miclea, R.-C.; Ungureanu, V.-I.; Sandru, F.-D.; Silea, I. Visibility Enhancement and Fog Detection: Solutions Presented in Recent Scientific Papers with Potential for Application to Mobile Systems. Sensors 2021, 21, 3370. https://doi.org/10.3390/s21103370
