Correlations in Joint Spectral and Polarization Imaging

Abstract

1. Introduction

2. Joint Spectral and Polarization Imaging

2.1. Filter Array Imaging

2.2. Reflection Model

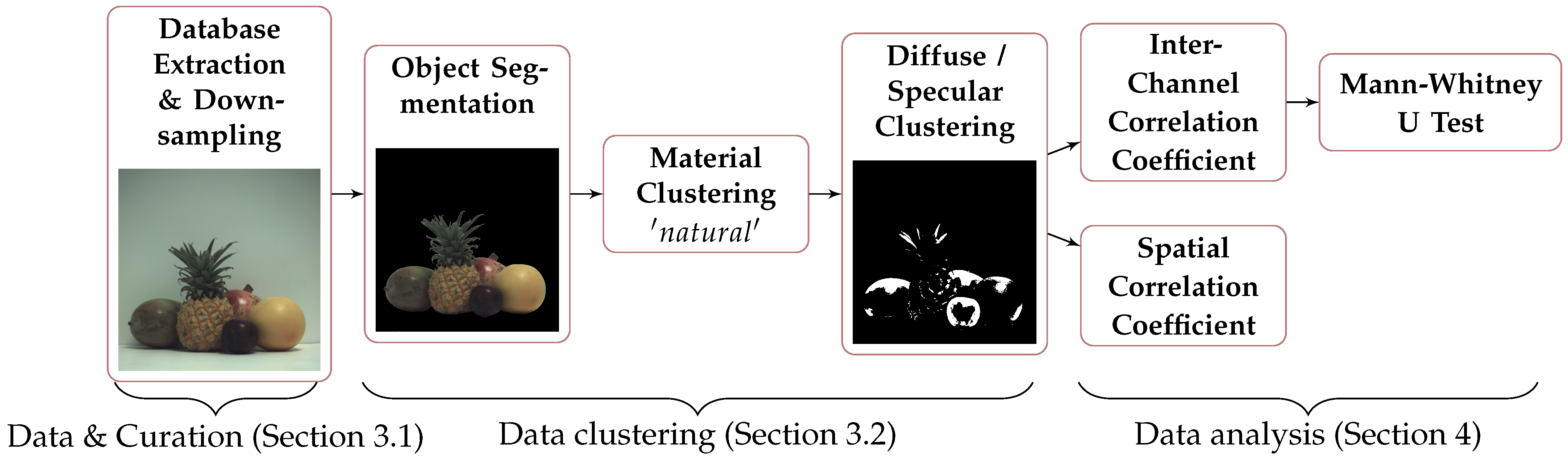

3. Experimental Protocol

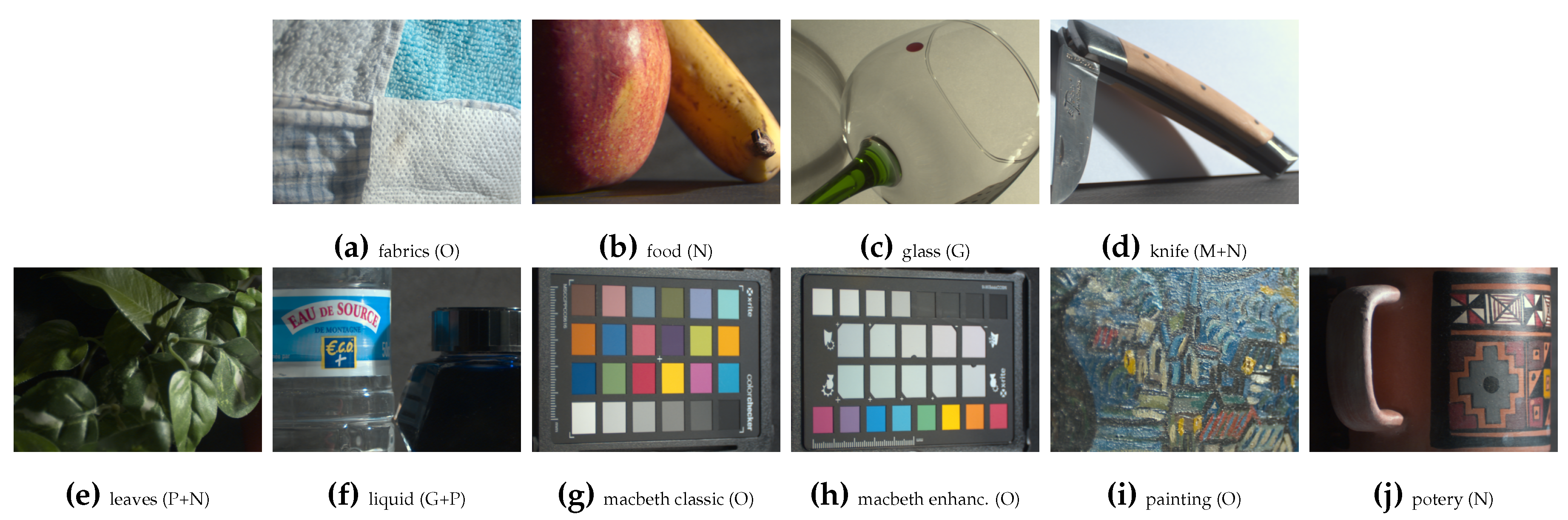

3.1. Data & Curation

3.2. Data Clustering

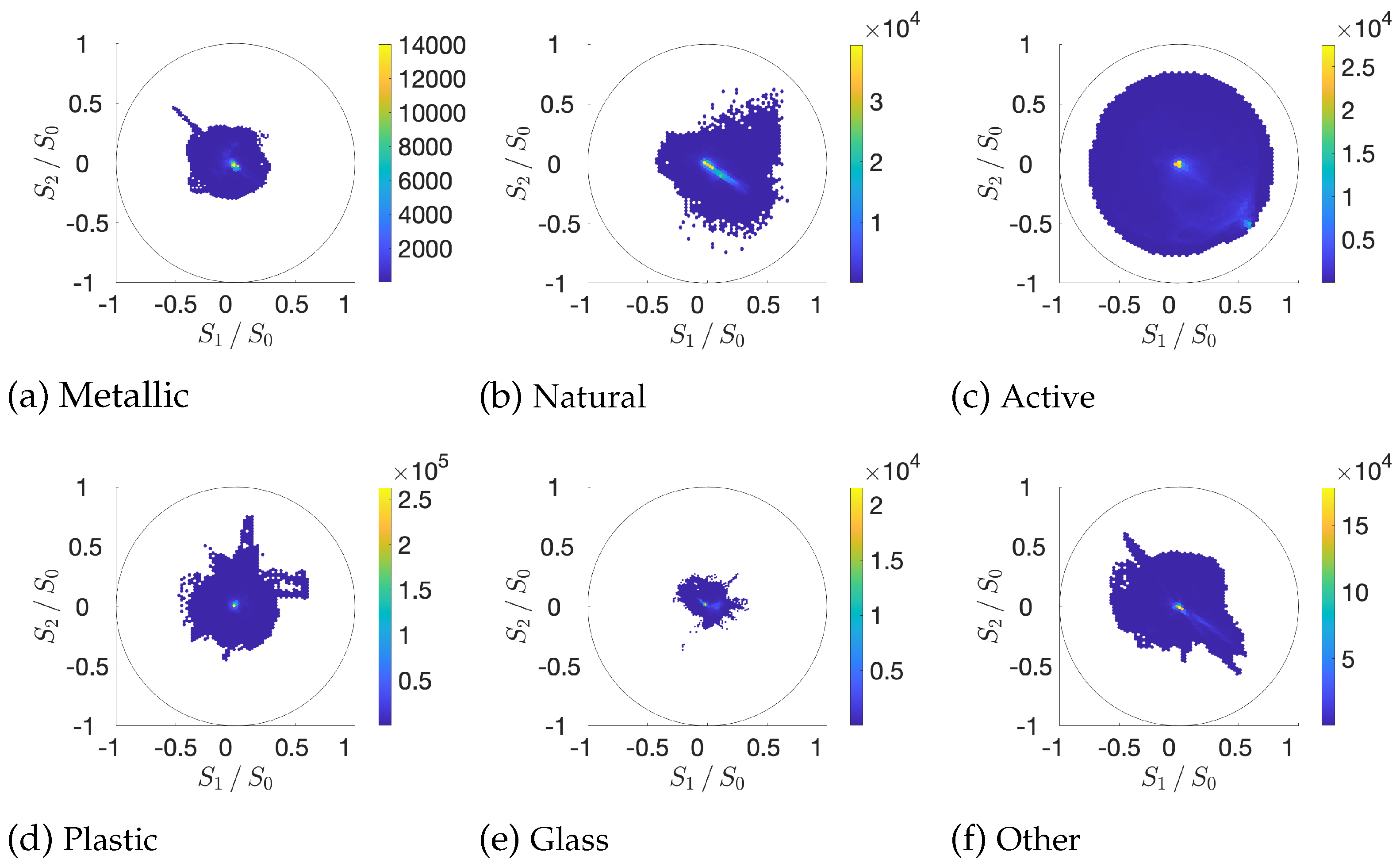

3.3. Global Visualization of Data

4. Data Analysis

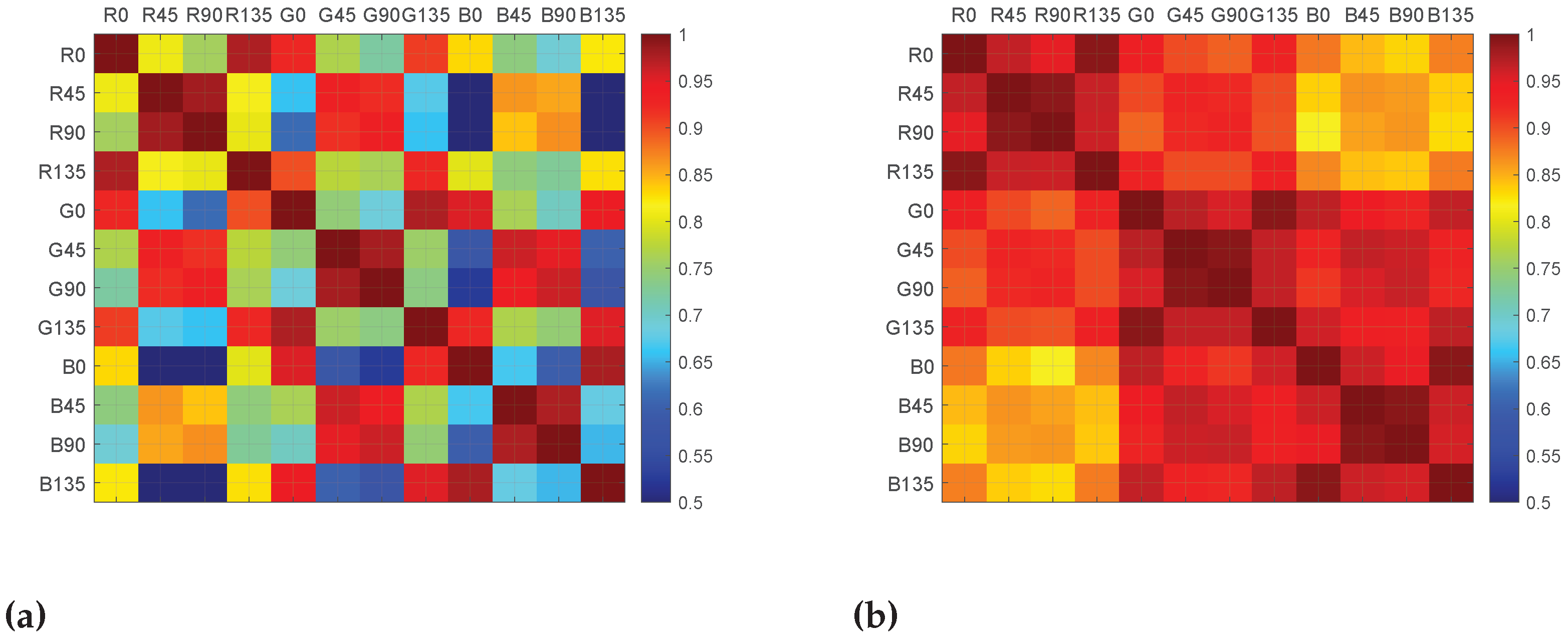

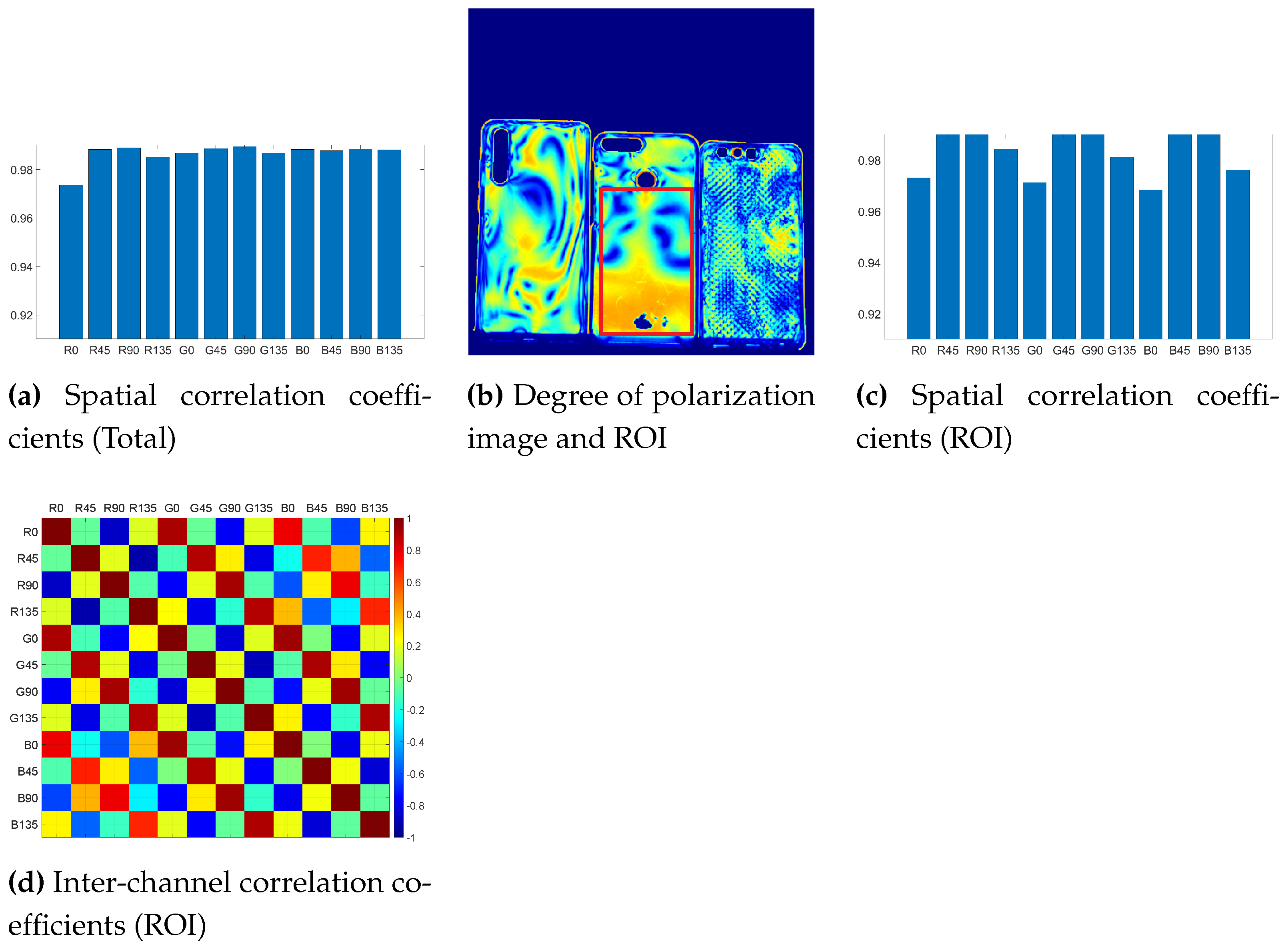

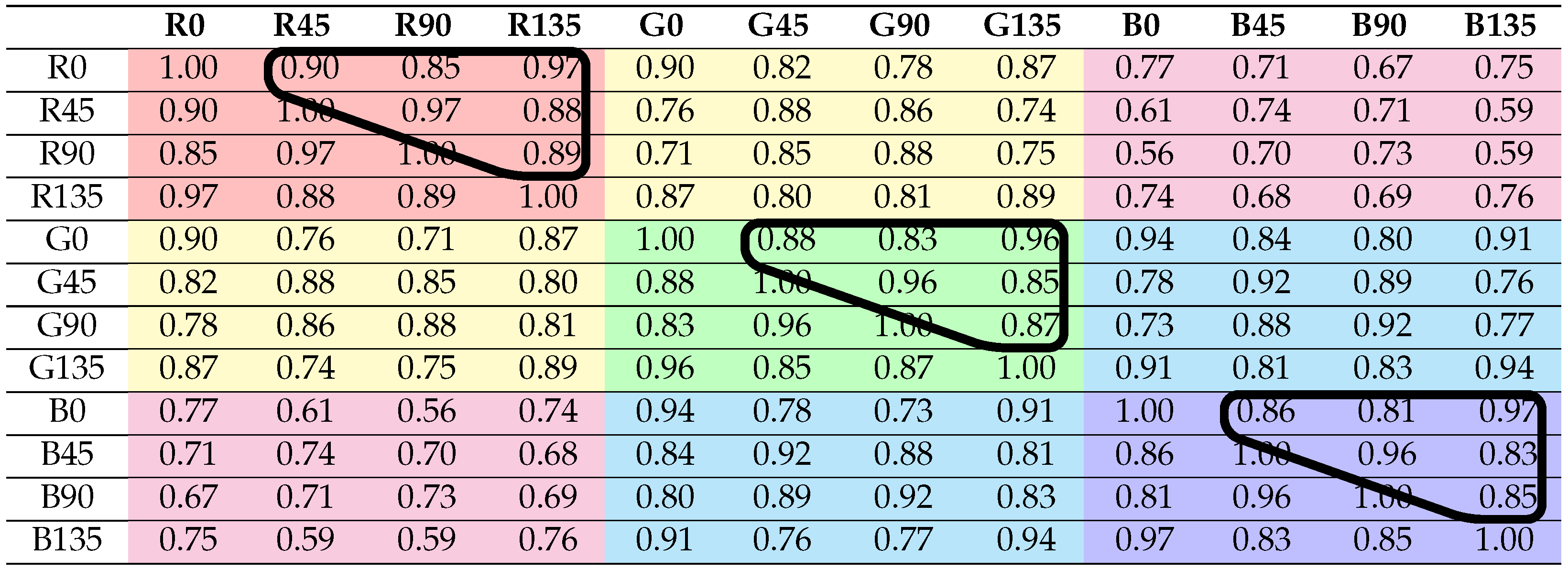

4.1. Inter-Channel Correlation

4.2. Spatial Correlation

4.3. Mann–Whitney U (MWU)

4.4. Impact on the Development of Spectropolarization Computational Imaging Solutions

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Fymat, A.L. Polarization Effects in Fourier Spectroscopy. I: Coherency Matrix Representation. Appl. Opt. 1972, 11, 160–173.

- Nelson, R.D.; Leaird, D.E.; Weiner, A.M. Programmable polarization-independent spectral phase compensation and pulse shaping. Opt. Express 2003, 11, 1763–1769.

- Tompkins, H.; Irene, E.A. Handbook of Ellipsometry; William Andrew: Norwich, NY, USA, 2005.

- Collins, R.W.; Koh, J. Dual rotating-compensator multichannel ellipsometer: Instrument design for real-time Mueller matrix spectroscopy of surfaces and films. J. Opt. Soc. Am. A 1999, 16, 1997–2006.

- Sattar, S.; Lapray, P.J.; Foulonneau, A.; Bigué, L. Review of spectral and polarization imaging systems. In Proceedings of the Unconventional Optical Imaging II, online, 6–10 April 2020.

- Nayar, S.K.; Fang, X.S.; Boult, T. Separation of reflection components using color and polarization. Int. J. Comput. Vis. 1997, 21, 163–186.

- Martin, J.A.; Gross, K.C. Estimating index of refraction from polarimetric hyperspectral imaging measurements. Opt. Express 2016, 24, 17928–17940.

- Fyffe, G.; Debevec, P. Single-Shot Reflectance Measurement from Polarized Color Gradient Illumination. In Proceedings of the 2015 IEEE International Conference on Computational Photography (ICCP), Houston, TX, USA, 24–26 April 2015; pp. 1–10.

- Riviere, J.; Reshetouski, I.; Filipi, L.; Ghosh, A. Polarization Imaging Reflectometry in the Wild. ACM Trans. Graph. 2017, 36.

- Bayer, B.E. Color Imaging Array. U.S. Patent 3,971,065, 20 July 1976.

- Ramanath, R.; Snyder, W.E.; Qi, H. Mosaic multispectral focal plane array cameras. In Proceedings of the Infrared Technology and Applications XXX, Orlando, FL, USA, 12–16 April 2004; pp. 701–712.

- Lapray, P.J.; Wang, X.; Thomas, J.B.; Gouton, P. Multispectral Filter Arrays: Recent Advances and Practical Implementation. Sensors 2014, 14, 21626–21659.

- Rust, D.M. Integrated Dual Imaging Detector. U.S. Patent 5,438,414, 1 August 1995.

- Sony. Polarization Image Sensor. Available online: https://www.sony-semicon.co.jp/e/products/IS/industry/product/polarization.html (accessed on 19 December 2020).

- Chun, C.S.; Fleming, D.L.; Torok, E. Polarization-sensitive thermal imaging. In Proceedings of the SPIE's International Symposium on Optical Engineering and Photonics in Aerospace Sensing, Orlando, FL, USA, 4–8 April 1994; pp. 275–286.

- Okawa, T.; Ooki, S.; Yamajo, H.; Kawada, M.; Tachi, M.; Goi, K.; Yamasaki, T.; Iwashita, H.; Nakamizo, M.; Ogasahara, T.; et al. A 1/2 inch 48M All PDAF CMOS Image Sensor Using 0.8 μm Quad Bayer Coding 2×2 OCL with 1.0 lux Minimum AF Illuminance Level. In Proceedings of the 2019 IEEE International Electron Devices Meeting (IEDM), San Francisco, CA, USA, 7–11 December 2019.

- Lapray, P.J.; Thomas, J.B.; Gouton, P. High Dynamic Range Spectral Imaging Pipeline For Multispectral Filter Array Cameras. Sensors 2017, 17, 1281.

- Gunturk, B.K.; Altunbasak, Y.; Mersereau, R.M. Color plane interpolation using alternating projections. IEEE Trans. Image Process. 2002, 11, 997–1013.

- Mihoubi, S.; Losson, O.; Mathon, B.; Macaire, L. Multispectral Demosaicing Using Pseudo-Panchromatic Image. IEEE Trans. Comput. Imaging 2017, 3, 982–995.

- Mizutani, J.; Ogawa, S.; Shinoda, K.; Hasegawa, M.; Kato, S. Multispectral demosaicking algorithm based on inter-channel correlation. In Proceedings of the 2014 IEEE Visual Communications and Image Processing Conference, Valletta, Malta, 7–10 December 2014; pp. 474–477.

- Jaiswal, S.P.; Fang, L.; Jakhetiya, V.; Pang, J.; Mueller, K.; Au, O.C. Adaptive Multispectral Demosaicking Based on Frequency-Domain Analysis of Spectral Correlation. IEEE Trans. Image Process. 2017, 26, 953–968.

- Mihoubi, S.; Lapray, P.J.; Bigué, L. Survey of Demosaicking Methods for Polarization Filter Array Images. Sensors 2018, 18, 3688.

- Zhang, J.; Luo, H.; Hui, B.; Chang, Z. Image interpolation for division of focal plane polarimeters with intensity correlation. Opt. Express 2016, 24, 20799–20807.

- Xu, X.; Kulkarni, M.; Nehorai, A.; Gruev, V. A correlation-based interpolation algorithm for division-of-focal-plane polarization sensors. In Proceedings of the Polarization: Measurement, Analysis, and Remote Sensing X, Baltimore, MD, USA, 23–27 April 2012.

- Stokes, G.G. On the Composition and Resolution of Streams of Polarized Light from different Sources. In Mathematical and Physical Papers; Cambridge University Press: Cambridge, UK, 2009; Volume 3, pp. 233–258.

- Tyo, J.S.; Goldstein, D.L.; Chenault, D.B.; Shaw, J.A. Review of passive imaging polarimetry for remote sensing applications. Appl. Opt. 2006, 45, 5453–5469.

- Poincaré, H. Théorie mathématique de la lumière II.: Nouvelles études sur la diffraction.–Théorie de la dispersion de Helmholtz. Leçons professées pendant le premier semestre 1891–1892; Wentworth Press: Sydney, Australia, 1889; Volume 1. (In French)

- Gil, J.J. Review on Mueller matrix algebra for the analysis of polarimetric measurements. J. Appl. Remote Sens. 2014, 8, 1–37.

- Shafer, S.A. Using color to separate reflection components. Color Res. Appl. 1985, 10, 210–218.

- Tominaga, S.; Kimachi, A. Polarization imaging for material classification. Opt. Eng. 2008, 47, 123201.

- Qiu, S.; Fu, Q.; Wang, C.; Heidrich, W. Polarization Demosaicking for Monochrome and Color Polarization Focal Plane Arrays. In Proceedings of the International Symposium on Vision, Modeling and Visualization, Rostock, Germany, 30 September–2 October 2019.

- Lapray, P.J.; Gendre, L.; Foulonneau, A.; Bigué, L. Database of polarimetric and multispectral images in the visible and NIR regions. In Proceedings of the Unconventional Optical Imaging, Strasbourg, France, 22–26 April 2018; pp. 666–679.

- Wen, S.; Zheng, Y.; Lu, F.; Zhao, Q. Convolutional demosaicing network for joint chromatic and polarimetric imagery. Opt. Lett. 2019, 44, 5646–5649.

- Smith, D.G.; Smith, C. Photoelastic determination of mixed mode stress intensity factors. Eng. Fract. Mech. 1972, 4, 357–366.

- Park, J.S.; Chung, M.S.; Hwang, S.B.; Lee, Y.S.; Har, D.H. Technical report on semiautomatic segmentation using the Adobe Photoshop. J. Digit. Imaging 2005, 18, 333–343.

- Born, M.; Wolf, E. Principles of Optics: Electromagnetic Theory of Propagation, Interference and Diffraction of Light; Elsevier: Amsterdam, The Netherlands, 2013.

- Goldstein, D.H. Polarized Light; CRC Press: Boca Raton, FL, USA, 2003.

- Bravais, A. Analyse mathématique sur les probabilités des erreurs de situation d’un point; Impr. Royale: Paris, France, 1844. (In French)

- Pearson, K. VII. Note on regression and inheritance in the case of two parents. Proc. R. Soc. Lond. 1895, 58, 240–242.

- Press, W.H.; Teukolsky, S.A.; Vetterling, W.T.; Flannery, B.P. Numerical Recipes 3rd Edition: The Art of Scientific Computing; Cambridge University Press: Cambridge, UK, 2007.

- Courtier, G.; Lapray, P.J.; Thomas, J.B.; Farup, I. Correlations in Joint Spectral and Polarization Imaging. Available online: https://figshare.com/s/edeb5e972905e7657a43 (accessed on 19 December 2020).

- Mann, H.B.; Whitney, D.R. On a test of whether one of two random variables is stochastically larger than the other. Ann. Math. Stat. 1947, 18, 50–60.

| Database | Num. Scenes | Full Resolution | Pre-Processing | Spectral Sensing | Polarization Sensing |
|---|---|---|---|---|---|
| Qiu et al. [31] | 40 | | Averaging 100 images, pixel binning | Bayer RGB sensor (CMOSIS CMV4000-3E5) | Rotated linear polarizer (Thorlabs WP25M-VIS) |
| Lapray et al. [32] | 10 | | Linearization, FPN, PRNU | 6-band: bandpass filters and Bayer RGB sensor (JAI AD-080GE camera) | Rotated linear polarizer (Newport 10LP-VIS-B) |

| Material / Reflection | Scene S | Scene P | Object S | Object P | Diffuse S | Diffuse P | Specular S | Specular P |
|---|---|---|---|---|---|---|---|---|
| Total | 0.91 | 0.81 | 0.86 | 0.89 | 0.92 | 1.00 | 0.80 | 0.81 |
| Total ∖ {Active} | 0.92 | 0.97 | 0.86 | 0.95 | 0.92 | 1.00 | 0.80 | 0.91 |
| Metallic | - | - | 0.99 | 0.96 | 0.98 | 0.99 | 0.98 | 0.92 |
| Natural | - | - | 0.86 | 0.96 | 0.91 | 1.00 | 0.84 | 0.97 |
| Active | - | - | 0.90 | 0.22 | 0.97 | 0.99 | 0.88 | 0.05 |
| Plastic | - | - | 0.89 | 0.98 | 0.88 | 1.00 | 0.88 | 0.93 |
| Glass | - | - | 0.97 | 0.98 | 0.89 | 0.98 | 0.98 | 0.98 |
| Others | - | - | 0.85 | 0.92 | 0.95 | 1.00 | 0.75 | 0.83 |
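The S and P columns above pair naturally with the Bravais and Pearson entries in the reference list. As a minimal sketch, and assuming (the extracted table does not say so) that each cell is a Pearson coefficient averaged over channel pairs, the computation could look like the following. The function names and the sample intensities are hypothetical and only illustrate the arithmetic; they are not the authors' code or data.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for a constant channel")
    return cov / math.sqrt(vx * vy)


def mean_pairwise_correlation(channels):
    """Average Pearson coefficient over all distinct pairs of channels."""
    names = sorted(channels)
    pairs = [(a, b) for i, a in enumerate(names) for b in names[i + 1:]]
    if not pairs:
        raise ValueError("need at least two channels")
    return sum(pearson(channels[a], channels[b]) for a, b in pairs) / len(pairs)


# Hypothetical flattened pixel intensities for three polarizer angles
# (illustrative values only, not taken from either database).
polar = {
    "I0": [10.0, 12.0, 15.0, 20.0, 26.0],
    "I45": [11.0, 13.5, 15.5, 21.0, 27.5],
    "I90": [9.0, 11.0, 14.5, 19.0, 25.0],
}
r_polar = mean_pairwise_correlation(polar)
```

The same helper would apply unchanged to a dictionary of spectral bands, which is what makes a side-by-side S versus P comparison straightforward under this assumption.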

| Material / Reflection | Scene p-Value | Scene h | Scene Max | Object p-Value | Object h | Object Max | Diffuse p-Value | Diffuse h | Diffuse Max | Specular p-Value | Specular h | Specular Max |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Total | | 0 | S | | 0 | P | | 1 | P | | 0 | P |
| Total ∖ {Active} | | 1 | P | | 1 | P | | 1 | P | | 1 | P |
| Metallic | - | - | - | | 1 | S | | 1 | P | | 1 | S |
| Natural | - | - | - | | 1 | P | | 1 | P | | 1 | P |
| Active | - | - | - | | 1 | S | | 1 | P | | 1 | S |
| Plastic | - | - | - | | 1 | P | | 1 | P | | 1 | P |
| Glass | - | - | - | | 1 | P | | 1 | P | | 0 | P |
| Other | - | - | - | | 0 | P | | 1 | P | | 0 | P |
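The p-Value, h, and Max columns above follow the usual shape of a two-sample Mann–Whitney U report. The sketch below is one hedged reading of those columns, not the authors' procedure: it assumes that h is 1 when the null hypothesis is rejected, that the significance level is 0.05, and that Max names the sample with the larger median. It uses the normal approximation without tie or continuity correction, so it is only appropriate for moderately large samples. All names and sample values are hypothetical.

```python
import math
from statistics import median


def average_ranks(values):
    """1-based ranks of values; tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def mann_whitney_u(spectral, polar, alpha=0.05):
    """Two-sided MWU test via the normal approximation.

    Returns (p_value, h, larger): h is 1 if the null hypothesis is
    rejected at level alpha (assumed 0.05), and larger is "S" or "P"
    depending on which sample has the larger median (assumed meaning
    of the Max column).
    """
    n1, n2 = len(spectral), len(polar)
    ranks = average_ranks(list(spectral) + list(polar))
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    mu = n1 * n2 / 2
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)  # no tie correction
    z = (u1 - mu) / sigma
    p_value = math.erfc(abs(z) / math.sqrt(2))
    h = 1 if p_value < alpha else 0
    larger = "S" if median(spectral) > median(polar) else "P"
    return p_value, h, larger


# Hypothetical per-image correlation coefficients (illustrative only).
s_corr = [0.71, 0.74, 0.78, 0.80, 0.83, 0.85, 0.86, 0.88]
p_corr = [0.90, 0.91, 0.93, 0.95, 0.96, 0.97, 0.98, 0.99]
p_value, h, larger = mann_whitney_u(s_corr, p_corr)
```

A rank-based test of this kind makes no normality assumption about the coefficients, which is a common reason to prefer it over a t-test when the values cluster near 1.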

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Courtier, G.; Lapray, P.-J.; Thomas, J.-B.; Farup, I. Correlations in Joint Spectral and Polarization Imaging. Sensors 2021, 21, 6. https://doi.org/10.3390/s21010006
