Refining Network Lifetime of Wireless Sensor Network Using Energy-Efficient Clustering and DRL-Based Sleep Scheduling

Abstract

1. Introduction

- Energy consumption is still a major problem in WSNs. Most existing works concentrate on clustering to reduce energy consumption; however, they lack a mechanism for selecting the optimal cluster head, which reduces data aggregation efficiency.

- The energy consumption of sensor nodes is high due to ineffective duty cycling.

- Scheduling schemes that consider too few parameters lead to frequent sensor node deaths in the network.

- Network delay is high because paths are selected based on distance and energy alone.

- To reduce energy consumption during data aggregation through efficient cluster head selection and clustering in WSN.

- To schedule the state of each individual sensor node so as to reduce energy consumption.

- To find an optimal path that delivers the sensed data packet to the sink with low routing overhead and delay.

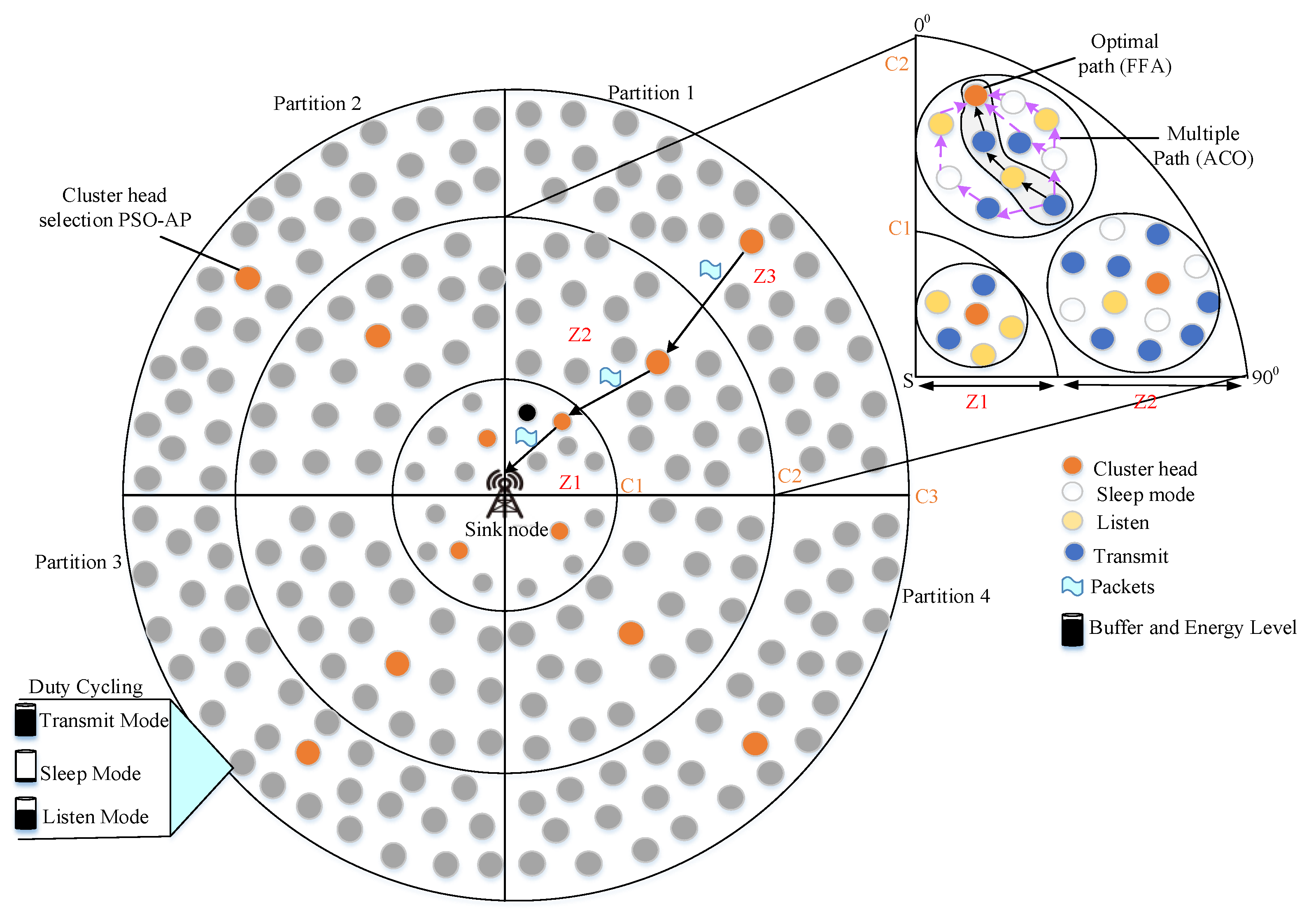

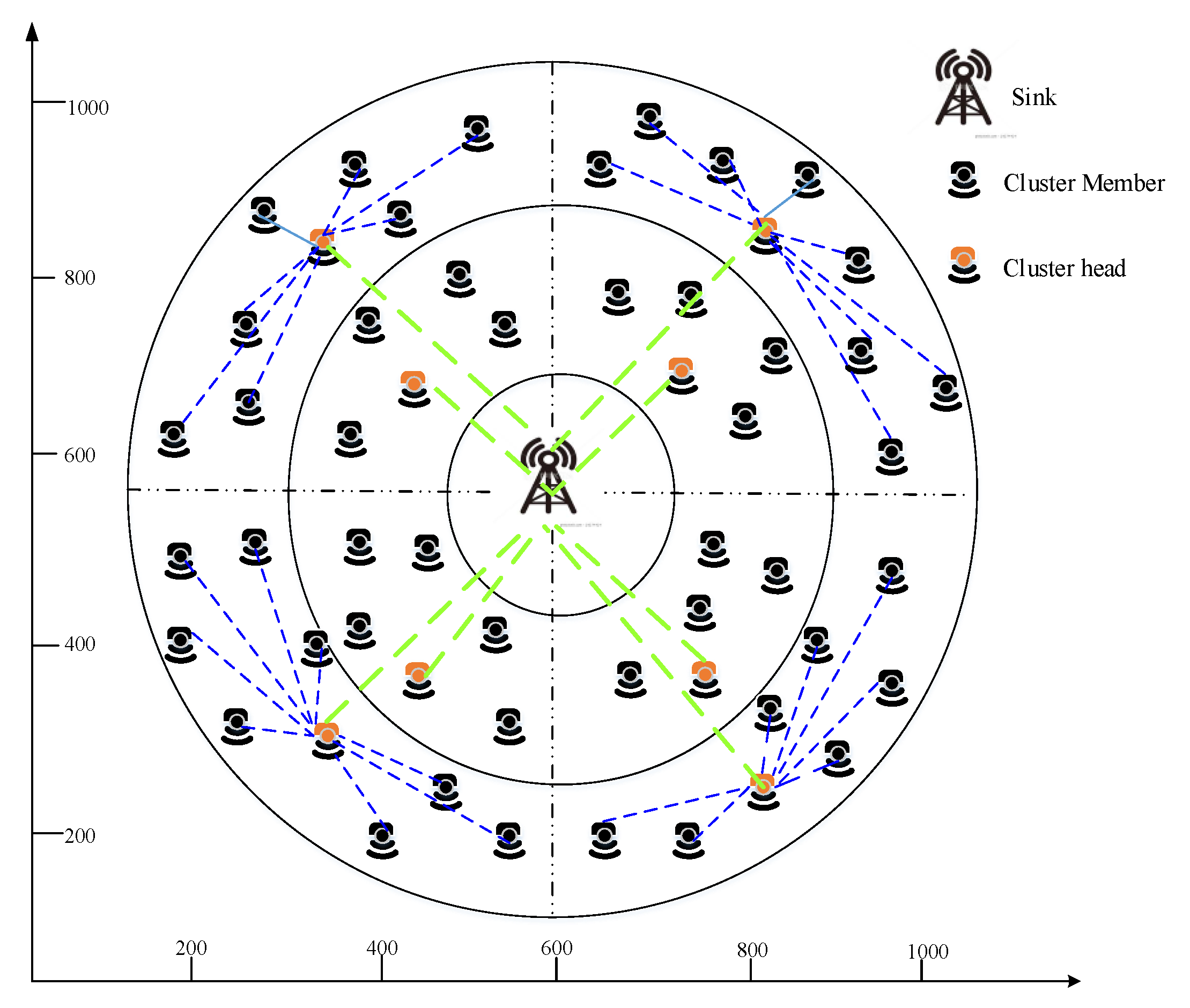

- We deploy our sensor nodes in a corona-based field to aggregate data from all sensor nodes effectively, and we further split the coronas into zones to reduce energy consumption. To improve network lifetime, our work proceeds in three phases: Zone-based Clustering (ZbC), duty cycling, and routing.

- The first phase reduces energy consumption during data aggregation through the ZbC scheme, which combines Particle Swarm Optimization (PSO) with the Affinity Propagation (AP) algorithm. PSO computes a fitness function from two metrics: the energy factor and node degree. The energy factor combines the residual energy and the distance between the source and sink nodes.
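The zone assignment and the fitness metrics described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' formulation: it assumes the sink sits at the centre of the 1000 × 1000 m field from the simulation table, that coronas are concentric rings of equal width (set here to the 100 m communication range) cut into equal angular zones, and that the energy factor and the fitness are equal-weight sums normalised by the 1200 J initial energy and the field diagonal. The names `corona_and_zone`, `node_degree`, and `fitness`, the four-zone default, and all weights are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Node:
    x: float
    y: float
    residual_energy: float  # joules


def corona_and_zone(node, sink=(500.0, 500.0), corona_width=100.0, num_zones=4):
    """Hypothetical layout: equal-width rings around the sink, cut into equal sectors."""
    dx, dy = node.x - sink[0], node.y - sink[1]
    corona = int(math.hypot(dx, dy) // corona_width)   # ring 0 is nearest the sink
    angle = math.atan2(dy, dx) % (2 * math.pi)         # angle mapped into [0, 2*pi)
    zone = int(angle // (2 * math.pi / num_zones)) % num_zones
    return corona, zone


def node_degree(node, nodes, comm_range=100.0):
    """Count the other nodes within communication range of `node`."""
    return sum(1 for other in nodes
               if other is not node
               and math.hypot(other.x - node.x, other.y - node.y) <= comm_range)


def fitness(node, nodes, sink=(500.0, 500.0), initial_energy=1200.0,
            max_dist=1000.0 * math.sqrt(2), w_energy=0.5, w_degree=0.5):
    """Hypothetical fitness in [0, 1]: energy factor plus normalised node degree."""
    dist = math.hypot(node.x - sink[0], node.y - sink[1])
    # Energy factor: more residual energy and a shorter distance to the sink both help.
    energy_factor = (0.5 * node.residual_energy / initial_energy
                     + 0.5 * (1.0 - dist / max_dist))
    degree = node_degree(node, nodes) / max(1, len(nodes) - 1)
    return w_energy * energy_factor + w_degree * degree


# Example: pick the fittest node in each (corona, zone) cell.
nodes = [Node(500.0, 500.0, 1200.0), Node(550.0, 500.0, 1200.0),
         Node(900.0, 900.0, 300.0)]
cells = {}
for node in nodes:
    cells.setdefault(corona_and_zone(node), []).append(node)
heads = {cell: max(members, key=lambda m: fitness(m, nodes))
         for cell, members in cells.items()}
```

Grouping by cell first keeps each fitness comparison local to a zone, which mirrors the idea of splitting coronas into zones before clustering.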

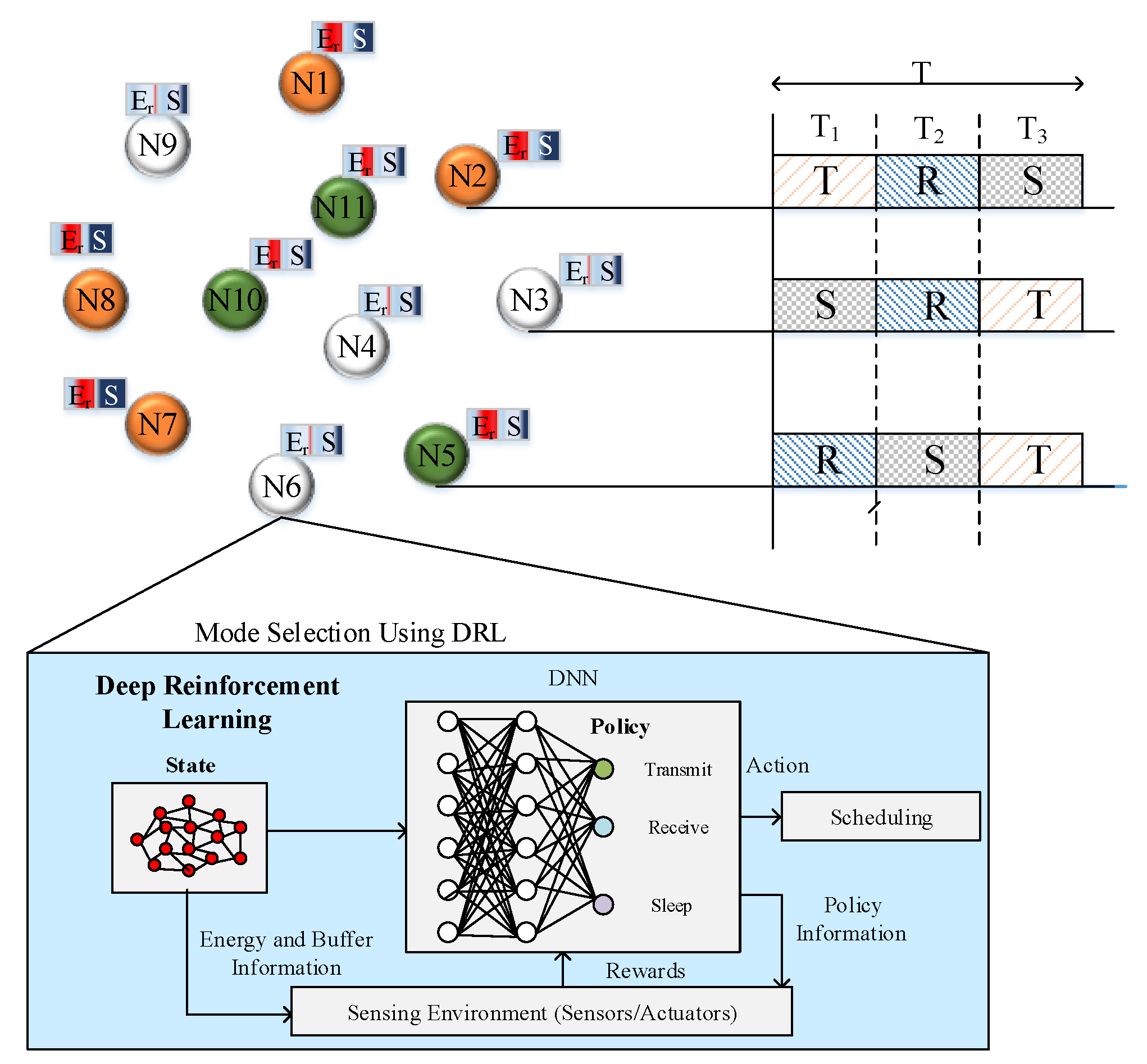

- The second phase enhances network lifetime through duty cycling. Duty cycling is performed using a Deep Reinforcement Learning (DRL) algorithm that schedules each sensor node in a distributed manner. In each scheduling slot, a node operates in one of three modes: sleep, listen, or transmit.

- In the last phase, routing is performed to reduce data transmission delay by finding the best path between the source and the cluster head. The Ant Colony Optimization (ACO) algorithm selects multiple candidate paths between the source and the cluster head; its fitness function considers residual energy, the distance between the source and destination nodes, hop count, and bandwidth. From these candidate paths, the firefly algorithm (FFA) selects the best path, considering expected delay, packet delivery ratio, and load.

2. Related Works

3. Proposed Work

3.1. Clustering Phase

3.1.1. PSO based Exemplar Selection

- (i) Initialization

- (ii) Fitness function computation

- (I) Node degree

- (II) Residual Energy

- (III) Distance

- (iii) Global Best and Local Best Computation
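The PSO steps listed above (initialization, fitness computation, and local/global best updates) can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: each particle is a point in the 1000 × 1000 m field that is decoded to its nearest node, the per-node fitness scores are supplied precomputed (for instance from node degree, residual energy, and distance), the acceleration coefficients `c1 = c2 = 2.0` are hypothetical, and the inertia weight decreases linearly from the 0.9 maximum to the 0.1 minimum listed in the simulation table. The function name `pso_select_exemplar` is illustrative.

```python
import math
import random


def pso_select_exemplar(positions, scores, num_particles=20, iterations=50,
                        w_max=0.9, w_min=0.1, c1=2.0, c2=2.0,
                        bounds=(0.0, 1000.0), seed=0):
    """Return the index of the highest-scoring node found by PSO.

    positions: list of (x, y) node coordinates; scores: fitness per node.
    Each particle is a point in the field, decoded to its nearest node.
    """
    rng = random.Random(seed)
    lo, hi = bounds

    def decode(p):
        return min(range(len(positions)), key=lambda i: math.dist(p, positions[i]))

    def clamp(v):
        return max(lo, min(hi, v))

    # (i) Initialization: random positions, zero velocities.
    xs = [[rng.uniform(lo, hi), rng.uniform(lo, hi)] for _ in range(num_particles)]
    vs = [[0.0, 0.0] for _ in range(num_particles)]
    pbest = [list(p) for p in xs]                        # local (personal) bests
    pbest_val = [scores[decode(p)] for p in xs]          # (ii) fitness computation
    g = max(range(num_particles), key=lambda k: pbest_val[k])
    gbest, gbest_val = list(pbest[g]), pbest_val[g]      # global best

    for t in range(iterations):
        # Inertia weight decreases linearly from w_max to w_min.
        w = w_max - (w_max - w_min) * t / max(1, iterations - 1)
        for k in range(num_particles):
            for d in range(2):
                r1, r2 = rng.random(), rng.random()
                vs[k][d] = (w * vs[k][d]
                            + c1 * r1 * (pbest[k][d] - xs[k][d])
                            + c2 * r2 * (gbest[d] - xs[k][d]))
                xs[k][d] = clamp(xs[k][d] + vs[k][d])
            # (iii) Update local best, and global best when it improves.
            val = scores[decode(xs[k])]
            if val > pbest_val[k]:
                pbest[k], pbest_val[k] = list(xs[k]), val
                if val > gbest_val:
                    gbest, gbest_val = list(xs[k]), val
    return decode(gbest)
```

Decoding continuous positions to the nearest node is one common way to run a continuous optimizer over a discrete set of candidate exemplars; a discrete or binary PSO variant would be an alternative design.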

3.1.2. AP Cluster Formation
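The AP step can be illustrated with the standard responsibility/availability message-passing form of affinity propagation, sketched below in plain Python. Assumptions: similarity is the negative squared Euclidean distance between nodes, every node shares one `preference` value (lower values yield fewer clusters), and damping is 0.5. How the PSO result feeds AP is not reproduced here; raising the preference of PSO-selected exemplars would be one hypothetical option. The sketch needs at least two points.

```python
def affinity_propagation(points, preference, damping=0.5, iterations=200):
    """Cluster 2-D points with affinity propagation; returns (exemplars, labels)."""
    n = len(points)
    S = [[-((p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2) for q in points] for p in points]
    for k in range(n):
        S[k][k] = preference                 # self-similarity controls cluster count
    R = [[0.0] * n for _ in range(n)]        # responsibilities r(i, k)
    A = [[0.0] * n for _ in range(n)]        # availabilities a(i, k)
    for _ in range(iterations):
        for i in range(n):
            vals = [A[i][k] + S[i][k] for k in range(n)]
            first = max(range(n), key=vals.__getitem__)
            second = max(vals[k] for k in range(n) if k != first)
            for k in range(n):
                # max over k' != k of a(i, k') + s(i, k')
                best_other = second if k == first else vals[first]
                R[i][k] = damping * R[i][k] + (1 - damping) * (S[i][k] - best_other)
        for k in range(n):
            pos = [max(0.0, R[i][k]) for i in range(n)]
            total = sum(pos) - pos[k]        # sum over i' != k of max(0, r(i', k))
            for i in range(n):
                new = total if i == k else min(0.0, R[k][k] + total - pos[i])
                A[i][k] = damping * A[i][k] + (1 - damping) * new
    exemplars = [k for k in range(n) if A[k][k] + R[k][k] > 0]
    if not exemplars:  # no clear exemplar yet: fall back to the strongest candidate
        exemplars = [max(range(n), key=lambda k: A[k][k] + R[k][k])]
    labels = [i if i in exemplars else max(exemplars, key=lambda k: S[i][k])
              for i in range(n)]
    return exemplars, labels
```

Each non-exemplar node joins the exemplar most similar to it, so the exemplars play the role of cluster heads.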

3.2. Duty Cycling Phase

DRL-Based Scheduling

- (a) Sleep Mode

- (b) Listen Mode

- (c) Transmit Mode

- (i) Actions: In our work, we define three actions: Sleep (1), Listen (2), and Transmit (3). Each node selects an action based on its computed Q-value.

- (ii) States: We define four states for each node. The first state indicates that the node has no data stored in its buffer; each of the other three states indicates that the node has data stored in its buffer at a different occupancy level.

- (iii) Q-value: The Q-value is computed to select the action of each node, using the payoff value of that node.

- (iv) Payoff: The payoff value is computed from the probability that the node selects an action; it is computed for each node to select a particular action in each period.

- (v) Reward: A reward is assigned to each action based on the successful transmission of packets to neighboring nodes.
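The motivation for scheduling each node's mode can be seen from a per-frame energy tally. The sketch below uses the 16 slots per frame and the 10 s slot duration from the simulation table; the per-mode power draws in `POWER_W` and the function name `frame_energy` are hypothetical placeholders, not values or code from the paper.

```python
SLOT_DURATION_S = 10.0   # slot duration from the simulation table
SLOTS_PER_FRAME = 16     # number of slots from the simulation table
# Hypothetical power draw per mode, in watts (placeholders, not measurements).
POWER_W = {"sleep": 0.001, "listen": 0.05, "transmit": 0.08}


def frame_energy(schedule, slot_duration=SLOT_DURATION_S, power=POWER_W):
    """Energy (J) spent over one frame, given the mode chosen for each slot."""
    if len(schedule) != SLOTS_PER_FRAME:
        raise ValueError(f"expected {SLOTS_PER_FRAME} slots, got {len(schedule)}")
    return sum(power[mode] * slot_duration for mode in schedule)


always_listen = ["listen"] * SLOTS_PER_FRAME
duty_cycled = ["transmit", "listen"] + ["sleep"] * (SLOTS_PER_FRAME - 2)
```

With these placeholder values, a node that listens in every slot spends 8 J per frame, while one that transmits once, listens once, and sleeps for the remaining 14 slots spends 1.44 J.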

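| Algorithm 1. Node Scheduling |
|---|
| Require: Energy and buffer of node. Ensure: Selected mode of operation for node. |
| Initialize replay memory $D$ to capacity $C$. Initialize the Q network with random weights $\theta$. Initialize the target Q network with random weights $\theta^{-}$. For each time slot $t$ do { with probability payoff, select a random action $a_t$; with probability $1 -$ payoff, select the action $a_t$ that maximizes $Q(s_t, a; \theta)$ } Execute action $a_t$ to obtain reward $r_t$ and next state $s_{t+1}$, and store $(s_t, a_t, r_t, s_{t+1})$ in $D$. If the episode terminates at step $n+1$, set target $y = r$; else set target $y = r + \gamma \max_{a'} Q(s', a'; \theta^{-})$. Update $\theta$ to reduce $(y - Q(s, a; \theta))^2$. End for. |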
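Algorithm 1 can be sketched in plain Python as follows. To stay self-contained, the sketch replaces the Q network and the target Q network with lookup tables indexed by buffer level (a tabular stand-in for deep Q-learning, not the authors' network), and the buffer dynamics, energy costs, and reward shaping are hypothetical. It keeps the structure Algorithm 1 names: a replay memory of capacity C, exploration with probability `payoff`, a target of r on the last slot of a 16-slot frame and r + γ·max Q_target otherwise, and periodic copying of the online table into the target table.

```python
import random
from collections import deque

SLEEP, LISTEN, TRANSMIT = 0, 1, 2        # the paper numbers these actions 1, 2, 3
ACTIONS = (SLEEP, LISTEN, TRANSMIT)
NUM_STATES = 4                           # buffer-occupancy levels 0 (empty) .. 3
ENERGY_COST = {SLEEP: 0.05, LISTEN: 0.2, TRANSMIT: 0.5}  # hypothetical units


def reward(state, action):
    """Hypothetical reward: +1 for a successful transmission, minus the energy
    cost of the mode, minus a waiting penalty for packets left in the buffer."""
    delivered = 1.0 if action == TRANSMIT and state > 0 else 0.0
    waiting = 0.0 if action == TRANSMIT else 0.2 * state
    return delivered - ENERGY_COST[action] - waiting


def step(state, action, rng):
    """Hypothetical buffer dynamics: transmitting drains one packet and a new
    packet arrives with probability 0.5; the buffer is capped at level 3."""
    level = max(0, state - 1) if action == TRANSMIT else state
    if rng.random() < 0.5:
        level += 1
    return min(level, NUM_STATES - 1)


def train_scheduler(steps=3000, capacity=500, batch=8, payoff=0.1, gamma=0.9,
                    lr=0.1, slots_per_frame=16, sync_every=50, seed=1):
    rng = random.Random(seed)
    q = [[0.0] * len(ACTIONS) for _ in range(NUM_STATES)]   # stands in for the Q network
    q_target = [row[:] for row in q]                         # stands in for the target network
    memory = deque(maxlen=capacity)                          # replay memory of capacity C
    state = 0
    for t in range(steps):
        # With probability `payoff` explore; otherwise pick the action maximising Q.
        if rng.random() < payoff:
            action = rng.choice(ACTIONS)
        else:
            action = max(ACTIONS, key=lambda a: q[state][a])
        r = reward(state, action)
        next_state = step(state, action, rng)
        done = t % slots_per_frame == slots_per_frame - 1   # last slot of a frame
        memory.append((state, action, r, next_state, done))
        # Replay a random minibatch and move each Q entry toward its target.
        for s, a, rr, s2, d in rng.sample(list(memory), min(batch, len(memory))):
            target = rr if d else rr + gamma * max(q_target[s2])
            q[s][a] += lr * (target - q[s][a])
        if t % sync_every == 0:
            q_target = [row[:] for row in q]                 # periodic target sync
        state = next_state
    return q, memory


def greedy_policy(q):
    """Best action per buffer level under the learned table."""
    return [max(ACTIONS, key=lambda a: q[s][a]) for s in range(NUM_STATES)]
```

With `payoff=1.0` and `gamma=0.0`, each table entry converges to its immediate reward, so the greedy policy sleeps on an empty buffer and transmits otherwise. In this toy reward, listen mode never wins; crediting packets received from neighbours would be needed to make it useful.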

3.3. Routing Phase

3.3.1. ACO Algorithm

- (i) Hop Count

- (ii) Distance

- (iii) Bandwidth

- (a) Initial Rule

- (b) Revising Rule
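The two ACO rules can be sketched as follows: the initial (transition) rule picks the next hop with probability proportional to $\tau_{ij}^{\alpha}\eta_{ij}^{\beta}$, and the revising rule evaporates pheromone at rate $\rho$ and deposits 1/cost on the edges of completed paths. The heuristic $\eta$ (residual energy × bandwidth / distance), the path cost (total distance plus a per-hop penalty), the function name `aco_multipath`, and all parameter values except the 100 ants from the simulation table are assumptions for illustration.

```python
import random


def aco_multipath(graph, energy, source, dest, num_ants=100, iterations=20,
                  alpha=1.0, beta=2.0, rho=0.5, hop_penalty=50.0, k_paths=3, seed=0):
    """Return up to `k_paths` distinct loop-free source->dest paths, cheapest first.

    graph:  {node: {neighbour: (distance_m, bandwidth_mbps)}}
    energy: {node: residual_energy_J}
    """
    rng = random.Random(seed)
    tau = {(i, j): 1.0 for i in graph for j in graph[i]}  # initial pheromone

    def eta(i, j):
        # Hypothetical desirability: residual energy and bandwidth up, distance down.
        dist, bw = graph[i][j]
        return energy[j] * bw / dist

    def cost(path):
        # Hypothetical cost: total distance plus a penalty per hop.
        dist = sum(graph[a][b][0] for a, b in zip(path, path[1:]))
        return dist + hop_penalty * (len(path) - 1)

    found = {}
    for _ in range(iterations):
        completed = []
        for _ in range(num_ants):
            path, visited = [source], {source}
            while path[-1] != dest:
                i = path[-1]
                options = [j for j in graph[i] if j not in visited]
                if not options:  # dead end: this ant gives up
                    break
                # Initial (transition) rule: P(j) proportional to tau^alpha * eta^beta
                weights = [tau[(i, j)] ** alpha * eta(i, j) ** beta for j in options]
                nxt = rng.choices(options, weights=weights)[0]
                path.append(nxt)
                visited.add(nxt)
            if path[-1] == dest:
                completed.append(path)
                found[tuple(path)] = cost(path)
        # Revising rule: evaporate everywhere, then reinforce edges on completed paths.
        for edge in tau:
            tau[edge] *= 1.0 - rho
        for path in completed:
            for a, b in zip(path, path[1:]):
                tau[(a, b)] += 1.0 / cost(path)
    return sorted(found, key=found.get)[:k_paths]
```

The returned list plays the role of the multiple candidate paths that are handed to the next selection step.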

3.3.2. FFA

- (a) Packet Delivery Ratio

- (b) Expected Delay

- (c) Load
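Best-path selection with the firefly algorithm can be sketched as below. Each firefly holds a continuous position that is decoded to a candidate-path index, its light intensity is a weighted score of PDR, expected delay, and load, and dimmer fireflies move toward brighter ones with attractiveness $\beta_0 e^{-\gamma r^2}$ plus a random step scaled by $\alpha$. The weights, the parameter values, the decoding scheme, and the names `brightness` and `firefly_select` are hypothetical; only the population size of 100 comes from the simulation table.

```python
import math
import random


def brightness(metrics, w_pdr=0.5, w_delay=0.3, w_load=0.2):
    """Hypothetical light intensity of a path: reward PDR, penalise delay and load.

    metrics = (pdr in [0, 1], expected_delay_s, load in [0, 1])
    """
    pdr, delay, load = metrics
    return w_pdr * pdr - w_delay * delay - w_load * load


def firefly_select(candidates, population=100, iterations=30,
                   beta0=1.0, gamma=1.0, alpha=0.2, seed=0):
    """Return the index of the brightest candidate path found by a firefly search."""
    rng = random.Random(seed)
    n = len(candidates)

    def decode(x):
        return min(n - 1, int(x))  # continuous position -> path index

    def light(x):
        return brightness(candidates[decode(x)])

    xs = [rng.uniform(0.0, n) for _ in range(population)]
    best = max(xs, key=light)
    for _ in range(iterations):
        for i in range(population):
            for j in range(population):
                if light(xs[j]) > light(xs[i]):
                    # Attractiveness decays with distance: beta0 * exp(-gamma * r^2)
                    r = abs(xs[i] - xs[j])
                    beta = beta0 * math.exp(-gamma * r * r)
                    xs[i] += beta * (xs[j] - xs[i]) + alpha * (rng.random() - 0.5)
                    xs[i] = min(max(xs[i], 0.0), n - 1e-9)  # stay inside [0, n)
        candidate = max(xs, key=light)
        if light(candidate) > light(best):
            best = candidate
    return decode(best)
```

Tracking the best position seen so far means the returned path never gets worse across iterations, even though individual fireflies keep moving randomly.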

4. Performance Evaluation

4.1. Simulation Environment

4.2. Performance Metrics

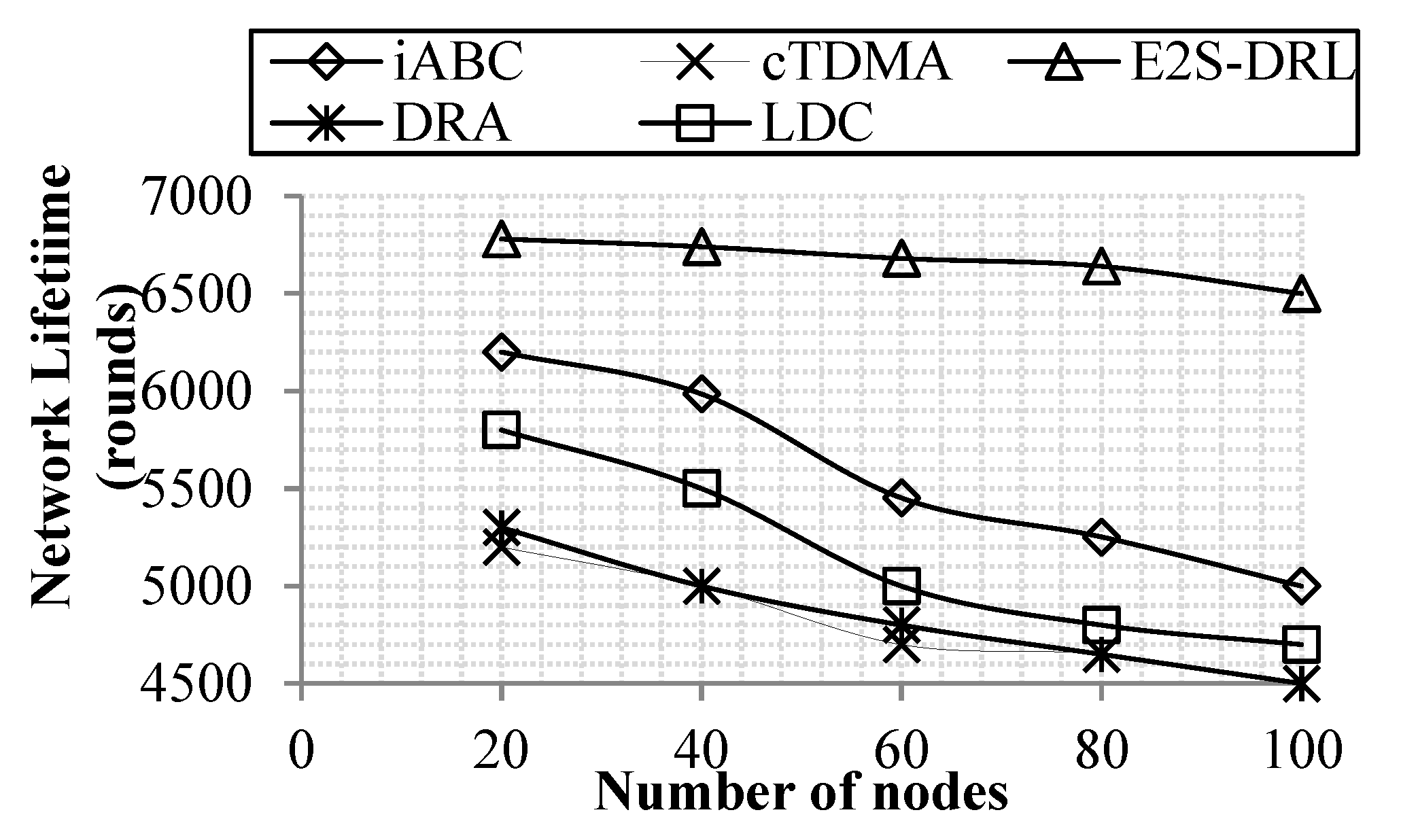

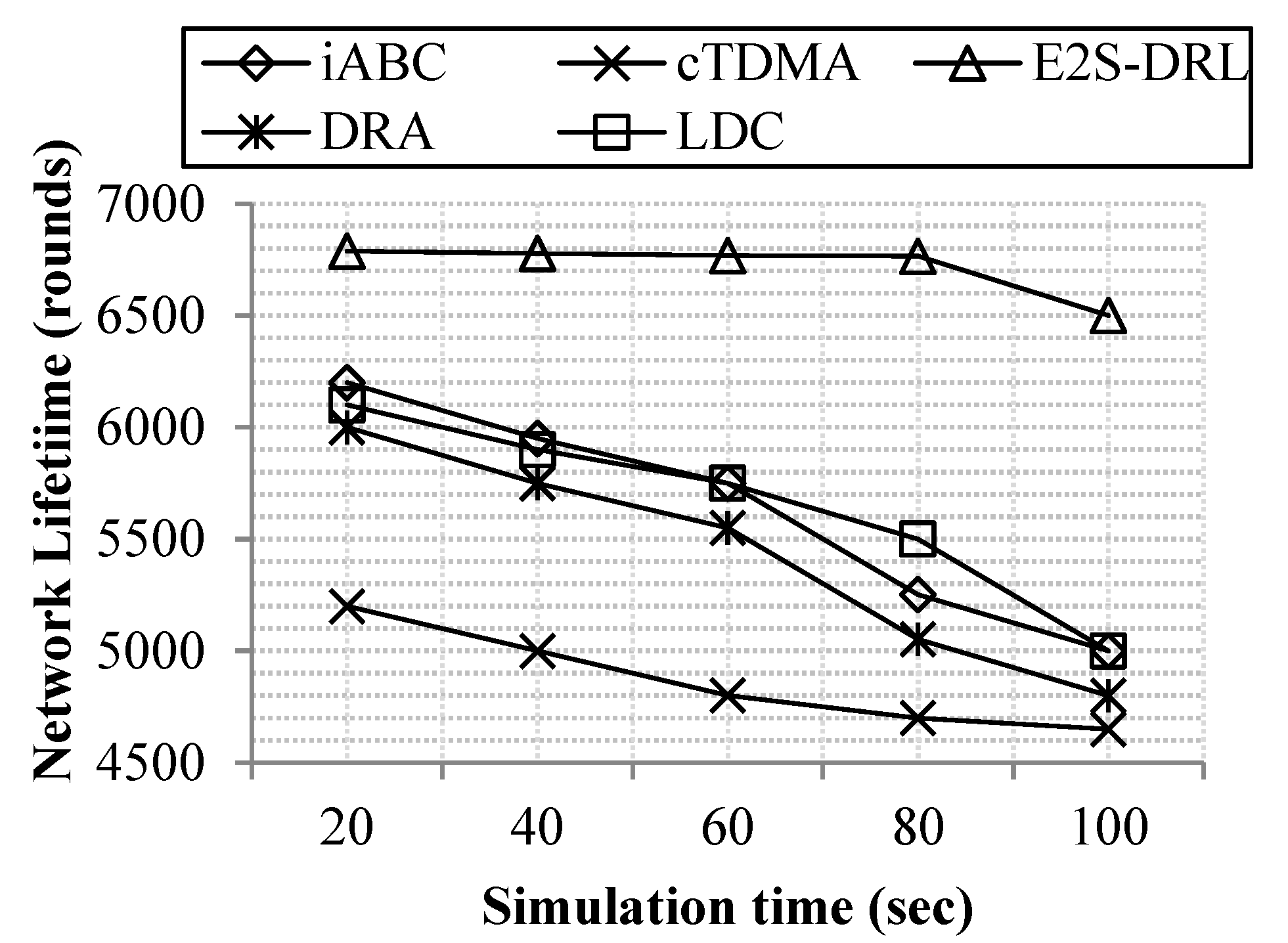

4.2.1. Network Lifetime

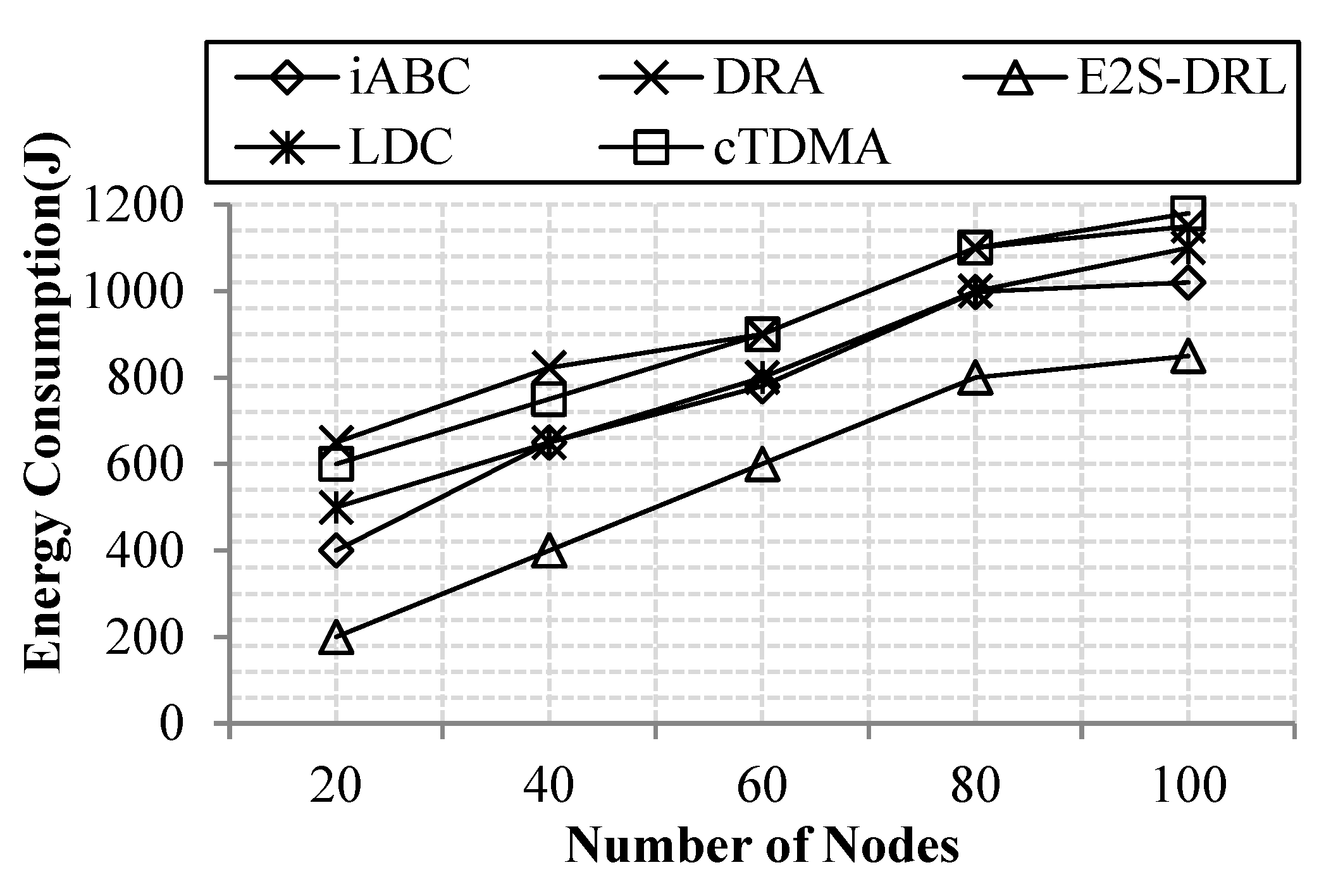

4.2.2. Energy Consumption

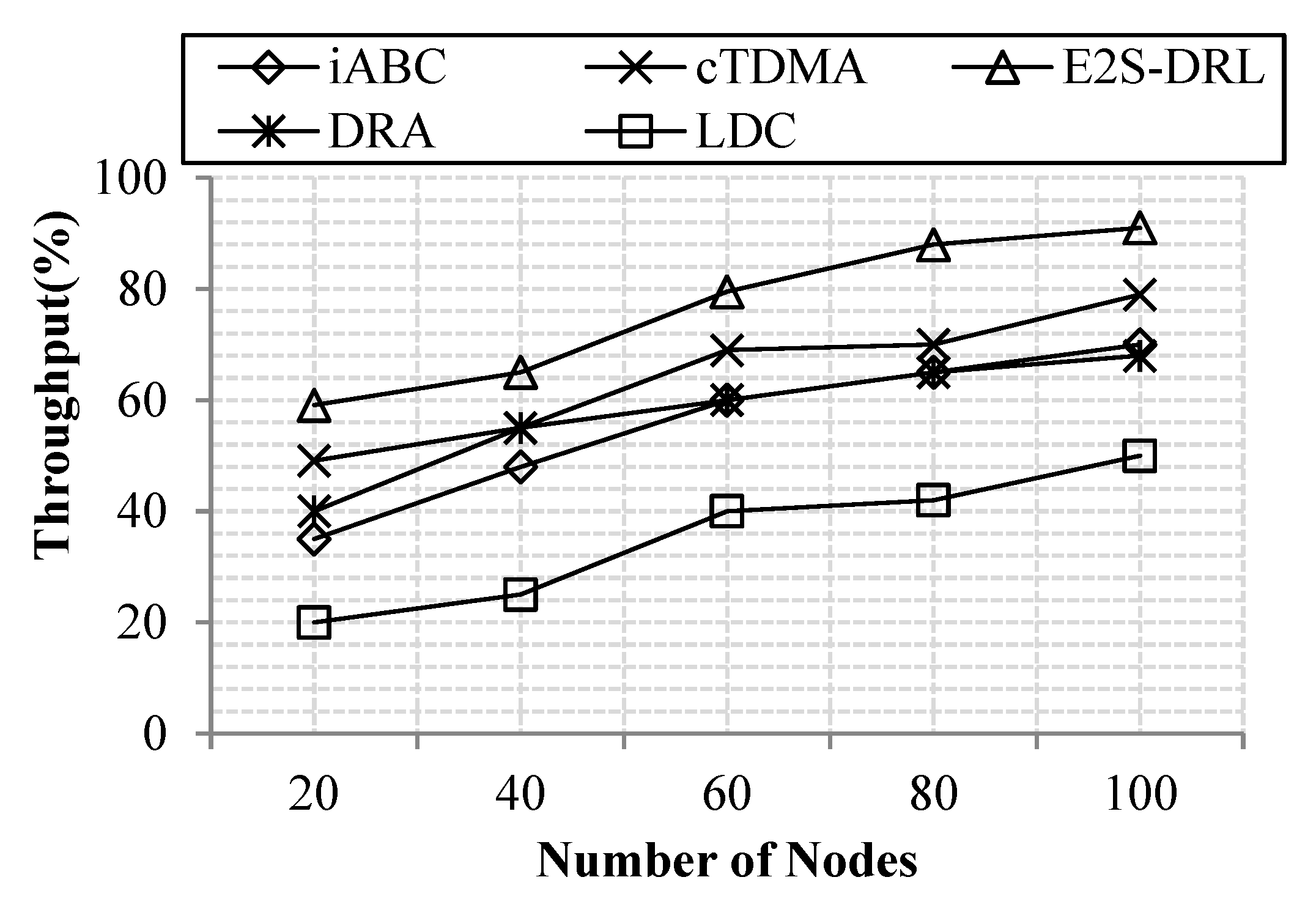

4.2.3. Throughput

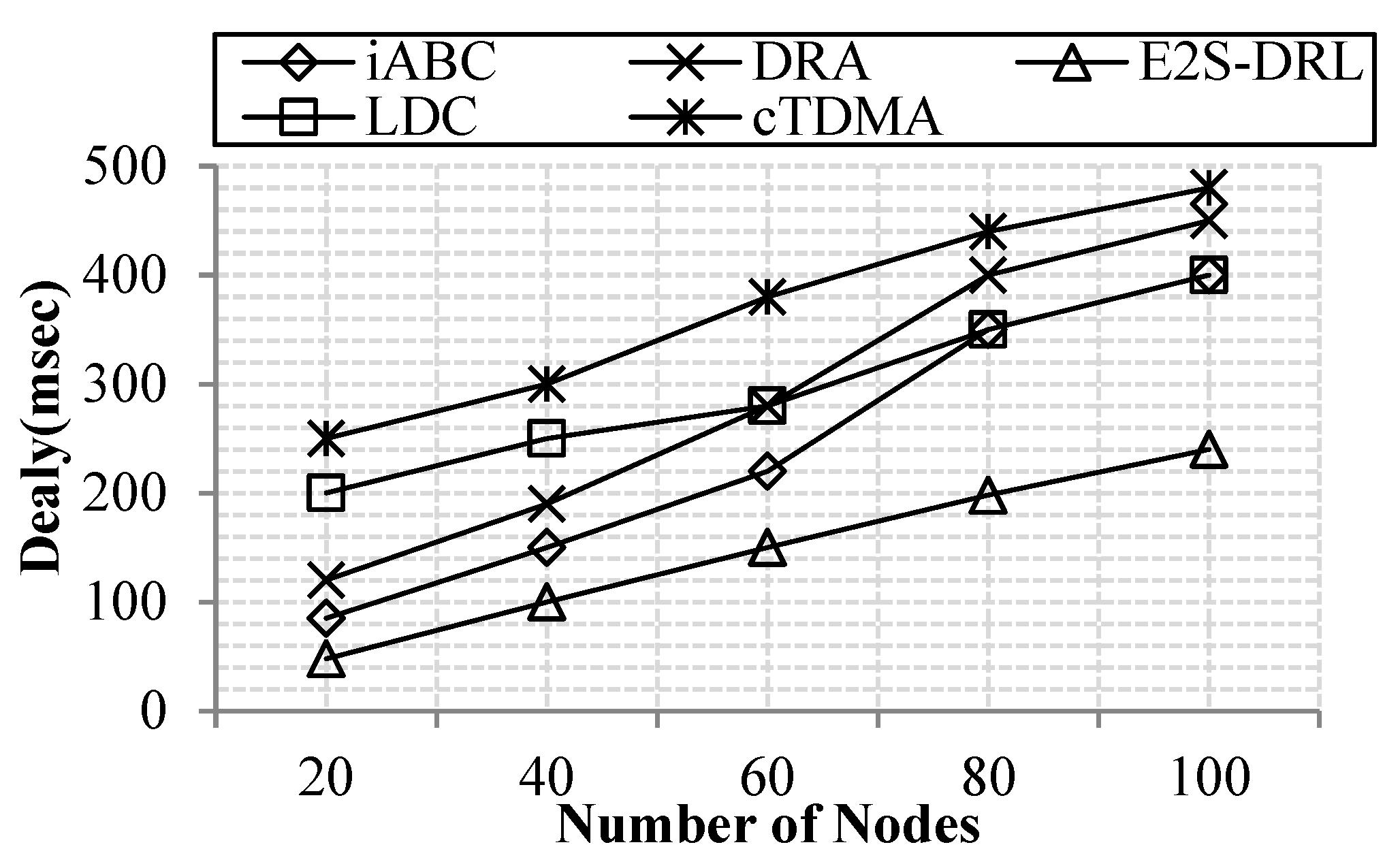

4.2.4. Delay

4.3. Comparative Analysis

4.3.1. Analysis of Network Lifetime

4.3.2. Analysis of Energy Consumption

4.3.3. Analysis of Throughput

4.3.4. Analysis of Delay

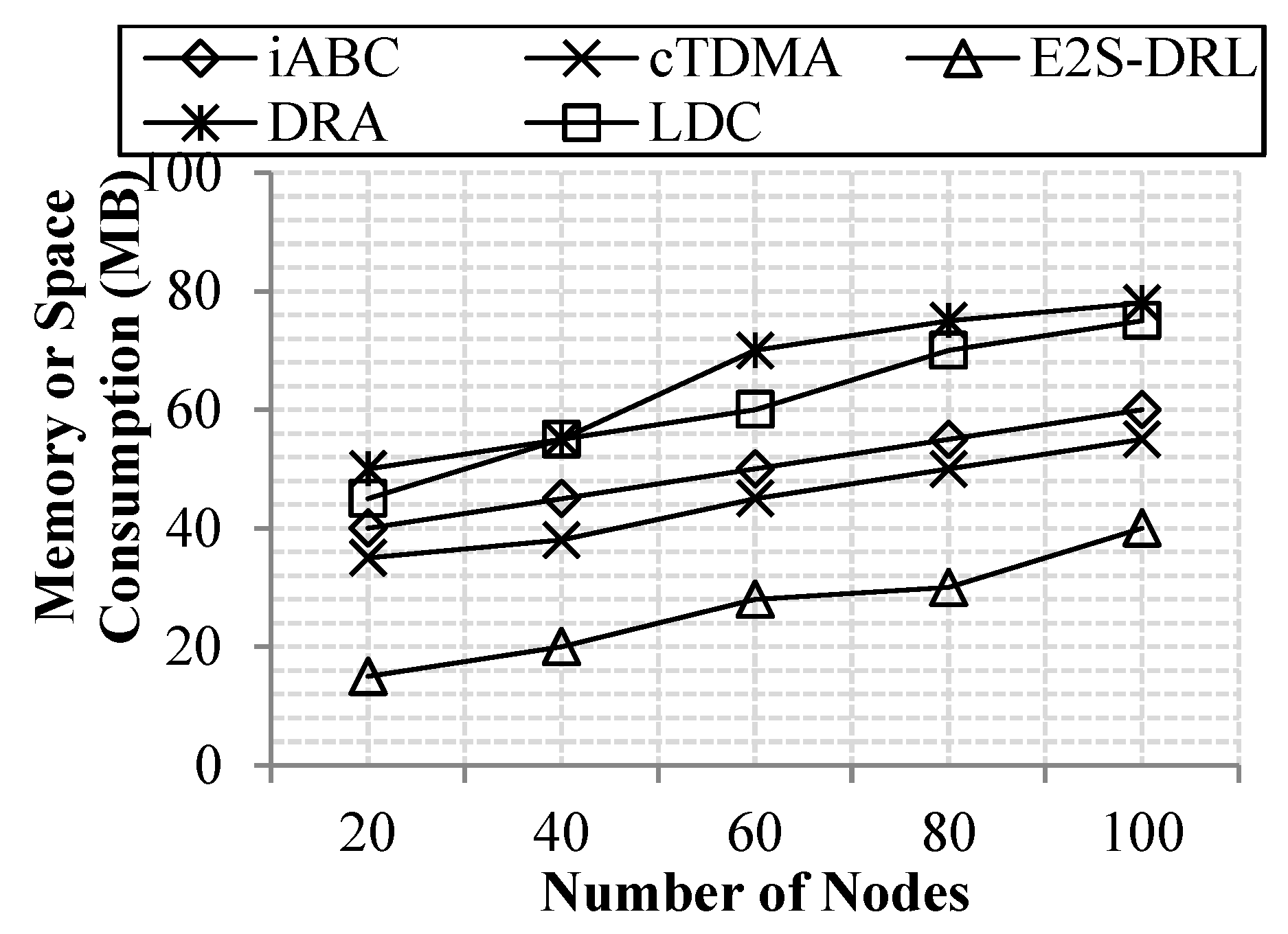

4.3.5. Memory Consumption Analysis

4.3.6. Computational Complexity Analysis

5. Discussion

- Clustering: In this phase, we mitigate the energy consumed during data aggregation at the cluster head node. In cluster-based WSNs, energy consumption during data aggregation is a major issue that leads to frequent cluster head re-election. Our proposed clustering addresses these problems by selecting an optimal cluster head for data aggregation based on effective parameters: residual energy, distance, and node degree.

- Duty Cycling: This phase reduces the energy consumption of individual sensor nodes by exploiting the DRL algorithm, with which each sensor node in the network decides its own mode of operation (sleep, listen, or transmit) based on its buffer state. As a result, the proposed method effectively reduces the energy consumption of each node.

- Routing: Network delay is one of the significant issues in WSNs, as it degrades overall network performance. To reduce network delay, our E2S-DRL method performs optimal path selection over multiple candidate paths: it first selects multiple paths between the source and destination nodes using ACO, and then uses FFA to choose the best of these paths to transmit the sensed data packet to the destination. This reduces the delay incurred during data transmission between the source and sink node.

6. Conclusions and Future Work

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Verma, S.; Sood, N.; Sharma, A.K. Design of a novel routing architecture for harsh environment monitoring in heterogeneous WSN. IET Wirel. Sens. Syst. 2018, 8, 284–294.

2. Zeng, M.; Huang, X.; Zheng, B.; Fan, X.-X. A Heterogeneous Energy Wireless Sensor Network Clustering Protocol. Wirel. Commun. Mob. Comput. 2019, 2019, 1–11.

3. Huang, M.; Liu, A.; Xiong, N.; Wang, T.; Vasilakos, A.V. A Low-Latency Communication Scheme for Mobile Wireless Sensor Control Systems. IEEE Trans. Syst. Man Cybern. Syst. 2018, 49, 317–332.

4. Lu, Y.; Zhang, T.; He, E.; Comsa, I.-S. Self-Learning-Based Data Aggregation Scheduling Policy in Wireless Sensor Networks. J. Sens. 2018, 2018, 1–12.

5. Huang, M.; Liu, A.; Zhao, M.; Wang, T. Multi working sets alternate covering scheme for continuous partial coverage in WSNs. Peer-to-Peer Netw. Appl. 2018, 12, 553–567.

6. Karthick, P.T.; Palanisamy, C. Optimized cluster head selection using krill herd algorithm for wireless sensor network. Automatika 2019, 60, 340–348.

7. Morsy, N.A.; Abdelhay, E.H.; Kishk, S.S. Proposed Energy Efficient Algorithm for Clustering and Routing in WSN. Wirel. Pers. Commun. 2018, 103, 2575–2598.

8. Yuan, X.; Elhoseny, M.; El-Minir, H.K.; Riad, A.M. A Genetic Algorithm-Based, Dynamic Clustering Method towards Improved WSN Longevity. J. Netw. Syst. Manag. 2016, 25, 21–46.

9. Elhabyan, R.; Shi, W.; St-Hilaire, M. A Pareto optimization-based approach to clustering and routing in Wireless Sensor Networks. J. Netw. Comput. Appl. 2018, 114, 57–69.

10. Mehra, P.S.; Doja, M.N.; Alam, B. Fuzzy based enhanced cluster head selection (FBECS) for WSN. J. King Saud Univ. Sci. 2020, 32, 390–401.

11. Hamzah, A.; Shurman, M.; Al-Jarrah, O.; Taqieddin, E. Energy-Efficient Fuzzy-Logic-Based Clustering Technique for Hierarchical Routing Protocols in Wireless Sensor Networks. Sensors 2019, 19, 561.

12. Mothku, S.K.; Rout, R.R. Adaptive Fuzzy-Based Energy and Delay-Aware Routing Protocol for a Heterogeneous Sensor Network. J. Comput. Netw. Commun. 2019, 2019, 1–11.

13. Ghrab, D.; Jemili, I.; Belghith, A.; Mosbah, M. Context-aware medium access control protocols in wireless sensor networks. Internet Technol. Lett. 2018, 1, e43.

14. Niu, B.; Qi, H.; Li, K.; Liu, X.; Xue, W. Dynamic scheming the duty cycle in the opportunistic routing sensor network. Concurr. Comput. Pract. Exp. 2017, 29, e4196.

15. Xiao, K.; Wang, R.; Deng, H.; Zhang, L.; Yang, C. Energy-aware Scheduling for Information Fusion in Wireless Sensor Network Surveillance. Inform. Fusion 2018, 48, 95–106.

16. Kang, B.; Nguyen, P.K.H.; Zalyubovskiy, V.; Choo, H. A Distributed Delay-Efficient Data Aggregation Scheduling for Duty-Cycled WSNs. IEEE Sens. J. 2017, 17, 3422–3437.

17. Le, D.T.; Lee, T.; Choo, H. Delay-aware tree construction and scheduling for data aggregation in duty-cycled wireless sensor networks. EURASIP J. Wirel. Commun. Netw. 2018, 2018, 95.

18. Yarinezhad, R.; Hashemi, S.N. A routing algorithm for wireless sensor networks based on clustering and an fpt-approximation algorithm. J. Syst. Softw. 2019, 155, 145–161.

19. Chithaluru, P.; Tiwari, R.; Kumar, K. AREOR–Adaptive ranking based energy efficient opportunistic routing scheme in Wireless Sensor Network. Comput. Netw. 2019, 162, 106863.

20. Singh, R.; Verma, A.K. Energy efficient cross layer based adaptive threshold routing protocol for WSN. AEU Int. J. Electron. Commun. 2017, 72, 166–173.

21. Muthukumaran, K.; Chitra, K.; Selvakumar, C. An energy efficient clustering scheme using multilevel routing for wireless sensor network. Comput. Electr. Eng. 2018, 69, 642–652.

22. Kulkarni, N.; Prasad, N.R.; Prasad, R. Q-MOHRA: QoS Assured Multi-objective Hybrid Routing Algorithm for Heterogeneous WSN. Wirel. Pers. Commun. 2017, 100, 255–266.

23. Bhardwaj, R.; Kumar, D. MOFPL: Multi-objective fractional particle lion algorithm for the energy aware routing in the WSN. Pervasive Mob. Comput. 2019, 58, 101029.

24. Lahane, S.R.; Jariwala, K.N. Network Structured Based Routing Techniques in Wireless Sensor Network. In Proceedings of the 2018 3rd International Conference for Convergence in Technology (I2CT), Pune, India, 6–9 April 2018.

25. Fei, X.; Wang, Y.; Liu, A.; Cao, N. Research on Low Power Hierarchical Routing Protocol in Wireless Sensor Networks. In Proceedings of the 2017 IEEE International Conference on Computational Science and Engineering (CSE) and IEEE International Conference on Embedded and Ubiquitous Computing (EUC), Guangzhou, China, 21–24 July 2017.

26. Li, A.; Chen, G. Clustering Routing Algorithm Based on Energy Threshold and Location Distribution for Wireless Sensor Network. In Proceedings of the 2018 37th Chinese Control Conference (CCC), Wuhan, China, 25–27 July 2018.

27. Mann, P.S.; Singh, S. Optimal Node Clustering and Scheduling in Wireless Sensor Networks. Wirel. Pers. Commun. 2018, 100, 683–708.

28. Nguyen, T.; Pan, J.; Dao, T. A Compact Bat Algorithm for Unequal Clustering in Wireless Sensor Networks. Appl. Sci. 2019, 9, 1973.

29. Mosavvar, I.; Ghaffari, A. Data Aggregation in Wireless Sensor Networks Using Firefly Algorithm. Wirel. Pers. Commun. 2018, 104, 307–324.

30. Kang, J.; Sohn, I.; Lee, S.H. Enhanced Message-Passing Based LEACH Protocol for Wireless Sensor Networks. Sensors 2018, 19, 75.

31. Kozłowski, A.; Sosnowski, J. Energy Efficiency Trade-Off between Duty-Cycling and Wake-Up Radio Techniques in IoT Networks. Wirel. Pers. Commun. 2019, 107, 1951–1971.

32. Du, Y.; Xu, Y.; Xue, L.; Wang, L.; Zhang, F. An Energy-Efficient Cross-Layer Routing Protocol for Cognitive Radio Networks Using Apprenticeship Deep Reinforcement Learning. Energies 2019, 12, 2829.

33. Serrano, W. Deep Reinforcement Learning Algorithms in Intelligent Infrastructure. Infrastructures 2019, 4, 52.

34. Adam, M.S.; Por, L.Y.; Hussain, M.R.; Khan, N.; Ang, T.F.; Anisi, M.H.; Huang, Z.; Ali, I. An Adaptive Wake-Up-Interval to Enhance Receiver-Based Ps-Mac Protocol for Wireless Sensor Networks. Sensors 2019, 19, 3732.

35. Bahbahani, M.S.; Alsusa, E. A Cooperative Clustering Protocol with Duty Cycling for Energy Harvesting Enabled Wireless Sensor Networks. IEEE Trans. Wirel. Commun. 2018, 17, 101–111.

36. Nguyen, N.-T.; Liu, B.-H.; Pham, V.-T.; Liou, T.-Y. An Efficient Minimum-Latency Collision-Free Scheduling Algorithm for Data Aggregation in Wireless Sensor Networks. IEEE Syst. J. 2018, 12, 2214–2225.

37. Elshrkawey, M.; Elsherif, S.M.; Wahed, M.E. An Enhancement Approach for Reducing the Energy Consumption in Wireless Sensor Networks. J. King Saud Univ. Comput. Inf. Sci. 2018, 30, 259–267.

38. Kaur, S.; Mahajan, R. Hybrid meta-heuristic optimization based energy efficient protocol for wireless sensor networks. Egypt. Inform. J. 2018, 19, 145–150.

39. Arora, V.K.; Sharma, V.; Sachdeva, M. A multiple pheromone ant colony optimization scheme, for energy-efficient wireless sensor networks. Soft Comput. 2019.

40. Arora, V.K.; Sharma, V.; Sachdeva, M. ACO optimized self-organized tree-based energy balance algorithm for wireless sensor network. J. Ambient Intell. Humaniz. Comput. 2019, 10, 4963–4975.

41. Rhim, H.; Tamine, K.; Abassi, R.; Sauveron, D.; Guemara, S. A multi-hop graph-based approach for an energy-efficient routing protocol in wireless sensor networks. Hum. Centric Comput. Inf. Sci. 2018, 8, 30.

42. Liu, F.; Wang, Y.; Lin, M.; Liu, K.; Wu, D. A Distributed Routing Algorithm for Data Collection in Low-Duty-Cycle Wireless Sensor Networks. IEEE Internet Things J. 2017, 4, 1420–1433.

43. Jiang, C.; Li, T.-S.; Liang, J.; Wu, H. Low-Latency and Energy-Efficient Data Preservation Mechanism in Low-Duty-Cycle Sensor Networks. Sensors 2017, 17, 1051.

44. Vijayalakshmi, K.; Manickam, J.M.L. A cluster based mobile data gathering using SDMA and PSO techniques in WSN. Clust. Comput. 2018, 22, 12727–12736.

| Author Name | Method | Contribution | Objective | Demerits |
|---|---|---|---|---|
| Palvinder et al. [27] | iABC | It selects the optimal cluster head for data transmission in WSN. | To select the cluster head quickly using an optimization algorithm. | The iABC-based cluster head selection does not analyze secondary information of the sensor nodes, which results in frequent cluster head selection. |
| Trong et al. [28] | BAT | It selects a cluster head and forms clusters in the sensor network. | To select the optimal cluster head to reduce energy consumption. | Cluster head selection is time-consuming, which increases energy consumption. |
| Islam et al. [29] | LEACH | It forms clusters and selects a cluster head using an optimization algorithm. | To perform efficient data aggregation in WSN. | The cluster head selection is not optimal, which affects data aggregation efficiency. |
| Jaeyoung et al. [30] | E-LEACH | It forms clusters and uses a distributed algorithm for message passing. | To form clusters effectively to reduce energy consumption. | More parameters are required to select the best cluster head; hence, clustering occurs frequently in the network. |
| Kozłowski et al. [31] | EEDC | It provides a duty cycle based on transmitting and receiving energy. | To provide an energy-efficient duty cycle. | It increases the delay during data transmission, which leads to packet loss. |
| Mohamed et al. [35] | cTDMA | It allocates slots to transmit data packets based on the cluster head occurrence count. | To increase the lifetime of the WSN. | It cannot change the transmission slots dynamically, which leads to more energy drain. |
| Ngoc et al. [36] | ISS | It assigns a slot to each node based on its data packet count. | To minimize the data transmission delay in WSN. | It has high energy consumption because energy-oriented metrics are not considered. |
| Mohammed et al. [37] | Modified TDMA | It allocates slots using setup and steady-state phases. | To reduce the energy consumption of the cluster head. | It involves a complex computation process that degrades system performance. |
| Supreet et al. [38] | ACO-PSO | Cluster-based routing is performed using a hybrid ACO-PSO algorithm. | To provide better data aggregation during data transmission. | Only the distance parameter is considered for next-hop selection, which increases energy consumption. |
| Vishal et al. [40] | ACO | It routes packets along the optimal path. | To balance energy among the cluster head nodes in WSN. | Distance and energy alone are not sufficient to achieve low delay in WSN routing. |
| Vishal et al. [39] | MPACO | It routes packets by selecting the optimal path. | To design energy-efficient routing in WSN. | The parameters considered for routing are not sufficient to reduce packet loss in WSN. |
| Hana et al. [41] | MH-GEER | It provides cluster-based routing for the sensed data. | To reduce sensor node energy consumption and thereby increase performance. | The next hop is selected based on energy alone, which increases the load on each sensor node. |
| Feng et al. [42] | DRA | It selects the optimal path to transmit the data packet. | To prolong the lifetime of the WSN. | The distance between the source and destination nodes is not considered, which increases latency. |
| Jiang et al. [43] | LDC | It provides a low-latency and energy-efficient data preservation mechanism. | To reduce latency and energy consumption in WSN. | Nodes are woken up based on their neighbors' wake-up times, which increases the energy dissipation of nodes. |
| Vijayalakshmi and Manickam [44] | SDMA | It provides data gathering in WSN using SDMA and PSO. | To prolong the lifetime of the WSN. | CH selection is not effective because significant parameters such as distance and energy are not considered. |

| Parameter Group | Parameter | Value |
|---|---|---|
| Network Parameters | Simulation area | 1000 m × 1000 m |
| | Number of sensor nodes | 100 |
| | Number of sink nodes | 1 |
| | Initial energy of the node | 1200 J |
| Packet Parameters | Number of packets | |
| | Number of retransmissions | Max 7 |
| | Packet size | 512 KB |
| | Packet interval | 0.1 s |
| | Traffic type of packet | Constant Bit Rate (CBR) |
| Communication Parameters | Sensor communication range | 100 m |
| | Data rate | 20 Mbps |
| Transmission Slot Parameters | Number of slots | 16 |
| | Data packet length | 840 bits |
| | Slot length | 1050 bits |
| | Slot duration | 10 s |
| PSO Parameters | Number of particles | 20–80 |
| | Maximum inertia weight | 0.9 |
| | Minimum inertia weight | 0.1 |
| ACO Parameters | Number of ants | 100 |
| | | 0.6 |
| | | 0.6 |
| FFA Parameters | Firefly population | 100 |
| | | 0.9 |
| | | 1 |
| | Number of runs | 1000 |
| | Simulation time | 100 s |

| Process | Method | Time Complexity |
|---|---|---|
| Clustering | iABC | $O(2 \cdot P/2 \cdot D \cdot I^2)$ |
| | PSO-AP | $O(P \cdot I + n^2)$ |
| Duty Cycling | cTDMA | $3O(n)$ |
| | DRL | $O(n)$ |
| Routing | LEACH | $nO(L)$ |
| | ACO-FFA | $O(n^2 + n \log n)$ |

| Proposed Algorithm | Benefits Related to Performance Metrics |
|---|---|
| PSO-AP | PSO selects the optimal exemplar for the AP algorithm based on energy-related metrics, so the selected cluster head can survive longer. This effectively reduces the energy drain of the cluster head; therefore, the E2S-DRL method reduces energy consumption in WSN. In addition, the AP algorithm forms clusters faster than traditional clustering algorithms such as K-means, which reduces the time required during the setup phase. |
| DRL | The proposed DRL algorithm assigns the proper mode of operation to each sensor node based on the buffer size of that node. This reduces energy consumption and thereby substantially increases network lifetime. Buffer-size-based mode allocation also reduces network delay. |
| ACO-FFA | The proposed work increases throughput by selecting an optimal path from multiple candidate paths. ACO first selects the best multiple paths for transmission in less time; FFA then selects the optimal path among them to transmit the message between the source and sink node. FFA considers expected delay, PDR, and load, which reduces delay during packet transmission and increases the throughput of the proposed system. |

| Methods | Network Lifetime (rounds), #.N | Network Lifetime (rounds), S.T | Energy Consumption (J), #.N | Energy Consumption (J), S.T | Throughput (%) | Delay (ms) |
|---|---|---|---|---|---|---|
| iABC | 5577 | 5630 | 769 | 757 | 55 | 241 |
| DRA | 4850 | 5430 | 924 | 920 | 57 | 288 |
| LDC | 5160 | 5650 | 810 | 842 | 35 | 296 |
| cTDMA | 4810 | 4870 | 906 | 890 | 64 | 370 |
| MPACO | 1200 | 1150 | 300 | 280 | 60 | 365 |
| E2S-DRL | 6668 | 6719 | 570 | 582 | 90 | 147 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Sinde, R.; Begum, F.; Njau, K.; Kaijage, S. Refining Network Lifetime of Wireless Sensor Network Using Energy-Efficient Clustering and DRL-Based Sleep Scheduling. Sensors 2020, 20, 1540. https://doi.org/10.3390/s20051540