Video Image Enhancement and Machine Learning Pipeline for Underwater Animal Detection and Classification at Cabled Observatories

Abstract

1. Introduction

1.1. The Development of Marine Imaging

1.2. The Human Bottleneck in Manual Image Processing

1.3. Objectives and Findings

2. Materials and Methods

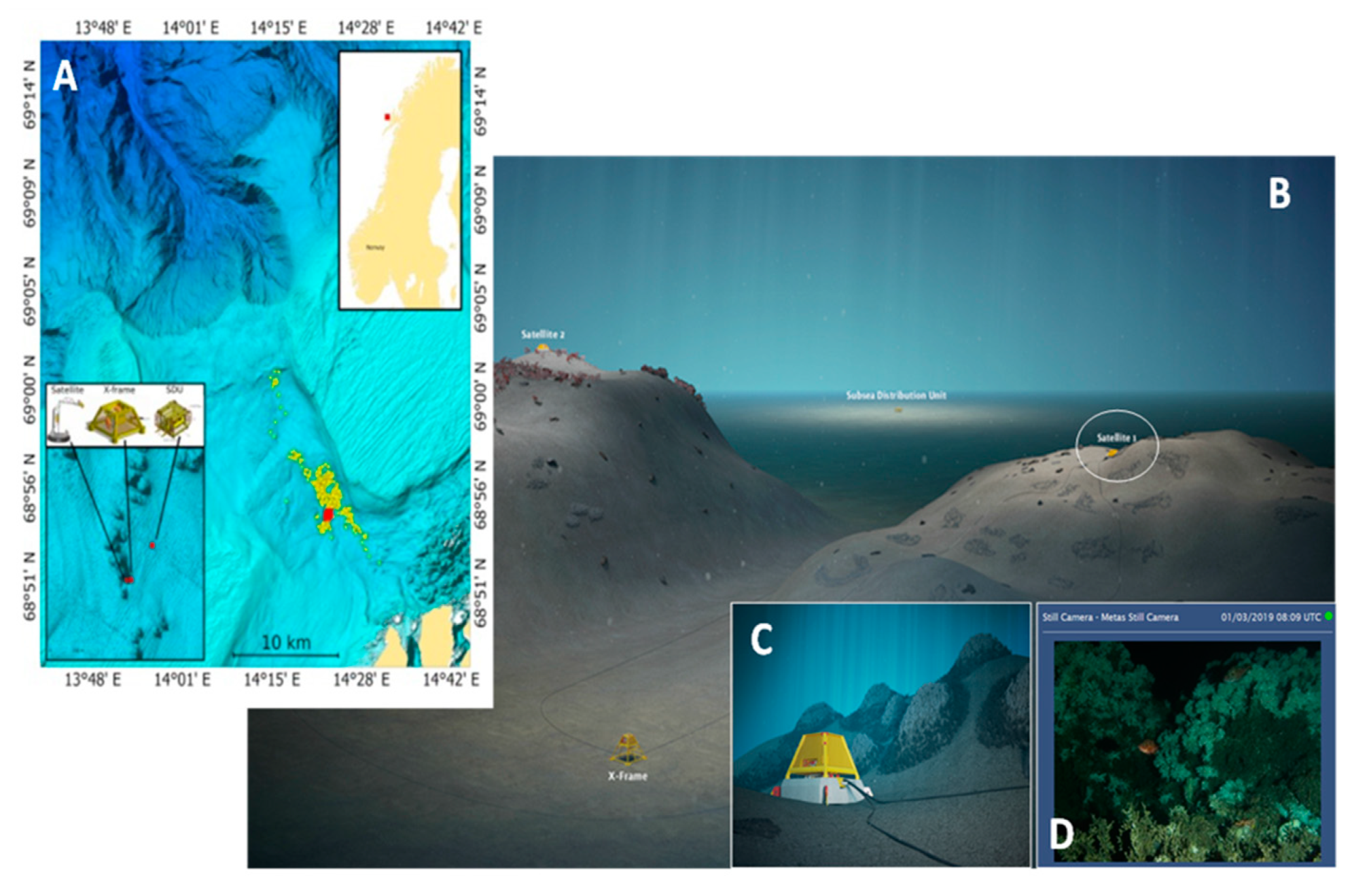

2.1. The Cabled Observatory Network Area

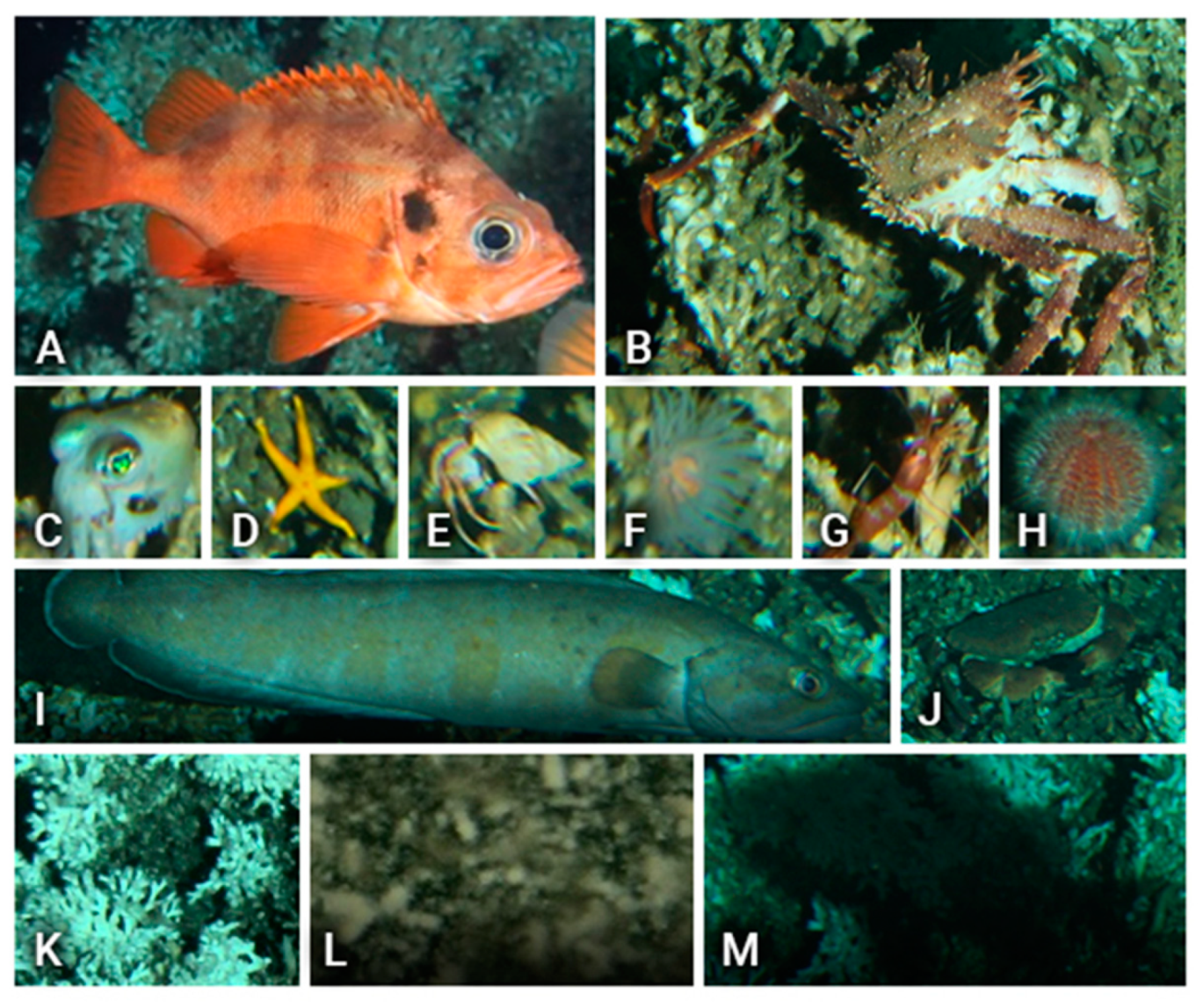

2.2. The Target Group of Species

2.3. Data Collection

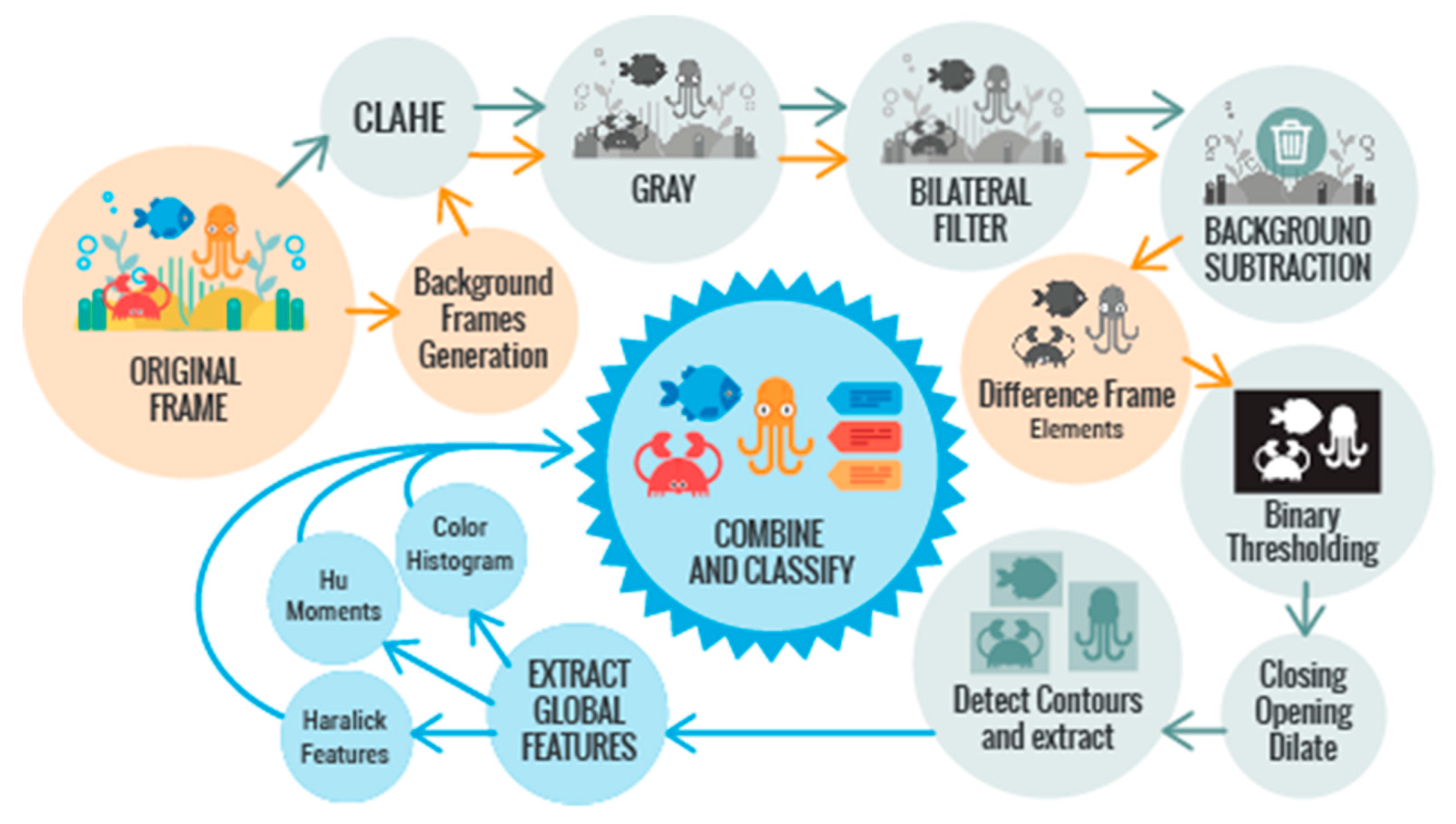

2.4. Image Processing Pipeline for Underwater Animal Detection and Annotation

2.5. Experimental Setup

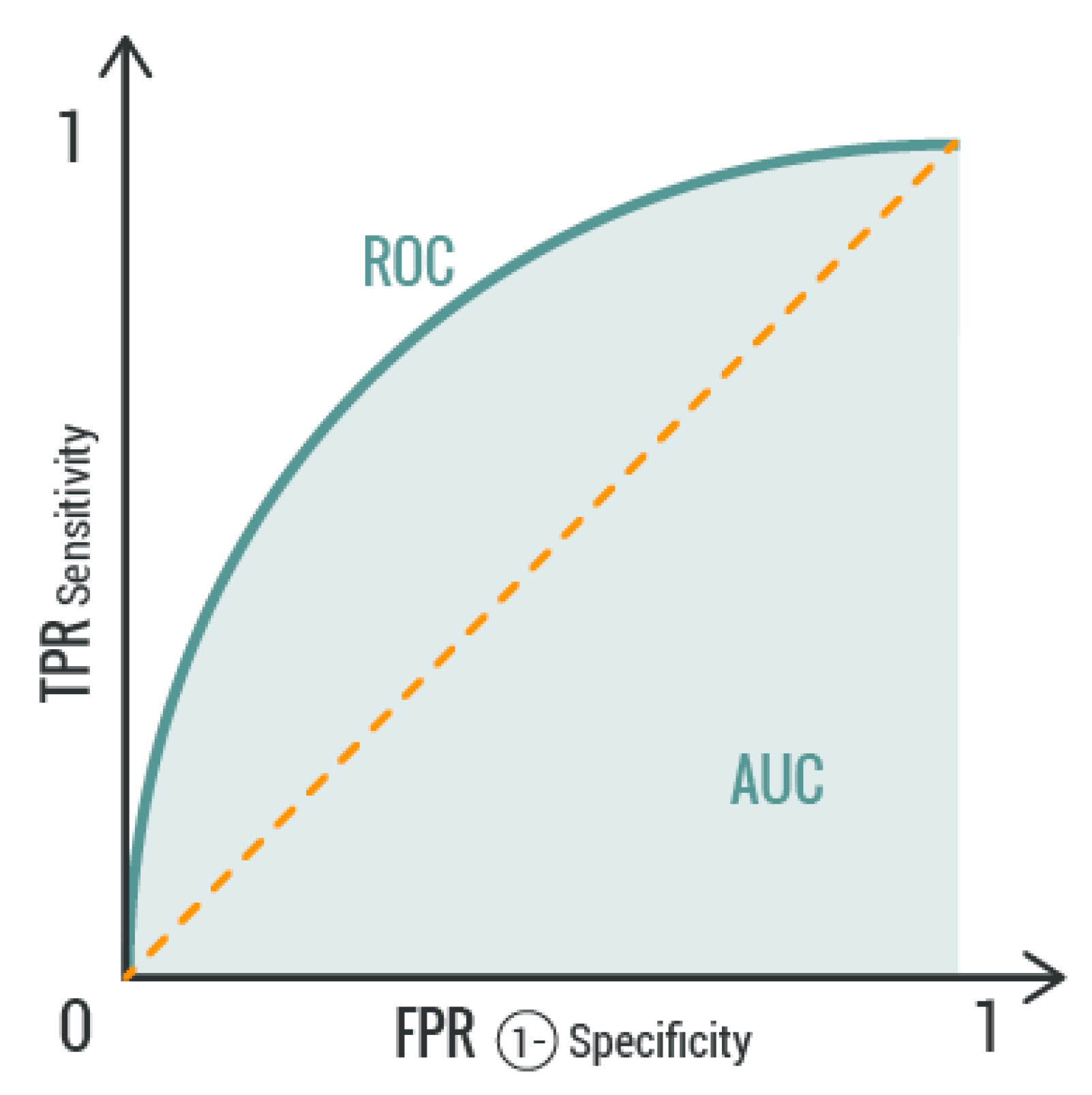

2.6. Metrics

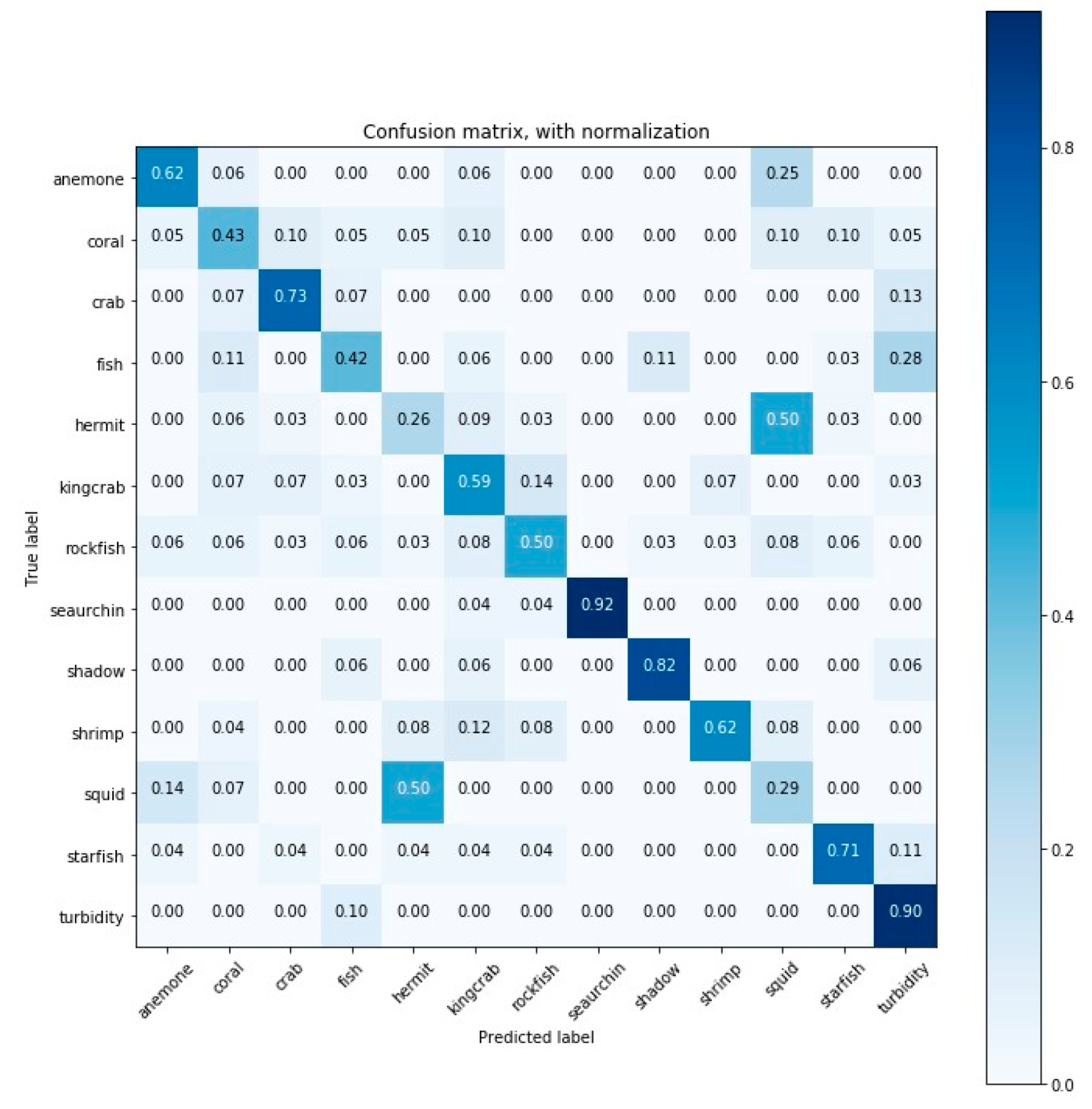

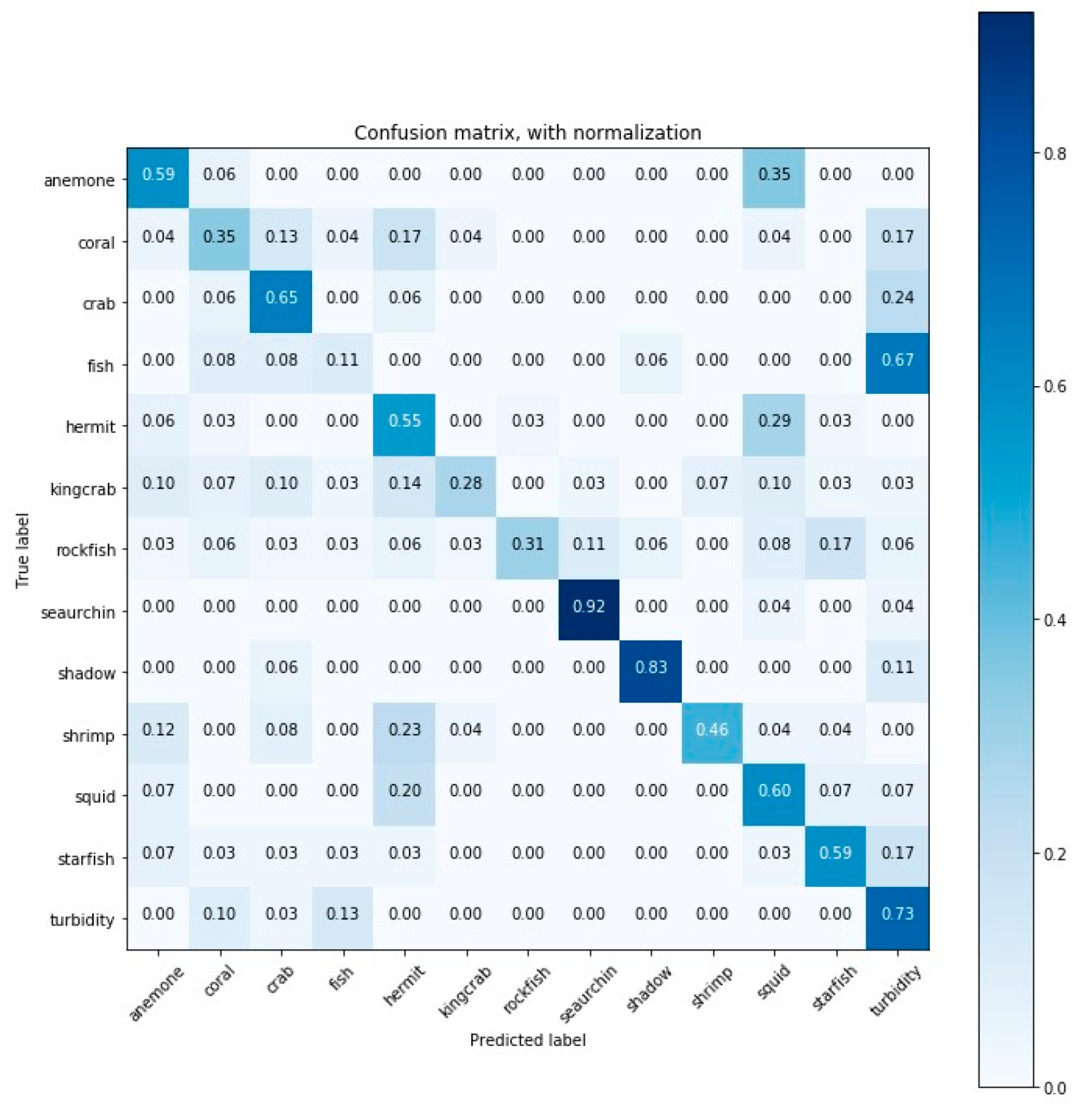

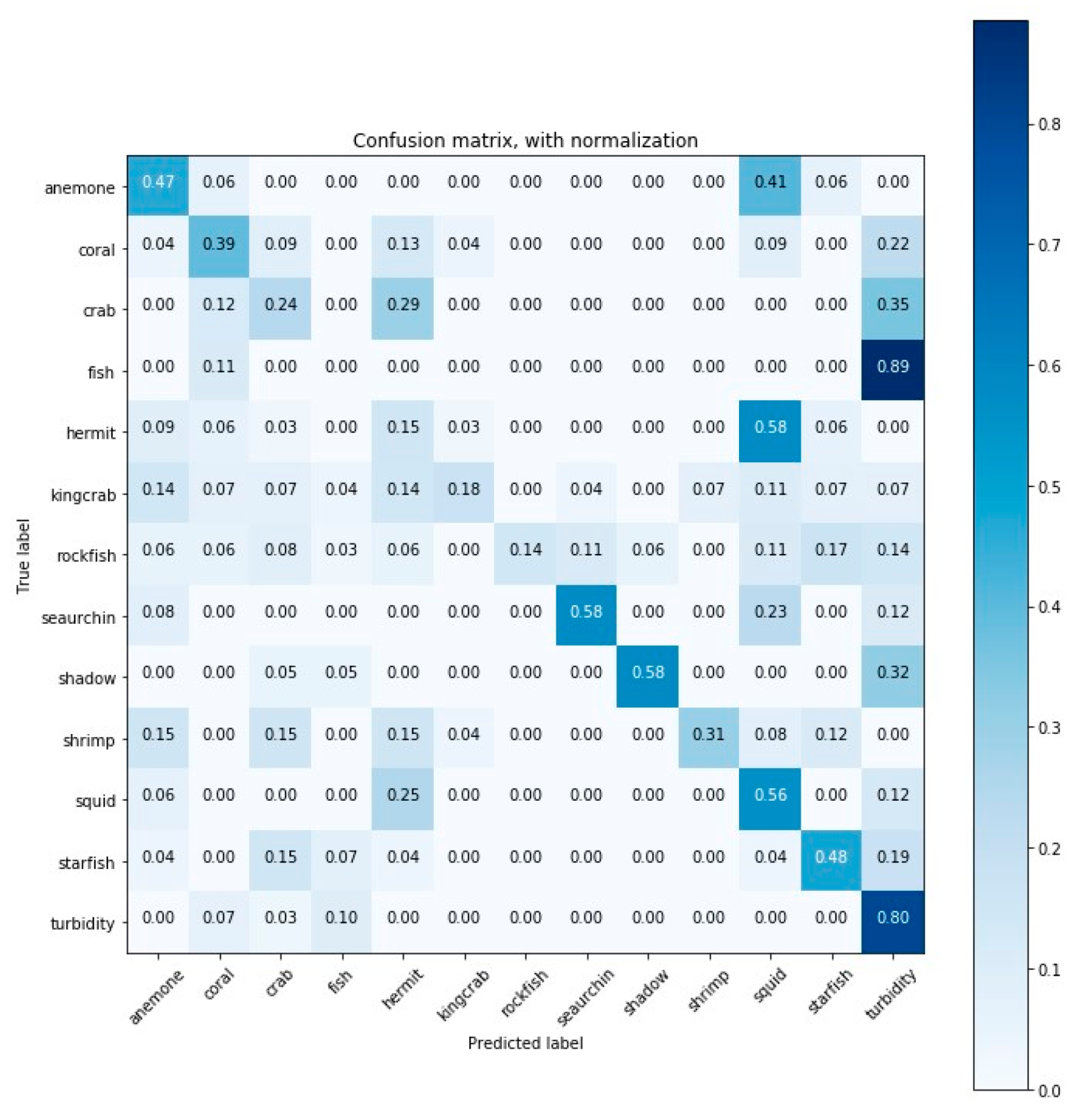

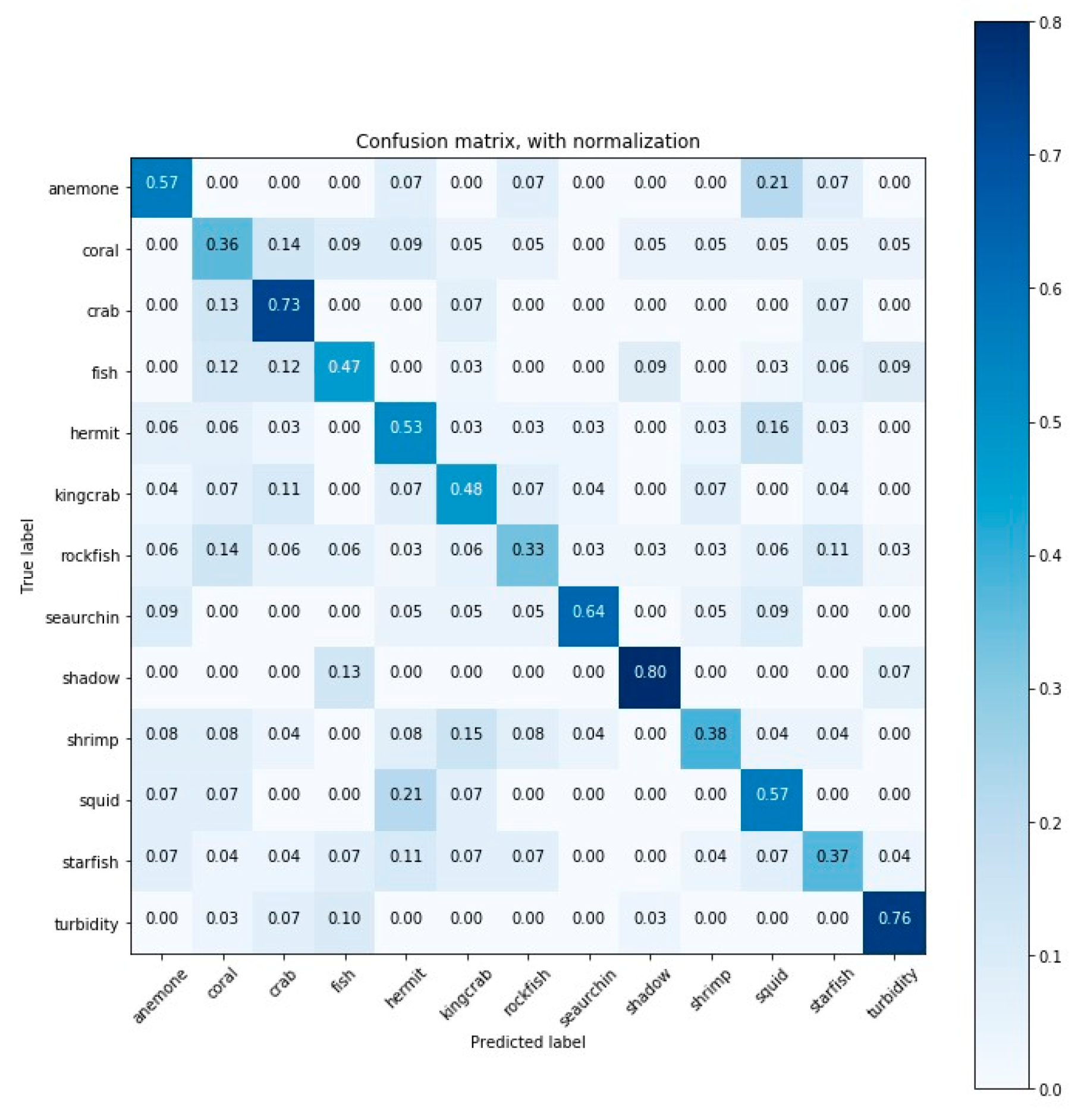

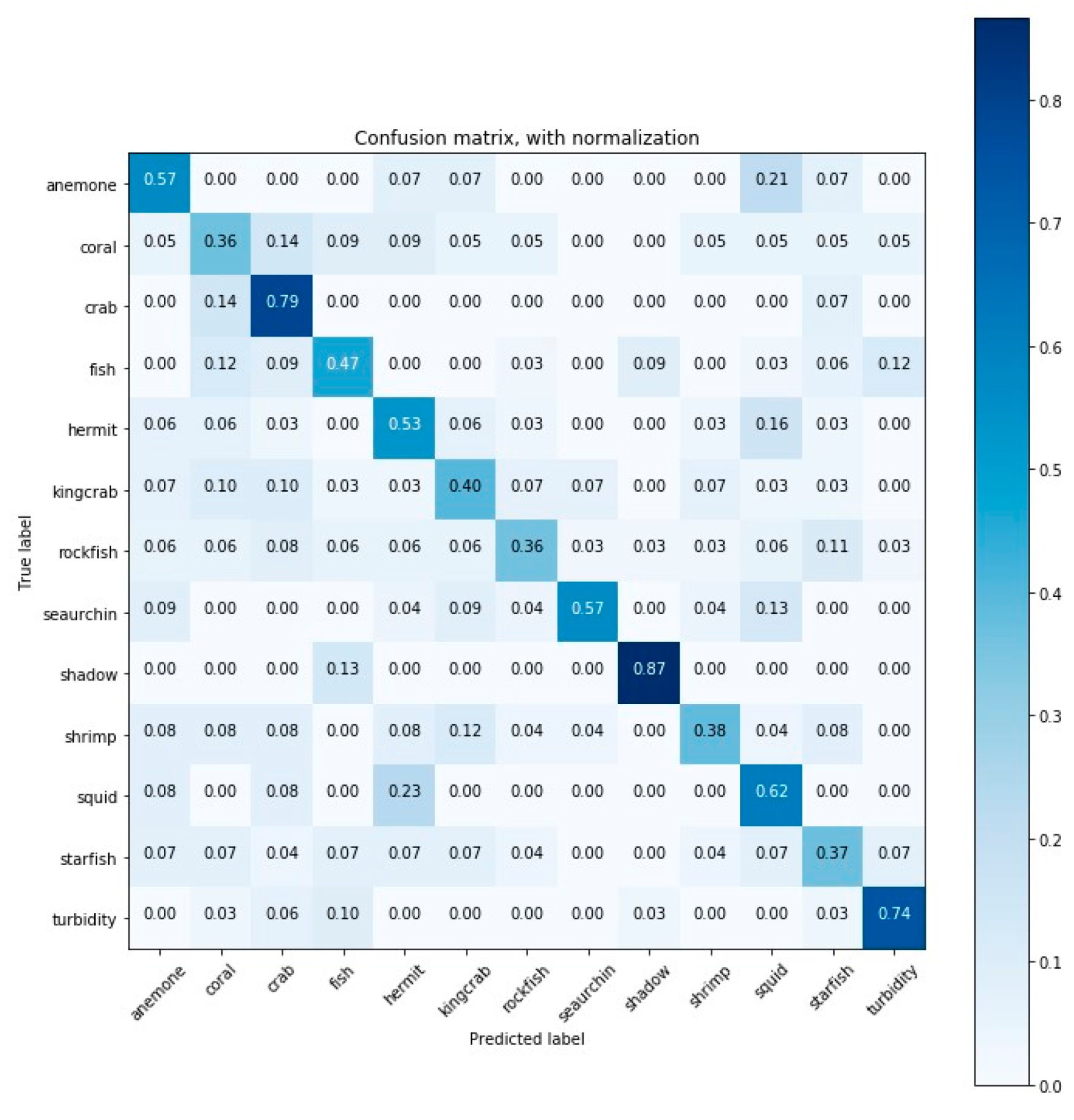

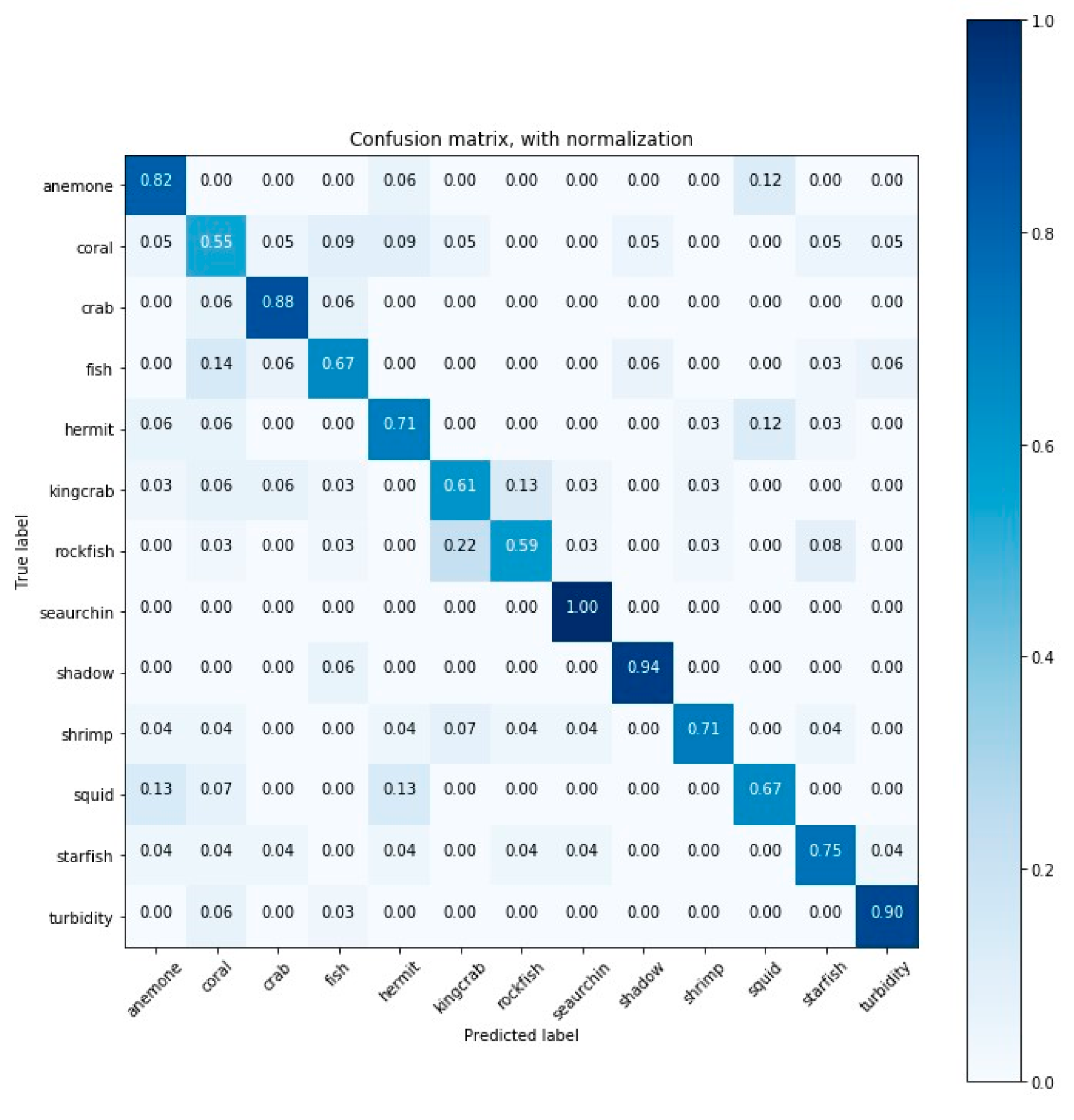

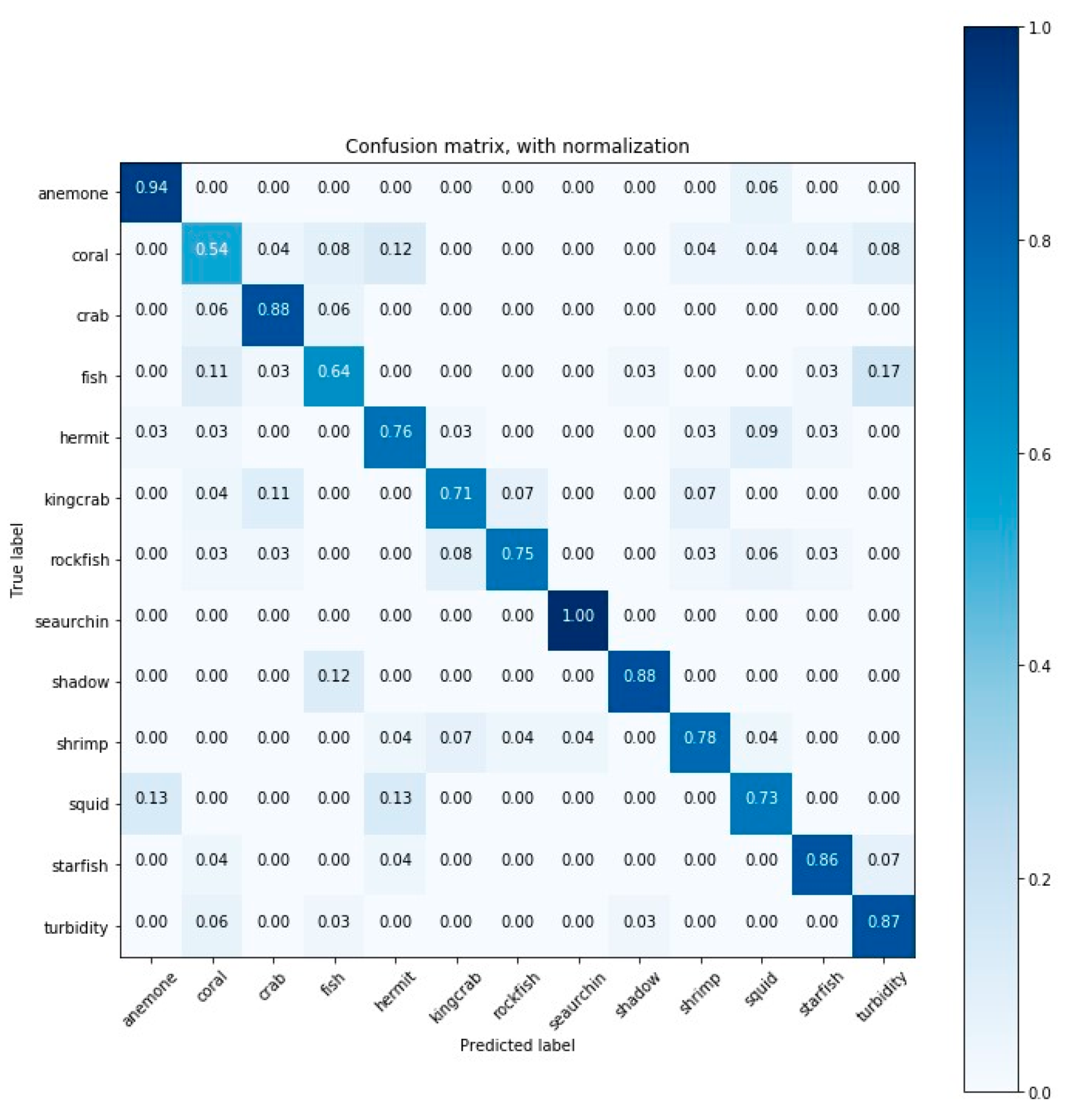

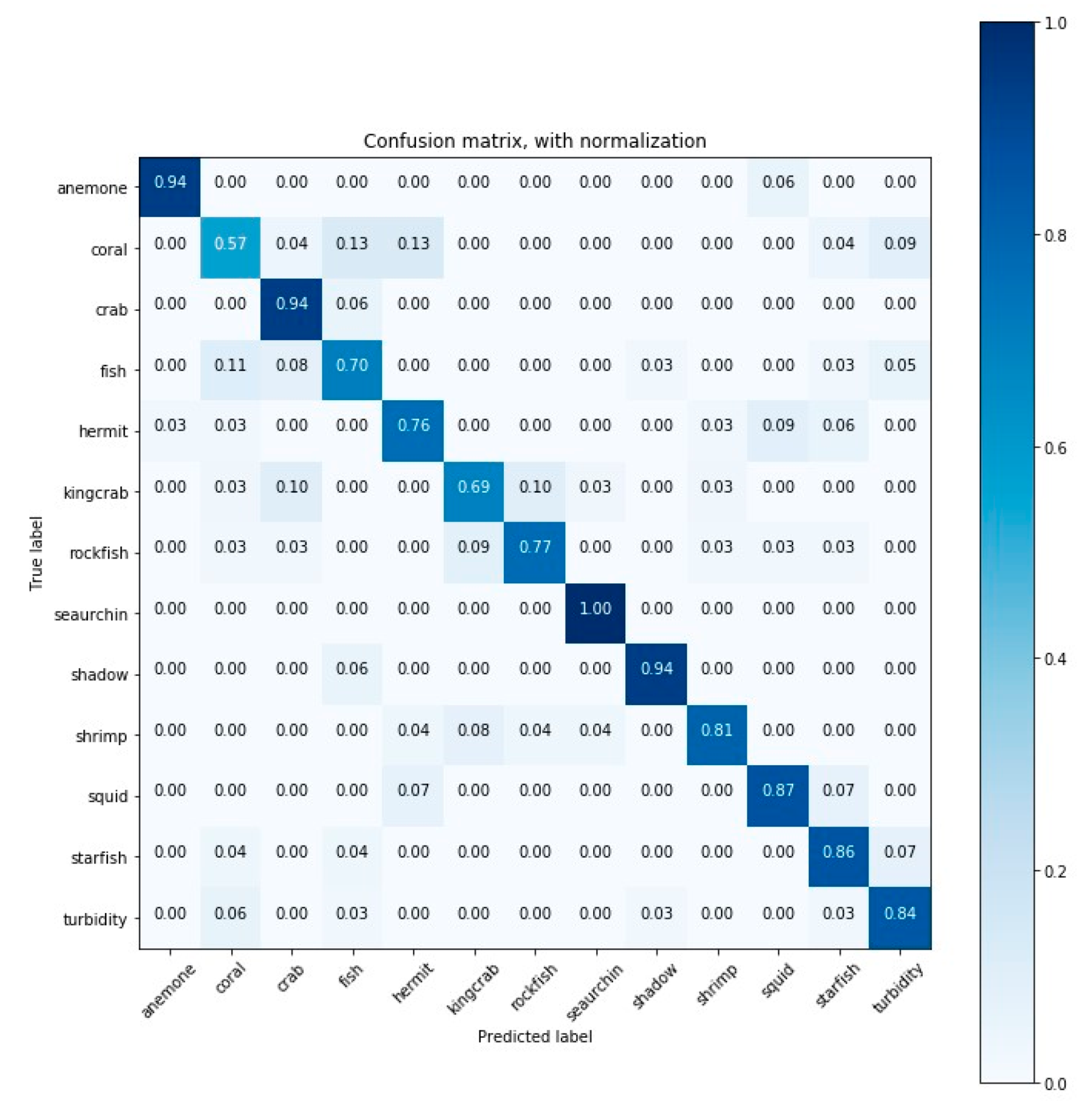

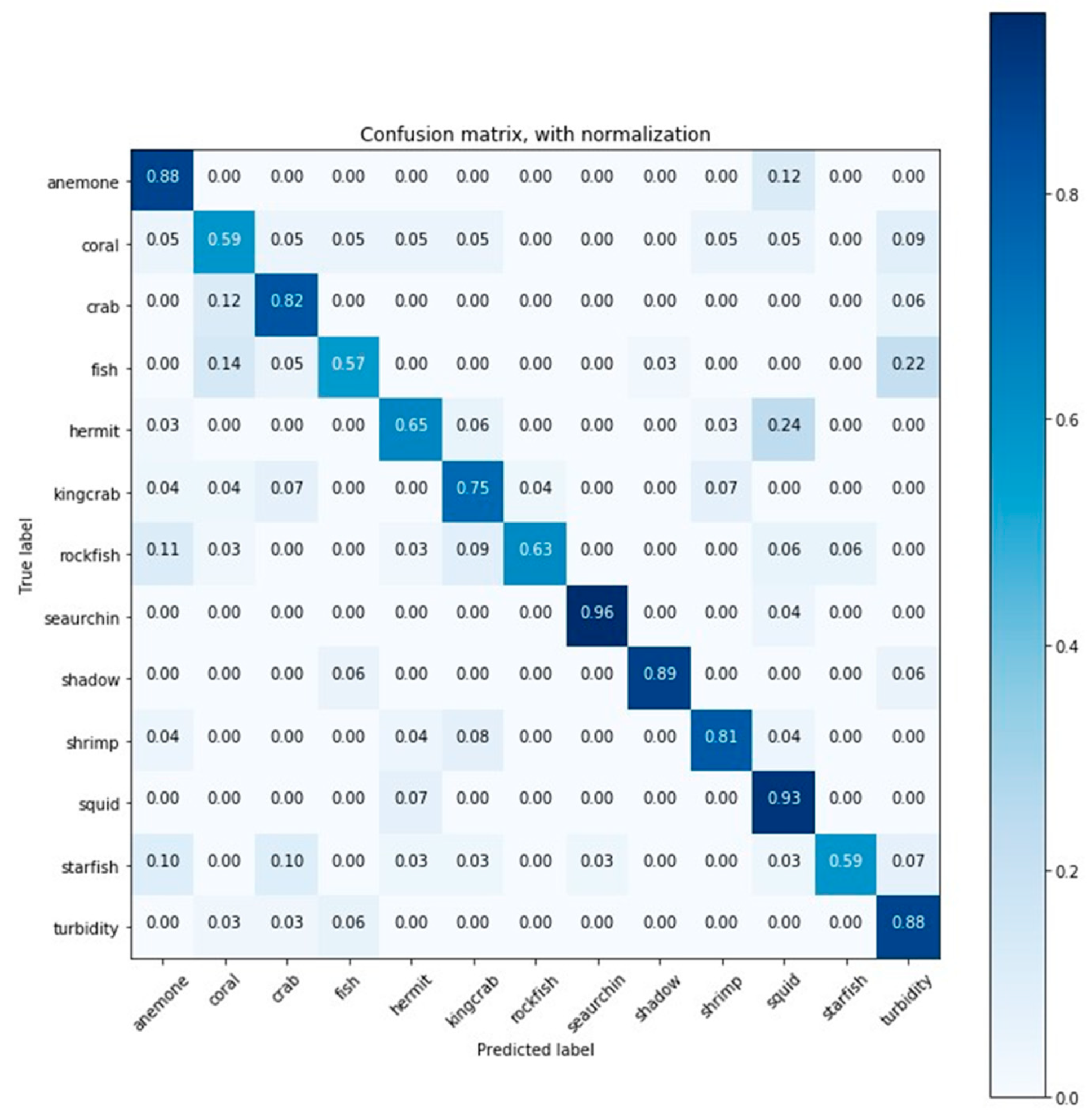

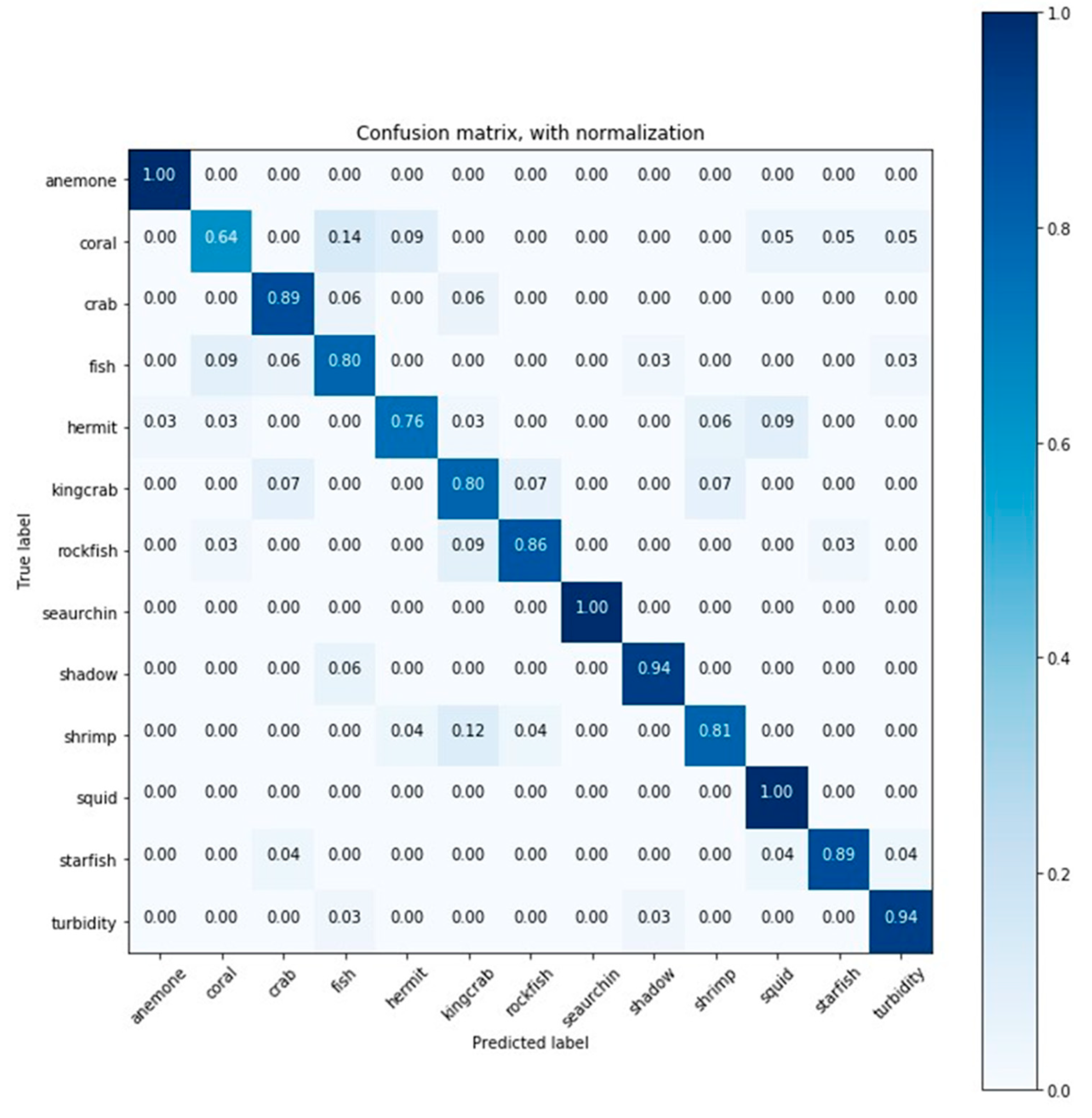

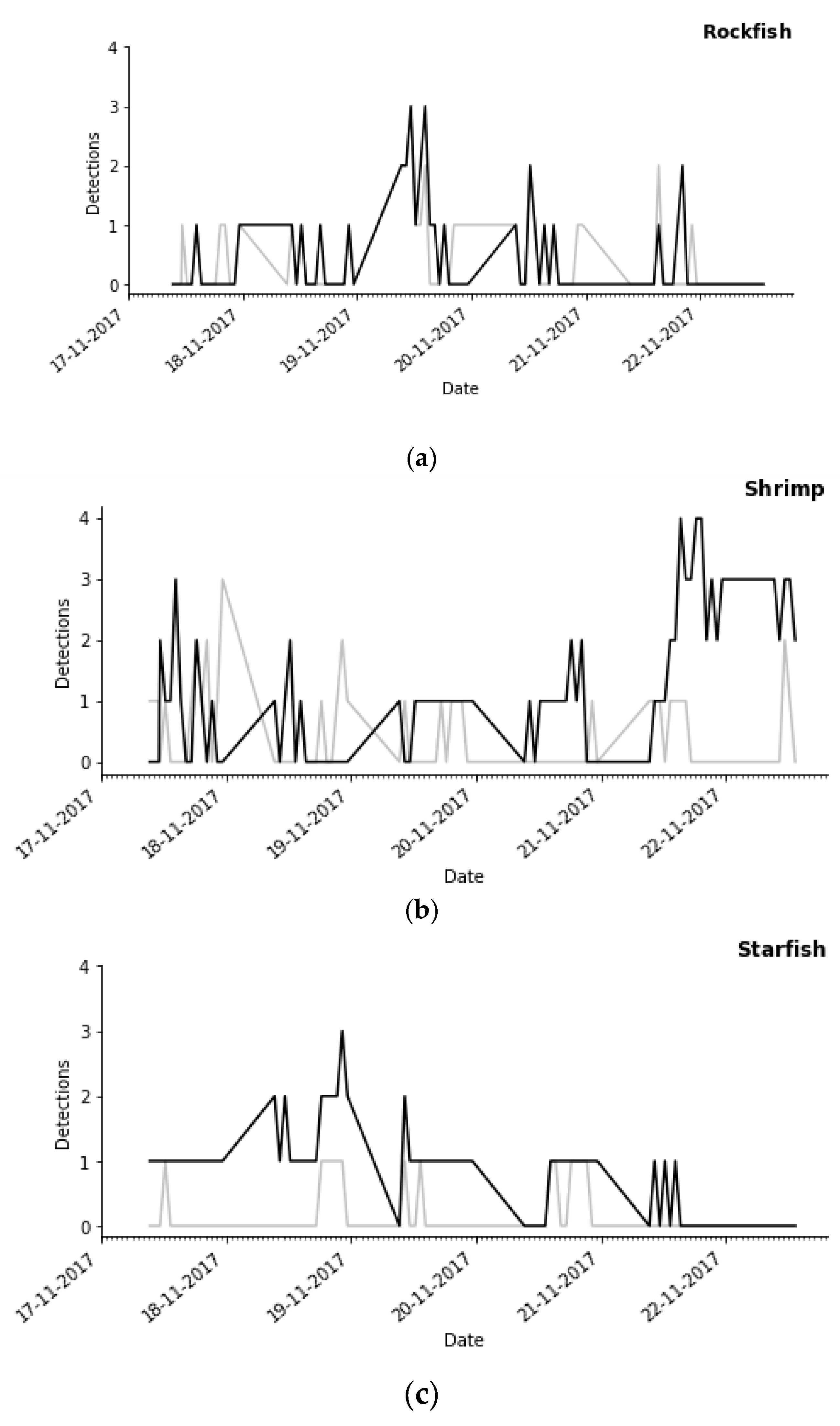

3. Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A

References

- Bicknell, A.W.; Godley, B.J.; Sheehan, E.V.; Votier, S.C.; Witt, M.J. Camera technology for monitoring marine biodiversity and human impact. Front. Ecol. Environ. 2016, 14, 424–432.

- Danovaro, R.; Aguzzi, J.; Fanelli, E.; Billett, D.; Gjerde, K.; Jamieson, A.; Ramirez-Llodra, E.; Smith, C.; Snelgrove, P.; Thomsen, L.; et al. An ecosystem-based deep-ocean strategy. Science 2017, 355, 452–454.

- Aguzzi, J.; Chatzievangelou, D.; Marini, S.; Fanelli, E.; Danovaro, R.; Flögel, S.; Lebris, N.; Juanes, F.; Leo, F.C.D.; Rio, J.D.; et al. New High-Tech Flexible Networks for the Monitoring of Deep-Sea Ecosystems. Environ. Sci. Tech. 2019, 53, 6616–6631.

- Favali, P.; Beranzoli, L.; De Santis, A. Seafloor Observatories: A New Vision of the Earth from the Abyss; Springer Science & Business Media: Heidelberg, Germany, 2015.

- Schoening, T.; Bergmann, M.; Ontrup, J.; Taylor, J.; Dannheim, J.; Gutt, J.; Purser, A.; Nattkemper, T. Semi-Automated Image Analysis for the Assessment of Megafaunal Densities at the Arctic Deep-Sea Observatory HAUSGARTEN. PLoS ONE 2012, 7, e38179.

- Aguzzi, J.; Doya, C.; Tecchio, S.; Leo, F.D.; Azzurro, E.; Costa, C.; Sbragaglia, V.; Rio, J.; Navarro, J.; Ruhl, H.; et al. Coastal observatories for monitoring of fish behaviour and their responses to environmental changes. Rev. Fish Biol. Fisher. 2015, 25, 463–483.

- Widder, E.; Robison, B.H.; Reisenbichler, K.; Haddock, S. Using red light for in situ observations of deep-sea fishes. Deep-Sea Res PT I 2005, 52, 2077–2085.

- Chauvet, P.; Metaxas, A.; Hay, A.E.; Matabos, M. Annual and seasonal dynamics of deep-sea megafaunal epibenthic communities in Barkley Canyon (British Columbia, Canada): a response to climatology, surface productivity and benthic boundary layer variation. Prog. Oceanogr. 2018, 169, 89–105.

- Leo, F.D.; Ogata, B.; Sastri, A.R.; Heesemann, M.; Mihály, S.; Galbraith, M.; Morley, M. High-frequency observations from a deep-sea cabled observatory reveal seasonal overwintering of Neocalanus spp. in Barkley Canyon, NE Pacific: Insights into particulate organic carbon flux. Prog. Oceanogr. 2018, 169, 120–137.

- Juniper, S.K.; Matabos, M.; Mihaly, S.F.; Ajayamohan, R.S.; Gervais, F.; Bui, A.O.V. A year in Barkley Canyon: A time-series observatory study of mid-slope benthos and habitat dynamics using the NEPTUNE Canada network. Deep-Sea Res PT II 2013, 92, 114–123.

- Doya, C.; Aguzzi, J.; Chatzievangelou, D.; Costa, C.; Company, J.B.; Tunnicliffe, V. The seasonal use of small-scale space by benthic species in a transiently hypoxic area. J. Marine Syst. 2015, 154, 280–290.

- Cuvelier, D.; Legendre, P.; Laes, A.; Sarradin, P.-M.; Sarrazin, J. Rhythms and Community Dynamics of a Hydrothermal Tubeworm Assemblage at Main Endeavour Field: A Multidisciplinary Deep-Sea Observatory Approach. PLoS ONE 2014, 9, e96924.

- Matabos, M.; Bui, A.O.V.; Mihály, S.; Aguzzi, J.; Juniper, S.; Ajayamohan, R. High-frequency study of epibenthic megafaunal community dynamics in Barkley Canyon: A multi-disciplinary approach using the NEPTUNE Canada network. J. Marine Syst. 2013.

- Aguzzi, J.; Fanelli, E.; Ciuffardi, T.; Schirone, A.; Leo, F.C.D.; Doya, C.; Kawato, M.; Miyazaki, M.; Furushima, Y.; Costa, C.; et al. Faunal activity rhythms influencing early community succession of an implanted whale carcass offshore Sagami Bay, Japan. Sci. Rep. 2018, 8, 11163.

- Mallet, D.; Pelletier, D. Underwater video techniques for observing coastal marine biodiversity: a review of sixty years of publications (1952–2012). Fish. Res. 2014, 154, 44–62.

- Chuang, M.-C.; Hwang, J.-N.; Williams, K. A feature learning and object recognition framework for underwater fish images. IEEE Trans. Image Proc. 2016, 25, 1862–1872.

- Qin, H.; Li, X.; Liang, J.; Peng, Y.; Zhang, C. DeepFish: Accurate underwater live fish recognition with a deep architecture. Neurocomputing 2016, 187, 49–58.

- Siddiqui, S.A.; Salman, A.; Malik, M.I.; Shafait, F.; Mian, A.; Shortis, M.R.; Harvey, E.S. Automatic fish species classification in underwater videos: exploiting pre-trained deep neural network models to compensate for limited labelled data. ICES J. Marine Sci. 2017, 75, 374–389.

- Marini, S.; Fanelli, E.; Sbragaglia, V.; Azzurro, E.; Fernandez, J.D.R.; Aguzzi, J. Tracking Fish Abundance by Underwater Image Recognition. Sci. Rep. 2018, 8, 13748.

- Rountree, R.; Aguzzi, J.; Marini, S.; Fanelli, E.; De Leo, F.C.; Del Río, J.; Juanes, F. Towards an optimal design for ecosystem-level ocean observatories. Front. Mar. Sci. 2019.

- Nguyen, H.; Maclagan, S.; Nguyen, T.; Nguyen, T.; Flemons, P.; Andrews, K.; Ritchie, E.; Phung, D. Animal Recognition and Identification with Deep Convolutional Neural Networks for Automated Wildlife Monitoring. In Proceedings of the IEEE International Conference on Data Science and Advanced Analytics (DSAA), Tokyo, Japan, 19–21 October 2017; pp. 40–49.

- Roberts, J.; Wheeler, A.; Freiwald, A. Reefs of the Deep: The Biology and Geology of Cold-Water Coral Ecosystems. Science 2006, 312, 543–547.

- Godø, O.; Tenningen, E.; Ostrowski, M.; Kubilius, R.; Kutti, T.; Korneliussen, R.; Fosså, J.H. The Hermes lander project: the technology, the data, and an evaluation of concept and results. Fisken Havet. 2012, 3.

- Godø, O.R.; Johnsen, S.; Torkelsen, T. The LoVe Ocean Observatory is in operation. Mar. Tech. Soc. J. 2014, 48, 24–30.

- Hovland, M. Deep-water Coral Reefs: Unique Biodiversity Hot-Spots; Springer Science & Business Media: Heidelberg, Germany, 2008.

- Sundby, S.; Fossum, P.A.S.; Vikebø, F.B.; Aglen, A.; Buhl-Mortensen, L.; Folkvord, A.; Bakkeplass, K.; Buhl-Mortensen, P.; Johannessen, M.; Jørgensen, M.S.; et al. KunnskapsInnhenting Barentshavet–Lofoten–Vesterålen (KILO), Fisken og Havet 3, 1–186. Institute of Marine Research (in Norwegian); Fiskeri- og kystdepartementet: Bergen, Norway, 2013.

- Bøe, R.; Bellec, V.; Dolan, M.; Buhl-Mortensen, P.; Buhl-Mortensen, L.; Slagstad, D.; Rise, L. Giant sandwaves in the Hola glacial trough off Vesterålen, North Norway. Marine Geology 2009, 267, 36–54.

- Engeland, T.V.; Godø, O.R.; Johnsen, E.; Duineveld, G.C.A.; Oevelen, D. Cabled ocean observatory data reveal food supply mechanisms to a cold-water coral reef. Prog. Oceanogr. 2019, 172, 51–64.

- Fosså, J.H.; Buhl-Mortensen, P.; Furevik, D.M. Lophelia-korallrev langs norskekysten forekomst og tilstand. Fisken og Havet 2000, 2, 1–94.

- Ekman, S. Zoogeography of the Sea; Sidgwick and Jackson: London, UK, 1953; Volume 417.

- O’Riordan, C.E. Marine Fauna Notes from the National Museum of Ireland–10. INJ 1986, 22, 34–37.

- Hureau, J.C.; Litvinenko, N.I. Scorpaenidae. In Fishes of the North-eastern Atlantic and the Mediterranean (FNAM); Whitehead, P.J.P., et al., Eds.; UNESCO: Paris, France, 1986; pp. 1211–1229.

- Marini, S.; Corgnati, L.; Mantovani, C.; Bastianini, M.; Ottaviani, E.; Fanelli, E.; Aguzzi, J.; Griffa, A.; Poulain, P.-M. Automated estimate of fish abundance through the autonomous imaging device GUARD1. Measurement 2018, 126, 72–75.

- Reza, A.M. Realization of the contrast limited adaptive histogram equalization (CLAHE) for real-time image enhancement. J. VLSI Sig. Proc. Syst. Sig. Image Video Tech. 2004, 38, 35–44.

- Pizer, S.M.; Amburn, E.P.; Austin, J.D.; Cromartie, R.; Geselowitz, A.; Greer, T.; Romeny, B.H.; Zimmerman, J.B.; Zuiderveld, K. Adaptive histogram equalization and its variations. Comput. Vis. Graph. Image Process. 1987, 39, 355–368.

- Ouyang, B.; Dalgleish, F.R.; Caimi, F.M.; Vuorenkoski, A.K.; Giddings, T.E.; Shirron, J.J. Image enhancement for underwater pulsed laser line scan imaging system. In Proceedings of the Ocean Sensing and Monitoring IV; International Society for Optics and Photonics, Baltimore, MD, USA, 24–26 April 2012; Volume 8372.

- Lu, H.; Li, Y.; Zhang, L.; Yamawaki, A.; Yang, S.; Serikawa, S. Underwater optical image dehazing using guided trigonometric bilateral filtering. In Proceedings of the 2013 IEEE International Symposium on Circuits and Systems (ISCAS2013), Beijing, China, 19–23 May 2013; pp. 2147–2150.

- Serikawa, S.; Lu, H. Underwater image dehazing using joint trilateral filter. Comput. Electr. Eng. 2014, 40, 41–50.

- Tomasi, C.; Manduchi, R. Bilateral filtering for gray and color images. In Proceedings of the IEEE Sixth International Conference on Computer Vision, Bombay, India, 4–7 January 1998; pp. 839–846.

- Aguzzi, J.; Costa, C.; Fujiwara, Y.; Iwase, R.; Ramirez-Llodra, E.; Menesatti, P. A novel morphometry-based protocol of automated video-image analysis for species recognition and activity rhythms monitoring in deep-sea fauna. Sensors 2009, 9, 8438–8455.

- Peters, J. Foundations of Computer Vision: computational geometry, visual image structures and object shape detection; Springer International Publishing: Cham, Switzerland, 2017.

- Aguzzi, J.; Lázaro, A.; Condal, F.; Guillen, J.; Nogueras, M.; Rio, J.; Costa, C.; Menesatti, P.; Puig, P.; Sardà, F.; et al. The New Seafloor Observatory (OBSEA) for Remote and Long-Term Coastal Ecosystem Monitoring. Sensors 2011, 11, 5850–5872.

- Albarakati, H.; Ammar, R.; Alharbi, A.; Alhumyani, H. An application of using embedded underwater computing architectures. In Proceedings of the IEEE International Symposium on Signal Processing and Information Technology (ISSPIT), Limassol, Cyprus, 12–14 December 2016; pp. 34–39.

- OpenCV (Open source computer vision). Available online: https://opencv.org/ (accessed on 25 November 2019).

- Coelho, L.P. Mahotas: Open source software for scriptable computer vision. arXiv 2012, arXiv:1211.4907.

- Spampinato, C.; Giordano, D.; Salvo, R.D.; Chen-Burger, Y.-H.J.; Fisher, R.B.; Nadarajan, G. Automatic fish classification for underwater species behavior understanding. In Proceedings of the MM ’10: ACM Multimedia Conference, Firenze, Italy, 25–29 October 2010; pp. 45–50.

- Tharwat, A.; Hemedan, A.A.; Hassanien, A.E.; Gabel, T. A biometric-based model for fish species classification. Fish. Res. 2018, 204, 324–336.

- Kitasato, A.; Miyazaki, T.; Sugaya, Y.; Omachi, S. Automatic Discrimination between Scomber japonicus and Scomber australasicus by Geometric and Texture Features. Fishes 2018, 3, 26.

- Wong, R.Y.; Hall, E.L. Scene matching with invariant moments. Comput. Graph. Image Process. 1978, 8, 16–24.

- Haralick, R.M.; Shanmugam, K.; Dinstein, I. Textural features for image classification. IEEE Trans. Syst. Man Cybern. 1973, SMC-3, 610–621.

- Zuiderveld, K. Contrast limited adaptive histogram equalization. In Graphics Gems IV; Academic Press Professional, Inc.: San Diego, CA, USA, 1994; pp. 474–485.

- Tusa, E.; Reynolds, A.; Lane, D.M.; Robertson, N.M.; Villegas, H.; Bosnjak, A. Implementation of a fast coral detector using a supervised machine learning and gabor wavelet feature descriptors. In Proceedings of the 2014 IEEE Sensor Systems for a Changing Ocean (SSCO), Brest, France, 13–14 October 2014; pp. 1–6.

- Saberioon, M.; Císař, P.; Labbé, L.; Souček, P.; Pelissier, P.; Kerneis, T. Comparative Performance Analysis of Support Vector Machine, Random Forest, Logistic Regression and k-Nearest Neighbours in Rainbow Trout (Oncorhynchus Mykiss) Classification Using Image-Based Features. Sensors 2018, 18, 1027.

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

- Vapnik, V.N. The Nature of Statistical Learning Theory; Springer-Verlag: Berlin/Heidelberg, Germany, 1995.

- Vapnik, V.N. Statistical Learning Theory; John Wiley: New York, NY, USA, 1998.

- Schölkopf, B.; Sung, K.-K.; Burges, C.J.C.; Girosi, F.; Niyogi, P.; Poggio, T.; Vapnik, V. Comparing support vector machines with Gaussian kernels to radial basis function classifiers. IEEE Trans. Signal Proc. 1997, 45, 2758–2765.

- Spampinato, C.; Palazzo, S.; Joalland, P.-H.; Paris, S.; Glotin, H.; Blanc, K.; Lingrand, D.; Precioso, F. Fine-grained object recognition in underwater visual data. Multimed. Tools and Appl. 2016, 75, 1701–1720.

- Rova, A.; Mori, G.; Dill, L.M. One fish, two fish, butterfish, trumpeter: Recognizing fish in underwater video. In Proceedings of the MVA, Tokyo, Japan, 16–18 May 2007; pp. 404–407.

- Fix, E.; Hodges, J.L. Discriminatory analysis-nonparametric discrimination: consistency properties; California Univ Berkeley: Berkeley, CA, USA, 1951.

- Magee, J.F. Decision Trees for Decision Making; Harvard Business Review, Harvard Business Publishing: Brighton, MA, USA, 1964.

- Argentiero, P.; Chin, R.; Beaudet, P. An automated approach to the design of decision tree classifiers. IEEE T Pattern Anal. 1982, PAMI-4, 51–57.

- Kalochristianakis, M.; Malamos, A.; Vassilakis, K. Color based subject identification for virtual museums, the case of fish. In Proceedings of the 2016 International Conference on Telecommunications and Multimedia (TEMU), Heraklion, Greece, 25–27 July 2016; pp. 1–5.

- Freitas, U.; Gonçalves, W.N.; Matsubara, E.T.; Sabino, J.; Borth, M.R.; Pistori, H. Using Color for Fish Species Classification. Available online: gibis.unifesp.br/sibgrapi16/eproceedings/wia/1.pdf (accessed on 28 January 2020).

- Breiman, L. Random forests. Machine Learning 2001, 45, 5–32.

- Ho, T.K. Random decision forests. In Proceedings of the 3rd International Conference on Document Analysis and Recognition, Montreal, QC, Canada, 14–16 August 1995; pp. 278–282.

- Fang, Z.; Fan, J.; Chen, X.; Chen, Y. Beak identification of four dominant octopus species in the East China Sea based on traditional measurements and geometric morphometrics. Fish. Sci. 2018, 84, 975–985.

- Ali-Gombe, A.; Elyan, E.; Jayne, C. Fish classification in context of noisy images. In Engineering Applications of Neural Networks, Proceedings of the 18th International Conference on Engineering Applications of Neural Networks, Athens, Greece, August 25–27, 2017; Boracchi, G., Iliadis, L., Jayne, C., Likas, A., Eds.; Springer: Cham, Switzerland, 2017; pp. 216–226.

- Rachmatullah, M.N.; Supriana, I. Low Resolution Image Fish Classification Using Convolutional Neural Network. In Proceedings of the 2018 5th International Conference on Advanced Informatics: Concept Theory and Applications (ICAICTA), Krabi, Thailand, 14–17 August 2018; pp. 78–83.

- Rathi, D.; Jain, S.; Indu, D.S. Underwater Fish Species Classification using Convolutional Neural Network and Deep Learning. arXiv 2018, arXiv:1805.10106.

- Rimavicius, T.; Gelzinis, A. A Comparison of the Deep Learning Methods for Solving Seafloor Image Classification Task. In Information and Software Technologies, Proceedings of the 23rd International Conference on Information and Software Technologies, Druskininkai, Lithuania, October 12–14; Damaševičius, R., Mikašytė, V., Eds.; Springer: Cham, Switzerland, 2017; pp. 442–453.

- Gardner, W.A. Learning characteristics of stochastic-gradient-descent algorithms: A general study, analysis, and critique. Sig. Proc. 1984, 6, 113–133.

- Zou, H.; Hastie, T. Regularization and variable selection via the elastic net. J. R. Stat. Soc. B 2005, 67, 301–320.

- Duda, R.O.; Hart, P.E. Pattern Classification and Scene Analysis; A Wiley-Interscience Publication: New York, NY, USA, 1973.

- Jonsson, P.; Wohlin, C. An evaluation of k-nearest neighbour imputation using likert data. In Proceedings of the 10th International Symposium on Software Metrics, Chicago, IL, USA, 11–17 September 2004; pp. 108–118.

- Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Blondel, M.; Prettenhofer, P.; Weiss, R.; Dubourg, V.; et al. Scikit-learn: Machine Learning in Python. J. Mach. Learn. Res. 2011, 12, 2825–2830.

- Zeiler, M.D. ADADELTA: an adaptive learning rate method. arXiv preprint 2012, arXiv:1212.5701.

- Tieleman, T.; Hinton, G. Divide the gradient by a running average of its recent magnitude. COURSERA Neural Netw. Mach. Learn 2012, 6, 26–31.

- Gulcehre, C.; Moczulski, M.; Denil, M.; Bengio, Y. Noisy activation functions. In Proceedings of the International Conference on Machine Learning, New York, NY, USA, 19–24 June 2016; pp. 3059–3068.

- Srivastava, N.; Hinton, G.; Krizhevsky, A.; Sutskever, I.; Salakhutdinov, R. Dropout: a simple way to prevent neural networks from overfitting. J. Mach. Learn. Res. 2014, 15, 1929–1958.

- Fawcett, T. An introduction to ROC analysis. Pattern Recogn. Lett. 2006, 27, 861–874.

- Shorten, C.; Khoshgoftaar, T.M. A survey on image data augmentation for deep learning. J. Big Data 2019, 6, 60.

- Pan, Z.; Yu, W.; Yi, X.; Khan, A.; Yuan, F.; Zheng, Y. Recent progress on generative adversarial networks (GANs): A survey. IEEE Access 2019, 7, 36322–36333.

- Cao, Y.-J.; Jia, L.-L.; Chen, Y.-X.; Lin, N.; Yang, C.; Zhang, B.; Liu, Z.; Li, X.-X.; Dai, H.-H. Recent Advances of Generative Adversarial Networks in Computer Vision. IEEE Access 2018, 7, 14985–15006.

- Shao, L.; Zhu, F.; Li, X. Transfer learning for visual categorization: A survey. IEEE T Neural Networ. Learn. Syst. 2014, 26, 1019–1034.

- Konovalov, D.A.; Saleh, A.; Bradley, M.; Sankupellay, M.; Marini, S.; Sheaves, M. Underwater Fish Detection with Weak Multi-Domain Supervision. arXiv preprint 2019, arXiv:1905.10708.

- Villon, S.; Chaumont, M.; Subsol, G.; Villéger, S.; Claverie, T.; Mouillot, D. Coral reef fish detection and recognition in underwater videos by supervised machine learning: Comparison between Deep Learning and HOG+SVM methods. In Proceedings of the International Conference on Advanced Concepts for Intelligent Vision Systems, Lecce, Italy, 24–27 October 2016; pp. 160–171.

- Hu, G.; Wang, K.; Peng, Y.; Qiu, M.; Shi, J.; Liu, L. Deep learning methods for underwater target feature extraction and recognition. Computational Intell. Neurosci. 2018.

- Salman, A.; Jalal, A.; Shafait, F.; Mian, A.; Shortis, M.; Seager, J.; Harvey, E. Fish species classification in unconstrained underwater environments based on deep learning. Limnol. Oceanogr: Meth. 2016, 14, 570–585.

- Cao, X.; Zhang, X.; Yu, Y.; Niu, L. Deep learning-based recognition of underwater target. In Proceedings of the 2016 IEEE International Conference on Digital Signal Processing (DSP), Shanghai, China, 19–21 November 2016; pp. 89–93.

- Pelletier, S.; Montacir, A.; Zakari, H.; Akhloufi, M. Deep Learning for Marine Resources Classification in Non-Structured Scenarios: Training vs. Transfer Learning. In Proceedings of the 2018 31st IEEE Canadian Conference on Electrical & Computer Engineering (CCECE), Quebec City, QC, Canada, 13–16 May 2018; pp. 1–4.

- Sun, X.; Shi, J.; Liu, L.; Dong, J.; Plant, C.; Wang, X.; Zhou, H. Transferring deep knowledge for object recognition in Low-quality underwater videos. Neurocomputing 2018, 275, 897–908.

- Xu, W.; Matzner, S. Underwater Fish Detection using Deep Learning for Water Power Applications. arXiv preprint 2018, arXiv:1811.01494.

- Wang, X.; Ouyang, J.; Li, D.; Zhang, G. Underwater Object Recognition Based on Deep Encoding-Decoding Network. J. Ocean Univ. Chin. 2018, 1–7.

- Naddaf-Sh, M.; Myler, H.; Zargarzadeh, H. Design and Implementation of an Assistive Real-Time Red Lionfish Detection System for AUV/ROVs. Complexity 2018, 2018.

- Villon, S.; Mouillot, D.; Chaumont, M.; Darling, E.S.; Subsol, G.; Claverie, T.; Villéger, S. A Deep learning method for accurate and fast identification of coral reef fishes in underwater images. Ecolog. Infor. 2018, 238–244.

| Class (alias) | Species Name | No. of Specimens in Dataset | Image in Figure 2 |
|---|---|---|---|
| Rockfish | Sebastes sp. | 205 | (A) |
| King crab | Lithodes maja | 170 | (B) |
| Squid | Sepiolidae | 96 | (C) |
| Starfish | Unidentified | 169 | (D) |
| Hermit crab | Unidentified | 184 | (E) |
| Anemone | Bolocera tuediae | 98 | (F) |
| Shrimp | Pandalus sp. | 154 | (G) |
| Sea urchin | Echinus esculentus | 138 | (H) |
| Eel-like fish | Brosme brosme | 199 | (I) |
| Crab | Cancer pagurus | 102 | (J) |
| Coral | Desmophyllum pertusum | 142 | (K) |
| Turbidity | - | 176 | (L) |
| Shadow | - | 101 | (M) |

| Type | Description | Obtained Features |
|---|---|---|
| Hu invariant moments [49] | Used for shape matching because they are invariant to translation, scale, and rotation; under reflection the first six moments are unchanged and the seventh changes sign. | An array containing the seven image moments |
| Haralick texture features [50] | Describe an image by its texture, quantified from how often pairs of gray-tone intensities occur in spatially neighboring pixels. | An array containing the Haralick features of the image |
| Color histogram [35,51] | Represents the distribution of colors contained in an image. | A one-dimensional array holding the flattened histogram matrix |
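The descriptor table above names three hand-crafted features. As a minimal, NumPy-only sketch of what each one computes (an illustration under stated assumptions, not the authors' implementation), the block below computes the standard seven Hu moments, only four of Haralick's statistics (angular second moment, contrast, inverse difference moment, entropy) from a single horizontal-offset co-occurrence matrix quantized to 8 gray levels, and an RGB histogram with 8 bins per channel. The level and bin counts, the single offset, and all function names are hypothetical choices. OpenCV (`cv2.HuMoments`, `cv2.calcHist`) and Mahotas (`mahotas.features.haralick`), both in the reference list, provide complete implementations.

```python
import numpy as np


def hu_moments(gray: np.ndarray) -> np.ndarray:
    """Seven Hu invariant moments of a 2-D intensity image (must not be all zeros)."""
    img = gray.astype(np.float64)
    ys, xs = np.mgrid[: img.shape[0], : img.shape[1]]
    m00 = img.sum()
    xc, yc = (xs * img).sum() / m00, (ys * img).sum() / m00

    def eta(p: int, q: int) -> float:
        # Normalized central moment: mu_pq / m00 ** (1 + (p + q) / 2)
        mu = ((xs - xc) ** p * (ys - yc) ** q * img).sum()
        return mu / m00 ** (1 + (p + q) / 2)

    n20, n02, n11 = eta(2, 0), eta(0, 2), eta(1, 1)
    n30, n03, n21, n12 = eta(3, 0), eta(0, 3), eta(2, 1), eta(1, 2)
    a, b = n30 + n12, n21 + n03  # sums that recur in the higher invariants
    h1 = n20 + n02
    h2 = (n20 - n02) ** 2 + 4 * n11 ** 2
    h3 = (n30 - 3 * n12) ** 2 + (3 * n21 - n03) ** 2
    h4 = a ** 2 + b ** 2
    h5 = (n30 - 3 * n12) * a * (a ** 2 - 3 * b ** 2) + (3 * n21 - n03) * b * (3 * a ** 2 - b ** 2)
    h6 = (n20 - n02) * (a ** 2 - b ** 2) + 4 * n11 * a * b
    h7 = (3 * n21 - n03) * a * (a ** 2 - 3 * b ** 2) - (n30 - 3 * n12) * b * (3 * a ** 2 - b ** 2)
    return np.array([h1, h2, h3, h4, h5, h6, h7])


def glcm(gray: np.ndarray, levels: int = 8, dy: int = 0, dx: int = 1) -> np.ndarray:
    """Symmetric, normalized gray-level co-occurrence matrix for a non-negative offset (dy, dx)."""
    q = np.clip(gray.astype(np.int64), 0, 255) * levels // 256  # quantize 0..255 to 0..levels-1
    h, w = q.shape
    ref = q[: h - dy, : w - dx]  # reference pixels
    nbr = q[dy:, dx:]            # neighbours at offset (dy, dx)
    counts = np.zeros((levels, levels))
    np.add.at(counts, (ref.ravel(), nbr.ravel()), 1)
    counts += counts.T  # count each pair in both directions
    return counts / counts.sum()


def haralick_subset(gray: np.ndarray, levels: int = 8) -> np.ndarray:
    """Four of Haralick's statistics: ASM, contrast, inverse difference moment, entropy."""
    p = glcm(gray, levels)
    i, j = np.indices(p.shape)
    asm = (p ** 2).sum()
    contrast = ((i - j) ** 2 * p).sum()
    idm = (p / (1 + (i - j) ** 2)).sum()
    nz = p[p > 0]
    entropy = -(nz * np.log(nz)).sum()
    return np.array([asm, contrast, idm, entropy])


def color_histogram(rgb: np.ndarray, bins: int = 8) -> np.ndarray:
    """Flattened 3-D RGB histogram, normalized to sum to 1."""
    pixels = rgb.reshape(-1, 3)
    hist, _ = np.histogramdd(pixels, bins=(bins,) * 3, range=((0, 256),) * 3)
    return hist.ravel() / pixels.shape[0]


def extract_features(rgb: np.ndarray) -> np.ndarray:
    """Concatenate shape, texture, and color descriptors into one feature vector."""
    gray = rgb.astype(np.float64).mean(axis=2)
    return np.concatenate([hu_moments(gray), haralick_subset(gray), color_histogram(rgb)])
```

With these settings an RGB crop yields a vector of 7 + 4 + 512 = 523 values. Hu moments span several orders of magnitude, so they are often log-scaled (for example `-np.sign(h) * np.log10(np.abs(h))`) before being concatenated with the other features.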

| CNN-1 | CNN-2 | CNN-3 | CNN-4 |
|---|---|---|---|
| Structure 1 | Structure 2 | Structure 1 | Structure 2 |
| Optimizer 1 | Optimizer 1 | Optimizer 2 | Optimizer 2 |
| Parameters 1 | Parameters 1 | Parameters 2 | Parameters 2 |

| DNN-1 | DNN-2 | DNN-3 | DNN-4 |
|---|---|---|---|
| Structure 1 | Structure 2 | Structure 1 | Structure 2 |
| Optimizer 1 | Optimizer 1 | Optimizer 2 | Optimizer 2 |
| Parameters 1 | Parameters 1 | Parameters 2 | Parameters 2 |

| Type of Approach | Classifier | Accuracy | AUC | Training Time (h:mm:ss) |
|---|---|---|---|---|
| Traditional classifiers | Linear SVM | 0.5137 | 0.7392 | 0:01:11 |
| Traditional classifiers | LSVM + SGD | 0.4196 | 0.6887 | 0:00:28 |
| Traditional classifiers | K-NN (k = 39) | 0.4463 | 0.7140 | 0:00:02 |
| Traditional classifiers | K-NN (k = 99) | 0.3111 | 0.6390 | 0:00:02 |
| Traditional classifiers | DT-1 | 0.4310 | 0.6975 | 0:00:08 |
| Traditional classifiers | DT-2 | 0.4331 | 0.6985 | 0:00:08 |
| Traditional classifiers | RF-1 | 0.4326 | 0.6987 | 0:00:08 |
| Traditional classifiers | RF-2 | 0.6527 | 0.8210 | 0:00:08 |
| Deep learning (DL) | CNN-1 | 0.6191 | 0.7983 | 0:01:26 |
| Deep learning (DL) | CNN-2 | 0.6563 | 0.8180 | 0:01:53 |
| Deep learning (DL) | CNN-3 | 0.6346 | 0.8067 | 0:07:23 |
| Deep learning (DL) | CNN-4 | 0.6421 | 0.8107 | 0:08:18 |
| Deep learning (DL) | DNN-1 | 0.7618 | 0.8759 | 0:07:56 |
| Deep learning (DL) | DNN-2 | 0.7576 | 0.8730 | 0:08:27 |
| Deep learning (DL) | DNN-3 | 0.6904 | 0.8361 | 0:06:50 |
| Deep learning (DL) | DNN-4 | 0.7140 | 0.8503 | 0:07:16 |
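The results table reports accuracy and AUC for a 13-class problem, but the surviving text does not state how the multi-class AUC was aggregated. As a hedged illustration only, the sketch below assumes a macro-averaged, one-vs-rest AUC (the quantity scikit-learn's `roc_auc_score(y_true, y_score, multi_class='ovr', average='macro')` returns for per-class probability scores). Each per-class AUC is computed as the probability that a random positive sample outscores a random negative one, with ties counted as one half. The function names are hypothetical, and every class must have both positive and negative samples.

```python
import numpy as np


def binary_auc(is_pos: np.ndarray, score: np.ndarray) -> float:
    """Rank-based AUC (Mann-Whitney U / (n_pos * n_neg)); ties count as 1/2."""
    pos, neg = score[is_pos], score[~is_pos]
    greater = (pos[:, None] > neg[None, :]).sum()  # pairs ranked correctly
    ties = (pos[:, None] == neg[None, :]).sum()    # pairs with equal scores
    return float((greater + 0.5 * ties) / (pos.size * neg.size))


def accuracy(y_true: np.ndarray, scores: np.ndarray) -> float:
    """Fraction of samples whose highest-scoring class equals the true label."""
    return float(np.mean(scores.argmax(axis=1) == y_true))


def macro_ovr_auc(y_true: np.ndarray, scores: np.ndarray) -> float:
    """Unweighted mean over classes of the one-vs-rest AUC (scores has one column per class)."""
    n_classes = scores.shape[1]
    return float(np.mean([binary_auc(y_true == c, scores[:, c]) for c in range(n_classes)]))


# Hypothetical toy example: 3 classes, hard predictions encoded as one-hot scores.
y = np.array([0, 0, 1, 1, 2, 2])
onehot = np.eye(3)[np.array([0, 1, 1, 1, 2, 0])]
print(accuracy(y, onehot), macro_ovr_auc(y, onehot))
```

When the scores are one-hot hard predictions, as in the toy example, each per-class AUC reduces to (TPR + TNR) / 2, so the AUC is then determined entirely by the confusion matrix; per-class probabilities give a finer-grained ranking.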

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Lopez-Vazquez, V.; Lopez-Guede, J.M.; Marini, S.; Fanelli, E.; Johnsen, E.; Aguzzi, J. Video Image Enhancement and Machine Learning Pipeline for Underwater Animal Detection and Classification at Cabled Observatories. Sensors 2020, 20, 726. https://doi.org/10.3390/s20030726
