A Robust Feature Extraction Model for Human Activity Characterization Using 3-Axis Accelerometer and Gyroscope Data

Abstract

1. Introduction

2. Literature Review

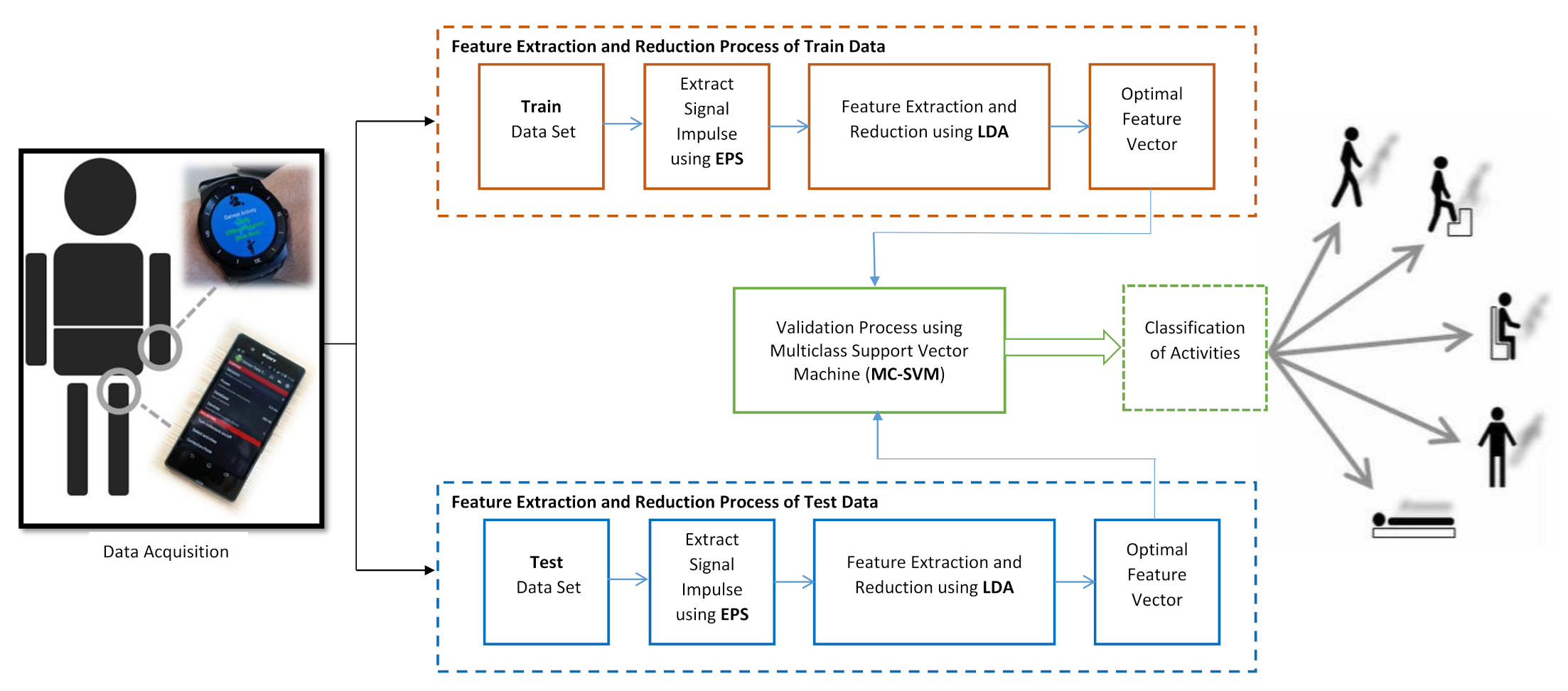

3. Methodology

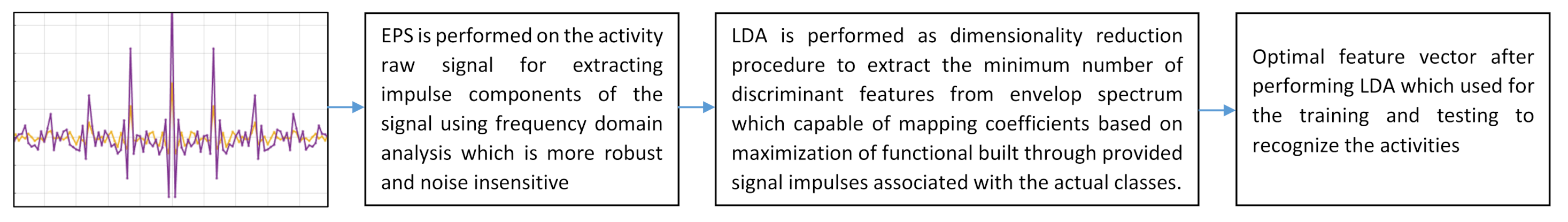

3.1. Proposed Feature Extraction and Reduction

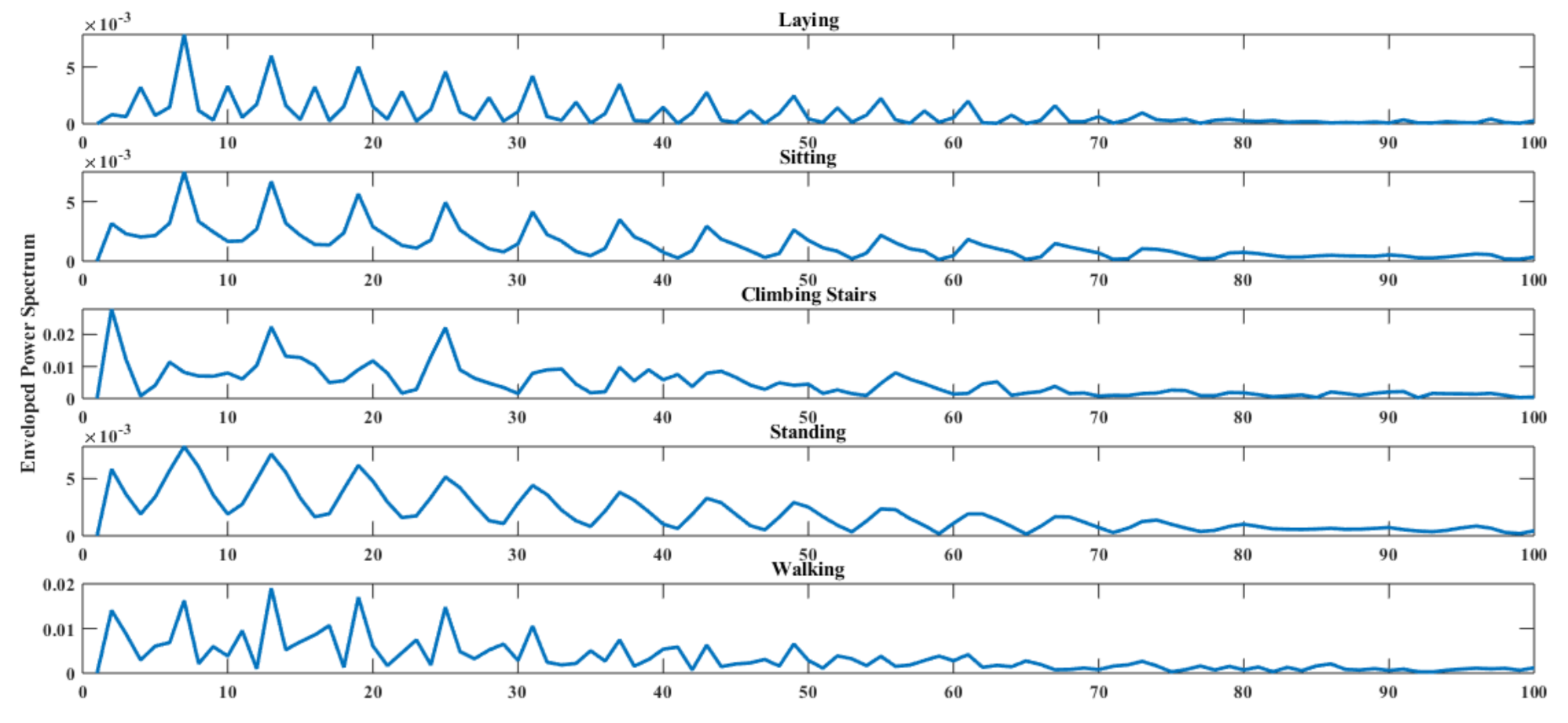

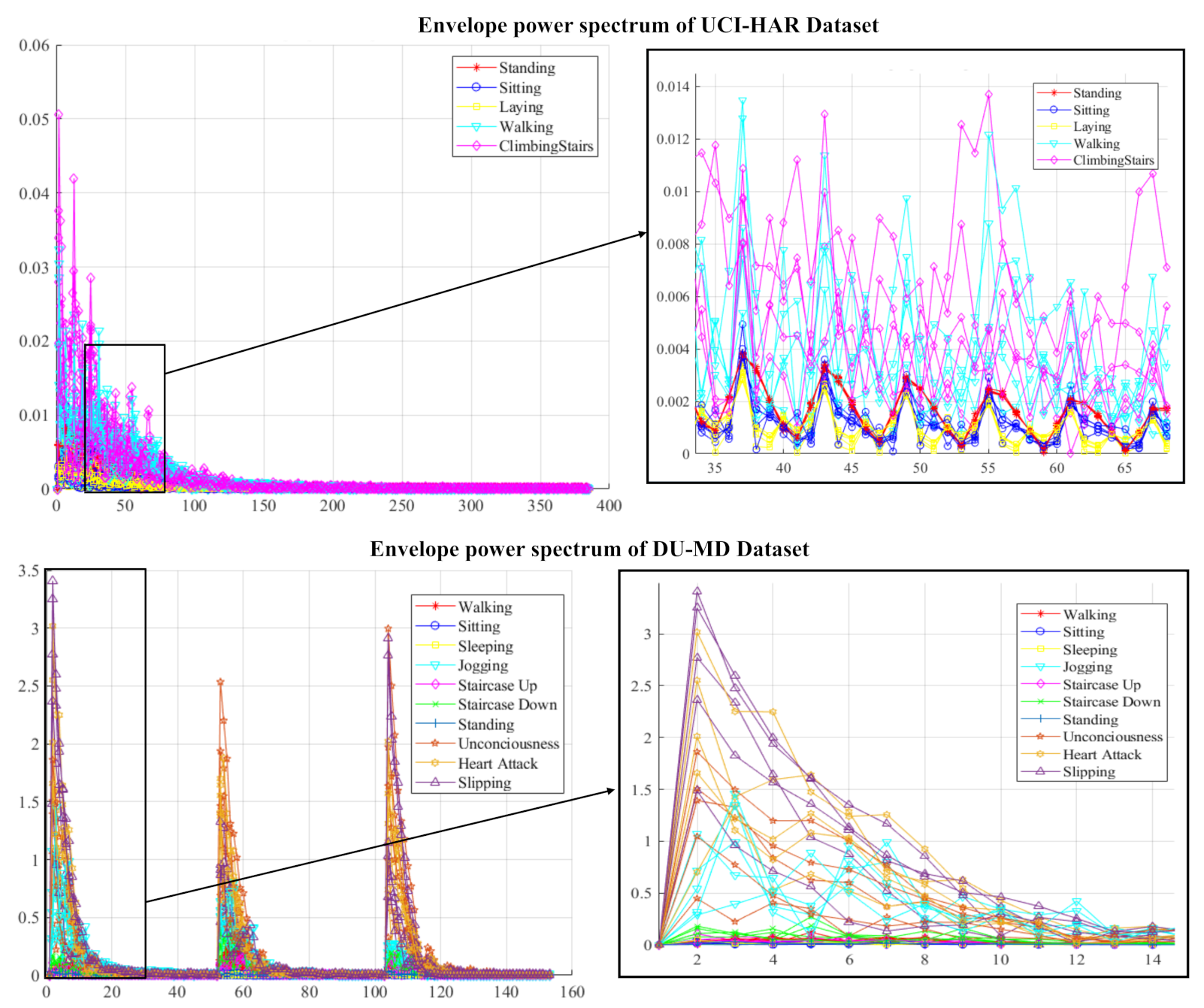

3.1.1. Enveloped Power Spectrum (EPS)
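This is a hedged illustration only. It assumes EPS follows the envelope-spectrum recipe common in vibration analysis: take the magnitude of the analytic (Hilbert) signal as the envelope, then take the power spectrum of that envelope. The function names, the DC-removal step and the per-axis 128-sample window are assumptions, not the authors' definitions.

```python
import numpy as np


def analytic_signal(x: np.ndarray) -> np.ndarray:
    """Analytic signal along the last axis via the FFT (Hilbert transform)."""
    n = x.shape[-1]
    spectrum = np.fft.fft(x, axis=-1)
    h = np.zeros(n)
    if n % 2 == 0:
        h[0] = h[n // 2] = 1.0
        h[1:n // 2] = 2.0
    else:
        h[0] = 1.0
        h[1:(n + 1) // 2] = 2.0
    # Zeroing negative frequencies and doubling positive ones yields x + j*H{x}.
    return np.fft.ifft(spectrum * h, axis=-1)


def envelope_power_spectrum(x: np.ndarray) -> np.ndarray:
    """Hypothetical EPS: one-sided power spectrum of the Hilbert envelope.

    x has shape (..., n), e.g. (6, 128) for six sensor axes of one window.
    """
    envelope = np.abs(analytic_signal(x))
    # Assumption: remove the envelope mean so the DC bin does not dominate.
    envelope = envelope - envelope.mean(axis=-1, keepdims=True)
    return np.abs(np.fft.rfft(envelope, axis=-1)) ** 2 / x.shape[-1]
```

For a (6, 128) window this returns a (6, 65) array. How those bins would be selected or pooled into the final feature vector is left open here.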

3.1.2. Linear Discriminant Analysis (LDA)
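Below is a minimal NumPy sketch of Fisher LDA as a feature-reduction step. It assumes the textbook formulation: within-class scatter S_W, between-class scatter S_B, and projection onto the leading eigenvectors of S_W^{-1} S_B. The ridge term `reg` is an added assumption that keeps S_W invertible when the inputs are high-dimensional. Standard LDA yields at most C − 1 discriminant directions for C classes.

```python
import numpy as np


def fit_lda(X: np.ndarray, y: np.ndarray, n_components: int,
            reg: float = 1e-6) -> np.ndarray:
    """Fisher LDA: return a (d, n_components) projection matrix.

    X has shape (n_samples, d); y holds one class label per row.
    """
    classes = np.unique(y)
    if n_components > len(classes) - 1:
        raise ValueError("LDA yields at most (number of classes - 1) components")
    d = X.shape[1]
    overall_mean = X.mean(axis=0)
    s_w = np.zeros((d, d))  # within-class scatter
    s_b = np.zeros((d, d))  # between-class scatter
    for c in classes:
        Xc = X[y == c]
        mean_c = Xc.mean(axis=0)
        centered = Xc - mean_c
        s_w += centered.T @ centered
        diff = (mean_c - overall_mean)[:, None]
        s_b += len(Xc) * (diff @ diff.T)
    # Assumption: a small ridge keeps S_W invertible for high-dimensional inputs.
    s_w += reg * np.eye(d)
    # Solve S_B w = lambda S_W w through the eigenvectors of S_W^{-1} S_B.
    eigvals, eigvecs = np.linalg.eig(np.linalg.solve(s_w, s_b))
    order = np.argsort(eigvals.real)[::-1]
    return eigvecs[:, order[:n_components]].real
```

In use, the matrix would be fitted on training data only, for example `W = fit_lda(X_train, y_train, n_components=4)` for five classes. Both splits are then projected with `X_train @ W` and `X_test @ W`.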

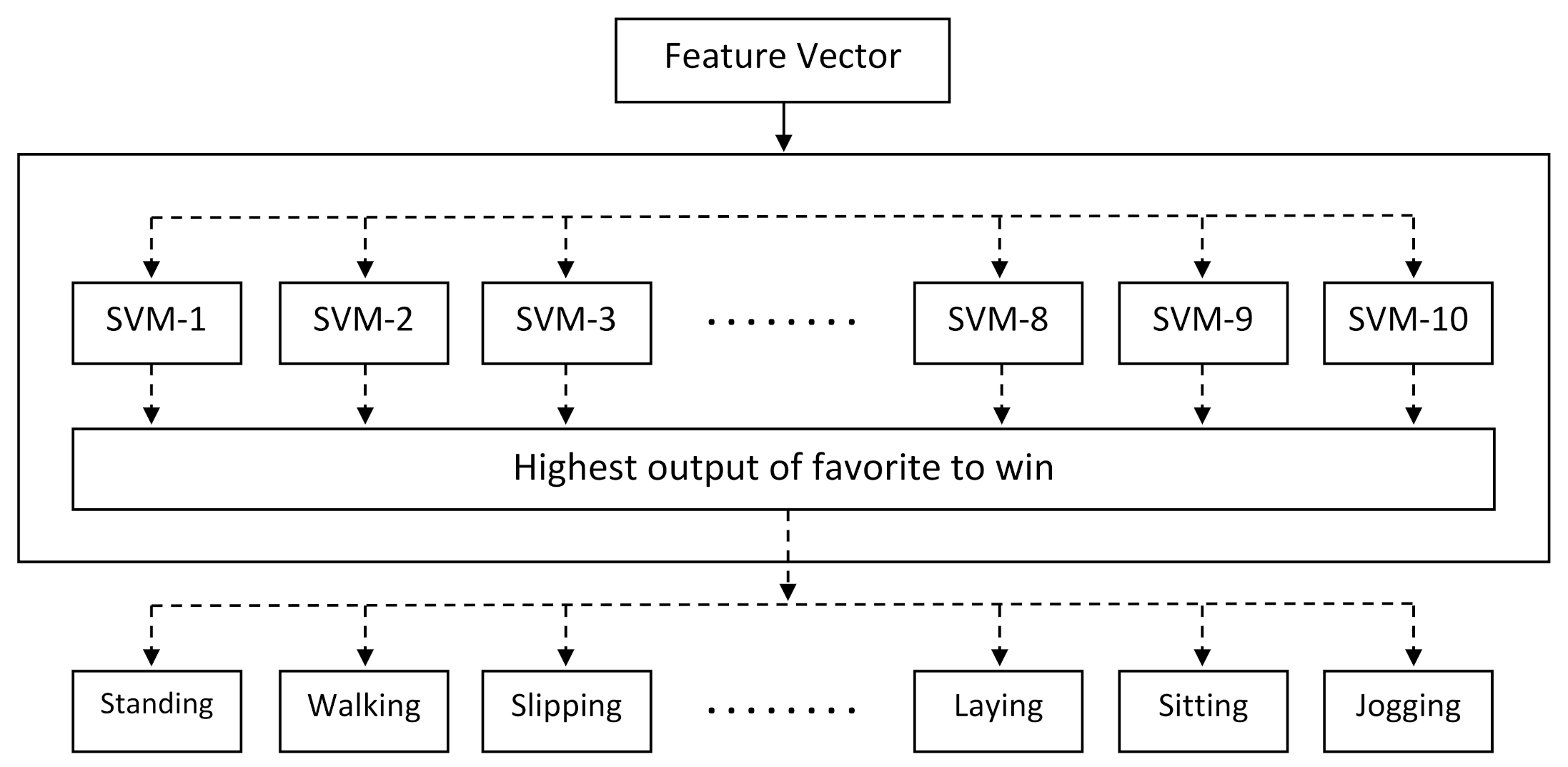

3.2. Classification
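The comparison tables in this article label the proposed classifier MCSVM, presumably a multiclass SVM. One common way to build one is one-vs-rest: train one binary linear SVM per class and predict the class with the largest decision value. The sketch below trains each binary SVM by full-batch subgradient descent on the regularized hinge loss. The kernel, multiclass strategy and hyperparameters used in the paper are not given here, so the linear kernel, the one-vs-rest scheme and `lam`, `epochs` and `lr` are all assumptions.

```python
import numpy as np


class OneVsRestLinearSVM:
    """Multiclass SVM built from one binary linear SVM per class (one-vs-rest)."""

    def __init__(self, lam: float = 1e-3, epochs: int = 500, lr: float = 0.1):
        self.lam, self.epochs, self.lr = lam, epochs, lr

    def fit(self, X: np.ndarray, y: np.ndarray) -> "OneVsRestLinearSVM":
        self.classes_ = np.unique(y)
        n, d = X.shape
        self.W = np.zeros((len(self.classes_), d))
        self.b = np.zeros(len(self.classes_))
        for k, c in enumerate(self.classes_):
            t = np.where(y == c, 1.0, -1.0)  # +1 for class c, -1 for the rest
            w, b = np.zeros(d), 0.0
            for _ in range(self.epochs):
                active = t * (X @ w + b) < 1  # samples inside or beyond the margin
                # Subgradient of lam/2 * ||w||^2 + mean(max(0, 1 - t * (X w + b))).
                grad_w = self.lam * w - (t[active][:, None] * X[active]).sum(axis=0) / n
                grad_b = -t[active].sum() / n
                w -= self.lr * grad_w
                b -= self.lr * grad_b
            self.W[k], self.b[k] = w, b
        return self

    def predict(self, X: np.ndarray) -> np.ndarray:
        # Choose the class whose binary SVM gives the largest decision value.
        return self.classes_[np.argmax(X @ self.W.T + self.b, axis=1)]
```

In practice a library SVM (with a kernel and one-vs-one voting, for instance) would be the more usual choice. This version only makes the one-vs-rest construction explicit.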

4. Experiment

4.1. Data Description

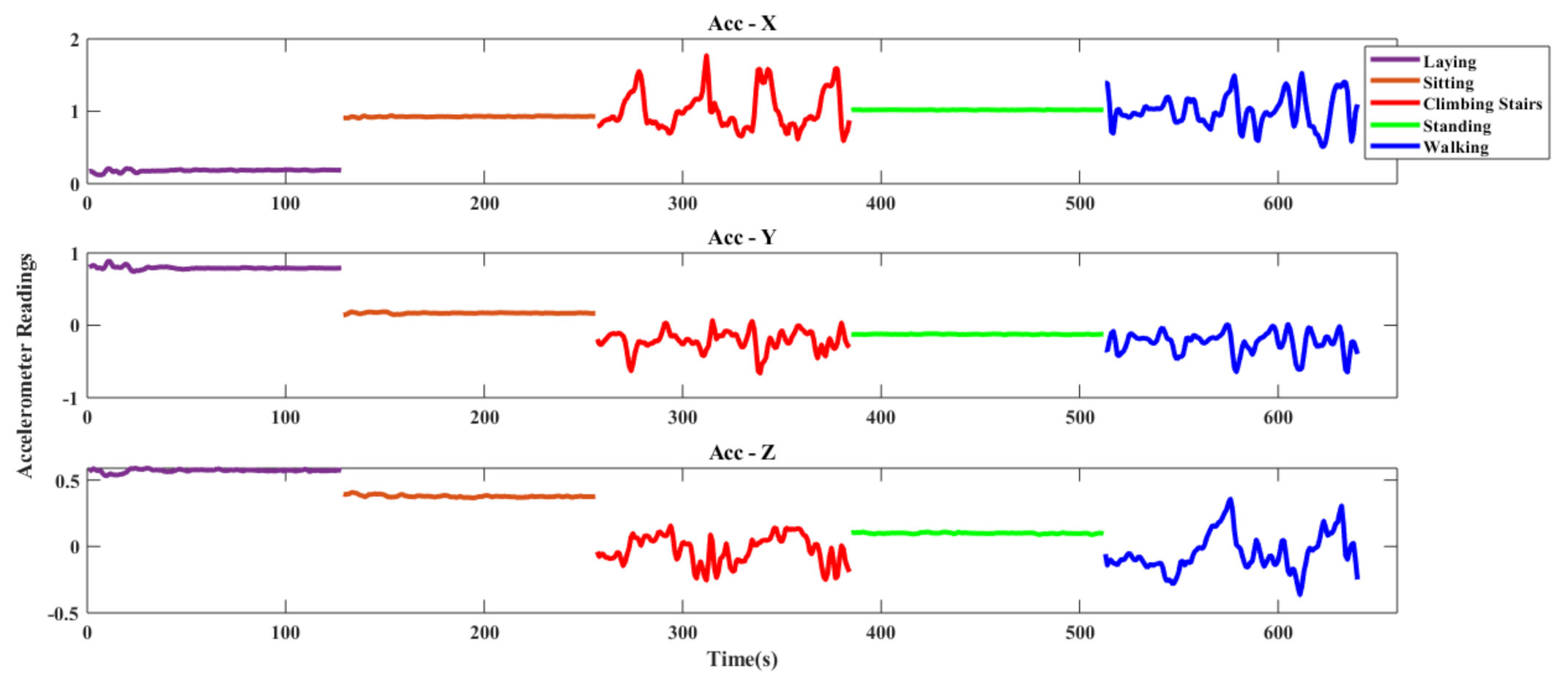

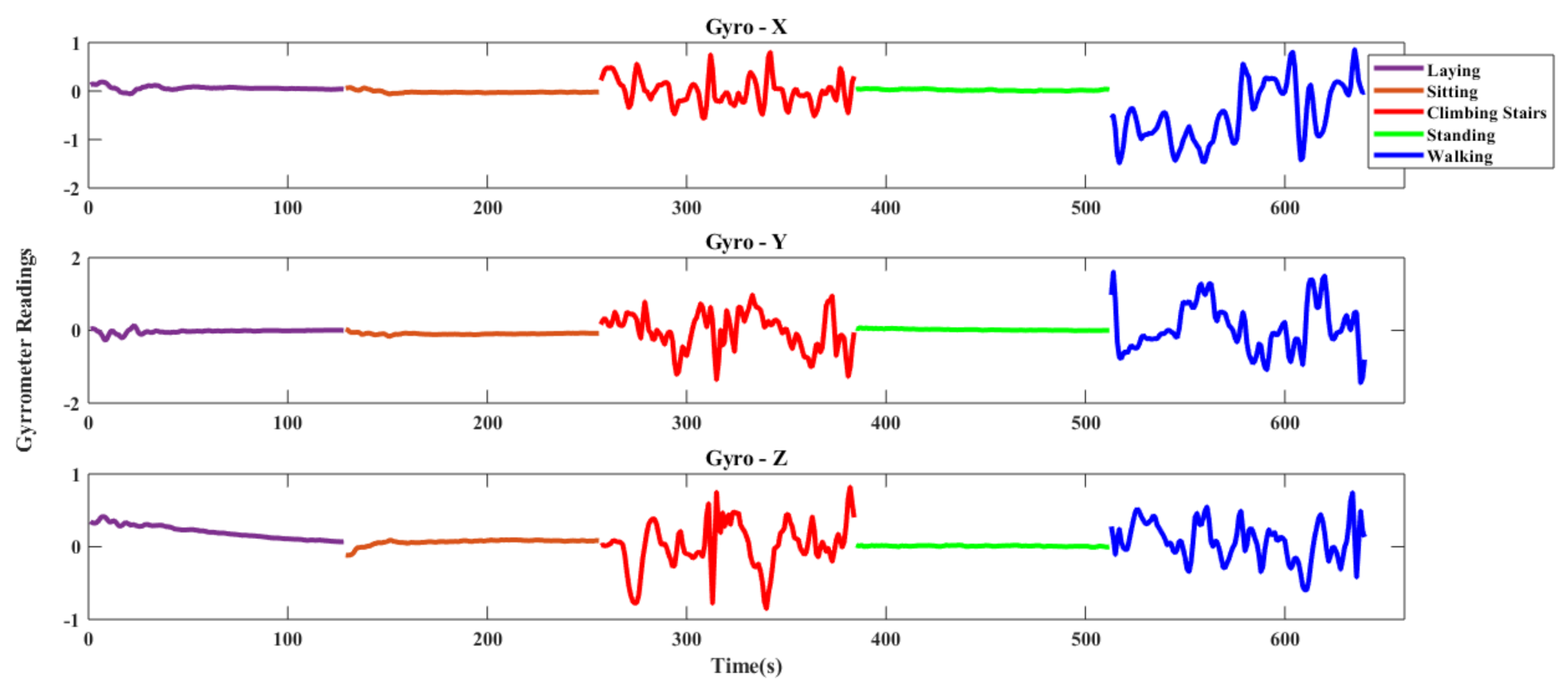

4.1.1. UCI-HAR Dataset

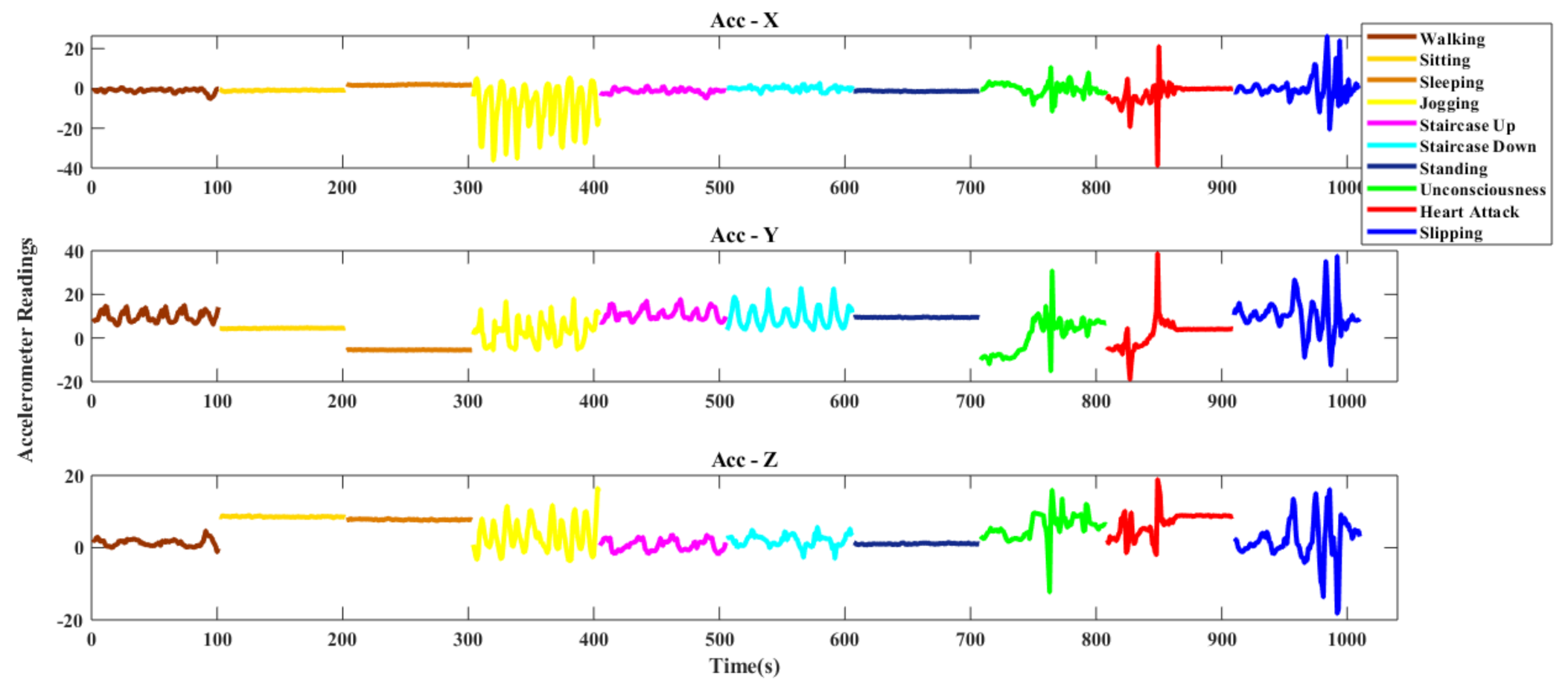

4.1.2. DU-MD Dataset

4.2. Experimental Setup and Performance Measurement Criteria
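The result tables report accuracy, precision, recall and F1 score. This is a minimal sketch of how these are commonly computed from a confusion matrix. It assumes macro-averaging over classes and F1 as the harmonic mean of macro precision and macro recall. The paper's exact averaging scheme is not stated in this section.

```python
import numpy as np


def confusion_matrix(y_true, y_pred, n_classes: int) -> np.ndarray:
    """Rows are true classes, columns are predicted classes (labels 0..n_classes-1)."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    np.add.at(cm, (np.asarray(y_true), np.asarray(y_pred)), 1)
    return cm


def macro_scores(cm: np.ndarray) -> dict:
    """Accuracy and macro-averaged precision/recall/F1, in percent."""
    tp = np.diag(cm).astype(float)
    # np.maximum(..., 1) avoids division by zero for classes that never occur.
    precision = np.mean(tp / np.maximum(cm.sum(axis=0), 1))
    recall = np.mean(tp / np.maximum(cm.sum(axis=1), 1))
    accuracy = tp.sum() / cm.sum()
    # Assumption: F1 as the harmonic mean of macro precision and macro recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall > 0 else 0.0
    return {"accuracy": 100 * accuracy, "precision": 100 * precision,
            "recall": 100 * recall, "f1": 100 * f1}
```

Take `y_true = [0, 0, 1, 1, 2, 2]` and `y_pred = [0, 0, 1, 2, 2, 2]`. This gives accuracy 83.33, macro precision 88.89, macro recall 83.33 and an F1 of about 86.02. When every class has the same number of test samples, macro recall equals accuracy.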

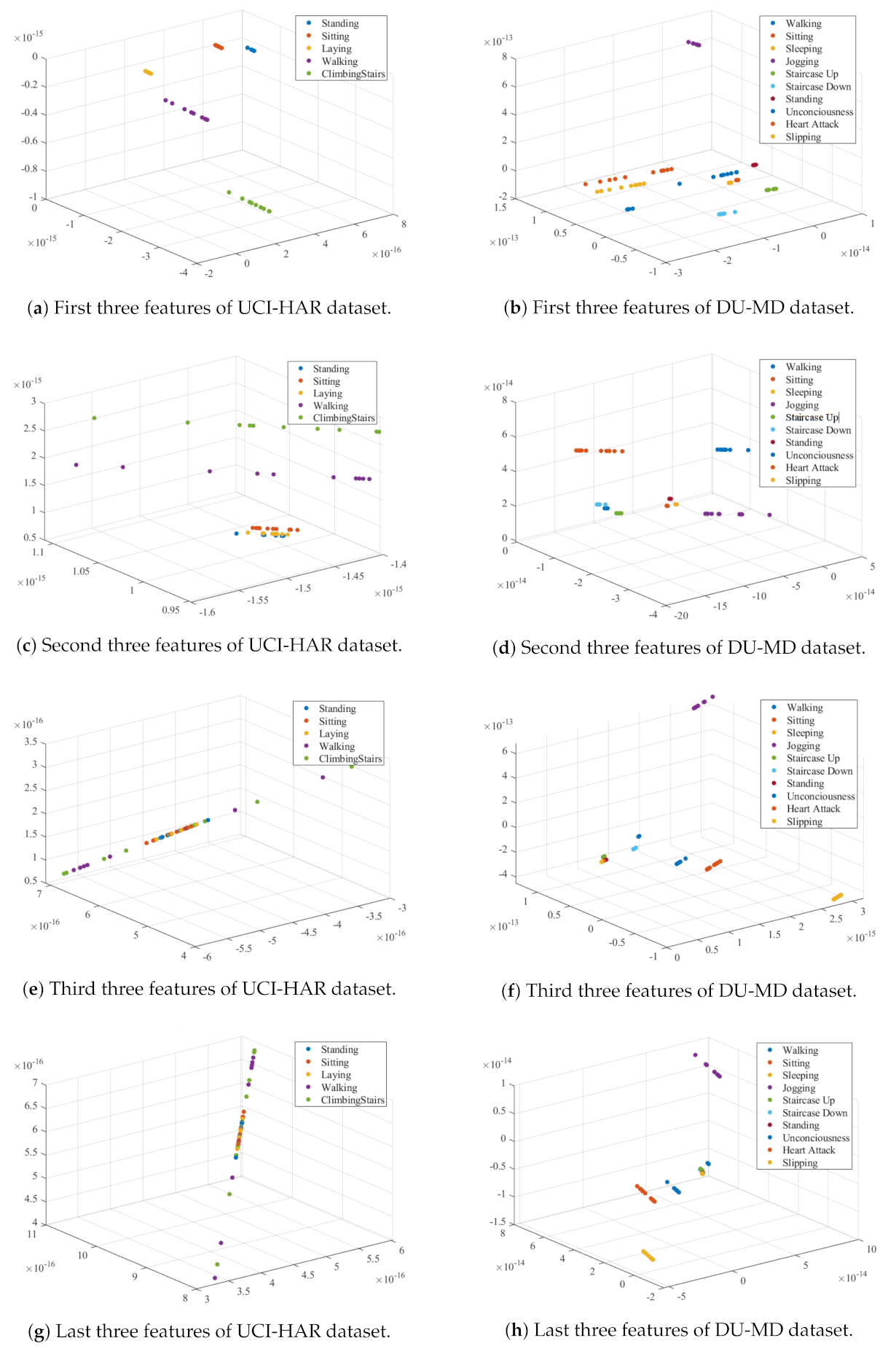

4.3. Feature Extraction and Reduction Analysis

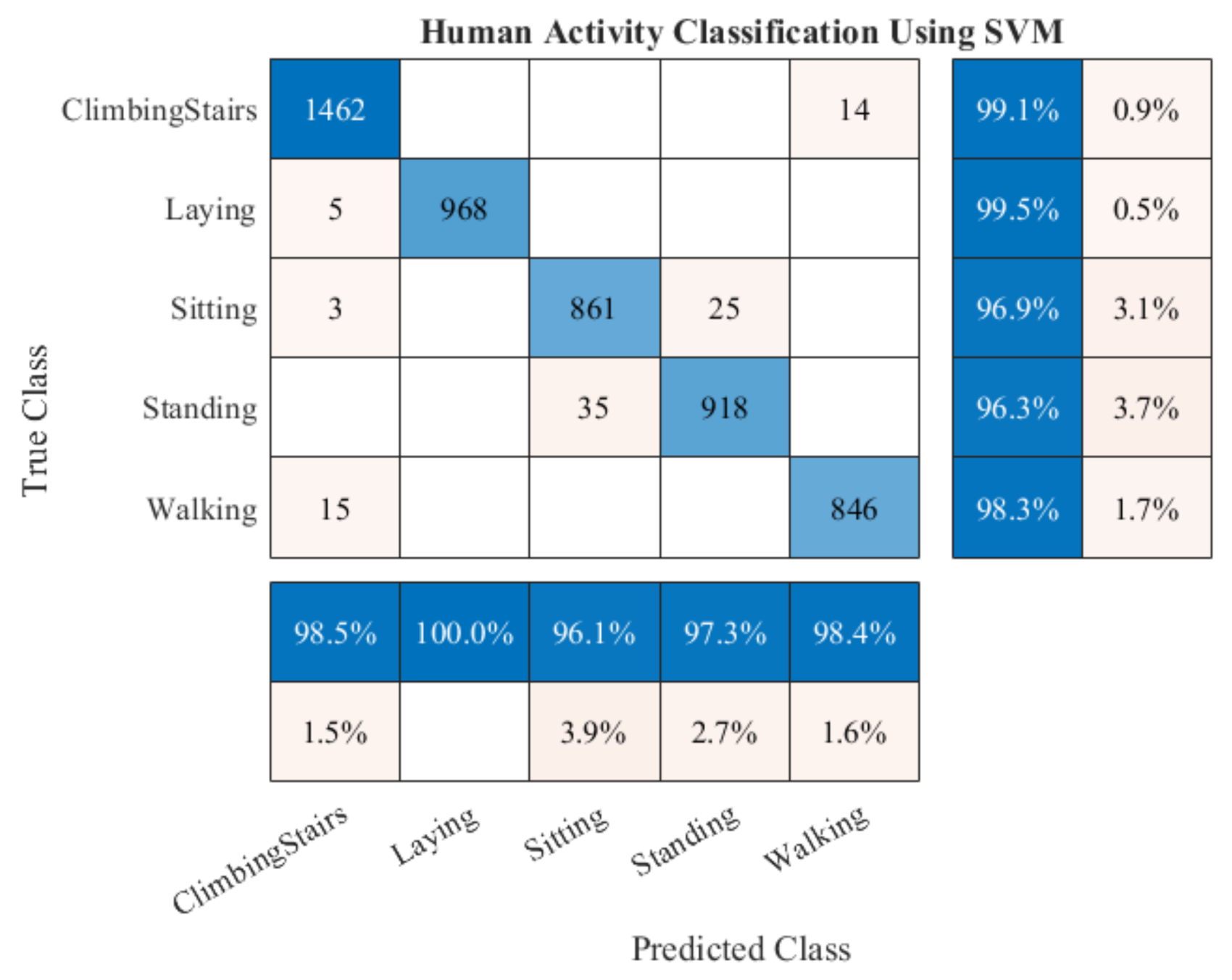

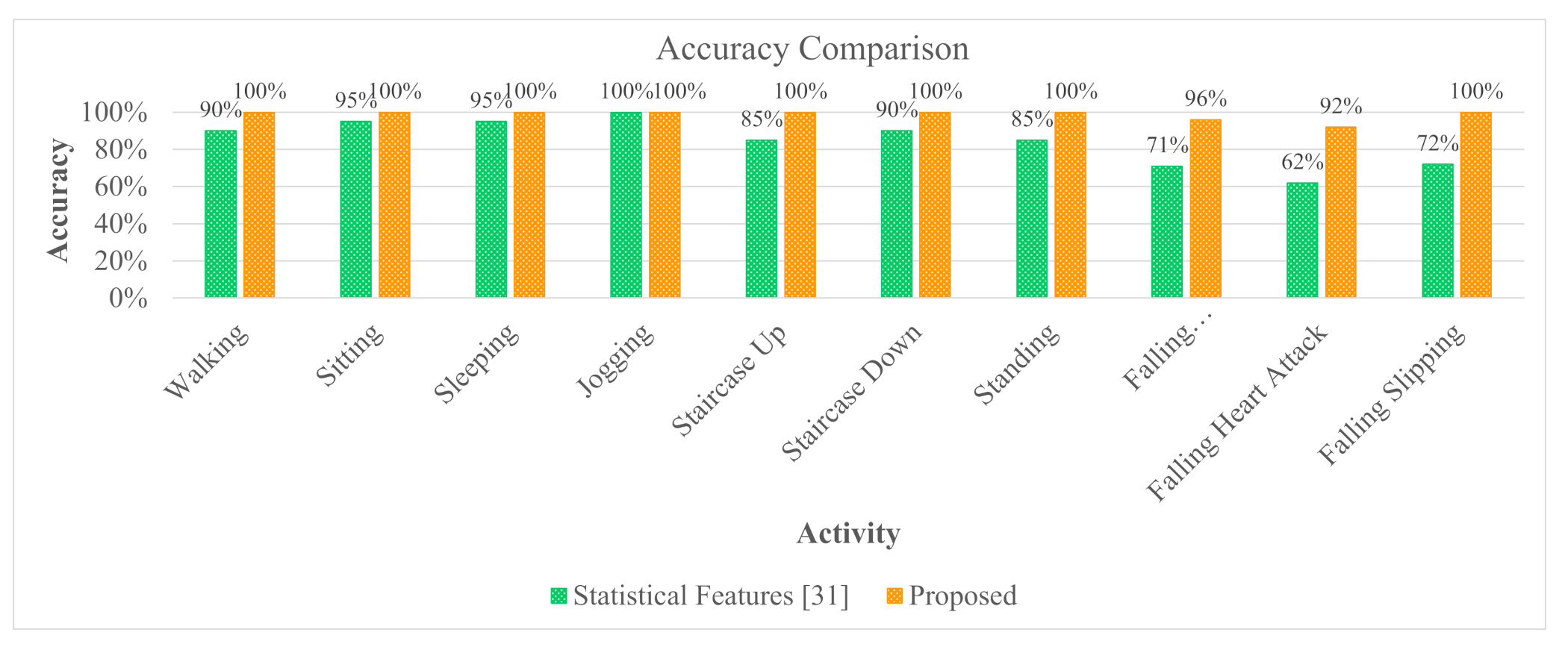

4.4. Result Analysis

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Nweke, H.F.; Teh, Y.W.; Al-Garadi, M.A.; Alo, U.R. Deep learning algorithms for human activity recognition using mobile and wearable sensor networks: State of the art and research challenges. Expert Syst. Appl. 2018, 105, 233–261.

2. Yuan, G.; Wang, Z.; Meng, F.; Yan, Q.; Xia, S. An overview of human activity recognition based on smartphone. Sens. Rev. 2019, 39, 288–306.

3. Cook, D.J.; Krishnan, N.C. Activity Learning: Discovering, Recognizing, and Predicting Human Behavior from Sensor Data; John Wiley & Sons: Hoboken, NJ, USA, 2015.

4. Montero Quispe, K.G.; Sousa Lima, W.; Macêdo Batista, D.; Souto, E. MBOSS: A Symbolic Representation of Human Activity Recognition Using Mobile Sensors. Sensors 2018, 18, 4354.

5. Jain, A.; Kanhangad, V. Human activity classification in smartphones using accelerometer and gyroscope sensors. IEEE Sens. J. 2017, 18, 1169–1177.

6. Chen, Z.; Zhu, Q.; Soh, Y.C.; Zhang, L. Robust human activity recognition using smartphone sensors via CT-PCA and online SVM. IEEE Trans. Ind. Inform. 2017, 13, 3070–3080.

7. Mubashir, M.; Shao, L.; Seed, L. A survey on fall detection: Principles and approaches. Neurocomputing 2013, 100, 144–152.

8. Sazonov, E.; Metcalfe, K.; Lopez-Meyer, P.; Tiffany, S. RF hand gesture sensor for monitoring of cigarette smoking. In Proceedings of the 2011 Fifth International Conference on Sensing Technology, Palmerston North, New Zealand, 28 November–1 December 2011; pp. 426–430.

9. Ehatisham-ul Haq, M.; Azam, M.A.; Loo, J.; Shuang, K.; Islam, S.; Naeem, U.; Amin, Y. Authentication of smartphone users based on activity recognition and mobile sensing. Sensors 2017, 17, 2043.

10. Akhavian, R.; Behzadan, A.H. Smartphone-based construction workers’ activity recognition and classification. Autom. Constr. 2016, 71, 198–209.

11. Yang, X.; Tian, Y. Super normal vector for human activity recognition with depth cameras. IEEE Trans. Pattern Anal. Mach. Intell. 2016, 39, 1028–1039.

12. Franco, A.; Magnani, A.; Maio, D. A multimodal approach for human activity recognition based on skeleton and RGB data. Pattern Recognit. Lett. 2020, 131, 293–299.

13. Chaquet, J.M.; Carmona, E.J.; Fernández-Caballero, A. A survey of video datasets for human action and activity recognition. Comput. Vis. Image Underst. 2013, 117, 633–659.

14. Saha, S.S.; Rahman, S.; Rasna, M.J.; Islam, A.M.; Ahad, M.A.R. DU-MD: An open-source human action dataset for ubiquitous wearable sensors. In Proceedings of the 2018 Joint 7th International Conference on Informatics, Electronics & Vision (ICIEV) and 2018 2nd International Conference on Imaging, Vision & Pattern Recognition (icIVPR), Kitakyushu, Japan, 25–29 June 2018; pp. 567–572.

15. Margarito, J.; Helaoui, R.; Bianchi, A.M.; Sartor, F.; Bonomi, A.G. User-independent recognition of sports activities from a single wrist-worn accelerometer: A template-matching-based approach. IEEE Trans. Biomed. Eng. 2015, 63, 788–796.

16. Wang, A.; Chen, G.; Yang, J.; Zhao, S.; Chang, C.Y. A comparative study on human activity recognition using inertial sensors in a smartphone. IEEE Sens. J. 2016, 16, 4566–4578.

17. Anguita, D.; Ghio, A.; Oneto, L.; Parra, X.; Reyes-Ortiz, J.L. A Public Domain Dataset for Human Activity Recognition Using Smartphones. In Proceedings of the European Symposium on Artificial Neural Networks, Computational Intelligence and Machine Learning, Bruges, Belgium, 24–26 April 2013.

18. Gu, F.; Khoshelham, K.; Valaee, S.; Shang, J.; Zhang, R. Locomotion activity recognition using stacked denoising autoencoders. IEEE Internet Things J. 2018, 5, 2085–2093.

19. Bragança, H.; Colonna, J.G.; Lima, W.S.; Souto, E. A Smartphone Lightweight Method for Human Activity Recognition Based on Information Theory. Sensors 2020, 20, 1856.

20. Figo, D.; Diniz, P.C.; Ferreira, D.R.; Cardoso, J.M. Preprocessing techniques for context recognition from accelerometer data. Pers. Ubiquitous Comput. 2010, 14, 645–662.

21. Sousa, W.; Souto, E.; Rodrigres, J.; Sadarc, P.; Jalali, R.; El-Khatib, K. A comparative analysis of the impact of features on human activity recognition with smartphone sensors. In Proceedings of the 23rd Brazilian Symposium on Multimedia and the Web, Gramado, Brazil, 17–20 October 2017; pp. 397–404.

22. Ignatov, A. Real-time human activity recognition from accelerometer data using Convolutional Neural Networks. Appl. Soft Comput. 2018, 62, 915–922.

23. Xiao, F.; Pei, L.; Chu, L.; Zou, D.; Yu, W.; Zhu, Y.; Li, T. A Deep Learning Method for Complex Human Activity Recognition Using Virtual Wearable Sensors. arXiv 2020, arXiv:2003.01874.

24. Ozcan, T.; Basturk, A. Human action recognition with deep learning and structural optimization using a hybrid heuristic algorithm. Clust. Comput. 2020, 23, 2847–2860.

25. Chen, K.; Yao, L.; Zhang, D.; Wang, X.; Chang, X.; Nie, F. A semisupervised recurrent convolutional attention model for human activity recognition. IEEE Trans. Neural Netw. Learn. Syst. 2019, 31, 1747–1756.

26. Cruciani, F.; Vafeiadis, A.; Nugent, C.; Cleland, I.; McCullagh, P.; Votis, K.; Giakoumis, D.; Tzovaras, D.; Chen, L.; Hamzaoui, R. Feature learning for Human Activity Recognition using Convolutional Neural Networks. CCF Trans. Pervasive Comput. Interact. 2020, 2, 18–32.

27. Ronao, C.A.; Cho, S.B. Human activity recognition with smartphone sensors using deep learning neural networks. Expert Syst. Appl. 2016, 59, 235–244.

28. Howard, A.G.; Zhu, M.; Chen, B.; Kalenichenko, D.; Wang, W.; Weyand, T.; Andreetto, M.; Adam, H. MobileNets: Efficient convolutional neural networks for mobile vision applications. arXiv 2017, arXiv:1704.04861.

29. Patil, C.M.; Jagadeesh, B.; Meghana, M. An approach of understanding human activity recognition and detection for video surveillance using HOG descriptor and SVM classifier. In Proceedings of the 2017 International Conference on Current Trends in Computer, Electrical, Electronics and Communication (CTCEEC), Mysore, India, 8–9 September 2017; pp. 481–485.

30. Cao, L.; Wang, Y.; Zhang, B.; Jin, Q.; Vasilakos, A.V. GCHAR: An efficient Group-based Context-Aware human activity recognition on smartphone. J. Parallel Distrib. Comput. 2018, 118, 67–80.

31. Saha, S.S.; Rahman, S.; Rasna, M.J.; Zahid, T.B.; Islam, A.M.; Ahad, M.A.R. Feature extraction, performance analysis and system design using the DU mobility dataset. IEEE Access 2018, 6, 44776–44786.

32. Ahmed, N.; Rafiq, J.I.; Islam, M.R. Enhanced Human Activity Recognition Based on Smartphone Sensor Data Using Hybrid Feature Selection Model. Sensors 2020, 20, 317.

33. Acharjee, D.; Mukherjee, A.; Mandal, J.; Mukherjee, N. Activity recognition system using inbuilt sensors of smart mobile phone and minimizing feature vectors. Microsyst. Technol. 2016, 22, 2715–2722.

34. Hsu, Y.L.; Lin, S.L.; Chou, P.H.; Lai, H.C.; Chang, H.C.; Yang, S.C. Application of nonparametric weighted feature extraction for an inertial-signal-based human activity recognition system. In Proceedings of the 2017 International Conference on Applied System Innovation (ICASI), Sapporo, Japan, 13–17 May 2017; pp. 1718–1720.

35. Fang, L.; Yishui, S.; Wei, C. Up and down buses activity recognition using smartphone accelerometer. In Proceedings of the 2016 IEEE Information Technology, Networking, Electronic and Automation Control Conference, Chongqing, China, 20–22 May 2016; pp. 761–765.

36. Tufek, N.; Yalcin, M.; Altintas, M.; Kalaoglu, F.; Li, Y.; Bahadir, S.K. Human Action Recognition Using Deep Learning Methods on Limited Sensory Data. IEEE Sens. J. 2019, 20, 3101–3112.

37. Nematallah, H.; Rajan, S.C.; Cret, A. Logistic Model Tree for Human Activity Recognition Using Smartphone-Based Inertial Sensors. In Proceedings of the 18th IEEE Sensors, Montreal, QC, Canada, 27–30 October 2019.

38. Irvine, N.; Nugent, C.; Zhang, S.; Wang, H.; Ng, W.W. Neural Network Ensembles for Sensor-Based Human Activity Recognition Within Smart Environments. Sensors 2020, 20, 216.

39. Wang, Z.; Jiang, M.; Hu, Y.; Li, H. An incremental learning method based on probabilistic neural networks and adjustable fuzzy clustering for human activity recognition by using wearable sensors. IEEE Trans. Inf. Technol. Biomed. 2012, 16, 691–699.

40. Xia, K.; Huang, J.; Wang, H. LSTM-CNN Architecture for Human Activity Recognition. IEEE Access 2020, 8, 56855–56866.

41. Teng, Q.; Wang, K.; Zhang, L.; He, J. The layer-wise training convolutional neural networks using local loss for sensor based human activity recognition. IEEE Sens. J. 2020, 20, 7265–7274.

42. Zhu, R.; Xiao, Z.; Cheng, M.; Zhou, L.; Yan, B.; Lin, S.; Wen, H. Deep ensemble learning for human activity recognition using smartphone. In Proceedings of the 2018 IEEE 23rd International Conference on Digital Signal Processing (DSP), Shanghai, China, 19–21 November 2018; pp. 1–5.

43. Qin, Z.; Zhang, Y.; Meng, S.; Qin, Z.; Choo, K.K.R. Imaging and fusing time series for wearable sensor-based human activity recognition. Inf. Fusion 2020, 53, 80–87.

44. Kwon, Y.; Kang, K.; Bae, C. Unsupervised learning for human activity recognition using smartphone sensors. Expert Syst. Appl. 2014, 41, 6067–6074.

45. Hassan, M.M.; Uddin, M.Z.; Mohamed, A.; Almogren, A. A robust human activity recognition system using smartphone sensors and deep learning. Future Gener. Comput. Syst. 2018, 81, 307–313.

46. Hernandez, N.; Lundström, J.; Favela, J.; McChesney, I.; Arnrich, B. Literature Review on Transfer Learning for Human Activity Recognition Using Mobile and Wearable Devices with Environmental Technology. SN Comput. Sci. 2020, 1, 66.

47. Ding, R.; Li, X.; Nie, L.; Li, J.; Si, X.; Chu, D.; Liu, G.; Zhan, D. Empirical study and improvement on deep transfer learning for human activity recognition. Sensors 2019, 19, 57.

48. Shin, J.; Islam, M.R.; Rahim, M.A.; Mun, H.J. Arm movement activity based user authentication in P2P systems. Peer-to-Peer Netw. Appl. 2020, 13, 635–646.

49. Martis, R.J.; Acharya, U.R.; Min, L.C. ECG beat classification using PCA, LDA, ICA and discrete wavelet transform. Biomed. Signal Process. Control 2013, 8, 437–448.

| Activity | Number of Signals | Signal Dimension |
|---|---|---|
| Laying | 1944 | 768 |
| Sitting | 1777 | 768 |
| Standing | 1906 | 768 |
| Walking | 1722 | 768 |
| ClimbingStairs | 2950 | 768 |
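The counts in the table above sum to 10,299 windows. That equals the total size of the public UCI-HAR release (7352 training plus 2947 test windows) [17]. The ClimbingStairs count also equals the sum of that release's walking-upstairs and walking-downstairs classes, so the table appears to describe UCI-HAR with those two classes pooled. The dimension 768 is consistent with six channels (3-axis accelerometer and 3-axis gyroscope) of 128 samples each. Both readings are inferences rather than statements from this section. The sketch below assumes that layout and concatenates the channels one after another into a single vector.

```python
import numpy as np

# Window counts per activity, copied from the table above.
COUNTS = {"Laying": 1944, "Sitting": 1777, "Standing": 1906,
          "Walking": 1722, "ClimbingStairs": 2950}

# Assumption: 3 accelerometer + 3 gyroscope axes, 128 samples per window.
N_CHANNELS, WINDOW = 6, 128


def flatten_windows(windows: np.ndarray) -> np.ndarray:
    """Reshape (n, 6, 128) windows into (n, 768) vectors, one channel after another."""
    n, channels, length = windows.shape
    if (channels, length) != (N_CHANNELS, WINDOW):
        raise ValueError(f"expected (n, {N_CHANNELS}, {WINDOW}) windows")
    return windows.reshape(n, channels * length)


total_windows = sum(COUNTS.values())  # 10299
```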

| No. of Features | UCI-HAR Accuracy (%) | UCI-HAR Precision (%) | UCI-HAR Recall (%) | UCI-HAR F1 Score (%) | DU-MD Accuracy (%) | DU-MD Precision (%) | DU-MD Recall (%) | DU-MD F1 Score (%) |
|---|---|---|---|---|---|---|---|---|
| 5 | 98.67 | 98.67 | 98.75 | 98.71 | 100 | 100 | 100 | 100 |
| 10 | 93.33 | 93.33 | 93.89 | 93.61 | 99.33 | 99.33 | 99.37 | 99.35 |
| 15 | 89.33 | 89.33 | 90.41 | 89.87 | 98.00 | 98.00 | 98.33 | 98.17 |
| 20 | 85.33 | 85.33 | 91.54 | 88.33 | 94.00 | 94.00 | 95.00 | 94.50 |
| 25 | 84.00 | 84.00 | 84.91 | 84.45 | 94.00 | 94.00 | 95.00 | 94.50 |
| 30 | 82.67 | 82.67 | 83.73 | 83.19 | 91.33 | 91.33 | 93.51 | 92.41 |
| 35 | 78.67 | 78.67 | 84.07 | 81.28 | 89.33 | 89.33 | 92.08 | 90.69 |
| 40 | 77.33 | 77.33 | 87.65 | 82.17 | 82.00 | 82.00 | 87.28 | 84.56 |
| All | 42.67 | 42.67 | 45.17 | 43.88 | 33.33 | 33.33 | 41.40 | 36.93 |
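In the table above, the F1 column is consistent with the harmonic mean of the precision and recall columns. For instance, the 5-feature UCI-HAR row and the 30-feature DU-MD row give:

$$
F_1 = \frac{2PR}{P + R}, \qquad
\frac{2 \times 98.67 \times 98.75}{98.67 + 98.75} \approx 98.71, \qquad
\frac{2 \times 91.33 \times 93.51}{91.33 + 93.51} \approx 92.41
$$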

| No. of Features | UCI-HAR Accuracy (%) | UCI-HAR Precision (%) | UCI-HAR Recall (%) | UCI-HAR F1 Score (%) | DU-MD Accuracy (%) | DU-MD Precision (%) | DU-MD Recall (%) | DU-MD F1 Score (%) |
|---|---|---|---|---|---|---|---|---|
| 5 | 99.73 | 99.69 | 99.77 | 99.73 | 100 | 100 | 100 | 100 |
| 10 | 95.00 | 95.67 | 95.00 | 95.33 | 100 | 100 | 100 | 100 |
| 15 | 91.00 | 91.18 | 91.00 | 91.09 | 100 | 100 | 100 | 100 |
| 20 | 87.33 | 87.63 | 87.33 | 87.48 | 98.00 | 98.33 | 98.00 | 98.17 |
| 25 | 86.00 | 87.14 | 86.00 | 86.57 | 96.00 | 97.14 | 96.00 | 96.57 |
| 30 | 83.73 | 83.77 | 83.69 | 83.73 | 93.00 | 95.38 | 94.00 | 94.69 |
| 35 | 80.00 | 80.39 | 81.00 | 80.83 | 90.00 | 92.47 | 90.00 | 91.22 |
| 40 | 79.00 | 79.14 | 79.00 | 79.07 | 82.00 | 88.13 | 82.00 | 84.95 |
| All | 46.33 | 47.29 | 48.00 | 47.83 | 36.00 | 36.25 | 36.00 | 36.12 |

| Activity | Feature Level Fusion+SVM [5] | CNN [22] | Hybrid Feature Selection [32] | Proposed Model |
|---|---|---|---|---|
| Laying | 100 | 99.40 | 99.26 | 100 |
| Sitting | 98.90 | 90.04 | 97.76 | 100 |
| Standing | 98.14 | 98.20 | 97.18 | 100 |
| Walking | 99.88 | 99.40 | 98.99 | 93.33 |
| ClimbingStairs | 99.96 | 98.81 | 97.24 | 100 |

| Authors | Methods | No. of Features | Accuracy (%) |
|---|---|---|---|
| Saha et al. [31] | DWT+SF+SVM | 39 | 90.50 |
| | DWT+SF+EoC | | 93.00 |
| Proposed Model | EPS+LDA+MCSVM | 5 | 100 |

| Authors | Methods | Accuracy (%) | Classification Time per Sample (ms) |
|---|---|---|---|
| Chen et al. [25] | Recurrent Convolutional Attention | 81.32 | 21.538 to 57.253 |
| Ignatov [22] | CNN | 97.63 | 33.286 to 35.714 |
| Teng et al. [41] | CNN+Baseline (global loss) | 96.20 | 24.462 to 26.055 |
| | CNN+pred (local loss) | 95.42 | |
| | CNN+sim (local loss) | 96.16 | |
| | CNN+predsim (local loss) | 96.98 | |
| Tufek et al. [36] | 2D CNN | 85.20 | 331.27 to 338.03 |
| | 1D CNN+LSTM | 88.50 | |
| | 2-layer LSTM | 93.70 | |
| | 3-layer LSTM | 97.40 | |
| Jain et al. [5] | Score-level fusion+KNN | 84.02 | – |
| | Feature-level fusion+KNN | 91.75 | |
| | Score-level fusion+SVM | 96.44 | |
| | Feature-level fusion+SVM | 97.12 | |
| Xia et al. [40] | LSTM-CNN | 95.78 | 340.62 to 349.80 |
| Proposed Model | EPS+LDA+MCSVM | 98.67 | 0.0500 to 0.1235 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Ahmed Bhuiyan, R.; Ahmed, N.; Amiruzzaman, M.; Islam, M.R. A Robust Feature Extraction Model for Human Activity Characterization Using 3-Axis Accelerometer and Gyroscope Data. Sensors 2020, 20, 6990. https://doi.org/10.3390/s20236990


Chicago/Turabian style: Ahmed Bhuiyan, Rasel, Nadeem Ahmed, Md Amiruzzaman, and Md Rashedul Islam. 2020. "A Robust Feature Extraction Model for Human Activity Characterization Using 3-Axis Accelerometer and Gyroscope Data." Sensors 20, no. 23: 6990. https://doi.org/10.3390/s20236990
