Computation Offloading in a Cognitive Vehicular Networks with Vehicular Cloud Computing and Remote Cloud Computing

Abstract

1. Introduction

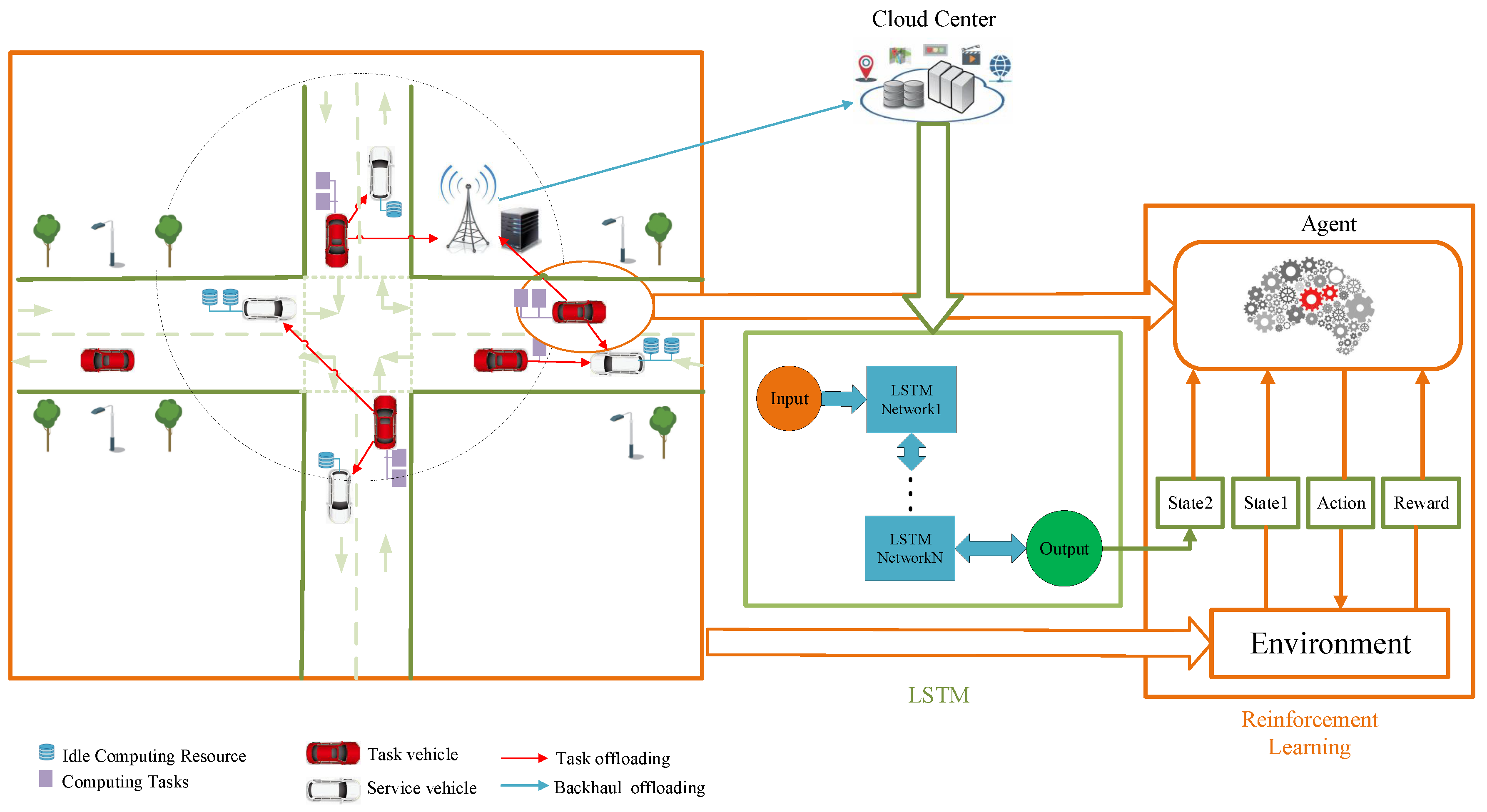

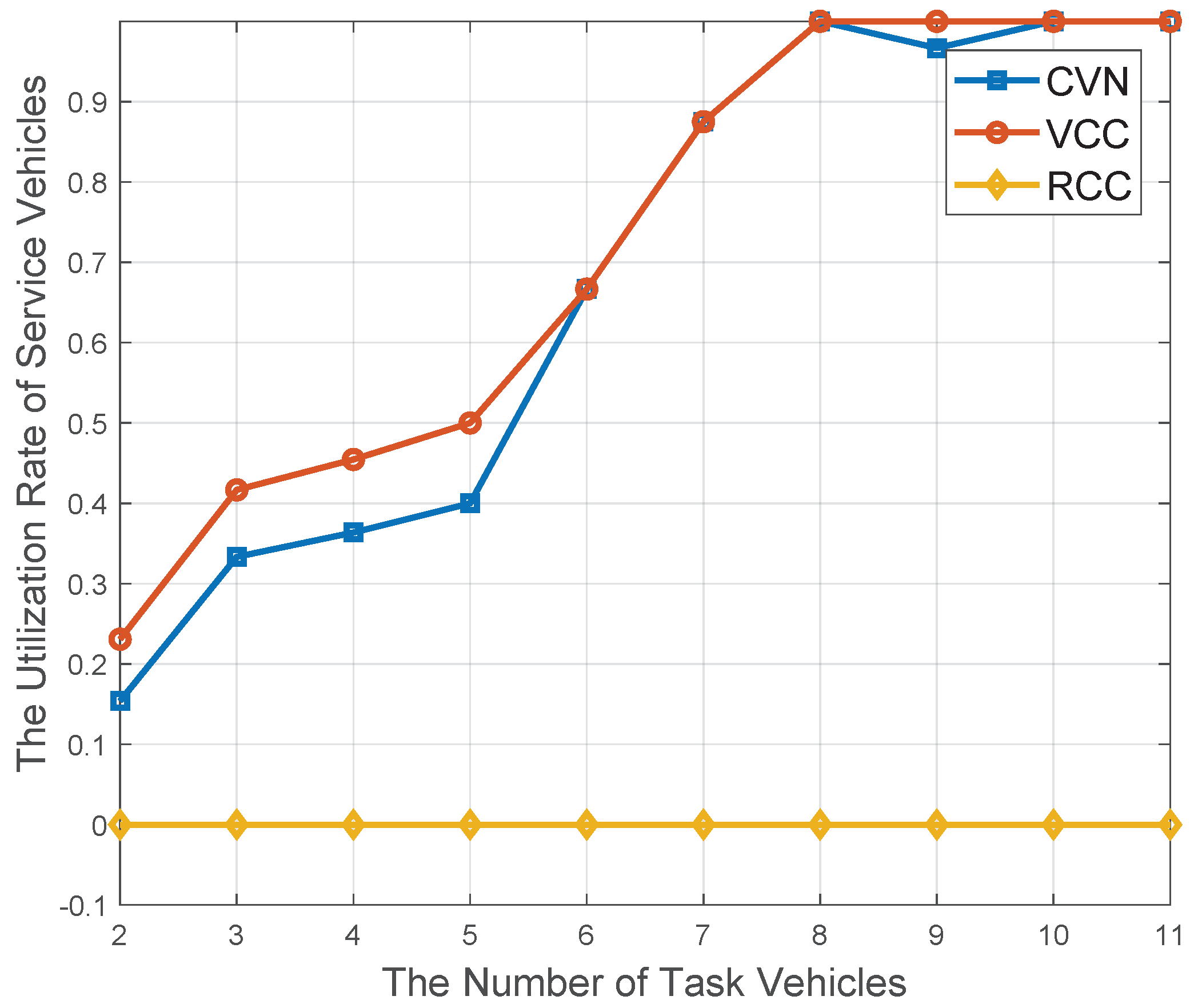

- To support computation offloading for resource-intensive vehicular applications, the concept of a cognitive vehicular network (CVN) is proposed, in which vehicular cloud computing (VCC) and remote cloud computing (RCC) are jointly considered.

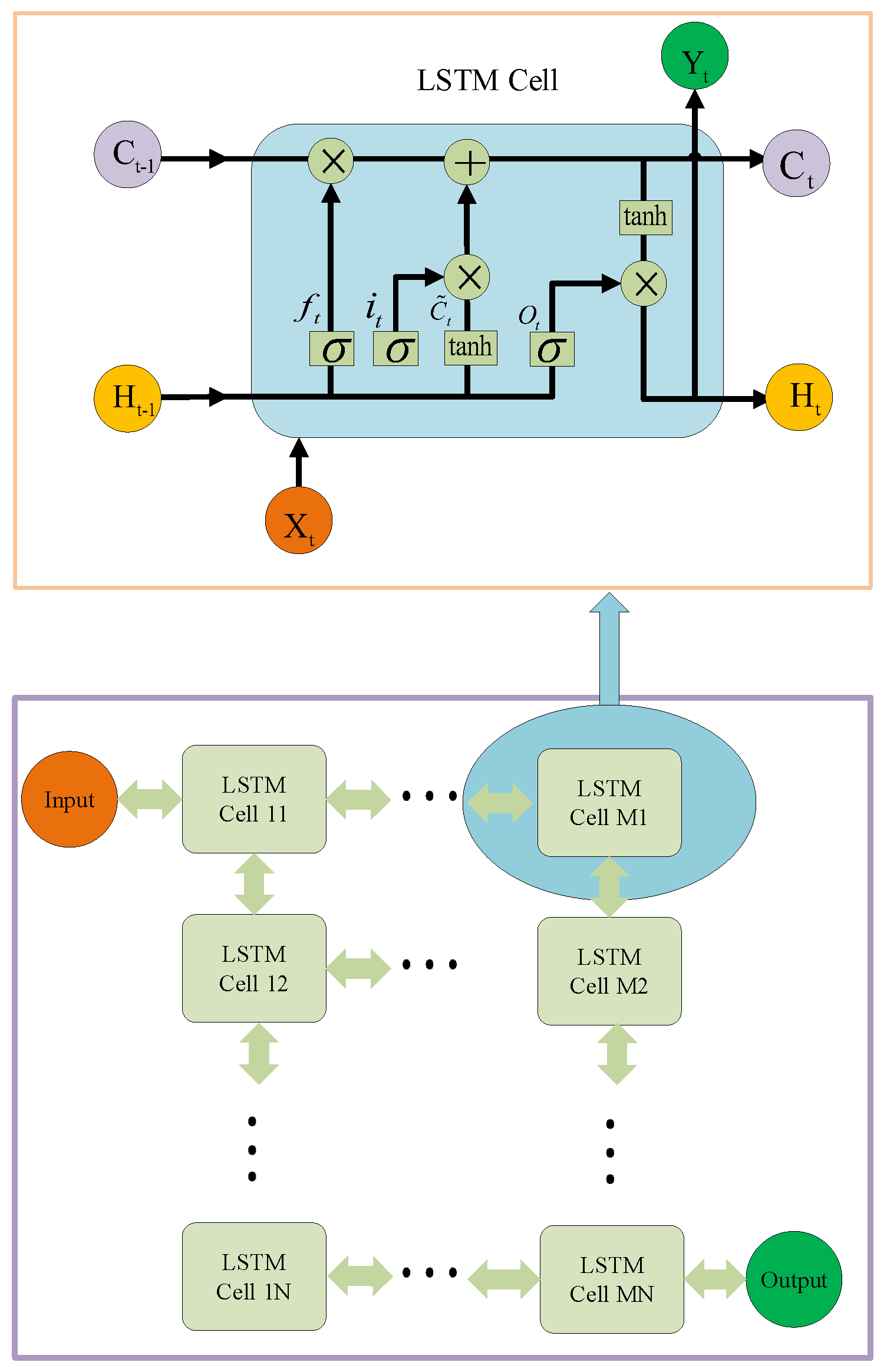

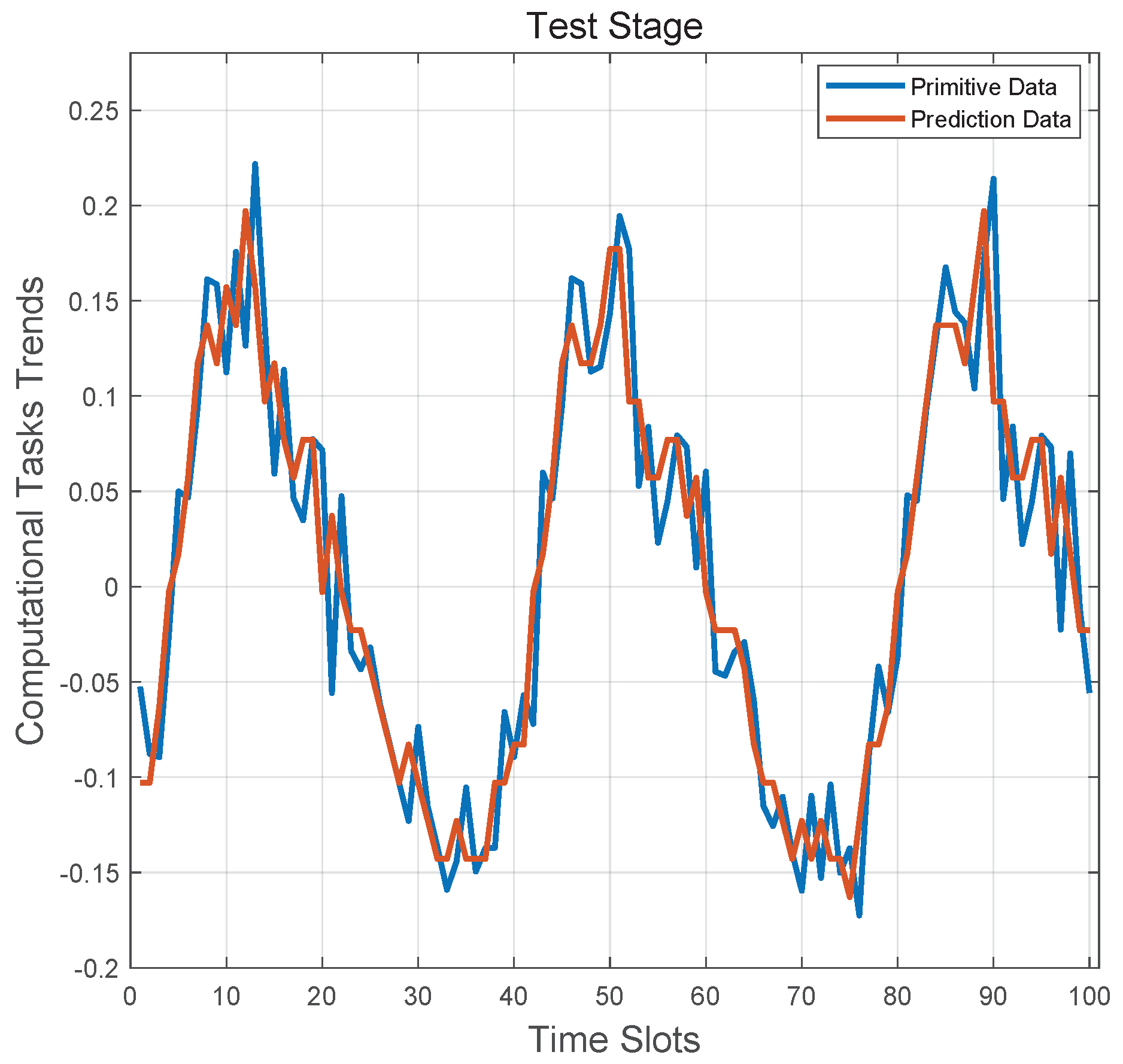

- To overcome the challenges caused by the dynamics and uncertainty of the on-board resource utilization status in a VCC, a perception-exploitation computation offloading scheme is proposed. In the perception stage, a resource discovery mechanism based on a Long Short-Term Memory (LSTM) model is designed to predict the on-board computation resource utilization status in a VCC.

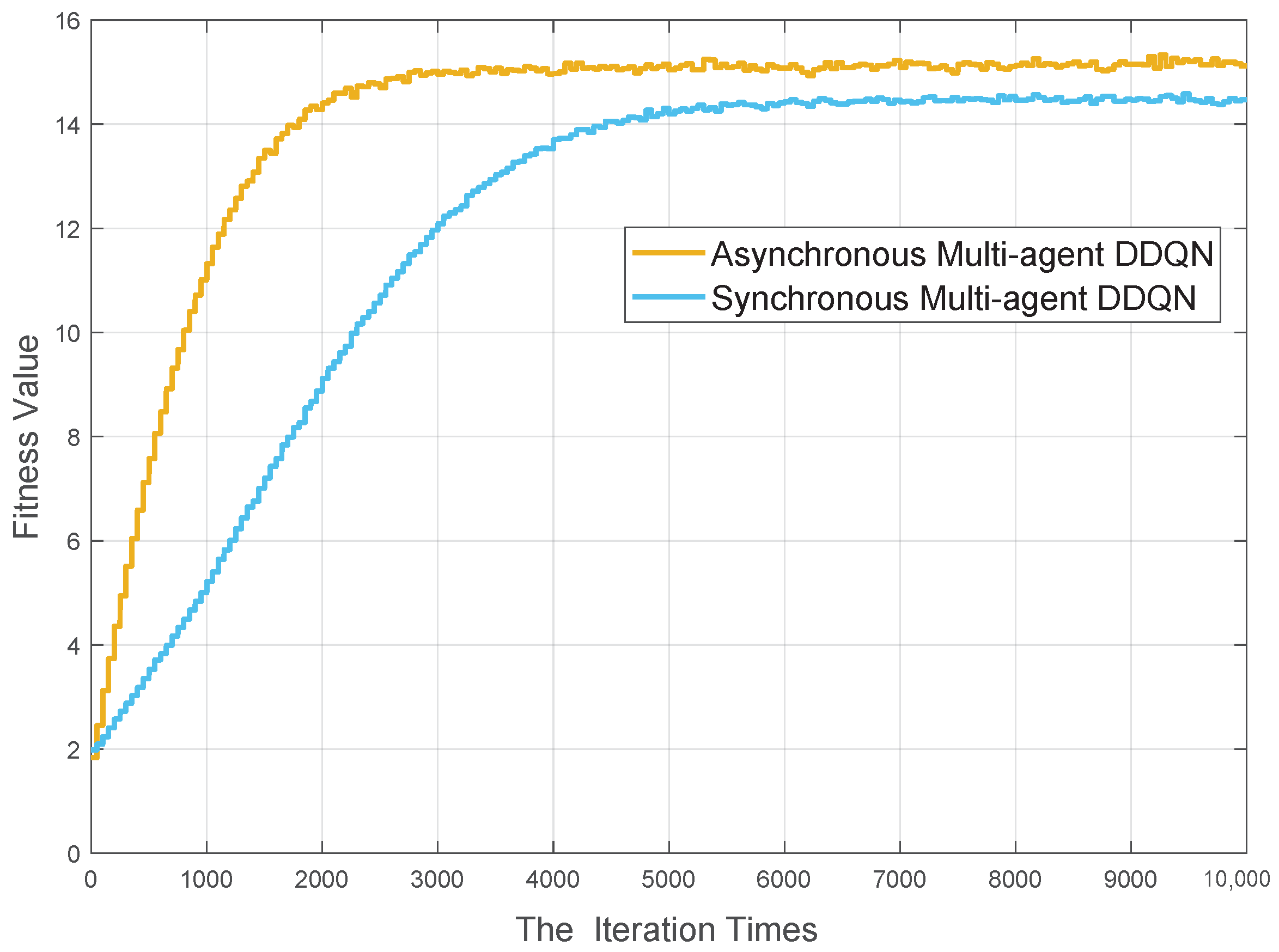

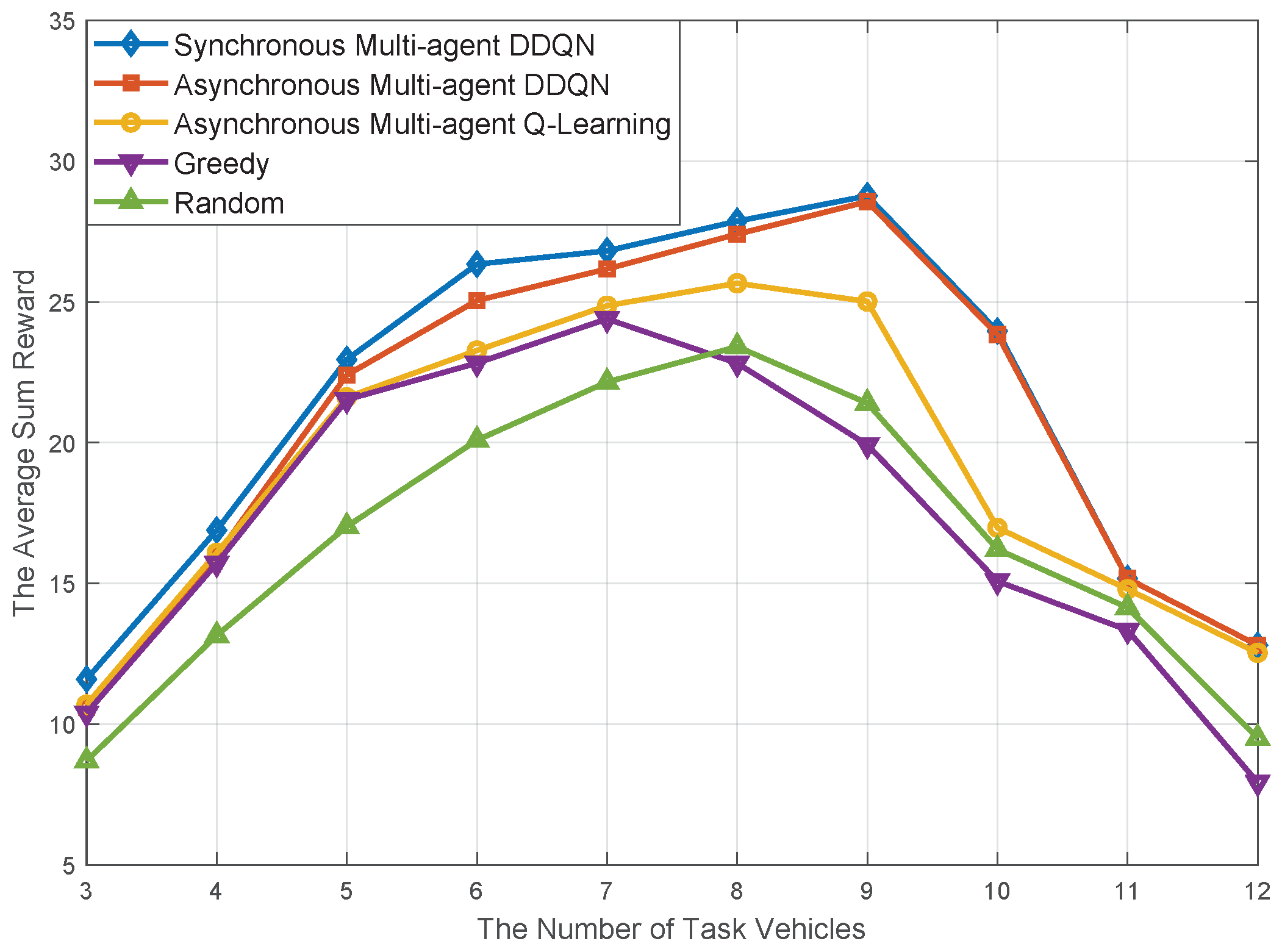

- Based on the resource discovery results, a decentralized computational resource allocation scheme based on deep reinforcement learning (DRL) is proposed, in which an iterative updating policy is adopted to address the non-stationarity issue and reduce the computational complexity.

2. Related Works

2.1. Related Works on Cognitive Vehicular Network

2.2. Related Works on Joint Computation Offloading Schemes in Vehicular Networks

2.3. Related Works on Resource Allocation for Computation Offloading

2.4. Related Works on Computation Resource Utilization Status Prediction in a VCC Network

3. System Model and Problem Formulation

3.1. System Description

- RSU: As a common transportation infrastructure in the vehicular network, the roadside unit (RSU) is usually equipped with functions such as wireless access and vehicular task computation. In addition, it is assumed that the RSU has a wired connection to the cloud computing center, so its computing resources can be considered sufficient to meet the needs of the offloaded computing tasks in the vehicular network. However, because it is usually constructed and maintained by a third-party company, its service price is relatively high.

- Service Vehicles: Vehicles with available resources are defined as service vehicles [41]; they can share their limited idle computing resources with other vehicles that request resources. Since the resources shared by service vehicles are idle, it is reasonable that their service price is lower than that of the RSU.

- Task Vehicles: Vehicles with resource requests are defined as task vehicles [41]; they send resource requests to neighboring service vehicles or resource-rich RSUs for additional computational resources. They need to pay for the computation offloading service received from RSUs or service vehicles.

3.2. Communication Model

- V2V links: Their capacity is defined in (3). Because they involve neither a central control unit nor the assistance of transport infrastructure, V2V links are used free of charge.

- V2I links: Here, a task vehicle offloads its computing task to cloud-computing-empowered transport infrastructure, such as an RSU; the link capacity takes the same form as that of the V2V links. Using a V2I link incurs a payment for the assistance of the transport infrastructure.

- V2mV links: To satisfy the requirements of multi-terminal access, the concept of the V2mV link was proposed in our previous work. Here, considering the delay and complexity of decoding with successive interference cancellation (SIC), it is assumed that each task vehicle can offload its computational tasks to at most two destinations. The power allocation scheme works as follows. Under the total transmit power constraint of task vehicle i, when the channel gain between vehicle i and vehicle k is lower than that between vehicle i and the other destination vehicle, more power is allocated to the other destination vehicle than to vehicle k. The capacity of the V2mV link is then given in (5)–(7).
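As a rough illustration of how a single-link capacity could be evaluated, the sketch below computes a Shannon capacity with distance-based path loss only. It is not the paper's Equation (3), which is not reproduced here. The default values (20 MHz bandwidth, −174 dBm/Hz noise level, path-loss index 2) are taken from the simulation parameter table; the function names, the pure `d^-n` path-loss model, and the absence of fading and interference are assumptions made for the example.

```python
import math

def noise_power_watts(psd_dbm_per_hz, bandwidth_hz):
    """Convert a noise level in dBm/Hz into total noise power in watts."""
    noise_dbm = psd_dbm_per_hz + 10.0 * math.log10(bandwidth_hz)
    return 10.0 ** ((noise_dbm - 30.0) / 10.0)

def link_capacity_bps(tx_power_w, distance_m, bandwidth_hz=20e6,
                      pathloss_index=2.0, psd_dbm_per_hz=-174.0):
    """Shannon capacity of one link (assumed model: path loss only)."""
    rx_power_w = tx_power_w * distance_m ** (-pathloss_index)
    snr = rx_power_w / noise_power_watts(psd_dbm_per_hz, bandwidth_hz)
    return bandwidth_hz * math.log2(1.0 + snr)

# Example: capacity of a 0.2 W transmission at two hypothetical distances.
near = link_capacity_bps(0.2, 50.0)
far = link_capacity_bps(0.2, 100.0)
```

Under these assumptions the capacity decreases monotonically with distance, which is why the relative channel gains of the two V2mV destinations matter for the power split.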

3.3. Computation Model

- Due to selfishness and privacy concerns, service vehicles are reluctant to share their idle computing resources with other vehicles. As a result, task vehicles cannot efficiently obtain the real-time resource utilization status of the service vehicles.

- After receiving resource requests from neighboring task vehicles, a service vehicle can accept or refuse them at its own discretion.

- Even if a request is accepted, the ongoing offloading service has to be terminated once the service vehicle's own resource demand surges abruptly.

3.4. Problem Formulation

4. LSTM-Based Resource Discovery in a VCN

- Firstly, unlike channel utilization status prediction in a cognitive radio network (CRN), there is so far no effective approach to track the dynamic variability of on-board computation resources. In addition, there is no central control unit or unified coordination mechanism among vehicles.

- Secondly, the resource sharing policy in a CVN is fundamentally different from the spectrum sharing principle in a CRN. Unlike spectrum sharing under a predefined tolerable interference threshold in a CRN, the available computing resource is limited and can only be shared among a restricted number of vehicular tasks.

- Last but not least, the CRN environments considered for channel status prediction are usually relatively static, whereas the CVN environment for resource utilization status prediction is highly dynamic. As a result, resource discovery in a CVN is more challenging than channel status prediction in a CRN.

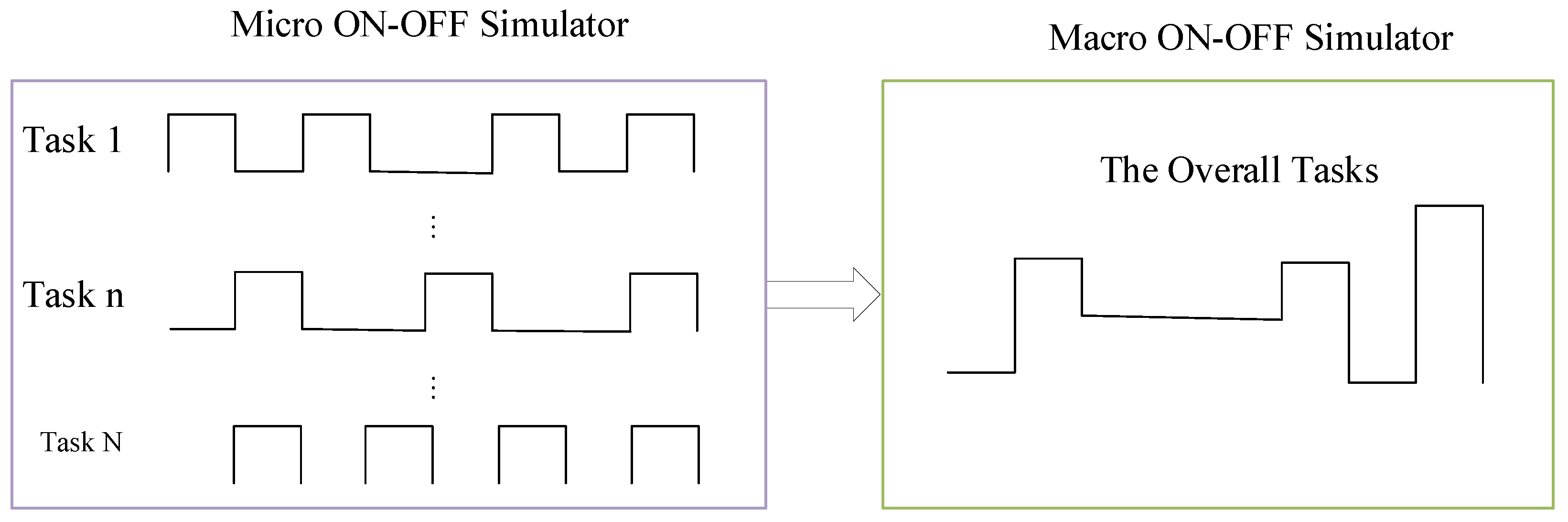

4.1. Self-Similar Traffic Simulator

| Algorithm 1 The Computation Resource Task Sequence with the Self-Similar Traffic Model |
|---|
| Input: the computing task types and the system parameters |
| Output: the cumulative computing task sequence |
| 1: for all … do |
| 2: Initialize the system parameters |
| 3: for all … do |
| 4: Generate a random number u from the uniform distribution between 0 and 1 |
| 5: if … then |
| 6: Obtain the active period |
| 7: Generate the computation tasks continuously within the active period |
| 8: Set … |
| 9: else |
| 10: Obtain the sleep period |
| 11: Stay asleep within the sleep period |
| 12: Set … |
| 13: end if |
| 14: end for |
| 15: end for |
| 16: return the cumulative computing task sequence |
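The alternation between task-generating and sleeping periods in Algorithm 1 can be sketched as an ON/OFF source with heavy-tailed period lengths. This is a minimal sketch under stated assumptions, not the authors' simulator: the Pareto distribution for the period lengths, the switching probability `p_on`, the shape values, and all function names are hypothetical choices, since the symbols and conditions of the original algorithm are not available here.

```python
import random

def pareto_period(u, shape, scale=1.0):
    """Inverse-transform sample of a Pareto duration from a uniform u in [0, 1)."""
    return scale / (1.0 - u) ** (1.0 / shape)

def on_off_sequence(num_slots, p_on=0.5, shape_on=1.4, shape_off=1.2,
                    tasks_per_slot=1, seed=0):
    """Cumulative task count per slot for one ON/OFF source (assumed parameters)."""
    rng = random.Random(seed)
    cumulative, total = [], 0
    while len(cumulative) < num_slots:
        active = rng.random() < p_on  # assumed switching rule on the uniform draw
        shape = shape_on if active else shape_off
        length = max(1, int(pareto_period(rng.random(), shape)))
        # Generate tasks during an active period, stay idle during a sleep period.
        for _ in range(min(length, num_slots - len(cumulative))):
            total += tasks_per_slot if active else 0
            cumulative.append(total)
    return cumulative

def aggregate(sequences):
    """Sum several per-source cumulative sequences slot by slot."""
    return [sum(values) for values in zip(*sequences)]

# Example: superpose three hypothetical task types into one workload trace.
trace = aggregate([on_off_sequence(500, seed=s) for s in range(3)])
```

Superposing several such sources with shape parameters between 1 and 2 is a common way to obtain traffic with long-range dependence; the number of sources and the parameter values here are illustrative only.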

4.2. LSTM-Based Resource Utilization Status Prediction in a VCN
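To make the prediction step concrete, the sketch below shows one forward step of a standard LSTM cell and a one-step-ahead prediction over a window of past utilization values. It is a generic, scalar illustration rather than the model used in the paper: the weight layout, the function names, the linear read-out, and the example weights are all assumptions, and no training procedure is shown.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def lstm_step(x, h_prev, c_prev, w):
    """One scalar LSTM step; w maps each gate name to (w_x, w_h, bias)."""
    def pre(gate):
        w_x, w_h, b = w[gate]
        return w_x * x + w_h * h_prev + b
    i = sigmoid(pre("input"))    # how much new information to write
    f = sigmoid(pre("forget"))   # how much of the old cell state to keep
    o = sigmoid(pre("output"))   # how much of the cell state to expose
    g = math.tanh(pre("cell"))   # candidate cell update
    c = f * c_prev + i * g
    h = o * math.tanh(c)
    return h, c

def predict_next(history, w, w_out=1.0, b_out=0.0):
    """Run the cell over a window of utilization values and read out a prediction."""
    h, c = 0.0, 0.0
    for x in history:
        h, c = lstm_step(x, h, c, w)
    return w_out * h + b_out

# Hypothetical (untrained) weights and a short utilization window in [0, 1].
weights = {gate: (0.5, 0.5, 0.0) for gate in ("input", "forget", "output", "cell")}
estimate = predict_next([0.2, 0.4, 0.7, 0.6], weights)
```

In practice the weights would be learned from historical utilization traces, and a vector-valued cell from a deep learning library would replace this scalar version.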

5. Multi-Agent Double Deep Q Network (DDQN) Algorithm for Computation Offloading in a CVN

5.1. The Multi-Agent DRL Framework

5.2. Multi-Agent DDQN-Based Computation Offloading Management Scheme

| Algorithm 2 DDQN Algorithm for Computation Offloading Management in a VCN |
|---|
| Input: one primary Q-network, one target Q-network, and one replay memory M with size m |
| Output: the optimal computation offloading management solution |
| 1: Initialize the network parameters of the primary network and the target network |
| 2: for all … do |
| 3: Generate the current state based on the up-to-date environmental information |
| 4: Select an action based on the policy |
| 5: Obtain the reward from the environment and transfer to the new state |
| 6: Obtain the reward and store the tuple into memory M |
| 7: if … and the replay buffer is filled up then |
| 8: Draw a mini-batch of tuples from the replay buffer for model training |
| 9: Compute the target Q-value by the target network |
| 10: Choose the action greedily with the optimal Q-value |
| 11: Compute the loss function value and update the current Q-network |
| 12: if … then |
| 13: Update the network parameters of the target network |
| 14: end if |
| 15: end if |
| 16: end for |
| 17: return the final resource allocation result |
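The training loop of Algorithm 2 can be illustrated with a small tabular stand-in, in which two Q-tables play the roles of the primary and target Q-networks. This is a sketch under stated assumptions rather than the authors' implementation: the three-state toy environment, its rewards, the hyperparameter values, and all function names are hypothetical, and a lookup table replaces the deep network. What it does preserve is the double-DQN target, where the primary estimator selects the next action and the target estimator evaluates it.

```python
import random
from collections import deque

def ddqn_target(q_primary, q_target, reward, next_state, gamma):
    """Double-DQN target: the primary table picks the action, the target table scores it."""
    row = q_primary[next_state]
    best_action = max(range(len(row)), key=row.__getitem__)
    return reward + gamma * q_target[next_state][best_action]

def toy_step(state, action, rng):
    """Hypothetical environment: action 0 = RSU (fixed cost), action 1 = service vehicle."""
    rewards = [[-1.0, 1.0], [-1.0, 0.0], [-1.0, -3.0]]  # rows: idle, medium, busy
    return rewards[state][action], rng.randrange(3)

def train(steps=4000, memory_size=1000, batch_size=32, gamma=0.5,
          lr=0.05, epsilon=0.2, sync_every=50, seed=0):
    rng = random.Random(seed)
    q_primary = [[0.0, 0.0] for _ in range(3)]
    q_target = [row[:] for row in q_primary]
    memory = deque(maxlen=memory_size)
    state = rng.randrange(3)
    for step in range(1, steps + 1):
        # Epsilon-greedy action selection on the primary table.
        if rng.random() < epsilon:
            action = rng.randrange(2)
        else:
            action = max(range(2), key=q_primary[state].__getitem__)
        reward, next_state = toy_step(state, action, rng)
        memory.append((state, action, reward, next_state))
        state = next_state
        if len(memory) == memory_size:  # train only once the replay memory is full
            for s, a, r, s2 in rng.sample(list(memory), batch_size):
                target = ddqn_target(q_primary, q_target, r, s2, gamma)
                q_primary[s][a] += lr * (target - q_primary[s][a])
            if step % sync_every == 0:  # periodic copy to the target table
                q_target = [row[:] for row in q_primary]
    return q_primary

q_values = train()
policy = [max(range(2), key=row.__getitem__) for row in q_values]
```

In this toy setting the learned policy prefers the service vehicle when it is idle or moderately loaded and falls back to the RSU when the service vehicle is busy. In a multi-agent deployment, each task vehicle would run its own copy of such a loop.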

6. Simulation Results and Analysis

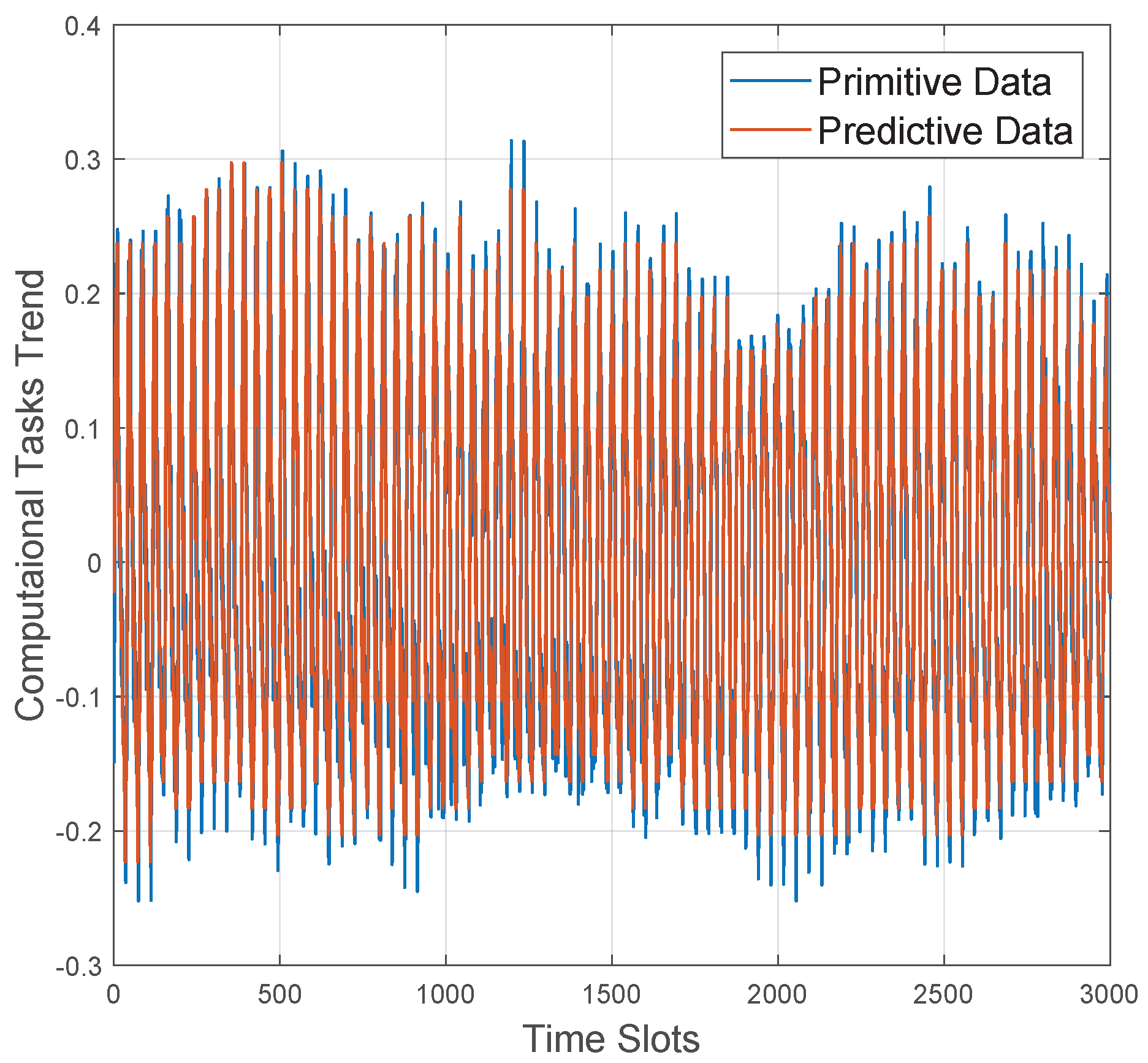

6.1. LSTM-Enabled Resource Discovery Algorithm

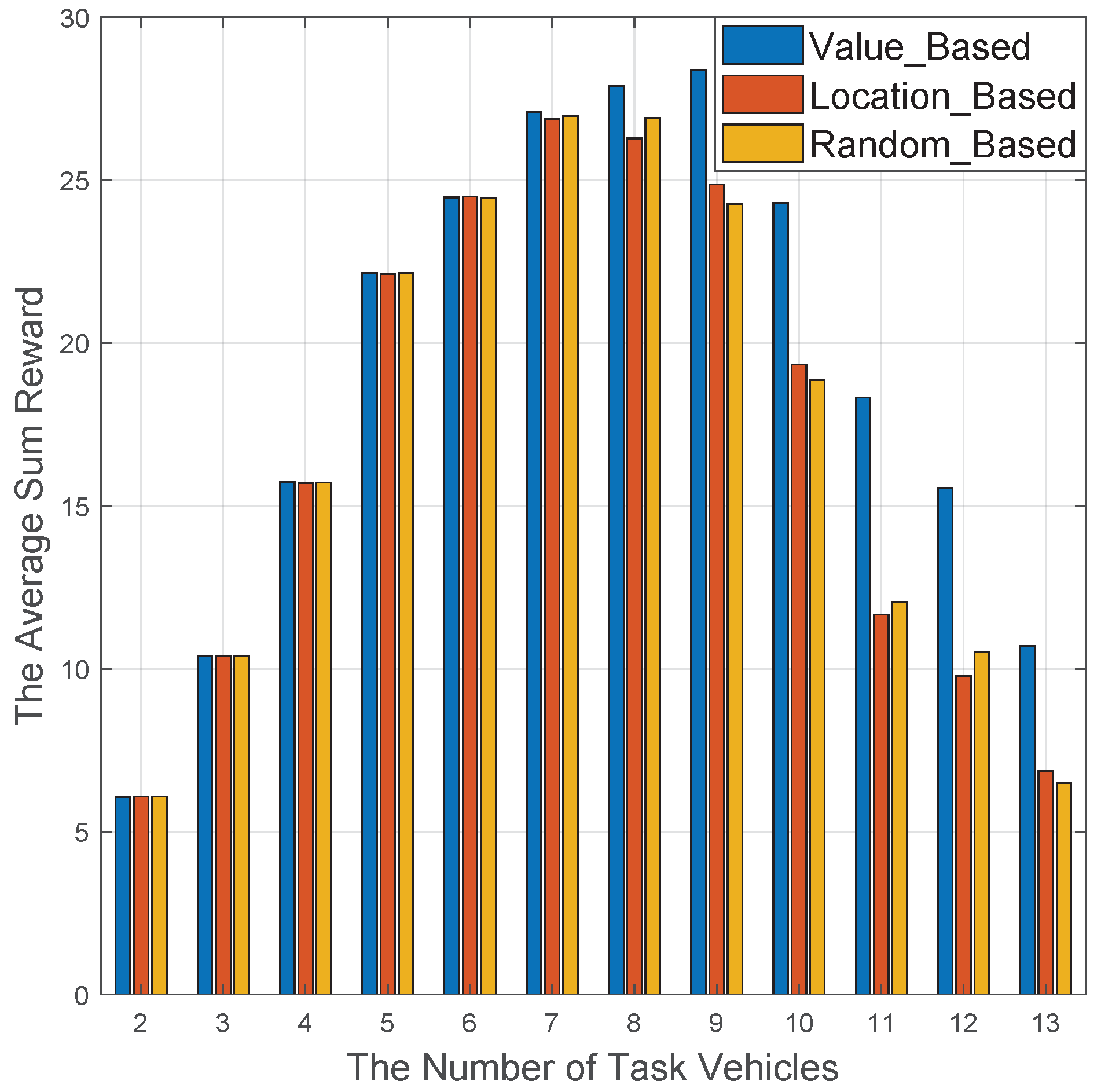

6.2. DDQN-Based Computing Offloading Algorithm

7. Discussion

8. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Cheng, X.; Chen, C.; Zhang, W.; Yang, Y. 5G-enabled cooperative intelligent vehicular (5GenCIV) framework: When Benz meets Marconi. IEEE Intell. Syst. 2017, 32, 53–59.

- Sasaki, K.; Suzuki, N.; Makido, S.; Nakao, A. Vehicle control system coordinated between cloud and mobile edge computing. In Proceedings of the 2016 55th Annual Conference of the Society of Instrument and Control Engineers of Japan (SICE), Tsukuba, Japan, 20–23 September 2016; pp. 1122–1127.

- Hu, Y.C.; Patel, M.; Sabella, D.; Sprecher, N.; Young, V. Mobile edge computing a key technology towards 5G. ETSI White Pap. 2015, 11, 1–16.

- Yang, Q.; Zhu, B.; Wu, S. An architecture of cloud-assisted information dissemination in vehicular networks. IEEE Access 2016, 4, 2764–2770.

- Mumtaz, S.; Bo, A.; Al-Dulaimi, A.; Tsang, K.-F. Guest editorial 5G and beyond mobile technologies and applications for industrial IoT (IIoT). IEEE Trans. Ind. Inform. 2018, 14, 2588–2591.

- Xiao, Y.; Zhu, C. Vehicular fog computing: Vision and challenges. In Proceedings of the 2017 IEEE International Conference on Pervasive Computing and Communications Workshops (PerCom Workshops), Kona, HI, USA, 13–17 March 2017; pp. 6–9.

- Zhou, S.; Sun, Y.; Jiang, Z.; Niu, Z. Exploiting moving intelligence: Delay-optimized computation offloading in vehicular fog networks. IEEE Commun. Mag. 2019, 57, 49–55.

- Darwish, T.S.; Bakar, K.A. Fog based intelligent transportation big data analytics in the internet of vehicles environment: Motivations, architecture, challenges, and critical issues. IEEE Access 2018, 6, 15679–15701.

- Liu, Y.; Yu, H.; Xie, S.; Zhang, Y. Deep reinforcement learning for offloading and resource allocation in vehicle edge computing and networks. IEEE Trans. Veh. Technol. 2019, 68, 11158–11168.

- Wang, K.; Yin, H.; Quan, W.; Min, G. Enabling collaborative edge computing for software defined vehicular networks. IEEE Netw. 2018, 32, 112–117.

- Tao, M.; Ota, K.; Dong, M. Foud: Integrating fog and cloud for 5G-enabled V2G networks. IEEE Netw. 2017, 31, 8–13.

- Wang, X.; Ning, Z.; Wang, L. Offloading in internet of vehicles: A fog-enabled real-time traffic management system. IEEE Trans. Ind. Inform. 2018, 14, 4568–4578.

- Akherfi, K.; Gerndt, M.; Harroud, H. Mobile cloud computing for computation offloading: Issues and challenges. Appl. Comput. Inform. 2018, 14, 1–16.

- Ning, Z.; Huang, J.; Wang, X. Vehicular fog computing: Enabling real-time traffic management for smart cities. IEEE Wirel. Commun. 2019, 26, 87–93.

- Mach, P.; Becvar, Z. Mobile edge computing: A survey on architecture and computation offloading. IEEE Commun. Surv. Tutor. 2017, 19, 1628–1656.

- Lin, C.-C.; Deng, D.-J.; Yao, C.-C. Resource allocation in vehicular cloud computing systems with heterogeneous vehicles and roadside units. IEEE Internet Things J. 2017, 5, 3692–3700.

- Jang, I.; Choo, S.; Kim, M.; Pack, S.; Dan, G. The software-defined vehicular cloud: A new level of sharing the road. IEEE Veh. Technol. 2017, 12, 78–88.

- Niyato, D.; Hossain, E.; Wang, P. Optimal channel access management with QoS support for cognitive vehicular networks. IEEE Trans. Mob. Comput. 2010, 10, 573–591.

- Li, H.; Irick, D.K. Collaborative spectrum sensing in cognitive radio vehicular ad hoc networks: Belief propagation on highway. In Proceedings of the 2010 IEEE 71st Vehicular Technology Conference, Taipei, Taiwan, 16–19 May 2010; pp. 1–5.

- Gu, J. Dynamic spectrum allocation algorithm for resolving channel conflict in cognitive vehicular networks. In Proceedings of the 2017 7th IEEE International Conference on Electronics Information and Emergency Communication (ICEIEC), Shenzhen, China, 21–23 July 2017; pp. 413–416.

- Zhang, K.; Leng, S.; Peng, X.; Pan, L.; Maharjan, S.; Zhang, Y. Artificial intelligence inspired transmission scheduling in cognitive vehicular communications and networks. IEEE Internet Things J. 2018, 6, 1987–1997.

- Chen, M.; Tian, Y.; Fortino, G.; Zhang, J.; Humar, I. Cognitive internet of vehicles. Comput. Commun. 2018, 120, 58–70.

- Lu, H.; Liu, Q.; Tian, D.; Li, Y.; Kim, H.; Serikawa, S. The cognitive internet of vehicles for autonomous driving. IEEE Netw. 2019, 33, 65–73.

- He, H.; Shan, H.; Huang, A.; Sun, L. Resource allocation for video streaming in heterogeneous cognitive vehicular networks. IEEE Trans. Veh. Technol. 2016, 65, 7917–7930.

- Qian, Y.; Chen, M.; Chen, J.; Hossain, M.S.; Alamri, A. Secure enforcement in cognitive internet of vehicles. IEEE Internet Things J. 2018, 5, 1242–1250.

- Tandon, R.; Simeone, O. Harnessing cloud and edge synergies: Toward an information theory of fog radio access networks. IEEE Commun. 2016, 54, 44–50.

- Ning, Z.; Dong, P.; Kong, X.; Xia, F. A cooperative partial computation offloading scheme for mobile edge computing enabled internet of things. IEEE Internet Things J. 2018, 6, 4804–4814.

- Yang, M.; Liu, N.; Wang, W.; Gong, H.; Liu, M. Mobile vehicular offloading with individual mobility. IEEE Access 2019, 8, 30706–30719.

- Chen, M.; Qian, Y.; Hao, Y.; Li, Y.; Song, J. Data-driven computing and caching in 5G networks: Architecture and delay analysis. IEEE Wirel. Commun. 2018, 25, 70–75.

- Zhang, K.; Leng, S.; He, Y.; Maharjan, S.; Zhang, Y. Cooperative content caching in 5G networks with mobile edge computing. IEEE Wirel. Commun. 2018, 25, 80–87.

- Ning, Z.; Wang, X.; Rodrigues, J.J.; Xia, F. Joint computation offloading, power allocation, and channel assignment for 5G-enabled traffic management systems. IEEE Trans. Ind. Inform. 2019, 15, 3058–3067.

- Ren, J.; Yu, G.; He, Y.; Li, G.Y. Collaborative cloud and edge computing for latency minimization. IEEE Trans. Veh. Technol. 2019, 68, 5031–5044.

- Sun, J.; Gu, Q.; Zheng, T.; Dong, P.; Qin, Y. Joint communication and computing resource allocation in vehicular edge computing. Int. J. Distrib. Sens. Netw. 2019, 15.

- Feng, J.; Liu, Z.; Wu, C.; Ji, Y. AVE: Autonomous vehicular edge computing framework with ACO-based scheduling. IEEE Trans. Veh. Technol. 2017, 66, 10660–10675.

- Zhao, Y.; Hong, Z.; Wang, G.; Huang, J. High-order hidden bivariate Markov model: A novel approach on spectrum prediction. In Proceedings of the 2016 25th International Conference on Computer Communication and Networks (ICCCN), Waikoloa, HI, USA, 1–4 August 2016; pp. 1–7.

- Tumuluru, V.K.; Wang, P.; Niyato, D. A neural network based spectrum prediction scheme for cognitive radio. In Proceedings of the 2010 IEEE International Conference on Communications, Cape Town, South Africa, 23–27 May 2010; pp. 1–5.

- Yarkan, S.; Arslan, H. Binary time series approach to spectrum prediction for cognitive radio. In Proceedings of the 2007 IEEE 66th Vehicular Technology Conference, Baltimore, MD, USA, 30 September–3 October 2007; pp. 1563–1567.

- Manawadu, U.E.; Kawano, T.; Murata, S.; Kamezaki, M.; Muramatsu, J.; Sugano, S. Multiclass classification of driver perceived workload using long short-term memory based recurrent neural network. In Proceedings of the 2018 IEEE Intelligent Vehicles Symposium (IV), Suzhou, China, 26 June–1 July 2018; pp. 1–6.

- Wei, X.; Li, J.; Yuan, Q.; Chen, K.; Zhou, A.; Yang, F. Predicting fine-grained traffic conditions via spatio-temporal LSTM. Wirel. Commun. Mob. Comput. 2019, 2019, 9242598.

- Janardhanan, D.; Barrett, E. CPU workload forecasting of machines in data centers using LSTM recurrent neural networks and ARIMA models. In Proceedings of the 2017 12th International Conference for Internet Technology and Secured Transactions (ICITST), Cambridge, UK, 11–14 December 2017; pp. 55–60.

- Sun, Y.; Guo, X.; Song, J.; Zhou, S.; Jiang, Z.; Liu, X.; Niu, Z. Adaptive learning-based task offloading for vehicular edge computing systems. IEEE Trans. Veh. Technol. 2019, 68, 3061–3074.

- Andreev, S.; Galinina, O.; Pyattaev, A.; Hosek, J.; Masek, P.; Yanikomeroglu, H.; Koucheryavy, Y. Exploring synergy between communications, caching, and computing in 5G-grade deployments. IEEE Commun. Mag. 2016, 54, 60–69.

- Dai, Y.; Xu, D.; Maharjan, S.; Zhang, Y. Joint computation offloading and user association in multi-task mobile edge computing. IEEE Trans. Veh. Technol. 2018, 67, 12313–12325.

- Ding, G.; Jiao, Y.; Wang, J.; Zou, Y.; Wu, Q.; Yao, Y.-D.; Hanzo, L. Spectrum inference in cognitive radio networks: Algorithms and applications. IEEE Commun. Surv. Tutor. 2017, 20, 150–182.

- Taqqu, M.S.; Levy, J.B. Using renewal processes to generate long-range dependence and high variability. In Dependence in Probability and Statistics; Springer: Berlin/Heidelberg, Germany, 1986; pp. 73–89.

- Peng, H.-W.; Wu, S.-F.; Wei, C.-C.; Lee, S.-J. Time series forecasting with a neuro-fuzzy modeling scheme. Appl. Soft Comput. 2015, 32, 481–493.

- Aoude, G.S.; Desaraju, V.R.; Stephens, L.H.; How, J.P. Driver behavior classification at intersections and validation on large naturalistic data set. IEEE Trans. Intell. Transp. Syst. 2012, 13, 724–736.

- Kumar, P.; Perrollaz, M.; Lefevre, S.; Laugier, C. Learning-based approach for online lane change intention prediction. In Proceedings of the 2013 IEEE Intelligent Vehicles Symposium (IV), Gold Coast City, Australia, 23–26 June 2013; pp. 797–802.

- Morris, B.T.; Trivedi, M.M. Trajectory learning for activity understanding: Unsupervised, multilevel, and long-term adaptive approach. IEEE Trans. Pattern Anal. Mach. Intell. 2011, 33, 2287–2301.

- Liu, C.; Tomizuka, M. Enabling safe freeway driving for automated vehicles. In Proceedings of the 2016 American Control Conference (ACC), Boston, MA, USA, 6–8 July 2016; pp. 3461–3467.

- Schreier, M.; Willert, V.; Adamy, J. Bayesian, maneuver-based, long-term trajectory prediction and criticality assessment for driver assistance systems. In Proceedings of the 17th International IEEE Conference on Intelligent Transportation Systems (ITSC), Qingdao, China, 8–11 October 2014; pp. 334–341.

- Schlechtriemen, J.; Wirthmueller, F.; Wedel, A.; Breuel, G.; Kuhnert, K.-D. When will it change the lane? A probabilistic regression approach for rarely occurring events. In Proceedings of the 2015 IEEE Intelligent Vehicles Symposium (IV), Seoul, Korea, 28 June–1 July 2015; pp. 1373–1379.

- Deo, N.; Trivedi, M.M. Multi-modal trajectory prediction of surrounding vehicles with maneuver based LSTMs. In Proceedings of the 2018 IEEE Intelligent Vehicles Symposium (IV), Suzhou, China, 26–30 June 2018; pp. 1179–1184.

- Patel, S.; Griffin, B.; Kusano, K.; Corso, J.J. Predicting future lane changes of other highway vehicles using RNN-based deep models. arXiv 2018, arXiv:1801.04340.

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016.

- Klaine, P.V.; Imran, M.A.; Onireti, O.; Souza, R.D. A survey of machine learning techniques applied to self-organizing cellular networks. IEEE Commun. Surv. Tutor. 2017, 19, 2392–2431.

- Li, Z.; Guo, C. Multi-agent deep reinforcement learning based spectrum allocation for D2D underlay communications. IEEE Trans. Veh. Technol. 2019, 69, 1828–1840.

| Parameter | Value |
|---|---|
| Cellular transmission power | 0.2 W |
| Baseline power for V2mV link | 0.1 W |
| Noise power spectral density | −174 dBm/Hz |
| Path-loss index | 2 |
| Number of lanes | 3 |
| Velocity of each lane | [120 km/h, 90 km/h, 60 km/h] |
| Safety distance of each lane | [120 m, 90 m, 60 m] |
| Lane width | 4 m |
| Bandwidth of each vehicle | 20 MHz |
| Power allocation index | 0.8, 0.2 |
| Learning rate | 0.8 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Xu, S.; Guo, C. Computation Offloading in a Cognitive Vehicular Networks with Vehicular Cloud Computing and Remote Cloud Computing. Sensors 2020, 20, 6820. https://doi.org/10.3390/s20236820
