Accelerometer-Based Human Activity Recognition for Patient Monitoring Using a Deep Neural Network

Abstract

1. Introduction

2. Related Work

2.1. Methods Used for Human Activity Recognition (HAR)

2.1.1. Feature-Based Approaches

2.1.2. Deep Neural Networks (DNNs)

2.2. Human Activity Recognition (HAR) for Patient Monitoring

3. Methods

3.1. Data Collection

3.2. Data Preprocessing

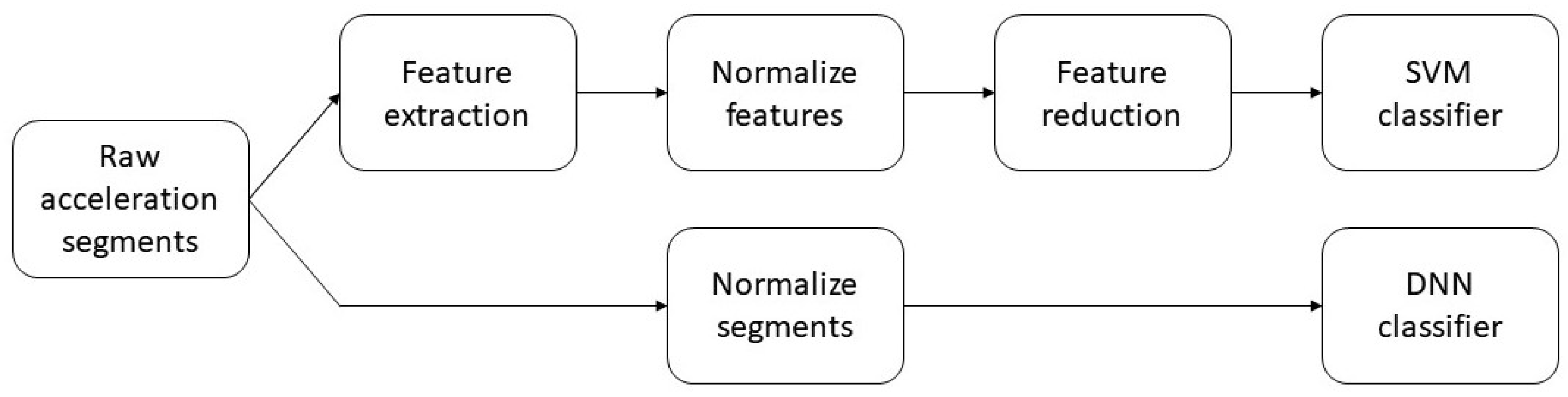

3.3. Classification

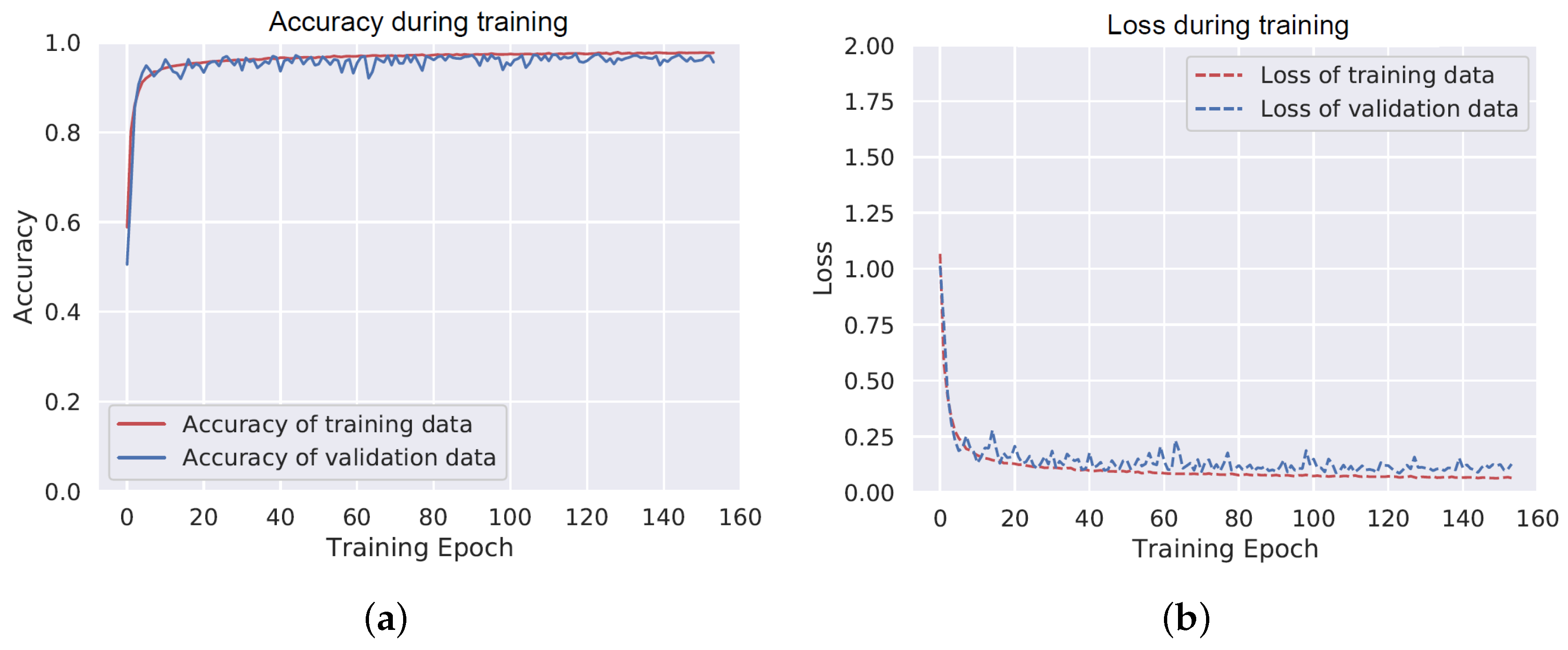

3.3.1. Deep Neural Network

3.3.2. Feature-Based Classifier

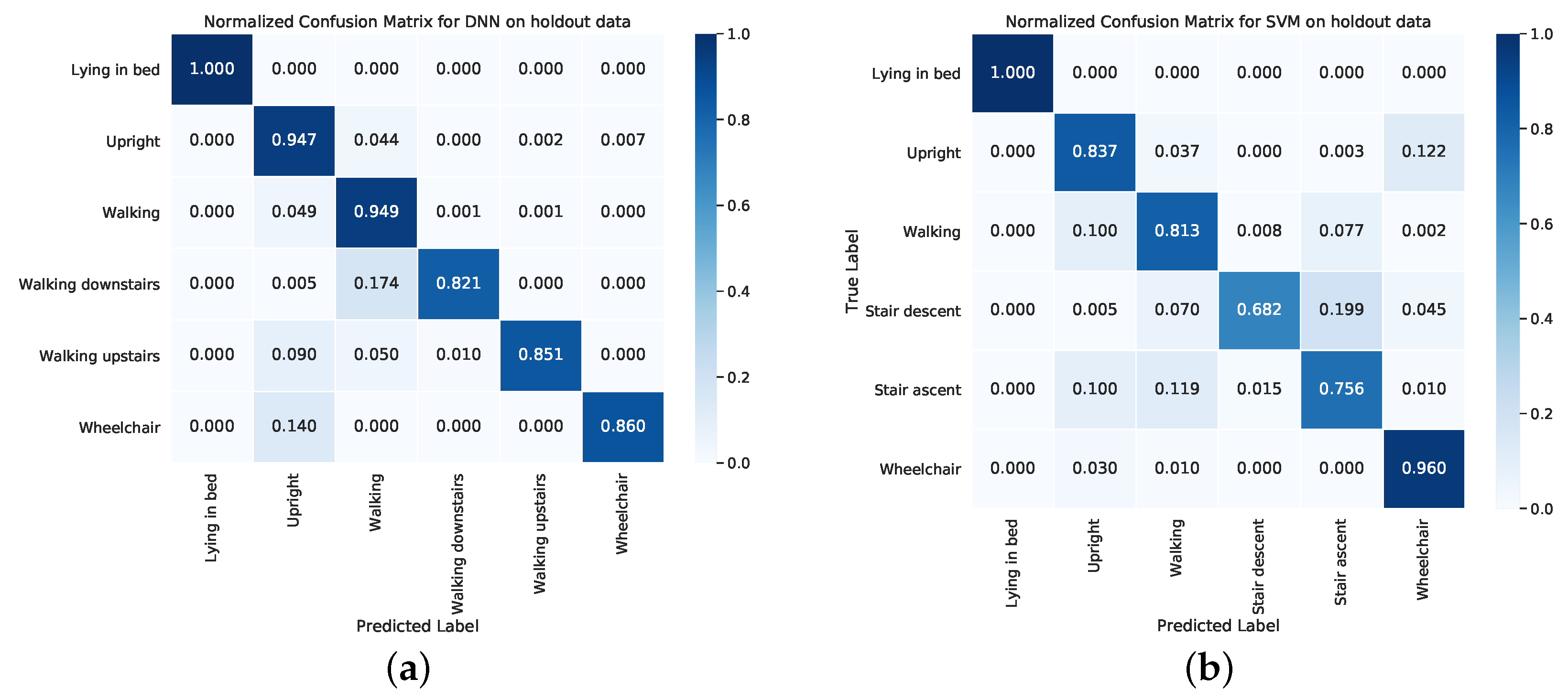

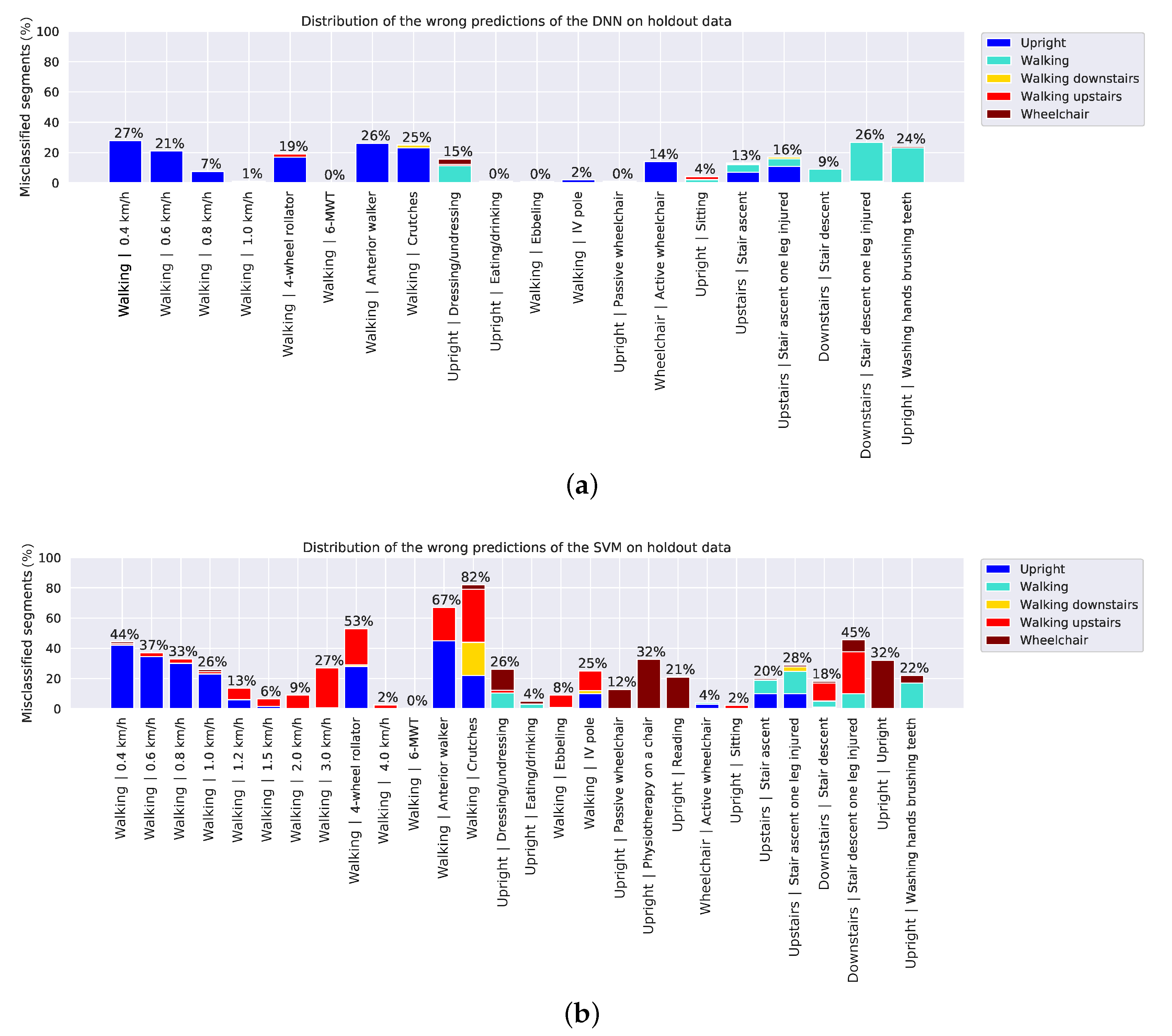

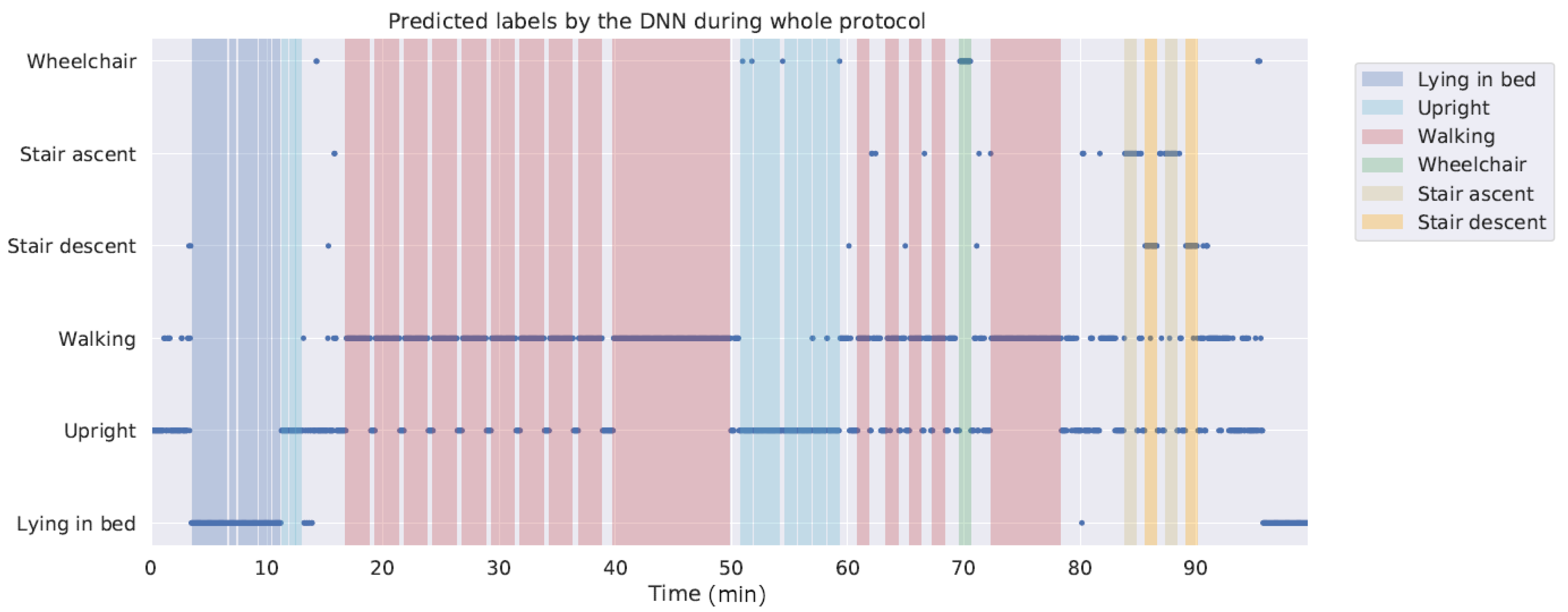

4. Results

5. Discussion

6. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Activity Label | Class Label | Duration (mm:ss) |
|---|---|---|
| Activities in and around bed | | |
| Lie supine | Lying in bed | 3:00 |
| Lie left | Lying in bed | 0:30 |
| Lie right | Lying in bed | 0:30 |
| Restless in bed | Lying in bed | 1:00 |
| Physiotherapy in bed | Lying in bed | 1:00 |
| Reclined | Lying in bed | 0:30 |
| Upright | Upright | 0:30 |
| Sitting edge of bed | Upright | 0:30 |
| Standing next to bed | Upright | 0:30 |
| Treadmill activities | | |
| 0.4 km/h | Walking | 2:00 |
| 0.6 km/h | Walking | 2:00 |
| 0.8 km/h | Walking | 2:00 |
| 1.0 km/h | Walking | 2:00 |
| 1.2 km/h | Walking | 2:00 |
| 1.5 km/h | Walking | 2:00 |
| 2.0 km/h | Walking | 2:00 |
| 3.0 km/h | Walking | 2:00 |
| 4.0 km/h | Walking | 2:00 |
| Ebbeling | Walking | ∼10:00 |
| Activities of daily hospital living | | |
| Dressing/undressing | Upright | 1:00 |
| Reading | Upright | 1:00 |
| Physiotherapy on a chair | Upright | 1:00 |
| Eating/drinking | Upright | 1:00 |
| Sit-to-Stand transitions | Upright | 1:00 |
| Hospital ambulation | | |
| Patient transport in wheelchair | Upright | 1:00 |
| Washing hands/brushing teeth | Upright | 1:00 |
| Crutches | Walking | 1:00 |
| Anterior walker | Walking | 1:00 |
| IV pole | Walking | 1:00 |
| 4-wheel rollator | Walking | 1:00 |
| Self propelled wheelchair | Wheelchair | 1:00 |
| 6-MWT | Walking | 6:00 |
| Stair walking | | |
| Stair ascent one leg injured | Stair ascent | 1:00 |
| Stair descent one leg injured | Stair descent | 1:00 |
| Stair ascent | Stair ascent | 1:00 |
| Stair descent | Stair descent | 1:00 |
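The relabelling in the table above, from recorded activity to class label, can be sketched as a simple lookup. This is a minimal illustration only: the names `ACTIVITY_TO_CLASS`, `DURATIONS`, `to_seconds`, and `seconds_per_class` are hypothetical, and only a subset of the rows is copied here.

```python
# Hypothetical lookup from activity label to class label (subset of the table).
ACTIVITY_TO_CLASS = {
    "Lie supine": "Lying in bed",
    "Reclined": "Lying in bed",
    "Sitting edge of bed": "Upright",
    "Reading": "Upright",
    "Patient transport in wheelchair": "Upright",
    "Self propelled wheelchair": "Wheelchair",
    "Crutches": "Walking",
    "6-MWT": "Walking",
    "Stair ascent one leg injured": "Stair ascent",
    "Stair descent": "Stair descent",
}

# Protocol durations (mm:ss) for the same rows, copied from the table.
DURATIONS = {
    "Lie supine": "3:00",
    "Reclined": "0:30",
    "Sitting edge of bed": "0:30",
    "Reading": "1:00",
    "Patient transport in wheelchair": "1:00",
    "Self propelled wheelchair": "1:00",
    "Crutches": "1:00",
    "6-MWT": "6:00",
    "Stair ascent one leg injured": "1:00",
    "Stair descent": "1:00",
}


def to_seconds(mmss):
    """Convert a 'mm:ss' string to a number of seconds."""
    minutes, seconds = mmss.split(":")
    return int(minutes) * 60 + int(seconds)


def seconds_per_class(mapping, durations):
    """Sum the protocol duration of every activity that maps to each class."""
    totals = {}
    for activity, cls in mapping.items():
        totals[cls] = totals.get(cls, 0) + to_seconds(durations[activity])
    return totals


if __name__ == "__main__":
    for cls, secs in seconds_per_class(ACTIVITY_TO_CLASS, DURATIONS).items():
        print(f"{cls}: {secs} s")
```

Summing durations per class in this way makes the class imbalance of the protocol visible: walking activities account for far more recording time than, for example, stair descent.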

| Feature | Description |
|---|---|
| Mean | Mean value of the vector |
| Absolute mean | Mean of absolute values in the vector |
| Median | Median value of the vector |
| Mean absolute deviation | Mean absolute deviation of the vector |
| Standard deviation | Standard deviation of the vector |
| Variance | Variance of the vector |
| Minimum value | Lowest value in the vector |
| Maximum value | Highest value in the vector |
| Full range | Difference between the maximum and minimum value of the vector |
| Interquartile range | Difference between the 3rd and 1st quartiles |
| Area | Sum of all values in the vector |
| Absolute area | Sum of all absolute values in the vector |
| Energy | Sum of squared components of the vector |
| Correlation | Correlation coefficients between each pair of vectors |
| Skewness | Asymmetry of the distribution of values in the vector |
| Kurtosis | Tailedness of the distribution of values in the vector |
| Spectral entropy | A measure of the complexity of a signal |
| Spectral centroid | Mean of the Fourier transform |
| Spectral variance | Variance of the Fourier transform |
| Spectral skewness | Skewness of the Fourier transform |
| Spectral kurtosis | Kurtosis of the Fourier transform |
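Several of the time-domain features in the table above follow directly from their descriptions. The sketch below computes a subset of them for one axis of one signal window using only the Python standard library. The function name `window_features` is hypothetical, and the use of population (rather than sample) standard deviation and variance is an assumption, since the table does not specify it. The correlation, distribution-shape, and spectral features are omitted.

```python
import statistics


def window_features(x):
    """Compute some of the tabulated time-domain features for one vector."""
    values = list(x)
    mean = statistics.fmean(values)
    absolute = [abs(v) for v in values]
    return {
        "mean": mean,
        "absolute_mean": statistics.fmean(absolute),
        "median": statistics.median(values),
        # Mean of absolute deviations from the mean.
        "mean_absolute_deviation": statistics.fmean(
            [abs(v - mean) for v in values]
        ),
        # Population statistics are an assumption, not stated in the table.
        "standard_deviation": statistics.pstdev(values),
        "variance": statistics.pvariance(values),
        "minimum": min(values),
        "maximum": max(values),
        "full_range": max(values) - min(values),
        "area": sum(values),
        "absolute_area": sum(absolute),
        "energy": sum(v * v for v in values),
    }


if __name__ == "__main__":
    window = [1.0, -1.0, 2.0, -2.0]  # toy data, not from the study
    for name, value in window_features(window).items():
        print(f"{name}: {value}")
```

In a feature-based pipeline, a function of this kind would typically be applied to each accelerometer axis of each window, with the results concatenated into one feature vector per window.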

| | Accuracy | Precision | Recall | F1-Score |
|---|---|---|---|---|
| DNN | 0.9452 | 0.9507 | 0.9452 | 0.9464 |
| SVM | 0.8335 | 0.8919 | 0.8335 | 0.8507 |
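In the table above, Accuracy and Recall are identical for both classifiers. That pattern is what support-weighted averaging of per-class scores produces, because weighted recall reduces algebraically to overall accuracy. The averaging scheme is not stated in this section, so treating it as weighted is an assumption. The sketch below, with the hypothetical function `weighted_scores` and toy labels, shows the computation.

```python
from collections import Counter


def weighted_scores(y_true, y_pred):
    """Return accuracy and support-weighted precision, recall, and F1."""
    n = len(y_true)
    support = Counter(y_true)      # true samples per class
    predicted = Counter(y_pred)    # predictions per class
    correct = Counter(t for t, p in zip(y_true, y_pred) if t == p)

    accuracy = sum(correct.values()) / n
    precision = recall = f1 = 0.0
    for cls, sup in support.items():
        p = correct[cls] / predicted[cls] if predicted[cls] else 0.0
        r = correct[cls] / sup
        f = 2 * p * r / (p + r) if (p + r) else 0.0
        weight = sup / n           # weight each class by its share of samples
        precision += weight * p
        recall += weight * r
        f1 += weight * f
    return accuracy, precision, recall, f1


if __name__ == "__main__":
    # Toy labels for illustration only, not data from the study.
    y_true = ["walk", "walk", "walk", "lie", "lie", "upright"]
    y_pred = ["walk", "walk", "lie", "lie", "lie", "walk"]
    acc, prec, rec, f1 = weighted_scores(y_true, y_pred)
    print(f"accuracy={acc:.4f} precision={prec:.4f} "
          f"recall={rec:.4f} f1={f1:.4f}")
```

Under weighted averaging, precision and F1 can still differ from accuracy, as they do in the table, because they depend on how errors are distributed across classes.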

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Fridriksdottir, E.; Bonomi, A.G. Accelerometer-Based Human Activity Recognition for Patient Monitoring Using a Deep Neural Network. Sensors 2020, 20, 6424. https://doi.org/10.3390/s20226424
