1. Introduction

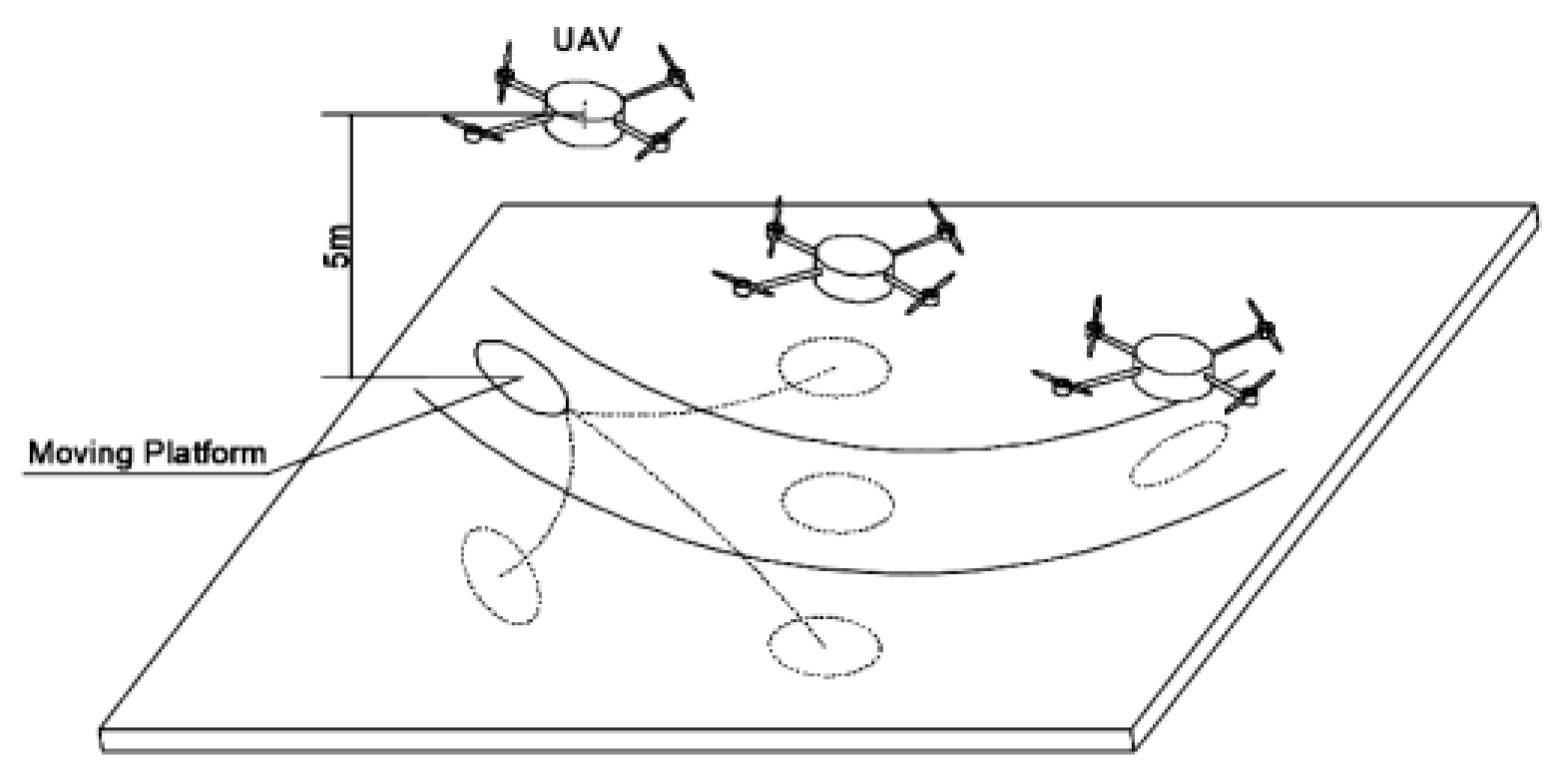

In recent years, with the rapid development of unmanned aerial vehicle (UAV) technology, UAVs have been widely used in military and civilian fields, such as search, rescue, exploration, and surveillance [1,2]. Autonomous tracking and landing is a key capability in UAV applications [3,4,5,6]. However, compared with landing in a simulated environment or on a static platform, autonomous tracking and landing is more difficult because classical techniques are limited in terms of model design and nonlinearity approximation [7]. Furthermore, owing to the absence of precise sensors and the constraints imposed by the sensors’ specific physical motion, autonomous tracking and landing performs poorly under high sensor noise [6,8] and intermittent measurements [9,10].

Given the importance and complexity of UAV tracking and autonomous landing, a growing number of researchers from different fields have shown interest in specific solutions, such as perception and relative pose estimation [11,12] or trajectory optimization and control [13,14]. Regarding the control maneuvers when the relative state of the moving platform is assumed to be known, the Proportional-Integral-Derivative (PID) controller is the mainstream algorithm for aggressive landing from relatively short distances [4,5,15]. However, a PID controller with fixed gains cannot respond quickly enough to overcome nonlinear effects; moreover, gain tuning is crucial and requires considerable effort to find the optimal gains [16]. This calls for nonlinear control approaches that control the UAV more precisely. By applying a state-dependent coefficient (SDC)-based nonlinear model inversion, the authors of reference [17] eliminated the need to linearize the aircraft dynamics, but this approach is quite sensitive to sensor noise. To solve the problem of UAV autonomous landing under special circumstances, such as landing on top of a moving inclined platform [14,18] or landing on a platform moving in a figure of eight [3,19] (the task of the Mohamed Bin Zayed International Robotics Challenge (MBZIRC) 2017 competition), a Model Predictive Controller (MPC) tracker was used to generate feasible UAV trajectories that minimize the error of the UAV’s future states over a prediction horizon, so that the UAV flies precisely above the car given the dynamical constraints of the aircraft. MPC can anticipate upcoming events and yield control inputs accordingly; however, developing accurate prediction models requires a laborious design effort. Sliding mode methods have also been widely used in UAV autonomous tracking and landing control algorithms [20,21,22]. This approach alters the UAV’s nonlinear dynamics by applying a discontinuous control signal, but its main issues are chattering and a high control demand [7]. Unlike the linear and nonlinear controllers mentioned above, a fuzzy logic controller does not depend on a precise mathematical model. Despite their good performance, PID controllers still need to be made adaptive to uncertain conditions. For this purpose, PID control was extended to fuzzy adaptive PID [23,24], and the authors of references [16,25] presented fuzzy logic-based UAV tracking and landing using computer vision.

Unlike the above methods, which control a UAV by first building a prior model and then making decisions based on that dynamics model, reinforcement learning is an ideal solution for dealing with unknown system dynamics in different tracking and landing circumstances [26]. Recently, significant progress has been made by combining deep learning with reinforcement learning, resulting in the deep Q-network (DQN) [27], the deterministic policy gradient (DPG) [28], and the asynchronous advantage actor-critic (A3C) [29]. These algorithms have achieved unprecedented success in challenging domains, such as the Atari 2600 [27].

Concerning deep reinforcement learning for UAV autonomous tracking and landing tasks, a hierarchy of DQNs was proposed in [30,31] and used as a high-level control policy for navigation in different phases. However, the flexibility of the method is limited because the UAV’s action space is discrete rather than continuous. The authors of reference [32] addressed the full problem with continuous state and action spaces based on Deep Deterministic Policy Gradients (DDPG) [33]. However, the altitude (z-axis) is not included in the framework, reward shaping requires a significant design effort, and the proposed Gazebo-based reinforcement learning framework has a weak generalization capability, which results in less autonomous agents.

In general, current research on UAV autonomous tracking and landing mainly focuses on the situation in which the platform moves at a constant speed. However, more sophisticated control is required to operate in unpredictable and harsh conditions, such as high sensor noise and intermittent measurements. In terms of trajectory control, classic controllers, e.g., PID, nonlinear, and fuzzy logic controllers, are the mainstream algorithms, but these techniques are limited in model design, nonlinearity approximation, and disturbance rejection. Although deep reinforcement learning (DRL)-based controllers have performed well in UAV tracking and landing problems in several studies, the control problem of UAV tracking and landing on a randomly moving platform becomes intractable, due to the low reliability and weak generalization ability of the mathematical model.

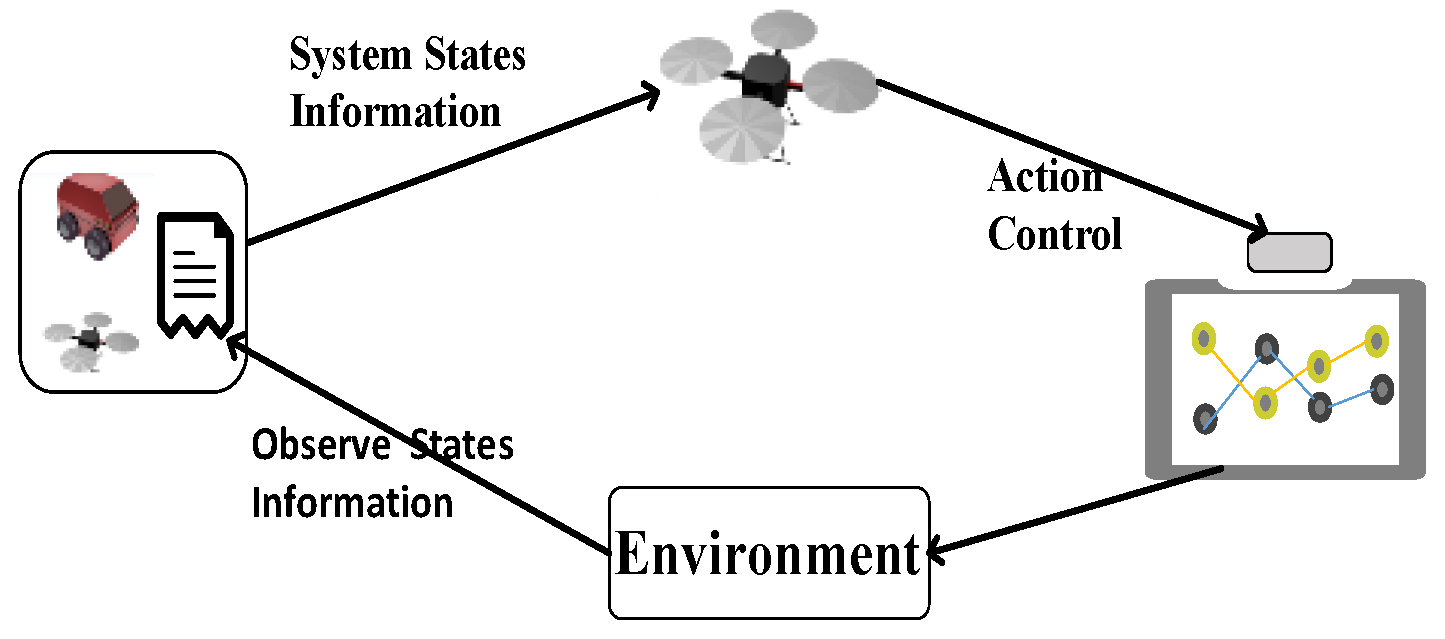

We decompose the problem of landing on a moving platform into two aspects: perception and relative pose estimation, and trajectory optimization and control. The reader is referred to [6,34] for algorithms designed specifically for landing on a platform using visual inputs from the UAV. In the present paper, we focus on trajectory planning and control, aiming to solve the control problem of UAV tracking and landing on a moving platform. We build a novel model-free tracking and landing algorithm to cope with sensor noise and intermittent measurements. First, by taking the sensor noise, intermittent measurements, and randomness of UAV movement into consideration, a novel dynamic model based on the partially observable Markov decision process (POMDP) [35] is built to describe the autonomous process of UAV tracking and landing. Then, an end-to-end neural network is used to approximate the action controller of UAV autonomous tracking and landing. Finally, a DRL-based algorithm is adopted to train the neural network to learn from the tracking and landing experience.

The rest of this article is organized as follows. In Section 2, we introduce a UAV autonomous tracking and landing model based on the POMDP. In Section 3, we discuss the hybrid strategy, which combines deep reinforcement learning with heuristic rules, to calculate the optimal control output and realize UAV autonomous tracking and landing tasks. In Section 4, we present the experimental results of our methods on the Modular Open Robots Simulation Engine (MORSE) simulator. Conclusions and future work are presented in Section 5.

3. UAV Autonomous Tracking and Landing Method Based on Hybrid Strategy

If the UAV autonomous landing problem could be described by an accurate mathematical model, we could solve the objective function of the Markov decision model (shown in Equation (5)) iteratively, using the direct or indirect method of optimal control theory, and thereby directly obtain the optimal decision strategy of the Markov decision process. However, as described in Section 2, the model of the target system is unknown and difficult to describe. Much of the current research [38,39] uses a reduced model of the UAV to generate the optimal trajectory for landing on a moving target. However, such an approximation may not be accurate, and, consequently, the landing performance is degraded. Besides, most methods take the state as input and assume that the observation of the state is accurate, which is unlikely to be true. Different from the previous definition of optimal control [40], the UAV optimal control method we propose refers to the control strategy that maximizes the action-value function (shown in Equation (5)) of the UAV. Aided by reinforcement learning, a model-free method is adopted, and a novel network is used to map the observation to the optimal action in an end-to-end way. In addition, we use the history of tracking and landing experience to train the network to learn the optimal tracking strategy. Once the platform is tracked, a rule-based landing strategy is used to land the UAV.

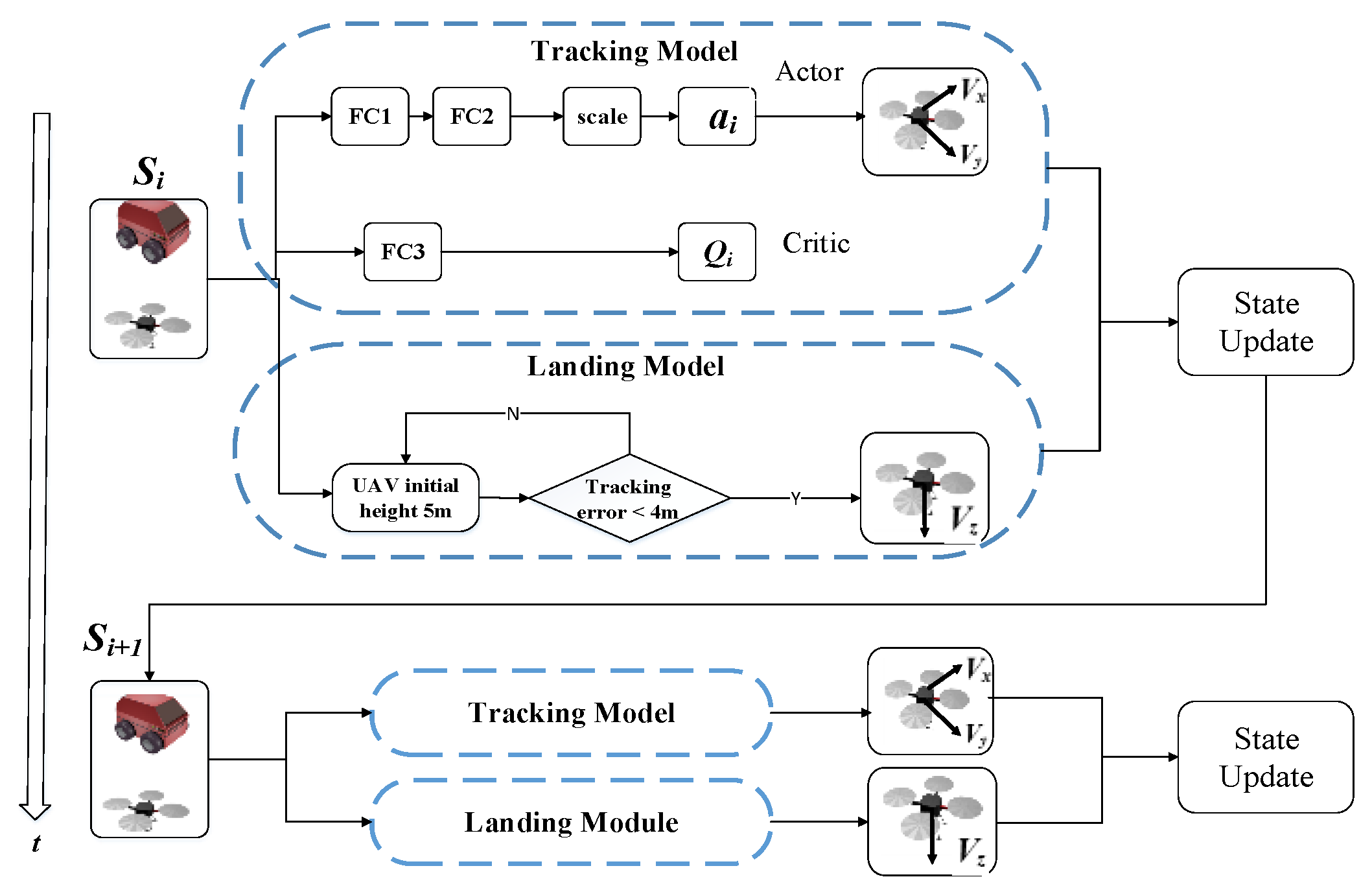

3.1. Hybrid Strategy Method

The hybrid strategy adopted in this paper is shown in Figure 4. The strategy consists of two parts: a tracking module and a landing module. The tracking module uses reinforcement learning to adjust the speed of the UAV in the horizontal direction, aiming to achieve stable tracking of the moving platform. The landing module adjusts the height of the UAV in the vertical direction based on heuristic rules, so as to land the UAV on the platform.

Tracking module: The UAV autonomous tracking and landing problem has a continuous state and decision space, and DDPG, which combines DQN and DPG, learns a deterministic strategy over a continuous action space. This method combines reinforcement learning with deep learning and has good potential for dealing with complex tasks. Thus, this network is introduced in this paper to map the observation to a proper action in an end-to-end way.

Details of the decision network structure are shown in Figure 4. The network adopts the actor-critic architecture [41], in which the input of the actor network is the system state, mainly comprising the motion state information of the UAV and the moving platform. The output layer is a two-dimensional continuous action, which, after scale conversion, corresponds to the speed of the UAV in the longitudinal and lateral directions. The critic network estimates the action-value function, which describes the expected reward after following policy $\pi$. Since the decision is a deterministic action, to ensure that the environment is fully explored during training, we construct a random action by adding noise sampled from a noise process $N_i$; the noise is only needed during training:

$$a_i = \mu(s_i \mid \theta^{\mu}) + N_i$$

where $a_i$ is the output action, $s_i$ represents the current state information, $\mu(s_i \mid \theta^{\mu})$ represents the decision taken when the policy parameter is $\theta^{\mu}$ in state $s_i$, and $N_i$ is the artificially added Gaussian noise, which is attenuated over time.
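As an illustration of this exploration scheme, the following minimal sketch adds zero-mean Gaussian noise with a decaying standard deviation to a deterministic action and disables the noise outside training. The initial standard deviation, the exponential decay schedule, the clipping range, and the function names are assumptions made for this example; the text only states that the noise is Gaussian and attenuated over time.

```python
import random


def decayed_sigma(step, sigma0=0.3, decay=0.999, sigma_min=0.01):
    """Noise standard deviation after `step` training steps (assumed schedule)."""
    return max(sigma_min, sigma0 * decay ** step)


def explore(policy_action, step, rng, training=True, low=-1.0, high=1.0):
    """Perturb a deterministic action with decaying Gaussian noise (training only)."""
    if not training:
        # At test time the deterministic policy output is used unchanged.
        return list(policy_action)
    sigma = decayed_sigma(step)
    noisy = [a + rng.gauss(0.0, sigma) for a in policy_action]
    # Clip so the perturbed action stays inside the assumed normalized range.
    return [min(high, max(low, a)) for a in noisy]


rng = random.Random(0)
action = [0.2, -0.5]  # hypothetical normalized longitudinal and lateral speeds
early = explore(action, step=0, rng=rng)       # strong exploration
late = explore(action, step=5000, rng=rng)     # noise has almost vanished
greedy = explore(action, step=0, rng=rng, training=False)
print(early, late, greedy)
```

Clipping after adding the noise keeps the perturbed command inside the range that is later rescaled to a physical speed.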

As shown in Figure 4, the network consists of three fully connected layers: the FC1 and FC3 layers are followed by the ReLU activation function, and the FC2 layer is followed by a tanh layer. The parameters of each layer in the network are shown in Table 1. Furthermore, the effects of different network parameters on the UAV tracking performance are shown in Appendix C.
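To make the layer ordering concrete, the sketch below runs a forward pass through three fully connected layers with the activations listed in the text (ReLU after FC1, tanh after FC2, ReLU after FC3). The layer widths, the six-dimensional state, and the weight initialization are hypothetical and are not taken from Table 1; only the two-dimensional output and the activation order follow the description.

```python
import math
import random

# Hypothetical sizes for illustration only (not the values of Table 1).
STATE_DIM, HIDDEN_DIM, ACTION_DIM = 6, 32, 2


def init_layer(n_in, n_out, rng):
    """Create (weights, bias) for a fully connected layer with small random weights."""
    bound = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-bound, bound) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def linear(x, layer):
    """Compute W x + b, where W is stored as a list of rows."""
    weights, bias = layer
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def actor_forward(state, layers):
    """Map a state vector to a two-dimensional action."""
    fc1, fc2, fc3 = layers
    h1 = [max(0.0, v) for v in linear(state, fc1)]   # FC1 followed by ReLU
    h2 = [math.tanh(v) for v in linear(h1, fc2)]     # FC2 followed by tanh
    return [max(0.0, v) for v in linear(h2, fc3)]    # FC3 followed by ReLU


rng = random.Random(0)
layers = [
    init_layer(STATE_DIM, HIDDEN_DIM, rng),
    init_layer(HIDDEN_DIM, HIDDEN_DIM, rng),
    init_layer(HIDDEN_DIM, ACTION_DIM, rng),
]
state = [0.5, -0.2, 0.1, 0.0, 0.3, -0.4]  # hypothetical relative motion state
action = actor_forward(state, layers)
print(action)  # two values, to be rescaled to longitudinal and lateral speeds
```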

Landing module: The landing module adjusts the height of the UAV in the vertical direction based on heuristic rules (shown in Table 2). As shown in Table 2, dist has been defined in Equation (4), and height denotes the relative height between the UAV and the moving platform. According to the rules table, the speed of the UAV in the vertical direction depends on the distance and height between the UAV and the moving platform. When the distance between the UAV and the moving platform is less than 4 m, the UAV should gradually reduce its height while ensuring stable target tracking. When the relative height between the UAV and the moving platform is less than 0.1 m and the horizontal distance error is less than 0.8 m, the landing task is considered successful. If the target is lost during landing, the UAV stops descending, gradually returns to its initial height, and re-plans the landing trajectory.
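The rule structure described above can be sketched as a small decision function. Only the thresholds (4 m, 0.1 m, and 0.8 m) come from the text; the vertical speed values, the status labels, and the function signature are hypothetical placeholders, since the actual entries of the rules table are not reproduced here.

```python
def landing_command(dist, height, target_visible, initial_height,
                    descend_speed=0.5, climb_speed=0.5):
    """Return (vertical_speed, status); a negative speed means descending.

    dist:   horizontal distance between the UAV and the platform, in metres
    height: relative height between the UAV and the platform, in metres
    The speed magnitudes are assumed values, not those of the rules table.
    """
    if not target_visible:
        # Target lost: stop descending and climb back toward the initial height.
        if height < initial_height:
            return climb_speed, "recovering"
        return 0.0, "searching"
    if height < 0.1 and dist < 0.8:
        return 0.0, "landed"
    if dist < 4.0:
        # Close enough horizontally: descend gradually while tracking continues.
        return -descend_speed, "descending"
    return 0.0, "tracking"


print(landing_command(5.0, 3.0, True, 3.0))
print(landing_command(2.0, 3.0, True, 3.0))
print(landing_command(0.5, 0.05, True, 3.0))
print(landing_command(2.0, 1.0, False, 3.0))
```

The horizontal speed is left to the tracking module in this sketch, so the function returns the vertical command only.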

3.2. Network Model Training

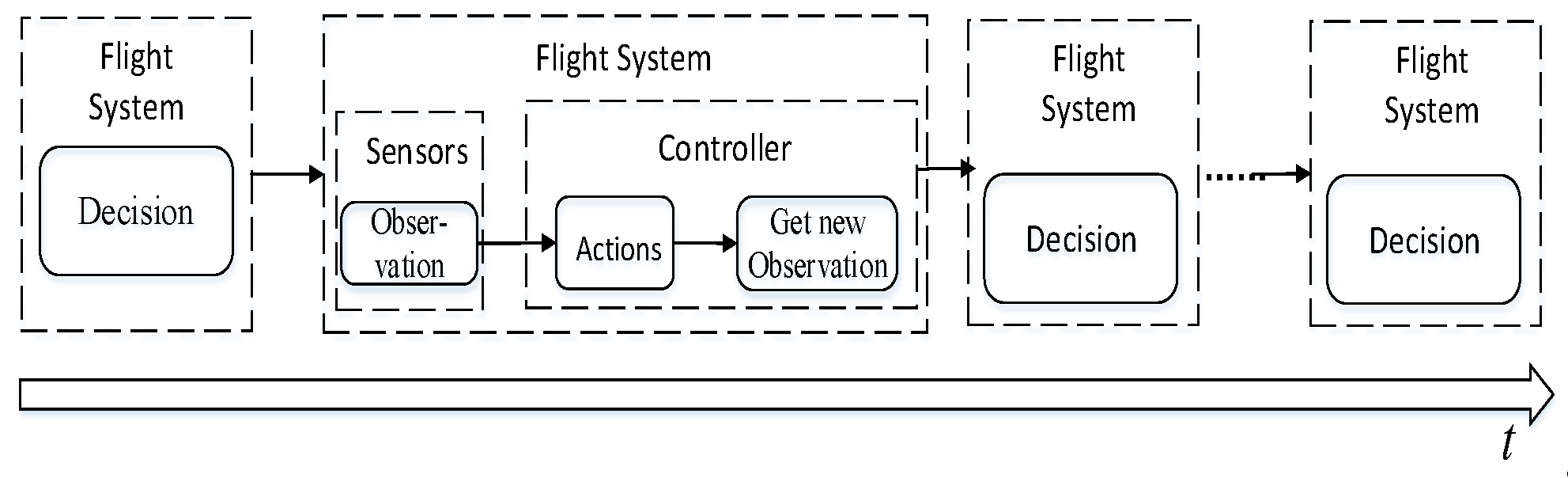

To train the UAV tracking neural network to learn the optimal tracking strategy, we adopted the reinforcement learning process shown in Figure 5. At each step, the UAV receives a partial observation of the state and then interacts with the environment through an action while receiving an immediate reward signal. After multi-step decisions, the agent gains decision-making experience, so as to obtain more cumulative reward and maximize the action-value function (defined in Section 2).

One challenge when training a neural network is that the data generated by sequential exploration in the UAV autonomous tracking and landing environment are correlated. To address this issue, a replay buffer is used. A control experience tuple is defined as $D_i = \{s_i, a_i, r_i, s_{i+1}\}$, recording the UAV input state at time $i$, the output control action, the received reward, and the state obtained at the next time $i + 1$. The tuple is stored in the replay buffer, and the neural network is updated using mini-batches sampled uniformly at random from the replay buffer.
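A replay buffer of this kind can be sketched in a few lines. The class name, the fixed capacity with first-in-first-out eviction, and the example values are assumptions made for illustration; the text specifies only the stored tuple and uniform random mini-batch sampling.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size store of (state, action, reward, next_state) tuples."""

    def __init__(self, capacity, seed=None):
        # A deque with maxlen drops the oldest experience once it is full.
        self.buffer = deque(maxlen=capacity)
        self.rng = random.Random(seed)

    def store(self, state, action, reward, next_state):
        self.buffer.append((state, action, reward, next_state))

    def sample(self, batch_size):
        """Draw a mini-batch uniformly at random, without replacement."""
        return self.rng.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)


buffer = ReplayBuffer(capacity=3, seed=0)
for i in range(5):
    # Hypothetical one-dimensional states and actions, for illustration only.
    buffer.store([float(i)], [0.1 * i], -1.0, [float(i + 1)])

batch = buffer.sample(2)
print(len(buffer), batch)
```

Sampling uniformly from the whole buffer, rather than using consecutive steps, breaks the temporal correlation between the samples in a mini-batch.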

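The decision network training method is now presented. The critic network approximates the value function by minimizing the loss function, which is defined as

$$L = \frac{1}{N} \sum_{i} \left( Q(s_i, a_i \mid \theta^{Q}) - \left( r_i + \gamma\, Q'\!\left(s_{i+1}, \mu'(s_{i+1} \mid \theta^{\mu'}) \mid \theta^{Q'}\right) \right) \right)^2$$

where $L$ is the loss function, $N$ is the mini-batch size, $Q(s_i, a_i \mid \theta^{Q})$ is the estimate of the Q value at time $i$ (the latter two terms, computed with the target networks $Q'$ and $\mu'$, form the actual Q value after taking the action $a_i$ at time $i$), $\gamma$ is the discount factor, and $r_i$ is the immediate reward.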
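As a worked numerical example of the critic objective, the sketch below forms the target value from the immediate reward and the discounted next-step Q value and then averages the squared error over a mini-batch. The function names and all numbers are hypothetical; in practice the next-step Q values would be produced by a network rather than supplied as a list.

```python
def td_targets(rewards, next_q_values, gamma):
    """Target value: immediate reward plus discounted next-step Q value."""
    return [r + gamma * q_next for r, q_next in zip(rewards, next_q_values)]


def critic_loss(q_estimates, targets):
    """Mean squared error between the estimated and target Q values."""
    n = len(q_estimates)
    return sum((q - y) ** 2 for q, y in zip(q_estimates, targets)) / n


# Hypothetical mini-batch of two transitions.
rewards = [1.0, 0.0]
next_q_values = [2.0, 4.0]   # Q values of the next states (assumed given)
q_estimates = [1.0, 3.0]     # current critic estimates for (s_i, a_i)

targets = td_targets(rewards, next_q_values, gamma=0.5)
loss = critic_loss(q_estimates, targets)
print(targets, loss)  # [2.0, 2.0] 1.0
```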

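In the actor network, a neural network is likewise used to approximate the strategy function, and the actor policy is updated to output the optimal decision on the basis of the current state. The update formula is

$$\nabla_{\theta^{\mu}} J \approx \frac{1}{N} \sum_{i} \nabla_{a} Q(s, a \mid \theta^{Q}) \big|_{s = s_i,\, a = \mu(s_i)} \, \nabla_{\theta^{\mu}} \mu(s \mid \theta^{\mu}) \big|_{s = s_i}$$

where $\nabla_{\theta^{\mu}} J$ represents the gradient direction of the Q value caused by the strategy $\mu$, along which the policy parameter $\theta^{\mu}$ is updated, $\nabla_{a} Q(s, a \mid \theta^{Q})$ represents the change in the Q value generated by the action $\mu(s_i)$ in the current state, and $\nabla_{\theta^{\mu}} \mu(s \mid \theta^{\mu})$ represents the current policy gradient direction.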
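The chain rule behind this actor update can be illustrated with a toy problem that is small enough to check by hand. The scalar linear actor, the quadratic critic, the learning rate, and the sample states below are all assumptions chosen for the example and are unrelated to the networks used in this paper.

```python
def actor(theta, s):
    """Toy deterministic policy: action = theta * s."""
    return theta * s


def dq_da(s, a):
    """Gradient with respect to the action of the toy critic Q(s, a) = -(a - 2s)^2."""
    return -2.0 * (a - 2.0 * s)


def policy_gradient(theta, states):
    """Average over the batch of dQ/da (at a = actor(s)) times d(actor)/d(theta)."""
    grads = [dq_da(s, actor(theta, s)) * s for s in states]  # d(actor)/d(theta) = s
    return sum(grads) / len(grads)


states = [1.0, 2.0]   # hypothetical mini-batch of states
theta = 0.0
first_gradient = policy_gradient(theta, states)

# Gradient ascent on the Q value: move theta along the policy gradient.
for _ in range(50):
    theta += 0.1 * policy_gradient(theta, states)

print(first_gradient, theta)  # theta approaches 2, where a = 2s maximizes Q
```

For this toy critic the best action is $a = 2s$, so the parameter converges to 2; the actor is improved using only the critic's gradient with respect to the action, without a model of the dynamics.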

To ensure the stability of the learning process, in this paper, the target networks $\mu'$ and $Q'$ are created as copies of the actor and critic networks, respectively, and are used for calculating the target values. The parameter update formula is