Optimization of Sparse Cross Array Synthesis via Perturbed Convex Optimization

Abstract

1. Introduction

2. Methods

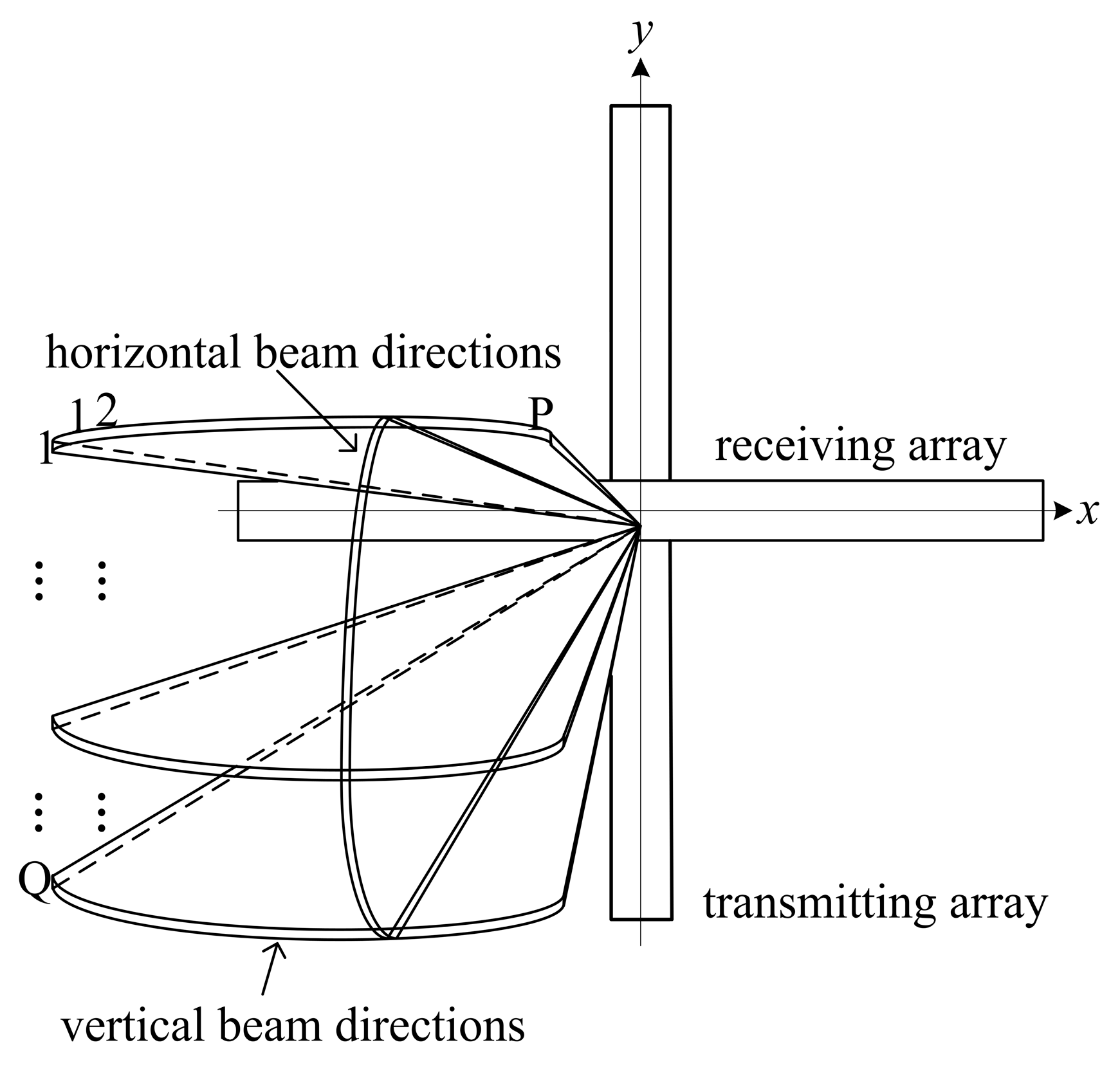

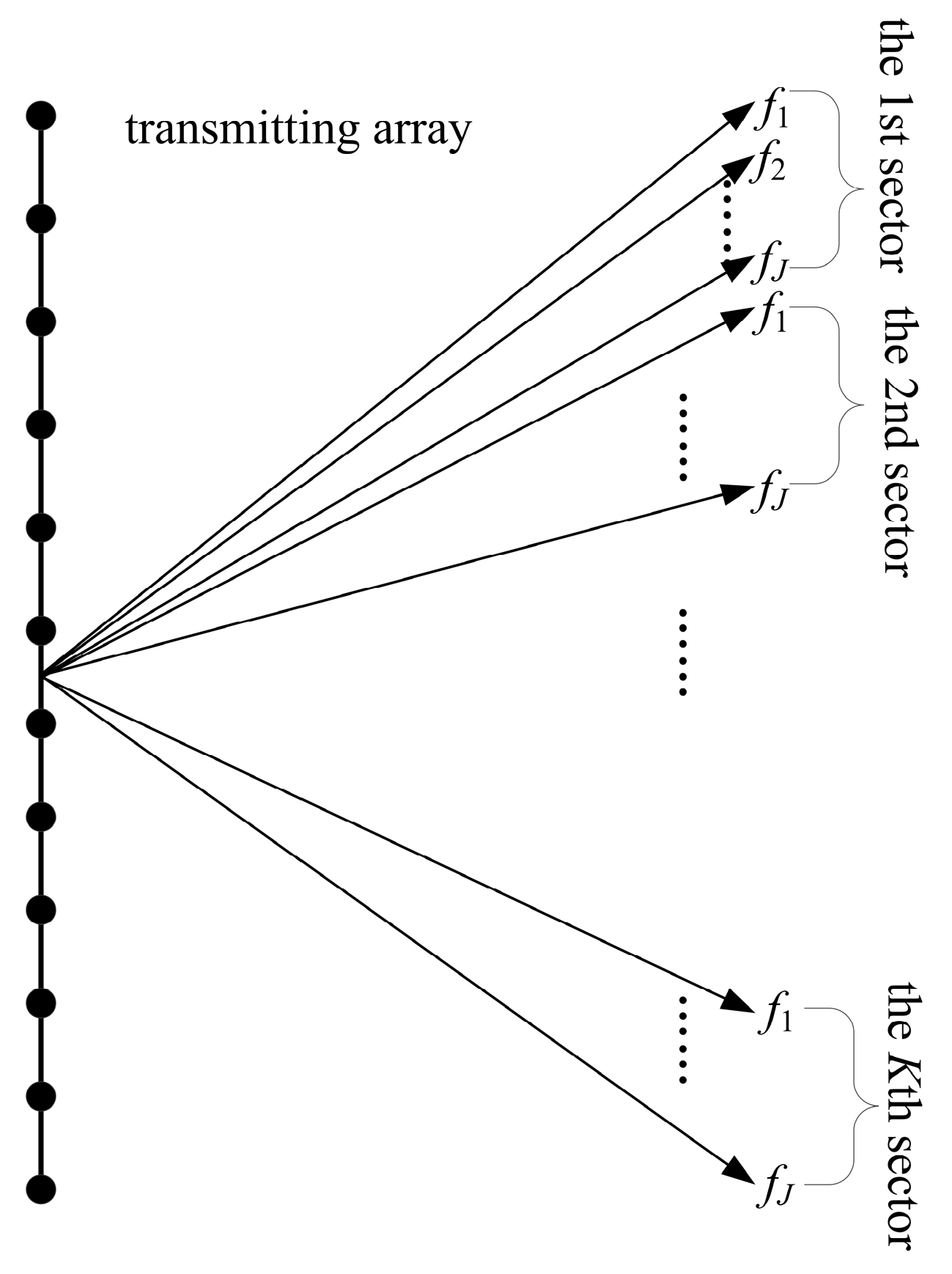

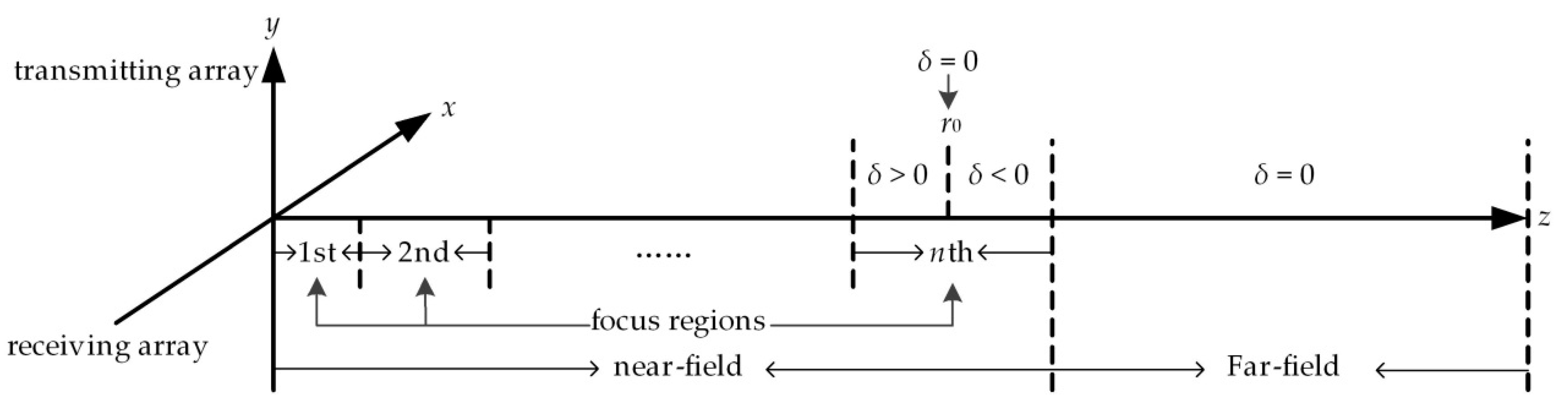

2.1. Multi-Frequency Cross Array Beamforming in the Near-Field and Far-Field

2.2. Sparse Cross Array Synthesis Method

2.2.1. Iterative Reweighted ℓ1 Minimization

2.2.2. Perturbed Convex Optimization

3. Results

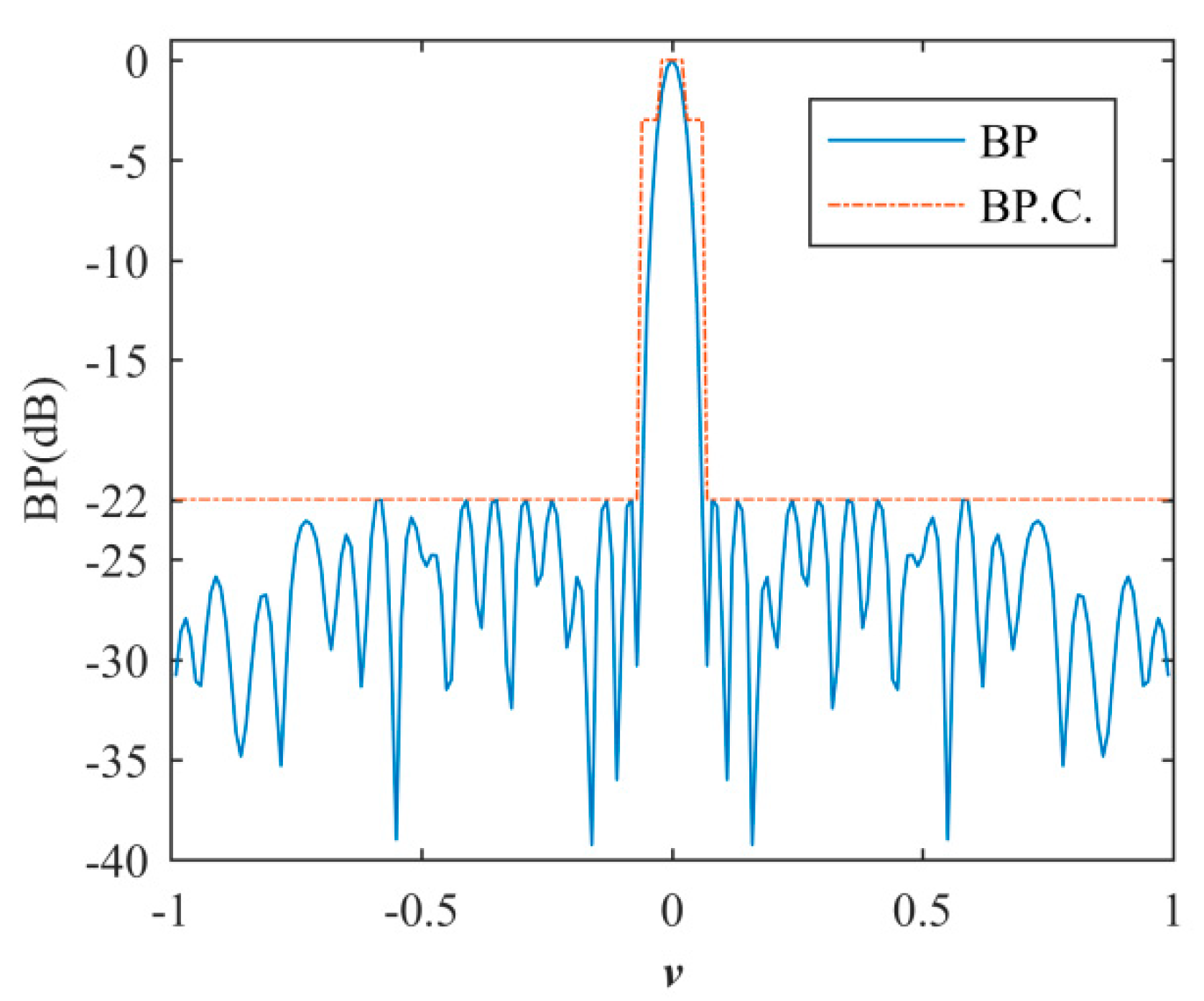

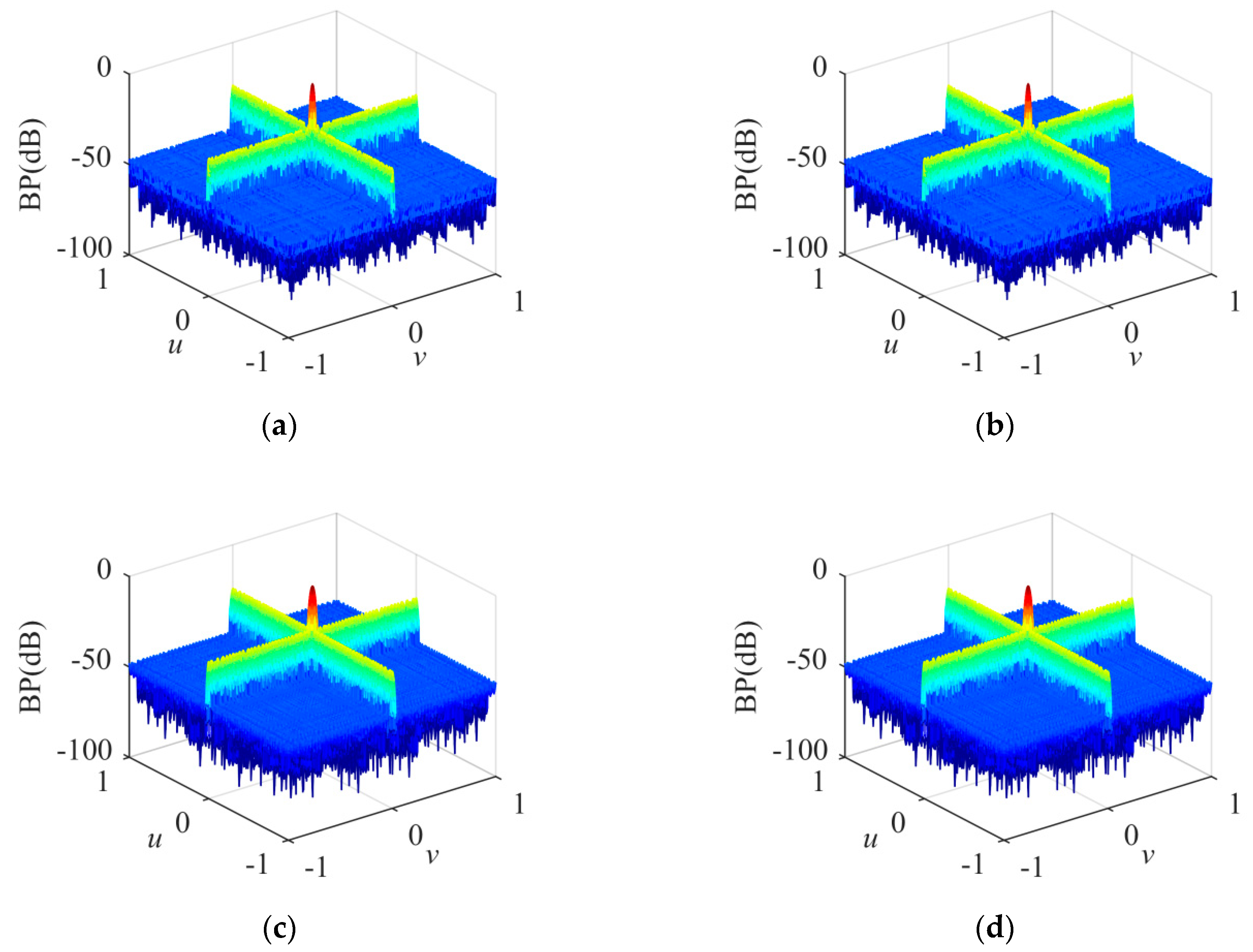

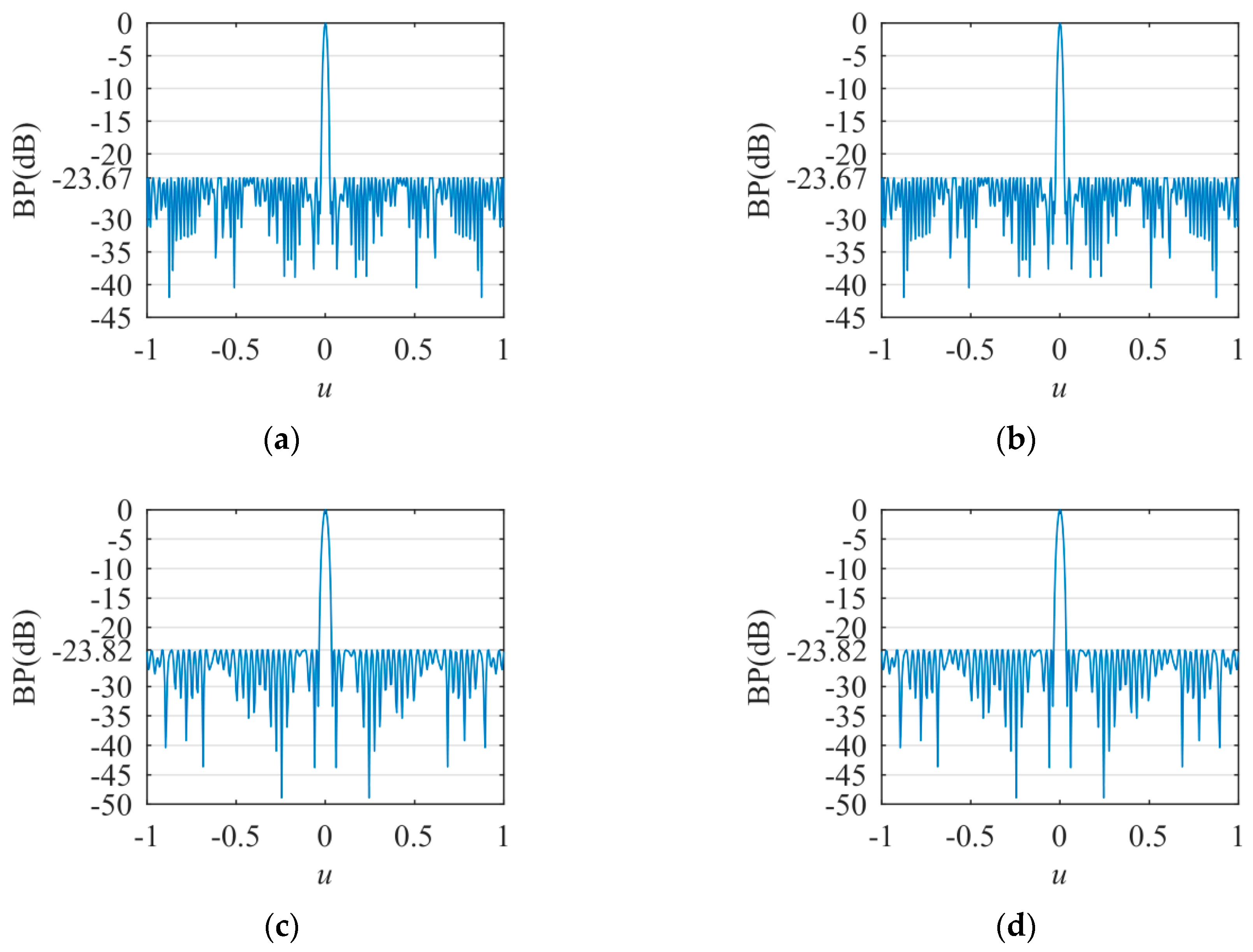

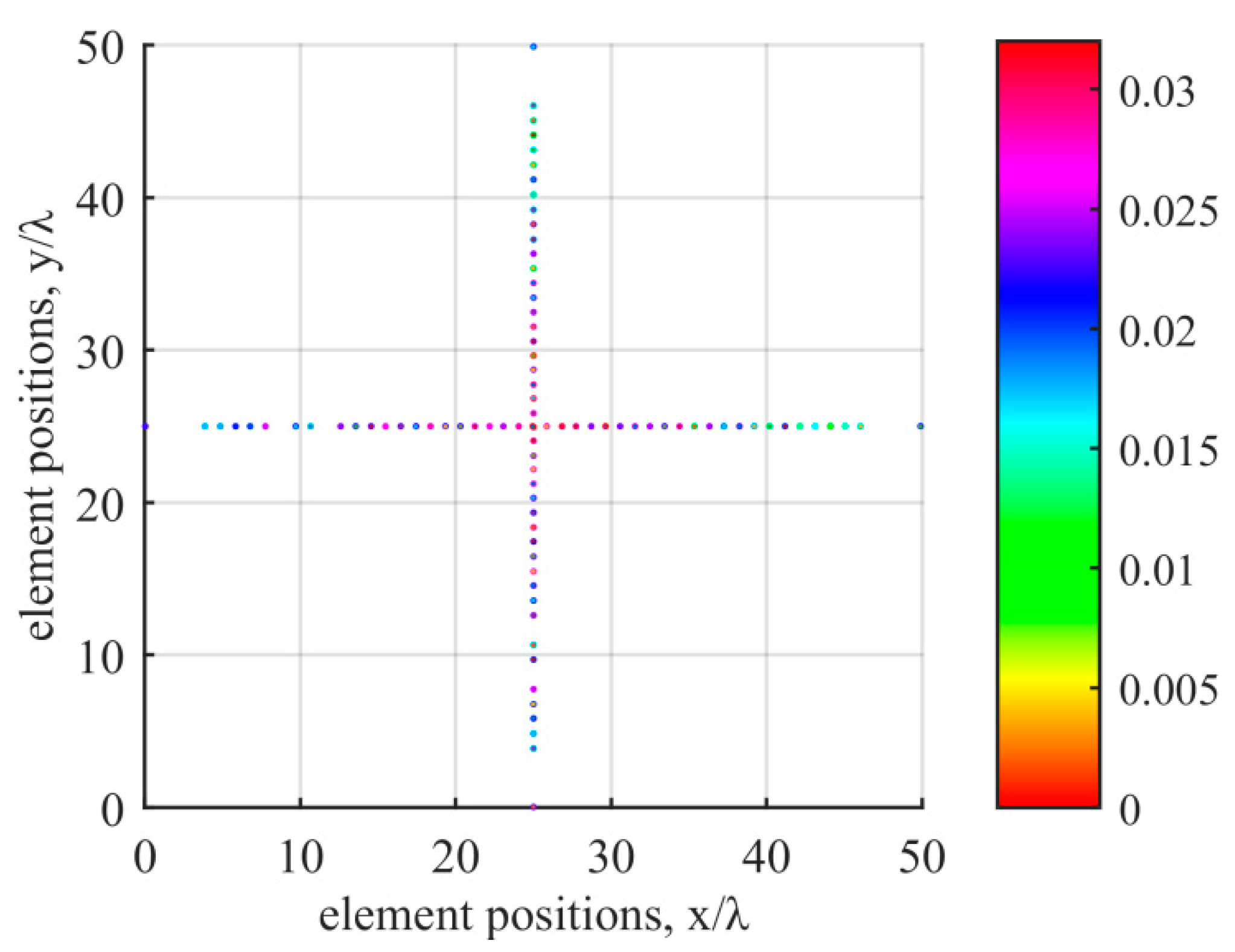

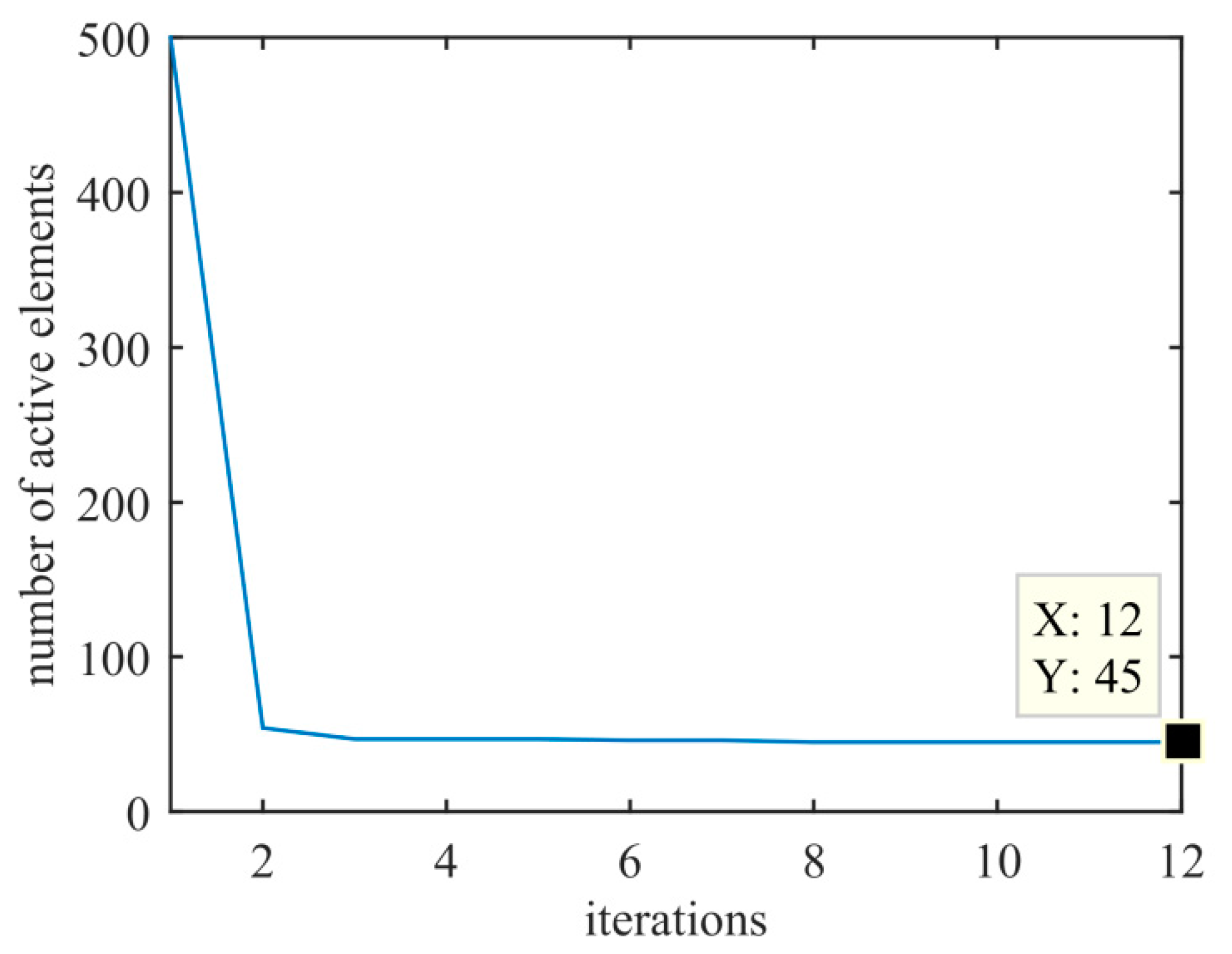

3.1. Sparse Cross Array Synthesis

3.2. Flat-Top BP Synthesis

3.3. Asymmetric Sidelobe BP Synthesis

4. Discussion

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A

| Position (mm) | Weight (10⁻²) | Position (mm) | Weight (10⁻²) | Position (mm) | Weight (10⁻²) |
|---|---|---|---|---|---|
| 0.1038 | 2.2558 | 96.6578 | 2.2060 | 167.1522 | 2.2554 |
| 19.3194 | 1.7564 | 101.4893 | 2.2573 | 171.9609 | 2.6090 |
| 24.2500 | 1.8013 | 106.1168 | 2.6589 | 176.7500 | 1.2704 |
| 29.1723 | 2.0939 | 110.8537 | 2.7175 | 181.5949 | 2.4367 |
| 33.8341 | 2.0196 | 115.2992 | 2.4747 | 186.2500 | 1.8669 |
| 38.7665 | 2.5215 | 120.2500 | 2.8603 | 191.2500 | 2.2790 |
| 48.4842 | 2.0765 | 124.7500 | 3.1827 | 196.0028 | 1.7698 |
| 53.2712 | 1.7036 | 129.2546 | 2.7464 | 200.9293 | 1.2983 |
| 62.9700 | 2.4015 | 134.1519 | 3.0842 | 205.9162 | 2.0464 |
| 67.8223 | 2.0669 | 138.6537 | 2.8710 | 210.7037 | 1.3387 |
| 72.7500 | 2.3144 | 143.5647 | 2.3834 | 215.5961 | 1.6287 |
| 77.3822 | 2.7078 | 148.1585 | 3.1962 | 220.5116 | 1.0982 |
| 82.2757 | 2.3153 | 152.8504 | 2.3721 | 225.2500 | 1.4520 |
| 87.1984 | 2.1662 | 157.6643 | 2.5370 | 230.1645 | 1.5396 |
| 91.8423 | 2.8201 | 162.4364 | 2.4557 | 249.5222 | 2.0865 |

References

1. Hansen, R.K.; Andersen, P.A. The application of real time 3D acoustical imaging. In Proceedings of the IEEE Oceanic Engineering Society, Nice, France, 28 September–1 October 1998.

2. Wachowski, N.; Azimi-Sadjadi, M.R. A New Synthetic Aperture Sonar Processing Method Using Coherence Analysis. IEEE J. Ocean. Eng. 2011, 36, 665–678.

3. Negahdaripour, S.; Madjidi, H. Stereovision imaging on submersible platforms for 3-D mapping of benthic habitats and sea-floor structures. IEEE J. Ocean. Eng. 2003, 28, 625–650.

4. Murino, V.; Trucco, A. Three-dimensional image generation and processing in underwater acoustic vision. Proc. IEEE 2000, 88, 1903–1948.

5. Trucco, A.; Palmese, M.; Repetto, S. Devising an Affordable Sonar System for Underwater 3-D Vision. IEEE Trans. Instrum. Meas. 2008, 57, 2348–2354.

6. Karaman, M.; Wygant, I.O.; Oralkan, O.; Khuri-Yakub, B. Minimally Redundant 2-D Array Designs for 3-D Medical Ultrasound Imaging. IEEE Trans. Med. Imaging 2009, 28, 1051–1061.

7. Okino, M.; Higashi, Y. Measurement of seabed topography by multibeam sonar using CFFT. IEEE J. Ocean. Eng. 1986, 11, 474–479.

8. Henderson, J.; Lacker, S. Seafloor profiling by a wideband sonar: Simulation, frequency-response optimization, and results of a brief sea test. IEEE J. Ocean. Eng. 1989, 14, 94–107.

9. Liu, X.; Zhou, F.; Zhou, H.; Tian, X.; Jiang, R.; Chen, Y. A Low-Complexity Real-Time 3-D Sonar Imaging System with a Cross Array. IEEE J. Ocean. Eng. 2015, 41, 262–273.

10. Wilson, M.; McHugh, R. Sparse-periodic hybrid array beamformer. IET Radar Sonar Navig. 2007, 1, 116.

11. Pinchera, D.; Migliore, M.D.; Schettino, F.; Lucido, M.; Panariello, G. An Effective Compressed-Sensing Inspired Deterministic Algorithm for Sparse Array Synthesis. IEEE Trans. Antennas Propag. 2018, 66, 149–159.

12. Trucco, A. Thinning and weighting of large planar arrays by simulated annealing. IEEE Trans. Ultrason. Ferroelectr. Freq. Control 1999, 46, 347–355.

13. Chen, P.; Zheng, Y.; Zhu, W. Optimized Simulated Annealing Algorithm for Thinning and Weighting Large Planar Arrays in Both Far-Field and Near-Field. IEEE J. Ocean. Eng. 2011, 36, 658–664.

14. Liu, X.; Zhou, H.; Tian, X.; Zhou, F. Synthesis of extreme sparse array for real-time 3D acoustic imaging. Electron. Lett. 2015, 51, 803–804.

15. Haupt, R. Thinned arrays using genetic algorithms. IEEE Trans. Antennas Propag. 1994, 42, 993–999.

16. Quevedo-Teruel, O.; Rajo-Iglesias, E. Ant Colony Optimization in Thinned Array Synthesis with Minimum Sidelobe Level. IEEE Antennas Wirel. Propag. Lett. 2006, 5, 349–352.

17. Hooker, J.W.; Arora, R.K. Optimal Thinning Levels in Linear Arrays. IEEE Antennas Wirel. Propag. Lett. 2010, 9, 771–774.

18. Gu, B.; Chen, Y.; Liu, X.; Zhou, F.; Jiang, R. A Distributed Convex Optimization Compressed Sensing Method for Sparse Planar Array Synthesis in 3-D Imaging Sonar Systems. IEEE J. Ocean. Eng. 2020, 45, 1022–1033.

19. Nai, S.E.; Ser, W.; Yu, Z.L.; Chen, H. Beampattern Synthesis for Linear and Planar Arrays with Antenna Selection by Convex Optimization. IEEE Trans. Antennas Propag. 2010, 58, 3923–3930.

20. Oliveri, G.; Massa, A. Bayesian Compressive Sampling for Pattern Synthesis with Maximally Sparse Non-Uniform Linear Arrays. IEEE Trans. Antennas Propag. 2010, 59, 467–481.

21. Oliveri, G.; Carlin, M.; Massa, A. Complex-Weight Sparse Linear Array Synthesis by Bayesian Compressive Sampling. IEEE Trans. Antennas Propag. 2012, 60, 2309–2326.

22. Oliveri, G.; Bekele, E.T.; Robol, F.; Massa, A. Sparsening Conformal Arrays Through a Versatile BCS-based method. IEEE Trans. Antennas Propag. 2014, 62, 1681–1689.

23. Yan, F.; Yang, F.; Dong, T.; Yang, P. Synthesis of planar sparse arrays by perturbed compressive sampling framework. IET Microw. Antennas Propag. 2016, 10, 1146–1153.

24. Yan, F.; Yang, P.; Yang, F.; Zhou, L.; Gao, M. Synthesis of Pattern Reconfigurable Sparse Arrays with Multiple Measurement Vectors FOCUSS Method. IEEE Trans. Antennas Propag. 2017, 65, 602–611.

25. Fuchs, B. Synthesis of Sparse Arrays with Focused or Shaped Beampattern via Sequential Convex Optimizations. IEEE Trans. Antennas Propag. 2012, 60, 3499–3503.

26. D’Urso, M.; Prisco, G.; Tumolo, R.M. Maximally Sparse, Steerable, and Nonsuperdirective Array Antennas via Convex Optimizations. IEEE Trans. Antennas Propag. 2016, 64, 3840–3849.

27. Pinchera, D.; Migliore, M.D.; Panariello, G. Synthesis of Large Sparse Arrays Using IDEA (Inflating-Deflating Exploration Algorithm). IEEE Trans. Antennas Propag. 2018, 66, 4658–4668.

28. Buttazzoni, G.; Vescovo, R. Synthesis of sparse arrays radiating shaped beams. In Proceedings of the 2016 IEEE International Symposium on Antennas and Propagation (APSURSI), Fajardo, Puerto Rico, 26 June–1 July 2016; pp. 759–760.

29. Liang, L.; Jin, C.; Li, H.; Liu, J.; Jiang, Y.; Zhou, J. A hybrid algorithm of orthogonal perturbation method and convex optimization for beamforming of sparse antenna array. Electromagnetics 2020, 40, 227–243.

30. Zheng, M.-Y.; Chen, K.; Wu, H.-G.; Liu, X.-P. Sparse Planar Array Synthesis Using Matrix Enhancement and Matrix Pencil. Int. J. Antennas Propag. 2013, 2013, 1–7.

31. Zhao, D.; Liu, X.; Chen, W.; Chen, Y. Optimized Design for Sparse Cross Arrays in Both Near-Field and Far-Field. IEEE J. Ocean. Eng. 2019, 44, 783–795.

32. Candes, E.J.; Wakin, M.B.; Boyd, S.P. Enhancing Sparsity by Reweighted ℓ1 Minimization. J. Fourier Anal. Appl. 2008, 14, 877–905.

33. CVX: MATLAB Software for Disciplined Convex Programming. Available online: http://cvxr.com/cvx/ (accessed on 5 July 2020).

34. Toh, K.C.; Todd, M.J.; Tütüncü, R.H. Solving semidefinite-quadratic-linear programs using SDPT3. Math. Program. 2003, 95, 189–217.

35. Helmberg, C.; Rendl, F.; Vanderbei, R.J.; Wolkowicz, H. An Interior-Point Method for Semidefinite Programming. SIAM J. Optim. 1996, 6, 342–361.

36. Kojima, M.; Shindoh, S.; Hara, S. Interior-Point Methods for the Monotone Semidefinite Linear Complementarity Problem in Symmetric Matrices. SIAM J. Optim. 1997, 7, 86–125.

37. Monteiro, R.D.C. Primal-Dual Path-Following Algorithms for Semidefinite Programming. SIAM J. Optim. 1997, 7, 663–678.

38. Nesterov, Y.; Todd, M.J. Self-scaled barriers and interior-point methods in convex programming. Math. Oper. Res. 1997, 22, 1–42.

39. Mehrotra, S. On the Implementation of a Primal-Dual Interior Point Method. SIAM J. Optim. 1992, 2, 575–601.

| Method | Ns ¹ | Near-Field SLP (dB) | Far-Field SLP (dB) | RES (°) ² |
|---|---|---|---|---|
| Liu et al. [14] | 110 | −18.7 | −22 | 1.28 |
| Zhao et al. [31], 1st optimization | 118 | −21.61 | −21.9 | 1.28 |
| Zhao et al. [31], 2nd optimization | 107 | - | −22 | 1.28 |
| Proposed method | 90 | −23.67 | −23.67 | 1.22 |

| Method | Ns ¹ | SLP (dB) | Beam Width (°) |
|---|---|---|---|
| Liang et al. [29] | 20 | −33.25 | 41.0 |
| Proposed method | 18 | −35.27 | 41.4 |

| Position (λ) | Weight | Position (λ) | Weight | Position (λ) | Weight |
|---|---|---|---|---|---|
| 0.48 | 0.0080 | 4.93 | −0.0685 | 9.05 | −0.0753 |
| 0.78 | 0.0011 | 5.25 | −0.0421 | 10.00 | 0.0169 |
| 1.14 | 0.0266 | 6.34 | 0.2849 | 10.40 | 0.0479 |
| 2.31 | −0.0266 | 6.99 | 0.4608 | 11.68 | −0.0272 |
| 3.58 | 0.0454 | 7.64 | 0.2938 | 12.85 | 0.0145 |
| 3.98 | 0.0225 | 8.74 | −0.0365 | 13.26 | 0.0115 |
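The 18 position and weight pairs in the table above (matching the Ns = 18 row of the preceding comparison) can be turned into a beam pattern with the standard narrowband far-field array factor, AF(u) = Σ w_n exp(j2π x_n u), where x_n is in wavelengths and u = sin θ. The following is a minimal sketch under that assumption only; the function names `array_factor` and `beam_pattern_db` and the 0.5° angle grid are illustrative choices, not taken from the paper, and the sketch makes no claim to reproduce the reported sidelobe peak or beam width.

```python
import cmath
import math

# Element positions (in wavelengths) and real weights, read column by column
# from the table above.
POSITIONS = [0.48, 0.78, 1.14, 2.31, 3.58, 3.98,
             4.93, 5.25, 6.34, 6.99, 7.64, 8.74,
             9.05, 10.00, 10.40, 11.68, 12.85, 13.26]
WEIGHTS = [0.0080, 0.0011, 0.0266, -0.0266, 0.0454, 0.0225,
           -0.0685, -0.0421, 0.2849, 0.4608, 0.2938, -0.0365,
           -0.0753, 0.0169, 0.0479, -0.0272, 0.0145, 0.0115]


def array_factor(u, positions, weights):
    """Complex far-field array factor at u = sin(theta).

    Assumes a narrowband linear array with positions given in wavelengths.
    """
    return sum(w * cmath.exp(2j * math.pi * x * u)
               for x, w in zip(positions, weights))


def beam_pattern_db(angles_deg, positions, weights):
    """Beam pattern magnitude in dB, normalized so the peak is 0 dB."""
    mags = [abs(array_factor(math.sin(math.radians(a)), positions, weights))
            for a in angles_deg]
    peak = max(mags)
    # Floor the ratio to avoid log10(0) at exact nulls.
    return [20.0 * math.log10(max(m / peak, 1e-12)) for m in mags]


# Illustrative angle grid: -90 deg to 90 deg in 0.5 deg steps.
angles = [0.5 * k for k in range(-180, 181)]
pattern = beam_pattern_db(angles, POSITIONS, WEIGHTS)
```

Plotting `pattern` against `angles` gives a quick visual check of the mainlobe shape and sidelobe region for this weight set; a finer angle grid would be needed before reading off a sidelobe peak or beam width.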

| Method | Ns ¹ | Left SLP (dB) | Right SLP (dB) | Beam Width (°) |
|---|---|---|---|---|
| Liang et al. [29] | 20 | −37.02 | −25.46 | 6.88 |
| Proposed method | 14 | −37.01 | −26.00 | 6.21 |

| Position (λ) | Weight magnitude | Weight phase (rad) | Position (λ) | Weight magnitude | Weight phase (rad) |
|---|---|---|---|---|---|
| 0.00 | 0.005 | 0.1284 | 5.57 | 0.1292 | −0.0293 |
| 0.81 | 0.0188 | 0.5582 | 6.42 | 0.1225 | −0.0334 |
| 1.51 | 0.0387 | 0.3489 | 7.26 | 0.1094 | −0.0626 |
| 2.28 | 0.0625 | 0.2164 | 8.08 | 0.0885 | −0.1500 |
| 3.07 | 0.0842 | 0.0674 | 8.91 | 0.0641 | −0.1971 |
| 3.92 | 0.1040 | 0.1162 | 9.72 | 0.0388 | −0.2684 |
| 4.75 | 0.1232 | 0.0733 | 10.48 | 0.0264 | −0.5216 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Gu, B.; Chen, Y.; Jiang, R.; Liu, X. Optimization of Sparse Cross Array Synthesis via Perturbed Convex Optimization. Sensors 2020, 20, 4929. https://doi.org/10.3390/s20174929
