Low-Cost Curb Detection and Localization System Using Multiple Ultrasonic Sensors

Abstract

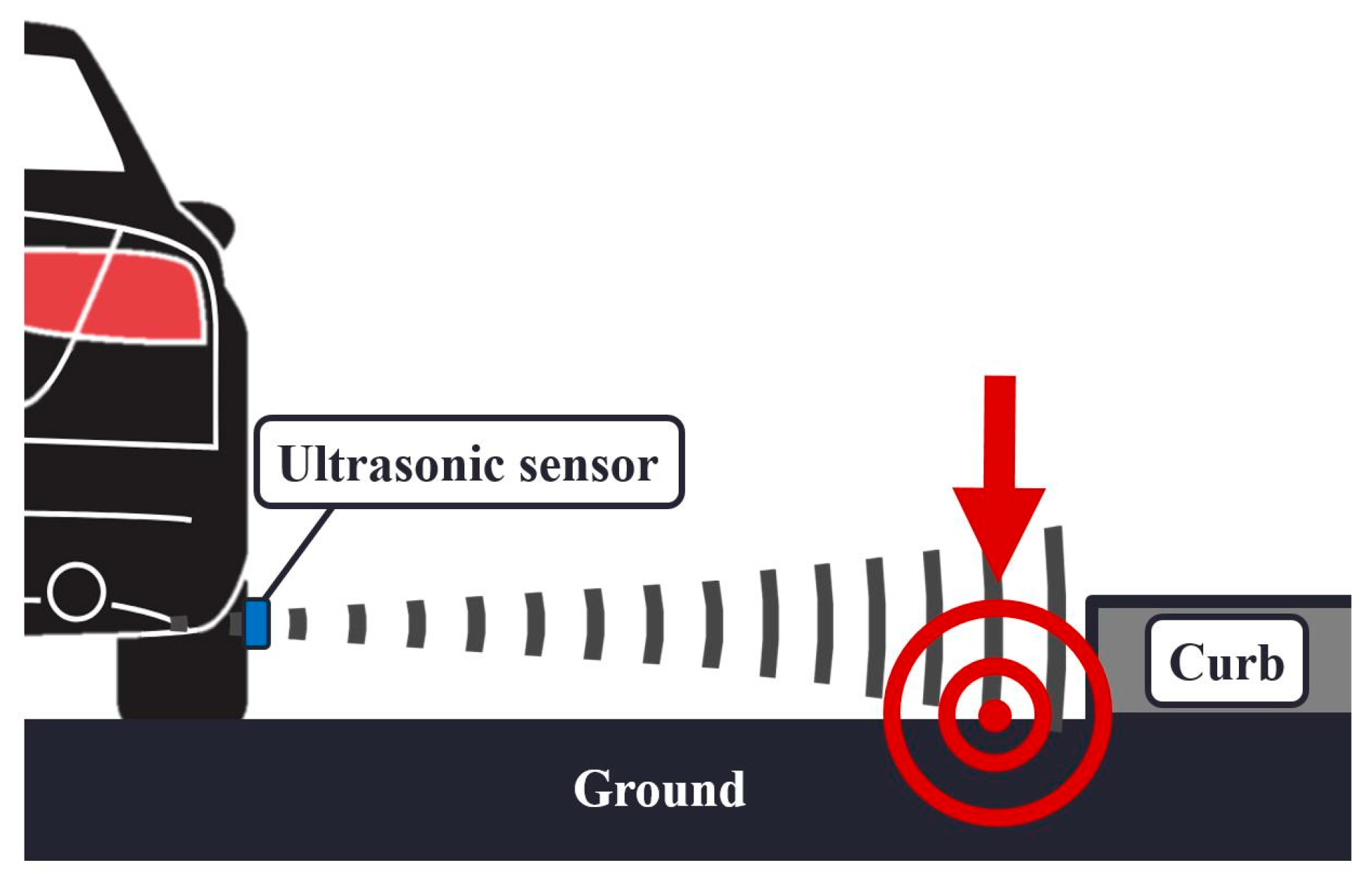

1. Introduction

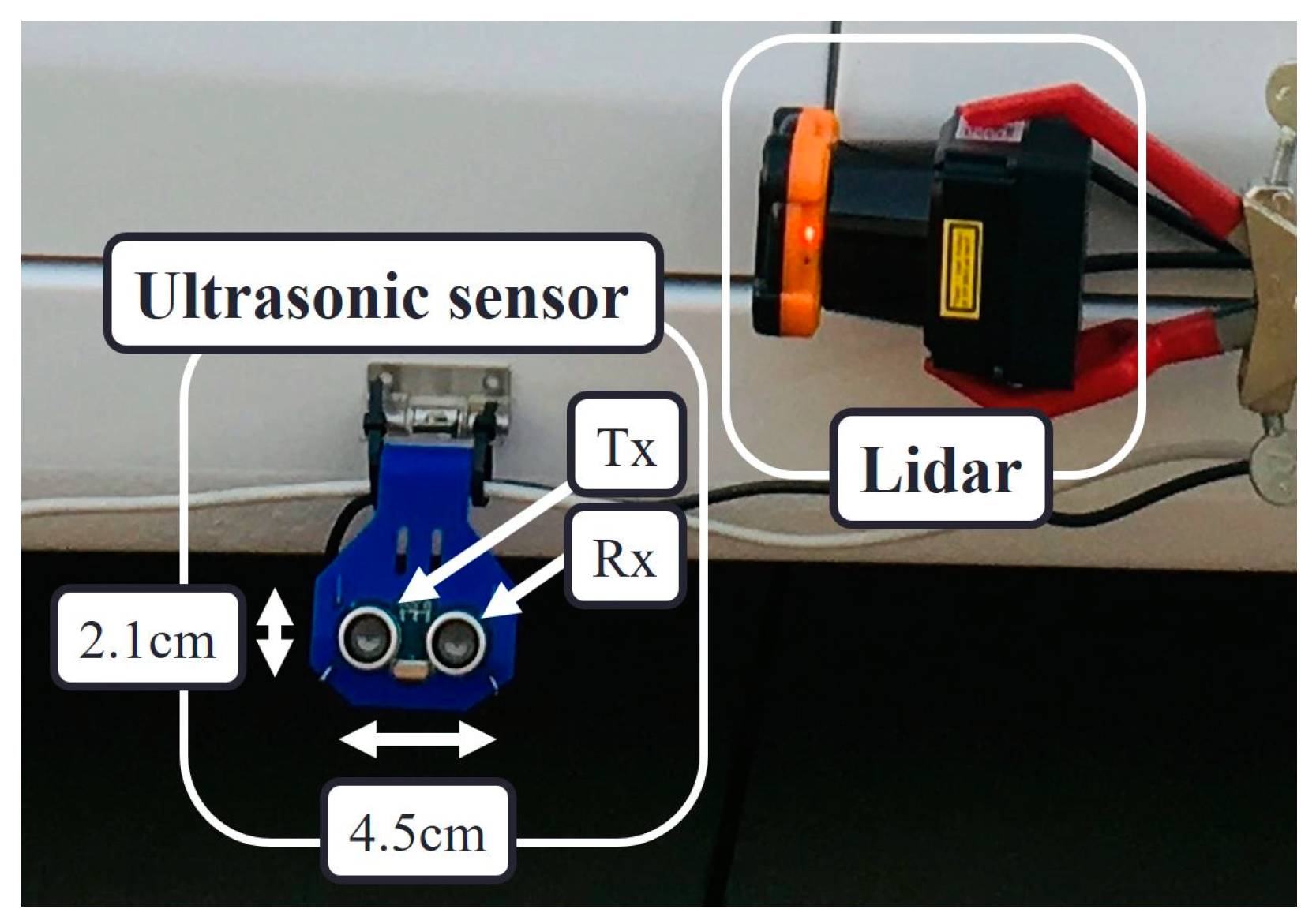

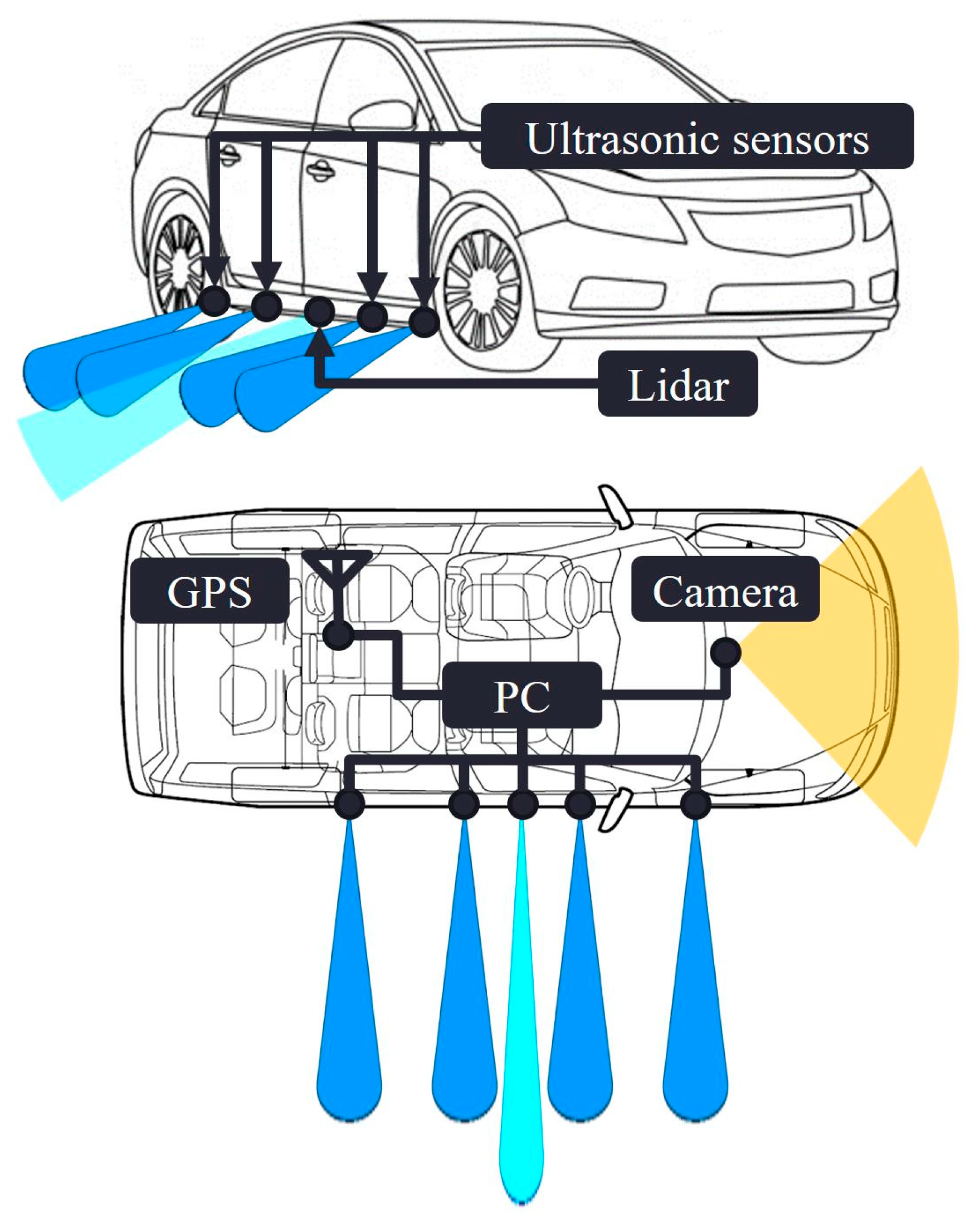

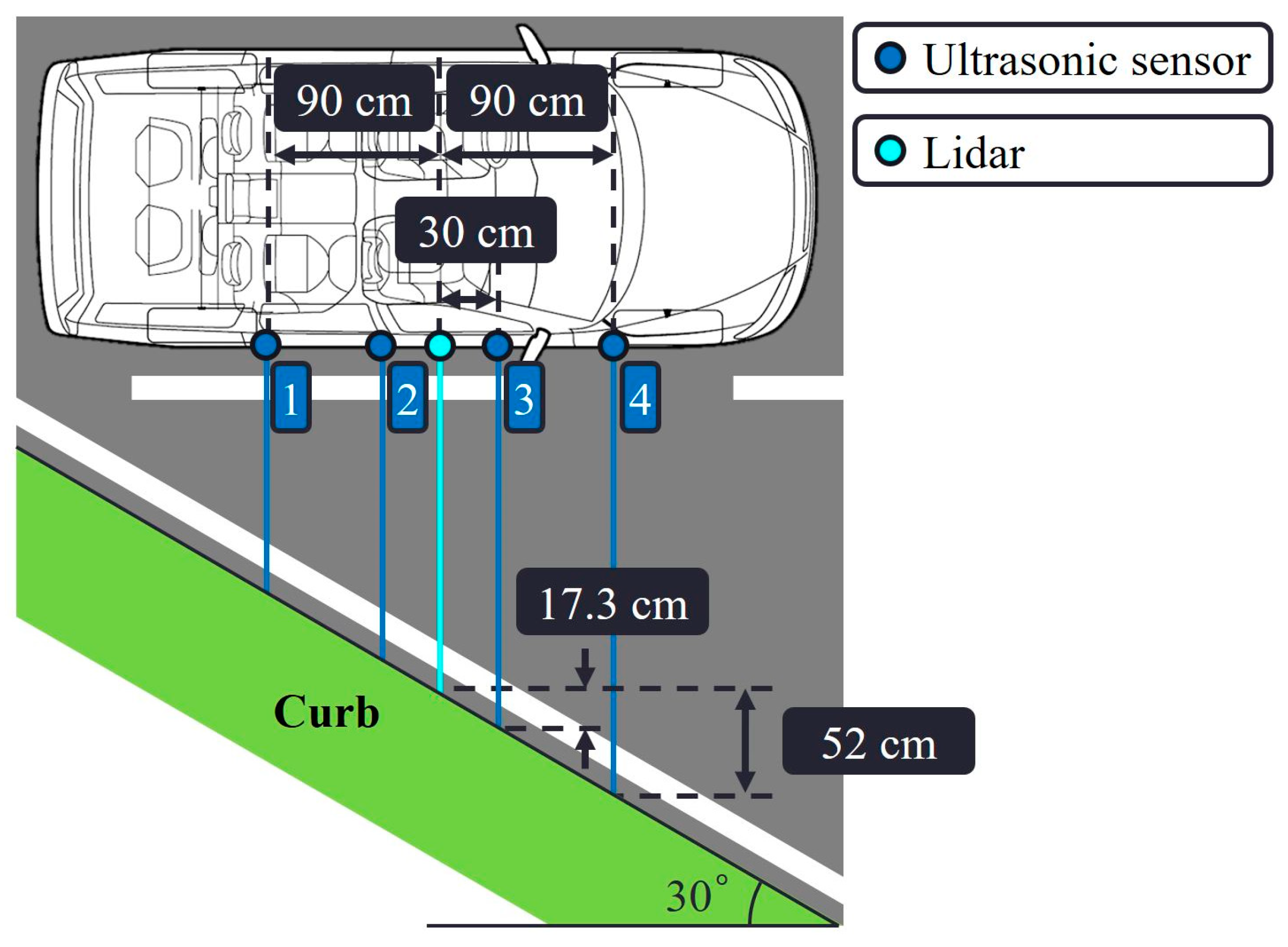

2. Testbed Implementation

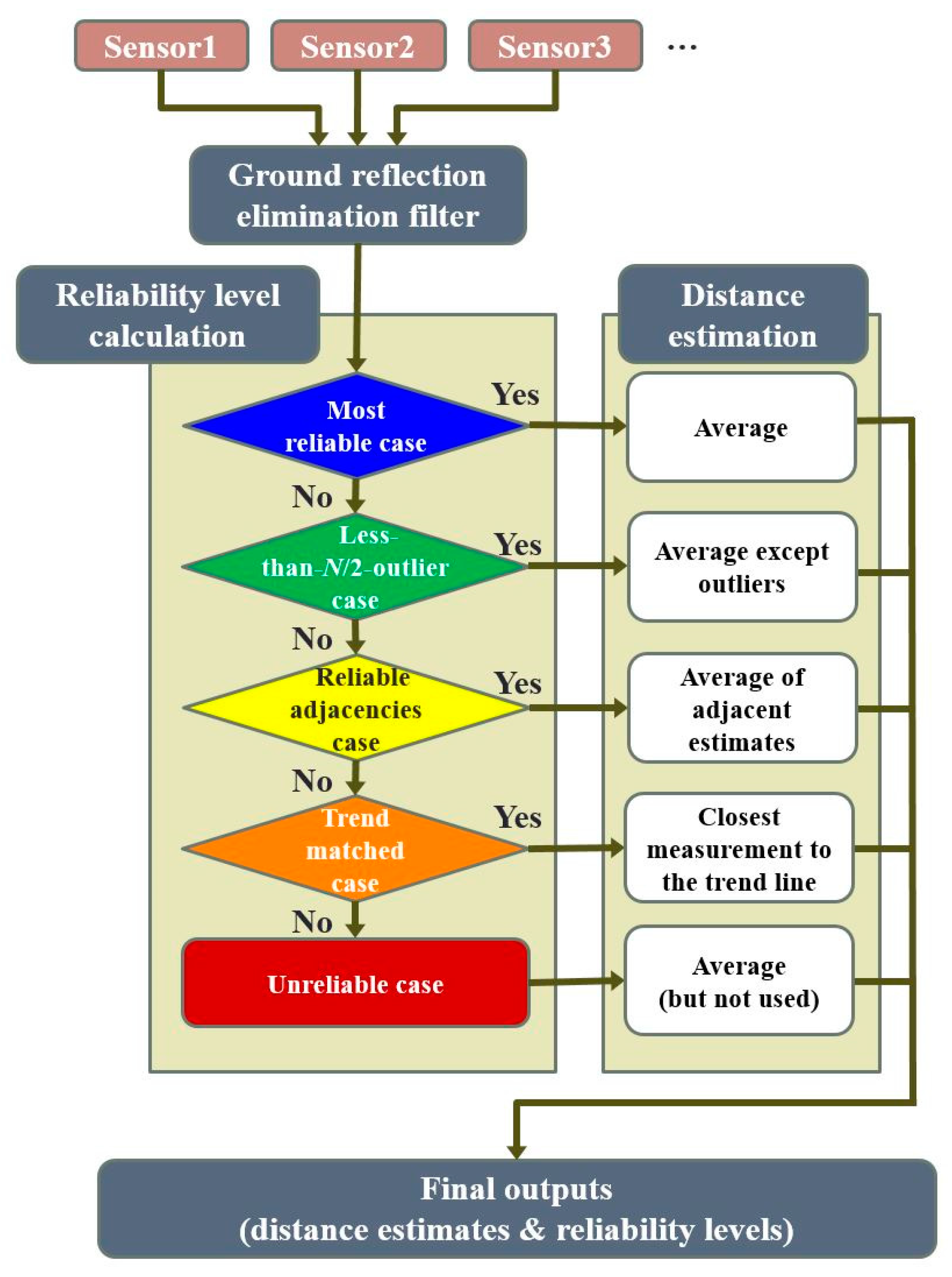

3. Distance Estimation Algorithms for Curb Detection and Localization

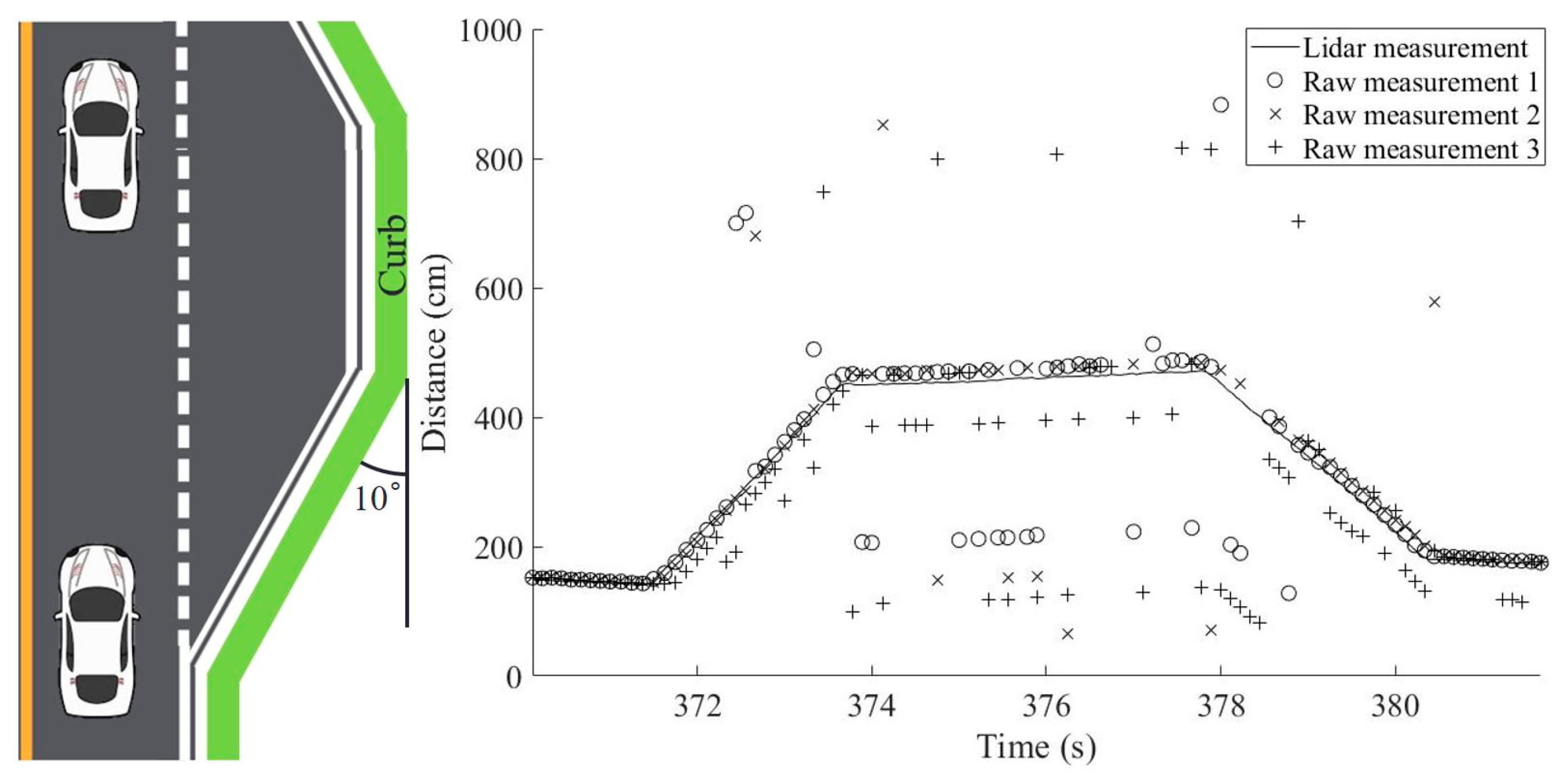

3.1. Simple Averaging and Majority-Voting Algorithms

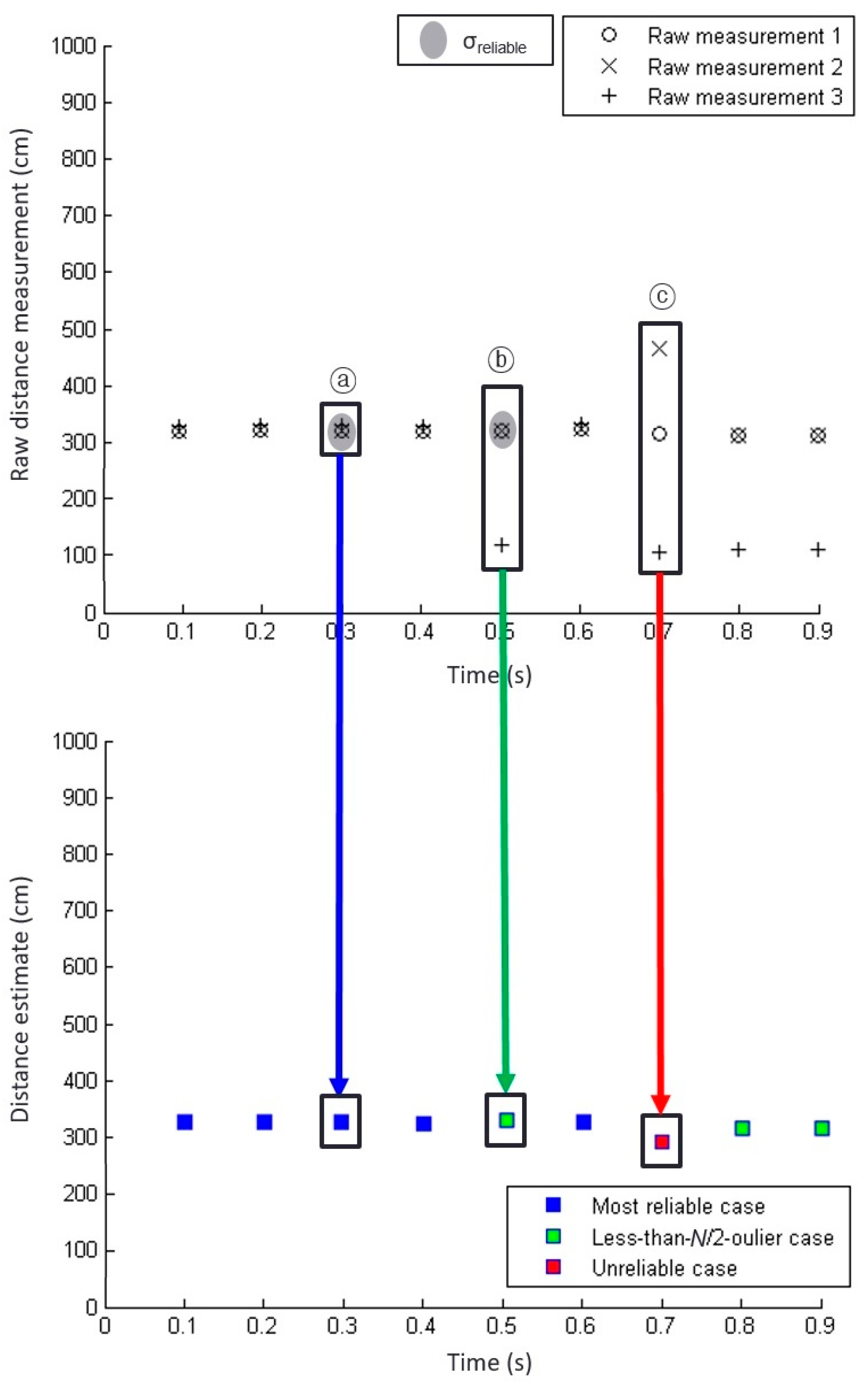

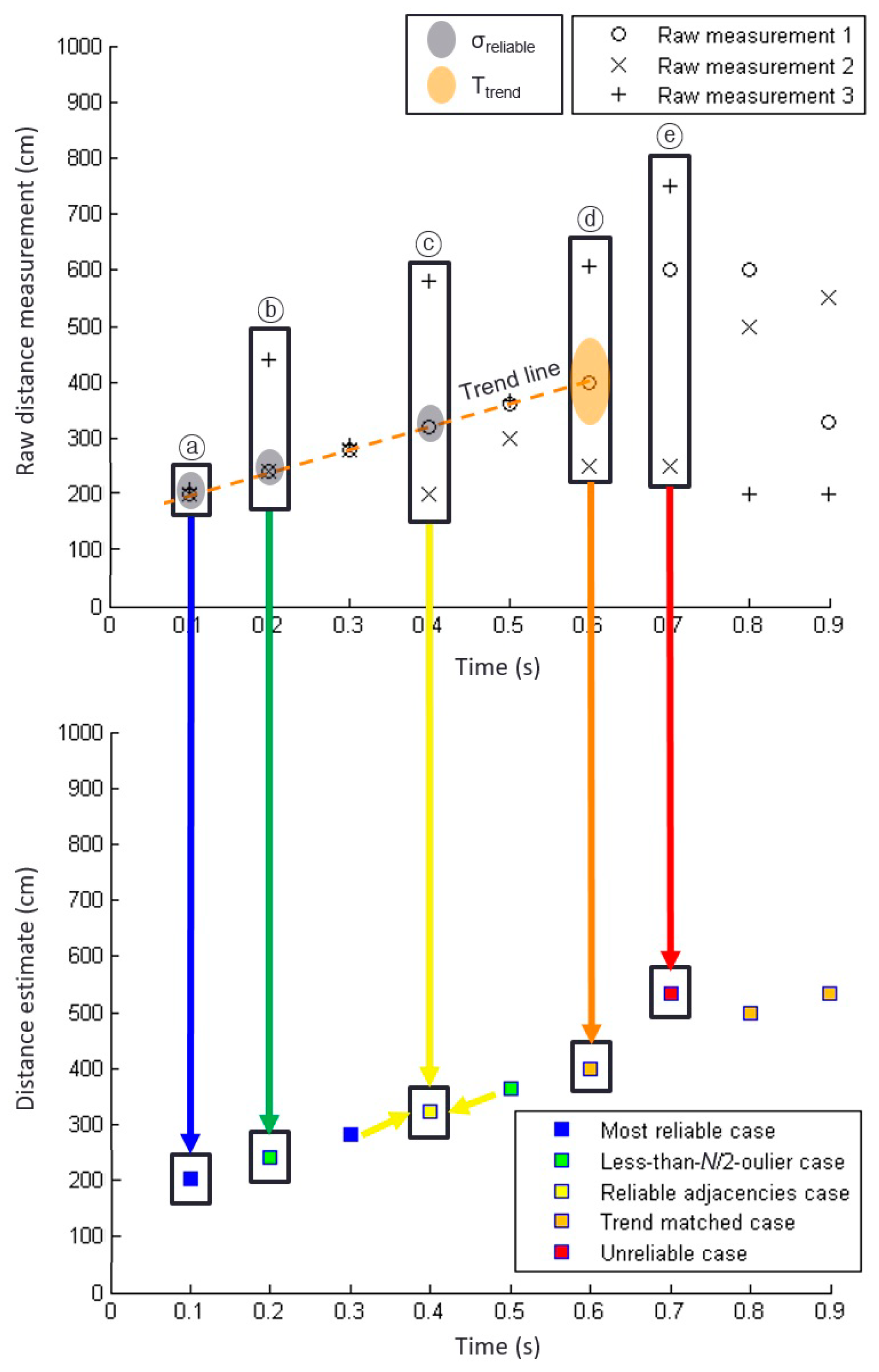

3.2. Improved Distance Estimation Algorithm Considering Measurement Reliability

3.2.1. Most Reliable Case

3.2.2. Less-than-N/2-Outlier Case

| | Algorithm 1. Distance estimation algorithm for the less-than-N/2-outlier case |
|---|---|
| 1 | For n from 1 to |
| 2 | For all combinations of {N − n sensors}, |
| 3 | If σ{N − n sensors} < σ_reliable, |
| 4 | Distance estimate = average of the measurements from {N − n sensors}. |
| 5 | Return. |
| 6 | End. |
| 7 | End. |
| 8 | End. |
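The subset search in Algorithm 1 can be sketched in Python as follows. This is a minimal illustration, not the authors' implementation. The function name `estimate_with_outliers` and the parameter `max_outliers` are hypothetical. The listing does not state the upper bound of the outer loop, so the sketch assumes, based only on the name of this case, that at most the largest integer strictly below N/2 sensors are discarded. Using the population standard deviation for σ is also an assumption.

```python
from itertools import combinations
from statistics import fmean, pstdev
from typing import Optional, Sequence


def estimate_with_outliers(
    measurements: Sequence[float],
    sigma_reliable: float,
    max_outliers: Optional[int] = None,
) -> Optional[float]:
    """Return the mean of the first sensor subset whose spread is acceptable.

    Returns None when no subset satisfies the reliability threshold.
    """
    n_sensors = len(measurements)
    if max_outliers is None:
        # ASSUMPTION: discard strictly fewer than N/2 sensors (from the case name).
        max_outliers = (n_sensors - 1) // 2

    for n in range(1, max_outliers + 1):
        subset_size = n_sensors - n
        if subset_size < 2:
            # A single measurement has zero spread and would pass trivially.
            break
        for subset in combinations(measurements, subset_size):
            # ASSUMPTION: sigma is the population standard deviation.
            if pstdev(subset) < sigma_reliable:
                return fmean(subset)
    return None


if __name__ == "__main__":
    # Two sensors agree near 101 cm; the third reading is an outlier.
    print(estimate_with_outliers([100.0, 102.0, 30.0], sigma_reliable=5.0))
```

As in the listing, the search stops at the first subset that passes the threshold rather than looking for the subset with the smallest spread.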

3.2.3. Unreliable Case

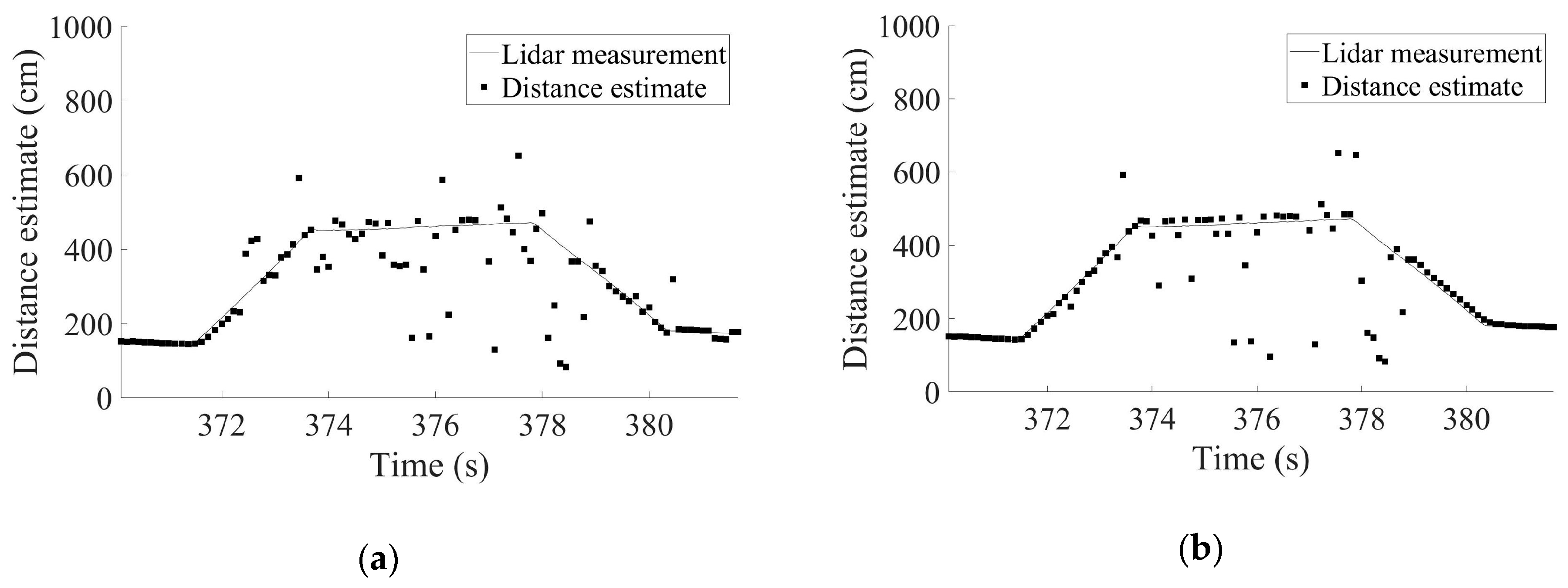

3.3. Outputs from the Improved Distance Estimation Algorithm

4. Proposed Algorithms to Enhance the Availability of Reliable Distance Estimates

4.1. Ground Reflection Elimination Filter

| | Algorithm 2. Ground reflection elimination algorithm |
|---|---|
| 1 | Separate the N sensor measurements of the current epoch into two sets: A = {measurements ≥ d_curb}, B = {measurements < d_curb}. |
| 2 | If the size of set B is smaller than the size of set A, |
| 3 | Replace the measurements in B with the average value of the measurements in A. |
| 4 | End. |
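A short Python sketch of Algorithm 2 is given below for illustration. The function name `eliminate_ground_reflections` is hypothetical, and the threshold from step 1 is passed in by the caller as `d_curb`, since the sketch makes no assumption about how that value is chosen.

```python
from statistics import fmean
from typing import List, Sequence


def eliminate_ground_reflections(
    measurements: Sequence[float], d_curb: float
) -> List[float]:
    """Replace short readings with the mean of the long readings.

    The replacement is applied only when the short readings (set B)
    are outnumbered by the long readings (set A).
    """
    set_a = [m for m in measurements if m >= d_curb]
    set_b = [m for m in measurements if m < d_curb]

    if len(set_b) < len(set_a):
        replacement = fmean(set_a)
        # Keep sensor order; only the readings below d_curb are replaced.
        return [m if m >= d_curb else replacement for m in measurements]

    # Otherwise the epoch is returned unchanged.
    return list(measurements)


if __name__ == "__main__":
    print(eliminate_ground_reflections([120.0, 124.0, 40.0], d_curb=80.0))
```

When set A is empty the size comparison fails, so the mean of an empty set is never computed.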

4.2. Distance Estimation Algorithms with Additional Reliability Cases

4.2.1. Reliable Adjacencies Case

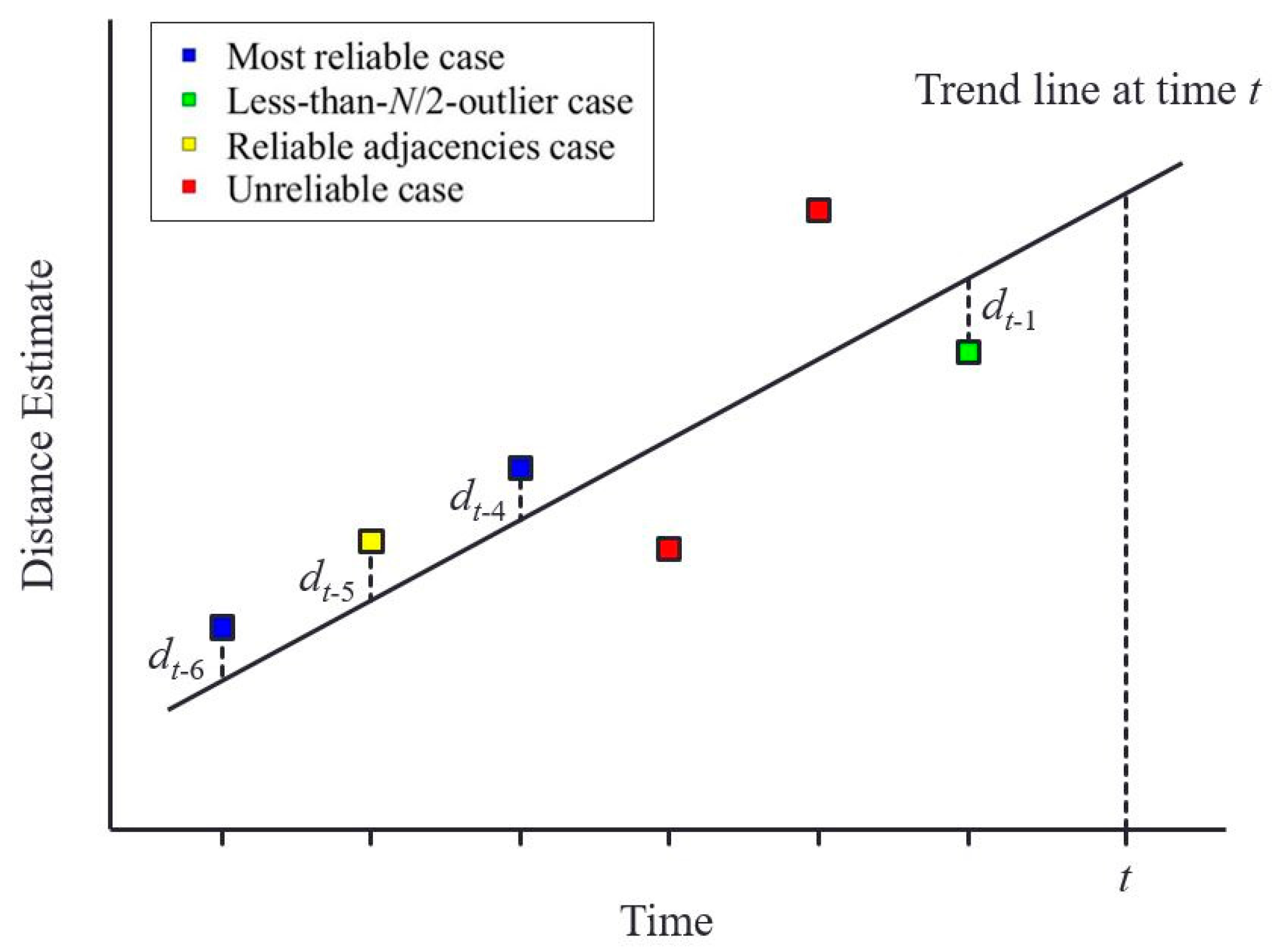

4.2.2. Trend-Matched Case

| | Algorithm 3. Distance estimation algorithm for the trend-matched case |
|---|---|
| 1 | Fit a straight line, in the least-squares sense, to the reliable distance estimates from the most recent N_trend epochs. |
| 2 | For all the N sensor measurements of the given epoch, |
| 3 | d = \|each sensor measurement − value of the fitted trend line at the same epoch\|. |
| 4 | If d < T_trend, |
| 5 | c[i] = d. |
| 6 | e[i] = corresponding sensor measurement. |
| 7 | Increase i by 1. |
| 8 | End. |
| 9 | End. |
| 10 | i_smallest = index i corresponding to the smallest c[i] value. |
| 11 | Distance estimate = e[i_smallest]. |
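The trend-matching steps of Algorithm 3 can be sketched in Python as shown below. This is an illustrative sketch under stated assumptions rather than the authors' code. The names `fit_line` and `trend_matched_estimate` are hypothetical, epochs are assumed to be numeric time indices, and returning `None` when no measurement falls within the threshold is an assumption about a case the listing does not cover.

```python
from typing import Optional, Sequence, Tuple


def fit_line(epochs: Sequence[float], values: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(epochs)
    mean_t = sum(epochs) / n
    mean_y = sum(values) / n
    s_tt = sum((t - mean_t) ** 2 for t in epochs)
    s_ty = sum((t - mean_t) * (y - mean_y) for t, y in zip(epochs, values))
    slope = s_ty / s_tt
    return slope, mean_y - slope * mean_t


def trend_matched_estimate(
    recent_epochs: Sequence[float],
    recent_estimates: Sequence[float],
    current_epoch: float,
    measurements: Sequence[float],
    t_trend: float,
) -> Optional[float]:
    """Pick the measurement closest to the trend line, if within t_trend."""
    # A line needs at least two distinct epochs.
    if len(set(recent_epochs)) < 2:
        return None

    slope, intercept = fit_line(recent_epochs, recent_estimates)
    predicted = slope * current_epoch + intercept

    best: Optional[float] = None
    best_dev: Optional[float] = None
    for m in measurements:
        d = abs(m - predicted)
        # Steps 4 to 11 folded together: track the smallest qualifying deviation.
        if d < t_trend and (best_dev is None or d < best_dev):
            best_dev, best = d, m
    # ASSUMPTION: None signals that no measurement matched the trend.
    return best


if __name__ == "__main__":
    est = trend_matched_estimate(
        [0, 1, 2, 3], [100.0, 98.0, 96.0, 94.0], 4, [150.0, 93.0, 40.0], 5.0
    )
    print(est)
```

Tracking the running minimum avoids the auxiliary arrays c and e in the listing while selecting the same measurement. On ties, the first measurement encountered is kept.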

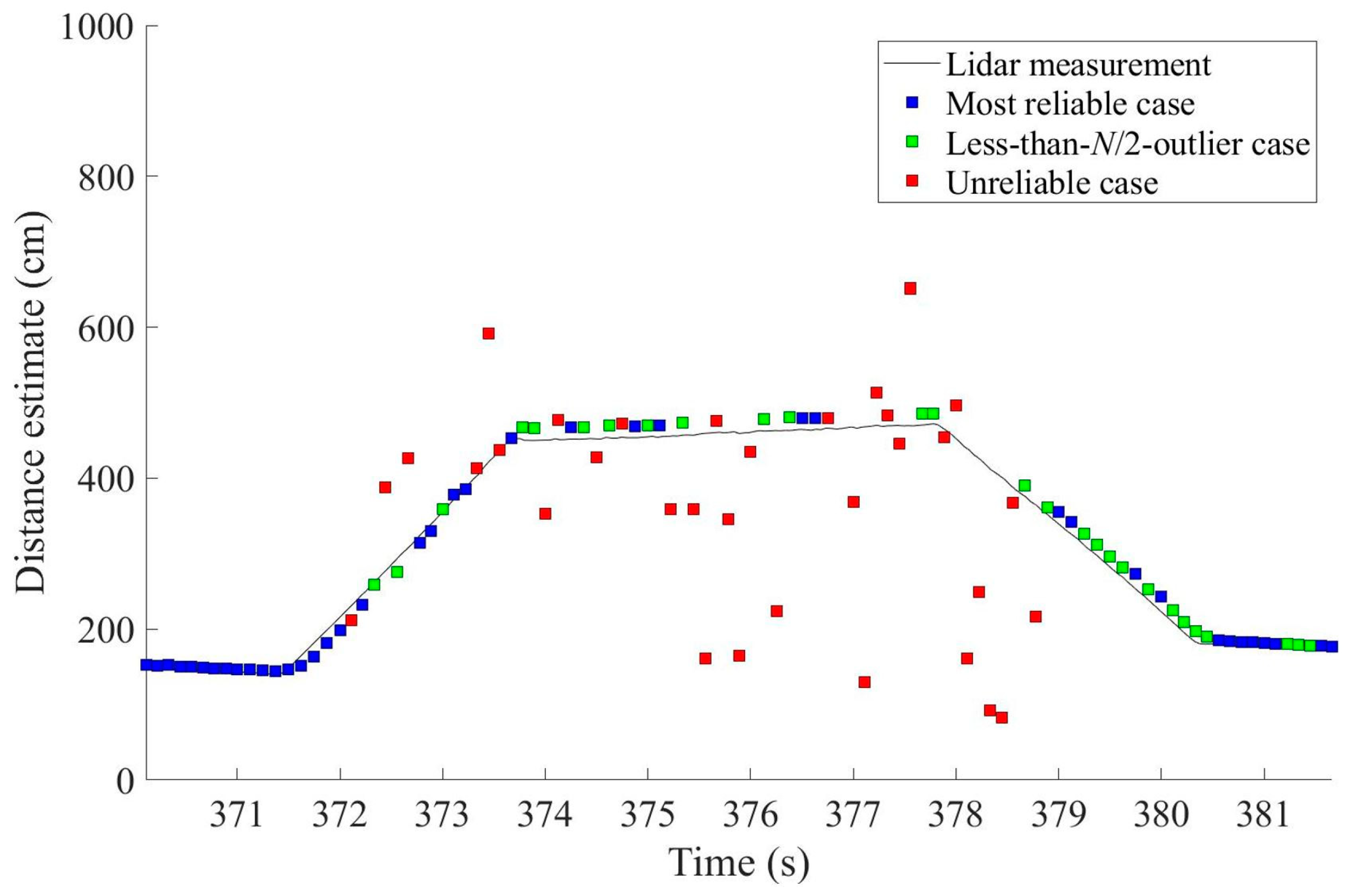

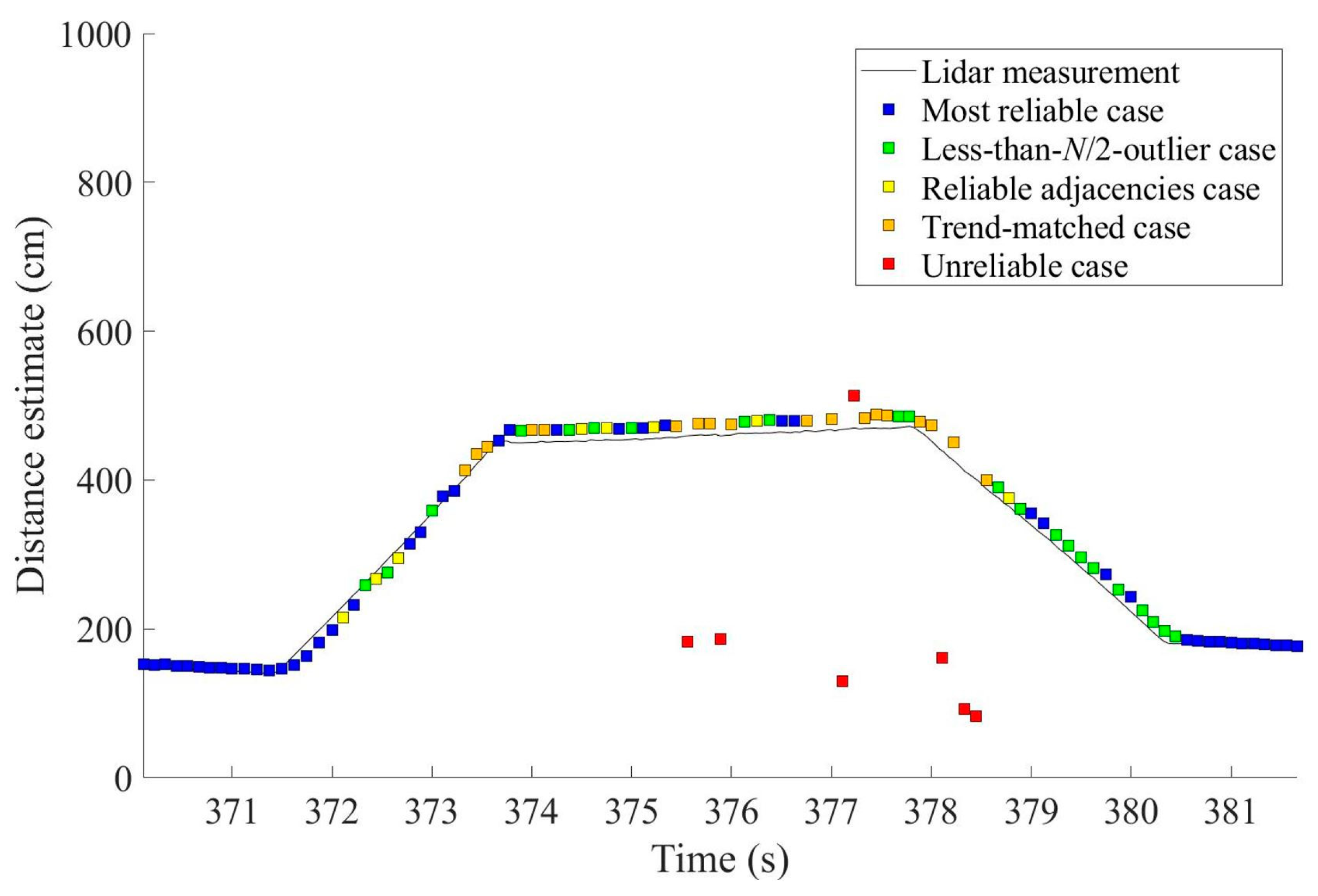

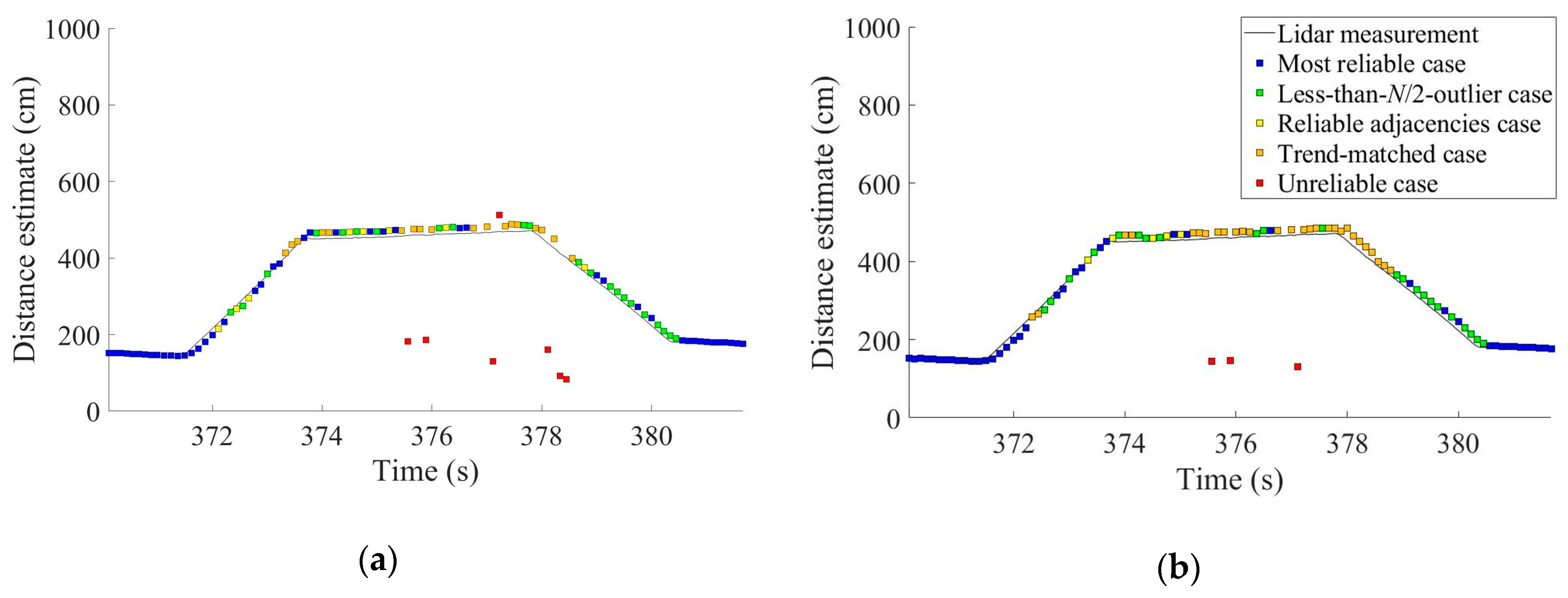

4.3. Outputs from the Proposed Algorithms

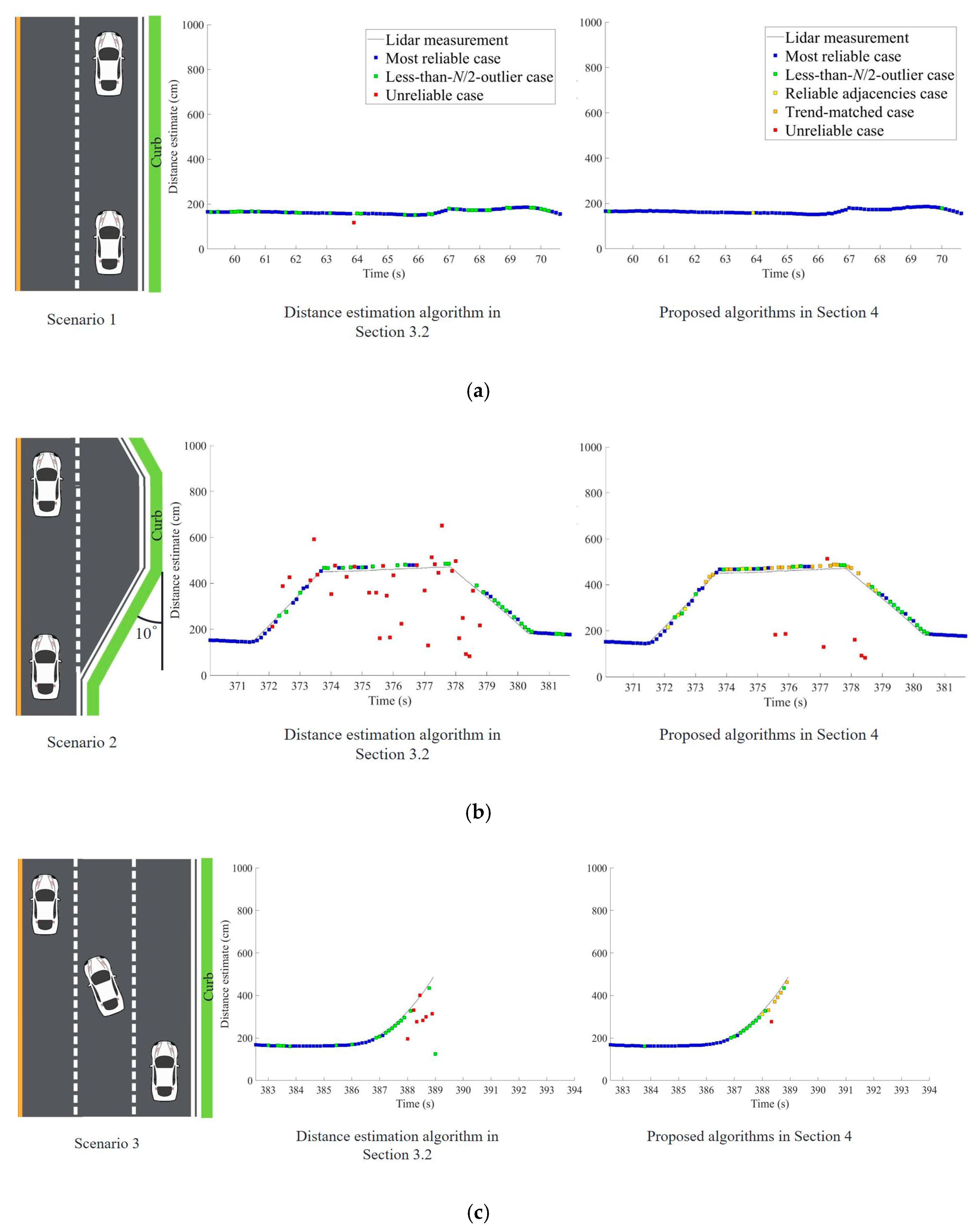

5. Field Test Results

5.1. Field Test Setup

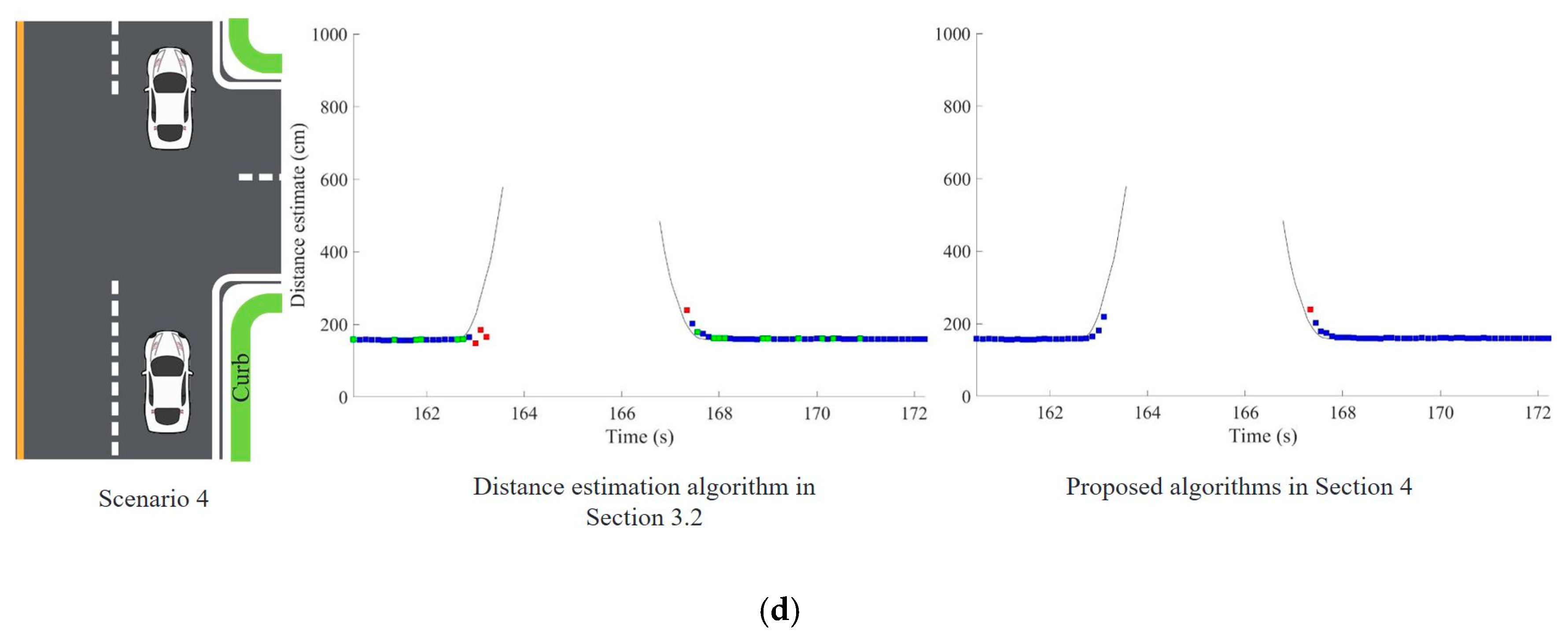

5.2. Test Results in Four Representative Driving Situations

6. Conclusions

Author Contributions

Funding

Conflicts of Interest


| | Simple Averaging Algorithm (N = 3) | Majority Voting Algorithm (N = 3) | Improved Algorithm in Section 3.2 (N = 3) | Proposed Algorithms in Section 4 (N = 3) | Proposed Algorithms in Section 4 (N = 4) |
|---|---|---|---|---|---|
| Mean ± SD (cm) | −23.62 ± 95.15 | −24.66 ± 99.19 | 6.48 ± 9.89 | 7.99 ± 10.08 | 7.89 ± 11.01 |
| RMSE (cm) | 97.57 | 101.73 | 11.77 | 12.82 | 13.50 |
| Availability (%) | 99.01 | 99.01 | 66.34 | 92.08 | 96.04 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Rhee, J.H.; Seo, J. Low-Cost Curb Detection and Localization System Using Multiple Ultrasonic Sensors. Sensors 2019, 19, 1389. https://doi.org/10.3390/s19061389
