Extended Codebook with Multispectral Sequences for Background Subtraction

Abstract

1. Introduction

1.1. Background Subtraction

1.2. Multispectral Sequences

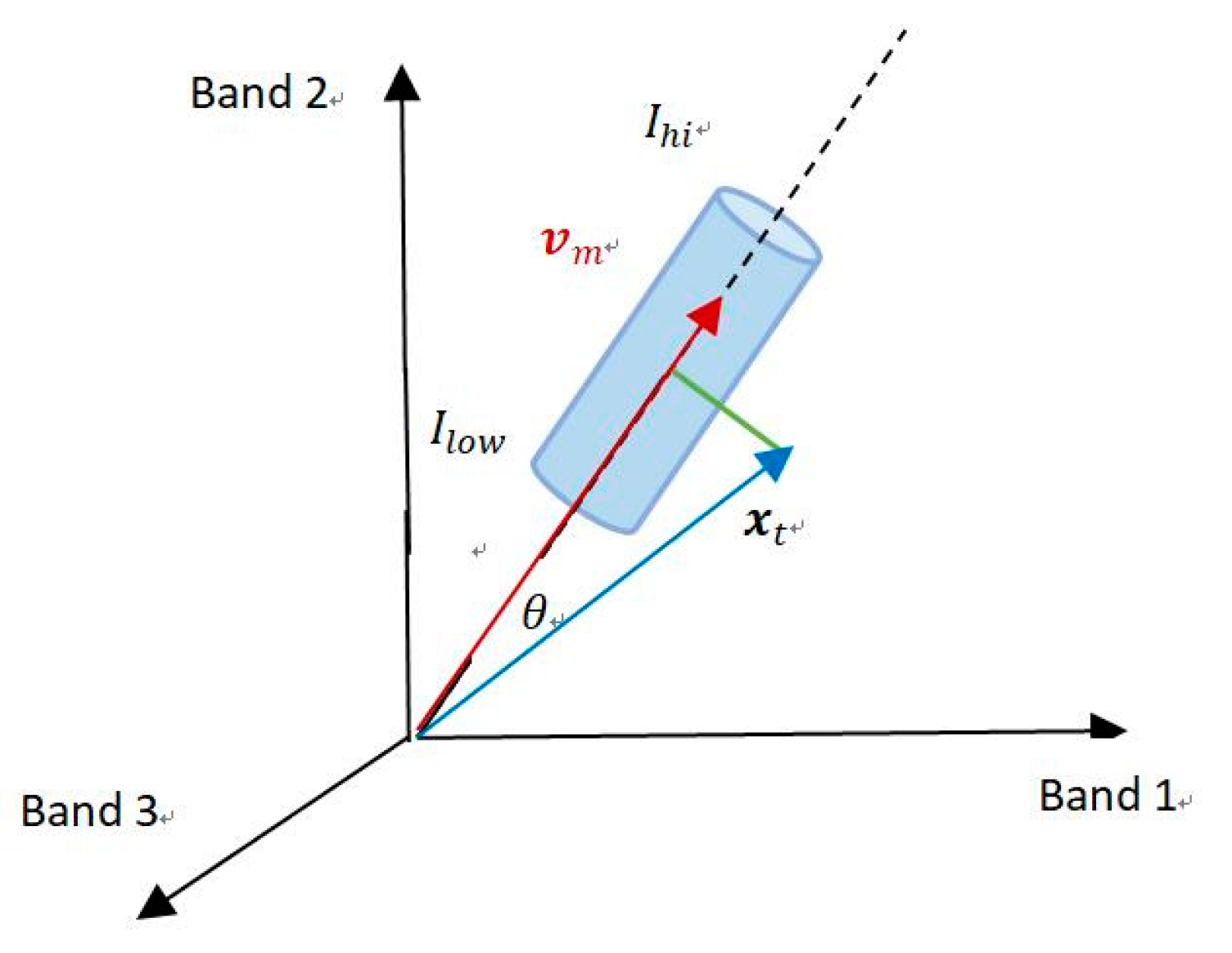

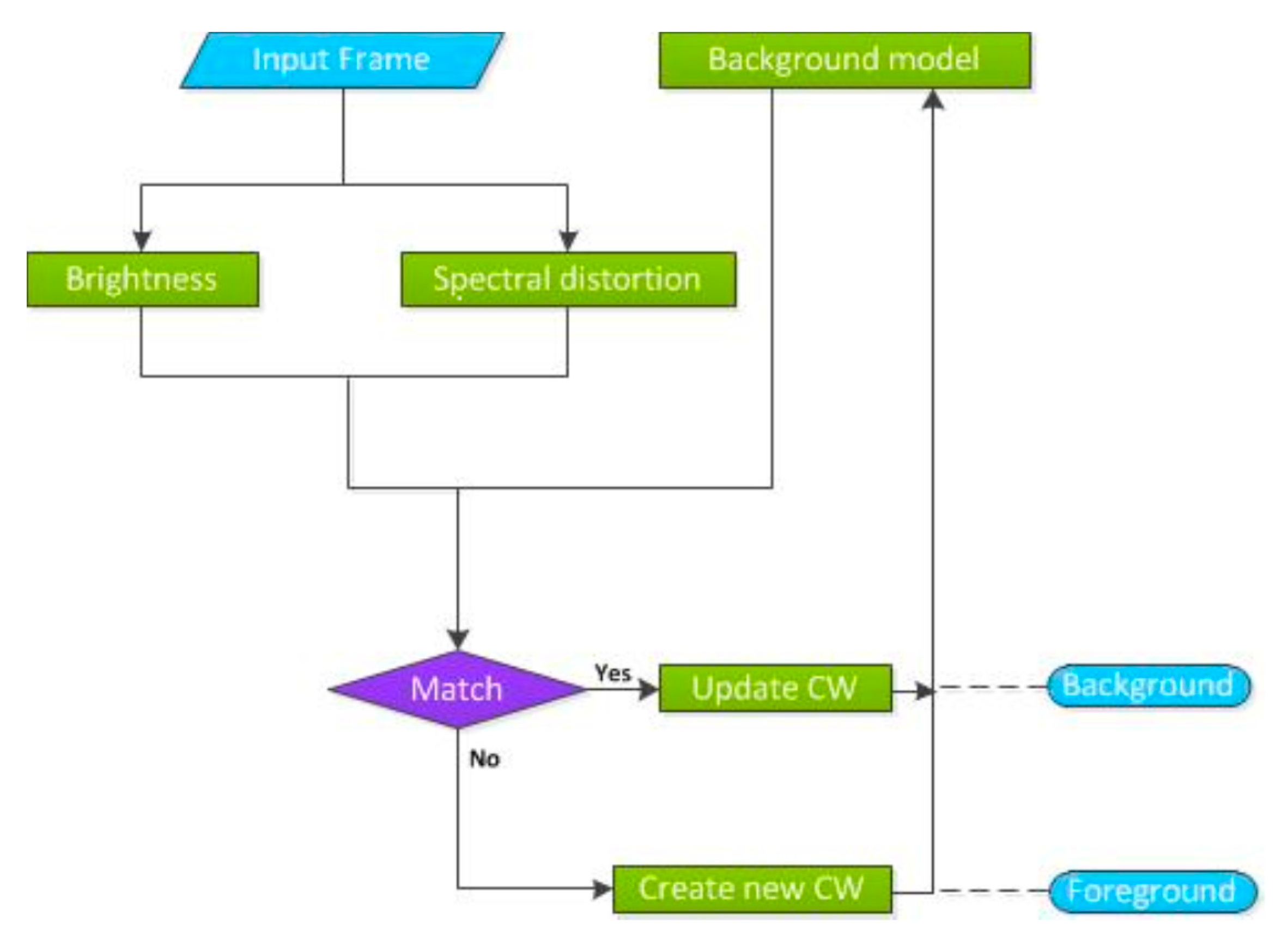

2. Multispectral Codebook

2.1. Codebook Construction

- $\check{I}_i$, $\hat{I}_i$, the min and max brightness, respectively, of all pixels assigned to codeword $c_i$.

- $f_i$, the frequency with which codeword $c_i$ has occurred.

- $\lambda_i$, the maximum negative run-length (MNRL), defined as the longest interval of time during the construction period that the codeword has not been updated.

- $p_i$, $q_i$, the first and the last times, respectively, that the codeword has occurred.
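The six fields above can be illustrated by replaying the accesses of a single codeword. The following is a minimal Python sketch, assuming the update rules of Kim et al.'s original codebook: a new codeword starts with $aux = \langle I, I, 1, t-1, t, t \rangle$, each match sets λ to max(λ, t − q), and a final wrap-around step counts the idle time before the first and after the last access. These rules are not spelled out for the multispectral version in this excerpt, and the names `Aux` and `replay_aux` are hypothetical.

```python
from typing import NamedTuple, Sequence


class Aux(NamedTuple):
    """Auxiliary tuple of one codeword: <I_min, I_max, f, lambda, p, q>."""
    i_min: float  # minimum brightness of the pixels assigned to the codeword
    i_max: float  # maximum brightness of the pixels assigned to the codeword
    f: int        # number of times the codeword has been matched
    mnrl: int     # maximum negative run-length (lambda)
    p: int        # first access time
    q: int        # last access time


def replay_aux(times: Sequence[int], brightness: Sequence[float], n_frames: int) -> Aux:
    """Rebuild aux from the (1-based) frames at which a codeword was matched.

    Update rules follow Kim et al.'s codebook; assumed, not confirmed,
    to carry over unchanged to the multispectral codebook.
    """
    if not times or len(times) != len(brightness):
        raise ValueError("need one brightness value per match time")
    t0, b0 = times[0], brightness[0]
    aux = Aux(b0, b0, 1, t0 - 1, t0, t0)  # aux0 = <I, I, 1, t-1, t, t>
    for t, b in zip(times[1:], brightness[1:]):
        aux = Aux(min(b, aux.i_min), max(b, aux.i_max), aux.f + 1,
                  max(aux.mnrl, t - aux.q), aux.p, t)
    # Wrap-around: the idle time after the last access plus the idle time
    # before the first access is treated as one more negative run.
    return aux._replace(mnrl=max(aux.mnrl, n_frames - aux.q + aux.p - 1))
```

For example, a codeword matched at frames 1, 2, 3 and 10 of a 10-frame construction period ends with f = 4 and λ = 7, the idle stretch between frames 3 and 10.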

| Algorithm 1 Codebook Construction |
|---|
| Find the codeword matching $x_t$ in $C$ if conditions (a) and (b) both hold: |
| (a) brightness = true |
| (b) spectral_dist |
| If $C = \emptyset$ or there is no match, then $L \leftarrow L + 1$; create a new codeword with |
| $v_0 = x_t$ |
| $aux_0 = \langle I, I, 1, t-1, t, t \rangle$ |
| Else, update the matched codeword, composed of |
| end for |
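Algorithm 1 can be sketched for a single pixel as follows. This is a minimal Python illustration under several assumptions: the brightness I is taken as the Euclidean norm of the n-band vector; `spectral_dist` is Kim et al.'s colour distortion generalised to n bands; the brightness test uses Kim et al.'s bounds with illustrative α = 0.6 and β = 1.3; and because the update step is truncated above, the matched-codeword update (running mean of v, min/max brightness, f + 1, MNRL, last access time) and the final MNRL wrap-around are borrowed from Kim et al. The threshold `eps` and all function names are hypothetical, not the authors' definitions.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass
class Codeword:
    v: List[float]  # running mean of the spectral vectors assigned to it
    i_min: float    # min brightness
    i_max: float    # max brightness
    f: int          # frequency
    mnrl: int       # maximum negative run-length (lambda)
    p: int          # first access time
    q: int          # last access time


def brightness(x: Sequence[float]) -> float:
    # Hypothetical choice: Euclidean norm over all bands, as Kim et al. use for RGB.
    return math.sqrt(sum(c * c for c in x))


def spectral_dist(x: Sequence[float], v: Sequence[float]) -> float:
    # Hypothetical: Kim et al.'s colour distortion generalised to n bands,
    # i.e. the distance from x to the line through the origin along v.
    xx = sum(c * c for c in x)
    vv = sum(c * c for c in v)
    xv = sum(a * b for a, b in zip(x, v))
    proj2 = xv * xv / vv if vv > 0 else 0.0
    return math.sqrt(max(xx - proj2, 0.0))


def brightness_ok(i: float, cw: Codeword, alpha: float = 0.6, beta: float = 1.3) -> bool:
    # Kim et al.'s brightness bounds; the alpha and beta values are illustrative.
    return alpha * cw.i_max <= i <= min(beta * cw.i_max, cw.i_min / alpha)


def build_codebook(samples: Sequence[Sequence[float]], eps: float = 10.0) -> List[Codeword]:
    codebook: List[Codeword] = []
    for t, x in enumerate(samples, start=1):
        i = brightness(x)
        # First codeword satisfying both (a) the brightness test and (b) the distance test.
        match: Optional[Codeword] = next(
            (cw for cw in codebook
             if brightness_ok(i, cw) and spectral_dist(x, cw.v) <= eps),
            None,
        )
        if match is None:
            # New codeword: v = x_t, aux = <I, I, 1, t-1, t, t>.
            codebook.append(Codeword(list(x), i, i, 1, t - 1, t, t))
        else:
            f = match.f
            match.v = [(f * a + b) / (f + 1) for a, b in zip(match.v, x)]
            match.i_min, match.i_max = min(i, match.i_min), max(i, match.i_max)
            match.f = f + 1
            match.mnrl = max(match.mnrl, t - match.q)
            match.q = t
    n = len(samples)
    for cw in codebook:  # wrap-around of the MNRL at the end of construction
        cw.mnrl = max(cw.mnrl, n - cw.q + cw.p - 1)
    return codebook
```

For a pixel observed over 10 construction frames as a grey value in frames 1 to 6 and 9 to 10 and as a greenish value in frames 7 and 8, the sketch yields two codewords with λ = 3 and λ = 8. Under Kim et al.'s temporal filtering (keep only codewords with λ ≤ N/2), the second codeword would be discarded as a transient object.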

2.2. Foreground Detection

3. Multispectral Self-Adaptive Codebook

3.1. Self-Adaptive Mechanism

3.2. Spectral Information Divergence
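Spectral information divergence, as introduced by Chang (listed in the references), treats each normalised spectrum as a probability distribution over the bands and sums the two relative entropies: $\mathrm{SID}(x, y) = D(p \| q) + D(q \| p) = \sum_i (p_i - q_i) \log(p_i / q_i)$, with $p_i = x_i / \sum_j x_j$ and $q_i = y_i / \sum_j y_j$. The Python sketch below computes this quantity for two pixel spectra. The function name and the `eps` clamp against log(0) are assumptions, and how this paper thresholds SID or combines it with the brightness test is not given in this excerpt.

```python
import math
from typing import Sequence


def spectral_information_divergence(x: Sequence[float], y: Sequence[float],
                                    eps: float = 1e-12) -> float:
    """SID(x, y) = D(p||q) + D(q||p), with p and q the band-normalised spectra."""
    if len(x) != len(y):
        raise ValueError("spectra must have the same number of bands")
    sx, sy = sum(x), sum(y)
    if sx <= 0 or sy <= 0:
        raise ValueError("spectra must have positive total energy")
    # Normalise each spectrum to sum to 1; clamp to eps to avoid log(0).
    p = [max(v / sx, eps) for v in x]
    q = [max(v / sy, eps) for v in y]
    # D(p||q) + D(q||p) simplifies to sum_i (p_i - q_i) * log(p_i / q_i).
    return sum((pi - qi) * math.log(pi / qi) for pi, qi in zip(p, q))
```

Because each spectrum is normalised first, SID ignores a global intensity scaling (`[10, 20, 30]` against `[20, 40, 60]` gives 0), which is consistent with the experiments pairing it with a separate brightness criterion (B+SID).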

4. Experiments

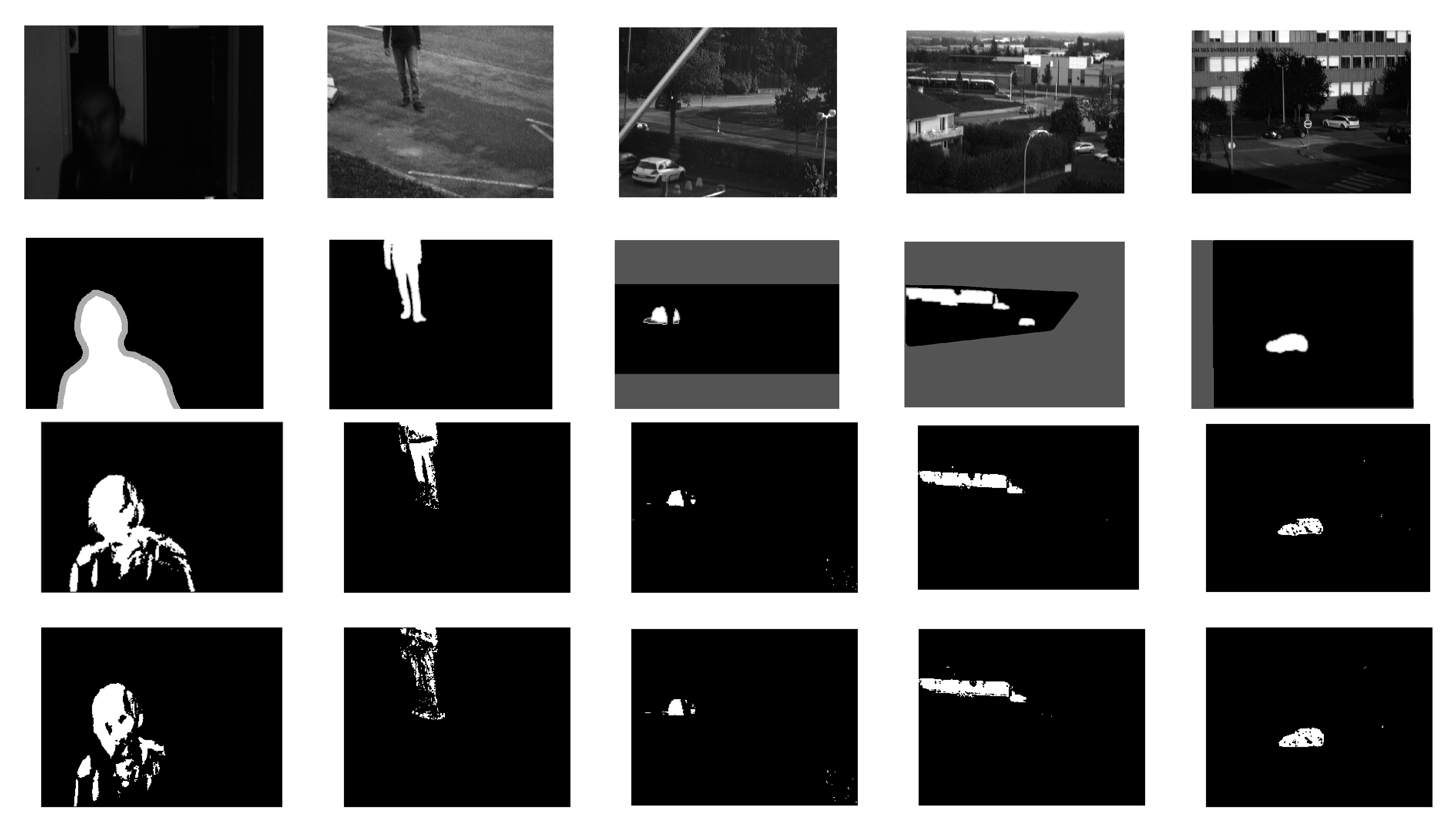

4.1. Dataset

4.2. Experiment Results

4.2.1. Multispectral Codebook

4.2.2. Multispectral Self-Adaptive Codebook

5. Conclusions and Perspectives

Author Contributions

Acknowledgments

Conflicts of Interest

References

- Bouwmans, T. Traditional and recent approaches in background modeling for foreground detection: An overview. Comput. Sci. Rev. 2014, 11, 31–66.

- Benezeth, Y.; Jodoin, P.M.; Emile, B.; Laurent, H.; Rosenberger, C. Comparative study of background subtraction algorithms. J. Electron. Imaging 2010, 19, 033003.

- Krungkaew, R.; Kusakunniran, W. Foreground segmentation in a video by using a novel dynamic codebook. In Proceedings of the 2016 13th International Conference on Electrical Engineering/Electronics, Computer, Telecommunications and Information Technology (ECTI-CON), Chiang Mai, Thailand, 28 June–1 July 2016.

- Xu, Y.; Dong, J.; Zhang, B.; Xu, D. Background modeling methods in video analysis: A review and comparative evaluation. CAAI Trans. Intell. Technol. 2016, 1, 43–60.

- Stauffer, C.; Grimson, W.E.L. Adaptive background mixture models for real-time tracking. In Proceedings of the 1999 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (Cat. No PR00149), Fort Collins, CO, USA, 23–25 June 1999.

- Mittal, A.; Paragios, N. Motion-based background subtraction using adaptive kernel density estimation. In Proceedings of the 2004 IEEE Computer Society Conference on Computer Vision and Pattern Recognition, Washington, DC, USA, 27 June–2 July 2004.

- Doshi, A.; Trivedi, M. “Hybrid Cone-Cylinder” Codebook Model for Foreground Detection with Shadow and Highlight Suppression. In Proceedings of the IEEE International Conference on Video and Signal Based Surveillance, Sydney, Australia, 22–24 November 2006.

- Kim, K.; Chalidabhongse, T.H.; Harwood, D.; Davis, L. Real-time foreground–background segmentation using codebook model. Real-Time Imaging 2005, 11, 172–185.

- Shah, M.; Deng, J.D.; Woodford, B.J. A Self-adaptive CodeBook (SACB) model for real-time background subtraction. Image Vision Comput. 2015, 38, 52–64.

- Huang, J.; Jin, W.; Zhao, D.; Qin, N. Double-trapezium cylinder codebook model based on yuv color model for foreground detection with shadow and highlight suppression. J. Signal Process. Syst. 2016, 85, 221–233.

- Bouchech, H. Selection of optimal narrowband multispectral images for face recognition. Ph.D. Thesis, Université de Bourgogne, Dijon, France, 2015.

- Viau, C.R.; Payeur, P.; Cretu, A.M. Multispectral image analysis for object recognition and classification. In Proceedings of the International Society for Optics and Photonics Automatic Target Recognition XXVI, San Jose, CA, USA, 21–25 February 2016.

- Shaw, G.A.; Burke, H.K. Spectral imaging for remote sensing. Lincoln Lab. J. 2003, 14, 3–28.

- Feng, C.H.; Makino, Y.; Oshita, S.; Martin, J.F.G. Hyperspectral imaging and multispectral imaging as the novel techniques for detecting defects in raw and processed meat products: Current state-of-the-art research advances. Food Cont. 2018, 84, 165–176.

- Bourlai, T.; Cukic, B. Multi-spectral face recognition: Identification of people in difficult environments. In Proceedings of the 2012 IEEE International Conference on Intelligence and Security Informatics (ISI), Arlington, VA, USA, 11–14 June 2012; pp. 196–201.

- Kemker, R.; Salvaggio, C.; Kanan, C. High-Resolution Multispectral Dataset for Semantic Segmentation. arXiv, 2017; arXiv:1703.01918.

- Ice, J.; Narang, N.; Whitelam, C.; Kalka, N.; Hornak, L.; Dawson, J.; Bourlai, T. SWIR imaging for facial image capture through tinted materials. In Proceedings of the International Society for Optics and Photonics Infrared Technology and Applications XXXVIII, San Jose, CA, USA, 12–16 February 2012.

- Salerno, E.; Tonazzini, A.; Grifoni, E.; Lorenzetti, G. Analysis of multispectral images in cultural heritage and archaeology. J. Laser Appl. Spectrosc. 2014, 1, 22–27.

- Liu, R.; Ruichek, Y.; El Bagdouri, M. Background Subtraction with Multispectral Images Using Codebook Algorithm. In Proceedings of the International Conference on Advanced Concepts for Intelligent Vision Systems, Antwerp, Belgium, 18–21 September 2017; pp. 581–590.

- Benezeth, Y.; Sidibé, D.; Thomas, J.B. Background subtraction with multispectral video sequences. In Proceedings of the IEEE International Conference on Robotics and Automation workshop on Non-classical Cameras, Camera Networks and Omnidirectional Vision (OMNIVIS), Hong Kong, China, 31 May–7 June 2014; p. 6.

- Liu, R.; Ruichek, Y.; El Bagdouri, M. Enhanced Codebook Model and Fusion for Object Detection with Multispectral Images. In Proceedings of the International Conference on Advanced Concepts for Intelligent Vision Systems, Poitiers, France, 24–27 September 2018; pp. 225–232.

- Zhang, Y.T.; Bae, J.Y.; Kim, W.Y. Multi-Layer Multi-Feature Background Subtraction Using Codebook Model Framework. Available online: https://pdfs.semanticscholar.org/ae76/26ecc781b2157ce73c8954fc7f5ce24bcb4a.pdf (accessed on 2 January 2019).

- Li, F.; Zhou, H. A two-layers background modeling method based on codebook and texture. J. Univ. Sci. Technol. China 2012, 2, 4.

- Zaharescu, A.; Jamieson, M. Multi-scale multi-feature codebook-based background subtraction. In Proceedings of the 2011 IEEE International Conference on Computer Vision Workshops (ICCV Workshops), Barcelona, Spain, 6–13 November 2011; pp. 1753–1760.

- Chang, C.I. An information-theoretic approach to spectral variability, similarity, and discrimination for hyperspectral image analysis. IEEE Trans. Inf. Theory 2000, 46, 1927–1932.

| No. | Band combination | Video 1 | Video 2 | Video 3 | Video 4 | Video 5 | Mean |
|---|---|---|---|---|---|---|---|
| 1 | 123 | 0.6505 | 0.9422 | 0.7733 | 0.8037 | 0.7211 | 0.7782 |
| 2 | 124 | 0.8355 | 0.9420 | 0.7516 | 0.8065 | 0.7864 | 0.8244 |
| 3 | 125 | 0.8342 | 0.9450 | 0.7515 | 0.8148 | 0.7734 | 0.8238 |
| 4 | 126 | 0.7104 | 0.8950 | 0.7082 | 0.8047 | 0.8154 | 0.7867 |
| 5 | 127 | 0.7739 | 0.9396 | 0.6558 | 0.8071 | 0.7247 | 0.7802 |
| 6 | 134 | 0.8421 | 0.9461 | 0.7921 | 0.8513 | 0.7670 | 0.8397 |
| 7 | 135 | 0.8402 | 0.9538 | 0.7838 | 0.8350 | 0.7622 | 0.8350 |
| 8 | 136 | 0.7017 | 0.9040 | 0.7417 | 0.8381 | 0.8031 | 0.7977 |
| 9 | 137 | 0.7764 | 0.9463 | 0.6908 | 0.8354 | 0.7113 | 0.7920 |
| 10 | 145 | 0.8636 | 0.9440 | 0.7689 | 0.8475 | 0.7757 | 0.8399 |
| 11 | 146 | 0.8519 | 0.8952 | 0.7296 | 0.8435 | 0.8132 | 0.8267 |
| 12 | 147 | 0.7932 | 0.9488 | 0.6881 | 0.8084 | 0.7480 | 0.7973 |
| 13 | 156 | 0.8705 | 0.9038 | 0.7564 | 0.8440 | 0.8091 | 0.8368 |
| 14 | 157 | 0.7943 | 0.9538 | 0.6908 | 0.8252 | 0.7361 | 0.8000 |
| 15 | 167 | 0.7839 | 0.9302 | 0.6518 | 0.8105 | 0.7832 | 0.7919 |
| 16 | 234 | 0.8358 | 0.9434 | 0.7418 | 0.8168 | 0.7810 | 0.8238 |
| 17 | 235 | 0.8339 | 0.9448 | 0.7345 | 0.8180 | 0.7657 | 0.8194 |
| 18 | 236 | 0.6959 | 0.8849 | 0.6880 | 0.8104 | 0.8075 | 0.7773 |
| 19 | 237 | 0.7714 | 0.9459 | 0.6400 | 0.8259 | 0.7248 | 0.7816 |
| 20 | 245 | 0.8583 | 0.9386 | 0.7099 | 0.8199 | 0.7899 | 0.8233 |
| 21 | 246 | 0.8437 | 0.8795 | 0.6677 | 0.7901 | 0.8241 | 0.8010 |
| 22 | 247 | 0.7900 | 0.9467 | 0.6273 | 0.8083 | 0.7662 | 0.7877 |
| 23 | 256 | 0.8666 | 0.8831 | 0.6832 | 0.8000 | 0.8179 | 0.8102 |
| 24 | 257 | 0.7893 | 0.9472 | 0.6262 | 0.8211 | 0.7539 | 0.7875 |
| 25 | 267 | 0.7838 | 0.9050 | 0.5934 | 0.8221 | 0.7923 | 0.7793 |
| 26 | 345 | 0.8619 | 0.9423 | 0.7409 | 0.8585 | 0.7746 | 0.8356 |
| 27 | 346 | 0.8436 | 0.8831 | 0.7088 | 0.8423 | 0.8076 | 0.8171 |
| 28 | 347 | 0.7904 | 0.9455 | 0.6525 | 0.8116 | 0.7634 | 0.7927 |
| 29 | 356 | 0.8661 | 0.8904 | 0.7187 | 0.8650 | 0.8026 | 0.8286 |
| 30 | 357 | 0.7894 | 0.9546 | 0.6640 | 0.8298 | 0.7577 | 0.7991 |
| 31 | 367 | 0.7833 | 0.9169 | 0.6281 | 0.8308 | 0.7773 | 0.7873 |
| 32 | 456 | 0.8718 | 0.8799 | 0.6992 | 0.8297 | 0.8131 | 0.8187 |
| 33 | 457 | 0.7897 | 0.9402 | 0.6435 | 0.8140 | 0.7690 | 0.7913 |
| 34 | 467 | 0.7854 | 0.9060 | 0.6181 | 0.8071 | 0.7904 | 0.7814 |
| 35 | 567 | 0.7844 | 0.9098 | 0.6095 | 0.8027 | 0.7869 | 0.7787 |
| 36 | RGB | 0.8086 | 0.9431 | 0.7578 | 0.7679 | 0.7789 | 0.8113 |
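The "Multi" entry for static parameters in the summary table appears to be the best score per video over the 35 three-band combinations in the table above: the column maxima are 0.8718 (456), 0.9546 (357), 0.7921 (134), 0.8650 (356) and 0.8241 (246), and their mean is 0.8615. The short Python check below reproduces this from the table values. It is a consistency check on the published numbers, not the authors' code; the metric itself is not named in this excerpt, and the variable and function names are hypothetical.

```python
# Scores for Videos 1-5 per 3-band combination, copied from the table above.
TRIPLES = {
    "123": (0.6505, 0.9422, 0.7733, 0.8037, 0.7211), "124": (0.8355, 0.9420, 0.7516, 0.8065, 0.7864),
    "125": (0.8342, 0.9450, 0.7515, 0.8148, 0.7734), "126": (0.7104, 0.8950, 0.7082, 0.8047, 0.8154),
    "127": (0.7739, 0.9396, 0.6558, 0.8071, 0.7247), "134": (0.8421, 0.9461, 0.7921, 0.8513, 0.7670),
    "135": (0.8402, 0.9538, 0.7838, 0.8350, 0.7622), "136": (0.7017, 0.9040, 0.7417, 0.8381, 0.8031),
    "137": (0.7764, 0.9463, 0.6908, 0.8354, 0.7113), "145": (0.8636, 0.9440, 0.7689, 0.8475, 0.7757),
    "146": (0.8519, 0.8952, 0.7296, 0.8435, 0.8132), "147": (0.7932, 0.9488, 0.6881, 0.8084, 0.7480),
    "156": (0.8705, 0.9038, 0.7564, 0.8440, 0.8091), "157": (0.7943, 0.9538, 0.6908, 0.8252, 0.7361),
    "167": (0.7839, 0.9302, 0.6518, 0.8105, 0.7832), "234": (0.8358, 0.9434, 0.7418, 0.8168, 0.7810),
    "235": (0.8339, 0.9448, 0.7345, 0.8180, 0.7657), "236": (0.6959, 0.8849, 0.6880, 0.8104, 0.8075),
    "237": (0.7714, 0.9459, 0.6400, 0.8259, 0.7248), "245": (0.8583, 0.9386, 0.7099, 0.8199, 0.7899),
    "246": (0.8437, 0.8795, 0.6677, 0.7901, 0.8241), "247": (0.7900, 0.9467, 0.6273, 0.8083, 0.7662),
    "256": (0.8666, 0.8831, 0.6832, 0.8000, 0.8179), "257": (0.7893, 0.9472, 0.6262, 0.8211, 0.7539),
    "267": (0.7838, 0.9050, 0.5934, 0.8221, 0.7923), "345": (0.8619, 0.9423, 0.7409, 0.8585, 0.7746),
    "346": (0.8436, 0.8831, 0.7088, 0.8423, 0.8076), "347": (0.7904, 0.9455, 0.6525, 0.8116, 0.7634),
    "356": (0.8661, 0.8904, 0.7187, 0.8650, 0.8026), "357": (0.7894, 0.9546, 0.6640, 0.8298, 0.7577),
    "367": (0.7833, 0.9169, 0.6281, 0.8308, 0.7773), "456": (0.8718, 0.8799, 0.6992, 0.8297, 0.8131),
    "457": (0.7897, 0.9402, 0.6435, 0.8140, 0.7690), "467": (0.7854, 0.9060, 0.6181, 0.8071, 0.7904),
    "567": (0.7844, 0.9098, 0.6095, 0.8027, 0.7869),
}
RGB = (0.8086, 0.9431, 0.7578, 0.7679, 0.7789)


def best_per_video(table):
    """For each video, return (combination, score) of the highest-scoring combination."""
    n_videos = len(next(iter(table.values())))
    return [max(((combo, scores[k]) for combo, scores in table.items()), key=lambda cs: cs[1])
            for k in range(n_videos)]


best = best_per_video(TRIPLES)
best_mean = sum(score for _, score in best) / len(best)
rgb_mean = sum(RGB) / len(RGB)
print(best, round(best_mean, 4), round(rgb_mean, 4))
```

Note that the per-video best comes from a different band triple for each video, so this "Multi" figure is an upper bound over band selections rather than the score of one fixed triple.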

| B+SD | 3 Bands | 4 Bands | 5 Bands | 6 Bands | 7 Bands | RGB |
|---|---|---|---|---|---|---|
| Video 1 | 0.7995 | 0.8046 | 0.8060 | 0.8043 | 0.7983 | 0.4789 |
| Video 2 | 0.9615 | 0.9624 | 0.9636 | 0.9643 | 0.9631 | 0.9535 |
| Video 3 | 0.9231 | 0.9248 | 0.9204 | 0.9051 | 0.8381 | 0.9188 |
| Video 4 | 0.8981 | 0.9001 | 0.8999 | 0.8918 | 0.8856 | 0.8871 |
| Video 5 | 0.9171 | 0.9198 | 0.9190 | 0.9189 | 0.9110 | 0.9130 |
| Mean | 0.8999 | 0.9023 | 0.9018 | 0.8969 | 0.8792 | 0.8303 |

| B+SID | 3 Bands | 4 Bands | 5 Bands | 6 Bands | 7 Bands | RGB |
|---|---|---|---|---|---|---|
| Video 1 | 0.9208 | 0.9219 | 0.9059 | 0.8676 | 0.7883 | 0.6355 |
| Video 2 | 0.9538 | 0.9526 | 0.9504 | 0.9471 | 0.9451 | 0.9479 |
| Video 3 | 0.8939 | 0.8914 | 0.8825 | 0.8766 | 0.8351 | 0.8867 |
| Video 4 | 0.8784 | 0.8807 | 0.8783 | 0.8728 | 0.8558 | 0.8217 |
| Video 5 | 0.8765 | 0.8801 | 0.8842 | 0.8425 | 0.7855 | 0.8447 |
| Mean | 0.9047 | 0.9053 | 0.9003 | 0.8813 | 0.8420 | 0.8273 |

| B+SD+SID | 3 Bands | 4 Bands | 5 Bands | 6 Bands | 7 Bands | RGB |
|---|---|---|---|---|---|---|
| Video 1 | 0.9144 | 0.9147 | 0.8971 | 0.8607 | 0.7727 | 0.6555 |
| Video 2 | 0.9614 | 0.9623 | 0.9635 | 0.9642 | 0.9631 | 0.9535 |
| Video 3 | 0.9213 | 0.9180 | 0.8938 | 0.8634 | 0.8045 | 0.9054 |
| Video 4 | 0.8968 | 0.8979 | 0.8972 | 0.8885 | 0.8821 | 0.8867 |
| Video 5 | 0.8791 | 0.8800 | 0.8948 | 0.8459 | 0.7853 | 0.8543 |
| Mean | 0.9146 | 0.9146 | 0.9093 | 0.8845 | 0.8415 | 0.8511 |

| Mechanism | Criteria | Sequences | Video 1 | Video 2 | Video 3 | Video 4 | Video 5 | Mean |
|---|---|---|---|---|---|---|---|---|
| Static parameters | B+SD | RGB | 0.8086 | 0.9431 | 0.7578 | 0.7679 | 0.7789 | 0.8113 |
| Static parameters | B+SD | Multi | 0.8718 | 0.9546 | 0.7921 | 0.8650 | 0.8241 | 0.8615 |
| Self-adaptive mechanism | B+SD | RGB | 0.4789 | 0.9535 | 0.9188 | 0.8871 | 0.9130 | 0.8303 |
| Self-adaptive mechanism | B+SD | Multi | 0.8060 | 0.9643 | 0.9248 | 0.9001 | 0.9198 | 0.9030 |
| Self-adaptive mechanism | B+SID | RGB | 0.6355 | 0.9479 | 0.8867 | 0.8217 | 0.8447 | 0.8273 |
| Self-adaptive mechanism | B+SID | Multi | 0.9219 | 0.9538 | 0.8939 | 0.8807 | 0.8842 | 0.9069 |
| Self-adaptive mechanism | B+SD+SID | RGB | 0.6555 | 0.9535 | 0.9054 | 0.8867 | 0.8543 | 0.8511 |
| Self-adaptive mechanism | B+SD+SID | Multi | 0.9147 | 0.9642 | 0.9213 | 0.8979 | 0.8948 | 0.9186 |
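Each "Multi" row of the self-adaptive mechanism in this summary appears to take, for every video, the best score over 3 to 7 bands from the corresponding band table (B+SD, B+SID and B+SD+SID). The Python check below recomputes these per-video maxima and their means (0.9030, 0.9069 and 0.9186) from the band tables. It is a consistency check on the published numbers, not part of the authors' method, and the names `SELF_ADAPTIVE` and `multi_row` are hypothetical.

```python
# Rows are Videos 1-5; columns are 3, 4, 5, 6 and 7 bands (RGB column omitted),
# copied from the three self-adaptive band tables.
SELF_ADAPTIVE = {
    "B+SD": [
        (0.7995, 0.8046, 0.8060, 0.8043, 0.7983),
        (0.9615, 0.9624, 0.9636, 0.9643, 0.9631),
        (0.9231, 0.9248, 0.9204, 0.9051, 0.8381),
        (0.8981, 0.9001, 0.8999, 0.8918, 0.8856),
        (0.9171, 0.9198, 0.9190, 0.9189, 0.9110),
    ],
    "B+SID": [
        (0.9208, 0.9219, 0.9059, 0.8676, 0.7883),
        (0.9538, 0.9526, 0.9504, 0.9471, 0.9451),
        (0.8939, 0.8914, 0.8825, 0.8766, 0.8351),
        (0.8784, 0.8807, 0.8783, 0.8728, 0.8558),
        (0.8765, 0.8801, 0.8842, 0.8425, 0.7855),
    ],
    "B+SD+SID": [
        (0.9144, 0.9147, 0.8971, 0.8607, 0.7727),
        (0.9614, 0.9623, 0.9635, 0.9642, 0.9631),
        (0.9213, 0.9180, 0.8938, 0.8634, 0.8045),
        (0.8968, 0.8979, 0.8972, 0.8885, 0.8821),
        (0.8791, 0.8800, 0.8948, 0.8459, 0.7853),
    ],
}


def multi_row(rows):
    """Best score per video over all band counts, plus the mean of those bests."""
    best = [max(row) for row in rows]
    return best, sum(best) / len(best)


summary = {criterion: multi_row(rows) for criterion, rows in SELF_ADAPTIVE.items()}
for criterion, (best, mean) in summary.items():
    print(criterion, best, round(mean, 4))
```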

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Liu, R.; Ruichek, Y.; El Bagdouri, M. Extended Codebook with Multispectral Sequences for Background Subtraction. Sensors 2019, 19, 703. https://doi.org/10.3390/s19030703
