Accuracy Evaluation of Videogrammetry Using A Low-Cost Spherical Camera for Narrow Architectural Heritage: An Observational Study with Variable Baselines and Blur Filters

Abstract

1. Introduction

2. Materials and Methods

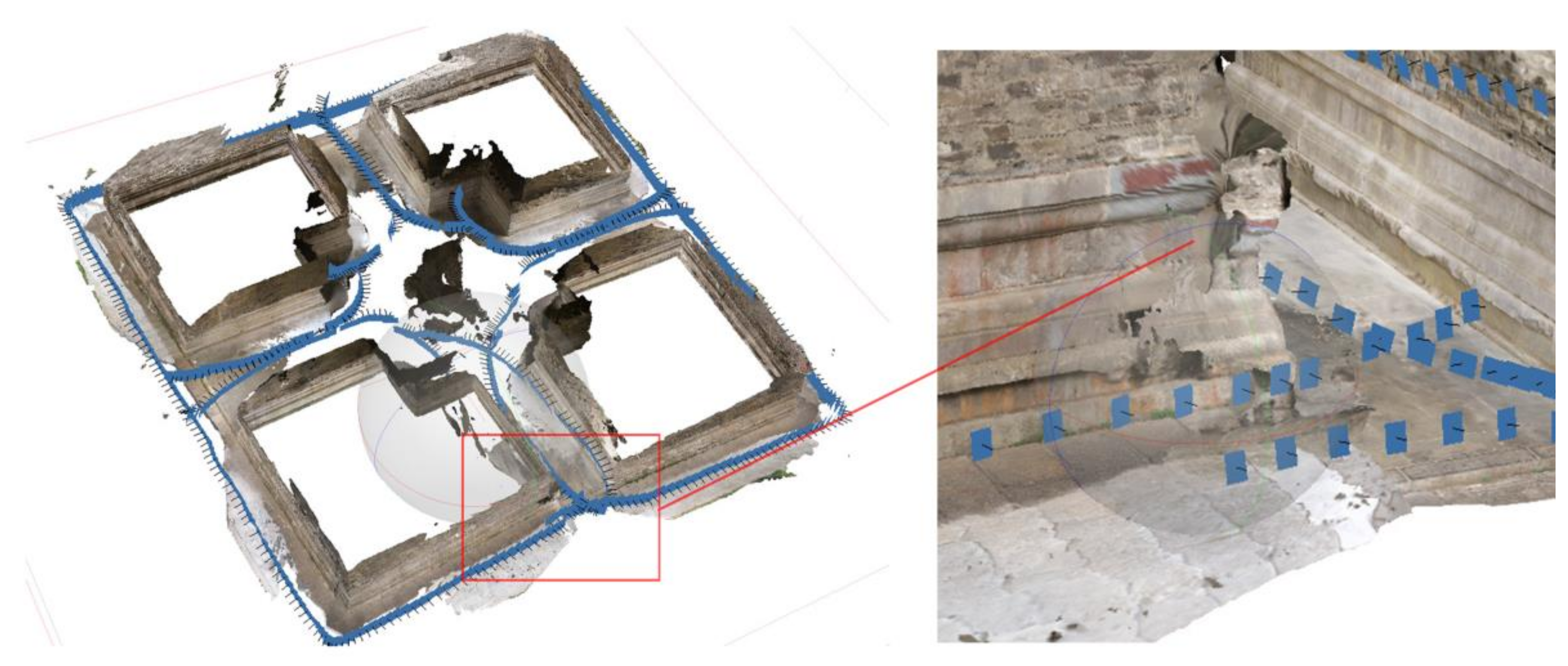

2.1. Studied Sites

2.2. Video Capture

2.3. 3D Reconstruction with Variable Baselines and Blur Filters

- Camera type: spherical;

- Align photos: default (accuracy: medium; key point limit: 40,000; tie point limit: 4000);

- Build dense cloud: default (quality: medium; depth filtering: aggressive).

2.3.1. Frame Extraction Ratio

2.3.2. Blur Assessment Methods

2.4. Accuracy Assessments with GTMs

- The GTMs and the tested datasets were not exactly corresponding to each other in terms of completeness and density;

- The deviations of the tested groups to the GTMs may not follow a Gaussian distribution.
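
Given these two caveats, the standard deviation alone can be misleading, which is why the result tables also report mean and median absolute errors. The sketch below shows one way such statistics could be computed. It assumes the deviations have already been exported as signed point-to-GTM distances in centimeters; the function name `summarize_deviations` and the sample values are hypothetical and are not taken from the study.

```python
import statistics


def summarize_deviations(deviations_cm):
    """Summarize signed point-to-reference deviations given in cm.

    Assumption: the standard deviation is taken over the signed values,
    while the mean and median are taken over absolute values.
    """
    abs_dev = [abs(d) for d in deviations_cm]
    return {
        "std_cm": statistics.pstdev(deviations_cm),
        "mean_abs_cm": statistics.fmean(abs_dev),
        "median_abs_cm": statistics.median(abs_dev),
    }


# Hypothetical deviations with one gross outlier (30 cm).
sample = [-1.0, 0.5, 2.0, -0.5, 30.0]
summary = summarize_deviations(sample)
print(summary)
```

With a single outlier in the sample, the median absolute error stays close to the typical deviation, whereas the mean and the standard deviation are pulled upward. This is the behavior that makes the median useful when the distribution is not Gaussian.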

3. Results

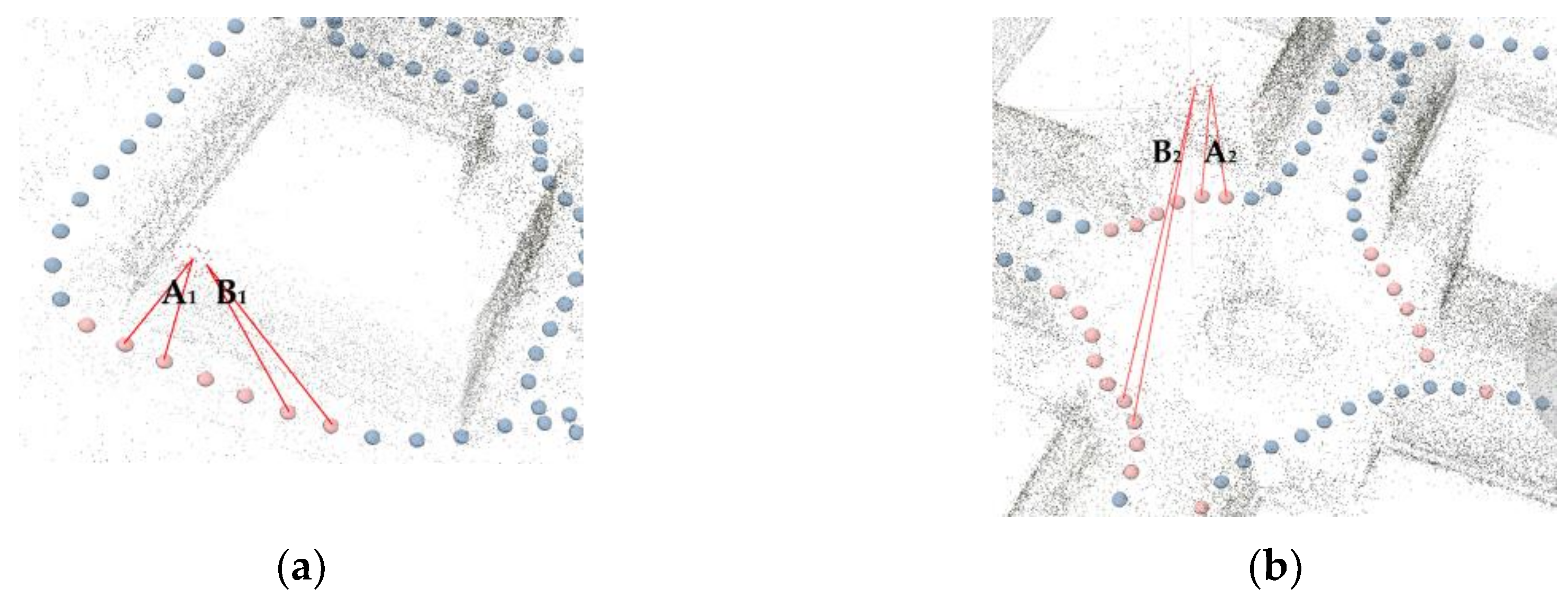

3.1. Impact of Baselines

3.2. Impact of Blur Filters

4. Discussion

4.1. Potential Applications

4.2. Results Analysis and Future Developments

5. Conclusions

- Videogrammetry with consumer-level spherical cameras is a robust method for surveying narrow architectural heritage, where the use of other optical measurement technologies (e.g., TLS, MMS, and perspective-camera photogrammetry/videogrammetry) is limited. The wide FOV and manageable frame extraction ratios make frame alignment robust to scene and lighting variations. The method is low-cost, portable, fast, and easy to use, even for non-expert users.

- The achieved metric accuracy is at the centimeter level, corresponding to a relative accuracy of 1/500–1/2000 in both test datasets. Although this is not comparable to the accuracy achieved with TLS or photogrammetry (coupled with precise GCPs and image processing), it is close to that achieved with MMS and suits surveying and mapping at medium accuracy and resolution within short time frames. This level of accuracy, together with low cost and portability, makes it a promising method for surveying narrow architectural heritage in extreme conditions, such as remote areas.

- Baselines and blur filters are crucial factors for the accuracy of 3D reconstruction. Consistent correlations between baseline and accuracy, such as those reported for perspective cameras, were not observed in the tests. Relatively short baselines (<1 m) yield point clouds with more noise, but longer baselines do not necessarily lead to higher accuracy. An optimal frame extraction strategy for videos from spherical cameras should consider radial distortions, degenerate cases, and the required point density. Both blur filters had a positive impact on accuracy in the tests: substituting blurred frames with adjacent sharp frames can reduce global errors by 5–15%.

- Future developments will involve testing of different strategies for façades and for interiors, more layouts of architectural heritage, video processing algorithms, and emerging imaging sensors.
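
The idea of substituting blurred frames with adjacent sharp frames, mentioned in the conclusions above, can be sketched as follows. This is a minimal illustration, not either of the two filters evaluated in the study. The per-frame sharpness scores, the threshold, the search window, and the function name `substitute_blurred` are all assumptions introduced for the example.

```python
def substitute_blurred(sharpness, step, threshold, window):
    """Select every `step`-th frame and replace blurred picks.

    sharpness: per-frame scores where higher means sharper (the metric
               itself is an assumption and is computed elsewhere).
    threshold: picks scoring below this value count as blurred.
    window:    number of neighboring frames searched on each side;
               keep it below step / 2 so that picks cannot collide.
    """
    selected = []
    for i in range(0, len(sharpness), step):
        if sharpness[i] >= threshold:
            selected.append(i)
            continue
        lo = max(0, i - window)
        hi = min(len(sharpness), i + window + 1)
        # Fall back to the sharpest frame in the neighborhood, which may
        # be the original frame if no neighbor is any sharper.
        best = max(range(lo, hi), key=lambda j: sharpness[j])
        selected.append(best)
    return selected


# Hypothetical scores for ten consecutive frames.
scores = [0.9, 0.8, 0.7, 0.2, 0.6, 0.9, 0.85, 0.4, 0.3, 0.95]
picked = substitute_blurred(scores, step=3, threshold=0.5, window=1)
print(picked)
```

Restricting the search to a small window keeps the substituted frame close to its nominal position, so the baseline between consecutive selected frames changes only slightly.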

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

| Specification | Value |
|---|---|
| Dimensions | 7.8 × 6.8 × 2.4 cm |
| Weight (batteries included) | 108 g |
| Lens | f/2.0 |
| Sensor size | 1/2.3 inch (6.17 × 4.55 mm) |
| Photography resolution | 6912 × 3456 pixels |
| Videography resolution | 2304 × 1152 pixels, 60 fps; 3840 × 1920 pixels, 30 fps |
| Format | Photo: DNG, JPG; Video: MPEG-4, H.264 |

| Metric | B10(222) | B20(111) | B30(74) | B40(56) |
|---|---|---|---|---|
| RMS reprojection error (pixel) | 1.30 | 1.14 | 1.12 | 1.03 |
| Standard deviation (cm) | ±12.23 | ±12.26 | ±9.44 | ±9.20 |
| Mean absolute error (cm) | 8.91 | 9.30 | 6.21 | 6.39 |
| Median absolute error (cm) | 5.70 | 6.41 | 4.38 | 3.70 |

| Metric | B10(571) | B20(286) | B30(191) | B40(143) | B50(115) |
|---|---|---|---|---|---|
| RMS reprojection error (pixel) | 2.25 | 1.62 | 1.54 | 1.41 | 1.53 |
| Standard deviation (cm) | ±10.26 | ±4.29 | ±5.53 | ±5.58 | ±5.02 |
| Mean absolute error (cm) | 6.98 | 2.41 | 2.71 | 3.03 | 3.30 |
| Median absolute error (cm) | 3.93 | 1.38 | 1.53 | 1.53 | 2.11 |
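
The column labels in the two baseline tables above pair a group name (B10, B20, ...) with a frame count in parentheses. Those counts are consistent with keeping one frame out of every 10, 20, 30, 40, or 50 video frames. The sketch below illustrates that reading. It rests on two assumptions that are not stated in the text: the interpretation of the label as an extraction ratio, and the total frame counts of 2220 and 5710, which were chosen only because they reproduce the numbers in parentheses.

```python
def extract_frame_indices(total_frames, ratio):
    """Return the indices kept when one frame is taken every `ratio` frames."""
    return list(range(0, total_frames, ratio))


# Assumed totals (not from the source) that reproduce the tabulated counts.
datasets = {
    2220: (10, 20, 30, 40),
    5710: (10, 20, 30, 40, 50),
}

counts = {
    total: {f"B{r}": len(extract_frame_indices(total, r)) for r in ratios}
    for total, ratios in datasets.items()
}
print(counts)
```

Under this reading, doubling the extraction ratio roughly halves the number of frames and doubles the average baseline between consecutive frames, which is the trade-off the tables explore.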

| Metric | Raw | Fps | Fbm |
|---|---|---|---|
| RMS reprojection error (pixel) | 1.12 | 0.95 | 0.94 |
| Standard deviation (cm) | ±9.44 | ±9.09 | ±10.15 |
| Mean absolute error (cm) | 6.21 | 6.06 | 6.90 |
| Median absolute error (cm) | 4.38 | 3.74 | 4.38 |

| Metric | Raw | Fps | Fbm |
|---|---|---|---|
| RMS reprojection error (pixel) | 1.54 | 1.34 | 1.41 |
| Standard deviation (cm) | ±5.53 | ±4.06 | ±4.35 |
| Mean absolute error (cm) | 2.71 | 2.39 | 2.54 |
| Median absolute error (cm) | 1.53 | 1.42 | 1.53 |

| Method | Stupa (min) | Pavilion (min) |
|---|---|---|
| Spherical-camera videogrammetry | 2 | 4 |
| TLS (i.e., Leica BLK 360) | ca. 60 | 120 |
| Perspective-camera photogrammetry | ca. 60–120 | ca. 100–200 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Sun, Z.; Zhang, Y. Accuracy Evaluation of Videogrammetry Using A Low-Cost Spherical Camera for Narrow Architectural Heritage: An Observational Study with Variable Baselines and Blur Filters. Sensors 2019, 19, 496. https://doi.org/10.3390/s19030496
