Abstract

Over the past decades, multispectral palmprint recognition technologies have attracted increasing attention because they offer richer spatial and spectral characteristics than the single-spectrum case. Motivated by this, we put forward a robust L2 sparse representation with tensor-based extreme learning machine (RL2SR-TELM) algorithm, which uses an adaptive image level fusion strategy to accomplish multispectral palmprint recognition. Firstly, we construct a robust L2 sparse representation (RL2SR) optimization model to calculate the linear representation coefficients. To suppress the effect of noise contamination, we introduce a logistic function into the RL2SR model to evaluate the representation residual. Secondly, we propose a novel weighted sparse and collaborative concentration index (WSCCI) to calculate the fusion weight adaptively. Finally, we put forward a TELM approach to carry out the classification task. It can deal with high-dimensional data directly and preserves the spatial information of the image well. Extensive experiments are implemented on the benchmark multispectral palmprint database provided by PolyU. The experimental results validate that our RL2SR-TELM algorithm outperforms a number of state-of-the-art multispectral palmprint recognition algorithms, both when the images are noise-free and when they are contaminated by different noises.

1. Introduction

Palmprint recognition has emerged as a biometric approach and has attracted increasing attention in recent years. In comparison with some other biological features (e.g., the iris and fingerprints), palmprints have a larger collection area with more abundant information. Besides, palmprints possess the characteristics of uniqueness, stability, scalability and non-contact acquisition. As a consequence, they have strong anti-noise capability and efficient discrimination performance.

Current palmprint recognition algorithms can be mainly categorized into subspace-based methods, feature-based methods and sparse representation-based classification (SRC) methods. The subspace-based methods [1,2,3,4,5,6,7,8,9,10] adopt dimension reduction theory to accomplish the feature space transformation. This can reduce the data complexity and efficiently improve the discrimination of image characteristics. The conventional subspace transformation methods mainly include principal component analysis (PCA) [1], linear discriminant analysis (LDA) [4] and independent component analysis (ICA) [7]. However, due to their sensitivity to lighting, noise and other contaminations, the conventional linear methods no longer meet the requirements of practical palmprint recognition problems. To address these issues, a nonlinear spatial structure transformation technique, namely the kernel PCA method [9,10], was introduced into the palmprint recognition field. In addition, many feature-based methods have been presented to implement palmprint recognition tasks. For instance, the coding-based method [11,12,13,14,15,16,17] has been extensively researched in the past decades. In these studies, the palmprint features were extracted by coding the results of some filtering operations. The common coding methods include binarized statistical image features (BSIF) [13], double-orientation code (DOC) [14] and block dominate orientation code [17]. Minaee et al. [18] proposed a palmprint recognition algorithm based on the deep scattering network, which achieved fine recognition performance. Some other feature-based methods [19,20,21,22,23,24] mainly take advantage of statistical characteristics, such as the mean, variance and covariance, to implement palmprint recognition.
In recent years, linear representation methods based on sparse theory [25] have been proposed and widely applied to the palmprint recognition problem [26,27,28,29,30,31]. These methods consider a testing sample as a linear representation of the training set. That is, a given testing sample is expected to be approximately expressed by the training samples belonging to a single class. This can be effectively accomplished by imposing a sparseness constraint on the approximate representation with the training samples.

For the sake of higher recognition accuracy, some multispectral palmprint recognition methods [32,33,34,35,36,37,38,39,40,41,42,43,44] have been studied. Because the collected images under different spectra contain more plentiful feature information, the recognition rate can be effectively improved. In these studies, different fusion strategies were utilized to increase the recognition accuracy. The conventional multispectral palmprint recognition methods can be mainly categorized into image level fusion strategies and matching score level fusion strategies. The basic idea of image level fusion is to decompose the images under different spectra at the start, then integrate these separated decompositions for a compound approximation and reconstruct the fusion image through the inverse transformation to implement the recognition task. Based on this, Han et al. [32] used the discrete wavelet transform (DWT) method to decompose palmprint images acquired under different spectra, and then reconstructed the fused palmprint image to accomplish the multispectral palmprint recognition. Xu et al. [37] introduced the quaternion matrix to represent the palmprint images under different spectra, and then extended PCA and DWT into the quaternion domain to implement feature extraction. Finally, the Euclidean distance was used to perform the recognition task. Gumaei et al. [38] employed an autoencoder with the regularized extreme learning machine (AE-RELM) to accomplish the multispectral palmprint recognition and effectively improve the accuracy. Xu et al. [39] presented a novel multispectral palmprint recognition algorithm. They used the digital shearlet transform (DST) to implement the image fusion and proposed a multiclass projection ELM (MPELM) to accomplish the classification task. 
For the score level fusion method, the matching scores are first obtained separately by a comparator for the different spectral bands, then the obtained matching scores are fused by some rules and the classification is accomplished based on the fused score. Zhang et al. [41] presented a novel algorithm named line orientation-based coding (LOC) to extract the features of the palmprint images under different spectra, and then carried out the recognition task with a matching level fusion rule. Minaee et al. [42] used the co-occurrence matrix to extract the texture features, then employed the minimum distance classifier (MDC) and weighted majority voting system (WMV) to accomplish the multispectral palmprint recognition. Minaee et al. [43] presented a set of wavelet-DCT features for multispectral palmprint recognition. Although many achievements have been made in the study of multispectral palmprint recognition, there are still many open questions that need to be further studied, for example, how to increase the recognition accuracy when the collected images are contaminated by different noises.

Inspired by these studies, in this article we present a novel robust L2 sparse representation with a tensor-based extreme learning machine (RL2SR-TELM) algorithm that uses an adaptive image level fusion strategy to accomplish multispectral palmprint recognition. The key contributions of our algorithm can be summarized as follows. Firstly, a robust L2 norm-based sparse representation model is constructed to calculate the linear representation coefficients. It overcomes the high computational complexity of L1 norm regularization and the lack of robustness to noise contamination. Secondly, an adaptive weighted method is presented to accomplish the fusion of multispectral palmprint images at the image level. In this method, a weighted sparse and collaborative concentration index (WSCCI) is proposed that can efficiently quantify the discrimination of the multispectral palmprint images. By using the robust sparse coefficients and WSCCI, an adaptive weighted fusion strategy is proposed to reconstruct the fused palmprint image. Finally, aiming at the high order signal classification problem, we extend the conventional ELM [45] into the tensor space and put forward a novel TELM method. It inherits the advantages of the conventional ELM (i.e., excellent learning speed and generalization performance) and achieves an outstanding recognition efficiency.

The rest of this paper is organized as follows. In Section 2, we introduce the principle of the multispectral palmprint acquisition device. We then discuss our proposed RL2SR-TELM algorithm in Section 3. In Section 4, the simulation experiments and the analysis of their results are presented in detail. Section 5 concludes this paper.

2. Acquisition Device of Multispectral Palmprint Images

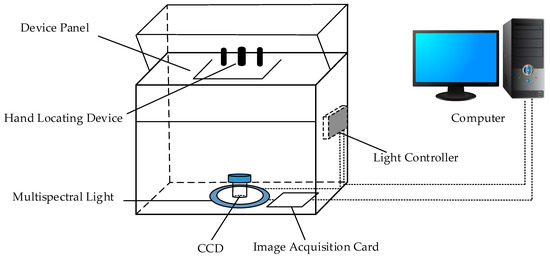

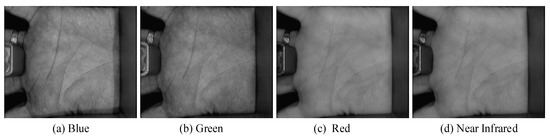

The Biometrics Research Centre (BRC) of Hong Kong Polytechnic University (PolyU) has developed an acquisition device [46] for multispectral palmprints. It can collect palmprint images under the Blue, Green, Red and Near Infrared (NIR) spectra, respectively. Figure 1 illustrates the principle of the acquisition device. It mainly includes a multispectral light source module, a light source control module, a CCD imaging sensor, an image acquisition module (A/D conversion module) and an image display module. The multispectral light source module is located at the bottom of the device and consists of four monochromatic light sources. The light source control module controls the multispectral light and enables the CCD imaging sensor to acquire palmprint images under different spectra. The image acquisition module captures the multispectral palmprint images and converts each analog image into a digital one by A/D conversion. Figure 2 shows the palmprint images acquired under different spectra.

Figure 1. Principle of the multispectral palmprint acquisition device.

Figure 2. Palmprint images acquired under different spectra.

3. Proposed Algorithm

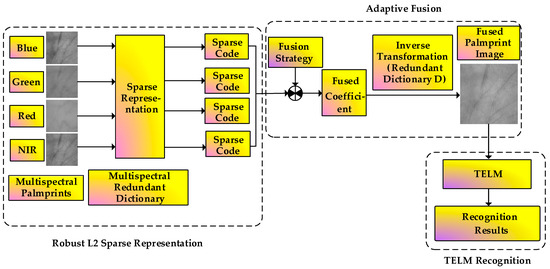

Figure 3 illustrates the flowchart of the presented RL2SR-TELM algorithm. It can be mainly separated into the following steps: Firstly, the acquired multispectral palmprint image is preprocessed to obtain the region of interest (ROI) of the image. Then, we calculate the sparse representation coefficients of sample images under different spectra by utilizing the proposed robust L2 sparse representation method. After that, an adaptive weighted fusion strategy is presented to obtain the fused images. Finally, by integrating the tensor theory with ELM, we propose a TELM method to complete the recognition task.

Figure 3. Flowchart of the proposed RL2SR-TELM algorithm.

3.1. Robust L2 Sparse Representation Method

3.1.1. SRC Model

The sparse representation idea was introduced into the biometric recognition for the first time in 2009 by Wright et al. Given the training set matrix denoted as , where is a training sample, and denote the training sample dimension and number, respectively. For any given testing sample , we suppose that it can be coded over the training matrix approximately, then the SRC model can be described as:

where is the representation coefficient, denotes the L0 norm, which counts the number of nonzero elements of the vector. The objective of SRC is to find a coefficient that is as sparse as possible while still representing the testing sample over the training set. Model (1) is an NP-hard problem and is computationally intractable in general. Reference [47] has proved that when the representation coefficient is sparse enough, the L0 norm can be approximately represented by using the L1 norm. On the basis of this theory, Wright et al. proposed the following model:

This is a classical model and it has been extensively used in various areas, including image reconstruction, image de-noising, compressive sensing and machine learning. Although many scholars have devoted themselves to this algorithm and proposed many improvements, the drawback of inefficiency is still not completely resolved.
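For reference, the two models discussed above are usually written in the literature in the following form. The notation is an assumption, since the symbols of the original equations are not reproduced here: $X$ stands for the training matrix, $y$ for the testing sample and $\alpha$ for the representation coefficient. In practice the equality constraint is often relaxed to a bounded residual $\|y - X\alpha\|_2 \le \varepsilon$.

```latex
\begin{align}
\hat{\alpha} &= \arg\min_{\alpha} \|\alpha\|_0 \quad \text{s.t.} \quad y = X\alpha \tag{1} \\
\hat{\alpha} &= \arg\min_{\alpha} \|\alpha\|_1 \quad \text{s.t.} \quad y = X\alpha \tag{2}
\end{align}
```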

3.1.2. Robust L2 Sparse Representation Method

An SRC model implicitly supposes that the coding residual follows a Gaussian or Laplacian distribution. However, this assumption is not always accurate enough in practice. In addition, SRC needs to solve an L1 regularization problem, which makes it slow. To address these drawbacks, many researchers have proposed improved SRC algorithms. For example, Yang et al. [48] proposed a novel sparse representation method that solved the sparse representation problem by using the maximum likelihood estimation (MLE) method. It can deal with occlusion and outliers more robustly. Xu et al. [49] made use of L2 regularization to acquire the sparse coefficient and proposed a new discriminative sparse representation method (DSRM). Inspired by these ideas, we propose a novel robust L2 regularization based sparse representation method, namely RL2SR.

Suppose that there are different spectral bands, the class number of each spectral palmprint is and each class has training samples. Thus, there are training samples for each spectrum. Vectorize the training sample into the dimensional column vector, then the training sample matrix can be denoted as , where is the training sample sub-matrix of the i-th class, are the (m×i)-th training samples under different spectra. Then, given any testing sample , where denotes the spectral bands, we can construct the following optimization problem:

where is a constant namely regularization parameter which can balance the representation residual term and the regularization term. Here, is the linear representation coefficient with respect to the testing sample over the training set.

For the first term of the optimization function (3), it can be denoted as , where . Then:

where denotes the residual term with respect to the element between and its approximate linear representation . and are the k-th element of the testing sample and the row of the training set matrix, respectively. In general, the residual function is designed to minimize the effect generated by the occlusion and outliers. Huber, Cauchy and Welsch functions can be used to express the residual function. In reference [48], Yang et al. utilized the logistic function to describe the residual information and got satisfactory performance. The logistic function can be expressed as follows:

where and are positive parameters. The selection of parameters and will be discussed in Section 4.2. In order to solve problem (3), we take the derivative with respect to Ai, which gives:

Furthermore, since , where denotes the residual function, Equation (6) can be regarded as the derivative of .

By using Equation (5), the residual function can be calculated as follows:

For the residual matrix , the following method is proposed to calculate it:

Step 1: Initialize and calculate the collaborative code of each testing sample by using the collaborative representation model.

Step 2: Substitute the collaborative residual into Equation (7) and obtain the residual matrix .

Step 3: If has not converged, repeat Steps 1 and 2; otherwise output .
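The three steps above can be sketched as an iteratively reweighted ridge regression. This is a minimal illustration under stated assumptions, not the authors' implementation: the logistic weight is assumed to have the form 1 / (1 + exp(mu * (e^2 - delta))), in the style of Yang et al. [48], so that the weight equals 0.5 when the squared residual equals `delta`; `delta` is taken as a quantile of the squared residuals and `mu = c / delta`. The names `logistic_weights`, `reweighted_code`, `lam`, `c` and `tau`, and their default values, are hypothetical.

```python
import numpy as np

def logistic_weights(sq_residual, mu, delta):
    """Assumed logistic weight: 1 / (1 + exp(mu * (e^2 - delta))), computed stably."""
    z = mu * (sq_residual - delta)
    return 0.5 * (1.0 - np.tanh(0.5 * z))

def reweighted_code(X, y, lam=1e-3, c=8.0, tau=0.8, n_iter=20, tol=1e-6):
    """Alternate a weighted ridge solve and a weight update.

    X: (d, n) training matrix, y: (d,) testing sample.
    lam, c and tau are illustrative values, not taken from the paper.
    """
    d, n = X.shape
    w = np.ones(d)  # Step 1: start from uniform weights
    for _ in range(n_iter):
        Xw = X * w[:, None]
        # Weighted collaborative code: (X^T W X + lam I)^-1 X^T W y
        alpha = np.linalg.solve(X.T @ Xw + lam * np.eye(n), Xw.T @ y)
        # Step 2: squared residual per element, then logistic weights
        e2 = (y - X @ alpha) ** 2
        delta = max(np.quantile(e2, tau), 1e-12)  # weight is 0.5 at e2 == delta
        w_new = logistic_weights(e2, c / delta, delta)
        # Step 3: stop once the weights no longer change
        if np.max(np.abs(w_new - w)) < tol:
            w = w_new
            break
        w = w_new
    return alpha, w
```

Elements with a large residual, such as occluded or noisy pixels, receive a weight close to zero and therefore have little influence on the next solve.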

With the residual matrix calculated, Equation (3) can be rewritten as follows

Because of the parameter , the coefficient in front of the first term can be omitted, and Equation (8) becomes:

For the second term of the objective function, SRC [25] adopted the L1 norm to enforce the sparseness of the linear representation coefficient. In general, an iterative algorithm is employed to solve the L1 norm regularization based sparse representation problem. There are many well-known algorithms [50] for this purpose, such as L1 regularized least squares (L1LS), the homotopy method, the augmented Lagrangian method (ALM), the orthogonal matching pursuit method (OMP) [51] and the fast iterative shrinkage thresholding algorithm (FISTA). However, these methods still suffer from low efficiency. To address this issue, Zhang et al. [52] introduced collaborative representation-based classification (CRC) and utilized L2 regularization to obtain the representation coefficient. Although CRC provided an efficient algorithm, it failed to give full consideration to the sparseness of the linear representation. Reference [49] employed L2 regularization to implement face recognition by utilizing a discriminative sparse representation method. Inspired by this, an L2 regularization term is introduced into our model and a novel RL2SR model is proposed as follows:

Since:

Equation (11) can be separated into two parts. Minimizing implies that the correlation between the i-th class and j-th class is also minimal with respect to the linear representation. This makes the linear approximation combination have the best discrimination ability. Thus, the second term of Equation (11) has the capability of decorrelating the linear representation combinations of different classes. Correspondingly, minimization of the sum , instead of any individual term, accomplishes the decorrelation effect for different classes. In consequence, this approach can assign the testing sample to the truly nearest class. Minimization of means that the norm of the linear representation combination of each class is also small. Similar to existing linear representation approaches, such as SRC and CRC, there is a competitive relationship between different classes of training samples. In other words, the testing sample can be denoted by the weighted sum of the training samples from all of the classes. That is a linear representation in which every class contributes to representing the testing sample. Competition in representation implies that when one class makes an important contribution to the linear representation, the remaining classes make considerably less.
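The separation into two parts follows from expanding the squared norm of a sum. In assumed notation (the symbols of the original equation are not reproduced here), with $X_i$ the training sub-matrix of the i-th class and $\alpha_i$ the corresponding block of coefficients:

```latex
\|X_i\alpha_i + X_j\alpha_j\|_2^2
  = \|X_i\alpha_i\|_2^2 + \|X_j\alpha_j\|_2^2
  + 2\,(X_i\alpha_i)^{T}(X_j\alpha_j)
```

The cross term measures the correlation between the class-wise representations, while the two squared norms measure their magnitudes.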

The objective function shown in Equation (10) can be rewritten as:

For the first term of objective function (12), using instead of implies that is a linear approximation of the test image. That is to say, this model can tolerate considerable noise contamination. In the meantime, the residual function can measure the linear representation residual well and enhance the noise robustness of the proposed model. In order to optimize the presented model, we introduce the following theorem:

Theorem 1.

The proposed RL2SR model (12) is convex and differentiable w.r.t. coefficient, and it has a closed form solution.

Proof.

Firstly, the objective function (12) can be considered as a combination of two L2 regularization terms, i.e., and . By the properties of the L2 norm, the convexity and differentiability of the proposed model (12) can be easily proved.

Secondly, the derivative of function can be computed as follows:

On the other hand, since the second term does not contain the coefficient explicitly, we cannot compute its derivative directly. To address this issue, we compute the partial derivatives of w.r.t. . Denote , we have:

Then, we can obtain the derivative as follows:

By denoting:

we have:

As a consequence, the derivative of objective function (12) with respect to is:

By the first-order optimality condition, setting this derivative to zero yields the closed-form solution of objective function (12) as follows:

The proof of Theorem 1 is thus completed. □
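To make the closed-form solution concrete, the sketch below solves a model of the same type numerically. It is an illustration under stated assumptions rather than the paper's exact Equation (12): the residual term is assumed to be a diagonally weighted squared error with weights `w`, and the penalty is assumed to be the DSRM-style sum of ||X_i a_i + X_j a_j||^2 over all ordered class pairs (including i = j). Expanding the penalty gives 2c a^T M a + 2 a^T X^T X a, where c is the class number and M is the block-diagonal part of X^T X, so the normal equations are (X^T W X + 2*gamma*(X^T X + c*M)) a = X^T W y. The names `rl2sr_objective`, `rl2sr_closed_form` and `gamma` are hypothetical.

```python
import numpy as np

def rl2sr_objective(alpha, X, y, labels, gamma, w):
    """Assumed objective: weighted residual + gamma * pairwise class penalty."""
    classes = np.unique(labels)
    parts = [X[:, labels == c] @ alpha[labels == c] for c in classes]
    fit = np.sum(w * (y - X @ alpha) ** 2)
    penalty = sum(np.sum((p + q) ** 2) for p in parts for q in parts)
    return fit + gamma * penalty

def rl2sr_closed_form(X, y, labels, gamma=0.1, w=None):
    """Solve the normal equations of the assumed objective.

    X: (d, n) training matrix, y: (d,) testing sample,
    labels: (n,) class label of each training column, w: (d,) residual weights.
    Assumes the system matrix is nonsingular.
    """
    d, n = X.shape
    w = np.ones(d) if w is None else w
    classes = np.unique(labels)
    G = X.T @ X
    # M keeps only the within-class blocks of the Gram matrix
    M = np.zeros((n, n))
    for c in classes:
        idx = np.flatnonzero(labels == c)
        M[np.ix_(idx, idx)] = G[np.ix_(idx, idx)]
    Xw = X * w[:, None]
    A = X.T @ Xw + 2.0 * gamma * (G + len(classes) * M)
    return np.linalg.solve(A, Xw.T @ y)
```

Because the assumed objective is a convex quadratic, the solution of the linear system is its global minimizer, and no iterative L1 solver is needed.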

The proposed RL2SR method is summarized in Table 1.

Table 1. Robust L2 sparse representation algorithm.

3.2. Image Fusion Based on Adaptive Weighted Method

In this section, a weighted sparse and collaborative concentration index is introduced to quantify the discrimination of each spectral testing sample and an adaptive weighted fusion method is proposed to construct the fused palmprint image.

Definition 1.

[25] (sparse concentration index (SCI)) The SCI of a coefficient vector is defined as:

where is the class number, is an indicator function defined on which keeps the coefficients affiliated to the class and sets all the other coefficients to be zero.

Obviously, SCI = 1 implies that the training samples from a single class can express the testing sample well. On the contrary, SCI = 0 means that all of the training samples contribute equally to representing the testing sample. Therefore, SCI can measure the sparseness of the linear representation coefficient and the discrimination ability of the testing sample efficiently. If SCI = 1, the testing sample has the strongest discrimination ability and it can be easily classified into the correct class. If SCI = 0, the testing sample has the weakest discrimination ability and we cannot determine the actual class to which the testing sample belongs.

The SCI uses the L1 norm to evaluate the sparseness of the linear representation coefficient, so it cannot efficiently evaluate the coefficient obtained by our RL2SR method, which relies on L2 norm regularization and therefore reflects not only the sparseness but also the collaborative representation information of the coefficient. To address this issue, the definition of SCI is extended and a weighted sparse and collaborative concentration index, namely WSCCI, is proposed to evaluate the representation coefficient obtained by our RL2SR model.

Definition 2.

(weighted sparse and collaborative concentration index (WSCCI)) The WSCCI of a coefficient vector is defined as:

where denotes the class number, and are nonnegative parameters.

In WSCCI, the weighted fusion of the sparse and collaborative concentration index defined by the L1 norm and L2 norm is utilized to evaluate the discriminative performance of the given sample. As a consequence, it can be regarded as the weighted sum of SCI and CCI (i.e., collaborative concentration index). From the above analysis, the proposed WSCCI can be utilized to model our adaptive weighted fusion method.

The proposed adaptive weighted image fusion method can be summarized as follows:

(1) For the linear representation coefficients obtained by Equation (13), calculate the by using Equation (15).

(2) Normalize by using:

(3) Reconstruct the fused multispectral palmprint image y by using:

With the fused multispectral palmprint image obtained, TELM is proposed to implement the recognition task.
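The fusion procedure above can be sketched as follows. The SCI follows the standard definition from [25], (k * max_i ||delta_i(a)||_1 / ||a||_1 - 1) / (k - 1). Everything else is an assumption made for illustration: the collaborative index reuses the same template with the L2 norm, the WSCCI is taken as `a * SCI + b * CCI`, the weights are normalized to sum to one, and the fused image is the weighted sum of the spectral images. The function names and the default values of `a` and `b` are hypothetical.

```python
import numpy as np

def concentration_index(alpha, labels, order):
    """(k * max_i ||delta_i(alpha)|| / ||alpha|| - 1) / (k - 1) with an L1 or L2 norm."""
    classes = np.unique(labels)
    k = len(classes)
    total = np.linalg.norm(alpha, ord=order)
    if total == 0:
        return 0.0
    best = max(np.linalg.norm(alpha[labels == c], ord=order) for c in classes)
    return (k * best / total - 1.0) / (k - 1.0)

def wscci(alpha, labels, a=0.5, b=0.5):
    """Assumed WSCCI: weighted sum of the L1-based SCI and an L2-based analogue."""
    return (a * concentration_index(alpha, labels, 1)
            + b * concentration_index(alpha, labels, 2))

def fuse_spectra(images, coeffs, labels, a=0.5, b=0.5):
    """Weight each spectral image by its normalized WSCCI and sum.

    images: list of (H, W) arrays, one per spectrum.
    coeffs: list of coefficient vectors, one per spectrum.
    """
    scores = np.array([wscci(c, labels, a, b) for c in coeffs])
    if scores.sum() <= 0:
        weights = np.full(len(scores), 1.0 / len(scores))  # fall back to equal weights
    else:
        weights = scores / scores.sum()
    fused = np.tensordot(weights, np.stack(images), axes=1)
    return fused, weights
```

A spectrum whose coefficients concentrate on one class thus contributes more to the fused image than a spectrum whose coefficients are spread over many classes.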

3.3. Principle of Tensor Based ELM

ELM can be considered as a generalized single hidden layer feedforward neural network (SLFN). Since ELM randomly chooses the initial values of the hidden nodes and analytically calculates the output weights, its learning speed is extremely fast compared to conventional supervised learning algorithms (e.g., the support vector machine (SVM) [53] and the k-nearest neighbor (KNN) algorithm). In addition, its generalization ability is better than that of many back propagation neural network algorithms. In consequence, ELM has been extensively studied and widely applied in many areas (such as pattern classification, clustering analysis and regression), and plenty of research achievements have been obtained. Inspired by this idea, we present a novel TELM by extending the conventional ELM to the tensor space, so that the image can be treated as a tensor in the recognition task.

3.3.1. ELM

Given a training set with different training samples , where denotes the training sample, represents the target of sample . A classical SLFN can be defined by:

In this model, the hidden node number is and activation function is . denotes the input weight value which connects the input nodes with the hidden node. denotes the output weight value which connects the output nodes with the hidden node. denotes the bias for the hidden node. means the inner product of and . The classical SLFN can approximate the given training sample set with the minimum residual.

Obviously, Equation (18) is a system of linear equations. By introducing the concept of matrix, we can rewrite it as follows:

where:

Theorem 2.

For a given standard SLFN which possesses hidden nodes and an activation function , where is an infinitely differentiable function on the definition interval. Given a training set with different samples , where denotes the sample data and represents the target of . For any randomly assigned weight and bias , the output matrix of the hidden layer can be obtained by the pseudo-inverse and satisfies with probability one with respect to any continuous probability distribution.

For the proof of Theorem 2, readers can refer to [45]. Based on this theory, ELM can be described as follows: with the initial weight vector and the biases of the hidden layer nodes determined by random assignment, we can obtain the output matrix of the hidden layer based on the input samples. Therefore, we can transform the training procedure of ELM into a classical least squares problem of linear equations, i.e.,

We can obtain the least square solution of Equation (20) as follows:

where refers to the Moore-Penrose pseudo-inverse for matrix .
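The training procedure described above can be sketched in a few lines. This is a minimal illustration of a conventional ELM with a sigmoid activation, assuming uniformly distributed random input weights and biases; the function names and the sampling range are hypothetical choices, not taken from the paper.

```python
import numpy as np

def sigmoid(z):
    # Numerically stable logistic function
    return 0.5 * (1.0 + np.tanh(0.5 * z))

def train_elm(X, T, n_hidden, rng):
    """X: (N, d) samples, T: (N, m) targets (e.g. one-hot labels)."""
    # Input weights and biases are drawn at random and never updated
    W = rng.uniform(-1.0, 1.0, size=(X.shape[1], n_hidden))
    b = rng.uniform(-1.0, 1.0, size=n_hidden)
    H = sigmoid(X @ W + b)            # hidden layer output matrix
    beta = np.linalg.pinv(H) @ T      # least squares output weights
    return W, b, beta

def predict_elm(X, W, b, beta):
    return sigmoid(X @ W + b) @ beta
```

Only the output weights are learned, and they are obtained in a single step through the Moore-Penrose pseudo-inverse, which is why training is fast.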

3.3.2. Tensor Based ELM

Although the conventional ELM can deal well with one-dimensional signals, a two-dimensional image needs to be vectorized and processed in the one-dimensional space. This transformation easily loses the spatial structure information of the image. In order to solve this problem, we extend the conventional ELM to the tensor space and put forward a novel tensor-based ELM to deal with high-dimensional signals.

In view of the high-dimensional characteristics of the palmprint image, we regard the fused image as a second-order tensor and classify it by the proposed TELM. In our method, the high order singular value decomposition (HOSVD) algorithm [54] is utilized to decompose the fused palmprint image and construct the input weight values of the TELM model.

Given an order tensor and a matrix , we define as the modal product of and , the elements of can be calculated by:

so the m-th modal tensor product can be simply denoted by:

The HOSVD algorithm can be implemented by using the tensor product. Given an order tensor , we can use the tensor product to decompose in the following:

where denotes an order tensor which is called as a core tensor, are unitary matrices and each column is corresponding to the orthogonal basis of unfolded matrices .

The low rank approximation of tensor can be calculated by HOSVD, i.e.,

where represents the principal component core tensor, represents the truncation matrix composed by the first columns of , .
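The modal product and the HOSVD described above can be sketched as follows. This is a generic illustration of the standard definitions, not the authors' code; the helper names `unfold`, `mode_product`, `hosvd` and `reconstruct` are hypothetical. Each factor matrix holds the leading left singular vectors of the corresponding unfolded matrix, and the core tensor is obtained by multiplying the tensor with the transposed factors along every mode.

```python
import numpy as np

def unfold(tensor, mode):
    """Mode-m unfolding: rows are indexed by the chosen mode."""
    return np.moveaxis(tensor, mode, 0).reshape(tensor.shape[mode], -1)

def mode_product(tensor, matrix, mode):
    """Modal product: contracts axis `mode` of the tensor with the matrix columns."""
    out = np.tensordot(matrix, tensor, axes=(1, mode))
    return np.moveaxis(out, 0, mode)

def hosvd(tensor, ranks=None):
    """Return the core tensor and factor matrices; `ranks` truncates each mode."""
    ranks = tensor.shape if ranks is None else ranks
    factors = []
    for mode, r in enumerate(ranks):
        U = np.linalg.svd(unfold(tensor, mode), full_matrices=False)[0]
        factors.append(U[:, :r])      # keep the first r columns
    core = tensor
    for mode, U in enumerate(factors):
        core = mode_product(core, U.T, mode)
    return core, factors

def reconstruct(core, factors):
    """Multiply the core tensor by each factor matrix along its mode."""
    out = core
    for mode, U in enumerate(factors):
        out = mode_product(out, U, mode)
    return out
```

With full ranks the reconstruction is exact up to rounding; with truncated ranks it gives the low rank approximation.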

According to the above discussion, we summarize the detailed process of the tensor based ELM as follows: let be the fused training palmprint image, be the target of sample . Denote the training sample set as . Then the HOSVD algorithm utilized to decompose can be formulated as:

where and represent the truncation matrix with and columns, respectively. Then TELM can be defined as:

where and denote the hidden layer node numbers along the tensor directions. In consequence, there are in total hidden layer nodes. and denote the input weight vectors of the hidden layer along the tensor directions, respectively. denotes the weight value between the output nodes and the node in the hidden layer. Similar to ELM algorithm, denotes the activation function. Finally, the output weight can be obtained from Equation (27) by utilizing the least squares method.
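A tensor based ELM of this kind can be sketched as below. This is a hedged illustration and not the paper's Equation (27): each hidden node is assumed to compute g(u_i^T X v_j) for an image X, the input weight vectors u_i and v_j are assumed to be the leading left singular vectors of the row-mode and column-mode unfoldings of the stacked training images, no bias is used, and g is a sigmoid. The names `mode_bases`, `hidden_output`, `train_telm` and `predict_telm` are hypothetical.

```python
import numpy as np

def sigmoid(z):
    # Numerically stable logistic function
    return 0.5 * (1.0 + np.tanh(0.5 * z))

def mode_bases(images, r1, r2):
    """images: (N, I1, I2). Leading singular vectors of the two image modes."""
    n, i1, i2 = images.shape
    rows = images.transpose(1, 0, 2).reshape(i1, -1)   # row-mode unfolding
    cols = images.transpose(2, 0, 1).reshape(i2, -1)   # column-mode unfolding
    U = np.linalg.svd(rows, full_matrices=False)[0][:, :r1]
    V = np.linalg.svd(cols, full_matrices=False)[0][:, :r2]
    return U, V

def hidden_output(images, U, V):
    """h[k, i, j] = g(u_i^T X_k v_j), flattened to r1 * r2 hidden nodes per image."""
    proj = np.einsum('ai,kab,bj->kij', U, images, V)
    return sigmoid(proj).reshape(len(images), -1)

def train_telm(images, T, r1, r2):
    """T: (N, m) targets. Output weights by least squares, as in ELM."""
    U, V = mode_bases(images, r1, r2)
    beta = np.linalg.pinv(hidden_output(images, U, V)) @ T
    return U, V, beta

def predict_telm(images, U, V, beta):
    return hidden_output(images, U, V) @ beta
```

The images are never vectorized before the hidden layer, so the row and column structure enters the model through the two sets of input weight vectors.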

4. Experiments

In this section, we evaluate the presented multispectral palmprint recognition algorithm on the publicly available benchmark database offered by PolyU. Extensive experiments are implemented to demonstrate the effectiveness of the presented RL2SR method, adaptive fusion strategy and TELM. In all experiments, the fused palmprint image is used as the input of the TELM classifier. The experiments are run on a PC with Windows 7, an Intel Core i5-2320 CPU (3.0 GHz) and 6 GB RAM, and the algorithm is programmed in MATLAB 2017a.

4.1. The PolyU Multispectral Palmprint Database

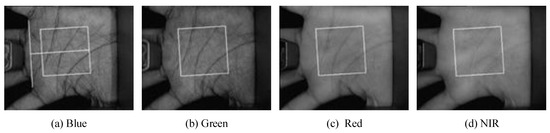

The PolyU multispectral palmprint database was collected from 250 volunteers, of whom 195 are male and 55 are female. The age of the volunteers was mainly between 20 and 60 years. To capture the variability of the acquired palmprints, the images were acquired in two separate phases. The time interval between the two phases was 5–15 days and each phase lasted about 9 days. In each phase, both hands of each volunteer were captured six times under each of four different spectra: Blue (470 nm), Green (525 nm), Red (660 nm) and NIR (880 nm). For each spectrum, 500 different palms were acquired from the 250 volunteers, each with 12 images over the two phases. Therefore, the database contains 500 × 12 = 6000 palmprint images under each spectrum. That is, the multispectral palmprint database contains 6000 × 4 = 24,000 images in total. Reference [46] provided the ROI extraction process for the acquired multispectral palmprint images and established the PolyU multispectral palmprint database (see Figure 4).

Figure 4. ROI extraction.

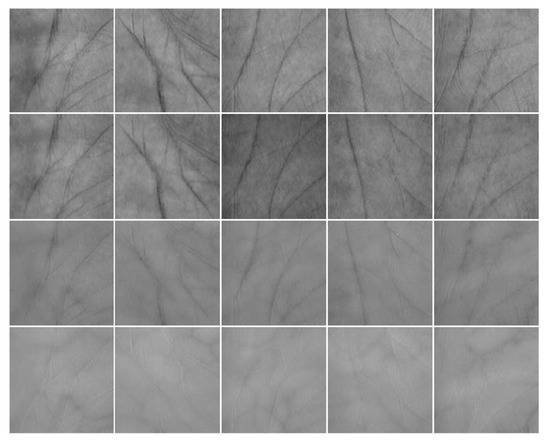

Figure 5 illustrates some images in the multispectral palmprint database. The images in rows 1–4 are acquired under the Blue, Green, Red and NIR spectra, respectively. Every column is from the same class. In practice, the acquisition process is easily contaminated by various noises. To simulate this, white Gaussian noise and salt & pepper noise are added to the images and the recognition experiments are implemented for each case.

Figure 5. Some multispectral palmprint images of the PolyU database.

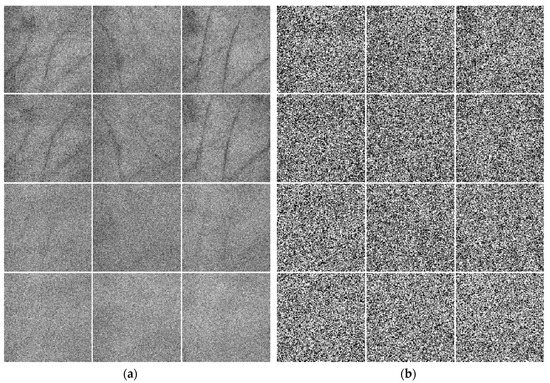

Figure 6 displays some multispectral palmprint images contaminated by different noises. Figure 6a shows the images contaminated by white Gaussian noise. Here, the mean is 0 and the standard deviation is 25. Meanwhile, Figure 6b shows the images contaminated by 50% salt & pepper noise. Rows 1–4 of Figure 6 exhibit the noisy palmprint images under the Blue, Green, Red and NIR spectra, respectively.

Figure 6. Some multispectral palmprint images of the PolyU database contaminated by different noises. (a) Palmprint images contaminated by white Gaussian noise. (b) Palmprint images contaminated by salt & pepper noise.
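The two kinds of contamination can be simulated as in the following sketch. It assumes 8-bit grayscale images with intensities in [0, 255], so that the standard deviation of 25 is expressed on that scale, and it assumes that "50% salt & pepper noise" means that half of the pixels are replaced, with an equal chance of black or white. The function names are hypothetical.

```python
import numpy as np

def add_gaussian_noise(img, std=25.0, rng=None):
    """Add zero-mean white Gaussian noise to a uint8 image and clip to [0, 255]."""
    rng = np.random.default_rng() if rng is None else rng
    noisy = img.astype(np.float64) + rng.normal(0.0, std, size=img.shape)
    return np.clip(np.rint(noisy), 0, 255).astype(np.uint8)

def add_salt_pepper_noise(img, density=0.5, rng=None):
    """Replace a fraction `density` of the pixels by 0 (pepper) or 255 (salt)."""
    rng = np.random.default_rng() if rng is None else rng
    noisy = img.copy()
    u = rng.random(img.shape)
    noisy[u < density / 2] = 0                          # pepper
    noisy[(u >= density / 2) & (u < density)] = 255     # salt
    return noisy
```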

4.2. Parameter Selection

4.2.1. Selection of and for Residual Function

Now, let us discuss the selection of parameters and for the residual function in Equation (7). It can be seen from Equation (7) that when . Similarly, when , . In order to make belong to (0, 1), set the product to be large enough, then . For simplicity, we denote . Since , in order to meet when , set . From Equation (7), , when , so the parameter determines the boundary point position of the residual function value. That is to say, is determined when the weight will pass through 0.5. For the sake of enhancing the robustness of the model for the outlier or noise contamination efficiently, a novel method of selecting the parameter is presented as follows. Firstly, vectorize the square of the error and denote it as , then arrange this vector’s elements in descending order and denote the new vector by . By denoting its maximum element as and the minimum element as , set , where is a constant and . Since the dimension of is , suppose that is the nearest integer to and the biggest element of is selected as . Finally, let . Once is selected, parameter can be calculated by . In our experiments, the constant is set to T = 8.

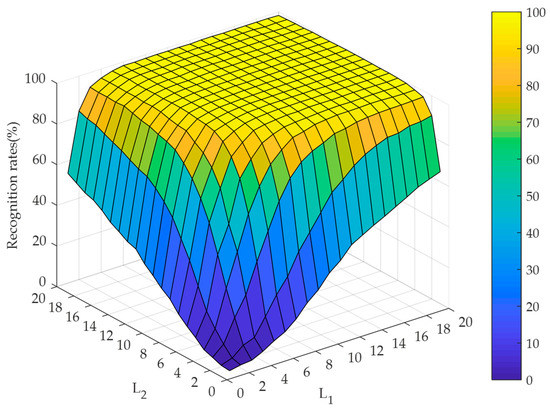

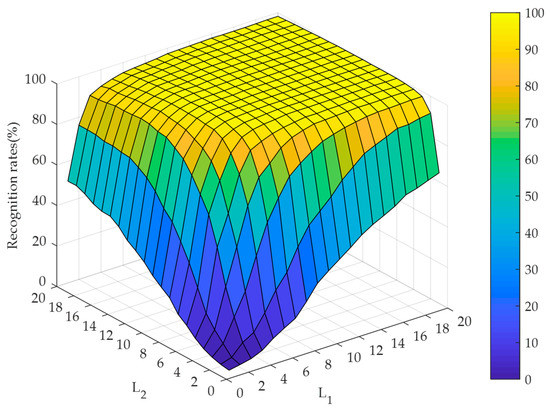

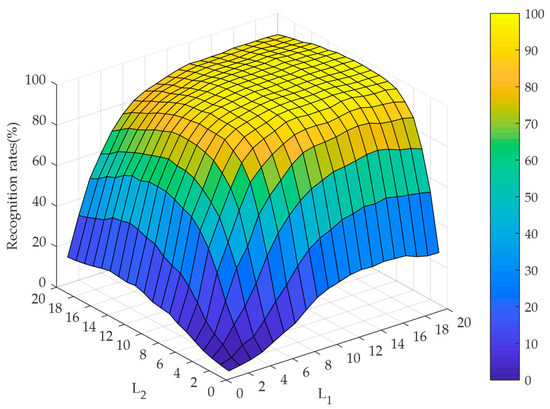

4.2.2. Selection of the Hidden Node Numbers Along the Directions of TELM

To evaluate the effect of the hidden node numbers along the directions of TELM, experiments are implemented with the hidden node numbers varying from 1 to 20 in the noise-free case and under different noise contaminations. The recognition performance is illustrated in Figure 7, Figure 8 and Figure 9. Figure 7 and Figure 8 illustrate that our algorithm converges rapidly as the hidden node numbers increase. When the hidden node numbers are both greater than 7, our algorithm achieves perfect performance. From Figure 9, although the convergence performance is inferior to the noise-free case, our algorithm still converges quickly. As a consequence, the appropriate hidden node numbers can be selected according to the above analysis. For simplicity, the hidden node numbers in our experiments are set as .

Figure 7.

Recognition rates for RL2SR-TELM algorithm when the hidden node numbers of TELM vary from 1 to 20 in the case of noise-free.

Figure 8.

Recognition rates for RL2SR-TELM algorithm when the hidden node numbers of TELM vary from 1 to 20 in the case of white Gaussian noise contamination.

Figure 9.

Recognition rates for RL2SR-TELM algorithm when the hidden node numbers of TELM vary from 1 to 20 in the case of 50% salt & pepper noise contamination.

4.3. Experiment Results and Analysis

In this subsection, experiments are carried out to validate the efficiency of our presented algorithm from four aspects: sparse representation, fusion strategy, classification approach and the overall algorithm. To demonstrate the robustness of the presented RL2SR model, we compare it with several other representation models, namely SRC, CRC and DSRM. The recognition rates are shown in Table 2.

Table 2.

Recognition rates for different representation methods.

Table 2 shows that each algorithm achieves its highest recognition rate in the noise-free case and its lowest under salt & pepper noise contamination. Since our proposed adaptive weighted fusion process approximates a spatial smoothing filter, the decrease of the recognition rate under white Gaussian noise contamination is small. Furthermore, by using our RL2SR coefficients for fusion, the recognition rates reach 99.68%, 99.20% and 97.24% in the noise-free, white Gaussian noise and salt & pepper noise cases, respectively. These rates are 1.72% and 2.52% higher than those of DSRM in the noise-free and white Gaussian noise cases, and 2.96% higher than that of SRC in the salt & pepper noise case. This indicates that our RL2SR is robust to different noises, which improves the discriminative ability and increases the recognition rate of the fused image.

To evaluate the efficiency of the presented adaptive fusion strategy, some comparison fusion experiments (i.e., the sum and min-max fusion strategy) are simulated and the recognition performance is listed in Table 3. In this experiment, the training sample number of each class varies from 2 to 4.

Table 3.

Recognition rates for different fusion methods when the training sample number per class varies from 2 to 4 under the cases of noise-free and different noise contaminations.

Table 3 illustrates that the recognition accuracies under different fusion strategies increase with the training sample number. In particular, our presented fusion strategy achieves the highest recognition accuracies of 100%, 99.95% and 99.05% when the number of training samples is set to 4. Even when the training sample number declines to 2, our approach achieves an accuracy of 92.27% in the case of salt & pepper noise contamination, which is 19.74% higher than that of the min-max fusion strategy (72.53%). This implies that our fusion strategy is more robust than the sum and min-max fusion methods.
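As a rough illustration of image-level fusion, the following sketch forms a pixel-wise weighted sum of the spectral images. It is a minimal sketch, not the authors' implementation: the function name `fuse_images` is hypothetical, and the example weights are arbitrary placeholders rather than WSCCI values. Passing no weights gives equal weighting, which corresponds to plain sum (average) fusion.

```python
import numpy as np

def fuse_images(images, weights=None):
    """Pixel-wise weighted sum of same-sized spectral images.

    images  : sequence of 2-D arrays (e.g., Blue, Green, Red and NIR images)
    weights : one non-negative weight per image; None means equal weights
    """
    stack = np.stack([np.asarray(im, dtype=float) for im in images])  # (S, H, W)
    if weights is None:
        weights = np.ones(stack.shape[0])
    w = np.asarray(weights, dtype=float)
    if w.shape != (stack.shape[0],) or np.any(w < 0) or w.sum() <= 0:
        raise ValueError("need one non-negative weight per image, not all zero")
    w = w / w.sum()                          # normalise so the weights sum to 1
    return np.tensordot(w, stack, axes=1)    # contract the spectrum axis -> (H, W)

# Two toy "spectral" images with constant intensity.
blue = np.full((2, 2), 10.0)
nir = np.full((2, 2), 20.0)
equal_fused = fuse_images([blue, nir])               # every pixel is 15.0
weighted_fused = fuse_images([blue, nir], [3, 1])    # every pixel is 12.5
```

Normalising the weights keeps the fused image in the same intensity range as the inputs, so the same classifier settings can be used for any spectral combination.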

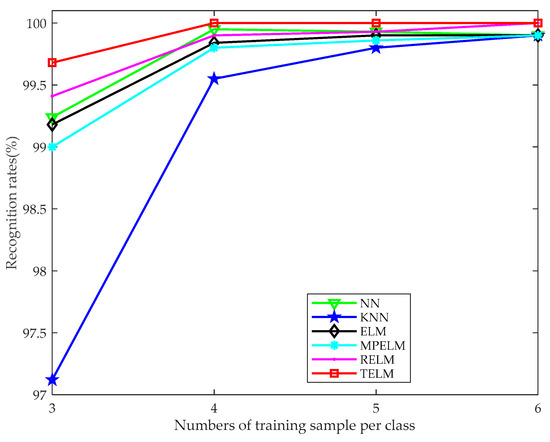

To demonstrate the classification efficiency of the presented TELM, we compare it with several other classifiers, namely NN, KNN, ELM, MPELM and RELM. For these comparison classifiers, we vectorize the fused image and take this vector as the input. For each classifier, 3–6 training samples per class are selected for the recognition experiments and the classification accuracy curves are plotted in Figure 10.
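For reference, the vectorized-input baseline can be sketched with a basic extreme learning machine in the sense of [45]: random hidden-layer weights, a sigmoid activation, and output weights obtained by a Moore-Penrose pseudoinverse. This is a minimal sketch and not the TELM of this paper; the function names, the random seed and the toy data are assumptions made for illustration.

```python
import numpy as np

def elm_train(X, y, n_hidden, n_classes, seed=0):
    """Train a basic ELM on row-vectorized images X (n_samples, n_features)."""
    rng = np.random.default_rng(seed)
    W = rng.standard_normal((X.shape[1], n_hidden))   # random input weights (never trained)
    b = rng.standard_normal(n_hidden)                 # random hidden biases
    H = 1.0 / (1.0 + np.exp(-(X @ W + b)))            # hidden-layer output matrix
    T = np.eye(n_classes)[y]                          # one-hot targets
    beta = np.linalg.pinv(H) @ T                      # least-squares output weights
    return W, b, beta

def elm_predict(X, model):
    """Return the predicted class index for each row of X."""
    W, b, beta = model
    H = 1.0 / (1.0 + np.exp(-(X @ W + b)))
    return np.argmax(H @ beta, axis=1)

# Toy fused images (4 x 4) for two classes, vectorized before classification.
rng = np.random.default_rng(1)
images = np.concatenate([0.4 * rng.random((3, 4, 4)),          # class 0: dark
                         0.6 + 0.4 * rng.random((3, 4, 4))])   # class 1: bright
labels = np.array([0, 0, 0, 1, 1, 1])
X = images.reshape(len(images), -1)        # each image becomes one row vector
model = elm_train(X, labels, n_hidden=20, n_classes=2)
train_pred = elm_predict(X, model)
```

Vectorization discards the two-dimensional layout of the image, which is the limitation that a tensor-based classifier operating on the image matrix directly is meant to avoid.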

Figure 10.

Recognition rate curves for different classifiers when the training sample number varies from 3 to 6 in the case of noise-free.

The curves in Figure 10 indicate that when the training sample number is greater than or equal to 4, all the algorithms achieve excellent recognition rates. The experimental results also show that, in the noise-free case, the recognition rate of our proposed TELM algorithm gradually increases with the number of training samples. Moreover, our TELM achieves higher recognition rates than the other algorithms, although the improvement is small because the recognition rates are already close to, or even reach, 100%. From the above analysis, the presented TELM algorithm achieves efficient recognition performance and is stable compared with the other classifiers. Furthermore, additional experiments are carried out on the multispectral palmprint database when it is contaminated by the aforementioned noises. The recognition performance is listed in Table 4.

Table 4.

Recognition rates for different classifiers under the cases of noise-free and noise contaminations.

Table 4 shows that, under white Gaussian noise contamination, TELM achieves a higher recognition rate than the other classifiers. Under salt & pepper noise contamination, the recognition accuracy of the presented TELM is remarkably higher than that of the other methods. Because salt & pepper noise is impulsive, it strongly distorts the distance measurement between samples. When the testing samples are contaminated by salt & pepper noise, the recognition accuracy of the KNN method is only 38.92%, which is significantly lower than that of our TELM algorithm (97.24%). Since the proposed TELM discards the eigenvectors corresponding to the smaller eigenvalues, which are more strongly correlated with the noise contamination, and retains the principal components corresponding to the major eigenvalues, it reduces noise and improves discrimination. The experimental results in Table 4 thus validate that our algorithm achieves a higher recognition rate and stronger robustness to noise contamination than the other classifiers.
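The noise-reduction argument, keeping the leading eigenvectors and discarding the rest, can be illustrated on a toy matrix. This is a minimal sketch of the general principle and not the TELM procedure itself: the function name `truncate_eigen`, the rank-2 test image and the use of additive Gaussian noise are assumptions chosen to keep the example small.

```python
import numpy as np

def truncate_eigen(X, k):
    """Project X onto the k eigenvectors of X X^T with the largest eigenvalues."""
    evals, evecs = np.linalg.eigh(X @ X.T)   # eigh returns eigenvalues in ascending order
    U = evecs[:, -k:]                        # keep the k leading eigenvectors
    return U @ (U.T @ X)

rng = np.random.default_rng(0)
# Rank-2 "clean" image: its energy is concentrated in two eigen-directions.
clean = (np.outer(rng.standard_normal(64), rng.standard_normal(64))
         + np.outer(rng.standard_normal(64), rng.standard_normal(64)))
noisy = clean + 0.5 * rng.standard_normal(clean.shape)

denoised = truncate_eigen(noisy, k=2)
err_before = np.linalg.norm(noisy - clean)      # Frobenius error of the noisy image
err_after = np.linalg.norm(denoised - clean)    # error after discarding small eigen-directions
```

The noise spreads its energy over all eigen-directions, while the signal occupies only the leading ones, so discarding the trailing directions removes most of the noise and little of the signal.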

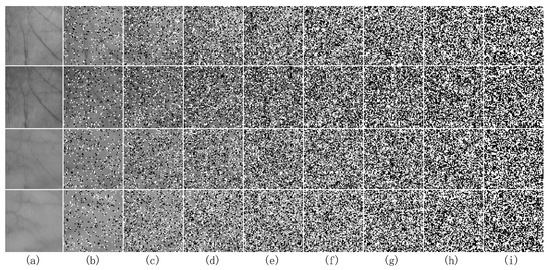

To further validate the robustness of the proposed TELM algorithm, we add different degrees of salt & pepper noise to the testing samples and repeat the recognition experiment. Figure 11 shows some multispectral palmprint images contaminated by salt & pepper noise at percentages from 10% to 80%. Figure 11a shows the original images under different spectra. Figure 11b–i show the contaminated images under different spectra when the degree of salt & pepper noise varies from 10% to 80%.

Figure 11.

Some multispectral palmprint images contaminated by different percentages of salt & pepper noise. (a) shows the original images under the Blue, Green, Red and NIR spectra. (b–i) show the images contaminated by 10–80% salt & pepper noise under the different spectra.
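Contamination of this kind can be simulated with a short routine. The sketch below assumes 8-bit grayscale images and an equal split between salt (255) and pepper (0) pixels; the function name `add_salt_pepper` and the constant test image are hypothetical. It also measures how the Euclidean distance to the clean image grows with the noise density, which is the quantity that distance-based classifiers such as NN and KNN rely on.

```python
import numpy as np

def add_salt_pepper(image, density, rng):
    """Set a fraction `density` of pixels to 0 (pepper) or 255 (salt) at random."""
    noisy = image.copy()
    corrupt = rng.random(image.shape) < density   # which pixels are hit
    salt = rng.random(image.shape) < 0.5          # half of the hits become salt
    noisy[corrupt & salt] = 255
    noisy[corrupt & ~salt] = 0
    return noisy

rng = np.random.default_rng(0)
clean = np.full((100, 100), 128, dtype=np.uint8)   # toy mid-gray image

distances = {}
for density in (0.1, 0.5, 0.8):
    noisy = add_salt_pepper(clean, density, rng)
    # Convert to float before subtracting to avoid uint8 wrap-around.
    distances[density] = np.linalg.norm(noisy.astype(float) - clean.astype(float))
```

Each corrupted pixel moves by roughly half of the intensity range, so even a moderate density can dominate the distance between two images of the same palm.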

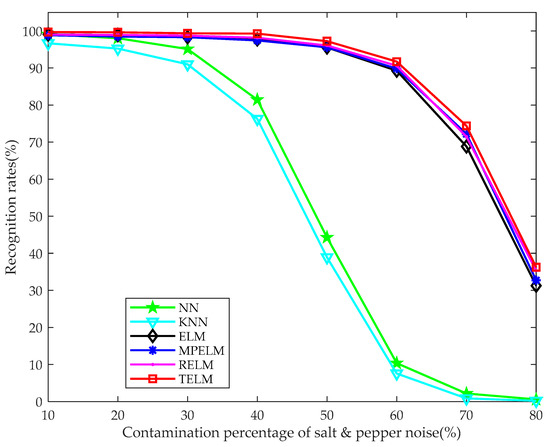

Figure 12 illustrates the recognition rate curves of our TELM algorithm and some of the aforementioned comparison classifiers. The recognition rate curves of ELM, MPELM, RELM and our algorithm drop significantly when the percentage of noise contamination is greater than 60%. The recognition rate curves of the NN and KNN methods are clearly lower than those of the other algorithms once the palmprint image is contaminated by more than 20% salt & pepper noise; that is, the accuracy of NN and KNN declines quickly. These curves indicate that our proposed TELM algorithm outperforms the comparison classifiers at different percentages of noise contamination and possesses stronger robustness.

Figure 12.

Recognition rates for different algorithms when the training sample number is 3 and the percentage of the salt & pepper noise contamination varies from 10% to 80%.

Table 5 lists the average classification times of the aforementioned classifiers on the whole database. Although our TELM classifier is slower than the ELM method, the difference (0.08 s) is very small. Moreover, it is distinctly faster than the NN, KNN, MPELM and RELM classifiers. In particular, the classification time of NN is about five times that of our TELM. In addition, the above experimental results demonstrate that the recognition performance of our classifier significantly exceeds that of the NN, KNN, ELM, MPELM and RELM classifiers. This validates the recognition ability and efficiency of our algorithm.

Table 5.

Classification time for different classifiers.

Table 6 lists the recognition rates of our RL2SR-TELM algorithm with different spectral combinations. This experiment is implemented under the cases of noise-free, white Gaussian noise and 50% salt & pepper noise contamination and the training sample number per class is 4.

Table 6.

Recognition rates for our RL2SR-TELM with different spectral combinations under the cases of noise-free and different noise contaminations.

Table 6 summarizes the excellent performance of our presented algorithm in the cases of noise-free and white Gaussian noise contamination. In the noise-free case, the recognition accuracy reaches 100% for most of the spectral combinations. Even when the sample is contaminated by white Gaussian noise, our algorithm achieves an accuracy of more than 99.50% for all of the spectral combinations and 99.95% under the combination of the Blue, Green, Red and NIR spectra. When the testing sample is contaminated by salt & pepper noise, the recognition rate declines significantly and reaches its lowest value, 76.75%, under the NIR spectrum. Meanwhile, under the combination of the Blue, Green, Red and NIR spectra, our RL2SR-TELM algorithm achieves recognition rates of 100%, 99.95% and 99.05% in the noise-free, white Gaussian noise and salt & pepper noise cases, respectively. This indicates that our proposed RL2SR-TELM algorithm has excellent robustness to noise contamination.

Table 7 lists the recognition rates of our RL2SR-TELM algorithm compared with some state-of-the-art palmprint recognition methods, such as the deep scattering network method [18], the texture feature-based method [42] and the DCT-based features method [43]. Our algorithm achieves an excellent recognition performance for the different training sample numbers. Although the recognition accuracy of our algorithm is 0.32% lower than that of the deep scattering network method when the training sample number is three, it is higher than that of the texture feature-based method and the DCT-based features method when the training sample number is four. In particular, the recognition rate of our proposed algorithm reaches 100% when the number of training samples is greater than four.

Table 7.

Recognition rates for different multispectral palmprint recognition algorithms in the case of noise-free.

Table 8 lists the recognition rates of our RL2SR-TELM algorithm compared with some state-of-the-art multispectral palmprint recognition algorithms, namely the matching score-level fusion by LOC method, the DST-MPELM method, the AE-RELM method, the quaternion PCA using quaternion DWT method and the image-level fusion by DWT method. In this experiment, we choose three samples per class to constitute the training set. The experimental results in Table 8 illustrate that our proposed algorithm achieves an excellent recognition accuracy both in the noise-free case (99.68%) and under white Gaussian noise and salt & pepper noise contamination (99.20% and 97.24%, respectively).

Table 8.

Recognition rates for our RL2SR-TELM and some other multispectral palmprint recognition algorithms.

When the sample is contaminated by salt & pepper noise, the presented algorithm has a more obvious advantage, with an accuracy that is respectively 0.76%, 7.26%, 1.48%, 7.08% and 14.49% higher than those of the other comparison algorithms. Table 9 lists the time cost of our proposed RL2SR-TELM multispectral recognition algorithm for each test sample. Our RL2SR-TELM algorithm takes about 0.10945 s to recognize one test sample.

Table 9.

Time cost of our RL2SR-TELM algorithm.

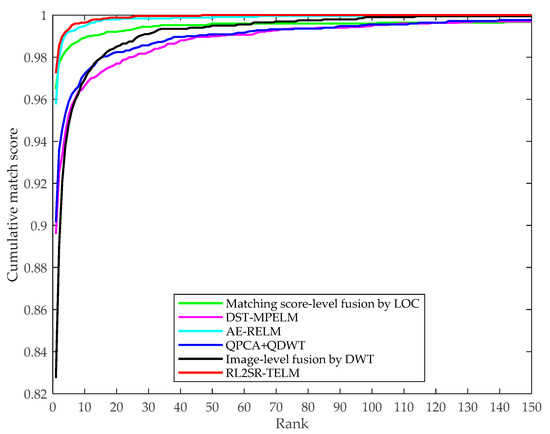

To further demonstrate the performance of our presented RL2SR-TELM method, in the case of salt & pepper noise contamination, we plot the cumulative match characteristic (CMC) curves generated by our RL2SR-TELM method and the aforementioned comparison methods. Figure 13 shows the CMC curves.

Figure 13.

Performance for different multispectral palmprint recognition algorithms in terms of cumulative match characteristic curves.

Figure 13 shows that our presented RL2SR-TELM method has the highest rank-1 recognition accuracy. Meanwhile, the cumulative match characteristic curve of our algorithm is the closest to the upper left corner of the coordinate system among the compared multispectral palmprint recognition approaches, which means that it reaches a high cumulative recognition rate at the lowest rank. This implies that our algorithm outperforms the others in recognition accuracy and noise robustness, which is consistent with the aforementioned experimental results and analysis.
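A cumulative match characteristic curve can be computed from a matrix of matching scores as in the following sketch. It assumes one score per probe and class, with higher scores meaning better matches; the function name `cmc_curve` and the toy scores are hypothetical and are not taken from the experiments.

```python
import numpy as np

def cmc_curve(scores, true_labels):
    """Return the CMC curve: entry r-1 is the fraction of probes whose
    true class is ranked within the top r candidates.

    scores      : (n_probes, n_classes) similarity scores, higher is better
    true_labels : (n_probes,) index of the correct class for each probe
    """
    scores = np.asarray(scores, dtype=float)
    true_labels = np.asarray(true_labels)
    n_probes, n_classes = scores.shape
    true_scores = scores[np.arange(n_probes), true_labels]
    # Rank of the true class = 1 + number of classes with a strictly higher score.
    ranks = 1 + np.sum(scores > true_scores[:, None], axis=1)
    hits = np.bincount(ranks, minlength=n_classes + 1)[1:]   # probes per rank 1..n_classes
    return np.cumsum(hits) / n_probes

# Three probes, three classes: one probe is correct at rank 1, two at rank 2.
toy_scores = [[0.9, 0.1, 0.0],
              [0.2, 0.5, 0.3],
              [0.6, 0.3, 0.1]]
toy_labels = [0, 2, 1]
cmc = cmc_curve(toy_scores, toy_labels)   # approximately [0.333, 1.0, 1.0]
```

The first entry of the curve is the rank-1 recognition rate, and a curve that rises to 1 at a low rank lies close to the upper left corner of the plot.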

5. Conclusions

In this paper, a novel RL2SR-TELM algorithm is presented to implement multispectral palmprint recognition. Since the L2 regularization term is employed, the regularized objective function is convex and a closed-form solution can be obtained efficiently. In addition, a new measurement, namely WSCCI, and an adaptive fusion framework are proposed to construct the fused multispectral palmprint images. For the classification task, we extend the conventional extreme learning machine to the tensor domain and present a TELM algorithm. It deals with the palmprint image in two-dimensional space directly and makes the best use of its spatial structure to enhance the classification ability. Extensive experiments on the PolyU multispectral palmprint database confirm the strong robustness, excellent recognition accuracy and high efficiency of our proposed algorithm.

Author Contributions

Conceptualization, D.C. and X.Z.; Methodology, D.C.; Software, D.C.; Validation, D.C., X.Z. and X.X.; Formal Analysis, D.C.; Writing-Original Draft Preparation, D.C.; Writing-Review & Editing, D.C.; Supervision, X.Z.; Project Administration, X.Z.; Funding Acquisition, X.Z. and X.X.

Funding

This research was funded by National Natural Science Foundation (No. 61673316), Major Science and Technology Project of Guangdong Province (No. 2015B010104002).

Acknowledgements

The authors would like to thank the anonymous reviewers and academic editor for all the suggestions and comments and MDPI Branch Office in China for improving this manuscript.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Cui, J.R. 2D and 3D Palmprint fusion and recognition using PCA plus TPTSR method. Neural Comput. Appl. 2014, 24, 497–502.
2. Lu, G.M.; Zhang, D.; Wang, K.Q. Palmprint recognition using eigenpalms features. Pattern Recogn. Lett. 2003, 24, 1463–1467.
3. Bai, X.F.; Gao, N.; Zhang, Z.H.; Zhang, D. 3D palmprint identification combining blocked ST and PCA. Pattern Recogn. Lett. 2017, 100, 89–95.
4. Zuo, W.M.; Zhang, H.Z.; Zhang, D.; Wang, K.Q. Post-processed LDA for face and palmprint recognition: What is the rationale. Signal Process. 2010, 90, 2344–2352.
5. Rida, I.; Herault, R.; Marcialis, G.L.; Gasso, G. Palmprint recognition with an efficient data driven ensemble classifier. Pattern Recogn. Lett. In press.
6. Rida, I.; Al Maadeed, S.; Jiang, X.; Lunke, F.; Bensrhair, A. An ensemble learning method based on random subspace sampling for palmprint identification. In Proceedings of the 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Calgary, AB, Canada, 15–20 April 2018; pp. 2047–2051.
7. Shang, L.; Huang, D.S.; Du, J.X.; Zheng, C.H. Palmprint recognition using FastICA algorithm and radial basis probabilistic neural network. Neurocomputing 2006, 69, 1782–1786.
8. Pan, X.; Ruan, Q.Q. Palmprint recognition using Gabor feature-based (2D)2PCA. Neurocomputing 2008, 71, 3032–3036.
9. Ekinci, M.; Aykut, M. Gabor-based kernel PCA for palmprint recognition. Electron. Lett. 2007, 43, 1077–1079.
10. Ekinci, M.; Aykut, M. Palmprint recognition by applying wavelet-based kernel PCA. J. Comput. Sci. Technol. 2008, 23, 851–861.
11. Fei, L.; Zhang, B.; Xu, Y.; Yan, L.P. Palmprint recognition using neighboring direction indicator. IEEE Trans. Hum. Mach. Syst. 2016, 46, 787–798.
12. Zheng, Q.; Kumar, A.; Pan, G. A 3D feature descriptor recovered from a single 2D palmprint image. IEEE Trans. Pattern Anal. 2016, 38, 1272–1279.
13. Younesi, A.; Amirani, M.C. Gabor filter and texture based features for palmprint recognition. Procedia Comput. Sci. 2017, 108, 2488–2495.
14. Fei, L.K.; Xu, Y.; Tang, W.L.; Zhang, D. Double-orientation code and nonlinear matching scheme for palmprint recognition. Pattern Recogn. 2016, 49, 89–101.
15. Gumaei, A.; Sammouda, R.; Al-Salman, A.M.; Alsanad, A. An effective palmprint recognition approach for visible and multispectral sensor images. Sensors 2018, 18, 1575.
16. Tabejamaat, M.; Mousavi, A. Concavity-orientation coding for palmprint recognition. Multimed. Tools Appl. 2017, 76, 9387–9403.
17. Chen, H.P. An efficient palmprint recognition method based on block dominant orientation code. Optik 2015, 126, 2869–2875.
18. Minaee, S.; Wang, Y. Palmprint recognition using deep scattering convolutional network. In Proceedings of the 2017 IEEE International Symposium on Circuits and Systems (ISCAS), Baltimore, MD, USA, 28–31 May 2017; pp. 1–4.
19. Tamrakar, D.; Khanna, P. Kernel discriminant analysis of block-wise Gaussian derivative phase pattern histogram for palmprint recognition. J. Vis. Commun. Image Represent. 2016, 40, 432–448.
20. Li, G.; Kim, J. Palmprint recognition with local micro-structure tetra pattern. Pattern Recogn. 2017, 61, 29–46.
21. Luo, Y.T.; Zhao, L.Y.; Zhang, B.; Jia, W.; Xue, F.; Lu, J.T.; Zhu, Y.H.; Xu, B.Q. Local line directional pattern for palmprint recognition. Pattern Recogn. 2016, 50, 26–44.
22. Jia, W.; Hu, R.X.; Lei, Y.K.; Zhao, Y.; Gui, J. Histogram of oriented lines for palmprint recognition. IEEE Trans. Syst. Man Cybern. Syst. 2014, 44, 385–395.
23. Zhang, S.W.; Wang, H.X.; Huang, W.Z.; Zhang, C.L. Combining modified LBP and weighted SRC for palmprint recognition. Signal Image Video Process. 2018, 12, 1035–1042.
24. Guo, X.M.; Zhou, W.D.; Zhang, Y.L. Collaborative representation with HM-LBP features for palmprint recognition. Mach. Vis. Appl. 2017, 28, 283–291.
25. Wright, J.; Yang, A.Y.; Ganesh, A.; Sastry, S.S.; Ma, Y. Robust face recognition via sparse representation. IEEE Trans. Pattern Anal. 2009, 31, 210–227.
26. Maadeed, S.A.; Jiang, X.D.; Rida, I.; Bouridane, A. Palmprint identification using sparse and dense hybrid representation. Multimed. Tools Appl. 2018, 1–15.
27. Tabejamaat, M.; Mousavi, A. Manifold sparsity preserving projection for face and palmprint recognition. Multimed. Tools Appl. 2017, 77, 12233–12258.
28. Zuo, W.M.; Lin, Z.C.; Guo, Z.H.; Zhang, D. The multiscale competitive code via sparse representation for palmprint verification. In Proceedings of the 2010 International IEEE Conference on Computer Vision and Pattern Recognition (CVPR), San Francisco, CA, USA, 13–18 June 2010; pp. 2265–2272.
29. Xu, Y.; Fan, Z.Z.; Qiu, M.N.; Zhang, D.; Yang, J.Y. A sparse representation method of bimodal biometrics and palmprint recognition experiments. Neurocomputing 2013, 103, 164–171.
30. Rida, I.; Al Maadeed, N.; Al Maadeed, S. A novel efficient classwise sparse and collaborative representation for holistic palmprint recognition. In Proceedings of the 2018 IEEE NASA/ESA Conference on Adaptive Hardware and Systems (AHS), Edinburgh, UK, 6–9 August 2018; pp. 156–161.
31. Rida, I.; Maadeed, S.A.; Mahmood, A.; Bouridane, A.; Bakshi, S. Palmprint identification using an ensemble of sparse representations. IEEE Access 2018, 6, 3241–3248.
32. Han, D.; Guo, Z.H.; Zhang, D. Multispectral palmprint recognition using wavelet-based image fusion. In Proceedings of the IEEE International Conference on Signal Processing (ICSP), Beijing, China, 26–29 October 2008; pp. 2074–2077.
33. Aberni, Y.; Boubchir, L.; Daachi, B. Multispectral palmprint recognition: A state-of-the-art review. In Proceedings of the IEEE International Conference on Telecommunications and Signal Processing, Barcelona, Spain, 5–7 July 2017; pp. 793–797.
34. Bounneche, M.D.; Boubchir, L.; Bouridane, A.; Nekhoul, B.; Cherif, A.A. Multi-spectral palmprint recognition based on oriented multiscale log-Gabor filters. Neurocomputing 2016, 205, 274–286.
35. Hong, D.F.; Liu, W.Q.; Su, J.; Pan, Z.K.; Wang, G.D. A novel hierarchical approach for multispectral palmprint recognition. Neurocomputing 2015, 151, 511–521.
36. Raghavendra, R.; Busch, C. Novel image fusion scheme based on dependency measure for robust multispectral palmprint recognition. Pattern Recogn. 2014, 47, 2205–2221.
37. Xu, X.P.; Guo, Z.H.; Song, C.J.; Li, Y.F. Multispectral palmprint recognition using a quaternion matrix. Sensors 2012, 12, 4633–4647.
38. Gumaei, A.; Sammouda, R.; Al-Salman, A.M.; Alsanad, A. An improved multispectral palmprint recognition system using autoencoder with regularized extreme learning machine. Comput. Intell. Neurosci. 2018, 2018, 8041069.
39. Xu, X.B.; Lu, L.B.; Zhang, X.M.; Lu, H.M.; Deng, W.Y. Multispectral palmprint recognition using multiclass projection extreme learning machine and digital shearlet transform. Neural Comput. Appl. 2016, 27, 143–153.
40. El-Tarhouni, W.; Boubchir, L.; Elbendak, M.; Bouridane, A. Multispectral palmprint recognition using Pascal coefficients-based LBP and PHOG descriptors with random sampling. Neural Comput. Appl. 2017, 1–11.
41. Zhang, D.; Guo, Z.H.; Lu, G.M.; Zhang, L.; Zuo, W.M. An online system of multispectral palmprint verification. IEEE Trans. Instrum. Meas. 2010, 59, 480–490.
42. Minaee, S.; Abdolrashidi, A.A. Multispectral palmprint recognition using textural features. In Proceedings of the 2014 IEEE Signal Processing in Medicine and Biology Symposium (SPMB), Philadelphia, PA, USA, 13 December 2014; pp. 1–5.
43. Minaee, S.; Abdolrashidi, A.A. On the power of joint wavelet-DCT features for multispectral palmprint recognition. In Proceedings of the 2015 49th Asilomar Conference on Signals, Systems and Computers, Pacific Grove, CA, USA, 8–11 November 2015; pp. 1593–1597.
44. Li, C.; Benezeth, Y.; Nakamura, K.; Gomez, R.; Yang, F. A robust multispectral palmprint matching algorithm and its evaluation for FPGA applications. J. Syst. Archit. 2018, 88, 43–53.
45. Huang, G.B.; Zhu, Q.Y.; Siew, C.K. Extreme learning machine: Theory and applications. Neurocomputing 2006, 70, 489–501.
46. Zhang, D.; Kong, W.K.; You, J.; Wong, M. Online palmprint identification. IEEE Trans. Pattern Anal. 2003, 25, 1041–1050.
47. Donoho, D. For most large underdetermined systems of linear equations the minimal 𝓁1-norm solution is also the sparsest solution. Commun. Pur. Appl. Math. 2006, 59, 797–829.
48. Yang, M.; Zhang, L.; Yang, J.; Zhang, D. Robust sparse coding for face recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Colorado Springs, CO, USA, 20–25 June 2011; pp. 625–632.
49. Xu, Y.; Zhong, Z.F.; Jang, J.; You, J.; Zhang, D. A new discriminative sparse representation method for robust face recognition via 𝓁2 regularization. IEEE Trans. Neural Netw. Learn Syst. 2017, 28, 2233–2242.
50. l1_ls: Simple MATLAB Solver for l1-Regularized Least Squares Problems. Available online: http://web.stanford.edu/~boyd/l1_ls/ (accessed on 15 May 2008).
51. Yang, A.Y.; Zhou, Z.H.; Balasubramanian, A.G.; Sastry, S.S.; Ma, Y. Fast 𝓁1-minimization algorithms for robust face recognition. IEEE Trans. Image Process. 2013, 22, 3234–3246.
52. Zhang, L.; Yang, M.; Feng, X.C. Sparse representation or collaborative representation: Which helps face recognition? In Proceedings of the IEEE International Conference on Computer Vision, Barcelona, Spain, 6–13 November 2011; pp. 471–478.
53. Cortes, C. Support vector network. Mach. Learn. 1995, 20, 273–297.
54. Kolda, T.G.; Bader, B.W. Tensor decompositions and applications. SIAM Rev. 2009, 51, 455–500.

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).