Evaluation of the Create@School Game-Based Learning–Teaching Approach

Abstract

1. Introduction

1.1. The Learning Theory and Game-Based Learning

1.2. The Approach and Motivation

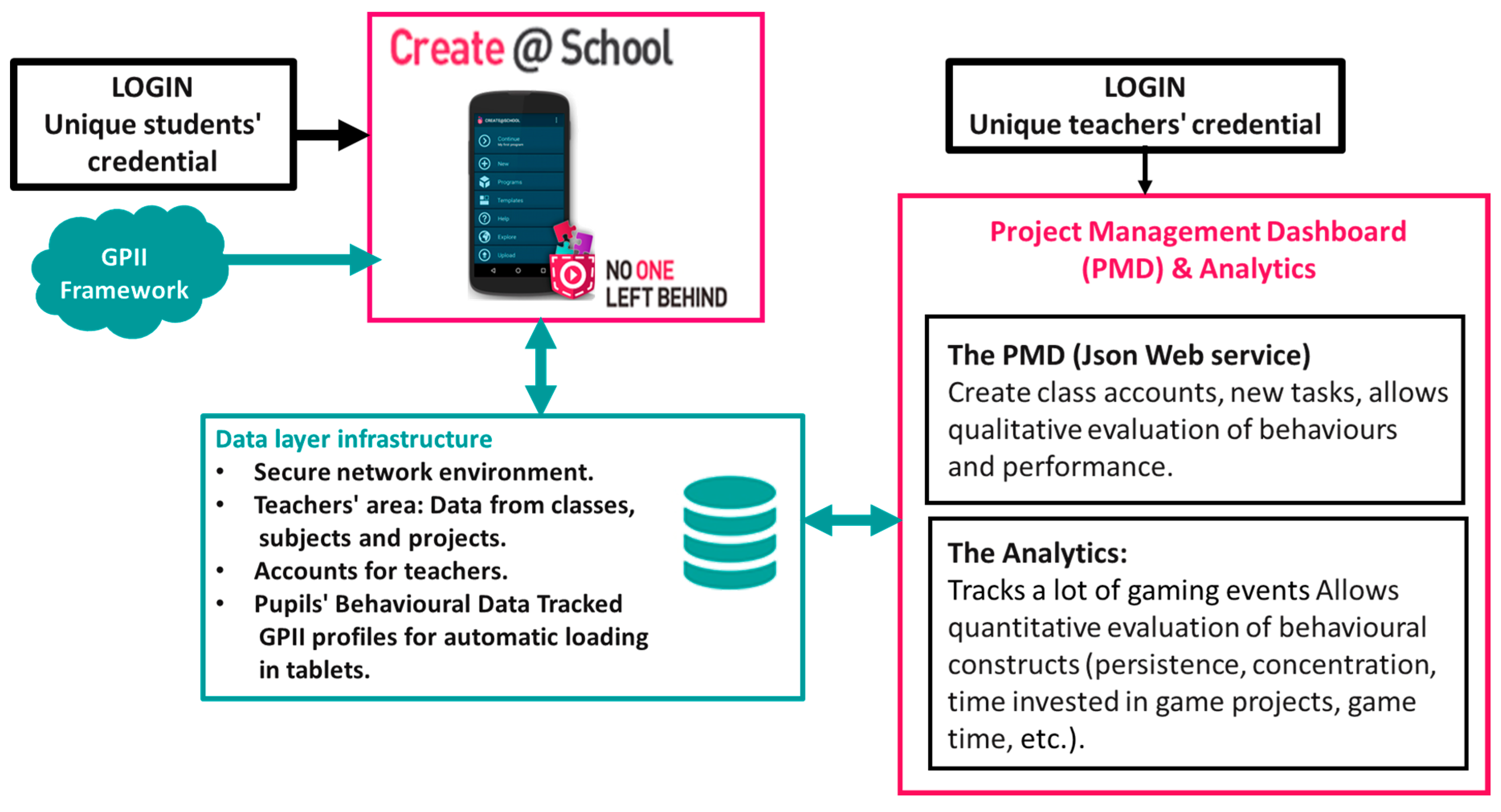

- The Create@School App, targeting the students; and

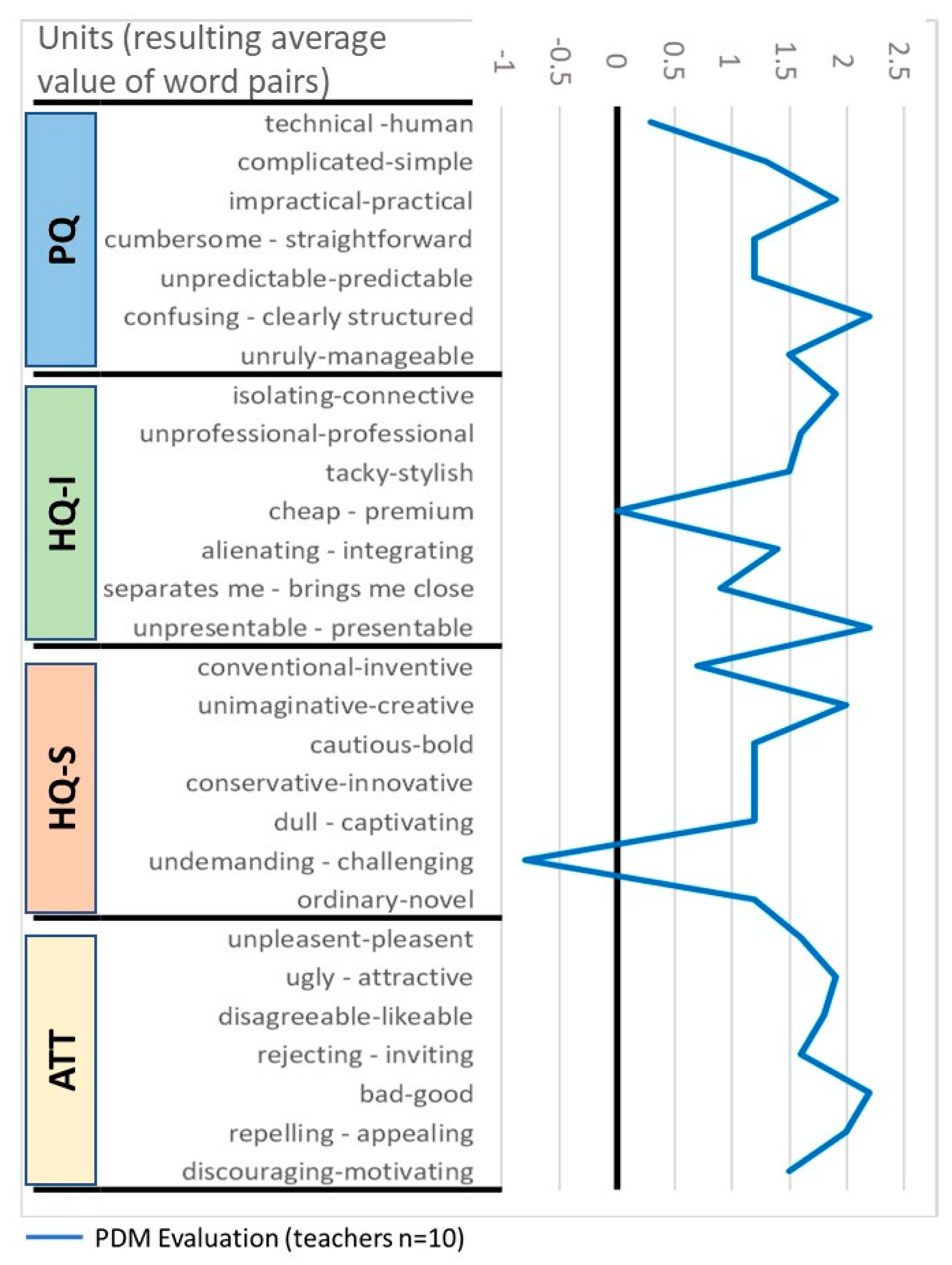

- The Project Management Dashboard (PMD) [31], targeting their educators.

2. Materials and Methods

2.1. Materials

- Pocket Code Framework [32]: An open-source framework for mobile devices that allows children to create their own games, animations, music, videos, and many types of apps directly on their phones or tablets. Pocket Code provides pre-coded modules, so-called bricks, that connect to the sensors of mobile devices and link with other sensor-based platforms, such as LEGO Mindstorms®. Pocket Code is the basis of the Create@School App and was used as a precursor to the App to train both students and teachers in coding skills.

- The Create@School App: An integrated development environment (IDE) for smartphones and tablets designed for children. It is an enhanced version of Pocket Code that has been customized for educational environments. The Create@School App embeds the concepts of game mechanics and dynamics through ready-to-use (pre-coded) game templates based on different game genres from leisure gaming. Students used the Create@School App in class to integrate playful activities into regular classroom education. This kind of classroom setting allowed a hands-on approach that provides extrinsic motivation for students when they start to use a new tool [39]. Students need some time to start benefiting from new educational tools, and thus may take longer to become intrinsically motivated.

- Templates: Pre-coded templates were developed that integrate nested objects as object collections (i.e., groups of several objects), levels clustered into scenes, or pre-coded interaction with a sensor. These templates were prepared (coded) in Pocket Code and linked to the academic competences for different subjects and classroom ability levels. The templates allowed teachers and students to develop games of any genre in a standardized manner, while remaining flexible enough to adapt to the preferences of the students and to offer different ways to present and play with the academic content.

- The Global Public Inclusive Infrastructure (GPII) framework is an infrastructure that allows accessibility preferences to be set (e.g., text with bigger font sizes, appropriate colors, etc.) for children and people with special educational needs, making the Create@School App a more accessible IDE.

- Project Management Dashboard (PMD) and analytical tool: A web interface that allows orchestration of the class environment and integrates information from all students in a class, including the list of students per class, the projects assigned, and the evaluation of the projects against the academic or curricular objectives. Through the PMD, teachers can not only plan, assign, and manage the delivery of game projects to support new game-based teaching approaches, but also evaluate students on the completion of projects and the achievement of academic objectives. The PMD is based on the idea of implementing a game jam approach in classes [40]. This enables collaboration, engagement, and competition among the students who develop each project. Each student uploads the created game project to the PMD at the end of the lesson.

- The analytical tool is embedded into the PMD and allows monitoring and assessment of the way students work and code on a class project. It provides a set of quantitative values that enable the evaluation of socio-behavioral constructs, including confidence, self-efficacy, performance, interest, creativity, persistence, effort/dedicated time, and concentration, among others. The analytical tool thus generates feedback data on each student's progress, socio-behavioral constructs, coding, and use of elements of the Create@School App, streamlining the assessment of student work.

- Tablets and mobile phones: In total, 338 tablets and mobile phones were used for the classes, comprising 7-inch and 10-inch Android mobile devices, including the Google Nexus 7 and MOTOG-2, as well as the BQ Edison 3 in 10-inch and 8-inch versions. The screen resolutions were 800 × 500, 1024 × 640, or 1280 × 800, all sharing an aspect ratio of 1.6. The devices had a range of embedded physical and virtual sensors. Physical sensors are hardware-based sensors embedded directly in the device that obtain their data by measuring particular environmental characteristics (e.g., accelerometer, gyroscope, and proximity sensors). Virtual sensors are software-based and derive their data from several hardware-based sensors (e.g., the linear acceleration and gravity sensors on the Android platform).

- LEGO MINDSTORMS® technology [41]: A programmable robotics construction set that allows users to build, program, and command LEGO robots from a PC, Mac, tablet, or smartphone. It provides an interface to programmable intelligent bricks or modules, so it can interact with Pocket Code. It comes as a set that includes connector and universal serial bus (USB) cables, LEGO Technic pieces or elements, one EV3 Brick, two Large Interactive Servo Motors, one Medium Interactive Servo Motor, and touch, color, infrared, and infrared beacon sensors.
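The distinction between physical and virtual sensors in the device description above can be illustrated with a minimal Python sketch. It assumes a simple low-pass filter that estimates gravity from raw accelerometer samples and subtracts that estimate to obtain linear acceleration. The class name `VirtualMotionSensor`, the smoothing factor `alpha = 0.8`, and the sample values are hypothetical and do not come from the study; real Android devices may fuse several hardware sensors rather than filter a single one.

```python
from dataclasses import dataclass
from typing import Tuple

Vector = Tuple[float, float, float]


@dataclass
class VirtualMotionSensor:
    """Software-based sensor derived from raw accelerometer samples (illustrative only)."""

    alpha: float = 0.8                    # assumed smoothing factor, not taken from the study
    gravity: Vector = (0.0, 0.0, 0.0)     # running gravity estimate in m/s^2

    def update(self, raw: Vector) -> Vector:
        """Feed one raw accelerometer sample and return the linear acceleration."""
        # Low-pass filter: the slowly varying part of the signal approximates gravity.
        self.gravity = tuple(
            self.alpha * g + (1.0 - self.alpha) * a
            for g, a in zip(self.gravity, raw)
        )
        # Removing the gravity estimate leaves the acceleration caused by movement.
        return tuple(a - g for a, g in zip(raw, self.gravity))


if __name__ == "__main__":
    sensor = VirtualMotionSensor()
    # Hypothetical samples: a device lying flat and at rest, measuring only gravity.
    for _ in range(100):
        linear = sensor.update((0.0, 0.0, 9.81))
    print("gravity estimate:", sensor.gravity)
    print("linear acceleration:", linear)
```

For a device at rest, the gravity estimate converges toward the measured value and the linear acceleration toward zero, which is the behavior a game would rely on to detect deliberate tilts or shakes.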

2.2. Methods

2.2.1. The Sample

2.2.2. The Technology Validation Cycles

2.2.3. Template-Based Methodological Approach

2.2.4. Measures and Evaluation

Hassenzahl Model and AttrakDiff Tool

- The usability and utility of the technologies perceived by the users;

- The satisfaction of the users that used the technologies, and the attractiveness of the technologies.

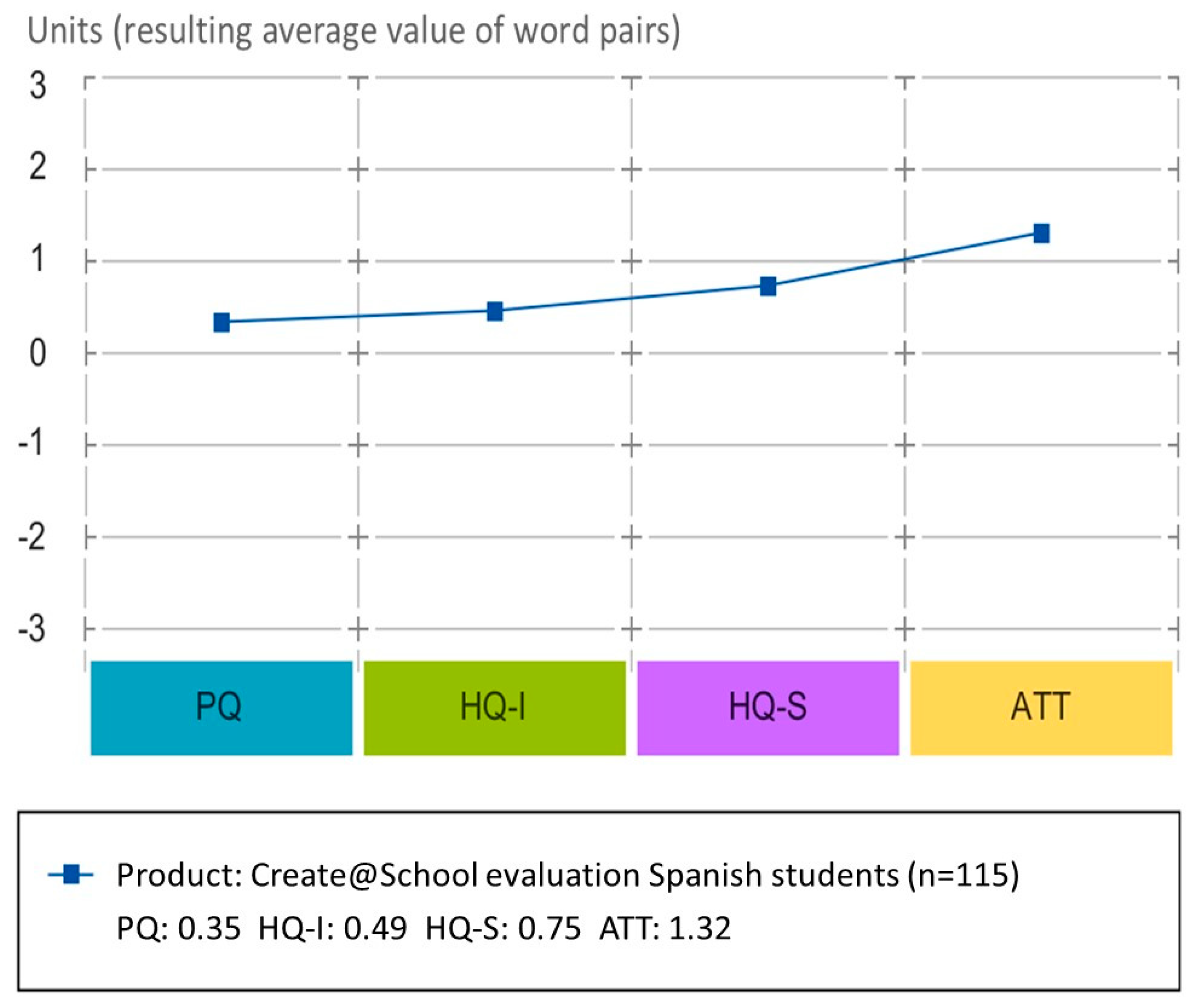

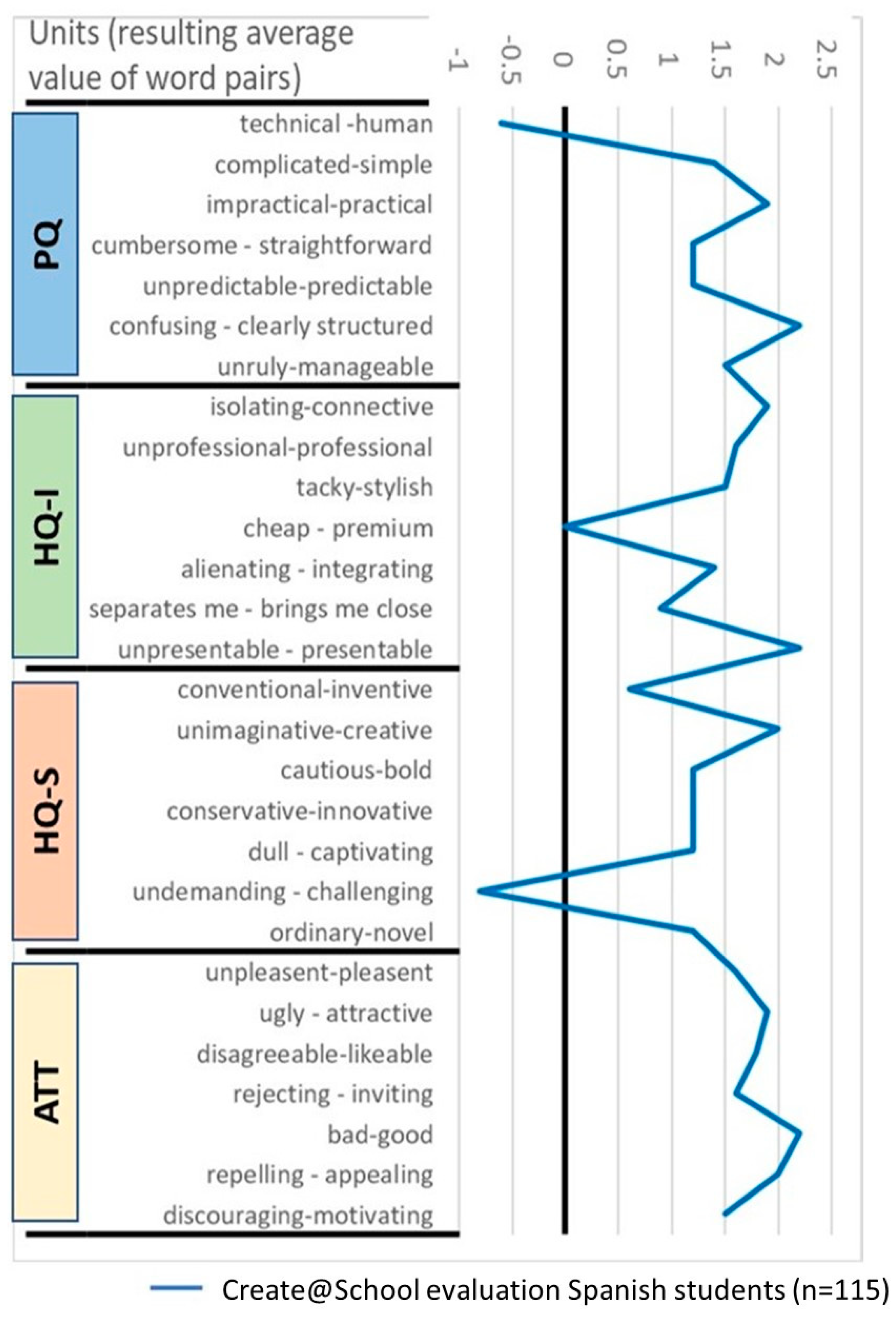

- Pragmatic qualities (PQs): These attributes are related to practicality and functionality. Usefulness and usability are consequences of pragmatic qualities. The pragmatic quality (PQ) scale has seven items, each with bipolar anchors, that measure the pragmatic qualities of the product. The anchors include technical–human, complicated–simple, confusing–clear, and impractical–practical, among others.

- Hedonic qualities (HQs): These reflect the psychological needs and emotional experience of the user. In the Hassenzahl model, hedonic qualities are divided into two categories:

  - The stimulation quality (HQ-S) represents the user's need to be stimulated in order to enjoy the experience with a piece of software or product. This includes rarely used functions that can stimulate the user and satisfy the human urge for personal development and increased skills. The HQ-S scale has seven items, with anchors such as typical–original, cautious–courageous, and easy–challenging.

  - The identity quality (HQ-I) refers to the human need for self-expression through objects, to control how one is perceived by others. Humans have a desire to communicate their identity to others through the things they own and use. These things help people express who they are, what they care about, and who they aspire to be. The HQ-I scale also has seven items, with anchors such as isolating–integrating, gaudy–classy, and cheap–valuable.

- Attractiveness (ATT): Seven items measure overall appeal or attraction, each a pair of opposite words (e.g., ugly–beautiful and bad–good). The two words of each item are placed at opposite ends of a seven-point Likert scale ranging from −3 to 3, where zero is the neutral value between them.
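The scale structure described above (four scales of seven items, each rated from −3 to 3) can be sketched as a small scoring routine. This is a minimal sketch that assumes each scale score is the arithmetic mean of its seven item ratings and that every rating is already oriented so that +3 is the positive anchor. The function name `scale_means` and the example ratings are hypothetical; they are not the scoring code or data of the AttrakDiff tool or of this study.

```python
from typing import Dict, List

SCALES = ("PQ", "HQ-S", "HQ-I", "ATT")   # the four scales named in the text
ITEMS_PER_SCALE = 7                      # seven word-pair items per scale
MIN_RATING, MAX_RATING = -3, 3           # seven-point scale, zero is neutral


def scale_means(responses: Dict[str, List[int]]) -> Dict[str, float]:
    """Return the mean rating per scale for one respondent (assumed scoring rule)."""
    means: Dict[str, float] = {}
    for scale in SCALES:
        ratings = responses[scale]
        if len(ratings) != ITEMS_PER_SCALE:
            raise ValueError(f"{scale}: expected {ITEMS_PER_SCALE} ratings, got {len(ratings)}")
        if any(r < MIN_RATING or r > MAX_RATING for r in ratings):
            raise ValueError(f"{scale}: ratings must lie between {MIN_RATING} and {MAX_RATING}")
        means[scale] = sum(ratings) / len(ratings)
    return means


if __name__ == "__main__":
    # Hypothetical answers from a single respondent, for illustration only.
    one_respondent = {
        "PQ":   [1, 2, 3, 0, -1, 2, 0],
        "HQ-S": [2, 2, 1, 3, 0, 1, 2],
        "HQ-I": [0, 1, 1, 0, -1, 2, 1],
        "ATT":  [3, 2, 2, 3, 1, 2, 2],
    }
    for scale, value in scale_means(one_respondent).items():
        print(f"{scale}: {value:+.2f}")
```

A positive mean indicates that the respondent leaned toward the favorable anchors of that scale, and a value near zero indicates a neutral impression.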

Competitive Validation Outside Classes Environment

3. Results

3.1. Design and Development of Create@School App and PMD

- Customization for education environments;

- Integration of game templates: pre-coded templates with ready-made game mechanics (i.e., templates for adventure, action, quiz, or puzzle games). This reduces the time needed to develop games and applications in class and allows personalization for different ages, personal interests, and academic content, as pupils develop the game dynamics and aesthetics themselves. With the templates and the Create@School App, pupils shape game dynamics and aesthetics by editing an existing game design: personalizing backgrounds, landscapes, and characters, creating new challenging levels, and changing the difficulty of a game;

- Integration of 48 new features and improvements identified during the project, based on the experience and insights gathered from users in the No One Left Behind pilots;

- Integration of the GPII framework that allowed automatic and individual personalization for students with learning disabilities and additional sensory impairments.

3.2. Evaluation Results

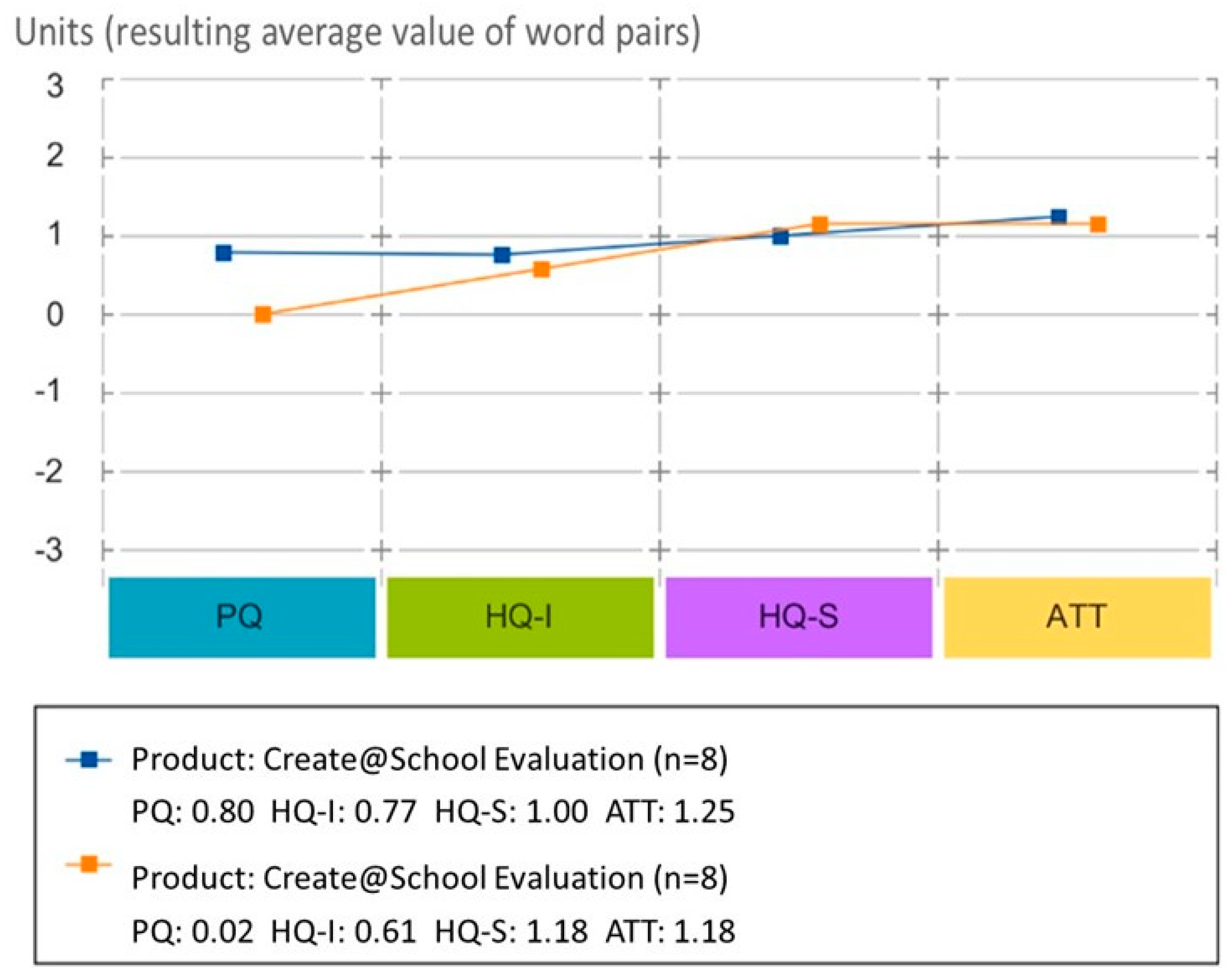

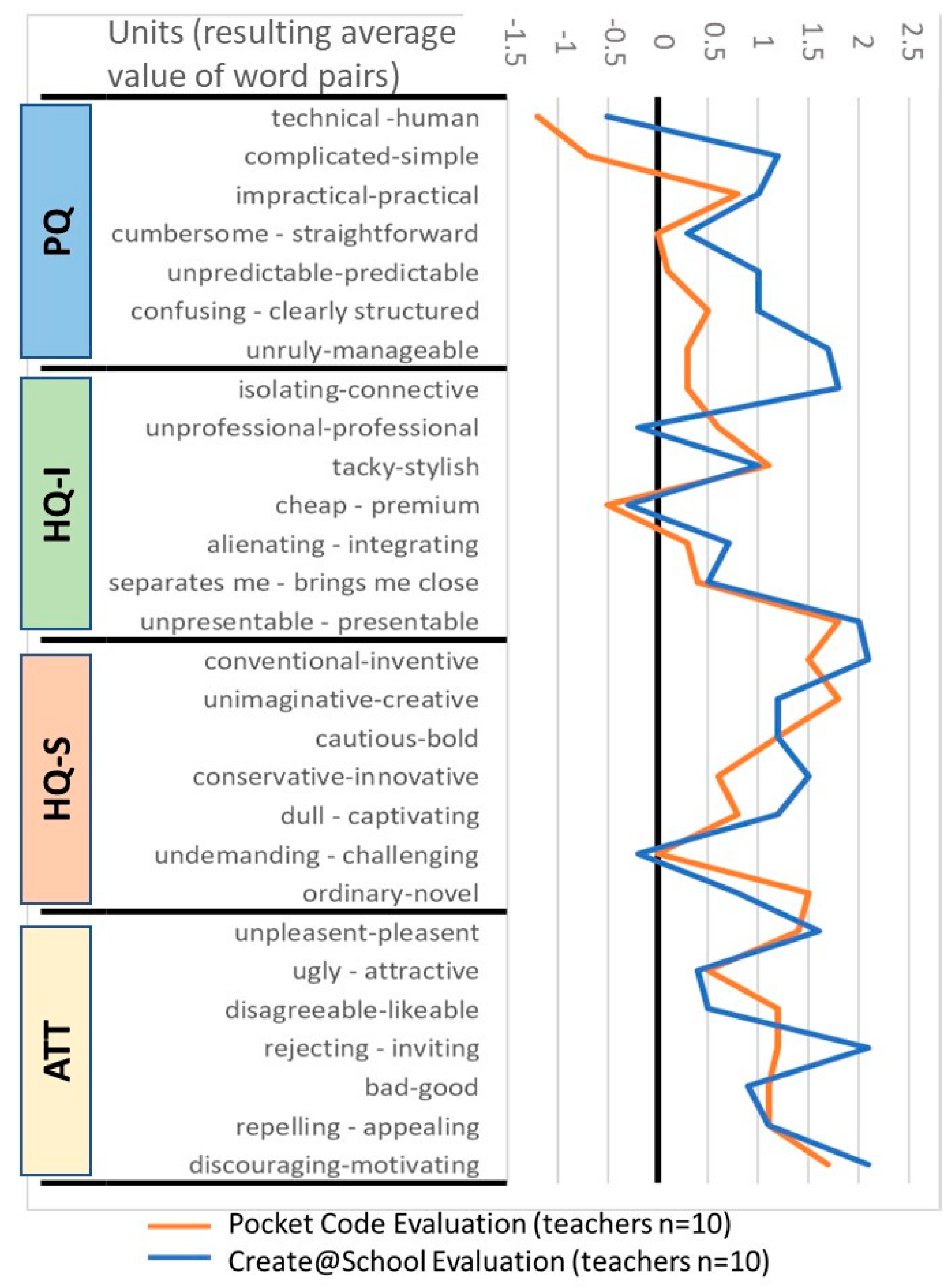

3.2.1. Results of the Evaluation of Pocket Code vs. Create@School by Teachers

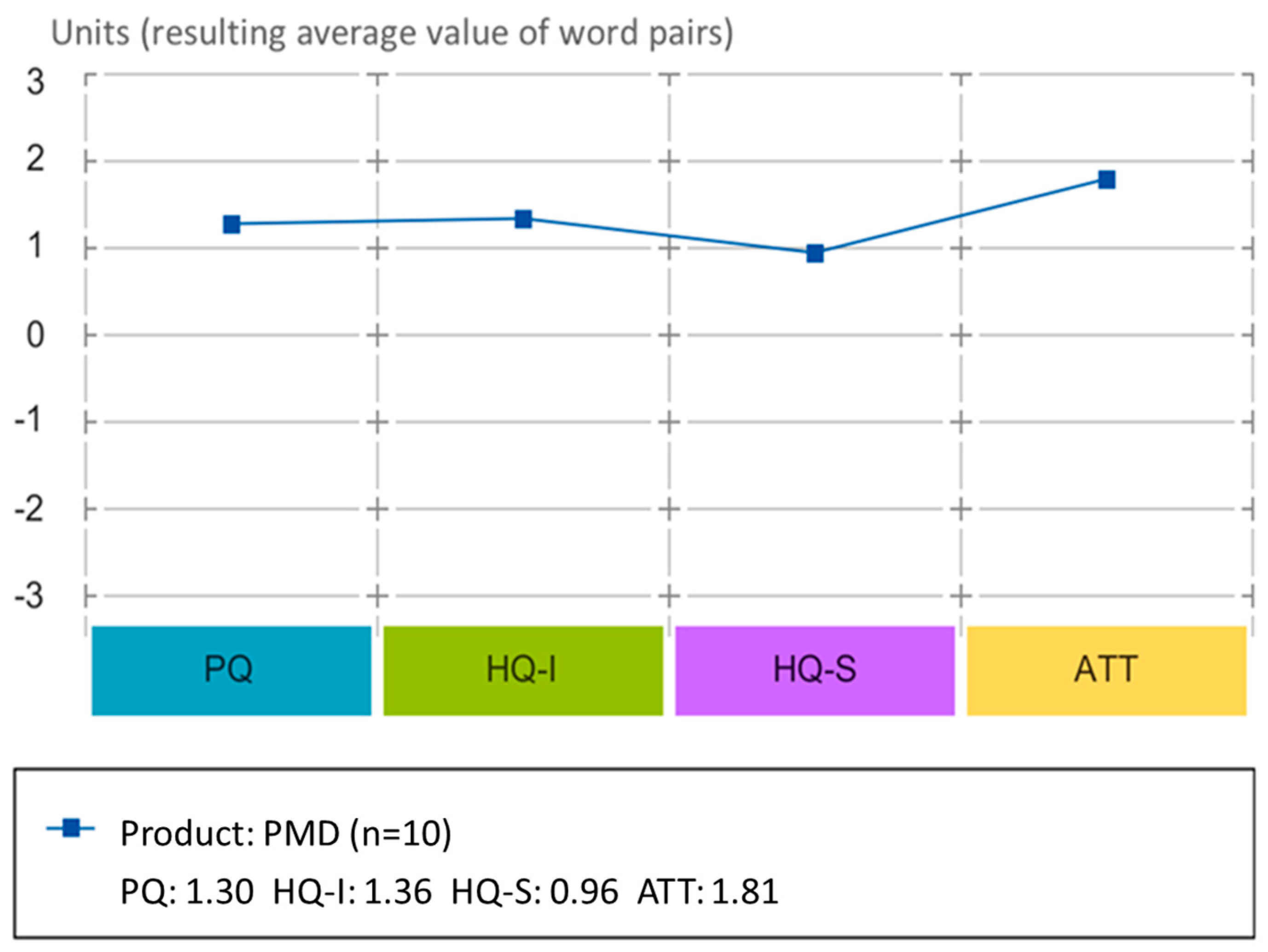

3.2.2. Results of the Evaluation Study of Project Management Dashboard (PMD)

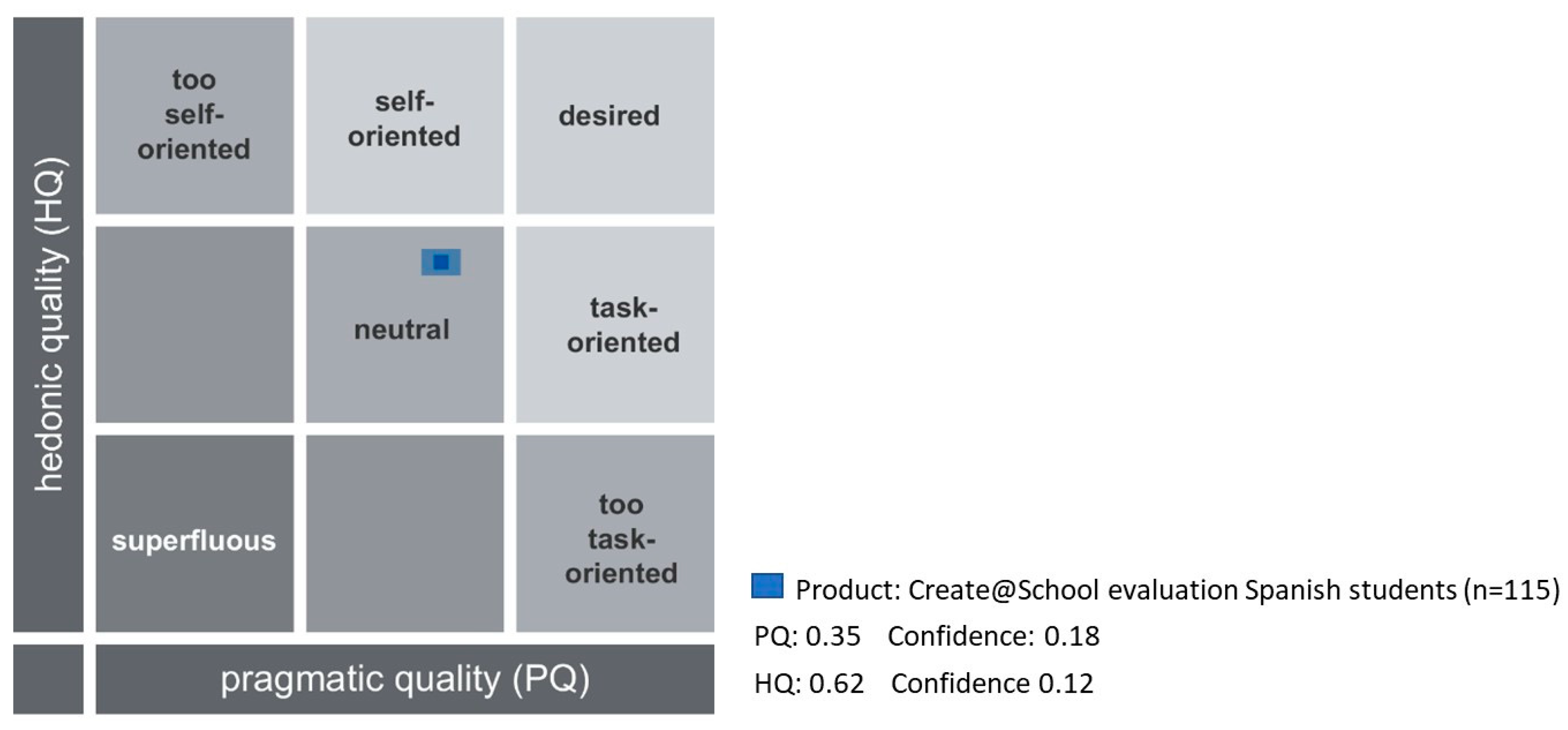

3.2.3. Results of the Evaluation of Create@School App by Students

3.2.4. LEGO NXT, EV3, and LEGO® League Validation

4. Discussion

4.1. Teachers’ Evaluation of Pocket Code vs. Create@School Study

4.2. Teachers’ Evaluation of the PMD Study (3.2)

4.3. The Students’ Evaluation of Create@School Study (3.3)

4.4. The LEGO® League Participation

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Rheinfrank, J.; Evenson, E. Design Languages. In Bringing Design to Software; Winograd, T., Ed.; ACM: New York, NY, USA, 1996; ISBN 0-201-85491-0.

2. Papert, S. The Children’s Machine: Rethinking School in the Age of the Computer; Basic Books: New York, NY, USA, 1993; Available online: https://learn.media.mit.edu/lcl/resources/readings/childrens-machine.pdf (accessed on 28 March 2019).

3. Kafai, Y.B. Minds in Play: Computer Game Design as a Context for Children’s Learning; Routledge: New York, NY, USA, 1995.

4. Zaibon, S.B.; Shiratuddin, N. Adapting learning theories in mobile game-based learning development. In Proceedings of the 2010 Third IEEE International Conference on Digital Game and Intelligent Toy Enhanced Learning (DIGITEL), Kaohsiung, Taiwan, 12–16 April 2010; pp. 124–128.

5. Ferreira, A.; Pereira, E.; Anacleto, J.; Carvalho, A.; Carelli, I. The common sense-based educational quiz game framework What is it? In Proceedings of the VIII Brazilian Symposium on Human Factors in Computing Systems, Porto Alegre, Brazil, 21–24 October 2008; pp. 338–339.

6. Hunicke, R.; Leblanc, M.; Zubek, R. MDA: A formal approach to game design and game research. In Proceedings of the Challenges in Games AI Workshop, Nineteenth National Conference of Artificial Intelligence, San Jose, CA, USA, 25–26 July 2004; pp. 1–5.

7. Tiven, M.E.; Fuchs, E.; Bazari, A.; MacQuarrie, A. Evaluating Global Digital Education: Student Outcomes Framework. Available online: http://www.oecd.org/pisa/Evaluating-Global-Digital-Education-Student-Outcomes-Framework.pdf (accessed on 19 May 2019).

8. Chandrasekaran, S.; Stojcevski, A.; Littlefair, G.; Joordens, M. Learning through projects in engineering education. In Proceedings of the 40th SEFI Annual Conference, Engineering Education 2020: Meet the Future (SEFI 2012), Thessaloniki, Greece, 23–26 September 2012.

9. Mohamad, S.N.M.; Sazali, N.S.S.; Salleh, M.A.M. Gamification Approach in Education to Increase Learning Engagement. Int. J. Humanit. Arts Soc. Sci. 2018, 4, 22–32.

10. Or-Bach, R.; Ilana, L. Cognitive activities of abstraction in object orientation: an empirical study. ACM SIGCSE Bull. 2004, 36, 82–86.

11. Li, F.W.B.; Watson, C. Game-based Concept Visualization for Learning Programming. In Proceedings of the Third International ACM Workshop on Multimedia Technologies for Distance Learning, Scottsdale, AZ, USA, 1 December 2011; pp. 37–42.

12. O’Kelly, J.; Gibson, J.P. Robo Code & problem-based learning: a non-prescriptive approach to teaching programming. In ACM SIGCSE Bulletin; ACM: New York, NY, USA, 2006; Volume 38.

13. Cooper, S. The design of Alice. ACM Trans. Comput. Educ. 2010, 10, 15.

14. Meerbaum-Salant, O.; Armoni, M.; Ben-Ari, M. Learning Computer Science Concepts with Scratch. In Proceedings of the Sixth International Workshop on Computing Education Research, Aarhus, Denmark, 9–10 August 2010; pp. 69–76.

15. Paliokas, I.; Arapidis, C.; Mpimpitsos, M. PlayLOGO 3D: A 3D Interactive Video Game for Early Programming Education: Let LOGO Be a Game. In Proceedings of the 2011 Third International Conference on Games and Virtual Worlds for Serious Applications, Athens, Greece, 4–6 May 2011; pp. 24–31.

16. Barnes, T.; Richter, H.; Powell, E.; Chaffin, A.; Godwin, A. Game2Learn: Building CS1 Learning Games for Retention. In Proceedings of the 12th Annual SIGCSE Conference on Innovation and Technology in Computer Science Education, Dundee, UK, 25–27 June 2007; pp. 121–125.

17. Jiau, H.C.; Chen, J.C.; Su, K.F. Enhancing self-motivation in learning programming using game-based simulation and metrics. IEEE Trans. Educ. 2009, 52, 555–562.

18. Xinogalos, S.; Satratzemi, M.; Dagdilelis, V. An introduction to object-oriented programming with a didactic microworld: object Karel. Comput. Educ. 2006, 47, 148–171.

19. Deterding, S.; Dixon, D.; Khaled, R.; Nacke, L. From Game Design Elements to Gamefulness: Defining “Gamification”. In Proceedings of the 15th International Academic MindTrek Conference: Envisioning Future Media Environments, Tampere, Finland, 28–30 September 2011; pp. 9–15.

20. Glover, I. Play as You Learn: Gamification as a Technique for Motivating Learners. EdMedia+ Innovate Learning; Association for the Advancement of Computing in Education (AACE): Durham, NH, USA, 2013; pp. 1999–2008.

21. Sheth, S.K.; Bell, J.S.; Kaiser, G.E. Increasing Student Engagement in Software Engineering with Gamification; Columbia University Computer Science Technical Reports CUCS-018-12; Columbia University: New York, NY, USA, 2012.

22. Ziesemer, A.; Müller, L.; Silveira, M. Gamification Aware: Users Perception About Game Elements on Non-Game Context. In Proceedings of the 12th Brazilian Symposium on Human Factors in Computing Systems, Porto Alegre, Brazil, 8–11 October 2013; Brazilian Computer Society: Porto Alegre, Brazil, 1978; pp. 276–279.

23. Deterding, S. Gamification: Designing for Motivation. Interactions 2012, 19, 14–17.

24. Zichermann, G.; Cunningham, C. Gamification by Design: Implementing Game Mechanics in Web and Mobile Apps, 1st ed.; O’Reilly Media, Inc.: Sebastopol, CA, USA, 2011.

25. Seaborn, K.; Fels, D.I. Gamification in Theory and Action. Int. J. Hum. Comput. Stud. 2015, 74, 14–31.

26. Moccozet, L.; Tardy, C.; Opprecht, W.; Léonard, M. Gamification-based assessment of group work. In Proceedings of the International Conference on Interactive Collaborative Learning (ICL), Kazan, Russia, 25–27 September 2013; pp. 171–179.

27. Lovászová, G.; Michaličková, V.; Capay, M. Mobile Technology in Secondary Education: A Conceptual Framework for Using Tablets and Smartphones within the Informatics Curriculum. In Proceedings of the 13th IEEE International Conference on Emerging eLearning Technologies and Application, Starý Smokovec, Slovakia, 26–27 November 2015.

28. Félix, I.; Castro, L.A.; Rodríguez, L.F.; Ruiz, E. Mobile Phone Sensing: Current Trends and Challenges; Sonora Institute of Technology (ITSON): Obregón, Son., Mexico, 2015; Available online: https://dialnet.unirioja.es/servlet/articulo?codigo=5826868 (accessed on 15 May 2019).

29. Karavirta, E.; Hakulinen, L. Educational Accelerometer Games for Computer Science. In Proceedings of the 11th World Conference on Mobile and Contextual Learning, Helsinki, Finland, 16–18 October 2012.

30. Inmark-No One Left Behind. Available online: http://no1leftbehind.eu (accessed on 18 June 2019).

31. UPM-Project Management Dashboard. Available online: https://www.pmdnolb.cloud/ (accessed on 18 June 2019).

32. Pocket Code. Available online: https://www.catrobat.org/intro/ (accessed on 16 May 2019).

33. Scratch. Available online: https://scratch.mit.edu/about (accessed on 9 July 2019).

34. Minecraft. Available online: https://education.minecraft.net/trainings/code-builder-for-minecraft-education-edition/ (accessed on 9 July 2019).

35. Tiny Tap. Available online: https://www.tinytap.it/activities/ (accessed on 9 July 2019).

36. Game Salad. Available online: https://gamesalad.com/ (accessed on 9 July 2019).

37. Bloxels. Available online: http://home.bloxelsbuilder.com/ (accessed on 9 July 2019).

38. Gamestar Mechanic. Available online: https://gamestarmechanic.com/ (accessed on 9 July 2019).

39. Romero, M.; Usart, M.; Ott, M.; Earp, J.; de Freitas, S.; Arnab, S. Learning through playing for or against each other? Promoting collaborative learning in digital game-based learning. In Proceedings of the European Conference on Information Systems (ECIS), Barcelona, Spain, 10–13 June 2012.

40. Spieler, B.; Petri, A.; Schindler, C.; Slany, W.; Beltran, E.; Boulton, H.; Gaeta, E.; Smith, J. Pocket Code Game Jams Challenge Traditional Classroom Teaching and Learning Through Game Creation. In Proceedings of the 6th Irish Conference on Game-Based Learning IGBL, Dublin, Ireland, 1–2 September 2016.

41. LEGO Mindstorms Technology. Available online: https://www.lego.com/en-us/mindstorms/support (accessed on 16 May 2019).

42. Spieler, B.; Schindler, C.; Slany, W.; Mashkina, O.; Beltrán, M.E.; Boulton, H.; Brown, D. Evaluation of Game Templates to support Programming Activities in Schools. In Proceedings of the European Conference of Game-Based Learning (ECGBL), Paisley, UK, 6–7 October 2016.

43. Pocket Code-Catrobat Project. Available online: https://www.catrobat.org/ (accessed on 12 June 2019).

44. Effie Law, E.; Vermeeren, A.; Hassenzahl, M.; Blythe, M. The Hedonic/Pragmatic Model of User Experience. Towards a UX Manifesto. Available online: http://www.academia.edu/2880396/The_hedonic_pragmatic_model_of_user_experience (accessed on 18 June 2019).

45. AttrakDiff. Available online: http://attrakdiff.de/ (accessed on 18 June 2019).

46. LEGO Education-Mindstorms. Available online: https://education.lego.com/en-us (accessed on 12 June 2019).

47. FIRST LEGO League. Available online: http://www.firstlegoleague.org/ (accessed on 12 June 2019).

| School Site | Course | Age | Teacher Technical Background | Subject | Students |
|---|---|---|---|---|---|
| Puerto Santa María | Y11 | 15–16 | No | Mathematics | 30 |
| Puerto Santa María | Y10 | 14–15 | No | PEMAR (Mathematics and Science) | 13 |
| Puerto Santa María | Y9 | 13–14 | Yes | Mathematics | 30 |
| Úbeda | Y10 | 14–15 | No | Science Methods | 12 |
| Úbeda | Y9 | 13–14 | Yes | Enrichment | 12 |
| Úbeda | Y7 | 11–12 | No | Science | 25 |
| Úbeda | Y6 | 10–11 | No | Science | 24 |

| School Site | Course | Age | Teacher Technical Background | Subject | Students |
|---|---|---|---|---|---|
| Puerto Santa María | Y11 | 15–16 | Yes | Computing | 17 |
| Puerto Santa María | Y10 | 14–15 | No | Mathematics | 20 |
| Puerto Santa María | Y9 | 13–14 | No | Science | 9 |
| Puerto Santa María | Y8 | 12–13 | No | Mathematics | 11 |
| Úbeda | Y11 | 15–16 | No | Programming basics | 30 |
| Úbeda | Y10 | 14–15 | No | Programming basics | 16 |
| Úbeda | Y9 | 12–13 | No | Mathematics, Biology, and Geology | 21 |
| Úbeda | Y8 | 12–13 | Yes | Enrichment | 12 |
| Úbeda | Y7 | 11–12 | No | Language, Mathematics, and Social Sciences | 26 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Gaeta, E.; Beltrán-Jaunsaras, M.E.; Cea, G.; Spieler, B.; Burton, A.; García-Betances, R.I.; Cabrera-Umpiérrez, M.F.; Brown, D.; Boulton, H.; Arredondo Waldmeyer, M.T. Evaluation of the Create@School Game-Based Learning–Teaching Approach. Sensors 2019, 19, 3251. https://doi.org/10.3390/s19153251
