Foreground Detection with Deeply Learned Multi-Scale Spatial-Temporal Features

Abstract

1. Introduction

- We proposed a novel dual multi-scale 3D fully convolutional network structure for foreground detection. The network can learn deep and hierarchical multi-scale features in both spatial and temporal domains effectively, therefore has good performance for foreground detection of complex scenes.

- Our network demonstrates state-of-the-art performance comparing with other DNN based foreground detection methods, according to the experimental results on public datasets.

- The proposed DMFC3D network is a novel framework that establishes a mapping from image sequence inputs to pixel-wise classification results. It can be used for similar tasks that can benefit from effective multi-scale spatial-temporal features.

2. Related Works

2.1. Classic Background Models

2.2. Deep Learning Based Methods

3. Proposed Method

3.1. Network Structure

3.2. Encoder

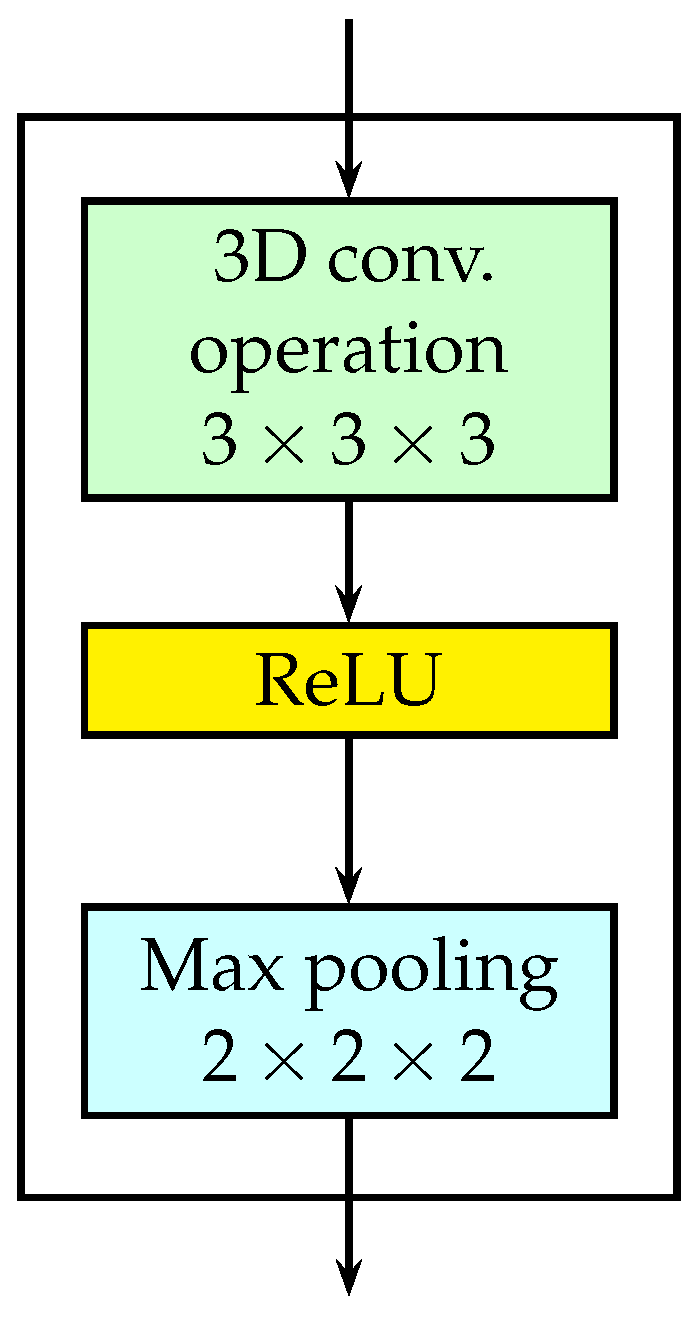

3.2.1. 3D Convolutional Layers

3.2.2. Multi-Scale Spatial Features

3.2.3. Multi-Scale Temporal Features

3.3. Decoder

3.3.1. 2D De-Convolutional Layer

3.3.2. Output Layer

3.4. Network Training

3.4.1. Training Method

3.4.2. Lost Function

4. Experiments

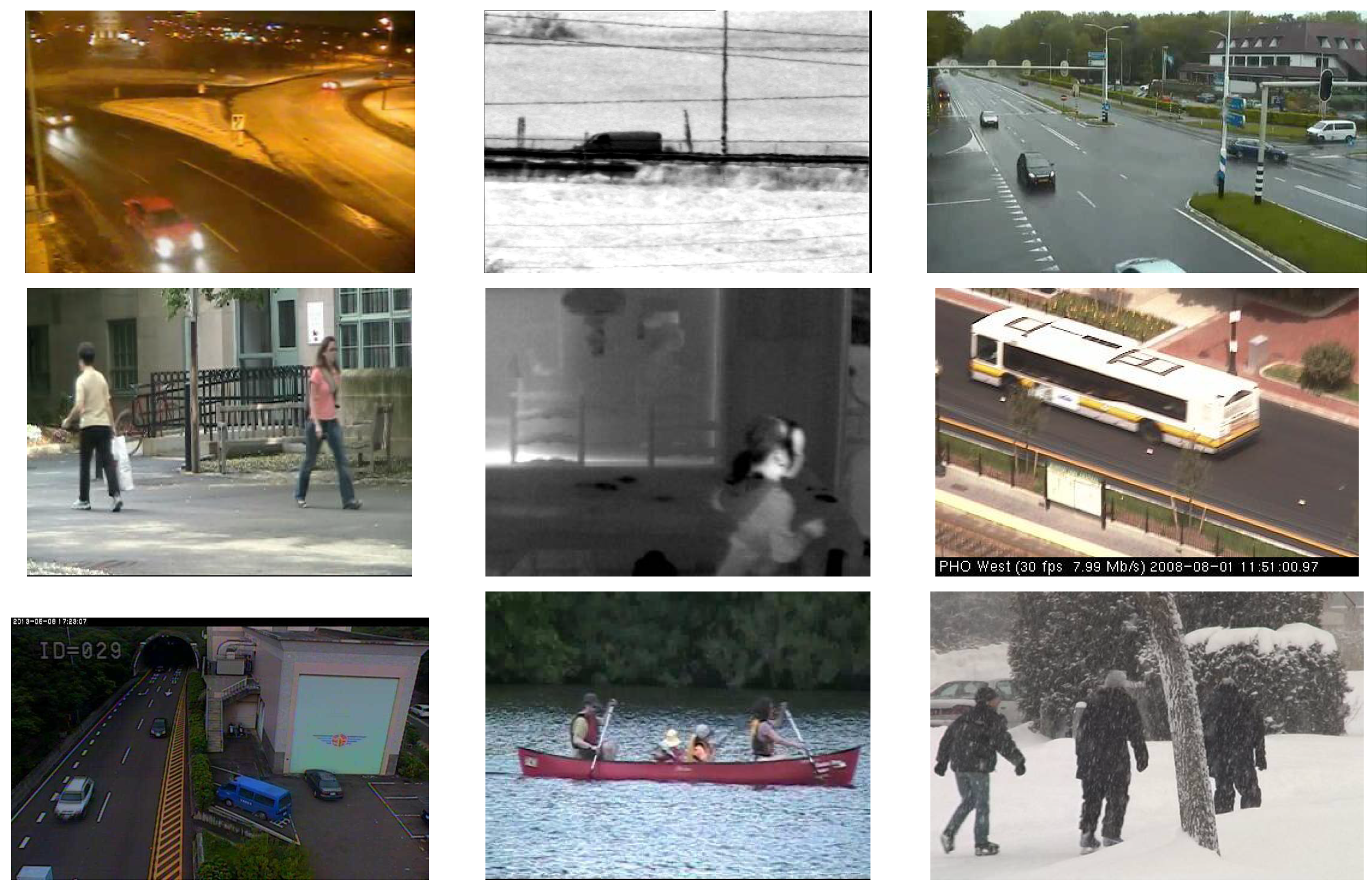

4.1. Dataset

4.2. Evaluating Metric

4.3. Implementation Details

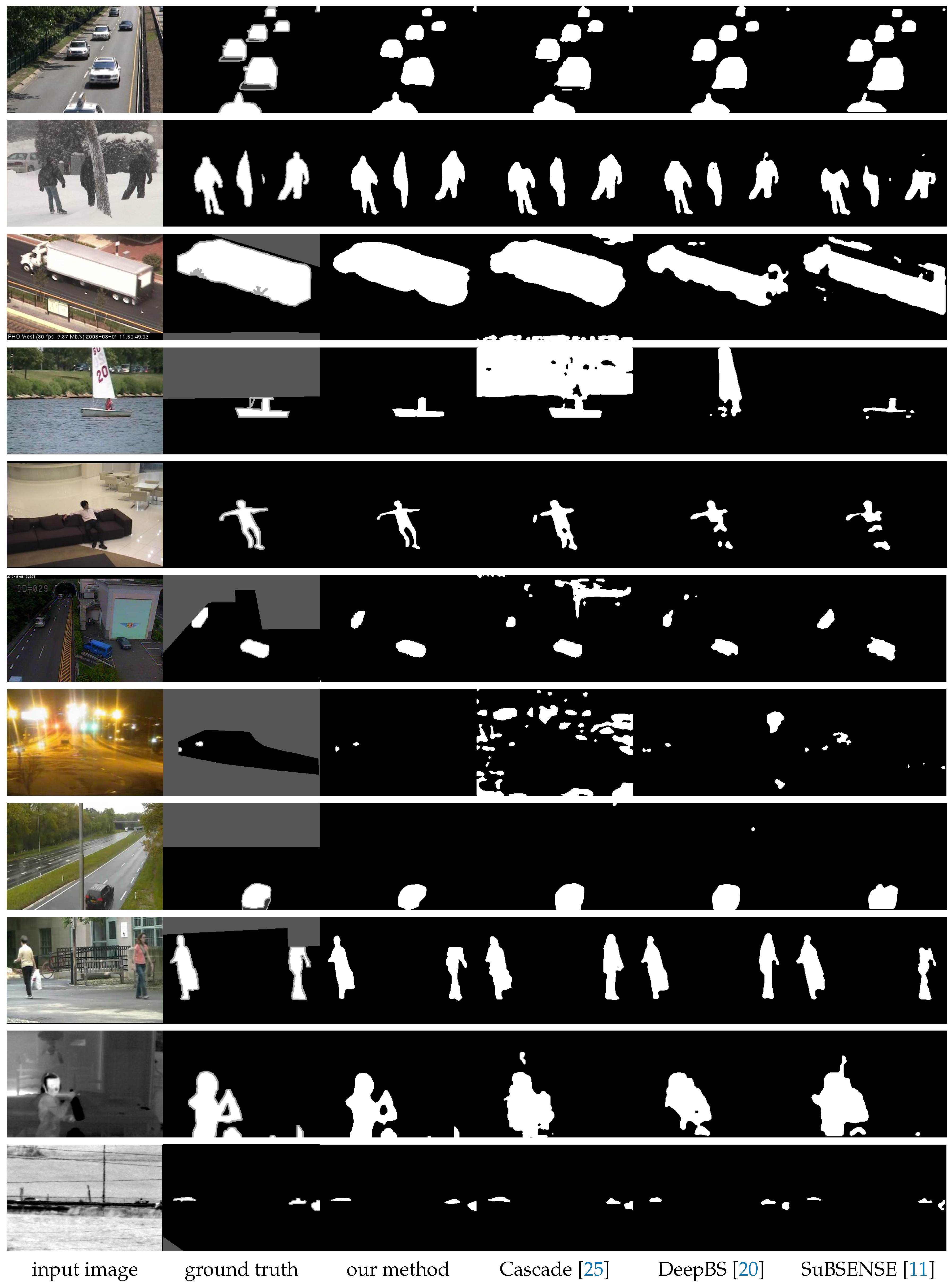

4.4. Results

4.4.1. Results on CDnet 2014 Dataset

4.4.2. Comparison with the State of the Arts

4.4.3. Comparing to the Single Scale FC3D

4.5. Running Time

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Sivaraman, S.; Trivedi, M.M. Looking at Vehicles on the Road: A Survey of Vision-Based Vehicle Detection, Tracking, and Behavior Analysis. IEEE Trans. Intell. Transp. Syst. 2013, 14, 1773–1795. [Google Scholar] [CrossRef]

- Unzueta, L.; Nieto, M.; Cortes, A.; Barandiaran, J.; Otaegui, O.; Sanchez, P. Adaptive Multicue Background Subtraction for Robust Vehicle Counting and Classification. IEEE Trans. Intell. Transp. Syst. 2012, 13, 527–540. [Google Scholar] [CrossRef]

- Bouwmans, T. Traditional and recent approaches in background modeling for foreground detection: An overview. Comput. Sci. Rev. 2014, 11–12, 31–66. [Google Scholar] [CrossRef]

- Sobral, A.; Vacavant, A. A comprehensive review of background subtraction algorithms evaluated with synthetic and real videos. Comput. Vis. Image Underst. 2014, 122, 4–21. [Google Scholar] [CrossRef]

- Maddalena, L.; Petrosino, A. Background Subtraction for Moving Object Detection in RGBD Data: A Survey. J. Imaging 2018, 4, 71. [Google Scholar] [CrossRef]

- Stauffer, C.; Grimson, W.E.L. Adaptive background mixture models for real-time tracking. In Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, Fort Collins, CO, USA, 23–25 June 1999. [Google Scholar]

- Elgammal, A.; Harwood, D.; Davis, L. Non-Parametric Model for Background Subtraction; Vernon, D., Ed.; ECCV 2000; Springer: Berlin/Heidelberg, Germany, 2000; Volume 1843, pp. 751–767. [Google Scholar]

- Liao, S.; Zhao, G.; Kellokumpu, V.; Pietikainen, M.; Li, S.Z. Modeling pixel process with scale invariant local patterns for background subtraction in complex scenes. In Proceedings of the 2010 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), San Francisco, CA, USA, 13–18 June 2010; pp. 1301–1306. [Google Scholar]

- Yoshinaga, S.; Shimada, A.; Nagahara, H.; Taniguchi, R.I. Object detection based on spatiotemporal background models. Comput. Vis. Image Underst. 2014, 122, 84–91. [Google Scholar] [CrossRef]

- Moshe, Y.; Hel-Or, H.; Hel-Or, Y. Foreground detection using spatiotemporal projection kernels. In Proceedings of the Computer 2012 IEEE Conference on Vision and Pattern Recognition (CVPR), Providence, RI, USA, 16–21 June 2012; pp. 3210–3217. [Google Scholar]

- St-Charles, P.L.; Bilodeau, G.A.; Bergevin, R. SuBSENSE: A Universal Change Detection Method With Local Adaptive Sensitivity. IEEE Trans. Image Process. 2015, 24, 359–373. [Google Scholar] [CrossRef]

- Sheikh, Y.; Shah, M. Bayesian modeling of dynamic scenes for object detection. IEEE Trans. Pattern Anal. Mach. Intell. 2005, 27, 1778–1792. [Google Scholar] [CrossRef]

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778. [Google Scholar]

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going deeper with convolutions. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 1–9. [Google Scholar]

- Simonyan, K.; Zisserman, A. Very Deep Convolutional Networks for Large-Scale Image Recognition. In Proceedings of the International Conference on Learning Representations (ICLR), San Diego, CA, USA, 7–9 May 2015. [Google Scholar]

- Redmon, J.; Farhadi, A. YOLO9000: Better, Faster, Stronger. In Proceedings of the International Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2016; pp. 6517–6525. [Google Scholar]

- Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 1137–1149. [Google Scholar] [CrossRef]

- LeCun, Y.; Bottou, L.; Bengio, Y.; Haffner, P. Gradient-based learning applied to document recognition. Proc. IEEE 1998, 86, 2278–2324. [Google Scholar] [CrossRef]

- Zeng, D. Multiscale Fully Convolutional Network for Foreground Object Detection in Infrared Videos. IEEE Geosci. Sens. Lett. 2018, 15, 617–621. [Google Scholar] [CrossRef]

- Babaee, M.; Tung, D.; Rigoll, G. A deep convolutional neural network for video sequence background subtraction. Pattern Recognit. 2018, 76, 635–649. [Google Scholar] [CrossRef]

- Yang, L.; Li, J.; Luo, Y.; Zhao, Y.; Cheng, H.; Li, J. Deep Background Modeling Using Fully Convolutional Network. IEEE Trans. Intell. Transp. Syst. 2018, 19, 254–262. [Google Scholar] [CrossRef]

- Cinelli, L.P.; Thomaz, L.A.; Silva, A.F.; Silva, E.A.B.; Netto, S.L. Foreground Segmentation for Anomaly Detection in Surveillance Videos Using Deep Residual Networks. In Proceedings of the XXXV Simpósio Brasileiro De Telecomunicações E Processamento De Sinais, Sao Pedro, Brazil, 3–6 September 2017. [Google Scholar]

- Zhao, X.; Chen, Y.; Tang, M.; Wang, J. Joint background reconstruction and foreground segmentation via a two-stage convolutional neural network. In Proceedings of the IEEE International Conference on Multimedia and Expo, Hong Kong, China, 10–14 July 2017; pp. 343–348. [Google Scholar] [CrossRef]

- Chen, Y.; Wang, J.; Zhu, B.; Tang, M.; Lu, H.; Member, S. Pixel-wise Deep Sequence Learning for Moving Object Detection. IEEE Trans. Circuits Syst. Video Technol. 2017. [Google Scholar] [CrossRef]

- Wang, Y.; Luo, Z.; Jodoin, P.M. Interactive deep learning method for segmenting moving objects. Pattern Recognit. Lett. 2017, 96, 66–75. [Google Scholar] [CrossRef]

- Braham, M.; Droogenbroeck, M.V. Deep Background Subtraction with Scene-Specific Convolutional Neural Networks. In Proceedings of the 23rd International Conference on System, Signals and Image Processing, Bratislava, Slovakia, 23–25 May 2016. [Google Scholar]

- Tran, D.; Bourdev, L.; Fergus, R.; Torresani, L.; Paluri, M. Learning Spatiotemporal Features with 3D Convolutional Networks. In Proceedings of the 2015 IEEE International Conference on Computer Vision (ICCV), Santiago, Chile, 7–13 December 2015; pp. 4489–4497. [Google Scholar]

- Barnich, O.; Van Droogenbroeck, M. ViBe: A Universal Background Subtraction Algorithm for Video Sequences. IEEE Trans. Image Process. 2011, 20, 1709–1724. [Google Scholar] [CrossRef] [PubMed]

- Spampinato, C.; Palazzo, S.; Kavasidis, I. A texton-based kernel density estimation approach for background modeling under extreme conditions. Comput. Vis. Image Underst. 2014, 122, 74–83. [Google Scholar] [CrossRef]

- He, D.; Wang, L. Texture Unit, Texture Spectrum, And Texture Analysis. IEEE Trans. Geosci. Remote Sens. 1990, 28, 509–512. [Google Scholar]

- Oliver, N.M.; Rosario, B.; Pentland, A.P. A Bayesian computer vision system for modeling human interactions. IEEE Trans. Pattern Anal. Mach. Intell. 2000, 22, 831–843. [Google Scholar] [CrossRef]

- Monnet, A.; Mittal, A.; Paragios, N.; Ramesh, V. Background modeling and subtraction of dynamic scenes. In Proceedings of the Ninth IEEE International Conference on Computer Vision, Nice, France, 13–16 October 2003; Volume 2, pp. 1305–1312. [Google Scholar]

- Maddalena, L.; Petrosino, A. A Self-Organizing Approach to Background Subtraction for Visual Surveillance Applications. IEEE Trans. Image Process. 2008, 17, 1168–1177. [Google Scholar] [CrossRef]

- Maddalena, L.; Petrosino, A. The SOBS algorithm: What are the limits? In Proceedings of the 2012 IEEE Computer Society Conference on Computer Vision and Pattern Recognition Workshops, Providence, RI, USA, 16–21 June 2012; pp. 21–26. [Google Scholar]

- Ramirez-Quintana, J.A.; Chacon-Murguia, M.I. Self-adaptive SOM-CNN neural system for dynamic object detection in normal and complex scenarios. Pattern Recognit. 2015, 48, 1137–1149. [Google Scholar] [CrossRef]

- Maddalena, L.; Petrosino, A. Self-organizing background subtraction using color and depth data. Multimed. Tools Appl. 2018. [Google Scholar] [CrossRef]

- Chacon, M.; Ramirez, G.; Gonzalez-Duarte, S. Improvement of a neural-fuzzy motion detection vision model for complex scenario conditions. In Proceedings of the International Joint Conference on Neural Networks, Dallas, TX, USA, 4–9 August 2013; pp. 1–8. [Google Scholar]

- Culibrk, D.; Marques, O.; Socek, D.; Kalva, H.; Furht, B. Neural Network Approach to Background Modeling for Video Object Segmentation. IEEE Trans. Neural Netw. 2007, 18, 1614–1627. [Google Scholar] [CrossRef] [PubMed]

- Zeng, D.; Zhu, M.; Kuijper, A. Combining Background Subtraction Algorithms with Convolutional Neural Network. arXiv, 2018; arXiv:cs.CV/1807.02080. [Google Scholar]

- Sultana, M.; Mahmood, A.; Javed, S.; Jung, S.K. Unsupervised Deep Context Prediction for Background Foreground Separation. arXiv, 2018; arXiv:cs.CV/1805.07903. [Google Scholar]

- Bakkay, M.C.; Rashwan, H.A.; Salmane, H.; Khoudour, L.; Puigtt, D.; Ruichek, Y. BSCGAN: Deep Background Subtraction with Conditional Generative Adversarial Networks. In Proceedings of the 2018 25th IEEE International Conference on Image Processing (ICIP), Athens, Greece, 7–10 October 2018; pp. 4018–4022. [Google Scholar]

- Sakkos, D.; Liu, H.; Han, J.; Shao, L. End-to-end video background subtraction with 3d convolutional neural networks. Multimed. Tools Appl. 2018, 77, 23023–23041. [Google Scholar] [CrossRef]

- Hu, Z.; Turki, T.; Phan, N.; Wang, J.T.L. A 3D Atrous Convolutional Long Short-Term Memory Network for Background Subtraction. IEEE Access 2018, 6, 43450–43459. [Google Scholar] [CrossRef]

- Hochreiter, S.; Schmidhuber, J. Long Short-Term Memory. Neural Comput. 1997, 9, 1735–1780. [Google Scholar] [CrossRef] [PubMed]

- Chen, L.C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; Yuille, A.L. DeepLab: Semantic Image Segmentation with Deep Convolutional Nets, Atrous Convolution, and Fully Connected CRFs. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 40, 834–848. [Google Scholar] [CrossRef]

- Karpathy, A.; Toderici, G.; Shetty, S.; Leung, T.; Sukthankar, R.; Li, F.-F. Large-Scale Video Classification with Convolutional Neural Networks. In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23 June 2014; pp. 1725–1732. [Google Scholar]

- Kingma, D.P.; Ba, J.L. Adam: A Method for Stochastic Optimization. In Proceedings of the International Conference on Learning Representations (ICLR), San Diego, CA, USA, 7–9 May 2015. [Google Scholar]

- Wang, Y.; Jodoin, P.M.; Porikli, F.; Konrad, J.; Benezeth, Y.; Ishwar, P. CDnet 2014: An Expanded Change Detection Benchmark Dataset. In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition Workshops, Columbus, OH, USA, 23–28 June 2014; pp. 393–400. [Google Scholar]

- Abadi, M.; Agarwal, A.; Barham, P.; Brevdo, E.; Chen, Z.; Citro, C.; Corrado, G.S.; Davis, A.; Dean, J.; Devin, M.; et al. TensorFlow: Large-Scale Machine Learning on Heterogeneous Distributed Systems; Technical Report; Google Research: Mountain View, CA, USA, 2015. [Google Scholar]

- St-Charles, P.L.; Bilodeau, G.A.; Bergevin, R. A Self–Adjusting Approach to Change Detection Based on Background Word Consensus. In Proceedings of the 2015 IEEE Winter Conference on Applications of Computer Vision, Waikoloa, HI, USA, 5–9 January 2015; pp. 990–997. [Google Scholar]

| Methods | BL | CJ | BW | DB | IOM | LF | NV | PTZ | SH | TH | TU | Average |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| DMFC3D (Ours) | 0.9950 | 0.9744 | 0.9703 | 0.9780 | 0.8835 | 0.9233 | 0.9696 | 0.9287 | 0.9893 | 0.9924 | 0.9773 | 0.9620 |

| Cascade [25] | 0.9786 | 0.9758 | 0.9451 | 0.9658 | 0.8505 | 0.8804 | 0.8926 | 0.9344 | 0.9593 | 0.8958 | 0.9215 | 0.9273 |

| DeepBS [20] | 0.9580 | 0.8990 | 0.8647 | 0.8761 | 0.6097 | 0.5900 | 0.6359 | 0.3306 | 0.9304 | 0.7583 | 0.8993 | 0.7593 |

| SuBSENSE [11] | 0.9503 | 0.8152 | 0.8594 | 0.8177 | 0.6569 | 0.6594 | 0.4918 | 0.3894 | 0.8986 | 0.8171 | 0.8423 | 0.7453 |

| PAWCS [50] | 0.9397 | 0.8137 | 0.8059 | 0.8938 | 0.7764 | 0.6433 | 0.4171 | 0.4450 | 0.8934 | 0.8324 | 0.7667 | 0.7479 |

| Methods | BL | CJ | BW | DB | IOM | LF | NV | PTZ | SH | TH | TU | Average |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| DMFC3D (Ours) | 0.0608 | 0.2253 | 0.0711 | 0.0613 | 1.1664 | 0.1201 | 0.2178 | 0.2031 | 0.0446 | 0.1301 | 0.0454 | 0.2133 |

| Cascade [25] | 0.1405 | 0.2105 | 0.1910 | 0.0522 | 1.5416 | 0.1317 | 0.6116 | 0.1221 | 0.3500 | 1.0478 | 0.0584 | 0.4052 |

| DeepBS [20] | 0.2424 | 0.8994 | 0.3784 | 0.2067 | 4.1292 | 1.3564 | 2.5754 | 7.7228 | 0.7403 | 3.5773 | 0.0838 | 1.9920 |

| SuBSENSE [11] | 0.3574 | 1.6469 | 0.4527 | 0.4042 | 3.8349 | 0.9968 | 3.7717 | 3.8160 | 1.0120 | 2.0125 | 0.1527 | 1.6780 |

| PAWCS [50] | 0.4491 | 1.4220 | 0.5319 | 0.1917 | 2.3536 | 0.7258 | 3.3386 | 1.1162 | 1.0230 | 1.4018 | 0.6378 | 1.1993 |

| Category | DMFC3D | FC3D |

|---|---|---|

| baseline | 0.9950 | 0.9941 |

| cameraJitter | 0.9744 | 0.9651 |

| badWeather | 0.9703 | 0.9699 |

| dynamicBackground | 0.9780 | 0.9775 |

| intermittentObjectMotion | 0.8835 | 0.8779 |

| lowFramerate | 0.9233 | 0.8575 |

| nightVideo | 0.9696 | 0.9595 |

| PTZ | 0.9287 | 0.9240 |

| shadow | 0.9893 | 0.9881 |

| thermal | 0.9924 | 0.9902 |

| turbulence | 0.9773 | 0.9729 |

| Average | 0.9620 | 0.9524 |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Wang, Y.; Yu, Z.; Zhu, L. Foreground Detection with Deeply Learned Multi-Scale Spatial-Temporal Features. Sensors 2018, 18, 4269. https://doi.org/10.3390/s18124269

Wang Y, Yu Z, Zhu L. Foreground Detection with Deeply Learned Multi-Scale Spatial-Temporal Features. Sensors. 2018; 18(12):4269. https://doi.org/10.3390/s18124269

Chicago/Turabian StyleWang, Yao, Zujun Yu, and Liqiang Zhu. 2018. "Foreground Detection with Deeply Learned Multi-Scale Spatial-Temporal Features" Sensors 18, no. 12: 4269. https://doi.org/10.3390/s18124269

APA StyleWang, Y., Yu, Z., & Zhu, L. (2018). Foreground Detection with Deeply Learned Multi-Scale Spatial-Temporal Features. Sensors, 18(12), 4269. https://doi.org/10.3390/s18124269