Airborne Infrared and Visible Image Fusion Combined with Region Segmentation

Abstract

1. Introduction

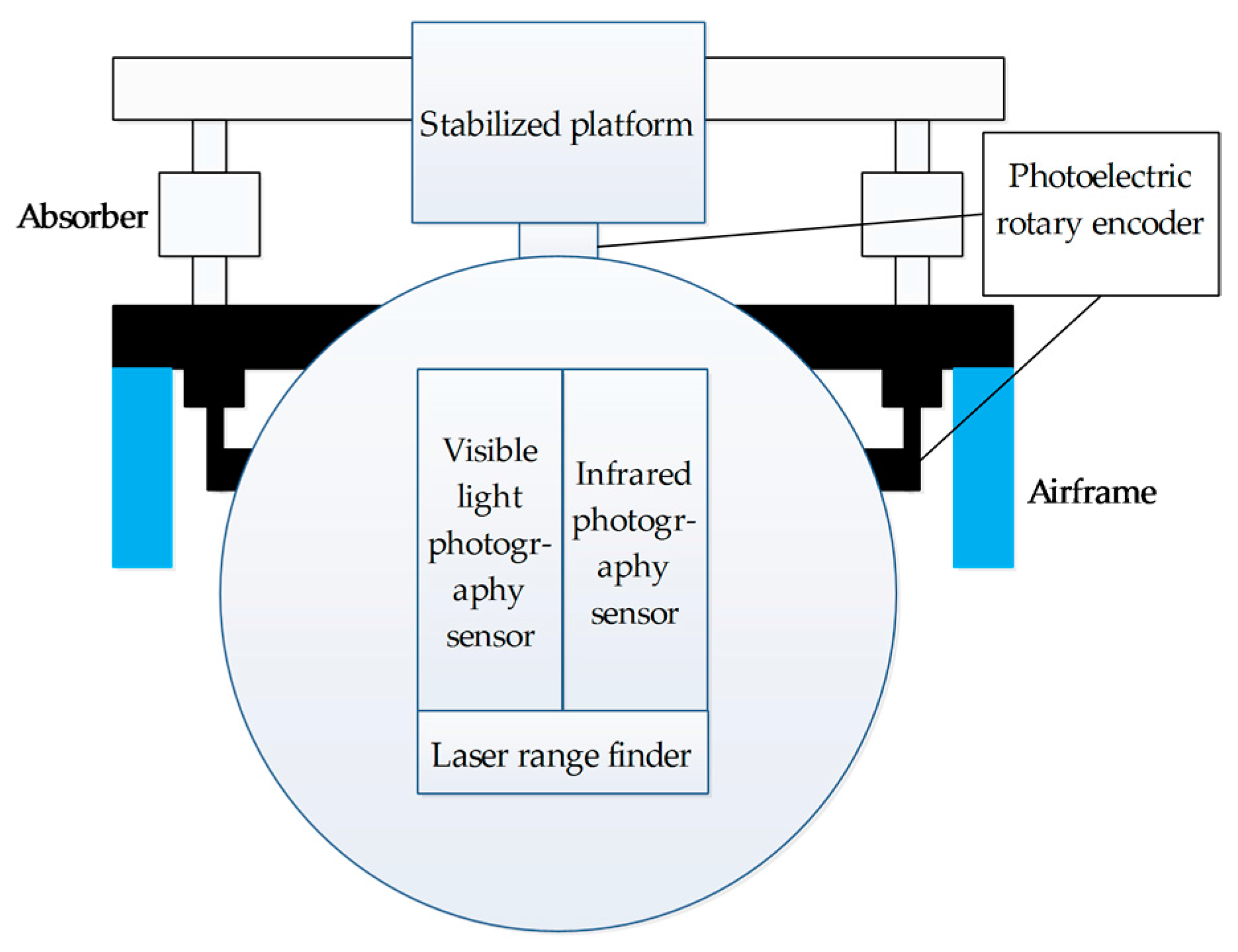

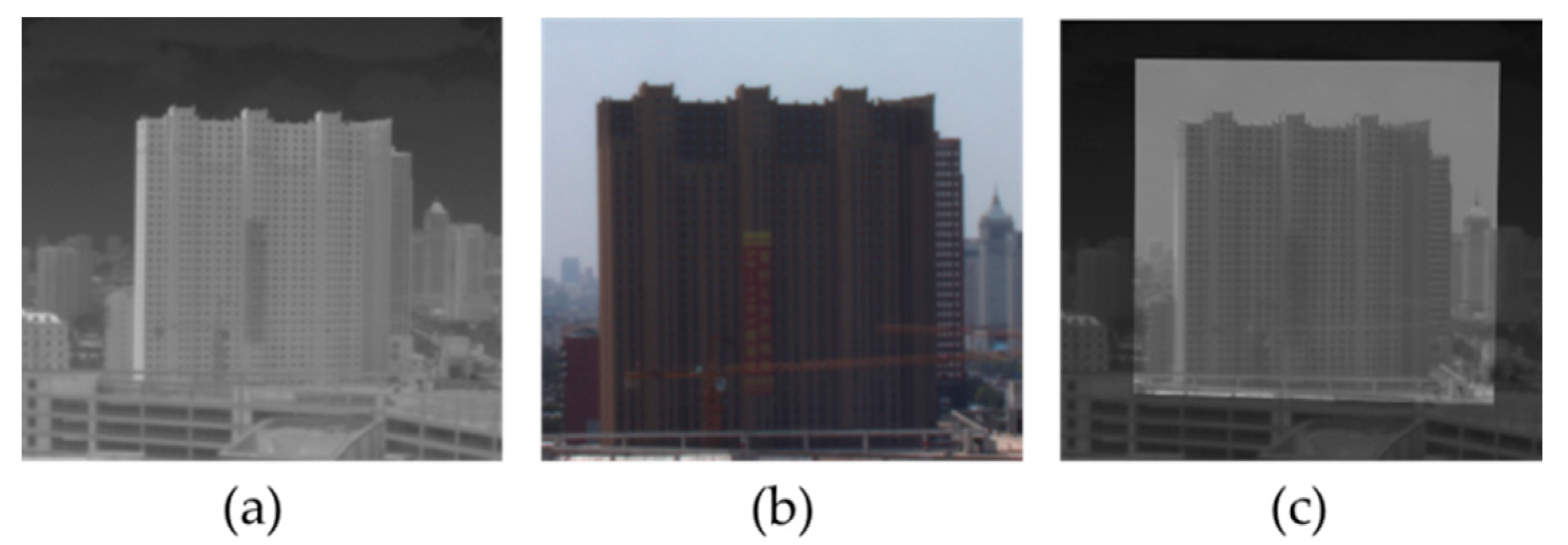

2. Fusion System on the Airborne Photoelectric Platform

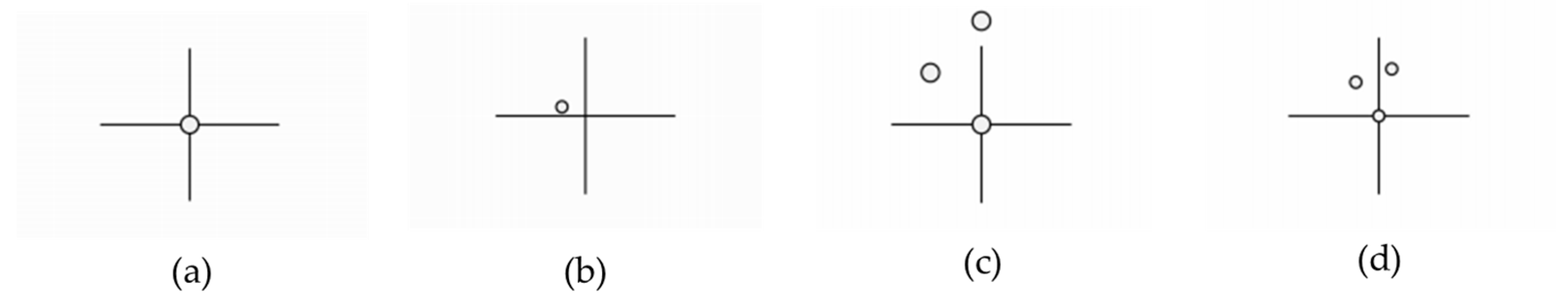

3. Fast Registration of IR and Visible Images

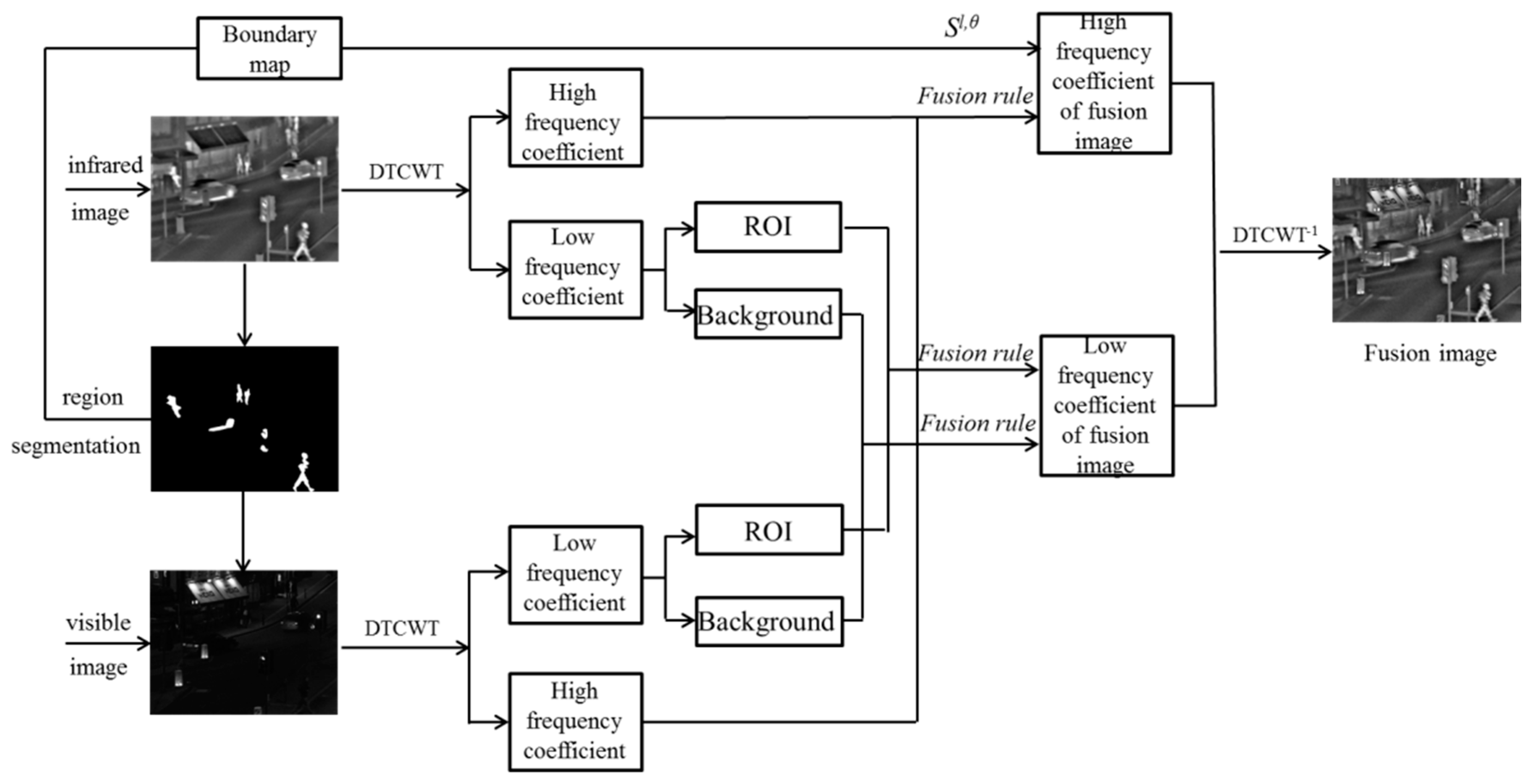

4. IR-Visible Image Fusion Method Combined with Region Segmentation

4.1. Image Region Segmentation Based on ROI

4.2. Low-Frequency Fusion Rule

4.3. High-Frequency Fusion Rule

5. Analysis of Experimental Results

5.1. Evaluation Indicators
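
The result tables report SD, IE, Q^{AB/F}, AG, and running time for each method. As a rough illustration of the three single-image indicators, the sketch below assumes that SD, IE, and AG denote the standard deviation, information entropy, and average gradient of an 8-bit grayscale fused image, following their common textbook definitions. The function names are hypothetical, and the normalization of AG varies between papers, so the values it produces are not expected to match the tables exactly. Q^{AB/F} is omitted because it also needs both source images and an edge-strength model.

```python
import numpy as np


def standard_deviation(img):
    """SD: spread of gray levels around the image mean."""
    img = np.asarray(img, dtype=np.float64)
    return float(np.sqrt(np.mean((img - img.mean()) ** 2)))


def information_entropy(img, levels=256):
    """IE: Shannon entropy, in bits, of the gray-level histogram.

    Assumes non-negative integer gray levels (e.g. uint8).
    """
    values = np.asarray(img).astype(np.int64).ravel()
    hist = np.bincount(values, minlength=levels)
    p = hist / hist.sum()
    p = p[p > 0]  # empty bins contribute nothing to the entropy
    return float(-np.sum(p * np.log2(p)))


def average_gradient(img):
    """AG: mean of sqrt((dx^2 + dy^2) / 2) using forward differences.

    This is one common normalization (an assumption, not taken from the paper).
    """
    img = np.asarray(img, dtype=np.float64)
    dx = img[:-1, 1:] - img[:-1, :-1]  # horizontal difference
    dy = img[1:, :-1] - img[:-1, :-1]  # vertical difference
    return float(np.mean(np.sqrt((dx ** 2 + dy ** 2) / 2.0)))


if __name__ == "__main__":
    # Synthetic stand-in for a fused image; replace with real data.
    rng = np.random.default_rng(0)
    fused = rng.integers(0, 256, size=(64, 64), dtype=np.uint8)
    print("SD:", standard_deviation(fused))
    print("IE:", information_entropy(fused))
    print("AG:", average_gradient(fused))
```

Under these definitions a larger SD indicates higher contrast, a larger IE indicates more gray-level information, and a larger AG indicates sharper detail, which is the sense in which the columns of the result tables are usually read.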

5.2. Experiment of Image Fusion Contrast

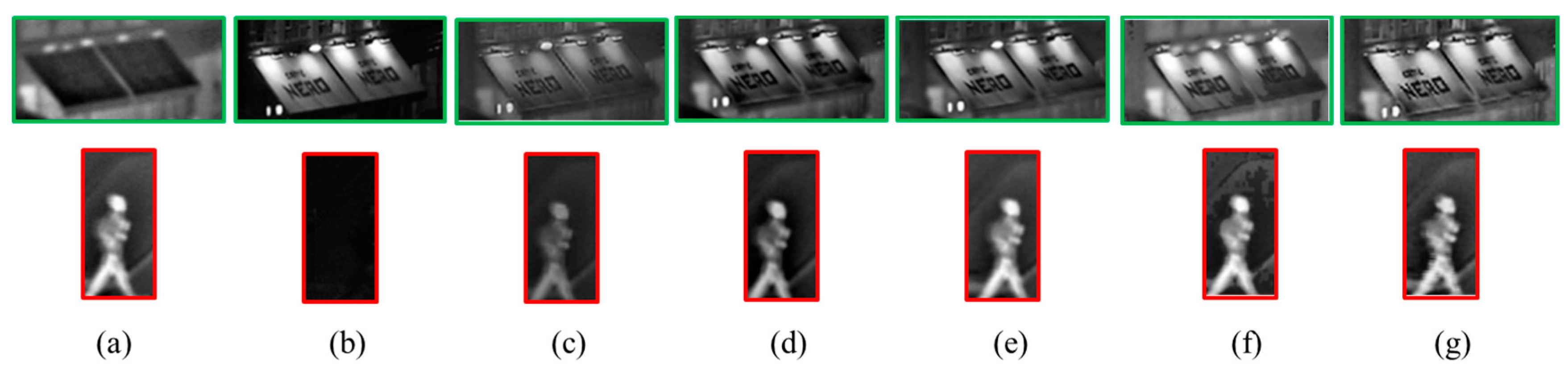

5.2.1. Experiment 1: Quad Image Fusion Comparison

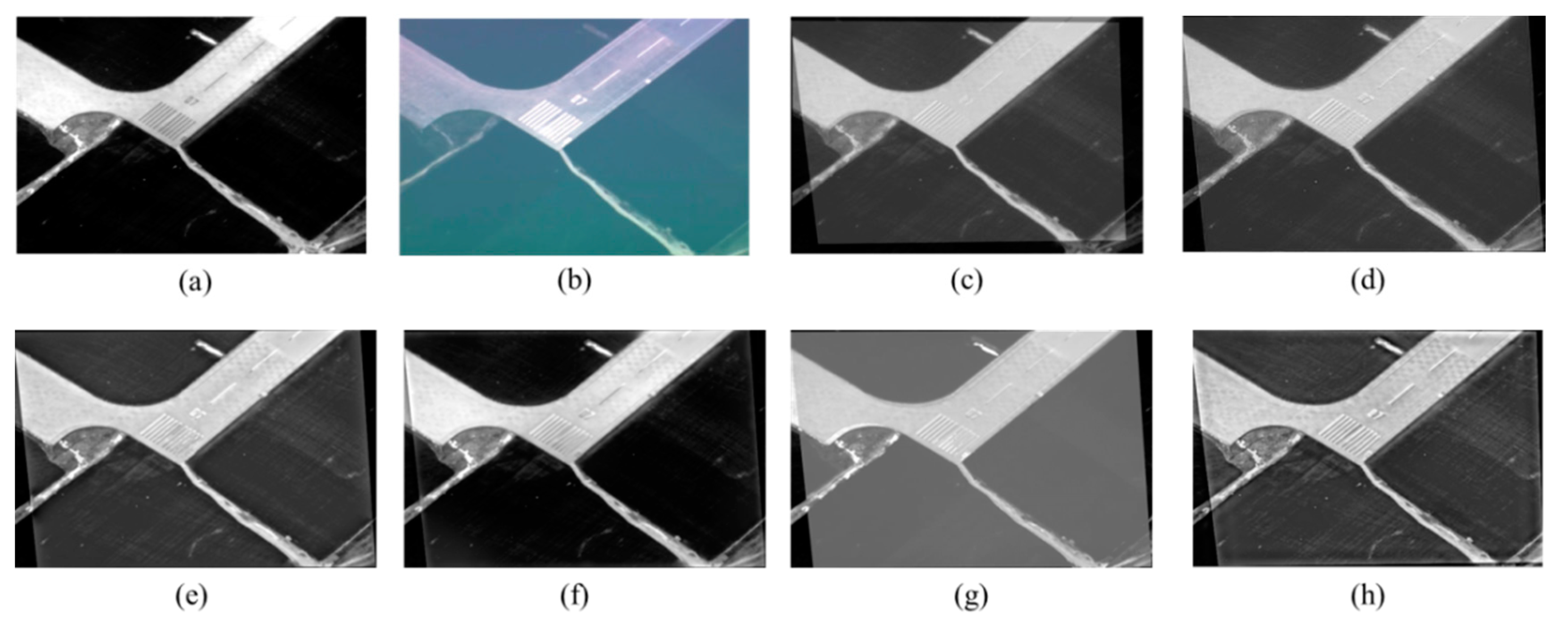

5.2.2. Experiment 2: Aviation IR-Visible Image Fusion Comparison

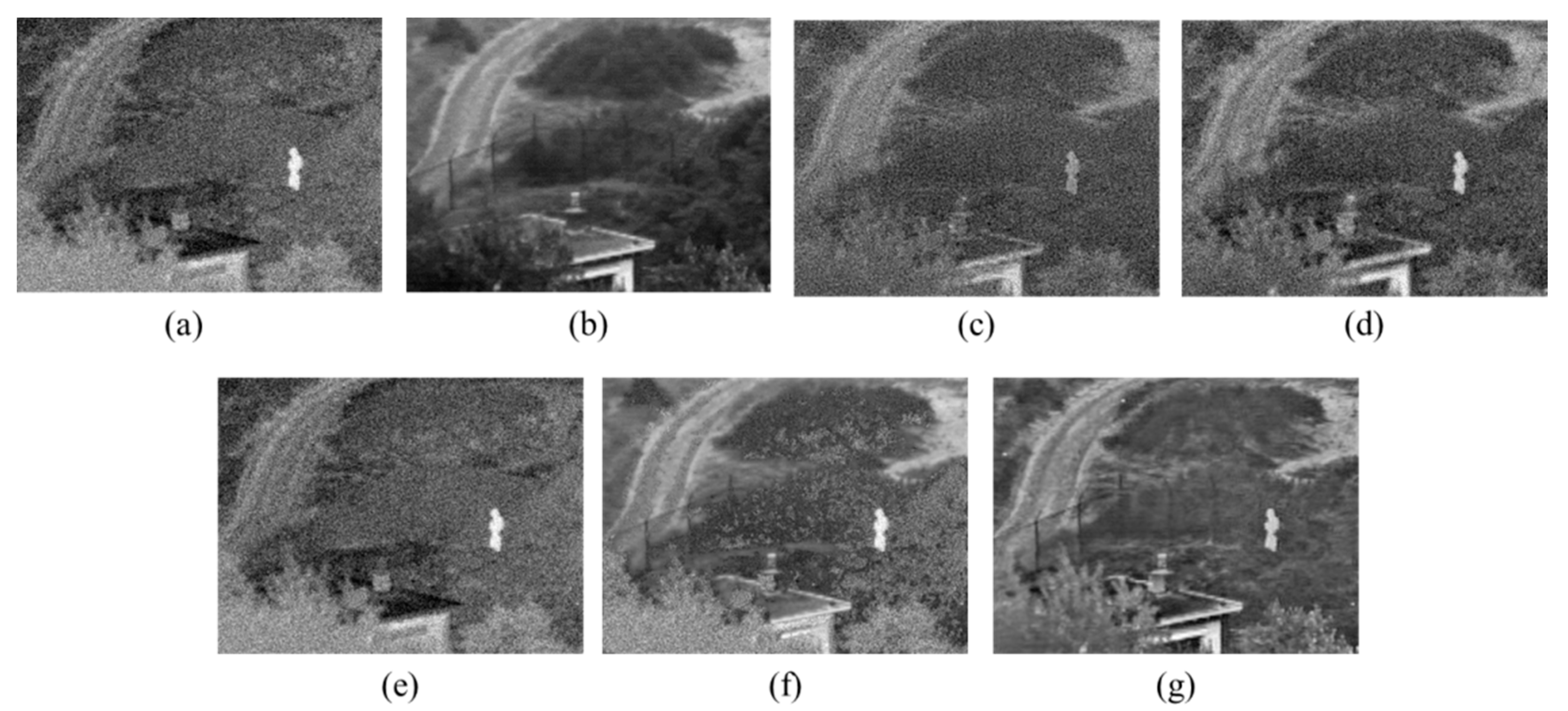

5.2.3. Experiment 3: Anti-Noise Performance Experiment

6. Conclusions

Author Contributions

Conflicts of Interest

References

1. Huang, X.; Netravali, R.; Man, H.; Lawrence, V. Multi-sensor fusion of infrared and electro-optic signals for high resolution night images. Sensors 2012, 12, 10326–10338.

2. Ma, Y.; Wu, X.; Yu, G.; Xu, Y.; Wang, Y. Pedestrian detection and tracking from low-resolution unmanned aerial vehicle thermal imagery. Sensors 2016, 16, 446.

3. Jin, B.; Kim, G.; Cho, N.I. Wavelet-domain satellite image fusion based on a generalized fusion equation. J. Appl. Remote Sens. 2014, 8, 080599.

4. Smeelen, M.A.; Schwering, P.B.W.; Toet, A.; Loog, M. Semi-hidden target recognition in gated viewer images fused with thermal IR images. Inf. Fusion 2014, 18, 131–147.

5. Zitova, B.; Flusser, J. Image registration methods: A survey. Image Vis. Comput. 2003, 21, 977–1000.

6. Wu, F.; Wang, B.; Yi, X.; Li, M.; Hao, H.; Zhou, H. Visible and infrared image registration based on visual salient features. J. Electron. Imaging 2015, 24, 053017.

7. Guo, J.; Yang, F.; Tan, H.; Wang, J.; Liu, Z. Image matching using structural similarity and geometric constraint approaches on remote sensing images. J. Appl. Remote Sens. 2016, 10, 045007.

8. Chen, Y. Multimodal Image Fusion and Its Applications. Ph.D. Thesis, The University of Michigan, Ann Arbor, MI, USA, 2016.

9. Zhen, J. Image Registration and Image Completion: Similarity and Estimation Error Optimization. Ph.D. Thesis, University of Cincinnati, Cincinnati, OH, USA, 2014.

10. Xu, H.; Wang, Y.; Wu, Y.; Qian, Y. Infrared and multi-type images fusion algorithm based on contrast pyramid transform. Infrared Phys. Technol. 2016, 17, 133–146.

11. Mukane, S.M.; Ghodake, Y.S.; Khandagle, P.S. Image enhancement using fusion by wavelet transform and Laplacian pyramid. Int. J. Comput. Sci. Issues 2013, 10, 122.

12. Zehua, Z.; Min, T. Infrared image and visible image fusion based on wavelet transform. Adv. Mater. Res. 2014, 756–759, 2850–2856.

13. Kingsbury, N.G. The Dual-tree Complex Wavelet Transform: A New Technique for Shift Invariance and Directional Filters. In Proceedings of the 8th IEEE Digital Signal Processing Workshop, Bryce Canyon, UT, USA, 9–12 August 1998; pp. 120–131.

14. Selesnick, I.W.; Baraniuk, R.G.; Kingsbury, N.G. The dual-tree complex wavelet transform. IEEE Signal Process. Mag. 2005, 22, 123–151.

15. Do, M.N.; Vetterli, M. The contourlet transform: An efficient directional multiresolution image representation. IEEE Trans. Image Process. 2005, 14, 2091–2106.

16. Yang, L.; Guo, B.L.; Ni, W. Multimodality medical image fusion based on multiscale geometric analysis of contourlet transform. Neurocomputing 2008, 72, 203–211.

17. Starck, J.L.; Candes, E.J.; Donoho, D.L. The curvelet transform for image denoising. IEEE Trans. Image Process. 2002, 11, 670–684.

18. Bhadauria, H.S.; Dewal, M.L. Medical image denoising using adaptive fusion of curvelet transform and total variation. Comput. Electr. Eng. 2013, 39, 1451–1460.

19. Cunha, A.L.D.; Zhou, J.P.; Do, M.N. The nonsubsampled contourlet transform: Theory, design, and applications. IEEE Trans. Image Process. 2006, 15, 3089–3101.

20. Xiang, T.; Yan, L.; Gao, R. A fusion algorithm for infrared and visible images based on adaptive dual-channel unit-linking PCNN in NSCT domain. Infrared Phys. Technol. 2015, 69, 53–61.

21. Yang, Y.; Tong, S.; Huang, S.Y.; Lin, P. Multifocus image fusion based on NSCT and focused area detection. IEEE Sens. J. 2015, 15, 2824–2838.

22. Li, H.; Liu, L.; Huang, W.; Yue, C. An improved fusion algorithm for infrared and visible images based on multi-scale transform. Infrared Phys. Technol. 2016, 74, 28–37.

23. Shuo, L.; Yan, P.; Muhanmmad, T. Research on fusion technology based on low-light visible image and infrared image. Opt. Eng. 2016, 55, 123104.

24. Li, S.; Kang, S.; Hu, J. Image fusion with guided filtering. IEEE Trans. Image Process. 2013, 22, 2864–2874.

25. Montoya, M.D.G.; Gill, C.; Garcia, I. The load unbalancing problem for region growing image segmentation algorithm. J. Parallel Distrib. Comput. 2003, 63, 387–395.

26. Dawoud, A.; Netchaev, A. Preserving objects in Markov random fields region growing image segmentation. Pattern Anal. Appl. 2012, 15, 155–161.

27. Chitade, A.Z.; Katiyar, D.S.K. Colour based image segmentation using K-means clustering. Int. J. Eng. Sci. Technol. 2010, 2, 5319–5325.

28. Sulaiman, S.N.; Isa, N.A.M. Adaptive fuzzy-K-means clustering algorithm for image segmentation. IEEE Trans. Consum. Electron. 2010, 56, 2661–2668.

29. Cheng, M.-M.; Zhang, G.; Mitra, N.J.; Huang, X.; Hu, S.-M. Global contrast based salient region detection. In Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, Colorado Springs, CO, USA, 20–25 June 2011.

30. Rother, C.; Kolmogorov, V.; Blake, A. “GrabCut”: Interactive foreground extraction using iterated graph cuts. ACM Trans. Graph. 2004, 23, 309–314.

31. Loza, A.; Bull, D.; Canagarajah, N.; Achim, A. Non-Gaussian model based fusion of noisy images in the wavelet domain. Comput. Vis. Image Underst. 2010, 114, 54–65.

| Image | Quad | Airport | Noisy UNCamp |
|---|---|---|---|
| Resolution (pixels) | 640 × 496 | 640 × 436 | 360 × 270 |
| Time (s) | 0.036 | 0.029 | 0.019 |

| Method | SD | IE | Q^{AB/F} | AG | Time (s) |
|---|---|---|---|---|---|
| DWT [12] | 21.4123 | 5.8733 | 0.2108 | 2.6492 | 1.943 |
| PCA-MST [22] | 31.2098 | 6.3987 | 0.2974 | 3.7382 | 1.019 |
| GFF [24] | 32.0211 | 6.4520 | 0.2752 | 3.2129 | 1.373 |
| IHS-PCNN [23] | 39.5745 | 6.6929 | 0.3269 | 3.6875 | 4.693 |
| Proposed | 35.0284 | 6.8894 | 0.5645 | 5.3487 | 0.977 |

| Method | SD | IE | Q^{AB/F} | AG | Time (s) |
|---|---|---|---|---|---|
| DWT [12] | 40.3748 | 5.1037 | 0.2964 | 3.0552 | 1.526 |
| PCA-MST [22] | 48.6327 | 6.3254 | 0.3978 | 3.7553 | 0.847 |
| GFF [24] | 49.2637 | 7.0035 | 0.6755 | 3.3247 | 0.916 |
| IHS-PCNN [23] | 45.9653 | 6.1276 | 0.4458 | 3.7514 | 3.932 |
| Proposed | 53.3248 | 6.9054 | 0.6326 | 6.3072 | 0.852 |

| Method | SD | IE | Q^{AB/F} | AG | Time (s) |
|---|---|---|---|---|---|
| DWT [12] | 33.6973 | 7.0965 | 0.3575 | 16.4053 | 0.960 |
| PCA-MST [22] | 37.3488 | 7.2314 | 0.3204 | 16.6699 | 0.499 |
| GFF [24] | 37.9777 | 7.2664 | 0.3870 | 16.6331 | 0.693 |
| IHS-PCNN [23] | 37.2536 | 7.1973 | 0.4544 | 11.2374 | 3.137 |
| Proposed | 39.3742 | 7.2013 | 0.6447 | 15.2238 | 0.547 |

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Zuo, Y.; Liu, J.; Bai, G.; Wang, X.; Sun, M. Airborne Infrared and Visible Image Fusion Combined with Region Segmentation. Sensors 2017, 17, 1127. https://doi.org/10.3390/s17051127
