An Adaptive Multi-Sensor Data Fusion Method Based on Deep Convolutional Neural Networks for Fault Diagnosis of Planetary Gearbox

Abstract

1. Introduction

2. Deep Convolutional Neural Networks

2.1. Architecture of Deep Convolutional Neural Networks

2.2. Training Method

3. Adaptive Multi-Sensor Data Fusion Method Based on DCNN for Fault Diagnosis

3.1. Procedure of the Proposed Method

3.2. Model Design of DCNN

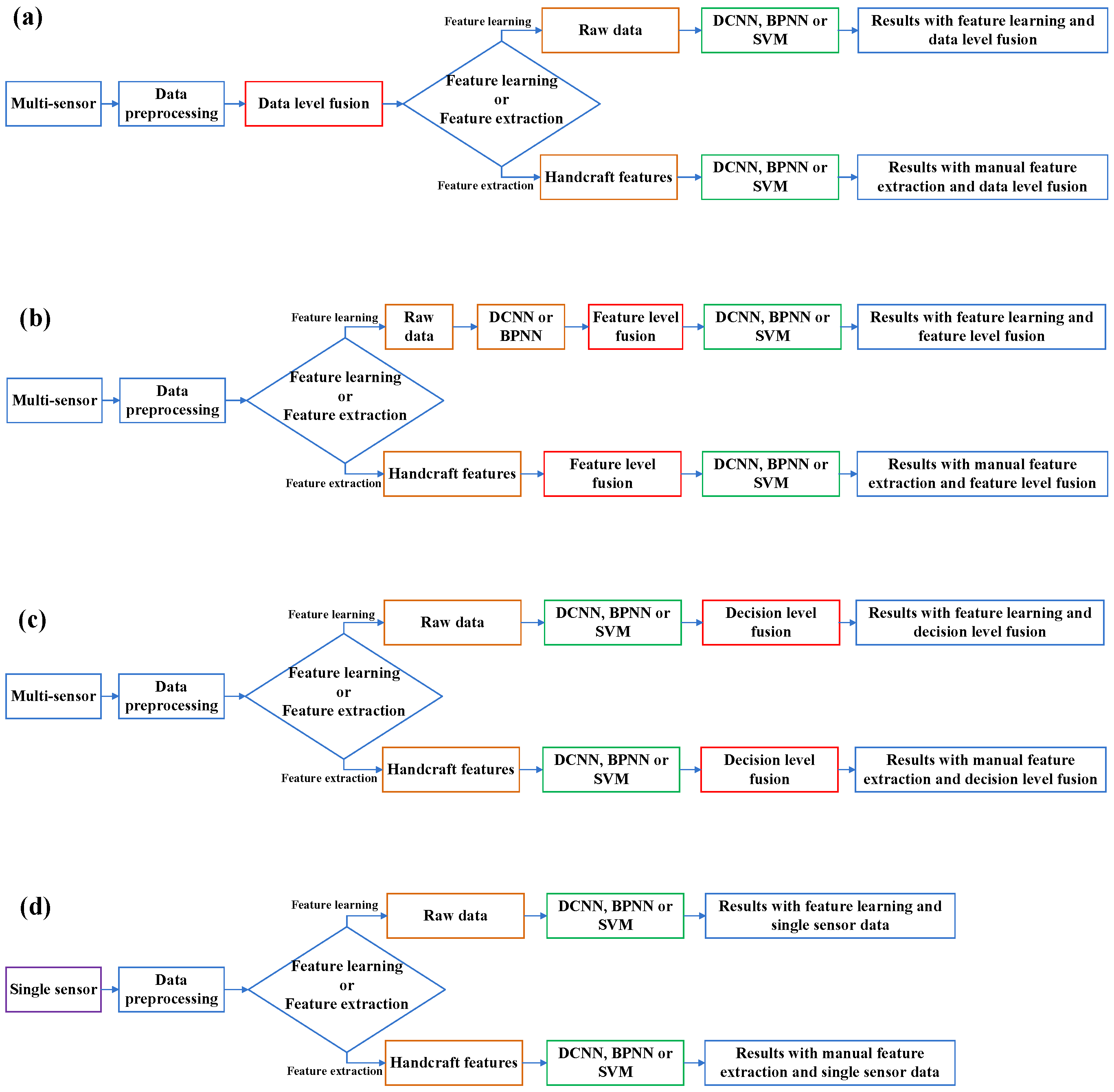

3.3. Comparative Methods

4. Experiment and Discussion

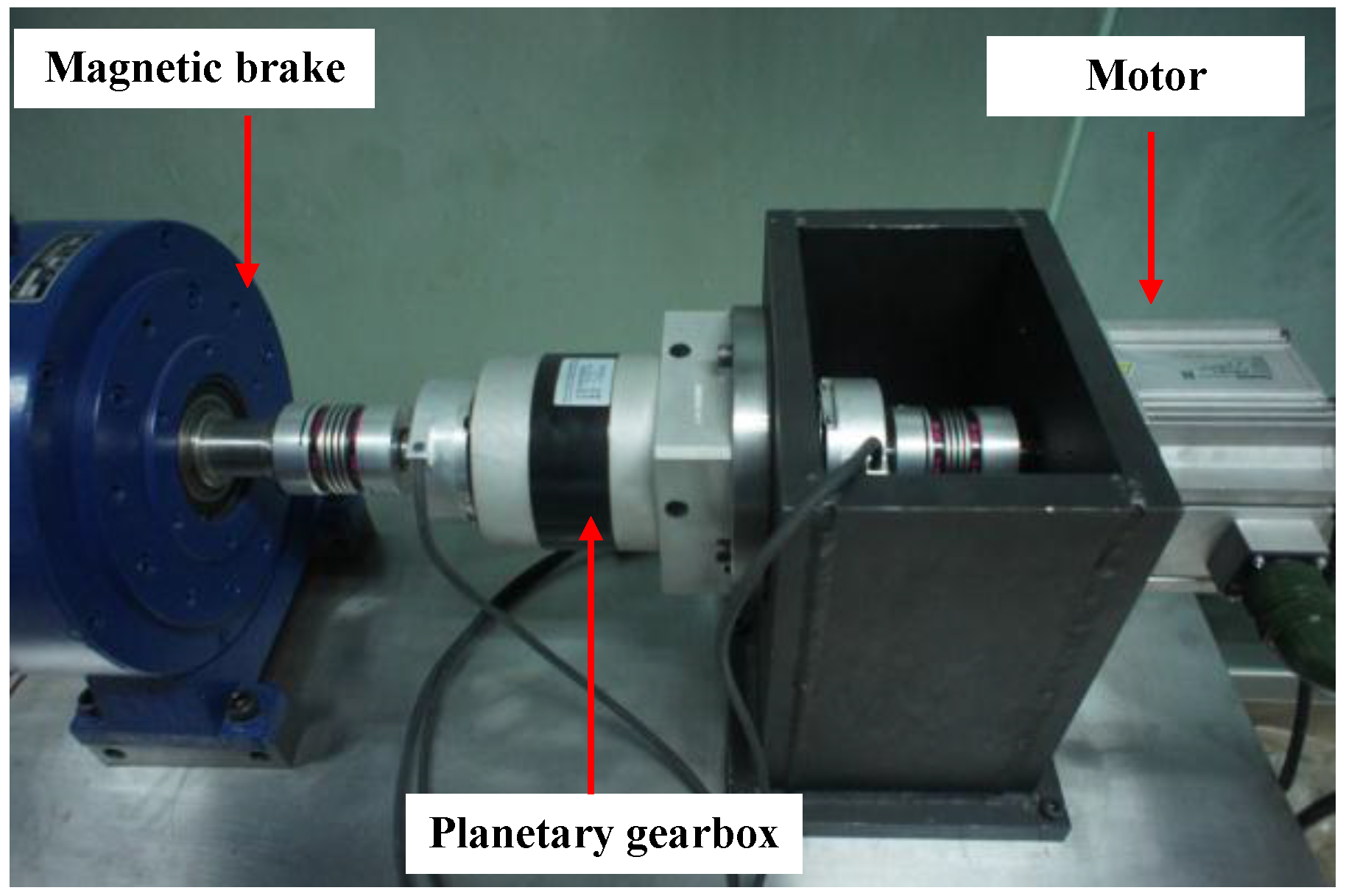

4.1. Experiment Setup

4.2. Data Processing

4.3. Model Design

4.4. Experimental Results

4.4.1. Results of Single Sensory Data

4.4.2. Results of Multi-Sensory Data

4.5. Principal Component Analysis of the Experimental Data and Learned Features

4.6. Discussion

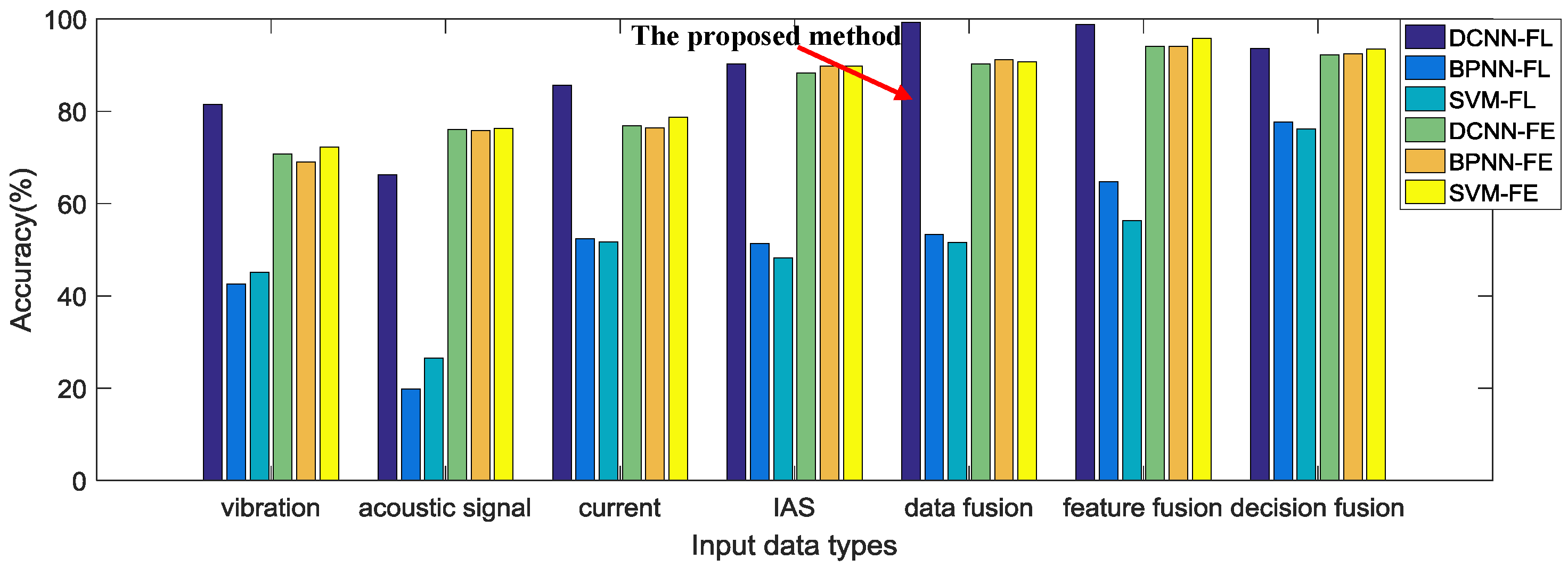

- The experimental results show that the proposed method diagnoses the faults of the planetary gearbox test rig effectively and yields the best testing accuracy in the experiment. As Table 4 and Figure 8 show, the proposed method achieves the highest testing accuracy, 99.28%, among all the comparative methods. We attribute this result largely to the deep architecture of the DCNN model. A DCNN can fuse the input data and learn basic features from it in its lower layers, fuse basic features into higher-level features or decisions in its middle layers, and further fuse these features and decisions to obtain the final result in its higher layers. Although the proposed method applies a data-level fusion before the DCNN, the DCNN still fuses the data again in its first layers to further optimize the data structure. Through this deep-layered model, optimized features and combinations of fusion at different levels are formed, which provides a better result than manually selected features or fusion levels.

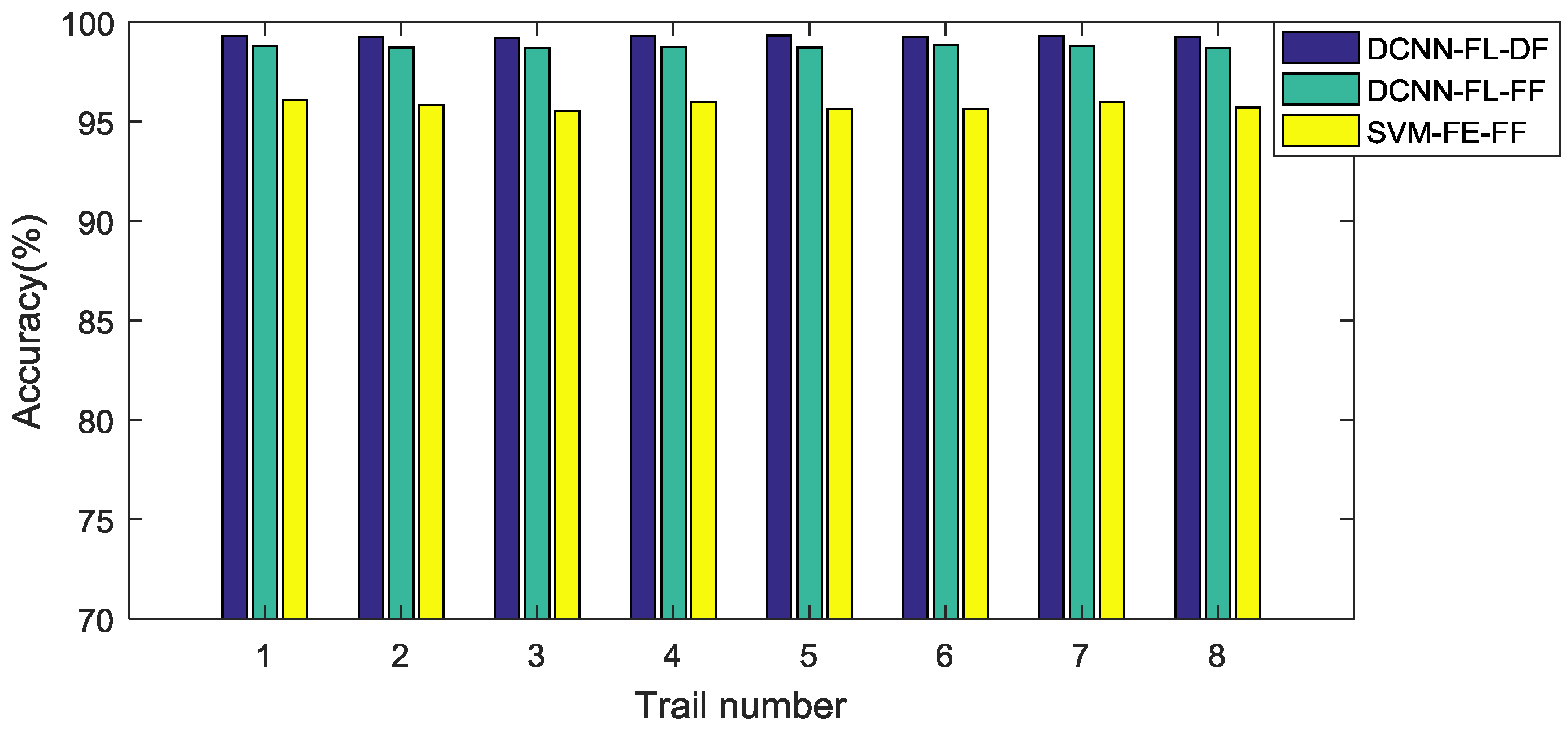

- The experiment demonstrates the automatic feature learning ability of the DCNN model with multi-sensory data. Figure 8 shows that both the proposed method and the feature-level fusion method with feature learning through DCNN obtain better results, 99.28% and 98.75% respectively, than any of the comparative methods that use handcraft features or feature learning through BPNN. This result indicates that feature learning through DCNN with multi-sensory data can improve the performance of the multi-sensor data fusion method for fault diagnosis. In addition, the proposed method with adaptive fusion-level selection achieves a better result (99.28%) than the method with manually selected feature-level fusion (98.75%); the way the fusion level is chosen is the only difference between these two methods.

- However, automatic feature learning through DCNN from the raw signal of a single sensor does not always outperform methods with handcraft features. Table 3 lists the diagnosis results using signals from a single sensor. Only with the vibration signal and the current signal does the DCNN-based feature learning method achieve better results than conventional methods with handcraft features. By contrast, with the acoustic signal and the IAS signal, its results are worse than those of conventional methods. This implies that learning features from single sensory data through DCNN cannot provide stable improvements for all kinds of sensory data. We think that the performance of DCNN-based feature learning is influenced by the characteristics of the input data. As the results in Table 3 show, the performance of feature learning has a stronger positive correlation with the performance of time-domain features than with that of frequency-domain features, which suggests that DCNN-based feature learning from a raw signal may be more sensitive to time-correlated features than to frequency-correlated features.

- PCA further confirms the effectiveness of the automatic feature learning and adaptive fusion-level selection of the proposed method. As Figure 9a shows, most categories of the raw input data overlap each other, which makes them difficult to distinguish. After processing by the proposed method, the learned features with adaptive fusion levels become distinguishable along the first two principal components (PCs) in Figure 9b. For comparison, Figure 9c,d present the PCA results for the feature-level fused learned features and the handcraft features, respectively. The feature-level fused features learned through DCNN separate the categories only slightly worse than the features of the proposed method, which both verifies the feature learning ability of the DCNN used in the two methods and indicates that the adaptive-level fusion of the proposed method performs better than manually selected feature-level fusion. By contrast, the fused handcraft features separate the categories much worse than the learned features of the proposed method. These analyses further demonstrate the effectiveness of the automatic feature learning and adaptive fusion-level selection of the proposed method.

- Although DCNN has a much better feature learning ability than BPNN, the three comparative models (DCNN, BPNN and SVM) obtain similar results with handcraft features. Figure 8 shows clearly that feature learning through DCNN achieves much better testing accuracies than feature learning through BPNN. With handcraft features, however, the three intelligent models provide similar accuracies, which suggests that DCNN cannot improve much on conventional methods unless its feature learning ability is used.

- Methods with multi-sensory data provide better results than those with single sensory data. Figure 8 shows that methods with multi-sensory data achieve higher testing accuracies than methods with single sensory data, regardless of the fusion level or intelligent model selected. This indicates that multi-sensory data can improve the reliability and accuracy of fault diagnosis.
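
The three fusion levels compared in this discussion (data-level, feature-level and decision-level) can be illustrated with a small sketch. This is a toy illustration, not the authors' implementation: concatenation as the data-level and feature-level rule, the mean and standard deviation as features, majority voting as the decision-level rule, and all function names and sample values are assumptions made for the example.

```python
from collections import Counter
from statistics import mean, pstdev


def data_level_fusion(signals):
    """Join the raw segments of all sensors into one input vector (assumed rule)."""
    fused = []
    for segment in signals.values():
        fused.extend(segment)
    return fused


def toy_features(segment):
    """Two hypothetical time-domain features: mean and standard deviation."""
    return [mean(segment), pstdev(segment)]


def feature_level_fusion(signals):
    """Extract features per sensor first, then join the feature vectors."""
    fused = []
    for segment in signals.values():
        fused.extend(toy_features(segment))
    return fused


def decision_level_fusion(decisions):
    """Combine per-sensor class labels by majority vote (assumed rule)."""
    return Counter(decisions).most_common(1)[0][0]


# Made-up raw segments from two sensors, for illustration only.
signals = {
    "vibration": [0.0, 1.0, 0.0, -1.0],
    "acoustic": [0.5, 0.5, 0.5, 0.5],
}

print(data_level_fusion(signals))        # raw samples of both sensors, joined
print(feature_level_fusion(signals))     # per-sensor features, joined
print(decision_level_fusion([2, 2, 5]))  # label chosen by most sensors
```

In this sketch, the fused vector from `data_level_fusion` would be the classifier input in the data-level case, whereas in the decision-level case each sensor gets its own classifier and only the predicted labels are combined.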

5. Conclusions and Future Work

Acknowledgments

Author Contributions

Conflicts of Interest

| Pattern Label | Gearbox Condition | Input Speed (rpm) | Load |
|---|---|---|---|
| 1 | Normal | 600, 1200 and 1800 | Zero |
| 2 | Pitting tooth | 600, 1200 and 1800 | Zero |
| 3 | Chaffing tooth | 600, 1200 and 1800 | Zero |
| 4 | Chipped tooth | 600, 1200 and 1800 | Zero |
| 5 | Root cracked tooth | 600, 1200 and 1800 | Zero |
| 6 | Slight worn tooth | 600, 1200 and 1800 | Zero |
| 7 | Worn tooth | 600, 1200 and 1800 | Zero |

| Layer | Type | Variables and Dimensions | Training Parameters |
|---|---|---|---|
| 1 | Convolution | CW = 65; CH = 1; CC = 1; CN = 10; B = 10 | SGD minibatch size = 20 |
| 2 | Pooling | S = 2 | Initial learning rate = 0.05 |
| 3 | Convolution | CW = 65; CH = 1; CC = 10; CN = 15; B = 15 | Decrease of learning rate after each ten epochs = 20% |
| 4 | Pooling | S = 2 | Momentum = 0.5 |
| 5 | Convolution | CW = 976; CH = 1; CC = 15; CN = 30; B = 30 | Weight decay = 0.04 |
| 6 | Hidden layer | ReLU activation function | Max epochs = 200 |
| 7 | Softmax | 7 outputs | Testing sample rate = 50% |
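
The layer settings in the table above can be checked with some simple shape arithmetic. The sketch below rests on several assumptions that the table does not state: CW, CH, CC, CN and B are read as kernel width, kernel height, input channels, number of kernels and number of biases; convolutions are taken to be unpadded with stride 1; pooling is non-overlapping with scale S; the 20% learning rate decrease is applied multiplicatively every ten epochs; and the input length of 4096 samples is a hypothetical value, chosen because it makes the 976-wide kernel of layer 5 cover the whole remaining signal.

```python
def conv_out(length, kernel_width):
    """Output length of an unpadded 1-D convolution with stride 1 (assumed)."""
    return length - kernel_width + 1


def pool_out(length, scale):
    """Output length of non-overlapping pooling with the given scale (assumed)."""
    return length // scale


def conv_params(cw, ch, cc, cn, b):
    """Weights plus biases of one convolutional layer."""
    return cw * ch * cc * cn + b


def learning_rate(epoch, initial=0.05, drop=0.20, every=10):
    """Step schedule: multiply the rate by (1 - drop) after each `every` epochs."""
    return initial * (1.0 - drop) ** (epoch // every)


# Layers 1 to 5 as listed in the table.
layers = [
    ("conv", dict(cw=65, ch=1, cc=1, cn=10, b=10)),
    ("pool", dict(s=2)),
    ("conv", dict(cw=65, ch=1, cc=10, cn=15, b=15)),
    ("pool", dict(s=2)),
    ("conv", dict(cw=976, ch=1, cc=15, cn=30, b=30)),
]


def trace(input_length):
    """Return the signal length after each layer and the convolutional parameter count."""
    lengths, total = [], 0
    length = input_length
    for kind, cfg in layers:
        if kind == "conv":
            length = conv_out(length, cfg["cw"])
            total += conv_params(**cfg)
        else:
            length = pool_out(length, cfg["s"])
        lengths.append(length)
    return lengths, total


lengths, total = trace(4096)  # 4096 is an assumed input length
print(lengths)                # signal length after layers 1 to 5
print(total)                  # parameters in the three convolutional layers
print([learning_rate(e) for e in (0, 10, 20)])
```

Under these assumptions, layer 5 reduces each feature map to a single value, so it behaves like a fully connected layer over the pooled output of layer 4. The parameters of the hidden layer and the softmax layer are not counted here.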

| Sensory Data | Model | Feature Learning from Raw Data | Manual Feature Extraction: Time-Domain Features | Manual Feature Extraction: Frequency-Domain Features | Manual Feature Extraction: Handcraft Features |
|---|---|---|---|---|---|
| Vibration signal | DCNN | 81.45% | 55.84% | 70.74% | 73.64% |
| Vibration signal | BPNN | 42.56% | 55.62% | 69.03% | 72.36% |
| Vibration signal | SVM | 45.11% | 56.35% | 72.23% | 73.86% |
| Acoustic signal | DCNN | 66.23% | 31.42% | 76.45% | 76.02% |
| Acoustic signal | BPNN | 19.80% | 35.89% | 76.04% | 75.79% |
| Acoustic signal | SVM | 26.54% | 33.62% | 77.36% | 76.32% |
| Current signal | DCNN | 85.68% | 60.73% | 61.45% | 76.85% |
| Current signal | BPNN | 52.36% | 60.47% | 61.21% | 76.43% |
| Current signal | SVM | 51.64% | 63.74% | 63.53% | 78.76% |
| Instantaneous angular speed (IAS) signal | DCNN | 90.23% | 75.34% | 84.42% | 88.34% |
| Instantaneous angular speed (IAS) signal | BPNN | 51.37% | 75.36% | 85.22% | 89.82% |
| Instantaneous angular speed (IAS) signal | SVM | 48.22% | 75.68% | 85.65% | 89.85% |

| Fusion Level | Model | Feature Learning from Raw Data | Manual Feature Extraction: Time-Domain Features | Manual Feature Extraction: Frequency-Domain Features | Manual Feature Extraction: Handcraft Features |
|---|---|---|---|---|---|
| Data-level fusion | DCNN | 99.28% | 66.08% | 87.63% | 90.23% |
| Data-level fusion | BPNN | 53.28% | 65.95% | 87.89% | 91.22% |
| Data-level fusion | SVM | 51.62% | 67.32% | 87.28% | 90.67% |
| Feature-level fusion | DCNN | 98.75% | 86.35% | 92.34% | 94.08% |
| Feature-level fusion | BPNN | 64.74% | 86.81% | 92.15% | 94.04% |
| Feature-level fusion | SVM | 56.27% | 86.74% | 94.62% | 95.80% |
| Decision-level fusion | DCNN | 93.65% | 84.65% | 90.23% | 92.19% |
| Decision-level fusion | BPNN | 77.62% | 84.47% | 91.19% | 93.42% |
| Decision-level fusion | SVM | 76.17% | 86.32% | 90.98% | 93.44% |
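
As a quick arithmetic check on the multi-sensor results above, the following sketch finds the highest accuracy in the table and the gap, in percentage points, between DCNN feature learning and the best manually extracted feature set at each fusion level. The accuracy values are transcribed from the table; the data layout and the helper names `best_entry` and `learning_gain` are hypothetical and exist only for this example.

```python
# Testing accuracies (%) transcribed from the multi-sensor results table.
COLUMNS = ("feature learning", "time-domain", "frequency-domain", "handcraft")
RESULTS = {
    ("data-level", "DCNN"): (99.28, 66.08, 87.63, 90.23),
    ("data-level", "BPNN"): (53.28, 65.95, 87.89, 91.22),
    ("data-level", "SVM"): (51.62, 67.32, 87.28, 90.67),
    ("feature-level", "DCNN"): (98.75, 86.35, 92.34, 94.08),
    ("feature-level", "BPNN"): (64.74, 86.81, 92.15, 94.04),
    ("feature-level", "SVM"): (56.27, 86.74, 94.62, 95.80),
    ("decision-level", "DCNN"): (93.65, 84.65, 90.23, 92.19),
    ("decision-level", "BPNN"): (77.62, 84.47, 91.19, 93.42),
    ("decision-level", "SVM"): (76.17, 86.32, 90.98, 93.44),
}


def best_entry(results):
    """Return (accuracy, fusion level, model, feature type) of the top result."""
    return max(
        (acc, level, model, COLUMNS[i])
        for (level, model), row in results.items()
        for i, acc in enumerate(row)
    )


def learning_gain(results, level, model="DCNN"):
    """Learned-feature accuracy minus the best manual-feature accuracy of the same model."""
    row = results[(level, model)]
    return round(row[0] - max(row[1:]), 2)


print(best_entry(RESULTS))
for level in ("data-level", "feature-level", "decision-level"):
    print(level, learning_gain(RESULTS, level))
```

The comparison is made within a single model (DCNN by default), so it isolates the effect of feature learning rather than the choice of classifier.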

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Jing, L.; Wang, T.; Zhao, M.; Wang, P. An Adaptive Multi-Sensor Data Fusion Method Based on Deep Convolutional Neural Networks for Fault Diagnosis of Planetary Gearbox. Sensors 2017, 17, 414. https://doi.org/10.3390/s17020414
