1. Introduction

The number of elderly people living alone has been continuously growing worldwide. This independence comes with the risk of not receiving prompt attention if an accident occurs. One third of people over 65 years old suffer on average one fall per year [1], and this number increases with age [2] and with previous falls, after which about one third develop a fear of falling again [3,4]. Not receiving attention within the first hour after the accident increases the risk of death and chronic conditions [5]. This issue has been widely addressed in recent years with systems that detect falls in elderly people and generate a prompt alert, which can reduce the consequences associated with medical response time [6]. These systems are accepted by the target population as a way to support their independence and reduce their fear of falling [7].

Developers of fall detection systems currently face several challenges. Regardless of the acquisition strategy, most works are not tested with the target population (elderly people), which reduces their accuracy in real-life applications [8]. Moreover, all public datasets contain data exclusively from young adults, making it difficult to test new proposals [9]. Here, we make publicly available a dataset of falls and activities of daily living (ADLs) acquired with a wearable device, and we provide results for some of the most commonly used detection features with both young and elderly people. The purpose of this work is to provide a benchmark for other researchers in the fall and movement detection field, and to address two rarely discussed open issues: training features intended for elderly people with data from young people, and setting up algorithms for maximum accuracy instead of maximum sensitivity.

Falls are commonly detected with wearable or ambient-based systems (see [6,9,10,11,12] for reviews in the field). Ambient-based sensors such as cameras are intrusive and do not solve the problem for independent adults, who are not confined to closed spaces. According to [2], up to 50% of the falls in independent elderly people occur outside the home. Wearable devices offer portability, as they can be used regardless of the user's location. Available wearable devices include smartphone apps and self-developed systems. In both cases, the preferred sensor is the triaxial accelerometer because of its low cost and small size, and because it is built into almost all smartphones [6]. Smartphones are a popular choice because they include robust hardware and a powerful processor, and they are affordable [6,11]. However, the low cost of individual components and design tools has also encouraged authors to develop their own embedded devices [13].

Regardless of the device used, authors have faced problems such as energy consumption, battery life, false positives (the alarm is triggered by an ADL), false negatives (the alarm is not triggered by a fall), and user comfort. Smartphones in particular are not designed for constant use of the processor and sensors (the battery is drained within a couple of hours [11]). Additionally, the smartphone may suffer hits and falls caused by handling, or the person may leave it on a table after a call, making it less suitable for permanent monitoring.

New strategies for solving the aforementioned problems require testing, which in turn requires acquiring datasets with common types of falls and ADLs. In this sense, some authors have analyzed how elderly people fall. Back in 1993, the authors of [5] performed an extensive survey of 704 women over 65 years old. They reported that most falls were caused by trips, slips, and loss of balance; however, they did not record data. Regarding the conditions of the fall, the authors of [14] found that women were three times more likely than men to hit the ground on the hips, and that most people fell forward (60% prevalence). Most activities currently selected for testing algorithms are based on these studies.

Once the selected ADLs and falls are simulated and recorded, the raw acceleration data must be processed and classified. Authors commonly filter the data, extract features, and classify each activity as a fall or an ADL. The literature provides a large number of features (see [9], Table 4, and [12], Table 2, for complete lists). Unfortunately, no works in the literature have been tested with independent elderly people (see [9], Table 1, and [12], Table 4), and the available public datasets were all recorded exclusively with young adults. In [8], for example, the authors tested 13 state-of-the-art approaches with real falls of elderly people and found that the performance of these approaches severely decreased under real-life conditions. However, they did not release the validation dataset, so other authors cannot analyze why those features lost performance and, more importantly, how to solve it. To our knowledge, only four public datasets exist, all acquired using smartphones: Mobifall [15], tFall [16], DLR [17], and Project Gravity [18]. Igual et al. [19] compared the first three and found severe variability and performance issues in the analyzed algorithms.
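The generic pipeline described above (extract a feature from each recording, then classify it) can be sketched as follows. This is a minimal illustration under stated assumptions, not one of the methods evaluated in this work: it assumes a single triaxial accelerometer signal in units of g, omits the filtering step, uses the peak acceleration magnitude as an example feature, and applies a fixed threshold. The function names, the recordings, and the threshold value of 2.0 g are all hypothetical.

```python
import math
from typing import Sequence, Tuple

Sample = Tuple[float, float, float]  # one triaxial reading (x, y, z), assumed in g


def magnitude(sample: Sample) -> float:
    """Euclidean norm of a single triaxial acceleration sample."""
    x, y, z = sample
    return math.sqrt(x * x + y * y + z * z)


def peak_magnitude(recording: Sequence[Sample]) -> float:
    """Example feature: maximum acceleration magnitude over a whole recording."""
    return max(magnitude(s) for s in recording)


def classify(recording: Sequence[Sample], threshold: float) -> str:
    """Threshold rule: label the recording a fall if its feature exceeds the threshold."""
    return "fall" if peak_magnitude(recording) > threshold else "ADL"


# Hypothetical recordings: quiet standing vs. a sharp impact followed by lying down.
standing = [(0.0, 0.0, 1.0)] * 50
impact = [(0.0, 0.0, 1.0)] * 20 + [(1.5, 2.0, 2.5)] + [(0.0, 1.0, 0.0)] * 20

print(classify(standing, threshold=2.0))  # ADL
print(classify(impact, threshold=2.0))    # fall
```

Real systems replace the single peak with one or more of the features listed in the cited reviews, and the threshold is fitted on training data rather than fixed by hand.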

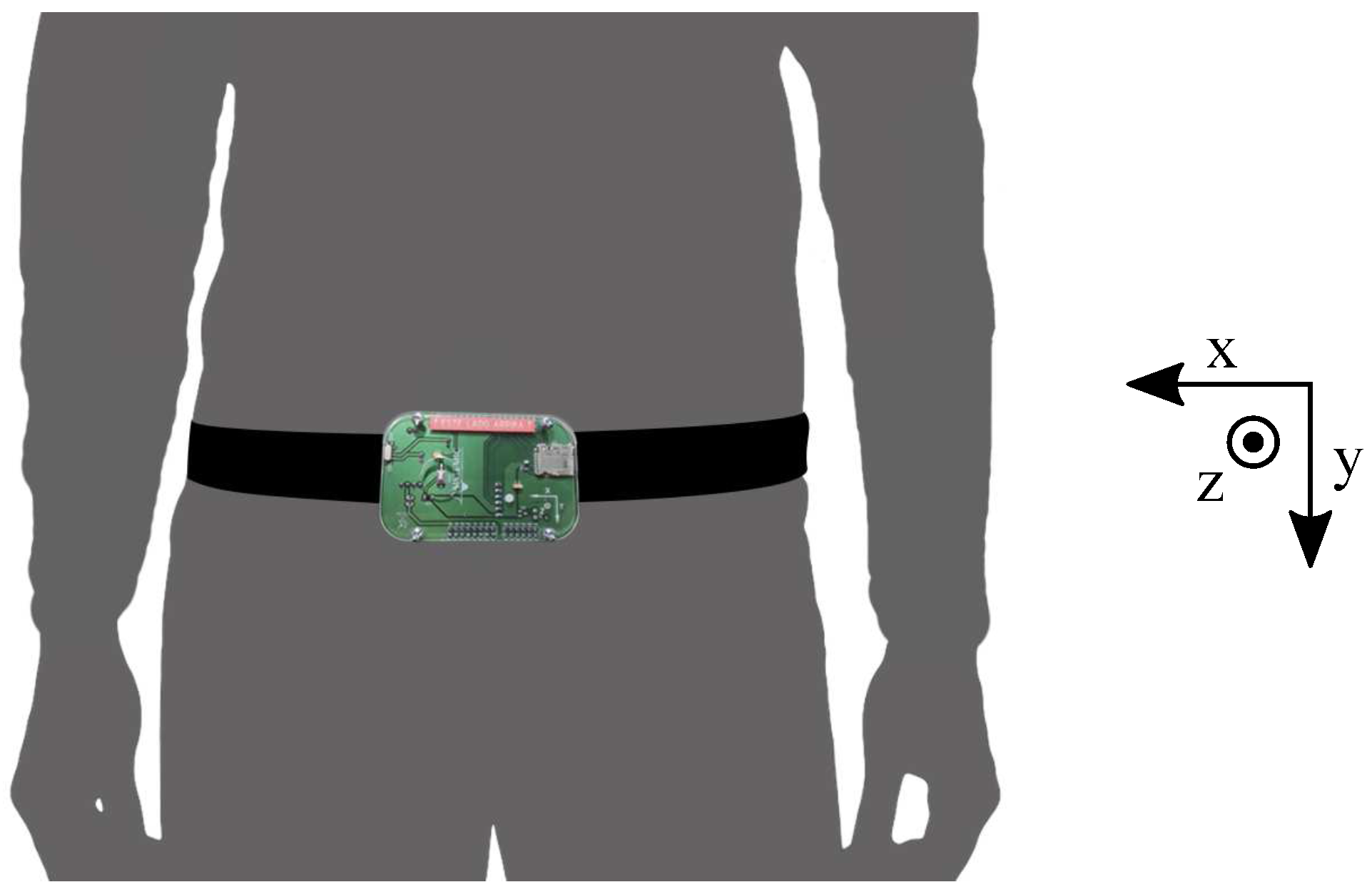

In this paper, we present and make publicly available a complete dataset of falls and ADLs acquired with a self-developed embedded device that can be easily replicated (see [20,21] for example designs). It includes young adults and elderly people performing a wide variety of activities selected from a survey and previous studies. The dataset contains 19 types of ADLs and 15 types of falls. It includes acceleration (from two accelerometers) and rotation (from a gyroscope) data from 38 volunteers divided into two groups: 23 adults between 19 and 30 years old, and 15 elderly people between 60 and 75 years old. The dataset is available for free download as Supplementary Materials [22], and videos of each type of activity within the dataset are also included to help readers replicate this work. Additionally, a comparative analysis of several features used in the literature is presented as a reference for future works.

5. Discussion

Research on elderly fall detection lacks public datasets with activities and falls simulated by elderly people. Available datasets have few activities, and none includes falls from the target population. In this paper, we presented and made publicly available the SisFall dataset. It consists of up to 34 activities (falls and ADLs) performed by 38 participants with a wearable device fixed to their waist. One of the participants was an elderly person who simulated both ADLs and falls. Together with the dataset, we included videos of all simulated activities to help other researchers replicate this work.

The SisFall dataset contains more participants, types of activities, and recordings than any other publicly available dataset. It consists of 2706 ADLs and 1798 falls, including data from 15 healthy independent elderly people. To our knowledge, no other public dataset contains data from elderly people, and all of them contain fewer recordings (Mobifall: 342 ADLs and 288 falls; tFall: continuous ADLs and 240 falls; DLR: 961 ADLs and 56 falls; and Project Gravity: 138 ADLs and 72 falls).
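As a quick check of this comparison, the totals of discrete recordings can be tallied from the counts quoted above. This is only arithmetic on the reported figures; tFall is left out of the totals because its ADLs were recorded continuously rather than as discrete trials, although its 240 falls are still fewer than the falls in SisFall.

```python
# Recording counts quoted in the text, as (ADLs, falls).
# tFall is omitted: its ADLs are continuous, so no discrete total exists.
datasets = {
    "SisFall": (2706, 1798),
    "Mobifall": (342, 288),
    "DLR": (961, 56),
    "Project Gravity": (138, 72),
}

totals = {name: adls + falls for name, (adls, falls) in datasets.items()}

# List datasets from largest to smallest number of recordings.
for name, total in sorted(totals.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {total} recordings")
```

With these figures SisFall totals 4504 recordings, against 1017 for the next largest (DLR).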

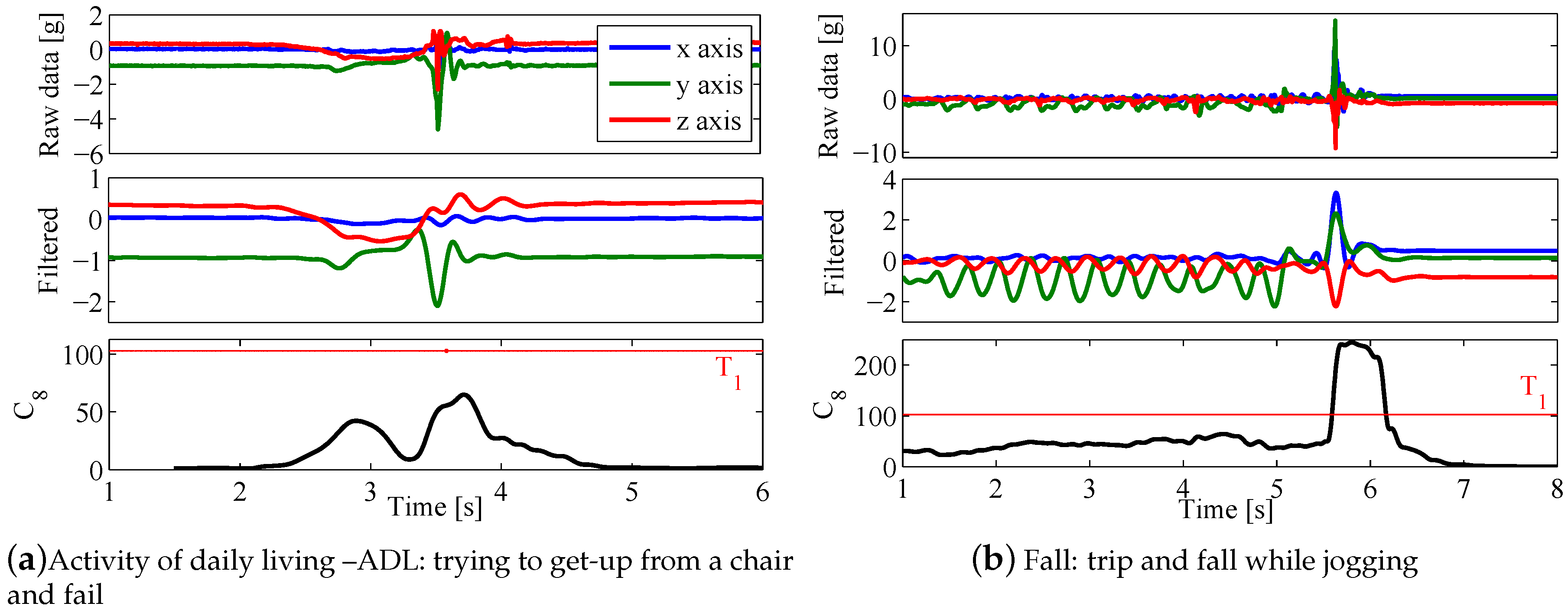

We developed and released this dataset as a benchmark for other authors in the field. To that end, we tested it with some of the most widely used fall detection features in three proof-of-principle experiments: the effect of the preprocessing stage, the importance of including data from elderly people, and how a threshold focused on maximum sensitivity severely reduces specificity. Explanations of preprocessing are commonly simplified in most approaches available in the literature. Here, a simple 4th-order Butterworth filter increased the accuracy of several features. However, not all features improved; this is expected for features with an integral-based nature, since integration already attenuates high-frequency components, but it has not been previously discussed in the literature. Nevertheless, preprocessing is crucial in fall detection because it defines the minimum acquisition frequency, which in this work we found to be 11 Hz for the features that did improve with the filtering stage.
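A 4th-order Butterworth low-pass filter of the kind mentioned above can be sketched in plain Python, with no dependencies. This is an illustration under assumptions, not the exact preprocessing used in this work: the 200 Hz sampling rate and 5 Hz cutoff are placeholder values, the filter is causal (as an on-device, real-time filter would be), and it is built as a cascade of two second-order sections obtained with the bilinear transform (the Audio EQ Cookbook low-pass formulas). Using the pole quality factors Q = 1/(2 cos(π/8)) and Q = 1/(2 cos(3π/8)) at the same cutoff makes the cascade a 4th-order Butterworth response.

```python
import math
from typing import List, Sequence, Tuple

Coeffs = Tuple[float, float, float, float, float]  # b0, b1, b2, a1, a2 with a0 normalized to 1


def lowpass_biquad(fc: float, fs: float, q: float) -> Coeffs:
    """Second-order low-pass section designed with the bilinear transform."""
    w0 = 2.0 * math.pi * fc / fs
    alpha = math.sin(w0) / (2.0 * q)
    cos_w0 = math.cos(w0)
    a0 = 1.0 + alpha
    b0 = (1.0 - cos_w0) / 2.0 / a0
    b1 = (1.0 - cos_w0) / a0
    b2 = b0
    a1 = -2.0 * cos_w0 / a0
    a2 = (1.0 - alpha) / a0
    return b0, b1, b2, a1, a2


def apply_biquad(x: Sequence[float], c: Coeffs) -> List[float]:
    """Run one section over a signal (direct form I, zero initial state)."""
    b0, b1, b2, a1, a2 = c
    x1 = x2 = y1 = y2 = 0.0
    y: List[float] = []
    for xn in x:
        yn = b0 * xn + b1 * x1 + b2 * x2 - a1 * y1 - a2 * y2
        x2, x1 = x1, xn
        y2, y1 = y1, yn
        y.append(yn)
    return y


def butter4_lowpass(x: Sequence[float], fc: float = 5.0, fs: float = 200.0) -> List[float]:
    """4th-order Butterworth low-pass as two cascaded second-order sections."""
    out = list(x)
    for k in (1, 2):
        # Butterworth pole pair k of a 4th-order filter: Q = 1 / (2 cos((2k - 1) * pi / 8)).
        q = 1.0 / (2.0 * math.cos((2 * k - 1) * math.pi / 8.0))
        out = apply_biquad(out, lowpass_biquad(fc, fs, q))
    return out


if __name__ == "__main__":
    fs = 200.0
    slow = [math.sin(2 * math.pi * 1.0 * n / fs) for n in range(2000)]   # 1 Hz body motion
    fast = [math.sin(2 * math.pi * 80.0 * n / fs) for n in range(2000)]  # 80 Hz vibration
    print(max(abs(v) for v in butter4_lowpass(slow)[-400:]))  # close to 1 (passed)
    print(max(abs(v) for v in butter4_lowpass(fast)[-400:]))  # close to 0 (rejected)
```

Offline analyses often use a zero-phase (forward-backward) version of the same filter instead; the causal form is shown here because it is the one a wearable device could run sample by sample.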

In the second test, we analyzed the effect of training a system intended to work with elderly people using data from young adults, which is common in the field despite preliminary evidence that the results are biased [8]. Similar to this previous work, we found that the sensitivity of all features is strongly affected once they are validated with the target population. Note that Bagalà et al. [8] used 29 real falls of highly impaired Parkinson's patients. In our case, we used 75 falls under controlled conditions from a single independent elderly person, who is also a martial arts expert. It is noteworthy that, despite the large difference between the validation sets, our results followed the same trend as [8]. Moreover, when the classifiers were trained with elderly people, the accuracy was still lower than with young people. These findings suggest that, because young people show overall higher accelerations in all activities, including ADLs and falls, data from elderly people are crucial to obtain proper results. Additionally, the lower accuracy obtained when training with elderly people suggests that better feature extraction is needed.
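The mechanism behind this sensitivity drop can be illustrated with a threshold fitted for maximum accuracy on one group and then validated on another. The sketch below uses hypothetical feature values (not data from this work), chosen only so that the elderly group shows lower values overall; the function names are also hypothetical.

```python
from typing import List, Tuple


def metrics(falls: List[float], adls: List[float], thr: float) -> Tuple[float, float, float]:
    """Sensitivity, specificity and accuracy of the rule 'feature > thr means fall'."""
    tp = sum(f > thr for f in falls)
    tn = sum(a <= thr for a in adls)
    sensitivity = tp / len(falls)
    specificity = tn / len(adls)
    accuracy = (tp + tn) / (len(falls) + len(adls))
    return sensitivity, specificity, accuracy


def fit_max_accuracy(falls: List[float], adls: List[float]) -> float:
    """Choose the observed feature value that maximizes training accuracy (smallest on ties)."""
    candidates = sorted(set(falls) | set(adls))
    return max(candidates, key=lambda t: metrics(falls, adls, t)[2])


# Hypothetical feature values (e.g., peak acceleration in g), for illustration only.
young_falls = [3.2, 3.8, 4.1, 2.9, 3.5]
young_adls = [1.4, 1.9, 2.2, 1.6, 2.5]
elderly_falls = [2.1, 2.6, 1.9, 2.8, 2.4]
elderly_adls = [1.2, 1.5, 1.3, 1.7, 1.1]

thr = fit_max_accuracy(young_falls, young_adls)
print("threshold:", thr)
print("validated on young:", metrics(young_falls, young_adls, thr))
print("validated on elderly:", metrics(elderly_falls, elderly_adls, thr))
```

Because the threshold learned from young subjects sits above most of the elderly falls, specificity stays high while sensitivity collapses, which is the qualitative pattern described above.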

Developing a better feature extraction should be focused on specific activities. There are not many works focused on the types of falls elderly people suffer (most authors were limited to perform the same activities of previous works). However, the answers of our survey, previous works [

5] and our findings suggest that if properly selected, authors could use a small sample of activities for their own tests. Performing an individual activity analysis (as presented in

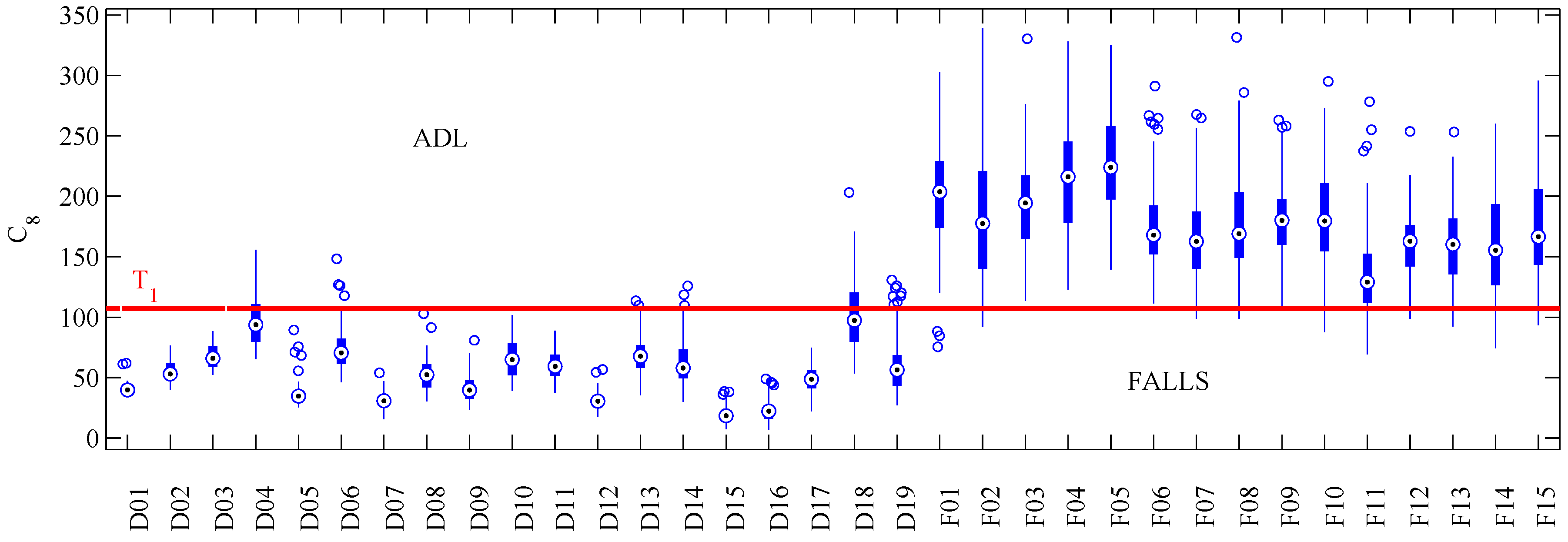

Figure 4) should help with the design of new features.

Our final test consisted of placing the threshold below the minimum-amplitude fall value. In practice, fall detection systems are expected to detect all falls while keeping the false positive rate as low as possible. The results in Table 7 are poor for all features. Note how a modest increase in sensitivity caused significant reductions in specificity. This is noteworthy, as most works focus on maximizing accuracy instead of favoring fall detection. Authors who addressed this issue usually included a false-alarm button as part of their methodology [29].
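The trade-off between a maximum-accuracy threshold and a maximum-sensitivity threshold (placed just below the smallest fall value) can be sketched as follows. The feature values are hypothetical and chosen only to show the effect; the margin of 1e-6 and the function names are also assumptions of this sketch.

```python
from typing import List, Tuple


def sens_spec(falls: List[float], adls: List[float], thr: float) -> Tuple[float, float]:
    """Sensitivity and specificity of the rule 'feature > thr means fall'."""
    sensitivity = sum(f > thr for f in falls) / len(falls)
    specificity = sum(a <= thr for a in adls) / len(adls)
    return sensitivity, specificity


def max_accuracy_threshold(falls: List[float], adls: List[float]) -> float:
    """Observed value that maximizes the number of correctly classified recordings."""
    def correct(t: float) -> int:
        return sum(f > t for f in falls) + sum(a <= t for a in adls)
    return max(sorted(falls + adls), key=correct)


def max_sensitivity_threshold(falls: List[float], margin: float = 1e-6) -> float:
    """Place the threshold just below the smallest fall value so every fall is detected."""
    return min(falls) - margin


# Hypothetical feature values: one weak fall overlaps with the stronger ADLs.
falls = [1.8, 2.9, 3.3, 3.6, 4.0, 4.4]
adls = [1.1, 1.3, 1.5, 1.7, 1.9, 2.0, 2.2, 2.4]

thr_acc = max_accuracy_threshold(falls, adls)
thr_sens = max_sensitivity_threshold(falls)
print("max accuracy:", thr_acc, sens_spec(falls, adls, thr_acc))
print("max sensitivity:", thr_sens, sens_spec(falls, adls, thr_sens))
```

Here, detecting the single weak fall raises sensitivity from 5/6 to 1, but half of the ADLs then trigger false alarms, mirroring the disproportionate loss of specificity discussed above.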

Our dataset may be biased by two facts: (i) all of our falls were simulated (mimicked), and Klenk et al. [28] stated that young people simulating falls tend to fall faster than in real-life conditions; (ii) we only included falls from one independent elderly person who, as a martial arts expert, is not representative of the population. With respect to the first fact, the results in Section 4.2 show that data from young people do present higher accelerations. However, this difference can be quantified and corrected by comparing the mean acceleration per activity of the young subjects with that of the elderly subjects in the same dataset. Regarding the second fact, our falls from an elderly person (Section 4.2) followed the same trend as a previous work that included real falls of impaired elderly people [8]. However, the crucial point here is the difficulty of obtaining real falls from healthy independent elderly people. In our case, we only had permission from the Ethics Committee to simulate falls with one participant (SE06). Indeed, this participant always tried to soften the fall (as any person trying to avoid a fall would do). We consider that going further with a wider and more realistic elderly fall dataset would be extremely challenging. Independent elderly people (the target of this work) fall on average once per year; i.e., acquiring a single fall would require a full year of continuous recording. Moreover, in this way, the actual conditions of the fall (activity, falling direction, etc.) may never be known. Despite these possible biases, we expect this dataset to be a useful benchmark for other authors to test their own approaches and to solve the open issues presented in this work.
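The per-activity correction mentioned above can be sketched by computing, for each activity, the ratio between the mean feature value of elderly and young subjects, and then rescaling the young data by that ratio. This is a hypothetical illustration resting on simple assumptions (a purely multiplicative difference per activity, one scalar feature per recording, toy activity labels and values); it is not a procedure evaluated in this work.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List, Tuple

Record = Tuple[str, float]  # (activity label, feature value such as peak acceleration in g)


def per_activity_mean(records: List[Record]) -> Dict[str, float]:
    """Mean feature value for each activity label."""
    groups: Dict[str, List[float]] = defaultdict(list)
    for activity, value in records:
        groups[activity].append(value)
    return {activity: mean(values) for activity, values in groups.items()}


def scale_factors(young: List[Record], elderly: List[Record]) -> Dict[str, float]:
    """Elderly-to-young ratio of the mean feature, for activities recorded in both groups."""
    y, e = per_activity_mean(young), per_activity_mean(elderly)
    return {a: e[a] / y[a] for a in y.keys() & e.keys()}


def rescale(young: List[Record], factors: Dict[str, float]) -> List[Record]:
    """Scale young-subject values toward the elderly level; unmatched activities keep factor 1."""
    return [(a, v * factors.get(a, 1.0)) for a, v in young]


# Hypothetical toy data, for illustration only.
young = [("walk", 1.6), ("walk", 1.8), ("fall_forward", 4.0), ("fall_forward", 3.6)]
elderly = [("walk", 1.2), ("walk", 1.4), ("fall_forward", 2.8)]

factors = scale_factors(young, elderly)
print(factors)
print(rescale(young, factors))
```

Keeping a factor of 1 for activities without elderly recordings is a conservative choice for this sketch; with only one elderly participant performing falls, estimating fall-specific factors would itself be subject to the bias discussed above.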