Integrating Virtual Worlds with Tangible User Interfaces for Teaching Mathematics: A Pilot Study

Abstract

1. Introduction

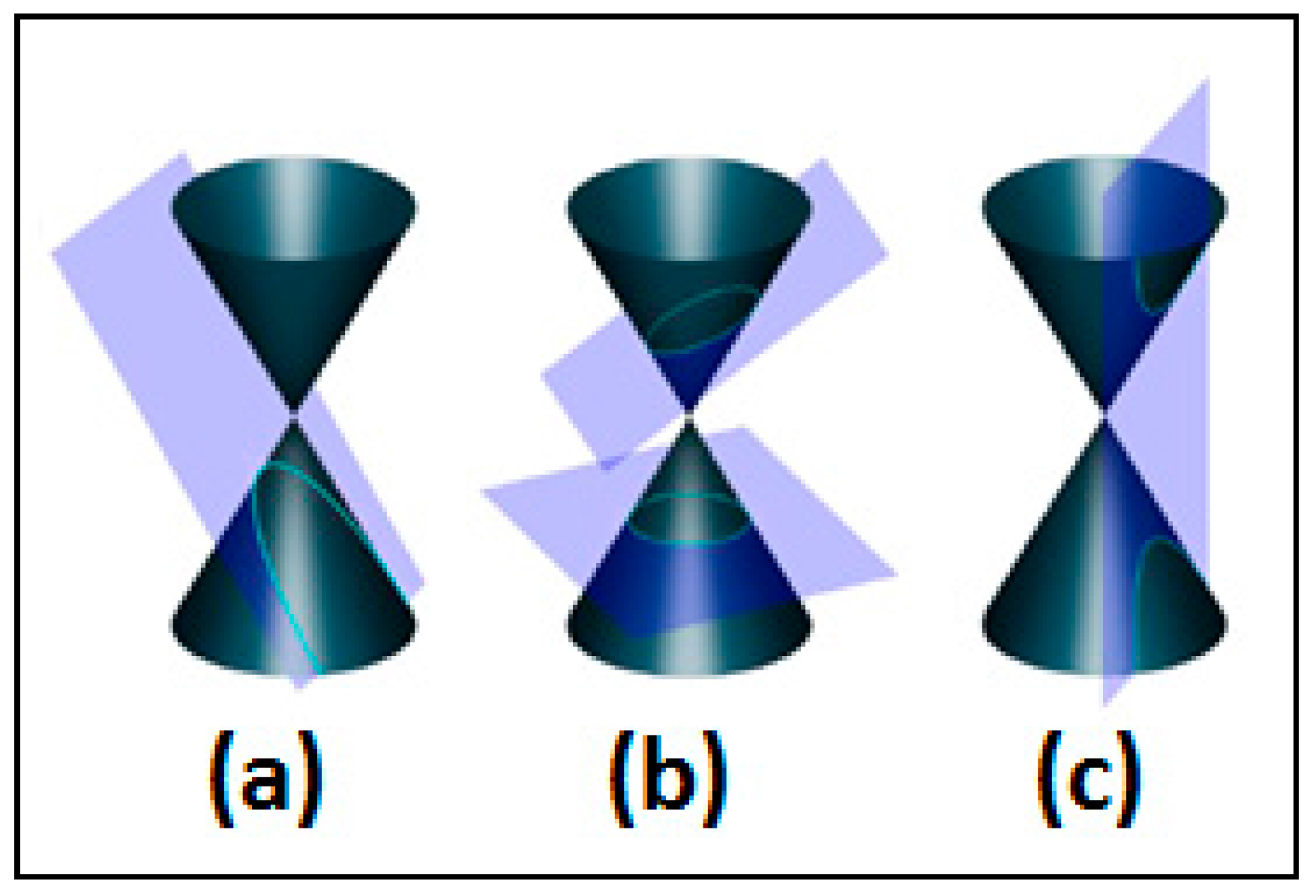

2. Materials and Methods

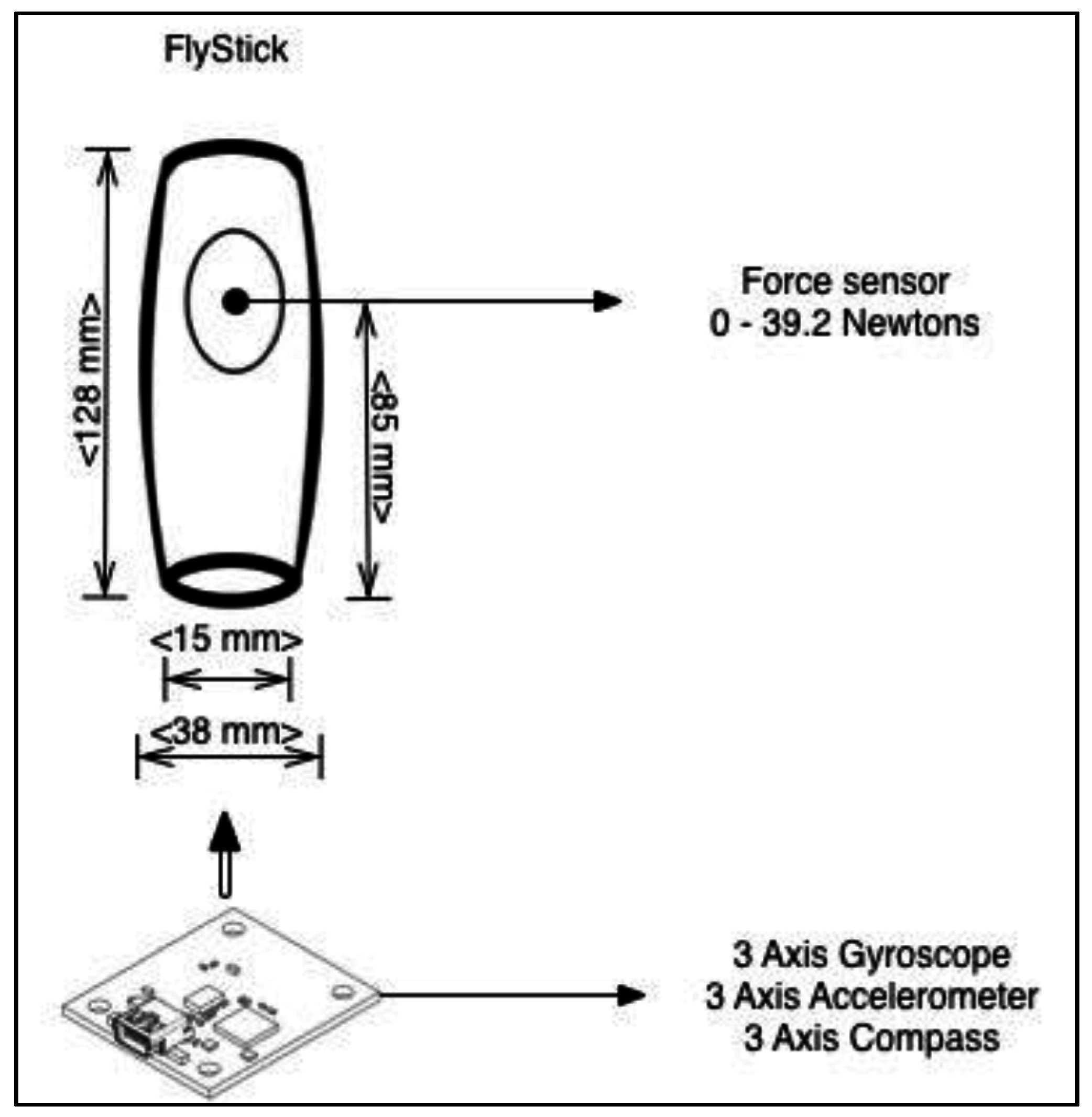

2.1. FlyStick Tangible Interface

2.1.1. Design of the Virtual World

2.1.2. Tangible Interface Design

- Shape and size: The educational activity detailed below targets students aged 12 to 14, so the device was sized with reference to other devices aimed at teenagers. In particular, the shape and dimensions of Sony’s DualShock controller served as inspiration for housing the sensors in a single-handed device (see Figure 4).

- Material: The choice of housing material matters because the housing is in direct contact with the user’s skin. PLA (polylactide) plastic was chosen because it is derived from organic sources and is non-toxic to humans.

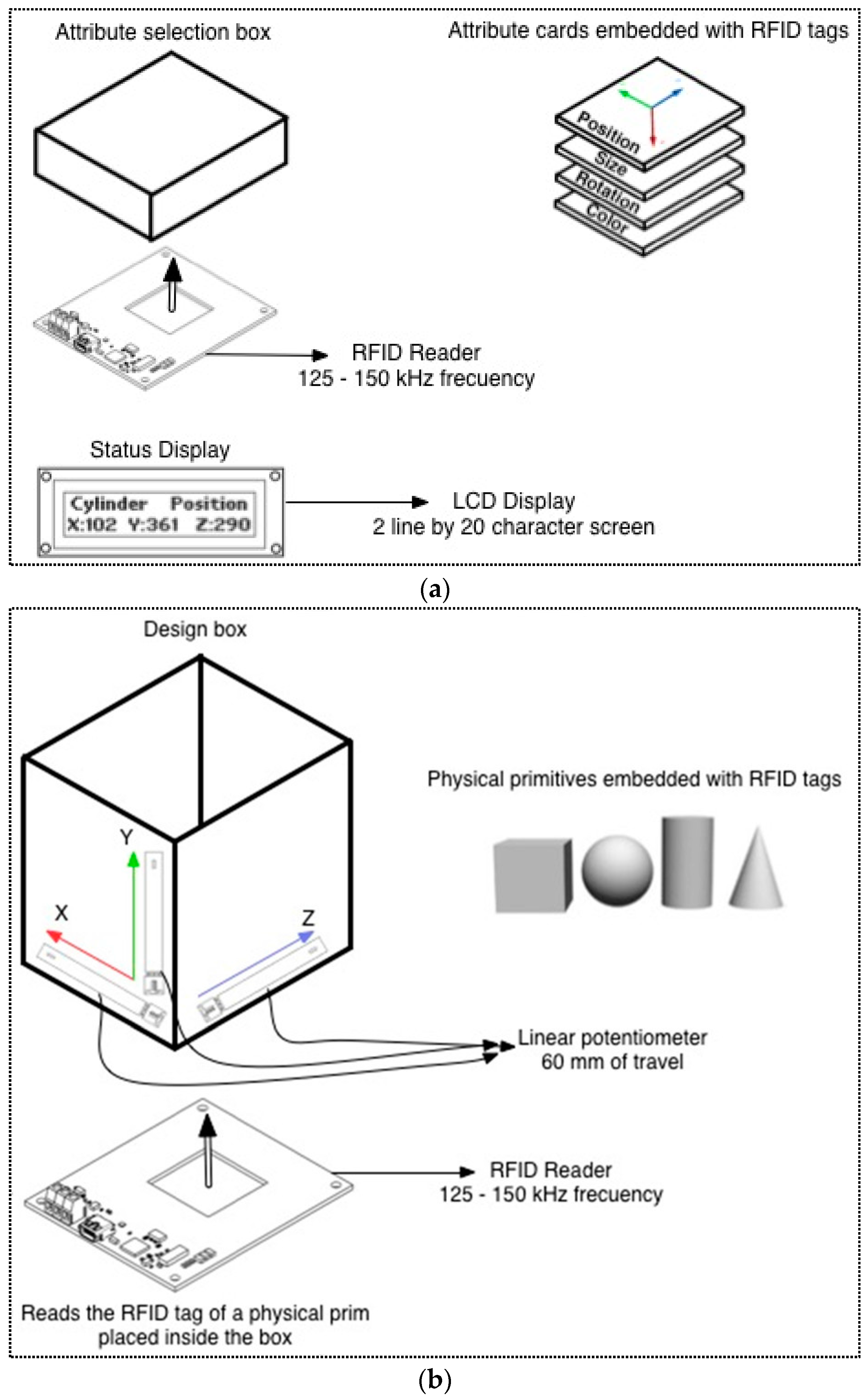

2.2. PrimBox Tangible Interface

Tangible Interface Design

3. Implementing Educational Applications with the Tangible Interfaces: A Pilot Study

3.1. Research Design

- An introductory questionnaire about virtual worlds.

- A usability test about PrimBox and FlyStick.

- A semi-structured interview with the Mathematics teacher.

- Exercises and tests on the subject.

3.2. Activity 1: PrimBox

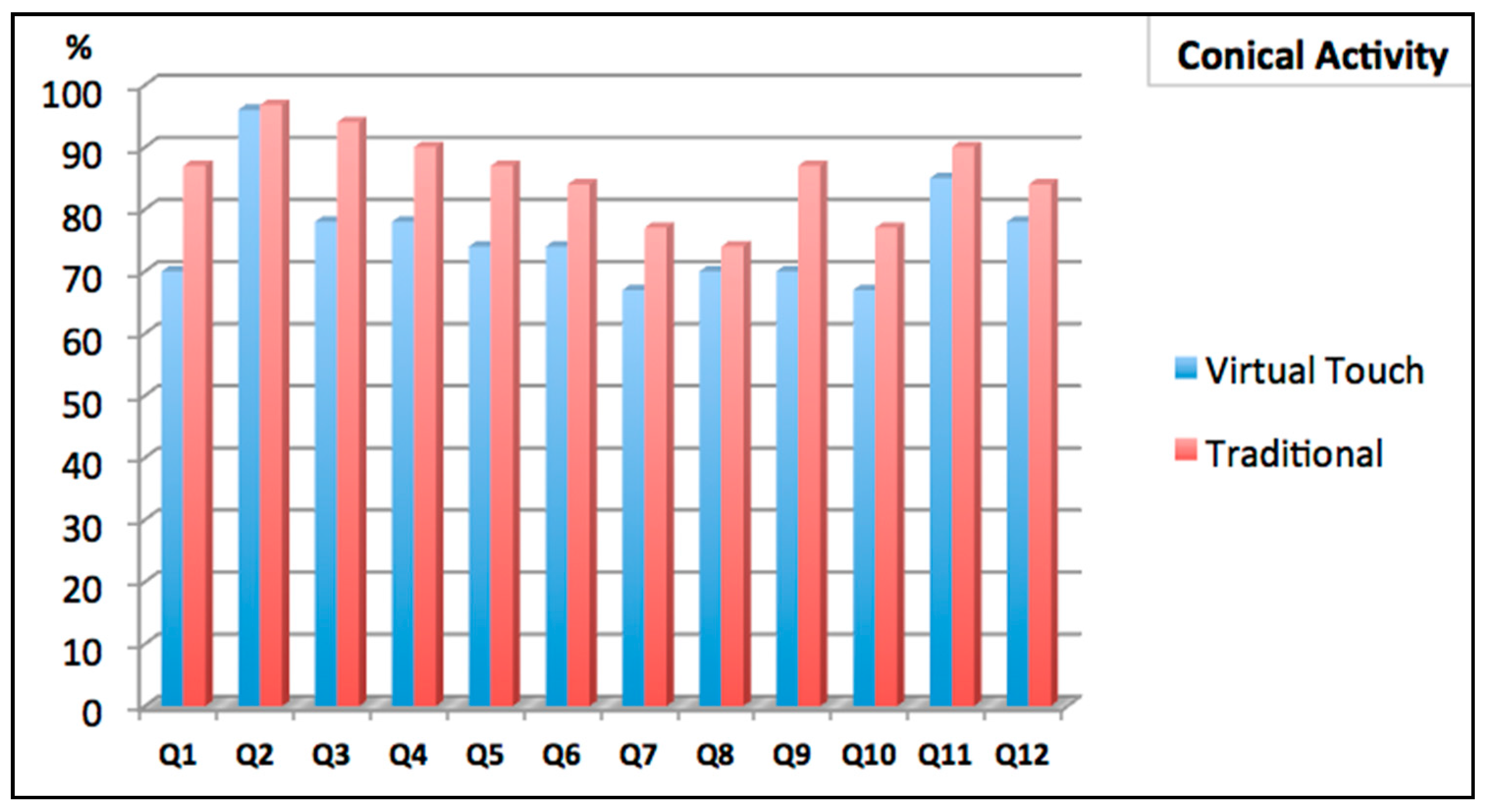

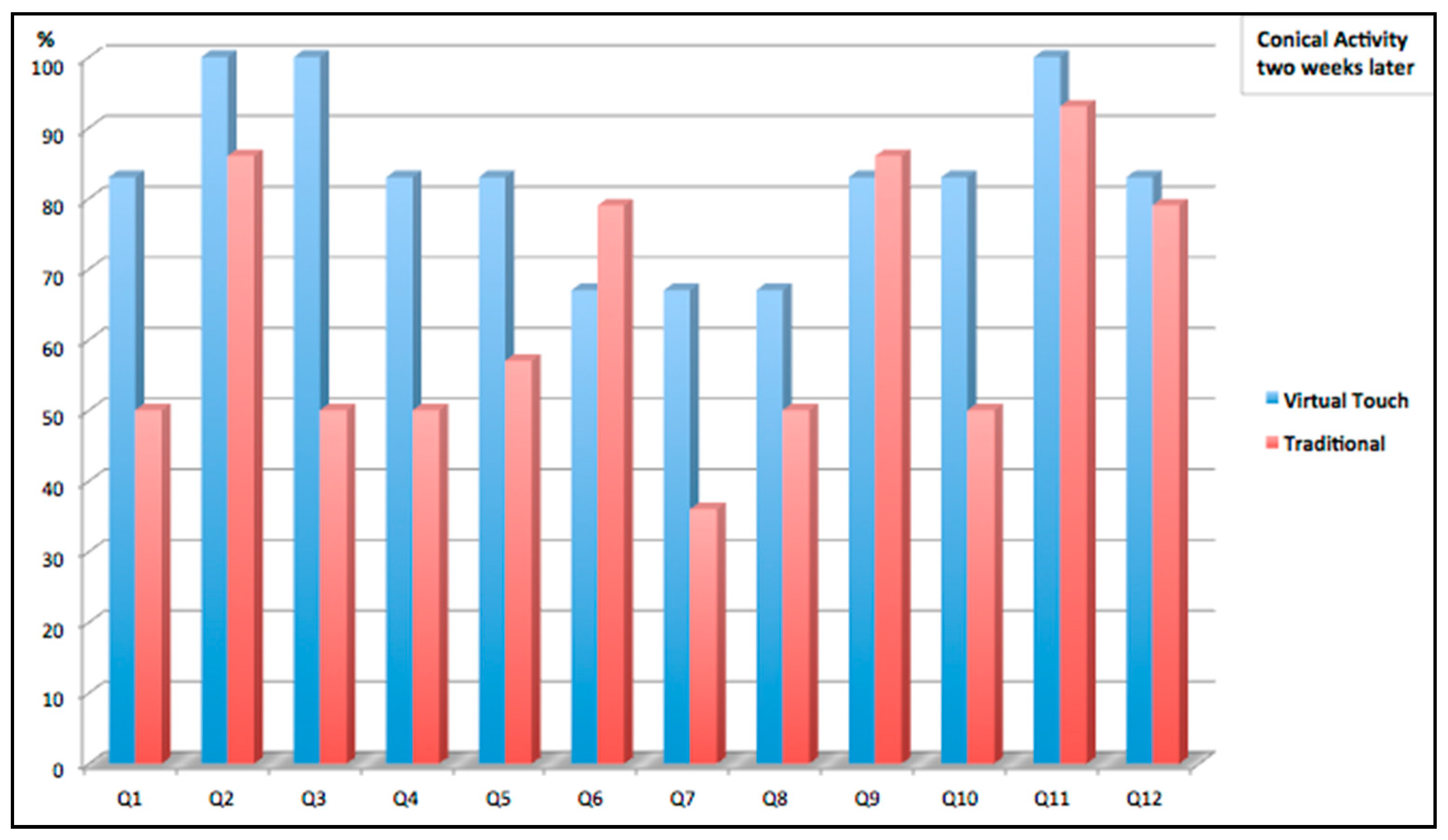

3.3. Activity 2: FlyStick

- H1: The scores for the students in the control group show no significant differences between the first and second tests.

- H2: The scores for the students in the mixed-reality group show no significant differences between the first and second tests.
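Both hypotheses compare the same students' scores on two tests, which is a paired comparison. The statistical test applied to H1 and H2 is not named in this section, so the following is only a minimal sketch that assumes a paired t-test. The score lists and the function name `paired_t_statistic` are invented for illustration and are not data from the study.

```python
import math
import statistics


def paired_t_statistic(first, second):
    """Return the paired t statistic for two equally long score lists."""
    if len(first) != len(second):
        raise ValueError("Each student needs a score on both tests")
    diffs = [b - a for a, b in zip(first, second)]
    mean_diff = statistics.mean(diffs)
    # Standard error of the mean difference (sample standard deviation)
    std_err = statistics.stdev(diffs) / math.sqrt(len(diffs))
    return mean_diff / std_err


# Hypothetical scores for five students (NOT from the paper)
first_test = [5, 6, 4, 7, 5]
second_test = [6, 8, 5, 7, 7]

t = paired_t_statistic(first_test, second_test)
# Two-sided critical value of Student's t for alpha = 0.05 and 4 degrees of freedom
T_CRITICAL_DF4 = 2.776
reject_null = abs(t) > T_CRITICAL_DF4
print(f"t = {t:.3f}, reject 'no significant difference': {reject_null}")
```

Under this assumption, a hypothesis of the form "no significant differences between the first and second tests" is rejected only when the absolute t statistic exceeds the critical value for the group's degrees of freedom.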

4. Conclusions and Future Work

Acknowledgments

Author Contributions

Conflicts of Interest

| | Tangible Interface | Activity Description | Main Goal |
|---|---|---|---|
| Activity 1 | VT PrimBox | One student interacts with the virtual world, constructing a geometrical figure by following the oral instructions of a second student who is looking at a real-world model | Using geometry language |
| Activity 2 | VT FlyStick | The students, interacting with the tangible interface, generate various conic curves in the virtual world (circle, parabola, ellipse and hyperbola) | Understanding conic sections |

| Kind of Question | Questions about Virtual Worlds (Session 1: Introduction to Virtual Worlds) | Yes | No |
|---|---|---|---|
| Previous Knowledge | Did you know about virtual worlds previously? | 17 (65.4%) | 9 (34.6%) |
| | Have you ever played with virtual worlds before? | 15 (57.7%) | 11 (42.3%) |
| Easy to Use and Interactivity | Did you find it easy to interact in a virtual world? | 25 (96.2%) | 1 (3.8%) |
| | Do you think that it is difficult to change the properties of objects in a virtual world? | 10 (38.5%) | 16 (61.5%) |
| | Did you find it difficult to do collaborative activities in a virtual world? | 4 (15.4%) | 22 (84.6%) |
| Useful to Learn Mathematics and Geometry | Do you think that virtual worlds help you to understand X, Y, Z coordinates? | 19 (73.1%) | 7 (26.9%) |
| | Do you think that virtual worlds can help you to learn Mathematics? | 22 (84.6%) | 4 (15.4%) |
| Motivation | Did you like the session about virtual worlds? | 25 (96.2%) | 1 (3.8%) |
| | Would you like to perform activities using virtual worlds in class? | 25 (96.2%) | 1 (3.8%) |
| | Would you like to perform activities using virtual worlds at home? | 18 (69.2%) | 8 (30.8%) |
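Each percentage in the questionnaire table is the count divided by the 26 respondents, rounded to one decimal place. The short sketch below reproduces three rows from their counts; the helper name `percentage` and the short row labels are illustrative choices, not part of the study.

```python
def percentage(count: int, total: int) -> float:
    """Share of respondents as a percentage, rounded to one decimal place."""
    return round(100 * count / total, 1)


# (yes, no) counts copied from three rows of the questionnaire table
responses = {
    "knew virtual worlds previously": (17, 9),
    "easy to interact in a virtual world": (25, 1),
    "would use virtual worlds at home": (18, 8),
}

for label, (yes, no) in responses.items():
    total = yes + no  # every row should add up to the 26 respondents
    print(f"{label}: yes {percentage(yes, total)}%, no {percentage(no, total)}% (n = {total})")
```

Checking that every row sums to the same number of respondents is a quick way to catch transcription errors in a table of this kind.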

| Questions about Usability and User Experience (Session 2: Usability Survey) | Yes | No |
|---|---|---|
| Did you find the tangible elements easy to use? | 8 (61.5%) | 5 (38.5%) |
| Is Virtual Touch easy to use? | 9 (69.2%) | 4 (30.8%) |
| Was it quick to learn how to use the system? | 11 (84.6%) | 2 (15.4%) |
| Did you feel comfortable using Virtual Touch? | 10 (76.9%) | 3 (23.1%) |
| Does Virtual Touch facilitate group work or teamwork? | 11 (84.6%) | 2 (15.4%) |
| Did you need help or assistance from the teacher? | 2 (15.4%) | 11 (84.6%) |
| Was the simulation of the activities in the virtual world too complex? | 5 (38.5%) | 8 (61.5%) |
| Was the effort needed to solve the activities very high? | 1 (7.7%) | 12 (92.3%) |

| Semi-Structured Interview with the Teacher | Answers |
|---|---|
| Have you ever played with virtual worlds before? | No |
| Did you find the Virtual Touch system interesting? | Yes |
| Did you need technical support to use Virtual Touch? | Yes |
| Do you think that virtual worlds could improve the teaching of Mathematics? | Yes |
| Did you find the tangible interface difficult to use? | No |
| Did it take a lot of effort to create training activities in the virtual world? | Yes |
| In your opinion, the major advantages of the Virtual Touch system are… | The tangible interface made it possible to recreate movements that did not require great accuracy in the virtual world, in a way that was more comfortable and closer to the student. |
| In your opinion, the major disadvantages of the Virtual Touch system are… | |
| Do you think the student may be distracted in the virtual world? | Yes |
| Rate the sessions | |
| Assessment of “Virtual Touch FlyStick” | |
| Assessment of “Virtual Touch PrimBox” | |

| Result | Control Group | Experimental Group |
|---|---|---|
| Sample size | n = 29 | m = 30 |
| Arithmetic average of results | X = 74.5 | Y = 100 |
| Arithmetic average on efficiency | X = 106.77 | Y = 113.28 |
| Variance | S1 = 5403.07 | S2 = 1708.7 |
| Standard deviation | SD1 = 73.50 | SD2 = 41.33 |
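The standard deviation row follows from the variance row, since the standard deviation is the square root of the variance. The sketch below recomputes it from the reported variances and checks agreement with the reported values to within 0.01; the variable and function names are illustrative.

```python
import math

# Values copied from the results table
reported_variance = {"control": 5403.07, "experimental": 1708.7}
reported_sd = {"control": 73.50, "experimental": 41.33}


def sd_from_variance(variance: float) -> float:
    """Standard deviation is the square root of the variance."""
    return math.sqrt(variance)


for group, variance in reported_variance.items():
    computed = sd_from_variance(variance)
    matches = abs(computed - reported_sd[group]) < 0.01
    print(f"{group}: computed SD = {computed:.4f}, "
          f"reported SD = {reported_sd[group]:.2f}, agrees within 0.01: {matches}")
```

The recomputed values differ from the table only in the third decimal place, which is consistent with the reported figures having been cut to two decimals.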

| Strong Points | Weak Points |
|---|---|
| Meaningful learning | Distraction in some students |
| Active learning | Need for good equipment (high bandwidth, a computer with a good graphics card, configured ports to connect to the server) |
| Reduced impulsivity | The novelty factor needs to be evaluated over a longer period |
| Improved motivation | Need to create and adapt all activities and teaching materials |
| | Need to train teachers |
| | Difficulty controlling the behavior of avatars |

© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).


Guerrero, G.; Ayala, A.; Mateu, J.; Casades, L.; Alamán, X. Integrating Virtual Worlds with Tangible User Interfaces for Teaching Mathematics: A Pilot Study. Sensors 2016, 16, 1775. https://doi.org/10.3390/s16111775
