Developing Mixed Reality Educational Applications: The Virtual Touch Toolkit

Abstract

1. Introduction: State of the Art

1.1. Virtual Worlds

| Cat. * | Area | Project | Target | Virtual World | Website or Reference | Timing |
|---|---|---|---|---|---|---|
| C | Foreign language learning | Kamimo Project | Higher education | Second Life | [10] | 2007–2008 |
| C | Foreign language learning | AVALON Project | Language teachers/learners | Second Life | [11] | 2009–2011 |
| C | Foreign language learning | AVATAR Project | Secondary school teachers | Second Life | [12] | 2009–2011 |
| C | Foreign language learning | NIFLAR | Secondary education | Second Life, OpenSim | [13] | 2009–2011 |
| C | Foreign language learning | TILA Project | Secondary education | OpenSim | [14] | 2013–2015 |
| C | Foreign language learning | CAMELOT Project | Language educators | Second Life | [15] | 2013–2015 |
| S | Art, creativity | ST.ART | Secondary school | OpenSim | [16] | 2009–2011 |
| E | Mathematics and geometry | TALETE Project | Secondary school | Unity | [17] | 2011–2013 |
| E | Programming | V-LEAF | Secondary school | OpenSim | [18] | 2011 |
| E | Programming | OpenSim & Scratch4OS | Secondary school | OpenSim | [19] | 2014 |
| S,E | Science, biology, chemistry, history | The River City | Middle school | Active Worlds | [20] | 2002 |
| S,E | Science, biology, chemistry, history | EcoMUVE | Middle school | Unity | [21] | 2010 |
| E | Collaborative, social skills | The Vertex Project | Primary school (K-12) | Active Worlds | [22] | 2000–2002 |
| E | Collaborative, social skills | Euroland | Primary and secondary | Active Worlds | [23] | 1999–2000 |

- Several educational levels: primary school, secondary school and university.

- Several subject areas: mathematics, programming, etc.

- Several methodologies: Machinima, problem solving, etc.

- Several virtual world environments: Second Life, OpenSim and Active Worlds.

1.2. Tangible Interfaces in Education

- Better understanding of complex structures through direct manipulation.

- Learning is more effective and natural.

- Tangible interfaces facilitate active participation and reflection.

- Accessible learning is enabled for students with disabilities and students with special educational needs.

- Collaborative learning is promoted through shared spaces that reinforce interaction among students.

- The results of the activities are more immediate, visible and palpable.

- Physical restrictions of the objects can prevent or reduce errors (e.g., programming blocks that do not fit together because they cannot be combined).

- The activities with tangible interfaces strengthen motor skills.

1.3. Mixed Reality

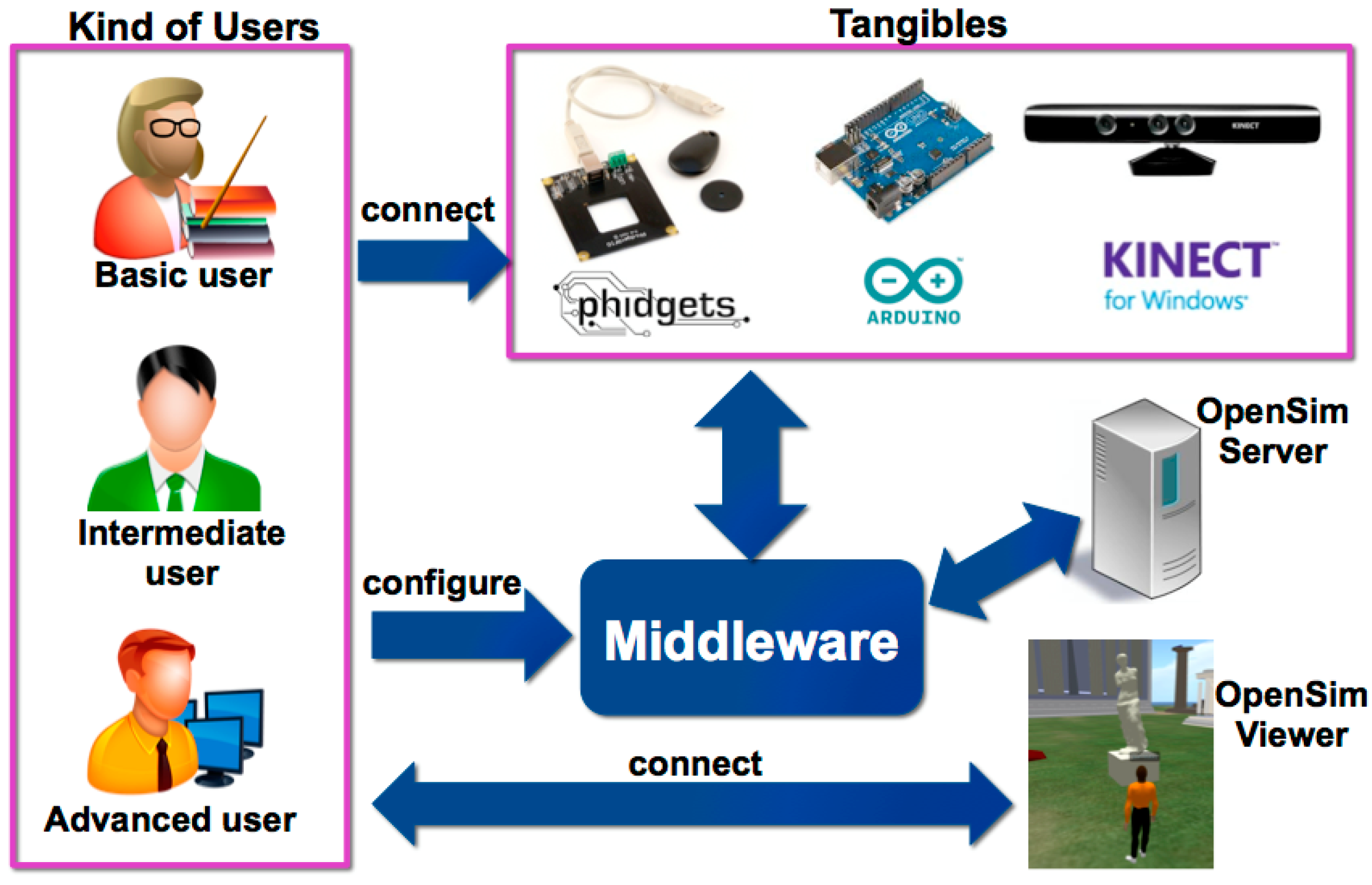

2. Virtual Touch: A Toolkit for Developing Mixed Reality Educational Applications

2.1. Virtual Touch Architecture

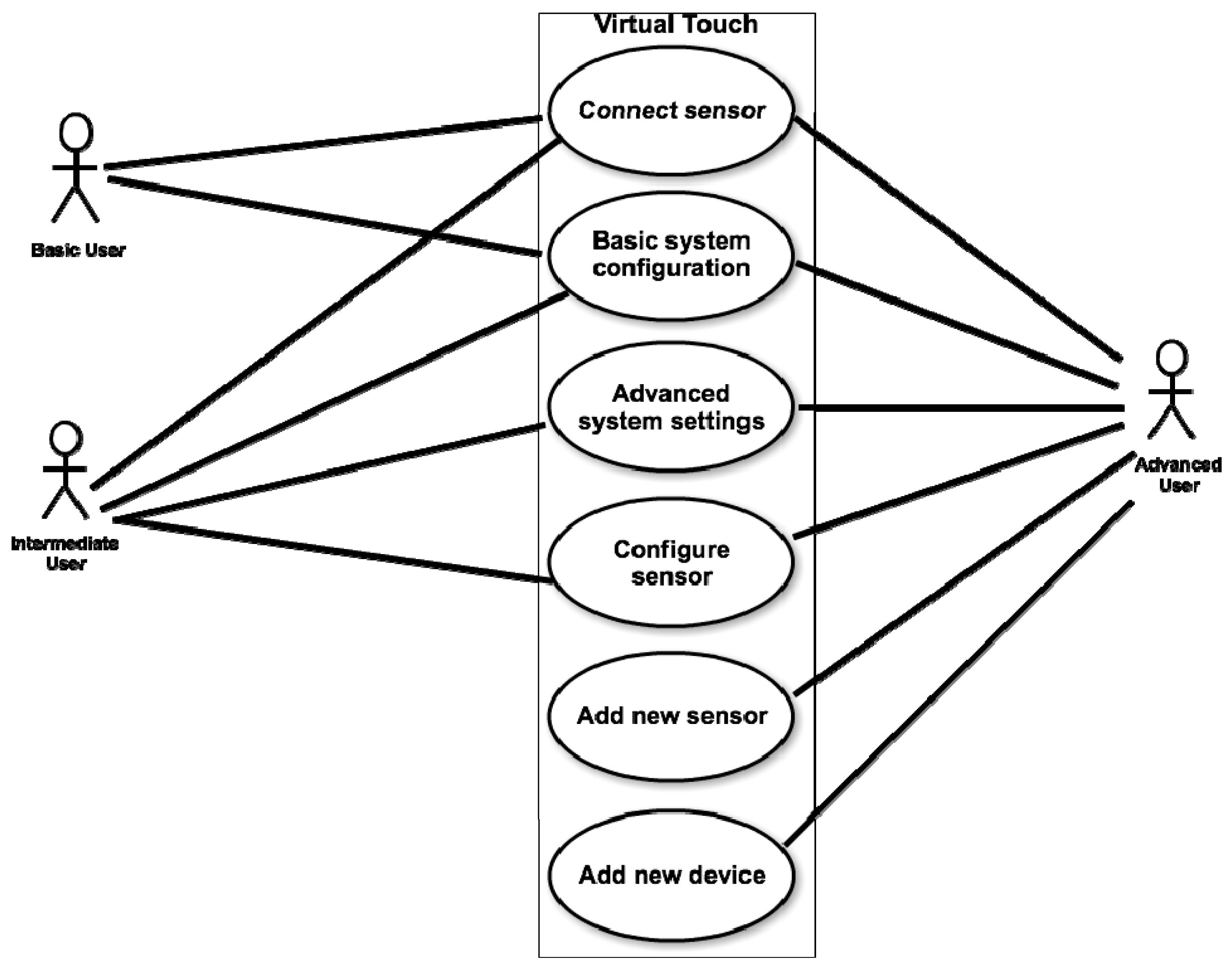

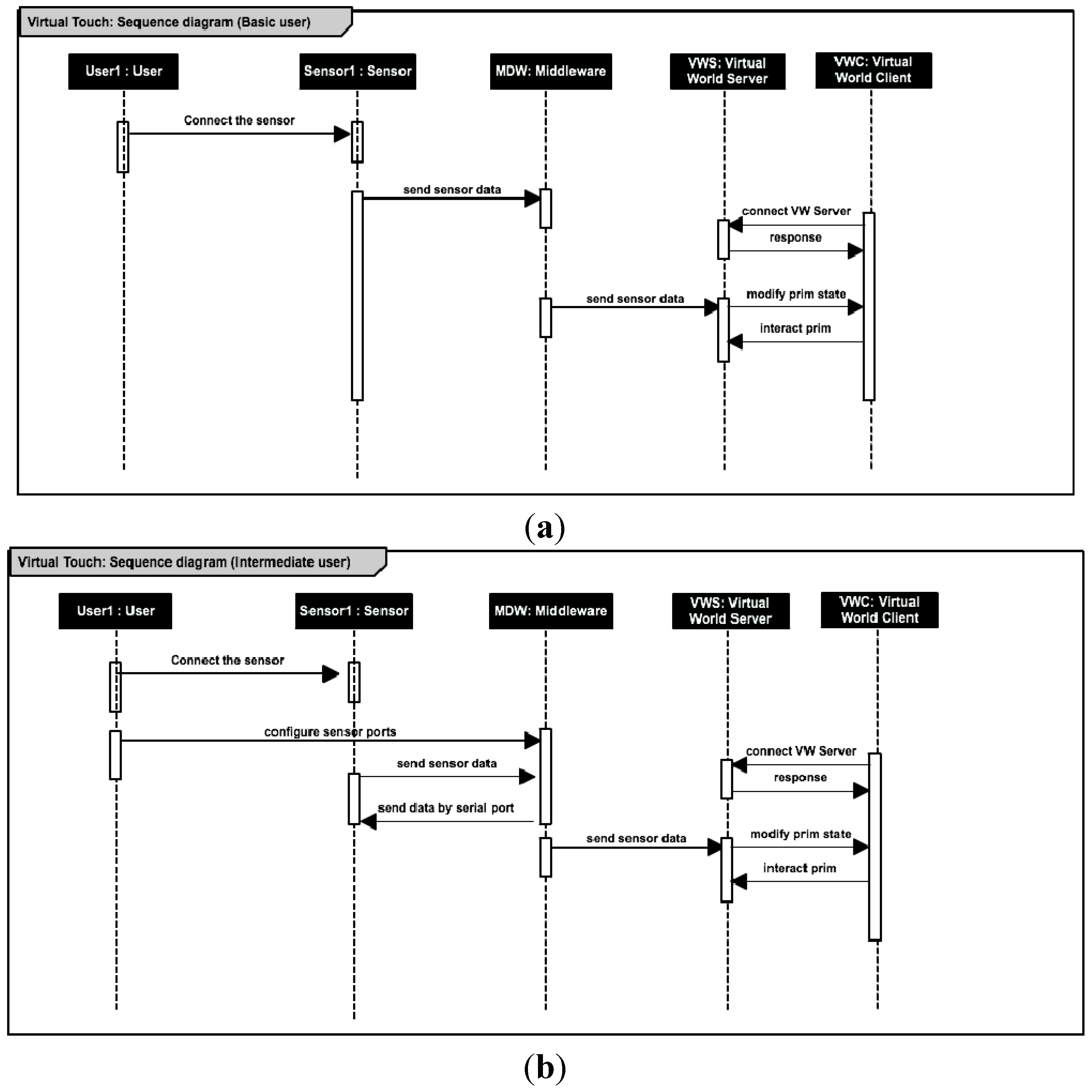

- Basic user: a teacher with basic computer knowledge who only needs to know how to launch the educational programs provided to her, how to connect the tangible elements needed for the activity (among those available in the toolkit) to the system, and how to perform a basic configuration of some parameters of the educational application.

- Intermediate user: a teacher with some knowledge of computers who is able, for example, to create macros in spreadsheets, to create objects and edit scripts in virtual worlds, or to manipulate hardware elements in toolkits like Lego Mindstorms. This user may use Virtual Touch to create new educational applications, building her own virtual world and connecting it to the real world using the tangible elements available in the toolkit.

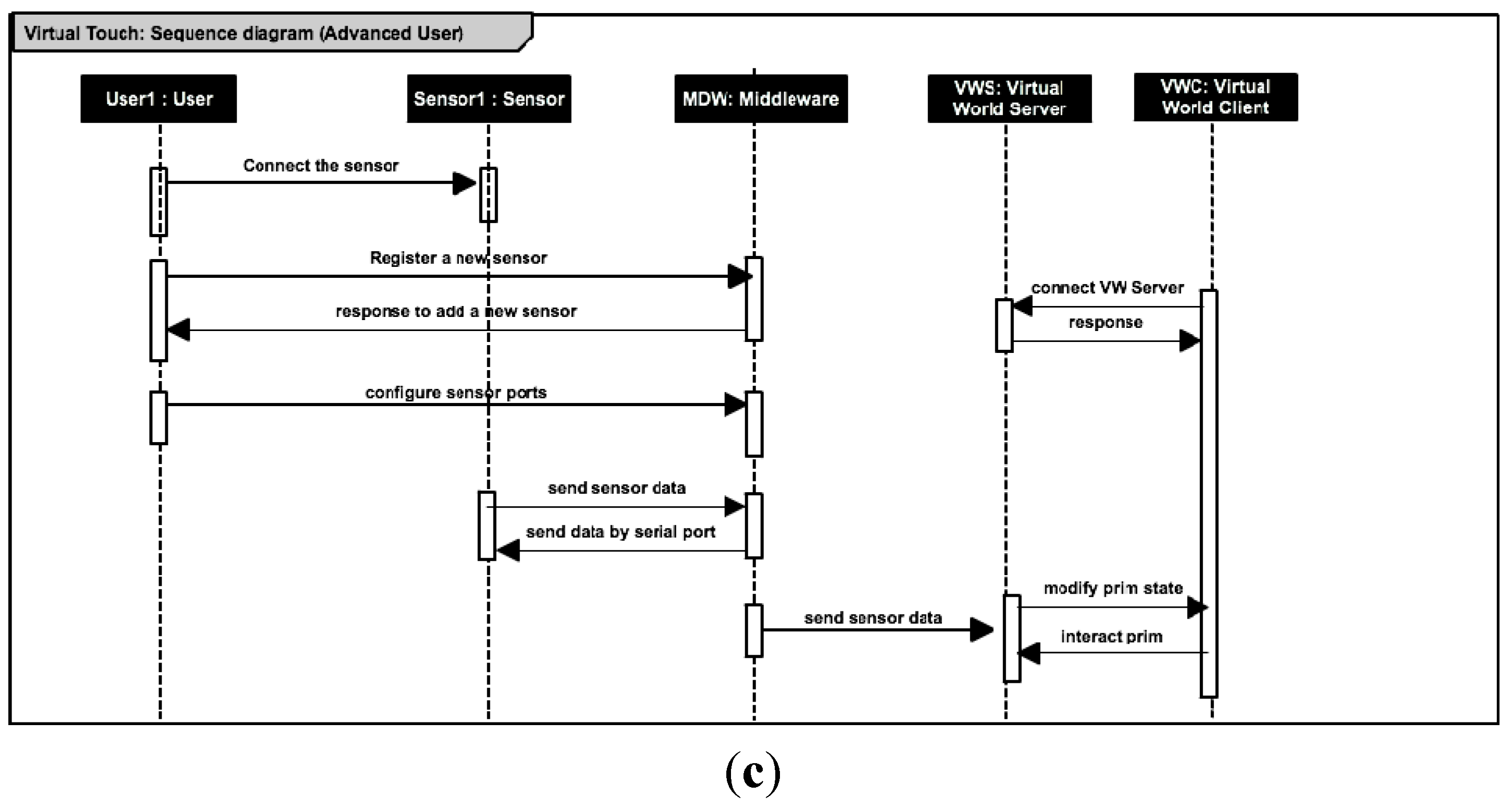

- Advanced user: a teacher with extensive knowledge of programming and electronics, who is able to add new modules to the system. This kind of user may add new elements (for example, creating new tangible elements using sensors and actuators that are not present in the initial toolkit) or may use hardware technologies other than the three supported by the toolkit as supplied.

- A virtual world server.

- A virtual world client.

- A set of hardware elements: the tangible interaction devices.

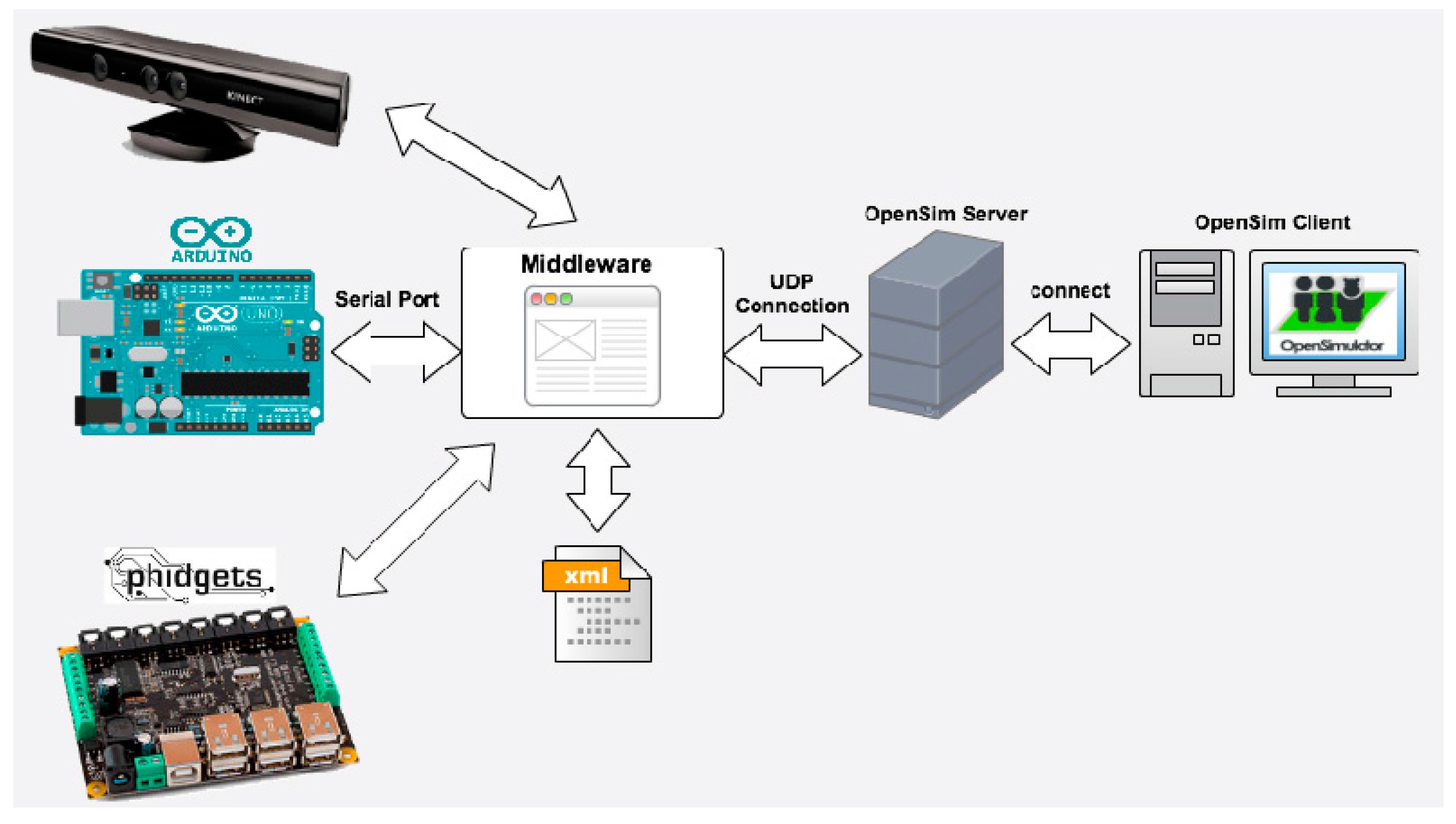

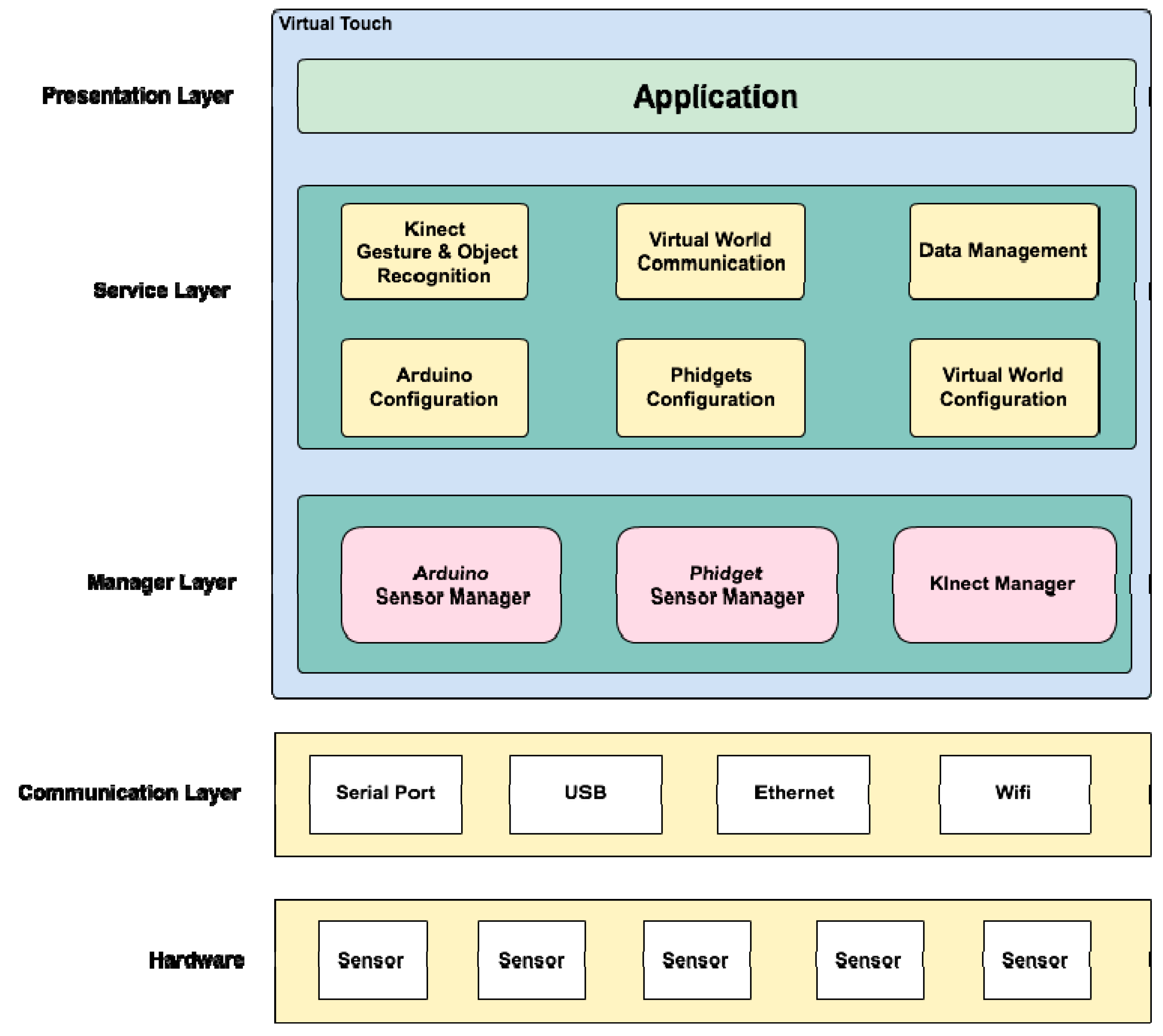

- Communication layer: this layer is the closest to the hardware, and therefore to the physical devices. It defines and manages the kind of connection that will be used, such as USB, WiFi, Ethernet, Bluetooth, etc.

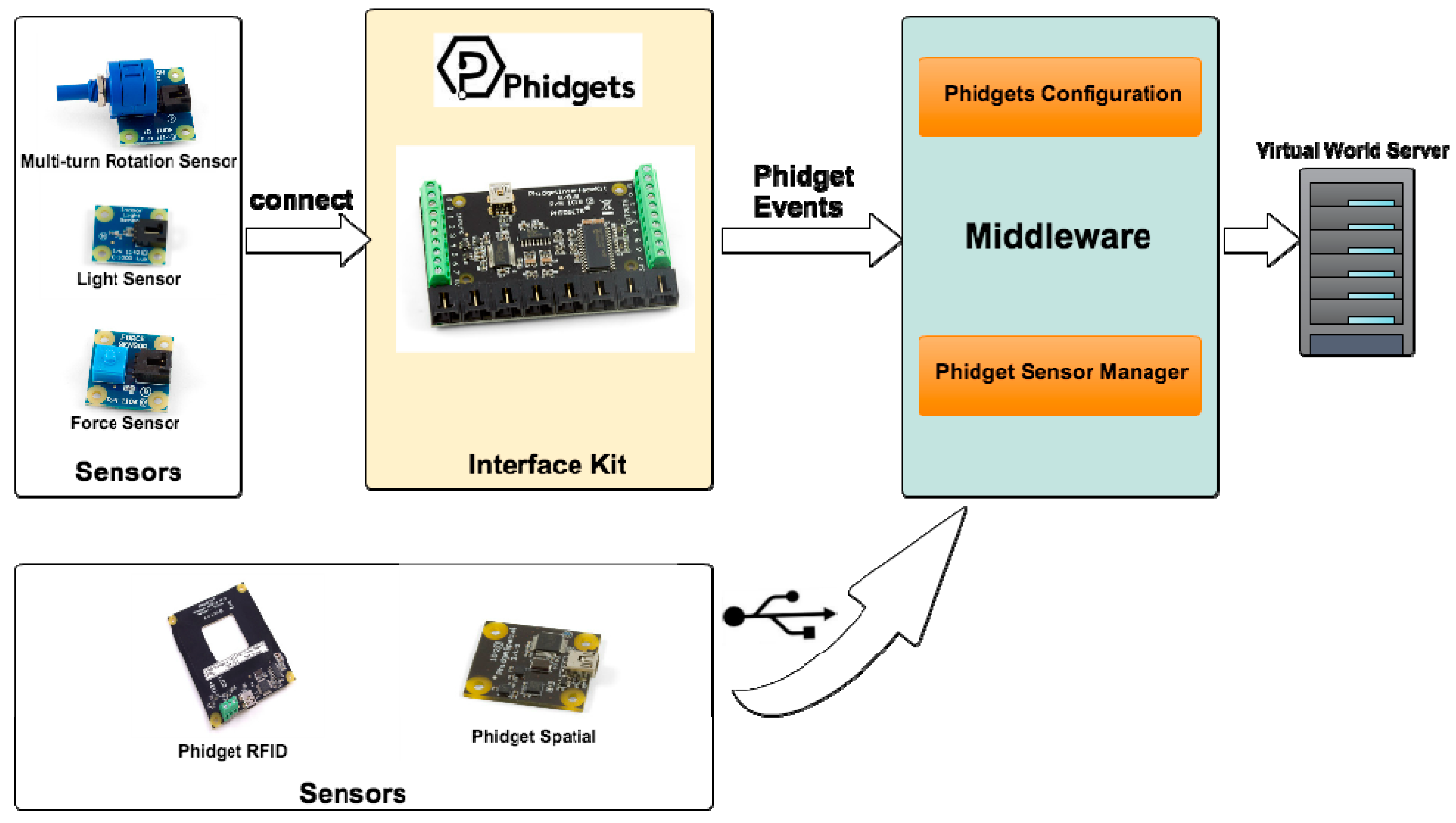

- Device management layer: this layer enables the interaction of the middleware with the three selected hardware technologies: Kinect, Arduino and Phidgets. It ensures proper communication between the middleware and the sensors and actuators that make up the tangible interfaces.

- Service layer: the following services are available in this layer:

  - Gesture and Object Recognition: this service is responsible for gesture recognition, voice recognition and shape recognition (it uses third-party libraries such as OpenCV).

  - Virtual World Communication: this service is responsible for sending and receiving messages to and from the OpenSim server. It manages the data captured by the different sensors and actuators connected to the Virtual Touch system.

  - Data Management: this service is responsible for data management and storage. In particular, it stores some activities and parameters in a MySQL database. For example, multiple choice questions and answers (for tests) may be stored here, using a persistence mechanism.

  - Configuration Services: this service is responsible for the configuration of the mixed reality system (for example, for specifying which OpenSim server, local or remote, is used). For elements using the Arduino or Phidgets technology, these services allow the configuration of the ports where the various sensors and actuators are connected.

- Presentation layer: this is the layer closest to the user, in charge of displaying the graphical information. It consists mainly of the virtual world engine, and allows the configuration of how the interaction with the tangible interfaces is shown in the virtual world.
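The layering described above can be sketched as a small pipeline. This is a minimal illustration under stated assumptions, not the toolkit's actual code: the names `SensorEvent`, `Config`, `DeviceManager` and `VirtualWorldService`, the server address, the port labels and the JSON message format are all hypothetical, and the transport is replaced by an in-memory list so that the sketch runs without an OpenSim server or any hardware.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List
import json


@dataclass(frozen=True)
class SensorEvent:
    """A reading produced by a tangible device (hypothetical structure)."""
    technology: str   # "arduino", "phidgets" or "kinect"
    port: str         # port or channel the sensor is attached to
    value: float


@dataclass
class Config:
    """Configuration service: which server to use and which ports are wired."""
    opensim_url: str = "http://localhost:9000"            # assumed address
    ports: Dict[str, str] = field(default_factory=dict)   # port -> logical name


class DeviceManager:
    """Device management layer: forwards readings from configured ports."""

    def __init__(self, config: Config) -> None:
        self.config = config
        self.listeners: List[Callable[[str, SensorEvent], None]] = []

    def subscribe(self, listener: Callable[[str, SensorEvent], None]) -> None:
        self.listeners.append(listener)

    def on_raw_reading(self, technology: str, port: str, value: float) -> None:
        # Ignore readings from ports that were never configured.
        name = self.config.ports.get(port)
        if name is None:
            return
        event = SensorEvent(technology, port, value)
        for listener in self.listeners:
            listener(name, event)


class VirtualWorldService:
    """Service layer: turns sensor events into messages for the virtual world."""

    def __init__(self, config: Config, send: Callable[[str, str], None]) -> None:
        self.config = config
        self.send = send  # transport supplied by the communication layer

    def handle(self, name: str, event: SensorEvent) -> None:
        payload = json.dumps(
            {"sensor": name, "tech": event.technology, "value": event.value}
        )
        self.send(self.config.opensim_url, payload)


# Wire the layers together with an in-memory transport.
outbox: List[tuple] = []
config = Config(ports={"A0": "page_sensor_1"})
devices = DeviceManager(config)
service = VirtualWorldService(config, lambda url, body: outbox.append((url, body)))
devices.subscribe(service.handle)

devices.on_raw_reading("arduino", "A0", 0.82)   # configured port: forwarded
devices.on_raw_reading("arduino", "A5", 0.10)   # unconfigured port: dropped
```

Passing the transport in as a function keeps the service layer independent of the connection type, which mirrors the separation between the communication layer and the layers above it.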

2.2. Virtual Touch Toolkit

3. Case Studies

| Architecture and Toolkit | Name | Tangibles and Devices | Virtual World Engine | Educational Application | Reference |
|---|---|---|---|---|---|
| Virtual Touch | Cubica | RFID reader and cubes with RFID tags inside. Wooden box simulating a vector. | OpenSimulator | Learning sorting algorithms for high school students | [50] |
| Virtual Touch | Virtual Touch Eye | Kinect device, wooden figures (square, triangle, pentagon) | OpenSimulator | Catalan language learning for pupils with special educational needs | [51] |
| Virtual Touch | Virtual Touch Book | Cardboard book with light sensors using Arduino. | OpenSimulator | Learning Catalan language and learning Classical Greece culture | [52] |

- Encourage the active participation of students.

- Adapt to different levels.

- Encourage interaction between students and teacher.

- Allow the development of simulated training spaces.

- Provide immediate feedback.

3.1. Cubica

- A first version of the Virtual Touch middleware, which allows the easy creation of mixed reality applications.

- A concrete tangible interface, consisting of a series of cubes that can be physically manipulated, and whose manipulation has an immediate effect in the virtual world. This tangible interface becomes part of the toolkit available for future applications.

- A specific educational application, including a virtual world (the “Algorithms Island”), with a set of activities, many of which make use of the tangible interface. This application also becomes part of the toolkit available to third parties, either for using it directly “as it is” or for modifying it to create other educational applications.

- A case study of the above in a secondary school.
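How an arrangement of cubes in the box might be checked during a sorting activity can be sketched as follows. This is an illustration only: the tag identifiers, the `TAG_VALUES` mapping and the choice of bubble sort are hypothetical, since the text here does not say which sorting algorithms the activities cover or how tag readings are delivered.

```python
from typing import Dict, List, Sequence

# Hypothetical mapping from RFID tag identifiers to the number on each cube.
TAG_VALUES: Dict[str, int] = {"tag-a": 7, "tag-b": 2, "tag-c": 9, "tag-d": 4}


def read_vector(tags_in_slots: Sequence[str]) -> List[int]:
    """Translate the tags detected in the box slots (left to right) into values."""
    return [TAG_VALUES[tag] for tag in tags_in_slots]


def is_sorted(values: Sequence[int]) -> bool:
    """True if the values are in non-decreasing order."""
    return all(a <= b for a, b in zip(values, values[1:]))


def is_valid_bubble_swap(before: Sequence[int], after: Sequence[int]) -> bool:
    """True if `after` is `before` with one adjacent, out-of-order pair swapped."""
    if len(before) != len(after):
        return False
    changed = [i for i, (x, y) in enumerate(zip(before, after)) if x != y]
    if len(changed) != 2 or changed[1] != changed[0] + 1:
        return False
    i, j = changed
    return before[i] > before[j] and after[i] == before[j] and after[j] == before[i]


start = read_vector(["tag-a", "tag-b", "tag-c", "tag-d"])   # cubes as first placed
move = read_vector(["tag-b", "tag-a", "tag-c", "tag-d"])    # student swaps two cubes
```

Here `is_valid_bubble_swap(start, move)` accepts the student's move because the swapped pair was adjacent and out of order, while a swap of two cubes that were already in order would be rejected.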

3.2. Virtual Touch Eye

- A second version of the Virtual Touch middleware that, in addition to correcting and optimizing different aspects of the interaction with virtual worlds, added the possibility of incorporating tangible interfaces based on image recognition.

- A set of libraries and tools that enable the development of tangible interfaces based on image recognition. Thus, this version of the middleware supported two technologies for the development of tangible interfaces: Phidgets and Kinect.

- A set of tangible elements in the form of geometric figures, so that the physical handling of the figures has immediate effects in the virtual world. This set of tangible items (and the libraries that incorporate their handling into the virtual world) is available in the Virtual Touch toolkit, and can be used for the development of future educational mixed reality applications.

- An example educational application using mixed reality, available within the Virtual Touch toolkit for use “as it is” or for adaptation to new (similar) applications.

- A case study of the use of mixed reality systems in real environments.
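One simple way to tell the wooden figures apart, once an outline has been extracted from the camera image, is to count its corners. The sketch below is an assumption-laden illustration in plain Python: it takes the outline as an ordered list of points (the extraction step, which in the toolkit relies on Kinect and image-recognition libraries, is not shown), and the names `count_corners`, `classify` and `SHAPES` are hypothetical.

```python
from typing import List, Optional, Tuple

Point = Tuple[float, float]

# The three wooden figures listed for Virtual Touch Eye, keyed by corner count.
SHAPES = {3: "triangle", 4: "square", 5: "pentagon"}


def count_corners(contour: List[Point], tolerance: float = 1e-6) -> int:
    """Count the vertices of a closed polygon, skipping points on straight edges."""
    n = len(contour)
    corners = 0
    for i in range(n):
        (x0, y0), (x1, y1), (x2, y2) = contour[i - 1], contour[i], contour[(i + 1) % n]
        # The cross product is zero when the three points are collinear.
        cross = (x1 - x0) * (y2 - y1) - (y1 - y0) * (x2 - x1)
        if abs(cross) > tolerance:
            corners += 1
    return corners


def classify(contour: List[Point]) -> Optional[str]:
    """Return the figure name, or None if the outline matches no known figure."""
    return SHAPES.get(count_corners(contour))


# A square outline sampled with extra points along its edges.
outline = [(0, 0), (1, 0), (2, 0), (2, 1), (2, 2), (1, 2), (0, 2), (0, 1)]
figure = classify(outline)
```

Outlines from a real camera are noisy, so a practical version would first simplify the contour to a polygon before counting corners; this sketch assumes that step has already happened.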

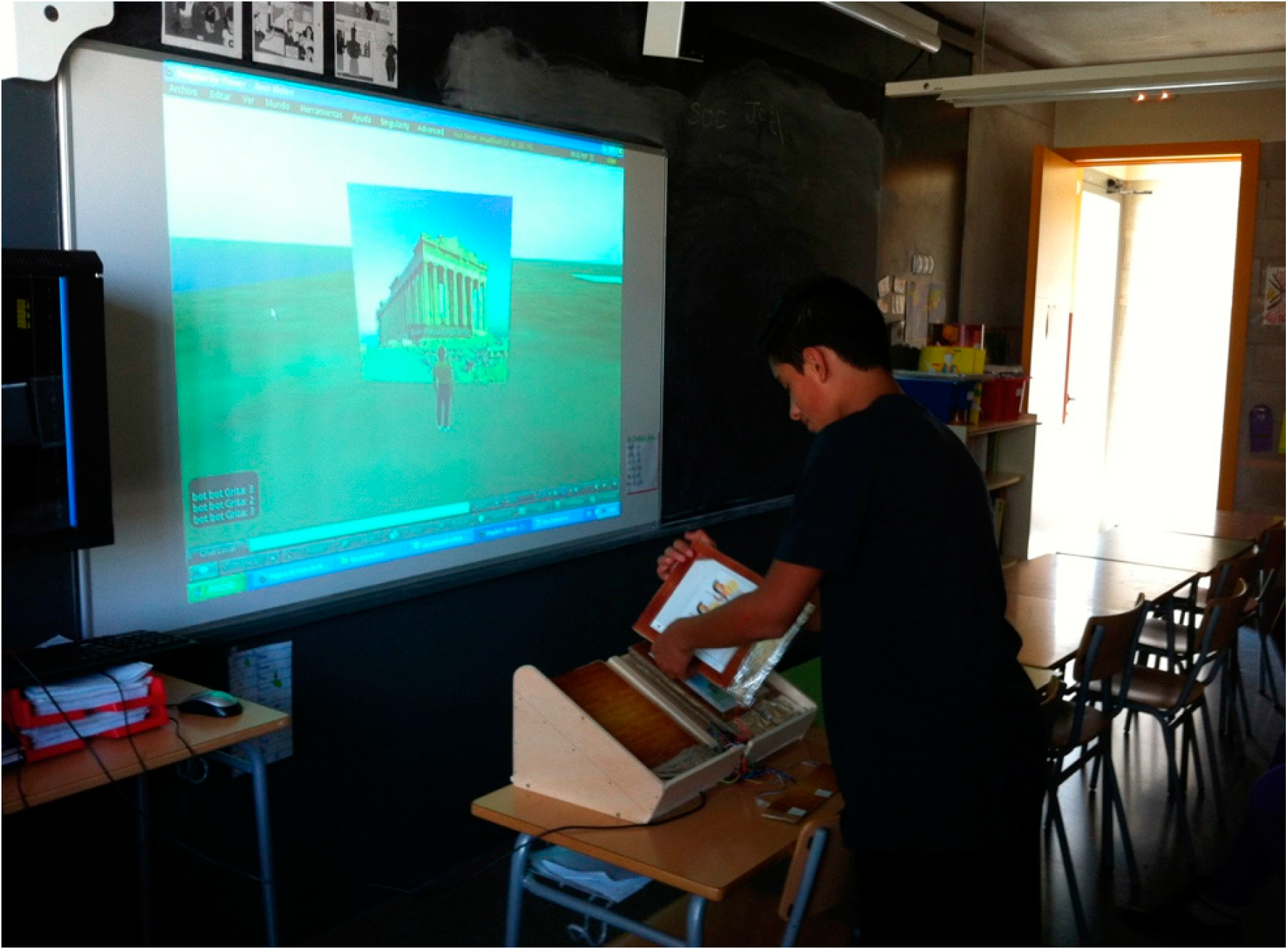

3.3. Virtual Touch Book

- A third version of the Virtual Touch middleware that, besides correcting and optimizing different aspects of the interaction with virtual worlds, adds the possibility of incorporating tangible interfaces that are based on Arduino technology.

- A set of libraries and tools that enable the development of tangible interfaces based on Arduino technology. Thus, the current version of the middleware supports three technologies for the development of tangible elements: Arduino, Phidgets and Kinect.

- A tangible element in the form of a traditional book, which interacts with the virtual world so that the activities in the virtual world are triggered depending on which page of the book is being studied. The book is available as part of the Virtual Touch toolkit, and can be used for the development of future mixed reality educational applications.

- An example educational application (the Ancient Greece Island) that uses mixed reality, available within the Virtual Touch toolkit for use “as it is” or for adaptation into a new (similar) application.

- A case study of the use of mixed reality systems in real environments.
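The idea of triggering activities according to the open page can be sketched as follows. The sketch rests on assumptions that are not in the text: one light sensor per page, readings normalised to the range 0 to 1, a sensor that is lit only while its page is open, and a hypothetical `PAGE_ACTIVITIES` mapping. Readings are passed in as plain lists, so no Arduino is needed to run it.

```python
from typing import Dict, Optional, Sequence

LIGHT_THRESHOLD = 0.5  # assumed reading above which a sensor counts as lit

# Hypothetical mapping from book pages to activities in the virtual world.
PAGE_ACTIVITIES: Dict[int, str] = {1: "intro", 2: "vocabulary", 3: "quiz"}


def current_page(readings: Sequence[float],
                 threshold: float = LIGHT_THRESHOLD) -> Optional[int]:
    """Return the 1-based page whose sensor is brightest, or None if none is lit."""
    if not readings:
        return None
    brightest = max(range(len(readings)), key=lambda i: readings[i])
    if readings[brightest] < threshold:
        return None
    return brightest + 1


class BookWatcher:
    """Reports an activity name only when the detected page changes."""

    def __init__(self) -> None:
        self.page: Optional[int] = None

    def update(self, readings: Sequence[float]) -> Optional[str]:
        page = current_page(readings)
        if page == self.page:
            return None  # same page as before: nothing to trigger
        self.page = page
        return PAGE_ACTIVITIES.get(page) if page is not None else None


watcher = BookWatcher()
samples = ([0.1, 0.1, 0.1], [0.1, 0.9, 0.2], [0.1, 0.8, 0.2], [0.1, 0.2, 0.7])
events = [watcher.update(sample) for sample in samples]
```

Reporting only on page changes avoids re-triggering the same activity on every sensor poll while the reader stays on one page.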

4. Conclusions and Future Work

Acknowledgments

Author Contributions

Conflicts of Interest

References

- Dickey, M.D. Teaching in 3D: Pedagogical affordances and constraints of 3D virtual worlds for synchronous distance learning. Distance Educ. 2003, 24, 105–121.

- Vygotsky, L.S. Mind in Society: The Development of Higher Psychological Processes; Cole, M., John-Steiner, V., Scribner, S., Souberman, E., Eds.; Harvard University Press: Cambridge, MA, USA, 1978.

- Dede, C. The evolution of constructivist learning environments: Immersion in distributed, virtual worlds. Educ. Technol. 1995, 35, 46–52.

- Jonassen, D.H. Designing constructivist learning environments. In Instructional-Design Theories and Models: A New Paradigm of Instructional Theory; Reigeluth, C.M., Ed.; Lawrence Erlbaum Associates: Mahwah, NJ, USA, 1999; pp. 215–239.

- Babu, S.; Suma, E.; Barnes, T.; Hodges, L.F. Can immersive virtual humans teach social conversational protocols? In Proceedings of the IEEE Virtual Reality Conference, Charlotte, NC, USA, 10–14 March 2007; pp. 10–14.

- Peterson, M. Learning interaction management in an avatar and chat-based virtual world. Comput. Assist. Lang. Learn. 2006, 19, 79–103.

- Annetta, L.A.; Holmes, S. Creating presence and community in a synchronous virtual learning environment using avatars. Int. J. Instr. Technol. Distance Learn. 2006, 3, 27–44.

- Hew, K.F.; Cheung, W.S. Use of three-dimensional (3-D) immersive virtual worlds in K-12 and higher education settings: A review of the research. Br. J. Educ. Technol. 2010, 41, 33–65.

- Dalgarno, B.; Lee, M.J.W. Exploring the relationship between afforded learning tasks and learning benefits in 3D virtual learning environments. In Proceedings of the 29th ASCILITE Conference, Wellington, New Zealand, 25–28 November 2012; pp. 236–245.

- Creelman, A.; Petrakou, A.; Richardson, D. Teaching and learning in Second Life—Experience from the Kamimo project. In Proceedings of the Online Information 2008 Conference: Information at the Heart of the Business, London, UK, 2–4 December 2008; pp. 85–89.

- AVALON Project. Available online: http://avalonlearning.eu/ (accessed on 24 April 2015).

- AVATAR Project. Available online: http://www.avatarproject.eu/ (accessed on 24 April 2015).

- NIFLAR Project. Available online: http://niflar.eu/ (accessed on 24 April 2015).

- TILA Project. Available online: http://www.tilaproject.eu/ (accessed on 24 April 2015).

- CAMELOT Project. Available online: http://camelotproject.eu/ (accessed on 24 April 2015).

- ST.ART Project. Available online: http://www.startproject.eu/ (accessed on 24 April 2015).

- TALETE Project. Available online: http://www.taleteproject.eu/ (accessed on 24 April 2015).

- Rico, M.; Martínez-Muñoz, G.; Alaman, X.; Camacho, D.; Pulido, E. A Programming Experience of High School Students in a Virtual World Platform. Int. J. Eng. Educ. 2011, 27, 52–60.

- Pellas, N. The development of a virtual learning platform for teaching concurrent programming languages in Secondary education: The use of Open Sim and Scratch4OS. J. E-Learn. Knowl. Soc. 2014, 10, 129–143.

- The River City Project. Available online: http://muve.gse.harvard.edu/rivercityproject/ (accessed on 24 April 2015).

- EcoMUVE. Available online: http://ecomuve.gse.harvard.edu/ (accessed on 24 April 2015).

- Bailey, F.; Moar, M. The Vertex Project: Exploring the creative use of shared 3D virtual worlds in the primary (K-12) classroom. In Proceedings of the ACM SIGGRAPH 2002 Conference Abstracts and Applications, San Antonio, TX, USA, 21–26 July 2002; pp. 52–54.

- Ligorio, M.B.; van Veen, K. Constructing a successful cross-national virtual learning environment in primary and secondary education. AACE J. 2006, 14, 103–128.

- Sheehy, K. Virtual worlds, inclusive education The TEALEAF framework. In Controversial Issues in Virtual Education: Perspectives on Virtual Worlds; Sheehy, K., Ferguson, R., Clough, G., Eds.; Nova Science Publishers: New York, NY, USA, 2010; pp. 1–15.

- Biever, C. Web removes social barriers for those with autism. New Sci. 2007, 2610, 26–27.

- Fitzmaurice, G.; Ishii, H.; Buxton, W. Bricks: Laying the Foundations for Graspable User Interfaces. In Proceedings of the CHI’95 SIGCHI Conference on Human Factors in Computing Systems, Denver, CO, USA, 7–11 May 1995; pp. 442–449.

- Ishii, H.; Ullmer, B. Tangible Bits: Towards Seamless Interfaces between People, Bits, and Atoms. In Proceedings of the CHI’97 the ACM SIGCHI Conference on Human factors in computing systems, Atlanta, Georgia, 22–27 March 1997; pp. 234–241.

- Papert, S. Mindstorms: Children, Computers, and Powerful Ideas; Basic Books, Inc.: New York, NY, USA, 1980.

- Stringer, M.; Toye, E.F.; Rode, J.; Blackwell, A. Teaching rhetorical skills with a tangible user interface. In Proceedings of the 2004 Conference on Interaction Design and Children: Building a Community, Lisbon, Portugal, 5–9 September 2004; pp. 11–18.

- Africano, D.; Berg, S.; Lindbergh, K.; Lundholm, P.; Nilbrink, F.; Persson, A. Designing tangible interfaces for children’s collaboration. In Proceedings of the CHI04 Extended Abstracts on Human Factors in Computing Systems, Vienna, Austria, 24–29 April 2004; pp. 853–886.

- Stanton, D.; Bayon, V.; Neale, H.; Ghali, A.; Benford, S.; Cobb, S.; Ingram, R.; O’Malley, C.; Wilson, J.; Pridmore, T. Classroom collaboration in the design of tangible interfaces for storytelling. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Seattle, WA, USA, 31 March–5 April 2001; pp. 482–489.

- Horn, M.S.; Jacob, R.J.K. Designing tangible programming languages for classroom use. In Proceedings of the 1st international conference on Tangible and embedded interaction, Baton Rouge, LA, USA, 15–17 February 2007; pp. 159–162.

- Gallardo, D.; Julia, C.F.; Jordà, S. TurTan: A tangible programming language for creative exploration. In Proceedings of the Third annual IEEE international workshop on horizontal human-computer systems, Amsterdam, the Netherlands, 2–3 October 2008; pp. 89–92.

- Wang, D.; Qi, Y.; Zhang, Y.; Wang, T. TanPro-kit: A tangible programming tool for children. In Proceedings of the 12th International Conference on Interaction Design and Children, New York, NY, USA, 24–27 June 2013; pp. 344–347.

- Ullmer, B.; Ishii, H.; Jacob, R. Tangible query interfaces: Physically constrained tokens for manipulating database queries. In Proceedings of the International Conference on Computer-Human Interaction, Crete, Greece, 22–27 June 2003; pp. 279–286.

- Langner, R.; Augsburg, A.; Dachselt, R. CubeQuery: Tangible Interface for Creating and Manipulating Database Queries. In Proceedings of the Ninth ACM International Conference on Interactive TableTops and Surfaces, Dresden, Germany, 16–19 November 2014; pp. 423–426.

- Girouard, A.; Solovey, E.T.; Hirshfield, L.M.; Ecott, S.; Shaer, O.; Jacob, R.J.K. Smart Blocks: A tangible mathematical manipulative. In Proceedings of the 1st international conference on Tangible and embedded interaction, Baton Rouge, Louisiana, 15–17 February 2007; pp. 183–186.

- Jordà, S. On stage: The reactable and other musical tangibles go real. Int. J. Arts Technol. 2008, 1, 268–287.

- Roberto, R.; Freitas, F.; Simões, F.; Teichrieb, V. A dynamic blocks platform based on projective augmented reality and tangible interfaces for educational activities. In Proceedings of the 2013 XV Symposium on Virtual and Augmented Reality (SVR), Cuiaba, Brazil, 28–31 May 2013; pp. 1–9.

- Muró, B.; Santana, P.; Magaña, M. Developing reading skills in Children with Down Syndrome through tangible interfaces. In Proceedings of the 4th Mexican Conference on Human-Computer Interaction, Mexico DF, Mexico, 3–5 October 2012; pp. 28–34.

- Jadán-Guerrero, J.; López, G.; Guerrero, L.A. Use of Tangible Interfaces to Support a Literacy System in Children with Intellectual Disabilities. In Ubiquitous Computing and Ambient Intelligence. Personalisation and User Adapted Services; Lecture Notes in Computer Science; Springer International Publishing: Gewerbestrasse, Switzerland, 2014; Volume 8867, pp. 108–115.

- Farr, W.; Yuill, N.; Raffle, H. Collaborative benefits of a tangible interface for autistic children. In Proceedings of the Human Factors in Computing Systems conference (CHI 2009), Boston, MA, USA, 4–9 April 2009; pp. 1–8.

- Milgram, P.; Kishino, F. A Taxonomy of Mixed Reality Visual Displays. IEICE Trans. Inf. Syst. 1994, 12, 1321–1329.

- Gardner, M.; Scott, J.; Horan, B. Reflections on the use of Project Wonderland as a mixed-reality environment for teaching and learning. In Proceedings of the ReLIVE 08 Conference, Milton Keynes, UK, 20–21 November 2008; pp. 130–138.

- Tolentino, L.; Birchfield, D.; Kelliher, A. SMALLab for Special Needs: Using a Mixed-Reality Platform to Explore Learning for Children with Autism. In Proceedings of the NSF Media Arts, Science and Technology Conference, Santa Barbara, CA, USA, 29–30 January 2009; pp. 1–8.

- Müller, D.; Ferreira, J.M. MARVEL: A mixed-reality learning environment for vocational training in mechatronics. In Proceedings of the Technology Enhanced Learning International Conference, (TEL’03), Milan, Italy, 20–21 November 2003; pp. 25–33.

- Fiore, A.; Mainetti, L.; Vergallo, R. An Innovative Educational Format Based on a Mixed Reality Environment: A Case Study and Benefit Evaluation. Lecture Notes of the Institute for Computer Sciences. Soc. Inf. Telecommun. Eng. 2014, 138, 93–100.

- Nikolakis, G.; Fergadis, G.; Tzovaras, D.; Strinzis, M.G. A Mixed Reality Learning Environment for Geometry Education. Lect. Notes Comput. Sci. 2004, 3025, 93–102.

- Billinghurst, M.; Kato, H.; Poupyrev, I. The magicbook—Moving seamlessly between reality and virtuality. IEEE Comput. Graph. Appl. 2001, 21, 6–8.

- Mateu, J.; Alamán, X. An Experience of Using Virtual Worlds and Tangible Interfaces for Teaching Computer Science. In Proceedings of the 6th Ubiquitous and Ambient Intelligence Conference, Vitoria-Gasteiz, Spain, 3–5 December 2012; pp. 478–485.

- Mateu, J.; Lasala, M.J.; Alamán, X. Tangible Interfaces and Virtual Worlds: A New Environment for Inclusive Education. In Proceedings of the 7th Ubiquitous and Ambient Intelligence conference, Guanacaste, Costa Rica, 2–6 December 2013; pp. 119–126.

- Mateu, J.; Lasala, M.J.; Alamán, X. Virtual Touch Book: A Mixed-Reality Book for Inclusive Education. In Proceedings of the 8th Ubiquitous and Ambient Intelligence conference, Belfast, Northern Ireland, 2–5 December 2014; pp. 124–127.

- Phidgets. Available online: http://www.phidgets.com/ (accessed on 24 April 2015).

- Kinect for Windows. Available online: http://www.microsoft.com/en-us/kinectforwindows/ (accessed on 24 April 2015).

- Arduino. Available online: http://www.arduino.cc/ (accessed on 24 April 2015).

- Dede, C. The future of multimedia: Bridging to virtual worlds. Educ. Technol. 1992, 32, 54–60.

- Deterding, S.; Dixon, D.; Khaled, R.; Nacke, L.E. From Game Design Elements to Gamefulness: Defining Gamification. In Proceedings of the 15th International Academic MindTrek Conference: Envisioning Future Media Environments, Tampere, Finland, 28–30 September 2011; pp. 9–15.

© 2015 by the authors; license MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/4.0/).


Mateu, J.; Lasala, M.J.; Alamán, X. Developing Mixed Reality Educational Applications: The Virtual Touch Toolkit. Sensors 2015, 15, 21760-21784. https://doi.org/10.3390/s150921760
