Abstract

Nature–inspired computing is a promising field of artificial intelligence. This area is mainly devoted to designing computational models based on natural phenomena to address complex problems. Nature provides a rich source of inspiration for designing smart procedures capable of becoming powerful algorithms. Many of these procedures have been successfully developed to treat optimization problems, with impressive results. Nonetheless, for these algorithms to reach their maximum performance, a proper balance between the intensification and the diversification phases is required. The intensification generates a local solution around the best solution by exploiting a promising region. Diversification is responsible for finding new solutions when the main procedure is trapped in a local region. This procedure is usually carryout by non-deterministic fundamentals that do not necessarily provide the expected results. Here, we encounter the stagnation problem, which describes a scenario where the search for the optimum solution stalls before discovering a globally optimal solution. In this work, we propose an efficient technique for detecting and leaving local optimum regions based on Shannon entropy. This component can measure the uncertainty level of the observations taken from random variables. We employ this principle on three well–known population–based bio–inspired optimization algorithms: particle swarm optimization, bat optimization, and black hole algorithm. The proposal’s performance is evidenced by solving twenty of the most challenging instances of the multidimensional knapsack problem. Computational results show that the proposed exploration approach is a legitimate alternative to manage the diversification of solutions since the improved techniques can generate a better distribution of the optimal values found. The best results are with the bat method, where in all instances, the enhanced solver with the Shannon exploration strategy works better than its native version. For the other two bio-inspired algorithms, the proposal operates significantly better in over 70% of instances.

1. Introduction

In computer science and mathematical optimization, nature–inspired methods are considered higher–level heuristics designed to find or generate potential solutions or to select a heuristic (partial search algorithm). These methods may provide near–optimal solutions in a limited amount of time. They properly work with incomplete or imperfect information or with bounded computation capacity [1]. These metaheuristic algorithms are inspired by interesting natural phenomena, such as the species’ selection and evolution mechanisms [2], swarm intelligence like the pathfinding skills of ants [3] and the attraction capabilities of fireflies [4], or the echolocation behavior of microbats [5]. Even physical [6] and chemical [7] laws have also been studied to design metaheuristic methods. During the last two decades, metaheuristics have attracted the scientific community’s attention due to their versatility and efficient performance when adapted to intractable optimization problems [8]. Different metaphors have guided the design of uncountable metaheuristic methods [9]. By grouping the metaheuristic algorithms according to their inspiration source, it is possible to detect at least the bio–inspired computation class, swarm intelligence methods, and genetic evolution. In this context, the literature has shown that when these techniques come from similar analogies, they often share common behavioral patterns, mainly in the initial parameter task and the intensification and diversification processes [10].

The evolutionary strategy of these bio–inspired techniques mainly depends on the appropriate balance between the diversification and the intensification phases. Diversification or exploration is the mechanism of entirely visiting new points of a search space. Intensification or exploitation is the process of refining those points within the neighborhood of previously visited locations to improve their solution quality [11]. When the diversification phase operates, the resolution process reduces accuracy to improve its capacity to generate new potential solutions. On the other hand, the intensification strategy allows refining existing solutions while adversely driving the process to locally optimal solutions.

Although metaheuristic algorithms present outstanding performance [12], they suffer from a common problem that arises when the exploration and exploitation process must be balanced, even more so when the number of variables in the problem increases. The greater the number of variables to handle, the more iterations will be necessary to find the best solution, thus converging the search in a specific area of the solution space [13]. In this context, all solutions are similar and can be considered good quality. Therefore, the iterative process that tries to improve existing solutions stagnates as it cannot continue to improve without leaving the feasible zone [1]. Different external methods have been used to solve this problem, such as random walk [14,15], roulette wheel [16,17,18], tabu list [19,20], among others. These mechanics allow the solution to be modified to move it from that space area to another. This movement alters the solutions randomly. The non–deterministic behavior that governs the update procedures lacks information to discriminate when to operate and which part of the region to visit.

In this work, we propose an efficient exploration module for bio–inspired algorithms based on Shannon entropy, a mathematical component to measure the average level of uncertainty inherent in observations from random variables [21,22,23]. The objective is to detect stagnation in local optimum, through a predictive entropy system, by computing entropy values of each variable. Next, solutions are moved toward a feasible region to find new and better solutions. This proposal is implemented and evaluated in three well–known population–based metaheuristics: particle swarm optimization, black hole algorithm, and bat optimization. The choice of these metaheuristics is supported by: (a) they work similarly because they belong to the same type of algorithms based on the swarm intelligence paradigm; (b) these population–based metaheuristics describe an iterative procedural structure to evolve their individuals (solutions), followed by many bio–inspired optimization algorithms; and (c) they have proven to be efficient optimization solvers for complex engineering problems. However, hybrid techniques such as [24,25] are also welcome. The Shannon diversification strategy runs as a background process and does not have an invasive role in the principal method.

Finally, to evidence that the proposed approach is a viable alternative that improves bio-inspired search algorithms, we evaluate it on a set of the most challenging instances of the Multidimensional Knapsack Problem (MKP), which is a widely recognized NP–complete optimization problem [26]. MKP was selected because it is suitable for Shannon’s diversification strategy, it has a wide range of practical applications [27], and it continues to be a hot topic in the operations research community [28,29]. Computational experiments run on 20 of the most challenging instances of the MKP taken from OR–Library [30]. Generated results are evaluated with descriptive analysis and statistical inference, mainly a hypothesis contrast by applying non–parametric evaluations.

The rest of this manuscript is structured as follows. Section 2 discusses the bibliographic search for relevant works in the field, fundamental concepts related to the diversification and the intensification phases, and it describes the information theory to measure uncertainty levels in random variables. Section 3 exposes the formal statement for the stagnation problem. Section 4 presents the developed solution, including the main aspects of the three bio–computing algorithms and the integration with the Shannon entropy. In Section 5, the experimental setup is detailed, while Section 5 discusses the main obtained results. Finally, conclusions and future work are included in Section 8.

2. Related Work

During the last two decades, bio-inspired computing methods have attracted the scientific community’s attention due to their remarkable ability to adapt search strategies to solve complex problems [31]. They are considered solvers devoted to tackling large instances of complex optimization problems [32,33]. These algorithms can be grouped according to their classification. Here, we can observe a division into nature-inspired vs. non-nature-inspired, population-based vs. single point search—or single solution—, dynamic vs. static objective function, single neighborhood vs. various neighborhood structures, and memory usage vs. memory-less methods, among many others [34,35,36].

Metaheuristics can usually provide near–optimal solutions in a limited time when no efficient problem–specific algorithm pre–exists [32]. After studying several metaheuristics, we can state that they operate similarly by combining local improvement procedures with higher-level strategies to explore the space of potential solutions efficiently [37,38,39]. During the last decades, metaheuristic algorithms have been analyzed by finding improved techniques capable of solving complex optimization problems [40]. This evolution has enabled them to merge theoretical principles from other science fields. For instance, Shannon entropy [21,22,23] has been used in a population distribution strategy based on historical information [41]. The study reveals a close relationship between the solutions’ diversity and the algorithm’s convergence. Also, ref. [42] proposed a multi–objective version of the particle swarm optimization algorithm enhanced by the Shannon entropy. The authors propose an evolution index of the algorithm to measure its convergence. Results show that the proposal is a viable alternative to boost swarm intelligence methods, even in mono–objective procedures. Another work that deals with metaheuristics enhanced by the Shannon entropy to treat multi–objective problems is [43]. Here, the uncertain information was employed to choose the optimum solution from the Pareto front. Shannon’s strategy was slightly lower than other proposed decision–making techniques.

Now, by considering smart alterations in search processes, we analyze [44], which proposes an entropy–assisted particle swarm optimizer for solving various instances of an optimization problem. This approach allows for adjusting the exploitation and exploration phases simultaneously. The reported computational experiments show that this work provides flexibility to the bio–inspired solver to self–organize its inner behaviors. Following this line of research, in [45], a hybrid algorithm between Shannon entropy and two swarm methods is introduced to improve the yield, memory, velocity, and, consequently, the move update. In [46], Shannon entropy is integrated into a chaotic genetic algorithm for taking data from solutions generated during the execution. This process runs in deterministic time series and operates from the initial population strategy. Another work that develops a similar proposal is detailed in [47]; here, the authors present a hybrid algorithm that includes the Shannon entropy in the evolving process of particle swarm optimization. The authors measure the convergence of solutions based on the distance between each solution and the best overall solution. They conclude that the algorithm can satisfactorily obtain outstanding results, especially regarding fitness evolution and convergence rate.

Following the integration between bio–inspired solvers and the Shannon entropy, we analyze [48], where the information component allows measuring the population diversity, the crossover probability, and the mutation operator to adjust the algorithm’s parameters adaptively. Results show that it is possible to generate a satisfactory global exploration, improve convergence speed, and maintain the algorithm’s robustness. The same approach is explored in [49]. Again, the convergence speed and the population diversity are key factors, balanced to improve the resolution procedure. Finally, in [50,51], the Shannon entropy allows handling the instance of the optimization problem. The first work solves the portfolio selection problem by minimizing the number of transactions, while the second computes the minimum loss and cost of the reactive power planning.

3. Preliminaries

This section briefly defines Shannon entropy and explains the stagnation problem.

3.1. Shannon Entropy

Claude Elwood Shannon proposed a mathematical component capable of measuring an information source’s uncertainty level based on the probability distribution followed by the data that compose it [22]. This component is called entropy, and it applies to different fields. In computer science, it is known as information entropy and is expressed by the following formula [22,23]:

where corresponds to a possible system event, and represents the probability that the event x occurs. The entropy of an information system is calculated from a set of possible events occurring in the system, together with their occurrence probability. The formula returns values between 0 to , corresponding to the entropy level of the evaluated system. Results close to 0 mean low entropy, so the system is considered more predictable. On the contrary, the higher the result, the higher the entropy, and the system is considered less predictable.

3.2. Stagnation Problem

Mono–objective optimization problems are commonly modeled as follows:

where is an n-dimensional vector that represents the set of decision variables—or solutions—, is the function to be optimized—or objective function—, and both and are the constraint sets of the problem.

The fitness is the output value obtained from evaluating a solution in the objective function. In a constrained optimization problem, the aim is to find the best fitness among several solutions which satisfy all constraints. Over the decades, many techniques have been developed to solve complex optimization problems. Recently, bio–inspired computing methods—or metaheuristics—have emerged as solvers able to generate fitness near to optimal values [9]. These nature–inspired mechanisms mimic the behavior of individuals in their environment based on survival of the fittest. This strategy allows altering solutions into new solutions that are potentially better than previous ones. The update is carried out by interactive search procedures, such as exploration and exploitation [52]. The exploration phase focuses on discovering new zones of non–visited heuristic spaces. The exploitation phase intensifies the local search process in an already visited promising zone. In both cases, the evolutionary operators are mathematical formulas abstracted from the natural phenomenon that inspires the algorithm, invoked for random time lapses.

Despite the outstanding performance of bio–inspired algorithms in solving complex problems, they present a common problem: local optima stagnation. The stagnation problem is formally defined as the premature convergence of an algorithm to a solution that is not necessarily the best or close to it [53]. Widely proven techniques treat this topic using internal characteristics, such as the memory of movements, prohibitive elements, and random restart, among several other methods. These procedures generally operate probabilistically, randomly alter solutions, and do not use the knowledge generated in the resolution process [52,53]. This issue presents an interesting problem: How much can we improve an algorithm if it internally considers the knowledge generated to recognize local optima stagnation and escape from it during the search process? Currently, the information produced by the optimization algorithms is not analyzed due to its uncertain and random nature. Nevertheless, in the next section, we will see that this is possible and useful for this problem.

4. Developed Solution

In this section, we detail how the Shannon entropy is applied to detect the stagnation issue on bio–computing methods and how it is also employed to escape from this problem.

4.1. Bio–Inspired Methods

We employ three population–based metaheuristic algorithms: particle swarm optimization, black hole algorithm, and bat optimization. These algorithms are among the most popular swarm intelligence methods. The first one is inspired by the behavior of birds flocking or fish schooling when they move from one place to another. The second one is based on the black hole phenomenon when it attracts and absorbs stars of a constellation. The third one imitates the echolocation phenomenon present in the microbats species, allowing them to avoid obstacles while flying and locate food or shelter. In general terms, the three metaheuristics work similarly. For example, solution positions are randomly generated at the beginning of the algorithm and updated via an alteration of the velocity.

4.1.1. Particle Swarm Optimization

In particle swarm optimization (PSO), each bird or fish describes a particle—or solution—with two components: position and velocity. A set of particles (the candidate solutions) forms the swarm that evolves during an iterative process giving rise to a powerful optimization method [31]. The method works by altering velocity through the search space and then updating its position according to its own experience and that of neighboring particles.

The traditional particle swarm optimization is governed by two vectors, the velocity and the position . First, the particles are randomly positioned in an n-dimensional heuristic space with random velocity values. During the evolution process, each particle updates its velocity and position—see Equation (3) and Equation (4), respectively—:

where w, , and are acceleration coefficients to obtain the new velocity, and are uniformly distributed random values in the range , represents the previous best position of ith particle, and finally, is the global best position found by all particles during the resolution process.

4.1.2. Black Hole Algorithm

The black hole (BH) algorithm begins with randomly generated initial positions of stars , each with a velocity of change . Both vectors belong to an n-dimensional heuristic space for an optimization problem.

The best candidate is chosen at each iteration to become in the black hole. At this moment, other solutions are pulling around the black hole, building the constellation of stars. A star will be permanently absorbed when it gets too close to the black hole. A new solution is randomly generated and located in the search space to keep the number of stars balanced. The absorption of stars by the black hole is formulated in Equations (5) and (6):

where r is a uniformly distributed random value in the range , and represents the global best location—or black hole—found by all the stars during the resolution process.

This algorithm attempts to solve the stagnation problem using a random component that acts when a participation probability is reached. This procedure is known as the event horizon and plays an important role in controlling the global and local search. The black hole will absorb every star that crosses the event horizon. The radius of the event horizon is calculated by Equation (7):

where s represents the number of stars, is the fitness value of the black hole, and is the fitness value of the ith star. When the distance between a ith star and the black hole () is less than the event horizon at an instant t, the star collapses into the black hole. The distance between a star and a black hole is the Euclidean distance computed by Equation (8) as follows:

As mentioned above, this procedure operates under random criteria. If a real random number between 0 and 1 is higher than an input parameter, the event horizon provides diversity among solutions. Otherwise, solutions keep intensifying the current search area.

4.1.3. Bat Optimization

Naturally inspired, bat optimization (BAT) follows the behavior of microbats when they communicate through bio–sonar, an inherent feature of this species. Bio–sonar, also called echolocation, allows bats to determine distances and distinguish between food, prey, and background obstacles. The algorithm extrapolates this characteristic, assuming that all bats develop it. In this abstraction, a bat flies at a position with a velocity and emitters sounds with a frequency , a loudness , and a pulse emission rate .

Similar to the previous metaheuristics, bat optimization is driven by two vectors, the velocity and the position . Again, both vectors belong to an n–dimensional heuristic space. Furthermore, the frequency varies in a range , and loudness can vary in many ways. It is assumed that it ranges from a large (positive) value to a minimum constant value .

The algorithm starts with an initial population of bat positions. The best position is selected in each iteration according to its yield, called the global solution. To find new solutions, the frequencies is computed by Equation (9), in order to adjust the new velocity, which is updated via Equation (10) and thus, new position are generated by Equation (11).

where is a uniformly distributed random value in the range , is set to have a small value, and varies according to the variance allowed in each time step. Next, describes the global best solution generated by all bats during the search process.

In bat optimization, the random walk trajectory governs the diversification phase to alter a solution. The new solution is generated based on the bat’s current loudness and maximum allowed variance during a time step. This procedure is computed by Equation (12).

where is a random value in .

Finally, the variation between loudness and pulse emission drives the intensification phase. This principle is given from hunter behavior when bats recognize prey. When it occurs, they decrease loudness and increase the rate of pulse emission. This strategy is calculated by Equation (13).

where and are ad-hoc constants to control the intensification phase. For and , we get , , .

4.1.4. Common Behavior

Population–based metaheuristics work similarly. We present a common work scheme (see Algorithm 1) to implement each bio–inspired solver. This scheme adapts the generalities of the search process and the particularities of each bio-inspired phenomenon.

4.2. Solving Stagnation

Our proposal covers two scopes: detecting stagnation and escaping from it. For the first step, we employ historical knowledge of metaheuristics while solving optimization problems. Next, a new formula based on random work is used, which considers certain information about the algorithm’s performance.

4.2.1. Stagnation Detecting

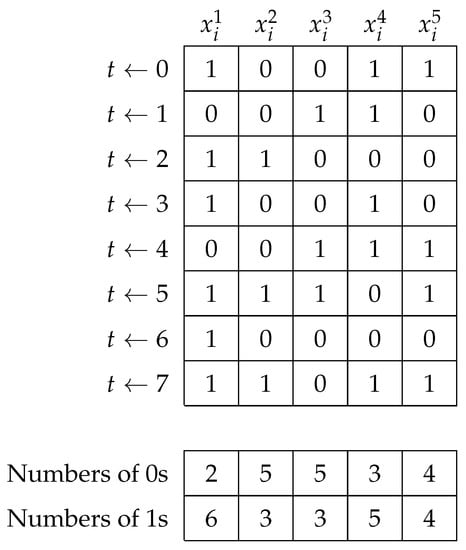

By considering a binary approach for each agent, this would remember the number of times their status changes (zero to one and one to zero) into decision variables until a time t. Figure 1 presents a history of changes for a solution vector of 5–dimension.

| Algorithm 1: Common work scheme used to implement the population–based algorithms |

|

Figure 1.

Example of history of changes from a solution vector along to iterations.

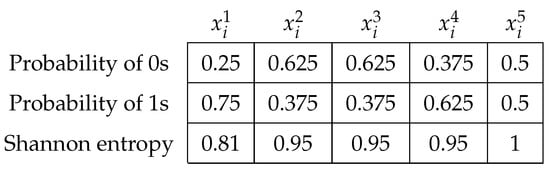

The evolutionary operator updated this solution vector seven times. It was initially generated in . Next, we computed the simple probability of occurrence for all decision variables. With these values, we finally calculate and show the Shannon entropy of each one in Figure 2:

Figure 2.

Probabilities and Shannon entropy values.

Entropy values close to give us a trust value near , i.e., when a solution vector generates this occurrence level, we will be in the presence of stagnation. It is relevant to note that the population of solutions is randomly instantiated under a uniform probability. Hence, first iterations do not produce low entropy values. For the above example, the Shannon entropy values , , , , and 1 are all far from , so the vector cannot be considered as stagnated. We consider that a vector stagnates if the median value of the Shannon entropy is at least . The procedure operation can be seen in Algorithm 2.

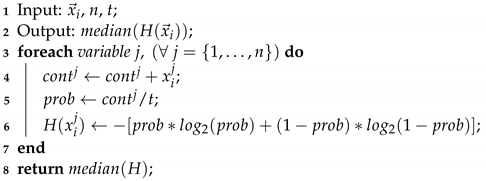

| Algorithm 2: Shannon entropy module |

|

The procedure requires the current solution vector , the number of decision variables n, and the time t it was called. In the end, the module provides the element right in the middle of the solution vector, which means at least half of the entropies reached the trust value. For that, Lines 3–6 compute the Shannon entropy values under the occurrence probability of state changes.

From the spatial complexity point of view, the proposal uses historical data but does not store it. Regarding temporal complexity, entropy values are computed after the evolutionary operator, so the solution vector is linearly traversed at different time steps.

4.2.2. Stagnation Escaping

As mentioned above, bio–inspired algorithms use random modifiers to balance the exploration and then avoid the stagnation problem. This strategy suggests that changes in decision variables have the same distribution and are independent of each other. Therefore, it assumes the past movement cannot be used to predict its future values [54,55]. Our proposal goes the opposite way, applying the entropy value in a similar form to a random walk but using historical data to make a decision. We propose to update only those decision variables that we already know a priori that are stagnant. The latter is one of the main differences in the random walk method. This modifier is formulated in Equation (14).

where is an uniformly distributed random integer value in the . A positive or negative value for provides to explore new promising zones. Furthermore, setting as a smaller step size allows the exploration phase to not stray too far from the current solution. This operation remains agnostic to bio–inspired algorithms, working on both native solver systems and hybridized/improved versions.

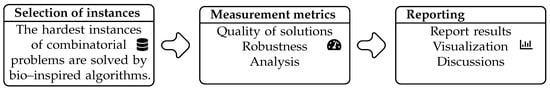

5. Experimental Setup

To suitably evaluate the performance of the improved swarm intelligence methods, a robust performance analysis is required. For that, we contrast the best solutions achieved by metaheuristics to the best–known result of the benchmark. Figure 3 depicts the procedures involved in thoroughly examining the enhanced metaheuristics. We design goals and suggestions for the experimental phase to show that the proposed approach is a viable alternative for enhancing the inner mechanisms of metaheuristics. Solving time is computed to determine the produced gap when the Shannon strategy runs on the bio–inspired method. We evaluate the best value as a vital indicator for assessing future results. Next, we use ordinal analysis and statistical testing to evaluate whether a strategy is significantly better. Finally, we detail the hardware and software used to replicate computational experiments. Results will visualize in tables and graphics.

Figure 3.

Schema of the experimental phase applied to this work.

A set of optimization problem instances were solved for the experimental process and, more specifically, to measure the algorithms’ performance. These instances come from OR–Library, which J.E. Beasley originally described in 1990 [56]. This “virtual library” has several test data sets of different natures with their respective solutions. We take 20 binary instances of the Multidimensional Knapsack Problem. Instances are identified by a name and a number, in the form MKP1 to MKP20, respectively. Table 1 details the size of each instance.

Table 1.

Instances of the Multidimensional Knapsack Problem.

The exact methods have not solved the instances from MKP17 to MKP20. For this reason, we use unknown to describe that this value has not yet been found.

The MKP is formally defined in Equation (15):

where describes whether the object is included or not in a knapsack, and the n value defines the total number of objects. Each object has a real value that represents its profit and is used to compute the objective function. Finally, stores the weight for each object in a knapsack k with maximum capacity . As can be seen, this is a combinatorial problem between including or not an object. The execution of continuous metaheuristics in a binary domain requires a binarization phase after the solution vector changes [60]. A standard Sigmoid function compared to a uniform random value between 0 and 1 was employ as a transformation function, i.e., , then a discretization method is employed, in this case, if . Otherwise, .

The performance of each algorithm is evaluated after solving each instance 30 times on it. Once the complete set of outputs of all the executions and instances has been obtained, an outliers analysis is performed to study possible irregular results. Here, we detect influence outliers using the Tukey test, which takes as a reference the difference between the first quartile (Q1) and the third quartile (Q3), or the interquartile range. In our case, in box plots, an outlier is considered to be 1.5 times that distance from one of those quartiles (mild outlier) or three times that distance (extreme outlier). This test was implemented by using a spreadsheet, so the statistics are calculated automatically. Thus, we remove them to avoid distortion of the samples. Immediately, a new run is launched to replace the eliminated solution.

In the end, descriptive and statistical analyses of these results are performed. For the first one, metrics such as max and min values, mean, standard quasi–deviation, median, and interquartile range are used to compare generated results. The second analysis corresponds to statistical inference. Two hypotheses are contracted to evidence a major statistical significance: (a) test of normality with Shapiro–Wilk and (b) test of heterogeneity by Wilcoxon–Mann–Whitney. Furthermore, it is essential to note that given the independent nature of instances, the results obtained in one of them do not affect others. The repetition of an instance neither involves other repetitions of the same instance.

Finally, all algorithms were coded in Java programming language. The infrastructure was a workstation running Windows 10 Pro operating system with eight processors i7 8700, and 32 GB of RAM. Parallel implementation was not required.

6. Discussion

The first results are illustrated in Table 2, which is divided into three parts: (a) number of best reached, (b) minimum solving time, and (c) maximum solving time. Results show that modified methods (S–PSO, S–BAT, and S–BH) exhibit better performance achieving greater optimum values than their native versions.

Table 2.

Experimental results.

Regarding the minimum and maximum solving times, PSO and S–PSO have similar performance, and a significant difference is not appreciable, being who better yield shows of all studied techniques. When BAT and S–BAT are contrasted, we again note that the Shannon Entropy strategy does not cause a significant increase in required solving time by the bio–solver algorithm, except in a few instances where BAT needs less time than S–BAT. Now, let us compare the results generated by the black hole optimizer and the improved S–BH. It is possible to observe that, in general terms, there is no significant difference between the minimum solving times required by the original bio–inspired method and its enhanced version.

To robust the experimental phase, we evaluate the quality of solutions based on the number of optimal findings. Thus, taking the generated data, we note that the modified algorithms S–PSO, S–BAT, and S–BH perform better than their native versions. Based on the result in Table 3 regarding the solution quality, S–PSO has a better performance than PSO. The latter is because S–PSO has a smaller maximum RPD, implying that his solutions are larger. Moreover, considering the standard deviations, we can see that values achieved by PSO are usually lower than those generated by S–PSO. Therefore, the results distribution and their differentiation concerning the average is better in the algorithm based on the Shannon entropy.

Table 3.

Experimental results of Shannon PSO against its native version.

The result of PSO in the median RPD is equal to or slightly lower than S–PSO. Thus, PSO has a slightly better performance. For the average RPD, both S–PSO and PSO had a similar performance. Finally, considering that the proposed approach S–PSO found a more significant number of optima, with a higher quality of solutions and a lower deviation, then we can conclude that S–PSO has a better general performance than native PSO.

The results shown in Table 4 show how S–BAT presents a much higher general performance than his native version. S–BAT has, for the 20 instances, maximum, mean, and average RPDs equal to, or less than, those presented by the native bat optimizer, in addition to having a smaller standard deviation for their values. This set of characteristics implies that the solutions found by S–BAT are significantly larger than those found by the bat algorithm, and, therefore, have a higher general performance compared to its native version.

Table 4.

Experimental results of Shannon BAT against its native version.

Concerning Table 5, we can infer that S–BH algorithm has a higher general performance than the BH algorithm. There are no significant differences between both algorithms in the mean RPD and average RPD, but the maximum RPD in 18 of the 20 instances is lower in S–BH. The above means that the solutions found by S–BH have a larger size and, therefore, a better performance. On the other hand, concerning its standard deviations, in 13 of the 20 instances, the native version of the algorithm has a lower variation. Therefore, both algorithms generally present a high dispersion in their data. Since both algorithms have a similar widespread distribution, based on the number of optima found, we can conclude that the S–BH algorithm has a higher overall performance because it can find a more significant number of better quality optima.

Table 5.

Experimental results of Shannon BH against its native version.

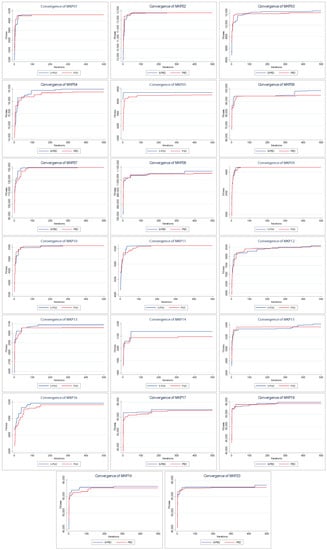

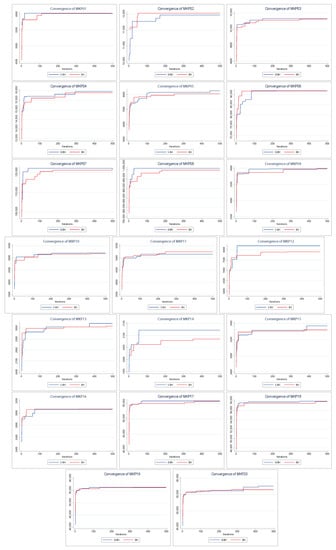

Figure 4 shows the convergence of the solutions found by PSO and S–PSO. The above indicates how the solution changes as the iterations go by (increases its value).

Figure 4.

Convergence charts of PSO vs. Shannon PSO.

For all instances, in early iterations, S–PSO has at least a similar performance to PSO. As the execution progresses, S–PSO gradually acquires higher–value solutions. In MKP instances 2, 7, 9, 10, and 11, both algorithms have the same final performance. S–PSO is superior in all other instances (15 of 20).

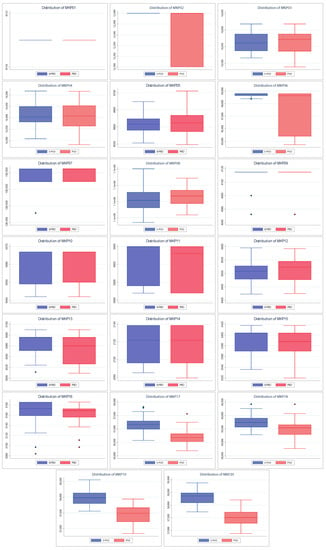

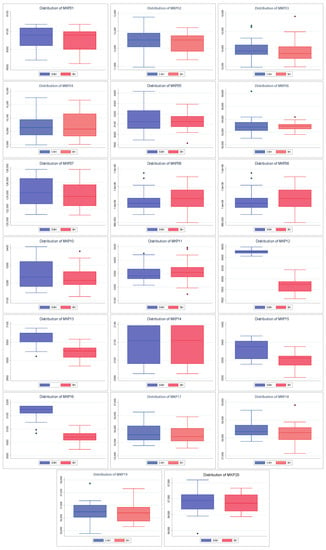

Also, Figure 5 shows the dispersion of the values found by both algorithms. The above allows knowing how close the values found are to their respective medians and their quartiles and extreme values. A better spread is smaller in size (more compact) and/or has larger medians and tails. As with convergences, the spreads of the S–PSO algorithm are usually at least similar to those of PSO. For MKP instances 3, 4, 5, 8, 10, and 12, the S–PSO spread is slightly lower. In MKP instances 1 and 9, both algorithms have the same dispersion. For the remaining 12 instances, S–PSO has values with lower dispersion and/or higher median. Therefore, it is considered to have better performance.

Figure 5.

Distribution charts of PSO vs. Shannon PSO.

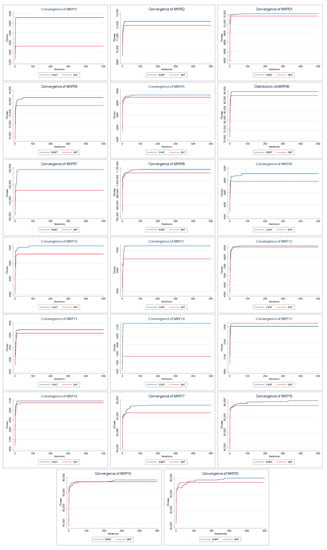

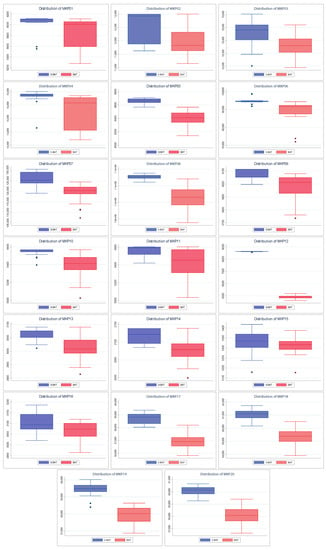

Figure 6 shows the convergence of the solutions found by BAT and S–BAT. In 19 of the 20 instances, S–BAT outperforms BAT. BAT is superior only in the MKP 15 instance. Both algorithms present similar values in early iterations, but S–BAT overlaps significantly as the execution progresses. On the other hand, Figure 7 shows the dispersion of the values found by both algorithms. In the 20 instances, the dispersion of the values found by S–BAT is smaller, presenting larger values and closer to their medians. Given the above, S–BAT has a significantly higher overall performance than BAT.

Figure 6.

Convergence charts of BAT vs. Shannon BAT.

Figure 7.

Distribution charts of BAT vs. Shannon BAT.

Figure 8 shows the convergences of the solutions found by BH and S–BH. For MKP instances 1, 3, 6, 16, 17, and 18, both algorithms present similar final values, with S–BH slightly higher. Only in three instances (MKP 2, 9, and 11) is the native BH algorithm slightly superior to S–BH. In the remaining eleven instances S–BH presents values significantly higher than BH.

Figure 8.

Convergence charts of BH vs. Shannon BH.

Furthermore, Figure 9 shows the dispersion of the values found by both algorithms. Because the behavior of these algorithms is more similar to each other than the previously seen ones (PSO and BAT), it is necessary to highlight that our performance approach is based on the largest values (maximization problem). Given the above, considering the MKP instances 1, 2, 5, 9, 17, 18, 19, and 20, we can say that S–BH has a better dispersion than BH. The above is because it has a median slightly higher than BH or larger extreme values. For MKP 6, 8, and 11 instances, native BH has superior performance for similar reasons. In the MKP 14 instance, both algorithms have the same dispersion. For the remaining eight instances, S–BH has a significantly higher dispersion in quality.

Figure 9.

Distribution charts of BH vs. Shannon BH.

Finally, considering everything mentioned above, we can say that approximately half of the S–BH instances have at least a dispersion equal to or higher (quality) than that of BH. Therefore, it is considered to have better performance.

7. Statistical Analysis

To evidence a statistical significance between the native bio-inspired algorithm and its improved version by the Shannon strategy, we perform a robust analysis that includes normality assessment and contrast of hypotheses to determine if the samples come or not from an equidistributed sequence. Firstly, Shapiro–Wilk is required to study the independence of samples. It determines if observations (runs per instance) draw a Gaussian distribution. Then, we establish as samples follow a normal distribution. Therefore, assumes the opposite. The traditional limit of p-value is , for which results under this threshold state the test is said to be significant ( rejects). Table 6 shows p-values obtained by native algorithms and their enhanced versions for each instance. Note that ∼0 indicates a small p-value near 0, and the hyphen means the test was not significant.

Table 6.

Test Shapiro–Wilk for native algorithms and their enhanced versions.

About 63% of the results confirm that the samples do not follow a normal distribution, so we decide to employ the non-parametric test Mann—Whitney—Wilcoxon. The idea behind this test is the following: if the two compared samples come from the same population, by joining all the observations and ordering them from smallest to largest, it would be expected that the observations of one and the other sample would be randomly interspersed [61].

To develop the test, we assume as the null hypothesis that affirms native methods generate better (smaller) values than their versions improved by the Shannon entropy. Thus, suggests otherwise. Table 7 exposes results of contrasts. Again, we use as the upper threshold for p-values and smaller values allow us to reject and, therefore, assume as true. To detail the results obtained by the test, we deployed more significant digits, and we applied hyphens when the test was not significant.

Table 7.

Test Mann–Whitney–Wilcoxon for native algorithms against their enhanced versions.

Finally, and consistent with the previous results, the test strongly establishes that the bat optimizer is the bio–inspired method that benefits the most from the Shannon strategy (see Figure 6 and Figure 7). The robustness of this test is also evident with PSO and BH. We can see that the best results are adjusted to those already shown. For example, S–PSO on MKP02 and MKP06 instances is noticeably better than its native version (see Figure 4 and Figure 5). Similarly, S–BH on MKP12, MKP13, MKP15, and MKP16 instances performs better than the original version (see Figure 8 and Figure 9).

8. Conclusions

Despite the efficiency shown over the last few years, bio-inspired algorithms tend to stagnate in local optimal when facing complex optimization problems. During iterations, one or more solutions are not modified; therefore, resources are spent without obtaining improvements. Various methods, such as Random Walk, Levy Flight, and Roulette Wheel, use random diversification components to prevent this problem. This work proposes a new exploration strategy using the Shannon entropy as a movement operator on three swarm bio-inspired algorithms: particle swarm optimization, bat optimization, and black hole algorithm. The mission of this component is first to recognize stagnated solutions by applying information given by the solving process and then provide a policy to explore new promising zones. To evidence the reached performances by three optimization methods, we solve twenty instances of the 0/1 multidimensional knapsack problem, which is a variation of the well–known traditional optimization problem. Regarding the solving time, the results show that including an additional component increases the required time to reach the best solutions. However, in terms of accuracy to achieve optimal solutions, there is no doubt that this component significantly improves the resolution process of metaheuristics. We performed a statistical study on the results to ensure this conjecture was correct. As samples are independent and do not follow a normal distribution, we employ the Wilcoxon—Mann—Whitney test, a non-parametric statistical evaluation, to contrast the null hypothesis that the means of two populations are equal. Effectively, the swarm intelligence methods improved by the Shannon entropy exhibit significantly better yields than their original versions.

In future work, we propose comparing this proposal against other entropy methods, such as linear, Rényiand, or Tsallis, because they work an occurrence probability similar to Shannon. On the other hand, this research opens a challenge to analyze data generated by metaheuristics when internal search mechanisms operate. For example, the local search, exploration, or exploitation processes can converge on common ground. If this information is used correctly, we can be in front of powerful self-improvement techniques. In this scenario, we can design data-driven optimization algorithms capable of solving the problem and self-managing to perform this resolution in the best possible way.

Author Contributions

Formal analysis, R.O., R.S., B.C., F.R. and R.M.; investigation, R.O., R.S., B.C., F.R., R.M., V.R., R.C. and C.C.; methodology, R.O., R.S. and C.C.; resources, R.S. and B.C.; software, R.O., V.R. and R.C.; validation, R.S., B.C., F.R., R.M. and C.C.; writing—original draft, R.O., V.R. and R.C.; writing—review & editing, R.O., R.S., B.C., F.R., R.M., V.R., R.C. and C.C. All the authors of this paper hold responsibility for every part of this manuscript. All authors have read and agreed to the published version of the manuscript.

Funding

Broderick Crawford and Ricardo Soto, both are supported by grant ANID/FONDECYT/REGULAR/1210810. Roberto Muñoz is supported by grant ANID/FONDECYT/REGULAR/1211905. Fabián Riquelme is partially supported by grant ANID/FONDECYT/INICIACIÓN/11200113.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Not applicable.

Acknowledgments

We would like to thank the reviewers for their thoughtful comments and efforts toward improving our manuscript.

Conflicts of Interest

The authors declare no conflict of interest. The funding sponsors had no role in the design of the study; in the collection, analyses, or interpretation of data; in the writing of the manuscript, and in the decision to publish the results.

References

- Talbi, E.G. Metaheuristics: From Design to Implementation; John Wiley & Sons: Hoboken, NJ, USA, 2009. [Google Scholar]

- Reeves, C.R. Genetic Algorithms. In Handbook of Metaheuristics; Springer: Boston, MA, USA, 2010; pp. 109–139. [Google Scholar] [CrossRef]

- Dorigo, M.; Birattari, M.; Stutzle, T. Ant colony optimization. IEEE Comput. Intell. Mag. 2006, 1, 28–39. [Google Scholar] [CrossRef]

- Yang, X.S. Nature-Inspired Metaheuristic Algorithms: Second Edition; Luniver Press: Frome, UK, 2010. [Google Scholar]

- Mirjalili, S.; Mirjalili, S.M.; Yang, X.S. Binary bat algorithm. Neural Comput. Appl. 2014, 25, 663–681. [Google Scholar] [CrossRef]

- Hatamlou, A. Black hole: A new heuristic optimization approach for data clustering. Inf. Sci. 2013, 222, 175–184. [Google Scholar] [CrossRef]

- Van Laarhoven, P.J.; Aarts, E.H. Simulated annealing. In Simulated Annealing: Theory and Applications; Springer: Dordrecht, The Netherlands, 1987; pp. 7–15. [Google Scholar]

- Dokeroglu, T.; Sevinc, E.; Kucukyilmaz, T.; Cosar, A. A survey on new generation metaheuristic algorithms. Comput. Ind. Eng. 2019, 137, 106040. [Google Scholar] [CrossRef]

- Kar, A.K. Bio inspired computing—A review of algorithms and scope of applications. Expert Syst. Appl. 2016, 59, 20–32. [Google Scholar] [CrossRef]

- Glover, F.; Samorani, M. Intensification, Diversification and Learning in metaheuristic optimization. J. Heuristics 2019, 25, 517–520. [Google Scholar] [CrossRef]

- Cuevas, E.; Echavarría, A.; Ramírez-Ortegón, M.A. An optimization algorithm inspired by the States of Matter that improves the balance between exploration and exploitation. Appl. Intell. 2013, 40, 256–272. [Google Scholar] [CrossRef]

- Krause, J.; Cordeiro, J.; Parpinelli, R.S.; Lopes, H.S. A Survey of Swarm Algorithms Applied to Discrete Optimization Problems. In Swarm Intelligence and Bio-Inspired Computation; Elsevier: Amsterdam, The Netherlands, 2013; pp. 169–191. [Google Scholar] [CrossRef]

- Nanda, S.J.; Panda, G. A survey on nature inspired metaheuristic algorithms for partitional clustering. Swarm Evol. Comput. 2014, 16, 1–18. [Google Scholar] [CrossRef]

- Révész, P. Random Walk in Random and Non-Random Environments; World Scientific: Singapore, 2005. [Google Scholar]

- Weiss, G.H.; Rubin, R.J. Random walks: Theory and selected applications. Adv. Chem. Phys. 1983, 52, 363–505. [Google Scholar]

- Goldberg, D.E. Genetic Algorithms in Search, Optimization and Machine Learning, 1st ed.; Addison-Wesley Longman Publishing Co., Inc.: Boston, MA, USA, 1989; ISBN 0201157675. [Google Scholar]

- Lipowski, A.; Lipowska, D. Roulette-wheel selection via stochastic acceptance. Phys. Stat. Mech. Appl. 2012, 391, 2193–2196. [Google Scholar] [CrossRef]

- Blickle, T.; Thiele, L. A comparison of selection schemes used in evolutionary algorithms. Evol. Comput. 1996, 4, 361–394. [Google Scholar] [CrossRef]

- Glover, F. Tabu search—Part I. Orsa J. Comput. 1989, 1, 190–206. [Google Scholar] [CrossRef]

- Glover, F. Tabu search—Part II. Orsa J. Comput. 1990, 2, 4–32. [Google Scholar] [CrossRef]

- Rényi, A. On measures of entropy and information. In Proceedings of the Fourth Berkeley Symposium on Mathematical Statistics and Probability, Volume 1: Contributions to the Theory of Statistics; The Regents of the University of California: Oakland, CA, USA, 1961. [Google Scholar]

- Shannon, C.E. A mathematical theory of communication. Bell Syst. Tech. J. 1948, 27, 379–423. [Google Scholar] [CrossRef]

- Rao, M.; Chen, Y.; Vemuri, B.C.; Wang, F. Cumulative residual entropy: A new measure of information. IEEE Trans. Inf. Theory 2004, 50, 1220–1228. [Google Scholar] [CrossRef]

- Naderi, E.; Narimani, H.; Pourakbari-Kasmaei, M.; Cerna, F.V.; Marzband, M.; Lehtonen, M. State-of-the-Art of Optimal Active and Reactive Power Flow: A Comprehensive Review from Various Standpoints. Processes 2021, 9, 1319. [Google Scholar] [CrossRef]

- Naderi, E.; Azizivahed, A.; Asrari, A. A step toward cleaner energy production: A water saving-based optimization approach for economic dispatch in modern power systems. Electr. Power Syst. Res. 2022, 204, 107689. [Google Scholar] [CrossRef]

- Garey, M.R.; Johnson, D.S. Computers and Intractability: A Guide to the Theory of NP-Completeness; W. H. Freeman & Co.: New York, NY, USA, 1979. [Google Scholar]

- Liu, J.; Wu, C.; Cao, J.; Wang, X.; Teo, K.L. A Binary differential search algorithm for the 0–1 multidimensional knapsack problem. Appl. Math. Model. 2016, 40, 9788–9805. [Google Scholar] [CrossRef]

- Cacchiani, V.; Iori, M.; Locatelli, A.; Martello, S. Knapsack problems—An overview of recent advances. Part II: Multiple, multidimensional, and quadratic knapsack problems. Comput. Oper. Res. 2022, 143, 105693. [Google Scholar] [CrossRef]

- Rezoug, A.; el den, M.B.; Boughaci, D. Application of Supervised Machine Learning Methods on the Multidimensional Knapsack Problem. Neural Process. Lett. 2021, 54, 871–890. [Google Scholar] [CrossRef]

- Beasley, J.E. Multidimensional Knapsack Problems. In Encyclopedia of Optimization; Springer: Berlin/Heidelberg, Germany, 2009; pp. 1607–1611. [Google Scholar] [CrossRef]

- Mavrovouniotis, M.; Li, C.; Yang, S. A survey of swarm intelligence for dynamic optimization: Algorithms and applications. Swarm Evol. Comput. 2017, 33, 1–17. [Google Scholar] [CrossRef]

- Gendreau, M.; Potvin, J.Y. (Eds.) Handbook of Metaheuristics; Springer: New York, NY, USA, 2010. [Google Scholar]

- Panos, M.; Pardalos, M.G.R. Handbook of Applied Optimization; Oxford University Press: Oxford, UK, 2002. [Google Scholar]

- Voß, S.; Dreo, J.; Siarry, P.; Taillard, E. Metaheuristics for Hard Optimization. Math. Methods Oper. Res. 2007, 66, 557–558. [Google Scholar] [CrossRef]

- Voß, S.; Martello, S.; Osman, I.H.; Roucairol, C. (Eds.) Meta-Heuristics: Advances and Trends in Local Search Paradigms for Optimization; Springer: New York, NY, USA, 1998. [Google Scholar]

- Vaessens, R.; Aarts, E.; Lenstra, J. A local search template. Comput. Oper. Res. 1998, 25, 969–979. [Google Scholar] [CrossRef]

- El-Henawy, I.; Ahmed, N. Meta-Heuristics Algorithms: A Survey. Int. J. Comput. Appl. 2018, 179, 45–54. [Google Scholar] [CrossRef]

- Baghel, M.; Agrawal, S.; Silakari, S. Survey of Metaheuristic Algorithms for Combinatorial Optimization. Int. J. Comput. Appl. 2012, 58, 21–31. [Google Scholar] [CrossRef]

- Hussain, K.; Salleh, M.N.M.; Cheng, S.; Shi, Y. Metaheuristic research: A comprehensive survey. Artif. Intell. Rev. 2018, 52, 2191–2233. [Google Scholar] [CrossRef]

- Calvet, L.; de Armas, J.; Masip, D.; Juan, A.A. Learnheuristics: Hybridizing metaheuristics with machine learning for optimization with dynamic inputs. Open Math. 2017, 15, 261–280. [Google Scholar] [CrossRef]

- Pires, E.; Machado, J.; Oliveira, P. Dynamic shannon performance in a multiobjective particle swarm optimization. Entropy 2019, 21, 827. [Google Scholar] [CrossRef] [Green Version]

- Pires, E.J.S.; Machado, J.A.T.; de Moura Oliveira, P.B. Entropy diversity in multi-objective particle swarm optimization. Entropy 2013, 15, 5475–5491. [Google Scholar] [CrossRef]

- Weerasuriya, A.; Zhang, X.; Wang, J.; Lu, B.; Tse, K.; Liu, C.H. Performance evaluation of population-based metaheuristic algorithms and decision-making for multi-objective optimization of building design. Build. Environ. 2021, 198, 107855. [Google Scholar] [CrossRef]

- Guo, W.; Zhu, L.; Wang, L.; Wu, Q.; Kong, F. An Entropy-Assisted Particle Swarm Optimizer for Large-Scale Optimization Problem. Mathematics 2019, 7, 414. [Google Scholar] [CrossRef]

- Jamal, R.; Men, B.; Khan, N.H.; Raja, M.A.Z.; Muhammad, Y. Application of Shannon Entropy Implementation Into a Novel Fractional Particle Swarm Optimization Gravitational Search Algorithm (FPSOGSA) for Optimal Reactive Power Dispatch Problem. IEEE Access 2021, 9, 2715–2733. [Google Scholar] [CrossRef]

- Vargas, M.; Fuertes, G.; Alfaro, M.; Gatica, G.; Gutierrez, S.; Peralta, M. The Effect of Entropy on the Performance of Modified Genetic Algorithm Using Earthquake and Wind Time Series. Complexity 2018, 2018, 4392036. [Google Scholar] [CrossRef]

- Muhammad, Y.; Khan, R.; Raja, M.A.Z.; Ullah, F.; Chaudhary, N.I.; He, Y. Design of Fractional Swarm Intelligent Computing With Entropy Evolution for Optimal Power Flow Problems. IEEE Access 2020, 8, 111401–111419. [Google Scholar] [CrossRef]

- Zhang, H.; Xie, J.; Ge, J.; Lu, W.; Zong, B. An Entropy-based PSO for DAR task scheduling problem. Appl. Soft Comput. 2018, 73, 862–873. [Google Scholar] [CrossRef]

- Chen, J.; You, X.M.; Liu, S.; Li, J. Entropy-Based Dynamic Heterogeneous Ant Colony Optimization. IEEE Access 2019, 7, 56317–56328. [Google Scholar] [CrossRef]

- Mercurio, P.J.; Wu, Y.; Xie, H. An Entropy-Based Approach to Portfolio Optimization. Entropy 2020, 22, 332. [Google Scholar] [CrossRef]

- Khan, M.W.; Muhammad, Y.; Raja, M.A.Z.; Ullah, F.; Chaudhary, N.I.; He, Y. A New Fractional Particle Swarm Optimization with Entropy Diversity Based Velocity for Reactive Power Planning. Entropy 2020, 22, 1112. [Google Scholar] [CrossRef]

- Xu, J.; Zhang, J. Exploration-exploitation tradeoffs in metaheuristics: Survey and analysis. In Proceedings of the 33rd Chinese Control Conference, Nanjing, China, 28–30 July 2014; pp. 8633–8638. [Google Scholar]

- Binitha, S.; Sathya, S.S. A survey of bio inspired optimization algorithms. Int. J. Soft Comput. Eng. 2012, 2, 137–151. [Google Scholar]

- Montero, M. Random Walks with Invariant Loop Probabilities: Stereographic Random Walks. Entropy 2021, 23, 729. [Google Scholar] [CrossRef]

- Villarroel, J.; Montero, M.; Vega, J.A. A Semi-Deterministic Random Walk with Resetting. Entropy 2021, 23, 825. [Google Scholar] [CrossRef] [PubMed]

- Beasley, J. OR-Library. 1990. Available online: http://people.brunel.ac.uk/~mastjjb/jeb/info.html (accessed on 6 September 2022).

- Khuri, S.; Bäck, T.; Heitkötter, J. The zero/one multiple knapsack problem and genetic algorithms. In Proceedings of the 1994 ACM Symposium on Applied Computing, Phoenix, AZ, USA, 6–8 March 1994; pp. 188–193. [Google Scholar]

- Dammeyer, F.; Voß, S. Dynamic tabu list management using the reverse elimination method. Ann. Oper. Res. 1993, 41, 29–46. [Google Scholar] [CrossRef]

- Drexl, A. A simulated annealing approach to the multiconstraint zero-one knapsack problem. Computing 1988, 40, 1–8. [Google Scholar] [CrossRef]

- Crawford, B.; Soto, R.; Astorga, G.; García, J.; Castro, C.; Paredes, F. Putting Continuous Metaheuristics to Work in Binary Search Spaces. Complexity 2017, 2017, 8404231. [Google Scholar] [CrossRef]

- Fagerland, M.W.; Sandvik, L. The Wilcoxon-Mann-Whitney test under scrutiny. Stat. Med. 2009, 28, 1487–1497. [Google Scholar] [CrossRef]

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations. |

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).