Symmetry-Breaking Bifurcations of the Information Bottleneck and Related Problems

Abstract

1. Introduction

2. Bifurcation Analysis

2.1. Equivariant Branching Lemma

- and have independent bases, which implies that each is invariant to the action of , and so the decomposition shows that does not act absolutely irreducibly on , but it does act absolutely irreducibly on each of these disjoint subspaces separately. This is why we present a version of the Equivariant Branching Lemma that does not require absolute irreducibility.

- The Liapunov–Schmidt reduction onto is clear, but not onto .

- is two-dimensional with basis where .
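The distinction drawn above, between a group that fails to act absolutely irreducibly on a whole space yet does so on each invariant subspace, can be checked numerically on a small example. The sketch below is an illustration only, not the setting of this paper: it assumes the toy group S_3 permuting the coordinates of R^3, and the helper name `commutant_dimension` is hypothetical. It relies on the standard characterization that an action is absolutely irreducible exactly when the only matrices commuting with every group element are scalar multiples of the identity, so the space of commuting matrices has dimension 1.

```python
import numpy as np
from itertools import permutations


def commutant_dimension(matrices):
    """Dimension of the space of matrices M with g M = M g for every g."""
    n = matrices[0].shape[0]
    eye = np.eye(n)
    # With row-major vec: vec(g M) = (g kron I) vec(M), vec(M g) = (I kron g^T) vec(M).
    system = np.vstack([np.kron(g, eye) - np.kron(eye, g.T) for g in matrices])
    return n * n - np.linalg.matrix_rank(system)


# Toy group (assumption for illustration): S_3 permuting the coordinates of R^3.
perm_matrices = [np.eye(3)[list(p)] for p in permutations(range(3))]

# Orthonormal basis of the invariant subspace of vectors whose entries sum to zero.
U = np.column_stack([
    np.array([1.0, -1.0, 0.0]) / np.sqrt(2.0),
    np.array([1.0, 1.0, -2.0]) / np.sqrt(6.0),
])
restricted = [U.T @ g @ U for g in perm_matrices]

dim_full = commutant_dimension(perm_matrices)
dim_restricted = commutant_dimension(restricted)
print(dim_full, dim_restricted)
```

A commutant of dimension greater than 1 on the full space signals that the action there is not absolutely irreducible, while dimension 1 on the restricted subspace signals that it is.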

2.2. A Gradient Flow

- is an -invariant, real-valued function of q, where the action of on q permutes the component vectors , , of .

- The Hessian is block diagonal, where the ith block is .
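The two properties listed above, invariance under permutations of the component vectors of q and a block-diagonal Hessian, can be illustrated with a small numerical sketch. The objective below is a hypothetical stand-in, not the paper's function: it applies the same function to each component vector and sums the results, which is one simple way to obtain both properties. The matrix `A`, the sizes `N` and `K`, and all function names are assumptions made for the example.

```python
import numpy as np


def toy_objective(q, A):
    """Hypothetical invariant objective: the same function applied to each row of q."""
    # q has shape (N, K): N component vectors of length K, all entries positive.
    return sum(0.5 * row @ A @ row + np.sum(row * np.log(row)) for row in q)


def numerical_hessian(func, x, eps=1e-4):
    """Central-difference approximation of the Hessian of func at x."""
    n = x.size
    H = np.zeros((n, n))
    for i in range(n):
        for j in range(n):
            e_i = np.zeros(n)
            e_i[i] = eps
            e_j = np.zeros(n)
            e_j[j] = eps
            H[i, j] = (func(x + e_i + e_j) - func(x + e_i - e_j)
                       - func(x - e_i + e_j) + func(x - e_i - e_j)) / (4 * eps ** 2)
    return H


rng = np.random.default_rng(0)
N, K = 3, 2
A = np.array([[2.0, 0.5], [0.5, 1.0]])
q = rng.uniform(0.2, 0.8, size=(N, K))

# Invariance: permuting the component vectors does not change the value.
invariance_gap = abs(toy_objective(q, A) - toy_objective(q[[2, 0, 1]], A))

# Hessian with respect to the flattened q, one K-by-K block per component vector.
H = numerical_hessian(lambda x: toy_objective(x.reshape(N, K), A), q.ravel())
off_block_mask = np.kron(np.eye(N), np.ones((K, K))) == 0
max_off_block = np.abs(H[off_block_mask]).max()

# For this toy objective the ith diagonal block is A + diag(1 / q_i).
block_error = max(
    np.abs(H[i * K:(i + 1) * K, i * K:(i + 1) * K] - (A + np.diag(1.0 / q[i]))).max()
    for i in range(N)
)
print(invariance_gap, max_off_block, block_error)
```

All three printed quantities should be close to zero, up to floating-point and finite-difference error.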

2.3. Equilibria with Symmetry

- ;

- ;

- .

- so that for every .

- For B, the M block(s) of the Hessian defined in (9), has dimension 2 with basis vectors . is associated with the crossing eigenvalues, and is associated with the constant zero eigenvalue of B.

- The block(s) of the Hessian , defined in (9), each have a one-dimensional kernel with basis vector .

- The vectors , and are linearly independent.

- The matrix is nonsingular. is the Moore–Penrose inverse of . When , we define .
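The Moore–Penrose inverse mentioned in the last assumption is well defined even when a block is singular. As a minimal sketch, the matrix `B` below is a hypothetical symmetric matrix with a one-dimensional kernel, chosen for illustration and not taken from the paper. The code computes its pseudoinverse with `numpy.linalg.pinv` and checks the four Penrose conditions.

```python
import numpy as np

# Hypothetical symmetric matrix with a one-dimensional kernel spanned by (1, 1, 1).
B = np.array([[2.0, -1.0, -1.0],
              [-1.0, 2.0, -1.0],
              [-1.0, -1.0, 2.0]])
kernel_vector = np.ones(3)

B_pinv = np.linalg.pinv(B)

# The four Penrose conditions that characterize the pseudoinverse.
penrose_ok = (
    np.allclose(B @ B_pinv @ B, B)
    and np.allclose(B_pinv @ B @ B_pinv, B_pinv)
    and np.allclose((B @ B_pinv).T, B @ B_pinv)
    and np.allclose((B_pinv @ B).T, B_pinv @ B)
)

# For a symmetric matrix, the pseudoinverse annihilates the same kernel.
kernel_residual = np.abs(B_pinv @ kernel_vector).max()
print(penrose_ok, kernel_residual)
```

Because this `B` equals 3 times the orthogonal projection onto the complement of its kernel, its pseudoinverse is `B / 9`, which gives a quick sanity check on the numerical result.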

2.4. The Kernel at a Bifurcation

2.5. Liapunov–Schmidt Reduction

- ;

- , , ;

- , , .

- For , not all equal, the value of the cube is

- For , not both equal, and , the value of the cube is

- For and , not both equal, the value of the cube is

- For , not all equal, the value of the cube is

2.6. Isotropy Subgroups of

2.7. Bifurcating Branches

2.8. The Crossing Condition for Annealing Problems

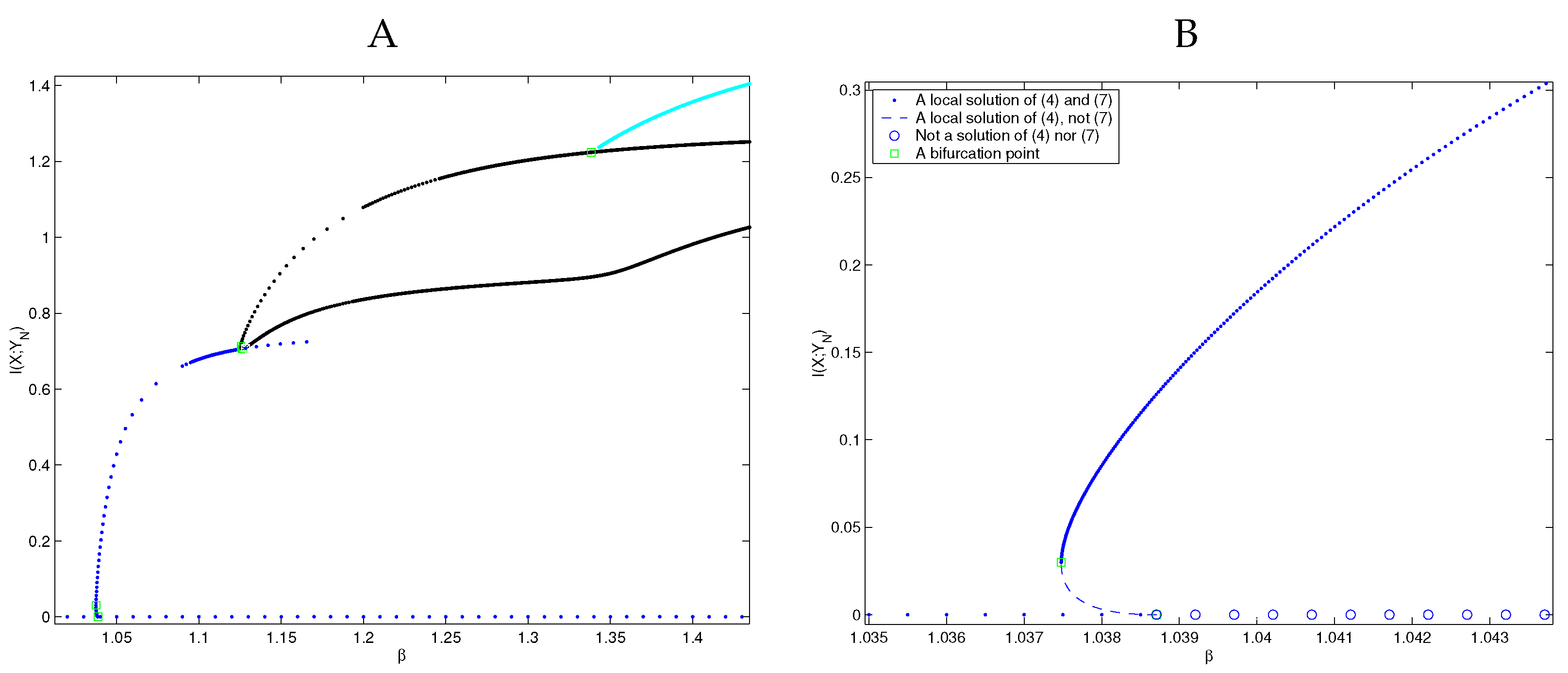

2.9. Bifurcation Type

2.10. Stability and Optimality

2.11. Structure of the Symmetry Projection

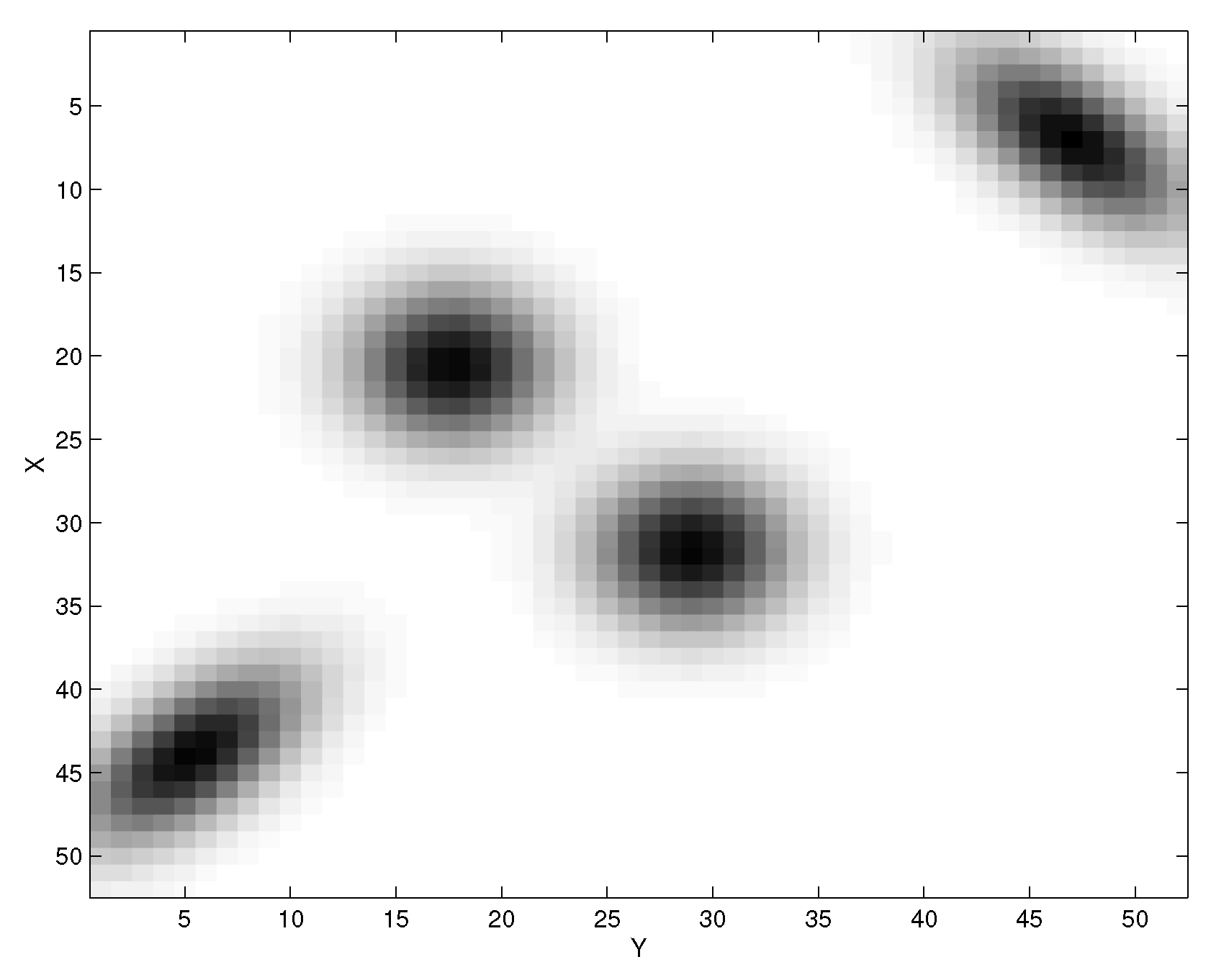

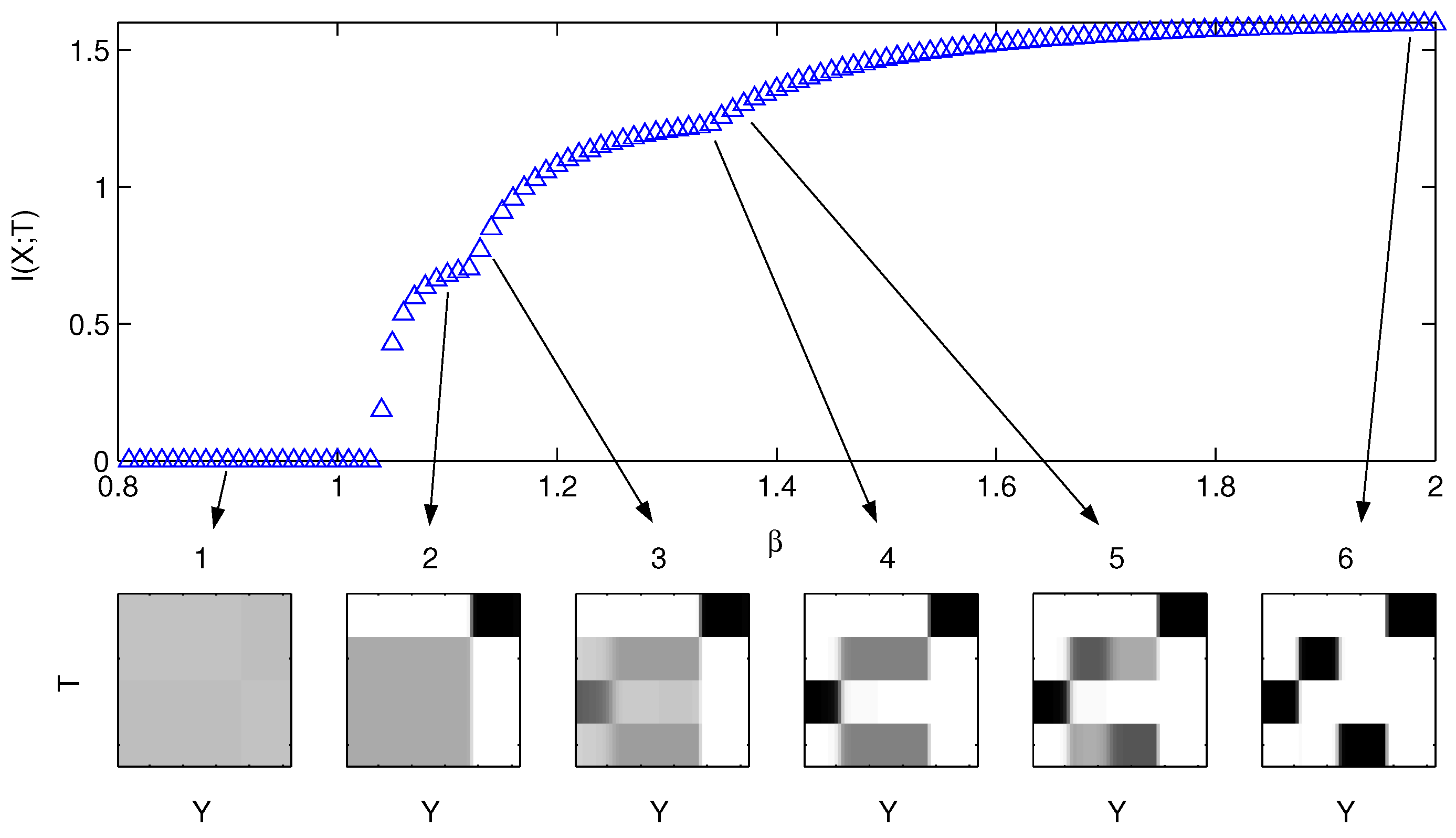

2.12. Visualizations of Sample Results

3. Conclusions and Discussion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Parker, A.E.; Dimitrov, A.G. Symmetry-Breaking Bifurcations of the Information Bottleneck and Related Problems. Entropy 2022, 24, 1231. https://doi.org/10.3390/e24091231
