The Radically Embodied Conscious Cybernetic Bayesian Brain: From Free Energy to Free Will and Back Again

Abstract

1. Introduction

1.1. Descartes’ Errors and Insights

“Any time a theory builder proposes to call any event, state, structure, etc., in a system (say the brain of an organism) a signal or message or command or otherwise endows it with content, he takes out a loan of intelligence. He implicitly posits along with his signals, messages, or commands, something that can serve as a signal reader, message-understander, or commander, else his ‘signals’ will be for naught, will decay unreceived, uncomprehended. This loan must be repaid eventually by finding and analyzing away these readers or comprehenders; for, failing this, the theory will have among its elements unanalyzed man-analogues endowed with enough intelligence to read the signals, etc., and thus the theory will postpone answering the major question: what makes for intelligence?”—Daniel Dennett [1]

- The mind-body problem: Separating bodies and minds as distinct orders of being.

- The theater fallacy: Describing perception in terms of the re-presentation of sensations to inner experiencers.

- The homunculus fallacy: Failing to realize the inadequacy of inner experiencers as explanations, since these would require further experiencers to explain their experiences, resulting in infinite regress.

- Minds are thoroughly embodied, embedded, enacted, and extended, but there are functionally important aspects of mind (e.g., integrative processes supporting consciousness) that do not extend into bodies, nor even throughout the entire brain.

- The brain not only infers mental spaces, but it populates these spaces with representations of sensations and actions, so providing bases for causal reasoning and planning via mental simulations.

- Not only are experiences re-presented to inner experiencers, but these experiencers take the form of embodied person-models with degrees of agency, and even more, these quasi-homunculi form necessary scaffolding for nearly all aspects of mind.

1.2. Radically Embodied Minds

“Now what are space and time? Are they actual entities? Are they only determinations or also relations of things, but still such as would belong to them even if they were not intuited? Or are they such that they belong only to the form of intuition, and therefore to the subjective constitution of our mind, without which these predicates could not be ascribed to any things at all?... Concepts without intuitions are empty, intuitions without concepts are blind… By synthesis, in its most general sense, I understand the act of putting different representations together, and of grasping what is manifold in them in one knowledge… The mind could never think its identity in the manifoldness of its representations… if it did not have before its eyes the identity of its act, whereby it subordinates all… to a transcendental unity… This thoroughgoing synthetic unity of perceptions is the form of experience; it is nothing less than the synthetic unity of appearances in accordance with concepts.”—Immanuel Kant [15]

“We shall never get beyond the representation, i.e. the phenomenon. We shall therefore remain at the outside of things; we shall never be able to penetrate into their inner nature, and investigate what they are in themselves... So far I agree with Kant. But now, as the counterpoise to this truth, I have stressed that other truth that we are not merely the knowing subject, but that we ourselves are also among those realities or entities we require to know, that we ourselves are the thing-in-itself. Consequently, a way from within stands open to us as to that real inner nature of things to which we cannot penetrate from without. It is, so to speak, a subterranean passage, a secret alliance, which, as if by treachery, places us all at once in the fortress that could not be taken by attack from without.”—Arthur Schopenhauer [16]

- Constant availability for observation, even prenatally.

- Multimodal sensory integration allowing for ambiguity reduction in one modality based on information within other modalities (i.e., cross-modal priors).

- Within-body interactions (e.g., thumb sucking; hand–hand interaction; skeletal force transfer).

- Action-driven perception (e.g., efference copies and corollary discharges as prior expectations; hypothesis testing via motion and interaction).

- Affective salience (e.g., body states influencing value signals, so directing attentional and meta-plasticity factors).
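The cross-modal priors item in the list above can be illustrated with the textbook precision-weighted fusion of two independent Gaussian estimates. This is only a minimal sketch under that Gaussian assumption, not a model taken from the paper; the function name `fuse_gaussian_cues` and the example numbers are hypothetical.

```python
def fuse_gaussian_cues(mean_a, var_a, mean_b, var_b):
    """Combine two independent Gaussian estimates of the same quantity.

    Each cue is weighted by its precision (inverse variance), so the more
    reliable modality pulls the fused estimate toward itself.
    """
    precision_a = 1.0 / var_a
    precision_b = 1.0 / var_b
    fused_var = 1.0 / (precision_a + precision_b)
    fused_mean = fused_var * (precision_a * mean_a + precision_b * mean_b)
    return fused_mean, fused_var


# Hypothetical example: a noisy visual estimate and a sharper
# proprioceptive estimate of the same hand position (arbitrary units).
fused_mean, fused_var = fuse_gaussian_cues(10.0, 4.0, 12.0, 1.0)
```

In this sketch the fused variance is always smaller than either input variance, which is one way to read "ambiguity reduction in one modality based on information within other modalities".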

1.3. The Cybernetic Bayesian Brain

“Each movement we make by which we alter the appearance of objects should be thought of as an experiment designed to test whether we have understood correctly the invariant relations of the phenomena before us, that is, their existence in definite spatial relations.”—Hermann Ludwig Ferdinand von Helmholtz [50]

2. From Action to Attention and Back Again

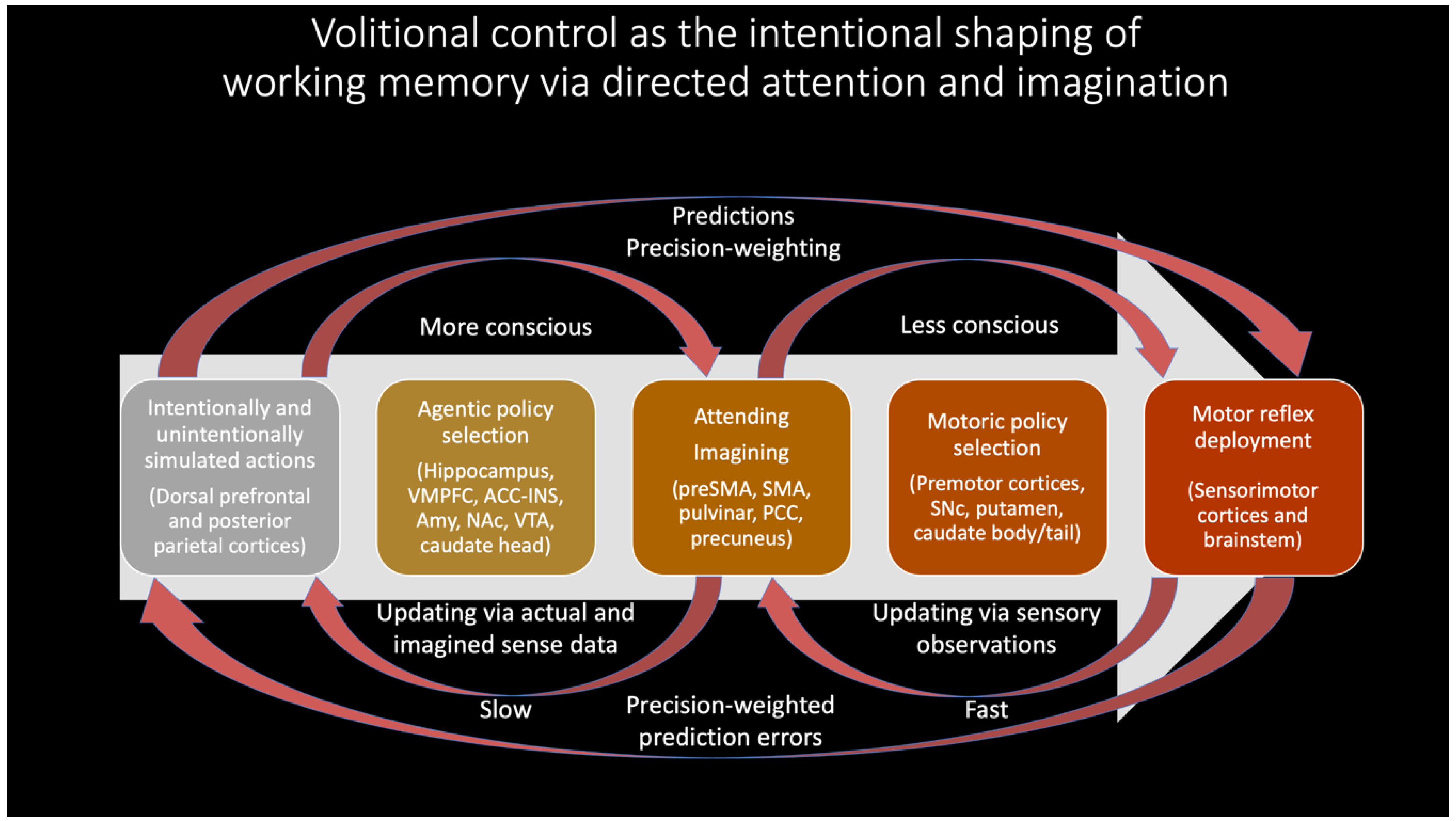

- Much conscious goal-oriented behavior may be realized via iterative comparisons between sensed and imagined states, with predictive processing mechanisms automatically generating sensibly prioritized sub-goals based on prediction errors from these contrasting operations.

- Partially-expressed motor predictions—initially overtly expressed, and later internalized—may provide a basis for all intentionally-directed attention, working memory, and imagination.

- These imaginings may provide a basis for conscious control of overt patterns of enaction, including the pursuit of complex goals.
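The first item in the list above (iterative comparison of sensed and imagined states, with sub-goals prioritized by prediction error) can be sketched as a simple loop. This is a toy illustration under strong assumptions, not the paper's mechanism: states are flat dictionaries of numbers, the largest absolute error is taken as the current sub-goal, and the names `pursue_goal`, `hand_x`, and `grip` are hypothetical.

```python
def pursue_goal(sensed, imagined, step=0.5, tolerance=1e-3, max_iters=100):
    """Iteratively shrink the mismatch between a sensed and an imagined state.

    At each iteration the feature with the largest absolute prediction
    error becomes the current sub-goal and is moved a fraction `step`
    of the way toward its imagined value.
    """
    state = dict(sensed)
    subgoals = []
    for _ in range(max_iters):
        # Prediction errors: imagined (goal) value minus current value.
        errors = {name: imagined[name] - state[name] for name in imagined}
        worst = max(errors, key=lambda name: abs(errors[name]))
        if abs(errors[worst]) < tolerance:
            break  # every remaining error is below tolerance
        subgoals.append(worst)
        state[worst] += step * errors[worst]
    return state, subgoals


final_state, subgoals = pursue_goal(
    sensed={"hand_x": 0.0, "grip": 0.0},
    imagined={"hand_x": 4.0, "grip": 1.0},
)
```

Here the sequence in `subgoals` records which mismatch was prioritized at each step; the largest discrepancy is handled first and attention shifts as errors shrink.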

2.1. Actions from Imaginings

2.2. Attention from Actions

“A good way to begin to consider the overall behavior of the cerebral cortex is to imagine that the front of the brain is ‘looking at’ the sensory systems, most of which are at the back of the brain. This division of labor does not lead to an infinite regress… The hypothesis of the homunculus is very much out of fashion these days, but this is, after all, how everyone thinks of themselves. It would be surprising if this overwhelming illusion did not reflect in some way the general organization of the brain.”—Francis Crick and Christof Koch [6]

2.3. Imaginings from Attention

3. Grounding Intentionality in Virtual Intrabody Interactions and Self-Annihilating Free Energy Gradients

“We have to reject the age-old assumptions that put the body in the world and the seer in the body, or, conversely, the world and the body in the seer as in a box. Where are we to put the limit between the body and the world, since the world is flesh? Where in the body are we to put the seer, since evidently there is in the body only "shadows stuffed with organs," that is, more of the visible? The world seen is not "in" my body, and my body is not "in" the visible world ultimately: as flesh applied to a flesh, the world neither surrounds it nor is surrounded by it. A participation in and kinship with the visible, the vision neither envelops it nor is enveloped by it definitively. The superficial pellicle of the visible is only for my vision and for my body. But the depth beneath this surface contains my body and hence contains my vision. My body as a visible thing is contained within the full spectacle. But my seeing body subtends this visible body, and all the visibles with it. There is reciprocal insertion and intertwining of one in the other...”—Maurice Merleau-Ponty [150]

4. The Emergence of Conscious Teleological Agents

4.1. Generalized Dynamic Cores

“What is the first and most fundamental thing a new-born infant has to do? If one subscribes to the free energy principle, the only thing it has to do is to resolve uncertainty about causes of its exteroceptive, proprioceptive and interoceptive sensations... It is at this point the importance of selfhood emerges – in the sense that the best explanation for the sensations of a sentient creature, immersed in an environment, must entail the distinction between self (creature) and non-self (environment). It follows that the first job of structure learning is to distinguish between the causes of sensations that can be attributed to self and those that cannot… The question posed here is whether a concept or experience of minimal selfhood rests upon selecting (i.e. learning) models that distinguish self from non-self or does it require models that accommodate a partition of agency into self, other, and everything else.”—Karl Friston [88]

“[We] localize awareness of awareness and dream lucidity to the executive functions of the frontal cortex. We hypothesize that activation of this region is critical to self-consciousness — and repudiate any suggestion that ‘there is a little man seated in our frontal cortex’ or that ‘it all comes together’ there. We insist only that without frontal lobe activation the brain is not fully conscious. In summary, we could say, perhaps provocatively, that (self-) consciousness is like a theatre in that one watches something like a play, whenever the frontal lobe is activated. In waking, the ‘play’ includes the outside world. In lucid dreaming the ‘play’ is entirely internal. In both states, the ‘play’ is a model, hence virtual. But it is always physical and is always brain-based.”—Allan Hobson and Karl Friston [11]

4.2. Embodied Self-Models (ESMs) as Cores of Consciousness

4.2.1. The Origins of ESMs

4.2.2. Phenomenal Binding via ESMs

4.2.3. Varieties of ESMs

“We suggest that a useful conceptual space for a notion of the homunculus may be located at the nexus between those many parallel processes that the brain is constantly engaged in, and the input from other people, of top-top interactions. In this understanding, the role of a putative homunculus becomes one of a dual gatekeeper: On one hand, between those many parallel processes and the attended few, on the other hand between one mind and another... [T]he feeling of control and consistency may indeed seem illusionary from an outside perspective. However, from the inside perspective of the individual, it appears to be a very important anchor point both for action and perception. If we did not have the experience of this inner homunculus that is in control of our actions, our sense of self would dissolve into the culture that surrounds us.”—Andreas Roepstorff and Chris Frith [12]

4.3. Free Energy; Will Power; Free Will

4.4. Mental Causation

5. Neurophenomenology of Agency

5.1. Implications for Theories of Consciousness: Somatically-Grounded World Models, Experiential Richness, and Grand Illusions

“For my part, when I enter most intimately into what I call myself, I always stumble on some particular perception or other, of heat or cold, light or shade, love or hatred, pain or pleasure. I never can catch myself at any time without a perception, and never can observe any thing but the perception. When my perceptions are remov’d for any time, as by sound sleep; so long am I insensible of myself, and may truly be said not to exist. And were all my perceptions remov’d by death, and cou’d I neither think, nor feel, nor see, nor love, nor hate after the dissolution of my body, I shou’d be entirely annihilated, nor do I conceive what is farther requisite to make me a perfect non-entity... But setting aside some metaphysicians of this kind, I may venture to affirm of the rest of mankind, that they are nothing but a bundle or collection of different perceptions, which succeed each other with an inconceivable rapidity, and are in a perpetual flux and movement.”—David Hume [316]

5.2. Conscious and Unconscious Cores and Workspaces; Physical Substrates of Agency

- Avoiding excessive exploitation (at the expense of exploration) in action selection (broadly construed to include mental acts with respect to attention and working memory).

- A process for generating novel possibilities as a source of counterfactuals for causal reasoning and planning.

- Game-theoretic considerations such as undermining the ability of rival agents to plan agonistic strategies, potentially even including “adversarial attacks” from the agent itself.
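The first item in the list above (avoiding excessive exploitation at the expense of exploration) is often illustrated with softmax action selection, where a temperature parameter controls how much randomness enters the choice. The sketch below uses that standard technique as a stand-in; it is not claimed to be the mechanism the paper proposes, and the names `softmax_policy`, `sample_action`, and the example values are hypothetical.

```python
import math
import random


def softmax_policy(values, temperature):
    """Return action probabilities from estimated action values.

    A higher `temperature` flattens the distribution (more exploration);
    a lower one concentrates it on the best action (more exploitation).
    """
    scaled = [v / temperature for v in values]
    peak = max(scaled)  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scaled]
    total = sum(weights)
    return [w / total for w in weights]


def sample_action(values, temperature, rng=random):
    """Draw one action index according to the softmax probabilities."""
    probs = softmax_policy(values, temperature)
    return rng.choices(range(len(values)), weights=probs, k=1)[0]


values = [1.0, 1.2, 0.8]  # hypothetical value estimates for three actions
greedy_like = softmax_policy(values, temperature=0.05)
exploratory = softmax_policy(values, temperature=5.0)
```

With a low temperature nearly all probability mass falls on the highest-valued action, while a high temperature spreads it almost evenly, so lower-valued options still get sampled and can serve as a source of novel possibilities.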

5.3. Readiness Potentials and the Willingness to Act

5.4. Qualia Explained?

5.4.1. Emotions and Feelings

5.4.2. What Is Value? Reward Prediction Errors and Self-Annihilating Free Energy Gradients

5.4.3. Curiosity and Play/Joy

5.4.4. Synesthetic Affects

5.4.5. The Computational Neurophenomenology of Desires/Pains as Free Energy Gradients That Become Pleasure through Self-Annihilation

5.4.6. Desiring to Desire; Transforming Pain into Pleasure, and Back Again

5.4.7. Why Conscious Feelings?

5.5. Facing up to the Meta-Problem of Consciousness

6. Conclusions

“The intentionality of all such talk of signals and commands reminds us that rationality is being taken for granted, and in this way shows us where a theory is incomplete. It is this feature that, to my mind, puts a premium on the yet unfinished task of devising a rigorous definition of intentionality, for if we can lay claim to a purely formal criterion of intentional discourse, we will have what amounts to a medium of exchange for assessing theories of behavior. Intentionality abstracts from the inessential details of the various forms intelligence-loans can take (e.g., signal-readers, volition-emitters, librarians in the corridors of memory, egos and superegos) and serves as a reliable means of detecting exactly where a theory is in the red relative to the task of explaining intelligence; wherever a theory relies on a formulation bearing the logical marks of intentionality, there a little man is concealed.”—Daniel Dennett [1]

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Dennett, D. Brainstorms: Philosophical Essays on Mind and Psychology; The MIT Press: Cambridge, MA, USA, 1981; ISBN 978-0-262-54037-7.

- Marr, D. Vision: A Computational Investigation into the Human Representation and Processing of Visual Information; Henry Holt and Company: New York, NY, USA, 1983; ISBN 978-0-7167-1567-2.

- Varela, F.J.; Thompson, E.T.; Rosch, E. The Embodied Mind: Cognitive Science and Human Experience, Revised ed.; The MIT Press: Cambridge, MA, USA, 1992; ISBN 978-0-262-72021-2.

- Rudrauf, D.; Lutz, A.; Cosmelli, D.; Lachaux, J.-P.; Le Van Quyen, M. From Autopoiesis to Neurophenomenology: Francisco Varela’s Exploration of the Biophysics of Being. Biol. Res. 2003, 36, 27–65.

- Clark, A.; Chalmers, D.J. The Extended Mind. Analysis 1998, 58, 7–19.

- Crick, F.; Koch, C. A Framework for Consciousness. Nat. Neurosci. 2003, 6, 119–126.

- Damasio, A. Descartes’ Error: Emotion, Reason, and the Human Brain, 1st ed.; Harper Perennial: New York, NY, USA, 1995; ISBN 0-380-72647-5.

- Dennett, D. Consciousness Explained, 1st ed.; Back Bay Books: New York, NY, USA, 1992; ISBN 0-316-18066-1.

- Dolega, K.; Dewhurst, J. Curtain Call at the Cartesian Theatre. J. Conscious. Stud. 2015, 22, 109–128.

- Forstmann, M.; Burgmer, P. The Cartesian Folk Theater: People Conceptualize Consciousness as a Spatio-Temporally Localized Process in the Human Brain. PsyArXiv 2021.

- Hobson, J.A.; Friston, K.J. A Response to Our Theatre Critics. J. Conscious. Stud. 2016, 23, 245–254.

- Roepstorff, A.; Frith, C. What’s at the Top in the Top-down Control of Action? Script-Sharing and “top-Top” Control of Action in Cognitive Experiments. Psychol. Res. 2004, 68, 189–198.

- Deacon, T.W. Incomplete Nature: How Mind Emerged from Matter, 1st ed.; W. W. Norton & Company: New York, NY, USA, 2011; ISBN 978-0-393-04991-6.

- Dennett, D. From Bacteria to Bach and Back: The Evolution of Minds, 1st ed.; W. W. Norton & Company: New York, NY, USA, 2017; ISBN 978-0-393-24207-2.

- Kant, I. Critique of Pure Reason; Guyer, P., Wood, A.W., Eds.; Cambridge University Press: Cambridge, MA, USA, 1781; ISBN 978-0-521-65729-7.

- Schopenhauer, A. The World as Will and Representation; Courier Corporation: North Chelmsford, MA, USA, 1844; ISBN 978-0-486-13093-4.

- Safron, A. Multilevel Evolutionary Developmental Optimization (MEDO): A Theoretical Framework for Understanding Preferences and Selection Dynamics. arXiv 2019, arXiv:1910.13443.

- Mountcastle, V.B. The Columnar Organization of the Neocortex. Brain J. Neurol. 1997, 120 Pt 4, 701–722.

- Bastos, A.M.; Usrey, W.M.; Adams, R.A.; Mangun, G.R.; Fries, P.; Friston, K.J. Canonical Microcircuits for Predictive Coding. Neuron 2012, 76, 695–711.

- Walsh, K.S.; McGovern, D.P.; Clark, A.; O’Connell, R.G. Evaluating the Neurophysiological Evidence for Predictive Processing as a Model of Perception. Ann. N. Y. Acad. Sci. 2020, 1464, 242–268.

- Bassingthwaighte, J.B. Fractal vascular growth patterns. Acta Stereol. 1992, 11, 305–319.

- Ermentrout, G.B.; Edelstein-Keshet, L. Cellular Automata Approaches to Biological Modeling. J. Theor. Biol. 1993, 160, 97–133.

- Eaton, R.C.; Lee, R.K.; Foreman, M.B. The Mauthner Cell and Other Identified Neurons of the Brainstem Escape Network of Fish. Prog. Neurobiol. 2001, 63, 467–485.

- Lancer, B.H.; Evans, B.J.E.; Fabian, J.M.; O’Carroll, D.C.; Wiederman, S.D. A Target-Detecting Visual Neuron in the Dragonfly Locks on to Selectively Attended Targets. J. Neurosci. 2019, 39, 8497–8509.

- Sculley, D.; Holt, G.; Golovin, D.; Davydov, E.; Phillips, T.; Ebner, D.; Chaudhary, V.; Young, M. Machine Learning: The High Interest Credit Card of Technical Debt. 2014. Available online: https://research.google/pubs/pub43146/ (accessed on 26 May 2021).

- Wolfram, S. A New Kind of Science, 1st ed.; Wolfram Media: Champaign, IL, USA, 2002; ISBN 978-1-57955-008-0.

- Crispo, E. The Baldwin Effect and Genetic Assimilation: Revisiting Two Mechanisms of Evolutionary Change Mediated by Phenotypic Plasticity. Evol. Int. J. Org. Evol. 2007, 61, 2469–2479.

- Jaeger, J.; Monk, N. Bioattractors: Dynamical Systems Theory and the Evolution of Regulatory Processes. J. Physiol. 2014, 592, 2267–2281.

- Waddington, C.H. Canalization of development and the inheritance of acquired characters. Nature 1942, 150, 150563a0.

- Hofsten, C.V.; Feng, Q.; Spelke, E.S. Object Representation and Predictive Action in Infancy. Dev. Sci. 2000, 3, 193–205.

- Spelke, E.S.; Kinzler, K.D. Core Knowledge. Dev. Sci. 2007, 10, 89–96.

- Partanen, E.; Kujala, T.; Näätänen, R.; Liitola, A.; Sambeth, A.; Huotilainen, M. Learning-Induced Neural Plasticity of Speech Processing before Birth. Proc. Natl. Acad. Sci. USA 2013, 110, 15145–15150.

- Lake, B.M.; Ullman, T.D.; Tenenbaum, J.B.; Gershman, S.J. Building Machines That Learn and Think like People. Behav. Brain Sci. 2017, 40.

- Tenenbaum, J.B.; Kemp, C.; Griffiths, T.L.; Goodman, N.D. How to Grow a Mind: Statistics, Structure, and Abstraction. Science 2011, 331, 1279–1285.

- Zador, A.M. A Critique of Pure Learning and What Artificial Neural Networks Can Learn from Animal Brains. Nat. Commun. 2019, 10, 1–7.

- Conant, R.C.; Ashby, W.R. Every Good Regulator of a System Must Be a Model of That System. Int. J. Syst. Sci. 1970, 1, 89–97.

- Mansell, W. Control of Perception Should Be Operationalized as a Fundamental Property of the Nervous System. Top. Cogn. Sci. 2011, 3, 257–261.

- Pfeifer, R.; Bongard, J. How the Body Shapes the Way We Think: A New View of Intelligence; A Bradford Book: Cambridge, MA, USA, 2006; ISBN 978-0-262-16239-5.

- Rochat, P. Emerging Self-Concept. In The Wiley-Blackwell Handbook of Infant Development; Bremner, J.G., Wachs, T.D., Eds.; Wiley-Blackwell: Hoboken, NJ, USA, 2010; pp. 320–344. ISBN 978-1-4443-2756-4.

- Bingham, G.P.; Snapp-Childs, W.; Zhu, Q. Information about Relative Phase in Bimanual Coordination Is Modality Specific (Not Amodal), but Kinesthesis and Vision Can Teach One Another. Hum. Mov. Sci. 2018, 60, 98–106.

- Snapp-Childs, W.; Wilson, A.D.; Bingham, G.P. Transfer of Learning between Unimanual and Bimanual Rhythmic Movement Coordination: Transfer Is a Function of the Task Dynamic. Exp. Brain Res. 2015, 233, 2225–2238.

- Zhu, Q.; Mirich, T.; Huang, S.; Snapp-Childs, W.; Bingham, G.P. When Kinesthetic Information Is Neglected in Learning a Novel Bimanual Rhythmic Coordination. Atten. Percept. Psychophys. 2017, 79, 1830–1840.

- Tani, J. Exploring Robotic Minds: Actions, Symbols, and Consciousness as Self-Organizing Dynamic Phenomena; Oxford University Press: Oxford, UK, 2016.

- Buckner, R.L.; Krienen, F.M. The Evolution of Distributed Association Networks in the Human Brain. Trends Cogn. Sci. 2013, 17, 648–665.

- Barsalou, L. Grounded Cognition. Annu. Rev. Psychol. 2008, 59, 617–645.

- Barsalou, L. Perceptual Symbol Systems. Behav. Brain Sci. 1999, 22, 577–609; discussion 610–660.

- Lakoff, G. Mapping the Brain’s Metaphor Circuitry: Metaphorical Thought in Everyday Reason. Front. Hum. Neurosci. 2014, 8.

- Lakoff, G.; Johnson, M. Philosophy in the Flesh: The Embodied Mind and Its Challenge to Western Thought; Basic Books: New York, NY, USA, 1999; ISBN 0-465-05674-1.

- Metzinger, T. The Ego Tunnel: The Science of the Mind and the Myth of the Self, 1st ed.; Basic Books: New York, NY, USA, 2009; ISBN 978-0-465-04567-9.

- Helmholtz, H. The Facts in Perception. In Selected Writings of Hermann Helmholtz; Kahl, R., Ed.; Wesleyan University Press: Middletown, CT, USA, 1878.

- McGurk, H.; MacDonald, J. Hearing Lips and Seeing Voices. Nature 1976, 264, 746–748.

- Nour, M.M.; Nour, J.M. Perception, Illusions and Bayesian Inference. Psychopathology 2015, 48, 217–221.

- Harman, G.H. The Inference to the Best Explanation. Philos. Rev. 1965, 74, 88–95.

- Friston, K. The Free-Energy Principle: A Unified Brain Theory? Nat. Rev. Neurosci. 2010, 11, 127–138.

- Friston, K.J.; FitzGerald, T.; Rigoli, F.; Schwartenbeck, P.; Pezzulo, G. Active Inference: A Process Theory. Neural Comput. 2017, 29, 1–49.

- Friston, K.J.; Kilner, J.; Harrison, L. A Free Energy Principle for the Brain. J. Physiol. Paris 2006, 100, 70–87.

- Friston, K. Life as We Know It. J. R. Soc. Interface 2013, 10, 20130475.

- Ramstead, M.J.D.; Badcock, P.B.; Friston, K.J. Answering Schrödinger’s Question: A Free-Energy Formulation. Phys. Life Rev. 2017.

- Safron, A. Bayesian Analogical Cybernetics. arXiv 2019, arXiv:1911.02362.

- Safron, A.; DeYoung, C. Integrating Cybernetic Big Five Theory with the Free Energy Principle: A New Strategy for Modeling Personalities as Complex Systems. PsyArXiv 2020.

- Seth, A.K. The Cybernetic Bayesian Brain; Open MIND; MIND Group: Frankfurt am Main, Germany, 2014; ISBN 978-3-95857-010-8.

- Hohwy, J. The Self-Evidencing Brain. Noûs 2016, 50, 259–285.

- Damasio, A.R. The Strange Order of Things: Life, Feeling, and the Making of Cultures; Pantheon Books: New York, NY, USA, 2018; ISBN 978-0-307-90875-9.

- Friston, K.J. A Free Energy Principle for a Particular Physics. arXiv 2019, arXiv:1906.10184.

- Schopenhauer, A. Arthur Schopenhauer: The World as Will and Presentation: Volume I, 1st ed.; Kolak, D., Ed.; Routledge: New York, NY, USA, 1818; ISBN 978-0-321-35578-2.

- Spinoza, B. de. Ethics; Penguin Classics: London, UK, 1677; ISBN 978-0-14-043571-9.

- Fuster, J.M. Cortex and Memory: Emergence of a New Paradigm. J. Cogn. Neurosci. 2009, 21, 2047–2072.

- Hayek, F.A. The Sensory Order: An Inquiry into the Foundations of Theoretical Psychology; University of Chicago Press: Chicago, IL, USA, 1952; ISBN 0-226-32094-4.

- Baldassano, C.; Chen, J.; Zadbood, A.; Pillow, J.W.; Hasson, U.; Norman, K.A. Discovering Event Structure in Continuous Narrative Perception and Memory. Neuron 2017, 95, 709–721.e5.

- Friston, K.; Buzsáki, G. The Functional Anatomy of Time: What and When in the Brain. Trends Cogn. Sci. 2016, 20, 500–511.

- Hawkins, J.; Blakeslee, S. On Intelligence; Adapted; Times Books: New York, NY, USA, 2004; ISBN 0-8050-7456-2.

- Adams, R.; Shipp, S.; Friston, K.J. Predictions Not Commands: Active Inference in the Motor System. Brain Struct. Funct. 2013, 218, 611–643.

- Safron, A. An Integrated World Modeling Theory (IWMT) of Consciousness: Combining Integrated Information and Global Neuronal Workspace Theories With the Free Energy Principle and Active Inference Framework; Toward Solving the Hard Problem and Characterizing Agentic Causation. Front. Artif. Intell. 2020, 3.

- Safron, A. Integrated world modeling theory (IWMT) implemented: Towards reverse engineering consciousness with the free energy principle and active inference. PsyArXiv 2020.

- Seth, A.K.; Friston, K.J. Active Interoceptive Inference and the Emotional Brain. Phil. Trans. R. Soc. B 2016, 371, 20160007.

- Hesp, C.; Smith, R.; Allen, M.; Friston, K.; Ramstead, M. Deeply felt affect: The emergence of valence in deep active inference. PsyArXiv 2019.

- Parr, T.; Friston, K.J. Working Memory, Attention, and Salience in Active Inference. Sci. Rep. 2017, 7, 14678.

- Markram, K.; Markram, H. The Intense World Theory—A Unifying Theory of the Neurobiology of Autism. Front. Hum. Neurosci. 2010, 4, 224.

- Pellicano, E.; Burr, D. When the World Becomes “Too Real”: A Bayesian Explanation of Autistic Perception. Trends Cogn. Sci. 2012, 16, 504–510.

- Van de Cruys, S.; Evers, K.; Van der Hallen, R.; Van Eylen, L.; Boets, B.; de-Wit, L.; Wagemans, J. Precise Minds in Uncertain Worlds: Predictive Coding in Autism. Psychol. Rev. 2014, 121, 649–675.

- Friston, K. Hallucinations and Perceptual Inference. Behav. Brain Sci. 2005, 28, 764–766.

- Horga, G.; Schatz, K.C.; Abi-Dargham, A.; Peterson, B.S. Deficits in Predictive Coding Underlie Hallucinations in Schizophrenia. J. Neurosci. 2014, 34, 8072–8082.

- Sterzer, P.; Adams, R.A.; Fletcher, P.; Frith, C.; Lawrie, S.M.; Muckli, L.; Petrovic, P.; Uhlhaas, P.; Voss, M.; Corlett, P.R. The Predictive Coding Account of Psychosis. Biol. Psychiatry 2018, 84, 634–643.

- Smith, L.B.; Jayaraman, S.; Clerkin, E.; Yu, C. The Developing Infant Creates a Curriculum for Statistical Learning. Trends Cogn. Sci. 2018, 22, 325–336.

- Emerson, C. The Outer Word and Inner Speech: Bakhtin, Vygotsky, and the Internalization of Language. Crit. Inq. 1983, 10, 245–264.

- Piaget, J. The Role of Action in the Development of Thinking. In Knowledge and Development; Springer: Boston, MA, USA, 1977; pp. 17–42. ISBN 978-1-4684-2549-9.

- Ciaunica, A.; Constant, A.; Preissl, H.; Fotopoulou, A. The First Prior: From Co-Embodiment to Co-Homeostasis in Early Life. PsyArXiv 2021.

- Friston, K.J. Self-Evidencing Babies: Commentary on “Mentalizing Homeostasis: The Social Origins of Interoceptive Inference” by Fotopoulou & Tsakiris. Neuropsychoanalysis 2017, 19, 43–47.

- Bruineberg, J.; Rietveld, E. Self-Organization, Free Energy Minimization, and Optimal Grip on a Field of Affordances. Front. Hum. Neurosci. 2014, 8, 599.

- Allen, M.; Tsakiris, M. The Body as First Prior: Interoceptive Predictive Processing and the Primacy of Self-Models. In The Interoceptive Mind: From Homeostasis to Awareness; Tsakiris, M., De Preester, H., Eds.; Oxford University Press: Oxford, UK, 2018; pp. 27–45.

- Ciaunica, A.; Safron, A.; Delafield-Butt, J. Back to square one: From embodied experiences in utero to theories of consciousness. PsyArXiv 2021.

- Fotopoulou, A.; Tsakiris, M. Mentalizing Homeostasis: The Social Origins of Interoceptive Inference-Replies to Commentaries. Neuropsychoanalysis 2017, 19, 71–76.

- Palmer, C.J.; Seth, A.K.; Hohwy, J. The Felt Presence of Other Minds: Predictive Processing, Counterfactual Predictions, and Mentalising in Autism. Conscious. Cogn. 2015, 36, 376–389.

- Cisek, P. Cortical Mechanisms of Action Selection: The Affordance Competition Hypothesis. Philos. Trans. R. Soc. B Biol. Sci. 2007, 362, 1585–1599.

- Gibson, J.J. “The Theory of Affordances,” in Perceiving, Acting, and Knowing. Towards an Ecological Psychology; John Wiley & Sons Inc.: Hoboken, NJ, USA, 1977.

- Reed, E. James Gibson’s ecological approach to cognition. In Cognitive Psychology in Question; Costall, A., Still, A., Eds.; St Martin’s Press: New York, NY, USA, 1991; pp. 171–197.

- Hofstadter, D.; Sander, E. Surfaces and Essences: Analogy as the Fuel and Fire of Thinking, 1st ed.; Basic Books: New York, NY, USA, 2013; ISBN 978-0-465-01847-5.

- Haken, H. Synergetics of the Brain: An Outline of Some Basic Ideas. In Induced Rhythms in the Brain; Başar, E., Bullock, T.H., Eds.; Brain Dynamics; Birkhäuser Boston: Boston, MA, USA, 1992; pp. 417–421. ISBN 978-1-4757-1281-0.

- Friston, K.J.; Rigoli, F.; Ognibene, D.; Mathys, C.; Fitzgerald, T.; Pezzulo, G. Active Inference and Epistemic Value. Cogn. Neurosci. 2015, 6, 187–214.

- Dreyfus, H.L. Why Heideggerian AI Failed and How Fixing It Would Require Making It More Heideggerian. Philos. Psychol. 2007, 20, 247–268.

- Husserl, E. The Crisis of European Sciences and Transcendental Phenomenology: An Introduction to Phenomenological Philosophy; Northwestern University Press: Evanston, IL, USA, 1936; ISBN 978-0-8101-0458-7.

- Shanahan, M. Embodiment and the Inner Life: Cognition and Consciousness in the Space of Possible Minds, 1st ed.; Oxford University Press: Oxford, UK; New York, NY, USA, 2010; ISBN 978-0-19-922655-9.

- Williams, J.; Störmer, V.S. Working Memory: How Much Is It Used in Natural Behavior? Curr. Biol. 2021, 31, R205–R206.

- Dehaene, S.; Changeux, J.-P. Experimental and Theoretical Approaches to Conscious Processing. Neuron 2011, 70, 200–227.

- Ho, M.K.; Abel, D.; Correa, C.G.; Littman, M.L.; Cohen, J.D.; Griffiths, T.L. Control of Mental Representations in Human Planning. arXiv 2021, arXiv:2105.06948.

- Parr, T.; Friston, K.J. The Discrete and Continuous Brain: From Decisions to Movement-And Back Again. Neural Comput. 2018, 30, 2319–2347.

- Hassabis, D.; Kumaran, D.; Summerfield, C.; Botvinick, M. Neuroscience-Inspired Artificial Intelligence. Neuron 2017, 95, 245–258.

- Latash, M.L. Motor Synergies and the Equilibrium-Point Hypothesis. Motor Control 2010, 14, 294–322.

- Hipólito, I.; Baltieri, M.; Friston, K.; Ramstead, M.J.D. Embodied Skillful Performance: Where the Action Is. Synthese 2021.

- Mannella, F.; Gurney, K.; Baldassarre, G. The Nucleus Accumbens as a Nexus between Values and Goals in Goal-Directed Behavior: A Review and a New Hypothesis. Front. Behav. Neurosci. 2013, 7, 135.

- James, W. The Principles of Psychology, Vol. 1, Reprint edition; Dover Publications: New York, NY, USA, 1890; ISBN 978-0-486-20381-2.

- Shin, Y.K.; Proctor, R.W.; Capaldi, E.J. A Review of Contemporary Ideomotor Theory. Psychol. Bull. 2010, 136, 943–974.

- Woodworth, R.S. The Accuracy of Voluntary Movement. Psychol. Rev. Monogr. Suppl. 1899, 3, i–114.

- Brown, H.; Friston, K.; Bestmann, S. Active Inference, Attention, and Motor Preparation. Front. Psychol. 2011, 2, 218.

- Menon, V.; Uddin, L.Q. Saliency, Switching, Attention and Control: A Network Model of Insula Function. Brain Struct. Funct. 2010, 214, 655–667.

- Caporale, N.; Dan, Y. Spike Timing-Dependent Plasticity: A Hebbian Learning Rule. Annu. Rev. Neurosci. 2008, 31, 25–46.

- Markram, H.; Gerstner, W.; Sjöström, P.J. A History of Spike-Timing-Dependent Plasticity. Front. Synaptic Neurosci. 2011, 3, 4.

- Graziano, M.S.A. Rethinking Consciousness: A Scientific Theory of Subjective Experience, 1st ed.; W. W. Norton & Company: New York, NY, USA, 2019; ISBN 978-0-393-65261-1. [Google Scholar]

- Pearl, J.; Mackenzie, D. The Book of Why: The New Science of Cause and Effect; Basic Books: New York, NY, USA, 2018; ISBN 978-0-465-09761-6. [Google Scholar]

- Vygotsky, L.S. Thought and Language, revised ed.; Kozulin, A., Ed.; The MIT Press: Cambridge, MA, USA, 1934; ISBN 978-0-262-72010-6. [Google Scholar]

- Tomasello, M. A Natural History of Human Thinking; Harvard University Press: Cambridge, MA, USA, 2014; ISBN 978-0-674-72636-9. [Google Scholar]

- Rizzolatti, G.; Riggio, L.; Dascola, I.; Umiltà, C. Reorienting Attention across the Horizontal and Vertical Meridians: Evidence in Favor of a Premotor Theory of Attention. Neuropsychologia 1987, 25, 31–40. [Google Scholar] [CrossRef]

- Desimone, R.; Duncan, J. Neural Mechanisms of Selective Visual Attention. Annu. Rev. Neurosci. 1995, 18, 193–222. [Google Scholar] [CrossRef]

- Marvel, C.L.; Morgan, O.P.; Kronemer, S.I. How the Motor System Integrates with Working Memory. Neurosci. Biobehav. Rev. 2019, 102, 184–194. [Google Scholar] [CrossRef] [PubMed]

- Veniero, D.; Gross, J.; Morand, S.; Duecker, F.; Sack, A.T.; Thut, G. Top-down Control of Visual Cortex by the Frontal Eye Fields through Oscillatory Realignment. Nat. Commun. 2021, 12, 1757. [Google Scholar] [CrossRef]

- Liang, W.-K.; Tseng, P.; Yeh, J.-R.; Huang, N.E.; Juan, C.-H. Frontoparietal Beta Amplitude Modulation and Its Interareal Cross-Frequency Coupling in Visual Working Memory. Neuroscience 2021, 460, 69–87. [Google Scholar] [CrossRef]

- Watanabe, T.; Mima, T.; Shibata, S.; Kirimoto, H. Midfrontal Theta as Moderator between Beta Oscillations and Precision Control. NeuroImage 2021, 118022. [Google Scholar] [CrossRef] [PubMed]

- Landau, S.M.; Lal, R.; O’Neil, J.P.; Baker, S.; Jagust, W.J. Striatal Dopamine and Working Memory. Cereb. Cortex 2009, 19, 445–454. [Google Scholar] [CrossRef] [PubMed]

- Baars, B.J.; Franklin, S.; Ramsoy, T.Z. Global Workspace Dynamics: Cortical “Binding and Propagation” Enables Conscious Contents. Front. Psychol. 2013, 4. [Google Scholar] [CrossRef]

- Craig, A.D.B. How Do You Feel--Now? The Anterior Insula and Human Awareness. Nat. Rev. Neurosci. 2009, 10, 59–70. [Google Scholar] [CrossRef]

- Estefan, D.P.; Zucca, R.; Arsiwalla, X.; Principe, A.; Zhang, H.; Rocamora, R.; Axmacher, N.; Verschure, P.F.M.J. Volitional Learning Promotes Theta Phase Coding in the Human Hippocampus. Proc. Natl. Acad. Sci. USA 2021, 118. [Google Scholar] [CrossRef]

- Herzog, M.H.; Kammer, T.; Scharnowski, F. Time Slices: What Is the Duration of a Percept? PLoS Biol. 2016, 14, e1002433. [Google Scholar] [CrossRef] [PubMed]

- Canolty, R.T.; Knight, R.T. The Functional Role of Cross-Frequency Coupling. Trends Cogn. Sci. 2010, 14, 506–515. [Google Scholar] [CrossRef]

- Sweeney-Reed, C.M.; Zaehle, T.; Voges, J.; Schmitt, F.C.; Buentjen, L.; Borchardt, V.; Walter, M.; Hinrichs, H.; Heinze, H.-J.; Rugg, M.D.; et al. Anterior Thalamic High Frequency Band Activity Is Coupled with Theta Oscillations at Rest. Front. Hum. Neurosci. 2017, 11. [Google Scholar] [CrossRef]

- Hassabis, D.; Kumaran, D.; Vann, S.D.; Maguire, E.A. Patients with Hippocampal Amnesia Cannot Imagine New Experiences. Proc. Natl. Acad. Sci. USA 2007, 104, 1726–1731. [Google Scholar] [CrossRef]

- Schacter, D.L.; Addis, D.R. On the Nature of Medial Temporal Lobe Contributions to the Constructive Simulation of Future Events. Philos. Trans. R. Soc. Lond. B. Biol. Sci. 2009, 364, 1245–1253. [Google Scholar] [CrossRef] [PubMed]

- MacKay, D.G. Remembering: What 50 Years of Research with Famous Amnesia Patient H. M. Can Teach Us about Memory and How It Works; Prometheus Books: Amherst, NY, USA, 2019; ISBN 978-1-63388-407-6. [Google Scholar]

- Voss, J.L.; Cohen, N.J. Hippocampal-Cortical Contributions to Strategic Exploration during Perceptual Discrimination. Hippocampus 2017, 27, 642–652. [Google Scholar] [CrossRef]

- Koster, R.; Chadwick, M.J.; Chen, Y.; Berron, D.; Banino, A.; Düzel, E.; Hassabis, D.; Kumaran, D. Big-Loop Recurrence within the Hippocampal System Supports Integration of Information across Episodes. Neuron 2018, 99, 1342–1354.e6. [Google Scholar] [CrossRef]

- Rodriguez-Larios, J.; Faber, P.; Achermann, P.; Tei, S.; Alaerts, K. From Thoughtless Awareness to Effortful Cognition: Alpha–Theta Cross-Frequency Dynamics in Experienced Meditators during Meditation, Rest and Arithmetic. Sci. Rep. 2020, 10, 5419. [Google Scholar] [CrossRef]

- Hasz, B.M.; Redish, A.D. Spatial Encoding in Dorsomedial Prefrontal Cortex and Hippocampus Is Related during Deliberation. Hippocampus 2020, 30, 1194–1208. [Google Scholar] [CrossRef] [PubMed]

- Kunz, L.; Wang, L.; Lachner-Piza, D.; Zhang, H.; Brandt, A.; Dümpelmann, M.; Reinacher, P.C.; Coenen, V.A.; Chen, D.; Wang, W.-X.; et al. Hippocampal Theta Phases Organize the Reactivation of Large-Scale Electrophysiological Representations during Goal-Directed Navigation. Sci. Adv. 2019, 5, eaav8192. [Google Scholar] [CrossRef] [PubMed]

- Hassabis, D.; Spreng, R.N.; Rusu, A.A.; Robbins, C.A.; Mar, R.A.; Schacter, D.L. Imagine All the People: How the Brain Creates and Uses Personality Models to Predict Behavior. Cereb. Cortex 2014, 24, 1979–1987. [Google Scholar] [CrossRef]

- Zheng, A.; Montez, D.F.; Marek, S.; Gilmore, A.W.; Newbold, D.J.; Laumann, T.O.; Kay, B.P.; Seider, N.A.; Van, A.N.; Hampton, J.M.; et al. Parallel Hippocampal-Parietal Circuits for Self- and Goal-Oriented Processing. bioRxiv 2020, 2020.12.01.395210. [Google Scholar] [CrossRef]

- Ijspeert, A.J.; Crespi, A.; Ryczko, D.; Cabelguen, J.-M. From Swimming to Walking with a Salamander Robot Driven by a Spinal Cord Model. Science 2007, 315, 1416–1420. [Google Scholar] [CrossRef] [PubMed]

- Di Lallo, A.; Catalano, M.G.; Garabini, M.; Grioli, G.; Gabiccini, M.; Bicchi, A. Dynamic Morphological Computation Through Damping Design of Soft Continuum Robots. Front. Robot. AI 2019, 6. [Google Scholar] [CrossRef]

- Othayoth, R.; Thoms, G.; Li, C. An Energy Landscape Approach to Locomotor Transitions in Complex 3D Terrain. Proc. Natl. Acad. Sci. USA 2020, 117, 14987–14995. [Google Scholar] [CrossRef] [PubMed]

- Clark, A. Whatever next? Predictive Brains, Situated Agents, and the Future of Cognitive Science. Behav. Brain Sci. 2013, 36, 181–204. [Google Scholar] [CrossRef] [PubMed]

- Constant, A.; Ramstead, M.J.D.; Veissière, S.P.L.; Campbell, J.O.; Friston, K.J. A Variational Approach to Niche Construction. J. R. Soc. Interface 2018, 15. [Google Scholar] [CrossRef] [PubMed]

- Merleau-Ponty, M. The Visible and the Invisible: Followed by Working Notes; Northwestern University Press: Evanston, IL, USA, 1968; ISBN 978-0-8101-0457-0. [Google Scholar]

- Araya, J.M. Emotion and the predictive mind: Emotions as (almost) drives. Revista de Filosofia Aurora 2019. [Google Scholar] [CrossRef]

- Craig, A.D. A New View of Pain as a Homeostatic Emotion. Trends Neurosci. 2003, 26, 303–307. [Google Scholar] [CrossRef]

- Critchley, H.D.; Garfinkel, S.N. Interoception and Emotion. Curr. Opin. Psychol. 2017, 17, 7–14. [Google Scholar] [CrossRef]

- Seth, A.K.; Suzuki, K.; Critchley, H.D. An Interoceptive Predictive Coding Model of Conscious Presence. Front. Psychol. 2011, 2, 395. [Google Scholar] [CrossRef]

- Parr, T.; Limanowski, J.; Rawji, V.; Friston, K. The Computational Neurology of Movement under Active Inference. Brain J. Neurol. 2021. [Google Scholar] [CrossRef]

- Damasio, A. The Feeling of What Happens: Body and Emotion in the Making of Consciousness, 1st ed.; Mariner Books: Boston, MA, USA, 2000; ISBN 0-15-601075-5. [Google Scholar]

- Cappuccio, M.L.; Kirchhoff, M.D.; Alnajjar, F.; Tani, J. Unfulfilled Prophecies in Sport Performance: Active Inference and the Choking Effect. J. Conscious. Stud. 2019, 27, 152–184. [Google Scholar]

- Sengupta, B.; Tozzi, A.; Cooray, G.K.; Douglas, P.K.; Friston, K.J. Towards a Neuronal Gauge Theory. PLoS Biol. 2016, 14, e1002400. [Google Scholar] [CrossRef]

- Bartolomei, F.; Lagarde, S.; Scavarda, D.; Carron, R.; Bénar, C.G.; Picard, F. The Role of the Dorsal Anterior Insula in Ecstatic Sensation Revealed by Direct Electrical Brain Stimulation. Brain Stimul. Basic Transl. Clin. Res. Neuromodulation 2019, 12, 1121–1126. [Google Scholar] [CrossRef]

- Belin-Rauscent, A.; Daniel, M.-L.; Puaud, M.; Jupp, B.; Sawiak, S.; Howett, D.; McKenzie, C.; Caprioli, D.; Besson, M.; Robbins, T.W.; et al. From Impulses to Maladaptive Actions: The Insula Is a Neurobiological Gate for the Development of Compulsive Behavior. Mol. Psychiatry 2016, 21, 491–499. [Google Scholar] [CrossRef]

- Campbell, M.E.J.; Nguyen, V.T.; Cunnington, R.; Breakspear, M. Insula Cortex Gates the Interplay of Action Observation and Preparation for Controlled Imitation. bioRxiv 2021. [Google Scholar] [CrossRef]

- Rueter, A.R.; Abram, S.V.; MacDonald, A.W.; Rustichini, A.; DeYoung, C.G. The Goal Priority Network as a Neural Substrate of Conscientiousness. Hum. Brain Mapp. 2018, 39, 3574–3585. [Google Scholar] [CrossRef]

- Park, H.-D.; Barnoud, C.; Trang, H.; Kannape, O.A.; Schaller, K.; Blanke, O. Breathing Is Coupled with Voluntary Action and the Cortical Readiness Potential. Nat. Commun. 2020, 11, 1–8. [Google Scholar] [CrossRef]

- Zhou, Y.; Friston, K.J.; Zeidman, P.; Chen, J.; Li, S.; Razi, A. The Hierarchical Organization of the Default, Dorsal Attention and Salience Networks in Adolescents and Young Adults. Cereb. Cortex 2018, 28, 726–737. [Google Scholar] [CrossRef] [PubMed]

- Smigielski, L.; Scheidegger, M.; Kometer, M.; Vollenweider, F.X. Psilocybin-Assisted Mindfulness Training Modulates Self-Consciousness and Brain Default Mode Network Connectivity with Lasting Effects. NeuroImage 2019, 196, 207–215. [Google Scholar] [CrossRef] [PubMed]

- Lutz, A.; Mattout, J.; Pagnoni, G. The Epistemic and Pragmatic Value of Non-Action: A Predictive Coding Perspective on Meditation. Curr. Opin. Psychol. 2019, 28, 166–171. [Google Scholar] [CrossRef] [PubMed]

- Deane, G.; Miller, M.; Wilkinson, S. Losing Ourselves: Active Inference, Depersonalization, and Meditation. Front. Psychol. 2020, 11. [Google Scholar] [CrossRef] [PubMed]

- Block, N. Consciousness and Cognitive Access. Proc. Aristot. Soc. 2008, 108, 289–317. [Google Scholar]

- O’Regan, J.K.; Noë, A. A Sensorimotor Account of Vision and Visual Consciousness. Behav. Brain Sci. 2001, 24, 939–973; discussion 973–1031. [Google Scholar] [CrossRef]

- van den Heuvel, M.P.; Sporns, O. Rich-Club Organization of the Human Connectome. J. Neurosci. 2011, 31, 15775–15786. [Google Scholar] [CrossRef] [PubMed]

- Tononi, G.; Edelman, G. Consciousness and Complexity. Science 1998, 282, 1846–1851. [Google Scholar] [CrossRef] [PubMed]

- Baars, B.J. In the Theater of Consciousness: The Workspace of the Mind, Reprint edition; Oxford University Press: New York, NY, USA, 2001; ISBN 978-0-19-514703-2. [Google Scholar]

- Tononi, G. An Information Integration Theory of Consciousness. BMC Neurosci. 2004, 5, 42. [Google Scholar] [CrossRef] [PubMed]

- Kirchhoff, M.D.; Kiverstein, J. Extended Consciousness and Predictive Processing: A Third Wave View, 1st ed.; Routledge: New York, NY, USA, 2019; ISBN 978-1-138-55681-2. [Google Scholar]

- Buzsáki, G. Neural Syntax: Cell Assemblies, Synapsembles, and Readers. Neuron 2010, 68, 362–385. [Google Scholar] [CrossRef]

- Buzsáki, G.; Watson, B.O. Brain Rhythms and Neural Syntax: Implications for Efficient Coding of Cognitive Content and Neuropsychiatric Disease. Dialogues Clin. Neurosci. 2012, 14, 345–367. [Google Scholar]

- Ramstead, M.J.D.; Kirchhoff, M.D.; Friston, K.J. A Tale of Two Densities: Active Inference Is Enactive Inference. Available online: http://philsci-archive.pitt.edu/16167/ (accessed on 12 December 2019).

- Friedman, D.; Tschantz, A.; Ramstead, M.; Friston, K.; Constant, A. Active Inferants: The Basis for an Active Inference Framework for Ant Colony Behavior. Front. Behav. Neurosci. 2020. [Google Scholar] [CrossRef]

- LeDoux, J. The Deep History of Ourselves: The Four-Billion-Year Story of How We Got Conscious Brains; Penguin Books: London, UK, 2019. [Google Scholar]

- Tsakiris, M. The Multisensory Basis of the Self: From Body to Identity to Others. Q. J. Exp. Psychol. 2017, 70, 597–609. [Google Scholar] [CrossRef]

- Rudrauf, D.; Bennequin, D.; Granic, I.; Landini, G.; Friston, K.J.; Williford, K. A Mathematical Model of Embodied Consciousness. J. Theor. Biol. 2017, 428, 106–131. [Google Scholar] [CrossRef]

- Sauciuc, G.-A.; Zlakowska, J.; Persson, T.; Lenninger, S.; Madsen, E.A. Imitation Recognition and Its Prosocial Effects in 6-Month Old Infants. PLoS ONE 2020, 15, e0232717. [Google Scholar] [CrossRef] [PubMed]

- Slaughter, V. Do Newborns Have the Ability to Imitate? Trends Cogn. Sci. 2021, 25, 377–387. [Google Scholar] [CrossRef]

- Bouizegarene, N.; Ramstead, M.; Constant, A.; Friston, K.; Kirmayer, L. Narrative as active inference. PsyArXiv 2020. [Google Scholar] [CrossRef]

- Gentner, D. Bootstrapping the Mind: Analogical Processes and Symbol Systems. Cogn. Sci. 2010, 34, 752–775. [Google Scholar] [CrossRef] [PubMed]

- Hofstadter, D.R. Gödel, Escher, Bach: An Eternal Golden Braid, 20th anniversary ed.; Basic Books: New York, NY, USA, 1979; ISBN 0-465-02656-7. [Google Scholar]

- Friston, K.J.; Frith, C. A Duet for One. Conscious. Cogn. 2015, 36, 390–405. [Google Scholar] [CrossRef] [PubMed]

- Friston, K.J.; Frith, C.D. Active Inference, Communication and Hermeneutics. Cortex 2015, 68, 129–143. [Google Scholar] [CrossRef] [PubMed]

- De Jaegher, H. Embodiment and Sense-Making in Autism. Front. Integr. Neurosci. 2013, 7, 15. [Google Scholar] [CrossRef]

- Tomasello, M.; Carpenter, M. Shared Intentionality. Dev. Sci. 2007, 10, 121–125. [Google Scholar] [CrossRef]

- Tomasello, M. The Cultural Origins of Human Cognition; Harvard University Press: Cambridge, MA, USA, 2001; ISBN 0-674-00582-1. [Google Scholar]

- Maschler, M. The Bargaining Set, Kernel, and Nucleolus. Handb. Game Theory Econ. Appl. 1992, 1, 591–667. [Google Scholar]

- Kuhn, T.S. The Structure of Scientific Revolutions, 3rd ed.; University of Chicago Press: Chicago, IL, USA, 1962; ISBN 978-0-226-45808-3. [Google Scholar]

- Blakeslee, S.; Blakeslee, M. The Body Has a Mind of Its Own: How Body Maps in Your Brain Help You Do (Almost) Everything Better; Random House Publishing Group: New York, NY, USA, 2008; ISBN 978-1-58836-812-6. [Google Scholar]

- Cardinali, L.; Frassinetti, F.; Brozzoli, C.; Urquizar, C.; Roy, A.C.; Farnè, A. Tool-Use Induces Morphological Updating of the Body Schema. Curr. Biol. 2009, 19, R478–R479. [Google Scholar] [CrossRef]

- Ehrsson, H.H.; Holmes, N.P.; Passingham, R.E. Touching a Rubber Hand: Feeling of Body Ownership Is Associated with Activity in Multisensory Brain Areas. J. Neurosci. 2005, 25, 10564–10573. [Google Scholar] [CrossRef]

- Metral, M.; Gonthier, C.; Luyat, M.; Guerraz, M. Body Schema Illusions: A Study of the Link between the Rubber Hand and Kinesthetic Mirror Illusions through Individual Differences. BioMed Res. Int. 2017, 2017. [Google Scholar] [CrossRef]

- Ciaunica, A.; Petreca, B.; Fotopoulou, A.; Roepstorff, A. Whatever next and close to my self—the transparent senses and the ‘second skin’: Implications for the case of depersonalisation. PsyArXiv 2021. [Google Scholar] [CrossRef]

- Rochat, P. The Ontogeny of Human Self-Consciousness. Curr. Dir. Psychol. Sci. 2018, 27, 345–350. [Google Scholar] [CrossRef]

- Bullock, D.; Takemura, H.; Caiafa, C.F.; Kitchell, L.; McPherson, B.; Caron, B.; Pestilli, F. Associative White Matter Connecting the Dorsal and Ventral Posterior Human Cortex. Brain Struct. Funct. 2019, 224, 2631–2660. [Google Scholar] [CrossRef] [PubMed]

- O’Reilly, R.C.; Wyatte, D.R.; Rohrlich, J. Deep Predictive Learning: A Comprehensive Model of Three Visual Streams. arXiv 2017, arXiv:1709.04654. Available online: http://arxiv.org/abs/1709.04654 (accessed on 19 October 2019).

- Heylighen, F.; Joslyn, C. Cybernetics and Second-Order Cybernetics. In Encyclopedia of Physical Science & Technology, 3rd ed.; Academic Press: Cambridge, MA, USA, 2001. [Google Scholar]

- Goekoop, R.; de Kleijn, R. How Higher Goals Are Constructed and Collapse under Stress: A Hierarchical Bayesian Control Systems Perspective. Neurosci. Biobehav. Rev. 2021, 123, 257–285. [Google Scholar] [CrossRef] [PubMed]

- Whitehead, K.; Meek, J.; Fabrizi, L. Developmental Trajectory of Movement-Related Cortical Oscillations during Active Sleep in a Cross-Sectional Cohort of Pre-Term and Full-Term Human Infants. Sci. Rep. 2018, 8, 1–8. [Google Scholar] [CrossRef] [PubMed]

- Williford, K.; Bennequin, D.; Friston, K.; Rudrauf, D. The Projective Consciousness Model and Phenomenal Selfhood. Front. Psychol. 2018, 9. [Google Scholar] [CrossRef]

- Barsalou, L.W. Grounded Cognition: Past, Present, and Future. Top. Cogn. Sci. 2010, 2, 716–724. [Google Scholar] [CrossRef]

- Prinz, J. The Intermediate Level Theory of Consciousness. In The Blackwell Companion to Consciousness; John Wiley & Sons, Ltd.: Hoboken, NJ, USA, 2017; pp. 257–271. ISBN 978-1-119-13236-3. [Google Scholar]

- Zhou, J.; Cui, G.; Zhang, Z.; Yang, C.; Liu, Z.; Wang, L.; Li, C.; Sun, M. Graph Neural Networks: A Review of Methods and Applications. arXiv 2019, arXiv:1812.08434. [Google Scholar]

- Battaglia, P.W.; Hamrick, J.B.; Bapst, V.; Sanchez-Gonzalez, A.; Zambaldi, V.; Malinowski, M.; Tacchetti, A.; Raposo, D.; Santoro, A.; Faulkner, R.; et al. Relational Inductive Biases, Deep Learning, and Graph Networks. arXiv 2018, arXiv:1806.01261. [Google Scholar]

- Bapst, V.; Keck, T.; Grabska-Barwińska, A.; Donner, C.; Cubuk, E.D.; Schoenholz, S.S.; Obika, A.; Nelson, A.W.R.; Back, T.; Hassabis, D.; et al. Unveiling the Predictive Power of Static Structure in Glassy Systems. Nat. Phys. 2020, 16, 448–454. [Google Scholar] [CrossRef]

- Cranmer, M.; Sanchez-Gonzalez, A.; Battaglia, P.; Xu, R.; Cranmer, K.; Spergel, D.; Ho, S. Discovering Symbolic Models from Deep Learning with Inductive Biases. arXiv 2020, arXiv:2006.11287. [Google Scholar]

- Haun, A.; Tononi, G. Why Does Space Feel the Way It Does? Towards a Principled Account of Spatial Experience. Entropy 2019, 21, 1160. [Google Scholar] [CrossRef]

- Haun, A. What Is Visible across the Visual Field? Neurosci. Conscious. 2021, 2021. [Google Scholar] [CrossRef]

- Faul, L.; St. Jacques, P.L.; DeRosa, J.T.; Parikh, N.; De Brigard, F. Differential Contribution of Anterior and Posterior Midline Regions during Mental Simulation of Counterfactual and Perspective Shifts in Autobiographical Memories. NeuroImage 2020, 215, 116843. [Google Scholar] [CrossRef]

- Hills, T.T.; Todd, P.M.; Goldstone, R.L. The Central Executive as a Search Process: Priming Exploration and Exploitation across Domains. J. Exp. Psychol. Gen. 2010, 139, 590–609. [Google Scholar] [CrossRef]

- Kaplan, R.; Friston, K.J. Planning and Navigation as Active Inference. Biol. Cybern. 2018, 112, 323–343. [Google Scholar] [CrossRef]

- Çatal, O.; Verbelen, T.; Van de Maele, T.; Dhoedt, B.; Safron, A. Robot Navigation as Hierarchical Active Inference. Neural Netw. 2021, 142, 192–204. [Google Scholar] [CrossRef] [PubMed]

- Graziano, M.S.A. The Temporoparietal Junction and Awareness. Neurosci. Conscious. 2018, 2018. [Google Scholar] [CrossRef] [PubMed]

- Edelman, G.; Gally, J.A.; Baars, B.J. Biology of Consciousness. Front. Psychol. 2011, 2, 4. [Google Scholar] [CrossRef] [PubMed]

- Tononi, G.; Boly, M.; Massimini, M.; Koch, C. Integrated Information Theory: From Consciousness to Its Physical Substrate. Nat. Rev. Neurosci. 2016, 17, 450. [Google Scholar] [CrossRef]

- Luppi, A.I.; Mediano, P.A.M.; Rosas, F.E.; Allanson, J.; Pickard, J.D.; Carhart-Harris, R.L.; Williams, G.B.; Craig, M.M.; Finoia, P.; Owen, A.M.; et al. A Synergistic Workspace for Human Consciousness Revealed by Integrated Information Decomposition. bioRxiv 2020, 2020.11.25.398081. [Google Scholar] [CrossRef]

- Luppi, A.I.; Mediano, P.A.M.; Rosas, F.E.; Holland, N.; Fryer, T.D.; O’Brien, J.T.; Rowe, J.B.; Menon, D.K.; Bor, D.; Stamatakis, E.A. A Synergistic Core for Human Brain Evolution and Cognition. bioRxiv 2020, 2020.09.22.308981. [Google Scholar] [CrossRef]

- Betzel, R.F.; Fukushima, M.; He, Y.; Zuo, X.-N.; Sporns, O. Dynamic Fluctuations Coincide with Periods of High and Low Modularity in Resting-State Functional Brain Networks. NeuroImage 2016, 127, 287–297. [Google Scholar] [CrossRef] [PubMed]

- Madl, T.; Baars, B.J.; Franklin, S. The Timing of the Cognitive Cycle. PLoS ONE 2011, 6, e14803. [Google Scholar] [CrossRef]

- Tomasi, D.; Volkow, N.D. Association between Functional Connectivity Hubs and Brain Networks. Cereb. Cortex 2011, 21, 2003–2013. [Google Scholar] [CrossRef] [PubMed]

- Battiston, F.; Guillon, J.; Chavez, M.; Latora, V.; de Vico Fallani, F. Multiplex core–periphery organization of the human connectome. J. R. Soc. Interface 2018, 15, 20180514. [Google Scholar] [CrossRef]

- Castro, S.; El-Deredy, W.; Battaglia, D.; Orio, P. Cortical Ignition Dynamics Is Tightly Linked to the Core Organisation of the Human Connectome. PLoS Comput. Biol. 2020, 16, e1007686. [Google Scholar] [CrossRef] [PubMed]

- Davey, C.G.; Harrison, B.J. The Brain’s Center of Gravity: How the Default Mode Network Helps Us to Understand the Self. World Psychiatry 2018, 17, 278–279. [Google Scholar] [CrossRef]

- Deco, G.; Cruzat, J.; Cabral, J.; Tagliazucchi, E.; Laufs, H.; Logothetis, N.K.; Kringelbach, M.L. Awakening: Predicting External Stimulation to Force Transitions between Different Brain States. Proc. Natl. Acad. Sci. USA 2019, 116, 18088–18097. [Google Scholar] [CrossRef] [PubMed]

- Wens, V.; Bourguignon, M.; Vander Ghinst, M.; Mary, A.; Marty, B.; Coquelet, N.; Naeije, G.; Peigneux, P.; Goldman, S.; De Tiège, X. Synchrony, Metastability, Dynamic Integration, and Competition in the Spontaneous Functional Connectivity of the Human Brain. NeuroImage 2019. [Google Scholar] [CrossRef]

- Marshall, P.J.; Meltzoff, A.N. Body Maps in the Infant Brain. Trends Cogn. Sci. 2015, 19, 499–505. [Google Scholar] [CrossRef] [PubMed]

- Smith, G.B.; Hein, B.; Whitney, D.E.; Fitzpatrick, D.; Kaschube, M. Distributed Network Interactions and Their Emergence in Developing Neocortex. Nat. Neurosci. 2018, 21, 1600–1608. [Google Scholar] [CrossRef] [PubMed]

- Wan, Y.; Wei, Z.; Looger, L.L.; Koyama, M.; Druckmann, S.; Keller, P.J. Single-Cell Reconstruction of Emerging Population Activity in an Entire Developing Circuit. Cell 2019, 179, 355–372.e23. [Google Scholar] [CrossRef] [PubMed]

- Ramachandran, V.S.; Blakeslee, S.; Sacks, O. Phantoms in the Brain: Probing the Mysteries of the Human Mind; William Morrow Paperbacks: New York, NY, USA, 1999; ISBN 978-0-688-17217-6. [Google Scholar]

- Valyear, K.F.; Philip, B.A.; Cirstea, C.M.; Chen, P.-W.; Baune, N.A.; Marchal, N.; Frey, S.H. Interhemispheric Transfer of Post-Amputation Cortical Plasticity within the Human Somatosensory Cortex. NeuroImage 2020, 206, 116291. [Google Scholar] [CrossRef]

- Muller, L.; Chavane, F.; Reynolds, J.; Sejnowski, T.J. Cortical Travelling Waves: Mechanisms and Computational Principles. Nat. Rev. Neurosci. 2018, 19, 255–268. [Google Scholar] [CrossRef]

- Roberts, J.A.; Gollo, L.L.; Abeysuriya, R.G.; Roberts, G.; Mitchell, P.B.; Woolrich, M.W.; Breakspear, M. Metastable Brain Waves. Nat. Commun. 2019, 10, 1–17. [Google Scholar] [CrossRef]

- Zhang, H.; Watrous, A.J.; Patel, A.; Jacobs, J. Theta and Alpha Oscillations Are Traveling Waves in the Human Neocortex. Neuron 2018, 98, 1269–1281.e4. [Google Scholar] [CrossRef]

- Atasoy, S.; Deco, G.; Kringelbach, M.L. Playing at the Edge of Criticality: Expanded Whole-Brain Repertoire of Connectome-Harmonics. In The Functional Role of Critical Dynamics in Neural Systems; Tomen, N., Herrmann, J.M., Ernst, U., Eds.; Springer Series on Bio- and Neurosystems; Springer International Publishing: Cham, Switzerland, 2019; pp. 27–45. ISBN 978-3-030-20965-0. [Google Scholar]

- Atasoy, S.; Deco, G.; Kringelbach, M.L.; Pearson, J. Harmonic Brain Modes: A Unifying Framework for Linking Space and Time in Brain Dynamics. The Neuroscientist 2018, 24, 277–293. [Google Scholar] [CrossRef]

- Deco, G.; Kringelbach, M.L. Metastability and Coherence: Extending the Communication through Coherence Hypothesis Using A Whole-Brain Computational Perspective. Trends Neurosci. 2016, 39, 125–135. [Google Scholar] [CrossRef]

- Lord, L.-D.; Expert, P.; Atasoy, S.; Roseman, L.; Rapuano, K.; Lambiotte, R.; Nutt, D.J.; Deco, G.; Carhart-Harris, R.L.; Kringelbach, M.L.; et al. Dynamical Exploration of the Repertoire of Brain Networks at Rest Is Modulated by Psilocybin. NeuroImage 2019, 199, 127–142. [Google Scholar] [CrossRef]

- Seth, A.K. A Predictive Processing Theory of Sensorimotor Contingencies: Explaining the Puzzle of Perceptual Presence and Its Absence in Synesthesia. Cogn. Neurosci. 2014, 5, 97–118. [Google Scholar] [CrossRef]

- Drew, P.J.; Winder, A.T.; Zhang, Q. Twitches, Blinks, and Fidgets: Important Generators of Ongoing Neural Activity. The Neuroscientist 2019, 25, 298–313. [Google Scholar] [CrossRef] [PubMed]

- Musall, S.; Kaufman, M.T.; Juavinett, A.L.; Gluf, S.; Churchland, A.K. Single-Trial Neural Dynamics Are Dominated by Richly Varied Movements. Nat. Neurosci. 2019, 22, 1677–1686. [Google Scholar] [CrossRef]

- Benedetto, A.; Binda, P.; Costagli, M.; Tosetti, M.; Morrone, M.C. Predictive Visuo-Motor Communication through Neural Oscillations. Curr. Biol. 2021. [Google Scholar] [CrossRef]

- Graziano, M.S.A. The Spaces Between Us: A Story of Neuroscience, Evolution, and Human Nature; Oxford University Press: Oxford, UK, 2018; ISBN 978-0-19-046101-0. [Google Scholar]

- Safron, A. What Is Orgasm? A Model of Sexual Trance and Climax via Rhythmic Entrainment. Socioaffective Neurosci. Psychol. 2016, 6, 31763. [Google Scholar] [CrossRef] [PubMed]

- Miller, L.E.; Fabio, C.; Ravenda, V.; Bahmad, S.; Koun, E.; Salemme, R.; Luauté, J.; Bolognini, N.; Hayward, V.; Farnè, A. Somatosensory Cortex Efficiently Processes Touch Located Beyond the Body. Curr. Biol. 2019. [Google Scholar] [CrossRef] [PubMed]

- Bergouignan, L.; Nyberg, L.; Ehrsson, H.H. Out-of-Body–Induced Hippocampal Amnesia. Proc. Natl. Acad. Sci. USA 2014, 111, 4421–4426. [Google Scholar] [CrossRef]

- St. Jacques, P.L. A New Perspective on Visual Perspective in Memory. Curr. Dir. Psychol. Sci. 2019, 28, 450–455. [Google Scholar] [CrossRef]

- Graziano, M.S.A. Consciousness and the Social Brain; Oxford University Press: Oxford, UK, 2013; ISBN 978-0-19-992865-1. [Google Scholar]

- Guterstam, A.; Kean, H.H.; Webb, T.W.; Kean, F.S.; Graziano, M.S.A. Implicit Model of Other People’s Visual Attention as an Invisible, Force-Carrying Beam Projecting from the Eyes. Proc. Natl. Acad. Sci. USA 2019, 116, 328–333. [Google Scholar] [CrossRef]

- Corbetta, M.; Shulman, G.L. Spatial neglect and attention networks. Annu. Rev. Neurosci. 2011, 34, 569–599. [Google Scholar] [CrossRef]

- Blanke, O.; Mohr, C.; Michel, C.M.; Pascual-Leone, A.; Brugger, P.; Seeck, M.; Landis, T.; Thut, G. Linking Out-of-Body Experience and Self Processing to Mental Own-Body Imagery at the Temporoparietal Junction. J. Neurosci. 2005, 25, 550–557. [Google Scholar] [CrossRef] [PubMed]

- Guterstam, A.; Björnsdotter, M.; Gentile, G.; Ehrsson, H.H. Posterior Cingulate Cortex Integrates the Senses of Self-Location and Body Ownership. Curr. Biol. 2015, 25, 1416–1425. [Google Scholar] [CrossRef] [PubMed]

- Saxe, R.; Wexler, A. Making Sense of Another Mind: The Role of the Right Temporo-Parietal Junction. Neuropsychologia 2005, 43, 1391–1399. [Google Scholar] [CrossRef]

- Dehaene, S. Consciousness and the Brain: Deciphering How the Brain Codes Our Thoughts; Viking: New York, NY, USA, 2014; ISBN 978-0-670-02543-5. [Google Scholar]

- Ramstead, M.J.D.; Veissière, S.P.L.; Kirmayer, L.J. Cultural Affordances: Scaffolding Local Worlds Through Shared Intentionality and Regimes of Attention. Front. Psychol. 2016, 7. [Google Scholar] [CrossRef]

- Veissière, S.P.L.; Constant, A.; Ramstead, M.J.D.; Friston, K.J.; Kirmayer, L.J. Thinking Through Other Minds: A Variational Approach to Cognition and Culture. Behav. Brain Sci. 2019, 1–97. [Google Scholar] [CrossRef]

- Frith, C.D.; Metzinger, T.K. How the Stab of Conscience Made Us Really Conscious. In The Pragmatic Turn: Toward Action-Oriented Views in Cognitive Science; MIT Press: Cambridge, MA, USA, 2015. [Google Scholar]

- Chang, A.Y.C.; Biehl, M.; Yu, Y.; Kanai, R. Information Closure Theory of Consciousness. arXiv 2019, arXiv:1909.13045. [Google Scholar]

- Barsalou, L.W. Simulation, Situated Conceptualization, and Prediction. Philos. Trans. R. Soc. B Biol. Sci. 2009, 364, 1281–1289. [Google Scholar] [CrossRef]

- Elton, M. Consciousness: Only at the Personal Level. Philos. Explor. 2000, 3, 25–42. [Google Scholar] [CrossRef]

- Dennett, D.C. The self as the center of narrative gravity. In Self and Consciousness; Psychology Press: East Sussex, UK, 2014; pp. 111–123. [Google Scholar]

- Haken, H. Synergetics. Phys. Bull. 1977, 28, 412.

- Butterfield, J. Reduction, Emergence and Renormalization. arXiv 2014, arXiv:1406.4354.

- Carroll, S. The Big Picture: On the Origins of Life, Meaning, and the Universe Itself; Dutton: Boston, MA, USA, 2016; ISBN 978-0-698-40976-7.

- Albarracin, M.; Constant, A.; Friston, K.; Ramstead, M. A Variational Approach to Scripts. PsyArXiv 2020.

- Hirsh, J.B.; Mar, R.A.; Peterson, J.B. Personal Narratives as the Highest Level of Cognitive Integration. Behav. Brain Sci. 2013, 36, 216–217.

- Harari, Y.N. Sapiens: A Brief History of Humankind, 1st ed.; Harper: New York, NY, USA, 2015; ISBN 978-0-06-231609-7.

- Henrich, J. The Secret of Our Success: How Culture Is Driving Human Evolution, Domesticating Our Species, and Making Us Smarter; Princeton University Press: Princeton, NJ, USA, 2017; ISBN 978-0-691-17843-1.

- Fujita, K.; Carnevale, J.J.; Trope, Y. Understanding Self-Control as a Whole vs. Part Dynamic. Neuroethics 2018, 11, 283–296.

- Mahr, J.; Csibra, G. Why Do We Remember? The Communicative Function of Episodic Memory. Behav. Brain Sci. 2017, 1–93.

- Ainslie, G. Précis of Breakdown of Will. Behav. Brain Sci. 2005, 28, 635–650; discussion 650–673.

- Lewis, M. The Biology of Desire: Why Addiction Is Not a Disease; Public Affairs Books: New York, NY, USA, 2015; ISBN 978-1-61039-437-6.

- Peterson, J.B. Maps of Meaning: The Architecture of Belief; Psychology Press: East Sussex, UK, 1999; ISBN 978-0-415-92222-7.

- Shiller, R.J. Narrative Economics: How Stories Go Viral and Drive Major Economic Events; Princeton University Press: Princeton, NJ, USA, 2019; ISBN 978-0-691-18229-2.

- Edelman, G.M. Neural Darwinism: The Theory of Neuronal Group Selection, 1st ed.; Basic Books: New York, NY, USA, 1987; ISBN 0-465-04934-6.

- Minsky, M. Society of Mind; Simon and Schuster: New York, NY, USA, 1988; ISBN 978-0-671-65713-0.

- Ainslie, G. Picoeconomics: The Strategic Interaction of Successive Motivational States within the Person, Reissue edition; Cambridge University Press: Cambridge, UK, 2010; ISBN 978-0-521-15870-1.

- Traulsen, A.; Nowak, M.A. Evolution of Cooperation by Multilevel Selection. Proc. Natl. Acad. Sci. USA 2006, 103, 10952–10955.

- Carhart-Harris, R.L.; Friston, K.J. The Default-Mode, Ego-Functions and Free-Energy: A Neurobiological Account of Freudian Ideas. Brain 2010, 133, 1265–1283.

- Barrett, L.F. How Emotions Are Made: The Secret Life of the Brain; Houghton Mifflin Harcourt: Boston, MA, USA, 2017.

- Damasio, A. Self Comes to Mind: Constructing the Conscious Brain, Reprint edition; Vintage: New York, NY, USA, 2012; ISBN 978-0-307-47495-7.

- Elston, T.W.; Bilkey, D.K. Anterior Cingulate Cortex Modulation of the Ventral Tegmental Area in an Effort Task. Cell Rep. 2017, 19, 2220–2230.

- Luu, P.; Posner, M.I. Anterior Cingulate Cortex Regulation of Sympathetic Activity. Brain 2003, 126, 2119–2120.

- Talmy, L. Force Dynamics in Language and Cognition. Cogn. Sci. 1988, 12, 49–100.

- Baumeister, R.F.; Tierney, J. Willpower: Rediscovering the Greatest Human Strength; Penguin: London, UK, 2012; ISBN 978-0-14-312223-4.

- Bernardi, G.; Siclari, F.; Yu, X.; Zennig, C.; Bellesi, M.; Ricciardi, E.; Cirelli, C.; Ghilardi, M.F.; Pietrini, P.; Tononi, G. Neural and Behavioral Correlates of Extended Training during Sleep Deprivation in Humans: Evidence for Local, Task-Specific Effects. J. Neurosci. 2015, 35, 4487–4500.

- Hung, C.-S.; Sarasso, S.; Ferrarelli, F.; Riedner, B.; Ghilardi, M.F.; Cirelli, C.; Tononi, G. Local Experience-Dependent Changes in the Wake EEG after Prolonged Wakefulness. Sleep 2013, 36, 59–72.

- Tononi, G.; Cirelli, C. Sleep and Synaptic Homeostasis: A Hypothesis. Brain Res. Bull. 2003, 62, 143–150.

- Wenger, E.; Brozzoli, C.; Lindenberger, U.; Lövdén, M. Expansion and Renormalization of Human Brain Structure During Skill Acquisition. Trends Cogn. Sci. 2017, 21, 930–939.

- Che, X.; Cash, R.; Chung, S.W.; Bailey, N.; Fitzgerald, P.B.; Fitzgibbon, B.M. The Dorsomedial Prefrontal Cortex as a Flexible Hub Mediating Behavioral as Well as Local and Distributed Neural Effects of Social Support Context on Pain: A Theta Burst Stimulation and TMS-EEG Study. NeuroImage 2019, 201, 116053.

- Marshall, T.R.; O’Shea, J.; Jensen, O.; Bergmann, T.O. Frontal Eye Fields Control Attentional Modulation of Alpha and Gamma Oscillations in Contralateral Occipitoparietal Cortex. J. Neurosci. 2015, 35, 1638–1647.

- Santostasi, G.; Malkani, R.; Riedner, B.; Bellesi, M.; Tononi, G.; Paller, K.A.; Zee, P.C. Phase-Locked Loop for Precisely Timed Acoustic Stimulation during Sleep. J. Neurosci. Methods 2016, 259, 101–114.

- Clancy, K.J.; Andrzejewski, J.A.; Rosenberg, J.T.; Ding, M.; Li, W. Transcranial Stimulation of Alpha Oscillations Upregulates the Default Mode Network. bioRxiv 2021, 2021.06.11.447939.

- Evans, D.R.; Boggero, I.A.; Segerstrom, S.C. The Nature of Self-Regulatory Fatigue and “Ego Depletion”: Lessons From Physical Fatigue. Personal. Soc. Psychol. Rev. 2015.

- Dennett, D. Freedom Evolves, Illustrated edition; Viking Adult: New York, NY, USA, 2003; ISBN 0-670-03186-0.

- Dennett, D. Real Patterns. J. Philos. 1991, 88, 27–51.

- Fry, R.L. Physical Intelligence and Thermodynamic Computing. Entropy 2017, 19, 107.

- Kiefer, A.B. Psychophysical Identity and Free Energy. J. R. Soc. Interface 2020, 17, 20200370.

- Ao, P. Laws in Darwinian Evolutionary Theory. Phys. Life Rev. 2005, 2, 117–156.

- Haldane, J.B.S. Organisers and Genes. Nature 1940, 146, 413.

- Fisher, R.A. The Genetical Theory of Natural Selection: A Complete Variorum Edition; OUP Oxford: Oxford, UK, 1999; ISBN 978-0-19-850440-5.

- Wright, S. The Roles of Mutation, Inbreeding, Crossbreeding, and Selection in Evolution. J. Agric. Res. 1921, 20, 557–580.

- Tinbergen, N. On Aims and Methods of Ethology. Z. Tierpsychol. 1963, 20, 410–433.

- Campbell, J.O. Universal Darwinism As a Process of Bayesian Inference. Front. Syst. Neurosci. 2016, 10, 49.

- Friston, K.J.; Wiese, W.; Hobson, J.A. Sentience and the Origins of Consciousness: From Cartesian Duality to Markovian Monism. Entropy 2020, 22, 516.

- Kaila, V.; Annila, A. Natural Selection for Least Action. Proc. R. Soc. Math. Phys. Eng. Sci. 2008, 464, 3055–3070.

- Gazzaniga, M.S. The Split-Brain: Rooting Consciousness in Biology. Proc. Natl. Acad. Sci. USA 2014, 111, 18093–18094.

- Gazzaniga, M.S. The Consciousness Instinct: Unraveling the Mystery of How the Brain Makes the Mind; Farrar, Straus and Giroux: New York, NY, USA, 2018; ISBN 978-0-374-12876-0.

- Rovelli, C. The Order of Time; Penguin: London, UK, 2018; ISBN 978-0-7352-1612-9.

- Ismael, J. How Physics Makes Us Free; Oxford University Press: Oxford, UK, 2016; ISBN 978-0-19-026944-9.

- Hoel, E.P.; Albantakis, L.; Marshall, W.; Tononi, G. Can the Macro Beat the Micro? Integrated Information across Spatiotemporal Scales. Neurosci. Conscious. 2016, 2016.

- Hume, D. An Enquiry Concerning Human Understanding: With Hume’s Abstract of A Treatise of Human Nature and A Letter from a Gentleman to His Friend in Edinburgh, 2nd ed.; Steinberg, E., Ed.; Hackett Publishing Company, Inc.: Indianapolis, IN, USA, 1993; ISBN 978-0-87220-229-0.

- Baars, B.J. Global Workspace Theory of Consciousness: Toward a Cognitive Neuroscience of Human Experience. Prog. Brain Res. 2005, 150, 45–53.

- Brang, D.; Teuscher, U.; Miller, L.E.; Ramachandran, V.S.; Coulson, S. Handedness and Calendar Orientations in Time-Space Synaesthesia. J. Neuropsychol. 2011, 5, 323–332.

- Jaynes, J. The Origin of Consciousness in the Breakdown of the Bicameral Mind; Houghton Mifflin Harcourt: Boston, MA, USA, 1976; ISBN 978-0-547-52754-3.

- Balduzzi, D.; Tononi, G. Qualia: The Geometry of Integrated Information. PLoS Comput. Biol. 2009, 5, e1000462.

- Brown, R.; Lau, H.; LeDoux, J.E. Understanding the Higher-Order Approach to Consciousness. Trends Cogn. Sci. 2019, 23, 754–768.

- Modha, D.S.; Singh, R. Network Architecture of the Long-Distance Pathways in the Macaque Brain. Proc. Natl. Acad. Sci. USA 2010, 107, 13485–13490.

- Preuss, T.M. The Human Brain: Rewired and Running Hot. Ann. N. Y. Acad. Sci. 2011, 1225 (Suppl. 1), E182–E191.

- Abid, G. Deflating Inflation: The Connection (or Lack Thereof) between Decisional and Metacognitive Processes and Visual Phenomenology. Neurosci. Conscious. 2019, 2019.

- Dennett, D.C. Facing up to the Hard Question of Consciousness. Philos. Trans. R. Soc. B Biol. Sci. 2018, 373.

- Noë, A. Is the Visual World a Grand Illusion? J. Conscious. Stud. 2002, 9, 1–12.

- Tversky, B. Mind in Motion: How Action Shapes Thought, 1st ed.; Basic Books: New York, NY, USA, 2019; ISBN 978-0-465-09306-9.

- Morgan, A.T.; Petro, L.S.; Muckli, L. Line Drawings Reveal the Structure of Internal Visual Models Conveyed by Cortical Feedback. bioRxiv 2019, 041186.

- Sutterer, D.W.; Polyn, S.M.; Woodman, G.F. α-Band Activity Tracks a Two-Dimensional Spotlight of Attention during Spatial Working Memory Maintenance. J. Neurophysiol. 2021, 125, 957–971.

- Chater, N. Mind Is Flat: The Remarkable Shallowness of the Improvising Brain; Yale University Press: New Haven, CT, USA, 2018; ISBN 978-0-300-24061-0.

- Coupé, C.; Oh, Y.M.; Dediu, D.; Pellegrino, F. Different Languages, Similar Encoding Efficiency: Comparable Information Rates across the Human Communicative Niche. Sci. Adv. 2019, 5, eaaw2594.

- Buonomano, D. Your Brain Is a Time Machine: The Neuroscience and Physics of Time; W. W. Norton & Company: New York, NY, USA, 2017; ISBN 978-0-393-24795-4.

- Wittmann, M. Felt Time: The Science of How We Experience Time, Reprint edition; The MIT Press: Cambridge, MA, USA, 2017; ISBN 978-0-262-53354-6.

- Whyte, C.J.; Smith, R. The Predictive Global Neuronal Workspace: A Formal Active Inference Model of Visual Consciousness. Prog. Neurobiol. 2020, 101918.

- Cellai, D.; Dorogovtsev, S.N.; Bianconi, G. Message Passing Theory for Percolation Models on Multiplex Networks with Link Overlap. Phys. Rev. E 2016, 94, 032301.

- Bianconi, G. Fluctuations in Percolation of Sparse Complex Networks. Phys. Rev. E 2017, 96, 012302.

- Kryven, I. Bond Percolation in Coloured and Multiplex Networks. Nat. Commun. 2019, 10, 1–16.

- Arese Lucini, F.; Del Ferraro, G.; Sigman, M.; Makse, H.A. How the Brain Transitions from Conscious to Subliminal Perception. Neuroscience 2019, 411, 280–290.

- Kalra, P.B.; Gabrieli, J.D.E.; Finn, A.S. Evidence of Stable Individual Differences in Implicit Learning. Cognition 2019, 190, 199–211.

- Hills, T.T. Neurocognitive Free Will. Proc. Biol. Sci. 2019, 286, 20190510.

- Ha, D.; Schmidhuber, J. World Models. arXiv 2018.

- Wang, J.X.; Kurth-Nelson, Z.; Kumaran, D.; Tirumala, D.; Soyer, H.; Leibo, J.Z.; Hassabis, D.; Botvinick, M. Prefrontal Cortex as a Meta-Reinforcement Learning System. Nat. Neurosci. 2018, 21, 860.

- Peña-Gómez, C.; Avena-Koenigsberger, A.; Sepulcre, J.; Sporns, O. Spatiotemporal Network Markers of Individual Variability in the Human Functional Connectome. Cereb. Cortex 2018, 28, 2922–2934.

- Toro-Serey, C.; Tobyne, S.M.; McGuire, J.T. Spectral Partitioning Identifies Individual Heterogeneity in the Functional Network Topography of Ventral and Anterior Medial Prefrontal Cortex. NeuroImage 2020, 205, 116305.

- James, W. Are We Automata? Mind 1879, 4, 1–22.

- Libet, B.; Gleason, C.A.; Wright, E.W.; Pearl, D.K. Time of Conscious Intention to Act in Relation to Onset of Cerebral Activity (Readiness-Potential). The Unconscious Initiation of a Freely Voluntary Act. Brain 1983, 106 (Pt 3), 623–642.

- Fifel, K. Readiness Potential and Neuronal Determinism: New Insights on Libet Experiment. J. Neurosci. 2018, 38, 784–786.

- Maoz, U.; Yaffe, G.; Koch, C.; Mudrik, L. Neural Precursors of Decisions That Matter: An ERP Study of Deliberate and Arbitrary Choice. eLife 2019, 8.

- Seth, A.K.; Tsakiris, M. Being a Beast Machine: The Somatic Basis of Selfhood. Trends Cogn. Sci. 2018, 22, 969–981.

- Bastos, A.M.; Lundqvist, M.; Waite, A.S.; Kopell, N.; Miller, E.K. Layer and Rhythm Specificity for Predictive Routing. Proc. Natl. Acad. Sci. USA 2020, 117, 31459–31469.

- Pezzulo, G.; Rigoli, F.; Friston, K.J. Hierarchical Active Inference: A Theory of Motivated Control. Trends Cogn. Sci. 2018, 22, 294–306.