1. Introduction

In recent decades, tremendous effort has been devoted to exploring the capabilities of feed-forward networks and their applications in various areas. Novel machine learning (ML) techniques go beyond conventional classification and regression tasks and enable revisiting well-known problems in fundamental areas such as information theory. The function approximation power of neural networks makes them a compelling tool for estimating information-theoretic quantities such as entropy, KL-divergence, mutual information (MI), and conditional mutual information (CMI). As an example, MI is estimated with neural networks in [1], where numerical results show notable improvements over conventional methods for high-dimensional, correlated data.

Information-theoretic quantities are characterized by probability densities, and most classical approaches aim at estimating the densities. These techniques may vary depending on whether the random variables are discrete or continuous. In this paper, we focus on continuous random variables. Examples of conventional non-parametric methods to estimate these quantities are histogram and partitioning techniques, where the densities are approximated and plugged into the definitions of the quantities, or methods based on the distance of the k-th nearest neighbor [2]. Despite the vast applications of nearest neighbor methods for the estimation of information-theoretic quantities, such as the technique proposed in [3], recent studies advocate using neural networks, and simulations demonstrate that the accuracy of the estimates improves in several scenarios [1,4]. In particular, the results indicate that as the dimension of the data increases, the bias of the estimation deteriorates less with neural estimators. In addition to superior performance, a neural estimator of information can be treated as a stand-alone block and coupled into a larger network. The estimator can then be trained simultaneously with the rest of the network and measure the flow of information among variables of the network. Therefore, it facilitates the implementation of ML setups with constraints on information measures (e.g., information bottleneck [5] and representation learning [6]). These compelling features motivate exploring the benefits of neural networks for estimating other information measures and for more complex data structures.

The cornerstone of neural estimators for MI is to approximate bounds on the relative entropy instead of computing it directly. These bounds are referred to as variational bounds and have recently gained attention due to their applications in ML problems. Examples are the lower bounds proposed originally in [7] by Donsker and Varadhan and in [8] by Nguyen, Wainwright, and Jordan, which are referred to as the DV bound and the NWJ bound, respectively. Several variants of these bounds are reviewed in [9]. Variational bounds are tight, and the estimators proposed in [1,4,10,11] leverage this property and use neural networks to approximate the bounds and, correspondingly, the desired information measure. These estimators were shown to be consistent (i.e., the estimate converges asymptotically to the true value) and to suitably estimate MI and CMI when the samples are independent and identically distributed (i.i.d.). However, in several applications such as time series analysis, natural language processing, or estimating information rates in communication channels with feedback, there exists a dependency among samples in the data. In this paper, we investigate analytically the convergence of our neural estimator and verify the performance of the method in estimating several information quantities.
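For reference, the two variational bounds named above are commonly written as follows, for distributions P and Q on a common space and a measurable test function f. The notation here is an assumption made for illustration and may differ from that of [7,8]. MI is recovered by taking P to be the joint distribution and Q the product of the marginals.

```latex
\begin{align}
D_{\mathrm{KL}}(P \,\|\, Q) &= \sup_{f}\; \mathbb{E}_{P}\big[f(X)\big] - \log \mathbb{E}_{Q}\big[e^{f(X)}\big] && \text{(DV)} \\
D_{\mathrm{KL}}(P \,\|\, Q) &\ge \mathbb{E}_{P}\big[f(X)\big] - \mathbb{E}_{Q}\big[e^{f(X)-1}\big] && \text{(NWJ)}
\end{align}
```

The NWJ bound holds with equality for $f = 1 + \log \frac{dP}{dQ}$, and the DV supremum is attained at $f = \log \frac{dP}{dQ}$ up to an additive constant, which is the tightness property that neural estimators exploit by parameterizing f with a network.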

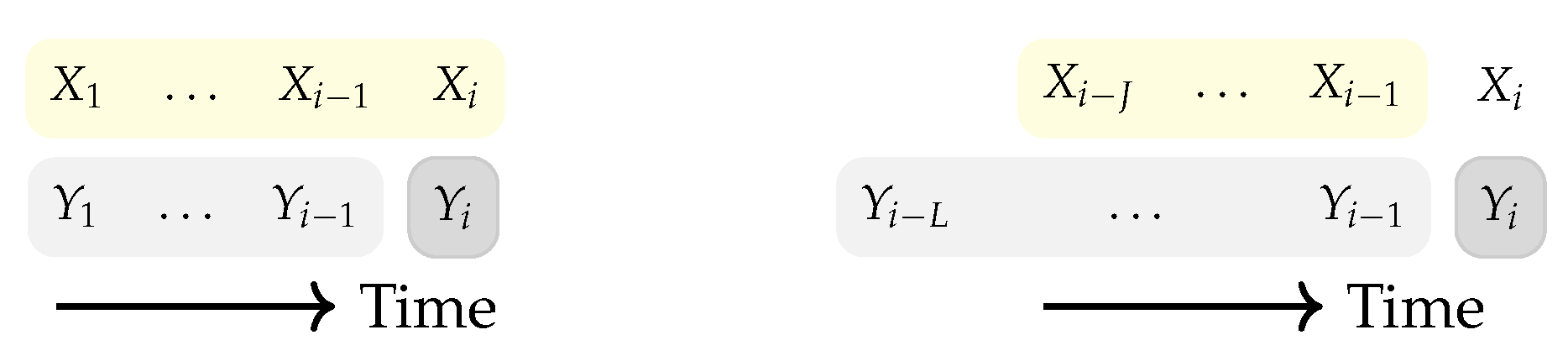

Consider several random processes whose realizations are dependent in time. In addition to common information-theoretic measures such as MI and CMI, more complex quantities that are paramount in representing these processes can be studied. For instance, the (temporal) causal relationship between two random processes has been expressed with quantities such as directed information (DI) [12,13] and transfer entropy (TE) [14]. Both DI and TE have a variety of applications in different areas. In communication systems, DI characterizes the capacity of a channel with feedback [15], while it has several other applications in areas including portfolio theory [16], source coding [17], and control theory [18], where DI is exploited as a measure of privacy in a cloud-based control setup. Additionally, DI was introduced as a measure of causal dependency in [19], which led to a series of works in that direction with applications in neuroscience [20,21] and social networks [22,23]. TE is also a well-established measure in neuroscience [24,25] and the physics community [26,27] for quantifying causality in time series. In this paper, we investigate the capability of the neural estimator proposed in [11] to be used when the samples in the data are not generated independently.

Conventional approaches to estimate KL-divergence and MI such as nearest neighbor methods can be used for non-i.i.d. data; for example to estimate DI [

28] and TE [

29,

30]. However, it is possible to leverage the benefits of neural estimators highlighted in [

1] even though the data are generated from a source with dependency among its realizations. In a recent work [

31], the authors estimate TE using the neural estimator for CMI introduced in [

4]. Additionally, recurrent neural networks (RNN) are proposed in [

32] to capture the time dependency to estimate DI. However, showing convergence of these estimators requires further theoretical investigation. Although the neural estimators are shown to be consistent in [

1,

4,

11] for i.i.d. data, the extension of the proofs to dependent data needs to be addressed. In [

32], the authors address the consistency of the estimation of DI by referring to universal approximation of RNN [

33] and Breiman’s ergodic theorem [

34]. Because RNNs are more complicated to be implemented and tuned, in this paper, we assume simple feed-forward neural networks, which were also proposed in [

1,

4,

11] and in this paper. A conventional step to go beyond i.i.d. processes is to investigate stationary and ergodic Markov processes which have numerous applications in modeling real-world systems. Many convergence results for i.i.d. data such as the law of large numbers can be extended to ergodic processes; however, this generalization is not always trivial. The estimator proposed in [

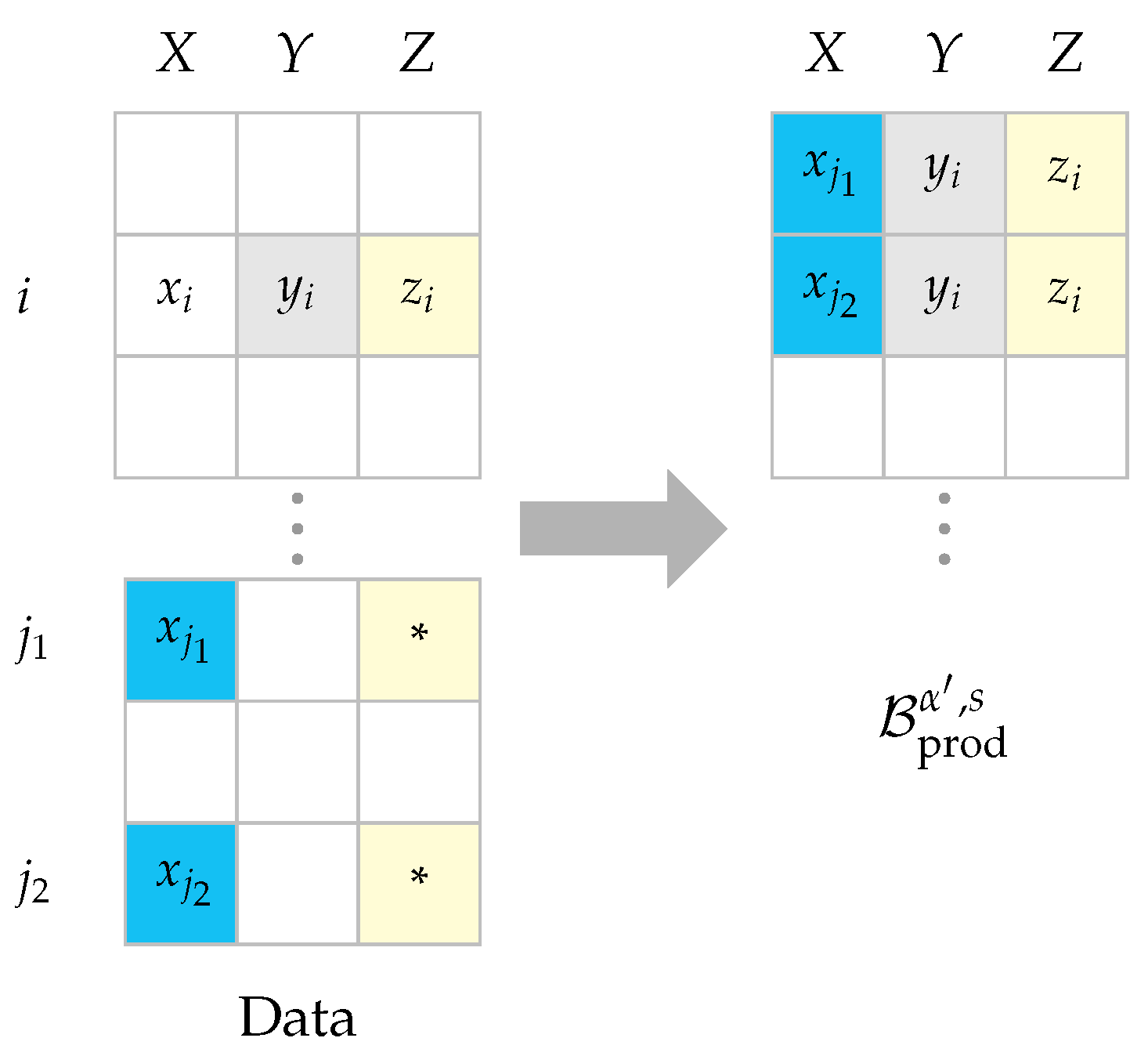

11] exhibits major improvements in estimating the CMI. Nevertheless, it is based on a

k-nearest neighbors (

k-NN) sampling technique which makes the extension of the convergence proofs to non-i.i.d. data more involved. The main contribution of this paper is to provide convergence results and consistency proofs for this neural estimator when the data are stationary and ergodic Markov.

The paper is organized as follows. Notations and basic definitions are introduced in Section 2. Then, in Section 3, the neural estimator and procedures are explained, and the convergence of the estimator is studied when the data are generated from a Markov source. Next, we provide simulation results in Section 4 for synthetic scenarios and verify the effectiveness of our technique in estimating CMI and DI. Finally, we conclude the paper in Section 5 and suggest potential future directions.

4. Simulation Results

In this section, we experiment with our proposed estimator of CMI and DI on the following auto-regressive model, which is widely used in different applications, including wireless communications [42], defining causal notions in econometrics [43], and modeling traffic flow [44], among others:

where

A and

B are

matrices and the rest of variables are

d-dimensional row vectors.

A models the instantaneous effect of

,

, and

on each other and its diagonal elements are zero, while

B models the effect of previous time instance.

,

, and

(denoted as noise in some contexts) are independent and generated i.i.d. according to zero-mean Gaussian distributions with covariance matrices

,

, and

, respectively (i.e., the dimensions are

d and components are uncorrelated). Note that this model fulfills Assumptions 1 and 2 when the initial random variables are set appropriately. Although Gaussian random variables do not take values in a compact set, and thus Assumption 3 does not hold, we could use truncated Gaussian distributions. Such an adjustment does not significantly change the statistics of the generated dataset, since the probability of observing a value far from the mean is negligible.
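As an illustration of how data from such a model might be generated, the following is a minimal sketch. It assumes a structural form W_t = A W_t + B W_{t-1} + N_t, where W_t stacks the processes as rows of d-dimensional vectors; this form, the function name `simulate_var`, and the zero initial state are assumptions made for the example and are not taken from the text or from the authors' code.

```python
import numpy as np

def simulate_var(A, B, noise_std, n, d, seed=0):
    """Simulate W_t = A @ W_t + B @ W_{t-1} + N_t (assumed model form).

    W_t is an (m, d) array whose rows are the m processes at time t.
    noise_std holds one standard deviation per process.
    Returns an array of shape (n, m, d).
    """
    rng = np.random.default_rng(seed)
    A = np.asarray(A, dtype=float)
    B = np.asarray(B, dtype=float)
    m = A.shape[0]
    # Resolve the instantaneous coupling once: (I - A) W_t = B W_{t-1} + N_t.
    mix = np.linalg.inv(np.eye(m) - A)
    std = np.asarray(noise_std, dtype=float).reshape(m, 1)
    W = np.zeros((n, m, d))
    prev = np.zeros((m, d))  # assumed zero initial state (hypothetical choice)
    for t in range(n):
        noise = std * rng.standard_normal((m, d))  # independent Gaussian noise
        prev = mix @ (B @ prev + noise)
        W[t] = prev
    return W

# Example: the second process copies the first instantaneously (A[1, 0] = 1).
A = np.array([[0.0, 0.0], [1.0, 0.0]])
B = np.zeros((2, 2))
samples = simulate_var(A, B, noise_std=[1.0, 0.0], n=100, d=2)
```

Because the sketch starts from a zero state rather than a stationary initial distribution, discarding an initial burn-in segment would be a reasonable precaution before estimating any information measure from `samples`.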

In the following subsections, we test the capability of our estimator in estimating both conditional mutual information (CMI) and directed information (DI). In both cases, n samples are generated from the model and the estimations are performed according to Algorithms 1 and 2. Then, according to Algorithm 3, the joint and product batches are split randomly in half to construct the train and evaluation sets. The parameters of the classifier are trained on the train set, and the final estimate is computed on the evaluation set (code is available at https://github.com/smolavipour/Neural-Estimator-of-Information-non-i.i.d, accessed on 20 May 2021).
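The split-and-evaluate step described above can be sketched as follows. This is not the authors' Algorithm 3: the helper names `split_half` and `dv_estimate` are hypothetical, and the evaluation rule (a Donsker-Varadhan-type estimate built from the classifier's likelihood ratio p/(1-p)) is an assumption about how a classifier-based estimator is typically evaluated.

```python
import numpy as np

def split_half(batch, rng):
    """Randomly split a batch (rows = samples) into two halves."""
    batch = np.asarray(batch)
    perm = rng.permutation(len(batch))
    half = len(batch) // 2
    return batch[perm[:half]], batch[perm[half:]]

def dv_estimate(p_joint, p_product, eps=1e-6):
    """DV-type estimate from classifier outputs (assumed evaluation rule).

    p_joint / p_product: predicted probabilities of the class "joint" on
    evaluation samples taken from the joint and product batches.
    """
    pj = np.clip(np.asarray(p_joint, dtype=float), eps, 1 - eps)
    pp = np.clip(np.asarray(p_product, dtype=float), eps, 1 - eps)
    log_ratio_joint = np.log(pj) - np.log1p(-pj)  # log of p / (1 - p)
    ratio_product = pp / (1.0 - pp)
    return log_ratio_joint.mean() - np.log(ratio_product.mean())

rng = np.random.default_rng(0)
joint = rng.standard_normal((10, 3))    # placeholder joint batch
product = rng.standard_normal((10, 3))  # placeholder product batch
joint_train, joint_eval = split_half(joint, rng)
product_train, product_eval = split_half(product, rng)
```

Evaluating on held-out halves keeps the final estimate from being inflated by a classifier that has overfit its training samples.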

To verify the performance of our technique, we also compared it with the approach taken in [4,31], which is as follows. Conditional mutual information can be computed by subtracting two mutual information terms, i.e.,

So instead of estimating the CMI term directly, one can use a neural estimator such as the classifier-based estimator in [4] or the MINE estimator [1], and estimate each MI term in (31) to estimate the CMI. In what follows, we refer to this technique as MI-diff since it computes the difference between two MI terms.
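The decomposition behind MI-diff is the chain rule of mutual information, which in generic notation (possibly differing from the symbols used in (31)) reads I(X;Y|Z) = I(X;Y,Z) − I(X;Z). The sketch below checks this identity in closed form for jointly Gaussian variables; the covariance matrix and the helper names are arbitrary choices made for illustration.

```python
import numpy as np

def _logdet(cov, idx):
    """Log-determinant of the sub-covariance selected by idx."""
    return np.linalg.slogdet(cov[np.ix_(idx, idx)])[1]

def gaussian_mi(cov, idx_a, idx_b):
    """I(A;B) in nats for jointly Gaussian variables with covariance cov."""
    a, b = list(idx_a), list(idx_b)
    return 0.5 * (_logdet(cov, a) + _logdet(cov, b) - _logdet(cov, a + b))

def gaussian_cmi(cov, idx_x, idx_y, idx_z):
    """I(X;Y|Z) in nats, computed directly from log-determinants."""
    x, y, z = list(idx_x), list(idx_y), list(idx_z)
    return 0.5 * (_logdet(cov, x + z) + _logdet(cov, y + z)
                  - _logdet(cov, z) - _logdet(cov, x + y + z))

# Arbitrary positive-definite covariance for scalar (X, Y, Z).
cov = np.array([[2.0, 0.8, 0.5],
                [0.8, 1.5, 0.3],
                [0.5, 0.3, 1.0]])

direct = gaussian_cmi(cov, [0], [1], [2])
mi_diff = gaussian_mi(cov, [0], [1, 2]) - gaussian_mi(cov, [0], [2])
```

With exact quantities the two routes agree. With estimated quantities, the errors of the two MI estimates enter the difference separately, so the variance of an MI-diff estimate can exceed that of a direct CMI estimate.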

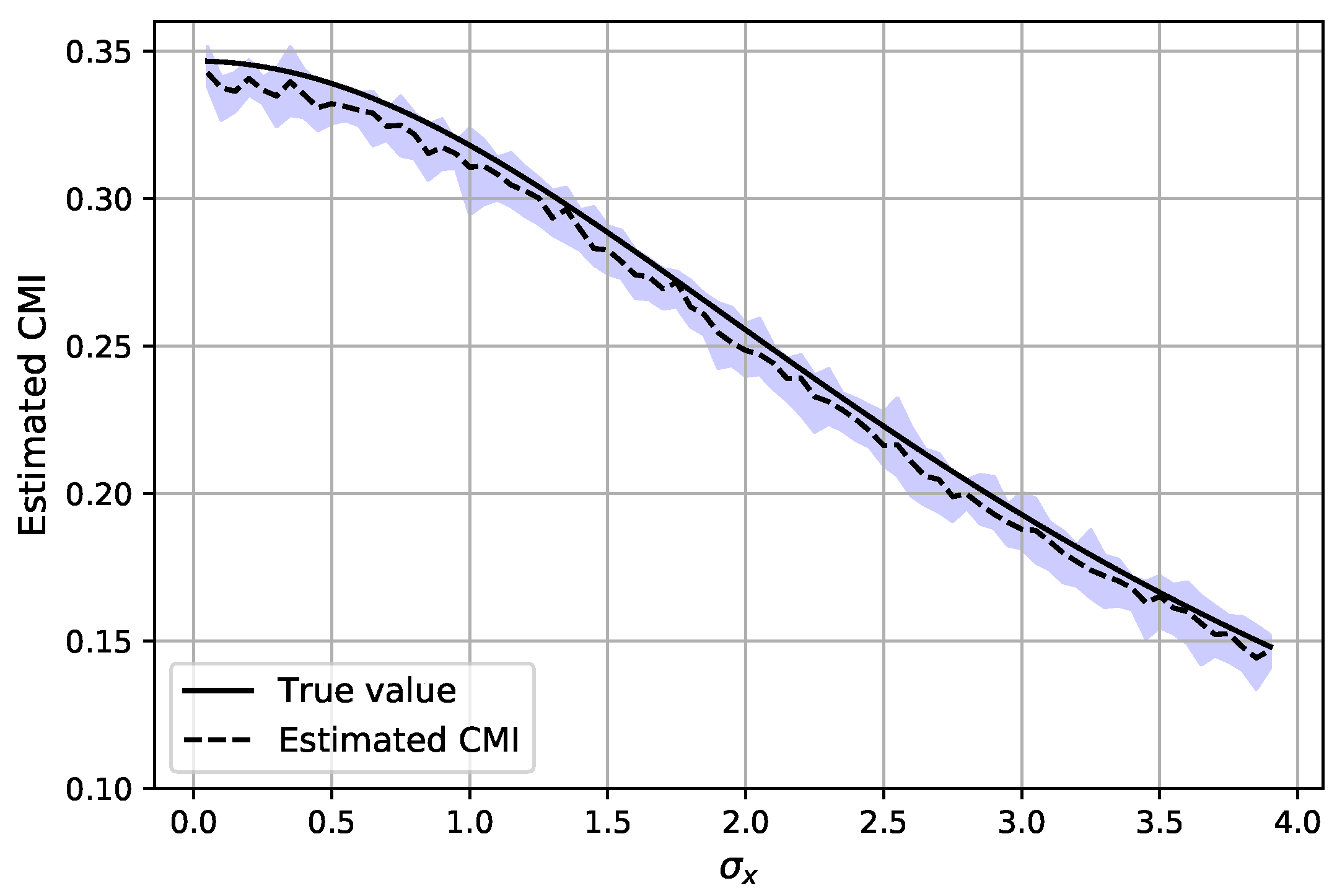

4.1. Estimating Conditional Mutual Information

In this scenario, we estimate

when

A and

B are chosen to be:

Then from (

30), the CMI can be computed as below:

Each estimated value is an average of

estimations, where in each round the batches are re-selected while having a fixed dataset. This procedure is repeated for 10 Monte Carlo trials and the data are re-generated for each trial. The hyper-parameters and settings of the experiment are provided in

Table 1. In

Figure 3, the CMI is estimated (as

in Algorithm 3) with

samples with dimension

when

,

and by varying

. It can be observed that the estimator can properly estimate the CMI while the variance of the estimation is also small. The latter can be inferred from the shaded region, which indicates the range of estimated CMI for a particular

over all Monte Carlo trials. Next, the experiment is repeated for

and the results are depicted in

Figure 4, where we compare our estimation of CMI with the MI-diff approach, which is explained in (31) and each MI term is estimated with the classifier-based estimator proposed in [4]. It can be observed that the means of both estimators are similar; nonetheless, estimating the CMI directly is more accurate and has less variation compared to the MI-diff approach. Additionally, our method is faster since it computes the information term only once, while in the MI-diff approach, two different classifiers are trained to estimate each MI term.

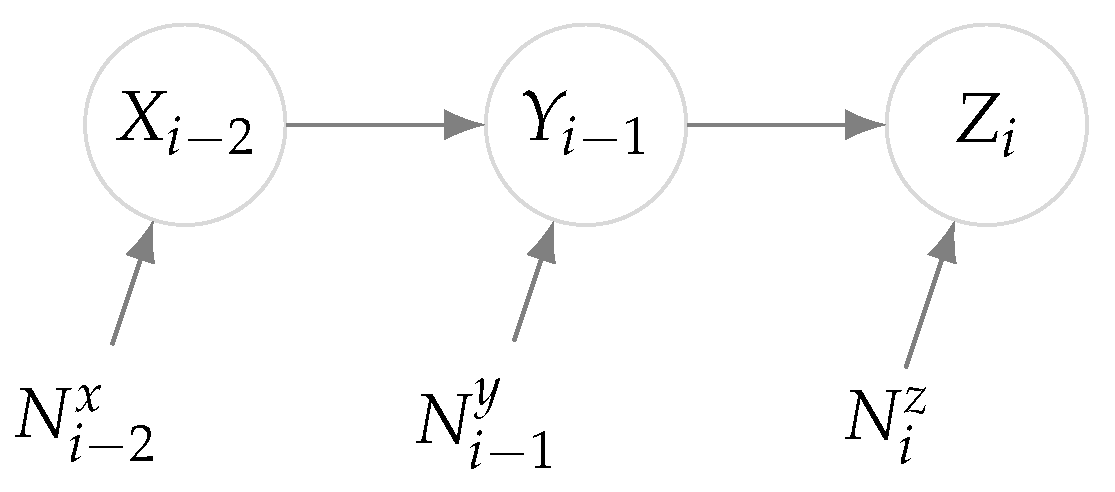

4.2. Estimating Directed Information

DI can explain the underlying causal relationship among processes. This notion has wide applications in various areas. For example, consider a social network where the activities of users are monitored (e.g., the message times as studied in [23]). The DI between these time-series data expresses how the activity of one user can affect the activity of the others. In addition to such data-analytic applications, DI characterizes the capacity of communication channels with feedback, and by estimating the capacity, the rates and powers of transmission can be adjusted in radio communications (see, for example, [32]). Now, in this experiment, consider a network of three processes

,

, and

, such that the time-series data are modeled with (

30) with

where

In this model, where the relations are depicted in

Figure 5, the process

is affecting

with a delay and similarly the signal of

appears on

in the next time instance while an independent noise is accumulated on both steps. The DIR from

in this network can be computed as follows:

Similarly, for the link

, we have:

Next we can compute the true DIR for the link

as:

Please note that the DIR corresponding to other links (i.e., the above links in the reverse direction) is zero by similar computations. Suppose we represent the causal relationships with a directed graph, where a link between two nodes exists if the corresponding DIR is non-zero. Then according to (

34)–(

36), the causal relationships are described with the graph of

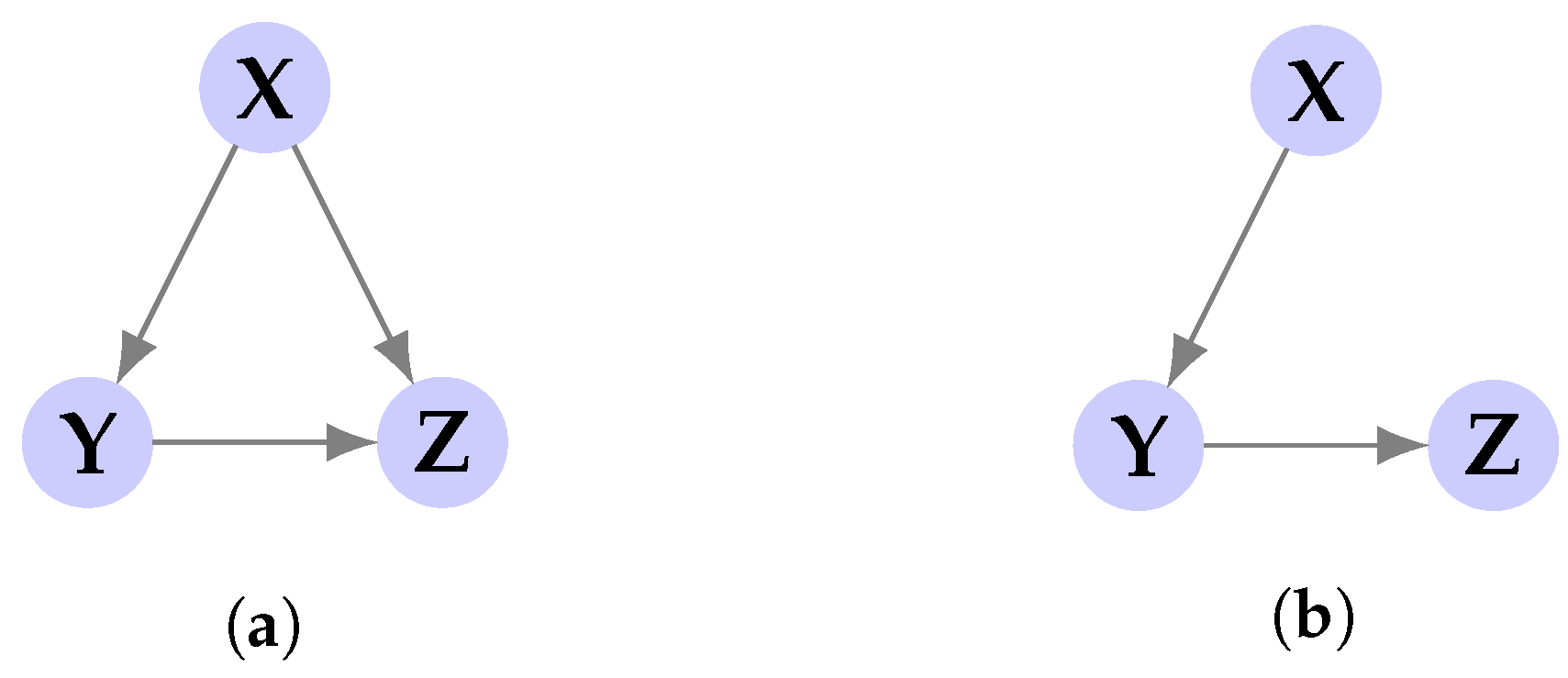

Figure 6a.

To estimate the DIR, note that the processes are Markov and the

maximum Markov order (

) for the set of all processes is

according to (

30) and (

33). Hence by (

7), we can estimate DIR with the CMI estimator. For instance the DIR for processes

can be obtained by:

where the right-hand side is computed similarly to (21). We performed the experiment with

samples of dimension

generated according to the model (

30) and (

33) with

,

,

,

, and

, while the settings of the neural network were chosen as in Table 1. The estimated values are stated in Table 2. It can be seen that the bias of the estimator is fairly small, while the variance of the estimates is negligible. This is in line with the observations in [11] when estimating CMI in the i.i.d. case.
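To feed a CMI estimator with time-series data, the samples first have to be arranged into lagged windows. The sketch below assumes that, for a Markov order `order`, the DIR from X to Y takes the form I(X_{t-order}, ..., X_t ; Y_t | Y_{t-order}, ..., Y_{t-1}); both this form and the helper name `lagged_triplet` are assumptions for illustration and should be checked against (7).

```python
import numpy as np

def lagged_triplet(x, y, order):
    """Arrange two series into (source window, target, target past) samples.

    x, y: arrays of shape (n,) or (n, d). Returns three arrays with
    n - order rows each, suitable as the three arguments of a CMI estimator.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    if x.ndim == 1:
        x = x[:, None]
    if y.ndim == 1:
        y = y[:, None]
    rows = range(order, len(x))
    # Source window includes the current time step t.
    x_win = np.stack([x[t - order:t + 1].ravel() for t in rows])
    y_now = np.stack([y[t] for t in rows])
    # Conditioning window holds only strictly past target values.
    y_past = np.stack([y[t - order:t].ravel() for t in rows])
    return x_win, y_now, y_past

x_win, y_now, y_past = lagged_triplet(np.arange(5), np.arange(10, 15), order=1)
```

Note that consecutive rows produced this way overlap in time and are therefore dependent, which is the non-i.i.d. setting for which the convergence analysis is needed.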

Although

, intuitively

is only affecting

causally through

, which suggests that

. This event is referred to as the proxy effect when studying directed information graphs (see [45]). In fact, the graphical representation of causal relationships can be simplified using the notion of causally conditioned DIR, as depicted in Figure 6b. To see this formally, note that (30) yields:

Considering

, the causally conditioned DIR terms can be estimated with our CMI estimator according to (

7); for instance,

The estimation results are provided in

Table 3 for all the links, where for each link we averaged over

estimations (as in Algorithm 3); then the procedure is repeated for 10 Monte Carlo trials in which we generate a new dataset according to the model.

In this experiment, we did not explore the effect of higher dimensions for data, although one should note that for the causally conditioned DIR estimation, with

the neural network is fed with data of size 9. Nevertheless, the performance of this estimator in higher dimensions with i.i.d. data has been studied in [11], and the challenges of dealing with high dimensions when the data have dependency can be considered a future direction of this work. Additionally, although the information about

may not always be available in practice, it can be approximated by data-driven approaches similar to the method described in [45].

5. Conclusions and Future Directions

In this paper, we explored the potential of a neural estimator for information measures when there exist time dependencies among the samples. We extended the analysis of the convergence of the estimation and provided experimental results to show the performance of the estimator in practice. Furthermore, we compared our estimation method with a similar approach taken in [4,31] (which we denoted as MI-diff), and experiments on synthetic scenarios show that the variances of our estimates are smaller. However, the main contribution is the derivation of proofs of convergence when the data are generated from a Markov source. Our estimator is based on a k-NN method to re-sample the dataset such that the empirical average over the samples converges to the expectation with a certain density. The convergence result derived for the re-sampling technique is stand-alone and can be adopted in other sampling applications.

Our proposed estimator can potentially be used in the areas of information theory, communication systems, and machine learning. For instance, the capacity of channels with feedback can be characterized with directed information and estimated with our estimator, which can be investigated as a future direction. Furthermore, in machine learning applications where the data have some form of dependency (either spatial or temporal), regularizing the training with information flow requires the estimator of information to capture causality, which is considered in our technique. Finally, information measures can be used in modeling and controlling a complex system, and the results in this work can provide meaningful measures such as conditional dependence and causal influence.