1. Introduction

This paper applies the constant-Q standardized Infrasonic Energy, Nth Octave (Inferno) framework [1] to the Gabor wavelet [2] and proposes binary metrics for signature characterization. One of the primary motivations of this work is to facilitate the fusion of multi-modal data streams in sensor systems that collect information at different temporal and spatial granularities. Consider a cyber-physical sensor system that converts observables into digital time series data consisting of signals and noise. Signals of interest can be hypothetically described by sparse representations that define their signature. If the signature characteristics are sufficiently unique and distinguishable from those of ambient coherent and incoherent noise, they can be used to identify and classify an object or process.

The transformation of diverse digital measurements into robust, scalable, and transportable representations is a prerequisite for signal detection, source localization, and machine learning applications for signature classification. The challenge at hand is to construct sparse signal representations that contain sufficient information for classification. Unambiguous classification can be elusive; measurement artifacts, unexpected signal variability, and non-stationary noise often conspire to add uncertainty to our classifiers. As will be discussed in this paper, information and uncertainty quantification can be substantially simplified when using standardized wavelets and binary metrics.

1.1. Binary Representations of Time and Frequency

Oscillatory processes often exhibit spatial and temporal scalability and self-similarity. Although some physical processes scale linearly, many exhibit recurrent patterns that scale logarithmically and are well represented by power laws. Both linear and logarithmic scales can coexist. For example, overtones in harmonic acoustic systems are often linearly spaced in frequency, yet our sense of tone similarity is close to base 2 logarithmic (binary) octave scales. The term octave comes from the eight major notes in 12-tone musical notation, where every note frequency closely repeats with factors of two. This paper uses the terms octave and binary interchangeably to denote the base 2 geometric scaling of frequency and time. The mapping between frequency (or pitch) and time (period) is direct for continuous tones, such as musical notes, or statistically stationary oscillations like the orbits of planets. Discrete Fourier transform methods are exceptionally well suited for the interpretation of steady tonal signals with linearly spaced harmonics. The Fourier transform deconstructs oscillations with distinct recurrent time periods into a spectral representation consisting of a set of discrete frequencies. The spectral transformation can be sparse because it removes time as a variable, facilitating the reconstruction of stable oscillations from a subset of coefficients in the Fourier spectrum.

Stable oscillators can be even more succinctly represented by a fundamental frequency or period (exclusive or, as they are not independent). For many physical systems, a map can be constructed between the fundamental frequency and its harmonics. Signals where the fundamental and its harmonics (when they exist) are statistically stationary and easily discernible above noise can be referred to as the easy continuous wave (CW) problem, or the zeroth (trivial) class of CW problems. The trivial CW problem is well understood and should routinely be used as a speed and performance benchmark for detection and classification algorithms.

The plot thickens when temporal variability is introduced in the signal or the noise. In the first class of CW problems, temporal variability is due to non-stationary broadband or band-limited noise. This is a chronic condition in infrasonic signal processing, where ambient noise can be coherent or incoherent across a dense sensor network [3] or an array aperture [4]. The first class of CW problems is also well understood when noise is predictable (e.g., normally distributed) over a time duration that is much longer or much shorter than the signal period in the detection band. However, this class of problems is not as well characterized when noise is not evenly distributed across the signal detection bandpass and can be particularly inconvenient when noise overwhelms the fundamental frequency band.

In the second class of CW problems, temporal variability is introduced by a change in the temporal, spectral, and/or statistical properties of the signal. These changes can be due to aging, failure, motion, communication, or any other change in state. In a simple two-state problem, one may quantify the properties of the first state, the transition period between states, and the properties of the final state. In a multiple-state problem, such as with communication systems, speech, or music, the Short-Time Fourier Transform (STFT) is often used to characterize spectral variability.

If the transition period between states is faster than the characteristic time scale of the initial state, the STFT does not always provide an accurate representation of this transient. For some signals, the details of the transient are not relevant and only the steady states are important. But a new class of signals emerges when the detection of transient anomalies is prioritized.

The zeroth class of transient problems consists of delta functions with their integrals and derivatives. Such instantaneous spikes do not exist in the natural world but can be readily constructed digitally to evaluate the impulse response of a system or represent a neuromorphic network [5,6]. The first class of transient problems would consist of realistic variants of the delta function that may be observed in the wild when a rapid change of state becomes the signal of interest. Just like a single-tone sinusoid may be regarded as the prototype end member for the trivial CW problem, an explosive detonation could be considered as a prototype transient signal source [7]. A time series corresponding to a blast would vary from ambient noise to a brief blast transient that fades back to a possibly perturbed background noise state. The transition from noise to signal can be devastatingly fast. In general, poorly conditioned STFTs provide inadequate representations of brief, rapidly changing signals because the signatures no longer resemble a CW and are not optimally represented by sinusoids. However, since the STFT basis function is a windowed sinusoid, a well-conditioned STFT window at the peak frequency of a signal turns the waveform in the STFT window into a wavelet that is well tuned for the main signal bandpass.

The concept of a windowed sinusoid to represent a transient signal was introduced by Gabor [2] in 1946, and later mathematically formalized by others as wavelets. Variants of the Gabor wavelet are presented in the main text and in Appendix A, Appendix B, Appendix C, Appendix D, Appendix E, and Appendix F.

The second class of transient problems overlaps with the second class of CW problems. It corresponds to transients of significant duration, which could be addressed with STFTs, wavelets, or their combination. Very often a transient is embedded in a noise field with band-limited harmonic structure, or the transient itself is a sweep, characterized by a substantial change in the fundamental frequency and its harmonic structure.

The primary differences between STFT and wavelet transform approaches are that the STFT uses a linear period mapping and a constant time window duration, while wavelets use a geometric pseudo-period mapping and time window durations that scale with the pseudo-period. Whereas in the Fourier framework there is a one-to-one mapping between time and frequency, the wavelet mapping between time scale and frequency can be less evident and depends on the selected wavelet.

This paper concentrates on developing highly standardized Gabor atoms [2] for the design and evaluation of transportable, sensor-agnostic transient signal detection, sparse feature extraction, and classification algorithms.

1.2. Binary Representations of Energy and Information in Cyber-Physical Systems

A Cyber-Physical System (CPS) is an algorithm-controlled computer system with physical inputs and outputs. A typical example of a mobile CPS is a smartphone with a microphone input (sound activation) that outputs a response (speech, music, or signal recognition) to a screen. Cyber-physical Measurement and Signature Intelligence (MASINT) is an emerging discipline that concentrates on phenomena transmitted through cyber-physical devices and their interconnected data networks. For smartphones and other multi-sensor mobile platforms connected to wireless networks, this includes digital noise, bit errors, and latencies internal to the device and its communication channels [8,9,10].

Data processed by the cyber part of CPSs are digital and represented as binary digits (bits). Although the precision of the data is initially defined by its allocated integer word size (16 bit, 24 bit, etc.), the original data may be converted into floating point equivalents when an algorithm acts on them. For example, consider sound recorded by a smartphone at the standard rate of 48,000 samples per second. A typical sound record may have 16-bit resolution, so that its signed integer range is $-2^{15}$ to $2^{15} - 1$. However, one may only be interested in the lower frequency components of the raw data, so one would implement a lowpass anti-aliasing filter before decimation. Such filters often require floating point arithmetic in double precision (52-bit mantissa per IEEE 754 at the time of this writing) to reduce instability. Therefore, the precision of the resulting lowpass filtered data would exceed the specification of the original 16-bit integer input. However, the theoretical dynamic range of the system would not exceed the specification of the 16-bit integer physical input. Furthermore, data compression can be more efficient on floats than integers, which leads us to the topic of fractional bits as a measure of CPS amplitude, power, and information.

Many of the metrics we use in traditional physical and geophysical systems are inherited from the analog era. The base 10 decibel scale is a measure of power relative to a reference level, and is used extensively in telecommunications, acoustics, and electrical engineering. Let us estimate the hypothetical dynamic range of a 16-bit microphone record of a sinusoid at full scale. The peak rms amplitude would be

All systems have quantization and system noise, and the noise can have a positive or negative bias. This is not a noise paper; for the sake of illustration, I model the system noise as oscillating around a mean of zero and alternating between −1 and 1,

The theoretical dynamic range of the system in dB for a sinusoid recorded with a 16-bit microphone and sound card combination with a one-bit noise floor could be characterized by the ratio of the power

where a digital response is converted to the legacy base 10 logarithmic system. One advantage of the decibel approach is that it can be compared to the response of the human ear and other analog systems. However, analog comparisons are not necessary for many cyber physical applications. A more natural unit for CPS is the binary logarithm

where the unit fbits corresponds to floating point representation of bits. For example, in 24-bit systems, present-day quantization error is ~3 bits, leading to an effective dynamic range of ~21 fbits. Likewise, a 24-bit integer cast into a 32-bit symbol can have 8 + 3 bits of noise, and may be converted to a float that still has ~21 fbits of dynamic range.
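The dynamic range estimate described above can be sketched numerically. The following is a minimal illustration, not the paper's own expressions: it assumes a full-scale peak of 2^15 counts for a 16-bit record, an rms noise floor of one count (the alternating −1, 1 model), and that fbits are counted on the amplitude ratio, which is equivalent to half the binary log of the power ratio. The function name `dynamic_range` is hypothetical.

```python
import math


def dynamic_range(bit_depth: int, noise_rms: float = 1.0):
    """Return (dB, fbits) for a full-scale sinusoid over a given rms noise floor.

    Assumptions (not taken from the source equations): signed integer samples,
    peak amplitude 2**(bit_depth - 1), and fbits defined on the amplitude ratio.
    """
    peak = 2.0 ** (bit_depth - 1)        # full-scale peak amplitude in counts
    signal_rms = peak / math.sqrt(2.0)   # rms amplitude of a sinusoid
    ratio = signal_rms / noise_rms       # rms amplitude ratio
    db = 20.0 * math.log10(ratio)        # same as 10*log10 of the power ratio
    fbits = math.log2(ratio)             # same as 0.5*log2 of the power ratio
    return db, fbits


db16, fbits16 = dynamic_range(16)
print(f"16-bit full-scale sinusoid: {db16:.1f} dB, {fbits16:.1f} fbits")
```

Under these assumptions the binary measure differs from the nominal word size only by the half bit lost to the rms of the sinusoid, which is easier to reason about than the decibel figure.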

Another unit that is often specified is the ½ power point of the frequency response of a filter, which defines the quality factor of that filter. This is often referred to as the −3 dB point, since $10\log_{10}(1/2) \approx -3.01$ dB. However, accurate filter bank reproductions require a clear specification of the ½ power point, and conversion from base 10 to base 2 specification can lead to computational errors. Plotting filter responses in floating point bits can be informative as it reveals the precision of the computation. Because the term fbits is awkward and there is already a precedent in information theory for using bits outside of their original definition as a binary digit, from here onwards in this paper the word bits will be used to represent either the floating point equivalent of bits or a metric for information.

Consider the communication channel capacity introduced by Shannon [11], which in its simplest form can be expressed as

$$C = W \log_2\left(\frac{S_g + N_s}{N_s}\right)$$

where C is a measure of the differential entropy of a signal in the presence of noise, W is a measure of the bandwidth, Sg is representative of the power of a signal, and Ns is representative of the noise power. The units of the channel capacity are in shannons per second, or bits per second, and represent the theoretical upper bound of the rate of information transfer in a communication channel. Since it is often impossible to separate noise embedded in a signal but it is often possible to construct a noise model, we can think of the ratio (Sg + Ns)/Ns as a practical measure of the signal to noise ratio (SNR) of an observed signal that has been carried through a cyber-physical system or a medium.
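As a small numerical illustration of the channel capacity, the sketch below evaluates the standard Shannon form, bandwidth times the binary log of (Sg + Ns)/Ns. The function name `channel_capacity` and the example values are hypothetical and chosen only to give a round result.

```python
import math


def channel_capacity(bandwidth_hz: float, signal_power: float, noise_power: float) -> float:
    """Shannon capacity in bits per second: W * log2((Sg + Ns) / Ns)."""
    if bandwidth_hz <= 0 or noise_power <= 0 or signal_power < 0:
        raise ValueError("bandwidth and noise power must be positive, signal power nonnegative")
    return bandwidth_hz * math.log2((signal_power + noise_power) / noise_power)


# Illustrative values: 1 kHz bandwidth, signal power 15 times the noise power.
capacity = channel_capacity(1000.0, 15.0, 1.0)
print(f"capacity = {capacity:.0f} bits per second")
```

With no signal present the ratio reduces to one and the capacity is zero, which is why (Sg + Ns)/Ns is a convenient practical SNR measure.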

The effective SNR and therefore the detectability of a compressed pulse (such as a wavelet) is the product of the bandwidth, the signal to noise ratio, and the time duration of a signal [12]. When using constant-Q Gabor wavelets with fractional octave (binary) bands n of order N and center frequency

to process a signal in the presence of noise, the next section shows that for

the signal detectability per band can be represented by

and the upper limit on rate of information in bits per second for a band-limited pulse with center frequency

can be estimated from

Energy and Shannon entropies using the binary log are constructed for both the wavelet coefficients and SNR in Section 2.5.

2. Methods

This is an algorithmic paper providing foundational methods to construct standardized Gabor wavelets within a binary framework. No materials are included or required; all the algorithms required to reproduce the results are presented, with recommendations for specific existing functions in open-source software frameworks.

Although the methods are intended to be sensor-agnostic and transportable across diverse domains, the selection of the Gabor mother wavelet does define the optimal applicability of the algorithms: the methods in this paper will work best with a transient, or a portion of a transient, that can be well represented by a superposition of Gabor wavelets. Fortunately, this covers a fairly wide range of transient signature types. The fundamental principles in this work are expandable to other wavelets as well as to four-dimensional spatiotemporal representations.

2.1. Transforming Time and Frequency to Scale

A digital time series is constructed by collecting digital measurements at discrete times separated by a nominal sample interval. One may estimate a standard deviation from nominal associated with the sample interval; when this error is a very small percent of the sample interval (e.g., parts per million) it is generally treated as a constant. Some variability in the sample rate should be expected in cyber-physical sensing systems under different conditions (temperature, battery level, power load, data throughput, etc.) even when the systems have the same hardware configurations. This can have an impact when attempting high-accuracy time synchronization. If adequate performance metrics are collected, the sample rate error can be quantified and potentially compensated by an additional time-varying correction to the clock drift.

In many scientific domains, such as astronomy and climatology, the sample interval may be greater than one second. Domains where the phenomena of interest change more rapidly use the equivalent metric of samples per second, referred to as the sample rate and often expressed in units of Hertz. The relationship between the sample interval

and its standard deviation

and the sample rate

and its associated error can be expressed as

Although time is the primary discrete sampling parameter, system requirements are often provided as frequency specifications within the context of Fourier transforms. The nominal sample rate sets the maximum upper edge of the bandpass of the system; there should be negligible energy at the Nyquist frequency, which is half of the sample rate. The actual bandpass of a system is set by the low- and high-frequency cutoffs of a cyber-physical system, which may include the sensor response, hardware specifications, firmware and software modifications (such as anti-aliasing filtering), and data compression.
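The relationship between sample interval, sample rate, and Nyquist frequency can be sketched as follows. This is an illustration under stated assumptions: the sample rate is taken as the reciprocal of the sample interval, and the rate error is propagated to first order so that the relative errors of the interval and the rate are equal. The function name `sample_rate_stats` is hypothetical.

```python
def sample_rate_stats(sample_interval_s: float, interval_std_s: float = 0.0):
    """Return (sample rate, sample rate std, Nyquist frequency) in Hz.

    First-order error propagation is assumed: the relative error of the
    sample rate equals the relative error of the sample interval.
    """
    if sample_interval_s <= 0:
        raise ValueError("sample interval must be positive")
    sample_rate_hz = 1.0 / sample_interval_s
    relative_error = interval_std_s / sample_interval_s
    sample_rate_std_hz = sample_rate_hz * relative_error
    nyquist_hz = 0.5 * sample_rate_hz
    return sample_rate_hz, sample_rate_std_hz, nyquist_hz


# Illustrative case: 48 kHz audio with a 1 part-per-million interval error.
interval = 1.0 / 48000.0
rate, rate_std, nyquist = sample_rate_stats(interval, 1e-6 * interval)
print(f"rate = {rate:.1f} Hz, std = {rate_std:.3f} Hz, Nyquist = {nyquist:.1f} Hz")
```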

The mapping between frequency and period is simple for a continuous wave tone; the tone period is the inverse of the tone frequency. It is not so clear for transients. Following [

7], a transient with a single spectral peak at a center frequency

may be associated with a pseudo-period

. This mapping is important as the scale of wavelet representations is linearly proportional to the pseudo-period, which is also referred to as the scale period. A high-level overview of Appendix A, Appendix B, Appendix C, Appendix D, Appendix E, and Appendix F is provided in this section for ease of reference.

Constant quality factor (

) bands with constant proportional bandwidth are traditionally defined as [

1]

where

is the bandwidth centered on

. The

is a measure of the number of cycles needed to reach the ½ power point at the bandwidth edges.

Appendix A shows that the bandwidth edges are well defined in fractional octave band representations of order

so that the quality factor can be evaluated precisely as,

From [1], and as shown in Appendix B and Appendix C, the characteristic time duration of the Gabor atom can be represented as

where

is a measure of the number of oscillations in the characteristic time duration of a wavelet. For efficient computation all physical times are nondimensionalized and converted to equivalent sample points by multiplying by the sample rate. If

is the time in seconds, the nondimensionalized time

is

The approach is wavelet-agnostic up to this stage. Direct application of the ½ power points of the spectrum of Gabor-Morlet wavelet (

Appendix B) at the band edges (

Appendix C) yields

where

controls the duration of the wavelet to match the order’s

Q. This last step can be tailored to other wavelet types to produce constant-Q variants. Adherence to the specifications in Equations (10)–(14) yields standardized and well-constrained quantized Gabor atoms.
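The chain from order to quality factor to wavelet scale described in this section can be sketched numerically. The block below is a hedged illustration rather than a transcription of the paper's equations: it assumes half-power band edges at the center frequency times 2^(±1/(2N)), a Gaussian envelope of the form exp(−t²/(2s²)), and matching of the half-power points of the Gaussian power spectrum to those band edges, which gives a center angular frequency times scale equal to 2·sqrt(ln 2)·Q. All function names are hypothetical.

```python
import math


def quality_factor(order: float) -> float:
    """Q = fc / bandwidth for band edges at fc * 2**(+/- 1/(2*order))."""
    half_step = 2.0 ** (1.0 / (2.0 * order))
    return 1.0 / (half_step - 1.0 / half_step)


def gabor_scale_multiplier(order: float) -> float:
    """Product of center angular frequency and Gaussian scale (assumed form).

    Follows from placing the half-power points of exp(-s**2 * (w - wc)**2)
    at the band edges, so that wc * s = 2 * sqrt(ln 2) * Q.
    """
    return 2.0 * math.sqrt(math.log(2.0)) * quality_factor(order)


def scale_in_samples(order: float, center_frequency_hz: float, sample_rate_hz: float) -> float:
    """Nondimensional scale: physical scale in seconds times the sample rate."""
    omega_c = 2.0 * math.pi * center_frequency_hz
    return gabor_scale_multiplier(order) / omega_c * sample_rate_hz


for order in (1, 3, 6, 12, 24):
    print(order, round(quality_factor(order), 3), round(gabor_scale_multiplier(order), 3))
```

For example, `scale_in_samples(3, 12000.0, 48000.0)` gives the third-order scale for a band centered at one quarter of a 48 kHz sample rate; because the result depends only on the ratio of center frequency to sample rate, the nondimensional scale is sensor-agnostic.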

2.2. Binary Quantized Constant-Q Gabor Atoms

Gabor [2] extended the Heisenberg principle to define the time-frequency uncertainty principle, and further proposed deconstructing signals into elementary waveforms referred to as time-frequency atoms [2,13]. These atoms provide the optimum compromise between time and frequency resolution and thus maximize information density. The Morlet wavelet [14,15], functional kin to the Gabor atom, was developed for seismic applications and is much beloved by mathematicians. Much has been said and written over the last 75 years about the merits and limitations, e.g., [16], of the Gabor atom in diverse fields of applied science, including quantum mechanics, e.g., [17], neurophysiology, e.g., [18], and radar target recognition, e.g., [19].

Consider the translation and dilation of the familiar Gabor-Morlet mother wavelet

with dictionary [

13]

which can be fully expressed as

where the mapping between the nondimensional scale

and the band period is

The constant-Q Gabor atoms are constrained to the discrete set of values

with quality factor

defined by the ½ power points of the Fourier spectrum, quantized order

. For this functional form, the wavelet admissibility condition can be represented as

By quantizing constant-Q bands and the resulting wavelet scales it is possible to also discretize the uncertainty in time and frequency of the resulting analyses. Gaussian pulses in general [

12] and Gabor atoms in particular are well-known to have the lowest time-frequency uncertainty [

2,

13], making them natural building blocks for uncertainty quantification. The Gabor atom has the minimal value of the Heisenberg-Gabor uncertainty (

Appendix D), where the nondimensionalized temporal standard deviation

and angular frequency standard deviation

over all time and frequency satisfy

which quantify time and frequency uncertainty discretely, minimally, and unambiguously.
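The minimum uncertainty product can be checked with a short worked derivation. This is a sketch that assumes a Gaussian envelope with nondimensional scale $s$ and standard deviations defined on the normalized energy densities in time and angular frequency; the symbols $s$, $\omega_c$, $\sigma_t$, and $\sigma_\omega$ are notation chosen here and may differ from the paper's.

```latex
% Assumed atom: \psi(t) \propto e^{-t^2/(2 s^2)} e^{i \omega_c t}
\begin{align}
  |\psi(t)|^2 &\propto e^{-t^2/s^2}
    &&\Rightarrow\quad \sigma_t = \frac{s}{\sqrt{2}}, \\
  |\hat{\psi}(\omega)|^2 &\propto e^{-s^2 (\omega - \omega_c)^2}
    &&\Rightarrow\quad \sigma_\omega = \frac{1}{\sqrt{2}\, s}, \\
  \sigma_t \, \sigma_\omega &= \frac{1}{2}.
\end{align}
```

The product is independent of the scale, so quantizing the scale quantizes the individual time and frequency uncertainties while their product remains at the minimum.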

Converting to physical time with

yields a more familiar Morlet representation

where the scale

may be readily recognized as the standard deviation of a Gaussian envelope with integration variable

. This is very similar to the original form proposed by Gabor [

2], and makes intuitive sense as the oscillatory term is clearly exposed. However, the additional factor of

required to nondimensionalize the numerator of the Gaussian envelope for numerical computation has indubitably been an initial source of confusion amongst some physical scientists, author included.

2.3. Quantum Order

The recommended quanta for the Gabor atoms are positive integer band numbers

and the preferred orders

as in [

1]

though the special orders

N = 0.75 and 1.5 are considered. The mother wavelet is uniquely defined (and can be quantized) by the order

N, although it is often specified by the more accessible variable

. The mother wavelet is scale invariant. Each discrete atom in its dictionary is defined by its order

N, its band number n, and a reference scale at

n = 0. If the Gabor atoms remain within their quanta, there is only one degree of freedom: the reference scale. The reference scale can be set by the data acquisition system (e.g., the Nyquist frequency) or a standard reference frequency. The scale schema can also be set by a signal tuning frequency; the theoretical peak acoustic frequency for the detonation of one metric ton of TNT is used in

Section 3. When integrating multi-sensor time series with different evenly and unevenly sampled data, it would be preferable to either use a standard reference frequency or time scale (e.g., 1 kHz for audio, 1 Hz for infrasound [1]) or a shared target frequency. The resulting frequency bands will be evenly spaced logarithmically to standardize and facilitate multi-sensor cross-correlations and data fusion. It is important to reinforce that the mapping from physical time scale to nondimensional scale depends on the sample rate. Specifying a nominal sample rate

or sample interval

as in Equation (9) permits conversion to physical time

and scale pseudo-period

from the wavelet parameters,

and map to the physical scale center frequencies

It may be useful to think of the binary (base 2) order N as the quantized time and bandwidth stretch factor of the Gabor atom; as the order increases, the wavelet stretches in time and narrows in bandwidth, with each frequency band occupying a constant proportional frequency bandwidth that produces oscillations at the band frequency in the time domain. Although in theory it is possible to use any integer band indexes n, for computational implementation it is practical to use only nonnegative integers to represent temporal scales [Equation (26)], with n = 0 corresponding to the smallest scale and to the highest center frequency below the Nyquist frequency.

This paper recommends atom quantization using the well-established fixed order

and quality factor

values of standard geometric binary intervals referred to as fractional octave bands in acoustic and infrasound applications (

Table 1).

Appendix A develops a useful approximation for the quality factor

of order

N,

with exact equivalence for octave bands at

N = 1 (

Table 2).
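The exact quality factor can be compared against a simple linear estimate in the order. The sketch below assumes the standard half-power band edges at the center frequency times 2^(±1/(2N)). The linear form sqrt(2)·N is an assumption adopted here because it is exact for octave bands at N = 1; the approximation developed in Appendix A is not reproduced in this section and may differ. Function names are hypothetical.

```python
import math


def exact_quality_factor(order: float) -> float:
    """Q from band edges fc * 2**(+/- 1/(2*order))."""
    half_step = 2.0 ** (1.0 / (2.0 * order))
    return 1.0 / (half_step - 1.0 / half_step)


def linear_quality_factor(order: float) -> float:
    """Assumed linear estimate, exact at order 1: Q ~ sqrt(2) * order."""
    return math.sqrt(2.0) * order


for order in (1, 3, 6, 12, 24):
    exact = exact_quality_factor(order)
    approx = linear_quality_factor(order)
    rel_err = (exact - approx) / exact
    print(f"N={order:2d}  exact Q={exact:7.3f}  linear Q={approx:7.3f}  rel. error={rel_err:.3%}")
```

Under this assumption the relative error stays below two percent for the preferred orders, which is adequate for choosing the number of oscillations in a window but not for computing band edges.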

These relations are seldom made explicit for constant-Q wavelet representations, which often leads to inadvertently creative interpretations and implementations. In traditional fractional octave bands,

is an integer with preferred numbers 1, 3, 6, 12, 24 and its half-power (−3 dB) band edges and center frequencies are well established so their

Q can be readily computed (

Table 1 and

Table 2). The band spectrum will overlap at the half-power point band edges to reduce (or at least regulate) spectral leakage and improve energy estimation. Dyadic wavelets use order

N = 1 and are weakly admissible (

); carefully handled they do lead to very sparse and fast computational implementations (e.g., [

13]).

The estimate for

in terms of the order

is useful for practical application where we wish to specify the number of oscillations

in a window. If one abandons the bounds of the preferred bands, one can estimate the order for a wavelet that has any number of oscillations in its support window. Once N is estimated, exact values for the center frequencies and band edges can be computed from the expressions in

Appendix A. These bespoke constant-Q bands will not meet binary (factor of two) center frequency recursions with ½ power band edge overlap, but may be useful for highly customized tuning. Examples are provided in

Table 3.

Consider the curious case of a single oscillation in the window, where

and

Q is evaluated more precisely from the order

N. Although intuitive and compact, the resulting wavelets are marginally admissible (

) and produce oddly spaced, but legitimate, constant-Q frequency bands that grow rapidly and hit only every fourth standard octave every three bands. The window duration will be only 1.74 periods long and the spectral resolution of the Fourier transform will be exceedingly sparse. Adding another oscillation per window (increasing the quality factor to approximately two), would correspond to

The resulting wavelets are more admissible (

) but also produce oddly spaced constant-Q frequency bands that land on every second standard octave every three bands. Third order bands hit exact powers of two every third band and have around four oscillations per window (

Appendix C). Although it is possible to force center frequency scales, if best practices for band overlap are ignored one will have a set of wavelet filter banks with substantial spectral leakage or gaps between adjacent bands, and the possibility for excessively overdetermined or underdetermined results. This is what usually happens with default parameters on most continuous or discrete wavelet transform algorithms. This paper standardizes and regulates band spacing by asserting the relationship between order, bandwidth, and duration. Since it is both silly and mathematically inadvisable (even inadmissible) to construct a wavelet with fewer than one oscillation in its window, it is recommended that

. This suggests a minimum order number (quantum) of

N = 3/4 for stable Gabor atoms, with

N = 1 yielding exact power-of-two (binary) bands.
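The band spacing claims for the special orders can be verified with exact rational arithmetic. The sketch assumes adjacent center frequencies differ by a factor of 2^(1/N), so that a run of bands spans (number of bands)/N octaves, and uses the same half-power band-edge definition of Q as above. Function names are hypothetical.

```python
from fractions import Fraction


def quality_factor(order: float) -> float:
    """Q from half-power band edges at fc * 2**(+/- 1/(2*order))."""
    half_step = 2.0 ** (1.0 / (2.0 * order))
    return 1.0 / (half_step - 1.0 / half_step)


def octaves_spanned(order: Fraction, n_bands: int) -> Fraction:
    """Octaves covered by n_bands steps when each step is 2**(1/order)."""
    return Fraction(n_bands) / Fraction(order)


for order in (Fraction(3, 4), Fraction(3, 2), Fraction(3)):
    q = quality_factor(float(order))
    span = octaves_spanned(order, 3)
    print(f"N={float(order):4.2f}  Q={q:5.3f}  three bands span {span} octave(s)")
```

Three bands of order 3/4 span four octaves, three bands of order 3/2 span two, and three bands of order 3 span exactly one, consistent with the spacing described in the text.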

It is possible to estimate the smallest possible universal binary scale from the Planck time, the smallest measurable time scale

Since the Planck time would be the smallest possible sample interval, the smallest oscillation that could be observed would be at the universal Nyquist period

At the other end of the timeline, the age of the universe is estimated to be 13.8 billion years, or

so that all time scales in the known universe can be encompassed within ~200 temporal octave bands. Computationally speaking, this is a small range of octaves that can be spanned by 200 Gabor atoms. Earth is estimated to be ~4.6 billion years old, covering about 57 of those temporal binary bands. The oldest bones associated with Homo sapiens sapiens are ~200,000 years old and within the last 42 temporal sub-bands since Earth’s inception. The human voice for average individuals ranges between one and two octaves, and five octaves species-wide. The nondimensionalized scale

of the binary (

N = 1) Gabor atom at the Nyquist frequency is always the same whether one uses the Planck scale or a sample rate of 48 kHz

However, it is inadvisable to make observations at the Nyquist limit, and it would be preferable to consider the starting center scale at one quarter of the sample rate, or four times the sample period. It would be possible to construct universal time scales with

, whereas all timescales would occupy temporal sub-bands. The corresponding sensor-agnostic nondimensionalized scale would be

.
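The octave counts quoted above can be reproduced with a few lines of arithmetic. This is a rough sketch: the Planck time value (about 5.391e-44 s) and the Julian year length are inserted here as assumptions, and the Earth figure is interpreted as octaves counted relative to one second, which is an inference from the quoted value of about 57 rather than something the text states.

```python
import math

PLANCK_TIME_S = 5.391e-44                  # approximate Planck time (assumed value)
SECONDS_PER_YEAR = 365.25 * 24 * 3600      # Julian year


def octaves_between(short_s: float, long_s: float) -> float:
    """Number of binary (factor of two) steps between two time scales."""
    return math.log2(long_s / short_s)


age_universe_s = 13.8e9 * SECONDS_PER_YEAR
age_earth_s = 4.6e9 * SECONDS_PER_YEAR

universe_octaves = octaves_between(PLANCK_TIME_S, age_universe_s)
nyquist_octaves = octaves_between(2.0 * PLANCK_TIME_S, age_universe_s)
earth_octaves = octaves_between(1.0, age_earth_s)

print(f"Planck time to age of universe: {universe_octaves:.1f} octaves")
print(f"Nyquist period to age of universe: {nyquist_octaves:.1f} octaves")
print(f"One second to age of Earth: {earth_octaves:.1f} octaves")
```

Starting from the Nyquist period (two sample intervals) rather than the sample interval itself removes exactly one octave from the count.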

A third order representation (

N = 3) of all the time scales in the universe can be represented by 600 temporal Gabor atoms. The beauty of the third order representation is that it is very close to the decimal representation, with every ten 1/3 octaves producing a decade ($2^{10/3} \approx 10.08$), and thus provides a geometrically elegant compromise between ten-digit humans and binary digit machines. In addition to better meeting the admissibility condition, third order bands will contain over 99% of the information within their octave (

Appendix D), making them compact temporal carriers. If the third order representation is used as the base order (

N = 3), the preferred numbers are binary multiples (

N = 3, 6, 12, 24 in

Table 1), with a proportional elongation in the wavelet support and increase in spectral resolution.

Many software packages readily produce a Gabor-Morlet wavelet with default parameters (

Appendix E). One of the most common values is

, which is close to order

(

Table 4). Other common values of the wavelet support correspond to

and the more reasonable

which is close to preferred order

.

Because none of these specifications correspond to standard orders, the resulting wavelets will tend to either overestimate (due to spectral leakage) or underestimate (due to spectral gaps between bands) the energy within adjacent constant-Q bands if binary center frequencies are forced, or will produce non-standard center frequencies.

Although it is possible to quantize the constant-Q Gabor atoms using the order

N, the quality factor

Q, or the multiplier

, the order is the most logical way to define the quanta of the wavelet. Describing the proposed wavelet dictionaries of preferred orders as the quantized constant-Q Gabor atoms with binary bases and overlapping ½ power points is rather awkward, and this paper proposes referring to these constructs as quantized wavelets, quantum wavelets of order

N, or Nth order Gabor atoms. Although

N = 1 provides a sparse clean binary (with power of two steps in frequency) representation with the tightest windows, the admissibility condition coupled with the better reconstruction capability presented in the next section suggests that using

N = 3 as the base order is preferable, with the added advantage that all subsequent preferred orders in

Table 1 are binary factors of base order 3.

2.4. Continuous Wavelet Transform Deconstruction and Reconstruction

The continuous wavelet transform (CWT) of a function

is represented in [

13] (Equation (1.13)) as

where the asterisk (*) represents the complex conjugate. The equivalent CWT for a discrete sequence of observations (or a synthetic time series)

is the convolution of

with a scaled and translated version of

. Consider the nondimensional Quantum mother wavelet of order

N,

The discrete CWT can be expressed as

where the symbol

denotes a convolution [

13], often computed using the discrete Fourier transform. This is comparable to the expression in [

20], although their convolution has no amplitude scaling as it is corrected afterwards. The CWT coefficients

provide a measure of the degree of similarity between the time series and the wavelet of scale index

n while translating along the time index

m. While exact waveform reconstruction from the CWT is challenging (e.g., [

21,

22]), reference [

20] provides an approximate expression for the wavelet-filtered time series

. The reconstruction filter from the Nth order Gabor atoms becomes,

where

Re{ } denotes the real part of the coefficients and the reconstruction factor

is scale independent and constant for a wavelet function with fixed

. The reconstruction factor can be estimated by comparing against known test functions. Reference [

20] empirically computed a reconstruction coefficient of

with

, and [

23] provides other estimates. Numerical evaluation shows the product

, and the reconstruction approximation for the analytic (

Appendix F) quantum wavelet of arbitrary order is

It is important to note how substantially this expression differs from the inverse discrete Fourier transform, where

and

are the Fourier coefficients. Unlike the discrete Fourier transform, the standard wavelet reconstruction does not require multiplication by the mother wavelet. For the special case where the atoms are well matched to the signal of interest, consider the sparse set of coefficients corresponding to the complex time indexes

of the maximum energy, entropy, or SNR at each scale

where the maximum coefficient indexes can be computed separately for real and imaginary components. This has the form of a sum over the dominant Gabor atoms for each scale. Since one is only considering the maxima in a given record window, this is a very sparse representation consisting of the coefficient and the time offset corresponding to the peak energy or entropy estimate. Numerical evaluation shows that this last expression can be used to estimate the full analytic function representation as long as reconstruction uses the complex coefficients but only the real atom function since the time shifts in the Hilbert transform already include the

time shift.
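The convolution form of the discrete CWT and the empirical estimate of the reconstruction factor can be illustrated with a minimal NumPy sketch. It assumes a Gabor atom scaled to unit peak spectral response (not necessarily the amplitude scaling used in this paper), a quality factor and multiplier derived from a half-power overlap condition, and a hypothetical Gaussian-windowed 6.3 Hz test function. All function and variable names are illustrative.

```python
import numpy as np

def gabor_atom(fc, fs, multiplier):
    """Complex Gabor atom centered at fc (Hz), sampled at fs (Hz).

    multiplier = (angular center frequency) * (Gaussian std in seconds).
    Assumption: the atom is scaled so its spectrum peaks at unity.
    """
    sigma = multiplier / (2.0 * np.pi * fc)       # Gaussian std in seconds
    half = int(np.ceil(4.0 * sigma * fs))         # truncate at +/- 4 sigma
    t = np.arange(-half, half + 1) / fs
    envelope = np.exp(-0.5 * (t / sigma) ** 2)
    return envelope * np.exp(2j * np.pi * fc * t) / envelope.sum()

def cwt_by_convolution(x, centers, fs, multiplier):
    """Complex [n_scales, n_points] array: one band-passed waveform per scale."""
    return np.array([
        np.convolve(x, gabor_atom(fc, fs, multiplier), mode="same")
        for fc in centers
    ])

# Hypothetical setup: third-order bands around 6.3 Hz at a 200 Hz sample rate.
fs, f_ref, order = 200.0, 6.3, 3
q = 1.0 / (2 ** (1 / (2 * order)) - 2 ** (-1 / (2 * order)))  # assumed quality factor
multiplier = 2.0 * np.sqrt(np.log(2.0)) * q                      # assumed half-power overlap
centers = f_ref * 2.0 ** (np.arange(-6, 7) / order)

# Known test function: Gaussian-windowed cosine at the reference frequency.
t = (np.arange(4096) - 2048) / fs
x = np.cos(2 * np.pi * f_ref * t) * np.exp(-0.5 * (t / 0.3) ** 2)

w = cwt_by_convolution(x, centers, fs, multiplier)
raw = w.real.sum(axis=0)                      # sum of real parts over all scales
factor = np.dot(x, raw) / np.dot(raw, raw)    # least-squares reconstruction factor
x_hat = factor * raw                          # wavelet-filtered reconstruction
rel_err = np.linalg.norm(x - x_hat) / np.linalg.norm(x)
```

In this sketch a single scale-independent factor is fitted against the known test function, in the spirit of the empirical estimates cited above; the numerical value depends on the assumed wavelet normalization and band spacing.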

2.5. Wavelet Information and Entropy

One advantage of the constant Q wavelet representation is that it is possible to estimate the information content and detectability of a signal in a band by applying the same set of wavelet transforms to the signal and comparing them to the transform of a noise segment or model. Consider the definition for Shannon’s channel capacity [

11], with

where

Sg is the wavelet-transformed signal power and

Ns is the wavelet-transformed noise power in a band. Consider two possible estimates for the bandwidth

W (Shannon [

11] left some room for interpretation). The first estimate approximates

W by the ½ power point bandwidth

The second estimates

W using the Gabor box standard deviation for the angular frequency

so that

Taking the average of

and

provides a compromise between the two possible estimates, and returns a tidy factor of ~0.5

The effective

and therefore the “detectability” of a bandwidth-limited compressed pulse [

12] can be represented by the product of the Gabor time-bandwidth product (

Appendix C) and the signal to noise ratio

Since the time-bandwidth product for the Gaussian wavelet is constant

and the uncertainty of its Gabor box is at the minimum, the likelihood of the detection of a signal of interest in a given band

is only proportional to its SNR.

Shannon’s definition of the channel capacity was intended to represent the highest theoretical transfer rate of information through an analog line. Since SNR is given in power, which is typically the square of the signal amplitude, an unscaled binary log is off by a factor of two from the original data in bits. To reconcile this definition with the original collection of a time series signal in floating point bits (fbits), I define the binary SNR to match the signal rms amplitude as well as Shannon’s units for the information rate per band

of the quantum compressed pulse as

The increase in information delivery rate with increasing frequency is intuitive as more cycles are transferred per second. As the order number increases, the bandwidth narrows and so the potential information rate decreases. Less obvious is the decrease in high-frequency information with increasing distance in a lossy transmission channel. Assuming the noise power remains unchanged, the decrease in SNR with increasing scaled distance

from the source origin on a lossy acoustic channel can be represented as

where

for spherical geometric spreading in free space and

for cylindrical spreading in a waveguide. The binary SNR can be represented as

The term in parentheses shows the expected reduction of one bit per doubling of distance for spherical spreading

. The last term suggests the frequency dependence of the channel capacity in a lossy acoustic medium may have the general form

so that with increasing range the optimal information transmission frequency shifts to lower frequencies. One may readily extend the binary SNR definition to the measure of relative power

and the −3dB half-power point becomes the −1/2 bit power point.
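The bit bookkeeping described above can be checked with a short numerical sketch. It assumes the binary SNR is half the base 2 logarithm of a power ratio (so that bits track rms amplitude), and that power falls as the inverse square of distance for spherical spreading and as the inverse of distance for cylindrical spreading; the function name is illustrative.

```python
import numpy as np

def binary_ratio_bits(power_ratio):
    """Half the binary log of a power ratio, so that bits follow rms amplitude."""
    return 0.5 * np.log2(power_ratio)

# The half-power (-3 dB) point expressed in bits.
half_power_bits = binary_ratio_bits(0.5)

# Doubling the distance: power scales as r**-2 (spherical) or r**-1 (cylindrical).
spherical_bits = binary_ratio_bits(2.0 ** -2)
cylindrical_bits = binary_ratio_bits(2.0 ** -1)

# The same half-power ratio in decibels, for comparison.
half_power_db = 10.0 * np.log10(0.5)
```

Under these assumptions the half-power point sits at −1/2 bit, spherical spreading costs one bit per doubling of distance, and cylindrical spreading costs half a bit.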

The entropy of a signal of interest can be estimated by the wavelet coefficients. A practical approach is described in [

24]. The information content of each scale

n at the time step

m can be estimated from the wavelet energy. First estimate the complex wavelet coefficient energy from

The total energy in a given record can be estimated from

The complex probability of

in the record is

where

The log energy entropy (lee) per coefficient can be defined by the binary logarithm

where it should be noted that the factor of two scaling coefficient does not alter the relative weight of each coefficient. The Shannon entropy (se) per CWT coefficient is defined as

with corresponding complex versions that separate the real and imaginary components. These entropies can be readily evaluated to construct noise models from the lowest entropy components. If a stable noise model can be constructed from the record or from prior knowledge of the environment and transmission channel, SNR estimates can be computed and the process repeated to evaluate the dimensionless binary log of the SNR

and the product of the ratio and the binary ratio (

RbR), an entropy-like nondimensional metric of the SNR that can be readily evaluated to identify and extract the wavelet coefficients that would be most representative of a signal of interest,
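The entropy estimates of this section can be sketched as follows. This is a minimal illustration that assumes the probability is the coefficient energy divided by the total energy in the record, that the log energy entropy is the binary logarithm of that probability (any constant scaling factor is omitted), and that the RbR is the SNR multiplied by its unscaled binary logarithm. The function names are hypothetical.

```python
import numpy as np

TINY = np.finfo(float).tiny   # floor that keeps log2 finite for zero energy

def wavelet_entropies(w):
    """Per-coefficient probability, log energy entropy, and Shannon entropy."""
    energy = np.abs(w) ** 2                     # coefficient energy
    p = energy / energy.sum()                   # energy probability in the record
    log_energy = np.log2(np.maximum(p, TINY))   # log energy entropy (unscaled)
    shannon = -p * log_energy                   # Shannon entropy per coefficient
    return p, log_energy, shannon

def ratio_binary_ratio(snr):
    """SNR times its binary log: zero at SNR = 1, positive for SNR > 1."""
    snr = np.asarray(snr, dtype=float)
    return snr * np.log2(np.maximum(snr, TINY))

# Hypothetical check: eight equal-energy coefficients carry three bits in total.
w_flat = np.ones((2, 4), dtype=complex)
p, lee, se = wavelet_entropies(w_flat)
total_bits = se.sum()
rbr = ratio_binary_ratio([1.0, 2.0])
```

Applying a factor of 1/2 to the logarithm, as in the binary SNR definition, would rescale the RbR without changing which coefficients rank highest.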

3. Discussion: Explosion Signature

The methods presented in this paper are foundational: the intention is to use the Gabor atoms as fundamental building blocks with minimal time-frequency uncertainty and high information density. These methods are illustrated and discussed in the context of a blast pressure pulse. Consider a normalized transient wave function characteristic of an explosion. Suppose one wanted to construct a sparse wavelet representation of a blast pulse with peak energy at 6.3 Hz, corresponding to the detonation of one metric ton of TNT observed at 1 km. It is known [

7] that at some distance from the source this center frequency may drop by an octave (factor of two in frequency) or more, as well as become stretched out (dispersed) in time due to propagation effects. A theoretical source pressure function for the detonation of high explosives was developed in some detail in [

7] with one kiloton as the case study, and is used here to construct a representative synthetic waveform for a one (metric) ton detonation. Define

as the pseudo-period of a blast pulse corresponding to the peak spectral energy at the frequency

and angular frequency

, where

is the time duration of the initial positive phase traditionally used in blast physics. The nondimensionalized time scale is

The form of the amplitude-normalized source pressure function for an explosive blast [

7] can be represented as

This pulse has an associated analytic function

discussed in

Appendix F. Since the theoretical Hilbert transform has some unresolved issues, the numerical Hilbert transform [

25] is used for comparison.

Note that the amplitude is not used in this exercise because in some cyber-physical systems, such as smartphones, the amplitude response of on-board sensors may not be known. However, sensor dynamic range is usually specified and available (e.g., int16, float32) and can be used for signal scaling relative to the full range or the noise.

The normalized pulse has zero mean (conservation of momentum) and its theoretical variance is

The complex Fourier transform

of this pulse is

where

and the peak in the spectrum is at

. Note there are at least two pseudoperiods of importance evident in the main blast pulse: the main spectral pseudoperiod

and the positive phase pseudoperiod of

. Near the source the positive phase pseudoperiod will dominate as it has the highest energy and bandwidth. With increasing distance and high-frequency attenuation the main pseudoperiod becomes more prominent and may also be downshifted in frequency [

7]. However, additional scales can be introduced by reflection and refraction in the transmission channel that can induce phase shifts often modeled with Hilbert transforms (

Appendix F).

The power spectra of real digital signals are usually expressed using only the positive frequencies up to the Nyquist frequency, where the unilateral spectral density

is defined as

Since the target signature corresponds to a one tonne (1000 kg) detonation, the analysis concentrates on a target frequency of 6.3 Hz [

7]. The general procedure for constructing target-tuned fractional binary bands of order N is to define a set of base 2 scales around the center or reference frequency

The upper limit is set by the Nyquist frequency, which means that the center frequency and its band edges should be below the Nyquist and the ½ power point of the anti-aliasing filter. A conservative estimate is

The lower limit is set by the largest data window duration

so that the center frequencies are defined by

which will be sufficient information to compute the Morlet scale

. If one must convert to a sorted, monotonically increasing pseudoperiod, let

and restart the counter for the period

This re-indexing is much easier to do numerically than to describe algorithmically. For the purposes of illustration and demonstration, let us choose a signal frequency that exactly matches the target frequency; if this example fails there is no purpose in continuing. A sample rate of 200 Hz will be more than sufficient for this example. Gaussian noise with a standard deviation that is one bit below the signal standard deviation (factor of 1/2) is superposed, and then anti-alias filtered for all frequencies below Nyquist. The analytic function is computed numerically from the real pulse for later comparisons with the wavelet-reconstructed signal.
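The band construction and re-indexing steps can be sketched numerically. The sketch assumes center frequencies of the form f_ref · 2^(n/N); the Nyquist safety fraction and the minimum number of cycles per window are hypothetical parameters standing in for the limits described above, not values taken from this paper.

```python
import numpy as np

def binary_band_centers(f_ref, order, fs, window_s,
                        nyquist_fraction=0.8, min_cycles=4.0):
    """Band indexes n and center frequencies f_ref * 2**(n / order).

    nyquist_fraction and min_cycles are hypothetical limits for illustration.
    """
    edge = 2.0 ** (1.0 / (2.0 * order))             # center-to-band-edge ratio
    f_max = nyquist_fraction * 0.5 * fs / edge      # upper band edge stays below the limit
    f_min = (min_cycles / window_s) * edge          # lower band edge fits the window
    n_hi = int(np.floor(order * np.log2(f_max / f_ref)))
    n_lo = int(np.ceil(order * np.log2(f_min / f_ref)))
    n = np.arange(n_lo, n_hi + 1)
    return n, f_ref * 2.0 ** (n / order)

fs = 200.0
n, centers = binary_band_centers(f_ref=6.3, order=3, fs=fs, window_s=10.0)

# Re-index as a sorted, monotonically increasing pseudoperiod.
periods = 1.0 / centers[::-1]
```

Because the indexes are built around the reference frequency, n = 0 always lands exactly on the target frequency regardless of the sample rate or window length.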

The CWT scalogram is computed using the complex nondimensional mother quantum wavelet of order

N. The complex Gabor-Morlet wavelet in SciPy [

25] is represented by the function scipy.signal.morlet2, and has the desired canonical form,

The only free variables are the order

N, the smallest time scale

, and the sample rate

. Although the nondimensionalized scale will change with the sample rate, the final results can always be returned to the physical domain frequencies

. The nominal number of points per window can be estimated from

. The complex wavelet coefficients can be readily computed from the real part of the discrete version of the blast source-time function

After minor conditioning, the SciPy CWT function [

25] promptly invokes the convolution function. This is computationally expensive: we have turned a time series with Mp points into a complex 2[Mp x Nbands] array of band-passed waveforms. The terms wavelets and wavelet filter banks are often used interchangeably in the context of the CWT.

The wavelet-filtered reconstructed complex analytical signal can be approximated from

where the

indexes indicate that one may choose selected scales for the reconstruction over selected time indexes

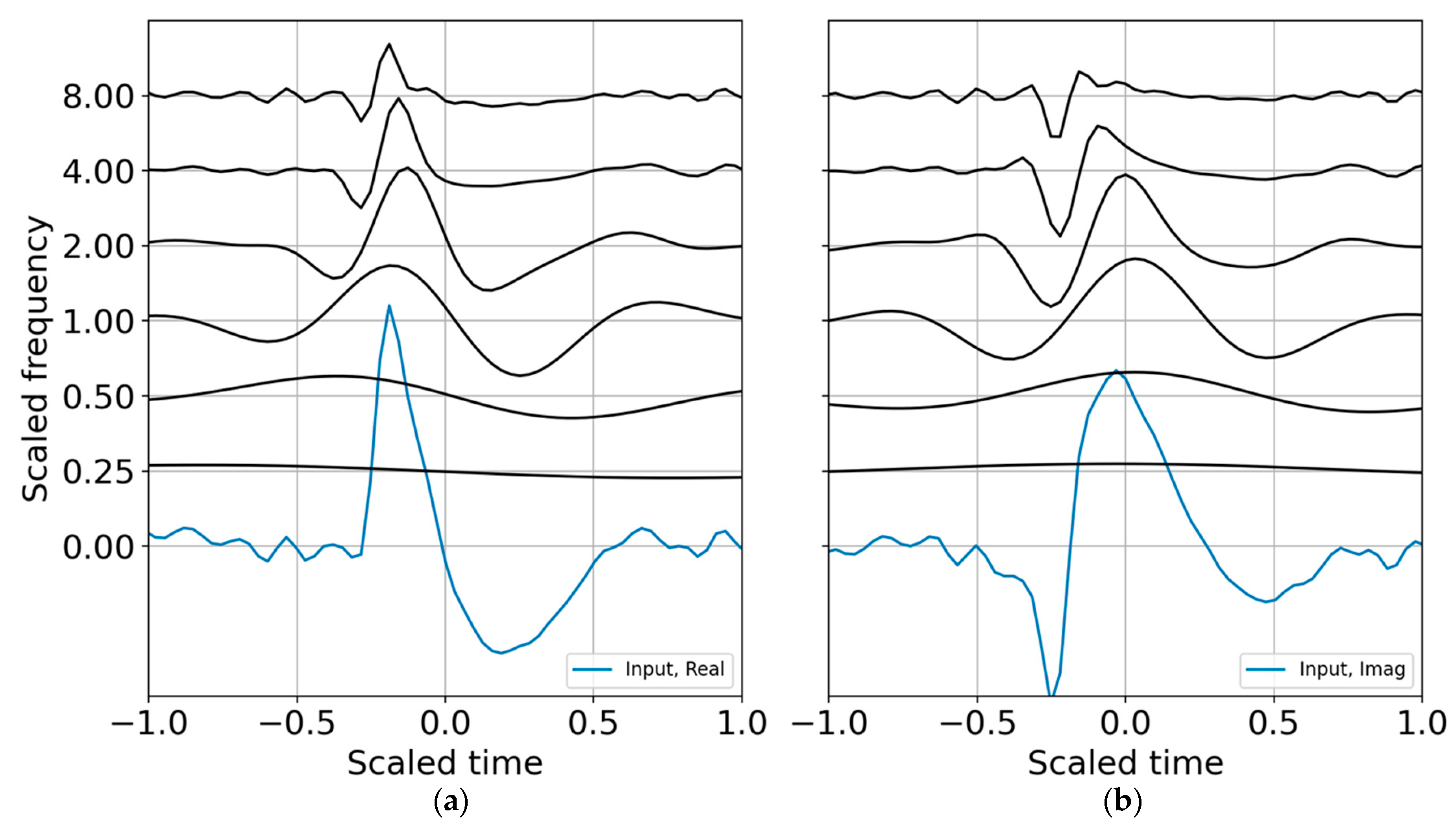

corresponding to the wavelet coefficients that best represent a signal of interest during the time interval of relevance. The wavelet CWT coefficients for the binary band decomposition are shown in

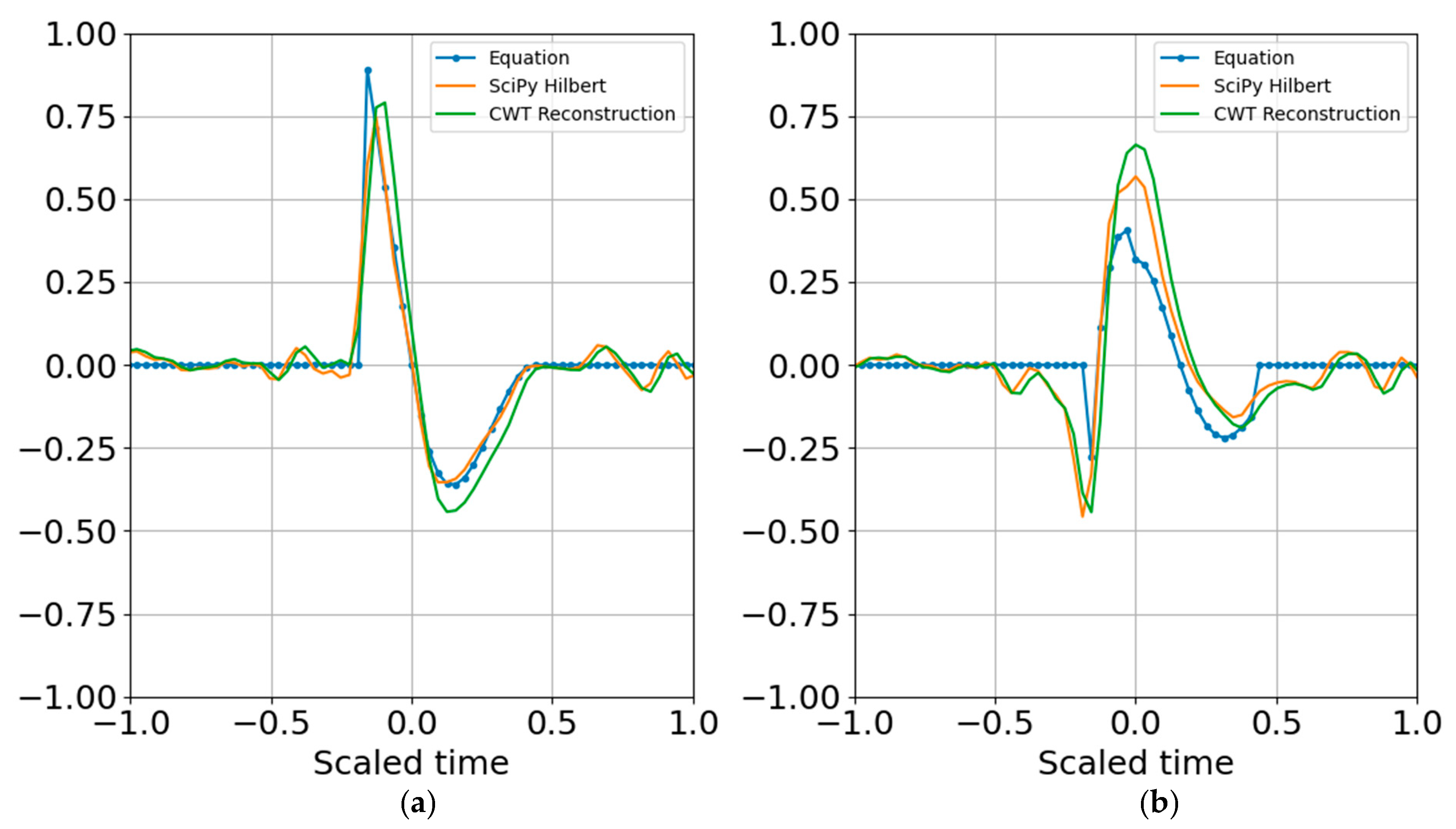

Figure 1; the CWT coefficients are scaled by the reconstruction coefficients. A comparison of the input synthetic analytic record and the analytic signal reconstruction (summed over all scales) for the octave band representation is shown in

Figure 2.

The reconstruction process recovers the original dimensionality of the time series but returns its Hilbert transform, so the total dimensionality may be doubled (2Mp sample points). If only the original real signal is desired, then the dimensionality is unchanged.

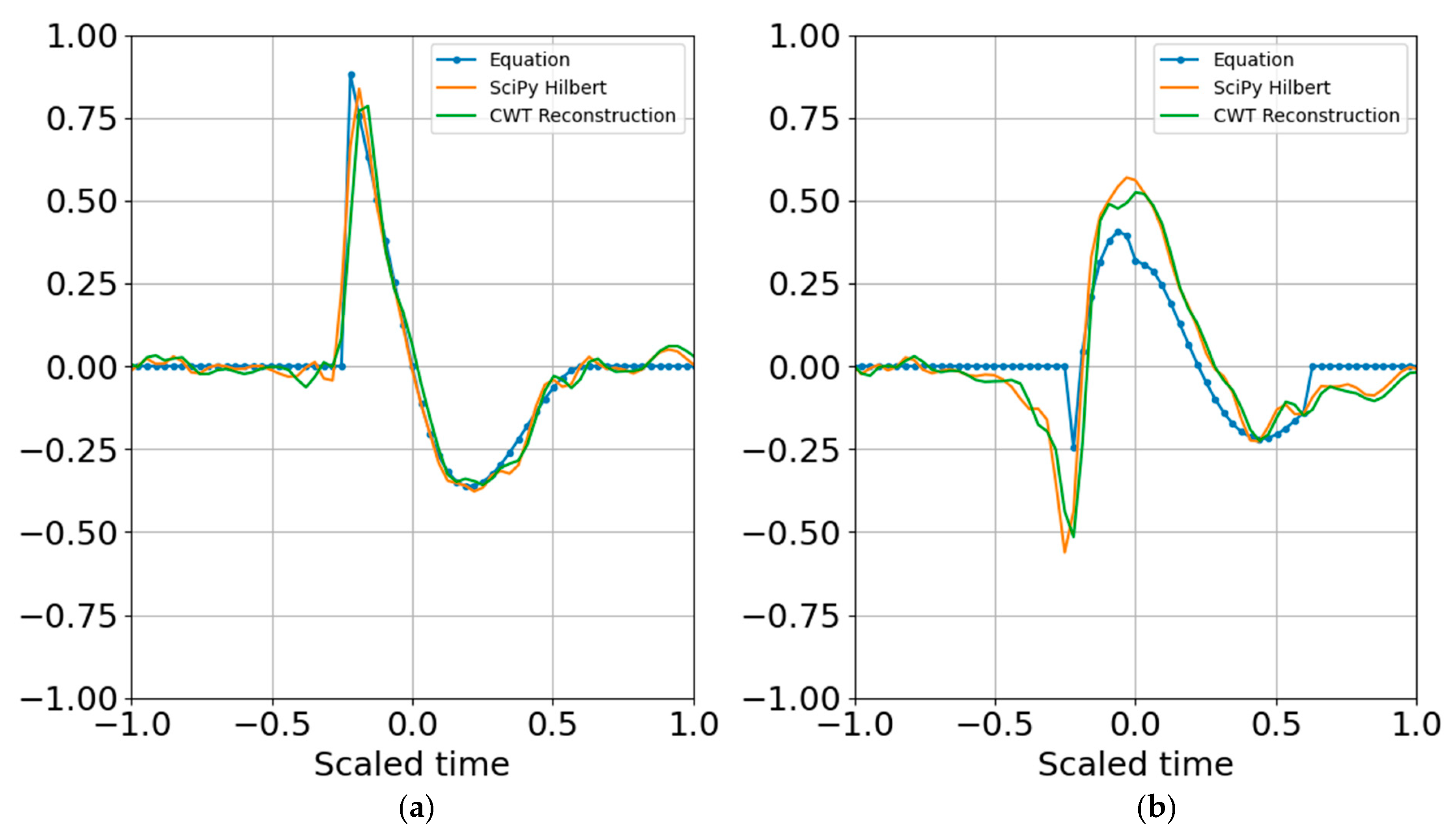

The next steps estimate entropy and SNR, and consider sparse signal representation. Although binary bands are adequate for characterizing this signal, and are routinely used in the discrete wavelet transform, I take advantage of the flexibility offered by the CWT and use third order bands (

N = 3) for the examples that follow. One of the benefits of third order bands is that the admissibility condition is better met and scales are recursive in powers of 2 and 10 ([

1]). As presented in

Appendix D, third order bands will contain over 99% of the Gabor box variance within an octave and within 80% of the full window

, reducing spectral leakage. If, in addition, one wants a factor of two accuracy in explosive yield estimates, 1/3 octave resolution is a minimum requirement. A third order band wavelet reconstruction is shown in

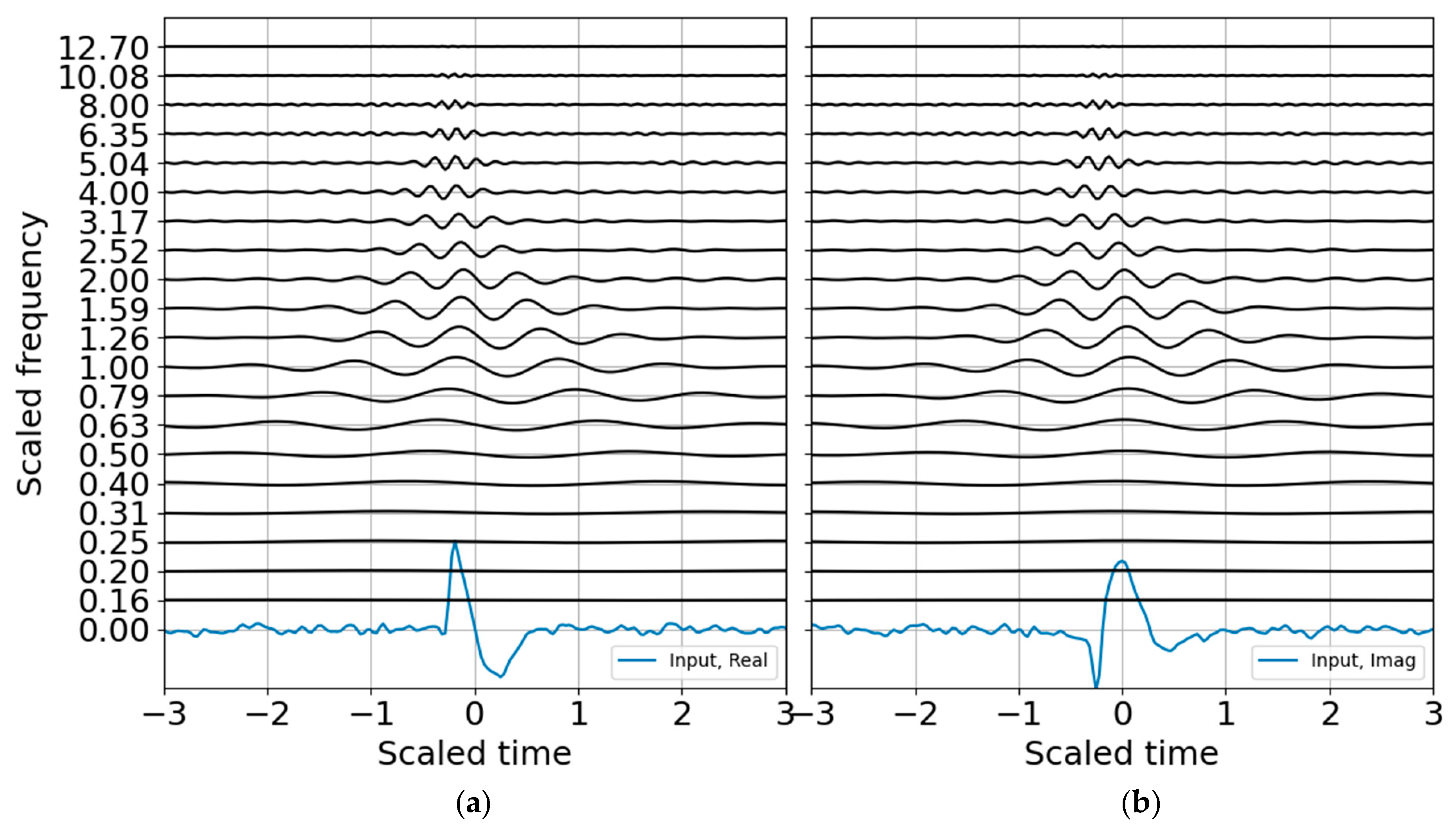

Figure 3 and corresponds to the CWT decomposition presented in

Figure 4. The wire mesh representation is the equivalent of the scalograms usually represented as color mesh plots, and illustrates the simplicity of the CWT decomposition. The primary difference between

Figure 4 and

Figure 5 is that the first scales the raw CWT coefficients by the reconstruction scaling, whereas

Figure 5 shows the raw coefficients.

The energy probability distribution is constructed from the wavelet coefficients to estimate entropy, as discussed in the previous section. The log energy entropy looks like any other scalogram and does not add much value, but the Shannon entropy plot is interesting and well scaled (

Figure 6). The peak entropy is at the scaled blast center frequency of unity, as expected.

Next a noise model is constructed to build the SNR and to establish criteria for standardized and reproducible sparse signal representation. Many are the ways to characterize noise, and few of them accurately characterize non-stationary noise over brief observation windows. An incorrect noise model can penalize the signal passband and degrade the signal SNR. For the white noise model with variance that is one bit below the signal variance, the CWT of the noise (

Figure 7) shows how the high-frequency oscillations are adequately sampled whereas the low-frequency oscillations are undersampled. This leads to instability if the noise is only estimated over a brief observation record. In principle, one may build a noise model over a substantial time period to improve statistical significance under the assumption that the noise is statistically stationary. This can be a tenuous assumption in some circumstances. Noise studies are beyond the scope of this paper; the noise spectrum is flattened by using the mean of the noise coefficients to estimate the band-averaged noise level.
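One possible reading of the band-averaged noise level is the mean coefficient power of the noise CWT along the time axis of each band. The sketch below adopts that interpretation as an assumption and uses synthetic complex coefficients in place of real CWT output.

```python
import numpy as np

def band_averaged_snr(w_signal, w_noise):
    """Power SNR per coefficient, using one time-averaged noise power per band."""
    noise_power = np.mean(np.abs(w_noise) ** 2, axis=1, keepdims=True)
    return np.abs(w_signal) ** 2 / noise_power

# Synthetic stand-ins for CWT matrices with shape [n_scales, n_points].
rng = np.random.default_rng(0)
w_noise = rng.standard_normal((5, 1000)) + 1j * rng.standard_normal((5, 1000))
w_signal = 2.0 * w_noise                     # one bit above the noise in amplitude

snr = band_averaged_snr(w_signal, w_noise)
snr_bits = 0.5 * np.log2(snr.mean(axis=1))   # binary SNR of the band-mean ratio
```

Averaging over a longer noise record would stabilize the low-frequency bands, provided the noise can be treated as approximately stationary.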

As anticipated, the binary SNR appears much like the log energy entropy since they are both scaled by a constant value, with the former over the band-averaged noise and the latter over the total energy. The SNR RbR, as described in the previous section, should also look very much like the entropy, except it would be zero for SNR of unity and positive for SNR > 1. The SNR RbR is shown in

Figure 8 and indeed matches the Shannon entropy plot. This is encouraging; the entropy plot requires constructing an energy distribution that scales with the record, whereas the SNR requires constructing a noise model that is mostly independent of the record and should have more stability—as long as the ambient noise is approximately stationary or can at least be adequately modeled. If one is curating data for machine learning training, the entropy would be a good metric for picking and annotating possible signals as well as for refining noise models. If one is trying to trigger or detect signals operationally, the SNR may be a preferable metric since it makes no assumptions about the total energy in a record and only scales relative to a (preferably) stable noise representation.

One may use the CWT coefficient energy, the Shannon entropy, or the SNR RbR to test the feasibility of the sparse Gabor atom superposition. Suppose we use any of these Np scales x Mpoint time matrices to identify the peak contributions over the record, and define the complex time indexes as

. The quantum wavelet superposition would be expressed as

where the dimensionality of the representation is reduced to the complex coefficients and time indexes. Since the wavelet function can be reproduced for any time index, the time array need not be stored. In other words, if there are 20 scales, there will be 20 real coefficients and time offsets and 20 imaginary coefficients and time offsets, with total dimensionality of 4 × 20 = 80 parameters. If there is sufficient SNR and the signal is band limited it is possible to further reduce dimensionality by removing any coefficients below a specified threshold that may be fitting to noise (e.g., overfitting).
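The selection of the dominant atoms and the resulting parameter count can be sketched as follows. The sketch uses the squared real and imaginary parts of the coefficients as the selection metric, which is an assumption standing in for the energy, entropy, or SNR RbR matrices, and it prunes scales by peak energy; the helper names are hypothetical.

```python
import numpy as np

def top_atoms(w):
    """Peak real and imaginary coefficient, with its time index, for each scale."""
    rows = np.arange(w.shape[0])
    idx_re = np.argmax(w.real ** 2, axis=1)   # time index of the real-part peak
    idx_im = np.argmax(w.imag ** 2, axis=1)   # time index of the imaginary-part peak
    return {
        "coef_re": w.real[rows, idx_re], "idx_re": idx_re,
        "coef_im": w.imag[rows, idx_im], "idx_im": idx_im,
    }

def prune_scales(atoms, metric, threshold):
    """Keep only the scales whose metric is at or above the threshold."""
    keep = metric >= threshold
    return {name: values[keep] for name, values in atoms.items()}

# Synthetic stand-in for a CWT matrix with 20 scales.
rng = np.random.default_rng(0)
w = rng.standard_normal((20, 512)) + 1j * rng.standard_normal((20, 512))

atoms = top_atoms(w)
n_params = sum(values.size for values in atoms.values())

# Retain the 12 scales with the largest peak energy.
peak_energy = atoms["coef_re"] ** 2 + atoms["coef_im"] ** 2
sparse = prune_scales(atoms, peak_energy, np.sort(peak_energy)[-12])
n_sparse_params = sum(values.size for values in sparse.values())
```

For 20 scales this stores 80 parameters, and pruning to 12 scales leaves 48, without retaining the time array itself.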

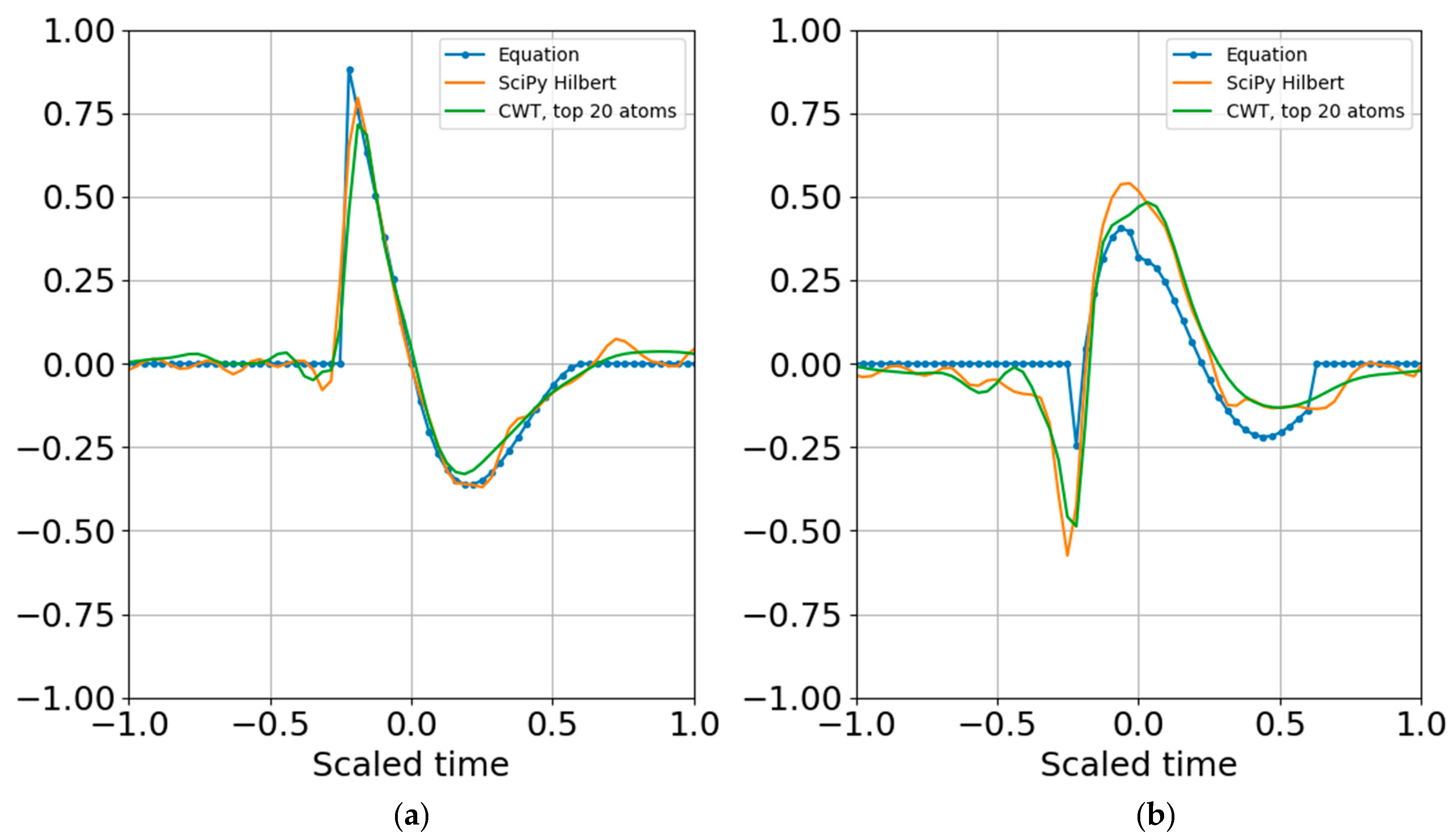

Figure 9 shows the result of reconstruction from the superposition of all the top atoms of the 20 scales, and

Figure 10 shows reconstruction from a sparser set of 12 scales with the highest SNR RbR. Similar results were obtained using the Shannon entropy. The Gaussian noise standard deviation for these two runs was one bit below the signal standard deviation.

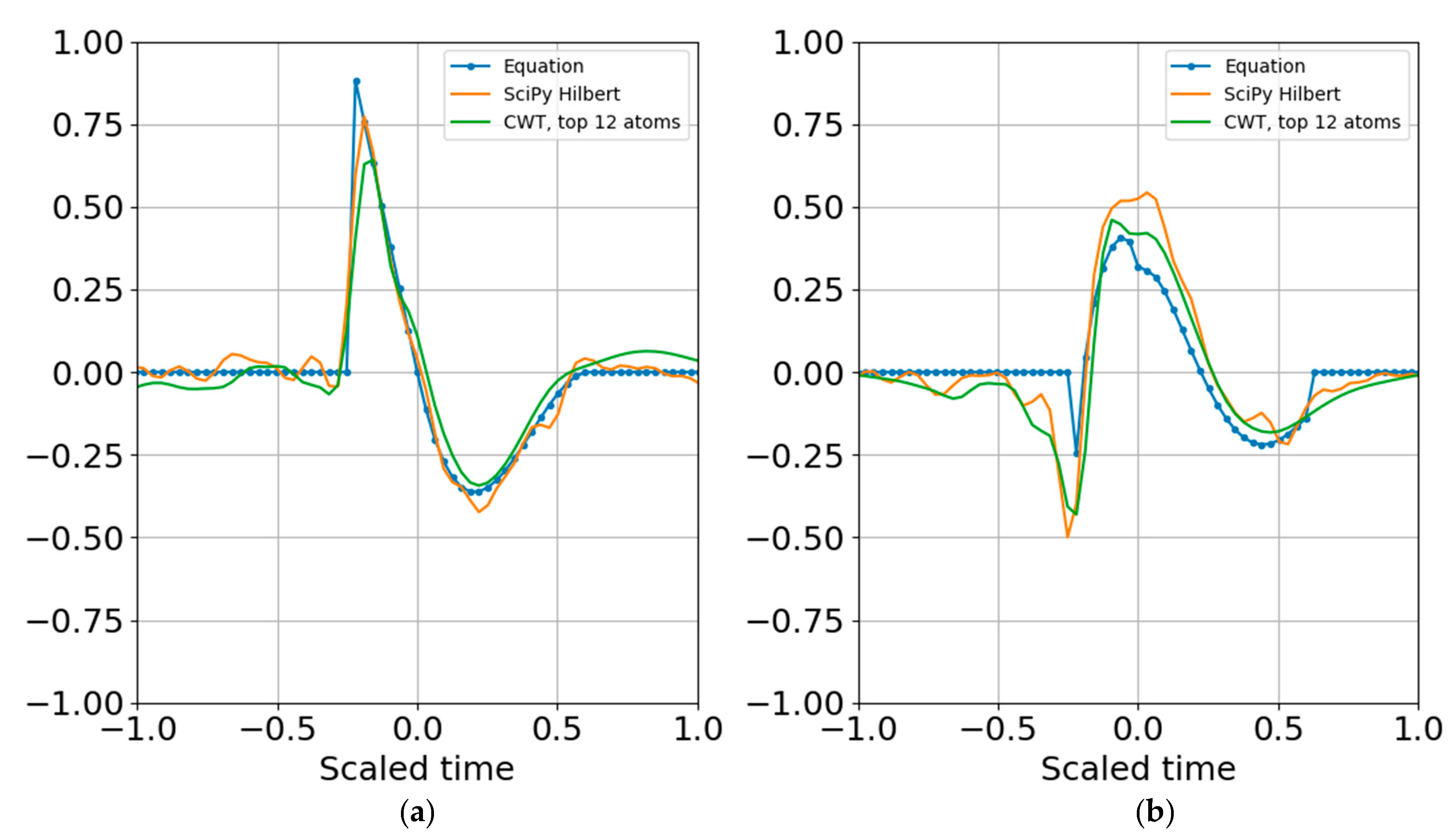

Increasing the noise standard deviation by a factor of two (one bit) still permits reconstruction from superposition (

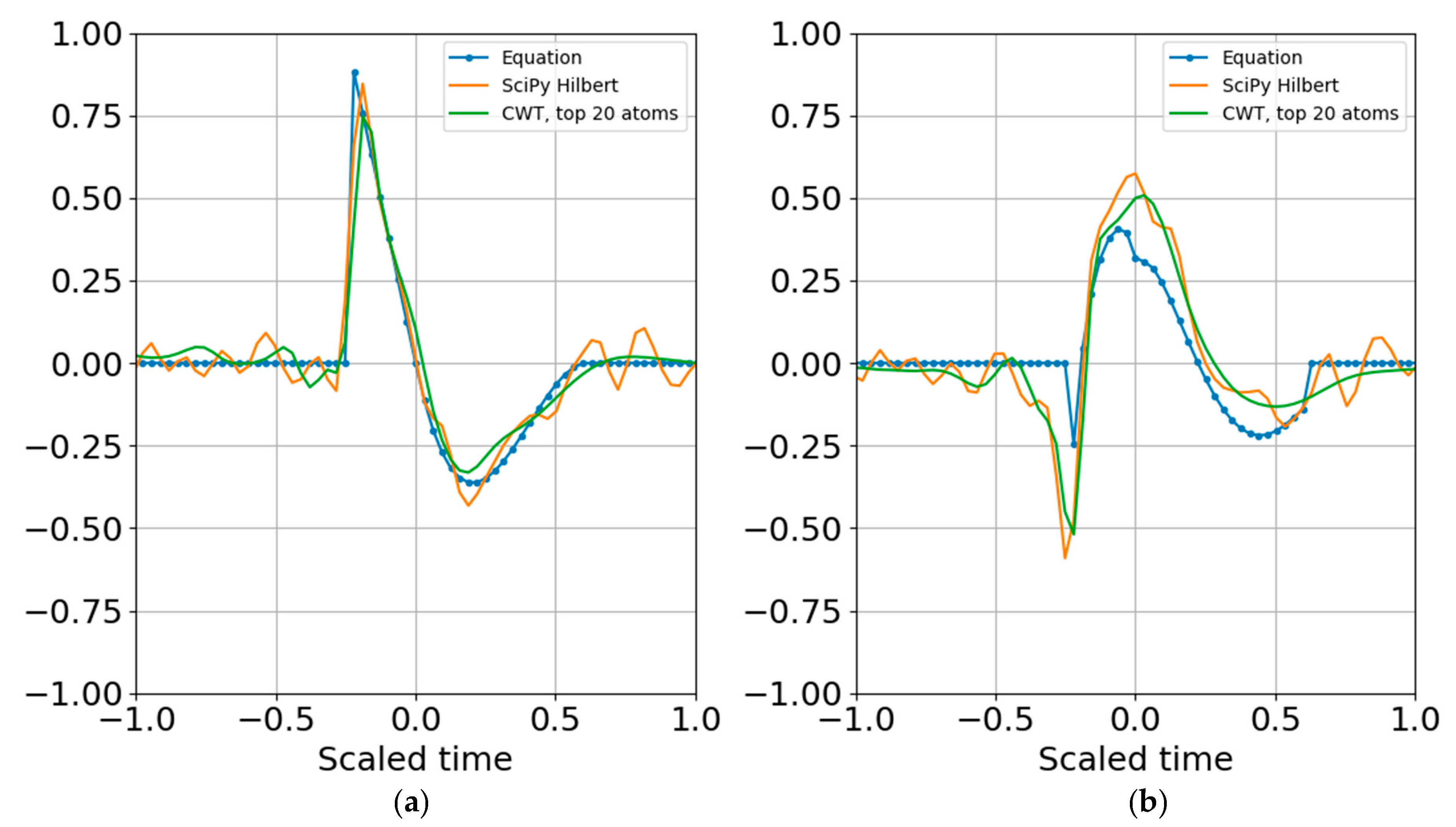

Figure 11), and increasing by another bit also allowed atomic reconstruction (

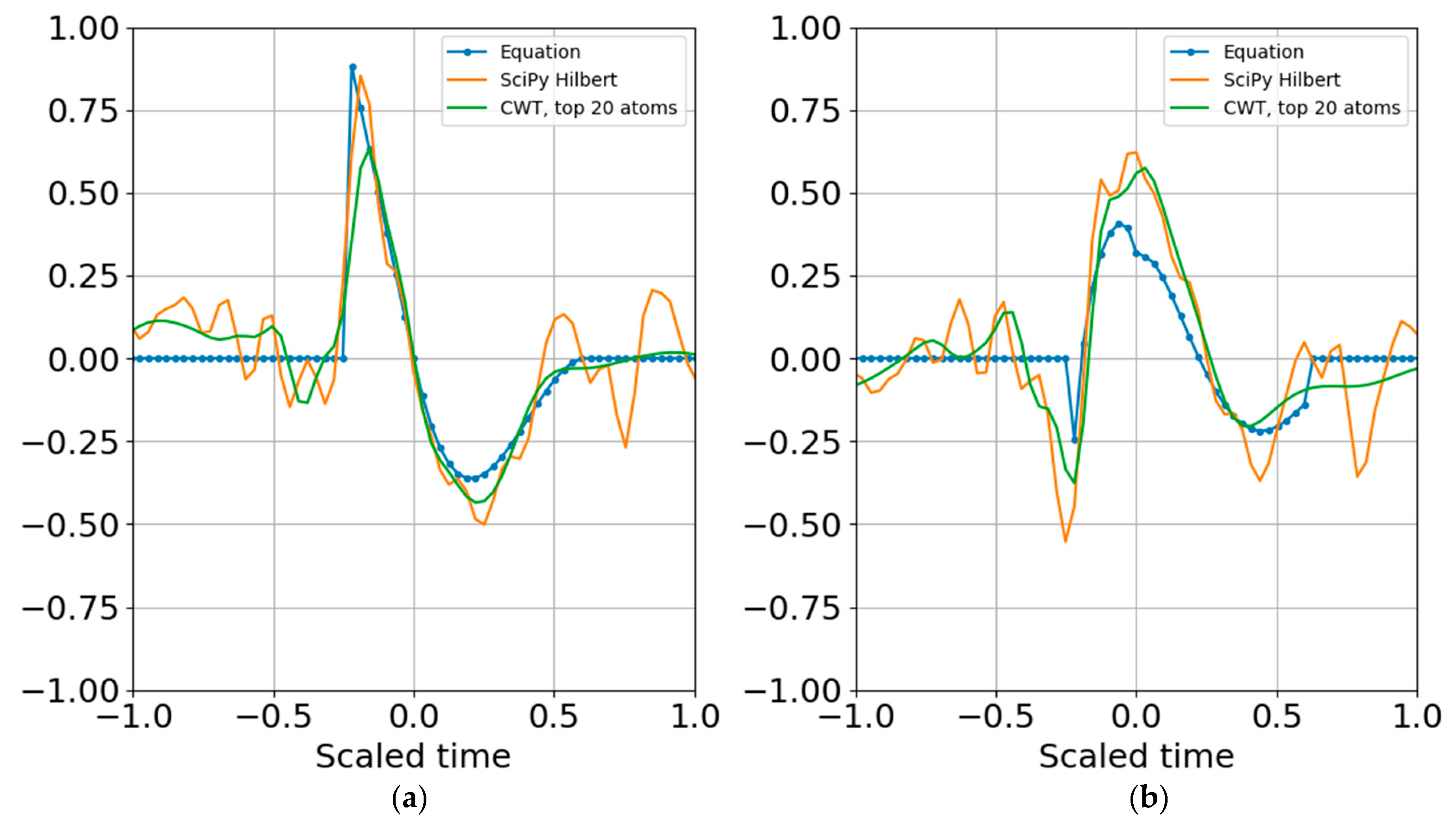

Figure 12).

There is no end to the number of sensitivity studies that can be performed; in addition to other SNR tests, shifting the peak blast frequency away from the nominal target frequency still returned a stable reconstruction. Increasing the order beyond N = 6 only worsened the fit to the target waveform, increasing dimensionality and computational cost while decreasing reconstruction fidelity. This is expected from using a wavelet that does not match the target signature.