Taylor’s Law in Innovation Processes

Abstract

1. Introduction

2. The Urn Model with Triggering

- if the color of the extracted ball is a new one (it appears for the first time in the sequence of extracted colors, i.e., it is a realization of a novelty), then we add balls of the same color plus distinct balls of different new colors, which were not yet present in the urn. Note that here we use the word “new” in two different senses: on the one hand, we refer to events that occur for the first time; on the other hand, to new colors that enter the space of events;

- if the color of the extracted ball has already appeared in the sequence, we add balls of the same color.
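The two rules above can be simulated directly. The following is a minimal Python sketch, not the authors’ code. The parameter symbols did not survive extraction here, so we borrow the names rho (copies added when an already-seen color is drawn) and nu (a novelty triggers nu + 1 new colors) from the original urn model with triggering [12]. The name rho_new (copies added right after a novelty) is hypothetical and defaults to rho. The urn is stored as a list with one entry per ball, so a uniform draw is simply a uniform index.

```python
import random


def simulate_umt(t_max, rho, nu, rho_new=None, n0=1, seed=None):
    """Sketch of the urn model with triggering.

    rho     -- copies added when an already-seen color is drawn
    rho_new -- copies added when a never-seen color is drawn (hypothetical
               name; defaults to rho, as in the original model)
    nu      -- a novelty triggers nu + 1 brand-new colors
    Returns (distinct, urn): distinct[t-1] is the number of distinct colors
    after t draws, and urn is the final list of balls.
    """
    rng = random.Random(seed)
    if rho_new is None:
        rho_new = rho
    urn = list(range(n0))      # n0 balls, all of distinct colors
    next_color = n0            # label of the next color never present in the urn
    seen = set()               # colors already recorded in the sequence
    distinct = []
    for _ in range(t_max):
        # Drawing with replacement: the drawn ball simply stays in the list.
        color = urn[rng.randrange(len(urn))]
        if color not in seen:  # novelty: first appearance in the sequence
            seen.add(color)
            urn.extend([color] * rho_new)
            urn.extend(range(next_color, next_color + nu + 1))
            next_color += nu + 1
        else:                  # repetition of an already-seen color
            urn.extend([color] * rho)
        distinct.append(len(seen))
    return distinct, urn


if __name__ == "__main__":
    d, urn = simulate_umt(10_000, rho=4, nu=2, seed=1)
    print(d[-1], len(urn))
```

Storing every ball keeps the sketch close to the verbal rules. Memory grows linearly with t, which is fine for exploratory runs but not for very long ones.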

Values of the Model Parameters

3. Triangular Urn Schemes and Innovation Rate

- if the color of the extracted ball is black, then we replace the extracted ball with a white ball and we add white balls plus black balls;

- if the color of the extracted ball is white, we return the extracted ball to the urn together with additional white balls.
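The number of distinct colors depends only on whether each drawn ball is black (a color not yet in the sequence) or white (a color already seen). The triangular scheme can therefore be simulated by tracking just two counts. In this minimal sketch, rho, nu and rho_new are our own names for the missing symbols: rho and nu follow [12], and rho_new is hypothetical. Counts are kept as numbers, so non-integer values act as weights.

```python
import random


def simulate_black_white(t_max, rho, nu, rho_new=None, n0=1, seed=None):
    """Two-color (triangular) reading of the urn with triggering.

    black -- balls whose color has not yet appeared in the sequence
    white -- balls whose color has already appeared
    Returns (history, black, white), where history[t-1] is the number of
    novelties (black draws) after t draws.
    """
    rng = random.Random(seed)
    if rho_new is None:
        rho_new = rho
    black, white, novelties = n0, 0, 0
    history = []
    for _ in range(t_max):
        if rng.random() * (black + white) < black:
            # Black drawn: it is replaced by a white ball, rho_new white and
            # nu + 1 black balls are added, so black changes by -1 + (nu + 1).
            black += nu
            white += 1 + rho_new
            novelties += 1
        else:
            # White drawn: returned together with rho more white balls.
            white += rho
        history.append(novelties)
    return history, black, white
```

The final black count always equals n0 + nu·D_t, which is a convenient sanity check for any implementation of this scheme.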

- (Case ) […], where D is a suitable random variable with finite moments. In particular, when , the random variable D has probability density function given by […], where c is a normalizing constant and denotes the probability density function of the Mittag-Leffler distribution with parameter . Hence, for , we have […]

- (Case ) […] and […], where Z is a suitable random variable.

- (Case ) […], and the second-order behaviour depends on the value of . Precisely, denoting by the normal distribution with zero mean and variance , we have:

  - for , […];

  - for , […];

  - for , […], where V is a suitable random variable.
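The regimes above can be explored numerically by fitting the slope of log D_t against log t on a single run. This is a rough sketch under our own naming: rho, nu and rho_new are as in a two-count black/white urn, with rho_new = rho by default. In the original urn model with triggering [12], the number of distinct colors is expected to grow like t^(nu/rho) when nu < rho and linearly when nu > rho. The fitted slope should therefore land near nu/rho or near 1, respectively, up to finite-size effects.

```python
import math
import random


def novelty_counts(t_max, rho, nu, rho_new=None, n0=1, seed=None):
    """Number of distinct colors D_t, t = 1..t_max, from the black/white urn."""
    rng = random.Random(seed)
    rho_new = rho if rho_new is None else rho_new
    black, white, d = n0, 0, 0
    out = []
    for _ in range(t_max):
        if rng.random() * (black + white) < black:  # novelty
            black += nu
            white += 1 + rho_new
            d += 1
        else:
            white += rho
        out.append(d)
    return out


def growth_exponent(d, t_min):
    """Least-squares slope of log D_t against log t over t_min <= t <= len(d)."""
    # Log-spaced sample times, so each decade carries a similar weight.
    ts = sorted({int(t_min * (len(d) / t_min) ** (k / 49)) for k in range(50)})
    xs = [math.log(t) for t in ts]
    ys = [math.log(d[t - 1]) for t in ts]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    den = sum((x - mx) ** 2 for x in xs)
    return num / den


if __name__ == "__main__":
    print(growth_exponent(novelty_counts(20_000, rho=4, nu=2, seed=7), 1000))
    print(growth_exponent(novelty_counts(20_000, rho=2, nu=8, seed=7), 1000))
```

Finite-time slopes are biased by transients. Read them as rough diagnostics, not as estimates of the limits above.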

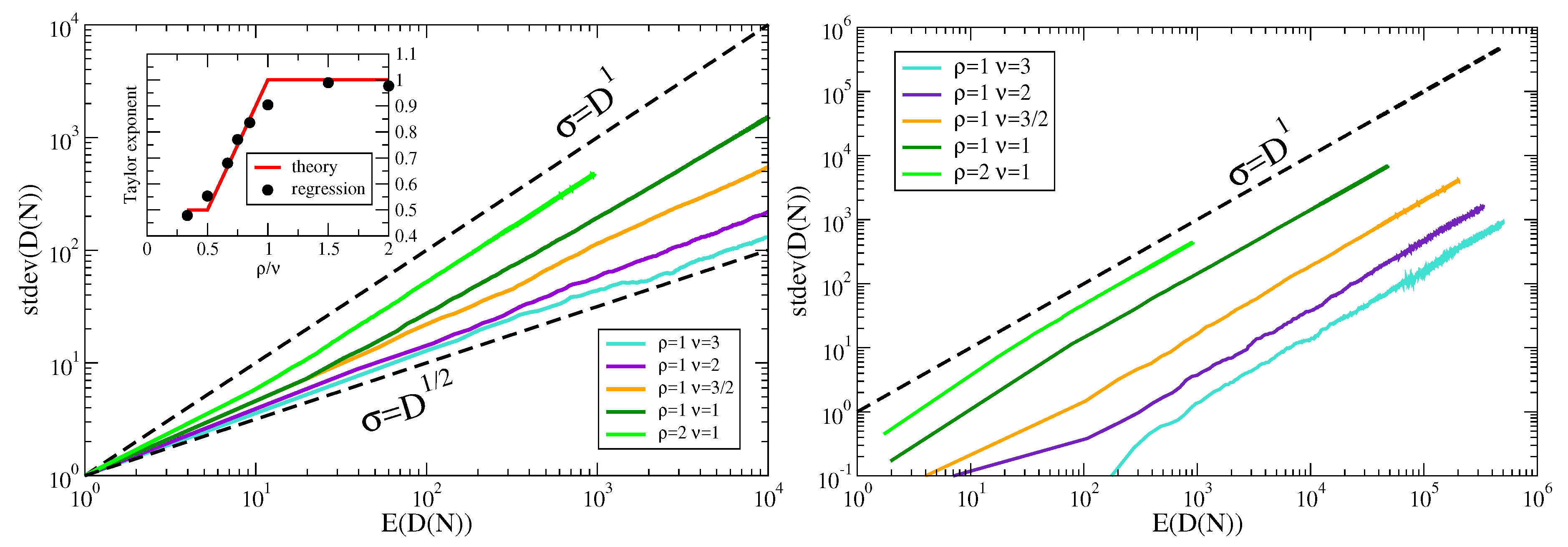

4. Taylor’s Law

- (Case ) From the almost sure convergence (6), we guess , where the constant of proportionality is .

- (Case ) Since the limit in (7) is a constant, we cannot exploit the almost sure convergence (7) to obtain a Taylor’s law as done for the previous case . However, from the convergence in distribution (8), we can guess […] and […]. Hence, combining the above two limit relations, we find […]

- (Case ) Since for all t, the almost sure convergence (9) implies the convergence of the moments (see [43]). However, this is not enough to obtain a Taylor’s law: we also need (10), (11) and (12). First of all, we observe that […]. Hence:

  - for , we guess from (10) that the first term on the right-hand side of the above equality behaves as , while the second term is , and so we get and […], with the constant of proportionality equal to ;

  - for , we guess from (11) that the first term on the right-hand side of the above equality behaves as , while the second term is , and so we get and […], with the constant of proportionality equal to ;

  - for , we guess from (12) that the first term and the second term on the right-hand side of the above equality behave as and , respectively, and so we get and […], with the constant of proportionality equal to .
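The variance decomposition used above is presumably the elementary identity below. Because the original display did not survive, this reading is our assumption. Let X be the number of distinct colors at time t and let a be any deterministic centring term, for instance a constant multiple of t. Expanding the square gives:

```latex
\mathbb{E}\big[(X-a)^2\big]
  = \mathbb{E}\big[(X-\mathbb{E}[X] + \mathbb{E}[X]-a)^2\big]
  = \operatorname{Var}(X)
    + 2\,(\mathbb{E}[X]-a)\,\underbrace{\mathbb{E}\big[X-\mathbb{E}[X]\big]}_{=0}
    + (\mathbb{E}[X]-a)^2,
\qquad\text{so}\qquad
\operatorname{Var}(X) = \mathbb{E}\big[(X-a)^2\big] - \big(\mathbb{E}[X]-a\big)^2 .
```

Each subcase then amounts to comparing the growth rates of the two terms on the right-hand side.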

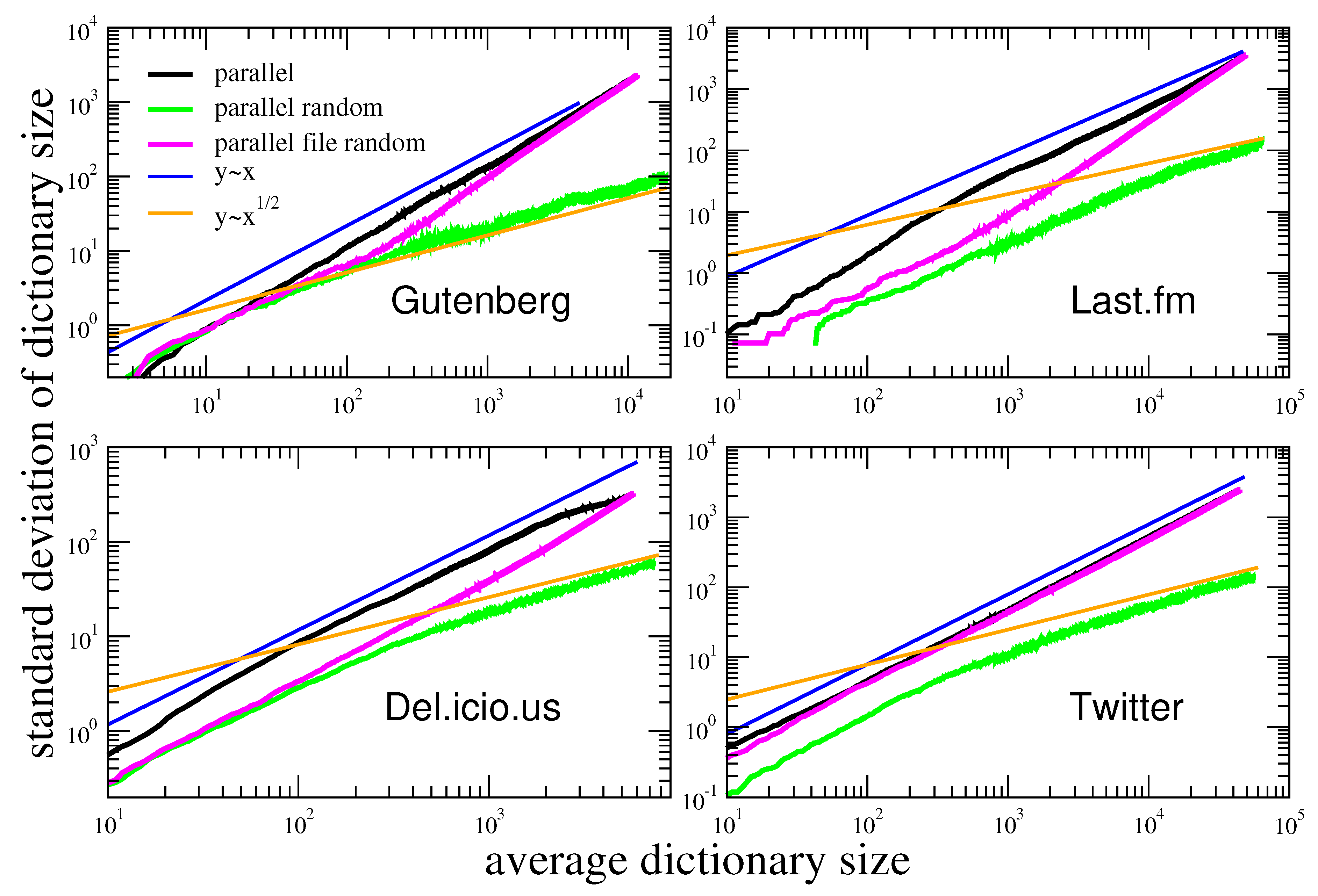
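Taylor’s law can be checked by simulation in the form σ_t ∝ μ_t^β, where μ_t and σ_t are the mean and standard deviation of the number of distinct colors across independent realizations. The sketch below is ours, not the authors’ procedure. It uses a two-count black/white urn with hypothetical parameter names rho, nu and rho_new (default rho_new = rho), samples D_t at a few log-spaced times, and fits β by least squares on the log-log pairs.

```python
import math
import random
import statistics


def run_urn(t_max, checkpoints, rho, nu, rho_new, n0, rng):
    """One realization of the black/white urn; returns D_t at each checkpoint."""
    wanted = set(checkpoints)
    black, white, d = n0, 0, 0
    values = []
    for t in range(1, t_max + 1):
        if rng.random() * (black + white) < black:  # novelty
            black += nu
            white += 1 + rho_new
            d += 1
        else:
            white += rho
        if t in wanted:
            values.append(d)
    return values


def slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    mx, my = statistics.mean(xs), statistics.mean(ys)
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return num / sum((x - mx) ** 2 for x in xs)


def taylor_exponent(rho, nu, rho_new=None, n0=1, runs=200, t_max=3000, seed=0):
    """Fit log(sigma_t) = beta * log(mu_t) + const across independent runs."""
    rng = random.Random(seed)
    rho_new = rho if rho_new is None else rho_new
    checkpoints = sorted({t_max // 2 ** k for k in range(5)})
    samples = [run_urn(t_max, checkpoints, rho, nu, rho_new, n0, rng)
               for _ in range(runs)]
    per_time = list(zip(*samples))  # one tuple of D_t values per checkpoint
    mu = [statistics.mean(v) for v in per_time]
    sigma = [statistics.pstdev(v) for v in per_time]
    return slope([math.log(m) for m in mu], [math.log(s) for s in sigma])


if __name__ == "__main__":
    print(taylor_exponent(rho=4, nu=2), taylor_exponent(rho=2, nu=10))
```

We expect the fitted β to come out close to 1 when nu < rho and close to 1/2 when nu > 2·rho, which matches the case analysis above. Both values are finite-size estimates.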

5. Taylor’s Law in Real World Systems

6. Two Mechanisms that Increase Fluctuations

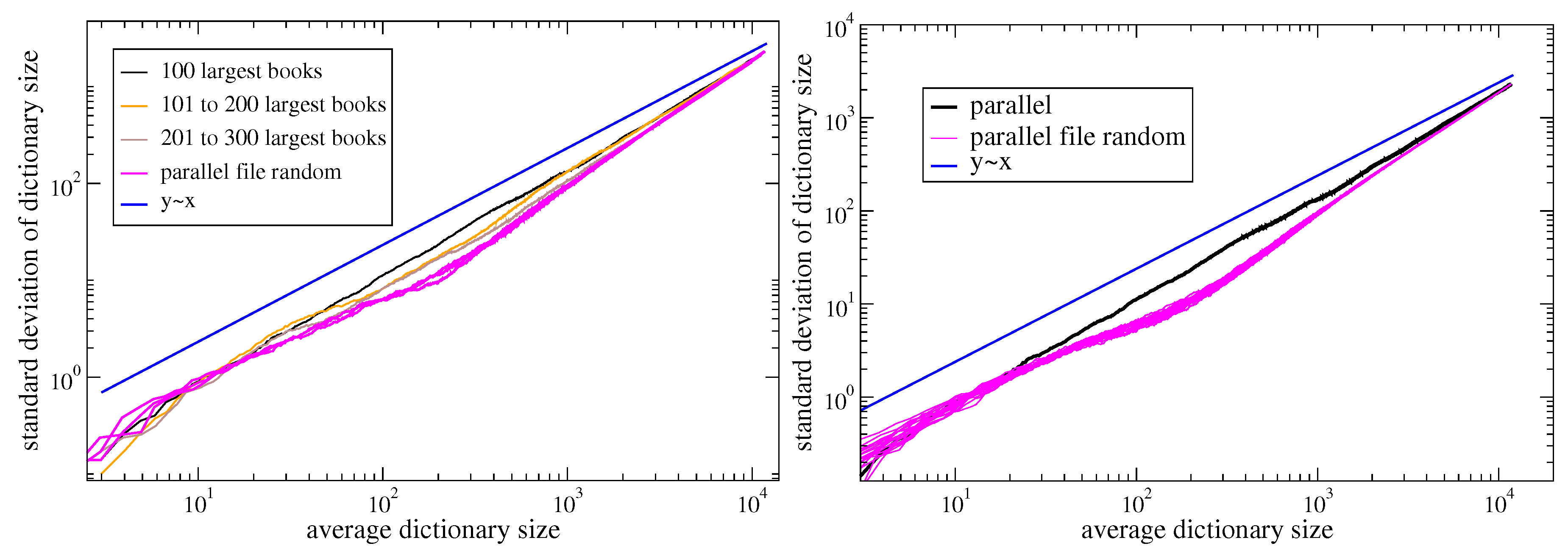

6.1. Random Parameters

- (Case ) As seen before, the Taylor’s exponent in the case is always smaller than 1. Suppose now that and are constants and that there exists a random variable , with , that gives the value of . Given the value of , the urn process behaves as described before. If is concentrated on , that is almost surely, then, on the event , the sequence converges almost surely to the value . Therefore, since is bounded, we have [43] […]. Hence, by setting , we find […]. This means that, while a deterministic parameter gives a Taylor’s exponent smaller than 1, a random parameter , with almost surely, gives a Taylor’s exponent equal to 1.

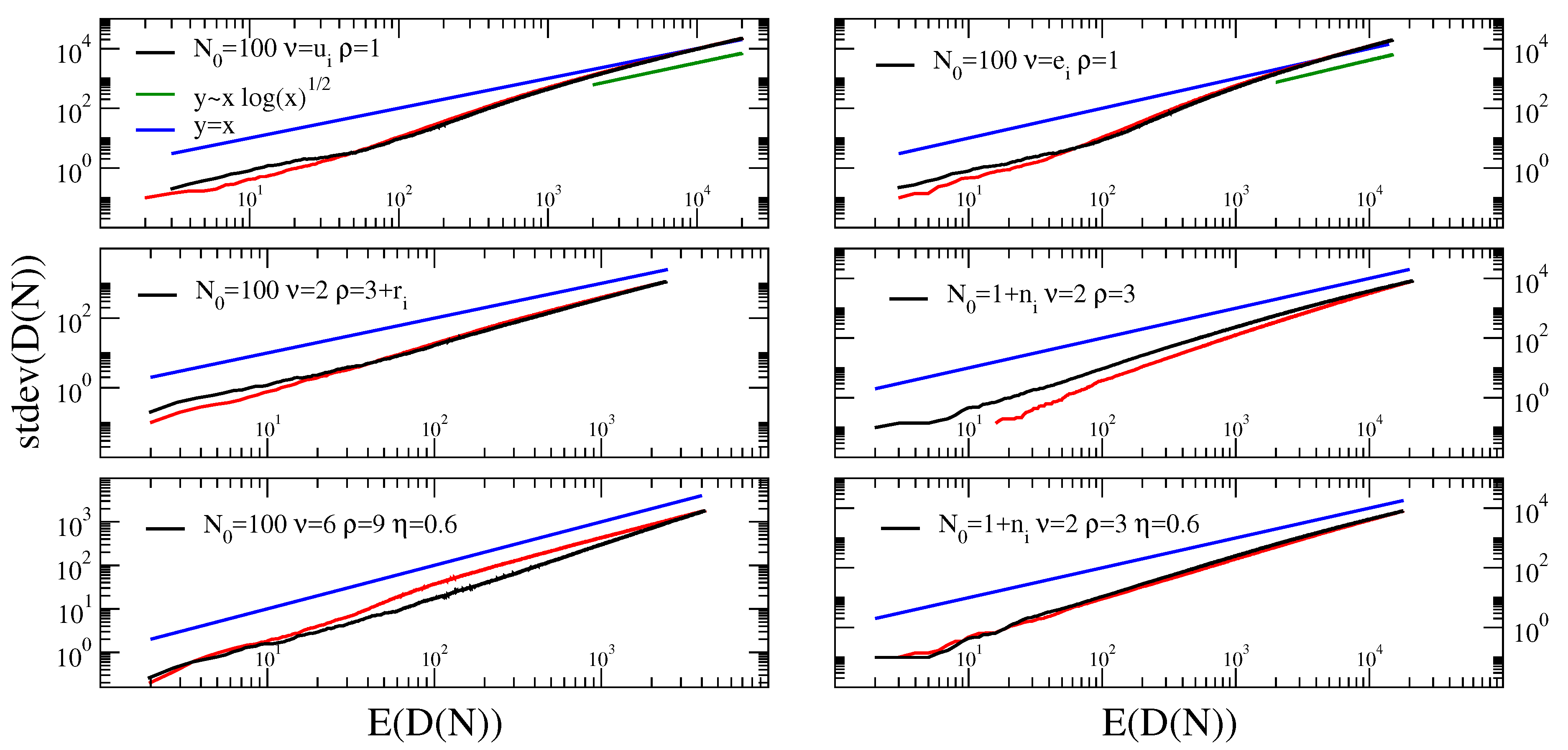
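The effect of a random parameter can be sketched by drawing a fresh value of nu for every realization and refitting the Taylor exponent. The original text does not preserve which parameter is randomized or on which interval. Here we assume it is nu, with rho = 2 fixed, rho_new = rho, and counts treated as real-valued weights so that nu may be non-integer. When nu is drawn from an interval well above 2·rho, each run has its own linear growth rate, so σ_t and μ_t should both grow linearly.

```python
import math
import random
import statistics


def run_urn(t_max, checkpoints, rho, nu, rng, n0=1):
    """One realization with rho_new = rho; counts are real-valued weights."""
    wanted = set(checkpoints)
    black, white, d = float(n0), 0.0, 0
    values = []
    for t in range(1, t_max + 1):
        if rng.random() * (black + white) < black:  # novelty
            black += nu
            white += 1 + rho
            d += 1
        else:
            white += rho
        if t in wanted:
            values.append(d)
    return values


def taylor_exponent_random_nu(rho, sample_nu, runs=200, t_max=3000, seed=0):
    """Taylor exponent when nu is redrawn (via sample_nu) for every run."""
    rng = random.Random(seed)
    checkpoints = sorted({t_max // 2 ** k for k in range(5)})
    samples = []
    for _ in range(runs):
        nu = sample_nu(rng)  # fresh value of the random parameter for this run
        samples.append(run_urn(t_max, checkpoints, rho, nu, rng))
    per_time = list(zip(*samples))
    xs = [math.log(statistics.mean(v)) for v in per_time]
    ys = [math.log(statistics.pstdev(v)) for v in per_time]
    mx, my = statistics.mean(xs), statistics.mean(ys)
    return (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
            / sum((x - mx) ** 2 for x in xs))


if __name__ == "__main__":
    print(taylor_exponent_random_nu(2, lambda rng: 10.0))
    print(taylor_exponent_random_nu(2, lambda rng: rng.uniform(5.0, 30.0)))
```

With nu fixed at 10, the fitted exponent should stay well below 1. With nu uniform on (5, 30), it should move close to 1, which is the contrast described above.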

- (Case ) As seen before, the Taylor’s exponent in the case is equal to 1. Suppose now, as before, that is a random variable, with , that gives the value of , while the other parameters are constant. If is concentrated on , that is almost surely, then, on the event , the sequence converges almost surely to a suitable random variable . Moreover, from [33], we have […]. Assuming, as in the previous section, a condition of uniform integrability, we can say that […], where is the function given in (17) with . Similarly, […], where is the function given in (17) with . If we neglect the terms in the above mean values, we have […], where is the moment-generating function of . For instance, if is uniformly distributed on , we get […], and so . Finally, if we use the approximation , we obtain . Similarly, if is exponentially distributed on , that is with and , we get […], and so, as above, . From Figure 5 we see that the above predictions hold asymptotically, after a long transient during which a law seems to hold.

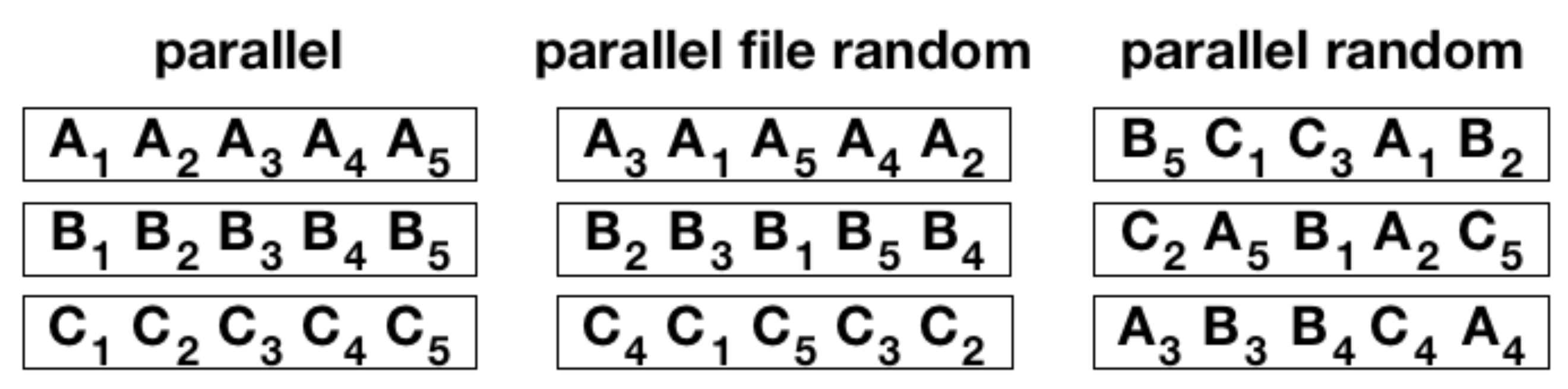
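The moment-generating-function step can be made concrete under a deliberately crude simplification of our own. Drop the random prefactor and take D_t ≈ t^U, where the exponent U plays the role of the parameter ratio and is uniform on (0, 1). Then E[D_t^k] = E[e^{kU ln t}] = M_U(k ln t), with M_U(s) = (e^s − 1)/s. The sketch evaluates these moments and checks them against a midpoint-rule integral.

```python
import math


def mgf_uniform01(s):
    """M_U(s) = E[exp(s U)] for U ~ Uniform(0, 1), i.e. (e^s - 1) / s."""
    return 1.0 if s == 0 else math.expm1(s) / s


def power_moments(t):
    """E[t**U] and E[t**(2U)], read off the MGF at s = ln t and s = 2 ln t."""
    return mgf_uniform01(math.log(t)), mgf_uniform01(2 * math.log(t))


def midpoint_check(t, k, n=20000):
    """Midpoint-rule value of the integral of t**(k*u) over u in (0, 1)."""
    h = 1.0 / n
    return h * sum(t ** (k * (i + 0.5) * h) for i in range(n))


if __name__ == "__main__":
    for t in (10, 100, 1000, 10000):
        m1, m2 = power_moments(t)
        # Mean and coefficient of variation sigma/mu under the simplification.
        print(t, m1, math.sqrt(m2 - m1 ** 2) / m1)
```

Under this simplification, σ_t/μ_t grows roughly like the square root of (ln t)/2 − 1. A Taylor exponent fitted over a finite window therefore drifts above 1.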

6.2. Urn Model with Semantic Triggering

- (i) we give weight 1 to: (a) each element in with the same label, say C, as (the last element added to the sequence); (b) the element that triggered the entry of into the urn; and (c) the elements triggered by ; a weight is given to any other element in ;

- (ii) the element is chosen by drawing randomly from , each element with a probability proportional to its weight;

- (iii) the element is added to the sequence and put back into along with additional copies of it;

- (iv) if and only if the chosen element is new (i.e., it appears for the first time in the sequence ), brand-new distinct elements (balls with different colors, not yet present in the urn), all with a common brand-new label, are added to .
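Steps (i) to (iv) translate into a weighted draw over ball counts. In this minimal sketch, the missing symbols are replaced by our own names. At each draw, rho copies are added. A novelty triggers nu + 1 brand-new elements. The parameter eta is the weight of elements unrelated to the last draw, following the semantic variant of [12]. Giving all initial balls a common label is also our assumption.

```python
import random
from collections import Counter


def simulate_semantic_umt(t_max, rho, nu, eta, n0=1, seed=None):
    """Sketch of the urn model with semantic triggering; returns the sequence."""
    rng = random.Random(seed)
    counts = Counter({c: 1 for c in range(n0)})  # balls per element (color)
    label = {c: 0 for c in range(n0)}            # initial elements share label 0 (assumption)
    parent = {c: None for c in range(n0)}        # element whose novelty triggered c
    children = {c: [] for c in range(n0)}        # elements triggered by c
    next_color, next_label = n0, 1
    seen, sequence = set(), []
    for _ in range(t_max):
        colors = list(counts)
        if sequence:
            last = sequence[-1]
            # (i) weight 1: same label as last, last's trigger, elements last triggered.
            favoured = {c for c in colors if label[c] == label[last]}
            if parent[last] is not None:
                favoured.add(parent[last])
            favoured.update(children[last])
            weights = [counts[c] * (1.0 if c in favoured else eta) for c in colors]
        else:
            weights = [counts[c] for c in colors]  # first draw: plain uniform draw
        # (ii) draw an element with probability proportional to its total weight.
        chosen = rng.choices(colors, weights=weights)[0]
        # (iii) record it and put it back with rho extra copies.
        sequence.append(chosen)
        counts[chosen] += rho
        # (iv) a first appearance triggers nu + 1 new elements with a fresh common label.
        if chosen not in seen:
            seen.add(chosen)
            for c in range(next_color, next_color + nu + 1):
                counts[c] = 1
                label[c] = next_label
                parent[c] = chosen
                children[c] = []
                children[chosen].append(c)
            next_color += nu + 1
            next_label += 1
    return sequence


if __name__ == "__main__":
    seq = simulate_semantic_umt(2000, rho=4, nu=2, eta=0.1, seed=5)
    print(len(set(seq)))
```

With eta = 1, every ball has the same weight. The sketch then reduces to the plain urn with triggering (with equal reinforcement for old and new colors), which makes a convenient check.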

7. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Zipf, G.K. Relative Frequency as a Determinant of Phonetic Change. Harv. Stud. Class. Philol. 1929, 40, 1–95.

- Zipf, G.K. The Psychobiology of Language; Houghton-Mifflin: New York, NY, USA, 1935.

- Zipf, G.K. Human Behavior and the Principle of Least Effort; Addison-Wesley: Reading MA, USA, 1949.

- Herdan, G. Type-Token Mathematics: A Textbook of Mathematical Linguistics; Janua linguarum. series maior. no. 4; Mouton en Company: Berlin, Germany, 1960.

- Heaps, H.S. Information Retrieval: Computational and Theoretical Aspects; Academic Press: Cambridge, MA, USA, 1978.

- Taylor, L. Aggregation, Variance and the Mean. Nature 1961, 189, 732.

- Eisler, Z.; Bartos, I.; Kertész, J. Fluctuation scaling in complex systems: Taylor’s law and beyond. Adv. Phys. 2008, 57, 89–142.

- Newman, M.E.J. Power laws, Pareto distributions and Zipf’s law. Contemp. Phys. 2005, 46, 323–351.

- Corominas-Murtra, B.; Hanel, R.; Thurner, S. Understanding scaling through history-dependent processes with collapsing sample space. Proc. Natl. Acad. Sci. USA 2015, 112, 5348–5353.

- Cubero, R.J.; Jo, J.; Marsili, M.; Roudi, Y.; Song, J. Statistical Criticality arises in Most Informative Representations. J. Stat. Mech. 2019, 1906, 063402.

- Lü, L.; Zhang, Z.K.; Zhou, T. Zipf’s law leads to Heaps’ law: Analyzing their relation in finite-size systems. PLoS ONE 2010, 5, e14139.

- Tria, F.; Loreto, V.; Servedio, V.D.P.; Strogatz, S.H. The dynamics of correlated novelties. Sci. Rep. 2014, 4.

- Kauffman, S.A. Investigations; Oxford University Press: New York, NY, USA, 2000.

- Monechi, B.; Ruiz-Serrano, A.; Tria, F.; Loreto, V. Waves of novelties in the expansion into the adjacent possible. PLoS ONE 2017, 12, e0179303.

- Iacopini, I.; Milojević, S.; Latora, V. Network Dynamics of Innovation Processes. Phys. Rev. Lett. 2018, 120, 048301.

- Kilpatrick, A.M.; Ives, A.R. Species interactions can explain Taylor’s power law for ecological time series. Nature 2003, 422, 65–68.

- Ballantyne, F.; Kerkhoff, A.J. The observed range for temporal mean-variance scaling exponents can be explained by reproductive correlation. Oikos 2007, 116, 174–180.

- Cohen, J.E.; Xu, M.; Schuster, W.S.F. Stochastic multiplicative population growth predicts and interprets Taylor’s power law of fluctuation scaling. Proc. Biol. Sci. 2013, 280, 20122955.

- Cohen, J.E. Stochastic population dynamics in a Markovian environment implies Taylor’s power law of fluctuation scaling. Theor. Popul. Biol. 2014, 93, 30–37.

- Giometto, A.; Formentin, M.; Rinaldo, A.; Cohen, J.E.; Maritan, A. Sample and population exponents of generalized Taylor’s law. Proc. Natl. Acad. Sci. USA 2015, 112, 7755–7760.

- Gerlach, M.; Altmann, E.G. Scaling laws and fluctuations in the statistics of word frequencies. New J. Phys. 2014, 16, 113010.

- Simon, H. On a class of skew distribution functions. Biometrika 1955, 42, 425–440.

- Zanette, D.; Montemurro, M. Dynamics of Text Generation with Realistic Zipf’s Distribution. J. Quant. Linguist. 2005, 12, 29.

- Tria, F.; Loreto, V.; Servedio, V.D.P. Zipf’s, Heaps’ and Taylor’s Laws are Determined by the Expansion into the Adjacent Possible. Entropy 2018, 20, 752.

- Zabell, S. Predicting the unpredictable. Synthese 1992, 90, 205–232.

- Pitman, J. Exchangeable and partially exchangeable random partitions. Probab. Theory Relat. Fields 1995, 102, 145–158.

- Pitman, J. Combinatorial Stochastic Processes; Ecole d’Eté de Probabilités de Saint-Flour XXXII; Springer: Berlin/Heidelberg, Germany, 2002.

- Pitman, J.; Yor, M. The two-parameter Poisson-Dirichlet distribution derived from a stable subordinator. Ann. Probab. 1997, 25, 855–900.

- Buntine, W.; Hutter, M. A Bayesian View of the Poisson-Dirichlet Process. arXiv 2010, arXiv:1007.0296.

- Teh, Y.W. A Hierarchical Bayesian Language Model based on Pitman-Yor Processes. In Proceedings of the 21st International Conference on Computational Linguistics and the 44th annual meeting of the Association for Computational Linguistics, Sydney, Australia, 17–21 July 2006.

- Du, L.; Buntine, W.; Jin, H. A segmented topic model based on the two-parameter Poisson-Dirichlet process. Mach. Learn. 2010, 81, 5–19.

- Janson, S. Functional limit theorems for multiple branching processes and generalized Pólya urns. Stoch. Process. Appl. 2004, 110, 177–245.

- Janson, S. Limit theorems for triangular urn schemes. Probab. Theory Relat. Fields 2006, 134, 417–452.

- Kauffman, S.A. The Origins of Order: Self-Organization and Selection in Evolution; Oxford University Press: New York, NY, USA, 1993.

- Kauffman, S.A. Investigations: The Nature of Autonomous Agents and the Worlds They Mutually Create; SFI Working Papers; Santa Fe Institute: Santa Fe, NM, USA, 1996.

- James, L.F. Large Sample Asymptotics for the Two-Parameter Poisson-Dirichlet Process. Pushing the Limits of Contemporary Statistics: Contributions in Honor of Jayanta K. Ghosh; Institute of Mathematical Statistics: Beachwood, OH, USA, 2008; pp. 187–199.

- Hoppe, F.M. The sampling theory of neutral alleles and an urn model in population genetics. J. Math. Biol. 1987, 25, 123–159.

- Bassetti, F.; Crimaldi, I.; Leisen, F. Conditionally identically distributed species sampling sequences. Adv. Appl. Probab. 2010, 42, 433–459.

- Aguech, R. Limit theorems for random triangular urn schemes. J. Appl. Probab. 2009, 46, 827–843.

- Mahmoud, H.M. Pólya Urn Models; Texts in Statistical Science Series; CRC Press: Boca Raton, FL, USA, 2009; p. xii+290.

- Flajolet, P.; Dumas, P.; Puyhaubert, V. Some exactly solvable models of urn process theory. DMTCS Proc. 2006, 59–118.

- DasGupta, A. Moment Convergence and Uniform Integrability. In Asymptotic Theory of Statistics and Probability; Springer Texts in Statistics; Springer: New York, NY, USA, 2008.

- Williams, D. Probability with Martingales; Cambridge Mathematical Textbooks; Cambridge University Press: Cambridge, UK, 1991; p. xvi+251.

- Hart, M. Project Gutenberg. 1971. Available online: https://www.gutenberg.org/ (accessed on 18 May 2020).

- Miller, F.; Stiksel, M.; Breidenbruecker, M.; Willomitzer, T. Last.fm. 2002. Available online: https://www.last.fm/ (accessed on 7 April 2020).

- Schachter, J. del.icio.us. 2003. Available online: http://delicious.com/ (accessed on 8 January 2018).

- Dorsey, J.; Glass, N.; Stone, B.; Williams, E. Twitter.com. 2006. Available online: https://twitter.com/ (accessed on 7 April 2020).

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Tria, F.; Crimaldi, I.; Aletti, G.; Servedio, V.D.P. Taylor’s Law in Innovation Processes. Entropy 2020, 22, 573. https://doi.org/10.3390/e22050573
