On the Downlink Capacity of Cell-Free Massive MIMO with Constrained Fronthaul Capacity

Abstract

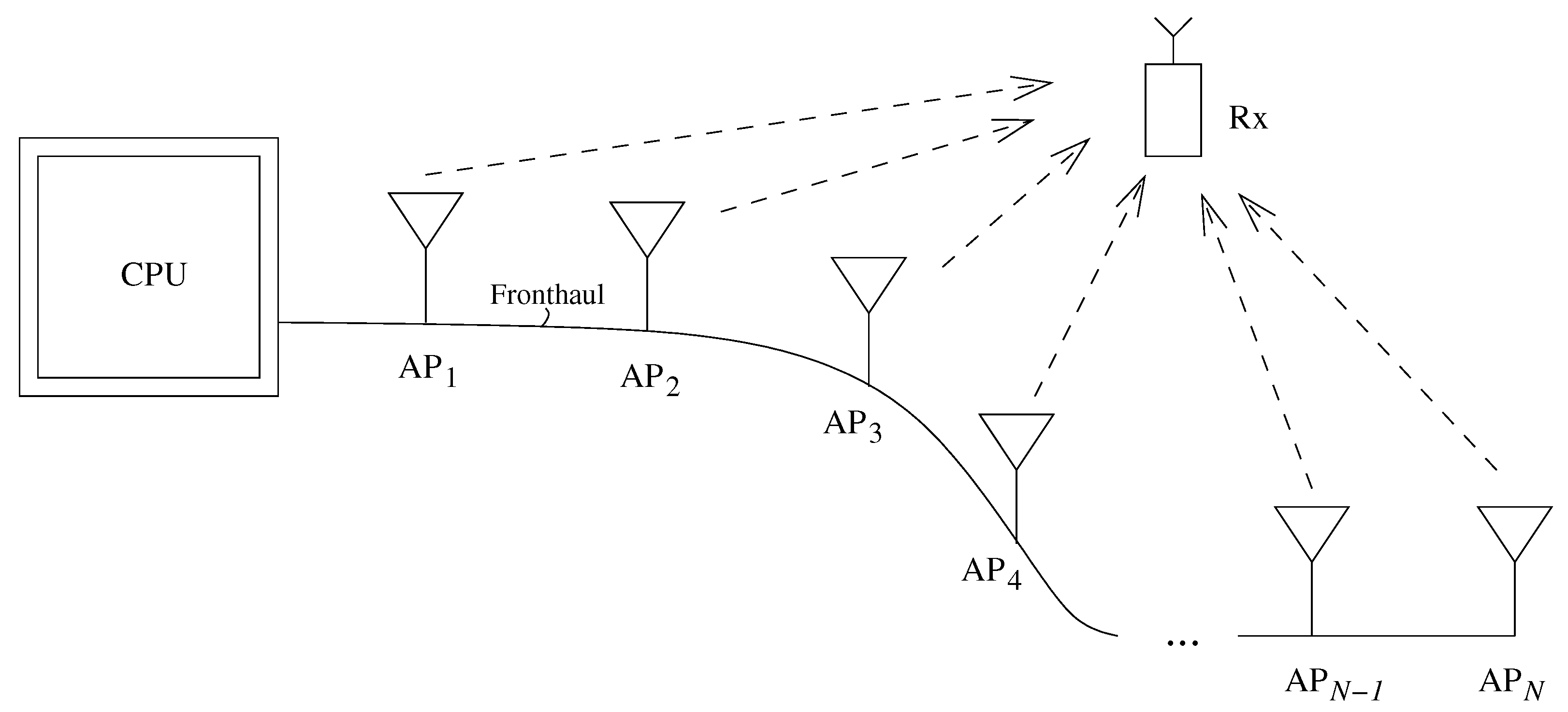

1. Introduction

1.1. Related Work

1.2. Contributions and Organization

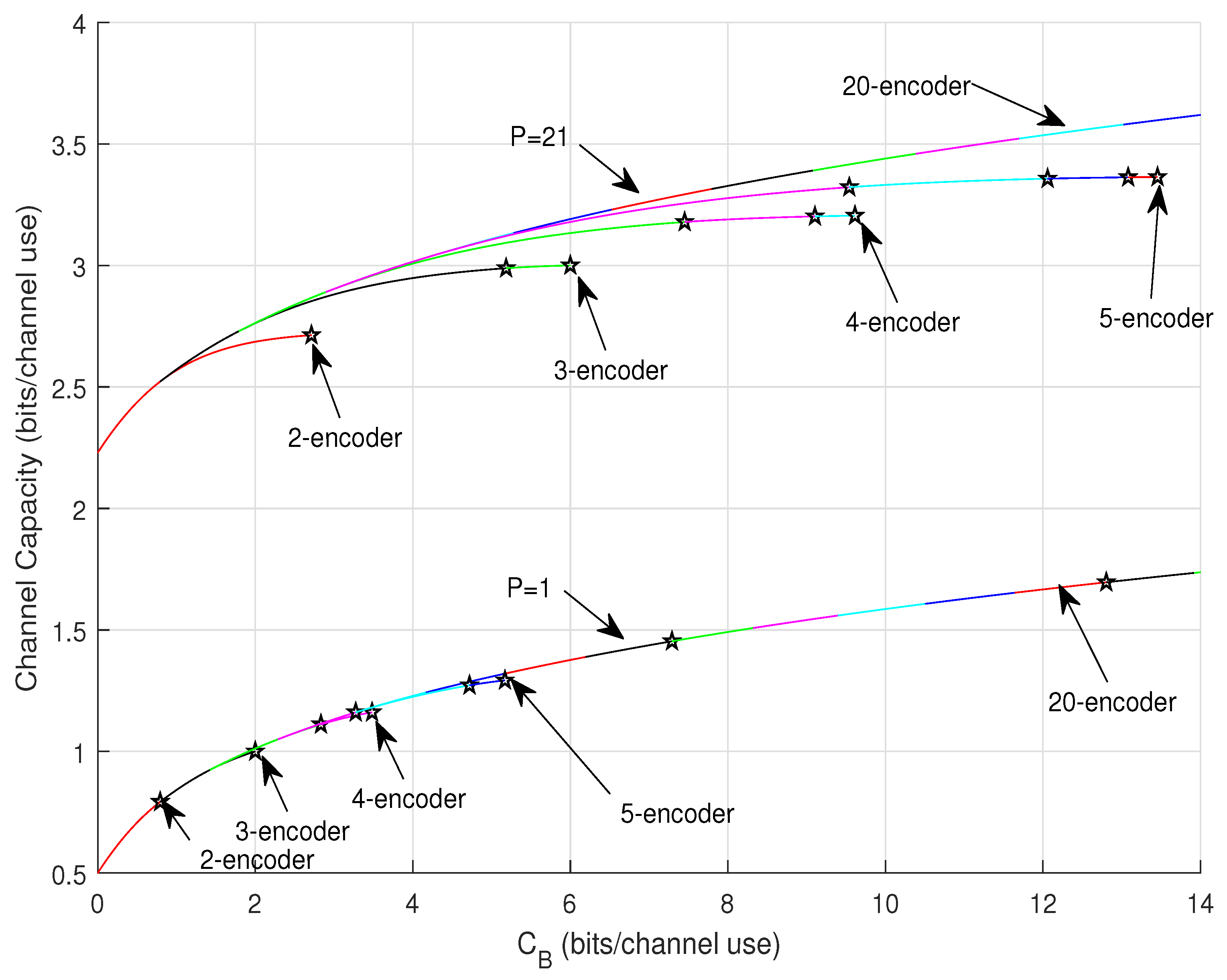

- The channel capacity is found for an arbitrary number of APs, for both the discrete channel and the Gaussian channel with constrained transmit power, when the total fronthaul capacity and the total number of APs are limited.

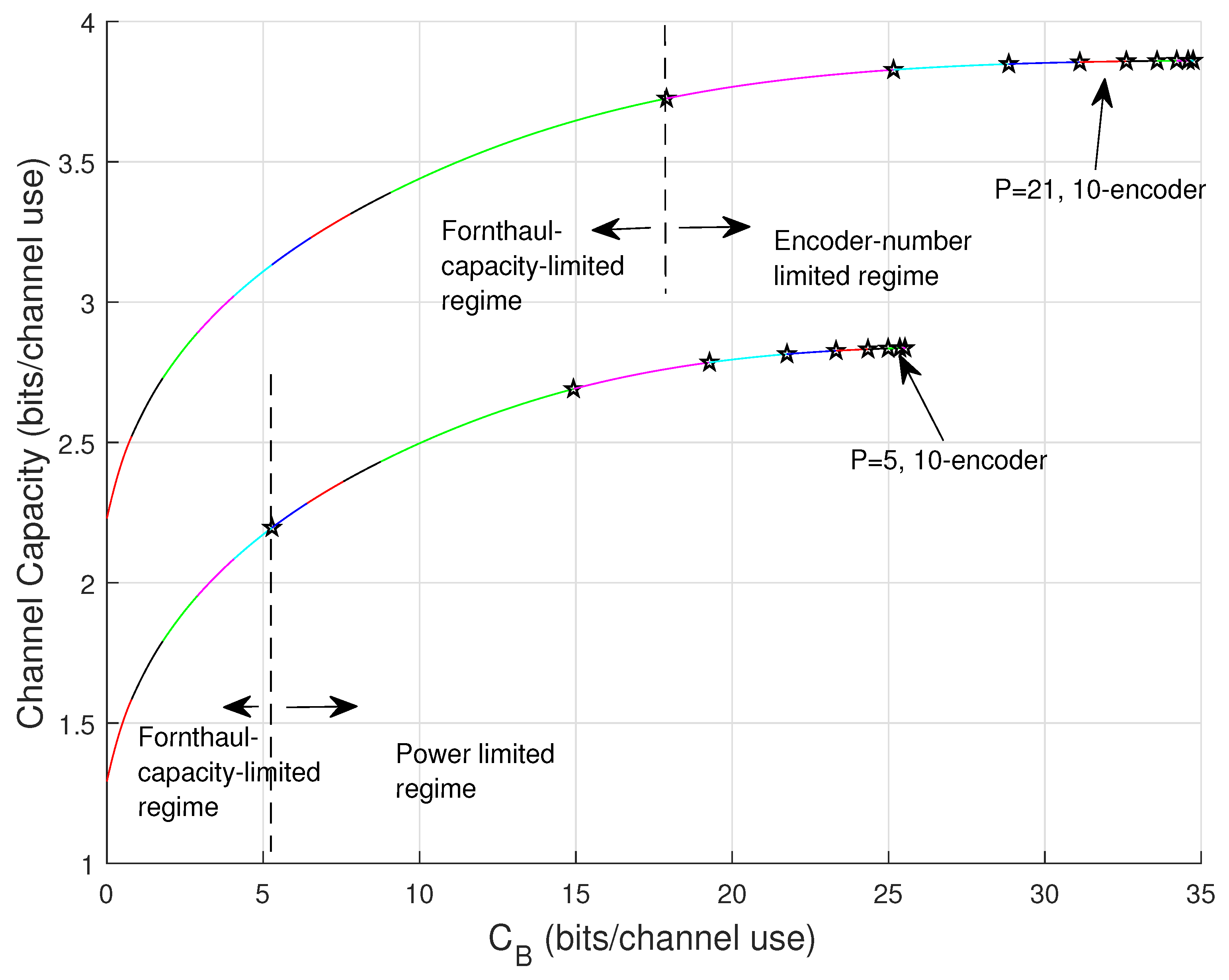

- When many APs are involved, a linear growth of the total fronthaul capacity results in a logarithmic growth of the channel (beamforming) capacity.

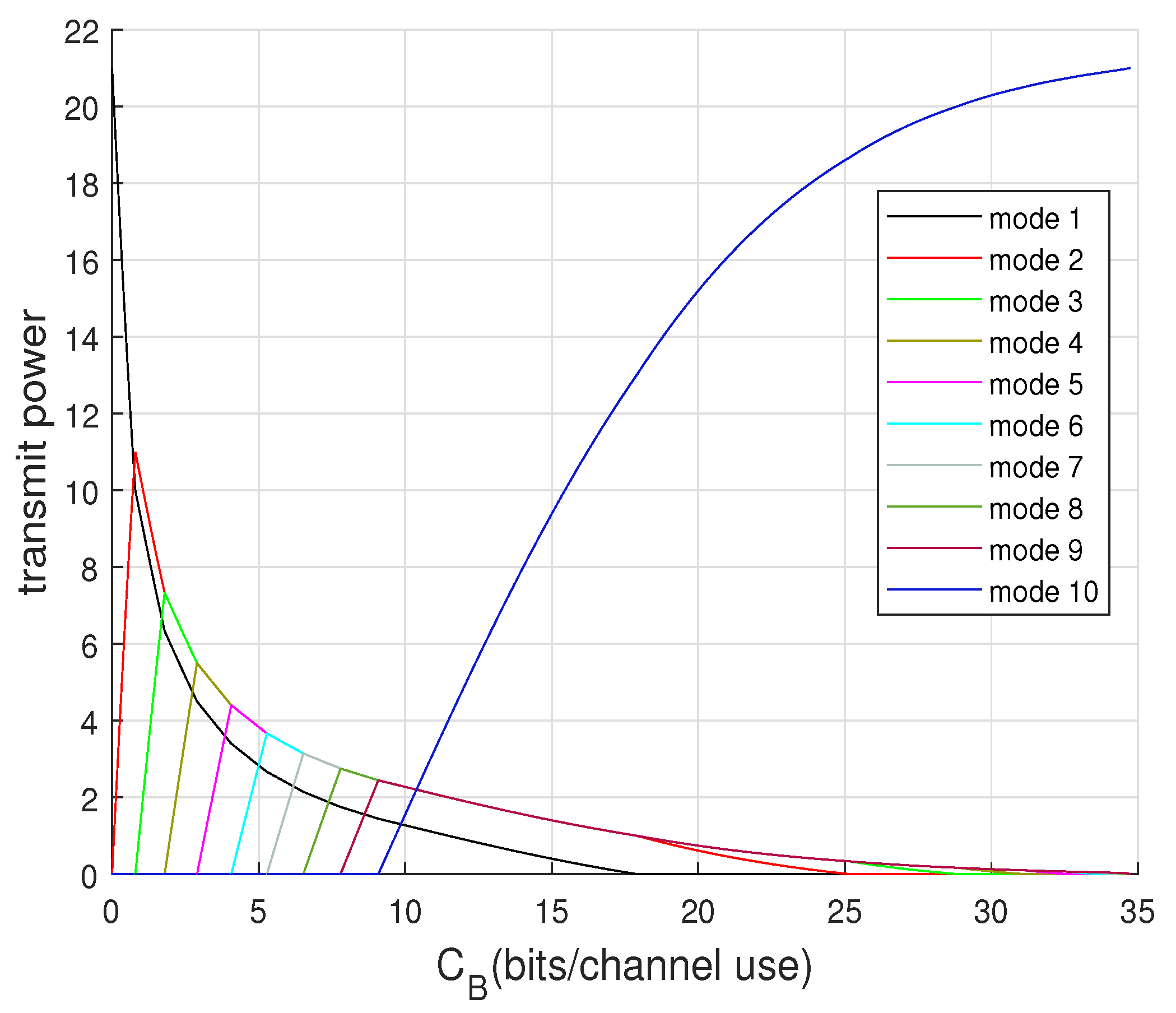

- The concept of cooperating modes is developed to show how the APs should cooperate optimally to achieve capacity with superposition coding.

- When the channel capacity is limited only by the fronthaul capacity, the number of required APs grows quasi-linearly with the available fronthaul capacity, even if the number of available APs is unlimited.

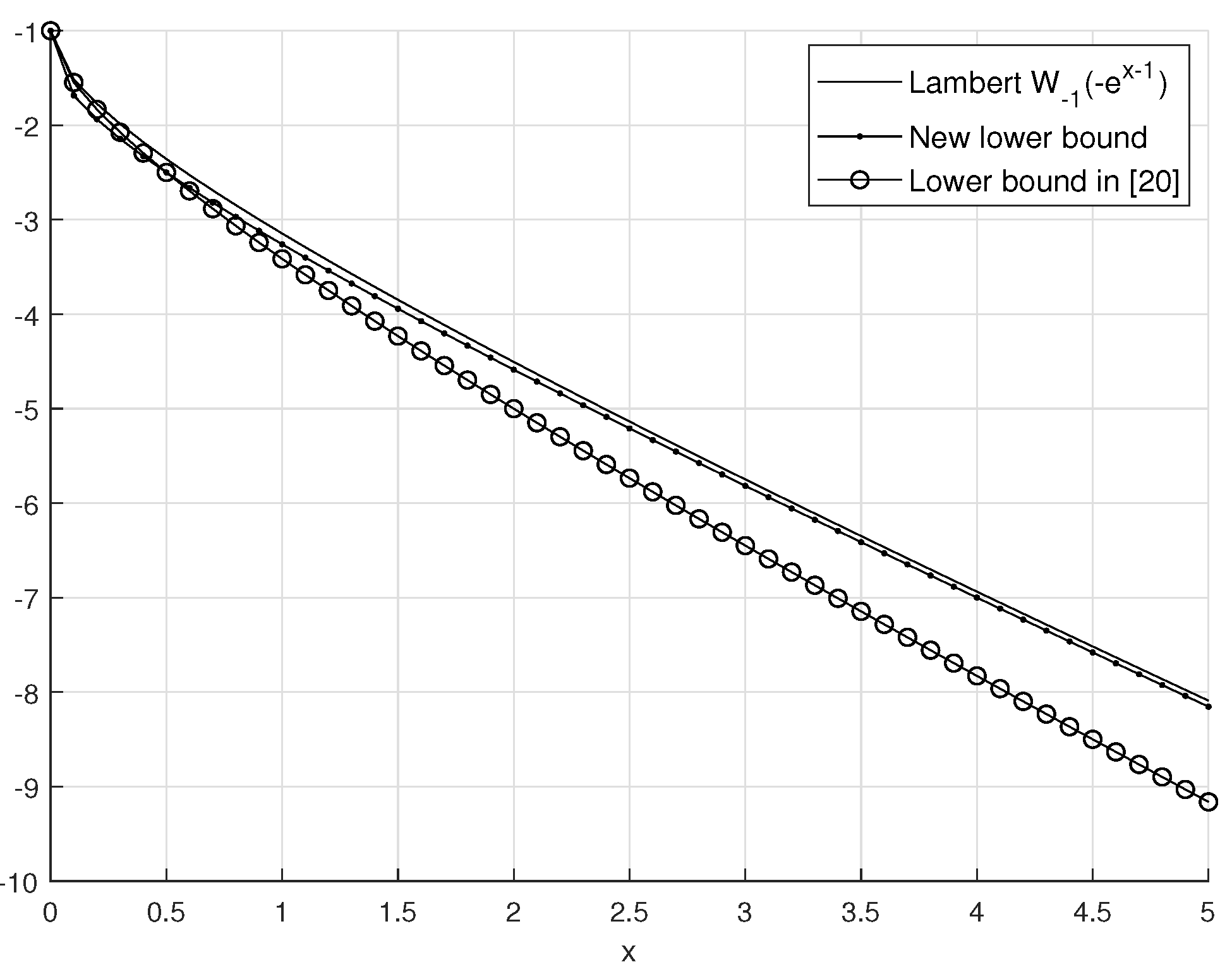

- A new, sharp lower bound on the Lambert W function is derived to compute the number of APs required under a given total fronthaul constraint.
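The scaling claims above (logarithmic capacity growth, quasi-linear AP count, Lambert W) can be illustrated with a deliberately simplified toy model. This is a minimal sketch under stated assumptions, not the capacity result of the paper: it assumes N fully cooperating APs share a total fronthaul budget C, so each AP receives C/N, and it models the beamforming gain as log2(N). Balancing C/N = log2(N) gives N ln N = C ln 2, whose solution is N = x/W(x) with x = C ln 2. The function names `lambert_w0`, `hoorfar_hassani_bounds` and `toy_required_aps` are hypothetical. The bound checked here is the classical one of Hoorfar and Hassani (listed in the references); the new, sharper bound derived in the paper is not reproduced.

```python
import math


def lambert_w0(x: float, tol: float = 1e-14, max_iter: int = 100) -> float:
    """Principal branch W0(x) for x >= 0: the w >= 0 solving w * exp(w) = x.

    Newton's method on f(w) = w*e^w - x. The start log1p(x) lies on or above
    the root and f is convex and increasing there, so the iterates decrease
    monotonically towards the root without needing a bracket.
    """
    if x < 0:
        raise ValueError("this sketch only handles x >= 0")
    if x == 0:
        return 0.0
    w = math.log1p(x)
    for _ in range(max_iter):
        # f(w)/f'(w), rewritten with exp(-w) to avoid overflow for large x
        step = (w - x * math.exp(-w)) / (w + 1.0)
        w -= step
        if abs(step) <= tol * (1.0 + abs(w)):
            break
    return w


def hoorfar_hassani_bounds(x: float) -> tuple[float, float]:
    """Classical lower/upper bounds on W0(x), valid for x >= e."""
    if x < math.e:
        raise ValueError("bounds are stated for x >= e")
    l1 = math.log(x)
    l2 = math.log(l1)
    lower = l1 - l2 + 0.5 * l2 / l1
    upper = l1 - l2 + (math.e / (math.e - 1.0)) * l2 / l1
    return lower, upper


def toy_required_aps(c_total: float) -> tuple[float, float]:
    """Hypothetical toy: solve C/N = log2(N) for N (C > 0, bits per use).

    Returns (N, per-AP rate C/N). With x = C*ln(2): N = exp(W0(x)) = x / W0(x)
    and C/N = W0(x) / ln(2).
    """
    x = c_total * math.log(2.0)
    w = lambert_w0(x)
    return x / w, w / math.log(2.0)


if __name__ == "__main__":
    for c in (10.0, 100.0, 1000.0, 10000.0):
        n_aps, rate = toy_required_aps(c)
        print(f"C = {c:8.0f}   N = {n_aps:8.1f}   rate = {rate:5.2f}")
```

Since W(x) is close to ln x - ln ln x for large x, this toy model gives N growing like C/ln C (quasi-linear) while the rate grows like log2 C (logarithmic), which mirrors the qualitative behavior stated in the contributions.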

2. Problem Setup

2.1. Notation

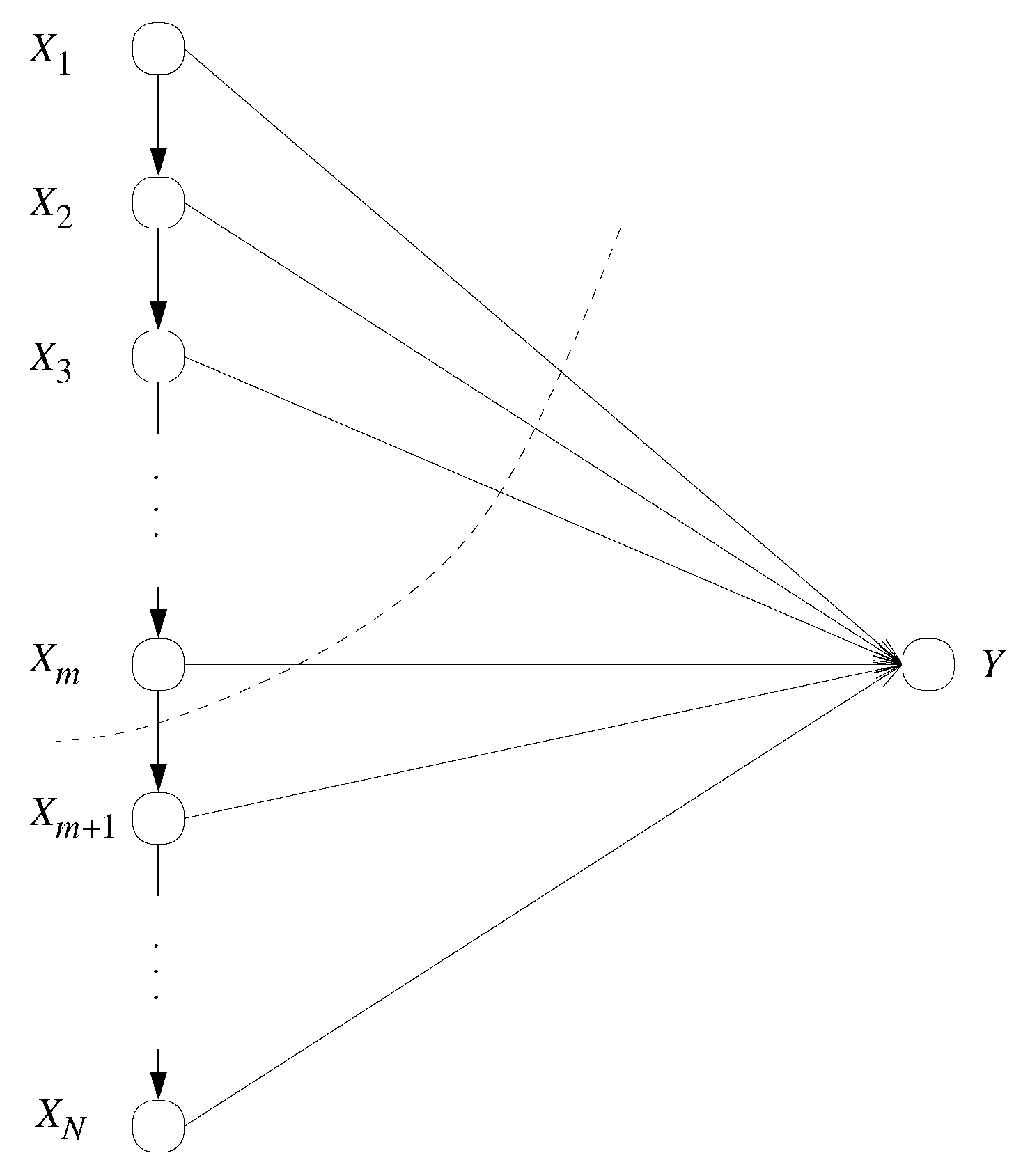

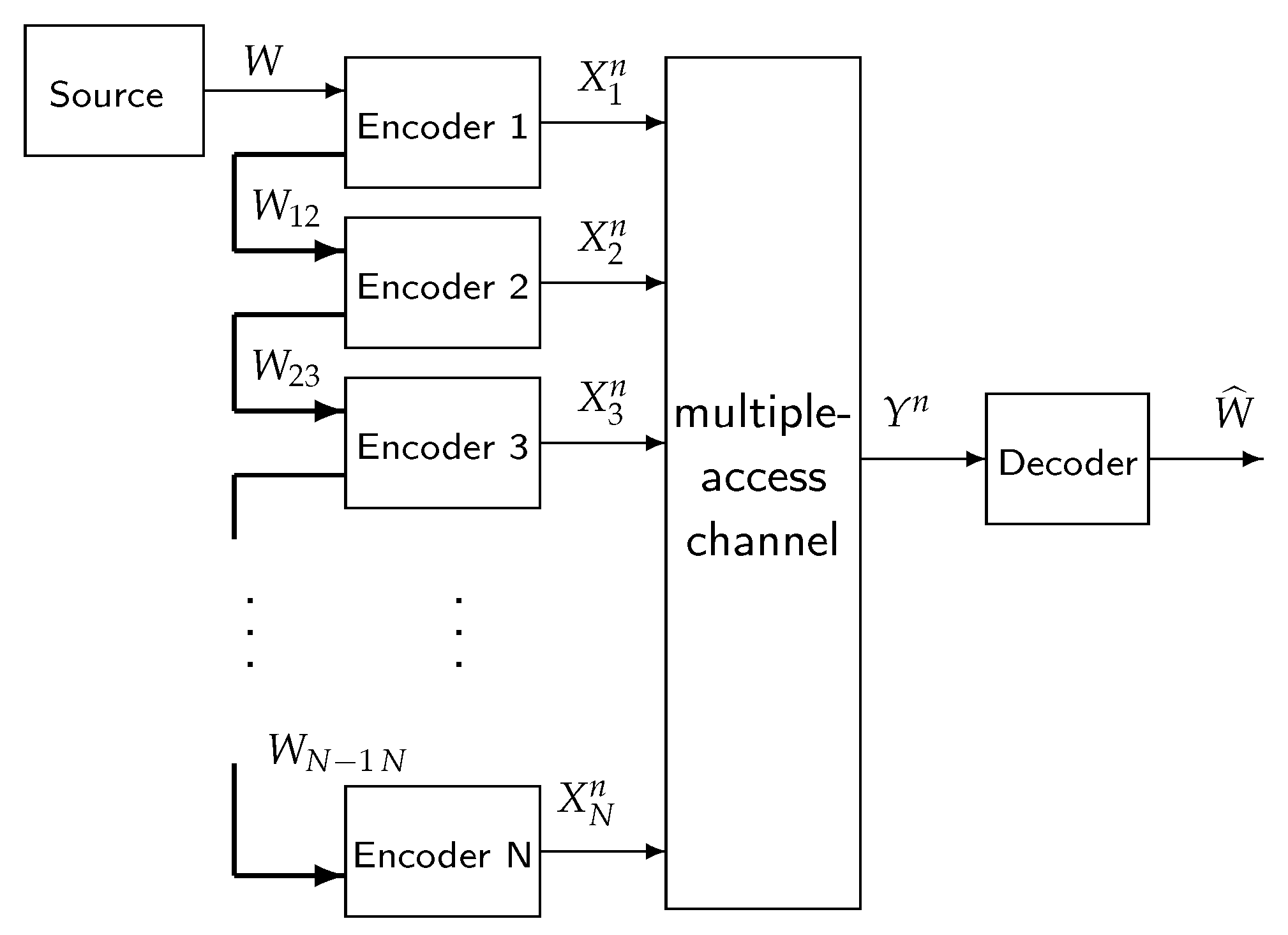

2.2. System Model

3. Two-Encoder Result

3.1. Discrete Channel

3.2. Gaussian Channel

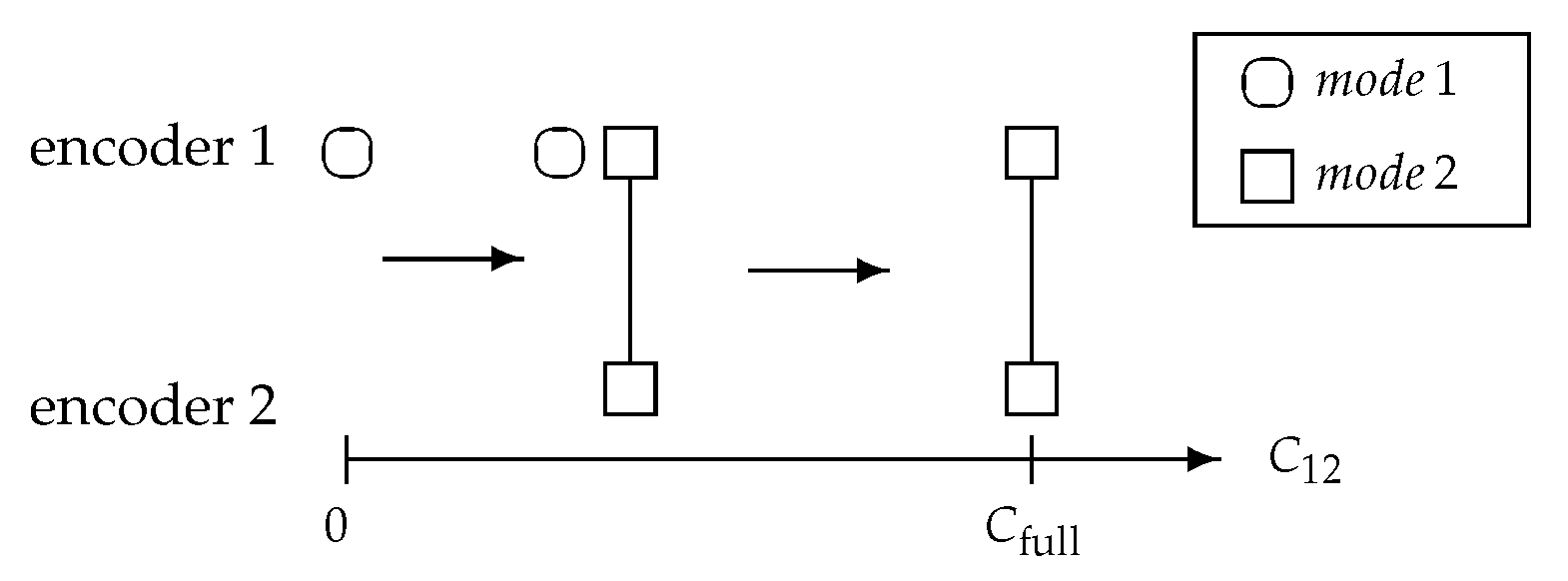

3.3. Cooperating Modes

- mode 1: Encoder 1 sends a private message;

- mode 2: Encoders 1 and 2 coherently send a common message.
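The two cooperating modes can be combined by superposition coding. The sketch below is a minimal illustration under assumptions that are not taken from the paper: a real Gaussian channel y = x1 + x2 + z with unit channel gains, unit noise variance, per-encoder powers p1 and p2, and fronthaul links c1 and c2, where encoder 1 must receive both messages and encoder 2 only the common one. Encoder 1 spends a fraction alpha of its power on the private message (mode 1) and the rest, together with all of encoder 2's power, on the coherently transmitted common message (mode 2). The helper names `two_encoder_rate` and `best_split` are hypothetical, and the power split is found by a simple grid search.

```python
import math


def two_encoder_rate(p1: float, p2: float, c1: float, c2: float, alpha: float) -> float:
    """Achievable total rate (bits per use) for one power split alpha in [0, 1].

    Assumed constraints for private rate Rp and common rate Rc:
      Rp <= 0.5*log2(1 + alpha*p1)            (private part, given the common one)
      Rp + Rc <= 0.5*log2(1 + received power)  (both messages at the decoder)
      Rp + Rc <= c1                            (fronthaul to encoder 1)
      Rc <= c2                                 (fronthaul to encoder 2)
    """
    private_bound = 0.5 * math.log2(1.0 + alpha * p1)
    # Coherent combining of the common part: amplitudes add before squaring.
    received_power = p1 + p2 + 2.0 * math.sqrt((1.0 - alpha) * p1 * p2)
    sum_bound = 0.5 * math.log2(1.0 + received_power)
    return min(sum_bound, c1, private_bound + c2)


def best_split(p1: float, p2: float, c1: float, c2: float,
               grid: int = 1000) -> tuple[float, float]:
    """Grid search over alpha = k/grid; returns (best rate, best alpha)."""
    candidates = (k / grid for k in range(grid + 1))
    return max((two_encoder_rate(p1, p2, c1, c2, a), a) for a in candidates)


if __name__ == "__main__":
    for c2 in (0.0, 0.2, 0.5, 10.0):
        rate, alpha = best_split(1.0, 1.0, 10.0, c2)
        print(f"c2 = {c2:4.1f}   rate = {rate:.3f}   alpha = {alpha:.3f}")
```

With ample fronthaul the search picks alpha = 0 (pure mode 2, full coherent beamforming), and with c2 = 0 it picks alpha = 1 (pure mode 1), so intermediate fronthaul values move the optimum between the two modes.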

4. N-Encoder Result

4.1. Discrete Channel

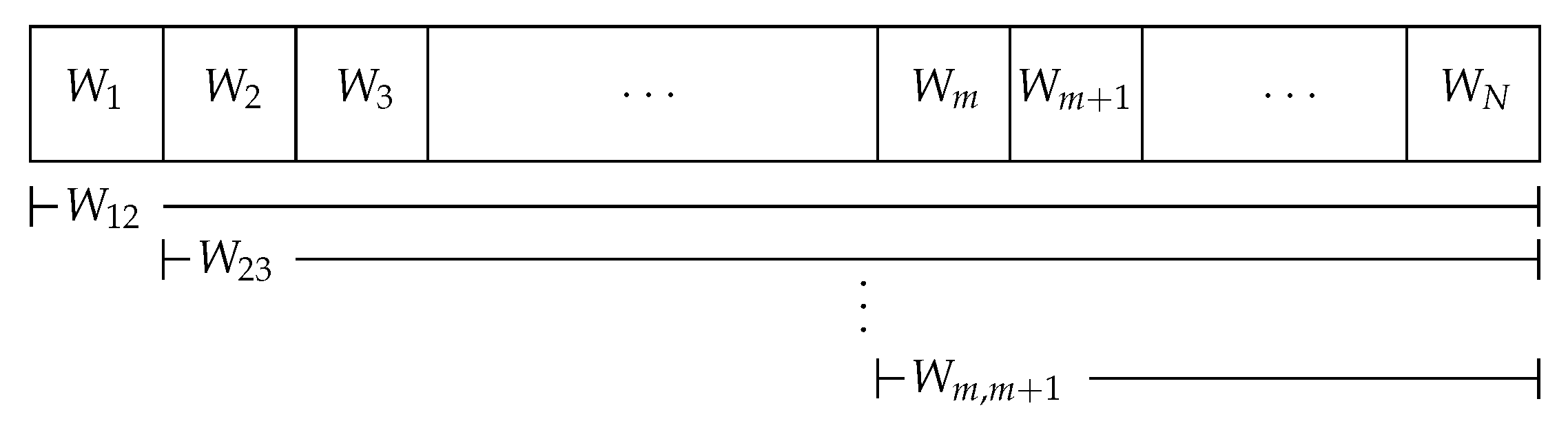

4.2. Gaussian Channel under Total Fronthaul Constraint

4.3. Capacity Behavior Bounds

4.4. Compound Mode and Exact Solution

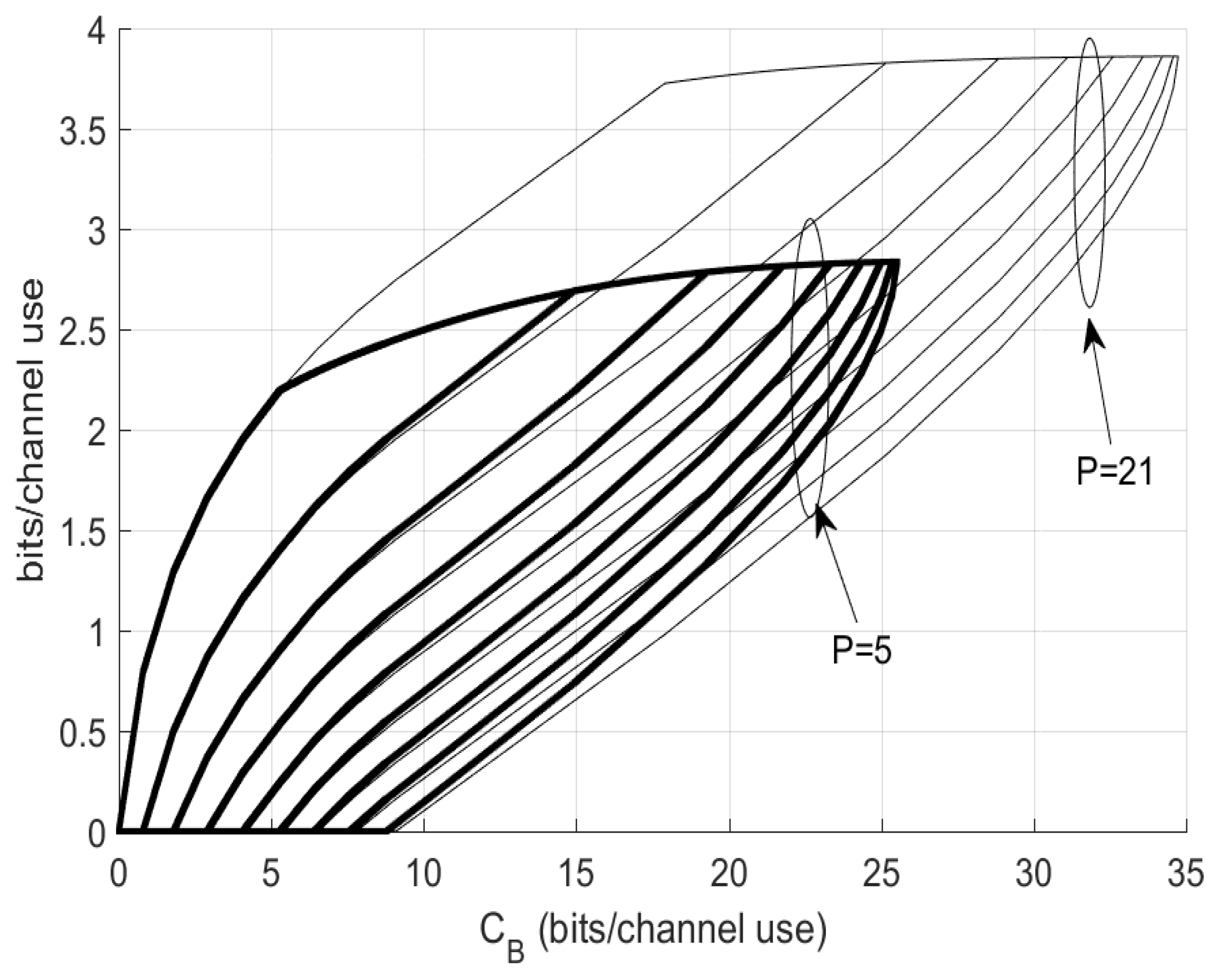

4.5. Mode Selection for Achieving Capacity

Algorithm 1: Compute C and from no cooperation to full cooperation

Initialize: and Ensure:

4.6. Mode and Capacity Regimes

5. Infinitely Many Encoders

6. Concluding Remarks

Author Contributions

Funding

Conflicts of Interest

Appendix A. Proofs and Derivations

Appendix A.1. Proof of Theorem 1

Appendix A.2. Proof of Theorem 3

Appendix A.3. Proof of Lemma 1

Appendix A.4. Proof of Proposition 2

Appendix A.5. Proof of Corollary 3

Appendix A.6. Proof of Lemma 2

- Scenario 1: if , we must have , i.e., gives a local maximum and gives a minimum;

- Scenario 2: if , we must have , i.e., gives a global minimum and gives a maximum.

References

- Ngo, H.Q.; Ashikhmin, A.; Yang, H.; Larsson, E.G.; Marzetta, T.L. Cell-free massive MIMO versus small cells. IEEE Trans. Wirel. Commun. 2017, 16, 1834–1850.

- Zhang, J.; Chen, S.; Lin, Y.; Zheng, J.; Ai, B.; Hanzo, L. Cell-free massive MIMO: A new next-generation paradigm. IEEE Access 2019, 7, 99878–99888.

- Interdonato, G.; Björnson, E.; Ngo, H.Q.; Frenger, P.; Larsson, E.G. Ubiquitous cell-free massive MIMO communications. EURASIP J. Wirel. Commun. Netw. 2019, 197.

- Bashar, M.; Cumanan, K.; Burr, A.G.; Ngo, H.Q.; Debbah, M. Cell-free massive MIMO with limited fronthaul. In Proceedings of the 2018 IEEE International Conference on Communications (ICC), Kansas City, MO, USA, 20–24 May 2018; pp. 1–7.

- Bashar, M.; Cumanan, K.; Burr, A.G.; Ngo, H.Q.; Larsson, E.G.; Xiao, P. On the energy efficiency of limited-fronthaul cell-free massive MIMO. In Proceedings of the 2019 IEEE International Conference on Communications (ICC), Shanghai, China, 20–24 May 2019; pp. 1–7.

- Femenias, G.; Riera-Palou, F. Cell-free millimeter-wave massive MIMO systems with limited fronthaul capacity. IEEE Access 2019, 7, 44596–44612.

- Frenger, P.; Hederen, J.; Hessler, M.; Interdonato, G. Improved Antenna Arrangement for Distributed Massive MIMO (2017). Patent Application WO2018103897. Available online: patentscope.wipo.int/search/en/WO2018103897 (accessed on 21 January 2020).

- Radio Stripes: Re-Thinking Mobile Networks. Available online: https://www.ericsson.com/en/blog/2019/2/radio-stripes (accessed on 21 January 2020).

- Willems, F. The discrete memoryless multiple access channel with partially cooperating encoders (corresp.). IEEE Trans. Inf. Theory 1983, 29, 441–445.

- El Gamal, A.; Zahedi, S. Capacity of a class of relay channels with orthogonal components. IEEE Trans. Inf. Theory 2005, 51, 1815–1817.

- Ghabeli, L.; Aref, M.R. A new achievable rate and the capacity of some classes of multilevel relay network. EURASIP J. Wirel. Commun. Netw. 2008, 2008, 135857.

- Kang, W.; Liu, N.; Chong, W. The Gaussian multiple access diamond channel. IEEE Trans. Inf. Theory 2015, 61, 6049–6059.

- Saeedi Bidokhti, S.; Kramer, G. Capacity bounds for diamond networks with an orthogonal broadcast channel. IEEE Trans. Inf. Theory 2016, 62, 7103–7122.

- El Gamal, A.; Aref, M. The capacity of the semideterministic relay channel (corresp.). IEEE Trans. Inf. Theory 1982, 28, 536.

- El Gamal, A.; Hassanpour, N.; Mammen, J. Relay networks with delays. IEEE Trans. Inf. Theory 2007, 53, 3413–3431.

- Zhang, P.; Willems, F.; Huang, L. Capacity study of distributed beamforming in relation to constrained backbone communication. In Proceedings of the 2014 International Symposium on Information Theory and its Applications, Melbourne, VIC, Australia, 26–29 October 2014; pp. 473–477.

- Golub, G.H.; Van Loan, C.F. Matrix Computations; The Johns Hopkins University Press: Baltimore, MD, USA, 2013.

- Cover, T.; Thomas, J. Elements of Information Theory; Wiley: New York, NY, USA, 2006.

- Mitrinović, D.; Vasić, P. Analytic Inequalities; Grundlehren der Mathematischen Wissenschaften; Springer: Berlin, Germany, 1970.

- Chatzigeorgiou, I. Bounds on the Lambert function and their application to the outage analysis of user cooperation. IEEE Commun. Lett. 2013, 17, 1505–1508.

- Corless, R.M.; Gonnet, G.H.; Hare, D.; Jeffrey, D.J.; Knuth, D.E. On the Lambert W function. Adv. Comput. Math. 1996, 5, 329.

- Abramowitz, M.; Stegun, I. Handbook of Mathematical Functions, with Formulas, Graphs, and Mathematical Tables; Dover Publications: Mineola, NY, USA, 1964.

- Gallager, R.G. Information Theory and Reliable Communication; John Wiley & Sons, Inc.: New York, NY, USA, 1968.

- Hoorfar, A.; Hassani, M. Inequalities on the Lambert W function and hyperpower function. J. Inequal. Pure Appl. Math. 2008, 9, 51.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Zhang, P.; Willems, F.M.J. On the Downlink Capacity of Cell-Free Massive MIMO with Constrained Fronthaul Capacity. Entropy 2020, 22, 418. https://doi.org/10.3390/e22040418
