Fractional Dynamics Identification via Intelligent Unpacking of the Sample Autocovariance Function by Neural Networks

Abstract

1. Introduction

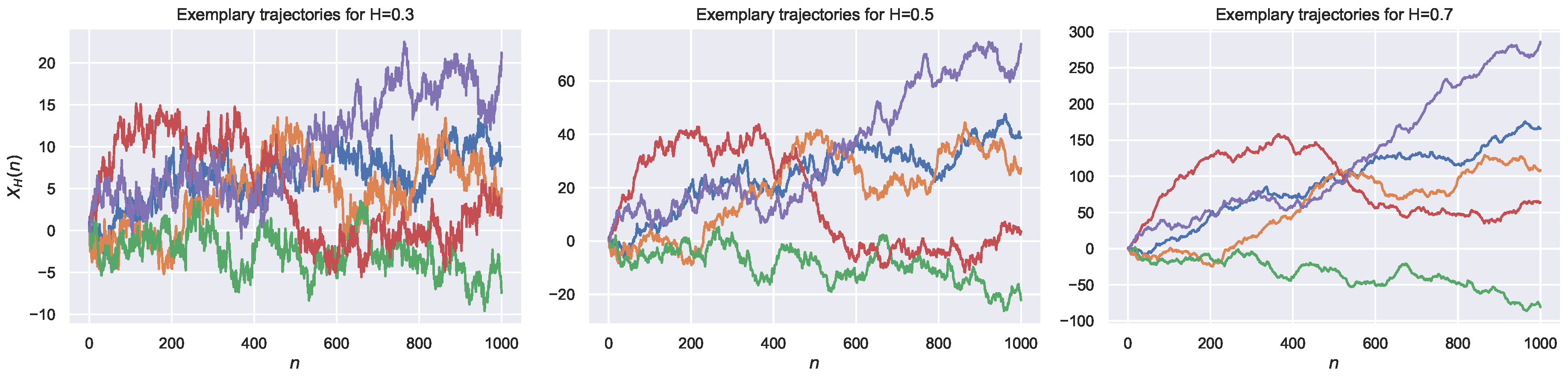

2. Fractional Brownian Motion

3. ACVF-Based Methods for the Estimation of the Hurst Exponent

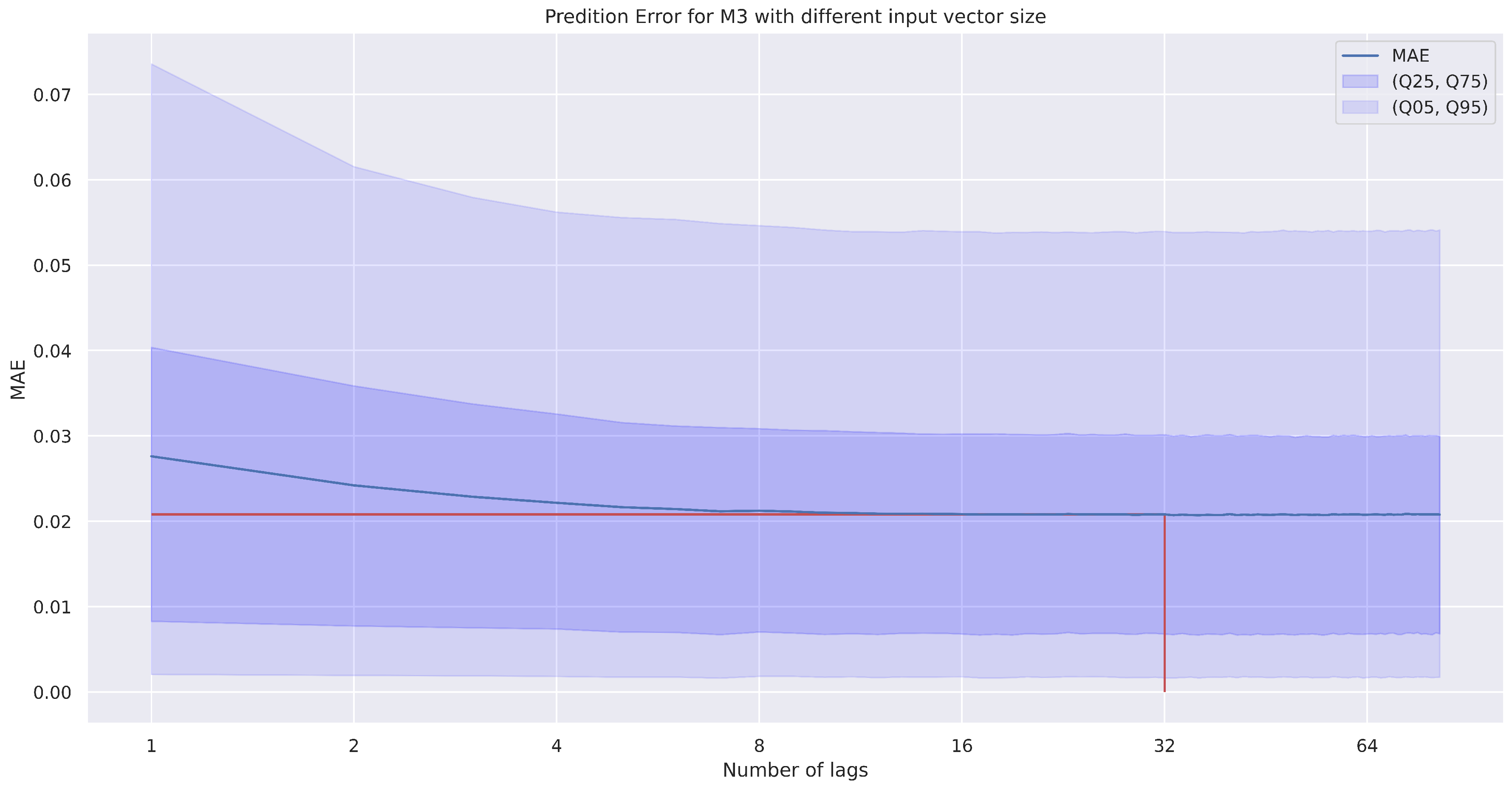

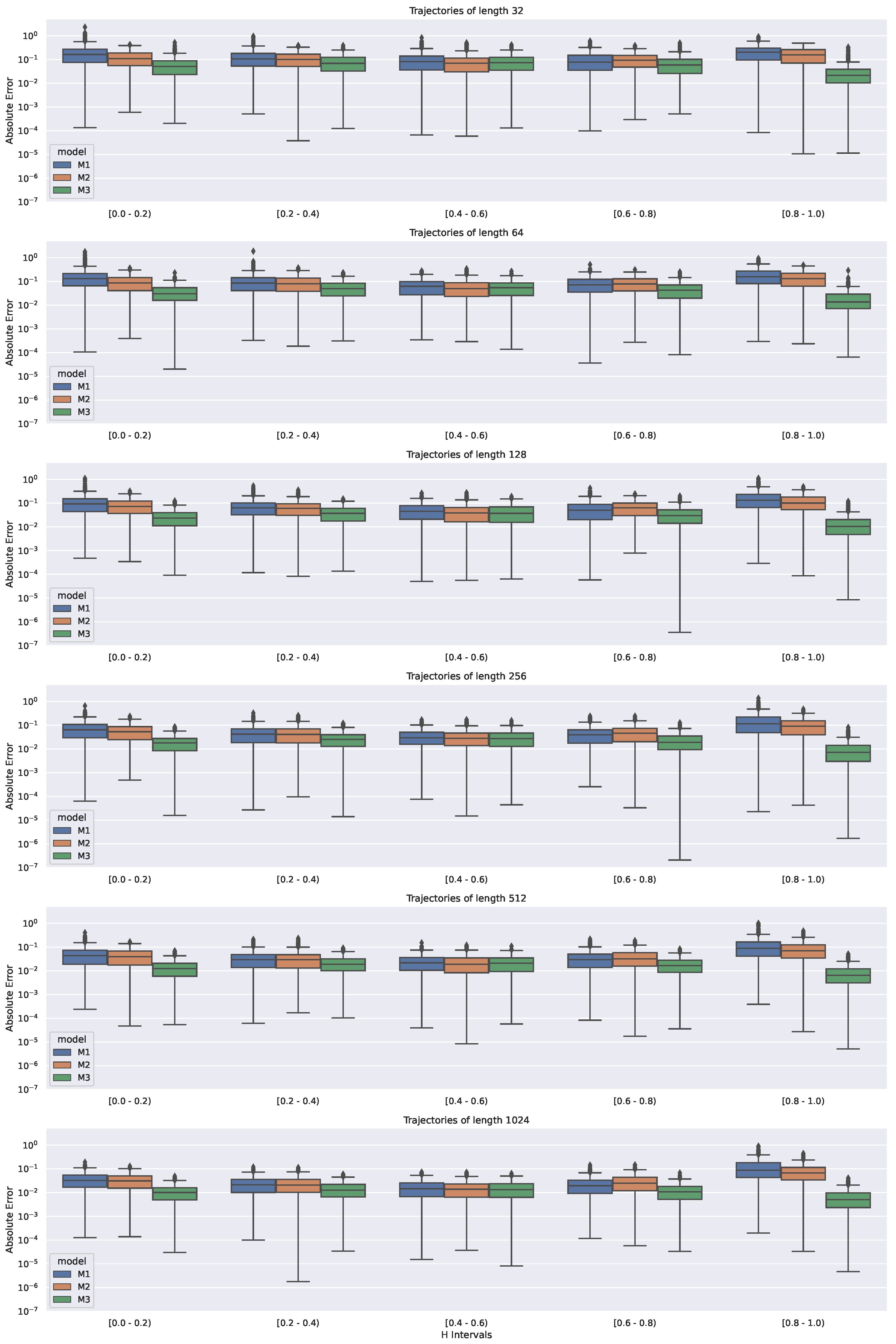

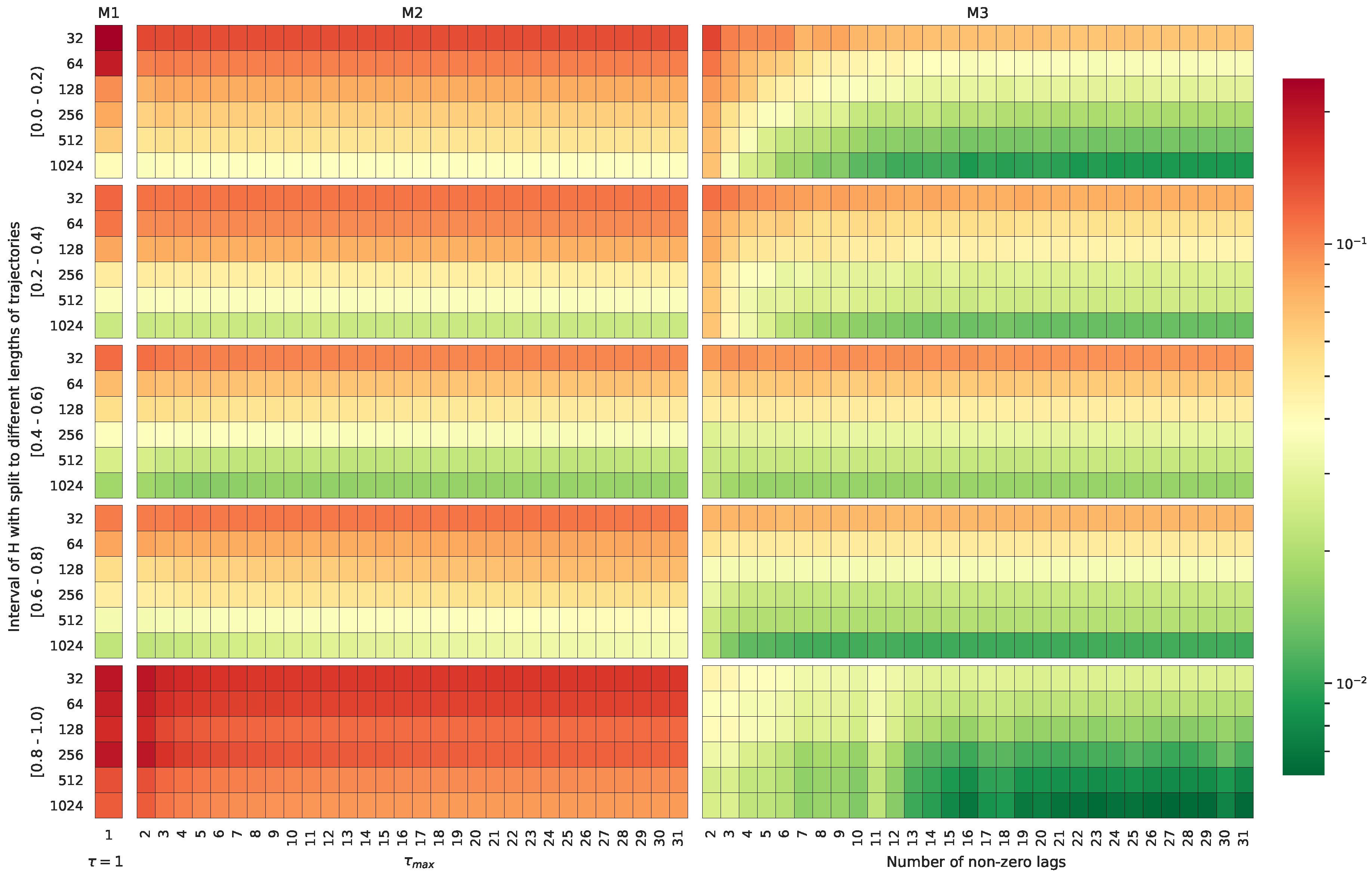

4. ACVF and NN-Based Methods for the Estimation of the Hurst Exponent

5. Simulation Study

6. Summary and Conclusions

Author Contributions

Funding

Conflicts of Interest

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Szarek, D.; Sikora, G.; Balcerek, M.; Jabłoński, I.; Wyłomańska, A. Fractional Dynamics Identification via Intelligent Unpacking of the Sample Autocovariance Function by Neural Networks. Entropy 2020, 22, 1322. https://doi.org/10.3390/e22111322
