1. Introduction

Integer-valued time series are relevant to many fields of knowledge, ranging from finance and econometrics to ecology and meteorology. An extensive number of models for this kind of data has been proposed since the introduction of the INAR(1) model in the pioneering works of McKenzie [

1] and Al-Osh and Alzaid [

2] (see also the book by Weiss [

3]). A higher-order INAR(

p) model was considered in the work of Du and Li [

4].

In this paper, we generalize the Bayesian version of the INAR(

p) model studied by Neal and Kypraios [

5]. In our model, the innovation rates are allowed to vary through time, with the distribution of the innovation rates being modeled hierarchically by means of a Pitman–Yor process [

6]. In this way, we account for potential heterogeneity in the innovation rates as the process evolves through time, and this feature is automatically incorporated in the Bayesian forecasting capabilities of the model.

The semi-parametric form of the model demands a robustness analysis of our inferential conclusions as we vary the hyperparameters of the Pitman–Yor process. We investigate this prior sensitivity issue carefully and find ways to control the hyperparameters in order to achieve robust results.

This paper is organized as follows. In

Section 2, we construct a generalized INAR(

p) model with variable innovation rates. The likelihood function of the generalized model is derived and a data augmentation scheme is developed, which gives a specification of the model in terms of conditional distributions. This data augmented representation of the model enables the derivation in

Section 4 of full conditional distributions in simple analytical form, which are essential for the stochastic simulations in

Section 5.

Section 3 recollects the main properties of the Pitman–Yor process which are used to define the PY-INAR(

p) model in

Section 4, including its clustering properties. In building the PY-INAR(

p), we propose a form for the prior distribution of the thinning parameters vector which improves on the choice made for the Bayesian INAR(

p) model studied in [

5]. In

Section 5, we investigate the robustness of the inference with respect to changes in the Pitman–Yor process hyperparameters. Using the full conditional distributions of the innovation rates derived in

Section 4, we inspect the behavior of the model as we concentrate or spread the mass of the Pitman–Yor base measure. This leads us to a graphical criterion that identifies an elbow in the posterior expectation of the number of clusters as we vary the hyperparameters of the base measure. Once we have control over the base measure, we study its interaction with the concentration and discount hyperparameters, showing how to make choices that yield robust results. In the course of this development, we use geometrical tools to inspect the clustering of the innovation rates produced by the model.

Section 6 puts the graphical criterion to work for simulated data. In

Section 7, using a time series of worldwide earthquake events, we finish the paper comparing the forecasting performance of the PY-INAR(

p) model against the original INAR(

p) model, with favorable results.

2. A Generalization of the INAR(p) Model

We begin by generalizing the original INAR(

p) model of Du and Li [

4] as follows.

Let

be an integer-valued time series, and, for some integer

, let the

innovations, given positive parameters

, be a sequence of conditionally independent

random variables. For a given vector of parameters

, let

be a family of conditionally independent and identically distributed

random variables. For

, suppose that

and

are conditionally independent, given

. Furthermore, assume that the innovations

and the families

are conditionally independent, given

and

. The generalized INAR(

p) model is defined by the functional relation

for

, in which ∘ denotes the binomial thinning operator, defined by

, if

, and

, if

. In the homogeneous case, when all the

’s are assumed to be equal, we recover the original INAR(

p) model.

When

, this model can be interpreted as specifying a birth-and-death process, in which, at epoch

t, the number of cases

is equal to the new cases

plus the cases that survived from the previous epoch; the role of the binomial thinning operator being to remove a random number of the

cases present at the previous epoch

(see [

7] for an interpretation of the order

p case as a birth-and-death process with immigration).

Let

denote the values of an observed time series. For simplicity, we assume that

with probability one. The joint distribution of

, given parameters

and

, can be factored as

Since, with probability one,

and

, the likelihood function of the generalized INAR(

p) model is given by

For some epoch

t and

, suppose that we could observe the values of the latent

maturations. Postulate that

, so that the conditional probability function of

is given by

Furthermore, suppose that

Using the law of total probability and the product rule, we have that

Since

and

we recover the original likelihood of the generalized INAR(

p), showing that the introduction of the latent maturations

with the specified distributions is a valid data augmentation scheme (see [

8,

9] for a general discussion of data augmentation techniques).

In the next section, we review the needed definitions and properties of the Pitman–Yor process.

3. Pitman–Yor Process

Let the random probability measure

be a Dirichlet process [

10,

11,

12] with concentration parameter

and base measure

. If the random variables

, given

, are conditionally independent and identically distributed as

G, then it follows that

for every Borel set

B. If we imagine the sequential generation of the

’s, for

, the former expression shows that a value is generated anew from

with probability proportional to

, or we repeat one the previously generated values with probability proportional to its multiplicity. Therefore, almost surely, realizations of a Dirichlet process are discrete probability measures, perhaps with denumerable infinite support, depending on the nature of

. Also, this data-generating process, known as the Pólya–Blackwell–MacQueen urn, implies that the

’s are “softly clustered”, in the sense that in one realization of the process the elements of a subset of the

’s may have exactly the same value.

The Pitman–Yor process [

6] is a generalization of the Dirichlet process which results in a model with added flexibility. Essentially, the Pitman–Yor process modifies the expression of the probability associated with the Pólya-Blackwell-MacQueen urn introducing a new parameter so that the posterior predictive probability becomes

in which

is the discount parameter,

,

k is the number of distinct elements in

, and

is the number of elements in

which are equal to

, for

. It is well known that

and

for every Borel set

B. Hence,

is centered on the base probability measure

, while

and

control the concentration of

around

. We use the notation

. When

, we recover the Dirichlet process as a special case. The PY process is also defined for

and

, for some positive integer

m. For our purposes, it is enough to consider the case of non-negative

.

Pitman [

6] derived the distribution of the number of clusters

K (the number of distinct

’s), conditionally on both the concentration parameter

and the discount parameter

, as

in which

is the rising factorial and

is the generalized factorial coefficient [

13].

In the next section, we use a Pitman–Yor process to model the distribution of the innovation rates in the generalized INAR(p) model.

4. PY-INAR(p) Model

The PY-INAR(

p) model is as a hierarchical extension of the generalized INAR(

p) model defined in

Section 2. Given a random measure

, in which

is a

distribution, let the innovation rates

be conditionally independent and identically distributed with distribution

.

To complete the PY-INAR(

p) model, we need to specify the form of the prior distribution for the vector of thinning parameters

. By comparison with standard results from the theory of the AR(

p) model [

14], Du and Li [

4] found that in the INAR(

p) model the constraint

must be fulfilled to guarantee the non-explosiveness of the process. In their Bayesian analysis of the INAR(

p) model, Neal and Kypraios [

5] considered independent beta distributions for the

’s. Unfortunately, this choice is problematic. For example, in the particular case when the

’s have independent uniform distributions, it is possible to show that

, implying that we would be concentrating most of the prior mass on the explosive region even for moderate values of the model order

p. We circumvent this problem using a prior distribution for

that places all of its mass on the nonexplosive region and still allows us to derive the full conditional distributions of the

’s in simple closed form. Specifically, we take the prior distribution of

to be a Dirichlet distribution with hyperparameters

, and corresponding density

in which

, for

, and

.

Let

:

,

denote the set of all maturations, and let

be the distribution of

. Our strategy to derive the full conditionals distributions of the model parameters and latent variables is to consider the marginal distribution

From this expression, using the results in

Section 3, the derivation of the full conditional distributions is straightforward. In the following expressions, the symbol ∝ denotes proportionality up to a suitable normalization factor, and the label “all others” designate the observed counts

y and all the other latent variables and model parameters, with the exception of the one under consideration.

Let

denote the set

with the element

removed. Then, for

, we have

in which the weight

is the number of elements in

which are equal to

, and

is the number of distinct elements in

. In this mixture, we suppressed the normalization constant that makes all weights add up to one.

Making the choice

, we have

for

, in which TBeta denotes the right truncated Beta distribution with support

.

For the latent maturations, we find

To explore the posterior distribution of the model, we build a Gibbs sampler [

15] using these full conditional distributions. Escobar and West [

16] showed, in a similar context, that we can improve mixing by resampling simultaneously the values of all

’s inside the same cluster at the end of each iteration of the Gibbs sampler. Letting

be the

k unique values among

, define the number of occupants of cluster

j by

, for

. It follows that

for

. At the end of each iteration of the Gibbs sampler, we update the values of all

’s inside each cluster by the corresponding

using this distribution.

5. Prior Sensitivity

As it is often the case for Bayesian models with nonparametric components, a choice of the prior parameters for the PY-INAR(

p) model which yields robustness of the posterior distribution is nontrivial [

17].

The first aspect to be considered is the fact that the base measure

plays a crucial role in the determination of the posterior distribution of the number of clusters

K. This can be seen directly by inspecting the form of the full conditional distributions derived in

Section 4. Recalling that

is a gamma distribution with mean

and variance

, from the full conditional distribution of

one may note that the probability of generating, on each iteration of the Gibbs sampler, a value for

anew from

is proportional to

Therefore, supposing that all the other terms are fixed, if we concentrate the mass of around zero by making , this probability decreases to zero. This is not problematic, because it is hardly the case that we want to make such a drastic choice for . The behavior in the other direction is more revealing, since taking , in order to spread the mass of , also makes the limit of this probability to be zero. Due to this behavior, we need to establish a criterion to choose the hyperparameters of the base measure which avoids these extreme cases.

In our analysis, it is convenient to have a single hyperparameter regulating how the mass of

is spread over its support. For a given

, we find numerically the values of

and

which minimize the Kullback-Leibler divergence between

and a uniform distribution on the interval

. This Kullback-Leibler divergence can be computed explicitly as

In this new parameterization, our goal is to make a sensible choice for . It is worth emphasizing that by this procedure we are not truncating the support of , but only using the uniform distribution on the interval as a reference for our choice of the base measure hyperparameters and .

Our proposal to choose

goes as follows. We fix some value

for the discount parameter and choose an integer

as the prior expectation of the number of clusters

K, which, using the results at the end of

Section 3, can be computed explicitly as

in which

is the digamma function (see [

6] for a derivation of this result). Next, we find the value of the concentration parameter

by solving

numerically. After this, for each

in a grid of values, we run the Gibbs sampler and compute the posterior expectation of the number of clusters

. Finally, in the corresponding graph, we look for the value of

located at the “elbow” of the curve, that is, the value of

at which the values of

level off.

6. Simulated Data

As an explicit example of the graphical criterion in action, we used the functional form of a first-order model with thinning parameter

to simulate a time series of length

, for which the distribution of the innovations is a symmetric mixture of three Poisson distributions with parameters 1, 8, and 15.

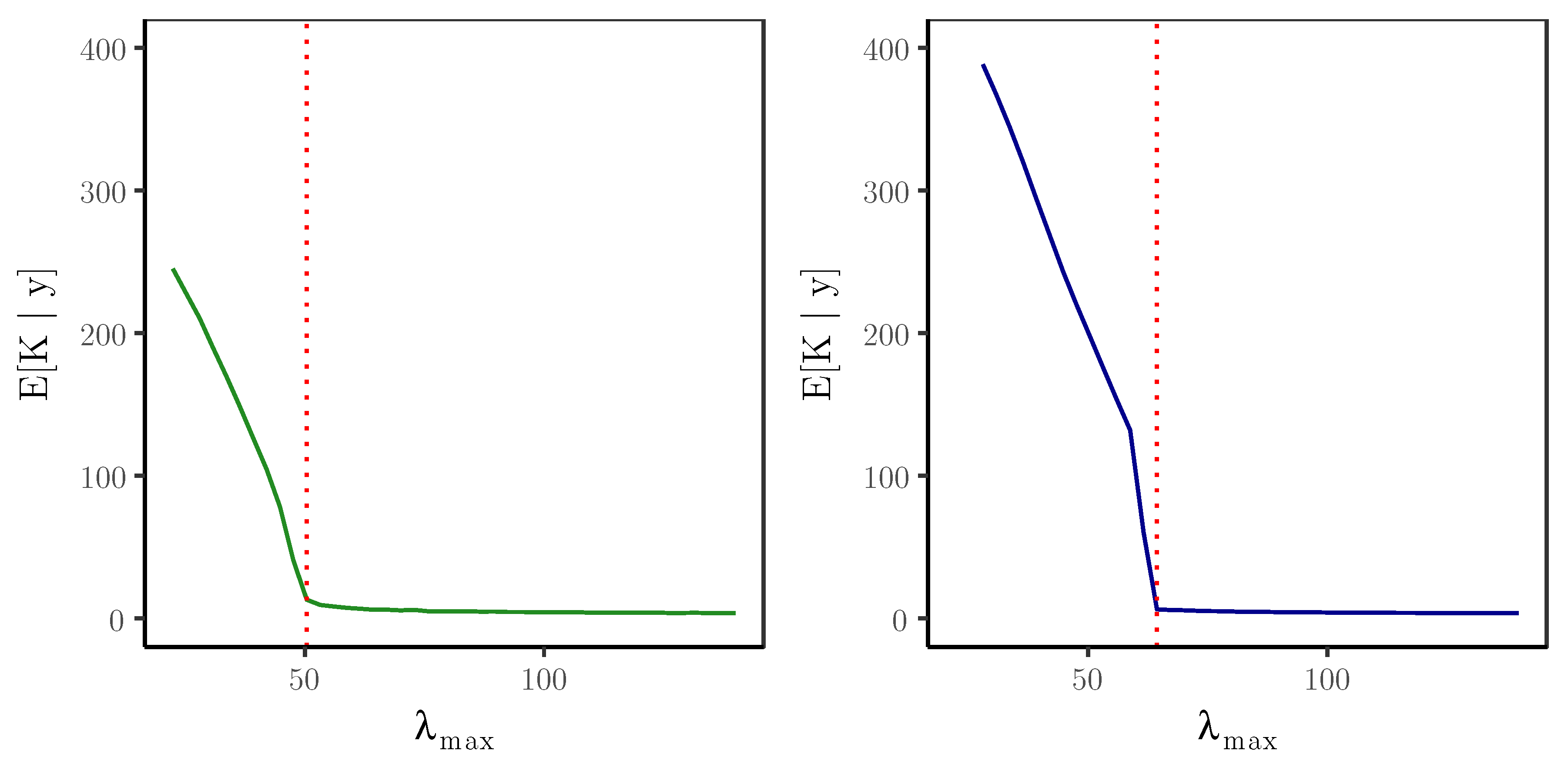

Figure 1 shows the formations of the elbows for two values of the discount parameter:

and

.

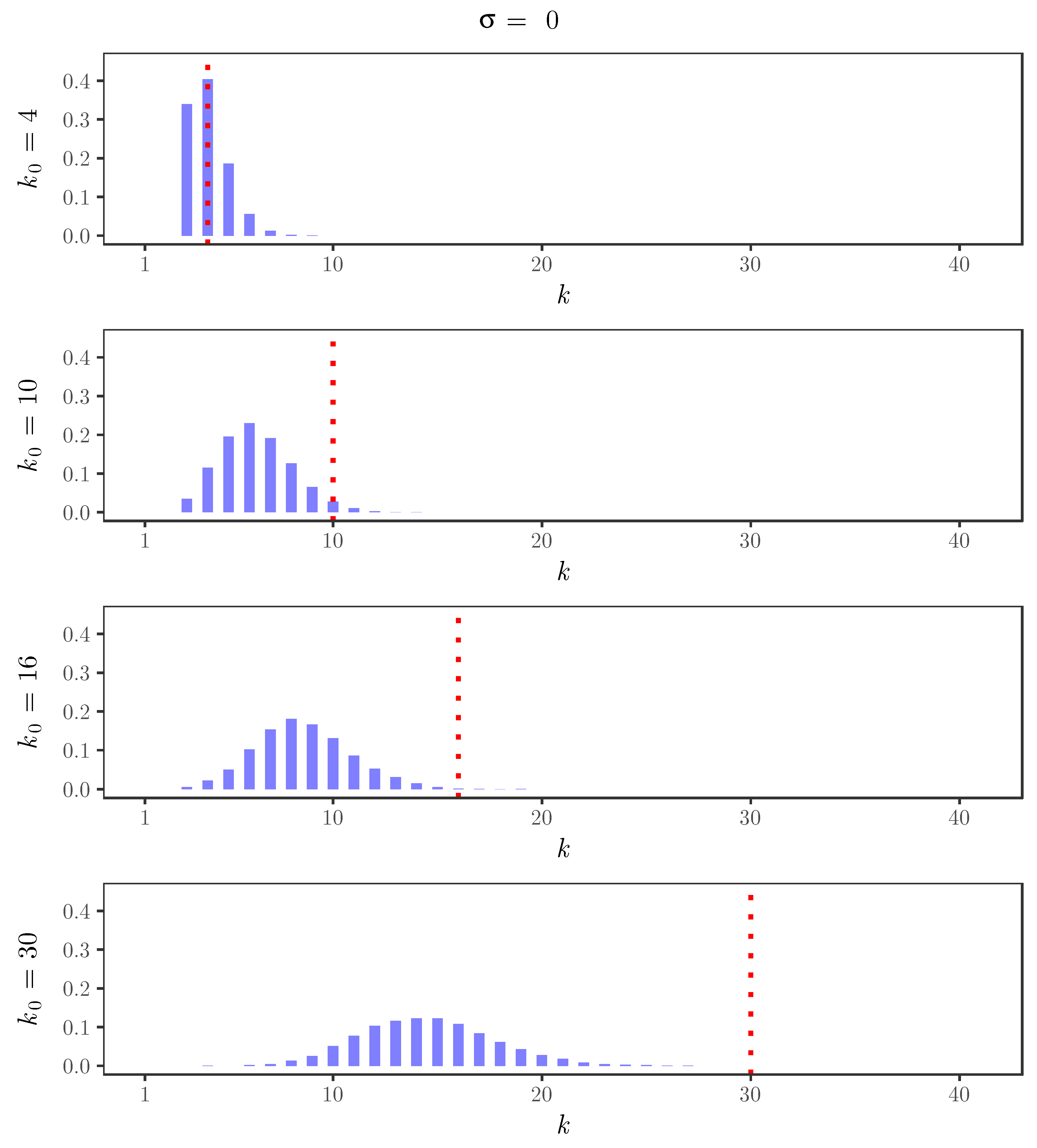

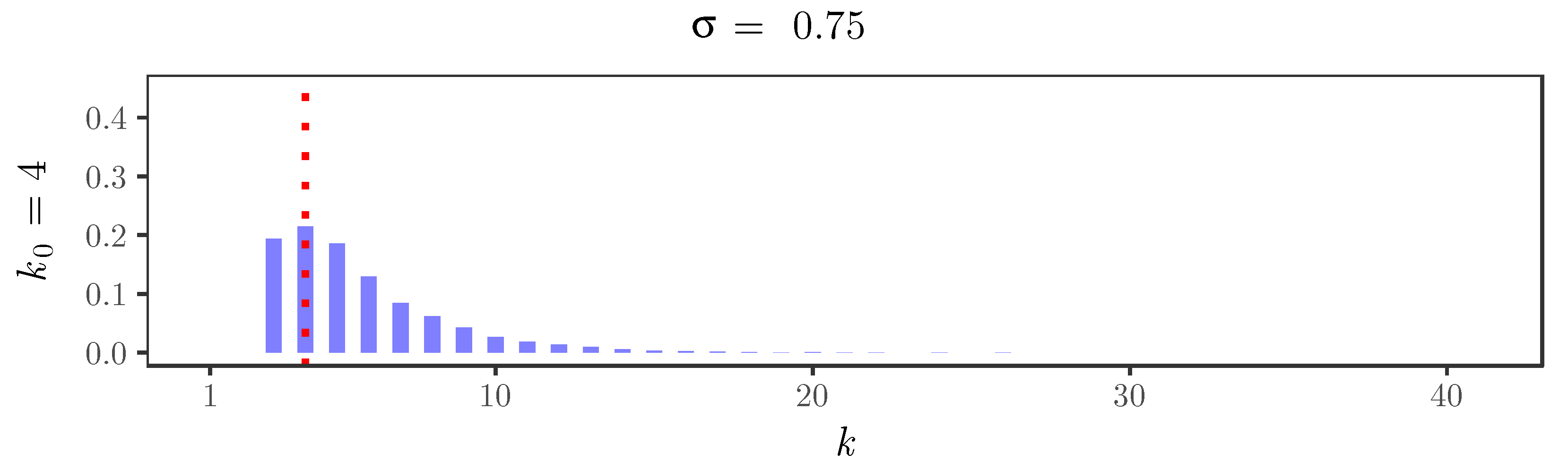

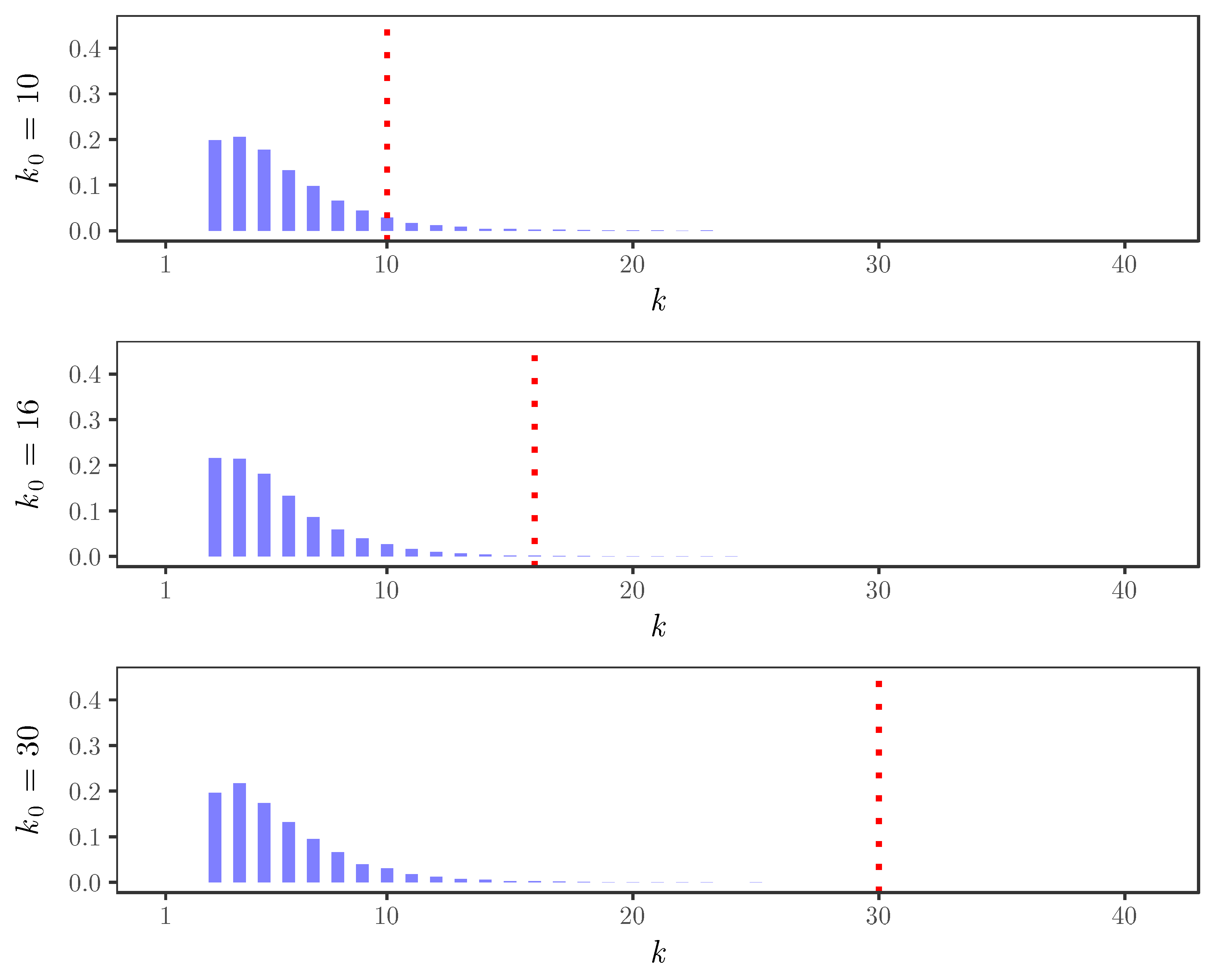

For the simulated time series,

Figure 2,

Figure 3,

Figure 4 and

Figure 5 display the behavior of the posterior distributions obtained using the elbow method for

. These figures make the relation between the choice of the value of the discount parameter

and the achieved robustness of the posterior distribution quite explicit: as we increase the value of the discount parameter

, the posterior becomes insensitive to the choice of

. In particular, for

, the posterior mode is always near 3, which is the number of components used in the distribution of the innovations of the simulated time series.

Once we understand the influence of the prior parameters on the robustness of the posterior distribution, an interesting question is how to get a point estimate for the distribution of clusters, in the sense that each , for , would be assigned to one of the available clusters.

From the Gibbs sampler, we can easily get a Monte Carlo approximation for the probabilities

, for

. These probabilities define a dissimilarity matrix

among the innovation rates. Although

D is not a distance matrix, we can use it as a starting point to represent the innovation rates in a two-dimensional Euclidean space using the technique of metric multidimensional scaling (see [

18] for a general discussion). From this two-dimensional representation, we use hierarchical clustering techniques to build a dendrogram, which is appropriately cut in order to define three clusters, allowing us to assign a single cluster label to each innovation rate.

Table 1 displays the confusion matrix of this assignment, showing that

of the innovations were grouped correctly in the clusters which correspond to the mixture components used to simulate the time series.

7. Earthquake Data

The forecasting performances of the INAR(p) and the PY-INAR(p) models are compared using a cross-validation procedure in which the models are trained with data ranging from the beginning of the time series up to a certain time, and predictions are made for epochs outside this training range.

Using this cross-validation procedure, we trained the INAR(

p) and the PY-INAR(

p) models with orders

, and made one-step-ahead predictions.

Table 2 shows the out-of-sample mean absolute errors (MAE) for the INAR(

p) and the PY-INAR(

p) models. In this table, the MAE’s are computed predicting the counts for the last 36 months. For the three model orders, the PY-INAR(

p) model yields a smaller MAE than the original INAR(

p) model.