Abstract

We describe a classifier made of an ensemble of decision trees, designed using information theory concepts. In contrast to algorithms C4.5 or ID3, the tree is built from the leaves instead of the root. Each tree is made of nodes trained independently of the others, to minimize a local cost function (information bottleneck). The trained tree outputs the estimated probabilities of the classes given the input datum, and the outputs of many trees are combined to decide the class. We show that the system is able to provide results comparable to those of the tree classifier in terms of accuracy, while it shows many advantages in terms of modularity, reduced complexity, and memory requirements.

1. Introduction

Supervised classification is at the core of many modern applications of machine learning. The history of classifiers is rich and many variants have been proposed, such as decision trees, logistic regression, Bayesian networks, and neural networks (for an overview of general methods, see [1,2,3]). Despite the power of modern deep learning, for many problems involving categorical structured datasets, decision trees [4,5,6,7] or Bayesian networks [8,9,10] usually outperform neural network based approaches.

Decision trees are particularly interesting because they can be easily interpreted. Various types of tree classifiers can be discriminated according to the metric for the iterative construction and selection of features [4]: popular tree classifiers are based on information theoretic metrics, such as ID3 and C4.5 [6,7]. However, it is known that the greedy splitting procedure at each node can be sub-optimal [11], and that decision trees are prone to overfitting when dealing with small datasets. When a classifier is not strong enough, there are, roughly speaking, two possibilities: choosing a more sophisticated classifier or ensembling multiple “weak” classifiers [12,13]. This second approach is usually called the ensemble method. In the performance tradeoff by using multiple classifiers simultaneously, we improve classification performance, paying with the loss of interpretability.

The so-called “information bottleneck”, described by Tishby and Zaslavsky [14] and Tishby et al. [15], was proposed in [16] to build a classifier (Deep Information Network, DIN) with a tree topology that compresses the input data and generates the estimated class. DINs [16] are based on the so-called information node that, using the input samples of a feature , generates samples of a new feature , according to the conditional probabilities obtained by minimizing the mutual information , with the constraint of a given mutual information between and the target/class Y (information bottleneck [14]). The outputs of two or more nodes are combined, without information loss, to generate samples of a new feature passed to a subsequent information node. The final node (root) directly outputs the class of each input datum. The tree structure of the network is thus built from the leaves, whereas C4.5 and ID3 build it from the root.

We here propose an improved implementation of the DIN scheme in [16] that only requires the propagation through the tree of small matrices containing conditional probabilities. Notice that the previous version of the DIN was stochastic, while the one we propose here is deterministic. Moreover, we use an ensemble (e.g., [12,13]) of trees with randomly permuted features and weigh their outputs to improve classification accuracy.

The proposed architecture has several advantages in terms of:

- extreme flexibility and high modularity: all the nodes are functionally equivalent and with a reduced number of inputs and outputs, which gives good opportunities for a possible hardware implementation;

- high parallelizability: each tree can be trained in parallel with the others;

- memory usage: we need to feed the network with data only at the first layer and simple incremental counters can be used to estimate the initial probability mass distribution; and

- training time and training complexity: the locality of the computed cost function allows a nodewise training that does not require any kind of information from other points of the tree apart from its feeding nodes (that are usually a very small number, e.g., 2–3).

With respect to the DINs in [16], the main difference is that samples of the random variables in the inner layers of the tree are never generated, which is an advantage in the case of large datasets. However, an assumption of statistical independence (see Section 2.3) is necessary to build the probability matrices and this might be seen as a limitation of the newly proposed method. However, experimental results (see Section 5) show that this approximation does not compromise the performance.

We underline similarities and differences of the proposed classifier with respect to the methods described in [6,7] since they are among the best performing ones. When using decision trees, as well as DINs, categorical and missing data are easily managed, but continuous random variables are not: quantization of these input features is necessary in a pre-processing phase, and it can be performed as in C4.5 [6], using other heuristics, or manually. Concerning differences, instead, the first one is that normally a hierarchical decision tree is built starting from the root and splitting at each node, whereas we here propose a way to build a tree starting from the leaves. The topology of our network implies that, once the initial ordering of the features has been set, there is no need, after each node is trained, to perform a search of the best possible next node. The second important difference is that we do not use directly mutual information as a metric for building the tree but we base our algorithm on the Information Bottleneck principle [14,15,17,18,19,20,21]. This allows us to extract all the relevant information (the sufficient statistic) while removing the redundant one, which is helpful in avoiding overfitting. As in [12,13], we use an ensemble method. We choose the simplest possible form of ensemble combination: we train independently many structurally equivalent networks, using the same single dataset but permuting the order of the features, and produce a weighted average of the outputs based on a simple rule described in Section 3.1. Notice that we use a one-shot procedure, i.e., we do not iterate more than once over the entire dataset and exploit techniques similarly to [22,23]. We leave the study of more sophisticated techniques to future works.

2. The DIN Architecture and Its Training

The information network is made of input nodes (Section 2.1), information nodes (Section 2.2), and combiners joined together through a tree network described in Section 2.3. Moreover, an ensemble of trees is built, based on which the final estimated class is produced (Section 3.1). In [16], the input nodes are not present, the information node has a slightly different role, the combiners are much simpler than those described here, and just one tree was considered. As already stated, the new version of the DIN is more efficient when a large dataset with relatively few features is analyzed.

In the following, it is assumed that all the features take a finite number of discrete values; a case of continuous random variables is discussed in Section 5.2.

It is also assumed that points are used in the training phase, points in the testing phase, and that D features are present. The nth training point corresponds to one of possible classes.

2.1. The Input Node

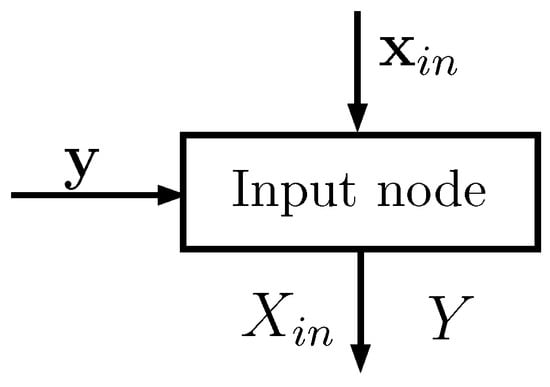

Each input node (see Figure 1) has two input vectors:

Figure 1.

Schematic representation of an input node: the inputs are two vectors and the outputs are matrices that statistically describe the random variables and Y.

- of size , whose elements take values in a set of cardinality ; corresponds to one of the D features of the dataset (typically one column)

- of size , whose elements take values in a set of cardinality ; corresponds to the known classes of the points

The notation we use in the equations below is the following: represent random variables; and are the nth elements of vectors and , respectively; and is equal to 1 if c is true, and is otherwise equal to 0. Using Laplace smoothing [2], the input node estimates the following probabilities (the probability mass function of Y in Equation (1) is common to all the input nodes: it can be evaluated only by the first one and passed to the others):

From basic application of probability rules, and are then computed. From now on, for simplicity, we denote all the estimated probabilities simply as P.

All the above probabilities can be organized in matrices defined as follows:

Note that vectors and are not needed by the subsequent elements in the tree; only the input nodes have access to them.

Notice also that the following equalities hold:

2.2. The Information Node

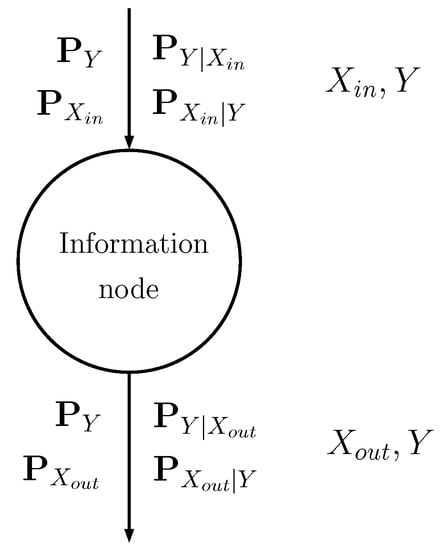

The information node is schematically shown in Figure 2: the input discrete random variable is stochastically mapped into another discrete random variable (see [16] for further details) through probability matrices:

Figure 2.

Schematic representation of an information node, showing the input and output matrices.

- The input probability matrices describe the input random variable , with possible values, and its relationship with class Y.

- The output matrices describe the output random variable , with possible values, and its relationship with Y.

Compression (source encoding) is obtained by setting .

In the training phase, the information node generates the conditional probability mass function that satisfies the following equation (see [14]):

where

- is the probability mass function of the output random variable

- is the Kullback–Leibler divergenceand

- is a real positive parameter.

- is a normalizing coefficient to get

The probabilities can be iteratively found using the Blahut–Arimoto algorithm [14,24,25].

Equation (10) solves the information bottleneck: it minimizes the mutual information under the constraint of a given mutual information . In particular, Equation (10) is the solution of the minimization of the Lagrangian

If the Lagrangian multiplier is increased, then the constraint is privileged and the information node tends to maximize the mutual information between its output and the class Y; if is reduced, then minimization of is obtained (compression). The information node must actually balance compression from to and propagation of the information about Y. In our implementation, the compression is also imposed by the fact that the cardinality of the output alphabet is smaller than that of the input alphabet .

The role of the information node is thus that of finding the conditional probability matrices

2.3. The Combiner

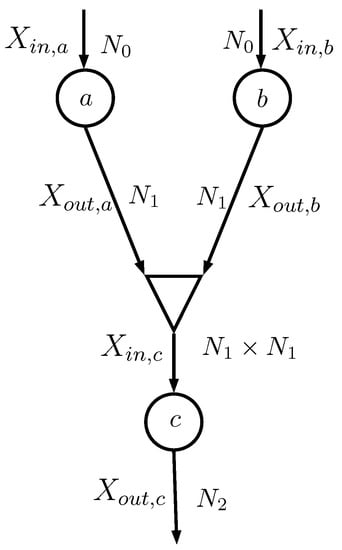

Consider the case depicted in Figure 3, where the two information nodes a and b feed a combiner (shown as a triangle) that generates the input of the information node c. The random variables and , both having alphabet with cardinality , are combined together as

that has an alphabet with cardinality .

Figure 3.

Sub-network: , , , , , and are all random variables; is the number of values taken by and ; is the number of values taken by and ; and is the number of values taken by .

The combiner actually does not generate ; it simply evaluates the probability matrices that describe and Y. In particular, the information node c needs , which can be evaluated assuming that and are conditionally independent given Y (notice that in implementation [16] this assumption was not necessary):

where . In particular, the mth row of is the Kronecker product of the mth rows of and

(here identifies the mth row of matrix ). The probability vector can be evaluated considering that

so that

At this point, matrix can be evaluated element by element since

It is straightforward to extend the equations to the case in which and have different cardinalities.

2.4. The Tree Architecture

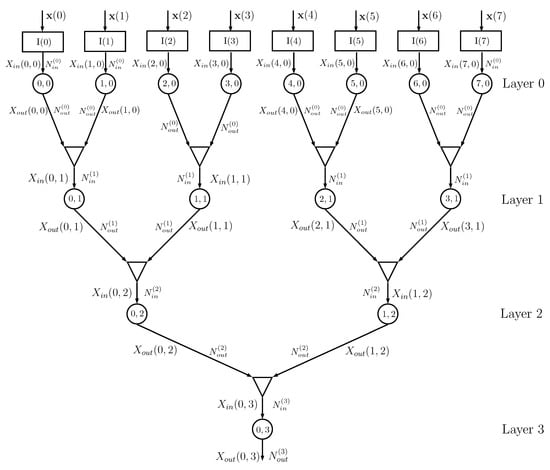

Figure 4 shows an example of a DIN, where we assume that the dataset has features and that training is thus obtained using a matrix with rows and columns, with a corresponding class vector . The kth column of matrix feeds, together with vector , the input node , .

Figure 4.

Example of a DIN for : the input nodes are represented as rectangles, the info nodes as circles, and the combiners as triangles. The numbers inside each circle identify the node (position inside the layer and layer number), is the number of values taken by the input of the info node at layer k, and is the number of values taken by the output of the info node at layer k. In this example, the info nodes at a given layer all have the same input and output cardinalities.

Information node at layer 0 processes the probability matrices generated by the input node , with possible values of , and evaluates the conditional probability matrices with possible values of , using the algorithm described in Section 2.2. The outputs of info nodes and are given to a combiner that outputs the probability matrices for , having alphabet of cardinality , using the equations described in Section 2.3. The sequence of combiners and information nodes is iterated, decreasing the number of information nodes from layer to layer, until the final root node is obtained. In the previous implementation of the DINs in [16], the root information node outputs the estimated class of the input and it is therefore necessary that the output cardinality of the root info node is equal to . In the current implementation, this cardinality can be larger than , since classification is based on the output probability matrix .

For a number of features , the number of layers is d. If D is not a power of 2, then it is possible to use combiners with 3 or more inputs (the changes in the equations in Section 2.3 are straightforward, since a combiner with three inputs can be seen as two cascaded combiners with two inputs each).

The overall binary topology proposed in Figure 4 requires a number of information nodes equal to

and a number of combiners equal to

All the info nodes run exactly the same algorithm and all the combiners are equal, apart from the input/output alphabet cardinalities. If the cardinalities of the alphabets are all equal, i.e., and do not depend on the layer i, then all the nodes and all the combiners are exactly equal, which might help in a possible hardware implementation; in this case, the number of parameters of the network is .

Actually, the network performance depends on how the features are coupled in subsequent layers and a random shuffling of the columns of matrix provides results that might be significantly different. This property is used in Section 3.1 for building the ensemble of networks.

2.5. A Note on Computational Complexity and Memory Requirements

The modular structure of the proposed method has several advantages in terms of both memory footprint and computational cost. The considered topology in this explanation is binary, similarly to what is depicted in Figure 4. We furthermore consider for simplicity cardinalities of the D input features all equal to and input/output cardinalities of subsequent layers information node to also be fixed constants and , respectively. As we show in the experiment (Section 5), small values for and such as 2, 3, or 4 are sufficient in the considered cases. Straightforward generalizations are possible when considering inhomogeneous cases.

At the first layer (the input node layer), each of the D input nodes stores the joint probabilities of the target variable Y and its input feature. Each node thus includes a simple counter that fills the probability matrix of dimension . Both the computational cost and the memory requirements for this first stage are the same as the Naive Bayes algorithm. Notice that, from the memory requirements point of view, it is not necessary to store all the training data but just counters with number of joint occurrences of features/classes. If after training, new data are observed, it is in fact sufficient to update the counters and properly renormalize the values to obtain the updated probability matrices. In this paper, we do not cover the topic of online learning as well as possible strategies to reduce the computational complexity in such a scenario.

At the second layer (the first information node layer), each node receives as input the joint probability matrix of feature and target variable and performs the Blahut–Arimoto algorithm. The internal memory requirement of this node is the space needed to store two probability matrices of dimensions and , respectively. The cost per iteration of Blahut–Aritmoto depends on matrix multiplication of sizes and , and thus obviously the complexity scales with the number of classes of the considered classification problem. To the best of our knowledge, the convergence rate for the Blahut–Arimoto algorithm applied to information bottleneck problems is unknown. In this study, however, we found empirically that, for the considered datasets, 5–6 iterations per node are sufficient, as discussed in Section 5.5.

Each combiner process the matrices generated by two information nodes: the memory requirement is zero and the computational cost is roughly Kronecker products between rows of probability matrices. Since for ease of explanation we chose the output probability matrix have again dimensions .

The overall memory requirement and computational complexity (for a single DIN) is thus going to scale as D times the requirements for an input node, times the requirements for an information node, and times the requirements for a combiner. To complete the discussion, we have to remember that a further multiplication factor of is required to take into account that we are considering an ensemble of networks (actually, at the first layer, the set of input nodes can be shared by the different architectures since only the relative position of the input nodes changes, see Section 3.1).

3. The Running Phase

During the running phase, the columns of matrix with N rows and D columns are used as inputs. Assume again that the network architecture is that depicted in Figure 4 with , and consider the nth input row .

In particular, assume that and . Then,

- (a)

- input node passes value i to info node ;

- (b)

- input node passes value j to info node ;

- (a)

- info node passes the probability vector (ith row) to the combiner; stores the conditional probabilities for ;

- (b)

- info node passes the probability vector (jth row) to the combiner; stores the conditional probabilities for ;

- the combiner generates vectorwhich stores the conditional probabilities for , where ;

- info node generates the probability vectorwhich stores the conditional probabilities for

- in the following layer, each combiner performs the Kronecker product of its two input vectors and each info node performs the product between the input vector and its conditional probability matrix ;

- the root information node at Layer 3, having the input vector , outputswhich stores the estimated probabilities for .According to the MAP criterion, the estimated class of the input point isbut we propose to use an improved method, as described in Section 3.1.

3.1. The DIN Ensemble

At the end of the training phase, when all the conditional matrices have been generated in each information node and combiner, the network is run with input matrix ( rows and D columns) and the probability vector is obtained for each input point . As anticipated at the end of Section 2.4, the DIN classification accuracy depends on how the input features are combined together. By permuting the columns of , a different probability vector is typically obtained. We thus propose to generate an ensemble of DINs by randomly permuting the columns of , and then combine their outputs.

Since in the training phase is known, it is possible to get for each DIN v the probability , and ideally , the estimated probability corresponding to the true class , should be equal to one. The weights

thus represent the reliability of the vth DIN.

In the running phase, feeding the machines each with the correctly permuted vector , the final estimated probability vector is determined as

and the estimated class is

4. The Probabilistic Point of View

This section is intended to underline the difference in probability terms formulation between the Naive Bayes classifier [2,26] and the proposed scheme, since both use the assumption of conditional independence of the input features. Both classifiers build in a simplified way the probability matrix with rows and , where is the cardinality for the input feature . In the next sections, we show the different structure of these two probability matrices.

4.1. Assumption of Conditionally Independent Features

The Naive Bayes assumption allows writing the output estimated probability of the Naive Bayes classifier as follows:

which is very easily implemented, without the need of generating the tree network. We rewrite this output probability in a fairly complex way to show the difference between the naive Bayes probability matrix and the DIN one. Consider the nth feature , which can take values in the set . Define ; then,

and thus obviously

We can write the joint probability matrix as

and the probability matrix of target class given observation as

The hypothesis of conditional statistical independence of the features is not always correct and thus we can incur obvious performance degradation.

4.2. The Overall Probability Matrix

We now instead compute the output estimated probability for the DIN classifier. Consider again the sub-network in Figure 3 made of info nodes a, b, and c. Info node a is characterized by matrix , whose element is ; similar definitions hold for and . Note that and have rows and columns, whereas has rows and columns; the overall probability matrix between the inputs , and the output is with rows and columns. Then,

It can be shown that

where ⊗ identifies the Kronecker matrix multiplication; note that has rows and columns. By iteratively applying the above rule, we can get the expression of the overall matrix for the exact topology of Figure 4, with eight input nodes and four layers:

The overall output probability matrix can finally be computed as

The DIN then behaves as a one-layer system that generates the output according to matrix , whose size might be impractically large. It is also possible to interpret the system as a sophisticated way of factorizing and approximating the exponentially large true probability matrix. In fact, the proposed layered structure needs smaller probability matrices, which makes the system computationally efficient. The equivalent probability matrix is thus different in the DIN (Equation (42)) and Naive Bayes (Equation (38)) cases.

5. Experiments

In this section, we analyze the results obtained with benchmark datasets. In particular, we consider the DIN ensemble when: (a) each DIN is based on the probability matrices (the scheme described in this paper); and (b) each information node of the DIN randomly generates the symbols, as described in the previous work [16]. We refer to these two variants in captions and labels as DIN(Prob) and DIN(Gen), respectively. The reason for this comparison is that conditional statistical independence is not required in the case DIN(Gen), and the classification accuracy could be different in the two cases. Note that Franzese and Visintin [16] considered just one DIN, not an ensemble of DINs. In the following, we introduce three datasets on which we tested the method (Section 5.1, Section 5.2 and Section 5.3) and propose some examples of DINs architectures. Complete analysis of numerical results is described in Section 5.4. Section 5.5 and Section 5.6 analyze the impact of changing the maximum number of iterations of Blahut–Arimoto algorithm and Lagrangian coefficient , respectively. Finally, a synthetic multiclass experiment is described in Section 5.7. In all experiments, the value of was optimized similarly to what is described in Section 5.6 using the training set.

5.1. UCI Congressional Voting Records Dataset

The first experiment on real data was conducted on the UCI Congressional Voting Records dataset [27], which collects the votes given by each of the U.S. House of Representatives Congressmen on 16 key laws (in 1985). Each vote can take three values corresponding to (roughly, see [27] for more details) yes, no, and missing value; each datum belongs to one of two classes (Democrats or Republican). The aim of the network is, given the list of 16 votes, decide if the voter is Republican or Democratic. In this dataset, we thus have features and 435 data split into data for training and data for testing. The architecture of the used network is the same as the one described in Section 2.4, except for the fact that there are 16 input features instead of 8 (the network has thus one more layer). The input cardinality in the first layer is (yes/no/missing) and the output cardinality is set to . From the second layer on, the input cardinality for each information node is equal to and . In the majority of the cases, the size of the probability matrices is therefore or . In this example, we used and (roughly 50% of the data). The value of was set to 2.2.

5.2. UCI Kidney Disease Dataset

The second considered dataset was the UCI Kidney Disease dataset [28]. The dataset has a total of 24 medical features, consisting of mixed categorical, integer, and real values, with missing values. Quantization of non-categorical features of the dataset was performed according to the thresholds in Appendix A, agreed upon by a medical doctor.

The aim of the experiment is to correctly classify patients affected by chronic kidney disease. We performed 100 different trials training the algorithms using only out of 400 samples for the training. Layer zero has 24 input nodes, and then the outputs of layer zero are mixed two at a time to get 12 information nodes at Layer 1, 6 at Layer 2, and 3 at Layer 3; the last three nodes are combined into a unique final node. The output cardinality of all nodes is equal to . The value of was set equal to 5.6. In addition, in this case, we used an ensemble of DINs.

5.3. UCI Mushroom Dataset

The last considered dataset was the UCI Mushroom dataset [29]. This dataset is comprised of 22 categorical features with different cardinalities, which describe some properties of mushrooms, and one target variable that defines whether the considered mushroom is edible or poisonous/unsafe. There are 8124 entries in the dataset. We padded the dataset with two null features to reach the cardinality of 24 and used exactly the same architecture as the kidney disease experiment. We selected , , and number of DINs equal to .

5.4. Misclassification Probability Analysis

We hereafter report results in terms of misclassification probability between the proposed method and several classification methods implemented using MATLAB® Classification Learner. All datasets were randomly split 100 times into training and testing subsets, thus generating 100 different experiments. The proposed method shows competitive results in the considered cases, as can be observed in Table 1. It is interesting to compare in terms of performance the proposed algorithm with respect to the Naive Bayes classifier, i.e., Equation (34), and the Bagged Tree algorithm, which is the closest algorithm (conceptually) to the one we propose. In general, the two variants of the DINs perform similarly to the Bagged Trees, while outperforming Naive Bayes. For Bagged Trees and KNN-Ensemble, the same number of learners as DIN ensembles were used.

Table 1.

Mean misclassification probability (over 100 random experiments) for the three datasets with the considered classifiers.

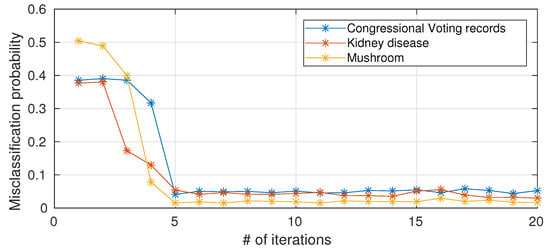

5.5. The Impact of Number of Iterations of Blahut–Arimoto on The Performance

As anticipated in Section 2.5, the computational complexity of a single node scales with the number of iterations of Blahut–Arimoto algorithm. To the best of our knowledge, a provable convergence rate for the Blahut–Arimoto algorithm in the information nottleneck setting does not exist. We hereafter (Figure 5) present empirical results on the impact of limiting the number of iterations of Blahut–Arimoto algorithm (for simplicity, the same bound is applied to all nodes in the networks). When the number of iterations is too small, there is a drastic decrease in performance because the probability matrices in the information nodes have not yet converged, while 5–6 iterations are sufficient and a further increase in the number of iterations is not necessary in terms of performance improvements.

Figure 5.

Misclassification probability versus number of iterations (average over 10 different trials) for the considered UCI datasets.

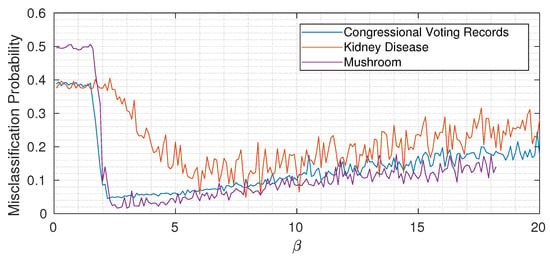

5.6. The Role of : Underfitting, Optimality, and Overfitting

As usual with almost all machine learning algorithms, the choice of hyperparameters is of fundamental importance. For simplicity, in all experiments described in the previous sections, we kept the value of constant through the network. To gain some intuition, Figure 6 shows the misclassification probability for different for the three considered datasets (each time keeping constant through the network). While the three curves are quantitatively different, we can notice the same qualitative trend: when is too small, not enough information about the target variable is propagated, and then by increasing above a certain threshold, the misclassification probability drops. Increasing too much however induces overfitting, as expected, and the classification error (slowly) increases again. Remember (from Equation (15)) that the Lagrangian we are minimizing is

Information theory tells us that at every information node we should propagate only the sufficient statistic about the target variable Y. In practice, this is reflected in the role of : when it is too small, we neglect the term and just minimize (that corresponds to underfitting), while increasing allows passing more information about the target variable through the bottleneck. It is important to remember, however, that we do not have direct access to the true mutual information values but just to an empirical estimate based on a finite dataset. Especially when the cardinalities of inputs and outputs are high, this translates into an increased probability of spotting spurious correlations that, if learned by the nodes, induce overfitting. The overall message is that has an extremely important role in the proposed method, and its value should be chosen to modulate between underfitting and overfitting.

Figure 6.

Misclassification probability versus (average over 20 different trials) for the considered UCI datasets.

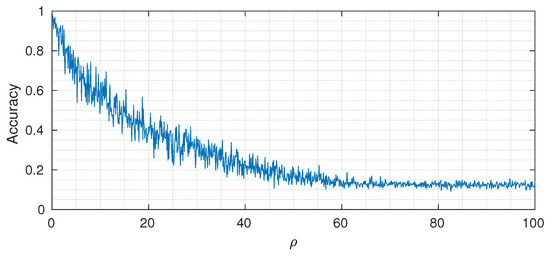

5.7. A Synthetic Multiclass Experiment

In this section we present results on a multiclass synthetic dataset. We generated 64-dimensional feature vectors drawn from multivariate Gaussian distributions with mean and covariance depending on a target class y and a control parameter :

where for the considered experiment . The mean is sampled from a normal 64-dimensional random vector and is randomly generated as (where is sampled from a matrix normal distribution) and normalized to have unit norm. The other parameter is inserted to modulate the signal to noise ratio of the generated samples: a smaller value of corresponds to smaller feature variances and more distinct, less overlapping, pdfs , and an easier classification task. We then perform quantization of the result using 1 bit, i.e., the input of the ensemble of DINs is the following random vector:

where is the Heaviside step operator. The designed architecture has at the first layer 64 input nodes, followed by 32, 16, 4, 2, and 1. The output cardinalities are equal to 2 for the first three layers, 4 for the fourth and fifth layer, and 8 at the last layer. We selected , (constant trough the network), and number of DINs equal to . Figure 7 shows the classification accuracy (on a test set of 1000 samples) for different values of . As expected, when the value of is small, we can reach almost perfect classification accuracy, whereas, by increasing it, the performance drops to the point where the useful signal is completely buried in noise and the classification accuracy reaches the asymptotic level of (that corresponds to random guessing when the number of classes is equal to 8).

Figure 7.

Varying of classification accuracy for different values of control parameter .

6. Conclusions

The proposed ensemble Deep Information Network (DIN) shows good results in terms of accuracy and represents a new simple, flexible, and modular structure. The required hyperparameters are the cardinality of the alphabet at the output of each information node, the value of the Lagrangian multiplier , and the structure of the tree itself (number of input information nodes of each combiner).

Simplistic architecture choices made for the experiments (such as equal cardinality of all node outputs, constant through the network, etc.) performed comparably to finely tuned networks. However, we expect that, similar to what happened in neural network applications, a domain specific design of the architectures will allow for consistent improvements in terms of performance on complex datasets.

Despite the local assumption of conditionally independent features, the proposed method always outperforms Naive Bayes. As discussed in Section 4, the induced equivalent probability matrix is different in the two cases. Intuitively, we can understand the difference in performance under the point of view of probability matrix factorization. On the one side, we have the true, exponentially large, joint probability matrix of all features and target class. On the other side, we have the Naive Bayes one, which is extremely simple in terms of complexity but obviously less performing. In between, we have the proposed method, where the complexity is still reasonable but the quality of the approximation is much better. The DIN(Gen) algorithm does not require the assumption of statistical independence, but the classification accuracy is very close to that of DIN(Prob), which further suggests that the assumption can be accepted from a practical point of view.

The proposed method leaves open the possibility of devising a custom hardware implementation. Differently from classical decision trees, in fact, the execution times of all branches as well as the precise number of operations is fixed per datum and known a priori, helping in various system design choices. In fact, with classical trees, where a node’s utilization depends on the datum, we are forced to design the system for the worst case, even if in the vast majority of time not all nodes are used. Instead, with DIN, there is no such a problem.

Finally, a clearly open point is related to the quantization procedure of continuous random variables. One possible self-consistent approach could be devising an information bottleneck based method (similar to the method for continuous random variables [20]).

Further studies on extremely large datasets will help understand principled ways of tuning hyperparameters and architecture choices and their relationship on performance.

Author Contributions

Conceptualization, G.F. and M.V.; methodology, G.F. and M.V.; software, G.F. and M.V.; validation, G.F. and M.V.; formal analysis, G.F. and M.V.; investigation, G.F. and M.V.; resources, G.F. and M.V.; data curation, G.F. and M.V.; writing–original draft preparation, G.F. and M.V.; writing–review and editing, G.F. and M.V.; visualization, G.F. and M.V.; All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Acknowledgments

A special thank to MD Gabriella Olmo who suggested a quantization of the continuous values of the features in the experiment in Section 5.2, which is correct from a medical point of view.

Conflicts of Interest

The authors declare no conflict of interest.

Appendix A. Quantization

Hereafter, we present the quantization scheme used for the numerical features of chronic kidney disease dataset.

- Age (Years)

- Blood (mm/Hg)

- Blood Glucose Random (mg/dl)

- Blood Urea (mg/dl)

- Serum Creatinine (mg/dl)

- Sodium (mEq/l)

- Potassium (mEq/l)

- Haemoglobin (gm)

- Packed Cell Volume

- White Blood Cell Count (cells/mm)

- Red Blood Cell (millions/mm)

References

- Hastie, T.; Tibshirani, R.; Friedman, J. The Elements Of Statistical Learning; Springer: Berlin/Heidelberg, Germany, 2001. [Google Scholar] [CrossRef]

- Murphy, K. Machine Learning: A Probabilistic Perspective; The MIT Press: Cambridge, MA, USA, 2012. [Google Scholar]

- Bergman, M.K. A Knowledge Representation Practionary; Springer: Basel, Switzerland, 2018. [Google Scholar]

- Rokach, L.; Maimon, O.Z. Data Mining with Decision Trees: Theory and Applications; World Scientific: Singapore, 2008; Volume 69. [Google Scholar]

- Quinlan, J.R. Induction of decision trees. Mach. Learn. 1986, 1, 81–106. [Google Scholar] [CrossRef]

- Quinlan, J. C4.5: Programs for Machine Learning; Morgan Kaufmann: Burlington, MA, USA, 1993. [Google Scholar]

- Quinlan, J. Improved Use of Continuous Attributes in C4.5. J. Artif. Intell. Res. 1996, 4, 77–90. [Google Scholar] [CrossRef]

- Pearl, J. Probabilistic Reasoning in Intelligent Systems: Networks of Plausible Inference; Elsevie: Burlington, MA, USA, 2014. [Google Scholar]

- Barber, D. Bayesian Reasoning and Machine Learning; Cambridge University Press: Cambridge, UK, 2012. [Google Scholar]

- Jensen, F.V. Introduction to Bayesian Networks; UCL Press: London, UK, 1996; Volume 210. [Google Scholar]

- Norouzi, M.; Collins, M.; Johnson, M.A.; Fleet, D.J.; Kohli, P. Efficient Non-greedy Optimization of Decision Trees. In Proceedings of the 28th International Conference on Neural Information Processing Systems, Montreal, QC, Canada, 7–12 December 2015; pp. 1729–1737. [Google Scholar]

- Breiman, L. Bagging Predictors. Mach. Learn. 1996, 24, 123–140. [Google Scholar] [CrossRef]

- Breiman, L. Random Forests. Mach. Learn. 2001, 45, 5–32. [Google Scholar] [CrossRef]

- Tishby, N.; Zaslavsky, N. Deep Learning and the Information Bottleneck Principle. arXiv 2015, arXiv:1503.02406v1. [Google Scholar]

- Tishby, N.; Pereira, F.; Bialek, W. The Information Bottleneck method. arXiv 2000, arXiv:physics/0004057v1. [Google Scholar]

- Franzese, G.; Visintin, M. Deep Information Networks. arXiv 2018, arXiv:1803.02251v1. [Google Scholar]

- Slonim, N.; Tishby, N. Agglomerative information bottleneck. In Proceedings of the 12th International Conference on Neural Information Processing Systems, Denver, CO, USA, 29 November–4 December 1999; pp. 617–623. [Google Scholar]

- Still, S. Information bottleneck approach to predictive inference. Entropy 2014, 16, 968–989. [Google Scholar] [CrossRef]

- Still, S. Thermodynamic cost and benefit of data representations. arXiv 2017, arXiv:1705.00612. [Google Scholar]

- Chechik, G.; Globerson, A.; Tishby, N.; Weiss, Y. Information bottleneck for Gaussian variables. J. Mach. Learn. Res. 2005, 6, 165–188. [Google Scholar]

- Gedeon, T.; Parker, A.E.; Dimitrov, A.G. The mathematical structure of information bottleneck methods. Entropy 2012, 14, 456–479. [Google Scholar] [CrossRef]

- Freund, Y.; Schapire, R. A short introduction to boosting. Jpn. Soc. Artif. Intell. 1999, 14, 1612. [Google Scholar]

- Chen, T.; Guestrin, C. Xgboost: A scalable tree boosting system. In Proceedings of the 22nd ACM Sigkdd International Conference on Knowledge Discovery and Data Mining, San Francisco, CA, USA, 13–17 August 2016; pp. 785–794. [Google Scholar]

- Arimoto, S. An algorithm for computing the capacity of arbitrary discrete memoryless channels. IEEE Trans. Inf. Theory 1972, 18, 14–20. [Google Scholar] [CrossRef]

- Blahut, R. Computation of channel capacity and rate-distortion functions. IEEE Trans. Inf. Theory 1972, 18, 460–473. [Google Scholar] [CrossRef]

- Hand, D.J.; Yu, K. Idiot’s Bayes—Not so stupid after all? Int. Stat. Rev. 2001, 69, 385–398. [Google Scholar]

- UCI Machine Learning Repository, University of California, Irvine, School of Information and Computer Sciences. Available online: http://archive.ics.uci.edu/ml (accessed on 30 September 2010).

- Salekin, A.; Stankovic, J. Detection of chronic kidney disease and selecting important predictive attributes. In Proceedings of the IEEE International Conference on Healthcare Informatics (ICHI), Chicago, IL, USA, 4–7 October 2016; pp. 262–270. [Google Scholar]

- Duch, W.; Adamczak, R.; Grąbczewski, K. Extraction of logical rules from neural networks. Neural Process. Lett. 1998, 7, 211–219. [Google Scholar] [CrossRef]

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).