Convergence Behavior of DNNs with Mutual-Information-Based Regularization

Abstract

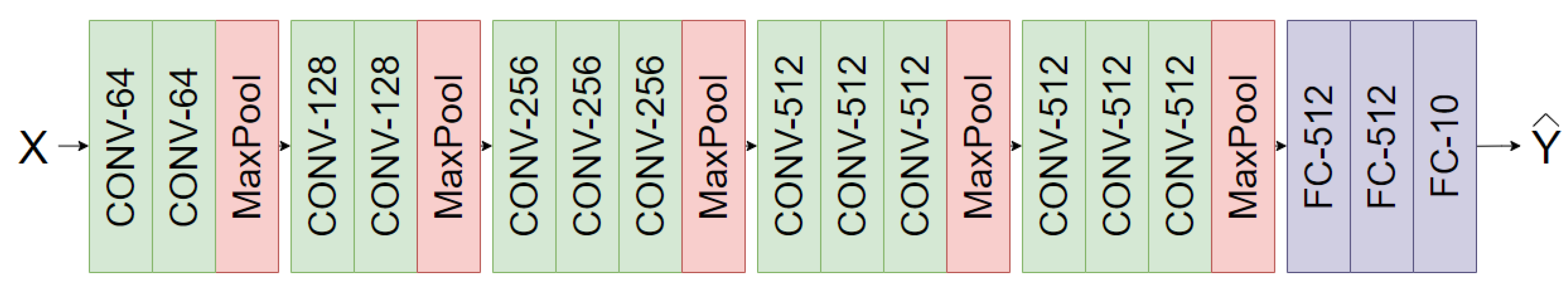

1. Introduction

2. The Information Bottleneck in DNNs

3. Non-Parametric Estimation of Mutual Information

3.1. Activation Binning

3.2. Non-Parametric Kernel Density Estimation

3.3. Rényi’s α-Entropy

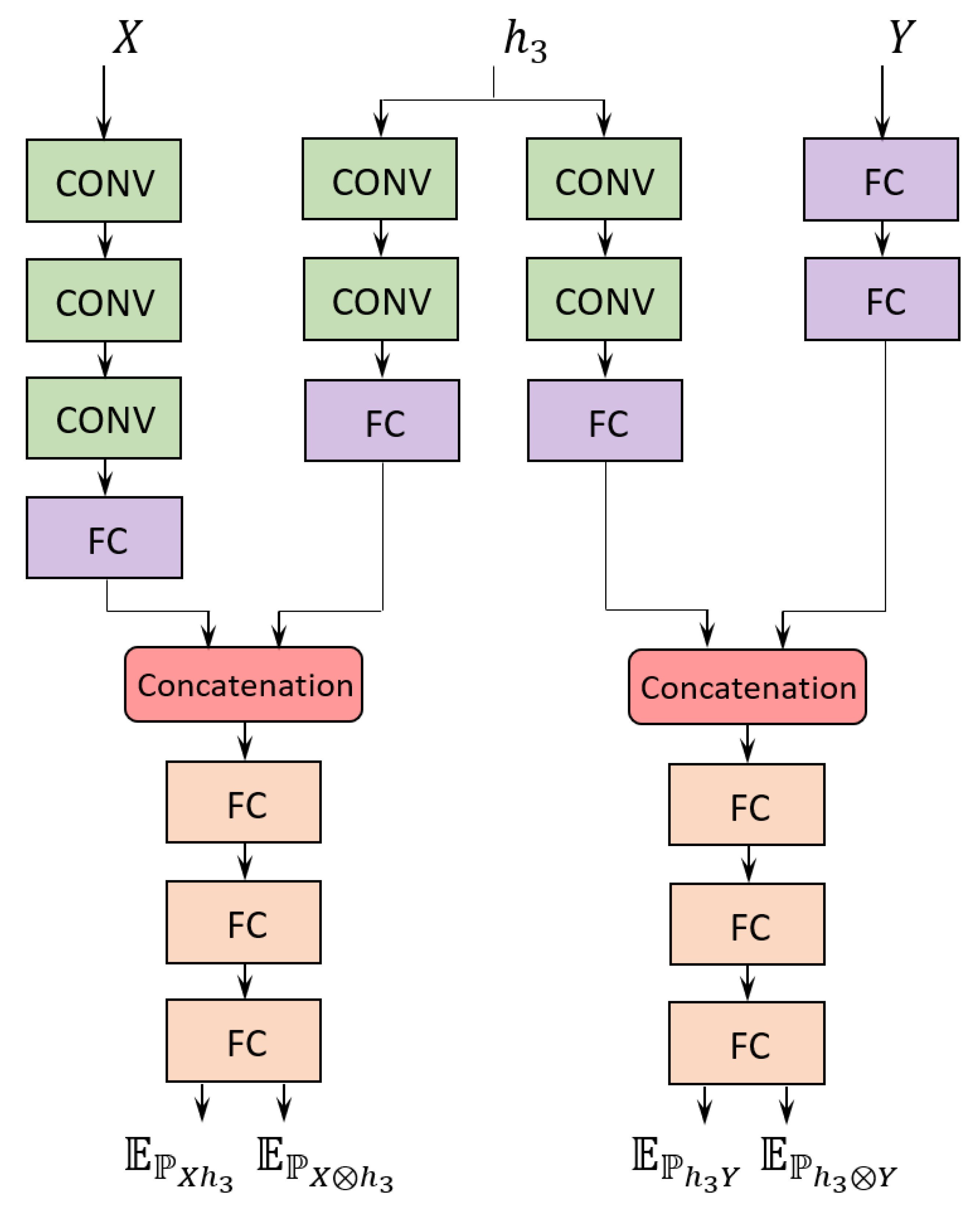

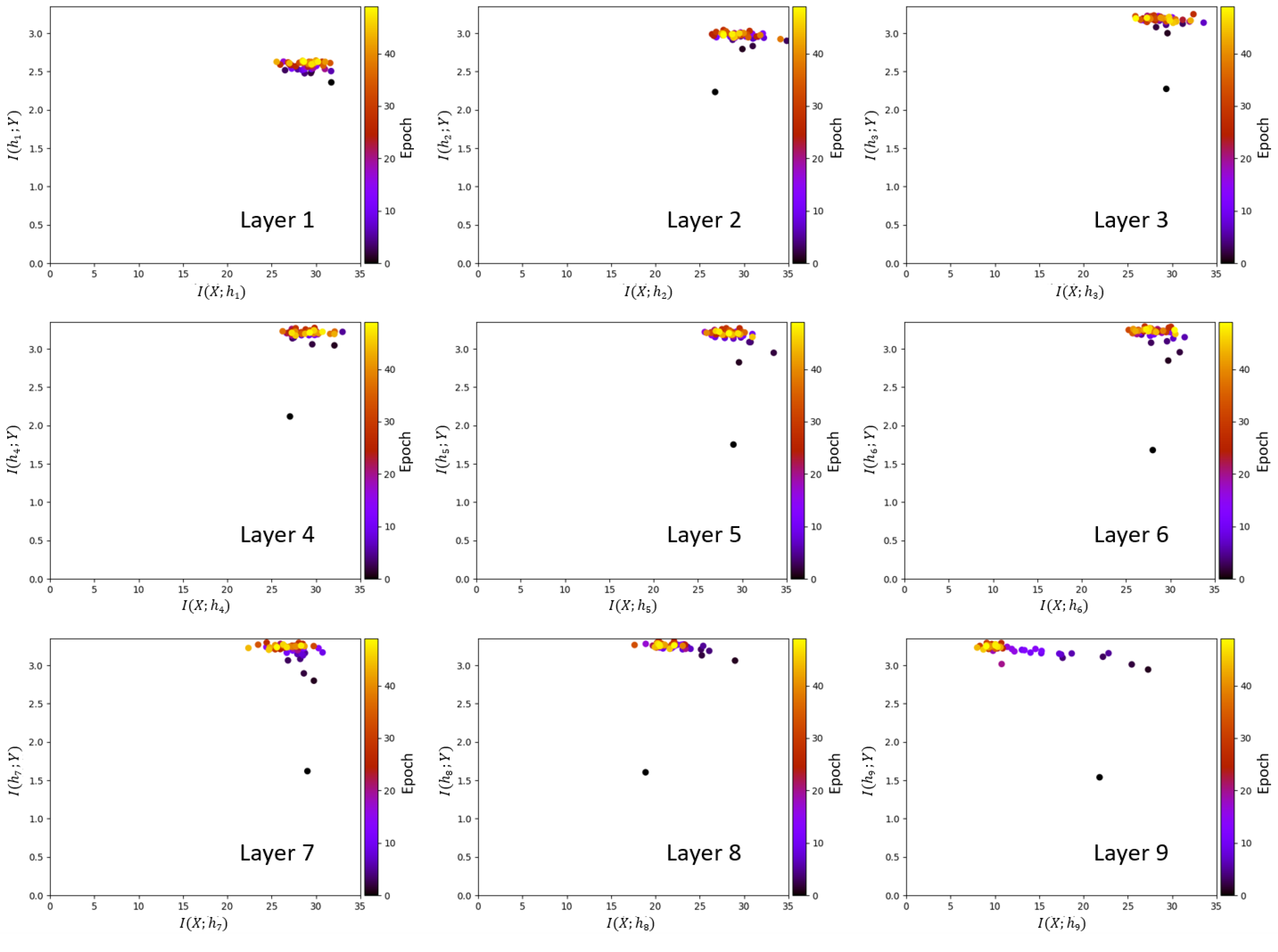

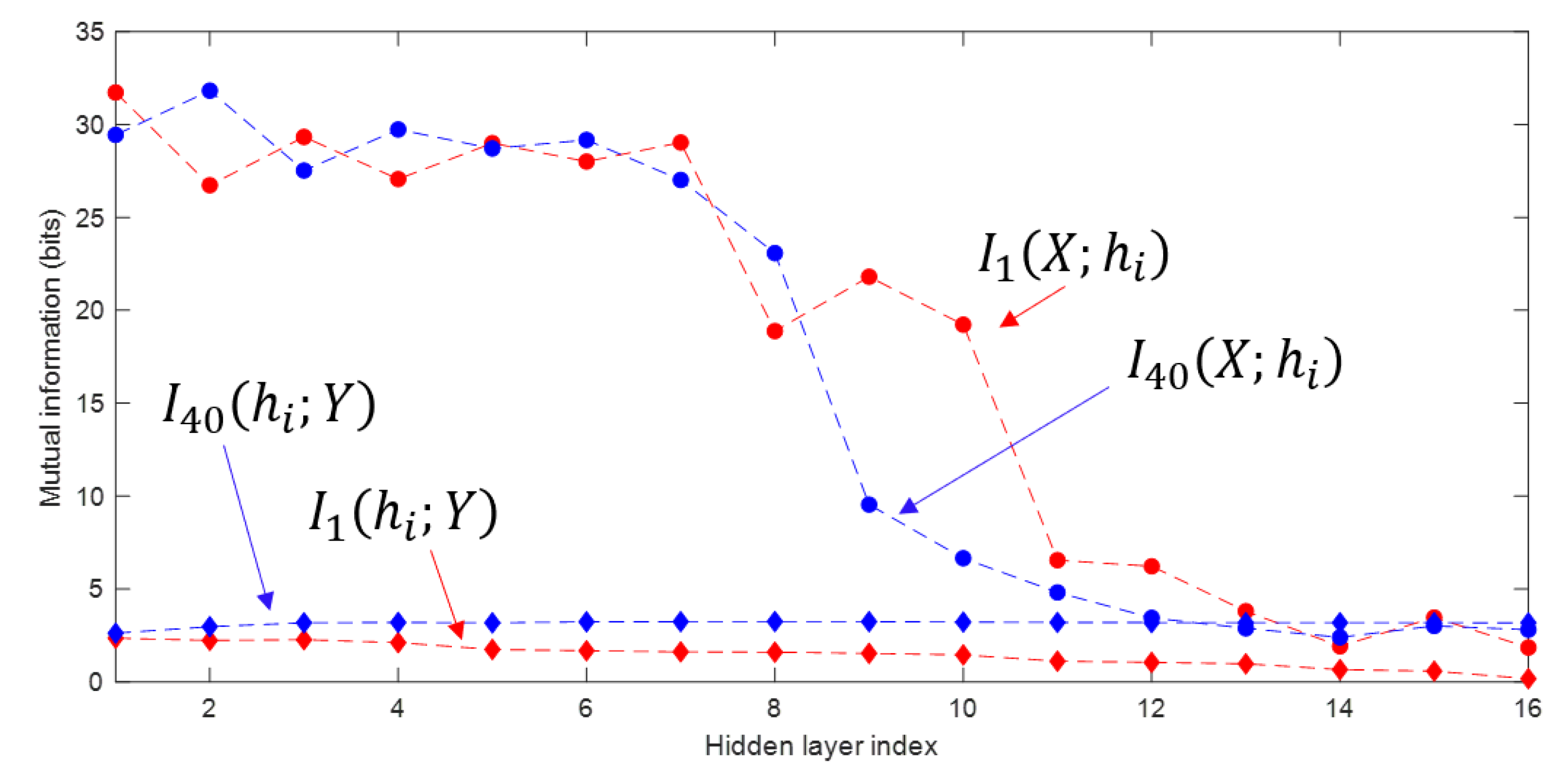

4. DNN Convergence Analysis Using MINE

4.1. Visualization of the Information Plane

5. Long-Term DNN Regularization

5.1. MINE-Based Regularization

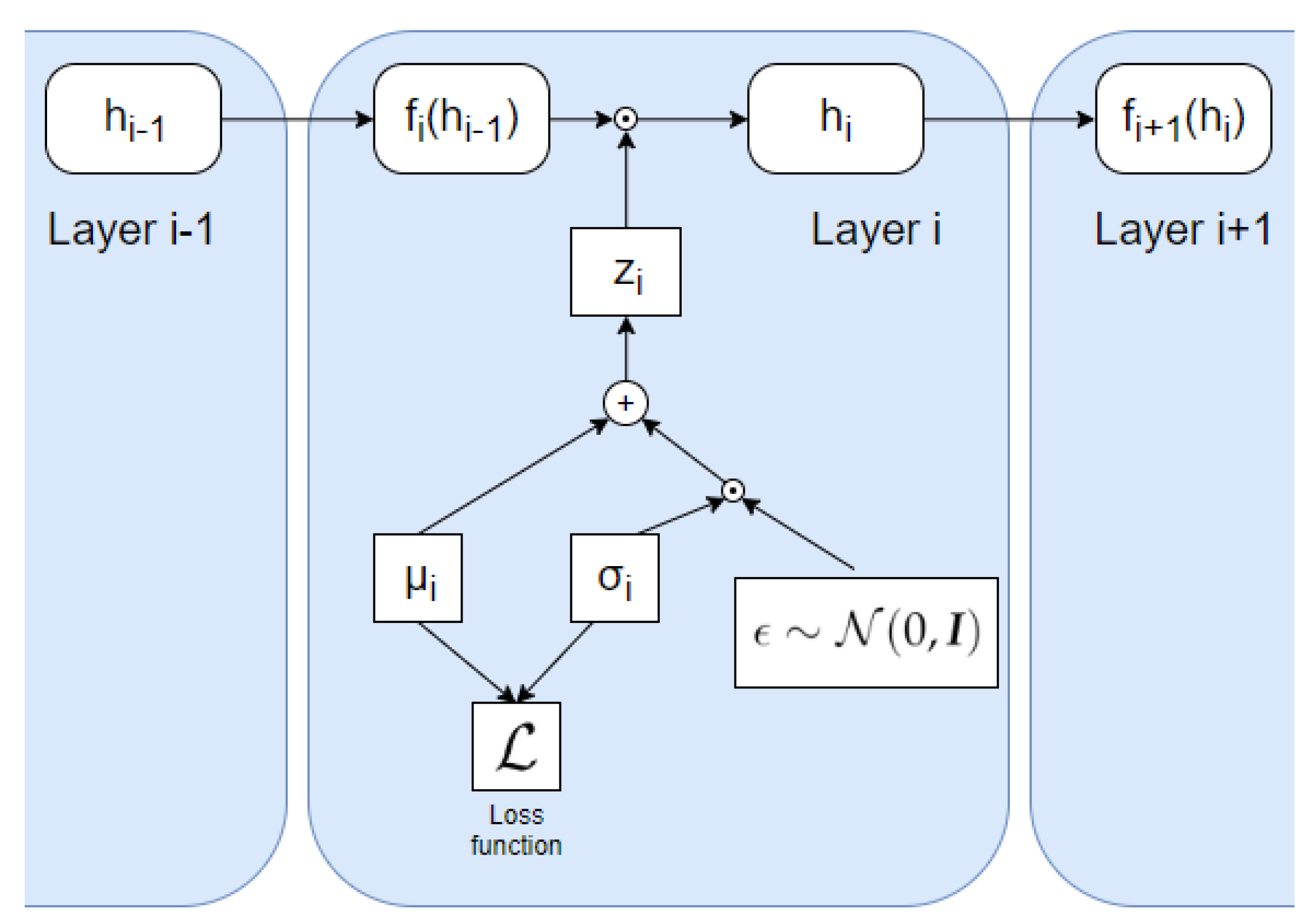

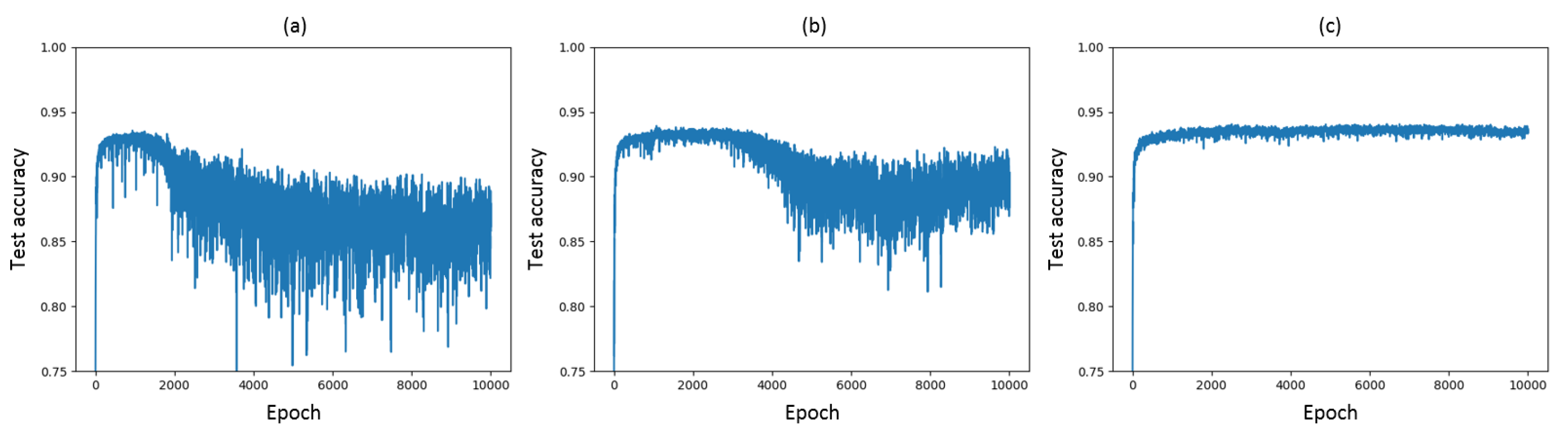

5.2. VIB-Based Regularization

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
| --- | --- |
| DNN | Deep Neural Network |
| CNN | Convolutional Neural Network |
| MINE | Mutual Information Neural Estimation |
| ReLU | Rectified Linear Unit |
| VGG | Visual Geometry Group |
| IB | Information Bottleneck |
| KL | Kullback–Leibler |
| DPI | Data Processing Inequality |
| CIFAR | Canadian Institute for Advanced Research |
| CONV | Convolutional |
| FC | Fully Connected |
| MaxPool | Maximum Pooling |
| VIB | Variational Information Bottleneck |

| Regularization method | VIB | MINE | none | = 0.1 | = 0.2 | = 0.3 | = 0.4 |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Accuracy (%) | 76.9 | 76.7 | 75.9 | 73.8 | 73.9 | 72.3 | 72.3 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Jónsson, H.; Cherubini, G.; Eleftheriou, E. Convergence Behavior of DNNs with Mutual-Information-Based Regularization. Entropy 2020, 22, 727. https://doi.org/10.3390/e22070727