Abstract

Previous works established that entropy is characterized uniquely as the first cohomology class in a topos and described some of its applications to the unsupervised classification of gene expression modules or cell types. These studies raised important questions regarding the statistical meaning of the resulting cohomology of information and its interpretation or consequences with respect to usual data analysis and statistical physics. This paper aims to present the computational methods of information cohomology and to propose its interpretations in terms of statistical physics and machine learning. In order to further underline the cohomological nature of information functions and chain rules, the computation of the cohomology in low degrees is detailed to show more directly that the k multivariate mutual information ($I_k$) are $(k-1)$-coboundaries. The $(k-1)$-cocycles condition corresponds to $I_k = 0$, which generalizes statistical independence to arbitrary degree k. Hence, the cohomology can be interpreted as quantifying the statistical dependences and the obstruction to factorization. I develop the computationally tractable subcase of simplicial information cohomology represented by entropy and information landscapes and their respective paths, allowing investigation of Shannon’s information in the multivariate case without the assumptions of independence or of identically distributed variables. I give an interpretation of this cohomology in terms of phase transitions in a model of k-body interactions, holding both for statistical physics without mean field approximations and for data points. The $I_1$ components define a self-internal energy functional and the $(-1)^k I_k$, $k \geq 2$, components define the contribution to a free energy functional (the total correlation) of the k-body interactions. A basic mean field model is developed and computed on genetic data reproducing usual free energy landscapes with phase transition, sustaining the analogy of clustering with condensation. 
The set of information paths in simplicial structures is in bijection with the symmetric group and random processes, providing a trivial topological expression of the second law of thermodynamics. The local minima of free energy, related to conditional information negativity and conditional independence, characterize a minimum free energy complex. This complex formalizes the minimum free-energy principle in topology, provides a definition of a complex system and characterizes a multiplicity of local minima that quantifies the diversity observed in biology. I give an interpretation of this complex in terms of unsupervised deep learning where the neural network architecture is given by the chain complex and conclude by discussing future supervised applications.

- 1 Introduction
- 1.1 Observable Physics of Information
- 1.2 Statistical Interpretation: Hierarchical Independences and Dependences Structures
- 1.3 Statistical Physics Interpretation: K-Body Interacting Systems
- 1.4 Machine Learning Interpretation: Topological Deep Learning
- 2 Information Cohomology
- 2.1 A Long March through Information Topology
- 2.2 Information Functions (Definitions)
- 2.3 Information Structures and Coboundaries
- 2.3.1 First Degree (k = 1)
- 2.3.2 Second Degree (k = 2)
- 2.3.3 Third Degree (k = 3)
- 2.3.4 Higher Degrees
- 3 Simplicial Information Cohomology
- 3.1 Simplicial Substructures of Information
- 3.2 Topological Self and Free Energy of K-Body Interacting System: Poincaré-Shannon Machine
- 3.3 k-Entropy and k-Information Landscapes
- 3.4 Information Paths and Minimum Free Energy Complex
- 3.4.1 Information Paths (Definition)
- 3.4.2 Derivatives, Inequalities and Conditional Mutual-Information Negativity
- 3.4.3 Information Paths Are Random Processes: Topological Second Law of Thermodynamics and Entropy Rate
- 3.4.4 Local Minima and Critical Dimension
- 3.4.5 Sum over Paths and Mean Information Path
- 3.4.6 Minimum Free Energy Complex
- 4 Discussion
- 4.1 Statistical Physics
- 4.1.1 Statistical Physics without Statistical Limit? Complexity through Finite Dimensional Non-Extensivity
- 4.1.2 Naive Estimations Let the Data Speak
- 4.1.3 Discrete Informational Analog of Renormalization Methods: No Mean-Field Assumptions Let the Objects Differentiate
- 4.1.4 Combinatorial, Infinite, Continuous and Quantum Generalizations
- 4.2 Data Science
- 4.2.1 Topological Data Analysis
- 4.2.2 Unsupervised and Supervised Deep Homological Learning

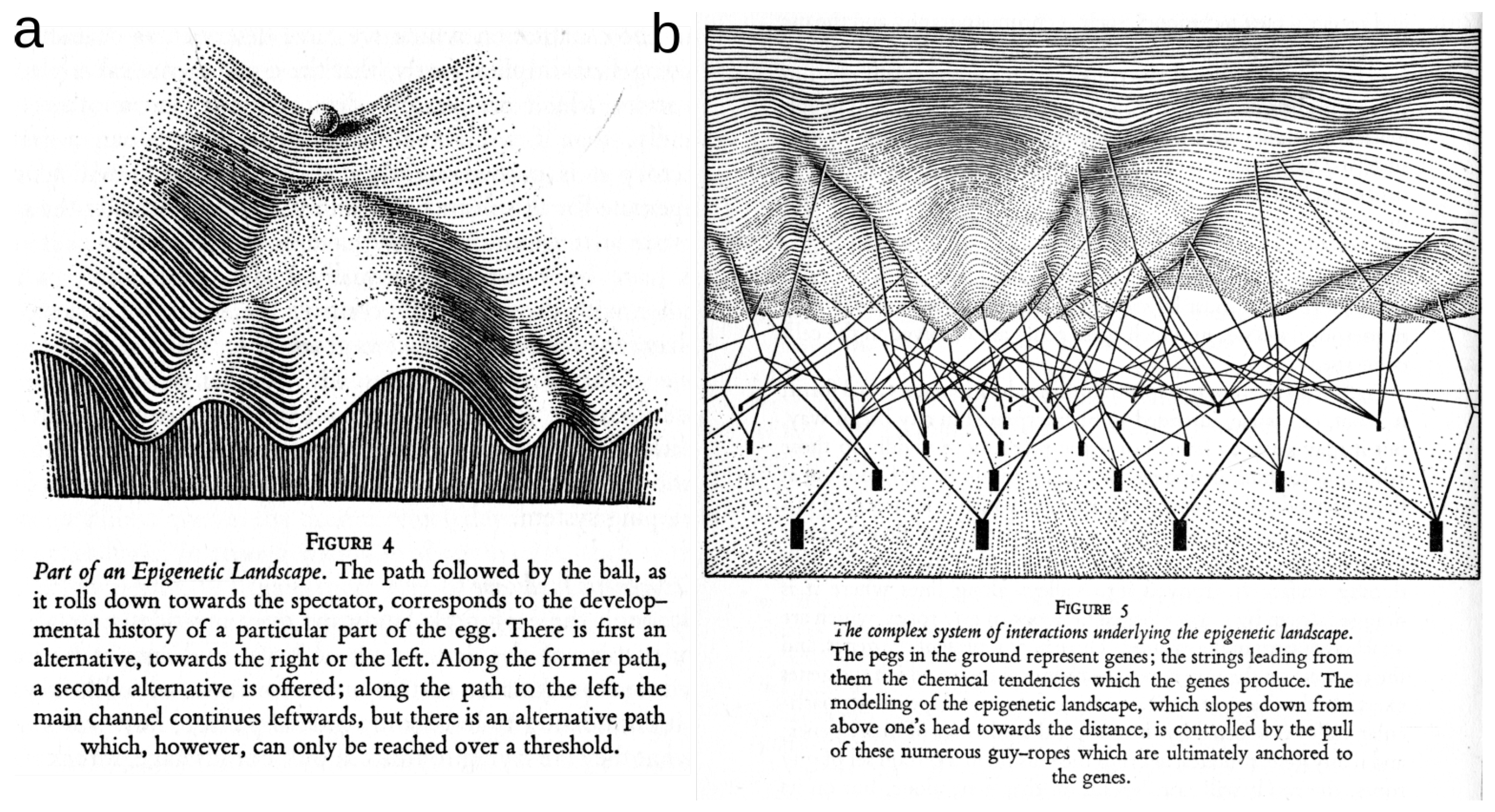

- 4.2.3 Epigenetic Topological Learning: Biological Diversity
- 5 Conclusions
- References

“Now what is science? ...it is before all a classification, a manner of bringing together facts which appearances separate, though they are bound together by some natural and hidden kinship. Science, in other words, is a system of relations. ...it is in relations alone that objectivity must be sought. ...it is relations alone which can be regarded as objective. External objects... are really objects and not fleeting and fugitive appearances, because they are not only groups of sensations, but groups cemented by a constant bond. It is this bond, and this bond alone, which is the object in itself, and this bond is a relation.” H. Poincaré

1. Introduction

The present paper aims to provide a comprehensive introduction and interpretation in terms of statistics, statistical physics and machine learning of the information cohomology theory developed in References [1,2]. It presents the computational aspects of the application of information cohomology to data presented in Reference [3] and in the associated paper [2], which consists of an unsupervised classification of cell types or gene modules and provides a generic model for epigenetic co-regulation and differentiation.

1.1. Observable Physics of Information

In its application to empirically measured data, information cohomology is at the cross-roads of both data analysis and statistical physics and this article aims to give some keys to its interpretation within those two fields, which could be quoted as “passing the information between disciplines” in reference to Mezard’s review [4]. Just as topos have been used as a communication bridge allowing the translation of theorems between different domains and therefore to unify mathematical theories [5], information cohomology can help in further unraveling some equivalences between different disciplines (e.g., statistical physics and machine learning) and shall play a foundational role in both as already proposed by Doering and Isham considering only probability structures [6,7]. In doing so, information theory goes one step toward a general mathematical and physical theory of communication (or of measure and observation), where information conservation is an isomorphism. In other terms, following the paths of topology, this paper pursues the work that started with the studies of Brillouin [8], Jaynes [9,10], Landauer [11], Penrose [12], Wheeler [13], and Bennett [14], of an informational theory of physics that would be furthermore restricted to a theory of data: an austere empirical theory [15] with a minimum set of priors or axioms, a physics that “let the data speak”. It is the axiom of observability (“concepts which correspond to no conceivable observation should be eliminated from physics” [16], p. 264) which imposes that statistical physics and data sciences shall not be dissociated but unified in their foundations. Thermodynamics established that free energy is the energy that can be effectively used, whereas entropy was also called “lost heat”. 
The same holds generically for any data with mutual information and total correlations, which are kinds of relative or shared information: mutual information is the information that can be effectively used for pattern detection and classification. It hence appears that knowledge is a form of energy [17] and the results suggest that there are important resources of such information-energy in the k-body dependences even beyond pairwise interactions.

1.2. Statistical Interpretation: Hierarchical Independences and Dependences Structures

First, in order to provide a mathematical interpretation of information cohomology, I give a brief bibliographical overview of its multiple and diverse origins, coming notably from the theory of motives, and recall the open conjecture on the higher classes. In References [1,2], entropy was characterized uniquely as the first cohomology class in a topos theory. This result could be extended to quantum information, Kullback-Leibler divergence and cross-entropy (Proposition 4 [1]) and has been further developed and extended by Vigneaux [18,19] to Tsallis entropies and differential entropy, following some preliminary results of Marcolli and Thorngren [20]. Here, we show directly by computing the cohomology in the low degrees that multivariate k-mutual information, denoted $I_k$, are $(k-1)$-coboundaries. Recalling a result of the associated paper (Theorem 2 [2]), establishing that n random variables are independent if and only if all the subsets of k-mutual information vanish ($I_k = 0$), allows one to conclude that the $(k-1)$-cocycles condition corresponds to $I_k = 0$, which generalizes statistical independence to an arbitrary degree k. The interpretation and meaning of information cohomology is hence the quantification of the statistical dependences and of the obstruction to factorization, a result confirmed by the work of Mainiero with a different approach [21]. Notably, the introduction of a symmetric action of conditioning, following Gerstenhaber and Schack [22], gives a 1-cocycle condition that characterizes the famous information pseudo-metric discovered by Shannon, Rajski, Zurek, Crutchfield and Bennett [23,24,25,26,27,28] and that can be generalized to the k-multivariate case by new symmetric non-negative information functions, the pseudo k-volumes.

1.3. Statistical Physics Interpretation: K-Body Interacting Systems

Second, to provide an interpretation of information cohomology in terms of statistical physics, this paper settles information structures in the context of a generic k-body interacting system. One can remark that the setting of information cohomology is equivalent to the Potts models, which generalize the spin models to arbitrary multivalued variables (see Reference [29] for review), and considers all possible k-ary statistical interactions in a similar way as the multispin interaction models do (k-spin interaction models that generalize pairwise and nearest-neighbor models, see Reference [30] and references therein). I describe the computational and combinatorial restrictions from the lattice of partitions to the simplicial sub-lattice that allows one to compute in practice the information cohomology on data as in References [2,3] and that defines entropy and information landscapes and their respective paths that provide the discrete informational analog of path integrals. The combinatorics of possible interactions that are computed by the information landscapes is equivalent to the computational “exponential wall” encountered in many particle studies, notably density functional theory (DFT), as exposed by Kohn [31]. The $I_1$ component defines a self-internal energy functional and the $(-1)^k I_k$, $k \geq 2$, components define the contribution to a total free-energy functional of the k-body interactions (i.e., the total correlation). The total free energy is a Kullback-Leibler divergence and a special case (symmetric in the interacting body) of the free energy introduced by Baez and Pollard [32] (see also the appendix of the associated paper [2]). These definitions allow the recovery of usual equilibrium or semi-classical expressions in special cases. The set of all first critical points of information paths (a conditional-independence condition) gives a construction of the minimum free energy complex. 
Mutual information negativity, also called synergy [33]—a phenomenon known to provide the signature of frustrated states in glasses since the work of Matsuda [34]—is related here in the context of the more general conditional mutual information to a kind of (discrete) first-order transition, analogous to smooth phase transition in small systems [35], yet seen topologically as the critical points of a simplicial complex.

To further settle the thermodynamical interpretation of information cohomology, it is relevant to wonder what the cohomological expression of the first and second principles of thermodynamics could be. The set of information paths in simplicial structures is in bijection with the symmetric group and random processes, providing a trivial topological expression of the second law of thermodynamics as a consequence of entropy convexity, improving the theorem of Cover that needed to assume Markov conditions [36]. Thanks to the theorem of Noether [37], the first principle is expressed in terms of continuous symmetries and her theorem has been restated in more modern homological terms for finite elements by Mansfield [38] and for Markov chains by Baez and Fong [39]. Hence, the expression of the first principle in information cohomology, left as a conjecture here, should take the form of a Noether theorem for random discrete processes (that is, for the entire symmetric group $S_n$), a question already asked by Neuenschwander [40].
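The bijection between paths and permutations can be illustrated numerically. The following is a minimal sketch, assuming the joint law is a NumPy array with one axis per variable and reading the second law here as the statement that joint entropy does not decrease along a path (an interpretive assumption of this sketch). The function names and the random test distribution are illustrative and do not come from the references.

```python
import itertools
import numpy as np

def entropy(p):
    """Shannon entropy in bits; zero-probability cells are skipped."""
    p = np.asarray(p, dtype=float).ravel()
    p = p[p > 0]
    return float(-(p * np.log2(p)).sum())

def joint_entropy(joint, axes):
    """Entropy of the variables on `axes`, after summing out the other axes."""
    drop = tuple(a for a in range(joint.ndim) if a not in axes)
    return entropy(joint.sum(axis=drop))

def entropy_path(joint, order):
    """Joint entropies H_1, H_2, ..., H_n along the variable order `order`."""
    return [joint_entropy(joint, order[:k]) for k in range(1, len(order) + 1)]

def all_entropy_paths(joint):
    """One entropy path per permutation of the variables, hence n! paths."""
    return {order: entropy_path(joint, order)
            for order in itertools.permutations(range(joint.ndim))}

# Arbitrary joint law of three variables (illustrative data only).
rng = np.random.default_rng(0)
joint = rng.random((2, 3, 2))
joint /= joint.sum()

paths = all_entropy_paths(joint)
# Joint entropy should never decrease when a variable is added to the path.
monotone = all(b >= a - 1e-12
               for path in paths.values()
               for a, b in zip(path, path[1:]))
print(len(paths), monotone)
```

Every path ends at the same value, the joint entropy of all variables, so the paths differ only in how that total is reached.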

As presented by Kadanoff [41] or in References [42,43], close to phase transition (notably in three dimensions), the failure of the mean field theories introduced by Van der Waals, Maxwell and Landau led to the development of renormalization group methods. Renormalization methods intrinsically rely on asymptotic unrealistic assumptions such as an infinite number of particles [41,43] and neglect infinite quantities, which has raised fundamental criticism [44,45]. In order to compute an analog of a mean field model like van der Waals interactions [46,47,48], we define and compute the mean information paths that correspond to the homogeneous case of identically distributed random variables. On a dataset of expected interacting genes, the mean information path reproduces the usual behavior of the free energy in the condensed phase (i.e., with a critical point), while for genes that are less expected to interact, the path exhibits a monotonic decrease without a non-trivial minimum which corresponds to the usual free-energy potential in the uncondensed disordered phase for which the n-body interactions are negligible. However, compared with the non-averaged original information paths as presented in References [2,3], it is clear that this analog mean-field approach erases the multiplicity of critical points and the diversity or richness of the complexes.

Hence, with respect to statistical physics, the main novelty brought by the information cohomology approach is the introduction of purely finite and discrete methods that can account for transition phenomena in heterogeneous systems without mean field assumptions and of new measures of correlations (i.e., multivariate mutual information that generalizes the usual correlation coefficients to non-linear relations) [2,3]. Among the work left for further studies in this preliminary line, I conjecture that the infinite dimensional continuous extension of the information cohomology formalism should be equivalent to renormalization methods, while I underline that, in practice, there is no need of these physically unrealistic assumptions.

1.4. Machine Learning Interpretation: Topological Deep Learning

The interpretation of the information cohomology in terms of machine learning and data analysis first relies on the definition of random variables as partitions and of information structures and complexes on the lattice of partitions [1]. Partitions are equivalent to equivalence classes (see Ellerman [49,50] for review) and hence the complex of random variables spans all possible equivalence classes of the data points. Therefore, the information cochain complex can serve as a universal classifier. Moreover, these information structures defined on the whole lattice of partitions encompass all possible statistical dependences and relations, since by definition they consider all possible equivalence classes on a probability space, and hence fully answer the problem raised by James and Crutchfield [51], who remarked on some partitions that are not distinguished by the simplicial structure. The combinatorics of these structures forbid any computation in practice until quantum computers become available, and the severe restriction to the simplicial case of the cohomology, with complexity $\mathcal{O}(2^n)$, implies that not all statistical dependencies can be estimated, as shown by James and Crutchfield [51]. The interpretation makes two phenomena coincide (i.e., condensation in statistical physics and clustering of data points in data science), which are signed by information and conditional information negativity as studied in the companion paper [2]. This generalizes the idea and results obtained on networks by the team of Grassberger [52].
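The gap between the two combinatorics can be made concrete with a small count. The sketch below assumes n binary variables, so that the sample space has 2^n atoms. It counts the partitions of the atoms with Bell numbers (the general lattice) and the subsets of variables (the simplicial sublattice). The helper names are hypothetical and the binary setting is an assumption of the example.

```python
def bell_number(m):
    """Number of partitions of a set of m elements, via the Bell triangle."""
    row = [1]
    for _ in range(m):
        new_row = [row[-1]]            # each row starts with the last entry of the previous one
        for value in row:
            new_row.append(new_row[-1] + value)
        row = new_row
    return row[0]

def simplicial_size(n):
    """Number of joint variables built from n variables (all subsets, identity included)."""
    return 2 ** n

for n in (2, 3, 4):
    atoms = 2 ** n                     # atoms of the sample space for n binary variables
    print(f"n={n}: {bell_number(atoms)} partitions, {simplicial_size(n)} simplicial elements")
```

For two binary variables the sample space has four atoms and 15 partitions, against 4 elements in the simplicial sublattice.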

On the side of applied algebraic topology, the identification of the topological structures of a dataset has motivated important research following the development of persistent homology [53,54,55]. Combining statistical and topological structures in a single framework remains an active challenge of data analysis that has already yielded some interesting results [56,57]. Some recent works have proposed information theoretical approaches grounded on homology, defining persistent entropy [58,59], graph topological entropy [60], spectral entropy [61] or multilevel integration entropies [62]. The present work is formally different and arose independently of persistence; it provides a cohomology intrinsically based on probability, for which the invariants are arguably the most important features of statistics, and free of any metric assumption. The interpretation in terms of deep neural networks is also straightforward. It is preliminarily developed and applied here and in Reference [2] for the unsupervised case, while the supervised subcase is only briefly discussed here and left for further work. See Reference [63] for a presentation and preliminary applications to the digit images database of the Modified National Institute of Standards and Technology (MNIST). The informational approach of topological data analysis provides a direct probabilistic and statistical analysis of the structure of a dataset, which allows the gap with neural network analysis to be bridged and may be a step toward their formalization in mathematics and the characterization of the network architecture necessary for a given dataset. The original work based on spin networks by Hopfield [64] formalized fully recurrent networks as n binary random variables. Ackley, Hinton and Sejnowski [65] followed up by imposing the Markov field condition, allowing the introduction of conditional independence to handle network structures with hidden layers and hidden nodes. 
The result—the Boltzmann or Helmholtz machine [66]—relies on the maximum entropy or free-energy minimization principle and originally on minimizing the relative entropy between the network and environmental states [65]. Indeed, Reference [67] and references therein (notably see the whole opus of Marcolli with application to linguistics [68]) provides a review of the relevance of homology to artificial or natural cognition. Considering neurons as binary random variables or more generally N-ary variables (corresponding to a rate coding hypothesis) in the present context provides a homologically constrained approach of those neural networks, where the first input layer is represented by the marginal (single variable, degree 1 component) while hidden layers are associated to higher degrees. In a very naive sense, higher cohomological degrees distinguish higher-order patterns (or higher-dimensional patterns in the simplicial case), just as receptive fields of convolutional neural networks recognize higher-order features when going to higher depth-rank of neural layers as described in David Marr’s original sketch [69] and now implemented efficiently in deep network structures. Notably, the notion of geodesic used in machine learning is replaced by the homotopical notion of path. On the data analysis side, it provides a new algorithm and tools for topological data analysis allowing one to rank and detect clusters and functional modules, and to make dimensionality reduction; indeed, all these classical tasks in data analysis have a direct homological meaning. I propose to call the data analysis method presented here the Poincaré-Shannon machine, since it implements simplicial homology (see Poincaré’s Analysis Situs [70]) and information theory in a single framework (see Shannon’s theory of communication [71]), applied effectively to empirical data.

2. Information Cohomology

This section provides a short bibliographical note on the inscription of information and probability theory within homological theories (Section 2.1). We also recall the definition of information functions (Section 2.2) and provide a short description of information cohomology computed in the low degrees (Section 2.3), such that the interpretation of entropy and mutual information within Hochschild cohomology appears straightforward and clear. There are no new results in this section, but I hope to provide, for researchers outside the field of topology, a simpler and more helpful presentation of what can be found in References [1,18,19], which should be consulted for a more precise and detailed exposition.

2.1. A Long March through Information Topology

From the mathematical point of view, a motivation of information topology is to capture the ambiguity theory of Galois, which is the essence of group theory or discrete symmetries (see André’s reviews [72,73]), and Shannon’s information uncertainty theory in a common framework, a path already paved by some results on information inequalities (see Yeung’s results [74]) and in algebraic geometry. In the work of Cathelineau [75], entropy first appeared in the computation of the degree-one homology of the discrete group with coefficients in the adjoint action by choosing a pertinent definition of the derivative of the Bloch–Wigner dilogarithm. It could be shown that the functional equation with five terms of the dilogarithm implies the functional equation of entropy with four terms. Kontsevich [76] discovered that a finite truncated version of the logarithm appearing in cyclotomic studies also satisfied the functional equation of entropy, suggesting a higher-degree generalization of information, analogous to polylogarithms, and hence showing that the functional equation of entropy holds in p and 0 field characteristics. Elbaz-Vincent and Gangl used algebraic means to construct this information generalization, which holds over finite fields [77], where information functions appear as derivations [78]. After entropy appeared in tropical and idempotent semi-ring analysis in the study of the extension of Witt semiring to the characteristic 1 limit [79], Marcolli and Thorngren developed the thermodynamic semiring, an entropy operad that could be constructed as a deformation of the tropical semiring [20]. Introducing Rota–Baxter algebras, it allowed the derivation of a renormalization procedure [80]. In defining the category of finite probability and using Faddeev axiomatization, Baez, Fritz and Leinster could show that the only family of functions that has the functorial property is Shannon information loss [81,82]. 
Basing his approach on information and Koszul geometry, Boyom developed a more geometrical view of statistical models that notably considers foliations in place of the random variables [83]. Introducing a deformation theoretic framework and chain complex of random variables, Drummond-Cole, Park and Terilla [84,85,86] constructed a homotopy probability theory for which the cumulants coincide with the morphisms of the homotopy algebras. The probabilistic framework used here was introduced in Reference [1] and generalized to Tsallis entropies by Vigneaux [18,19]. The diversity of the formalisms employed in these independent but convergent approaches is astonishing. So, as to the question “what is information topology?”, it is only possible to answer that it is under development at the moment. The results of Cathelineau, Elbaz-Vincent and Gangl inscribed information into the theory of motives, which according to Beilinson’s program is a mixed Hodge-Tate cohomology [87]. All along the development of the application to data, following the cohomology developed by References [1,18] on an explicit probabilistic basis, we aimed to preserve such a structure and unravel its expression in information theoretic terms. Moreover, following Aomoto’s results [88,89], the actual conjecture [1] is that the higher classes of information cohomology should be some kind of polylogarithmic k-form (k-differential volumes that are symmetric and additive and that correspond to the cocycle conditions for the cohomology of Lie groups [88]). The following developments suggest that these higher information groups should be the families of functions satisfying the functional equations of k-independence, a rather vague but intuitive view that can be tested in special cases.

2.2. Information Functions (Definitions)

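The information functions used in Reference [1] and the present study were originally defined by Shannon [71] and Kullback [90] and further generalized and developed by Hu Kuo Ting [91] and Yeung [92] (see also McGill [93]). These functions include entropy, denoted $H_1 = H(X; P)$; joint entropy, denoted $H_k = H(X_1, \dots, X_k; P)$; mutual information, denoted $I_2 = I(X_1; X_2; P)$; multivariate k-mutual information, denoted $I_k = I(X_1; \dots; X_k; P)$; and the conditional entropy and mutual information, denoted $Y.H_k = H(X_1, \dots, X_k | Y; P)$ and $Y.I_k = I(X_1; \dots; X_k | Y; P)$. The classical expression of these functions is the following (using $k = -1/\ln 2$, the usual bit unit):

- The Shannon-Gibbs entropy of a single variable $X$ is defined by [71]:

  $$H_1 = H(X; P) = k \sum_{x \in \mathscr{X}} p(x) \ln p(x) \tag{1}$$

  where $\mathscr{X}$ denotes the alphabet of $X$.

- The relative entropy or Kullback-Leibler divergence, which was also called “discrimination information” by Kullback [90], is defined for two probability mass functions $p(x)$ and $q(x)$ by:

  $$D(p \| q) = k \sum_{x \in \mathscr{X}} p(x) \ln \frac{q(x)}{p(x)} = H(X; p, q) - H(X; p) \tag{2}$$

  where $H(X; p, q) = k \sum_{x \in \mathscr{X}} p(x) \ln q(x)$ is the cross-entropy and $H(X; p)$ the Shannon entropy. It hence generates minus entropy as a special case, taking the deterministic constant probability $q(x) = 1$. With the convention $0 \ln 0 = 0$, $D(p \| q)$ is always positive or null.

- The joint entropy is defined for any joint product of k random variables $(X_1, \dots, X_k)$ and for a probability joint distribution $P$ by [71]:

  $$H_k = H(X_1, \dots, X_k; P) = k \sum_{x_1, \dots, x_k} p(x_1, \dots, x_k) \ln p(x_1, \dots, x_k) \tag{3}$$

  where the sum runs over $\mathscr{X}_1 \times \dots \times \mathscr{X}_k$, which denotes the alphabet of $(X_1, \dots, X_k)$.

- The mutual information of two variables $X_1, X_2$ is defined as [71]:

  $$I(X_1; X_2; P) = k \sum_{x_1, x_2} p(x_1, x_2) \ln \frac{p(x_1) p(x_2)}{p(x_1, x_2)} \tag{4}$$

  and it can be generalized to k-mutual information (also called co-information) using the alternated sums given by Equation (17), as originally defined by McGill [93] and Hu Kuo Ting [91], giving:

  $$I_k = I(X_1; \dots; X_k; P) = k \sum_{x_1, \dots, x_k} p(x_1, \dots, x_k) \ln \frac{\prod_{I \subset [k];\, \mathrm{card}(I) \text{ odd}} p_I}{\prod_{I \subset [k];\, \mathrm{card}(I) \text{ even}} p_I} \tag{5}$$

  where $p_I$ denotes the marginal probability of the variables indexed by the non-empty subset $I$. For example, the 3-mutual information is the function:

  $$I_3 = k \sum_{x_1, x_2, x_3} p(x_1, x_2, x_3) \ln \frac{p(x_1) p(x_2) p(x_3) p(x_1, x_2, x_3)}{p(x_1, x_2) p(x_1, x_3) p(x_2, x_3)} \tag{6}$$

  For $k \geq 3$, $I_k$ can be negative [91].

- The total correlation introduced by Watanabe [94], called integration by Tononi and Edelman [95] or multi-information by Studený and Vejnarova [96] and Margolin and colleagues [97], which we denote $G_k$, is defined by:

  $$G_k = G_k(X_1; \dots; X_k; P) = \sum_{i=1}^{k} H(X_i) - H(X_1, \dots, X_k) = k \sum_{x_1, \dots, x_k} p(x_1, \dots, x_k) \ln \frac{p(x_1) \cdots p(x_k)}{p(x_1, \dots, x_k)} \tag{7}$$

  For two variables, the total correlation is equal to the mutual information ($G_2 = I_2$). The total correlation has the favorable property of being a relative entropy between marginal and joint-variable and hence of being always non-negative.

- The conditional entropy of $X_1$ knowing (or given) $X_2$ is defined as [71]:

  $$X_2.H_1 = H(X_1 | X_2; P) = k \sum_{x_1, x_2} p(x_1, x_2) \ln p(x_1 | x_2) = \sum_{x_2} p(x_2) H(X_1 | X_2 = x_2; P) \tag{8}$$

  Conditional joint-entropy, $X_3.H(X_1, X_2)$ or $(X_1, X_2).H(X_3)$, is defined analogously by replacing the marginal probabilities by the joint probabilities.

- The conditional mutual information of two variables $X_1, X_2$ knowing a third $X_3$ is defined as [71]:

  $$X_3.I_2 = I(X_1; X_2 | X_3; P) = k \sum_{x_1, x_2, x_3} p(x_1, x_2, x_3) \ln \frac{p(x_1 | x_3) p(x_2 | x_3)}{p(x_1, x_2 | x_3)} \tag{9}$$

  Conditional mutual information generates all the preceding information functions as subcases, as shown by Yeung [92]. We have the theorem: if $X_3$ is the trivial (constant) variable, then it gives the mutual information; if $X_2 = X_1$, it gives conditional entropy; and if both conditions are satisfied, it gives entropy. Notably, we have $I_1 = H_1$.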
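The definitions in this list can be evaluated directly on a small joint law. The following is a minimal sketch, assuming the joint distribution is stored as a NumPy array with one axis per variable. The function names are illustrative, the k-mutual information is computed from the alternated sum of joint entropies rather than from the log-ratio form, and the conditional entropy uses the identity H(X|Y) = H(X,Y) - H(Y).

```python
import itertools
import numpy as np

def entropy(p):
    """Shannon entropy in bits; zero-probability cells are skipped (0 ln 0 = 0)."""
    p = np.asarray(p, dtype=float).ravel()
    p = p[p > 0]
    return float(-(p * np.log2(p)).sum())

def joint_entropy(joint, axes):
    """H_k of the variables on `axes`, after summing out the other axes."""
    drop = tuple(a for a in range(joint.ndim) if a not in axes)
    return entropy(joint.sum(axis=drop))

def conditional_entropy(joint, axes, given):
    """given.H(axes), computed as H(axes, given) - H(given)."""
    return joint_entropy(joint, tuple(axes) + tuple(given)) - joint_entropy(joint, given)

def mutual_information(joint, axes):
    """I_k as the alternated sum of joint entropies over non-empty subsets of `axes`."""
    return sum((-1) ** (r - 1) * joint_entropy(joint, sub)
               for r in range(1, len(axes) + 1)
               for sub in itertools.combinations(axes, r))

def total_correlation(joint, axes):
    """Sum of the marginal entropies minus the joint entropy."""
    return sum(joint_entropy(joint, (a,)) for a in axes) - joint_entropy(joint, axes)

# Two correlated fair bits (illustrative numbers, not data from the paper).
joint_xy = np.array([[0.4, 0.1],
                     [0.1, 0.4]])
i2 = mutual_information(joint_xy, (0, 1))
g2 = total_correlation(joint_xy, (0, 1))
print(f"H(X)={joint_entropy(joint_xy, (0,)):.3f}  I2={i2:.3f}  G2={g2:.3f}")
```

For two variables the last two printed values coincide, as expected from the equality of total correlation and mutual information in that case.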

We now give the few information equalities and inequalities that are of central use in the homological framework, in the information diagrams and for the estimation of the information from the data.

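We have the chain rules (see Reference [36] for proofs):

$$H(X_1, X_2; P) = H(X_1; P) + X_1.H(X_2; P) = H(X_2; P) + X_2.H(X_1; P) \tag{10}$$

which we can write more generally as (where the hat denotes the omission of the variable):

$$H(X_1, \dots, \hat{X_i}, \dots, X_{k+1}; P) = H(X_1, \dots, X_{k+1}; P) - (X_1, \dots, \hat{X_i}, \dots, X_{k+1}).H(X_i; P) \tag{11}$$

that we can write in short

$$H_{k+1} - H_k = (X_1, \dots, \hat{X_i}, \dots, X_{k+1}).H(X_i) \tag{12}$$

and, for mutual information,

$$I(X_1; \dots; \hat{X_i}; \dots; X_{k+1}; P) = I(X_1; \dots; X_{k+1}; P) + X_i.I(X_1; \dots; \hat{X_i}; \dots; X_{k+1}; P) \tag{13}$$

which we can write in short $I_{k-1} - I_k = X_k.I_{k-1}$, generating the chain rule (10) as a special case.

These two equations provide recurrence relationships that give an alternative formulation of the chain rules in terms of a chosen path on the lattice of information structures:

$$H_k = H(X_1, \dots, X_k; P) = \sum_{i=1}^{k} (X_1, \dots, X_{i-1}).H(X_i; P) \tag{14}$$

$$I_k = I(X_1; \dots; X_k; P) = I(X_1; P) - \sum_{i=2}^{k} X_i.I(X_1; \dots; X_{i-1}; P) \tag{15}$$

where, for $i = 1$, the conditioning variable is taken to be the identity of the joint operation (the greatest element of the lattice), so that the first term of the sum in (14) is $H(X_1; P)$.

We have the alternated sums or inclusion–exclusion rules [1,34,91]:

$$H_n = H(X_1, \dots, X_n; P) = \sum_{i=1}^{n} (-1)^{i-1} \sum_{I \subset [n];\, \mathrm{card}(I) = i} I_i(X_I; P) \tag{16}$$

$$I_n = I(X_1; \dots; X_n; P) = \sum_{i=1}^{n} (-1)^{i-1} \sum_{I \subset [n];\, \mathrm{card}(I) = i} H_i(X_I; P) \tag{17}$$

where $X_I$ denotes the variables $X_j$ with $j \in I$.

For example: $H_3 = I_1(X_1) + I_1(X_2) + I_1(X_3) - I_2(X_1; X_2) - I_2(X_1; X_3) - I_2(X_2; X_3) + I_3(X_1; X_2; X_3)$.

The chain rule of mutual information goes together with the following inequalities discovered by Matsuda [34]. For all random variables $X_1; \dots; X_k$ with associated joint probability distribution P, we have:

- $X_k.I(X_1; \dots; X_{k-1}; P) \geq 0$ if and only if $I(X_1; \dots; X_{k-1}; P) \geq I(X_1; \dots; X_k; P)$ (in short: $I_{k-1} \geq I_k$),

- $X_k.I(X_1; \dots; X_{k-1}; P) < 0$ if and only if $I(X_1; \dots; X_{k-1}; P) < I(X_1; \dots; X_k; P)$ (in short: $I_{k-1} < I_k$),

which fully characterize the phenomenon of information negativity as an increasing or diverging sequence of mutual information.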
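The chain rule of mutual information and the sign relation above can be checked on the classical XOR example, where two independent fair bits determine a third. This is a minimal sketch under the assumption that the joint law is a NumPy array with one axis per variable. The helper names are illustrative, and the conditional mutual information is computed directly by averaging over the values of the conditioning variable rather than through the chain rule itself.

```python
import itertools
import numpy as np

def entropy(p):
    """Shannon entropy in bits; zero-probability cells are skipped."""
    p = np.asarray(p, dtype=float).ravel()
    p = p[p > 0]
    return float(-(p * np.log2(p)).sum())

def mutual_information(joint, axes):
    """I_k as the alternated sum of joint entropies over non-empty subsets of `axes`."""
    def h(sub):
        drop = tuple(a for a in range(joint.ndim) if a not in sub)
        return entropy(joint.sum(axis=drop))
    return sum((-1) ** (r - 1) * h(sub)
               for r in range(1, len(axes) + 1)
               for sub in itertools.combinations(axes, r))

def conditional_mutual_information(joint, axes, given):
    """given.I(axes): average over the values of axis `given` of I_k under the conditional law."""
    total = 0.0
    for value in range(joint.shape[given]):
        block = np.take(joint, value, axis=given)      # this drops axis `given`
        weight = block.sum()
        if weight > 0:
            # axes located after `given` shift down by one in the block
            shifted = tuple(a - 1 if a > given else a for a in axes)
            total += weight * mutual_information(block / weight, shifted)
    return total

# X3 = X1 XOR X2, with X1 and X2 independent fair bits.
xor = np.zeros((2, 2, 2))
for x1, x2 in itertools.product((0, 1), repeat=2):
    xor[x1, x2, x1 ^ x2] = 0.25

i2 = mutual_information(xor, (0, 1))
i3 = mutual_information(xor, (0, 1, 2))
x3_i2 = conditional_mutual_information(xor, (0, 1), 2)
print(i2, i3, x3_i2)
```

In exact arithmetic this example gives $I_2 = 0$, $I_3 = -1$ and $X_3.I_2 = 1$ bit, so $I_2 - I_3 = X_3.I_2$ and the first case of the inequalities applies ($I_2 \geq I_3$), even though $I_3$ itself is negative.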

2.3. Information Structures and Coboundaries

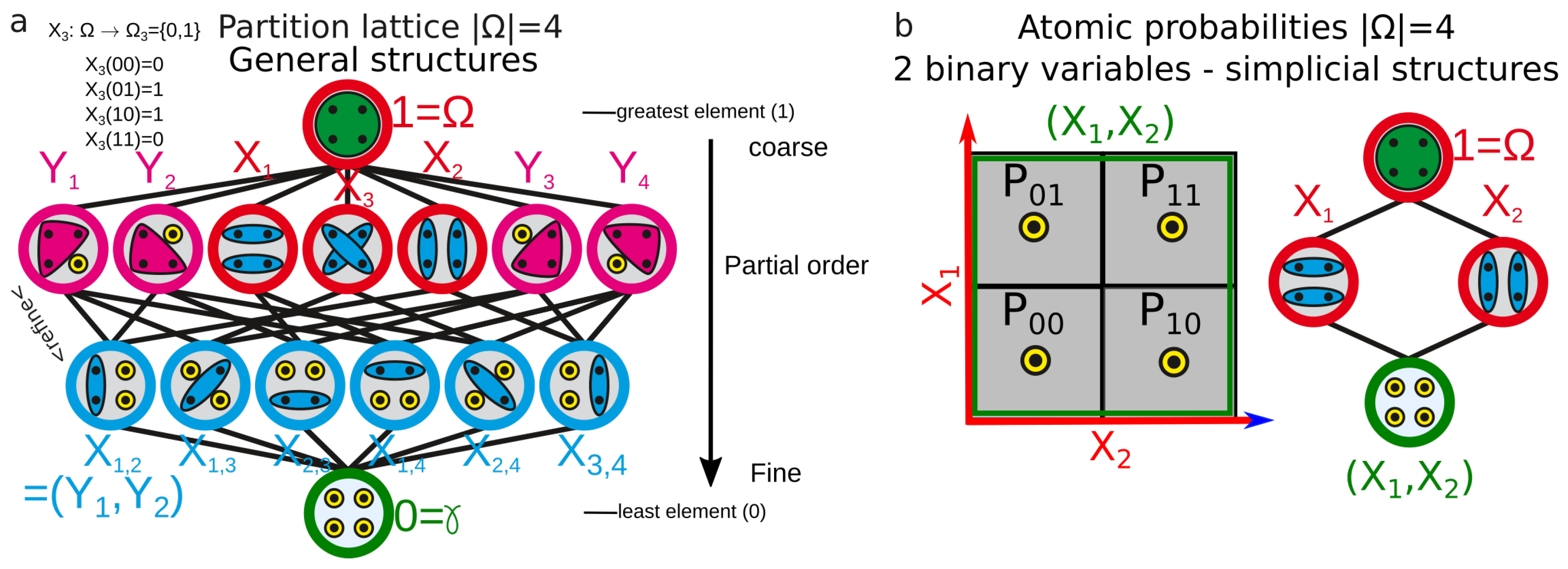

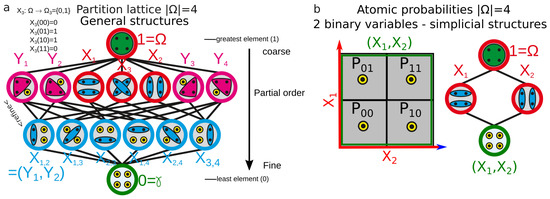

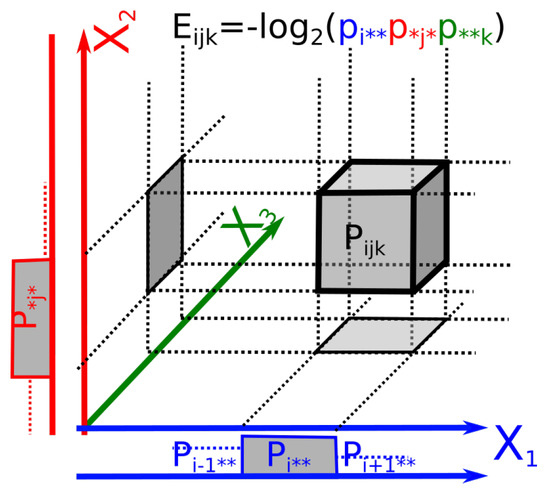

This section justifies the choice of functions and algorithm, the topological nature of the data analysis and the approximations we had to concede for the computation. In the general formulation of information cohomology, the random variables are partitions of the atomic probabilities of a finite probability space (e.g., all their equivalence classes). The joint variable $(X, Y)$ is the coarsest partition that is finer than both $X$ and $Y$; the whole lattice of partitions [98] corresponds to the lattice of joint random variables [1,99]. Then, a general information structure is defined to be the triple formed by the sample space, its lattice of partitions and the probability $P$. A more modern and general expression in category theory and topos is given in References [1,18]. The law of a joint variable is the image law of the probability P by the corresponding measurable function. Figure 1 gives a simple example of the lattice of partitions for four atomic probabilities, with the simplicial sublattice used for data analysis. Atomic probabilities are also illustrated in a figure in the associated paper [2].

Figure 1.

Example of general and simplicial information structures. (a) Example of a lattice of random variables (partitions): the lattice of partitions of atomic-elementary events for a sample space of four atomic elements (e.g., two coins), each element being denoted by a black dot in the circles representing the random variables. The joint operation of two random variables (two partitions) $X$ and $Y$ is the coarsest partition $Z$ that is finer than both $X$ and $Y$ ($Z$ divides $Y$ and $X$, or $Z$ is the greatest common divisor of $Y$ and $X$). It is represented by the coincidence of two edges of the lattice. The joint operation has an identity element (that we will denote 0 hereafter), with $(X, 0) = (0, X) = X$, and is idempotent, $(X, X) = X$. The structure is a partially ordered set (poset) with a refinement relation. (b) Illustration of the simplicial structure (sublattice) used for the data analysis.

On this general information structure, we consider the real module of all measurable functions and the conditioning-expectation by Y of measurable functions as the action of Y on the functional module, denoted , such that it corresponds to the usual definition of conditional entropy (Equation (8)). We define our complexes of measurable functions of random variables and the cochain complexes as:

where is the left action co-boundary that Hochschild proposed for associative and ring structures [100]. A similar construction of a random variable complex was given by Drumond-Cole, Park and Terilla [84,85]. We also consider the two other directly related cohomologies defined by considering a trivial left action [1] and a symmetric (left and right) action [22,101,102] of conditioning:

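- The left action Hochschild-information coboundary and cohomology (with trivial right action):

$$(\delta^k F)(X_1;X_2;\ldots;X_{k+1}) = X_1.F(X_2;\ldots;X_{k+1}) + \sum_{i=1}^{k} (-1)^{i} F(X_1;\ldots;(X_i,X_{i+1});\ldots;X_{k+1}) + (-1)^{k+1} F(X_1;\ldots;X_k)$$

This coboundary, with a trivial right action, is the usual coboundary of Galois cohomology ([103], p. 2) and in general it is the coboundary of homological algebra obtained by Cartan and Eilenberg [104] and MacLane [105] (inhomogeneous bar complex).

- The “topological-trivial” Hochschild-information coboundary and cohomology: consider a trivial left action in the preceding setting (e.g., $X.F(Y) = F(Y)$). It is the subset of the preceding case, which is invariant under the action of conditioning. We obtain the topological coboundary [1]:

$$(\delta_t^k F)(X_1;X_2;\ldots;X_{k+1}) = F(X_2;\ldots;X_{k+1}) + \sum_{i=1}^{k} (-1)^{i} F(X_1;\ldots;(X_i,X_{i+1});\ldots;X_{k+1}) + (-1)^{k+1} F(X_1;\ldots;X_k)$$

- The symmetric Hochschild-information coboundary and cohomology: as introduced by Gerstenhaber and Schack [22], Kassel [102] (p. 13) and Weibel [101] (chap. 9), we consider a symmetric (left and right) action of conditioning, that is, $X.F(Y) = F(Y).X$. The left action module is essentially the same as considering a symmetric action bimodule [22,101,102]. We hence obtain the following symmetric coboundary $\delta_*^k$:

$$(\delta_*^k F)(X_1;X_2;\ldots;X_{k+1}) = X_1.F(X_2;\ldots;X_{k+1}) + \sum_{i=1}^{k} (-1)^{i} F(X_1;\ldots;(X_i,X_{i+1});\ldots;X_{k+1}) + (-1)^{k+1} F(X_1;\ldots;X_k).X_{k+1}$$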

Based on these definitions, Baudot and Bennequin [1] computed the first cohomology class in the left action Hochschild-information cohomology case and the coboundaries in higher degrees. We introduce here the symmetric case and detail the higher-degree cases by direct specialization of the co-boundary formulas, such that it appears that information functions and chain rules are homological by nature. For notation clarity, we omit the probability in the writing of the functions and, when specifically stated, replace their notation F by their usual corresponding informational function notation .

2.3.1. First Degree ()

For the first degree , we have the following results:

- The left 1-co-boundary is $(\delta^1 F)(X_1;X_2) = X_1.F(X_2) - F(X_1,X_2) + F(X_1)$. The 1-cocycle condition $\delta^1 F = 0$ gives $F(X_1,X_2) = F(X_1) + X_1.F(X_2)$, which is the chain rule of information shown in Equation (10). Then, following Kendall [106] and Lee [107], it is possible to recover the functional equation of information and to characterize uniquely, up to the arbitrary multiplicative constant k, the entropy (Equation (1)) as the first class of cohomology [1,18]. This main theorem allows us to obtain the other information functions in what follows. Marcolli and Thorngren [20] and the group of Leinster, Fritz and Baez [81,82] independently obtained an analogous result using a characteristic one Witt construction and a measure-preserving function, respectively. In these various theoretical settings, this result extends to relative entropy [1,20,82] and Tsallis entropies [18,20].

- The topological 1-coboundary is $(\delta_t^1 F)(X_1;X_2) = F(X_2) - F(X_1,X_2) + F(X_1)$, which corresponds to the definition of mutual information $I(X_1;X_2) = H(X_1) + H(X_2) - H(X_1,X_2)$ and hence $I_2$ is a topological 1-coboundary.

- The symmetric 1-coboundary is $(\delta_*^1 F)(X_1;X_2) = X_1.F(X_2) - F(X_1,X_2) + F(X_1).X_2$, which corresponds to the negative of the pairwise mutual information, $H(X_2|X_1) - H(X_1,X_2) + H(X_1|X_2) = -I(X_1;X_2)$, and hence $I_2$ is a symmetric 1-coboundary. Moreover, the 1-cocycle condition $\delta_*^1 F = 0$ characterizes functions satisfying $F(X_1,X_2) = X_1.F(X_2) + F(X_1).X_2$, which corresponds to the information pseudo-metric discovered by Shannon [23], Rajski [24], Zurek [25] and Bennett [26] and has further been applied for hierarchical clustering and finding categories in data by Kraskov and Grassberger [27]: $V(X_1;X_2) = H(X_1|X_2) + H(X_2|X_1) = H(X_1,X_2) - I(X_1;X_2)$. Therefore, up to an arbitrary scalar multiplicative constant k, the information pseudo-metric is the first class of symmetric cohomology. This pseudo-metric is represented in Figure 3. It generalizes to pseudo k-volumes that we define by $V_k = H_k - I_k$ (particularly interesting symmetric nonnegative functions computed by the provided software).
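The three first-degree statements above can be checked numerically on a small example. The sketch below assumes the standard inhomogeneous bar-complex form of the coboundaries, with conditioning as the action, applied to $F = H$; the joint law `p` and the helper names are hypothetical and chosen only for illustration.

```python
from math import log2

def entropy(p, idx):
    """Shannon entropy (bits) of the marginal of p on the positions in idx."""
    marg = {}
    for x, px in p.items():
        key = tuple(x[i] for i in idx)
        marg[key] = marg.get(key, 0.0) + px
    return -sum(q * log2(q) for q in marg.values() if q > 0)

def cond_entropy(p, idx, given):
    """H(X_idx | X_given), computed from its definition as an average over
    the values of the conditioning variables (the action of X_given)."""
    weights = {}
    for x, px in p.items():
        g = tuple(x[i] for i in given)
        weights[g] = weights.get(g, 0.0) + px
    total = 0.0
    for g, w in weights.items():
        cond = {x: px / w for x, px in p.items()
                if tuple(x[i] for i in given) == g}
        total += w * entropy(cond, idx)
    return total

# Hypothetical joint law of two dependent binary variables (X1, X2).
p = {(0, 0): 0.4, (0, 1): 0.1, (1, 0): 0.2, (1, 1): 0.3}

H1, H2, H12 = entropy(p, (0,)), entropy(p, (1,)), entropy(p, (0, 1))
I12 = H1 + H2 - H12  # pairwise mutual information

# Left action: X1.H(X2) - H(X1,X2) + H(X1); vanishes by the chain rule.
left = cond_entropy(p, (1,), (0,)) - H12 + H1
# Trivial action: H(X2) - H(X1,X2) + H(X1); equals I(X1;X2).
topological = H2 - H12 + H1
# Symmetric action: X1.H(X2) - H(X1,X2) + X2.H(X1); equals -I(X1;X2).
symmetric = cond_entropy(p, (1,), (0,)) - H12 + cond_entropy(p, (0,), (1,))
# Information pseudo-metric V = H(X1|X2) + H(X2|X1) = H(X1,X2) - I(X1;X2).
pseudo_metric = cond_entropy(p, (0,), (1,)) + cond_entropy(p, (1,), (0,))

print(left, topological - I12, symmetric + I12, pseudo_metric - (H12 - I12))
```

All four printed quantities should vanish up to floating-point rounding; the conditional entropies are computed from their definition rather than from the chain rule, so the first check is not circular.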

2.3.2. Second Degree ()

For the second degree , we have the following results:

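- The left 2-co-boundary is $(\delta^2 F)(X_1;X_2;X_3) = X_1.F(X_2;X_3) - F((X_1,X_2);X_3) + F(X_1;(X_2,X_3)) - F(X_1;X_2)$, which for $F = I_2$ corresponds to minus the 3-mutual information, $I(X_2;X_3|X_1) - I(X_1,X_2;X_3) + I(X_1;X_2,X_3) - I(X_1;X_2) = -I(X_1;X_2;X_3)$, and hence $I_3$ is a left 2-coboundary.

- The topological 2-coboundary is $(\delta_t^2 F)(X_1;X_2;X_3) = F(X_2;X_3) - F((X_1,X_2);X_3) + F(X_1;(X_2,X_3)) - F(X_1;X_2)$, which corresponds in information to $I(X_2;X_3) - I(X_1,X_2;X_3) + I(X_1;X_2,X_3) - I(X_1;X_2) = 0$ and hence the topological 2-coboundary is always null-trivial.

- The symmetric 2-coboundary is $(\delta_*^2 F)(X_1;X_2;X_3) = X_1.F(X_2;X_3) - F((X_1,X_2);X_3) + F(X_1;(X_2,X_3)) - F(X_1;X_2).X_3$, which corresponds in information to $I(X_2;X_3|X_1) - I(X_1,X_2;X_3) + I(X_1;X_2,X_3) - I(X_1;X_2|X_3) = 0$ and hence the symmetric 2-coboundary is always null-trivial.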
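The second-degree statements can be illustrated in the same way. The sketch below again assumes the bar-complex form of the coboundaries, now applied to $F = I_2$ on three variables; the random joint law and the helper `mi` are hypothetical. Since every term is expanded into joint entropies, the checks amount to a numerical confirmation of the underlying algebraic identities.

```python
import random
from math import log2

def entropy(p, idx):
    """Shannon entropy (bits) of the marginal of p on the positions in idx."""
    marg = {}
    for x, px in p.items():
        key = tuple(x[i] for i in idx)
        marg[key] = marg.get(key, 0.0) + px
    return -sum(q * log2(q) for q in marg.values() if q > 0)

def union(*parts):
    """Sorted tuple of all variable positions appearing in the given tuples."""
    return tuple(sorted(set().union(*parts)))

def mi(p, a, b, c=()):
    """I(X_a; X_b | X_c) from joint entropies (empty c: no conditioning)."""
    return (entropy(p, union(a, c)) + entropy(p, union(b, c))
            - entropy(p, union(a, b, c)) - entropy(p, union(c)))

# Hypothetical random joint law of three binary variables (X1, X2, X3).
rng = random.Random(0)
w = [rng.random() for _ in range(8)]
p = {(a, b, c): w[4 * a + 2 * b + c] / sum(w)
     for a in (0, 1) for b in (0, 1) for c in (0, 1)}

X1, X2, X3 = (0,), (1,), (2,)
I3 = mi(p, X1, X2) - mi(p, X1, X2, X3)  # 3-mutual information

middle = -mi(p, (0, 1), X3) + mi(p, X1, (1, 2))
left = mi(p, X2, X3, X1) + middle - mi(p, X1, X2)             # expected: -I3
topological = mi(p, X2, X3) + middle - mi(p, X1, X2)          # expected: 0
symmetric = mi(p, X2, X3, X1) + middle - mi(p, X1, X2, X3)    # expected: 0

print(left + I3, topological, symmetric)
```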

2.3.3. Third Degree ()

For the third degree , we have the following results:

- The left 3-co-boundary is , which corresponds in information to and hence the left 3-coboundary is always null-trivial.

- The topological 3-coboundary is , which corresponds in information to and hence is a topological 3-coboundary.

- The symmetric 3-coboundary is , which corresponds in information to and hence is a symmetric 3-coboundary.

2.3.4. Higher Degrees

For , we obtain and and . For arbitrary k, the symmetric coboundaries are just the opposite of the topological coboundaries . It is possible to generalize to arbitrary degrees [1] by remarking that:

- For even degrees : we have , and then with boundary terms. In conclusion, we have:

- For odd degrees : and then with boundary terms. In conclusion, we have:

In References [2,108] (Theorem 2), we show that the mutual independence of n variables is equivalent to the vanishing of all $I_k$ functions for all $2 \le k \le n$. As a probabilistic interpretation and conclusion, the information cohomology hence quantifies statistical dependences at all degrees and the obstruction to factorization. Moreover, k-independence coincides with cocycles. We therefore expect that the higher cocycles of information, conjectured to be polylogarithmic forms [1,77,78], are characterized by the functional equations $I_k = 0$ and quantify statistical k-independence.

3. Simplicial Information Cohomology

3.1. Simplicial Substructures of Information

The general information structure, relying on the information functions defined on the whole lattice of partitions, encompasses all possible statistical dependences and relations, since by definition it considers all possible equivalence classes on a probability space. One could hence expect this general structure to provide a promising theoretical framework for classification tasks on data and this is probably true in theory. However, this general case hardly allows any interesting computational investigation, as it implies an exhaustive exploration of computational complexity following Bell’s combinatorics in $\mathcal{O}(\exp(\exp(N^n)))$ for n N-ary variables. This fact was already remarked in the study of aggregation for artificial intelligence by Lamarche-Perrin and colleagues [109]. At each order k, the number of k-joint-entropy and k-mutual-information to evaluate is given by Stirling numbers of the second kind $S(n,k)$ that sum to the Bell number $B_n$, $B_n = \sum_{k=0}^{n} S(n,k)$. For example, considering 16 variables that can take 8 values each, we have $8^{16} = 2^{48} \approx 2.8 \times 10^{14}$ atomic probabilities and the partition lattice of variables exhibits around elements to compute. This computational obstacle can be decreased by considering the sample size m, which is the number of trials, repetitions or points used to effectively estimate the empirical probability. It restricts the computation to , which remains insurmountable in practice with our current classical Turing machines. To circumvent this computational barrier, data analysis is developed on the simplest and oldest subcase of Hochschild cohomology, the simplicial cohomology, which we hence call the simplicial information cohomology and structure and which corresponds to a subcase of cohomology and structure introduced previously (see Figure 1b). It corresponds to Examples 1 and 4 in Reference [1], and to the Python scripts shared on GitHub (cf. Supplementary Materials). For simplicity, we note also the simplicial information structure , , as we will not come back to the general setting. 
Joint and meet operations on random variables are the usual joint-union and meet-intersection of Boolean algebra and define two opposite-dual monoids, freely generating the lattice of all subsets and its dual. The combinatorics of the simplicial information structure follow binomial coefficients and, for each degree k in an information structure of n variables, we have $\binom{n}{k}$ elements that are in one-to-one correspondence with the k-faces (the k-tuples) of the n-simplex of random variables (or its barycentric subdivisions). It is a (simplicial) substructure of the general structure, since any finite lattice is a sub-lattice of the partition lattice [110]. This lattice embedding and the fact that simplicial cohomology is a special case of Hochschild cohomology can also be inferred directly from their coboundary expression and has been explicitly formalized in homology: notably, Gerstenhaber and Schack showed that a functor, denoted , induces an isomorphism between simplicial and Hochschild cohomology (see [111] for details). A simplicial complex of measurable functions is any subcomplex of this simplex with and any simplicial complex can be realized as a subcomplex of a simplex (see p. 296 in Reference [112]). The information landscapes presented in Section 3.3 illustrate an example of such a lattice/information structure. Moreover in this ordinary homological structure, the degree obviously coincides with the dimension of the data space (the data space is in general , the space of “co-ordinate” values of the variables). This homological (algebraic, geometric and combinatorial) restriction to the simplicial subcase can have some important statistical consequences. 
In practice, whereas the consideration of the partition lattice ensured that no reasonable (up to logical equivalence) statistical dependences could be missed (since all the possible equivalence classes on the atomic probabilities were considered), the monoidal simplicial structure unavoidably misses some possible statistical dependences, as shown and exemplified by James and Crutchfield [51].
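To give a feeling for the gap between the two combinatorics discussed in this section, the following sketch compares the number of partitions of a set of m atoms (the Bell number, obtained as a sum of Stirling numbers of the second kind) with the number of subsets of n variables (a sum of binomial coefficients). The function names are illustrative and are not taken from the scripts accompanying the paper.

```python
from math import comb

def stirling2_row(n):
    """Stirling numbers of the second kind S(n, k) for k = 0..n."""
    row = [1]  # S(0, 0) = 1
    for m in range(1, n + 1):
        new = [0] * (m + 1)
        for k in range(1, m + 1):
            # Recurrence S(m, k) = k * S(m-1, k) + S(m-1, k-1)
            new[k] = (k * row[k] if k < m else 0) + row[k - 1]
        row = new
    return row

def bell(n):
    """Bell number: number of partitions of a set with n elements."""
    return sum(stirling2_row(n))

def simplicial_count(n):
    """Number of elements of the subset lattice on n variables."""
    return sum(comb(n, k) for k in range(n + 1))  # equals 2**n

# Partition lattice on m atoms versus subset lattice on n variables.
for size in (2, 4, 8, 16):
    print(size, bell(size), simplicial_count(size))
```

For four atoms the partition lattice has 15 elements, as in the example of Figure 1a. Note that in the general structure the Bell number applies to the number of atomic probabilities, which itself grows exponentially with the number of variables, whereas the simplicial count depends only on the number of variables.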

3.2. Topological Self and Free Energy of K-Body Interacting System-Poincaré-Shannon Machine

Topological Self and Free Energy of K-Body Interacting Systems

The basic idea behind the development of topological quantum field theories [113,114,115] was to define the action and energy functionals on a purely topological ground, independently of any metric assumptions and to derive from this the correlation functions or partition functions. Here, in an elementary model for applied purposes, we define, in the special case of classical and discrete probability, the k-mutual information (which generalizes the correlation functions to nonlinear relations [116]) as the contribution of the k-body interactions to the energy functional. Some further observations support such a definition: (i) as stated in Reference [1], the signed mutual informations defining energy are sub-harmonic, a kind of weak convexity; (ii) in the next sections, we define the paths of information and show that they are in bijection with the finite symmetric group; (iii) from the empirical point of view, the results in Section 3.4.5 show that these energy functionals estimated on real data behave as expected for usual k-body homogeneous formalism such as the van der Waals model or more refined density functional theory (DFT) [117,118]. Given in the context of simplicial structures, these definitions generalize to the case of a partitions lattice and altogether provide the usual thermodynamical and machine-learning expressions and interpretation of mutual information quantities: some new methods free of metric assumptions. There are two qualitatively and formally different components in the $I_k$, which give the two following definitions.

Definition 1.

Self-internal energy (definition): for , and their sum in an information structure expressed in Equation (16), namely, , are a self-interaction component, since they sum over marginal information entropy . We call the first-dimension mutual-information component the self-information or internal energy, in analogy to usual statistical physics and notably DFT:

Note that in the present context, which is discrete and where the interactions do not depend on a metric, the self-interaction does not diverge. Such a divergence is a usual problem with metric continuous formalisms and was the original motivation for the infinite corrections of regularization and renormalization, considered by Feynman and Dirac as the mathematical defect of the formalism [44,45].

Definition 2.

k-free-energy and total-free-energy: for , and their sum in an information structure (Equation 16) quantify the contribution of the k-body interactions. We call the kth dimension mutual-information component given in Equation (5) the k-free-information-energy. We call the (cumulative) sum over dimensions of these k-free-information-energies starting at pairwise interactions (dimension 2), the total n-free-information-energy and denote it :

The total free energy is the total correlation (Equation (7)) introduced by Watanabe in 1960 [94] that quantifies statistical dependence in the work of Studený and Vejnarova [96] and Margolin and colleagues [97] and among other examples consciousness in the work of Tononi and Edelman [95]. In agreement with the results of Baez and Pollard in their study of biological dynamics using out-of-equilibrium formalism [32] and the appendix of the companion paper on Bayes free energy [2], the total free energy is a relative entropy. The consideration that free energy is the peculiar case of total correlation within the set of relative entropies accounts for the fact that the free energy shall be a symmetric function of the variables associated to the various bodies (e.g., in the pairwise interaction case). Moreover, whereas the energy component can be negative, the total energy component is always non-negative. Each term in the free energy can be understood as a free-energy correction accounting for the k-body interactions.
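The identification of the total free energy with the total correlation can be illustrated on a small case. The sketch below uses a parity (XOR) triple of binary variables, chosen here as an assumption because it has a negative 3-mutual information, and compares the total correlation (sum of marginal entropies minus joint entropy) with the alternating sum of the k-mutual informations for k ≥ 2. The symbols `U` and `G` are used as shorthand for the self-internal energy and the total free energy.

```python
from itertools import combinations
from math import log2

def entropy(p, idx):
    """Shannon entropy (bits) of the marginal of p on the positions in idx."""
    marg = {}
    for x, px in p.items():
        key = tuple(x[i] for i in idx)
        marg[key] = marg.get(key, 0.0) + px
    return -sum(q * log2(q) for q in marg.values() if q > 0)

def info(p, S):
    """k-mutual information of the variables in S, as the alternating sum
    of the joint entropies of all non-empty subsets of S."""
    return sum((-1) ** (r + 1) * entropy(p, T)
               for r in range(1, len(S) + 1) for T in combinations(S, r))

# Parity triple: X3 = X1 XOR X2, with X1 and X2 uniform and independent.
n = 3
p = {(a, b, a ^ b): 0.25 for a in (0, 1) for b in (0, 1)}

U = sum(entropy(p, (i,)) for i in range(n))   # sum of marginal entropies
H = entropy(p, tuple(range(n)))               # joint entropy
G_total_correlation = U - H                   # total correlation
G_alternating = sum((-1) ** k * info(p, S)    # k-body contributions, k >= 2
                    for k in range(2, n + 1)
                    for S in combinations(range(n), k))

print(U, H, G_total_correlation, G_alternating, info(p, (0, 1, 2)))
```

In this example the pairwise terms vanish and the whole free energy comes from the 3-body term, which is negative, while the total remains non-negative.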

Entropy is given by the alternated sums of information (Equation (16)), which then read as the usual isotherm thermodynamic relation:

This information-theoretic formulation of thermodynamic relation follows Jaynes [9,10], Landauer [11], Wheeler [119] and Bennett’s [14] original work and is general in the sense that it is finite and discrete and holds independently of the assumption of the system being in equilibrium or not (i.e., for any finite probability). In more probabilistic terms, it does not assume that the variables are identically distributed—a required condition for the application of classical central limit theorems (CLTs) to obtain the normal distributions in the asymptotic limit [120]. In the special case where one postulates that the probability follows the equilibrium Gibbs distribution, which is also the maximum entropy distribution [121,122], the expression of the joint entropy () allows recovery of the equilibrium fundamental relation, as usually achieved in statistical physics (see Adami and Cerf [123] and Kapranov [124] for more details). Explicitly, let us consider Gibbs’ distribution:

where is the energy of the elementary-atomic probability , is Boltzmann’s constant, T is the temperature and is the partition function, such that . Since equals the thermodynamic entropy function S up to the arbitrary Landauer constant factor , , the entropy for the Gibbs distribution gives:

which gives the expected thermodynamical relation:

where G is the free energy .
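The equilibrium relation recovered above can be checked numerically. The sketch below assumes the standard conventions, which are not spelled out in the surviving text: Gibbs weights proportional to exp(-E/(k_B T)), free energy G = -k_B T ln Z, and S = k_B ln(2) H with H in bits. The energy levels, the temperature and the unit choice k_B = 1 are hypothetical.

```python
from math import exp, log, log2

k_B = 1.0                          # Boltzmann constant in arbitrary units (assumption)
T = 2.0                            # temperature (hypothetical value)
energies = [0.0, 1.0, 1.0, 3.0]    # hypothetical energies of the atomic events

# Gibbs distribution and partition function.
Z = sum(exp(-E / (k_B * T)) for E in energies)
p = [exp(-E / (k_B * T)) / Z for E in energies]

H_bits = -sum(q * log2(q) for q in p)             # Shannon entropy in bits
S = -k_B * sum(q * log(q) for q in p)             # thermodynamic entropy
U = sum(q * E for q, E in zip(p, energies))       # mean (internal) energy
G = -k_B * T * log(Z)                             # free energy (standard definition)

print(S, (U - G) / T, k_B * log(2) * H_bits)
```

The three printed values should coincide up to rounding, which is the relation S = (U - G)/T together with the conversion between bits and thermodynamic units.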

In the general case of arbitrary random variables (not necessarily i.i.d.) and discrete probability space, the identification of marginal information with internal energy

implies by direct algebraic calculus that:

where the marginal probability is the sum over all probabilities for which . It is hence tempting to identify the elementary atomic energies with the elementary marginal information . This is achieved uniquely by considering that such an elementary energy function must satisfy the additivity axiom (extensivity): (), which is the functional equation of the logarithm. The original proof goes back at least to Kepler; an elementary version was given by Erdős [125] and, in information theoretic terms, it can be found in the proofs of uniqueness of the “single event information function” by Aczél and Daróczy ([126], p. 3). It establishes the following theorem:

Theorem 1.

Given a simplicial information structure, the elementary energies satisfying the extensivity axiom are the functions:

where k is an arbitrary constant set to for units in bits.

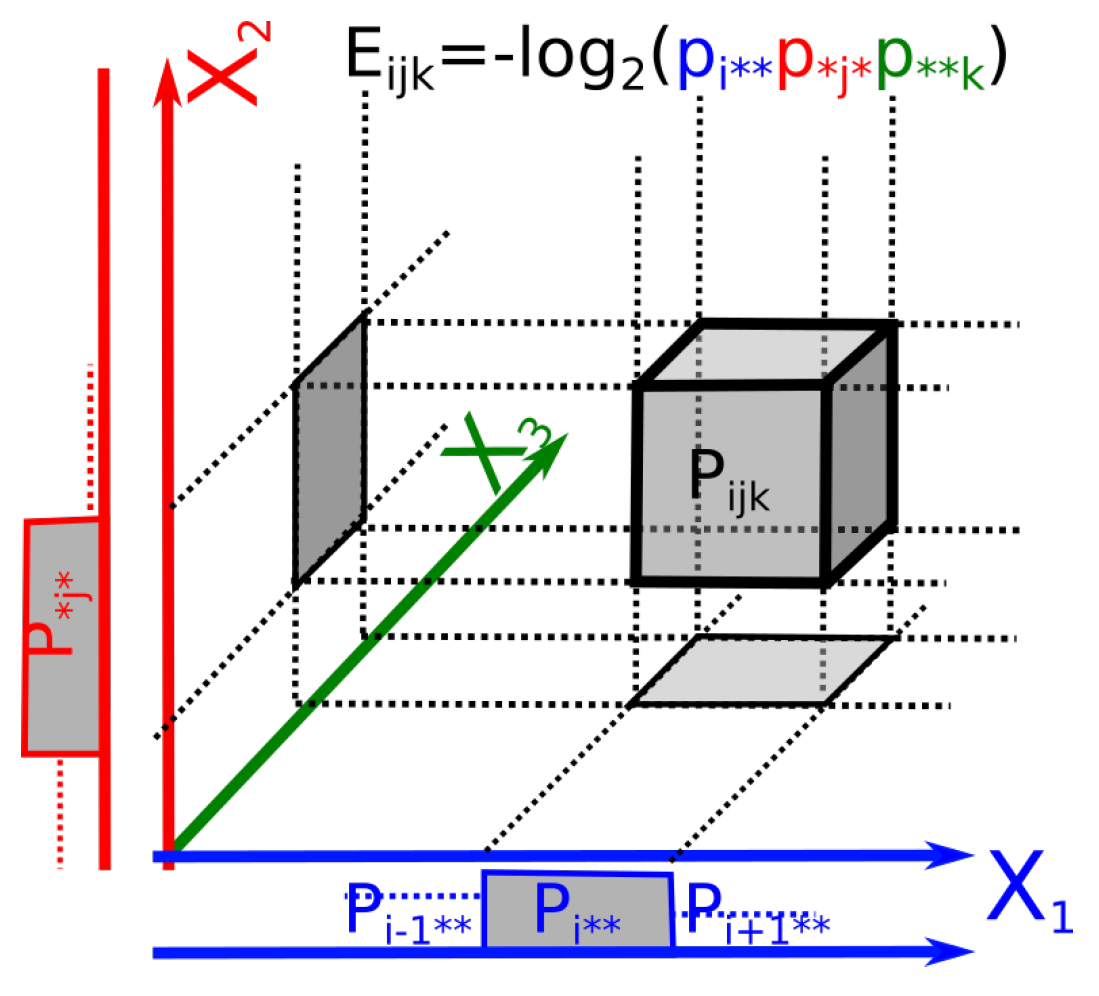

The geometric meaning of these elementary energies as log of marginal elementary probability volumes (locally Euclidean) is illustrated in Figure 2 and further underlines that are volume corrections accounting for the statistical dependences among marginal variables.

Figure 2.

Elementary energy as logarithm of locally Euclidean probability volumes. Example of an elementary energy associated to a probability ( variables). The histograms of the marginal distributions of each variable are plotted beside the axes.

Examples: (i) In the example of three binary random variables (, three variables of Bernoulli) illustrated in the figure of the associated paper [2], we have , and in the configuration of negative-entangled-Borromean information of the figure of the associated paper [2], we obtain in bit units and similarly and we hence recover bits. Note that the limit avoids singularity of elementary energies.

(ii) In the special case of identically distributed variables, , we have , and hence the marginal Gibbs distribution:

(iii) For independent identically distributed variables (non-interacting), we have and hence:

(iv) Considering the variables to be the variables of the phase space, with one variable of position and one variable of momentum per body (denoted for the kth body), it is possible to re-express the semi-classical formalism, according to which the entropy formulation is (p. 22, [127]):

This is achieved by identifying the internal and free energy as follows:

This identifies the elementary volumes/probabilities with the Planck constant, the quantum of action (the consistency in the units is realized in Section 3.4.3 by the introduction of time). The quantum of action can be illustrated by considering in Figure 2 that it is the surface of the square/rectangle for two conjugate variables (considered as position and momentum). In this setting, quantifies the non-extensivity of the volume in the phase-space due to interactions or in other words, the mutual information accounts for the consideration of the dependence of the subsystems considered as opened and exchanging energy. As noted by Baez and Pollard, the relative entropy provides a quantitative measure of how far from equilibrium the whole system is [32]. The basic principle of this expression of information theory in physics has been known at least since Jaynes’s work [9,10].

As a conclusion, information topology applies—without imposing metric, symplectic or contact structures—to the physical formalism of n-body interacting systems relying on empirical measures. Considering the or dimensions (degrees of freedom) of a configuration or a phase space as random variables, it is possible to recover the (semi)classical statistical physics formalism. It is also interesting to discuss the status of the analog of the temperature variable in the present formalism which is played by the graining, which is the size of the alphabet of a variable . In usual thermodynamics we have and to stay consistent, temperature shall be a functional inverse of the graining N, the lowest temperature being the finest grain (large N) and the highest temperature being the coarsest grain (small N).

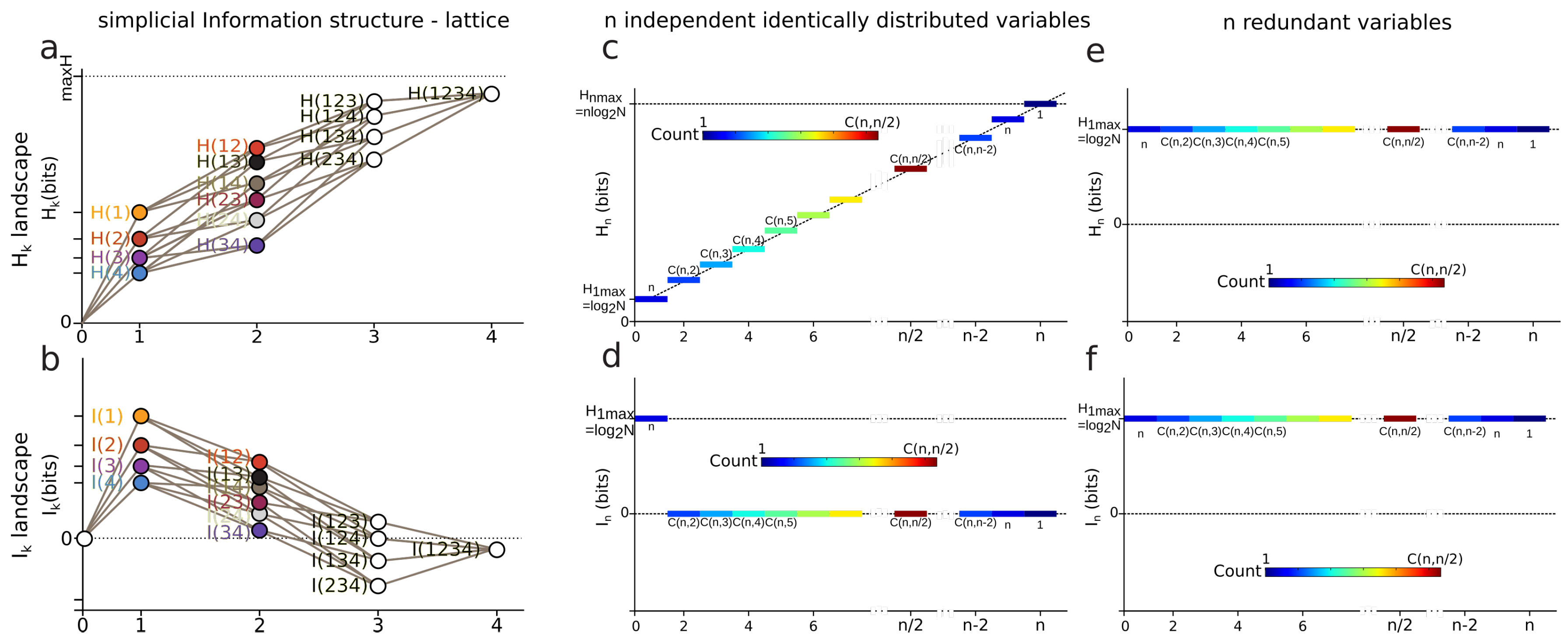

3.3. k-Entropy and k-Information Landscapes

Definition 3.

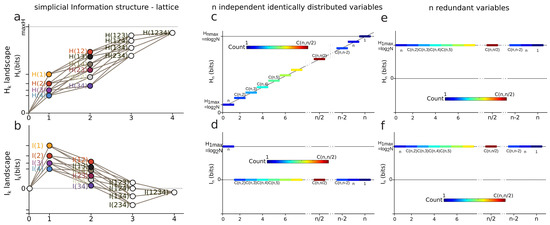

Information Landscapes: Information landscapes are a representation of the (semi)lattice of information structures where each element is represented as a function of its corresponding value of entropy or mutual information. In abscissa are the dimensions k and in ordinate the values of the information functions of a given subset of k variables.

In data science terms, these landscapes provide a visualization of the potentially high-dimensional structure of the data points. In information theoretic terms, they provide a representation of Shannon’s work on lattices [23], further developed by Han [128]. $H_k$ and $I_k$, as real continuous functions, provide a ranking of the lattices at each dimension k. It is the ranking (i.e., the relative values of information) which matters and comes out of the homological approach, rather than the absolute values. The principle of $H_k$ and $I_k$ landscapes is illustrated in Figure 3 for . $H_k$ and $I_k$ analyze and quantify the variability-randomness and statistical dependences at all dimensions k, respectively, from 1 to n, n being the total number of variables under study. The $H_k$ landscape represents the values of joint entropy for all k-tuples of variables as a function of the dimension k, the number of variables in the k-tuple, together with the associated edges–paths of the lattice (in grey). The $I_k$ landscape represents the values of mutual information for all k-tuples of variables as a function of the dimension k, which is the number of variables in the k-tuple. Figure 3 gives two theoretical extremal examples of such landscapes: one for independent and identically distributed variables (totally disordered) and one for fully dependent identically distributed variables (totally ordered). The degeneracy of $H_k$ and $I_k$ values is given by the binomial coefficient (color code in Figure 3), hence allowing one to derive the normal exact expression of the information landscapes in the asymptotic infinite dimensional limit ($n \to \infty$) by application of the de Moivre–Laplace theorem. These are theoretical extremal examples: $H_k$ and $I_k$ landscapes effectively computed and estimated on biological data with a finite sample are shown in References [2,3,108] and in practice the finite sample size (m) may impose some bounds on the landscapes.

Figure 3.

Entropy and information landscapes. (a) Illustration of the principle of an entropy landscape and (b) of a mutual information landscape for random variables. The lattice of the simplicial information structure is depicted with grey lines. Theoretical examples of entropy and information landscapes. (c,d) and landscapes for n independent and identically distributed variables. The degeneracy of and values is represented by a color code: the number of k-tuples having the same information value. (e,f) and landscapes for n fully redundant variables. Such variables are equivalent from the information point of view; they are identically distributed and fully dependent.
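The two extremal landscapes can be reproduced with a few lines of code. The following sketch is a minimal illustration, not the implementation used for the figures: it enumerates all k-subsets of n = 4 binary variables (a value assumed here for illustration) and evaluates the joint entropy and the k-mutual information on each, for independent uniform variables and for fully redundant variables.

```python
from itertools import combinations, product
from math import comb, log2

def entropy(p, idx):
    """Shannon entropy (bits) of the marginal of p on the positions in idx."""
    marg = {}
    for x, px in p.items():
        key = tuple(x[i] for i in idx)
        marg[key] = marg.get(key, 0.0) + px
    return -sum(q * log2(q) for q in marg.values() if q > 0)

def info(p, S):
    """k-mutual information as an alternating sum of joint entropies."""
    return sum((-1) ** (r + 1) * entropy(p, T)
               for r in range(1, len(S) + 1) for T in combinations(S, r))

def landscape(p, n, f):
    """Map each dimension k to the list of values of f on all k-subsets."""
    return {k: [f(p, S) for S in combinations(range(n), k)]
            for k in range(1, n + 1)}

n = 4  # number of binary variables (hypothetical choice)
iid = {x: 1 / 2 ** n for x in product((0, 1), repeat=n)}   # independent, uniform
redundant = {(0,) * n: 0.5, (1,) * n: 0.5}                 # all variables equal

H_iid, I_iid = landscape(iid, n, entropy), landscape(iid, n, info)
H_red, I_red = landscape(redundant, n, entropy), landscape(redundant, n, info)

for k in range(1, n + 1):
    # Each dimension k carries comb(n, k) values (the degeneracy).
    print(k, len(H_iid[k]), H_iid[k][0], I_iid[k][0], H_red[k][0], I_red[k][0])
```

In the independent case the entropy grows linearly with k and all k-mutual informations vanish for k ≥ 2; in the fully redundant case both functions stay at one bit in every dimension.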

3.4. Information Paths and Minimum Free Energy Complex

In this section we establish that information landscapes and paths directly encode the basic equalities, inequalities and functions of information theory and allow us to obtain the minimum free energy complex that we estimate on data.

3.4.1. Information Paths (Definition)

Definition 4.

Information Paths: On the discrete simplicial information lattice , we define a path of degree k as a sequence of edges of the lattice that begins at the least element of the lattice (the identity constant “0”), travels along edges from vertex to vertex of increasing dimension and ends at the greatest element of the lattice of dimension k. Information paths are defined on both joint-entropy and meet-mutual-information semi-lattices and the usual joint-entropy and mutual-information functions are defined on each element of such paths. The entropy path and information path of degree k are denoted and , respectively and the set of all information paths is denoted for the entropy paths and for the mutual-information paths.

We have the theorem:

Theorem 2.

The two sets of all entropy paths and of all mutual-information paths in the simplicial information structure on n variables are both in bijection with the symmetric group $S_n$. Notably, there are $n!$ information paths of each kind.

Proof.

By simple enumeration: a node of dimension $m$ is connected to $n-m$ nodes of dimension $m+1$, so the number of paths from the least element to the greatest element is $n \cdot (n-1) \cdots 1 = n!$, hence the conclusion. □

A given path can be identified with a permutation or a total order by extracting the missing variable in a previous node when increasing the dimension, for example the mutual-information path in : can be noted as the permutation :

We note an information path with arrows, giving for the previous example . These paths shall be seen as the automorphisms of and the space of entropy and mutual-information paths can be endowed with the structure of two opposite symmetric groups and . The equivalence of the set of paths and symmetric group only holds for the subcase of simplicial structures and the information paths in the lattice of partition are obviously much richer. More precisely, the subset of simplicial information paths in the lattice of partitions corresponds to the automorphisms of the lattice. It is known that the finite symmetric group is the automorphism group of the finite partition lattice [129]. The geometrical realization of information paths and consists of two dual permutohedra (see Postnikov [130]) and gives the informational version of the work of Matúš on conditional probability and permutohedra [131].
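The bijection between paths and permutations in the simplicial case can be made concrete by direct enumeration. The sketch below, with illustrative function names, counts the maximal chains of the subset lattice on n variables and builds the path associated with each permutation by adding the variables in the order given by the permutation.

```python
from itertools import permutations
from math import factorial

def count_paths(n):
    """Count paths from the empty set to {0, ..., n-1} in the subset lattice,
    moving along edges that add exactly one variable."""
    full = frozenset(range(n))

    def walk(node):
        if node == full:
            return 1
        return sum(walk(node | {i}) for i in full - node)

    return walk(frozenset())

def path_from_permutation(perm):
    """Path whose k-th node is the set of the first k variables of perm."""
    return tuple(frozenset(perm[:k]) for k in range(len(perm) + 1))

n = 4
paths = {path_from_permutation(s) for s in permutations(range(n))}
print(count_paths(n), len(paths), factorial(n))
```

Distinct permutations give distinct paths, and the number of paths found by enumeration equals n!, as stated in Theorem 2.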

3.4.2. Derivatives, Inequalities and Conditional Mutual-Information Negativity

Derivatives of information paths:

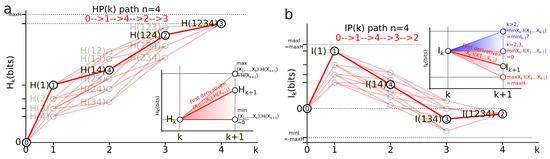

In the information landscapes, the paths and are piecewise linear functions with , where is the mutual information of the k-tuple of variables pertaining to the path . We define the first derivatives of the paths for both entropy and mutual-information structures as piecewise linear functions:

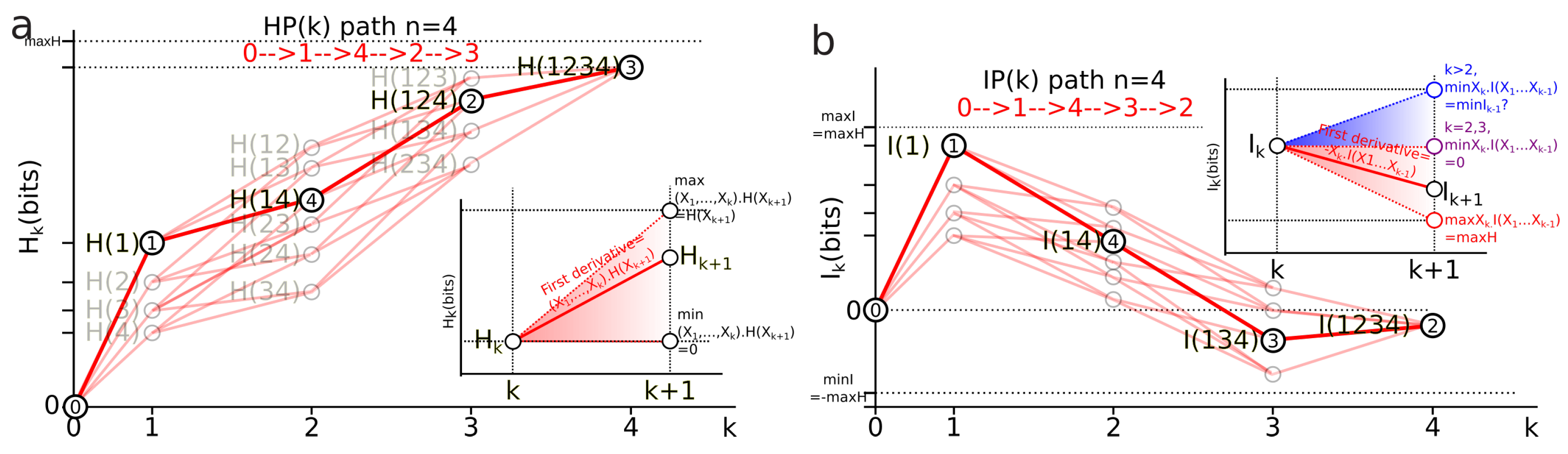

First derivative of entropy path: the first derivative of an entropy path is the conditional information :

This derivative is illustrated in the graph of Figure 4a. It implements the chain rule of entropy (Equation (12)) and in homology provides a diagram where conditional entropy is a simplicial coface map , as a simplicial special case of Hochschild coboundaries (Section 2.3).

Figure 4.

Entropy and information paths. Illustration of an entropy path (a) and of a mutual-information path (b) for random variables (see text).

First derivative of mutual-information path: the first derivative of an information path is minus the conditional information :

This derivative is illustrated in the graph of Figure 4b. It implements the chain rule of mutual information (Equation (13)) and in homology provides a diagram where minus the conditional mutual information is a simplicial coface map , introduced in Section 2.3.
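The two derivative statements can be verified numerically along one path. The sketch below assumes that the slope between dimensions k and k+1 is compared with the conditional quantity obtained by conditioning on the earlier variables (entropy path) or on the newly added variable (mutual-information path); the random joint law and the helper `conditional` are hypothetical. Conditional quantities are computed from their definition, as averages over conditional laws.

```python
import random
from itertools import combinations, product
from math import log2

def entropy(p, idx):
    """Shannon entropy (bits) of the marginal of p on the positions in idx."""
    marg = {}
    for x, px in p.items():
        key = tuple(x[i] for i in idx)
        marg[key] = marg.get(key, 0.0) + px
    return -sum(q * log2(q) for q in marg.values() if q > 0)

def info(p, S):
    """k-mutual information as an alternating sum of joint entropies."""
    return sum((-1) ** (r + 1) * entropy(p, T)
               for r in range(1, len(S) + 1) for T in combinations(S, r))

def conditional(f, p, given):
    """Average of f over the conditional laws of p given the variables in given."""
    weights = {}
    for x, px in p.items():
        g = tuple(x[i] for i in given)
        weights[g] = weights.get(g, 0.0) + px
    return sum(w * f({x: px / w for x, px in p.items()
                      if tuple(x[i] for i in given) == g})
               for g, w in weights.items())

# Hypothetical random joint law of three binary variables.
rng = random.Random(1)
n = 3
w = {x: rng.random() for x in product((0, 1), repeat=n)}
p = {x: v / sum(w.values()) for x, v in w.items()}

order = (0, 1, 2)  # one path, i.e. one ordering of the variables
H = [entropy(p, order[:k]) for k in range(n + 1)]
I = [None] + [info(p, order[:k]) for k in range(1, n + 1)]

slopes_H, slopes_I = [], []
for k in range(1, n):
    head, new = order[:k], order[k]
    # Entropy path: slope is the entropy of the new variable given the earlier ones.
    slopes_H.append(conditional(lambda q: entropy(q, (new,)), p, head))
    # Information path: slope is minus the information of the earlier ones given the new one.
    slopes_I.append(-conditional(lambda q: info(q, head), p, (new,)))

for k in range(1, n):
    print(k, H[k + 1] - H[k], slopes_H[k - 1], I[k + 1] - I[k], slopes_I[k - 1])
```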

Bounds of the derivatives and information inequalities

The slope of entropy paths is bounded by the usual conditional entropy bounds ([92] pp. 27–28). Its minimum is 0 and is achieved in the case where is a deterministic function of () (lower dashed red line in Figure 4a). Its global upper bound is and its sharp bound given by is achieved in the case where is independent of (we have (higher dashed red line in Figure 4a). Hence, any entropy path lies in the (convex) entropy cone defined by the three points labeled , and : the three vertices of the cone depicted as a red surface in Figure 4a and called the Shannonian cone following Yeung’s seminal work [132]. The behavior of a mutual-information path and the bounds of its slope are richer and more complex than the preceding conditional entropy:

- For , the conditional information is the conditional entropy and has the same usual bounds .

- For the conditional mutual information is always positive or null and hence ([92], p. 26, the opposite of Theorem 2.40, p. 30), whereas the higher limit is given by ([34] th. 2.17), with equality iff and are conditionally independent given and implying that the slope from to increases in the landscape.

- For , can be negative as a consequence of the preceding inequalities. In terms of information landscape this negativity means that the slope is positive, hence that the information path has crossed a critical point, a minimum. As expressed by a theorem due to Matsuda [34], iff . The minima correspond to zeros of conditional information (conditional independence) and hence detect cocycles in the data. The results on information inequalities define as “Shannonian” [133,134,135] the set of inequalities that are obtained from conditional information positivity () by linear combination, which forms a convex “positive” cone after closure. “Non-Shannonian” inequalities could also be exhibited [133,134], hence defining a new convex cone that includes and is strictly larger than the Shannonian set. Following Yeung’s nomenclature and to underline the relation with his work, we call the positive conditional mutual-information cone (the surface colored in red in Figure 4b) the “Shannonian” cone and the negative conditional mutual-information cone (the surface colored in blue in Figure 4b) the “non-Shannonian” cone.

3.4.3. Information Paths Are Random Processes: Topological Second Law of Thermodynamics and Entropy Rate

Here we present the dynamical aspects of information structures. Information paths directly provide the standard definition of a stochastic process and it imposes how the time arrow appears in the homological framework, how time series can be analyzed, how entropy rates can be defined and so forth.

Definition 5.

Random (stochastic) process ([136]): A random process is a collection of random variables on the same probability space and the index set T is a totally ordered set.

A stochastic process is a collection of random variables indexed by time—the probabilistic version of a time series. We have the following lemma:

Lemma 1.

(Stochastic process and information paths): Let be a simplicial information structure, then the set of entropy paths and of mutual-information paths are in one-to-one correspondence with the set of stochastic processes .

Proof.

Considering each symbol of a time series as a random variable, the definition of a stochastic process corresponds to the unique information paths and , whose total order is the time order of the series. More formally, we can prove that the number of different total orders on a finite set with k elements is $k!$, such that we can establish a one-to-one correspondence of total orders on T with permutations on T and, by Theorem 2, with entropy and information paths. Let T be a finite set with k elements and ≤ a total order relation on T. Consider that $k = 1$, then the set T contains only 1 element and any relation is trivially a total order on T. Now, consider that and that . Suppose that . By the definition of a total order (Definition 9, p. 146 Bourbaki [137]), since ≤ is a total order on T then for y in T and where we have that or . If then we define x to be the minimum element in T. If then there exists a such that and such a process can be repeated recursively until we obtain this minimal element. Without loss of generality, consider that is this minimal element. We then take the set and repeat the preceding reasoning to find a minimal element of and by induction we obtain and hence that there are $k!$ total orders on T. □

In other words, these paths are the automorphisms of . We immediately obtain a topological version of the second law of thermodynamics, which follows from an elementary convexity property and improves the result of Cover [36]:

Theorem 3.

(Stochastic process and information paths): Let be a simplicial information structure and let be a stochastic process defined by a collection of random variables on the same probability space with cardinality , where the index set T is a totally ordered set, then the entropy , where is the joint-random variable of the variables in and can only increase or stay constant with t.

Proof.

Given the correspondence we just established in Lemma 1, the statement is equivalent to or which by the chain rule of information (Equation (12)) with gives . It is hence sufficient to prove the non-negativity of conditional entropy, which can be found in Yeung ([92], p. 27). Consider the definition of conditional entropy given in Equation (8), that we denote for simplicity:

with . It is hence sufficient to show that , which follows from the fact that for , we have with , which follows from the concavity of the logarithm. The generalization with respect to the stationary Markov condition on used by Cover comes from the remark that in any case the indexing set of the variable is a total order. □
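The statement of Theorem 3 can be checked by brute force on a small example: whatever total order is chosen on the variables, and without any Markov or stationarity assumption, the joint entropy along the corresponding path never decreases. The sketch below uses a hypothetical random joint law of four binary variables and tests all 4! orderings.

```python
import random
from itertools import permutations, product
from math import log2

def entropy(p, idx):
    """Shannon entropy (bits) of the marginal of p on the positions in idx."""
    marg = {}
    for x, px in p.items():
        key = tuple(x[i] for i in idx)
        marg[key] = marg.get(key, 0.0) + px
    return -sum(q * log2(q) for q in marg.values() if q > 0)

def entropy_path(p, order):
    """Joint entropies H(X_1), H(X_1, X_2), ... along the given ordering,
    starting from the zero-dimensional element."""
    return [entropy(p, order[:k]) for k in range(len(order) + 1)]

# Hypothetical random joint law of four binary variables (no structure imposed).
rng = random.Random(2)
n = 4
w = {x: rng.random() for x in product((0, 1), repeat=n)}
p = {x: v / sum(w.values()) for x, v in w.items()}

paths = {order: entropy_path(p, order) for order in permutations(range(n))}
nondecreasing = all(b - a >= -1e-9
                    for path in paths.values()
                    for a, b in zip(path, path[1:]))
end_values = [path[-1] for path in paths.values()]

print(len(paths), nondecreasing, max(end_values) - min(end_values))
```

All paths start at the zero-entropy element and end at the same joint entropy; only the intermediate values depend on the ordering.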

Remark 1.

The equality corresponds to a statistical independence condition and to an equilibrium condition. Note that the homological formalism imposes an “initial” minimally low entropy state , which is a usual assumption in physics and which corresponds to the constant and zero degree homology, which has to have at least one component to talk about the cohomology. The meaning of this theorem in common terms was summarized by Gabor and Brillouin: “you cannot have something for nothing, not even an observation” [138]. This increase in entropy is illustrated in Figure 5a. The usual stochastic approach to time series assumes a Markov chain structure, imposing peculiar statistical dependences that restrict memory effects (cf. associated paper [2], proposition 8). The consideration of stochastic processes without restriction allows any kind of dependences and arbitrarily long, historical and “non-trivial” memory. From the biological point of view, it formalizes the phenomenon of arbitrarily long-lasting memory. From the physical point of view, without proof, such a framework appears as a classical analog of the consistent or decoherent histories developed notably by Griffiths [139], Omnes [140] and Gell-Mann and Hartle [141]. The information structures impose a stronger constraint of a totally ordered set (or more generally a weak ordering) than the preorder imposed by Lieb and Yngvason [142] to derive the second law.

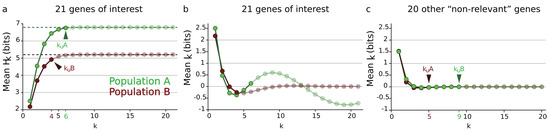

Figure 5.

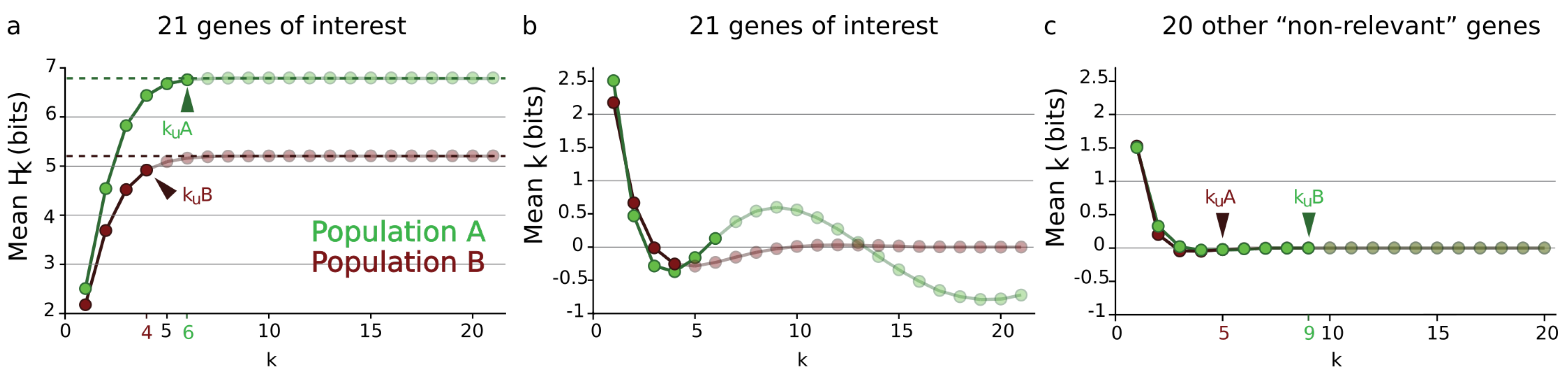

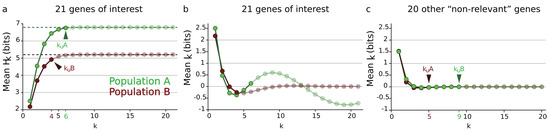

Example of mean entropy and information paths of gene expression. (a) Mean entropy path for the 21 genes of interest for population A (green line) and population B neurons (red line). (b) Mean information path for the same pool of genes. (c) Mean information path for the remaining 20 genes (“non-relevant”). The undersampling dimension introduced in the associated paper [2] is depicted with arrows.

It is also interesting to note that even in this classical probability framework, the entropy cone (the topological cone depicted in Figure 4a) imposed by information inequalities, when considered with this time ordering, is a time-like cone (much like the special relativity cone) but with the arguably remarkable fact that we did not introduce any metric.

The definition of a stochastic process allows us to define the finite and asymptotic information rates:

Definition 6.

Information rate: the finite information rate r of an information path is .

The asymptotic information rate r of an information path is . It requires the generalization of the present formalism to the infinite-dimensional setting or to infinite information structures, which is not trivial and will be investigated in further work. We also leave the expression of the first law of thermodynamics in information cohomology as an open problem. Question: recently Baez and Fong published a Noether theorem for Markov processes [39]; can we derive a Noether theorem for random discrete processes in general, that is, for all the symmetric groups, using the present construction? Such a theorem would provide the topological expression of the first law of thermodynamics. This question was asked by Neuenschwander [40] and, related to this aim, Mansfield gave a Noether theorem for finite elements [38].

3.4.4. Local Minima and Critical Dimension

The derivative of information paths allows us to establish the lemma on which information path analysis is based. A critical point is said to be non-trivial if the sign of the derivative of the path (i.e., the conditional information) changes at this point.

Lemma 2.

Local minima of information paths: if , then all paths from 0 to passing by have at least one local minimum. In order for an information path to have a non-trivial critical point, it is necessary that , the smallest possible dimension of a critical point being .

Proof.

It is a direct consequence of the definitions of paths and of conditional 2-mutual information positivity (, cf. Theorem 3.4.2.2 [92]). □

Note that, by definition, a local minimum can be a global minimum. If it exists, we will call the dimension k of the first local minimum of an information path the first informational critical dimension of the information path and denote it . This allows us to define maximal information paths:

Definition 7.

Positive information path: A positive information path is an information path from 0 to a given corresponding to a given k-tuple of variables such that .

Definition 8.

Maximal positive information path: A maximal positive information path is a positive information path of maximal length. More formally, a maximal positive information path is a positive information path that is not a proper subset of any other positive information path.

The definitions make positive information paths and maximal positive information paths coincide with chains (faces) and maximal chains (facets), respectively. The maximal positive information path stops at the first local minimum of an information path, if it exists. The first informational critical dimension of a time series , whenever it exists, gives a quantification of the duration of the memory of the system.
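To make the notion of a first critical dimension concrete, here is a minimal sketch under stated assumptions: the k-mutual information is computed with the standard alternating sum of joint entropies over non-empty subsets, and the first critical dimension is taken to be the smallest k after which the path increases (that is, where the conditional information becomes negative). The function names and the toy distribution (two independent bits, their XOR and a fourth independent bit) are hypothetical and not taken from the paper.

```python
from itertools import combinations, product
from math import log2


def joint_entropy(pmf, subset):
    """Shannon entropy (bits) of the marginal on the indices in `subset`."""
    marginal = {}
    for outcome, p in pmf.items():
        key = tuple(outcome[i] for i in subset)
        marginal[key] = marginal.get(key, 0.0) + p
    return -sum(p * log2(p) for p in marginal.values() if p > 0)


def mutual_information(pmf, variables):
    """k-mutual information as the alternating sum of joint entropies
    over all non-empty subsets of `variables`."""
    total = 0.0
    for size in range(1, len(variables) + 1):
        sign = (-1) ** (size - 1)
        for subset in combinations(variables, size):
            total += sign * joint_entropy(pmf, subset)
    return total


def information_path(pmf, order):
    """Values of the k-mutual information along the ordering `order`."""
    return [mutual_information(pmf, order[:k]) for k in range(1, len(order) + 1)]


def first_critical_dimension(path, tol=1e-12):
    """Smallest dimension k after which the path increases, or None."""
    for k in range(1, len(path)):
        if path[k] > path[k - 1] + tol:  # path[k - 1] is the value at dimension k
            return k
    return None


# Assumed toy system: X3 = X1 XOR X2, with X4 an independent fair bit.
pmf = {(a, b, a ^ b, d): 1 / 8 for a, b, d in product((0, 1), repeat=3)}
path = information_path(pmf, (0, 1, 2, 3))
assert first_critical_dimension(path) == 3
```

In this toy case the path decreases up to dimension three, where the XOR triple gives a negative value, and rises again when the independent variable is added, so the maximal positive information path would stop at the triple.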

3.4.5. Sum over Paths and Mean Information Path

As previously, for , can be identified with the self-internal energy and for , corresponds to the k-free-energy of a single path . The chain rule of mutual information (Equation (15)) and the derivative of an path (Equation (38)) imply that the k-free-energy can be obtained from a single path:

Hence, the global thermodynamical relation (25) can be understood as the sum over all paths, the sum over informational histories: the classical, discrete and informational version of the path integrals in statistical physics [143]. Indeed, considering an inverse relation between time and dimension in the probability expression (32) for iid processes gives the usual expression of a unitary evolution operator . Free-information-energy integrates over the simplicial structure of the whole lattice of partitions over degrees , which further justifies its free-energy name.
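For reference, the single-path statement can be written with the standard chain-rule identity for the total correlation. The notation below ($G_k$ for the k-free-energy, conditioning written with a vertical bar) is an assumption and may differ from the paper's own displayed equation, which is not reproduced in this text.

```latex
\begin{align}
G_k &= \sum_{i=1}^{k} H(X_i) - H(X_1,\dots,X_k) \\
    &= \sum_{i=1}^{k} \bigl[ H(X_i) - H(X_i \mid X_1,\dots,X_{i-1}) \bigr]
     = \sum_{i=2}^{k} I(X_i ; X_1,\dots,X_{i-1})
\end{align}
```

The second line uses the chain rule of entropy, $H(X_1,\dots,X_k) = \sum_{i=1}^{k} H(X_i \mid X_1,\dots,X_{i-1})$, so each step of a single path contributes one non-negative pairwise term between the new variable and the variables already visited.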

In order to obtain a single state function instead of a group of path functions, we can compute the mean behavior of the information structure, which is achieved by defining the mean and , denoted and :

and

For example, considering , then . This defines the mean mutual-information path and a mean entropy path denoted and in the information landscape. The case of these functions, introduced in Reference [108], is studied by Merkh and Montúfar [144], who characterize the degeneracy of their maxima and call them factorized mutual information. As previously, can be identified with the mean self-internal energy and for to the mean k-free-information-energy , giving the usual isotherm relation:

The computation of the mean paths corresponds to an idealized information structure for which all the variables would be identically distributed, would have the same entropy and would share the same mutual information at each dimension k: a homogeneous information structure, with homogeneous high-dimension k-body interactions. As is usually done in physics, notably in mean-field theory (e.g., Weiss [145] or Hartree), it aims to provide a single function summarizing the average behavior of the system (we will see that in practice it misses the important biological structures, pointing out the constitutive heterogeneity of biological systems; see Section 4.1.3). Using the same dataset and results presented in References [2,3,108], the paths estimated on a genetic expression data set are shown for two populations of neurons (A and B) in Figure 5. We quantified the gene expression levels for 41 genes in two populations of cells (A or B) as presented in References [2,3,108]. We estimated and landscapes for these two populations and for two sets of genes (“genes of interest” and “non-relevant”) according to the computational and estimation methods presented in References [2,3,108]. The available computational power restricted the analysis to a maximum of $n = 21$ variables (or 21 dimensions) and required us to divide the genes between the two classes “genes of interest” and “non-relevant”. The 21 genes of interest were selected within the 41 quantified genes according to their known specific involvement in the function of population A cells.
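The mean paths can be sketched as follows, assuming that the mean at dimension k is the plain average of the entropy or mutual information over all subsets of k variables. This exhaustive enumeration is only practical for very small n; the function names and the toy distribution (one bit, its copy and an independent bit) are hypothetical and are not the estimation procedure of References [2,3,108].

```python
from itertools import combinations, product
from math import log2


def joint_entropy(pmf, subset):
    """Shannon entropy (bits) of the marginal on the indices in `subset`."""
    marginal = {}
    for outcome, p in pmf.items():
        key = tuple(outcome[i] for i in subset)
        marginal[key] = marginal.get(key, 0.0) + p
    return -sum(p * log2(p) for p in marginal.values() if p > 0)


def mutual_information(pmf, variables):
    """k-mutual information as an alternating sum of joint entropies."""
    total = 0.0
    for size in range(1, len(variables) + 1):
        sign = (-1) ** (size - 1)
        for subset in combinations(variables, size):
            total += sign * joint_entropy(pmf, subset)
    return total


def mean_paths(pmf, n):
    """Average entropy and mutual information over all k-subsets, k = 1..n."""
    mean_h, mean_i = [], []
    for k in range(1, n + 1):
        subsets = list(combinations(range(n), k))
        mean_h.append(sum(joint_entropy(pmf, s) for s in subsets) / len(subsets))
        mean_i.append(sum(mutual_information(pmf, s) for s in subsets) / len(subsets))
    return mean_h, mean_i


# Assumed toy system: X2 is a copy of X1 and X3 is an independent fair bit.
pmf = {(a, a, c): 1 / 4 for a, c in product((0, 1), repeat=2)}
mean_h, mean_i = mean_paths(pmf, 3)
```

In this toy case only one of the three pairs shares information, so the mean pairwise mutual information is one third of a bit, which illustrates how averaging can hide a heterogeneous structure.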

Figure 5 exhibits the critical phenomenon usually encountered in condensed matter physics, like the example of van der Waals interactions [146]. Like any path, can have a first minimum with a critical dimension that could be called the homogeneous critical dimension. For the 21 genes of interest (whose expression levels, given the literature, are expected to be linked in these cell types) the path exhibited a clear minimum at the critical dimension for population A neurons and population B neurons, reproducing the usual free-energy potential in the condensed phase for which n-body interactions are non-negligible. For the 20 other genes, less expected to be related in these cell types, the path exhibited a monotonic decrease without a non-trivial minimum, which corresponds to the usual free-energy potential in the uncondensed-disordered phase for which the n-body interactions are negligible. Indeed, as shown in the work of Xie and colleagues [147], the tensor network renormalization approach of n-body interacting quantum systems gives rise to an expression of the free-energy as a function of the dimension of the interactions, in the same way as achieved here.

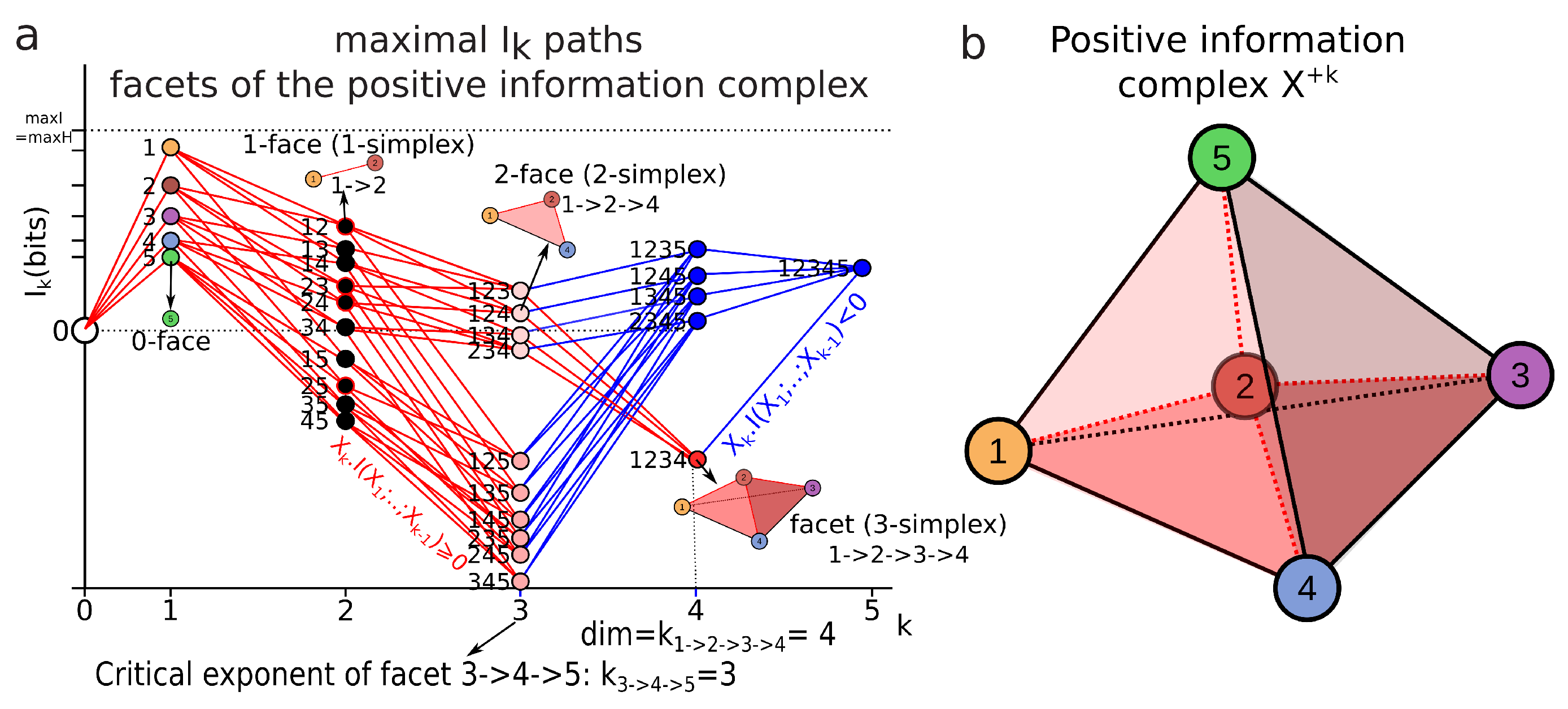

3.4.6. Minimum Free Energy Complex

The analysis of information paths that we now propose aims to determine all the first critical points of information paths, in other words, to determine all the information paths for which conditional information stays positive and all first local minima of the information landscape that can also be interpreted as a conditional independence criterion. Such an exhaustive characterization would give a good description of the landscape and of the complexity of the measured system. The qualitative reason for considering only the first extrema for the data analysis is that beyond that point, mutual information diverges (as explained in Section 3.4.4) and the maximal positive information paths correspond to stable functional modules in the application to data (gene expression).

A more mathematical justification is that they define the facets of a complex in our simplicial structure, which we will call the minimum energy complex of our information structure, underlining that this complex is the formalization of the minimum free-energy principle in a degenerate case.

We now obtain the theorem that our information path analysis aims to characterize empirically:

Theorem 4.

(Minimum free energy complex): The set of all maximal positive information paths forms a simplicial complex that we call the minimum free energy complex. Moreover, the dimension-degree of the minimum free energy complex is the maximum of all the first informational critical dimensions (), if it exists, or the dimension of the whole simplicial structure n. The minimum free energy complex is denoted . A necessary condition for this complex not to be a simplex is that its dimension is greater than or equal to four ().

Proof.

It is known that there is a one-to-one correspondence between simplicial complexes and their set of maximal chains (facets) (see Reference [148], p. 95, for an example). The last part follows from Lemma 2. □

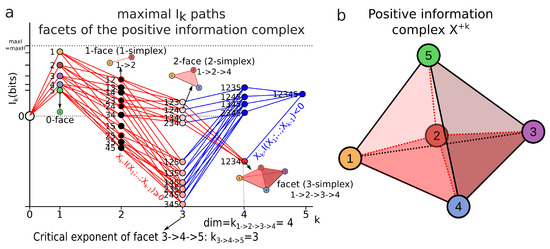

In simple words, the maximal faces (i.e., the maximal positive information paths) encode the whole structure of the minimum free energy complex. Figure 6 illustrates one of the simplest examples of a minimum free energy complex that is not a simplex, of dimension four in a five-dimensional simplicial structure of information .

Figure 6.

Example of maximal paths in an landscape for together with its corresponding minimum free energy complex. (a) Maximal paths in an landscape for . The maximal positive information paths are depicted in red, for example, the paths but also , and are maximal positive information paths (i.e., facets/maximal chains). The facet is a 3-simplex while is a 2-simplex with critical dimension . The usual dimension of the simplex is used here but we could have augmented it by one, since we added the constant element “0” to the algebra (pointed space), such that the usual simplicial dimension and the critical dimension correspond. The maximal critical dimension of the positive information paths is the dimension of the complex and hence . (b) The minimum free energy complex corresponding to the preceding maximal paths. It is a subcomplex of the 4-simplex, also called the 5-cell, with only one four-dimensional cell among the five depicted as the bottom tetrahedron with darker red volume. It has 5 vertices, 10 edges, 10 2-faces and 1 3-face (cell), hence its Euler characteristic is $\chi = 5 - 10 + 10 - 1 = 4$ and its minimum free energy characteristic is: .
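The face counts quoted in the caption can be reproduced from a list of facets, using the fact that a simplicial complex is determined by its maximal faces. In the sketch below the vertex labels 1 to 5 and the particular choice of facets (one tetrahedron plus the six triangles containing vertex 5) are assumptions chosen to match the quoted counts; they are not read from Figure 6.

```python
from itertools import combinations


def complex_from_facets(facets):
    """Generate all non-empty faces of the complex spanned by `facets`."""
    faces = set()
    for facet in facets:
        for size in range(1, len(facet) + 1):
            faces.update(frozenset(c) for c in combinations(facet, size))
    return faces


def f_vector(faces):
    """Number of faces with 1, 2, 3, ... vertices."""
    top = max(len(face) for face in faces)
    return [sum(1 for face in faces if len(face) == d) for d in range(1, top + 1)]


def euler_characteristic(faces):
    """Alternating sum of the face counts."""
    return sum((-1) ** i * count for i, count in enumerate(f_vector(faces)))


# Assumed facets: one 3-simplex and the six 2-simplices containing vertex 5.
facets = [(1, 2, 3, 4)] + [t for t in combinations(range(1, 6), 3) if 5 in t]
faces = complex_from_facets(facets)

assert f_vector(faces) == [5, 10, 10, 1]
assert euler_characteristic(faces) == 4
```

Storing only the facets is enough to recover every lower-dimensional face, which is why the maximal positive information paths suffice to describe the complex.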

We define the minimum free energy characteristic as:

where the component with dimension higher than one is a free energy. In the example of Figure 6, it gives:

We propose that this complex defines a complex system:

Definition 9.

Complex system: A complex system is a minimum free energy complex.

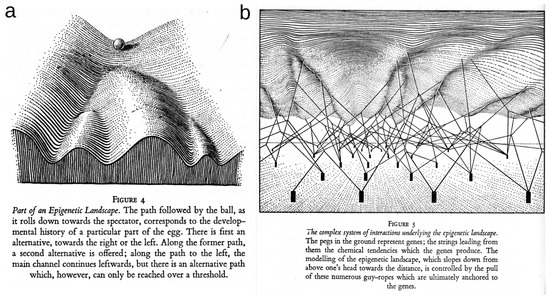

This definition has the merit of providing a formal definition of complex systems that is as simple as the definition of an abstract simplicial complex and that is fairly consensual with respect to some of the approaches in this domain, as reviewed by Newman [149]. Notably, it provides a formal basis to define some of the important concepts in complex systems: emergence being the coboundary map, imergence the boundary map, synergy being information negativity, organization scales being the ranks of random-variable lattices, a collective interaction being a local minimum of free-energy, diversity being the multiplicity of these minima quantified by the number of facets, a network being a 1-complex, a network of networks being a 1-complex in hyper-cohomology.