Differential Effect of the Physical Embodiment on the Prefrontal Cortex Activity as Quantified by Its Entropy

Abstract

1. Introduction

2. Results

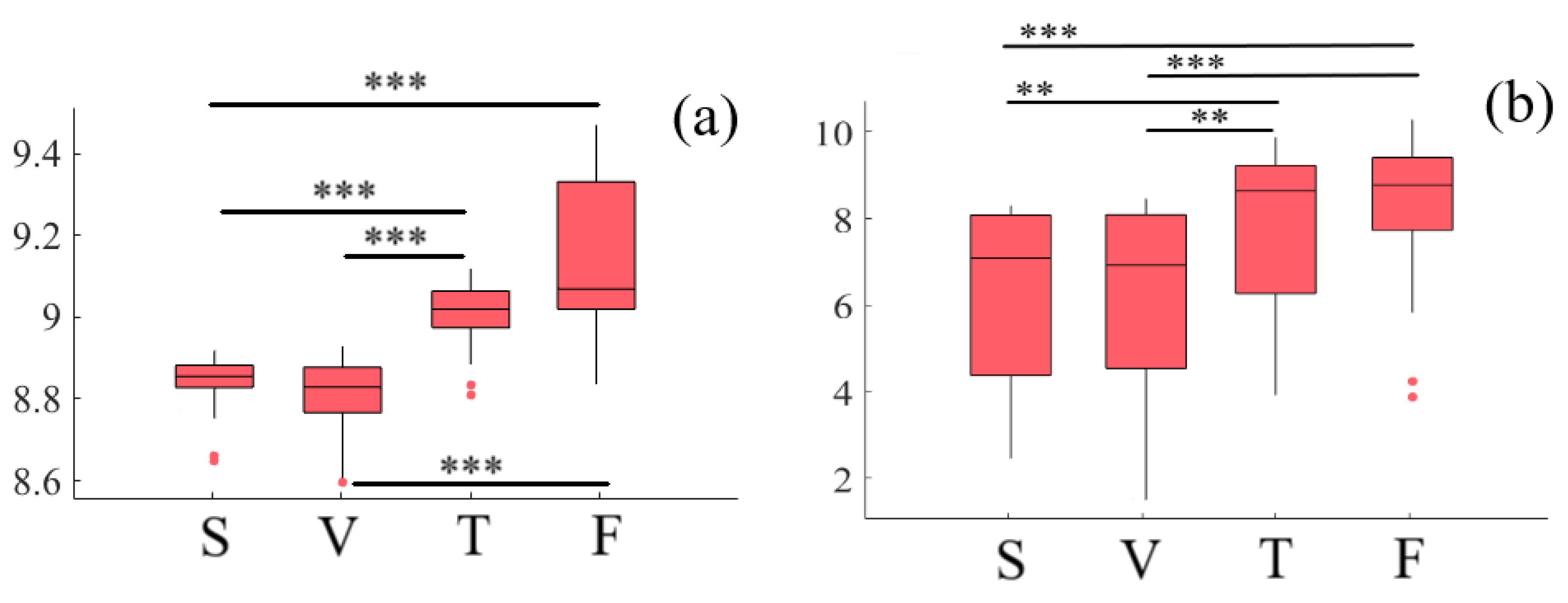

2.1. Variational Information of Frontal Brain Activity

2.1.1. Left-Hemispheric PFC

2.1.2. Right-Hemispheric PFC

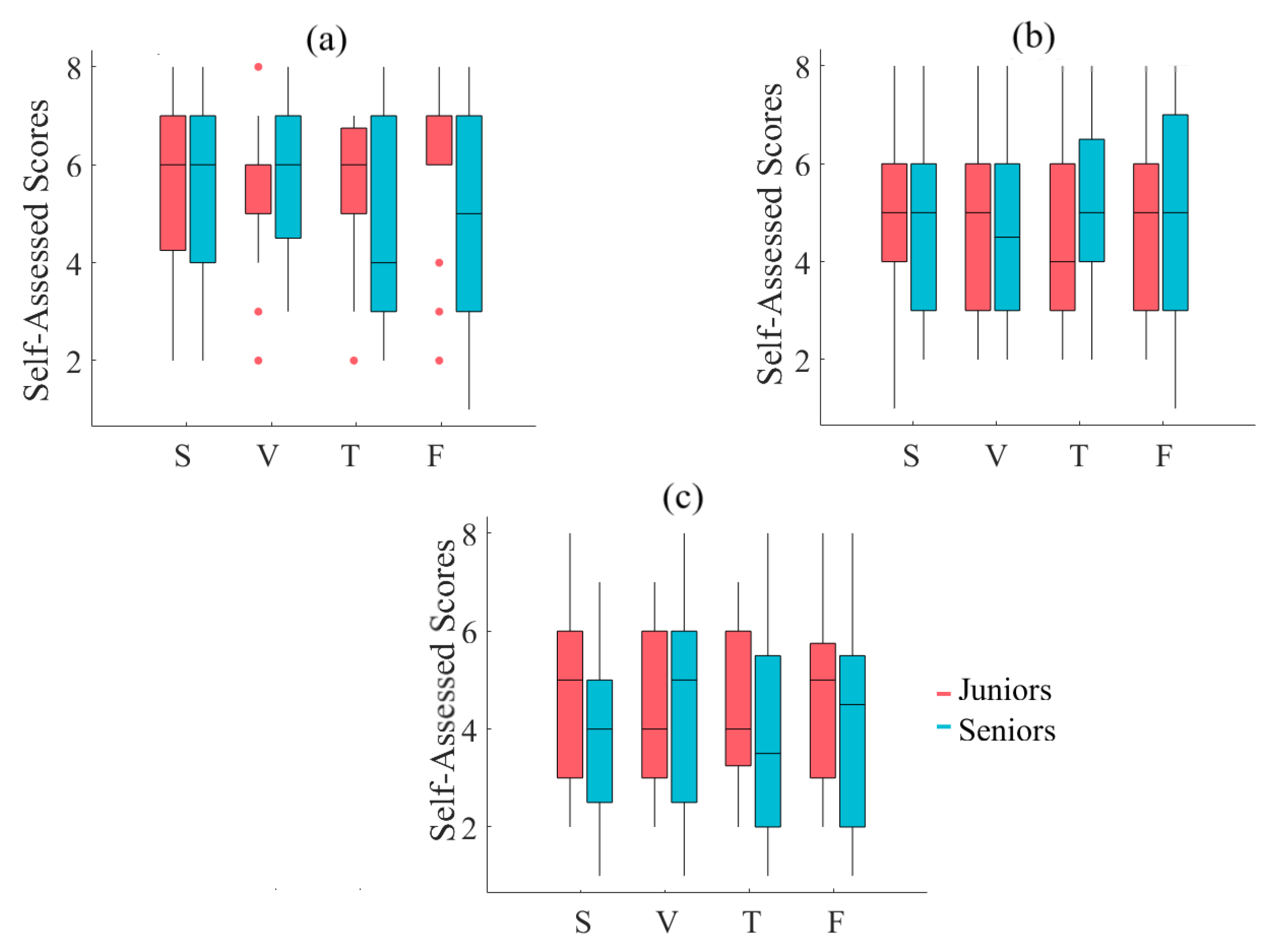

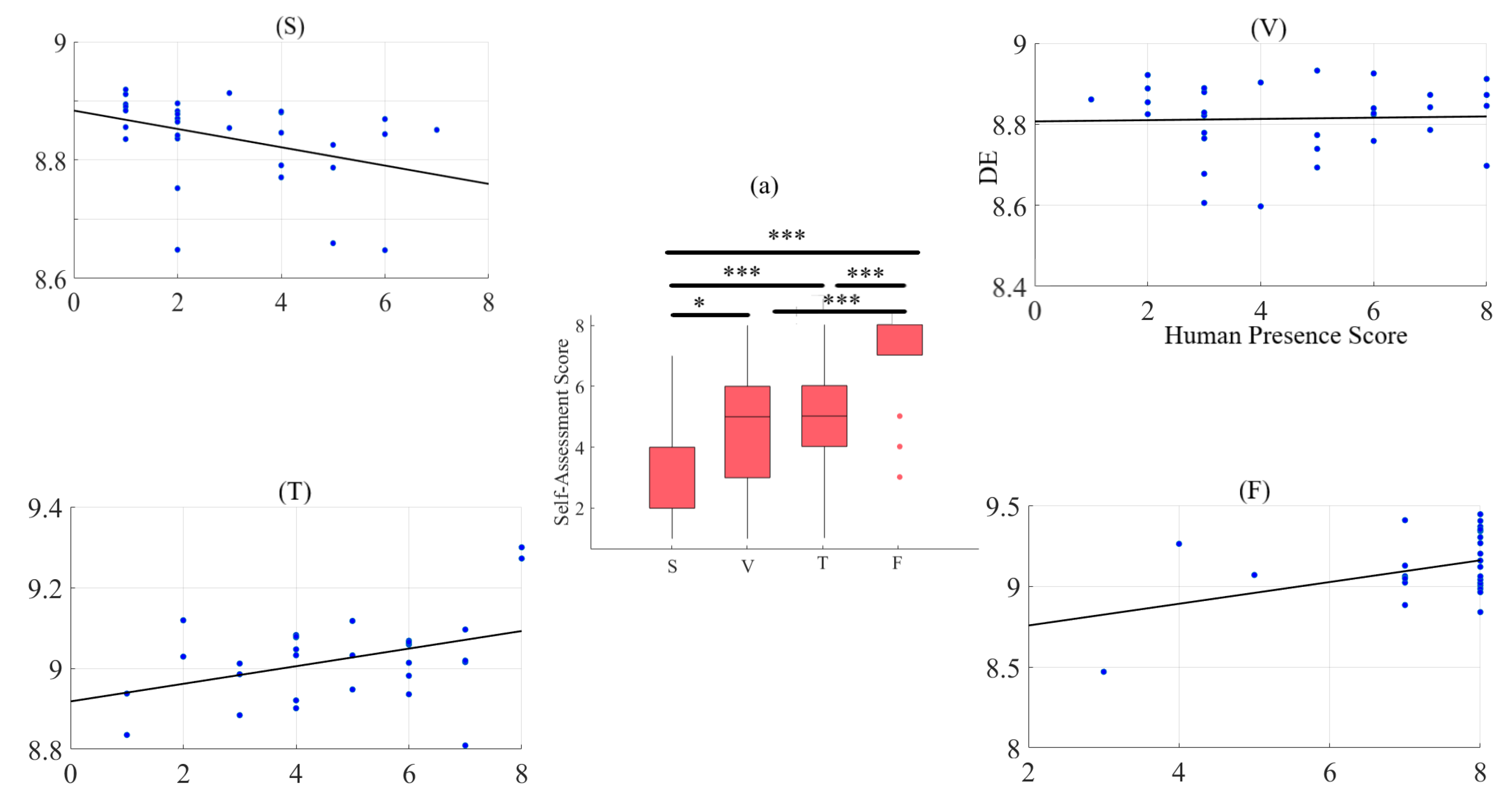

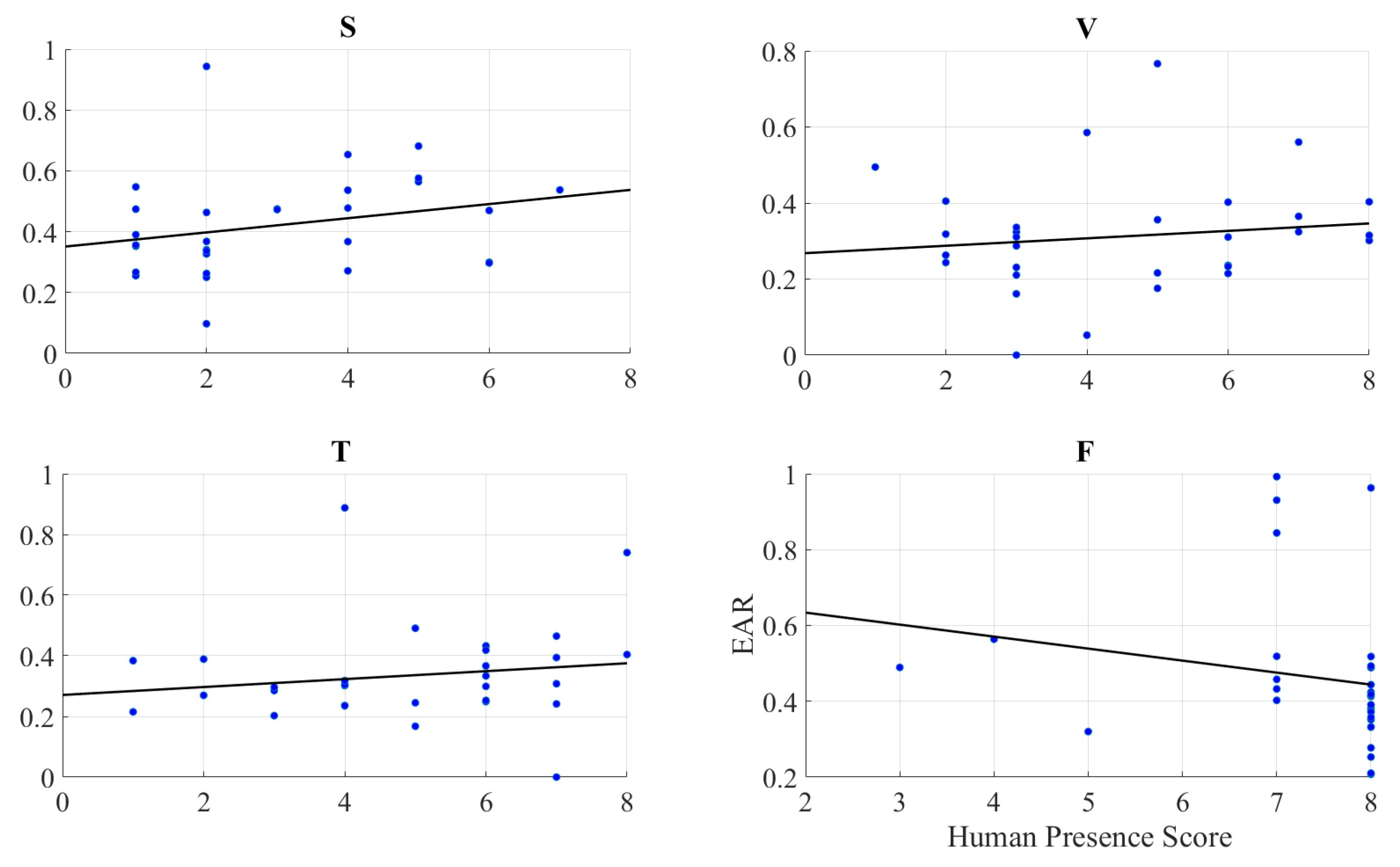

2.2. Self-Assessed Responses to Feeling of Human Presence

2.2.1. Left-Hemispheric PFC

2.2.2. Feeling of Human Presence and Left-Hemispheric Information Content

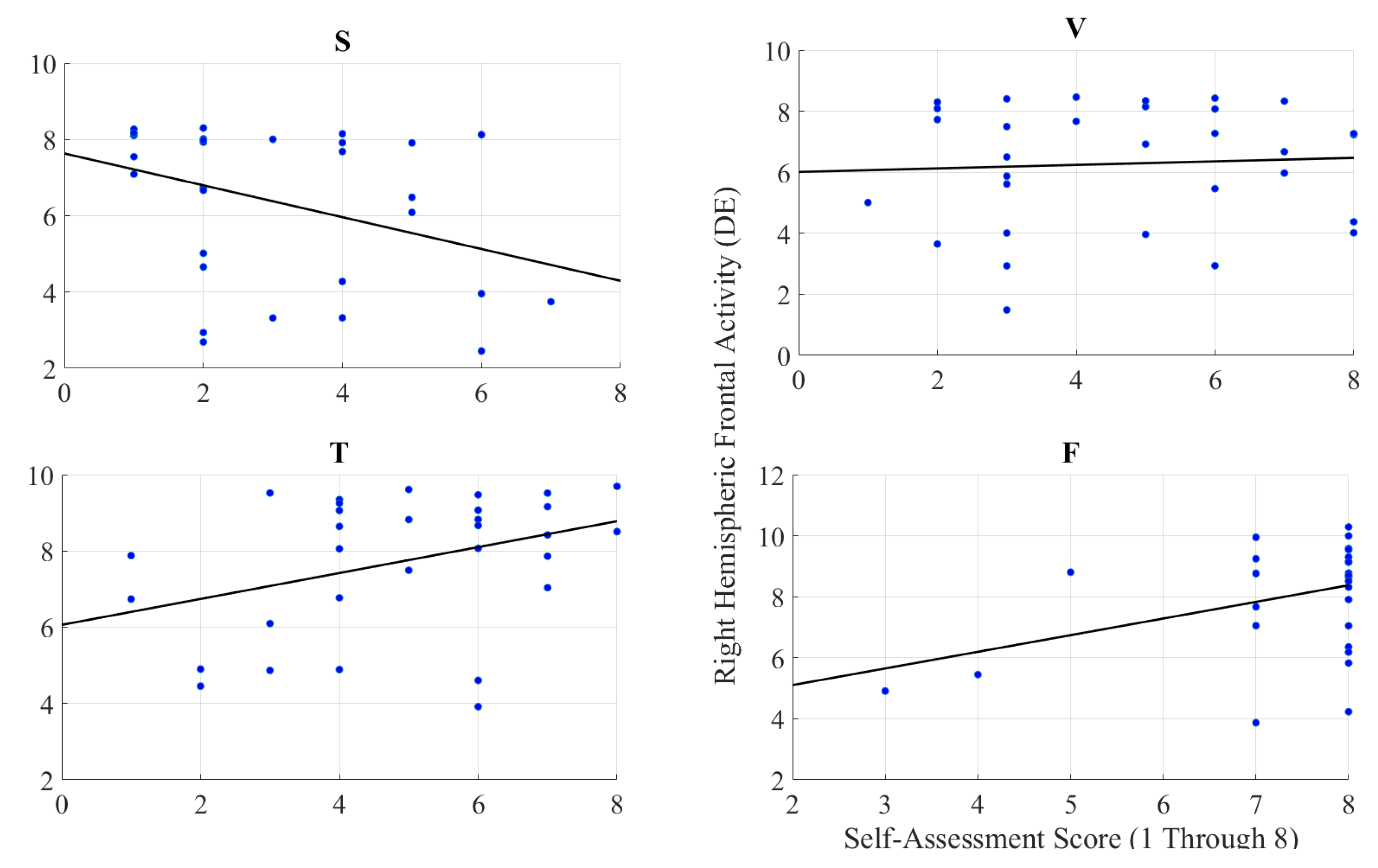

2.2.3. Feeling of Human Presence and Right-Hemispheric Information Content

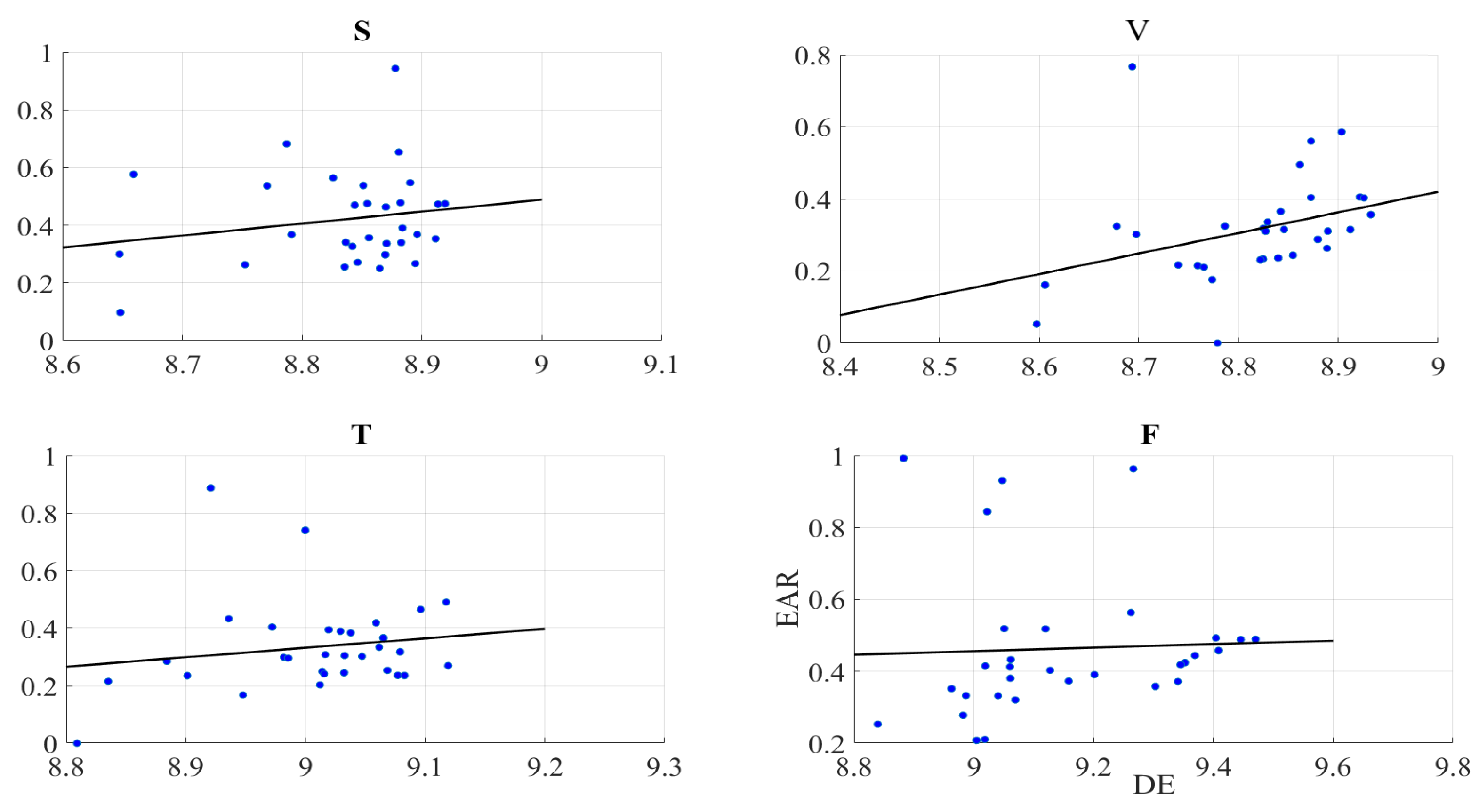

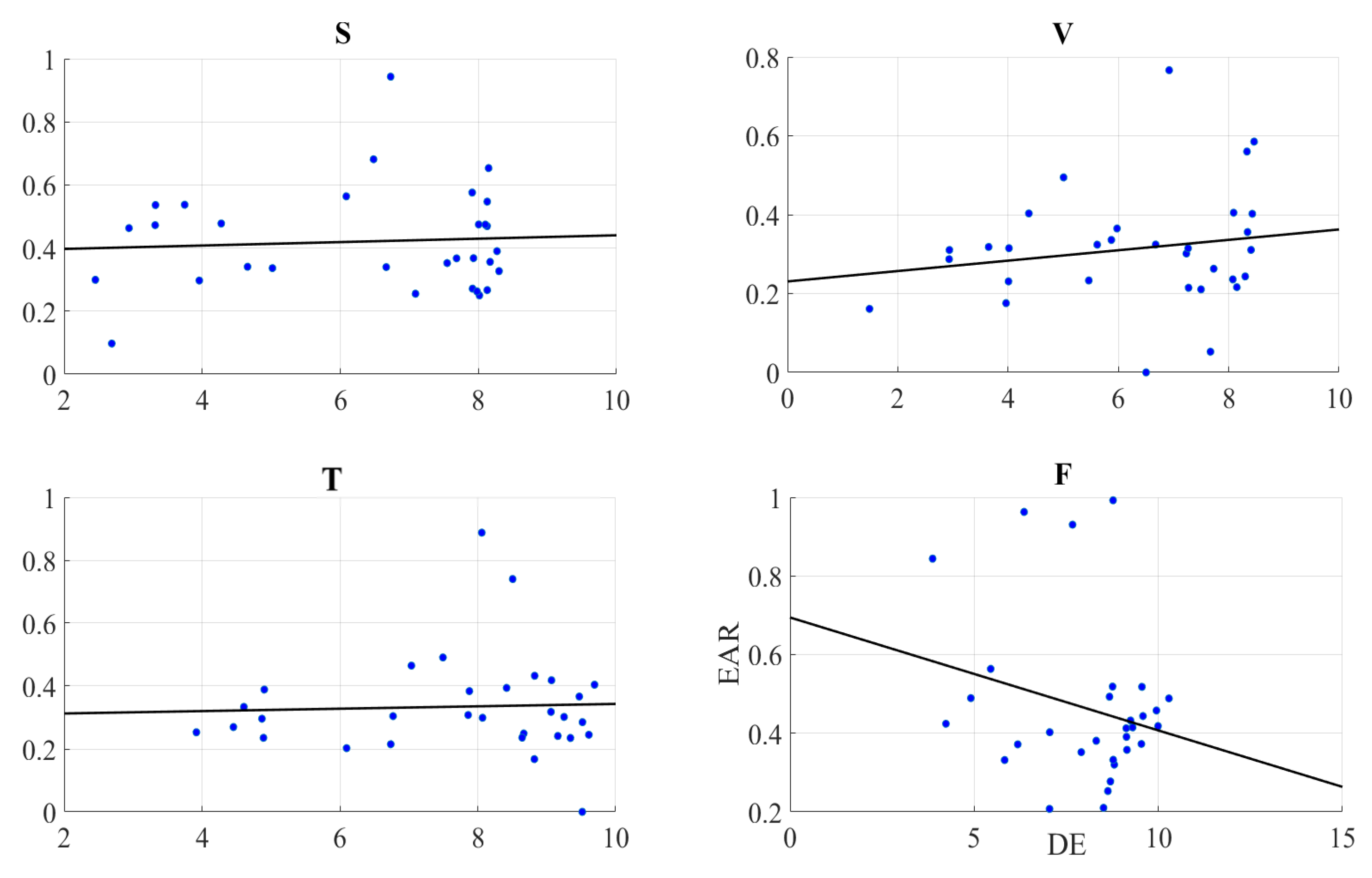

2.3. Gaze Data

Right-Hemispheric PFC

3. Discussion

3.1. PFC Activation and Physical Embodiment

3.2. PFC Activation and Feeling of Human Presence

3.3. PFC Activation and Gazing Patterns

3.4. PFC Activation and Aging

4. Concluding Remarks

5. Materials and Methods

5.1. Participants

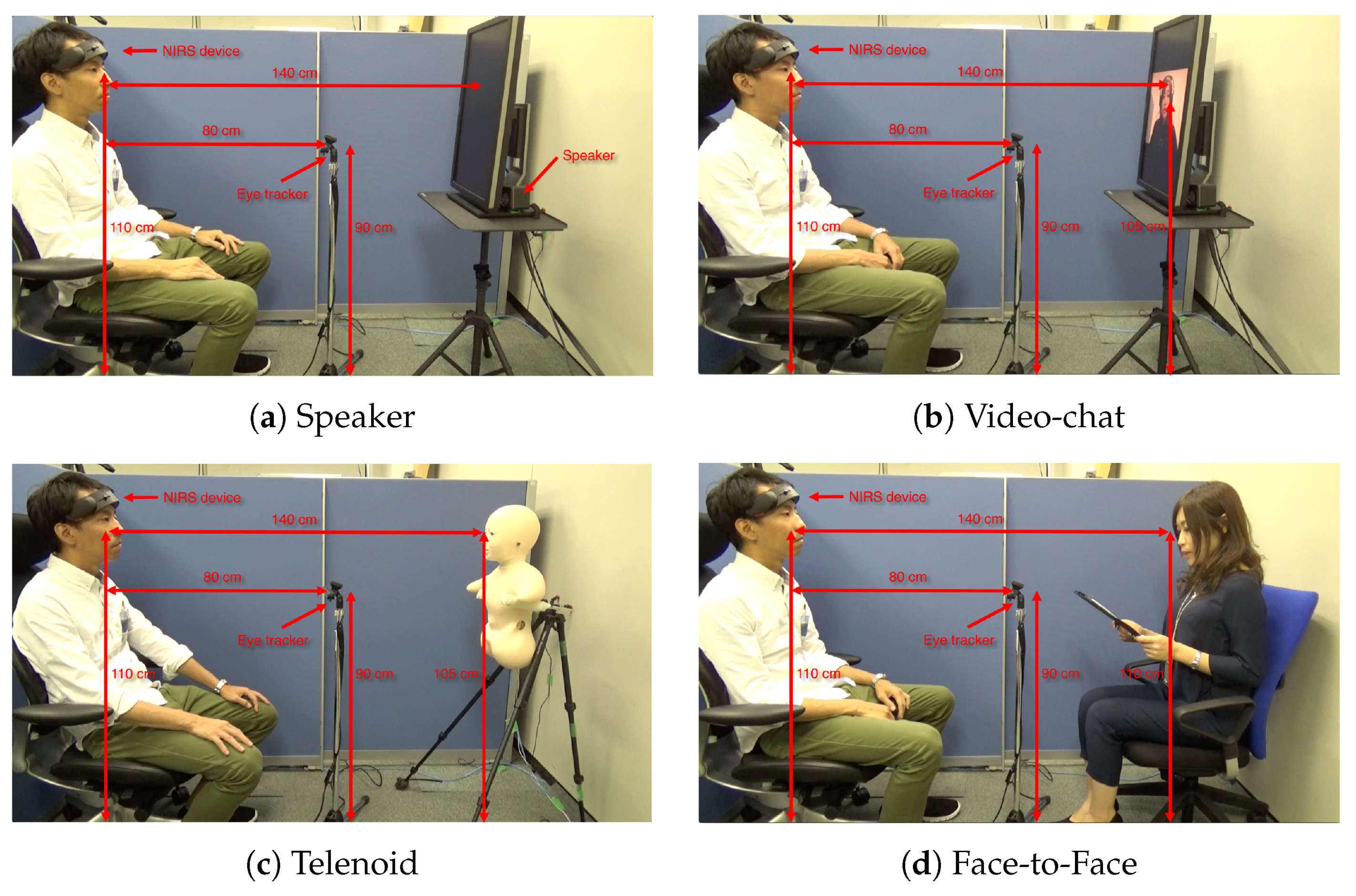

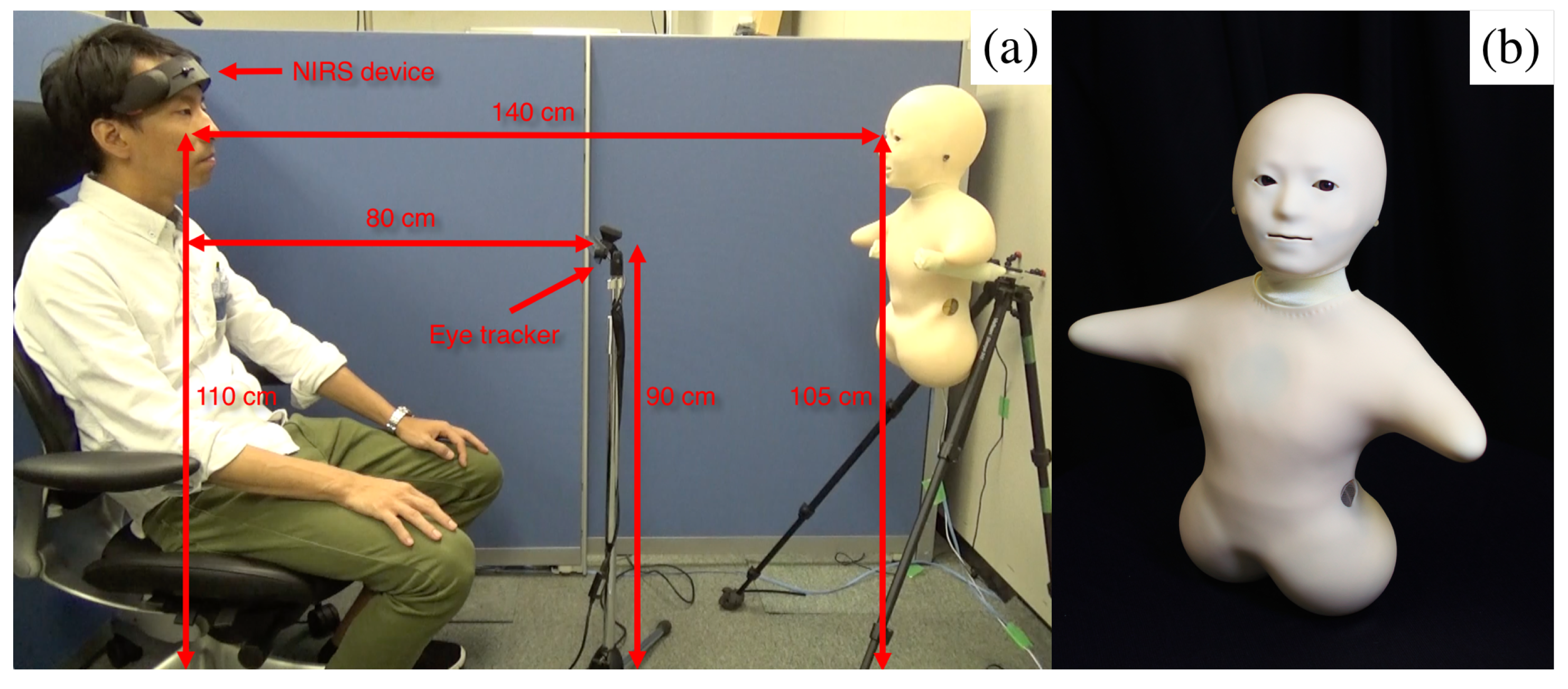

5.2. Communication Media

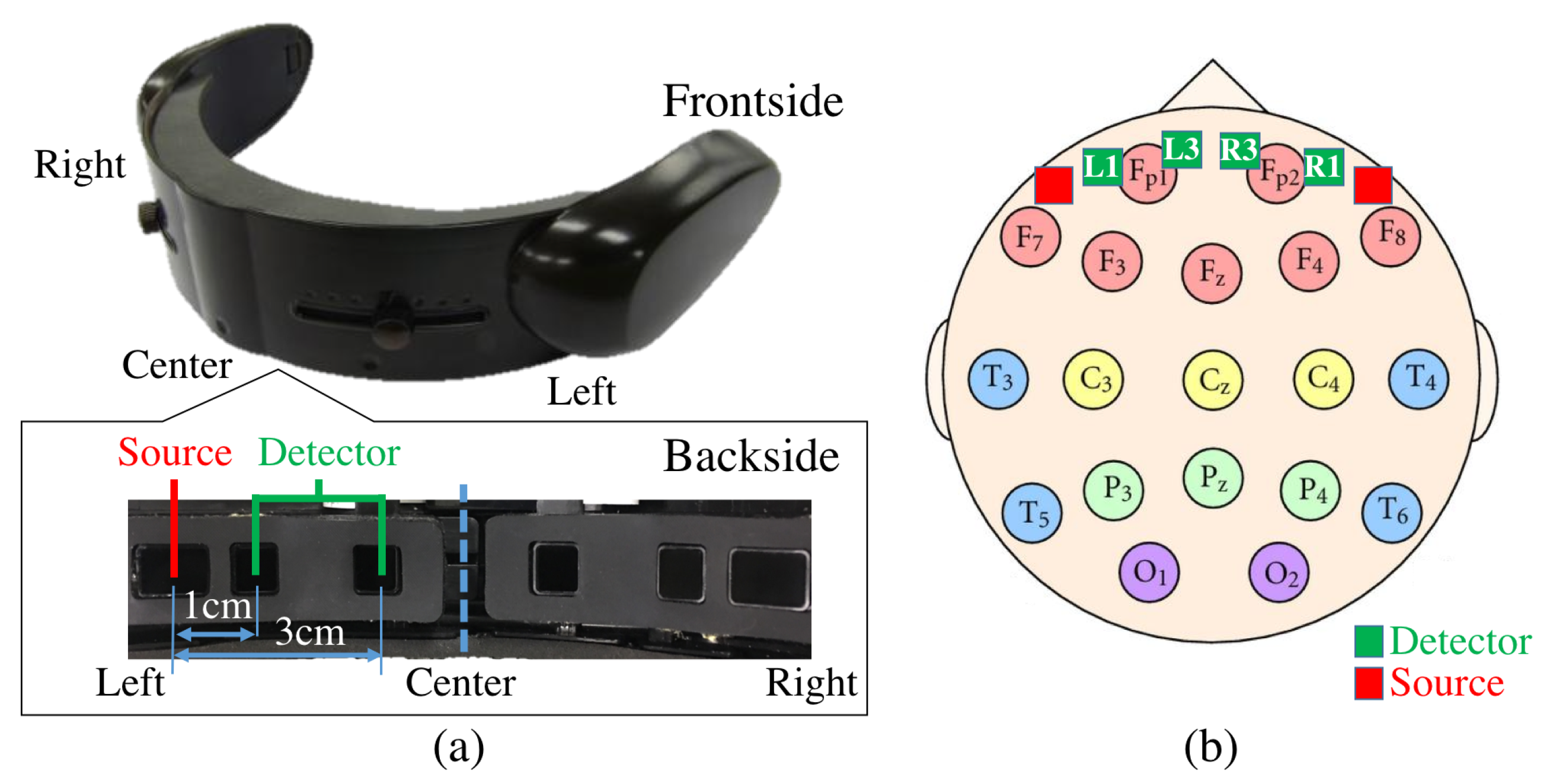

5.3. Sensor Devices

5.4. Paradigm

5.5. Data Processing

5.5.1. NIRS

5.5.2. Gaze Data

5.6. Statistical Analyses

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
| --- | --- |
| PFC | Prefrontal cortex |
| DE | Differential entropy |
| NIRS | Near-infrared spectroscopy |
| EAR | Eye aversion ratio |
| S | Speaker setting |
| V | Video-chat setting |
| T | Telenoid setting |
| F | Face-to-face (in-person) setting |
| M_a | Mean of the variable “a” |
| SD_a | Standard deviation of the variable “a” |
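The Abbreviations list defines differential entropy, but the Methods text describing how it was estimated is not part of this extract. As a hedged illustration only, the Python sketch below assumes each NIRS channel is a Gaussian signal. Under that assumption, differential entropy has the standard closed form h = ½ ln(2πeσ²) nats and depends only on the signal's variance. The function name `gaussian_differential_entropy` and the sample values are hypothetical, and the Gaussian assumption may differ from the estimator the authors actually used.

```python
import math
import statistics


def gaussian_differential_entropy(samples):
    """Differential entropy (in nats) of a 1-D signal, ASSUMING it is Gaussian.

    For a normal distribution with variance sigma^2 the closed form is
    h = 0.5 * ln(2 * pi * e * sigma^2). This is an illustrative estimator,
    not necessarily the one used in the article.
    """
    # Sample variance with the (n - 1) denominator; needs at least two samples.
    variance = statistics.variance(samples)
    if variance <= 0:
        # A constant signal has zero variance, so ln(0) is undefined.
        raise ValueError("differential entropy is undefined for a constant signal")
    return 0.5 * math.log(2.0 * math.pi * math.e * variance)


# Hypothetical example: a low-variability and a high-variability channel.
quiet = [0.10, 0.12, 0.09, 0.11, 0.10, 0.08]
active = [0.10, 0.30, -0.05, 0.25, 0.02, 0.18]
print(gaussian_differential_entropy(active) > gaussian_differential_entropy(quiet))  # True
```

Because h depends only on σ² under this model, rescaling a signal by a factor c shifts its entropy by ln|c|. Any comparison across the S, V, T, and F settings therefore assumes the channels were scaled consistently.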

Appendix A. Experimental Setup

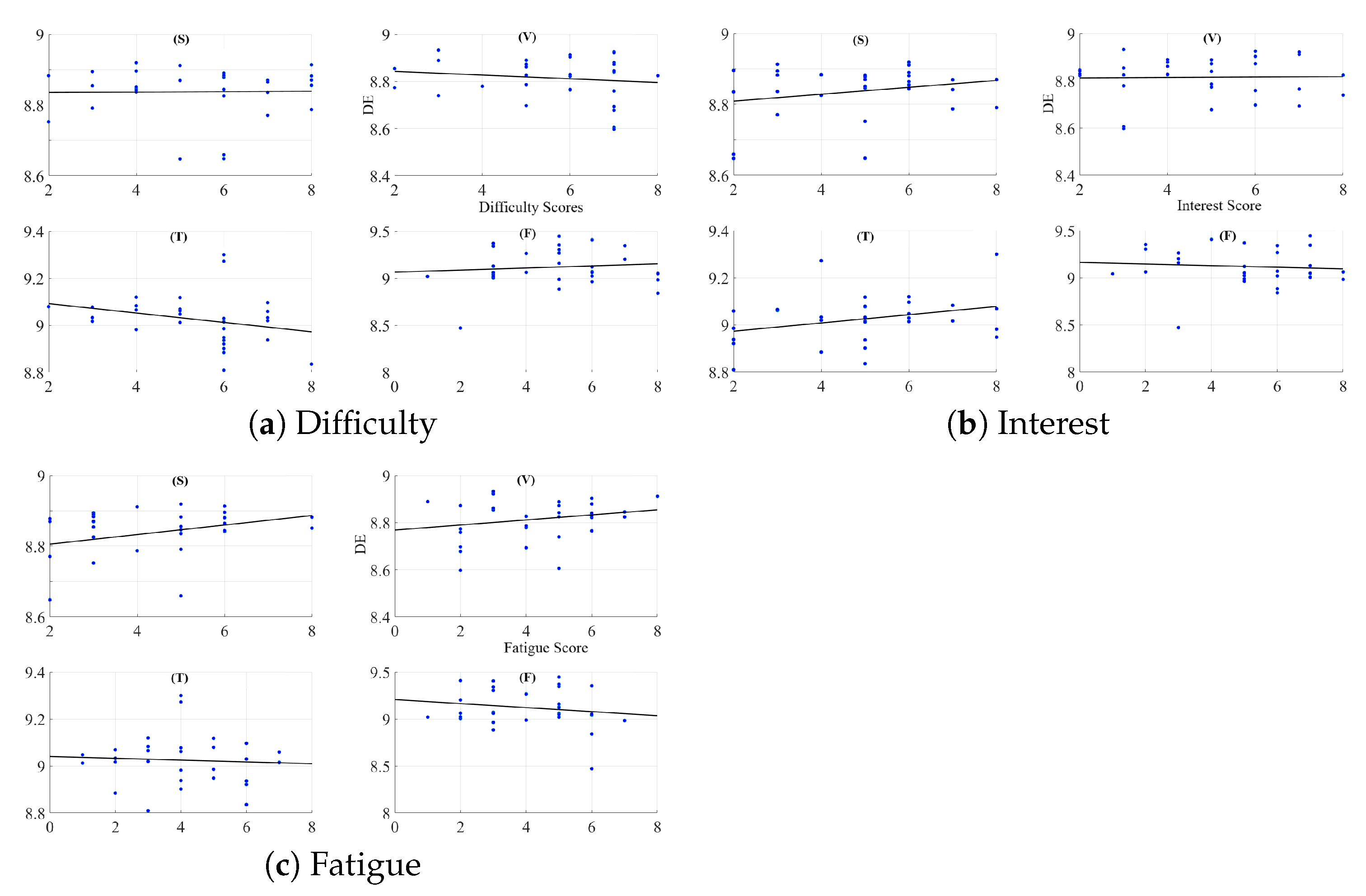

Appendix B. Difficulty of Narrated Stories

Appendix C. Interest in Content of Stories

Appendix D. Induced Fatigue Due to Listening to Stories

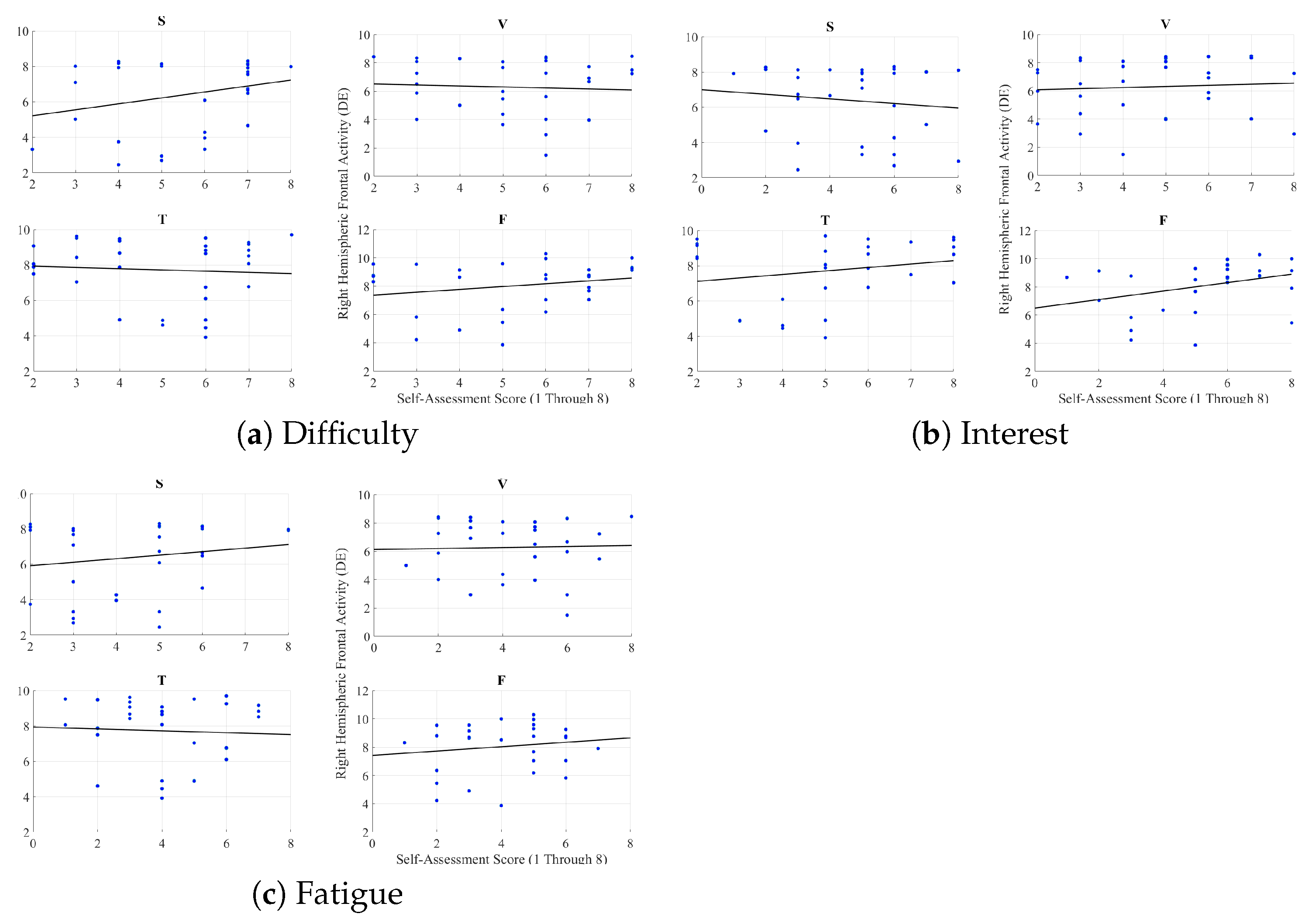

Appendix E. Left-Hemispheric Frontal Brain Activity and Self-Assessed Responses of the Participants

Appendix F. Right-Hemispheric Frontal Brain Activity and Self-Assessed Responses of the Participants

Appendix G. Pearson Correlation Between Eye Aversion Ratio (EAR) and Self-Assessed Responses of the Participants

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Keshmiri, S.; Sumioka, H.; Yamazaki, R.; Ishiguro, H. Differential Effect of the Physical Embodiment on the Prefrontal Cortex Activity as Quantified by Its Entropy. Entropy 2019, 21, 875. https://doi.org/10.3390/e21090875