2. Part I. Where?

2.1. A Simple Model, First Version

Let me start by recalling some well-established facts in thermodynamics. These are illustrated by a simple model that captures some salient aspects of the thermal history of the universe.

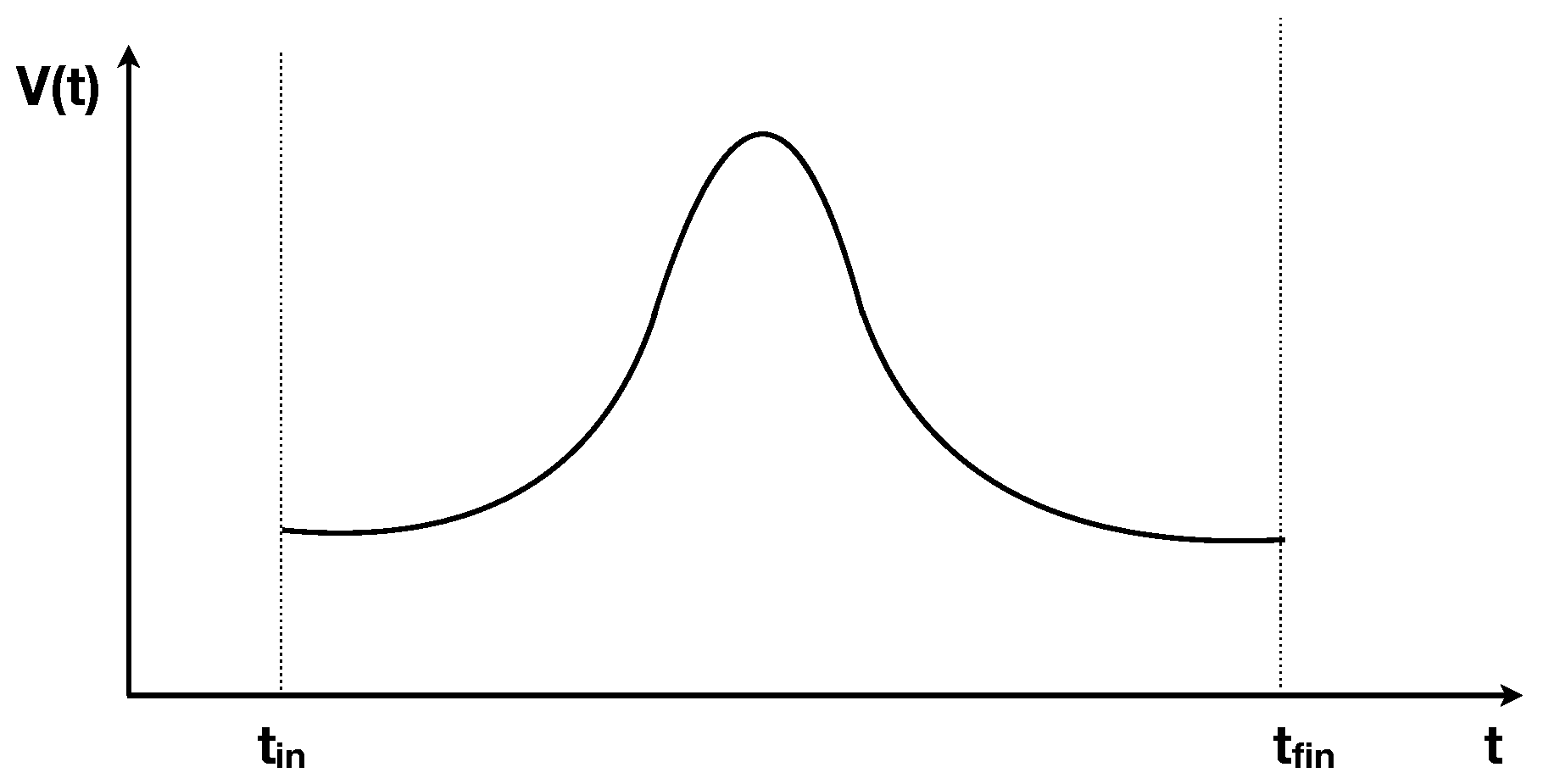

Consider an ideal gas in a thermally isolated container whose volume

V can be modified with a piston, moved by an external force. Assume that the volume is increased from the initial value

V at some negative time

, to a maximum value

at

and then symmetrically decreased back to

V at a positive time

, so that the volume evolves in time respecting

as in

Figure 1. Assume that at time

the gas was in thermal equilibrium at temperature

T. Does the entropy of the gas increase in the process?

The answer depends on the rapidity of the change of the volume. If this is much slower than the thermalization time of the gas, the gas remains constantly close to an equilibrium state. From and the equilibrium relation it follows that the pressure p satisfies , and therefore the work done on the gas during the compression is equal to the work extracted from the gas during the expansion. Hence the net exchange of energy is zero. At the gas will therefore have the same temperature as the initial one, hence the same entropy, and there is no increase of entropy in the process. The process is reversible.

But if the volume changes fast enough, the process is irreversible and entropy increases: the temperature at is higher than at . Mechanically, this is easily understood: during a fast expansion, there are less molecules that hit the piston than during a fast compression. In the limiting case where the speed of the piston is much faster than molecular speeds, the pressure during expansion is null, hence no work is extracted during the expansion, while work is done in the compression. Since the work on the gas during compression is larger than the work extracted during expansion, energy is put into the gas, and the final temperature is higher than the initial one. The process is irreversible. During the process, the gas finds itself away from equilibrium.

The moral is that a system initially at equilibrium in a space that expands faster than its thermalization time goes out of equilibrium and generates irreversibility.

Below I argue that this model reproduces a key aspect of what has happened to the universe in which we live: its rapid cosmological expansion has driven its matter content out of equilibrium and this is the dominant source of the present irreversibility in the cosmos. Before doing so, however, we need to refine the model.

2.2. A Simple Model, Second Version

The model discussed above includes an external force that drives the piston. There are no external forces in the universe: all degrees of freedom interact with one another and belong to the same coupled dynamics.

Among these degrees of freedom is the cosmological phase factor

, which is the dynamical variable that governs the volume of each co-moving region of space in a Friedman cosmology

where

is the metric of a homogenous three-dimensional space. The single degree of freedom

acts very much like the volume

of the above model, but it is not driven by external forces: it is driven by a dynamical interaction with the other degrees of freedom of the universe. To mimic this aspect of the thermal history of the universe, let us modify the model and get rid of the external force.

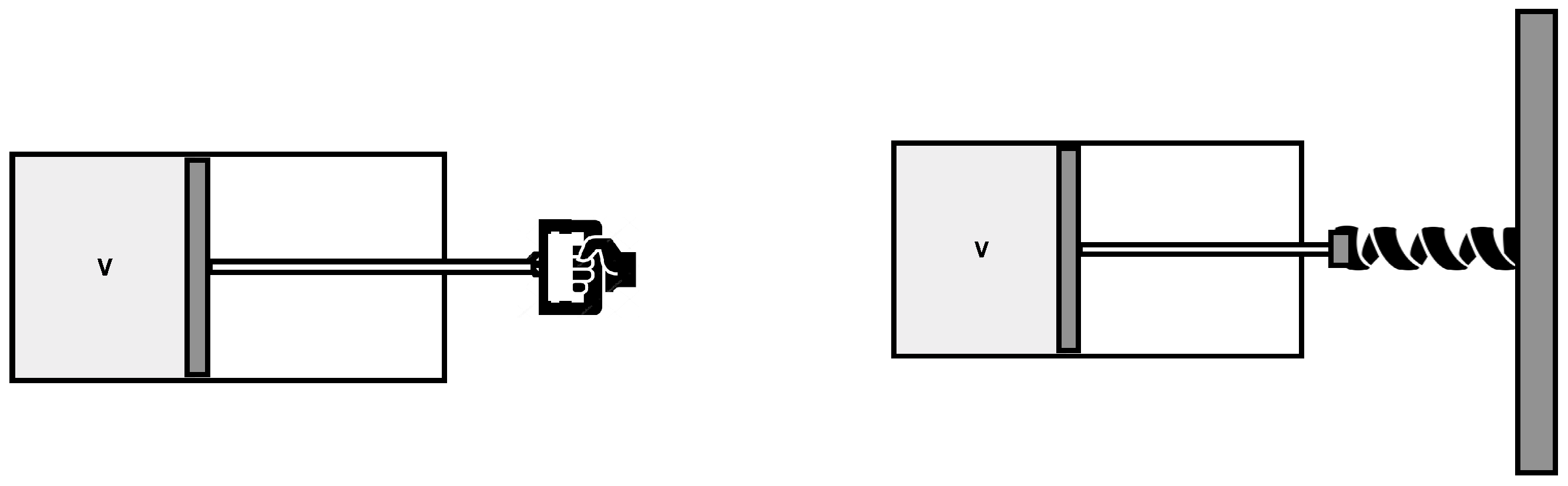

To this aim, consider again the gas in a volume with a piston, but assume now that the piston is attached to a spring, and can exchange energy with the gas. See

Figure 2.

Let us denote the position of the piston at time t. With appropriate parameters for the dynamics of the piston-spring system and appropriate initial conditions , the variation of the volume in time between and can be set to be similar to the previous case.

If we disregard dissipation, the piston oscillates indefinitely. But since the temperature and the entropy of the gas increase at each oscillation, there is dissipation. The force that the gas exerts on the piston during expansion is lower than during compression. Therefore the piston–spring system gradually transfers its (kinetic and potential) energy to the gas. The temperature and the entropy of the gas raise. The process is irreversible. The source of irreversibility is now transparent: since there is no external force, irreversibility is simply conventional, statistical redistribution of energy between the gas and the piston–spring system.

In fact, the piston continues to oscillate, but at each oscillation the temperature of the gas raises at the expense of the energy in the piston-spring system. The oscillations slowly reduce in amplitude until the piston sets to its equilibrium position . More precisely, the piston ends up fluctuating thermally around this position, since it is coupled to the hot gas. At this point thermal equilibrium is achieved, the temperature of the gas stops increasing, and the entropy has reached its maximum. The source of irreversibility in this process is clear: although the gas was in equilibrium by itself, the system of gas+piston–spring was far from equilibrium. There was far more energy in the piston–spring system than its proper equilibrium share prescribed by energy equipartition. Standard entropy growth dissipates irreversibly this energy into the gas as heat.

To start with, the gas was in thermal equilibrium by itself, but the system as a whole was badly out of equilibrium, because of the energy unbalance between the gas and the piston. This is the origin of the later irreversibility. The answer to the questions “What was out of equilibrium? Where was the low entropy?” is clearly: in the piston–spring system, namely in the single variable . A single variable far away from its equilibrium value is sufficient to determine the initial low-entropy and produce irreversibility, because it can hold a big share of free energy. Microscopically: it can hold energy from the rest of the variables, much decreasing their available phase space. That is the initial source of low entropy.

I argue below that this model reproduces the key features of the thermodynamical history of our universe that are responsible for the observable irreversibility. The state of the universe shortly after the Big Bang was badly out of equilibrium because one single degree of freedom, the scale factor , was. The full initial low-entropy of the universe is entirely stored in the out-of-equilibrium value of a single variable. In the following two sections, I make this claim concrete, showing how this process was realized in the real universe.

2.3. Metastable States and the Thermal History of the Universe

To understand the thermal history of the universe, the notion of metastable, or quasi-equilibrium state, and the related notion of channel for entropy-increase, are essential.

A pile of wood in a room full of air is thermodynamically quite stable: it can remain for many years as it is. But the basic elements forming it are not at the maximum possible value of their entropy. Far from that. This is obvious from the fact that if we ignite a fire, the wood burns. Burning is a violently irreversible phenomenon that dramatically increases entropy. After burning, the content of the room is reduced to ashes, smoke and vapour, which form a state of the ingredients in the room much higher-entropy than the initial state formed by wood and air. Therefore the constituents forming the pile of wood in the room full of air are in a remarkably stable configuration and yet far from their maximum entropy state. They are in a metastable, or quasi-equilibrium state.

The reason metastable states exist is that there can be obstructions in the phase space of a system, that do not allow the system to easily explore the full region of phase space that is in principle accessible on energetic grounds. The system remains trapped in a relatively small region of phase space for a very long time. In macroscopic terms, the scale of its thermalization time can be very long: longer than the observation time. This may change if some dynamical event allows the system to overcome the obstruction, thus opening a channel through which the system can exit the phase-space region where it was trapped, and move out to a larger region of its phase space. In the example above, the channel is represented by the combustion process and is opened by the ignition of the fire.

Metastable states are ubiquitous and represent vast storages of low entropy from which irreversible phenomena are fuelled.

The most common metastable systems in the universe are the large clouds of hydrogen. The reason they are metastable is that the protons forming them can fuse into helium, and since fusion is an irreversible process, helium is a much higher entropy state of its hadronic constituents than hydrogen. Since there are potential barriers for protons to fuse into helium, which make spontaneous fusion highly improbable, hydrogen is (meta-)stable. But there are processes that can overcome these potential barriers. A large hydrogen cloud has also a slow gravitational instability that makes it progressively clump [

8], emitting heat but also increasing pressure and temperature (gravitational systems typically have negative heat capacity [

9]) at its center, until the potential barrier preventing hydrogen to fuse into helium becomes insufficient. Hydrogen starts burning, further increasing temperature, and a channel for rapid increase of entropy is open: a star is born.

A star like the sun is a strongly irreversible process. It produces vast amounts of photons full of free energy, that impact the Earth and fuel a huge amount of irreversible phenomena on the Earth’s surface, including the entire biosphere. Hence the entire irreversibility of life can be traced to the low entropy of the initial metastable hydrogen clouds.

How could the the protons in the hydrogen clouds be in a lower-than-maximal-entropy state, if the matter content of the early universe was in thermal equilibrium, namely a maximal entropy state, according to standard cosmology [

1]?

The answer is the fact that the expansion of the universe has been too fast for equilibrium to keep up in the matter component of the universe, in analogy with the rapid expansion of the gas in the model discussed above.

The key event in the history of the universe in this regard is the freezing out of the reactions and that happened around the “freeze out” temperature MeV (about one second after the Big Bang). Before this event, protons and neutrons where in thermal equilibrium. From this time on, the temperature is too low to allow thermalization between the two species. Some neutrons had the time to decay to protons, most fused into helium, fixing the primordial helium to hydrogen ratio, which is still observable in the present universe. This helium to hydrogen ratio represents a metastable configuration, analog to the pile of wood of the example above, because hydrogen can ignite and fuse into helium, increasing entropy. This is the low-entropy fuelling the majority of the irreversible phenomena we see, including life.

The thermal history of the universe is therefore analogous to the rapid expansion of the gas in the model discussed above. As in that model, an initial equilibrium system undergoes a rapid volume expansion, too fast for thermalization to keep up, and this generates irreversibility.

Since the cosmological scale factor is not manoeuvred by an external force from outside the universe, but is rather a dynamical variable interacting with the rest, the proper idealized model or the universe thermal history is the second version of the gas with the piston discussed above. As in that model, the initial state was not at equilibrium because while matter was so, there was a (single) degree of freedom, the cosmological scale factor , out of equilibrium, in the following sense.

For a degree of freedom

a part of a large system, we say that the value

of

a and its time derivative

, is an equilibrium value, if the number

of microstates of the large system having the values of

a and

is maximized by

; hence

If this is not the case, we say that the degree of freedom is out of equilibrium. Notice that we say that a variable is out of equilibrium also if there are no equilibrium values at all (which might be the case for the scale factor of the universe).

Consider for simplicity a spatially flat homogeneous cosmological model with vanishing cosmological constant. For a given matter content, the Friedman equation,

determines trajectories in the

plane. Fixing a value of

a, a trajectory with higher

and higher

has higher entropy, because matter has larger energy and hence access to a larger region of phase space. A dissipative phenomenon can move the system to a different trajectory, increasing the entropy and producing irreversibility. As we shall see in the next section, this is what happens in the universe when stars burn.

The energy exchanged between the single degree of freedom formed by the scale factor and all the others drive the full irreversibility of the universe we see. By far the dominant source of the irreversibility we observe is therefore the single fact that the scale factor was, in the technical sense above, out of equilibrium in the early universe (and it is still now). In the next section I am more precise about the cosmological evolution of entropy.

2.4. Going Out of Equilibrium Keeping Entropy Constant

The larger component of the entropy of the universe is the radiation. In the radiation dominated phase, the entropy of the radiation is

where

V is the volume and

T is the temperature of a co-moving region, and is therefore constant. The irreversibility we concretely observe in the universe is due to phenomena that involve far smaller amounts of entropy than the large amount of entropy stored in the radiation, and are usually neglected in a first approximation.

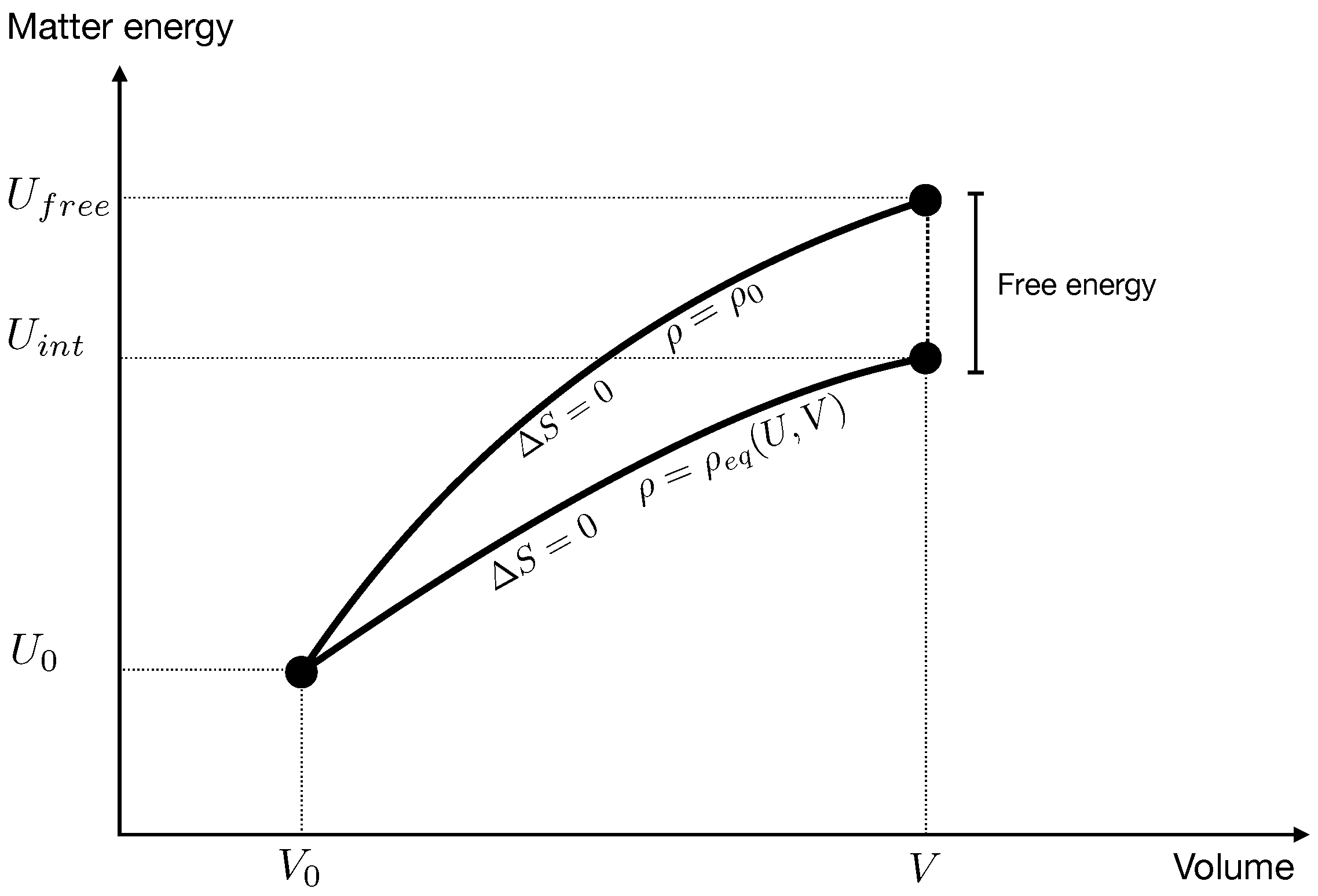

Furthermore, perhaps confusingly, a system can go out of equilibrium even if its entropy remains constant. To see this, consider a co-moving volume in an idealized universe containing only two species of matter. Call

V the volume,

U the total internal energy of the matter and

the relative density (the number of particles of the first species over the total number of particles). The entropy, generally, is a function of these macroscopic variables.

Say at some initial time the value of the macroscopic variables is

and the entropy

. Consider the expansion to a state

with

. Because of homogeneity, there is no exchange of energy between co-moving regions, but matter exchanges energy with the gravitational field, therefore in general

. Since because of the homogeneity there is no exchange of heat either, as long as the expansion is reversible, entropy

S remains constant:

In the course of an expansion from the volume to a volume V, there are two relevant possibilities: either the two species do not interact at all, each expanding freely, or they can be transformed into one another by some process, and changes accordingly.

In the first case the ratio of the densities remains constant,

, and the change of

U can be computed from the last equation. That is, the final value

of the internal energy is determined by

In the second case, namely if the two species interact, transforming into each other, the density changes adjusting

to the value

that maximizes the entropy at given

U and

V.

In this case the entropy is only a function of two variables,

and the change of the energy is determined by this function remaining constant. That is, if the species interact, the final energy

is determined by

In general , because the work that the gravitational field does on the matter in the expansion from to V depends on whether there is interaction between the species or not: in the first case it must also account for energy needed to transform one species in the other.

Hence the two evolutions take the system to two states with the same volume and the same entropy, but different energy.

Imagine now that after an evolution where the species were not interacting (Hydrogen and Helium) a channel opens that allows one species to transform into the other (a star). Matter internal energy is conserved during this equilibration therefore the state

evolves to the state

. This is different from the state

because

, as we have seen, is different from

, hence its entropy is different from

. It is higher, because it comes from a transformation towards equilibrium. Therefore there is a net increase of entropy

where

is defined in (

7) and

in (

9), see

Figure 3.

This result may seem strange, because the system goes out of equilibrium even if its entropy remains constant. But of course there is nothing wrong with this; if we slowly shift a wall dividing a box filled with an ideal gas into two parts, the pressure dis-equilibrates, and—in this sense—we bring the system out of equilibrium without changing its entropy. We simply add energy bringing the system to a state where its maximal entropy could be higher; opening a hole in the wall starts an irreversible process. Stars are like that opening in the wall.

In conclusion, the universe has gone out of equilibrium at the freeze-out temperature. The overall entropy of matter and radiation has remained essentially constant, but the subsequent increase in volume has not been accompanied by the transfer of energy between gravity and the energy difference between hydrogen and helium that true matter equilibrium would have required, because the proton-neutron (and then hydrogen-helium) thermalization times have become too long, below the freeze-out temperature. Therefore, althout entropy remained constant, free energy has remained trapped into hydrogen. This is the free energy liberated in stars, which fuels the bulk of the irreversibility we witness.

Since the scale factor is a dynamical variable interacting with the other variables and since before the freeze-out time the radiation and matter content of the universe were in equilibrium among themselves, it is clear that it is the scale factor and only the scale factor that was not at equilibrium, and therefore was the ultimate source of low entropy.

2.5. Why Other Suspects Are Innocent

The scale factor is a component of the gravitational field. It is one of the dynamical variables of gravity. Therefore the above account shows that gravity has played a key role in the thermal history of the universe, via .

A second role of gravity in the thermal history of the universe mentioned above is in the collapse of the hydrogen clouds that ignites stars.

Roger Penrose has emphasized a different role of gravity for past low entropy, and this idea has had a strong impact on the theoretical physics community, especially on the gravitational community. In the standard cosmological model, the gravitational field is very close to homogeneity and isotropy in the early universe. Penrose pointed out that this is an “extremely special” configuration of the gravitational fields, because a “generic” configuration of the geometry is strongly crumpled, not homogeneous [

10]. This can be seen by studying the evolution of a re-collapsing universe: near the final big crunch, matter has collapsed into a large number of black holes and geometry is highly inhomogeneous. This progressive crumpling of the geometry can be seen as an entropy increase, from a low-entropy initial nearly spatially flat macro-state underpinned by a single microstate, towards a final higher entropy crumbled macro-state.

All this is of course theoretically correct, but it seems to me that there is clear evidence that this is not the source of the bulk of the irreversibility that we actually observe in our real universe. The reason is the following.

Imagine a universe—distinct from ours—where general relativity was not true, the metric of spacetime was precisely the metric (

1) and the gravitational interaction was instantaneous and governed by Newton’s law of gravity. In this universe, the gravitational field would have no independent degrees of freedom at all, except for the scale factor. As fas as we understand, such a universe could be very similar to ours, with nearly the same thermal history. The reason being that this model does indeed account entirely for the current cosmological standard model. In fact, gravitational waves, metric perturbations of a Friedman cosmology other than the scalar potential, and black holes, play a small role in the thermal history of our universe: if we ideally shut down the independent degrees of freedom of gravity other than the scale factor, we still have virtually the same cosmological evolution and hence the same irreversibility. This observation implies that the irreversibility we see is not the consequence of the existence of these other gravitational degrees of freedom, because their absence would not avoid it.

I am not disputing Penrose’s observation that the initial state of spacetime is peculiar in the phase space of general relativity. But it seems clear that this peculiarity is not the main responsible for the irreversibility we actually see around us. The irreversibility we see is pretty much unrelated to the behaviour of gravitational radiative modes, and follows largely from one single degree of freedom, the scale factor, being far from an equilibrium configuration. If the existing black holes accounted for a considerable portion of the entropy of the universe, which is possible, I do not see how real black holes would substantially affect the irreversibility of our experience: we (and the galaxies) do not grow old because of black holes; we grow old because the thermal processes we are are fuelled by the free energy of the stars, which derives from the free energy in the primordial hydrogen.

Gravity influences the thermal history of the universe also by giving rise to the clumping of matter that ultimately leads to the rich structure of the universe. A detailed calculation (see for instance [

6]), shows that a cloud itself lowers its entropy by shrinking, but emits heat, which rises the external (and the global) entropy. This suggests that we can relate the initial low entropy fuelling present irreversibility to the initial uniformity of the distribution of matter, whose entropy can increase by gravitational clumping. However, the total entropy produced by a star burning in enormously larger than the entropy produced by the heat emitted by the contraction of the hydrogen clouds. Hence once again, although matter uniformity might marginally contribute to current irreversibility, this effect is negligible with respect to the main one; the irreversibility produced by the fast increase of the scale factor, that have left hydrogen and helium badly trapped out of thermal equilibrium. The universe, in other words, has gone badly out of equilibrium much before any significative beginning of clumping of matter.

I think we can safely conclude that the past low entropy that gives rise to observed irreversibility is very largely in the single degree of freedom being not at an equilibrium value.

3. Part II: Why?

In this second part of the article, I discuss how to interpret the fact that the entropy of the early universe was low. To star with, let me review our present best understanding of the origin of the second law of thermodynamics. (See for instance [

2] or the general introductory parts in [

3,

4]). This allows me to sharpen the question.

It is easy to make sense of the ubiquitous phenomenon of irreversibility of the observed processes in nature in statistical terms, if the initial state of these processes has low entropy. Indeed, the vast majority of the micro-states that underpin a low-entropy macro-state evolve towards a microstate underpinning a higher entropy configuration. Therefore, barring extraordinarily atypical micro-states, entropy grows if the initial macro-state of these processes has low entropy.

Given any specific irreversible process, its initial low entropy can be understood because of the way the process was prepared, by us or by Nature. In either case, preparation of an initial low-entropy state requires that the previous processes giving rise to the preparation themselves started of from an even lower entropy.

Tracing back in time, we arrive at the low entropy of the early universe. Therefore current irreversibility depends on early low-entropy in the universe. The open question about the arrow of time, therefore, is not why entropy grows: it is why entropy was low to start with.

This story implies that there is something ironic in the state of our understanding of irreversibility: the statistical understanding of entropy growth is grounded on the idea of genericity (generic micro-states underpinning low entropy states evolve towards higher entropy), but to make use of this idea we need to deal with the fact that the initial state had very low entropy, and very low entropy means to be very badly non-generic. Thus, our current understanding of irreversibility is based on an assumption of genericity (the micro-states underpinning macro-states are typical) and an assumption of non-genericity (initial entropy was very low). Can we make sense of this?

A possible attitude towards this question is to discard it. After all, “why” questions need to stop at some point. This is an attitude defended for instance by David Albert, who recommends to promote the “past hypothesis”, namely the statement that entropy was low in the past, to a sort of law of nature, in the sense of being a general statement from which we can derive predictions that turn out to be true [

3]. To some extent, the discussion above supports David Albert’s position. Having identified initial low entropy with a small value of the scale factor, allows us to say that all current irreversibility is driven by the initial smallness of the universe, and this is it, as far as the arrow of time is concerned.

As for any other general fact of nature, not asking further why’s is a sensible option. Unless (or until) one finds something better. Can we find something better?

One possible relevant fact is that the scale factor might not have an equilibrium state at all. Hence there might not be state with maximal entropy at all and the universe might have no choice but being in a trajectory of increasing entropy. This idea is being explored for instance in [

11,

12,

13] and I shall not pursue this line here.

3.1. The Role of Corse Graining

Let us reconsider the model of the gas coupled to the piston, and assume for simplicity that the total energy is bounded. Consider the space

of all possible motions of this system. This is a phase space (at any given time

t it is in one-to-one correspondence with the space of the initial data) and carries a natural measure (the Liouville measure

, normalized by

, which does not depend on

t). For the vast majority of the motions in

, the piston is generally near its equilibrium value

:

Therefore if we witness a motion where the piston is far away from this value at some time , we are witnessing a very atypical motion. For the same reason, we may say that we are witnessing a very “atypical” motion of our universe, among the motions allowed by its dynamics.

However, there is something missing in this account of what “atypical” means. The reason is that any individual motion of a system with many degrees of freedom is atypical in this sense. In fact, pick an arbitrary motion in

. A generic degree of freedom

of the system has mean value

but out of many degrees of freedom there will generically most likely be some, say for instance

x, such that

is very different from

. Therefore in a system with many degrees of freedom there will generically be some degrees of freedom that are far out of equilibrium. So, having a single degree of freedom that is far out of equilibrium is not “atypical”: to the contrary: it is a generic occurrence: it is very typical! In which sense is then a low-entropy state “atypical”? What is missing?

What is missing is the fact that the notion of “typical” or “atypical”, like the notion of “low entropy” or “high entropy” make sense only if we patch together motions in families that we deem indistinguishable, namely if we “coarse grain” the phase space. Typicality makes no sense for micro-states alone: it only makes sense if macro-states are defined. Although rarely emphasized, it is essential to recall that irreversibility is a macroscopic notion. It is a property of a certain coarse graining. It is not a property of a microscopic dynamical evolution. In

Appendix A I recall and discuss this essential point.

A macro-state is a defined as a subset

m of

, or more in general, by a (normalized) distribution function

on

. Then the “typicality” of the macro-state is measured by the size of

m

or the entropy of

In particular, macro-states are defined if something selects some variables of the system as “macroscopic”. For instance, in the second model considered above, the volume of the cylinder (namely the position of the piston) and the total energy E were declared “macroscopic” variables. They define the macro-states formed by the region in where the volume is V or smaller and the energy equal or lower than E. The entropy of this region is a well defined quantity.

These observations show that the reason why the scale factor determines an initial low entropy is not just because it is at a value which is not at equilibrium: rather it is because the scale factor is considered a macroscopic variable. This is the main conceptual point I wish to make in this paper. Past low entropy is due to the fact that a single variable that happened to be far from its equilibrium value in the early universe is a variable that we treat as “macroscopic”. Why do we treat it as macroscopic?

3.2. Why We Treat the Scale Factor as Macroscopic?

What is the difference between a “macroscopic” and a “microscopic” vraiable? There are different answers to this question that one can find in the literature. These are:

External interactions. The classical paradigm of a thermodynamical system is a physical system with many degrees of freedom , acted upon by an agent that can control and measure a small number of its variables, (the thermodynamical variables). The ’s are the macroscopic variables that determine the statistical coarse graining, and underpin the definition of entropy in the statistical analysis of the system. The coarse graining is not arbitrary: it is physically determined by the interactions of the system with the agent. Thermodynamics describes the macroscopic behaviour of systems relative to the sets of existing physical interactions with an external agent measuring it and acting on it. When dealing with the thermal history of the entire universe, it is hard to apply this characterization of “macroscopic”: there is no “external” agent acting on the universe.

Heat versus work. Thermodynamics has developed as the science describing the exchanges of heat and work between a system and its environment. What is it that determines the difference between heat and work? Work is a form of mechanical energy. But so is heat, at the microscopic level. When we give a macroscopic account of a process, heat is not considered mechanical energy anymore only because the relevant degrees of freedom are not directly accessible. The distinction between heat and work is therefore more subtle than what it seems at first sight: heat refers to energy in microscopic variables, while work refers to energy in macroscopic variables. The distinction between heat and work, therefore does not determine the characterization of what is macroscopic: it follows from it.

Averages. Boltzmann’s approach to statistical mechanics considers a system

S formed by a large number

N identical copies

of a simple system

s. The typical example is a gas formed by many similar molecules. We can then define a distribution

on the phase space

of

s, which assigns to any region

the fraction of the molecules that are in states in this region. That is, if the

n-th molecule is in the state

,

Then an observables

o of a single molecule defines a macroscopic observable

O for the full system, by its average under this distribution:

This is a powerful tool that exploits the fact that the system is formed by many copies of a single system. However, it can be applied only for systems composed by a large number of identical subsystems. This is not always the case with field theory, general relativity, or the entire universe.

Relative entropy. Point (i) above can be stripped of its anthropocentric and subjectivist aspects as follows. If a system S with many degrees of freedom , interacts with another system O via an interaction hamiltonian that depends on a small number of variables of S, then a coarse graining on S is defined by defining the variables as macroscopic. This defines an entropy for S, which is objective but relative to O. This definition of “macroscopic” can be extended to the case of a closed system like the universe by identifying O as a subsystem of the universe and S as “the rest” of the universe.

At the light of this list, why is the scale factor a macroscopic variable?

The only definition that applies, seems to me, is the last one: relative entropy. We are part of the universe. Hence we belong to some subsystem O of the universe. The subsystem O concretely interacts with the rest of the universe via variables whose number is small compared to the number of degrees of freedom of the universe. These are the macroscopic variables in a statistical description of he universe. They define a coarse graining, hence an entropy. The scale factor belongs to this set , otherwise we could not do cosmology.

The conclusion is that past low entropy depends on the fact among the relatively few macroscopic variables that determine our own interaction with the rest of the universe, there is one that was out of equilibrium in the past.

This discussion opens a possible way to interpret the atypicality implicit in past low entropy. What is atypical is not something pertaining to the universe by itself, but to the interacting couple

. This opens the possibility that what is atypical is

O, not the state of

S. This is the idea of the perspectival origin of the arrow of time that was put forward in [

7]. It is based on a simple conjecture in statistical mechanics:

Conjecture: In a sufficiently complex dynamical system S with sufficiently many interacting degrees of freedom , for any generic finite motion there are some subsystems O that interact with the rest of S via interaction variables that define a coarse graining and hence an entropy for the rest of S that is arbitrarily low at one extreme of the motion.

If this conjecture is true, as it seems intuitively possible, then the universe was not in a a-typical state in the early universe. It is us that we happen to belong to one of the subsystems that the conjecture states exist generically. (On this, see also [

14]. The precise relation with the argument in this reference will be studied elsewhere.) The reason we happen to be part of one of these peculiar systems is simply that these are the systems constructed in terms of those macroscopic variables for which entropy was low in the past and hence increases in time. We are the product of this perspectival growth of entropy. When seeing the arrow of time, we are not seeing a property of the microscopic motion of the universe: we are seeing a feature of those special macroscopic variables that characterize us.

If this is the case, the arrow of time is real, but it is perspectival, like are real but perspectival the rotation of the sky or the setting of the sun.