Abstract

Quantifying behaviors of robots which were generated autonomously from task-independent objective functions is an important prerequisite for objective comparisons of algorithms and movements of animals. The temporal sequence of such a behavior can be considered as a time series and hence complexity measures developed for time series are natural candidates for its quantification. The predictive information and the excess entropy are such complexity measures. They measure the amount of information the past contains about the future and thus quantify the nonrandom structure in the temporal sequence. However, when using these measures for systems with continuous states one has to deal with the fact that their values will depend on the resolution with which the system's states are observed. For deterministic systems both measures will diverge with increasing resolution. We therefore propose a new decomposition of the excess entropy into resolution-dependent and resolution-independent parts and discuss how they depend on the dimensionality of the dynamics, correlations and the noise level. For the practical estimation we propose to use estimates based on the correlation integral instead of the direct estimation of the mutual information based on nearest neighbor statistics because the latter allows less control of the scale dependencies. Using our algorithm we are able to show how autonomous learning generates behavior of increasing complexity with increasing learning duration.

Keywords:

excess entropy; mutual information; predictive information; quantification; autonomous robots; behavior; correlation integral

PACS classifications:

05.45.-a; 05.45.Tp; 89.70.Cf; 89.75.-k

MSC classifications:

68P30; 68T05; 68T40; 70E60; 93C10; 94A17

1. Introduction

Whenever we create behavior in autonomous robots we strive for a suitable measure in order to quantify success, learning progress or compare algorithms with each other. When a specific task is given, then typically the task provides a natural measure of success, for instance walking may be measured by velocity and perturbation stability. In cases where behavior is learned via optimization of a global objective function then the same function can also be used as a quantification, so creating and quantifying behavior often go hand in hand. This also applies in principle to behavior from task-independent objectives that have been recently more and more successful in generating emergent autonomous behavior in robots [1,2,3,4,5,6,7]. A task is here understood as a specific desired target behavior such as walking or reaching.

However, there are several cases of emergent behavior where this strategy fails: if the behavior arises from (a) optimizing a local function [8,9], (b) optimizing a crude approximation of a computationally expensive objective function [9], (c) local interaction rules without an explicit optimization function [10,11,12], or (d) a biological system (e.g., freely moving animals) where we do not know the underlying optimization function. Thus, independent of the origin of behavior, it would be useful to have a quantitative description of its structure. This would allow us to compare algorithms with each other objectively and to compare technical with biological autonomous systems. This paper presents a measure of behavioral complexity that is suitable for the analysis of this kind of emergent behavior.

We base our measure on the predictive information (PI) [13] of a set of observables, such as joint angles. The PI is the mutual information between past and future of a time series and captures how much information (in bits) can be used from the past to predict the future. It is also closely linked to excess entropy [14,15] or effective measure complexity [16], and it is a natural measure for the complexity of a time series because it provides a lower bound for the information necessary for optimal prediction. Thus it is a promising choice as a measure. Given these favorable properties, the PI was also proposed as an intrinsic drive for behavior generation in autonomous robots [1,2,9]. On an intuitive level, maximizing the PI of the sensor process leads to a high variety of sensory input (large marginal entropy) while keeping a temporal structure (high predictability, corresponding to a small entropy rate).

Unfortunately, there are conceptual and practical challenges in using the PI for generating and measuring autonomous behavior. Conceptually, for systems with continuous values the PI provides a family of functions depending on the resolution of the measurements and practically it is difficult to estimate the PI. For a fixed partition (single resolution) in a low-dimensional case it is possible to estimate the one-step PI and adapt a controller to maximize it [2]. In high-dimensional systems it cannot be used directly in an online algorithm. An alternative approach [9] uses a dynamical systems model, linearization and a time-local version of the PI [9] to obtain an explicit gradient for locally maximizing PI for continuous and high-dimensional state spaces (no global optimization). A simplified version of the resulting neural controller [11] was used for generating the examples presented in this paper.

The aim of the present paper is to provide a measure of behavioral complexity that can be applied to emergent autonomous behavior, animal behavior and potentially other time series. In order to do this it turns out that the predictive information as a complexity measure has to be refined by taking into account its scale dependency. Because the systems of interest are high-dimensional we investigate different methods to estimate information theoretic quantities in a resolution-dependent way and present results for different robotic experiments. The emergent behaviors in these experiments are generated by the above-mentioned learning rules. On the one hand we want to increase our understanding of the learning process guided by the time-local version of the PI, and on the other hand we want to demonstrate how the resolution dependence allows us to quantify behavior on different length scales.

We argue that many natural behaviors are low-dimensional, at least on a coarse scale. For instance, in walking all joint angles can typically be related to a single phase, so in the extreme case it is one-dimensional [17]. However, as we show below in Section 2.1, this contradicts the global maximization of the PI, which would maximize the dimension. We discuss this contradiction and how it was circumvented in the practical applications. Supplementary material and source code accompany the paper, see [18,19].

The plan of the paper is as follows. In Section 2.1, we introduce the information theoretic quantities and in Section 2.3 the two estimation methods used in the paper. In Section 2.4 we describe the algorithm used to calculate the quantification measures. In Section 2.5 we demonstrate their behavior using the example of the Lorenz system, including the effects of noise. Section 3 starts with explaining the robots and the control framework used for the experiments, which are described in Section 3.2. The results of quantifying the experiments are presented in Section 3.3 and discussed in Section 4.

2. Methods

2.1. Entropy, Dimension and Excess Entropy

The starting point is a (in general) vector-valued stationary time series $\{y_0, y_1, \ldots, y_N\}$. It is used to reconstruct the phase space of the underlying dynamical system by using delay embedding: $\mathbf{x}_t^{(m)} = (y_t, y_{t-1}, \ldots, y_{t-m+1})$. If the original time series was $d$-dimensional, the reconstructed phase space will be of dimension $d \times m$. More general embeddings are also possible, e.g., involving different delays or different embedding dimensions for each component of the time series. In the following, we will only consider the case $d = 1$, i.e., scalar time series. The generalization to vector-valued time series is straightforward. Let us assume that we are able to reconstruct approximately the joint probability distribution in a reconstructed phase space. Then we can characterize its structure using measures from information theory. Information theoretic measures represent a general and powerful tool for the characterization of the structure of joint probability distributions [20,21]. The uncertainty about the outcome of a single measurement of the state, i.e., about $\mathbf{x}_t^{(m)}$, is given by its entropy. For a discrete-valued random variable $X$ with values $x \in \mathcal{X}$ and a probability distribution $p(x)$ it is defined as

$$H(X) = -\sum_{x \in \mathcal{X}} p(x) \log p(x).$$

An alternative interpretation for the entropy is the average number of bits required to encode a new measurement. In our case, however, the $y_t$ are considered as continuous-valued observables (that are measured with a finite resolution). For continuous random variables with a probability density $\rho(x)$ one can also define an entropy, the differential entropy

$$H_C(X) = -\int \rho(x) \log \rho(x)\, dx. \tag{1}$$

However, it behaves differently from its discrete counterpart: it can become negative, and it even becomes minus infinity if the probability measure for X is not absolutely continuous with respect to the Lebesgue measure, for instance in the case of the invariant measure of a deterministic system with an attractor dimension smaller than the phase space dimension. Therefore, when using information theoretic quantities for characterizing dynamical systems, researchers often prefer using the entropy for discrete-valued random variables. In order to use them for dynamical systems with continuous variables, usually either partitions of the phase space or entropy-like quantities based on coverings are employed. These methods do not explicitly reconstruct the underlying invariant measure, but exploit the neighbor statistics directly. Alternatively one could use direct reconstructions using kernel density estimators [22] or methods based on maximizing relative entropy [23,24] to gain parametric estimates. These reconstructions, however, will always lead to probability densities, and are not suitable for reconstructing fractal measures which appear as invariant measures of deterministic systems.

In this paper, we use estimators based on coverings, i.e., correlation entropies [25] and nearest-neighbor-based methods [26], considered in Section 2.3 below. For the moment let us consider a partition of the phase space into hypercubes with side length ε. For a more general definition of an ε-partition see [27]. In principle one might consider scaling the different dimensions of $\mathbf{x}^{(m)}$ differently, but for the moment we assume that the time series was measured using an appropriate rescaling. The entropy of the state vector $\mathbf{x}^{(m)}$ observed with an ε-partition will be denoted in the following as $H(\mathbf{x}^{(m)}; \epsilon)$, with ε parameterizing the resolution.

How does the entropy change if we change ε? The uncertainty about the potential outcome of a measurement will increase if the resolution of the measurement is increased, because of the larger number of potential outcomes. If $\mathbf{x}^{(m)}$ is an m-dimensional random variable distributed according to a corresponding probability density function $\rho(\mathbf{x}^{(m)})$, we have asymptotically for $\epsilon \to 0$ (see [27] or [28] (ch. 8, p. 248, theorem 8.3.1))

$$H(\mathbf{x}^{(m)}; \epsilon) \approx H_C(\mathbf{x}^{(m)}) - m \log \epsilon. \tag{2}$$
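As a minimal numerical illustration of the scaling in Equation (2), the following sketch computes the entropy of a uniform density on the unit hypercube observed with an ε-partition. The uniform density, the function names and the chosen ε values are illustrative assumptions and not part of the original analysis. For this density the differential entropy is zero, so the partition entropy should equal −m log ε.

```python
import math


def shannon_entropy(probs):
    """Shannon entropy in nats of a discrete distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def partition_entropy_uniform(eps, m=1):
    """Entropy of the uniform density on [0, 1)^m seen through cubes of side eps.

    Assumes that 1/eps is an integer, so every cube carries the same mass.
    """
    n_cells = round(1.0 / eps) ** m
    return shannon_entropy([1.0 / n_cells] * n_cells)


# The two printed columns agree: H(eps) = -m * log(eps) for this toy density.
for eps in (0.5, 0.25, 0.125):
    print(eps, partition_entropy_uniform(eps, m=2), -2 * math.log(eps))
```

Halving ε adds log 2 nats per dimension, which is the resolution dependence that the decomposition later in this section is meant to separate out.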

This is what we would expect for a stochastic system. However, if we observe a deterministic system, the behavior of an observable depends on how its dimension relates to the attractor dimension. If the embedding dimension is smaller than the attractor dimension, the deterministic character will not be resolved and Equation (2) still applies. However, if the embedding dimension is sufficiently high ($m > D$ [29]), then instead of a density function we have to deal with a D-dimensional measure and the entropy will behave as

$$H(\mathbf{x}^{(m)}; \epsilon) \approx \text{const} - D \log \epsilon. \tag{3}$$

If a behavior such as in Equations (2) or (3) is observed for a range of ε values, we will call this range a stochastic or deterministic scaling range, respectively.

Conditional entropy and mutual information. Let us consider two discrete-valued random variables X and Y with values $x \in \mathcal{X}$ and $y \in \mathcal{Y}$, respectively. Then the uncertainty of a measurement of X is quantified by $H(X)$. Now we might ask, what is the average remaining uncertainty about X if we have already seen Y? This is quantified by the conditional entropy

$$H(X|Y) = -\sum_{x \in \mathcal{X}} \sum_{y \in \mathcal{Y}} p(x, y) \log p(x|y). \tag{4}$$

The reduction of uncertainty about X knowing Y is the information that Y provides about X and is called the mutual information between X and Y

$$I(X : Y) = H(X) - H(X|Y) = H(X) + H(Y) - H(X, Y). \tag{5}$$
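The two definitions above can be checked on small joint probability tables. The sketch below is only an illustration: the function names and the two example tables are assumptions made here, and the identities used are the standard ones, H(X|Y) = H(X,Y) − H(Y) and I(X:Y) = H(X) + H(Y) − H(X,Y).

```python
import math


def entropy(probs):
    """Shannon entropy in nats; zero-probability entries are skipped."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def conditional_entropy(joint):
    """H(X|Y) = H(X,Y) - H(Y) for a joint table with joint[x][y] = p(x, y)."""
    p_y = [sum(column) for column in zip(*joint)]
    p_xy = [p for row in joint for p in row]
    return entropy(p_xy) - entropy(p_y)


def mutual_information(joint):
    """I(X:Y) = H(X) - H(X|Y)."""
    p_x = [sum(row) for row in joint]
    return entropy(p_x) - conditional_entropy(joint)


independent = [[0.25, 0.25], [0.25, 0.25]]  # Y tells nothing about X
copy = [[0.5, 0.0], [0.0, 0.5]]             # Y determines X completely

print(mutual_information(independent), mutual_information(copy))
```

For the independent table the mutual information vanishes, while for the copy table it equals the full entropy log 2 of X.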

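Having defined the ε-dependent state entropy, we can now ask how much information the present state contains about the state of the system at the next time step. The answer is given by the mutual information between $X_t$ and $X_{t+1}$:

$$I(X_t : X_{t+1}; \epsilon) = H(X_t; \epsilon) + H(X_{t+1}; \epsilon) - H(X_t, X_{t+1}; \epsilon). \tag{6}$$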

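Using Equation (2) one sees that for stochastic systems the mutual information will remain finite in the limit $\epsilon \to 0$ and can be expressed by the differential entropies:

$$\lim_{\epsilon \to 0} I(X_t : X_{t+1}; \epsilon) = H_C(X_t) + H_C(X_{t+1}) - H_C(X_t, X_{t+1}). \tag{7}$$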

Note that this mutual information is invariant with respect to coordinate transformations of the system state, i.e., if $f$ is a continuous and invertible function, then

$$I(f(X_t) : f(X_{t+1})) = I(X_t : X_{t+1}). \tag{8}$$

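However, in the case of a deterministic system, the mutual information will diverge

$$I(X_t : X_{t+1}; \epsilon) \approx \text{const} - D \log \epsilon \to \infty \quad \text{for } \epsilon \to 0. \tag{9}$$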

This is reasonable behavior because in principle the actual state contains an arbitrary large amount of information about the future. In practice, however, the state is known only with a finite resolution determined by the measurement device or the noise level.

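Predictive information, excess entropy and entropy rate. The unpredictability of a time series can be characterized by the conditional entropy of the next state given the previous states. In the following we will use an abbreviated notation for these conditional entropies and the involved entropies:

$$H_m(X; \epsilon) := H(X_t, X_{t-1}, \ldots, X_{t-m+1}; \epsilon), \tag{10}$$

$$h_m(X; \epsilon) := H(X_t \,|\, X_{t-1}, \ldots, X_{t-m}; \epsilon), \qquad h_0(X; \epsilon) := H_1(X; \epsilon). \tag{11}$$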

The entropy rate (see [28] (ch. 4.2)) is this conditional entropy if we condition on the infinite past

$$h_\infty(X; \epsilon) = \lim_{m \to \infty} h_m(X; \epsilon). \tag{12}$$

In the following we assume stationarity, i.e., we have no explicit time dependence of the joint probabilities and therefore also of the entropies. Moreover, if it is clear from the context which stochastic process is considered, we will write $H_m(\epsilon)$ and $h_m(\epsilon)$ instead of $H_m(X; \epsilon)$ and $h_m(X; \epsilon)$, respectively, and it holds

$$h_m(\epsilon) = H_{m+1}(\epsilon) - H_m(\epsilon). \tag{13}$$

For deterministic systems the entropy rate will converge in the limit $\epsilon \to 0$ to the Kolmogorov-Sinai (KS) entropy [30,31], which is a dynamical invariant of the system in the sense that it is independent of the specific state space reconstruction. Moreover, already for finite $m > D$, $h_m(\epsilon)$ will not depend on ε for sufficiently small ε because of Equation (3).

To quantify the amount of predictability in a state sequence one might consider subtracting the unpredictable part from the total entropy of a state sequence. By doing this one ends up with a well-known complexity measure for time series, the excess entropy [14,15] or effective measure complexity [16]

$$E(\epsilon) = \lim_{m \to \infty} E_m(\epsilon) \tag{14}$$

with

$$E_m(\epsilon) = H_m(\epsilon) - m\, h_{m-1}(\epsilon). \tag{15}$$

The excess entropy provides a lower bound for the amount of information necessary for an optimal prediction. For deterministic systems, however, it will diverge because $H_m(\epsilon)$ will behave according to Equation (3) and $h_m(\epsilon)$ will become ε-independent for sufficiently large m and small ε, respectively:

$$E(\epsilon) \approx \text{const} - D \log \epsilon \tag{16}$$

with D being the attractor dimension.

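The predictive information [13] is the mutual information between the semi-infinite past and the future time series

$$PI(\epsilon) = \lim_{m \to \infty} PI_m(\epsilon) \tag{17}$$

with

$$PI_m(\epsilon) = I(X_{t-m+1}, \ldots, X_t : X_{t+1}, \ldots, X_{t+m}; \epsilon) = 2 H_m(\epsilon) - H_{2m}(\epsilon). \tag{18}$$

If the limits in Equations (14) and (17) exist, the predictive information $PI(\epsilon)$ is equal to the excess entropy $E(\epsilon)$. For the finite-time variants, in general $PI_m(\epsilon) \neq E_m(\epsilon)$:

$$PI_m(\epsilon) = H_m(\epsilon) - \sum_{k=m}^{2m-1} h_k(\epsilon).$$

However, if the system is Markov of order p, the conditional probabilities will only depend on the previous p time steps, $p(x_t | x_{t-1}, \ldots, x_{t-m}) = p(x_t | x_{t-1}, \ldots, x_{t-p})$, hence $h_{m-1}(\epsilon) = h_p(\epsilon)$ for $m > p$ and therefore $PI_m(\epsilon) = E_m(\epsilon)$.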
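The Markov statement can be verified exactly on a toy process. The sketch below enumerates all symbol blocks of a two-state, first-order Markov chain and compares the finite-time excess entropy with the finite-time predictive information. It assumes the conventions $h_{m-1} = H_m - H_{m-1}$, $E_m = H_m - m\,h_{m-1}$ and $PI_m = 2H_m - H_{2m}$; the transition matrix and all function names are hypothetical choices made for this illustration.

```python
import itertools
import math


def block_entropy(m, pi, T):
    """Block entropy H_m (nats) of a stationary Markov chain.

    pi is the stationary distribution, T[a][b] the transition probability a -> b.
    """
    if m == 0:
        return 0.0
    total = 0.0
    for seq in itertools.product(range(len(pi)), repeat=m):
        p = pi[seq[0]]
        for a, b in zip(seq, seq[1:]):
            p *= T[a][b]
        if p > 0.0:
            total -= p * math.log(p)
    return total


def finite_excess_entropy(m, pi, T):
    """E_m = H_m - m * h_{m-1} (assumed convention)."""
    h_prev = block_entropy(m, pi, T) - block_entropy(m - 1, pi, T)
    return block_entropy(m, pi, T) - m * h_prev


def finite_predictive_information(m, pi, T):
    """PI_m = 2 * H_m - H_{2m}, using stationarity."""
    return 2.0 * block_entropy(m, pi, T) - block_entropy(2 * m, pi, T)


T = [[0.9, 0.1], [0.2, 0.8]]  # hypothetical transition matrix
pi = [2.0 / 3.0, 1.0 / 3.0]   # its stationary distribution

for m in (1, 2, 3, 4):
    print(m, finite_excess_entropy(m, pi, T), finite_predictive_information(m, pi, T))
```

For this order-1 chain the two quantities coincide for every m > 1, while they differ for m = 1, where the conditional entropy has not yet converged.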

2.2. Decomposing the Excess Entropy for Continuous States

In the literature [13,15,16] both the excess entropy and the predictive information were studied only for a given partition—usually a generating partition. Thus, using the excess entropy as a complexity measure for continuous valued time series has to deal with the fact that its value will be different for different partitions—even for different generating ones.

In Equation (16) we have seen that the excess entropy for deterministic systems becomes infinite in the limit $\epsilon \to 0$. The same applies to the predictive information defined in Equation (18). Moreover, we have seen that the increase of these complexity measures with decreasing ε is controlled by the attractor dimension of the system. Does this mean that in the case of deterministic systems the excess entropy as a complexity measure reduces to the attractor dimension? Not completely. The constant in Equation (16) reflects not only the scale of the signal, but also statistical dependencies or memory effects in the signal, in the sense that it will be larger if the conditional entropies converge more slowly towards the KS entropy.

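How can we separate the different contributions? We will start by rewriting Equation (15) as a sum. Using the conditional entropies (Equation (13)) we get

$$E_m(\epsilon) = \sum_{k=0}^{m-1} \left( h_k(\epsilon) - h_{m-1}(\epsilon) \right).$$

Using the differences between the conditional entropies

$$\delta h_m(\epsilon) := h_{m-1}(\epsilon) - h_m(\epsilon) \tag{19}$$

the excess entropy can be rewritten as

$$E(\epsilon) = \sum_{m=1}^{\infty} m\, \delta h_m(\epsilon). \tag{20}$$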
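The rewriting as a weighted sum is a pure rearrangement and can be checked numerically. The sketch below assumes the conventions $H_m = h_0 + \dots + h_{m-1}$, $E_m = H_m - m\,h_{m-1}$ and $\delta h_k = h_{k-1} - h_k$; the list of conditional entropies is made up for the purpose of the check.

```python
def excess_entropy_direct(h, m):
    """E_m = H_m - m * h_{m-1}, with H_m = h_0 + ... + h_{m-1}."""
    return sum(h[:m]) - m * h[m - 1]


def excess_entropy_sum(h, m):
    """E_m = sum_{k=1}^{m-1} k * delta_h_k, with delta_h_k = h_{k-1} - h_k."""
    return sum(k * (h[k - 1] - h[k]) for k in range(1, m))


# Hypothetical conditional entropies h_0 .. h_5 that converge after three steps.
h = [2.0, 1.2, 0.9, 0.8, 0.8, 0.8]

for m in range(1, len(h) + 1):
    print(m, excess_entropy_direct(h, m), excess_entropy_sum(h, m))
```

Once the conditional entropies have converged, further terms of the sum vanish and both expressions stay at the same value, which is why truncating the sum corresponds to a Markov approximation.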

Note that the difference $\delta h_m$ is the conditional mutual information

$$\delta h_m = I(X_t : X_{t-m} \,|\, X_{t-1}, \ldots, X_{t-m+1}).$$

It measures dependencies over m time steps that are not captured by dependencies over $m-1$ time steps. In other words, it measures how much uncertainty about $X_t$ can be reduced if, in addition to the $(m-1)$-step past, also the m-th step is taken into account. For a Markov process of order m the $\delta h_k$ vanish for $k > m$. In this case the sum in Equation (20) contains only a finite number of terms. On the other hand, truncating the sum at finite m could be interpreted as approximating the excess entropy by the excess entropy of an approximating Markov process. What can be said about the scale dependency of the $\delta h_m$? From Equations (2) and (3) it follows that $h_m(\epsilon) \approx \text{const} - \log \epsilon$ for $m < \lfloor D \rfloor$ and $h_m(\epsilon) \approx \text{const}$ for $m > D$. Using this and Equation (19) we have to distinguish four cases for deterministic systems. Note that $D_F = D - \lfloor D \rfloor$ denotes the fractal part of the attractor dimension.
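The four cases can be written out explicitly. The following is a sketch under the idealized assumption that, in the deterministic scaling range, the block entropies scale as $H_m(\epsilon) \approx \text{const} - \min(m, D) \log \epsilon$ (Equations (2) and (3)) and that $h_m = H_{m+1} - H_m$; the abbreviation $n = \lfloor D \rfloor$ is introduced here only for brevity.

$$
\delta h_m(\epsilon) = h_{m-1}(\epsilon) - h_m(\epsilon) \approx
\begin{cases}
\text{const} & m < n \\
\text{const} - (1 - D_F) \log \epsilon & m = n \\
\text{const} - D_F \log \epsilon & m = n + 1 \\
\text{const} & m > n + 1
\end{cases}
$$

Under these assumptions only the two terms with $m = n$ and $m = n + 1$ carry an ε dependence. Weighting them with m as in the sum over $m\,\delta h_m$ gives $-\left[ n (1 - D_F) + (n + 1) D_F \right] \log \epsilon = -(n + D_F) \log \epsilon = -D \log \epsilon$, which reproduces the divergence of the excess entropy stated in Equation (16).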

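Thus the sum in Equation (20) can be decomposed into three parts:

$$E(\epsilon) = \sum_{m=1}^{\lfloor D \rfloor - 1} m\, \delta h_m + \sum_{m=\lfloor D \rfloor}^{\lfloor D \rfloor + 1} m\, \delta h_m(\epsilon) + \sum_{m=\lfloor D \rfloor + 2}^{\infty} m\, \delta h_m \tag{22}$$

with the ε dependence showing up only in the middle term (MT):

$$\mathrm{MT}(\epsilon) = \sum_{m=\lfloor D \rfloor}^{\lfloor D \rfloor + 1} m\, \delta h_m(\epsilon) = c - D \log \frac{\epsilon}{\epsilon_D} \tag{23}$$

with $\epsilon_D$ denoting the length scale where the deterministic scaling range starts. Therefore we have decomposed the excess entropy into three terms: two ideally ε-independent terms and one ε-dependent term. The first term

$$E_{\text{state}} = \sum_{m=1}^{\lfloor D \rfloor - 1} m\, \delta h_m + c \tag{24}$$

will be called “state complexity” in the following because it is related to the information encoded in the state of the system. The constant c was added here in order to ensure that the ε-dependent term vanishes at $\epsilon_D$, the beginning of the deterministic scaling range. The second ε-independent term

$$E_{\text{mem}} = \sum_{m=\lfloor D \rfloor + 2}^{\infty} m\, \delta h_m \tag{25}$$

will be called “memory complexity” because it is related to the dependencies between the states at different time steps. What we call “state” in this context is related to the minimal embedding dimension needed to see the deterministic character of the dynamics, which is $\lfloor D \rfloor + 1$ [29]. In order to obtain a one-to-one reconstruction of the attractor a higher embedding dimension might be necessary [32]. Both ε-independent terms together we will call “core complexity”

$$E_{\text{core}} = E_{\text{state}} + E_{\text{mem}}. \tag{26}$$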
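The bookkeeping of this three-way split can be sketched in a few lines. The code below is not the authors' algorithm; it only assumes that the split happens at $n = \lfloor D \rfloor$, with terms $m < n$ assigned to the state complexity, terms $m = n$ and $m = n + 1$ to the middle term, and terms $m > n + 1$ to the memory complexity. The function name, the offset parameter `c` and the example values are hypothetical.

```python
import math


def decompose_excess_entropy(delta_h, D, c=0.0):
    """Split E = sum_m m * delta_h[m] into (state, middle, memory) parts.

    delta_h[m] holds delta_h_m for m = 1..M; index 0 is unused.
    c is the constant moved from the middle term into the state complexity.
    """
    n = math.floor(D)
    terms = {m: m * delta_h[m] for m in range(1, len(delta_h))}
    state = sum(v for m, v in terms.items() if m < n) + c
    middle = sum(v for m, v in terms.items() if n <= m <= n + 1) - c
    memory = sum(v for m, v in terms.items() if m > n + 1)
    return state, middle, memory


# Hypothetical delta_h_m values at one fixed scale, for an attractor with D = 2.06.
delta_h = [None, 0.5, 1.4, 0.3, 0.1, 0.05]
state, middle, memory = decompose_excess_entropy(delta_h, D=2.06, c=1.0)
print(state, middle, memory, state + middle + memory)
```

Whatever value is chosen for `c`, the three parts always add up to the full weighted sum; `c` only shifts weight between the state complexity and the scale-dependent middle term.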

So far we only addressed the case of a deterministic scaling range. In the case of a noisy chaotic system we have to distinguish two ε regions: the deterministic scaling range described above and the noisy scaling range with with determined by the noise level. In the stochastic scaling range all become ε-independent and the decomposition Equation (22) seems to become unnecessary. This is not completely the case. Let us assume that the crossover between the two regions happens at a length scale . Moreover, let us assume that for sufficiently large m we have in the deterministic scaling range (see Section 2.5 for an example). Then we have

which allows us to express the crossover scale in terms of the KS entropy and the continuous entropy related to the noise level

Moreover, the excess entropy in the deterministic scaling range will behave as

Evaluating this expression at the crossover length scale allows us to express the value of the excess entropy in the stochastic scaling range as

In particular, this expression shows that decreasing the noise level, which increases , will increase the asymptotic value of the excess entropy for noisy systems. Thus, an increased excess entropy or predictive information for a fixed length scale or partition can be achieved in many ways:

- by increasing dimension D of the dynamics

- by decreasing the noise level

- by increasing the amplitude

- by increasing the state complexity

- by increasing the correlations measured by the “memory” complexity, i.e., by increasing the predictability

- by decreasing the entropy rate , i.e., by decreasing the unpredictability

Naturally, the effect of the noise level will be observed in the stochastic scaling range only. In practice there might be more than one deterministic and stochastic scaling range or even no clear scaling range at all. How we will deal with these cases will be described below when we introduce our algorithm.

2.3. Methods for Estimating the Information Theoretic Measures

Reliably estimating entropies and mutual information is very difficult in high-dimensional spaces due to the increasing bias of entropy estimates. Therefore we will employ two different approaches. On the one hand we will use an algorithm for the estimation of the mutual information proposed by Kraskov et al. [26] based on nearest neighbor statistics, which reduces the bias by employing partitions of different sizes in spaces of different dimensions. On the other hand we calculate a proxy for the excess entropy using correlation entropies [25] of order $q = 2$. These are related to the Rényi entropies of second order, and the correlation sum provides an unbiased estimator. Neither method requires binning, but they differ substantially in what they compute.

Estimation via local densities from nearest neighbor statistics (KSG). The most common approach to estimate information quantities of continuous processes, such as the mutual information, is to calculate the differential entropies (1) directly from the nearest neighbor statistics. The key idea is to use nearest neighbor distances [33,34,35] as proxies for the local probability density. This method corresponds in a way to an adaptive bin size for each data point. For the mutual information (required, e.g., to calculate the PI (Equation (18))), however, it is not recommended to naively calculate it from the individual entropies of X, Y and their joint because they may have very dissimilar scales, such that the adaptive binning leads to spurious results. For that reason a new method was proposed by Kraskov et al. [26], which we call KSG, that only uses the nearest neighbor statistics in the joint space. We denote the mutual information estimate where k nearest neighbors were used for the local estimation.

The length scale on which the mutual information is estimated by this algorithm depends on the available data. In the limit of infinite amount of data for . However, in order to evaluate the quantity at a certain length scale (similar to ε above) and assuming the same underlying space for X and Y, noise of strength η is added to the data resulting in

where is the uniform distribution in the interval . The idea of adding noise is to make the processes X and Y independent within neighborhood sizes below the length scale of the noise. In this way only the structures above the added noise-level contribute to the mutual information. Note that for small η the actual scale (k-neighborhood size) may be larger due to sparsity of the available data.
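To make the procedure concrete, the following is a minimal sketch of the first KSG estimator of Kraskov et al. for two scalar variables, together with the noise-based scale selection described above. It is not the implementation used for the results in this paper. The noise interval $[0, \eta]$, the function names, the sample sizes and the test signals are assumptions made for this illustration; the digamma function is only evaluated at integers, for which $\psi(n) = -\gamma + \sum_{j=1}^{n-1} 1/j$.

```python
import random

EULER_GAMMA = 0.5772156649015329


def digamma_int(n):
    """Digamma function psi(n) for a positive integer n."""
    return -EULER_GAMMA + sum(1.0 / j for j in range(1, n))


def ksg_mutual_information(xs, ys, k=4):
    """KSG (first algorithm) estimate of I(X:Y) in nats for scalar samples."""
    n = len(xs)
    total = 0.0
    for i in range(n):
        # Maximum-norm distances to all other points in the joint space.
        joint = sorted(max(abs(xs[i] - xs[j]), abs(ys[i] - ys[j]))
                       for j in range(n) if j != i)
        radius = joint[k - 1]  # distance to the k-th nearest neighbour
        # Neighbours strictly within that radius in each marginal space.
        n_x = sum(1 for j in range(n) if j != i and abs(xs[i] - xs[j]) < radius)
        n_y = sum(1 for j in range(n) if j != i and abs(ys[i] - ys[j]) < radius)
        total += digamma_int(n_x + 1) + digamma_int(n_y + 1)
    return digamma_int(k) + digamma_int(n) - total / n


def add_uniform_noise(values, eta, rng):
    """Add independent uniform noise from [0, eta]; the interval is an assumption."""
    return [v + rng.uniform(0.0, eta) for v in values]


rng = random.Random(0)
x = [rng.random() for _ in range(200)]
y = [v + 0.01 * rng.gauss(0.0, 1.0) for v in x]  # strongly dependent on x

print("fine scale:  ", ksg_mutual_information(x, y))
print("coarse scale:", ksg_mutual_information(add_uniform_noise(x, 10.0, rng),
                                              add_uniform_noise(y, 10.0, rng)))
```

With noise much larger than the signal amplitude the estimate drops towards zero, since the added noise removes all structure below its length scale. The brute-force neighbour search scales quadratically with the number of samples, so a tree-based search would be needed for long time series.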

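Estimation via correlation sum. The correlation sum is one of the standard tools in nonlinear time series analysis [36,37]. Normally it is used to estimate the attractor dimension. However, it can also be used to provide approximate estimates of entropies and derived quantities such as the excess entropy. The correlation entropies for a random variable $X$ with measure $\mu$ are defined as [25]

$$H^{(q)}(X; \epsilon) = -\frac{1}{q - 1} \log \int \mu\big(B(x, \epsilon)\big)^{q-1}\, d\mu(x) \tag{32}$$

where $B(x, \epsilon)$ is the “ball” at $x$ with radius ε. For $q = 2$ Equation (32) becomes $H^{(2)}(X; \epsilon) = -\log \int \mu(B(x, \epsilon))\, d\mu(x)$. The integral in this formula is also known as the “correlation integral”. For N data points $x_i$ it can be estimated using the correlation sum, which is the averaged relative number of pairs in an ε-neighborhood [36,37],

$$C(\epsilon) = \frac{2}{N (N - 1)} \sum_{i=1}^{N} \sum_{j=i+1}^{N} \Theta\big(\epsilon - \lVert x_i - x_j \rVert\big).$$

Θ denotes the Heaviside function

$$\Theta(z) = \begin{cases} 0 & z \le 0 \\ 1 & z > 0 \end{cases}$$

Then the correlation entropy is

$$H^{(2)}(\epsilon) = -\log C(\epsilon).$$

For sufficiently small ε it behaves as

$$H^{(2)}(\epsilon) \approx \text{const} - D^{(2)} \log \epsilon$$

with $D^{(2)}$ being the correlation dimension of the system [38]. A scale-dependent correlation dimension can be defined as a difference quotient

$$D^{(2)}(\epsilon) = -\frac{\Delta H^{(2)}(\epsilon)}{\Delta \log \epsilon}. \tag{34}$$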
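A brute-force version of the correlation sum and the quantities derived from it fits in a few lines. The sketch below is only an illustration and is not the TISEAN implementation; it assumes the maximum norm, counts pairs with distance strictly below ε, and uses a two-point difference quotient for the scale-dependent dimension. The function names and the test data, points on a straight line embedded in the plane, are choices made here.

```python
import math


def correlation_sum(points, eps):
    """Fraction of distinct pairs of points closer than eps (maximum norm)."""
    n = len(points)
    close = 0
    for i in range(n):
        for j in range(i + 1, n):
            dist = max(abs(a - b) for a, b in zip(points[i], points[j]))
            if dist < eps:
                close += 1
    return 2.0 * close / (n * (n - 1))


def correlation_entropy(points, eps):
    """Second-order correlation entropy -log C(eps); needs at least one close pair."""
    return -math.log(correlation_sum(points, eps))


def correlation_dimension(points, eps_small, eps_large):
    """Difference quotient -Delta H / Delta log(eps) between two scales."""
    delta_h = correlation_entropy(points, eps_small) - correlation_entropy(points, eps_large)
    return delta_h / (math.log(eps_large) - math.log(eps_small))


# Points on a line segment in the plane: the estimate should be close to 1.
line = [(i / 200.0, i / 200.0) for i in range(200)]
print(correlation_dimension(line, 0.101, 0.203))
```

The estimate falls slightly below 1 because of edge effects of the finite segment, one reason why scaling ranges have to be inspected rather than read off at a single scale.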

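For a temporal sequence of states (or state vectors) $x_t$ we can now define block entropies $H_m^{(2)}(\epsilon)$ by using m-dimensional delay vectors $\mathbf{x}_t^{(m)}$. Now we can also define the quantities corresponding to conditional entropies and to the excess entropy using the correlation entropy

$$h_m^{(2)}(\epsilon) = H_{m+1}^{(2)}(\epsilon) - H_m^{(2)}(\epsilon), \tag{35}$$

$$E_m^{(2)}(\epsilon) = H_m^{(2)}(\epsilon) - m\, h_{m-1}^{(2)}(\epsilon). \tag{36}$$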

We expect the same qualitative behavior regarding the ε-dependence of these quantities as for those based on Shannon entropies, see Equations (11) and (15). Quantitatively there might be differences, in particular for large ε and strongly non-uniform measures.
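How delay vectors and the correlation sum combine into a conditional correlation entropy can be sketched as follows. The code assumes the convention $h_m^{(2)} = H_{m+1}^{(2)} - H_m^{(2)}$ with $H_m^{(2)} = -\log C_m(\epsilon)$, the maximum norm, and delay vectors $(y_t, y_{t-\tau}, \ldots)$. The logistic map is used only as convenient toy data, and all function names are hypothetical.

```python
import math


def delay_embed(series, m, tau=1, start=None):
    """Delay vectors (y_t, y_{t-tau}, ..., y_{t-(m-1)tau}) for t >= start."""
    first = (m - 1) * tau if start is None else start
    return [tuple(series[t - k * tau] for k in range(m))
            for t in range(first, len(series))]


def correlation_sum(points, eps):
    """Fraction of distinct pairs of points closer than eps (maximum norm)."""
    n = len(points)
    close = sum(1 for i in range(n) for j in range(i + 1, n)
                if max(abs(a - b) for a, b in zip(points[i], points[j])) < eps)
    return 2.0 * close / (n * (n - 1))


def conditional_correlation_entropy(series, m, eps, tau=1):
    """h_m = H_{m+1} - H_m with H_m = -log C_m(eps).

    Both embeddings use the same time indices, so the result is non-negative.
    """
    high = delay_embed(series, m + 1, tau)
    low = delay_embed(series, m, tau, start=m * tau)
    return math.log(correlation_sum(low, eps)) - math.log(correlation_sum(high, eps))


# Toy data: 300 iterates of the logistic map x -> 4 x (1 - x).
series = [0.3]
for _ in range(299):
    series.append(4.0 * series[-1] * (1.0 - series[-1]))

for m in (1, 2, 3):
    print(m, conditional_correlation_entropy(series, m, eps=0.1))
```

Using the same time indices for both embeddings guarantees that every pair counted in the higher-dimensional space is also counted in the lower-dimensional one, which keeps the difference of the two entropies from becoming negative purely through bookkeeping.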

A comparison of the two methods with analytical results is given in Appendix A, where we find good agreement, although the KSG method seems to underestimate the mutual information for larger embeddings (higher dimensional state space). The correlation integral method uses a unit ball of diameter whereas the KSG method measures the size of the hypercube enclosing k neighbors where the data was subject to additive noise in the interval . Thus comparable results are obtained with .

2.4. Algorithm for Excess Entropy Decomposition

We now describe the algorithm used to compute the decomposition of the excess entropy proposed in Section 2.2. The algorithm is composed of several steps: preprocessing, determining the middle term (MT) (Equation (23)), determining the constant in MT, and the calculation of the decomposition and of quality measures.

Preprocessing: Ideally the curves are composed of straight lines in a log-linear representation, i.e., of the form . We will refer to s as the slope (it is actually the inverted slope). Thus we perform fitting, that attempts to find segments following this form, details are provided in the Appendix B. Then the data is substituted by the fits in the intervals where the fits are valid. As for very small scales the become very noisy we extrapolate below the fit with the smallest scale. In addition we calculate the derivative in each point, either from the fits (s, where available) or from finite differences of the data (using 5 points averaging).
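The fitting step can be illustrated with an ordinary least-squares fit of a single straight line in the log-linear representation. This is a simplified sketch and not the segmentation procedure of Appendix B: it assumes the functional form $a - s \log \epsilon$ with offset $a$ and (inverted) slope $s$, fits one segment only, and uses synthetic data. The function name is hypothetical.

```python
import math


def fit_log_linear(eps_values, values):
    """Least-squares fit of values ~ a - s * log(eps); returns (a, s)."""
    xs = [math.log(e) for e in eps_values]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, values))
    slope = sxy / sxx                    # slope with respect to log(eps)
    offset = mean_y - slope * mean_x
    return offset, -slope                # s is the inverted slope


# Synthetic curve with a = 1.5 and s = 2.0, sampled at a few scales.
eps_values = [0.01, 0.02, 0.05, 0.1, 0.2]
values = [1.5 - 2.0 * math.log(e) for e in eps_values]
print(fit_log_linear(eps_values, values))
```

In a deterministic scaling range the fitted s is non-zero, whereas a plateau gives s close to zero, which is the property the subsequent steps rely on.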

Determining MT: In theory only two should have a non-zero slope at each scale ε, see Equation (21). However, in practice we often have more terms, such that we need to find for each ε the maximal range , where , i.e., the slope is larger than the threshold . However, this is only valid for deterministic scaling ranges. In stochastic ranges all should have zero slope. We introduce a measure of stochasticity, defined as which is 0 for purely deterministic ranges and 1 for stochastic ones. The separation between state and memory complexity is then inherited from the next larger deterministic range. Thus if we use at , where . Note that the here algorithmically defined is not necessarily equal to the defined above Equation (28) for an ideal-typical noisy deterministic system.

Determining the constant in MT: In order to obtain the scale-invariant constant c of the MT, see Equation (23), we would have to define a certain length scale . Since this cannot be done robustly in practice (in particular because it may not be the same for each m) we resort to a different approach. The constant parts of the terms in the MC can be determined from plateaus on larger scales. Thus, we define , where is smallest scale where we have a near-zero slope, i.e., . In case there is no such then .

Decomposition and quality measures: The decomposition of the excess entropy follows Equations (24) and (25) with and used for splitting the terms:

In addition we can compute different quality measures to indicate the reliability of the results, see Table 1.

Table 1.

Quality measures for the decomposition algorithm. We use the Iverson bracket for Boolean expressions: $[\text{True}] := 1$ and $[\text{False}] := 0$. All measures are normalized to $[0, 1]$, where typically 0 is the best score and 1 is the worst.

| Quantity | Definition | Description |
|---|---|---|
| κ = stochastic(ε) | | 0: fully deterministic, 1: fully stochastic at ε |
| % negative(ε) | | percentage of negative $\delta h_m$ |
| % no fits(ε) | | percentage of $\delta h_m$ where no fit is available |
| % extrap.(ε) | | percentage of $\delta h_m$ that were extrapolated |

2.5. Illustrative Example

To get an understanding of the quantities, let us first apply the methods to the Lorenz attractor, as a deterministic chaotic system, and to its noisy version as a stochastic system.

Deterministic system: Lorenz attractor The Lorenz attractor is obtained as the solution to the following differential equations:

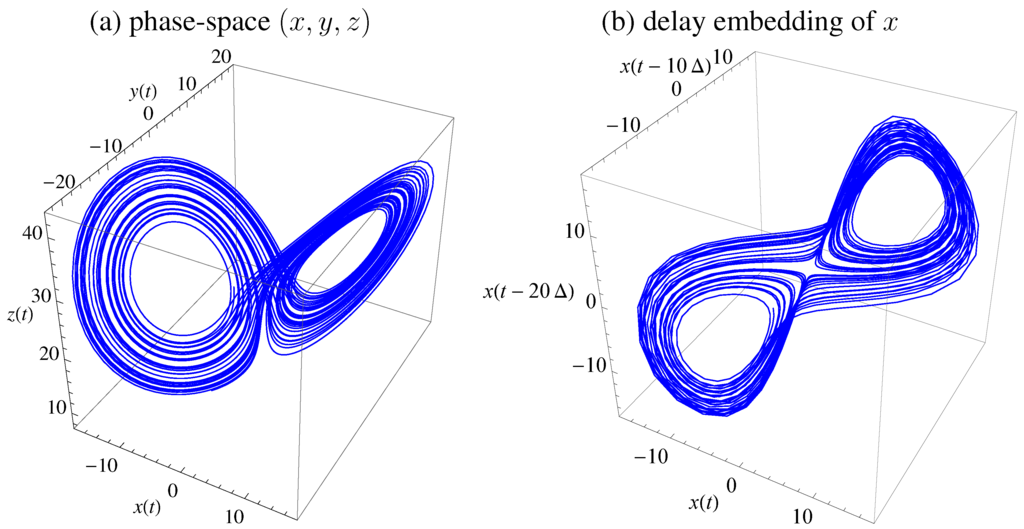

integrated with standard parameters , , . Figure 1 displays the trajectory in phase space ($x, y, z$) and using delay embedding of $x$ using and time delay is (for sampling time ). The equations were integrated with the fourth-order Runge-Kutta method with step size . An alternative time delay may be obtained from the first minimum of the mutual information as a standard choice [39,40], which would be , but yields clearer results for presentation.
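For readers who want to reproduce a comparable time series, the following sketch integrates the Lorenz system with a fourth-order Runge-Kutta scheme. It is not the TISEAN generator used for the results here. The parameter values σ = 10, ρ = 28, β = 8/3 are the values commonly called standard in the literature and are assumed here; the step size, the initial condition, the number of steps and the function names are hypothetical choices.

```python
def lorenz(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz equations (assumed standard parameters)."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(f, state, dt):
    """One classical fourth-order Runge-Kutta step."""
    k1 = f(state)
    k2 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
    k3 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
    k4 = f(tuple(s + dt * k for s, k in zip(state, k3)))
    return tuple(s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
                 for s, a, b, c, d in zip(state, k1, k2, k3, k4))


def integrate(f, state, dt, n_steps):
    """Return the trajectory including the initial state."""
    trajectory = [state]
    for _ in range(n_steps):
        state = rk4_step(f, state, dt)
        trajectory.append(state)
    return trajectory


# Hypothetical step size and initial condition, chosen only for illustration.
trajectory = integrate(lorenz, (1.0, 1.0, 1.0), dt=0.01, n_steps=2000)
x_series = [s[0] for s in trajectory]  # scalar observable for delay embedding
print(len(x_series), min(x_series), max(x_series))
```

The scalar series `x_series` can then be delay-embedded and passed to an estimator of the correlation sum; an initial transient should be discarded before doing so.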

Figure 1.

Lorenz attractor with standard parameters. (a) Trajectory in state space . (b) Embedding of x with , time-delay , and .

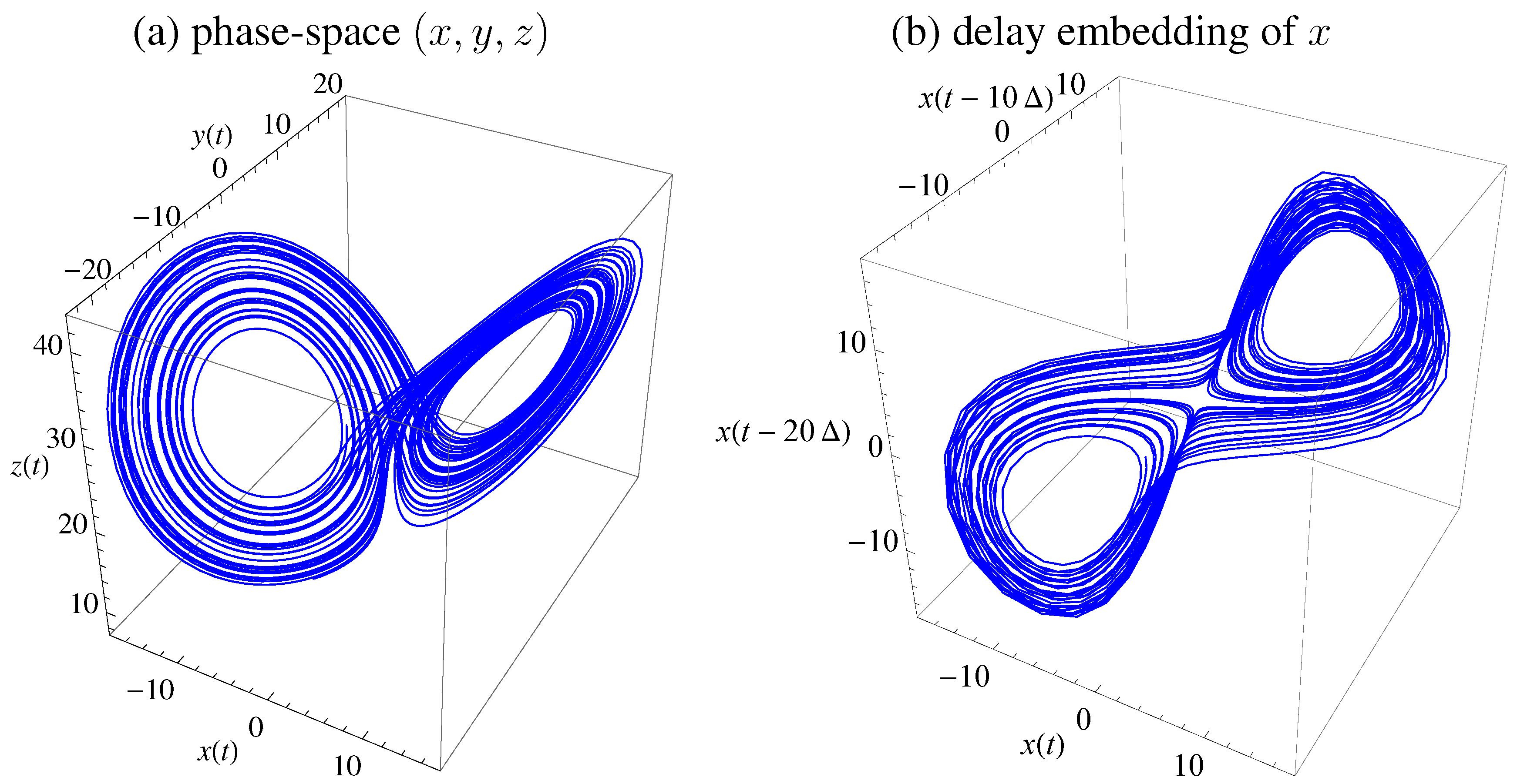

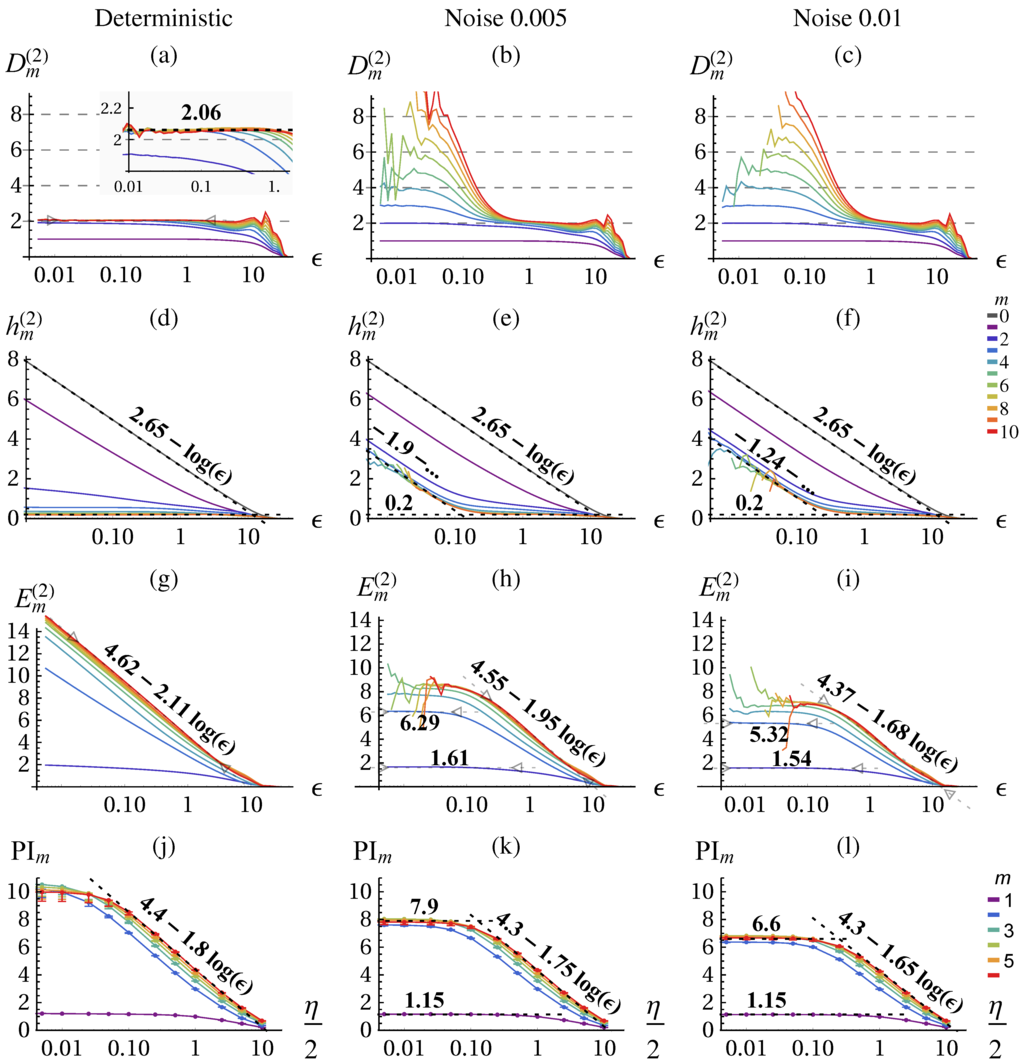

The result of the correlation sum method using TISEAN [41] is depicted in Figure 2 for data points. From the literature, we know that the chaotic attractor has a fractal dimension of [38] which we can verify with the correlation dimension , see Figure 2a for and small ε. The conditional (d) becomes constant and identical for larger m. The excess entropy (g) approaches the predicted scaling behavior of (Equation (16)) with and . The predictive information (j) shows the same scaling behavior on the coarse range but with a smaller slope and saturates at small ε.

Stochastic system: noisy Lorenz attractor. In order to illustrate the fundamental differences between deterministic and stochastic systems when analyzed with information theoretic quantities, we now consider the Lorenz dynamical system with dynamic noise (additive noise to the state ($x, y, z$) in Equations (38)–(40) before each integration step) as provided by the TISEAN package [41]. The dimension of a stochastic system is infinite, i.e., for embedding m the correlation integral yields the full embedding dimension, as shown in Figure 2b,c for small ε.

On large scales the dimensionality of the underlying deterministic system can be revealed to some precision either from or from the slope of or PI. Thus, we can identify a deterministic and a stochastic scaling range with different qualitative scaling behavior of the quantities. In contrast with the deterministic case, the excess entropy converges in the stochastic scaling range and no more contributions from are observed, as shown in Figure 2h,i. By measuring the ε-dependence (slope) we can actually differentiate between deterministic and stochastic systems and scaling ranges, which is performed by the algorithm of Section 2.4 and presented in Figure 3. The predictive information in Figure 2k,l again yields a lower slope, meaning a lower dimension estimate, but is otherwise consistent. However, it does not allow one to safely distinguish deterministic and stochastic systems, because it always saturates due to the effect of the finite amount of data.

Figure 2.

Correlation dimension (Equation (34)) (a)–(c), conditional block entropies (Equation (35)) (d)–(f), excess entropy (Equation (36)) (g)–(i), and predictive information (Equation (18)) (j)–(l) of the Lorenz attractor estimated with the correlation sum method (a)–(i) and KSG (j)–(l) for the deterministic system (first column) and with absolute dynamic noise , and (second and third column respectively). The error bars in (j)–(l) show the values calculated on half of the data. All quantities are given in nats (natural unit of information with base e) and in dependence of the scale (ε,η in space of x (Equation (38))) for a range of embeddings m, see color code. In (d)–(f) the fits for allow to determine and for give and , Equations (27)–(30). Parameters: delay embedding of x with .

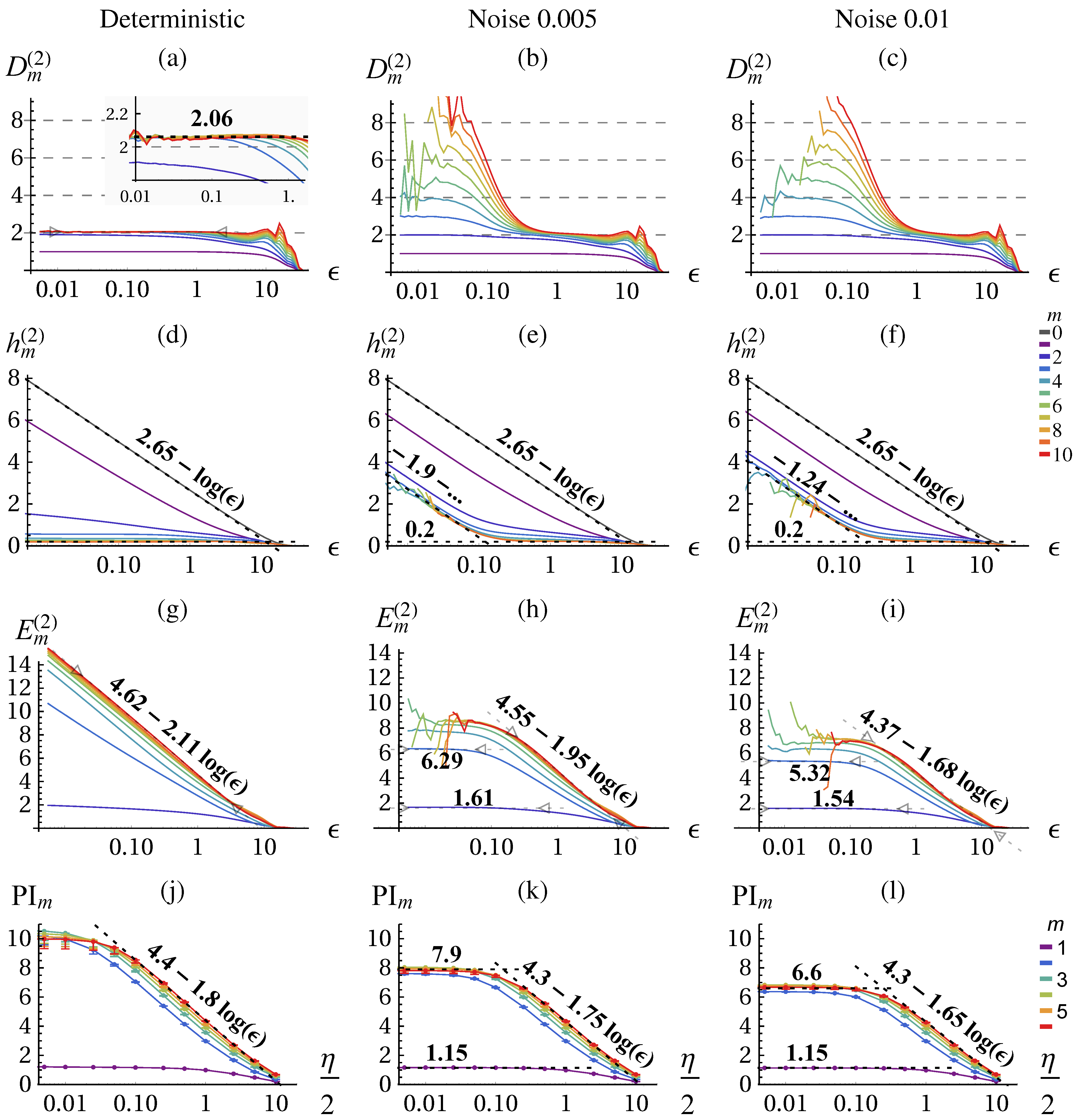

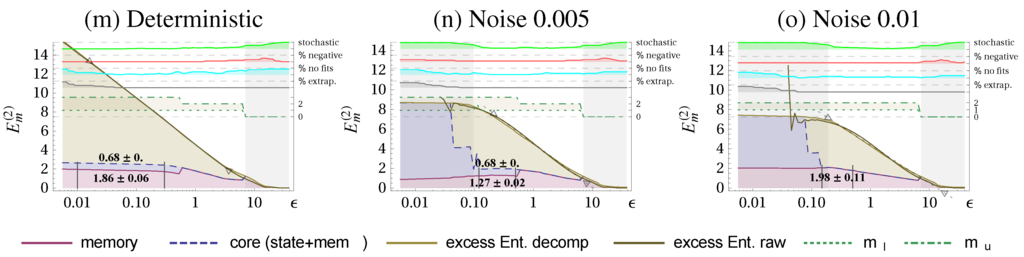

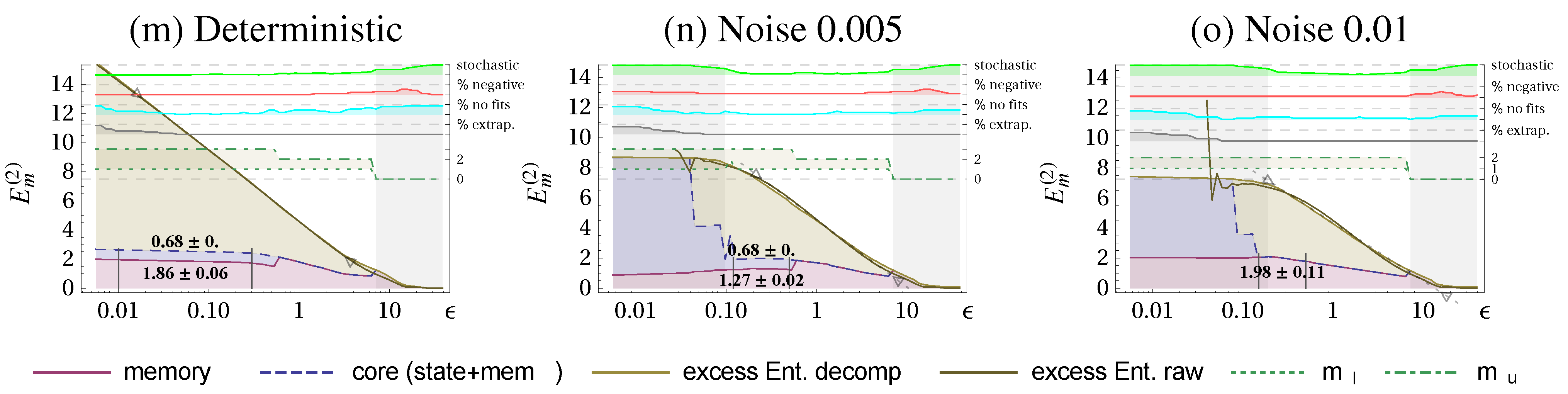

Figure 3.

Excess Entropy decomposition for the Lorenz attractor into state complexity (blue shading), memory complexity (red shading), and ε-dependent part (beige shading) (all in nats). Columns as in Figure 2: deterministic system (a), with absolute dynamic noise (b), and (c). A set of quality measures and additional information is displayed on the top using the right axis. and refer to the terms in (Equation (37)). Scaling ranges that are identified as stochastic are shaded in gray (stochastic indicator ). In manually chosen ranges, marked with vertical black lines, we evaluate the mean and standard deviation of the memory- and state complexity. Parameters: .

Let us now consider the decomposition of the excess entropy as discussed in Section 2.2 and Section 2.4 to see whether the method is consistent. Figure 3 shows the scale-dependent decomposition together with the determined stochastic and deterministic scaling ranges. The resulting values for comparison in the deterministic scaling range are given in Table 2 and reveal that the decreases for increasing noise level in a similar way as the constant decreases. This is a consistency check because the intrinsic scales (amplitudes) of the deterministic and noisy Lorenz system are almost identical. We also checked the decomposition for a scaled version of the data, where we found the same values for the state-, memory- and core complexity. We also see that the state complexity vanishes for larger noise, because the dimension drops significantly below 2, where we have no summands in the state complexity and the constant is also 0.

Table 2.

Decomposition of the excess entropy for the Lorenz system. Presented are the unrefined constant and dimension of the excess entropy, the state-, memory-, and core complexity (in nats) determined in the deterministic scaling range, see Figure 3.

| | | Determ. | Noise | Noise | Noise |
|---|---|---|---|---|---|
| Equation (16) | D | 2.11 | 1.95 | 1.68 | 1.59 |
| Equation (16) | | 4.62 | 4.55 | 4.37 | 4.21 |
| Equation (27) | | 0 | 0.12 | 0.23 | 0.45 |
| Equation (24) | | 0.68 ± 0 | 0.68 ± 0 | 0 | 0 |
| Equation (25) | | 1.86 ± 0.06 | 1.27 ± 0.02 | 1.98 ± 0.11 | 1.2 ± 0.1 |
| Equation (26) | | 2.54 ± 0.06 | 1.95 ± 0.02 | 1.98 ± 0.11 | 1.2 ± 0.1 |

To summarize, in deterministic systems with a stochastic component, we can identify different ε-ranges (scaling ranges) within which stereotypical behaviors of the entropy quantities are found. This allows us to determine the dimensionality of the deterministic attractor and decompose the excess entropy in order to obtain two ε-independent complexity measures.
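The distinction between scaling ranges can be illustrated with a brute-force correlation sum on delay vectors. The sketch below is not the authors' estimator: the function names, the maximum norm, the two-point slope estimate, and the test signals (uniform white noise and a sine wave) are assumptions chosen for illustration. For the noise the slope of log C(ε) should grow with the embedding dimension m, whereas for the periodic signal it should stay close to 1.

```python
import numpy as np

def delay_embed(x, m, tau=1):
    """Stack m delayed copies of x into delay vectors of dimension m."""
    n = len(x) - (m - 1) * tau
    return np.column_stack([x[i * tau : i * tau + n] for i in range(m)])

def correlation_sums(x, m, eps_values, tau=1):
    """Fraction of distinct delay-vector pairs closer than each eps (max norm)."""
    v = delay_embed(np.asarray(x, dtype=float), m, tau)
    dist = np.max(np.abs(v[:, None, :] - v[None, :, :]), axis=2)
    pair_dist = dist[np.triu_indices(len(v), k=1)]
    return np.array([np.mean(pair_dist < eps) for eps in eps_values])

def slope(x, m, eps_lo, eps_hi, tau=1):
    """Two-point estimate of d log C / d log eps between two scales."""
    c_lo, c_hi = correlation_sums(x, m, [eps_lo, eps_hi], tau)
    return np.log(c_hi / c_lo) / np.log(eps_hi / eps_lo)

rng = np.random.default_rng(0)
noise = rng.uniform(0.0, 1.0, 1000)   # stochastic test signal
sine = np.sin(0.1 * np.arange(1000))  # deterministic, 1-dimensional test signal

slope_noise_m1 = slope(noise, 1, 0.05, 0.1)
slope_noise_m2 = slope(noise, 2, 0.05, 0.1)
slope_sine_m2 = slope(sine, 2, 0.05, 0.1, tau=15)
print(slope_noise_m1, slope_noise_m2, slope_sine_m2)
```

The pairwise distance matrix makes this approach quadratic in the number of samples, so it is only meant for short illustrative series.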

3. Results

In this section, we apply our methods to the behavior of robotic systems. We use a previously published control scheme that can generate a large variety of smooth and coherent behaviors from a local learning rule [11,42]. As it is a simplified version of the time-local predictive information maximization controller, we aim to test the hypothesis that the controller actually increases the complexity of behavior during learning.

3.1. Application to Robotics: Controller and Learning

Let us now briefly introduce the control framework and the robots used to generate the behavior that we analyze in the following. The robots are simulated in a physically realistic rigid-body simulation [43]. Each robot has a number of sensors producing a real-valued stream and a number of actuators, also controlled by real values . The sensors measure the actual joint angles and the body accelerations. In all examples the actuators are position-controlled servo motors with the particular property that they exert no power around the set-point. This enables small perturbations to be perceivable in the joint-position sensor values. The time is discretized with an update frequency of 50 Hz.

The control is done in a reactive closed-loop fashion by a one layer feed-forward neural network:

where C is a weight matrix, h is a weight vector, and tanh is applied component-wise. For fixed weights this controller is very simple; however, it can generate non-trivial behavioral modes due to the complexity of the body-environment interaction. For instance, with a scaled rotation matrix C an oscillatory behavior may be generated whose frequency adapts to how fast the physical system follows. To get a variety of behavioral modes the parameters (C, h) have to be changed, which can be done by optimizing certain objective functions. Several methods have been proposed that use generic (robot-independent) internal drives. Homeokinesis [8,44], for instance, balances dynamical instability with predictability. More recently, the maximization of the time-local predictive information of the sensor process was used as a driving force [9]. On an intuitive level, the maximization of the PI (Equation (18)) of the sensor process leads to a high variety of sensory input due to the entropy term while keeping a temporal structure by minimizing the future uncertainty reflected in the conditional entropy. Indeed, we have found that it produces smooth and coordinated behavior in different robotic systems, including hexapod robots and humanoids. On the other hand, maximizing PI also leads to behavior of high complexity with maximal dimension, as discussed in Section 2.2. A simplified version of the PI-maximizing algorithm, called ULR and published in [11,42], leads to more coherent and well-defined behavioral modes of seemingly low dimensionality; this is the controller we use in this paper.

In order to apply the method to the behavior of robots, or possibly to that of animals, the state space reconstruction has to be done from certain measurements. Here we use a single sensor value, but the formalism can be extended to multiple sensor values as well. It must be guaranteed that sufficient physical coupling exists between the measured quantity and the rest of the body.
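The reactive controller described above can be sketched in a few lines. The controller equation itself is missing from this copy, so the form tanh(Cx + h), with x the sensor vector, is an assumption based on the description of C, h and the component-wise tanh. The "body" used to close the loop here (the next sensor reading simply equals the last motor command) is a purely hypothetical stand-in for the simulated robot, and the rotation angle and gain are arbitrary illustrative values.

```python
import numpy as np

def controller(x, C, h):
    """One-layer reactive controller: motor values from sensor values (assumed form)."""
    return np.tanh(C @ x + h)

def scaled_rotation(angle, gain):
    """2x2 rotation matrix multiplied by a gain factor."""
    c, s = np.cos(angle), np.sin(angle)
    return gain * np.array([[c, -s], [s, c]])

# Hypothetical toy "body": the next sensor reading equals the last motor command.
C = scaled_rotation(angle=0.6, gain=1.2)
h = np.zeros(2)
x = np.array([0.1, 0.0])
sensors = []
for _ in range(200):
    y = controller(x, C, h)
    x = y  # stand-in for the physical robot and its sensors
    sensors.append(x)
sensors = np.array(sensors)

# Count zero crossings of the first sensor channel as a crude oscillation indicator.
sign_changes = int(np.sum(np.diff(np.sign(sensors[:, 0])) != 0))
print(sign_changes)
```

With a gain above 1 the resting state is unstable, and the saturation of tanh keeps the amplitude bounded, so even this fixed-weight loop settles into a sustained oscillation. In the real system the response of the body replaces the trivial feedback used here.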

3.2. Experiments

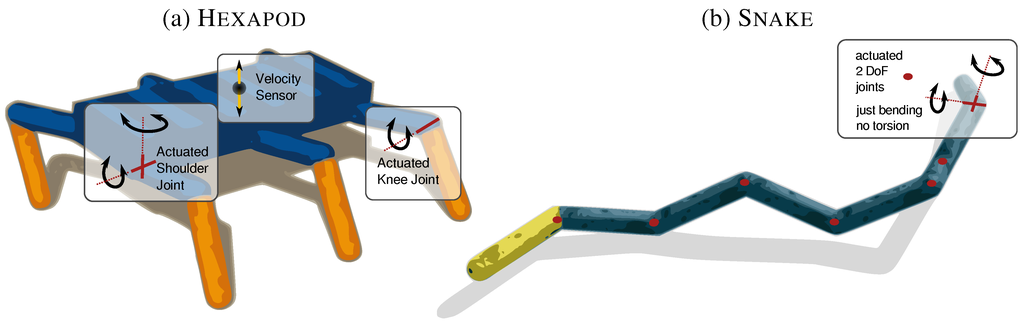

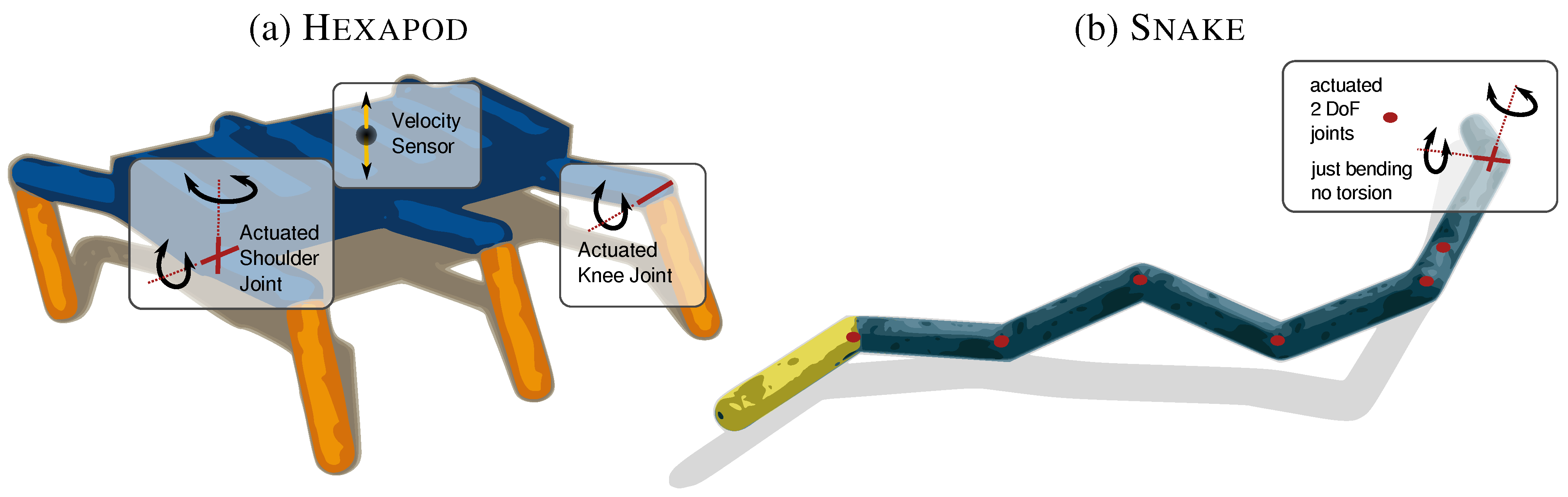

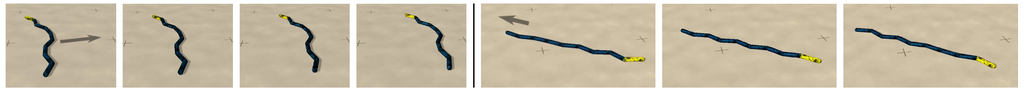

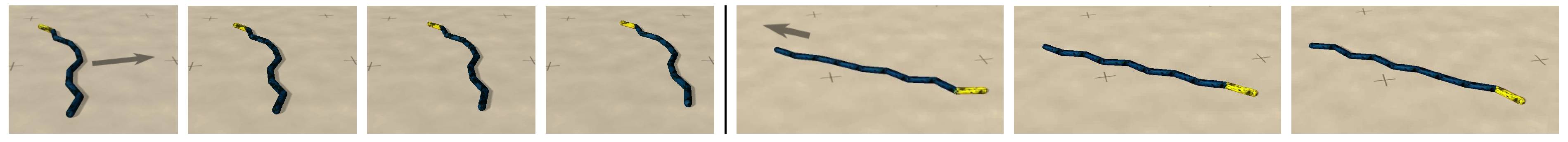

Let us now present the emergent behaviors obtained from the control algorithm ULR. We use two robots, a snake-like robot and an insect-like hexapod robot, as illustrated in Figure 4. The behaviors of the snake are published here for the first time, whereas the behaviors of the hexapod have been published before in Der and Martius [11]. For an overview and links to the videos see Table 3. However, this paper focuses primarily on the quantification of behavior and not on its generation. The Snake has identical cylindrical segments which are connected by actuated universal joints with 2 degrees of freedom (DoF). In this way two neighboring segments can be inclined to each other, but without relative torsion along the segment axes. The sensor values are the actual positions of the joints. If the robot is controlled with the ULR controller, highly coordinated behavioral modes such as crawling or side-rolling emerge very quickly, see Figure 5. An external perturbation can bring the robot into another behavior. Without perturbation the robot typically transitions into one behavior and remains in it. We recorded samples from a typical side-rolling and a typical crawling behavior.

Table 3.

Experiments, data sets and videos, see [18].

| Robot | Behavior | Length | Dataset | Video |
|---|---|---|---|---|
| Snake | side rolling | | D-S1 | Video S1 |
| Snake | crawling | | D-S2 | Video S2 |
| Hexapod | jumping after 2 min | | D-H1 | Video H1 |
| Hexapod | jumping after 4 min | | D-H2 | Video H2 |
| Hexapod | jumping after 8 min | | D-H3 | Video H3 |

Figure 4.

Robots used for the experiments. (a) The Hexapod. 18 actuated DoF: 2 shoulder and 1 knee joint per leg. (b) The Snake. 14 DoF: 8 segments connected by 2 DoF joints each.

Figure 5.

Side-rolling and crawling of the Snake. Note that also for rolling each actuator has to act accordingly because no torsion is possible, see Figure 4b.

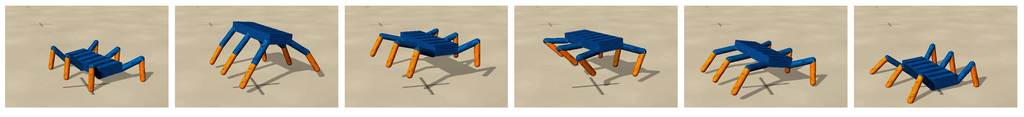

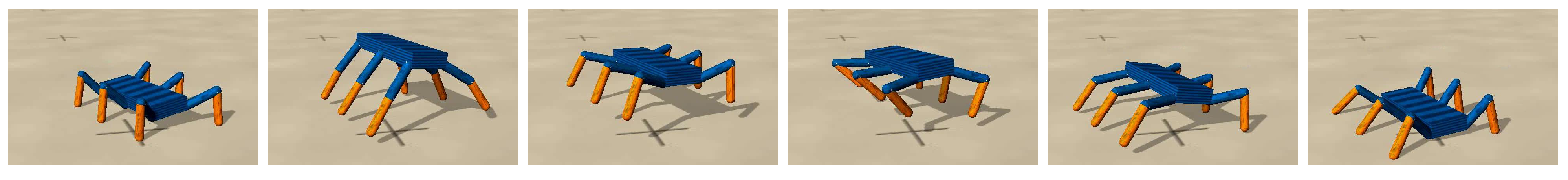

The Hexapod is an insect-like robot with 18 DoF, see Figure 4a. The sensor values are the joint angles and the vertical velocity of the trunk. Note that the actual phase space dimension is as we have joint angles, joint velocities, and height and orientation of the trunk. Controlled with the ULR controller, the robot develops a jumping behavior, see Figure 6, within 8 min of simulated time [11]. The behavior of the robot changes due to the update dynamics of the controller. Because the analysis requires a stationary process, we stop the update at some time and obtain a static controller (fixed C and h). With this static controller the robot's behavior stays essentially the same. We analyze three behaviors, where the learning was stopped 2, 4 and 8 min after the start, and recorded samples for each of them. After “2 min” the robot moves periodically up and down. The “4 min” behavior already shows signs of jumping, and the final behavior after “8 min” is a whole-body periodic jumping as displayed in Figure 6.

Figure 6.

Jumping motion pattern emerging with vertical speed sensor 8 min after the start.

3.3. Quantifying the Behavior

We start with analyzing the data from the Snake experiments followed by the Hexapod experiments.

3.3.1. Snake

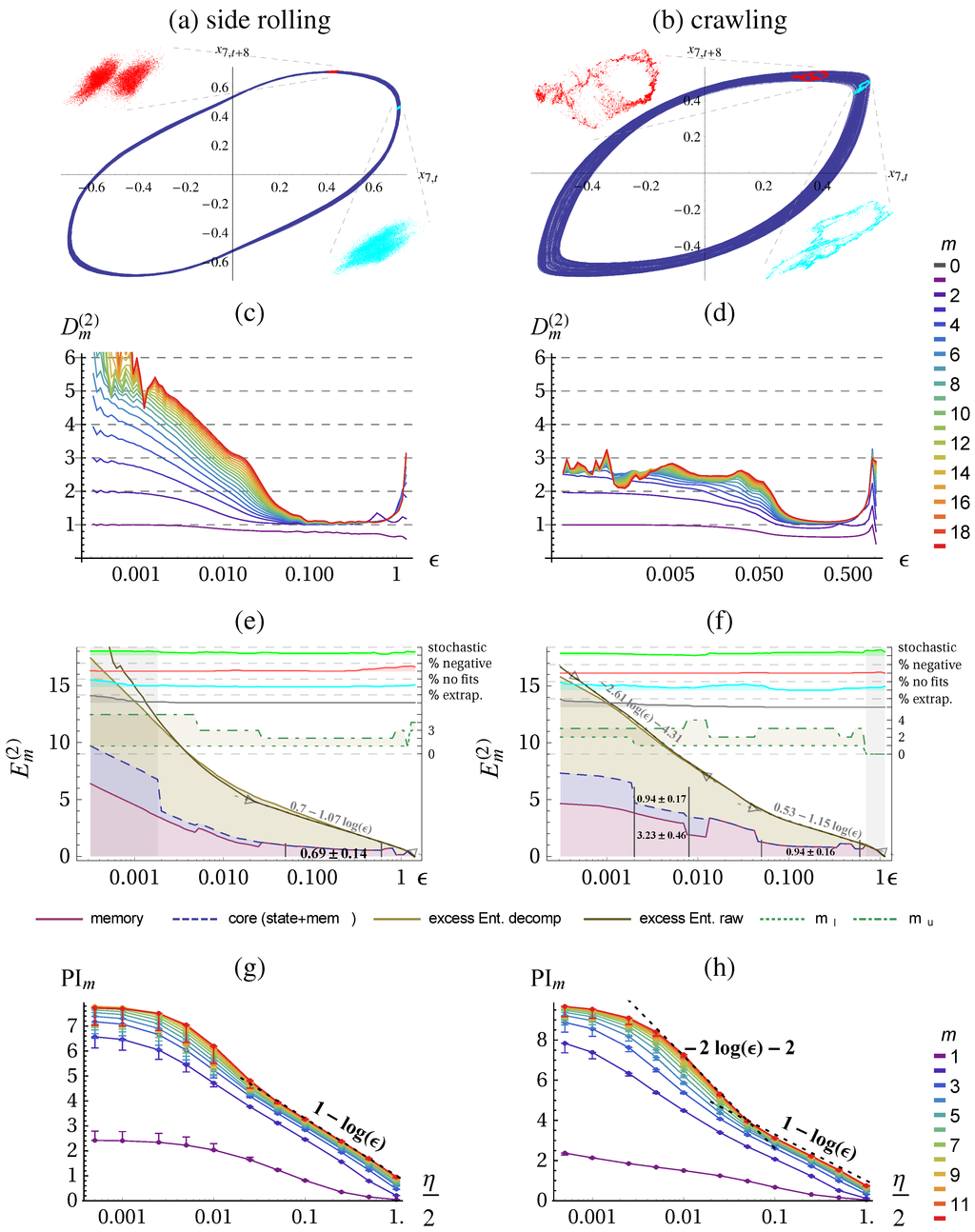

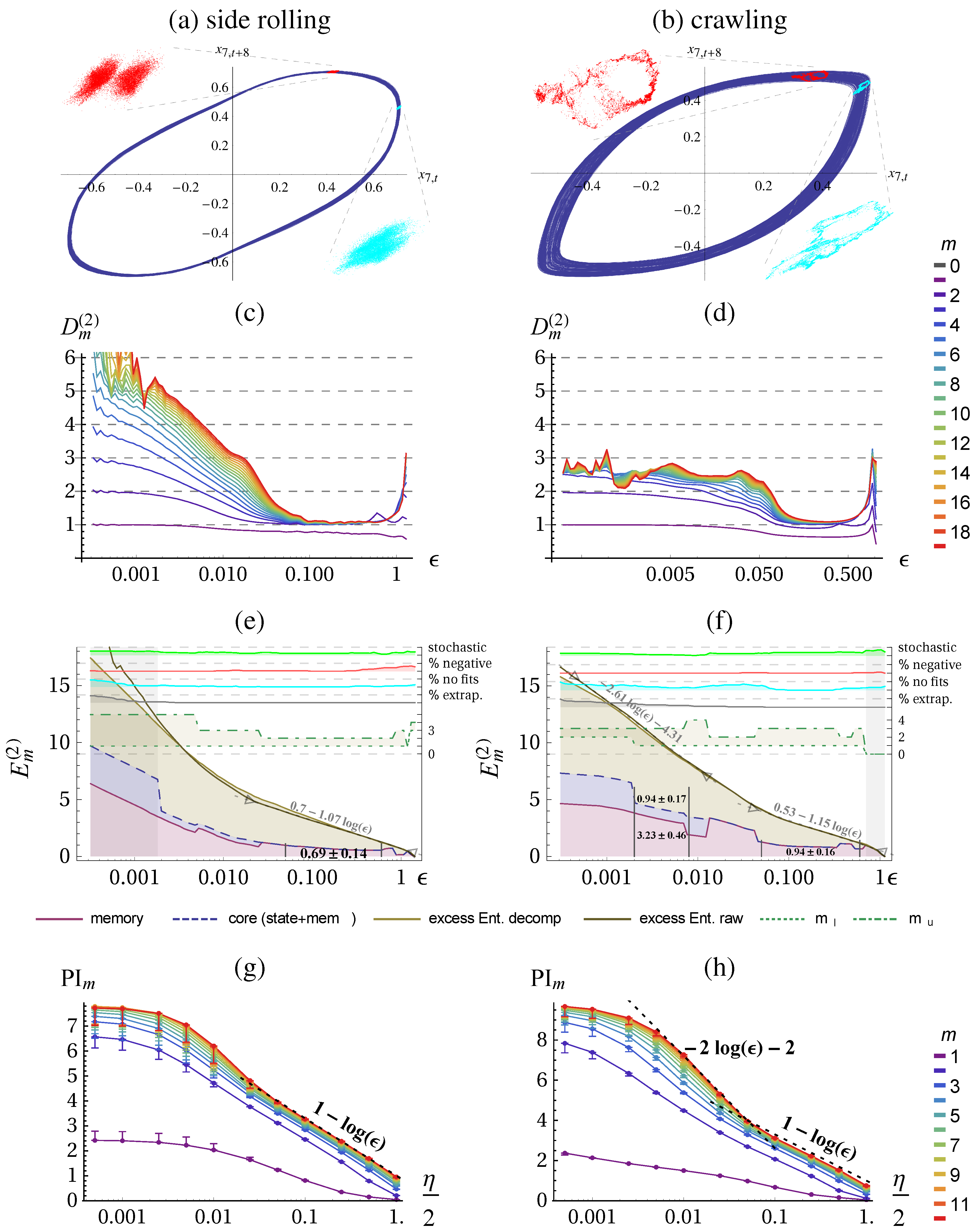

In Figure 7 the results of the quantification of the two behaviors (Figure 5) are presented. We used the delay embedding of the sensor which is one of the joint angular sensors of the middle joint. We find that the side-rolling behavior is roughly 1-dimensional on the behaviorally relevant scales (), see Figure 7c. On smaller scales the dimension rises with each embedding, although the embedding dimension is not reached on the observable length scales, and therefore the excess entropy does not saturate. On the larger scales we find the expected scaling behavior given by Equation (16) with .

The crawling behavior is also 1-dimensional on the coarse length scales (), see Figure 7d. The smaller scales, however, bear a surprise because the dimension rises to a value of . The excess entropy also shows a slope of on the coarse length scales.

Calculating the predictive information using the KSG method yields a similar result on the large scales, as shown in Figure 7g,h. For smaller scales, however, the predictive information always saturates, as seen before in Figure 2j. In the crawling case we get again an underestimated dimension of .

Figure 7.

Quantification of two Snake behaviors. First column: side rolling; second column: crawling (see Figure 5). (a,b) Phase plots with Poincaré sections at the maximum of first and second embedding. (e,f) Excess entropy with decomposition (in nats), see Figure 3 for more details. (g,h) Predictive information (in nats), see Figure 2 for more details. Parameters: delay embedding of sensor (middle segment) with , , .

The decomposition of the excess entropy for the above experiments is given in Table 4. Interestingly, the core complexity attributes a significantly higher complexity to the crawling behavior, whereas the plain excess entropy constant would suggest that side-rolling is more complex. Since we take out the effect of different scales, our new quantities are more trustworthy.

Table 4.

Decomposition of the excess entropy for the Snake. Dimension and unrefined constant, state-, memory-, and core complexity (in nats) on the coarse deterministic scaling range .

| | | Side Rolling | Crawling |
|---|---|---|---|
| Equation (16) | D | 1.07 | 1.15 |
| Equation (16) | | 0.7 | 0.53 |
| Equation (24) | | 0.0 ± 0 | 0 ± 0 |
| Equation (25) | | 0.69 ± 0.14 | 0.94 ± 0.16 |
| Equation (26) | | 0.69 ± 0.14 | 0.94 ± 0.16 |

Note that for the crawling behavior at the small scaling range () we can also perform the decomposition and obtain , ; however, these values are mostly useful for comparison, which we only have on the large scale. Note also that and are not really constant in the plots because the deterministic scaling range is not very good in this case (cf. the quality measure “no fits” in Figure 7f).

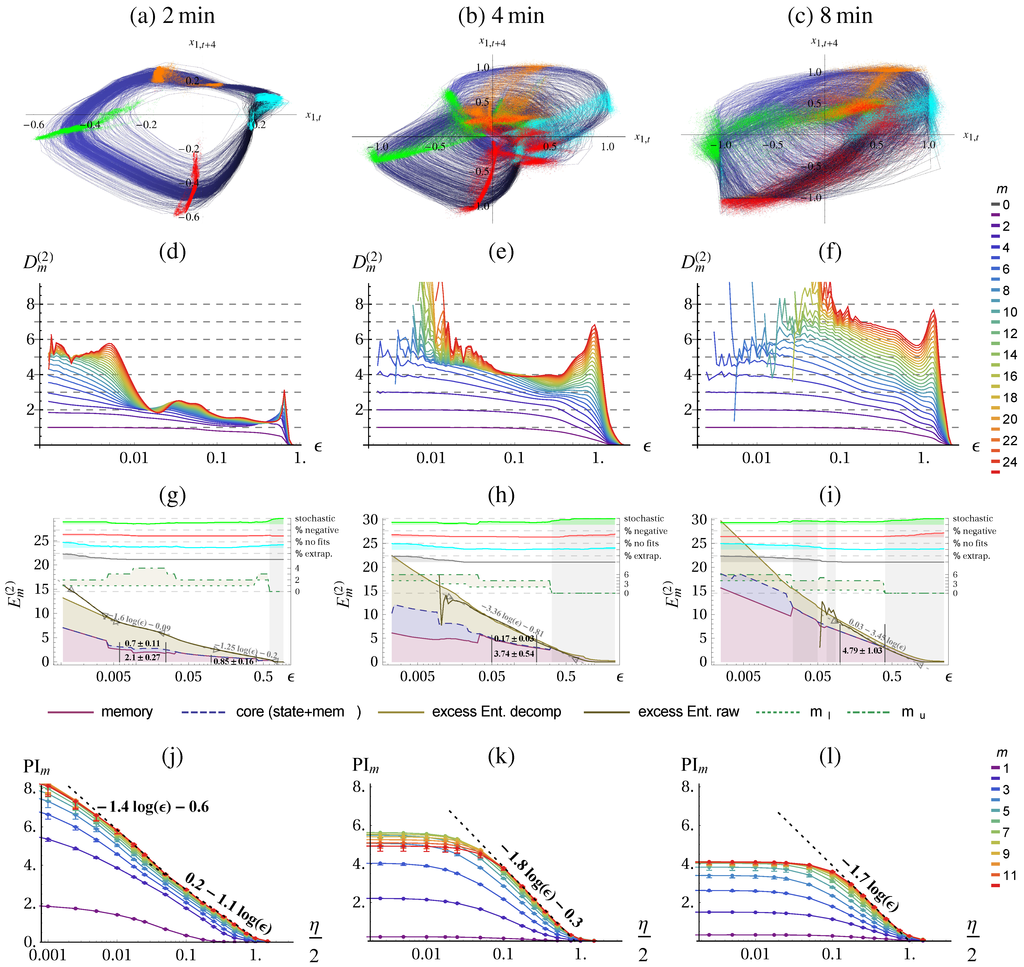

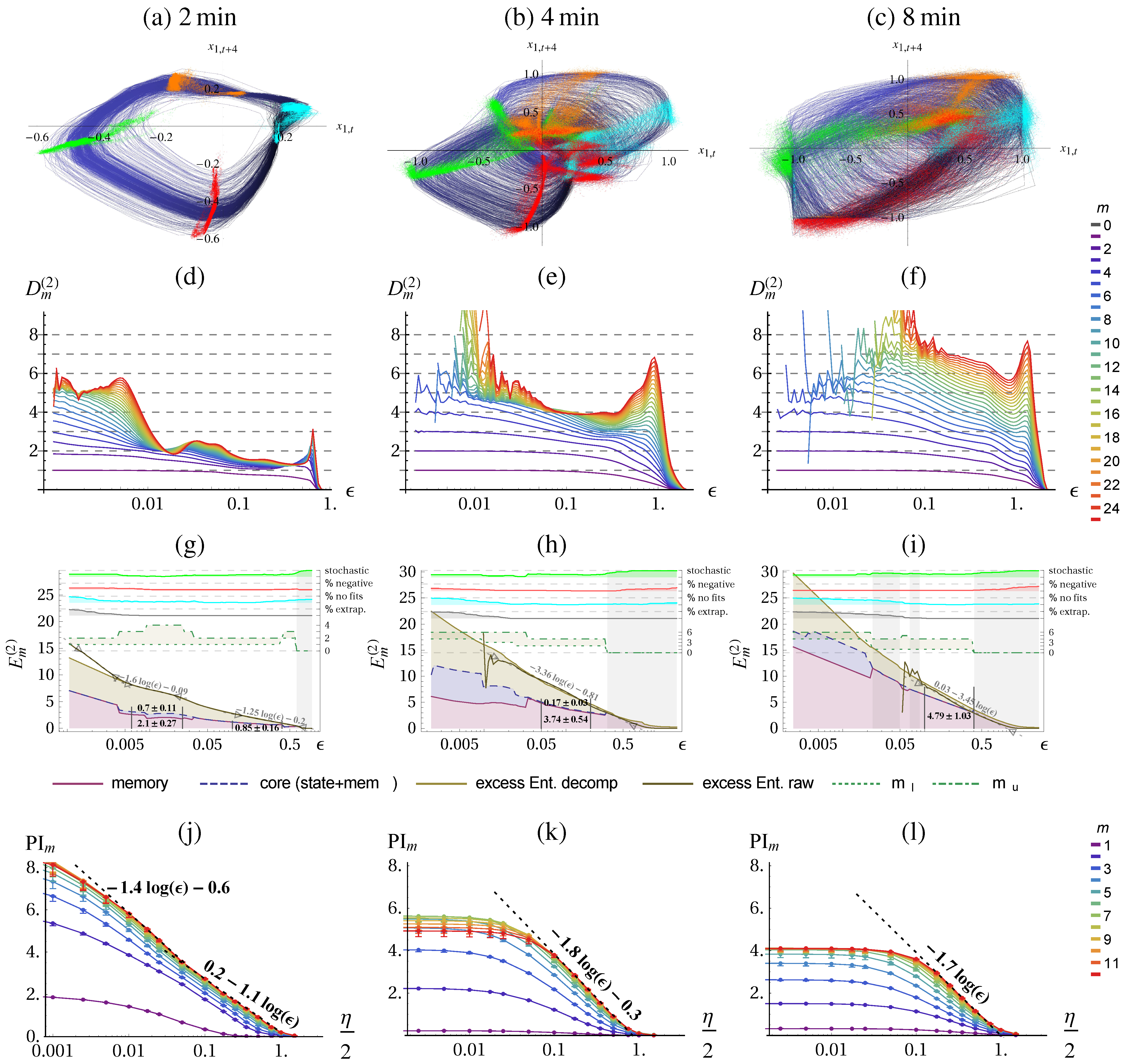

Figure 8 shows the quantification of the three successive behaviors of the Hexapod robot, reconstructed from the delay embedding of sensor (up-down direction of right hind leg). We clearly see an increase in dimensionality of the behavior, Figure 8d–f which we can expect because the high jumping behavior requires more compensation movements due to different landing poses. For the first behavior we can identify three scaling ranges of different dimensionality and complexity. On the coarse level the behavior is described by a dimension of whereas on medium scales we find an approximately dimensional behavior and on the small scales it appears 5 dimensional.

For the second behavior we find only a short plateau in Figure 8e at of dimensionality 4. The excess entropy shows a lower dimensionality of , see Figure 8h. For the third behavior we cannot identify a clear plateau in the dimension plot. The excess entropy allows us to attribute a dimensionality and core complexity to the behavior on large scales, as summarized in Table 5. Whereas the scaling behavior of the excess entropy suggests a similar dimensionality, the core complexity suggest that the 8 min behavior is more complex.

Figure 8.

Quantification of the three Hexapod behaviors. Behaviors: first column: 2 min; second column: 4 min; third column 8 min, see Figure 6. (a)–(c) Phase plots with Poincaré sections at the maximum and minimum of first and second embedding. The color of the trajectory (blue–black) encodes for the third embedding dimension. (g)–(i) Excess entropy with decomposition (in nats), see Figure 3 for more details. The excess entropy fit at the smallest scale in (g) is . (j)–(l) Predictive information (in nats), see Figure 2 for more details. Parameters: delay embedding of sensor (up-down direction of right hind leg) with , , .

Figure 8.

Quantification of the three Hexapod behaviors. Behaviors: first column: 2 min; second column: 4 min; third column 8 min, see Figure 6. (a)–(c) Phase plots with Poincaré sections at the maximum and minimum of first and second embedding. The color of the trajectory (blue–black) encodes for the third embedding dimension. (g)–(i) Excess entropy with decomposition (in nats), see Figure 3 for more details. The excess entropy fit at the smallest scale in (g) is . (j)–(l) Predictive information (in nats), see Figure 2 for more details. Parameters: delay embedding of sensor (up-down direction of right hind leg) with , , .

3.3.2. Hexapod

The predictive information calculated with the KSG method underestimates the dimension by far and is only able to report structure on the coarsest scale, see Figure 8j–l and compare, for instance, the slope of PI in (k) with the slope of in (h). Note that usually only the value of the mutual information without added noise (or with only very small noise) is reported (left-most value in our graphs). In our case this would attribute the highest complexity to the 2 min behavior and the lowest to the 8 min behavior, see also Table 5. This is because the intrinsic noise level increases from 2 min to 8 min, such that the plateau level is much lower.

Table 5.

Decomposition of the excess entropy for the Hexapod behaviors on the fine and coarse scale. For the 4 min and 8 min behavior we have no reliable estimate on the fine scale. The core complexity and the dimension rise from 2 min to 4 min. From 4 min to 8 min the dimension stays the same but the core complexity increases. The last line provides the predictive information on the smallest scale.

| | | 2 min (Fine) | 2 min (Coarse) | 4 min (Coarse) | 8 min (Coarse) |
|---|---|---|---|---|---|
| ε-Range | | | | | |
| Equation (16) | D | 1.6 | 1.25 | 3.36 | 3.45 |
| Equation (16) | | −0.09 | −0.2 | −0.81 | 0.03 |
| Equation (24) | | 0.7 ± 0.11 | 0 ± 0 | 0.17 ± 0.03 | 0 ± 0 |
| Equation (25) | | 2.1 ± 0.27 | 0.85 ± 0.16 | 3.74 ± 0.54 | 4.79 ± 1.03 |
| Equation (26) | | 2.8 ± 0.38 | 0.85 ± 0.16 | 3.91 ± 0.57 | 4.79 ± 1.03 |
| Equation (18) | | - | | | |

4. Discussion

In recent years research in autonomous robots has been more and more successful in developing algorithms for generating behavior from generic task-independent objective functions [1,2,3,4,5,6,7,9]. This is valuable, on the one hand, as a step towards understanding and creating autonomy in artificial beings, and on the other hand, to obtain eventually more effective learning algorithms for mastering real-life problems. This is because the task-agnostic drives aim at efficient exploration of the agent’s possibilities by generically useful behavior [6,8,9,45,46] and at generating an operational state of the system [47] from which specific tasks can quickly be accomplished.

Very often emerging behaviors are not the result of optimizing a global function but for instance self-organize from local interaction rules. We propose to use information theoretic quantities for quantifying these behaviors. There are already studies using information theoretic measures to characterize emergent behavior of autonomous robots [48,49,50]—in particular with the aim to use them as task-independent objective functions [1,2,3,4,5,9]. Usually these information theoretic quantities are estimated for a fixed partition, i.e., a specific discretization, or by using differential entropies assuming that the data were sampled from a probability density. In this paper, we show that it is both necessary and fruitful to explicitly take into account the scale dependence of the information measures.

We quantify and compare behaviors emerging from self-organizing control of high-dimensional robots by estimating the excess entropy and the predictive information of their sensor time series. The estimation is done on the one hand using entropy estimates based on the correlation integral, and on the other hand the predictive information is directly estimated as a mutual information using an estimator (KSG) based on differential entropies. The latter implicitly assumes stochastic data, i.e., the existence of a smooth probability density for the temporal joint distribution, such that the predictive information converges towards a finite value. However, this is not the case for deterministic systems. Due to the adaptivity of the algorithm, the predictive information is then calculated at a certain length scale which depends on the available amount of data. Thus, a naive application of the KSG estimator can lead to meaningless results, because it offers no control over the length scale. To avoid this problem and to control the length scale, we applied additive noise. Nevertheless, our results show that the complexity estimates based on the KSG estimator are much less reliable than those based on the correlation integral. While for simple stochastic systems such as autoregressive models both estimators deliver consistent results for small embedding dimensions, the predictive information is underestimated due to finite sample effects for larger embedding dimensions (cf. Figure A1). For more complex systems such as the Lorenz attractor or the behavioral data from robots, the KSG algorithm underestimates the dimensionality (see, e.g., Figure 8j–l), does not allow length scales to be extracted properly, and blurs the distinction between stochastic and deterministic scaling ranges.

An important result of the presented study is that the complexity of the behavior can be different at different length scales: an example is the crawling behavior of the Snake which is 1-dimensional on the coarse scale and 2.6-dimensional on the small scales, see Figure 7 (the same for the Hexapod). In order to quantify these effects we analyzed for the first time the resolution dependence of the well-known complexity measure for time series known as excess entropy [14,15], effective measure complexity [16], or predictive information [13]. We show that the scale dependent excess entropy reflects different properties of the system: scale, dimension and correlations. For deterministic systems the attractor dimension controls how the complexity increases with increasing resolution, while the offset depends on the overall scale of the behavior, e.g., the amplitude of an oscillation, and on the structure of the temporal correlations. In order to disentangle the latter two effects we introduced a new decomposition of the excess entropy with the core complexity quantifying the structural complexity beyond the dimension. In order to estimate it one has to remove the effect of the overall scale of the behavior from the excess entropy. We devised an algorithm based on an automatic identification of scaling ranges in the conditional mutual information .

For noisy systems the excess entropy remains finite. The actual value, however, depends on the noise level, which is consistent with the intuition: the larger the noise, the lower the complexity if all other factors remain constant. While we already have a good understanding of these quantities for systems with clearly identifiable scaling regions, as in the noisy Lorenz system, very often real systems do not show such ideal types of behavior. Therefore we had to use heuristic procedures which provide plausible results for the data under study. Our quantification of the behaviors of the Snake found that crawling has a higher complexity than side-rolling, even though the original excess entropy would have suggested the opposite. For the Hexapod as a learning system (aiming at maximizing time-local PI) we were able to quantify the learning progress. The decomposition of the excess entropy revealed the strategy used to increase the complexity: (1) The dimensionality of the behavior increased on the large scales. This does not necessarily mean that the attractor dimension in the mathematical sense increased, because the latter is related to behavior on infinitely small scales which might be irrelevant for the character of the behavior. (2) The core complexity increased on the large scales, indicating also more complex temporal correlations in the learned behavior. We also discuss further strategies for increasing the complexity of a time series that could be employed in designing future learning algorithms, most notably the decrease in the entropy rate and the increase of the state complexity.

Our study contributes a well grounded and practically applicable quantification measure for the behavior of autonomous systems as well as time-series in general. In addition, a set of quality indicators are provided for self-assessment in order to avoid spurious results.

Future work is to develop a better theoretical understanding of the scale dependence of the excess entropy for realistic behaviors that are not low dimensional deterministic or linearly stochastic such as the behavior observed in the Hexapod after 8 minutes of learning, Figure 8. We expect also further insights by applying the same kind of analysis to movement data from animals and humans.

Acknowledgments

This work was supported by the DFG priority program 1527 (Autonomous Learning) and by the European Community’s Seventh Framework Programme (FP7/2007-2013) under grant agreement no. 318723 (MatheMACS) and from the People Programme (Marie Curie Actions) of the European Union’s Seventh Framework Programme (FP7/2007-2013) under REA grant agreement no. 291734.

Author Contributions

Georg Martius and Eckehard Olbrich conceived and designed the methods and experiments; Georg Martius performed the simulations and numeric calculations; Georg Martius and Eckehard Olbrich analyzed the data; Georg Martius and Eckehard Olbrich wrote the paper. Both authors have read and approved the final manuscript.

Conflicts of Interest

The authors declare no conflict of interest.

Appendix

Appendix A. Comparison and Validation of Estimators Using Autoregressive Model of the Lorenz Attractor

In this appendix we compare the estimates based on the correlation integral with the estimates using the KSG algorithm for a system for which the excess entropy can be computed analytically: an autoregressive (AR) model of the Lorenz attractor, which results in a stochastic system with a defined correlation structure. The dimension of a stochastic system is infinite, i.e., for embedding m the correlation integral yields the full embedding dimension. However, the excess entropy stays finite.

The AR model of a time series is given by

where p is the order and the are the parameters which are fit to the data and independent identically distributed Gaussian noise.

Let us consider the example of an AR2 (p = 2) process

and calculate the mutual information of subsequent time steps:

For larger time horizons it suffices to calculate because, due to the Markov property, more terms will not increase I.

where the are the correlation coefficients for i-step delay ().

Thus the 2-step mutual information is given by:

If the process is observed with delay τ, i.e., then only the in Equation (A15) have to be substituted by because β cancels. For convenience the list of the first follows:

For larger delay values the expressions become so large that it becomes intractable to compute the MI for (requires ), because numerical errors accumulate even after algebraic simplification. The supplementary material [19] also contains a Mathematica file for calculating the MIs up to .
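The analytical expressions above are not reproduced in this copy, but the quantity they describe can also be evaluated numerically. The sketch below uses the standard formula for the mutual information between two jointly Gaussian blocks, I = ½ ln(det Σ_past · det Σ_future / det Σ_joint), together with the Yule-Walker recursion for the autocorrelation of an AR2 process. The function names and the coefficients a1 = 1.2, a2 = −0.5 are hypothetical; they are not the parameters fitted to the Lorenz data.

```python
import numpy as np

def ar2_autocorr(a1, a2, max_lag):
    """Autocorrelation coefficients rho_0..rho_max_lag via the Yule-Walker recursion."""
    rho = np.empty(max_lag + 1)
    rho[0] = 1.0
    if max_lag >= 1:
        rho[1] = a1 / (1.0 - a2)
    for k in range(2, max_lag + 1):
        rho[k] = a1 * rho[k - 1] + a2 * rho[k - 2]
    return rho

def log_det(matrix):
    """Natural log of the determinant of a positive definite matrix."""
    return np.linalg.slogdet(matrix)[1]

def gaussian_block_mi(rho, m, delay=1):
    """MI (nats) between m past and m future samples of a Gaussian process."""
    idx = np.arange(2 * m)
    lags = np.abs(idx[:, None] - idx[None, :]) * delay
    joint = rho[lags]  # Toeplitz correlation matrix of past and future together
    past, future = joint[:m, :m], joint[m:, m:]
    return 0.5 * (log_det(past) + log_det(future) - log_det(joint))

a1, a2 = 1.2, -0.5  # hypothetical stable AR2 coefficients
rho = ar2_autocorr(a1, a2, max_lag=60)
mi_1 = gaussian_block_mi(rho, 1)
mi_2 = gaussian_block_mi(rho, 2)
mi_3 = gaussian_block_mi(rho, 3)
mi_2_delay10 = gaussian_block_mi(rho, 2, delay=10)
print(mi_1, mi_2, mi_3, mi_2_delay10)
```

For delay 1 the values for two and three embedding steps coincide, which reflects the Markov order 2 of the process. Observing the process with a delay amounts to reading the correlation coefficients at multiples of that delay, as stated in the text.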

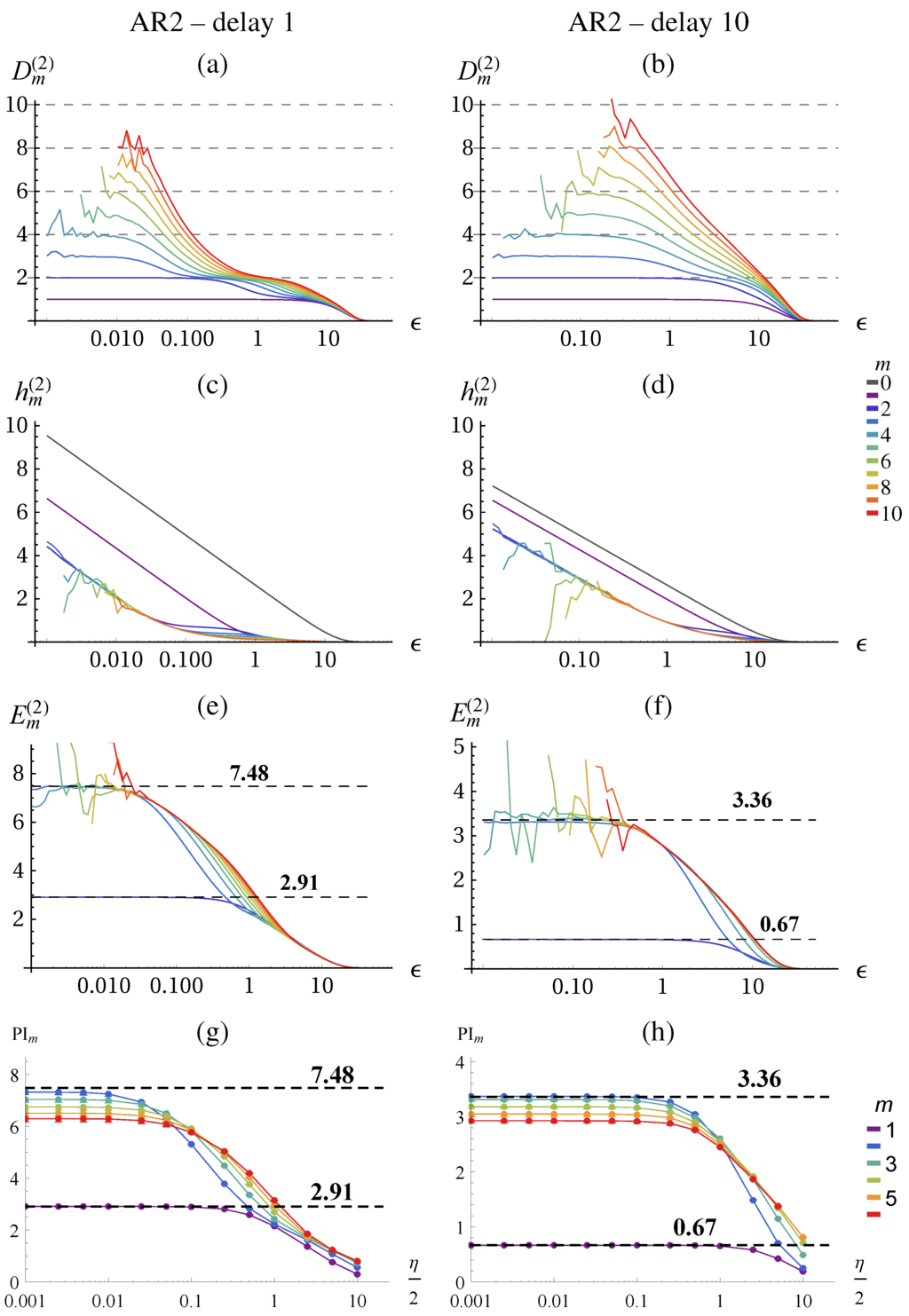

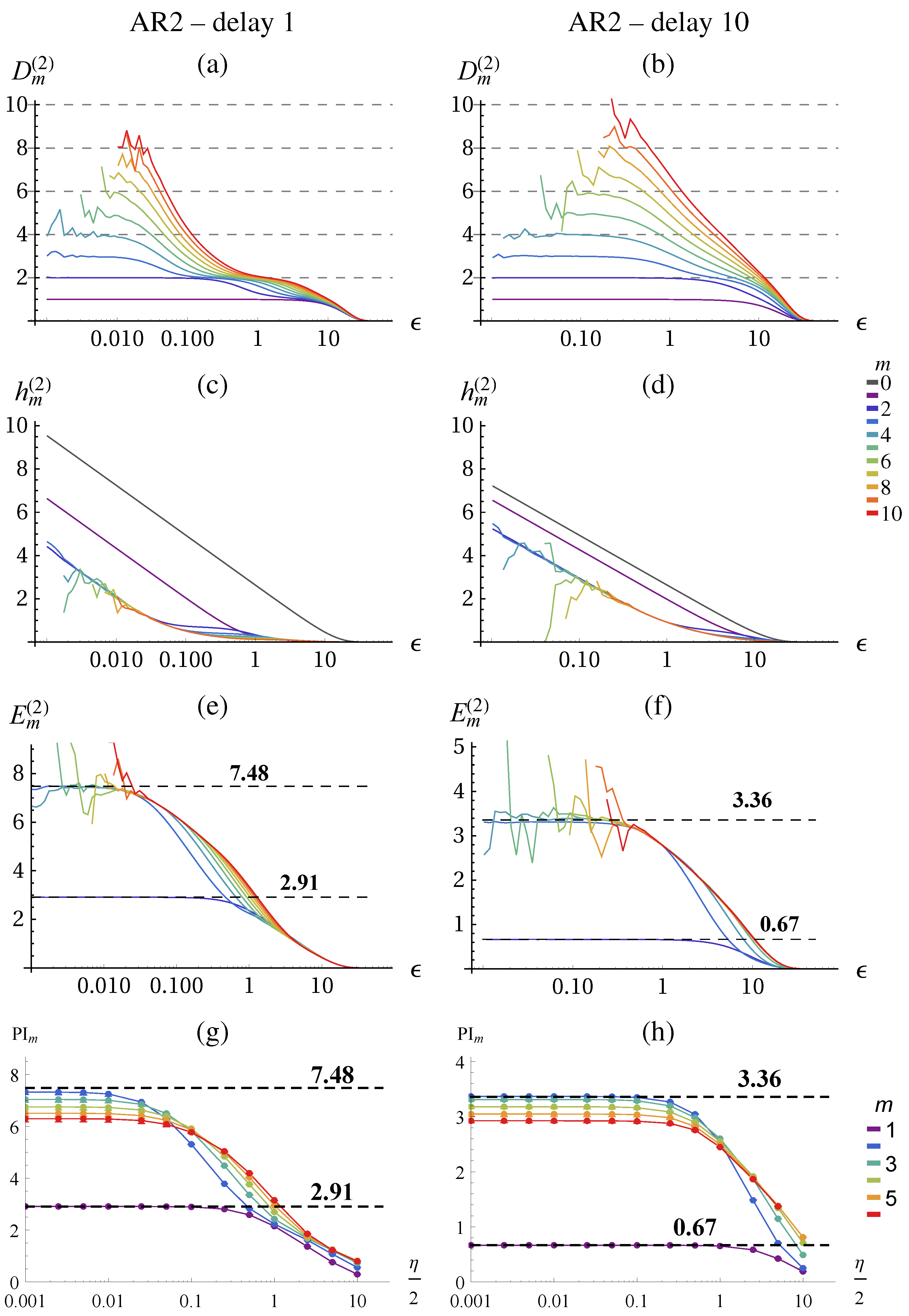

The predictive information (Equation (18)) can also be written as

For the AR2 process we have for due to Markov order 2. Thus, and for . For the excess entropy (Equation (15)) we find , , , for . Figure A1 shows the results for the AR2 model obtained from fitting the parameters to the Lorenz system (). Indeed, the conditional entropies become identical for , see Figure A1c,d, and the excess entropies converge for to their theoretical values, see Figure A1e,f. In the case of the KSG algorithm the values are correct for , but for higher embedding dimensions we get a significant underestimation, see Figure A1g,h. This effect is even stronger for smaller neighborhood sizes k.

Figure A1.

Analysis of an AR-model () of the Lorenz attractor observed at delay 1 and delay 10. See Figure 2 for details. (a,b) The dimensionality corresponds to the embedding dimension (as long as the data suffices). (c,d) Conditional entropies show the entropy of the noise for . The difference between first and second, and second and third embedding reveal the actual structure of the time-series, better visible in (e) and (f). (e,f) The excess entropy converges to the theoretical values of for and to for (delay 1) and for and to for (delay 10). (g,h) Predictive information estimated with KSG algorithm with .

Appendix B. Details of the Decomposition Algorithm

Fitting

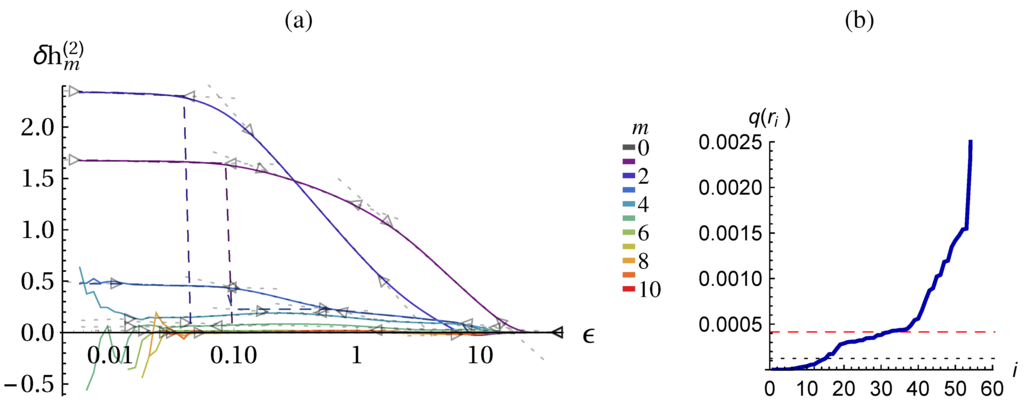

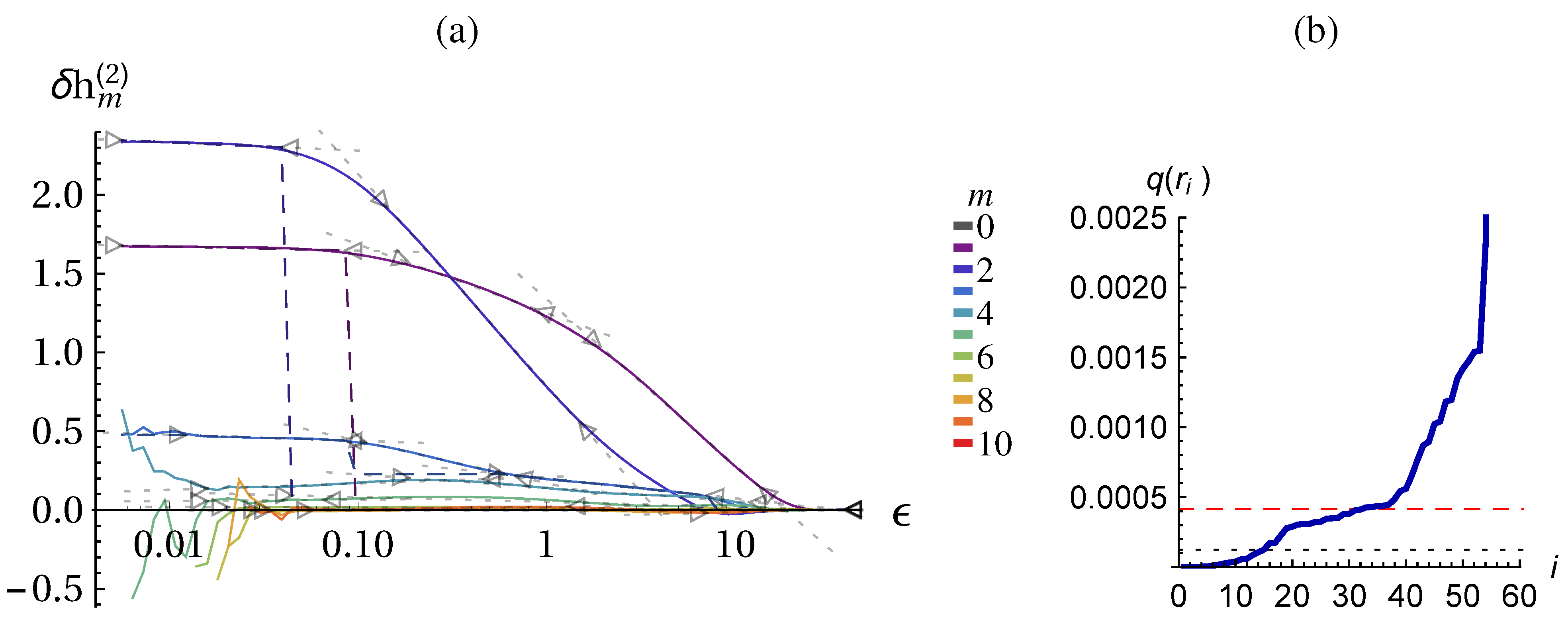

In deterministic scaling ranges the follow the form (possibly with s = 0). In a perfectly stochastic scaling range we have for all m. However, the behavior of a system may show several deterministic and stochastic scaling ranges. So we have to determine segments where a certain typical behavior is found. The following algorithm assumes that for each m the contain segments of the form and some parts that do not fit, see Figure A2. Because for different m and different systems the curves may have different amounts of noise (jitter), we first determine a quality threshold for the fits. Internally the data are represented by n points which we denote , with . We denote the range where a fit was computed by where . The quality measure is the sum of the squared fit residuals:

Figure A2.

Illustration of the fitting algorithm and the determination of and . (a) Shown are the curves for the Lorenz system with 0.005 dynamic noise, see Figure 2. The fits are shown by dotted gray lines and their validity range r is marked by triangles. The dashed colored lines show for . (b) Ranked fit qualities for all segments with 10 data points of (solid line) with percentile (dotted line) and threshold (dashed red line). For visibility y-axis is cut, .

First, all fits with a fixed number of data points (here 10) are computed together with their quality measure: , where is the length of the segment in number of points. There will be segments with low residual errors and segments with very high residuals, namely those that cover the regions between ideal-typical behavior. The heuristic for the quality threshold is the percentile of Q plus a small portion of the standard deviation: . The reasoning for the percentile is to pick a threshold among the good fits, and we only assume that at least of the curve has fitting segments. If, however, there is an extended range of good fits, then a high percentage (say ) of short segments have very similar low q values. In this case a too low threshold would be selected, which cuts away good fitting regions. A tenth of the standard deviation is added to lift the threshold above the potential plateau, see Figure A2b.

For all “good” segments the longest extension of the fitting range is determined that keeps the quality of the fit below the threshold, i.e., . Thus we get many overlapping regions of good fits. For each pair of regions that overlap by more than (with respect to the smaller) we discard the shorter one. The remaining regions are our final fits, as displayed in Figure A2a.
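The extension and pruning steps can be sketched as follows. Again this is a hedged illustration rather than the authors' implementation: the greedy one-point-at-a-time growth, the straight-line fit, the mean squared residual as quality, and the overlap fraction `max_overlap=0.5` are all assumptions, and the function names are hypothetical.

```python
import numpy as np

def fit_quality(x, y, a, b):
    """Mean squared residual of a straight-line fit to points a..b (inclusive)."""
    xs, ys = x[a:b + 1], y[a:b + 1]
    slope, intercept = np.polyfit(xs, ys, 1)
    return float(np.mean((ys - (slope * xs + intercept)) ** 2))

def extend_segment(x, y, a, b, q_thresh):
    """Greedily grow [a, b] by one point on either side for as long as
    the fit quality stays below q_thresh (assumed growth strategy)."""
    n = len(x)
    grown = True
    while grown:
        grown = False
        if a > 0 and fit_quality(x, y, a - 1, b) < q_thresh:
            a -= 1
            grown = True
        if b < n - 1 and fit_quality(x, y, a, b + 1) < q_thresh:
            b += 1
            grown = True
    return a, b

def prune_overlaps(regions, max_overlap=0.5):
    """Keep long regions first; drop a region if it shares more than
    max_overlap of its own (smaller) length with an already kept one."""
    kept = []
    by_length = sorted(set(regions), key=lambda r: r[1] - r[0], reverse=True)
    for a, b in by_length:
        length = b - a + 1
        overlaps = False
        for ka, kb in kept:
            shared = min(b, kb) - max(a, ka) + 1  # non-positive if disjoint
            if shared > max_overlap * length:
                overlaps = True
                break
        if not overlaps:
            kept.append((a, b))
    return sorted(kept)
```

Processing the regions from longest to shortest means that the region under test is always the smaller one of each pair, so the overlap is measured with respect to the smaller region as described above.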

The components for calculating the constant of the middle term in Equation (37) are also displayed in Figure A2a. For , the value of is for because of the shallow region at . This leads to the value of () for the state complexity in Figure 3b. For the constant takes the value of of the next (smaller scale) plateau. Similarly rises from zero to the value of the plateaus, which leads to the increase in state complexity in the stochastic scaling range. Recall that in the stochastic scaling range () and are taken from the preceding deterministic scaling range.

References

- Ay, N.; Bertschinger, N.; Der, R.; Güttler, F.; Olbrich, E. Predictive information and explorative behavior of autonomous robots. Eur. Phys. J. B 2008, 63, 329–339.

- Zahedi, K.; Ay, N.; Der, R. Higher coordination with less control—A result of information maximization in the sensorimotor loop. Adapt. Behav. 2010, 18, 338–355.

- Klyubin, A.S.; Polani, D.; Nehaniv, C.L. Empowerment: A universal agent-centric measure of control. In Proceedings of the IEEE Congress on Evolutionary Computation, Edinburgh, Scotland, UK, 2–5 September 2005; Volume 1, pp. 128–135.

- Salge, C.; Glackin, C.; Polani, D. Empowerment—An Introduction. In Guided Self-Organization: Inception; Emergence, Complexity and Computation; Prokopenko, M., Ed.; Springer: Berlin/Heidelberg, Germany, 2014; Volume 9, pp. 67–114.

- Schmidhuber, J. Exploring the Predictable. In Advances in Evolutionary Computing; Ghosh, A., Tsutsui, S., Eds.; Springer: Berlin, Germany, 2002; pp. 579–612.

- Oudeyer, P.Y.; Kaplan, F.; Hafner, V.V.; Whyte, A. The Playground Experiment: Task-Independent Development of a Curious Robot. In Proceedings of the AAAI Spring Symposium on Developmental Robotics; Blank, D., Meeden, L., Eds.; Stanford, CA, USA, 2005; pp. 42–47.

- Frank, M.; Leitner, J.; Stollenga, M.; Förster, A.; Schmidhuber, J. Curiosity Driven Reinforcement Learning for Motion Planning on Humanoids. Front. Neurorobotics 2014, 7.

- Der, R.; Martius, G. The Playful Machine—Theoretical Foundation and Practical Realization of Self-Organizing Robots; Springer: Berlin/Heidelberg, Germany, 2012.

- Martius, G.; Der, R.; Ay, N. Information Driven Self-Organization of Complex Robotic Behaviors. PLoS ONE 2013, 8.

- Sayama, H. Guiding designs of self-organizing swarms: Interactive and automated approaches. In Guided Self-Organization: Inception, Emergence, Complexity and Computation; Prokopenko, M., Ed.; Springer: Berlin/Heidelberg, Germany, 2014; Volume 9, pp. 365–387.

- Der, R.; Martius, G. Behavior as broken symmetry in embodied self-organizing robots. In Advances in Artificial Life, ECAL 2013; MIT Press: Cambridge, MA, USA, 2013; pp. 601–608.

- Der, R.; Martius, G. A Novel Plasticity Rule Can Explain the Development of Sensorimotor Intelligence. Proc. Natl. Acad. Sci. USA 2015, in press.

- Bialek, W.; Nemenman, I.; Tishby, N. Predictability, Complexity and Learning. Neural Comput. 2001, 13, 2409–2463.

- Shaw, R. The Dripping Faucet as a Model Chaotic System; Aerial Press: Santa Cruz, CA, USA, 1984.

- Crutchfield, J.P.; Feldman, D.P. Regularities unseen, randomness observed: Levels of entropy convergence. Chaos 2003, 13, 25–54.

- Grassberger, P. Toward a quantitative theory of self-generated complexity. Int. J. Theor. Phys. 1986, 25, 907–938.

- Van der Weele, J.P.; Banning, E.J. Mode interaction in horses, tea, and other nonlinear oscillators: The universal role of symmetry. Am. J. Phys. 2001, 69, 953–965.

- Martius, G.; Olbrich, E. Supplementary Material. Available online: http://playfulmachines.com/QuantBeh2015/ (accessed on 20 October 2015).

- Martius, G. Source Code at the Repository. Available online: https://github.com/georgmartius/behavior-quant (accessed on 20 October 2015).

- Grassberger, P. Randomness, Information, and Complexity. In Proceedings of the 5th Mexican School on Statistical Physics (EMFE 5); Ramos-Gómez, F., Ed.; World Scientific: Singapore, 1991; corrected version arXiv:1208.3459.

- Prokopenko, M.; Boschetti, F.; Ryan, A.J. An information-theoretic primer on complexity, self-organization, and emergence. Complexity 2009, 15, 11–28.

- Rosenblatt, M. Remarks on some nonparametric estimates of a density function. Ann. Math. Statist. 1956, 27, 832–837.

- Shore, J.E.; Johnson, R.W. Axiomatic derivation of the principle of maximum entropy and the principle of minimum cross-entropy. IEEE Trans. Inf. Theory 1980, IT-26, 26–37.

- Giffin, A. From physics to economics: An econometric example using maximum relative entropy. Physica A 2009, 388, 1610–1620.

- Takens, F.; Verbitski, E. Generalized entropies: Rényi and correlation integral approach. Nonlinearity 1998, 11.

- Kraskov, A.; Stögbauer, H.; Grassberger, P. Estimating mutual information. Phys. Rev. E 2004, 69.

- Gaspard, P.; Wang, X.J. Noise, chaos and (ϵ,τ)-entropy per unit time. Phys. Rep. 1993, 235, 291–343.

- Cover, T.M.; Thomas, J.A. Elements of Information Theory; Wiley: New York, NY, USA, 2006.

- Ding, M.; Grebogi, C.; Ott, E.; Sauer, T.; Yorke, J.A. Plateau onset of correlation dimension: When does it occur? Phys. Rev. Lett. 1993, 70, 3872–3875.

- Cohen, A.; Procaccia, I. Computing the Kolmogorov entropy from time signals of dissipative and conservative dynamical systems. Phys. Rev. A 1985, 31, 1872–1882.

- Sinai, Y. Kolmogorov-Sinai entropy. Scholarpedia 2009, 4.

- Sauer, T.; Yorke, J.A.; Casdagli, M. Embedology. J. Stat. Phys. 1991, 65, 579–616.

- Vasicek, O. A test for normality based on sample entropy. J. Royal Stat. Soc. Ser. B 1976, 38, 54–59.

- Dobrushin, R. A simplified method of experimentally evaluating the entropy of a stationary sequence. Theory Probab. Appl. 1958, 3, 428–430.

- Kozachenko, L.; Leonenko, N.N. Sample estimate of the entropy of a random vector. Problemy Peredachi Informatsii 1987, 23, 9–16.

- Kantz, H.; Schreiber, T. Nonlinear Time Series Analysis; Cambridge University Press: Cambridge, UK, 2004; Chapter 6; Volume 7.

- Grassberger, P. Grassberger-Procaccia algorithm. Scholarpedia 2007, 2.

- Grassberger, P.; Procaccia, I. Characterization of strange attractors. Phys. Rev. Lett. 1983, 50.

- Fraser, A.M.; Swinney, H.L. Independent coordinates for strange attractors from mutual information. Phys. Rev. A 1986, 33, 1134–1140.

- Olbrich, E.; Kantz, H. Inferring chaotic dynamics from time-series: On which length scale determinism becomes visible. Phys. Lett. A 1997, 232, 63–69.

- Hegger, R.; Kantz, H.; Schreiber, T. Practical implementation of nonlinear time series methods: The TISEAN package. Chaos 1999, 9, 413–435.

- Der, R. On the role of embodiment for self-organizing robots: Behavior as broken symmetry. In Guided Self-Organization: Inception, Emergence, Complexity and Computation; Prokopenko, M., Ed.; Springer: Berlin, Germany, 2014; Volume 9, pp. 193–221.

- Martius, G.; Hesse, F.; Güttler, F.; Der, R. LpzRobots: A Free and Powerful Robot Simulator. Available online: http://robot.informatik.uni-leipzig.de/software (accessed on 1 July 2010).

- Der, R.; Martius, G.; Hesse, F. Let It Roll—Emerging Sensorimotor Coordination in a Spherical Robot. In Artificial Life X; Rocha, L.M., Yaeger, L.S., Bedau, M.A., Floreano, D., Goldstone, R.L., Vespignani, A., Eds.; MIT Press: Cambridge, MA, USA, 2006; pp. 192–198.

- Schmidhuber, J. Curious model-building control systems. In Proceedings of the 1991 IEEE International Joint Conference on Neural Networks, Singapore, 18–21 November 1991; Volume 2, pp. 1458–1463.

- Oudeyer, P.Y.; Kaplan, F.; Hafner, V. Intrinsic motivation systems for autonomous mental development. IEEE Trans. Evolut. Comput. 2007, 11, 265–286.

- Doncieux, S.; Mouret, J.B. Beyond black-box optimization: A review of selective pressures for evolutionary robotics. Evolut. Intell. 2014, 7, 71–93.

- Lungarella, M.; Sporns, O. Mapping information flow in sensorimotor networks. PLoS Comput. Biol. 2006, 2.

- Schmidt, N.M.; Hoffmann, M.; Nakajima, K.; Pfeifer, R. Bootstrapping perception using information theory: Case studies in a quadruped robot running on different grounds. Adv. Complex Syst. 2013, 16.

- Wang, X.R.; Miller, J.M.; Lizier, J.T.; Prokopenko, M.; Rossi, L.F. Quantifying and tracing information cascades in swarms. PLoS ONE 2012, 7.

© 2015 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/4.0/).