Chatbot-Based Services: A Study on Customers’ Reuse Intention

Abstract

1. Introduction

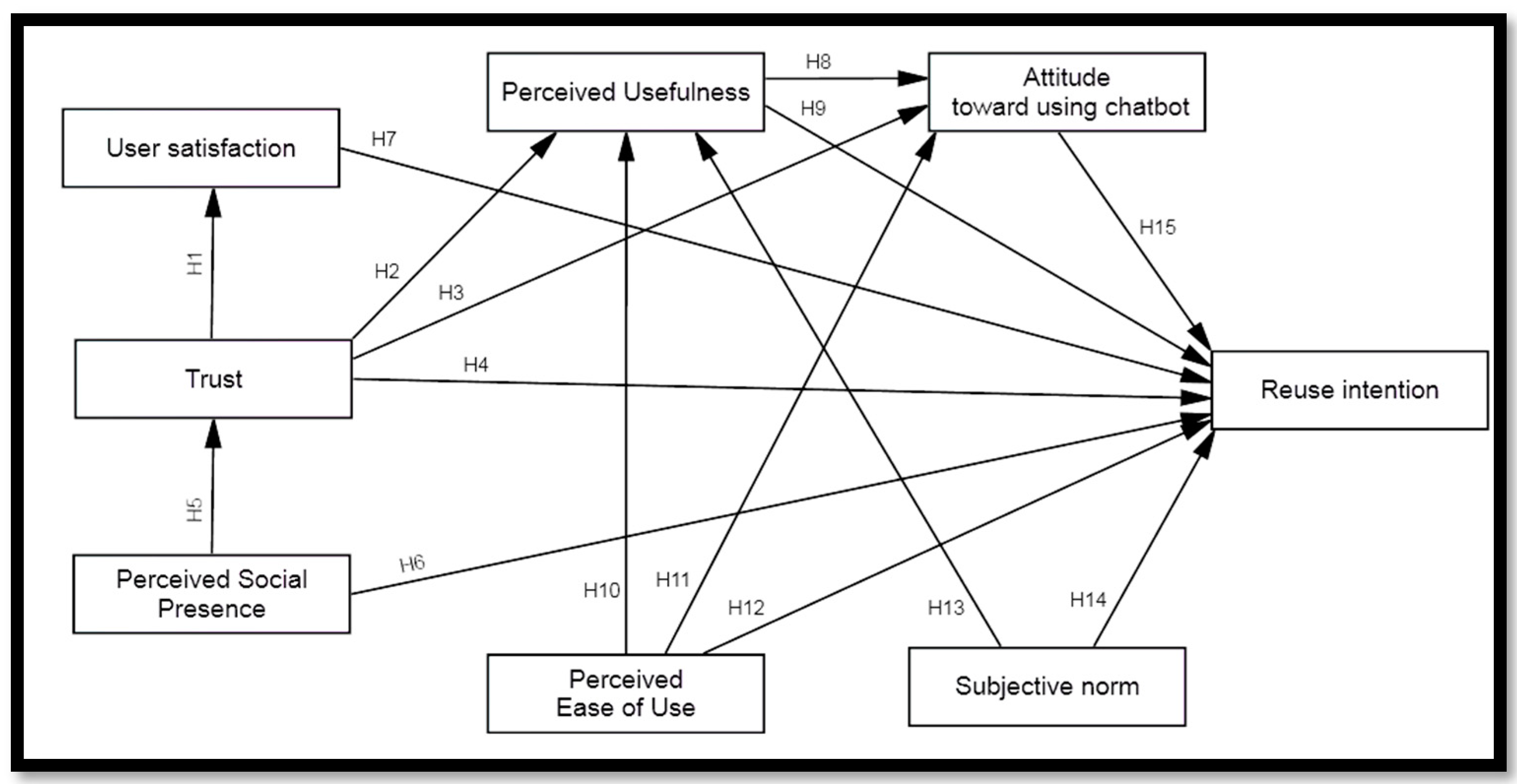

2. Literature Review and Hypotheses Development

2.1. Trust

2.2. Perceived Social Presence

2.3. Satisfaction

2.4. Perceived Usefulness

2.5. Perceived Ease of Use

2.6. Subjective Norm

2.7. Attitude toward Using Chatbots

3. Method

3.1. Materials and Measurements

3.2. Population and Sample

4. Results

4.1. Measurement Model Evaluation

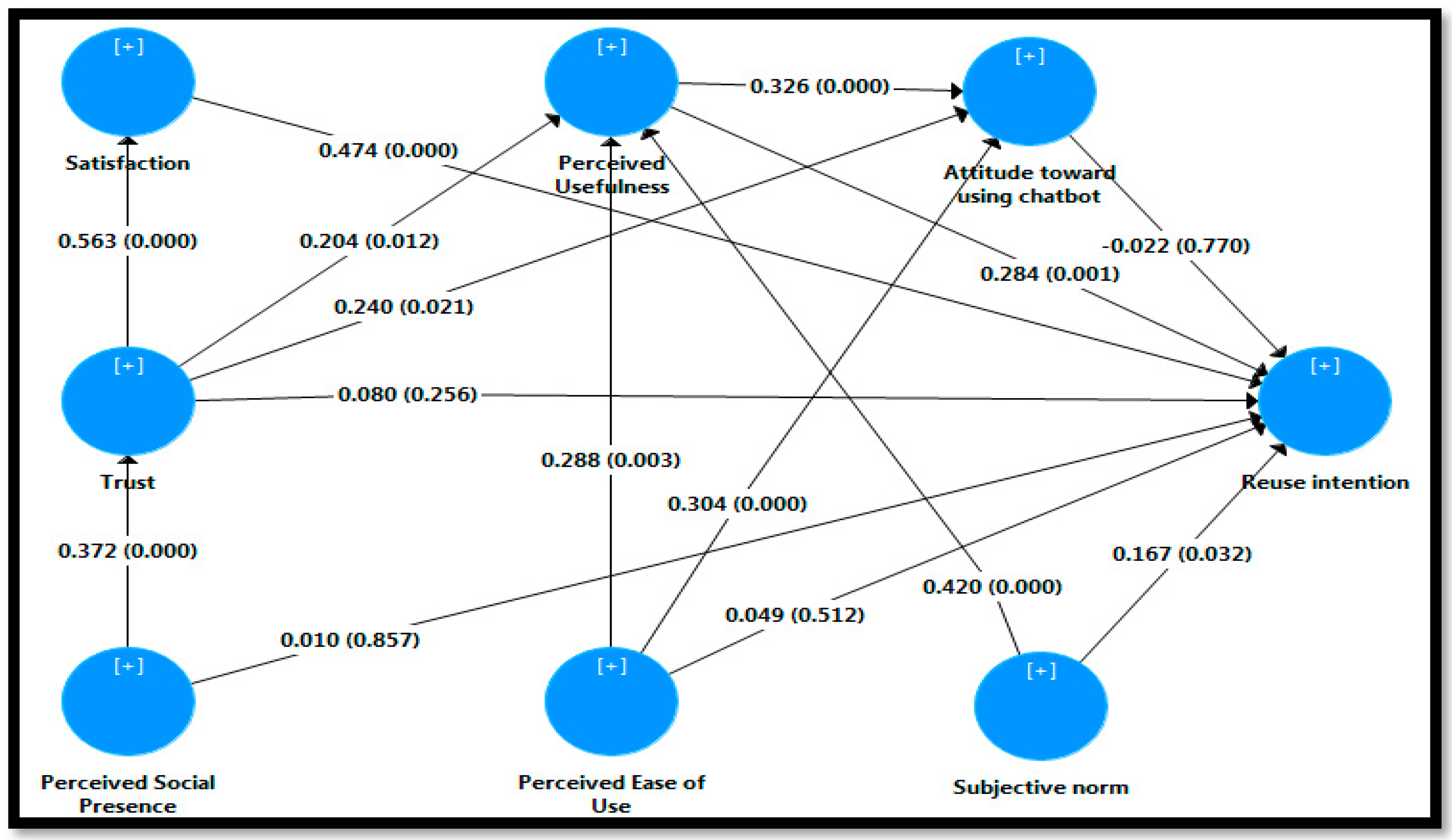

4.2. Assessment of Structural Model and Hypotheses Testing

5. Discussion

6. Implications

7. Limitations and Suggestions for Future Research

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Kwangsawad, A.; Jattamart, A. Overcoming customer innovation resistance to the sustainable adoption of chatbot services: A community-enterprise perspective in Thailand. J. Innov. Knowl. 2022, 7, 100211.

- Araújo, T.; Casais, B. Customer Acceptance of Shopping-Assistant Chatbots. In Marketing and Smart Technologies; Rocha, Á., Reis, J.L., Peter, M.K., Bogdanović, Z., Eds.; Springer: Singapore, 2020; Volume 167, pp. 278–287.

- Calvaresi, D.; Ibrahim, A.; Calbimonte, J.-P.; Fragniere, E.; Schegg, R.; Schumacher, M.I. Leveraging inter-tourists interactions via chatbots to bridge academia, tourism industries and future societies. J. Tour. Futur. 2021, 1–27.

- Pillai, R.; Sivathanu, B. Adoption of AI-based chatbots for hospitality and tourism. Int. J. Contemp. Hosp. Manag. 2020, 32, 3199–3226.

- Ceccarini, C.; Prandi, C. Tourism for all: A mobile application to assist visually impaired users in enjoying tourist services. In Proceedings of the 16th IEEE Annual Consumer Communications & Networking Conference (CCNC), Las Vegas, NV, USA, 11–14 January 2019; pp. 1–6.

- Huang, D.-H.; Chueh, H.-E. Chatbot usage intention analysis: Veterinary consultation. J. Innov. Knowl. 2021, 6, 135–144.

- Sheehan, B.; Jin, H.S.; Gottlieb, U. Customer service chatbots: Anthropomorphism and adoption. J. Bus. Res. 2020, 115, 14–24.

- Sands, S.; Ferraro, C.; Campbell, C.; Tsao, H.-Y. Managing the human–chatbot divide: How service scripts influence service experience. J. Serv. Manag. 2021, 32, 246–264.

- Seo, K.; Lee, J. The Emergence of Service Robots at Restaurants: Integrating Trust, Perceived Risk, and Satisfaction. Sustainability 2021, 13, 4431.

- Murtarelli, G.; Collina, C.; Romenti, S. “Hi! How can I help you today?”: Investigating the quality of chatbots–millennials relationship within the fashion industry. TQM J. 2022. ahead-of-print.

- Chang, I.-C.; Shih, Y.-S.; Kuo, K.-M. Why would you use medical chatbots? interview and survey. Int. J. Med. Inform. 2022, 165, 104827.

- Seitz, L.; Bekmeier-Feuerhahn, S.; Gohil, K. Can we trust a chatbot like a physician? A qualitative study on understanding the emergence of trust toward diagnostic chatbots. Int. J. Hum.-Comput. Stud. 2022, 165, 102848.

- Nguyen, D.; Chiu, Y.-T.; Le, H. Determinants of Continuance Intention towards Banks’ Chatbot Services in Vietnam: A Necessity for Sustainable Development. Sustainability 2021, 13, 7625.

- Huang, S.Y.; Lee, C.-J. Predicting continuance intention to fintech chatbot. Comput. Hum. Behav. 2022, 129, 107027.

- Cardona, D.R.; Janssen, A.; Guhr, N.; Breitner, M.H.; Milde, J. A Matter of Trust? Examination of Chatbot Usage in Insurance Business. In Proceedings of the Annual Hawaii International Conference on System Sciences, Kauai, HI, USA, 5 January 2021; pp. 556–565.

- Eren, B.A. Determinants of customer satisfaction in chatbot use: Evidence from a banking application in Turkey. Int. J. Bank Mark. 2021, 39, 294–311.

- Sarbabidya, S.; Saha, T. Role of chatbot in customer service: A study from the perspectives of the banking industry of Bangladesh. Int. Rev. Bus. Res. Pap. 2020, 16, 231–248.

- Ng, M.; Coopamootoo, K.P.; Toreini, E.; Aitken, M.; Elliot, K.; van Moorsel, A. Simulating the effects of social presence on trust, privacy concerns & usage intentions in automated bots for finance. In Proceedings of the 2020 IEEE European Symposium on Security and Privacy Workshops (EuroS & PW), Genoa, Italy, 7–11 September 2020; pp. 190–199.

- Patil, D.; Kulkarni, D.S. Artificial Intelligence in Financial Services: Customer Chatbot Advisor Adoption. Int. J. Innov. Technol. Explor. Eng. 2019, 9, 4296–4303.

- Ferreira, M.; Barbosa, B. A Review on Chatbot Personality and Its Expected Effects on Users. In Trends, Applications, and Challenges of Chatbot Technology; Kuhail, M.A., Shawar, B.A., Hammad, R., Eds.; IGI Global: Hershey, PA, USA, 2023; pp. 222–243.

- Selamat, M.A.; Windasari, N.A. Chatbot for SMEs: Integrating customer and business owner perspectives. Technol. Soc. 2021, 66, 101685.

- Clark, S. 5 Ways Chatbots Improve Employee Experience. Available online: https://www.reworked.co/employee-experience/5-ways-chatbots-improve-employee-experience/ (accessed on 12 January 2023).

- Fokina, M. The Future of Chatbots: 80+ Chatbot Statistics for 2023. Available online: https://www.tidio.com/blog/chatbot-statistics/ (accessed on 21 January 2023).

- Williams, R. Study: Chatbots to Drive $112B in Retail Sales by 2023. Available online: https://www.retaildive.com/news/study-chatbots-to-drive-112b-in-retail-sales-by-2023/554454/ (accessed on 12 January 2023).

- Følstad, A.; Nordheim, C.B.; Bjørkli, C.A. What Makes Users Trust a Chatbot for Customer Service? An Exploratory Interview Study. In Proceedings of the Internet Science; Bodrunova, S.S., Ed.; Springer: Cham, Switzerland, 2018; pp. 194–208.

- van der Goot, M.J.; Hafkamp, L.; Dankfort, Z. Customer service chatbots: A qualitative interview study into the communication journey of customers. In Chatbot Research and Design; Følstad, A., Araujo, T., Papadopoulos, S., Law, E.L.C., Luger, E., Goodwin, M., Brandtzaeg, P.B., Eds.; Springer: Cham, Switzerland, 2021; pp. 190–204.

- Sladden, C.O. Chatbots’ Failure to Satisfy Customers Is Harming Businesses, Says Study. Available online: https://www.verdict.co.uk/chatbots-failure-to-satisfy-customers-is-harming-businesses-says-study/ (accessed on 12 January 2023).

- Hsiao, K.-L.; Chen, C.-C. What drives continuance intention to use a food-ordering chatbot? An examination of trust and satisfaction. Libr. Hi Tech. 2022, 40, 929–946.

- Huang, M.-H.; Rust, R.T. Artificial Intelligence in Service. J. Serv. Res. 2018, 21, 155–172.

- Wirtz, J.; Patterson, P.G.; Kunz, W.H.; Gruber, T.; Lu, V.N.; Paluch, S.; Martins, A. Brave new world: Service robots in the frontline. J. Serv. Manag. 2018, 29, 907–931.

- Aslam, W.; Siddiqui, D.A.; Arif, I.; Farhat, K. Chatbots in the frontline: Drivers of acceptance. Kybernetes, 2022; ahead-of-print.

- Rahane, W.; Patil, S.; Dhondkar, K.; Mate, T. Artificial intelligence based solarbot. In Proceedings of the 2018 Second International Conference on Inventive Communication and Computational Technologies (ICICCT), Coimbatore, India, 20–21 April 2018; pp. 601–605.

- Baier, D.; Rese, A.; Röglinger, M. Conversational User Interfaces for Online Shops? A Categorization of Use Cases. In Proceedings of the 39th International Conference on Information Systems (ICIS), San Francisco, CA, USA, 13–16 December 2018.

- Toader, D.-C.; Boca, G.; Toader, R.; Măcelaru, M.; Toader, C.; Ighian, D.; Rădulescu, A.T. The Effect of Social Presence and Chatbot Errors on Trust. Sustainability 2019, 12, 256.

- Lai, P.C. The literature review of technology adoption models and theories for the novelty technology. J. Inf. Syst. Technol. Manag. 2017, 14, 21–38.

- Beldad, A.D.; Hegner, S.M. Expanding the Technology Acceptance Model with the Inclusion of Trust, Social Influence, and Health Valuation to Determine the Predictors of German Users’ Willingness to Continue Using a Fitness App: A Structural Equation Modeling Approach. Int. J. Hum.–Comput. Interact. 2018, 34, 882–893.

- Lee, M.-C. Factors influencing the adoption of internet banking: An integration of TAM and TPB with perceived risk and perceived benefit. Electron. Commer. Res. Appl. 2009, 8, 130–141.

- Fishbein, M.; Ajzen, I. Belief, Attitude, Intention, and Behavior: An Introduction to Theory and Research; Addison-Wesley: Reading, MA, USA, 1975.

- Davis, F.D. Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Q. 1989, 13, 319–340.

- Venkatesh, V.; Thong, J.Y.L.; Xu, X. Consumer Acceptance and Use of Information Technology: Extending the Unified Theory of Acceptance and Use of Technology. MIS Q. 2012, 36, 157–178.

- Venkatesh, V.; Davis, F.D. A Theoretical Extension of the Technology Acceptance Model: Four Longitudinal Field Studies. Manag. Sci. 2000, 46, 186–204.

- Pikkarainen, T.; Pikkarainen, K.; Karjaluoto, H.; Pahnila, S. Consumer acceptance of online banking: An extension of the technology acceptance model. Internet Res. 2004, 14, 224–235.

- Tsui, H.-D. Trust, Perceived Useful, Attitude and Continuance Intention to Use E-Government Service: An Empirical Study in Taiwan. IEICE Trans. Inf. Syst. 2019, 102, 2524–2534.

- Bhattacherjee, A. Understanding Information Systems Continuance: An Expectation-Confirmation Model. MIS Q. 2001, 25, 351–370.

- Ashfaq, M.; Yun, J.; Yu, S.; Loureiro, S.M.C. I, Chatbot: Modeling the determinants of users’ satisfaction and continuance intention of AI-powered service agents. Telemat. Inform. 2020, 54, 101473.

- Jiang, Y.; Yang, X.; Zheng, T. Make chatbots more adaptive: Dual pathways linking human-like cues and tailored response to trust in interactions with chatbots. Comput. Hum. Behav. 2023, 138, 107485.

- Amoako-Gyampah, K.; Salam, A. An extension of the technology acceptance model in an ERP implementation environment. Inf. Manag. 2004, 41, 731–745.

- Shahzad, F.; Xiu, G.; Khan, I.; Wang, J. m-Government Security Response System: Predicting Citizens’ Adoption Behavior. Int. J. Hum.–Comput. Interact. 2019, 35, 899–915.

- Dhagarra, D.; Goswami, M.; Kumar, G. Impact of Trust and Privacy Concerns on Technology Acceptance in Healthcare: An Indian Perspective. Int. J. Med. Inform. 2020, 141, 104164.

- Aljazzaf, Z.M.; Perry, M.; Capretz, M.A.M. Online trust: Definition and principles. In Proceedings of the ICCGI’10: Proceedings of the 2010 Fifth International Multi-Conference on Computing in the Global Information Technology, Valencia, Spain, 20–25 September 2010; pp. 163–168.

- Corritore, C.L.; Kracher, B.; Wiedenbeck, S. On-line trust: Concepts, evolving themes, a model. Int. J. Hum.-Comput. Stud. 2003, 58, 737–758.

- Bhattacherjee, A. Individual Trust in Online Firms: Scale Development and Initial Test. J. Manag. Inf. Syst. 2002, 19, 211–241.

- Gefen, D.; Straub, D. Managing User Trust in B2C e-Services. e-Serv. J. 2003, 2, 7.

- Holsapple, C.W.; Sasidharan, S. The dynamics of trust in B2C e-commerce: A research model and agenda. Inf. Syst. e-Bus. Manag. 2005, 3, 377–403.

- Zarmpou, T.; Saprikis, V.; Markos, A.; Vlachopoulou, M. Modeling users’ acceptance of mobile services. Electron. Commer. Res. 2012, 12, 225–248.

- Agarwal, S. Trust or No Trust in Chatbots: A Dilemma of Millennial. In Cognitive Computing for Human-Robot Interaction; Elsevier: Cambridge, MA, USA, 2021; pp. 103–119.

- Chung, M.; Joung, H.; Ko, E. The role of luxury brands conversational agents comparison between facetoface and chatbot. Glob. Fash. Manag. Conf. 2017, 2017, 540.

- Przegalinska, A.; Ciechanowski, L.; Stroz, A.; Gloor, P.; Mazurek, G. In bot we trust: A new methodology of chatbot performance measures. Bus. Horiz. 2019, 62, 785–797.

- Hoff, K.A.; Bashir, M. Trust in Automation: Integrating empirical evidence on factors that influence trust. Hum. Factors 2014, 57, 407–434.

- Kumar, V.; Rajan, B.; Venkatesan, R.; Lecinski, J. Understanding the Role of Artificial Intelligence in Personalized Engagement Marketing. Calif. Manag. Rev. 2019, 61, 135–155.

- Belanche, D.; Casaló, L.V.; Flavián, C. Integrating trust and personal values into the Technology Acceptance Model: The case of e-government services adoption. Cuad. Econ. Dir. Empresa 2012, 15, 192–204.

- Kasilingam, D.L. Understanding the attitude and intention to use smartphone chatbots for shopping. Technol. Soc. 2020, 62, 101280.

- Rajaobelina, L.; Tep, S.P.; Arcand, M.; Ricard, L. Creepiness: Its antecedents and impact on loyalty when interacting with a chatbot. Psychol. Mark. 2021, 38, 2339–2356.

- Biocca, F.; Harms, C.; Burgoon, J.K. Toward a More Robust Theory and Measure of Social Presence: Review and Suggested Criteria. Presence Teleoperators Virtual Environ. 2003, 12, 456–480.

- Oh, C.S.; Bailenson, J.N.; Welch, G.F. A Systematic Review of Social Presence: Definition, Antecedents, and Implications. Front. Robot. AI 2018, 5, 114.

- Hassanein, K.; Head, M. Manipulating perceived social presence through the web interface and its impact on attitude towards online shopping. Int. J. Hum.-Comput. Stud. 2007, 65, 689–708.

- Go, E.; Sundar, S.S. Humanizing chatbots: The effects of visual, identity and conversational cues on humanness perceptions. Comput. Hum. Behav. 2019, 97, 304–316.

- Kilteni, K.; Groten, R.; Slater, M. The Sense of Embodiment in Virtual Reality. Presence Teleoperators Virtual Environ. 2012, 21, 373–387.

- Moon, J.H.; Kim, E.; Choi, S.M.; Sung, Y. Keep the Social in Social Media: The Role of Social Interaction in Avatar-Based Virtual Shopping. J. Interact. Advert. 2013, 13, 14–26.

- de Visser, E.J.; Monfort, S.S.; McKendrick, R.; Smith, M.A.B.; McKnight, P.E.; Krueger, F.; Parasuraman, R. Almost human: Anthropomorphism increases trust resilience in cognitive agents. J. Exp. Psychol. Appl. 2016, 22, 331–349.

- Türkyılmaz, A.; Özkan, C. Development of a customer satisfaction index model. Ind. Manag. Data Syst. 2007, 107, 672–687.

- Kim, B. Understanding Key Antecedents of Consumer Loyalty toward Sharing-Economy Platforms: The Case of Airbnb. Sustainability 2019, 11, 5195.

- Oliver, R.L. A Cognitive Model of the Antecedents and Consequences of Satisfaction Decisions. J. Mark. Res. 1980, 17, 460–469.

- Fornell, C.; Johnson, M.D.; Anderson, E.W.; Cha, J.; Bryant, B.E. The American Customer Satisfaction Index: Nature, Purpose, and Findings. J. Mark. 1996, 60, 7.

- Santini, F.D.O.; Ladeira, W.J.; Sampaio, C.H. The role of satisfaction in fashion marketing: A meta-analysis. J. Glob. Fash. Mark. 2018, 9, 305–321.

- Suchanek, P.; Králová, M. Customer satisfaction, loyalty, knowledge and competitiveness in the food industry. Econ. Res.-Ekon. Istraživanja 2019, 32, 1237–1255.

- Chung, M.; Ko, E.; Joung, H.; Kim, S.J. Chatbot e-service and customer satisfaction regarding luxury brands. J. Bus. Res. 2020, 117, 587–595.

- Ben Mimoun, M.S.; Poncin, I.; Garnier, M. Animated conversational agents and e-consumer productivity: The roles of agents and individual characteristics. Inf. Manag. 2017, 54, 545–559.

- Silva, G.R.S.; Canedo, E.D. Towards User-Centric Guidelines for Chatbot Conversational Design. Int. J. Hum.–Comput. Interact. 2022, 1–23.

- Jiang, H.; Cheng, Y.; Yang, J.; Gao, S. AI-powered chatbot communication with customers: Dialogic interactions, satisfaction, engagement, and customer behavior. Comput. Hum. Behav. 2022, 134, 107329.

- Nowak, K.L.; Rauh, C. Choose your “buddy icon” carefully: The influence of avatar androgyny, anthropomorphism and credibility in online interactions. Comput. Hum. Behav. 2008, 24, 1473–1493.

- Veeramootoo, N.; Nunkoo, R.; Dwivedi, Y.K. What determines success of an e-government service? Validation of an integrative model of e-filing continuance usage. Gov. Inf. Q. 2018, 35, 161–174.

- Jang, Y.-T.J.; Liu, A.Y.; Ke, W.-Y. Exploring smart retailing: Anthropomorphism in voice shopping of smart speaker. Inf. Technol. People 2022. ahead-of-print.

- Cheng, Y.; Jiang, H. How Do AI-driven Chatbots Impact User Experience? Examining Gratifications, Perceived Privacy Risk, Satisfaction, Loyalty, and Continued Use. J. Broadcast. Electron. Media 2020, 64, 592–614.

- Hsu, C.-L.; Lin, J.C.-C. Understanding the user satisfaction and loyalty of customer service chatbots. J. Retail. Consum. Serv. 2023, 71, 103211.

- Davis, F.D.; Bagozzi, R.P.; Warshaw, P.R. User acceptance of computer technology: A comparison of two theoretical models. Manag. Sci. 1989, 35, 982–1003.

- Park, S.Y. An analysis of the technology acceptance model in understanding university students’ behavioral intention to use e-learning. J. Educ. Technol. Soc. 2009, 12, 150–162.

- Aggelidis, V.P.; Chatzoglou, P.D. Using a modified technology acceptance model in hospitals. Int. J. Med. Inform. 2009, 78, 115–126.

- Lee, D.Y.; Lehto, M.R. User acceptance of YouTube for procedural learning: An extension of the Technology Acceptance Model. Comput. Educ. 2013, 61, 193–208.

- Elmorshidy, A.; Mostafa, M.M.; El-Moughrabi, I.; Al-Mezen, H. Factors Influencing Live Customer Support Chat Services: An Empirical Investigation in Kuwait. J. Theor. Appl. Electron. Commer. Res. 2015, 10, 63–76.

- Gümüş, N.; Çark, Ö. The Effect of Customers’ Attitudes Towards Chatbots on their Experience and Behavioural Intention in Turkey. Interdiscip. Descr. Complex Syst. 2021, 19, 420–436.

- Rese, A.; Ganster, L.; Baier, D. Chatbots in retailers’ customer communication: How to measure their acceptance? J. Retail. Consum. Serv. 2020, 56, 102176.

- Muchran, M.; Ahmar, A.S. Application of TAM model to the use of information technology. Int. J. Eng. Technol. 2018, 7, 37–40.

- Rahmayanti, P.L.D.; Widagda, I.G.N.J.A.; Yasa, N.N.K.; Giantari, I.G.A.K.; Martaleni, M.; Sakti, D.P.B.; Suwitho, S.; Anggreni, P. Integration of technology acceptance model and theory of reasoned action in predicting e-wallet continuous usage intentions. Int. J. Data Netw. Sci. 2021, 5, 649–658.

- Buabeng-Andoh, C. Predicting students’ intention to adopt mobile learning. J. Res. Innov. Teach. Learn. 2018, 11, 178–191.

- Chismar, W.G.; Wiley-Patton, S. Does the extended technology acceptance model apply to physicians. In Proceedings of the 36th Annual Hawaii International Conference on System Sciences, Big Island, HI, USA, 6–9 January 2003; p. 8.

- Huang, Y.-S.; Kao, W.-K. Chatbot service usage during a pandemic: Fear and social distancing. Serv. Ind. J. 2021, 41, 964–984.

- Arif, I.; Aslam, W.; Ali, M. Students’ dependence on smartphones and its effect on purchasing behavior. South Asian J. Glob. Bus. Res. 2016, 5, 285–302.

- Rahi, S.; Ghani, M.A.; Alnaser, F.M.; Ngah, A.H. Investigating the role of unified theory of acceptance and use of technology (UTAUT) in internet banking adoption context. Manag. Sci. Lett. 2018, 8, 173–186.

- Alalwan, A.A.; Dwivedi, Y.K.; Rana, N.P. Factors influencing adoption of mobile banking by Jordanian bank customers: Extending UTAUT2 with trust. Int. J. Inf. Manag. 2017, 37, 99–110.

- Palau-Saumell, R.; Forgas-Coll, S.; Sánchez-García, J.; Robres, E. User Acceptance of Mobile Apps for Restaurants: An Expanded and Extended UTAUT-2. Sustainability 2019, 11, 1210.

- Tak, P.; Panwar, S. Using UTAUT 2 model to predict mobile app based shopping: Evidences from India. J. Indian Bus. Res. 2017, 9, 248–264.

- Chang, C.-M.; Liu, L.-W.; Huang, H.-C.; Hsieh, H.-H. Factors Influencing Online Hotel Booking: Extending UTAUT2 with Age, Gender, and Experience as Moderators. Information 2019, 10, 281.

- Ajzen, I. The theory of planned behavior. In Handbook of Theories of Social Psychology; Van Lange, P.A.M., Kruglanski, A.W., Higgins, E.T., Eds.; SAGE: London, UK, 2012; pp. 438–459.

- Zahid, H.; Din, B.H. Determinants of Intention to Adopt E-Government Services in Pakistan: An Imperative for Sustainable Development. Resources 2019, 8, 128.

- Yap, C.S.; Ahmad, R.; Newaz, F.T.; Mason, C. Continuous Use Intention of E-Government Portals the Perspective of Older Citizens. Int. J. Electron. Gov. Res. 2019, 15, 1–16.

- Agag, G.; El-Masry, A.A. Why Do Consumers Trust Online Travel Websites? Drivers and Outcomes of Consumer Trust toward Online Travel Websites. J. Travel Res. 2017, 56, 347–369.

- Ahmad, S.; Bhatti, S.; Hwang, Y. E-service quality and actual use of e-banking: Explanation through the Technology Acceptance Model. Inf. Dev. 2020, 36, 503–519.

- Spears, N.; Singh, S.N. Measuring Attitude toward the Brand and Purchase Intentions. J. Curr. Issues Res. Advert. 2004, 26, 53–66.

- Glanz, K.; Rimer, B.K.; Viswanath, K. Health Behavior and Health Education: Theory, Research, and Practice; John Wiley & Sons: San Francisco, CA, USA, 2008.

- Hair, J.F.; Ringle, C.M.; Sarstedt, M. PLS-SEM: Indeed a Silver Bullet. J. Mark. Theory Pract. 2011, 19, 139–152.

- Chin, W.W. The partial least squares approach to structural equation modeling. In Modern Methods for Business Research; Marcoulides, G.A., Ed.; Lawrence Erlbaum Associates Publishers: New York, NY, USA, 1998; Volume 295, pp. 295–336.

- Sekaran, U.; Bougie, R. Research Methods for Business: A Skill Building Approach; John Wiley & Sons: Chichester, UK, 2016.

- Bagozzi, R.P.; Yi, Y. On the evaluation of structural equation models. J. Acad. Mark. Sci. 1988, 16, 74–94.

- Fornell, C.; Larcker, D.F. Evaluating structural equation models with unobservable variables and measurement error. J. Mark. Res. 1981, 18, 39–50.

- Streukens, S.; Leroi-Werelds, S. Bootstrapping and PLS-SEM: A step-by-step guide to get more out of your bootstrap results. Eur. Manag. J. 2016, 34, 618–632.

- Cohen, J. Statistical Power Analysis for the Behavioral Sciences; Routledge: New York, NY, USA, 2013.

- Stone, M. Cross-Validatory Choice and Assessment of Statistical Predictions. J. R. Stat. Soc. Ser. B (Methodol.) 1974, 36, 111–133.

- Geisser, S. A predictive approach to the random effect model. Biometrika 1974, 61, 101–107.

- Pal, D.; Roy, P.; Arpnikanondt, C.; Thapliyal, H. The effect of trust and its antecedents towards determining users’ behavioral intention with voice-based consumer electronic devices. Heliyon 2022, 8, e09271.

- Caldarini, G.; Jaf, S.; McGarry, K. A Literature Survey of Recent Advances in Chatbots. Information 2022, 13, 41.

| Items | Mean | S.D. |
|---|---|---|
| Attitude toward using chatbots [109], 7-point Likert scale (1 = Strongly disagree to 7 = Strongly agree) | | |
| ATT1: Tick the option that best describes your opinion about the use of chatbots: good/bad | 3.39 | 1.03 |
| ATT2: Tick the option that best describes your opinion about the use of chatbots: favorable/unfavorable | 3.33 | 1.12 |
| ATT3: Tick the option that best describes your opinion about the use of chatbots: high-quality/low-quality | 3.00 | 0.98 |
| ATT4: Tick the option that best describes your opinion about the use of chatbots: positive/negative | 3.39 | 1.02 |
| ATT5: Tick the option that best describes your opinion about the use of chatbots: lacks important benefits/offers important benefits | 3.38 | 1.03 |
| Satisfaction [77], 5-point Likert scale (1 = Strongly disagree to 5 = Strongly agree) | | |
| SAT1: I am satisfied with chatbots | 3.21 | 0.94 |
| SAT2: I am content with chatbots | 3.17 | 0.95 |
| SAT3: The chatbots did a good job | 3.28 | 0.93 |
| SAT4: The chatbots did what I expected | 3.30 | 0.97 |
| SAT5: I am happy with the chatbots | 3.05 | 0.92 |
| SAT6: I was satisfied with the experience of interacting with chatbots | 3.27 | 0.95 |
| Perceived usefulness [41,55,95], 7-point Likert scale (1 = Strongly disagree to 7 = Strongly agree) | | |
| PUS1: Using chatbots improves my performance | 2.98 | 1.01 |
| PUS2: Using chatbots increases my productivity | 3.01 | 1.04 |
| PUS3: Using chatbots enhances my effectiveness to perform tasks | 3.07 | 1.06 |
| PUS4: I find chatbots useful in my daily life | 2.96 | 1.13 |
| PUS5: Using chatbots enables me to accomplish tasks more quickly | 3.22 | 1.18 |
| PUS6: Using chatbots would increase my efficiency | 3.03 | 1.13 |
| Perceived ease of use [41,95], 7-point Likert scale (1 = Strongly disagree to 7 = Strongly agree) | | |
| PEU1: My interaction with chatbots is clear and understandable | 3.45 | 1.03 |
| PEU2: Interacting with chatbots does not require a lot of mental effort | 3.57 | 1.05 |
| PEU3: I find chatbots to be easy to use | 3.78 | 0.95 |
| PEU4: I find it easy to get the chatbots to do what I want them to do | 3.12 | 0.99 |
| PEU5: It is easy for me to become skillful at using chatbots | 3.62 | 0.96 |
| PEU6: I have the knowledge necessary to use chatbots | 3.72 | 1.01 |
| Subjective norm [95,110], 7-point Likert scale (1 = Strongly disagree to 7 = Strongly agree) | | |
| SNO1: People who influence my behavior think that I should use chatbots | 2.60 | 1.00 |
| SNO2: People who are important to me will support me to use chatbots | 2.97 | 1.01 |
| SNO3: People whose views I respect support the use of chatbots | 3.03 | 0.96 |
| SNO4: It is expected of me to use chatbots | 3.12 | 1.07 |
| SNO5: I feel under social pressure to use chatbots | 2.16 | 1.17 |
| Trust [18], 7-point Likert scale (1 = Strongly disagree to 7 = Strongly agree) | | |
| TRU1: I feel that the chatbots are trustworthy | 2.99 | 0.87 |
| TRU2: I do not think that chatbots will act in a way that is disadvantageous to me | 3.10 | 0.83 |
| TRU3: I trust in chatbots | 3.07 | 0.93 |
| Perceived social presence [34], 7-point Likert scale (1 = Strongly disagree to 7 = Strongly agree) | | |
| PSP1: I feel a sense of human contact when interacting with chatbots | 2.53 | 1.17 |
| PSP2: Even though I could not see chatbots in real life, there was a sense of human warmth | 2.27 | 1.11 |
| PSP3: When interacting with chatbots, there was a sense of sociability | 2.42 | 1.13 |
| PSP4: I felt there was a person who was a real source of comfort to me | 2.26 | 1.14 |
| PSP5: I feel there was a person who is around when I am in need | 2.35 | 1.19 |
| Reuse intention [18], 7-point Likert scale (1 = Strongly disagree to 7 = Strongly agree) | | |
| INT1: If I have access to chatbots, I will use it | 3.38 | 1.00 |
| INT2: I think my interest in chatbots will increase in the future | 3.40 | 0.98 |
| INT3: I will use chatbots as much as possible | 2.94 | 1.00 |
| INT4: I plan to use chatbots in the future | 3.35 | 0.99 |

| Variables | Factor Loadings | α | CR | AVE | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| ATT | 0.800–0.886 | 0.92 | 0.92 | 0.74 | 0.86 | | | | | | | |
| CI | 0.805–0.875 | 0.91 | 0.91 | 0.71 | 0.61 | 0.84 | | | | | | |
| PEU | 0.509–0.918 | 0.86 | 0.86 | 0.55 | 0.58 | 0.59 | 0.75 | | | | | |
| PSP | 0.811–0.984 | 0.96 | 0.96 | 0.82 | 0.33 | 0.45 | 0.24 | 0.91 | | | | |
| PUS | 0.862–0.897 | 0.95 | 0.95 | 0.77 | 0.60 | 0.77 | 0.53 | 0.38 | 0.88 | | | |
| SAT | 0.752–0.958 | 0.96 | 0.96 | 0.80 | 0.70 | 0.81 | 0.65 | 0.47 | 0.70 | 0.89 | | |
| SNO | 0.506–0.811 | 0.80 | 0.80 | 0.50 | 0.33 | 0.57 | 0.36 | 0.44 | 0.60 | 0.41 | 0.71 | |
| TRU | 0.659–0.886 | 0.84 | 0.84 | 0.65 | 0.52 | 0.56 | 0.42 | 0.37 | 0.48 | 0.56 | 0.37 | 0.80 |
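The discriminant-validity reading of the table above can be sketched in a few lines. The sketch assumes the Fornell–Larcker criterion (the square root of each construct's AVE should exceed its correlations with every other construct) and assumes that the diagonal of columns 1–8 holds those square roots; the function name `fornell_larcker_violations` and the dictionary layout are illustrative choices, not part of the study.

```python
import math

# AVE per construct, copied from the measurement-model table.
ave = {"ATT": 0.74, "CI": 0.71, "PEU": 0.55, "PSP": 0.82,
       "PUS": 0.77, "SAT": 0.80, "SNO": 0.50, "TRU": 0.65}

# Lower-triangle inter-construct correlations from the same table.
corr = {
    ("CI", "ATT"): 0.61,
    ("PEU", "ATT"): 0.58, ("PEU", "CI"): 0.59,
    ("PSP", "ATT"): 0.33, ("PSP", "CI"): 0.45, ("PSP", "PEU"): 0.24,
    ("PUS", "ATT"): 0.60, ("PUS", "CI"): 0.77, ("PUS", "PEU"): 0.53,
    ("PUS", "PSP"): 0.38,
    ("SAT", "ATT"): 0.70, ("SAT", "CI"): 0.81, ("SAT", "PEU"): 0.65,
    ("SAT", "PSP"): 0.47, ("SAT", "PUS"): 0.70,
    ("SNO", "ATT"): 0.33, ("SNO", "CI"): 0.57, ("SNO", "PEU"): 0.36,
    ("SNO", "PSP"): 0.44, ("SNO", "PUS"): 0.60, ("SNO", "SAT"): 0.41,
    ("TRU", "ATT"): 0.52, ("TRU", "CI"): 0.56, ("TRU", "PEU"): 0.42,
    ("TRU", "PSP"): 0.37, ("TRU", "PUS"): 0.48, ("TRU", "SAT"): 0.56,
    ("TRU", "SNO"): 0.37,
}


def fornell_larcker_violations(ave, corr):
    """Return the construct pairs whose correlation is not below
    the smaller of the two constructs' sqrt(AVE) values."""
    root = {name: math.sqrt(value) for name, value in ave.items()}
    return [(a, b, r) for (a, b), r in corr.items()
            if abs(r) >= min(root[a], root[b])]


violations = fornell_larcker_violations(ave, corr)
print("violations:", violations)
```

With the rounded values reported in the table, the list of violations comes out empty; the tightest pair is SAT and CI (0.81 against a square-root AVE of about 0.84 for CI).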

| Endogenous Constructs | R² | Q² |
|---|---|---|
| Attitude toward using | 0.496 | 0.339 |
| Reuse intention | 0.760 | 0.513 |
| Perceived Usefulness | 0.500 | 0.343 |
| Satisfaction | 0.317 | 0.213 |
| Trust | 0.138 | 0.081 |

| Path | β | T Value | p Value | VIF | f² |
|---|---|---|---|---|---|
| | 0.563 | 6.427 | 0.001 | 1.000 | 0.464 |
| | 0.204 | 2.501 | 0.012 | 1.300 | 0.064 |
| | 0.240 | 2.300 | 0.021 | 1.368 | 0.083 |
| | 0.080 | 1.136 | 0.256 | 1.608 | 0.016 |
| | 0.372 | 5.112 | 0.001 | 1.000 | 0.160 |
| | 0.010 | 0.180 | 0.857 | 1.461 | 0.001 |
| | 0.474 | 4.326 | 0.001 | 3.312 | 0.282 |
| | 0.326 | 4.149 | 0.001 | 1.555 | 0.135 |
| | 0.284 | 3.387 | 0.001 | 2.678 | 0.125 |
| | 0.288 | 3.014 | 0.003 | 1.288 | 0.130 |
| | 0.304 | 3.848 | 0.001 | 1.453 | 0.127 |
| | 0.049 | 0.656 | 0.512 | 1.881 | 0.005 |
| | 0.420 | 6.184 | 0.001 | 1.230 | 0.287 |
| | 0.167 | 2.148 | 0.032 | 1.762 | 0.066 |
| | −0.022 | 0.293 | 0.770 | 2.225 | 0.001 |
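As a rough aid to reading the effect-size column, the following sketch computes Cohen's f² from R² values. It assumes the usual definition, f² = (R² with the predictor − R² without it) / (1 − R² with the predictor), which reduces to R² / (1 − R²) when a construct has a single predictor. The path labels are not available in the table above, so treating Satisfaction and Trust as single-predictor constructs is an inference from the two rows with VIF = 1.000, not something the table states; `cohen_f2` is a hypothetical helper name.

```python
def cohen_f2(r2_included: float, r2_excluded: float = 0.0) -> float:
    """Cohen's f-squared for one predictor of an endogenous construct.

    r2_included: R² of the construct with the predictor in the model.
    r2_excluded: R² with the predictor removed (0.0 if it is the only one).
    """
    if not 0.0 <= r2_excluded <= r2_included < 1.0:
        raise ValueError("expected 0 <= r2_excluded <= r2_included < 1")
    return (r2_included - r2_excluded) / (1.0 - r2_included)


# R² values taken from the endogenous-constructs table.
f2_satisfaction = cohen_f2(0.317)  # assumed single predictor
f2_trust = cohen_f2(0.138)         # assumed single predictor

print(round(f2_satisfaction, 3), round(f2_trust, 3))
```

Rounded to three decimals, the two results match the f² values in the rows with VIF = 1.000 (0.464 and 0.160), which is consistent with the single-predictor assumption but does not identify the predictors themselves.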

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Silva, F.A.; Shojaei, A.S.; Barbosa, B. Chatbot-Based Services: A Study on Customers’ Reuse Intention. J. Theor. Appl. Electron. Commer. Res. 2023, 18, 457-474. https://doi.org/10.3390/jtaer18010024