Investigation of Tactile Sensory System Configuration for Construction Hazard Perception

Abstract

1. Introduction

1.1. Background

1.2. Research with Tactile Sensors

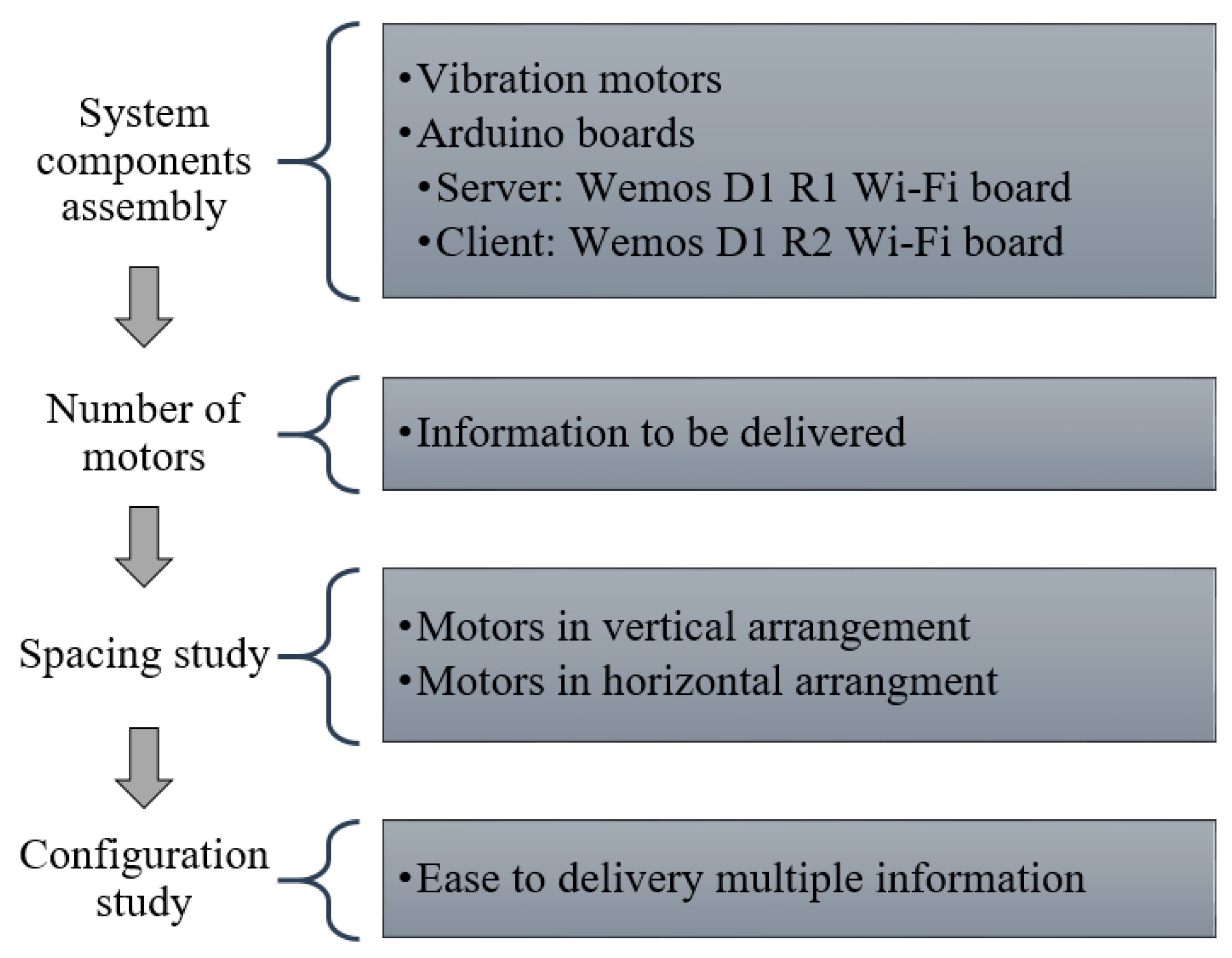

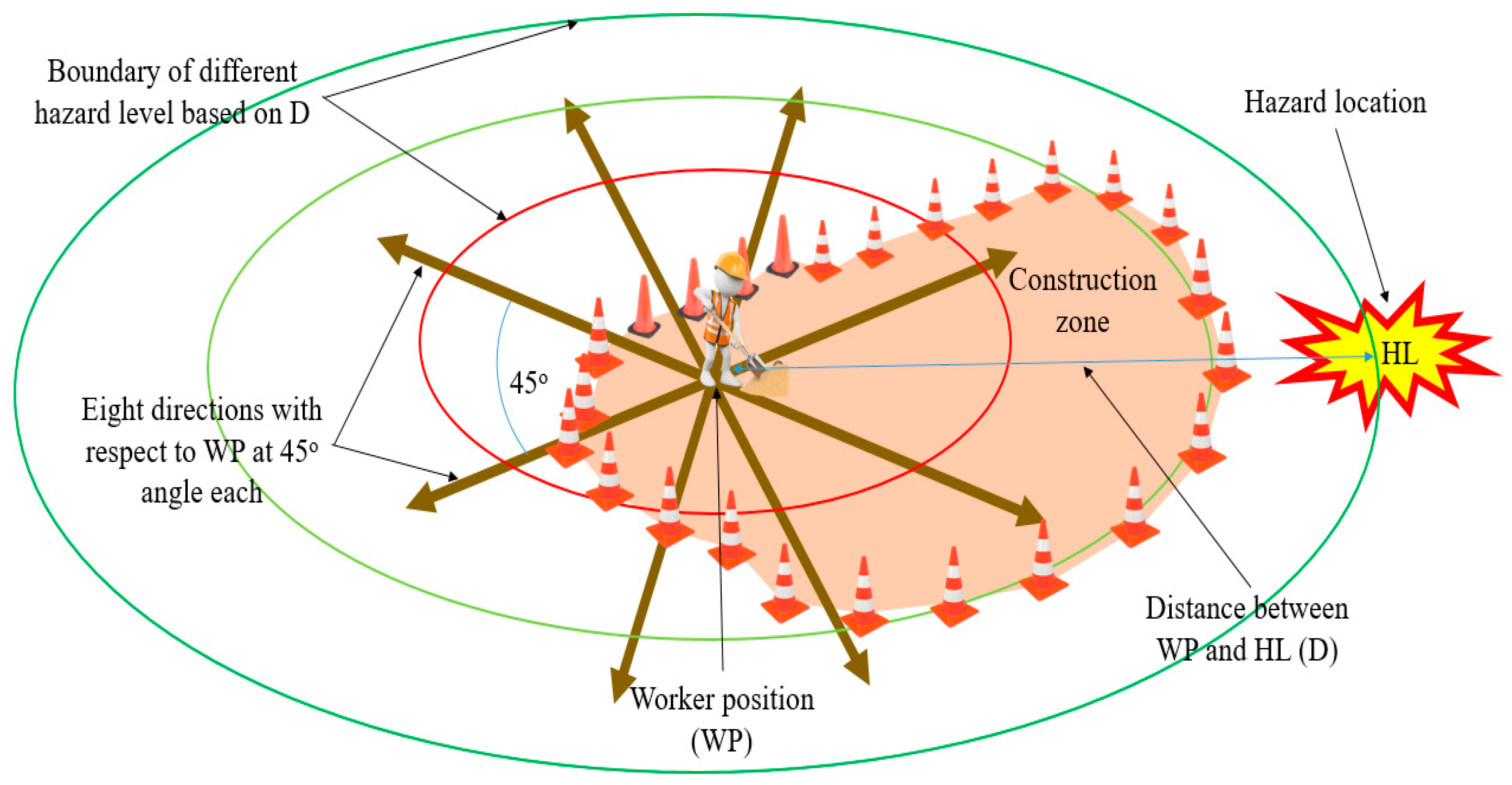

2. Research Objective and Scope

- To determine the number of vibration motors the system needs to deliver hazard information effectively.

- To determine the optimum spacing between the vibration sensors along both the horizontal and vertical axes, that is, the spacing that yields higher accuracy in distinguishing signals from adjacent sensors.

- To determine an arrangement of vibration motors that transmits easily distinguishable, meaningful signals with high perception accuracy.
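The second objective is, in effect, a trade-off between a compact motor layout and perception accuracy. As an illustration only, the sketch below takes the overall accuracies reported in the spacing-study results and returns the most compact spacing that still meets a target accuracy. The target values and the "smallest footprint v × h" rule are hypothetical choices for this sketch, not criteria stated by the authors.

```python
# Overall accuracy (%) per candidate spacing, as reported in the spacing-study results.
# Keys are (vertical v, horizontal h) spacing in inches.
OVERALL_ACCURACY = {
    (3.25, 3.25): 99.89,
    (3.00, 3.00): 99.89,
    (2.75, 2.75): 98.67,
    (2.50, 2.50): 97.94,
    (2.25, 2.50): 96.83,
    (2.00, 2.50): 95.50,
}


def smallest_acceptable_spacing(accuracy_by_spacing, target_pct):
    """Return the most compact (v, h) spacing whose accuracy meets target_pct.

    "Most compact" is assumed here to mean the smallest footprint v * h; both this
    rule and target_pct are illustrative assumptions, not the authors' criterion.
    Returns None if no spacing meets the target.
    """
    acceptable = [s for s, acc in accuracy_by_spacing.items() if acc >= target_pct]
    if not acceptable:
        return None
    return min(acceptable, key=lambda s: s[0] * s[1])


print(smallest_acceptable_spacing(OVERALL_ACCURACY, 97.0))  # (2.5, 2.5)
print(smallest_acceptable_spacing(OVERALL_ACCURACY, 98.0))  # (2.75, 2.75)
```

Raising the hypothetical target from 97% to 98% pushes the choice from spacing No. 4 back to No. 3, which is the kind of sensitivity a designer would want to see before fixing a spacing.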

3. Materials and Methods

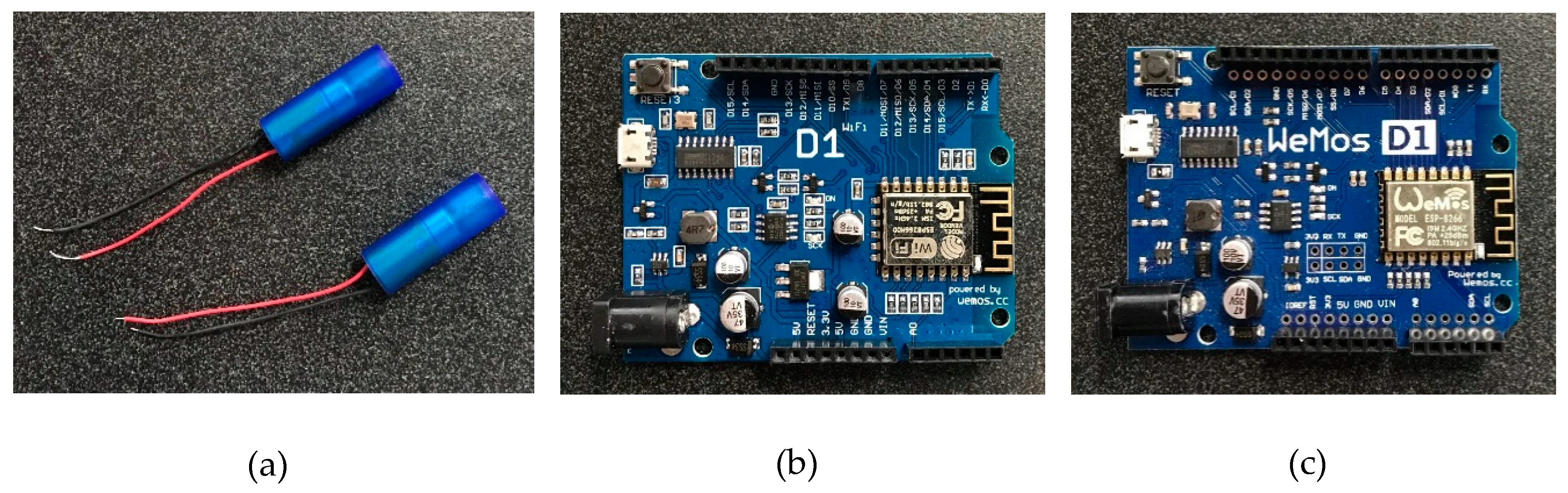

3.1. Assembly of System Components

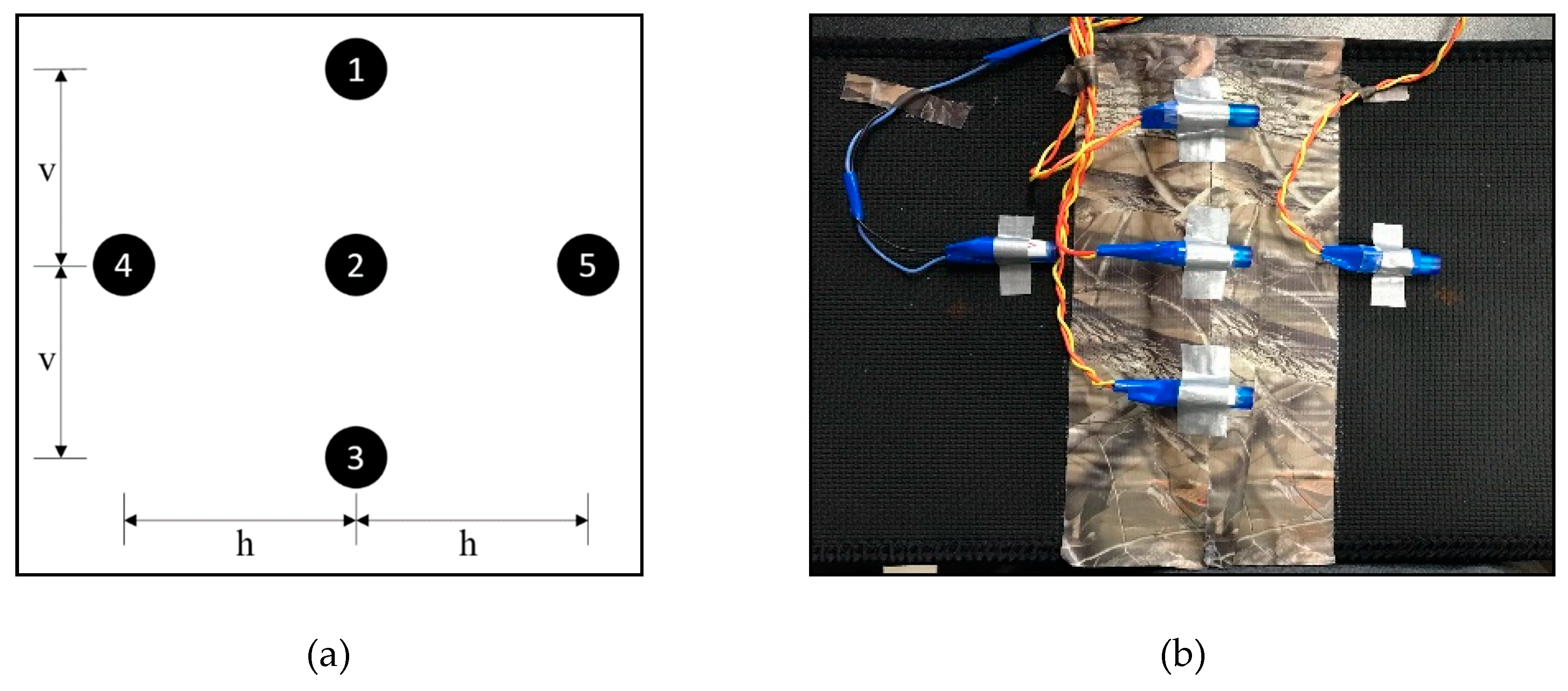

3.2. Number of Vibration Motors

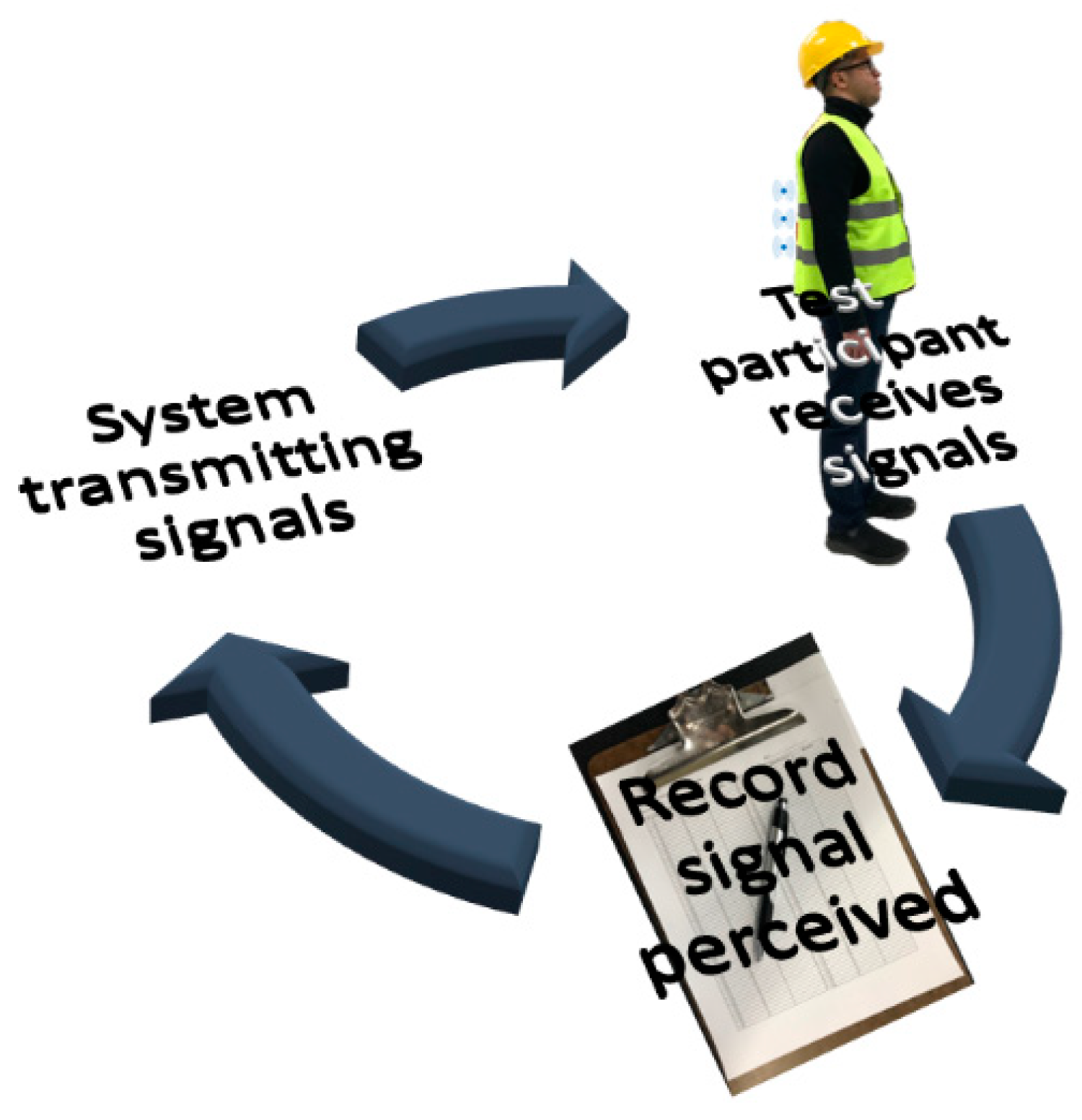

3.3. Experimental Study

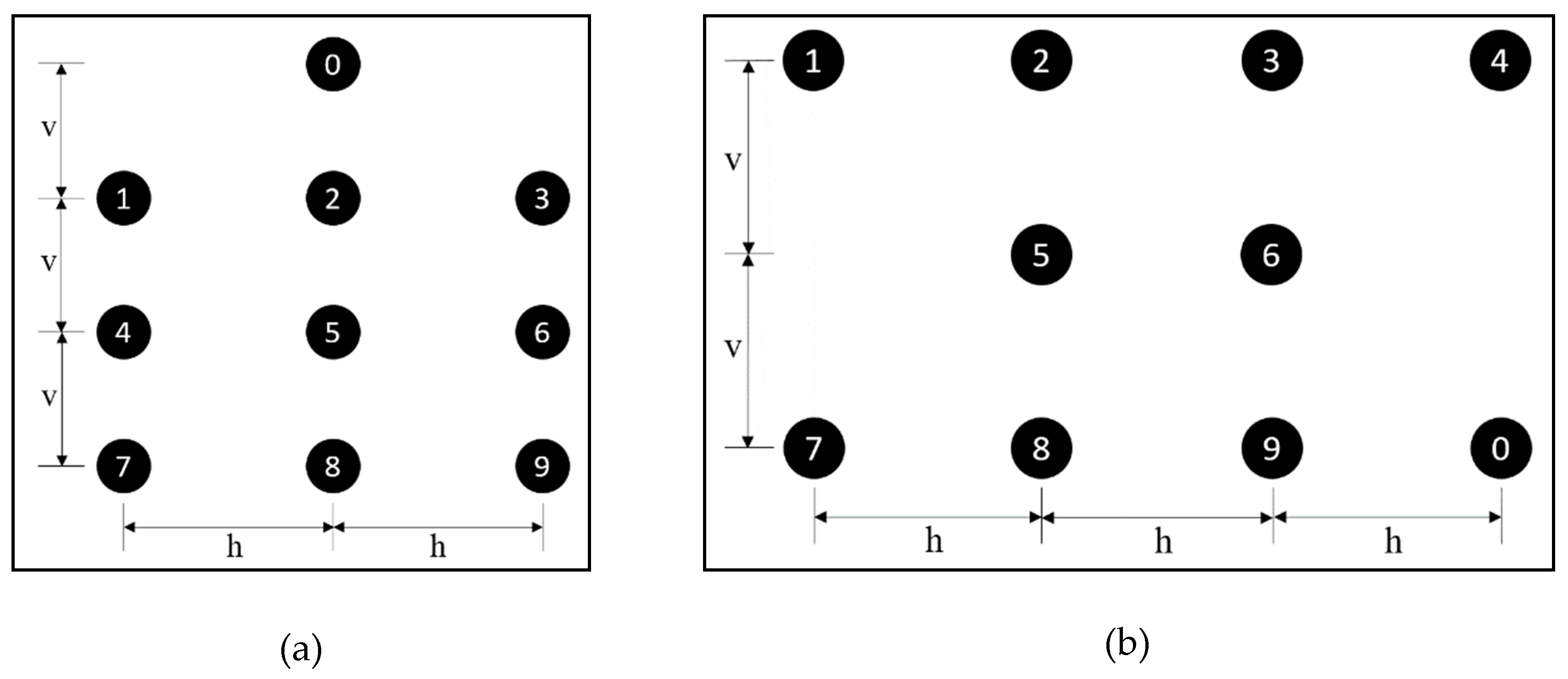

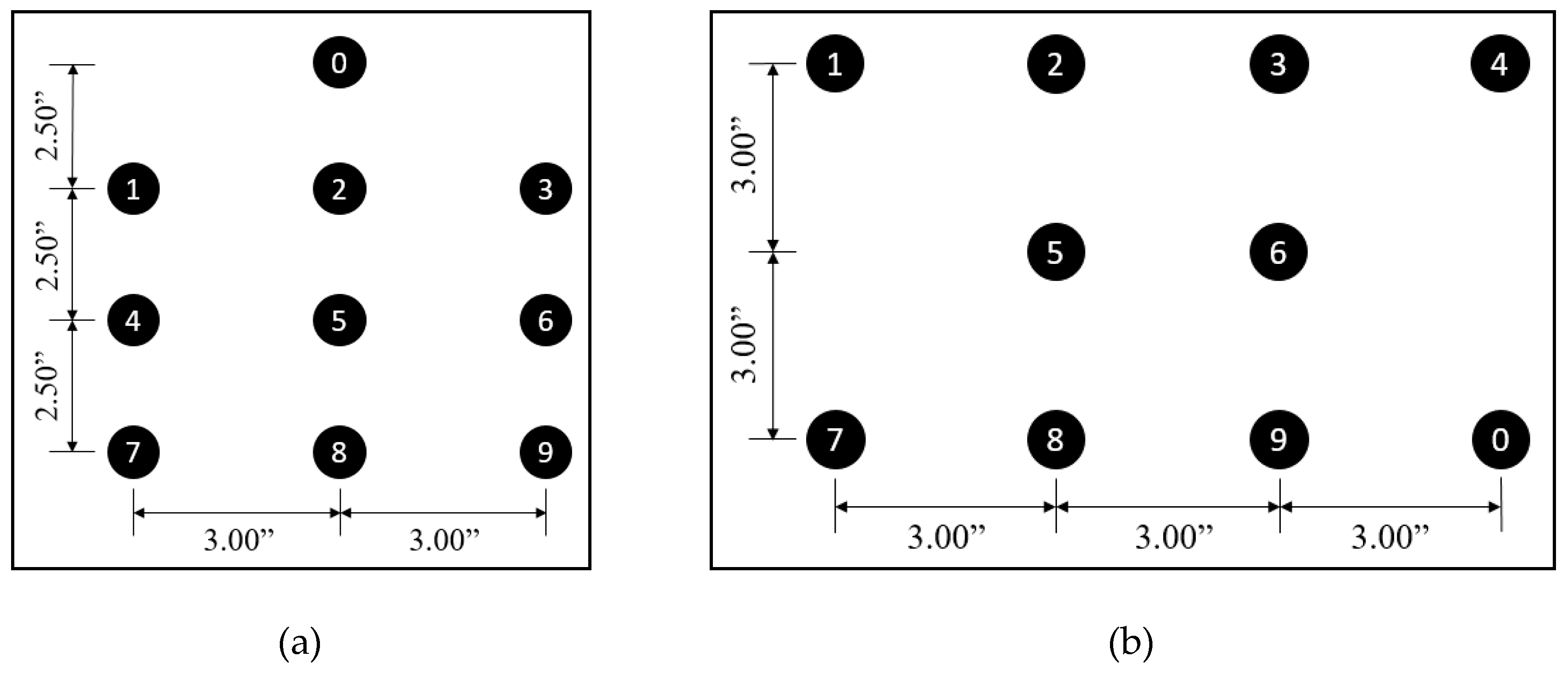

3.3.1. Spacing of Vibration Sensors

3.3.2. Configuration of Vibratory Sensors on Waist Belt

Preliminary Test

Follow-Up Test on Selected Configuration

4. Experimental Study Results

4.1. Spacing Study Results

4.2. Configuration Study

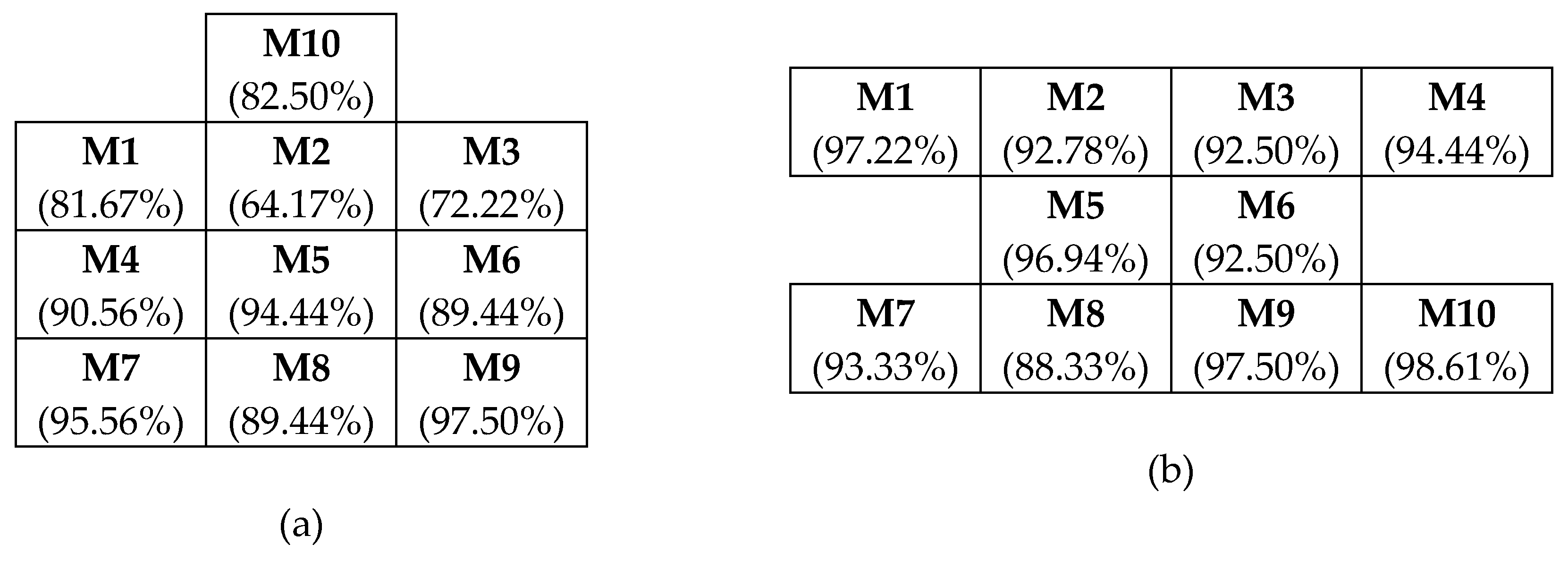

4.2.1. Preliminary Test Results

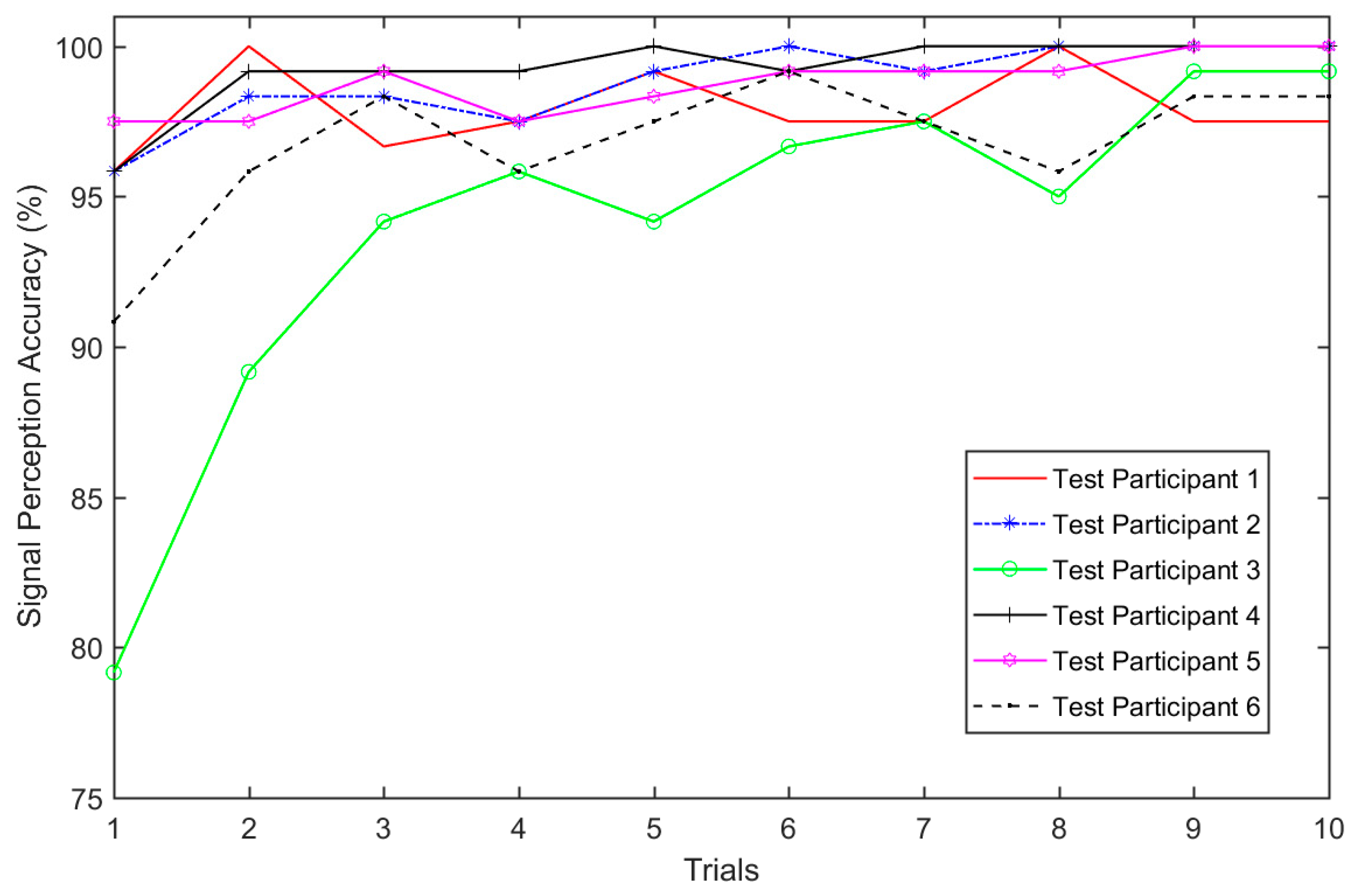

4.2.2. Follow-Up Test Results

5. Discussion

6. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Spacing No. | Vertical Spacing, v (inch) | Horizontal Spacing, h (inch) |
|---|---|---|
| 1 | 3.25 | 3.25 |
| 2 | 3.00 | 3.00 |
| 3 | 2.75 | 2.75 |
| 4 | 2.50 | 2.50 |
| 5 | 2.25 | 2.50 |
| 6 | 2.00 | 2.50 |
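To make the meaning of v and h concrete, the sketch below places five motors for each candidate spacing. The plus-shaped layout (M2 at the center, M1 and M3 on the vertical axis, M4 and M5 on the horizontal axis) is an assumption inferred from the accuracy tables that follow: for spacings 4 to 6 only v shrinks while h stays at 2.50 in, and over that range M1 and M3 lose accuracy while M4 and M5 stay at or near 100%; the confusion tables also group M1-M2-M3 and M4-M2-M5. The function name `plus_layout` is hypothetical.

```python
from typing import Dict, Tuple

# Candidate spacings from the table above: spacing No. -> (v, h) in inches.
SPACINGS: Dict[int, Tuple[float, float]] = {
    1: (3.25, 3.25), 2: (3.00, 3.00), 3: (2.75, 2.75),
    4: (2.50, 2.50), 5: (2.25, 2.50), 6: (2.00, 2.50),
}


def plus_layout(v: float, h: float) -> Dict[str, Tuple[float, float]]:
    """Hypothetical plus-shaped test pattern (an assumption, not the authors' drawing).

    M2 sits at the origin; M1 and M3 are one vertical spacing v above and below it;
    M4 and M5 are one horizontal spacing h to its left and right.
    Returns (x, y) coordinates in inches.
    """
    return {
        "M1": (0.0, v),
        "M2": (0.0, 0.0),
        "M3": (0.0, -v),
        "M4": (-h, 0.0),
        "M5": (h, 0.0),
    }


for no, (v, h) in SPACINGS.items():
    layout = plus_layout(v, h)
    # The pattern spans 2h horizontally (M4 to M5) and 2v vertically (M1 to M3).
    width = layout["M5"][0] - layout["M4"][0]
    height = layout["M1"][1] - layout["M3"][1]
    print(f"No. {no}: {width:.2f} in wide, {height:.2f} in tall")
```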

| Spacing No. | Vertical Spacing, v (inch) | Horizontal Spacing, h (inch) | M1 Accuracy (%) | M2 Accuracy (%) | M3 Accuracy (%) | M4 Accuracy (%) | M5 Accuracy (%) | Overall (%) |
|---|---|---|---|---|---|---|---|---|
| 1 | 3.25 | 3.25 | 99.44 | 100 | 100 | 100 | 100 | 99.89 |
| 2 | 3.00 | 3.00 | 99.72 | 100 | 99.72 | 100 | 100 | 99.89 |
| 3 | 2.75 | 2.75 | 94.17 | 99.17 | 100 | 100 | 100 | 98.67 |
| 4 | 2.50 | 2.50 | 94.72 | 98.61 | 96.67 | 99.72 | 100 | 97.94 |
| 5 | 2.25 | 2.50 | 90.28 | 98.06 | 95.83 | 100 | 100 | 96.83 |
| 6 | 2.00 | 2.50 | 86.67 | 97.50 | 93.33 | 100 | 100 | 95.50 |
| Overall Accuracy (%) | | | 94.17 | 98.89 | 97.59 | 99.95 | 100 | |
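The Overall column and the Overall Accuracy row of the table above appear to be unweighted means, over the five motors for each spacing and over the six spacings for each motor. The sketch below recomputes both from the per-motor values and reproduces the reported figures; it assumes equal numbers of trials per motor and per spacing, which the table itself does not state.

```python
from statistics import mean

# Per-motor identification accuracy (%) for each spacing No., motors M1..M5 (table above).
ACCURACY = {
    1: [99.44, 100, 100, 100, 100],
    2: [99.72, 100, 99.72, 100, 100],
    3: [94.17, 99.17, 100, 100, 100],
    4: [94.72, 98.61, 96.67, 99.72, 100],
    5: [90.28, 98.06, 95.83, 100, 100],
    6: [86.67, 97.50, 93.33, 100, 100],
}

# "Overall" column: unweighted mean over the five motors for one spacing.
row_overall = {no: round(mean(accs), 2) for no, accs in ACCURACY.items()}

# "Overall Accuracy" row: unweighted mean over the six spacings for one motor.
col_overall = [round(mean(col), 2) for col in zip(*ACCURACY.values())]

print(row_overall)
print(col_overall)
```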

| Activated \ Identified | M1 | M2 | M3 |
|---|---|---|---|
| M1 | 94.17% | 5.83% | 0.00% |
| M2 | 0.83% | 99.03% | 0.14% |
| M3 | 0.00% | 2.36% | 97.64% |

| Activated \ Identified | M4 | M2 | M5 |
|---|---|---|---|
| M4 | 99.95% | 0.05% | 0.00% |
| M2 | 0.05% | 99.86% | 0.09% |
| M5 | 0.00% | 0.00% | 100.00% |
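In both confusion tables each row sums to 100%, and the M1, M4, and M5 diagonal entries equal those motors' values in the Overall Accuracy row of the spacing-results table. That suggests rows are the activated motor and columns the motor the participant reported, although this reading is an inference. The sketch below checks the row sums and finds the most frequent confusion in each motor group; the helper names are hypothetical.

```python
# Confusion matrices (%) from the two tables above. Rows are taken to be the
# activated motor and columns the identified motor (an inference, see text).
VERTICAL = {
    "M1": {"M1": 94.17, "M2": 5.83, "M3": 0.00},
    "M2": {"M1": 0.83, "M2": 99.03, "M3": 0.14},
    "M3": {"M1": 0.00, "M2": 2.36, "M3": 97.64},
}
HORIZONTAL = {
    "M4": {"M4": 99.95, "M2": 0.05, "M5": 0.00},
    "M2": {"M4": 0.05, "M2": 99.86, "M5": 0.09},
    "M5": {"M4": 0.00, "M2": 0.00, "M5": 100.00},
}


def row_sums(matrix):
    """Sum each row; every row should come to about 100% if rows are activations."""
    return {motor: round(sum(row.values()), 2) for motor, row in matrix.items()}


def largest_confusion(matrix):
    """Return (activated, identified, pct) for the largest off-diagonal cell."""
    return max(
        ((a, b, pct) for a, row in matrix.items() for b, pct in row.items() if a != b),
        key=lambda cell: cell[2],
    )


print(row_sums(VERTICAL), largest_confusion(VERTICAL))
print(row_sums(HORIZONTAL), largest_confusion(HORIZONTAL))
```

Under this reading, the dominant error is an M1 activation reported as M2, and all vertical-group errors occur between neighboring motors (no M1/M3 confusion).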

| Test Participant | 1-3-3-3 Minimum Accuracy | 1-3-3-3 Maximum Accuracy | 1-3-3-3 Average Accuracy | 4-2-4 Minimum Accuracy | 4-2-4 Maximum Accuracy | 4-2-4 Average Accuracy |
|---|---|---|---|---|---|---|
| P1 | 82.50% | 91.67% | 87.17% | 90.83% | 96.67% | 94.33% |
| P2 | 85.00% | 89.17% | 87.17% | 93.33% | 97.50% | 95.83% |
| P3 | 73.33% | 86.67% | 81.17% | 80.83% | 96.67% | 90.67% |
| P4 | 79.17% | 92.50% | 84.33% | 90.83% | 99.17% | 95.50% |
| P5 | 73.33% | 92.50% | 85.33% | 89.17% | 99.17% | 93.50% |
| P6 | 82.50% | 94.17% | 89.33% | 89.17% | 100.00% | 96.67% |
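Because every participant in the table above was tested on both configurations, the two average-accuracy columns can be compared pairwise. The sketch below computes each participant's change from the 1-3-3-3 to the 4-2-4 configuration and the unweighted mean across participants. Weighting participants equally is an assumption of this sketch, and it does not test statistical significance.

```python
from statistics import mean

# Average accuracy (%) per participant for each configuration (table above).
AVG_1333 = {"P1": 87.17, "P2": 87.17, "P3": 81.17, "P4": 84.33, "P5": 85.33, "P6": 89.33}
AVG_424 = {"P1": 94.33, "P2": 95.83, "P3": 90.67, "P4": 95.50, "P5": 93.50, "P6": 96.67}

# Paired difference in percentage points: 4-2-4 minus 1-3-3-3 for the same participant.
diff = {p: round(AVG_424[p] - AVG_1333[p], 2) for p in AVG_1333}

print(diff)
print(round(mean(AVG_1333.values()), 2), round(mean(AVG_424.values()), 2))
print("4-2-4 higher for every participant:", all(d > 0 for d in diff.values()))
```

On these figures the 4-2-4 configuration is higher for all six participants, by roughly 7 to 11 percentage points.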

| Motors | M1 | M2 | M3 | M4 | M5 | M6 | M7 | M8 | M9 | M10 |
|---|---|---|---|---|---|---|---|---|---|---|
| Accuracy (%) | 98.89 | 96.81 | 98.19 | 99.72 | 99.58 | 98.06 | 98.19 | 90.56 | 97.22 | 98.61 |
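The per-motor results in the table above can be screened for motors that lag the rest of the belt. The sketch below finds the weakest motor, the unweighted mean over the ten motors, and any motor more than 5 percentage points below that mean; the 5-point threshold is a hypothetical screening rule, not one used by the authors.

```python
from statistics import mean

# Identification accuracy (%) of each motor in the follow-up test (table above).
FOLLOW_UP = {
    "M1": 98.89, "M2": 96.81, "M3": 98.19, "M4": 99.72, "M5": 99.58,
    "M6": 98.06, "M7": 98.19, "M8": 90.56, "M9": 97.22, "M10": 98.61,
}

weakest = min(FOLLOW_UP, key=FOLLOW_UP.get)
average = round(mean(FOLLOW_UP.values()), 2)

# Hypothetical screening rule: flag motors more than 5 points below the mean.
flagged = [m for m, acc in FOLLOW_UP.items() if acc < average - 5]

print(weakest, FOLLOW_UP[weakest], average, flagged)
```

With these values only M8 (90.56%) falls well below the ten-motor mean of about 97.58%.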

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Sakhakarmi, S.; Park, J. Investigation of Tactile Sensory System Configuration for Construction Hazard Perception. Sensors 2019, 19, 2527. https://doi.org/10.3390/s19112527
