Operationalising CTT and IRT in Spreadsheets: A Methodological Demonstration for Classroom Assessment

Abstract

1. Introduction

2. Theoretical Framework

2.1. Classical Test Theory and Item Response Theory

2.2. Logistic Models in Item Response Theory (1PL, 2PL, 3PL)

2.3. Competence-Based Assessment Using CTT and IRT

2.4. Multiple-Choice Items and Psychometric Analysis

2.5. The Literature Gap: Democratising Psychometric Analysis Through Accessible Tools

3. Research Questions and Objectives

- RQ1—Which statistical analyses, feasible through the use of spreadsheets, can improve the robustness of test analysis?

- O1—To enhance spreadsheet-based scoring tools with accessible formulas that support improved statistical analysis of assessment instruments.

- RQ2—How can statistical results generated in spreadsheets be meaningfully interpreted by teachers?

- O2—To present the foundational principles necessary for effective statistical analysis of applied assessment instruments.

- RQ3—How can statistical analysis results be used to improve item construction or support the development of an assessment item database?

- O3—To use statistical evidence to refine test items, inform assessment strategies, and structure a reusable item database.

4. Methodology

5. Results

5.1. Descriptive Statistics of Total Scores

5.2. Classical Test Theory Results

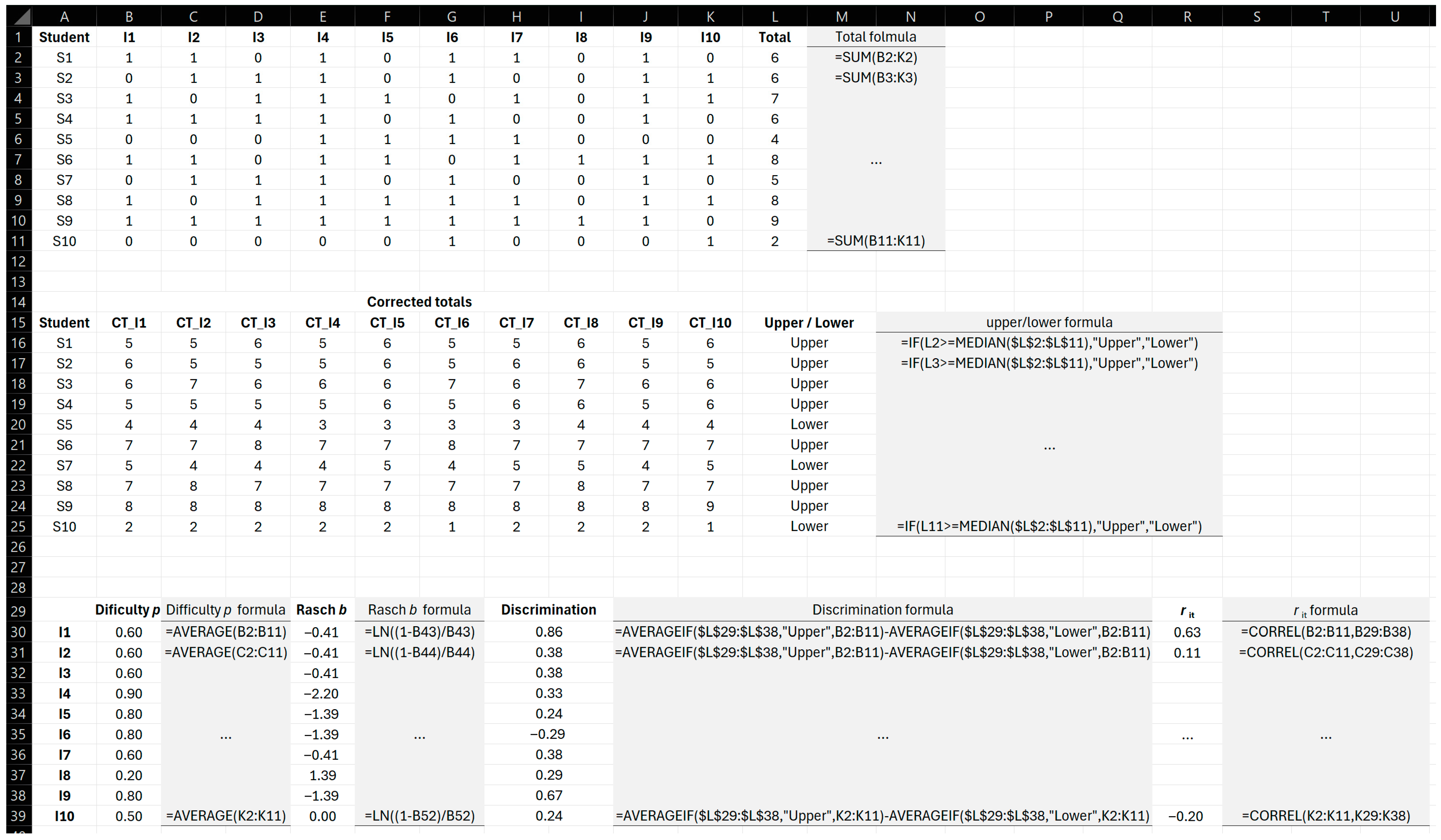

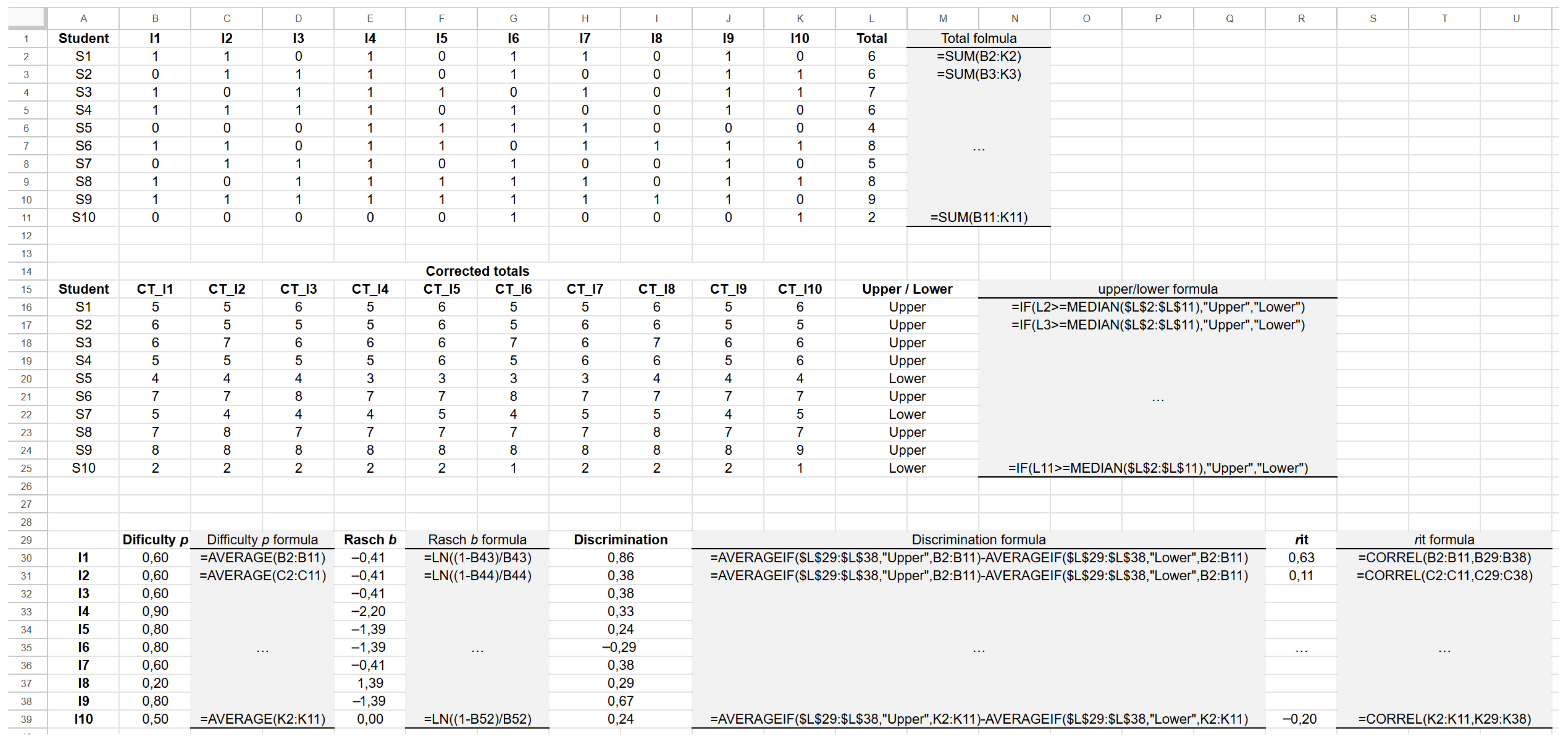

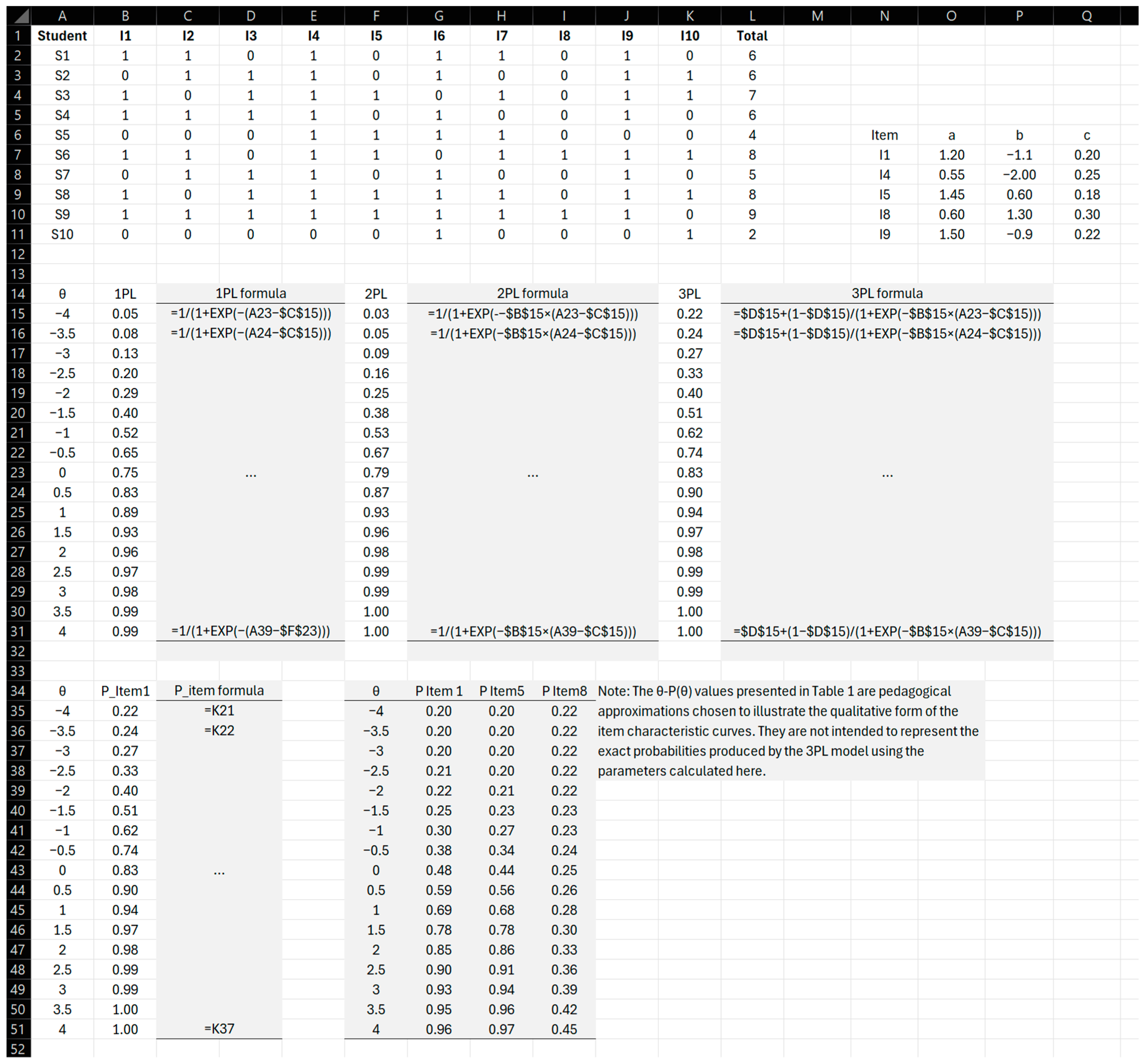

5.2.1. Item Difficulty

5.2.2. Rasch-Based Difficulty

5.2.3. Item Discrimination

5.2.4. Corrected Item–Total Correlations

5.2.5. Summary of CTT Findings

5.3. Item Response Theory Results

5.3.1. Parameter Behaviour (Demonstration)

5.3.2. Item Characteristic Curves Interpretation

5.3.3. Test Information Function (Conceptual)

5.4. CTT–IRT Comparison

5.5. Spreadsheet Structure

5.6. Pedagogical Interpretation

6. Discussion

6.1. Interpretation of CTT Findings

6.2. Interpretation of IRT Findings

6.3. Pedagogical Implications for Competence-Based Assessment

- identify weak items and revise distractors, stems, or cognitive alignment;

- ensure that assessments measure intended competences with appropriate difficulty;

- build small-scale item banks with known psychometric properties;

- interpret student performance beyond total scores;

- provide targeted feedback based on item-level evidence.

6.4. Feasibility, Practical Constraints and Ethical Considerations

6.5. Revisiting the Research Questions and Achievement of Objectives

6.6. Limitations and Future Research

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Appendix A

| Student | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | Total |
|---|---|---|---|---|---|---|---|---|---|---|---|
| S1 | 1 | 1 | 0 | 1 | 0 | 1 | 1 | 0 | 1 | 0 | 6 |
| S2 | 0 | 1 | 1 | 1 | 0 | 1 | 0 | 0 | 1 | 1 | 6 |
| S3 | 1 | 0 | 1 | 1 | 1 | 0 | 1 | 0 | 1 | 1 | 7 |
| S4 | 1 | 1 | 1 | 1 | 0 | 1 | 0 | 0 | 1 | 0 | 6 |
| S5 | 0 | 0 | 0 | 1 | 1 | 1 | 1 | 0 | 0 | 0 | 4 |
| S6 | 1 | 1 | 0 | 1 | 1 | 0 | 1 | 1 | 1 | 1 | 8 |
| S7 | 0 | 1 | 1 | 1 | 0 | 1 | 0 | 0 | 1 | 0 | 5 |
| S8 | 1 | 0 | 1 | 1 | 1 | 1 | 1 | 0 | 1 | 1 | 8 |
| S9 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 0 | 9 |
| S10 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 1 | 2 |

| Item | p-Value | b-Value | Discrimination | rit |
|---|---|---|---|---|
| I1 | 0.8 | −1.39 | 0.45 | 0.42 |
| I2 | 0.6 | −0.41 | 0.28 | 0.29 |
| I3 | 0.6 | −0.41 | 0.31 | 0.27 |
| I4 | 0.9 | −2.2 | 0.1 | 0.15 |
| I5 | 0.4 | 0.41 | 0.52 | 0.48 |
| I6 | 0.7 | −0.85 | 0.33 | 0.31 |
| I7 | 0.6 | −0.41 | 0.29 | 0.26 |
| I8 | 0.2 | 1.39 | 0.05 | 0.1 |
| I9 | 0.8 | −1.39 | 0.44 | 0.39 |
| I10 | 0.5 | 0 | 0.22 | 0.24 |

| Item | a | b | c |
|---|---|---|---|
| I1 | 1.2 | −1.1 | 0.2 |
| I4 | 0.55 | −2 | 0.25 |
| I5 | 1.45 | 0.6 | 0.18 |
| I8 | 0.6 | 1.3 | 0.3 |
| I9 | 1.5 | −0.9 | 0.22 |

| Column | Description |
|---|---|
| A | Student |
| B–K | Item responses (1/0) |
| L | Total score (=SUM(B2:K2)) |
| M | Corrected total (=L2 - B2) |
| N | Difficulty p (=COUNTIF(B2:B11,B1)/10) |
| O | Rasch b (=LN((1 - N)/N)) |
| P | Discrimination (=AVERAGE(upper) - AVERAGE(lower)) |
| Q | rit (=CORREL(B2:B11,M2:M11)) |
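The column layout above can be mirrored outside the spreadsheet as a cross-check. The following is a minimal sketch, assuming the 10 × 10 response matrix from the Appendix A data table; the names `RESPONSES`, `pearson` and `item_statistics` are illustrative and not part of the original workbook. It recomputes p, the Rasch-type logit b and the corrected item–total correlation directly from the raw responses, and no claim is made that its output reproduces the reported item statistics.

```python
import math
from statistics import mean

# Response matrix copied from the Appendix A data table (rows S1..S10, items 1..10).
RESPONSES = [
    [1, 1, 0, 1, 0, 1, 1, 0, 1, 0],
    [0, 1, 1, 1, 0, 1, 0, 0, 1, 1],
    [1, 0, 1, 1, 1, 0, 1, 0, 1, 1],
    [1, 1, 1, 1, 0, 1, 0, 0, 1, 0],
    [0, 0, 0, 1, 1, 1, 1, 0, 0, 0],
    [1, 1, 0, 1, 1, 0, 1, 1, 1, 1],
    [0, 1, 1, 1, 0, 1, 0, 0, 1, 0],
    [1, 0, 1, 1, 1, 1, 1, 0, 1, 1],
    [1, 1, 1, 1, 1, 1, 1, 1, 1, 0],
    [0, 0, 0, 0, 0, 1, 0, 0, 0, 1],
]


def pearson(x, y):
    """Pearson correlation (as CORREL); None when either variable is constant."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return None
    return sxy / math.sqrt(sxx * syy)


def item_statistics(responses, j):
    """Return p, logit b and corrected item-total correlation for item index j."""
    item = [row[j] for row in responses]
    totals = [sum(row) for row in responses]            # column L
    corrected = [t - x for t, x in zip(totals, item)]   # column M
    p = sum(item) / len(item)                           # column N
    # The logit is undefined when every or no student answers correctly.
    b = math.log((1 - p) / p) if 0 < p < 1 else None    # column O
    return {"p": p, "b": b, "r_it": pearson(item, corrected)}


if __name__ == "__main__":
    for j in range(len(RESPONSES[0])):
        print(f"Item {j + 1}:", item_statistics(RESPONSES, j))
```

Returning `None` for a constant item mirrors the blank or error cell a spreadsheet would show in the same situation.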

| Column | Description |
|---|---|
| A | Ability values θ (from −4 to +4) |
| B | 1PL probability |
| C | 2PL probability |
| D | 3PL probability |
| F2 | Difficulty parameter b |
| G2 | Discrimination parameter a |
| H2 | Guessing parameter c |
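The sheet layout above amounts to evaluating three logistic curves over a grid of ability values. A minimal sketch follows, assuming a step of 0.5 on θ and, purely for illustration, the parameters listed for item I5 in the item-parameter table (a = 1.45, b = 0.6, c = 0.18); the function names are hypothetical and do not come from the original workbook.

```python
import math


def p_1pl(theta, b):
    """1PL/Rasch probability of a correct response (column B)."""
    return 1.0 / (1.0 + math.exp(-(theta - b)))


def p_2pl(theta, a, b):
    """2PL probability with discrimination a (column C)."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))


def p_3pl(theta, a, b, c):
    """3PL probability with lower asymptote c (column D)."""
    return c + (1.0 - c) * p_2pl(theta, a, b)


def icc_grid(a, b, c, start=-4.0, stop=4.0, step=0.5):
    """Rows of (theta, P_1PL, P_2PL, P_3PL) over an evenly spaced ability grid."""
    n_steps = int(round((stop - start) / step))
    rows = []
    for i in range(n_steps + 1):
        theta = start + i * step
        rows.append((theta, p_1pl(theta, b), p_2pl(theta, a, b), p_3pl(theta, a, b, c)))
    return rows


if __name__ == "__main__":
    for theta, p1, p2, p3 in icc_grid(a=1.45, b=0.6, c=0.18):
        print(f"{theta:5.1f}  {p1:.2f}  {p2:.2f}  {p3:.2f}")
```

At θ = b the 1PL and 2PL curves pass through 0.5, while the 3PL curve passes through c + (1 − c)/2, which gives a quick sanity check on any spreadsheet implementation.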

Appendix B

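| Function | Mathematical Formula | Excel |
|---|---|---|
| Sample mean | $\bar{x} = \frac{1}{n}\sum_{i=1}^{n} x_i$ | =AVERAGE(range) |
| Population mean | $\mu = \frac{1}{N}\sum_{i=1}^{N} x_i$ | =AVERAGE(range) |
| Corrected sample variance | $s^2 = \frac{1}{n-1}\sum_{i=1}^{n} (x_i - \bar{x})^2$ | =VAR.S(range) |
| Population variance | $\sigma^2 = \frac{1}{N}\sum_{i=1}^{N} (x_i - \mu)^2$ | =VAR.P(range) |
| Population standard deviation | $\sigma = \sqrt{\frac{1}{N}\sum_{i=1}^{N} (x_i - \mu)^2}$ | =STDEV.P(range) |
| Minimum | $\min_i x_i$ | =MIN(range) |
| Maximum | $\max_i x_i$ | =MAX(range) |
| Range | $\max_i x_i - \min_i x_i$ | =MAX(range) - MIN(range) |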
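For readers who want to verify the spreadsheet output, the same descriptive statistics can be obtained with Python's standard `statistics` module. This is a minimal sketch, assuming the total scores from the Appendix A data table; the names `TOTAL_SCORES` and `describe` are illustrative only.

```python
import statistics

# Total scores taken from the "Total" column of the Appendix A data table.
TOTAL_SCORES = [6, 6, 7, 6, 4, 8, 5, 8, 9, 2]


def describe(scores):
    """Descriptive statistics matching the spreadsheet functions listed above."""
    return {
        "mean": statistics.mean(scores),                     # AVERAGE
        "sample_variance": statistics.variance(scores),      # VAR.S (divides by n - 1)
        "population_variance": statistics.pvariance(scores), # VAR.P (divides by N)
        "population_sd": statistics.pstdev(scores),          # STDEV.P
        "minimum": min(scores),                              # MIN
        "maximum": max(scores),                              # MAX
        "range": max(scores) - min(scores),                  # MAX - MIN
    }


if __name__ == "__main__":
    for name, value in describe(TOTAL_SCORES).items():
        print(f"{name}: {value}")
```

The only difference between the sample and population variants is the divisor, which matters noticeably with class-sized groups such as the ten students used here.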

| Function | Mathematical Formula | Excel |
|---|---|---|
| Item difficulty (p) | $p = \frac{\text{number of correct responses}}{\text{number of responses}}$ | =COUNTIF(E8:E47,E7)/COUNT(E8:E47) |
| Rasch difficulty (b) | $b = \ln\left(\frac{1 - p}{p}\right)$ | =LN((1 - (COUNTIF(E8:E47,E7)/COUNT(E8:E47)))/(COUNTIF(E8:E47,E7)/COUNT(E8:E47))) |
| Item discrimination (27% method) | $D = p_{\text{upper}} - p_{\text{lower}}$ | =AVERAGE(E8:E18) - AVERAGE(E37:E47) |
| Corrected item–total correlation | correlation between item and corrected total | =CORREL(E8:E39,AW8:AW39) |
| Random guess probability | $1/k$, where $k$ is the number of MCQ options | =1/(number of MCQ options) |
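The 27% discrimination formula above works only if the rows are already ordered by total score, highest first, so that the first and last 11 of 40 rows form the upper and lower groups; that ordering is an assumption inferred from the cell ranges. A minimal sketch that performs the sort explicitly is given below; `discrimination_index` and its `fraction` parameter are hypothetical names.

```python
def discrimination_index(item_scores, total_scores, fraction=0.27):
    """Upper-lower discrimination: mean item score of the top group minus the bottom group.

    Groups are the top and bottom `fraction` of students ranked by total score.
    Ties in total score are broken by original row order, which is arbitrary.
    """
    n = len(item_scores)
    if n == 0 or n != len(total_scores):
        raise ValueError("item_scores and total_scores must be non-empty and equally long")
    k = max(1, round(fraction * n))  # 40 students -> 11 per group
    order = sorted(range(n), key=lambda i: total_scores[i], reverse=True)
    upper = [item_scores[i] for i in order[:k]]
    lower = [item_scores[i] for i in order[-k:]]
    return sum(upper) / k - sum(lower) / k


if __name__ == "__main__":
    item = [1, 1, 1, 0, 0, 0, 1, 0, 1, 0]
    totals = [9, 8, 7, 3, 2, 1, 6, 4, 5, 0]
    print(discrimination_index(item, totals))
```

Sorting inside the function removes the dependence on row order, which is the step most easily forgotten when the calculation is done by hand in a spreadsheet.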

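| Model | Mathematical Formula | Excel |
|---|---|---|
| One-parameter logistic model (1PL/Rasch) | $P(\theta) = \frac{1}{1 + e^{-(\theta - b)}}$ | =1/(1 + EXP(-(A8 - $B$2))) |
| Two-parameter logistic model (2PL) | $P(\theta) = \frac{1}{1 + e^{-a(\theta - b)}}$ | =1/(1 + EXP(-$C$2 * (A8 - $B$2))) |
| Three-parameter logistic model (3PL) | $P(\theta) = c + \frac{1 - c}{1 + e^{-a(\theta - b)}}$ | =$D$2 + (1 - $D$2)/(1 + EXP(-$C$2 * (A8 - $B$2))) |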

| Model | Mathematical Formula | Excel Formula | Parameters | Pedagogical Interpretation |
|---|---|---|---|---|
| 1PL (Rasch) | $P(\theta) = \frac{1}{1 + e^{-(\theta - b)}}$ | =1/(1 + EXP(-(θ - b))) | b = difficulty | Fixed discrimination; suitable for standardised tests |
| 2PL | $P(\theta) = \frac{1}{1 + e^{-a(\theta - b)}}$ | =1/(1 + EXP(-a * (θ - b))) | a = discrimination; b = difficulty | Variable discrimination; higher a indicates better item sensitivity |
| 3PL | $P(\theta) = c + \frac{1 - c}{1 + e^{-a(\theta - b)}}$ | =c + (1 - c) * (1/(1 + EXP(-a * (θ - b)))) | a = discrimination; b = difficulty; c = guessing | Accounts for random guessing; ideal for multiple-choice items |

| Indicator | Excel Formula | Pedagogical Interpretation |
|---|---|---|
| Proportion correct (p) | =COUNTIF(responses,1)/COUNT(responses) | Classical difficulty index |
| Logit transformation (b) | =LN((1 - p)/p) | Converts p to logit scale |
| Item–ability correlation | =CORREL(item,ability) | Proxy for discrimination (approximates a) |
| Corrected item–total correlation | =CORREL(item,corrected total score) | Used in test revision and quality monitoring |

Appendix C

| Proportion of Correct Responses | Interpretation |
|---|---|
| 0.00–0.30 | Difficult item |
| 0.31–0.70 | Moderately difficult item |
| 0.71–1.00 | Easy item |

| Item–Total Correlation | Interpretation |
|---|---|
| ≥0.40 | Excellent discrimination |
| 0.30–0.39 | Good discrimination |
| 0.20–0.29 | Acceptable; could be improved |
| <0.20 | Weak discrimination: item may be poorly formulated |
| Negative | Problematic item: inverse to expected performance trend |
| Blank cell | Non-discriminating item: should be reviewed |
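The two interpretation tables above translate naturally into lookup rules that could label each item automatically. The sketch below is one possible reading, not a definitive implementation: it assumes that values falling between the printed bands (for example p = 0.305) belong to the lower band's upper neighbour, that negative correlations are checked before the "<0.20" band, and that a blank cell is represented as `None`. Both function names are hypothetical.

```python
def classify_difficulty(p):
    """Label an item by its proportion of correct responses."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie between 0 and 1")
    if p <= 0.30:
        return "Difficult item"
    if p <= 0.70:
        return "Moderately difficult item"
    return "Easy item"


def classify_discrimination(r_it):
    """Label an item by its corrected item-total correlation (None = blank cell)."""
    if r_it is None:
        return "Non-discriminating item"
    if r_it < 0:
        return "Problematic item"
    if r_it < 0.20:
        return "Weak discrimination"
    if r_it < 0.30:
        return "Acceptable; could be improved"
    if r_it < 0.40:
        return "Good discrimination"
    return "Excellent discrimination"


if __name__ == "__main__":
    # Values for items I4 and I5 as reported in the Appendix A item table.
    print(classify_difficulty(0.9), "|", classify_discrimination(0.15))
    print(classify_difficulty(0.4), "|", classify_discrimination(0.48))
```

In a spreadsheet the same rules would typically be written as nested `IF` expressions; keeping the negative check first avoids labelling an inversely performing item as merely weak.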

| Indicator | Interpretation |
|---|---|
| Item difficulty | If p is close to the guessing value, the item may be poorly constructed or too difficult. |
| Item discrimination | High guessing probability and low discrimination suggest the item does not differentiate ability levels. |
| Distractor analysis | If many students select the correct option by chance, distractors may not be functioning effectively. |
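The first row of the table above compares an item's p with the random-guess probability 1/k. A minimal sketch of that comparison follows; the table does not say how close counts as "close", so the `tolerance` of 0.05 is an arbitrary illustrative assumption, and both function names are hypothetical.

```python
def guess_probability(n_options):
    """Probability of a correct answer by blind guessing on a k-option MCQ."""
    if n_options < 2:
        raise ValueError("a multiple-choice item needs at least two options")
    return 1.0 / n_options


def near_guessing(p, n_options, tolerance=0.05):
    """True when p lies within `tolerance` of the random-guess probability.

    The tolerance is an assumption made for illustration, not a published cut-off.
    """
    return abs(p - guess_probability(n_options)) <= tolerance


if __name__ == "__main__":
    # A four-option item answered correctly by 26% of students.
    print(guess_probability(4), near_guessing(0.26, 4))
```

An item flagged this way is a candidate for review rather than automatic removal, since a low p can also reflect content that was simply not yet taught.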

Appendix D


| θ | P Item 1 | P Item 5 | P Item 8 |
|---|---|---|---|
| −4 | 0.20 | 0.20 | 0.22 |
| −3.5 | 0.20 | 0.20 | 0.22 |
| −3 | 0.20 | 0.20 | 0.22 |
| −2.5 | 0.21 | 0.20 | 0.22 |
| −2 | 0.22 | 0.21 | 0.22 |
| −1.5 | 0.25 | 0.23 | 0.23 |
| −1 | 0.30 | 0.27 | 0.23 |
| −0.5 | 0.38 | 0.34 | 0.24 |
| 0 | 0.48 | 0.44 | 0.25 |
| 0.5 | 0.59 | 0.56 | 0.26 |
| 1 | 0.69 | 0.68 | 0.28 |
| 1.5 | 0.78 | 0.78 | 0.30 |
| 2 | 0.85 | 0.86 | 0.33 |
| 2.5 | 0.90 | 0.91 | 0.36 |
| 3 | 0.93 | 0.94 | 0.39 |
| 3.5 | 0.95 | 0.96 | 0.42 |
| 4 | 0.96 | 0.97 | 0.45 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Faria, A.; Miranda, G.L. Operationalising CTT and IRT in Spreadsheets: A Methodological Demonstration for Classroom Assessment. Analytics 2026, 5, 12. https://doi.org/10.3390/analytics5010012
