Integrating Continuous Compliance into DevSecOps Pipelines: A Data Engineering Perspective

Abstract

1. Introduction

1.1. Problem Statement: The Audit Velocity Mismatch

1.2. State-of-the-Art and Research Gaps

- Lifecycle-Wide Evidence Correlation: Prior work records or governs individual events or artifacts, but provides limited architectural guidance for correlating source code changes, build outputs, security scans, policy decisions, and deployment state into a single, continuous compliance record.

- Queryable, Time-Indexed Compliance State: Although audit logs and governance controls are well studied, most approaches rely on static records or retrospective analysis. There is a lack of architectures that support real-time or historical querying of compliance state across pipeline stages and runtime environments.

- Unified Treatment of Heterogeneous Artifacts: SBOMs, static analysis results, infrastructure scans, and attestations are typically generated and stored independently. The literature does not yet converge on a canonical data abstraction that models these outputs as a cohesive, versioned data stream suitable for systematic compliance analytics.

1.3. Proposed Solution: The Continuous Compliance Framework (CCF)

1.4. Research Questions and Contributions

- RQ1: How can compliance be represented as a computable, continuously verifiable system property?

- RQ2: How can heterogeneous DevSecOps artifacts be unified into a queryable compliance provenance model?

- RQ3: What architectural trade-offs emerge when compliance is enforced pre-, intra-, and post-deployment?

- A Three-Plane Continuous Compliance Framework (CCF): A conceptual and operational reference architecture that decouples policy definition, data instrumentation, and cryptographic validation.

- Compliance Data Lakehouse and Multi-dimensional Lineage Graph: A novel data engineering pattern for persisting heterogeneous compliance events in a versioned, queryable format that maintains the “Golden Thread” of provenance across the software supply chain.

- End-to-End DevSecOps Integration Pattern: A practical blueprint for embedding automated validation gates into CI/CD pipelines using Policy-as-Code (PaC) and verifiable attestations.

- Extensibility to AI/ML Governance: A demonstration of how the framework scales to address emerging regulatory requirements for model lineage, training data transparency, and algorithmic accountability.

1.5. Methodology and Research Positioning

1.6. Paper Structure

2. Background and Foundational Technologies

2.1. Compliance Anchors (The “What”)

- Regulatory Frameworks: These provide the high-level objectives. Our model aims to provide the technical evidence for audits against standards like ISO/IEC 27001 (Information Security Management Systems, or ISMS) [6], SOC 2 (Trust Services Criteria, specifically Security, Availability, and Confidentiality) [7], GDPR (data protection and privacy) [8], and HIPAA (data privacy in healthcare) [9]. The framework translates their requirements (e.g., access control, data encryption) into specific, verifiable queries (e.g., “Show me all database instances that do not have encryption-at-rest enabled”).

- Domain-Specific Standards: These provide more concrete technical controls. PCI-DSS (Payment Card Industry Data Security Standard) [10] offers explicit rules, such as network segmentation and encryption key management, that are highly amenable to automation. Furthermore, emerging AI governance frameworks (e.g., NIST AI RMF [11], ISO/IEC 42001 [12], IEEE P3395 [13]) are increasingly being treated as technical, data-driven requirements. They call for controls over data provenance, model lineage, bias detection, and model drift, all of which are fundamentally data engineering problems that should be solved within the pipeline.

2.2. Engineering Anchors (The “How”)

- Policy-as-Code (PaC) Engines: At the heart of automated compliance is the ability to express policies in a declarative, machine-readable format. Instead of a 100-page PDF, the policy becomes a version-controlled code artifact.

- Open Policy Agent (OPA): OPA [14] has emerged as the lingua franca for this domain. Using its query language, Rego, organizations can write policies once and enforce them across the entire CI/CD pipeline. Because OPA evaluates arbitrary structured (JSON) input, the same engine and language can validate a Terraform plan (Infrastructure-as-Code), a Kubernetes manifest (admission control), and even an API call (microservice authorization).

- Kubernetes-native Policy: Tools like Kyverno [15] provide a similar function but are deeply integrated into Kubernetes, allowing for policy-based mutation and validation of resources as they enter the cluster.

- Supply Chain Security and Attestation: A compliance report is only as good as the integrity of the data on which it is based. Recent advances in software supply chain security provide the “cryptographic glue” for a verifiable compliance system.

- SLSA (Supply-chain Levels for Software Artifacts): SLSA [16] is a framework, not a tool. It provides a common language for defining the maturity and security of a build pipeline (e.g., “SLSA Build Level 3” requires a hardened build platform that produces non-forgeable provenance). This allows compliance teams to set tangible targets for pipeline security.

- Sigstore: The Sigstore project [17] (including tools like Cosign, Fulcio, and Rekor) provides the mechanism for achieving SLSA’s goals. It enables “keyless” signing of software artifacts, build steps, and policy results. A build process can cryptographically attest that it passed a vulnerability scan. This signature is then stored in a tamper-evident transparency log (Rekor). This is the critical mechanism for future-proofing audit trails: it provides a non-repudiable, verifiable, and timestamped record that a specific compliance check occurred and passed.

- in-toto: This framework [18] defines a layout, or “blueprint”, of what is supposed to happen in a pipeline. By creating a signed layout, a final product can be verified against the expected steps, ensuring that no required step (e.g., testing) was skipped and no unauthorized or non-compliant step was inserted. Together, these technologies form a new “compliance fabric”, allowing us to build systems that are not just compliant by design but verifiable in operation.
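The layout idea can be illustrated with a deliberately simplified sketch. It is not the in-toto library or its metadata format: the `Link` record, the step names, and `verify_against_layout` are hypothetical, and real in-toto additionally verifies signatures, authorized functionaries, and artifact rules. The sketch only checks that every expected step ran, that no unexpected step appeared, and that each step consumed something an earlier step produced.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    """Hypothetical record of one executed pipeline step (not in-toto's link format)."""
    step: str
    materials: frozenset  # digests the step consumed
    products: frozenset   # digests the step produced


# Hypothetical layout: the ordered steps the pipeline is expected to run.
LAYOUT = ["checkout", "build", "scan", "test"]


def verify_against_layout(layout: list, links: list) -> list:
    """Return violations; an empty list means the recorded steps match the layout."""
    violations = []
    by_step = {link.step: link for link in links}
    violations += [f"missing step: {s}" for s in layout if s not in by_step]
    violations += [f"unexpected step: {link.step}" for link in links if link.step not in layout]
    produced = set()  # everything produced by earlier steps, in layout order
    for step in layout:
        link = by_step.get(step)
        if link is None:
            continue
        # Every step after the first must consume something an earlier step produced.
        if produced and not (link.materials & produced):
            violations.append(f"untraceable inputs: {step}")
        produced |= link.products
    return violations
```

A pipeline run in which the scan step was silently skipped would yield `["missing step: scan"]`, which is exactly the condition the text describes.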

3. The Continuous Compliance Framework (CCF)

3.1. Policy Definition and Management Plane (The “Specification”)

- Declarative Policy-as-Code (PaC): This is the core principle. All compliance rules are written in a declarative Domain-Specific Language (DSL) or policy format, such as Rego (for OPA), Kyverno’s YAML-based policies, or HashiCorp Sentinel. For example, a rule for SOC 2 (CC6.1) requiring infrastructure to be provisioned via approved, version-controlled scripts can be expressed as a policy that rejects any Terraform plan that does not originate from the blessed-for-production git repository. Similarly, a PCI-DSS rule for access control can be written to assert that no Kubernetes deployment.yaml file exposes a Secret as a plain-text environment variable.

- Policy Lifecycle Management: Policies are no longer static documents; they are software artifacts. As such, they require their own CI/CD pipeline. This “Policy-CI” pipeline involves the following:

- Definition: Translating regulatory text into a human-readable YAML or JSON specification, which is then compiled into a machine-interpretable rule (e.g., Rego).

- Testing: Policies are unit-tested and integration-tested against a corpus of “known-good” and “known-bad” input data (e.g., sample Terraform plans, Dockerfiles, or K8s manifests).

- Deployment: Once validated, policies are versioned, packaged (e.g., as an OPA Bundle), and deployed to the various enforcement points (CI runners, K8s admission controllers).

- Monitoring: This plane monitors policy effectiveness, such as the frequency of policy failures, to identify and tune rules that may be overly restrictive or permissive.

- Automated Remediation and Exception Workflows: This plane also defines the response to a policy violation. While the default response is to fail a pipeline, a mature implementation supports automated remediation (e.g., a Kubernetes mutating webhook that automatically adds a securityContext with readOnlyRootFilesystem: true). Crucially, it must also provide a technical workflow for handling exceptions. An “exception” is not a verbal agreement; it is a time-bound, auditable, and cryptographically signed attestation (e.g., a JSON object signed by a security officer) attached to a specific artifact, granting it a temporary waiver of a specific policy.
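A minimal sketch ties the policy and exception ideas together, written in Python rather than Rego so that it is self-contained. The policy rejects containers that expose a Secret through an environment variable (`env[].valueFrom.secretKeyRef` in the Kubernetes Pod spec), and a waiver is honored only if it names the same artifact digest and policy, has not expired, and carries a valid signature. HMAC-SHA256 with a shared key is only a stand-in for the asymmetric (e.g., Sigstore) signature a real deployment would use; the policy ID, waiver fields, and key are hypothetical.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone

POLICY_ID = "pci-no-secret-env"  # hypothetical policy identifier


def secret_env_violations(deployment: dict) -> list[str]:
    """Return 'container:VAR' for each env var sourced from a Secret."""
    pod_spec = deployment.get("spec", {}).get("template", {}).get("spec", {})
    hits = []
    for container in pod_spec.get("containers", []):
        for env in container.get("env", []):
            if "secretKeyRef" in env.get("valueFrom", {}):
                hits.append(f"{container['name']}:{env['name']}")
    return hits


def _payload(waiver: dict) -> bytes:
    # Canonical JSON of every signed field (everything except the signature itself).
    fields = {k: v for k, v in waiver.items() if k != "signature"}
    return json.dumps(fields, sort_keys=True, separators=(",", ":")).encode()


def sign_waiver(waiver: dict, key: bytes) -> dict:
    """Stand-in signer: HMAC-SHA256 over the canonical waiver fields."""
    return {**waiver, "signature": hmac.new(key, _payload(waiver), hashlib.sha256).hexdigest()}


def waiver_is_valid(waiver: dict, digest: str, policy_id: str, key: bytes, now: datetime) -> bool:
    expected = hmac.new(key, _payload(waiver), hashlib.sha256).hexdigest()
    return (
        hmac.compare_digest(expected, waiver.get("signature", ""))
        and waiver["artifact_digest"] == digest
        and waiver["policy_id"] == policy_id
        and now < datetime.fromisoformat(waiver["expires_at"])  # time-bound
    )


def evaluate(deployment: dict, digest: str, waivers: list, key: bytes, now=None) -> dict:
    now = now or datetime.now(timezone.utc)
    violations = secret_env_violations(deployment)
    if not violations:
        return {"result": "pass", "violations": []}
    if any(waiver_is_valid(w, digest, POLICY_ID, key, now) for w in waivers):
        return {"result": "waived", "violations": violations}
    return {"result": "fail", "violations": violations}
```

Note that a waived result still carries the violations, so the exception remains visible in the audit record rather than erasing the finding.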

3.2. Instrumentation and Data Collection Plane (The “Observation”)

- Artifact Generation and Collection: This layer instruments the toolchain to produce and collect standardized, machine-readable artifacts at each stage of the pipeline. Key artifacts include the following:

- SBOMs (Software Bill of Materials): CycloneDX or SPDX formats [19] generated during the build stage to create a complete manifest of all first-party and third-party components, including transitive dependencies.

- VEX (Vulnerability Exploitability eXchange): A critical companion to SBOMs, VEX documents provide a machine-readable statement about the exploitability of vulnerabilities, allowing the Validation Plane to programmatically filter noise from scanners (e.g., “CVE-12345 is present but not exploitable because the affected code path is not used”).

- Static Analysis Results: Standardized outputs, such as SARIF (Static Analysis Results Interchange Format) [20], from a variety of tools. This includes SAST (e.g., semgrep), DAST, secrets scanning, and Infrastructure-as-Code scanners (e.g., tfsec, checkov).

- Data-centric Metadata: This is a crucial, data-engineering-focused component. The framework extends beyond just code and artifacts to include the data itself. This involves the following:

- Data Lineage: Capturing data transformation lineage from tools like dbt [21], providing a verifiable chain of custody from raw data to a business intelligence dashboard.

- PII/SPI Scanning: Integrating data quality and scanning tools (e.g., Great Expectations, soda-core) [22] to scan schemas and data samples, producing metadata that flags PII (Personally Identifiable Information) or SPI (Sensitive Personal Information). This metadata can then be used to trigger policies in the Validation Plane.
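The PII/SPI scanning step can be approximated by a small sampling classifier. The sketch below is a hypothetical stand-in rather than the Great Expectations or Soda API: it flags a column when at least a threshold fraction of its sampled non-empty values match a detector pattern, and emits one metadata record per column for the Validation Plane to consume. The two regular expressions and the 0.8 threshold are illustrative assumptions; production scanners use far richer detectors.

```python
import re

# Illustrative detectors only.
PII_PATTERNS = {
    "email": re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$"),
    "us_ssn": re.compile(r"^\d{3}-\d{2}-\d{4}$"),
}


def classify_columns(table: str, samples: dict[str, list[str]], threshold: float = 0.8) -> list[dict]:
    """Return one metadata record per sampled column, flagging likely PII."""
    records = []
    for column, values in samples.items():
        non_empty = [v for v in values if v]
        pii_type = None
        for name, pattern in PII_PATTERNS.items():
            matches = sum(bool(pattern.match(v)) for v in non_empty)
            if non_empty and matches / len(non_empty) >= threshold:
                pii_type = name
                break
        records.append({"table": table, "column": column, "pii": pii_type is not None, "pii_type": pii_type})
    return records
```

Records such as `{"table": "users", "column": "email", "pii": True, ...}` are what a downstream policy would key on.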

3.3. Validation and Attestation Plane (The “Enforcement”)

- Automated Checkpoints (Stage Gates): These are the CI/CD pipeline jobs (opa eval…, cosign verify…) that act as automated quality and compliance gates. At each transition (e.g., from build to test, or test to deploy), a checkpoint queries the Policy Plane. For example, a pre-deployment gate would take the target Docker image’s SBOM and vulnerability scan results (from the Instrumentation Plane) and ask the Policy Plane, “Does this image contain any ‘Critical’ vulnerabilities (that are not waived via a VEX) or any GPL-licensed dependencies?” The pipeline proceeds only if the policy reports no such findings.

- Cryptographic Attestation Generation: This is the critical link that transforms compliance from a “check-the-box” activity into a verifiable, data-centric process. When a checkpoint passes, it does more than write a log. It generates a structured metadata object (an “attestation”) stating what was proven about which artifact. For example: {"artifact-sha": "sha256:abc…", "policy-version": "sec-policy-v1.4", "result": "pass", "scanner": "Trivy-v0.3.1"}. This attestation is then cryptographically signed (e.g., using cosign attest) and stored, often in the same registry as the artifact itself, with the signing event recorded in a transparency log such as Rekor. The build artifact now carries a tamper-evident, verifiable proof of its compliance state.

- Real-time Enforcement (Admission and Runtime): The framework’s enforcement capability extends beyond the CI pipeline into the runtime environment.

- Kubernetes Admission Controllers: A K8s admission controller (like Kyverno or OPA Gatekeeper) can be configured to intercept all deploy requests. Its policy is not just “is the user authorized?” but “does this container image have a valid, signed attestation from our CI pipeline proving it passed the ‘SAST’ and ‘CVE’ policies?” This independently verifies the build-time attestation, creating a Zero-Trust handoff between CI and CD.

- Service Mesh/ZTA: In a mature Zero-Trust Architecture (ZTA), this concept extends to service-to-service communication. Using workload identity (e.g., SPIFFE/SPIRE), a service mesh sidecar (e.g., Envoy) can query OPA to enforce policies like, “Only services with a valid, non-expired pci-compliant runtime attestation can access the ‘payments’ database.” This makes compliance a dynamic, continuous property of the running system. Similar mechanisms for behavioral threat detection and automated policy enforcement in DevSecOps environments have been demonstrated in prior research [24].
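The pre-deployment stage gate described in this section can be sketched as a pure function over already-collected evidence. The input shapes are simplified assumptions, not the exact Trivy, CycloneDX, or OpenVEX schemas: findings are (component, CVE, severity) records, VEX statements map a (component, CVE) pair to a status string, and licenses are SPDX identifiers. Treating the OpenVEX statuses `not_affected` and `fixed` as waivers is also an assumption. The gate passes only when the list of reasons is empty.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    component: str
    cve: str
    severity: str  # e.g. "CRITICAL", "HIGH"


# VEX statuses treated as waivers (names follow OpenVEX; the choice is an assumption).
WAIVING_STATUSES = {"not_affected", "fixed"}


def gate(findings: list, vex: dict, licenses: dict) -> tuple[bool, list[str]]:
    """Return (passed, reasons).

    vex: (component, cve) -> VEX status string.
    licenses: component -> SPDX license identifier.
    """
    reasons = []
    for f in findings:
        waived = vex.get((f.component, f.cve)) in WAIVING_STATUSES
        if f.severity == "CRITICAL" and not waived:
            reasons.append(f"unwaived critical {f.cve} in {f.component}")
    for component, spdx_id in sorted(licenses.items()):
        if spdx_id.startswith("GPL-"):
            reasons.append(f"GPL-licensed dependency {component} ({spdx_id})")
    return (not reasons, reasons)
```

Returning the reasons, not just a boolean, lets the gate emit a “Compliance Failure” event with actionable detail.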

4. Data Engineering Foundation: The Compliance Data Lakehouse

4.1. Canonical Event Model and Ingestion

- EventEnvelope: Contains common metadata: eventID, eventTimestamp, sourceTool (e.g., Trivy, OPA, dbt), correlationID (e.g., git_commit_sha, build_id).

- EventPayload: Contains the raw or standardized artifact (e.g., the SARIF object, the CycloneDX SBOM).
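The envelope/payload split can be sketched with two Python dataclasses. The envelope field names follow the text (eventID, eventTimestamp, sourceTool, correlationID); the `artifactType` and `payloadSha256` fields, the `make_event` and `to_row` helpers, and the use of UUID4 identifiers are assumptions added for illustration.

```python
import hashlib
import json
import uuid
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class EventEnvelope:
    eventID: str
    eventTimestamp: str  # ISO 8601, UTC
    sourceTool: str      # e.g. "trivy", "opa", "dbt"
    correlationID: str   # e.g. git commit SHA or build ID
    artifactType: str    # hypothetical: "sbom", "sarif", "policy-decision", ...
    payloadSha256: str   # hypothetical: SHA-256 of the canonical JSON payload


@dataclass(frozen=True)
class ComplianceEvent:
    envelope: EventEnvelope
    payload: dict  # raw or standardized artifact (SARIF, CycloneDX SBOM, ...)


def make_event(source_tool: str, correlation_id: str, artifact_type: str, payload: dict) -> ComplianceEvent:
    """Wrap an artifact in an envelope; JSON key order does not affect the payload hash."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()
    envelope = EventEnvelope(
        eventID=str(uuid.uuid4()),
        eventTimestamp=datetime.now(timezone.utc).isoformat(),
        sourceTool=source_tool,
        correlationID=correlation_id,
        artifactType=artifact_type,
        payloadSha256=hashlib.sha256(canonical).hexdigest(),
    )
    return ComplianceEvent(envelope=envelope, payload=payload)


def to_row(event: ComplianceEvent) -> str:
    """Serialize an event as one JSON line, e.g. for object storage or an event bus."""
    return json.dumps(asdict(event), sort_keys=True)
```

Hashing a canonical form of the payload gives the lakehouse a cheap integrity check and a deduplication key that is independent of how each tool orders its JSON.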

4.2. The “Compliance Data Lakehouse” Architecture

4.2.1. Architectural Motivation and Design Rationale

4.2.2. Core Architectural Properties for Compliance Data

- ACID Transactions: Atomic writes ensure that a batch of evidence, such as a set of container vulnerability scans, is either fully committed or not recorded at all. This prevents partial or corrupt data from entering the auditable record, ensuring “all-or-nothing” integrity for every pipeline run.

- Data Versioning (“Time Travel”): This is arguably the most critical feature for compliance. The lakehouse maintains a versioned history of table states, allowing an auditor to deterministically query the compliance data as it was at any retained point in the past. An auditor can ask, “Show me the vulnerability status of service ‘X’ as it was on 8 December 2021, before the Log4Shell vulnerability (CVE-2021-44228) was publicly disclosed”, and then compare it to the status on 11 December. Provided that old table versions are retained (routine table maintenance can purge them), this yields a versioned, append-only history of the organization’s compliance posture.

- Schema Enforcement and Evolution: The lakehouse can enforce the canonical event schema to ensure data quality while supporting graceful schema evolution as new tools or artifact types are added. This architecture enables auditors and automated systems to run powerful, high-level SQL queries against the entire compliance record, such as the following:

- SELECT * FROM compliance_events WHERE timestamp BETWEEN '…' AND '…' AND policy_id = 'pci-dss-3.4' AND result = 'FAIL'

- SELECT service_name, cve_id FROM vulnerability_reports WHERE severity = 'CRITICAL' AND last_seen_timestamp > (NOW() - INTERVAL '30 days')

- SHOW HISTORY FOR /path/to/my/app/sbom (an illustrative command to list all versions of a specific artifact’s metadata; Delta Lake, for example, provides DESCRIBE HISTORY for this purpose).
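The “time travel” property can be illustrated without a lakehouse engine. The toy class below keeps every committed version of a table as a snapshot and answers “as of” queries by timestamp, which is conceptually what `VERSION AS OF` / `TIMESTAMP AS OF` reads do in table formats such as Delta Lake. It is a hypothetical in-memory model, not any engine’s API, and it omits storage, concurrency control, and retention.

```python
import bisect
from datetime import datetime


class VersionedTable:
    """Toy append-only versioned table supporting as-of reads."""

    def __init__(self):
        self._timestamps: list[datetime] = []
        self._snapshots: list[tuple] = []

    def commit(self, ts: datetime, rows: list[dict]) -> int:
        """Append rows as one new version (all or nothing); returns the version number."""
        if self._timestamps and ts <= self._timestamps[-1]:
            raise ValueError("commit timestamps must be strictly increasing")
        previous = self._snapshots[-1] if self._snapshots else ()
        self._snapshots.append(previous + tuple(dict(r) for r in rows))
        self._timestamps.append(ts)
        return len(self._snapshots) - 1

    def as_of(self, ts: datetime) -> list[dict]:
        """Return copies of the rows as they were at time ts (empty before the first commit)."""
        idx = bisect.bisect_right(self._timestamps, ts) - 1
        return [] if idx < 0 else [dict(r) for r in self._snapshots[idx]]
```

Comparing `as_of` results for two dates is the programmatic form of the auditor’s before/after question above.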

4.3. Multi-Dimensional Lineage Graph (The “Golden Thread”)

- Software Supply Chain Lineage (Code-to-Artifact): Connects a git_commit_sha to the build_id that processed it, which in turn connects to the docker_image_sha (or other binary) it produced.

- Operational Lineage (Artifact-to-Runtime): Connects the docker_image_sha to the kubernetes_deployment that deployed it, which connects to the pod_id and node_ip where it is currently running. This allows an operator to instantly answer, ”Where is the code from commit abc123 running right now?”

- Data Lineage (Data-to-System): This is the data-engineering-specific dimension. Using metadata from tools like dbt (data lineage) and PII scanners (data classification), this graph connects a database table (e.g., users) to the service (e.g., user-profile-svc) that consumes it, and to the classification of the data it contains (e.g., PII: TRUE).

- (Future-Proofing) AI/ML Provenance (Model-to-Data/MLOps): This extends the graph to AI/ML workflows, which is essential for AI compliance (e.g., NIST AI RMF). It connects a specific model_version: v1.2.3 to the training_pipeline_run: #456 that produced it, which in turn connects to the dataset_hash: s3://…/data-v4-hash it was trained on. This provenance chain is a technical prerequisite for demonstrating that a model was not trained on non-consensual or biased data.
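The operational question “Where is the code from commit abc123 running right now?” reduces to a reachability query over typed edges. The sketch stores the Golden Thread as a plain adjacency map and walks it breadth-first; the node identifiers (such as `commit:abc123` or `run:456-train`) are hypothetical, and a production system would use a graph store or lineage service instead.

```python
from collections import deque

# Hypothetical Golden Thread edges: node -> downstream nodes.
EDGES = {
    "commit:abc123": ["build:456"],
    "build:456": ["image:sha256:abc"],
    "image:sha256:abc": ["deployment:user-profile-svc"],
    "deployment:user-profile-svc": ["pod:upsvc-7f9c", "pod:upsvc-2d1e"],
    "table:users": ["deployment:user-profile-svc"],   # data lineage: consumed by
    "dataset:data-v4": ["run:456-train"],             # AI/ML provenance
    "run:456-train": ["model:v1.2.3"],
}


def downstream(start: str, kind: str) -> list[str]:
    """Breadth-first walk from start; return reachable nodes whose id starts with 'kind:'."""
    seen, queue, hits = {start}, deque([start]), []
    while queue:
        node = queue.popleft()
        for nxt in EDGES.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
                if nxt.startswith(kind + ":"):
                    hits.append(nxt)
    return hits
```

The same traversal answers the data question (which pods process the PII-bearing users table) and the AI question (which models derive from a given dataset), which is the point of keeping all three dimensions in one graph.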

4.4. Automated Policy Enforcement and Continuous Assurance

4.4.1. Data-Driven Policy-as-Code (PaC)

- The container image was built from a trusted repository (Supply Chain Lineage).

- All security scans were completed with no critical vulnerabilities (Instrumentation Data).

- A valid cryptographic attestation exists for each stage of the pipeline (Verification).
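The three conditions above can be expressed as a single decision over evidence retrieved from the lakehouse and lineage graph. The sketch assumes a hypothetical flattened evidence record per image digest; the field names, the trusted-repository allowlist, and the required stage names are illustrative, and the attestation check only tests presence (cryptographic verification is assumed to have happened upstream).

```python
TRUSTED_REPOS = {"github.com/mycorp/user-profile-api"}       # hypothetical allowlist
REQUIRED_STAGES = ("build", "sast", "image-scan", "policy")  # hypothetical stage names


def admit(evidence: dict) -> tuple[bool, list[str]]:
    """Apply the three data-driven conditions; returns (allowed, reasons)."""
    reasons = []
    # 1. Supply chain lineage: built from a trusted repository.
    if evidence.get("source_repo") not in TRUSTED_REPOS:
        reasons.append("image not built from a trusted repository")
    # 2. Instrumentation data: scans completed, no critical vulnerabilities.
    scans = evidence.get("scans", {})
    if not all(scans.get(s) == "completed" for s in ("sast", "image-scan")):
        reasons.append("security scans incomplete")
    if evidence.get("critical_vulns", 1) > 0:  # a missing count fails closed
        reasons.append("critical vulnerabilities present")
    # 3. Verification: an attestation exists for every pipeline stage.
    missing = [s for s in REQUIRED_STAGES if s not in evidence.get("attested_stages", [])]
    if missing:
        reasons.append("missing attestations: " + ", ".join(missing))
    return (not reasons, reasons)
```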

4.4.2. Continuous Assurance and Feedback Loops

- Synchronous Gating (Admission Control): During pipeline execution or at the point of deployment (e.g., via a Kubernetes Admission Controller), the framework acts as a gatekeeper. If the lineage graph or the lakehouse record indicates a compliance violation, the deployment is blocked, and a “Compliance Failure” event is fed back into the canonical model, notifying the developers immediately.

- Asynchronous Auditing (Drift Detection): Because the Lakehouse supports “time-travel” queries and retains the full evidence history (Section 4.2), the framework can continuously re-evaluate the current runtime state against the latest policies and threat intelligence. If a new vulnerability (e.g., a zero-day) is discovered, the system can retroactively query the “Golden Thread” to identify every running service that originated from the affected codebase, even if those services were compliant at the time of deployment.
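The retroactive zero-day query is essentially a join between SBOM components and the runtime side of the lineage graph. A minimal sketch with hypothetical in-memory records follows; package names are matched exactly and vulnerable versions are given as an explicit set, whereas a real implementation would match on package URLs (purl) with proper version-range semantics.

```python
# Hypothetical evidence: image digest -> SBOM components as (name, version).
SBOMS = {
    "sha256:aaa": {("log4j-core", "2.14.1"), ("jackson-databind", "2.13.0")},
    "sha256:bbb": {("log4j-core", "2.17.1")},
    "sha256:ccc": {("requests", "2.31.0")},
}
# Hypothetical runtime lineage: service -> image digest currently running.
RUNNING = {"user-profile-svc": "sha256:aaa", "billing-svc": "sha256:bbb", "report-svc": "sha256:ccc"}


def affected_services(package: str, vulnerable_versions: set[str]) -> list[str]:
    """Services whose running image contains a vulnerable version of package."""
    return sorted(
        service
        for service, digest in RUNNING.items()
        if any(name == package and version in vulnerable_versions
               for name, version in SBOMS.get(digest, set()))
    )
```

Because the SBOMs were captured at build time, this query works even for services whose deployment predates the vulnerability disclosure.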

4.4.3. Cryptographic Attestation and SLSA Alignment

5. Implementation Example: A Data-Handling Microservice

- Security Policy (sec.rego): “Container image must have no ‘CRITICAL’ CVEs (CVE-202X-1234) unless a valid VEX attestation exists.”

- Infra Policy (infra.rego): “Kubernetes deployments must set readOnlyRootFilesystem: true and must not mount host volumes.”

- Data Privacy Policy (data.rego): “Static analysis results (SARIF) must not contain any finding with the pii-logging tag and a ‘HIGH’ severity.”
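The data privacy policy can be approximated over a SARIF log as follows; the framework itself would express it in Rego (data.rego), and Python is used here only for self-containment. SARIF places findings under `runs[].results[]`, each with a `ruleId`, optional `locations`, and an optional property bag. The assumption here, not guaranteed by every scanner, is that the tool records the `pii-logging` tag and a `severity` value in each result’s `properties`.

```python
def pii_logging_violations(sarif: dict) -> list[str]:
    """Return 'uri:line' for HIGH-severity results tagged pii-logging (assumed property layout)."""
    hits = []
    for run in sarif.get("runs", []):
        for result in run.get("results", []):
            props = result.get("properties", {})
            if "pii-logging" in props.get("tags", []) and props.get("severity") == "HIGH":
                for loc in result.get("locations", []):
                    phys = loc.get("physicalLocation", {})
                    uri = phys.get("artifactLocation", {}).get("uri", "?")
                    line = phys.get("region", {}).get("startLine", "?")
                    hits.append(f"{uri}:{line}")
    return hits


def data_policy_allows(sarif: dict) -> bool:
    """The data privacy policy passes only when no qualifying finding exists."""
    return not pii_logging_violations(sarif)
```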

5.1. Git Push (Commit and Pre-Flight):

- A developer attempts to commit code containing a new logging statement: logger.info("User logged in: " + user.email).

- A pre-commit hook (optional, but recommended) runs a local semgrep check. The check fails, blocking the commit as follows:

```text
# (Pre-commit hook output)
ERROR: PII-LOGGING VIOLATION (data.rego)
File: src/handlers.go:L52
logger.info("User logged in: " + user.email)
```

- The developer, now aware, refactors the code to: logger.info("User logged in: " + user.ID). This passes the pre-commit check.
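A pre-commit check of this kind can be as small as a pattern scan over staged files. The sketch below is a hypothetical stand-in for the Semgrep rule, not Semgrep itself: a regular expression flags logger calls whose arguments reference an `.email` field. The pattern is illustrative and would miss many real cases (aliased variables, messages built on earlier lines).

```python
import re

# Illustrative rule: a logger call whose argument list mentions an .email field.
PII_LOG_CALL = re.compile(r"\blogger\.\w+\(.*\.email\b", re.IGNORECASE)


def scan(path: str, text: str) -> list[str]:
    """Return 'path:line: source' entries for lines that appear to log an email address."""
    return [
        f"{path}:{n}: {line.strip()}"
        for n, line in enumerate(text.splitlines(), start=1)
        if PII_LOG_CALL.search(line)
    ]


def main(paths: list[str]) -> int:
    """Hook entry point: print findings and return a non-zero exit code if any exist."""
    findings = []
    for p in paths:
        with open(p, encoding="utf-8", errors="replace") as fh:
            findings += scan(p, fh.read())
    for f in findings:
        print("ERROR: PII-LOGGING VIOLATION", f)
    return 1 if findings else 0
```

A hook script would call `sys.exit(main(sys.argv[1:]))` with the staged file paths; the non-zero exit status is what blocks the commit.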

5.2. Build (Instrumentation and Data Collection Plane): The CI Pipeline (e.g., GitHub Actions, GitLab CI) Executes Several Parallel Jobs:

- build-image: Builds the Docker image user-profile-api:sha-abc123.

- scan-sast: semgrep --sarif… runs against the source. Because the pii-logging statement was removed before commit, the scan reports no pii-logging finding. It produces sast.sarif.

- scan-image: trivy image --format cyclonedx… generates sbom.cdx. trivy image --format json … generates cves.json.

- scan-iac: opa eval -d policies/infra.rego -i k8s/deployment.yaml "data.infra.deny" checks the deployment manifest (the query assumes the policy declares package infra with a deny rule). It produces iac-results.json.

- ingest-evidence: All artifacts (sast.sarif, sbom.cdx, cves.json, iac-results.json) are standardized into the Canonical Event Model (Section 4.1) and pushed to the Compliance Data Lakehouse. This links them all by the correlationID: git-commit-sha-abc123. Emerging AI-assisted lineage extraction techniques can further enhance this step by deriving implicit relationships from heterogeneous metadata sources [29].
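The linking step amounts to grouping evidence by correlationID and checking that each pipeline run delivered the complete set of expected artifacts before the Validation Plane is allowed to query it. A minimal sketch, assuming each ingested record carries the `correlationID` and a hypothetical `artifactType` label, one per build-stage job:

```python
from collections import defaultdict

EXPECTED = {"sast", "sbom", "cves", "iac-results"}  # one artifact type per scan job


def group_by_run(events: list[dict]) -> dict[str, dict[str, dict]]:
    """Index events as correlationID -> artifactType -> event (a later event replaces an earlier one)."""
    runs: dict[str, dict[str, dict]] = defaultdict(dict)
    for e in events:
        runs[e["correlationID"]][e["artifactType"]] = e
    return dict(runs)


def missing_evidence(runs: dict, correlation_id: str) -> set[str]:
    """Artifact types still missing for a run; an empty set means the evidence is complete."""
    return EXPECTED - set(runs.get(correlation_id, {}))
```

Gating on completeness prevents a validation query from silently passing just because one scan’s evidence has not arrived yet.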

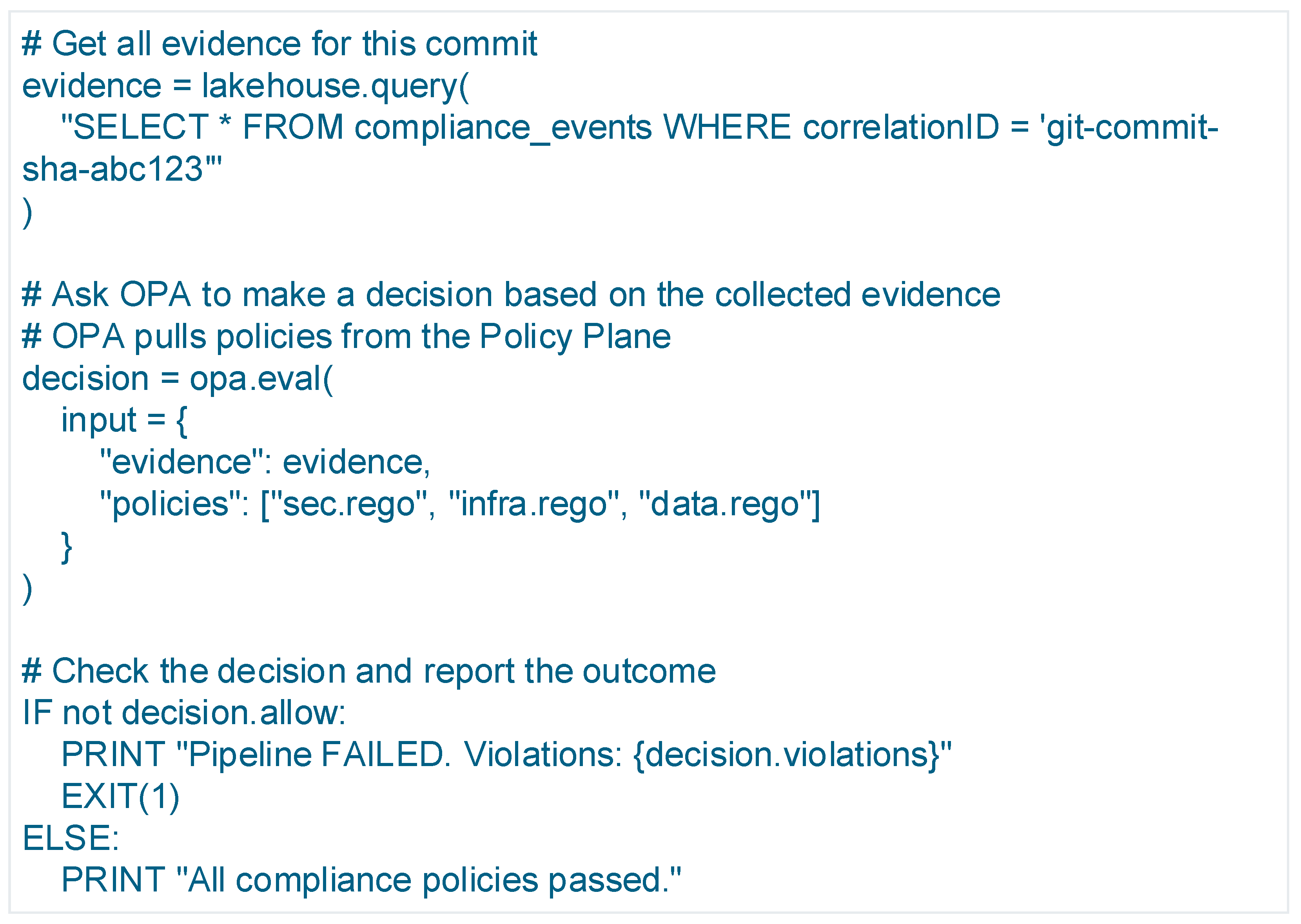

5.3. Test-and-Validate (Validation Plane): This Is a Blocking “Stage Gate” Job That Does Not Re-Run Scans; Instead, It Queries the Policy Plane (OPA) Using the Data Just Ingested into the Lakehouse (Figure 3)

5.4. Attest-and-Publish (Attestation Plane): Since the Validation Gate Passed, the Pipeline Now Proves It

- The pipeline generates a JSON attestation:

```json
{
  "predicateType": "https://slsa.dev/provenance/v0.2",
  "subject": [{"name": "user-profile-api", "digest": {"sha256": "abc123…"}}],
  "predicate": {
    "policy_evaluation": {
      "policy_bundle_version": "governance-policies:v1.4.2",
      "result": "PASSED",
      "evidence_correlation_id": "git-commit-sha-abc123"
    }
  }
}
```

- It then signs this attestation using Sigstore:

```bash
# Attach a signed attestation to the image digest
cosign attest --key "$MY_KEY" --type slsaprovenance \
  --predicate attestation.json \
  registry.mycorp.com/user-profile-api@sha256:abc123…
```

- The signed attestation is pushed to the OCI registry alongside the image, and the signature is recorded in the Rekor transparency log.
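Under the hood, cosign wraps the predicate in an in-toto Statement and signs it inside a DSSE envelope, where the signature covers a pre-authentication encoding (PAE) of the payload type and payload rather than the raw JSON. The sketch below builds such a Statement and its PAE bytes. It uses an HMAC purely as a stand-in signer (cosign uses ECDSA keys or Fulcio-issued certificates), and the digest value and predicate type URI in the usage are placeholders.

```python
import base64
import hashlib
import hmac
import json

PAYLOAD_TYPE = "application/vnd.in-toto+json"


def make_statement(name: str, sha256_hex: str, predicate_type: str, predicate: dict) -> bytes:
    """Serialize an in-toto Statement whose subject is identified by its SHA-256 digest."""
    statement = {
        "_type": "https://in-toto.io/Statement/v1",
        "subject": [{"name": name, "digest": {"sha256": sha256_hex}}],
        "predicateType": predicate_type,
        "predicate": predicate,
    }
    return json.dumps(statement, sort_keys=True).encode()


def pae(payload_type: str, payload: bytes) -> bytes:
    """DSSE v1 pre-authentication encoding; lengths are byte counts in ASCII decimal."""
    t = payload_type.encode()
    return b"DSSEv1 %d %b %d %b" % (len(t), t, len(payload), payload)


def envelope(payload: bytes, key: bytes) -> dict:
    # Stand-in signer: HMAC-SHA256 over the PAE (real DSSE uses asymmetric signatures).
    sig = hmac.new(key, pae(PAYLOAD_TYPE, payload), hashlib.sha256).digest()
    return {
        "payloadType": PAYLOAD_TYPE,
        "payload": base64.b64encode(payload).decode(),
        "signatures": [{"keyid": "", "sig": base64.b64encode(sig).decode()}],
    }
```

Signing the PAE rather than the bare JSON binds the payload type into the signature, so a verifier cannot be tricked into interpreting the same bytes as a different kind of document.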

5.5. Deploy (Runtime Enforcement):

- The developer (or an automated CD tool) submits k8s/deployment.yaml to the Kubernetes API.

- A Kyverno/OPA Gatekeeper admission controller (running in the cluster) intercepts the request.

- It performs an independent, real-time verification using Sigstore (Figure 4):

- Because the image sha256:abc123… has a valid attestation that matches the policy, the admission controller allows the pod to be scheduled. If an unattested or a failed-policy image were submitted, the API server would reject it.
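The admission decision can be sketched as policy logic applied after the attestation has been verified cryptographically (for example by cosign verify-attestation or the admission controller’s Sigstore integration). This sketch does not verify signatures itself. It checks that the image is referenced by digest, that the statement’s subject digest equals that digest, that the policy result is PASSED, and that the policy bundle version is on an allowlist. The predicate layout mirrors the example attestation in Section 5.4, with the digest assumed to be in in-toto’s `{"sha256": ...}` form; the allowlist is hypothetical.

```python
ALLOWED_BUNDLES = {"governance-policies:v1.4.2"}  # hypothetical allowlist


def admit_image(image_ref: str, statement: dict) -> tuple[bool, str]:
    """Decide admission for image_ref ('repo@sha256:<hex>') given an already-verified statement."""
    if "@sha256:" not in image_ref:
        return False, "image must be referenced by digest"
    digest = image_ref.split("@sha256:", 1)[1]
    subjects = {s.get("digest", {}).get("sha256") for s in statement.get("subject", [])}
    if digest not in subjects:
        return False, "attestation does not cover this image digest"
    evaluation = statement.get("predicate", {}).get("policy_evaluation", {})
    if evaluation.get("result") != "PASSED":
        return False, "policy evaluation did not pass"
    if evaluation.get("policy_bundle_version") not in ALLOWED_BUNDLES:
        return False, "policy bundle version not approved"
    return True, "admitted"
```

Requiring digest references (rather than mutable tags such as `:latest`) is what makes the subject comparison meaningful.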

6. Experimental Evaluation

6.1. Experimental Environment

- CPU: AMD FX-8120

- Memory: 16 GB DDR3 RAM

- Operating Environment: Local Docker runtime

- Toolchain: Docker (version 29.1.3), Syft (version 1.39.0), Trivy (version 0.68.2 using vulnerability DB version 2 updated at 3 January 2026 00:35:09 +0000 UTC), Open Policy Agent (OPA) (version 1.12.1), Python (version 3.13.2), Cosign (version 3.0.3)

- Execution Model: Serial pipeline execution via shell script

6.2. Pipeline Structure and Validation Methodology

- Build: Container image construction.

- Instrumentation: SBOM generation and vulnerability scanning.

- Policy Evaluation: Declarative compliance evaluation using OPA.

- Artifact Persistence: Ingestion of compliance artifacts into a queryable store.

- Attestation: Cryptographic signing of compliance results.

- Instrumentation Plane: SBOM and vulnerability artifacts,

- Validation Plane: Policy decisions,

- Policy Plane: Rego-based compliance rules, with cryptographic linkage enabling end-to-end provenance.

6.3. Policy Enforceability and Determinism

- Compliance rules are machine-enforceable,

- Policy decisions are deterministic given identical inputs,

- Policy logic remains decoupled from underlying tooling.
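One simple way to check the determinism property is to canonicalize the input, evaluate the policy repeatedly, and record the decision keyed by the input’s hash: identical inputs, including inputs whose JSON keys arrive in a different order, must map to exactly one decision. The policy below is a hypothetical stand-in for the Rego rules used in the experiment.

```python
import hashlib
import json


def canonical(doc: dict) -> bytes:
    return json.dumps(doc, sort_keys=True, separators=(",", ":")).encode()


def policy(doc: dict) -> dict:
    # Hypothetical rule: deny if any finding is CRITICAL.
    critical = sorted(f["id"] for f in doc.get("findings", []) if f.get("severity") == "CRITICAL")
    return {"allow": not critical, "critical": critical}


def check_determinism(doc: dict, runs: int = 5) -> tuple[str, dict]:
    """Evaluate repeatedly on the canonical input; raise if decisions differ.

    Returns (sha256 of canonical input, decision).
    """
    digest = hashlib.sha256(canonical(doc)).hexdigest()
    decisions = {json.dumps(policy(json.loads(canonical(doc))), sort_keys=True) for _ in range(runs)}
    if len(decisions) != 1:
        raise AssertionError(f"non-deterministic decision for input {digest}")
    return digest, json.loads(decisions.pop())
```

Storing the (input hash, decision) pair alongside the attestation lets an auditor re-run the same policy version later and confirm the recorded outcome.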

6.4. Provenance Completeness and Auditability

- Container image digest,

- Software Bill of Materials (SBOM),

- Vulnerability scan results,

- Policy evaluation output,

- Cryptographic attestation.

6.5. Pipeline Overhead Analysis

- Instrumentation dominates execution time, primarily due to SBOM generation, consistent with expectations for deep dependency analysis.

- Policy evaluation latency is negligible relative to scanning and build steps.

- Cryptographic attestation adds only seconds, even on legacy hardware.

- Total pipeline overhead remains well under one minute in a fully serial execution model.

6.6. Audit Readiness and Query Demonstration

- “Which policy evaluations validated this artifact?”

- “What vulnerabilities were present at build time?”

- “Which compliance rules were enforced prior to deployment?”
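The first two audit questions map onto ordinary SQL once evidence is stored relationally. The sketch uses Python’s built-in sqlite3 module as a stand-in for the queryable store used in the evaluation; the two-table schema, the policy identifiers, and the sample rows are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE policy_decisions (artifact_digest TEXT, policy_id TEXT, policy_version TEXT,
                               result TEXT, evaluated_at TEXT);
CREATE TABLE vulnerabilities (artifact_digest TEXT, cve_id TEXT, severity TEXT, scanned_at TEXT);
""")
conn.executemany("INSERT INTO policy_decisions VALUES (?, ?, ?, ?, ?)", [
    ("sha256:abc", "sec.cve-gate", "v1.4.2", "PASS", "2026-01-03T10:00:00Z"),
    ("sha256:abc", "infra.readonly-rootfs", "v1.4.2", "PASS", "2026-01-03T10:00:01Z"),
    ("sha256:def", "sec.cve-gate", "v1.4.2", "FAIL", "2026-01-03T11:00:00Z"),
])
conn.executemany("INSERT INTO vulnerabilities VALUES (?, ?, ?, ?)", [
    ("sha256:abc", "CVE-0000-0001", "MEDIUM", "2026-01-03T09:59:00Z"),
])


def validations_for(digest: str) -> list[tuple]:
    """Which policy evaluations validated this artifact?"""
    return conn.execute(
        "SELECT policy_id, policy_version FROM policy_decisions "
        "WHERE artifact_digest = ? AND result = 'PASS' ORDER BY policy_id",
        (digest,),
    ).fetchall()


def vulns_at_build(digest: str) -> list[tuple]:
    """What vulnerabilities were present at build time?"""
    return conn.execute(
        "SELECT cve_id, severity FROM vulnerabilities WHERE artifact_digest = ? ORDER BY cve_id",
        (digest,),
    ).fetchall()
```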

6.7. AI Governance Extensibility

- Training dataset provenance,

- Model version lineage,

- Evaluation and bias metrics,

- Deployment attestations.

6.8. Summary

- Architecturally sound,

- Operationally feasible,

- Low-overhead, even on constrained hardware,

- Audit-ready by construction,

- Extensible to AI governance use cases.

7. Discussion and Implications

7.1. Performance and Overhead Analysis

- CI Pipeline Latency: The primary overhead introduced in the “hot path” (the developer’s wait time) is the stage gates in the Validation Plane (Section 3.3).

- Scanning: Tools like semgrep, trivy, and tfsec are highly optimized and typically complete in seconds to low minutes, not hours. By running these jobs in parallel (as shown in Section 5.2), the added latency is the max() of the scan times, not the sum().

- Data Ingestion: The decoupled ingestion model (Section 4.1) using an event bus or object storage is asynchronous. The CI pipeline ”fires-and-forgets” the evidence artifacts, introducing negligible latency (e.g., <1 sec for an S3 put or Kafka produce).

- Validation Gate: The synchronous gate (Section 5.3) performs a policy query. OPA is designed for high-performance, sub-millisecond decision-making. Hence, the bottleneck is not the policy engine itself, but the query to the data lakehouse. For hot path validation, a ”read-after-write” pattern against the lakehouse (or even a simpler key-value store cache) is required.

- Attestation: Cryptographic signing (Section 5.4) is extremely fast (milliseconds).

- Overall Impact: We project that a mature CCF implementation, when properly parallelized, adds roughly 5–10% or less to the total pipeline execution time, an acceptable trade-off for the automated assurance and risk reduction it provides.

- Data Storage Cost: The artifacts (SBOMs, SARIF, attestations) are JSON or text and compress well. The data volume per pipeline run is in megabytes, not gigabytes. The storage cost in a cloud object store is minimal. The primary cost is in the query engine (e.g., Databricks, Snowflake, BigQuery) used to run the Compliance Data Lakehouse, which is a predictable operational expense.
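The max()-versus-sum() argument from the scanning bullet is easy to make concrete. The durations below are hypothetical placeholders, not measurements from Section 6; the function computes wall-clock time for a pipeline whose stages run in sequence while the jobs inside each stage run in parallel.

```python
def pipeline_latency(stages: list[dict[str, float]]) -> tuple[float, float]:
    """Return (serial_seconds, parallel_seconds) for sequential stages of concurrent jobs."""
    serial = sum(sum(jobs.values()) for jobs in stages)
    parallel = sum(max(jobs.values()) for jobs in stages)
    return serial, parallel


# Hypothetical durations in seconds.
STAGES = [
    {"build-image": 90.0},
    {"scan-sast": 40.0, "scan-image": 55.0, "scan-iac": 5.0},
    {"ingest-evidence": 1.0},
    {"validation-gate": 0.5},
    {"attest": 2.0},
]
serial, parallel = pipeline_latency(STAGES)
```

With these placeholder values the scan stage contributes 55 s (its slowest job) instead of 100 s, so the pipeline takes 148.5 s in parallel versus 193.5 s serially.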

7.2. Qualitative and Quantitative Benefits

- Audit Readiness and Cost Reduction: This is the most immediate quantitative benefit. The time required for auditors to gather Provided by Client (PBC) evidence is reduced from weeks of manual data calls (screenshots, interviews) to minutes. The Compliance Data Lakehouse (Section 4.2) and Lineage Graph (Section 4.3) provide a self-service, queryable, and verifiable data store that produces audit evidence on demand. The centrality of provenance and analytics governance in ensuring trustworthy decision-making in data-driven environments is well established in prior research [34].

- Mean Time to Remediation (MTTR) for Compliance: The framework acts as a “shift-left” mechanism for compliance. A violation (e.g., PII logging) is caught in the pre-commit or build phase (Section 5.1), not in a quarterly audit six months after deployment. The developer is notified in their native workflow (a failed pre-commit hook or PR check) and can remediate the issue immediately. This can reduce the MTTR for compliance-related issues from months to minutes.

- Reduction in ”Compliance Drift”: Compliance drift is the divergence of a running system’s state from its intended, as-code policy. By extending the CCF into runtime enforcement (Section 3.3) via admission controllers and service mesh policies, the system can prevent drift (e.g., rejecting a non-compliant kubectl apply) and detect it in real time.

7.3. Discussion: Future-Proofing and Advanced Applications

- From Blocking to Self-Healing: The framework can be extended from simply blocking non-compliant actions to automatically remediating them. A Kubernetes mutating admission controller, guided by the Policy Plane, can automatically add a required securityContext or a sidecar proxy to a deployment, and generate-style policies (e.g., Kyverno generate rules) can create a companion NetworkPolicy, enforcing compliance without developer intervention.

- Runtime Compliance (ZTA): The model naturally extends to a Zero-Trust Architecture (ZTA). As discussed in Section 3.3, a service mesh (e.g., Istio, Linkerd) can integrate OPA as an external authorizer. The OPA policy, now operating at runtime, can query the Compliance Data Lakehouse to make dynamic, attribute-based access control (ABAC) decisions. For example:

- Policy: “Allow service-A to access service-B only if service-A’s production attestation (from the CI pipeline) is less than 30 days old and service-B’s runtime attestation shows no unpatched critical CVEs.”

- AI/ML Governance (MLOps): This is the most critical future-proofing capability. Emerging AI regulations and frameworks (e.g., NIST AI RMF, EU AI Act) are fundamentally data-driven. The Multi-dimensional Lineage Graph (Section 4.3) provides a practical technical mechanism to satisfy these requirements. By extending the graph to include model_version, training_dataset_hash, and bias_test_results, an organization can produce verifiable evidence of its compliance with AI regulations. The platform can answer audit queries such as “Show me all production models that were trained on dataset X” and enforce rules such as “Block the deployment of any model whose disparate_impact ratio falls below 0.8 (the common four-fifths threshold).” A broad survey of AI governance challenges confirms the necessity of such traceability mechanisms to ensure accountability, transparency, and regulatory alignment across AI-enabled systems [35].
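The example ABAC policy from the runtime-compliance discussion can be written as a small decision function over attestation metadata that a sidecar’s external authorizer might fetch. This Python sketch is hypothetical (it is not the OPA-Envoy plugin or the Istio API), and the record shapes are assumptions: it allows the call only if the caller’s CI attestation is less than 30 days old and the callee’s runtime attestation reports zero unpatched critical CVEs.

```python
from datetime import datetime, timedelta, timezone

MAX_ATTESTATION_AGE = timedelta(days=30)


def allow_call(caller: dict, callee: dict, now: datetime) -> bool:
    """ABAC check for caller -> callee.

    caller: {"ci_attested_at": timezone-aware datetime}
    callee: {"runtime_attestation": {"unpatched_critical_cves": int}}
    Missing attestations deny the call (fail closed).
    """
    attested_at = caller.get("ci_attested_at")
    if attested_at is None or now - attested_at >= MAX_ATTESTATION_AGE:
        return False
    runtime = callee.get("runtime_attestation")
    return runtime is not None and runtime.get("unpatched_critical_cves", 1) == 0
```

Failing closed on missing data matters here: an authorizer that treats “no attestation found” as “no problems found” would quietly reintroduce compliance drift.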

7.4. Limitations and Scope Constraints

8. Conclusions

- The Policy Plane defines compliance as executable, version-controlled code (e.g., Rego).

- The Instrumentation Plane collects verifiable evidence (SBOMs, SARIF, scan results) from the pipeline.

- The Validation and Attestation Plane uses cryptographic tools (Sigstore) to create non-repudiable proof that policies were enforced.

Funding

Data Availability Statement

Conflicts of Interest

References

- Qureshi, J.N.; Farooq, M.S. ChainAgile: Enhancing agile DevOps using blockchain integration. PLoS ONE 2024, 19, e0299324.

- Pourmajidi, W.; Zhang, L.; Steinbacher, J.; Erwin, T.; Miranskyy, A. A Reference Architecture for Governance of Cloud Native Applications. arXiv 2025, arXiv:2302.11617v2.

- Lourenço, B.; Adão, P.; Ferreira, J.F.; Marques, M.M.; Vaz, C. Structuring Security: A Survey of Cybersecurity Ontologies, Semantic Log Processing, and LLM Applications. arXiv 2025, arXiv:2510.16610.

- Chittala, S. Securing DevOps Pipelines: Automating Security in DevSecOps Frameworks. J. Recent Trends Comput. Sci. Eng. 2024, 12, 31–44.

- Hevner, A.R.; March, S.T.; Park, J.; Ram, S. Design Science in Information Systems Research. Manag. Inf. Syst. Q. 2004, 28, 75–105.

- ISO/IEC 27001:2022; Information Security, Cybersecurity and Privacy Protection—Information Security Management Systems. International Organization for Standardization: Geneva, Switzerland, 2022.

- AICPA. SOC 2 Trust Services Criteria; AICPA: Durham, NC, USA, 2017.

- European Parliament. General Data Protection Regulation (GDPR); European Parliament: Strasbourg, France, 2016; Available online: https://gdpr-info.eu/ (accessed on 15 January 2026).

- U.S. Department of Health & Human Services. Health Insurance Portability and Accountability Act (HIPAA); U.S. Department of Health & Human Services: Washington, DC, USA, 1996; Available online: https://aspe.hhs.gov/reports/health-insurance-portability-accountability-act-1996 (accessed on 15 January 2026).

- PCI Security Standards Council. Payment Card Industry Data Security Standard (PCI-DSS); PCI Security Standards Council: Wakefield, MA, USA, 2022; Available online: https://www.pcisecuritystandards.org/ (accessed on 15 January 2026).

- NIST. AI Risk Management Framework 1.0; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2023; Available online: https://www.nist.gov/itl/ai-risk-management-framework (accessed on 15 January 2026).

- ISO/IEC 42001:2023; Information Technology—Artificial Intelligence—Management System. International Organization for Standardization: Geneva, Switzerland, 2023.

- IEEE. P3395 Draft Standard for AI Risk Management; IEEE: New York, NY, USA, 2024; Available online: https://standards.ieee.org/ieee/3395/11378/ (accessed on 15 January 2026).

- Open Policy Agent (OPA). 2023. Available online: https://www.openpolicyagent.org (accessed on 1 February 2026).

- Kyverno: Kubernetes Native Policy Management. 2023. Available online: https://kyverno.io (accessed on 1 February 2026).

- SLSA: Supply-Chain Levels for Software Artifacts. 2023. Available online: https://slsa.dev (accessed on 1 February 2026).

- The Sigstore Project. 2023. Available online: https://sigstore.dev (accessed on 1 February 2026).

- Torres-Arias, S.; Afzali, H.; Kuppusamy, T.K.; Curtmola, R.; Cappos, J. in-toto: Providing Farm-to-Table Guarantees for Bits and Bytes. In Proceedings of the 28th USENIX Security Symposium (USENIX Security 19), Santa Clara, CA, USA, 14–16 August 2019.

- OWASP Foundation. CycloneDX SBOM Standard. 2023. Available online: https://cyclonedx.org (accessed on 1 February 2026).

- OASIS. Static Analysis Results Interchange Format (SARIF); OASIS Open: Woburn, MA, USA, 2022.

- dbt Labs Documentation. 2023. Available online: https://getdbt.com (accessed on 1 February 2026).

- Great Expectations Documentation. 2023. Available online: https://docs.greatexpectations.io (accessed on 1 February 2026).

- Chandramouli, R.; Kautz, F.; Torres-Arias, S. NIST SP 800-204D; Strategies for the Integration of Software Supply Chain Security in DevSecOps CI/CD Pipelines. National Institute of Standards and Technology: Gaithersburg, MD, USA, 2024.

- Kamaluddin, K. Security Policy Enforcement and Behavioral Threat Detection in DevSecOps Pipelines. Eur. J. Technol. 2022, 6, 10–30.

- LF AI & Data Foundation. OpenLineage Specification. 2023. Available online: https://openlineage.io (accessed on 1 February 2026).

- Databricks. What Is a Data Lakehouse? 2021. Available online: https://databricks.com/glossary/data-lakehouse (accessed on 1 February 2026).

- Verma, R.; Shrivastava, P.; Merla, N. Tracing the Path: Data Lineage and Its Impact on Data Governance. Int. J. Glob. Innov. Solut. 2024, 1, 1–10.

- Sweet, E. Data Lineage and Compliance. ISACA J. 2016, 5, 1–4.

- Li, Z.; Guo, W.; Gao, Y.; Yang, D.; Kang, L. A Large Language Model–Based Approach for Data Lineage Parsing. Electronics 2025, 14, 1762.

- Tyagi, D.; Sharada Devi, P.P. The Importance of Data and Analytics Provenance and Governance in the Realm of Datafication. Int. J. Comput. Trends Technol. 2022, 70, 28–33.

- Adeyinka, A. Automated Compliance Management in Hybrid Cloud Architectures. World J. Adv. Eng. Technol. Sci. 2023, 10, 283–297.

- Antiya, D.S. Compliance as Code: Automating Compliance in Cloud Systems. Int. J. Recent Innov. Trends Comput. Commun. 2020, 8, 42–49.

- Zakharchenko, A. Continuous Compliance Framework (CCF) Experimental Pipeline. GitHub Repository. 2026. Available online: https://github.com/zakhalex/ccf-experiment (accessed on 4 January 2026).

- Lu, Q.; Zhu, L.; Xu, X.; Whittle, J.; Zowghi, D.; Jacquet, A. Responsible AI Pattern Catalogue: A Collection of Best Practices for AI Governance and Engineering. arXiv 2022, arXiv:2209.04963.

- Batool, A.; Zowghi, D.; Bano, M. AI Governance: A Systematic Literature Review. AI Ethics 2025, 5, 3265–3279.

| Dimension | Qureshi and Farooq (2024)–ChainAgile [1] | Pourmajidi et al. (2025)–Cloud-Native Governance [2] | Lourenço et al. (2025)–Security Ontologies Survey [3] | Chittala (2024)–DevSecOps Security Frameworks [4] | CCF |
|---|---|---|---|---|---|
| Primary Focus | Agile DevOps coordination using blockchain-backed logs | Governance reference architecture for cloud-native systems | Conceptual survey of security ontologies and semantic processing | Automation of security checks in DevSecOps pipelines | Continuous compliance as a data engineering problem |
| Scope of Compliance | Process integrity and traceability of DevOps actions | Policy and governance control points | Conceptual mapping of security knowledge | Security controls and pipeline hardening | End-to-end regulatory, security, and governance compliance |
| Pipeline Integration | Partial (log anchoring after events) | Architectural guidance, not executable | Not applicable (survey) | CI/CD-stage security automation | Native, continuous CI/CD integration |
| Evidence Collection Model | Immutable logs via blockchain | Event and control abstractions | Conceptual discussion of semantic logs | Tool-specific scan outputs | Canonical event model for heterogeneous artifacts |
| Historical State Reconstruction | Limited (log inspection) | Conceptual (no concrete mechanism) | Discussed conceptually | Not addressed | Deterministic time-travel queries via lakehouse |
| Queryability of Compliance State | Manual or ad hoc log analysis | Not explicitly supported | Discussed at a conceptual level | Tool-dependent, siloed | Unified, SQL-accessible compliance data product |
| Artifact Unification | No | Partial (conceptual abstractions) | Ontological classification | No | Yes (SBOMs, scans, attestations as versioned data) |
| Automated Policy Enforcement | Not addressed | Governance guidance, not enforcement | Not applicable | Rule-based security checks | Data-driven, lineage-aware PaC enforcement |
| Audit Readiness | Improves log integrity | Conceptual governance support | Theoretical | Limited to security findings | Auditable-by-default with cryptographic attestations |
| Validation Approach | Conceptual + illustrative examples | Architectural modeling | Literature survey | Descriptive framework | End-to-end experimental validation |
| Key Limitation | Lacks active enforcement and data integration | No concrete data model or evaluation | No operational architecture | Siloed security focus | - |
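The CCF column above describes a "canonical event model for heterogeneous artifacts" in which SBOMs, scans, and attestations are treated as versioned data. As an illustration only, the sketch below flattens a SARIF 2.1.0 log and a CycloneDX-style SBOM with a `vulnerabilities` section into a single record type. The `EvidenceEvent` fields, the mapping from SARIF levels to a severity scale, and every tool name and identifier are assumptions for this example, not the schema used in the CCF experiment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvidenceEvent:
    """Hypothetical canonical evidence record (field names are illustrative)."""
    artifact_digest: str  # digest of the build artifact the finding refers to
    source_format: str    # "sarif" or "cyclonedx"
    tool: str             # producing tool
    finding_id: str       # SARIF ruleId or CycloneDX vulnerability id
    severity: str         # normalized: info < low < medium < high < critical


SEVERITY_ORDER = ["info", "low", "medium", "high", "critical"]

# Assumed mapping from SARIF result levels onto the normalized scale.
SARIF_LEVEL_TO_SEVERITY = {"error": "high", "warning": "medium", "note": "low", "none": "info"}


def from_sarif(doc, artifact_digest):
    """Flatten every result of every SARIF run into EvidenceEvents."""
    events = []
    for run in doc.get("runs", []):
        tool = run.get("tool", {}).get("driver", {}).get("name", "unknown")
        for result in run.get("results", []):
            # Simplification: a missing level is treated as "warning".
            level = result.get("level", "warning")
            events.append(EvidenceEvent(
                artifact_digest, "sarif", tool,
                result.get("ruleId", "unknown"),
                SARIF_LEVEL_TO_SEVERITY.get(level, "info"),
            ))
    return events


def from_cyclonedx(bom, artifact_digest, tool):
    """Turn each CycloneDX vulnerability into one event at its worst rated severity."""
    events = []
    for vuln in bom.get("vulnerabilities", []):
        rated = [r.get("severity") for r in vuln.get("ratings", [])
                 if r.get("severity") in SEVERITY_ORDER]
        worst = max(rated, key=SEVERITY_ORDER.index, default="info")
        events.append(EvidenceEvent(
            artifact_digest, "cyclonedx", tool, vuln.get("id", "unknown"), worst))
    return events


# Placeholder inputs: tool names, rule id, and CVE id are made up for illustration.
sarif_doc = {"version": "2.1.0", "runs": [{
    "tool": {"driver": {"name": "example-sast"}},
    "results": [{"ruleId": "no-pii-logging", "level": "error",
                 "message": {"text": "PII written to log"}}],
}]}
bom = {"bomFormat": "CycloneDX", "vulnerabilities": [
    {"id": "CVE-0000-0002", "ratings": [{"severity": "medium"}, {"severity": "critical"}]},
]}

events = from_sarif(sarif_doc, "sha256:abc") + from_cyclonedx(bom, "sha256:abc", "example-scanner")
for e in events:
    print(e.source_format, e.finding_id, e.severity)
```

Once both formats share one record type, the same downstream policy or query logic can be applied regardless of which tool produced the evidence, which is the property the "Artifact Unification" row refers to.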

| Standard/Regulation | Control Objective | Example Control | CCF Plane | Artifact |
|---|---|---|---|---|
| ISO/IEC 27001 | Change management | Changes must be authorized and traceable | Policy Plane | Rego policy |
| SOC 2 | System integrity | Unauthorized changes prevented | Validation Plane | Admission decision |
| PCI DSS | Vulnerability management | No critical CVEs in production | Instrumentation Plane | CVE scan, SBOM |
| NIST AI RMF | Transparency | Training data provenance | Data Lakehouse | Lineage graph |
| GDPR | Data minimization | No PII logging | Policy Plane | SARIF result |
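Because each row of the mapping table ties a control to a CCF plane and to the evidence artifact(s) that demonstrate it, the table can itself be kept as data. The sketch below is a hypothetical encoding: the rows are transcribed from the table, but the `ControlMapping` structure and the `missing_evidence` coverage check are illustrative assumptions, not part of the published framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ControlMapping:
    standard: str
    objective: str
    control: str
    plane: str
    artifacts: tuple  # evidence artifacts that must exist for the control


# Rows transcribed from the mapping table; the registry structure is illustrative.
CONTROL_MAP = [
    ControlMapping("ISO/IEC 27001", "Change management",
                   "Changes must be authorized and traceable", "Policy Plane", ("Rego policy",)),
    ControlMapping("SOC 2", "System integrity",
                   "Unauthorized changes prevented", "Validation Plane", ("Admission decision",)),
    ControlMapping("PCI DSS", "Vulnerability management",
                   "No critical CVEs in production", "Instrumentation Plane", ("CVE scan", "SBOM")),
    ControlMapping("NIST AI RMF", "Transparency",
                   "Training data provenance", "Data Lakehouse", ("Lineage graph",)),
    ControlMapping("GDPR", "Data minimization",
                   "No PII logging", "Policy Plane", ("SARIF result",)),
]


def controls_for_plane(plane):
    """All controls whose evidence is produced or enforced in the given plane."""
    return [m for m in CONTROL_MAP if m.plane == plane]


def missing_evidence(collected):
    """(standard, control) pairs for which at least one required artifact is absent."""
    return [(m.standard, m.control) for m in CONTROL_MAP
            if not set(m.artifacts) <= set(collected)]


# Hypothetical set of artifacts gathered for one release.
collected = {"Rego policy", "CVE scan", "SBOM", "SARIF result"}
print([m.standard for m in controls_for_plane("Policy Plane")])
print(missing_evidence(collected))
```

With the sample input, the first line lists the ISO/IEC 27001 and GDPR controls, and the second reports that the SOC 2 and NIST AI RMF controls still lack an admission decision and a lineage graph, respectively.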

| Capability | Traditional GRC | Policy-as-Code (OPA/Kyverno) | CI-Only Compliance | CCF (This Work) |
|---|---|---|---|---|
| Pre-deploy enforcement | No | Yes | Yes | Yes |
| Runtime enforcement | No | Partial | No | Yes |
| End-to-end provenance | No | No | Partial | Yes |
| Queryable audit evidence | No | No | No | Yes |
| Cryptographic attestation | No | No | Partial | Yes |
| AI/ML lineage support | No | No | No | Yes |

| Stage | Mean (ms) | Min (ms) | Max (ms) | Std Dev (ms) |
|---|---|---|---|---|
| Build | 6862 | 5799 | 7557 | 486 |
| SBOM Generation | 28,493 | 25,494 | 32,191 | 2187 |
| Vulnerability Scan | 1057 | 855 | 1373 | 163 |
| Policy Evaluation | 413 | 296 | 524 | 66 |
| Artifact Ingestion | 1917 | 1434 | 3395 | 552 |
| Attestation | 2212 | 1777 | 2805 | 345 |
| Total Pipeline | 41,287 | 38,556 | 44,988 | 2062 |
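The timing table reports absolute means; expressing each stage as a share of the mean total makes the cost distribution easier to read. The short calculation below uses only the mean values from the table. The names `STAGE_MEANS_MS` and `stage_shares` are introduced here for illustration.

```python
# Mean stage durations (ms) transcribed from the timing table.
STAGE_MEANS_MS = {
    "Build": 6862,
    "SBOM Generation": 28_493,
    "Vulnerability Scan": 1057,
    "Policy Evaluation": 413,
    "Artifact Ingestion": 1917,
    "Attestation": 2212,
}
TOTAL_MEAN_MS = 41_287


def stage_shares(stages, total):
    """Percentage of the mean total pipeline time taken by each stage's mean."""
    return {name: round(100 * ms / total, 1) for name, ms in stages.items()}


shares = stage_shares(STAGE_MEANS_MS, TOTAL_MEAN_MS)
# Time in the total that the table does not attribute to any listed stage.
unattributed_ms = TOTAL_MEAN_MS - sum(STAGE_MEANS_MS.values())

for name, pct in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{name:<20} {pct:5.1f}%")
print(f"Unattributed: {unattributed_ms} ms")
```

On these figures, SBOM generation accounts for about 69.0% of the mean end-to-end time and build for about 16.6%, while policy evaluation is about 1.0%. The listed stage means sum to 40,954 ms, leaving 333 ms (about 0.8%) of the mean total that the table does not assign to any stage.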

| Dimension | Validation Method | Evidence Type |
|---|---|---|
| Architectural Soundness | Design Analysis | Reference Architecture |
| Policy Enforceability | Scenario Execution | Policy Decisions |
| Provenance Completeness | Lineage Inspection | Linked Artifacts |
| Pipeline Overhead | Scoped Measurements | Timing Metrics |
| Audit Readiness | Query Demonstration | Sample Queries |
| AI Governance Extensibility | Conceptual Extension | Lineage Schema |
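Audit readiness is validated by query demonstration. The queries themselves are not reproduced in this section, so the following is only a sketch of the kind of point-in-time audit question a SQL-accessible evidence store can answer, using Python's built-in `sqlite3` as a stand-in for the lakehouse. The two tables, their columns, the control identifier, and all sample rows are hypothetical.

```python
import sqlite3

# Hypothetical evidence and deployment tables (not the CCF experiment's schema).
SCHEMA = """
CREATE TABLE compliance_events (
    artifact_digest TEXT NOT NULL,
    control_id      TEXT NOT NULL,
    passed          INTEGER NOT NULL,  -- 1 = pass, 0 = fail
    recorded_at     TEXT NOT NULL      -- ISO-8601 UTC; uniform format keeps string order = time order
);
CREATE TABLE deployments (
    artifact_digest TEXT NOT NULL,
    environment     TEXT NOT NULL,
    deployed_at     TEXT NOT NULL
);
"""

# Which production deployments had a failing control, judged by the latest
# evidence recorded at or before the moment of deployment?
AUDIT_QUERY = """
SELECT d.artifact_digest, d.deployed_at, e.control_id
FROM deployments AS d
JOIN compliance_events AS e
  ON e.artifact_digest = d.artifact_digest
 AND e.recorded_at <= d.deployed_at
WHERE d.environment = 'production'
  AND e.passed = 0
  AND e.recorded_at = (
      SELECT MAX(e2.recorded_at)
      FROM compliance_events AS e2
      WHERE e2.artifact_digest = e.artifact_digest
        AND e2.control_id = e.control_id
        AND e2.recorded_at <= d.deployed_at)
ORDER BY d.deployed_at;
"""

conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
conn.executemany("INSERT INTO compliance_events VALUES (?, ?, ?, ?)", [
    ("sha256:aaa", "no-critical-cve", 0, "2026-01-10T09:00:00Z"),
    ("sha256:aaa", "no-critical-cve", 1, "2026-01-10T11:00:00Z"),  # later fix
    ("sha256:bbb", "no-critical-cve", 1, "2026-01-10T09:30:00Z"),
])
conn.executemany("INSERT INTO deployments VALUES (?, ?, ?)", [
    ("sha256:aaa", "production", "2026-01-10T10:00:00Z"),  # deployed while failing
    ("sha256:aaa", "production", "2026-01-10T12:00:00Z"),  # deployed after the fix
    ("sha256:bbb", "production", "2026-01-10T10:00:00Z"),
])
rows = conn.execute(AUDIT_QUERY).fetchall()
print(rows)
conn.close()
```

Only the 10:00 deployment of `sha256:aaa` is reported, because the passing evidence for that artifact was recorded after it went live. A lakehouse table format could instead use native snapshot reads (for example, Delta Lake's `TIMESTAMP AS OF`) to see the evidence table as it stood at deployment time, rather than filtering on a `recorded_at` column.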

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Zakharchenko, A. Integrating Continuous Compliance into DevSecOps Pipelines: A Data Engineering Perspective. Software 2026, 5, 6. https://doi.org/10.3390/software5010006