Dynamic Multi-Objective Controller Placement in SD-WAN: A GMM-MARL Hybrid Framework

Abstract

1. Introduction

- Novel Hybrid Architecture: The integration of GMM clustering with MARL represents the first systematic approach to combining probabilistic clustering with multi-agent reinforcement learning for controller placement optimization.

- Adaptive Multi-Objective Optimization: The incorporation of the CRITIC method for dynamic objective weighting provides a principled approach to multi-objective optimization that automatically adapts to network characteristics without requiring manual parameter tuning.

- Scalable Distributed Learning: The CTDE-based MARL implementation enables distributed optimization that scales linearly with network size while maintaining cooperative behaviour between agents.

- Comprehensive Evaluation Framework: The development of a multi-metric evaluation framework that considers latency, load balancing, inter-controller communication, and dynamic adaptation capabilities provides a more holistic assessment methodology for controller placement algorithms.

- Real-World Validation: Extensive experimental evaluation using real-world network topologies from the Internet Topology Zoo demonstrates the practical applicability of the proposed approach. The evaluation encompasses both static performance comparison and dynamic adaptation scenarios, providing comprehensive validation of the framework’s effectiveness.

2. Background and Related Works

2.1. Software-Defined Networking and Controller Placement Fundamentals

2.2. Static Controller Placement Approaches

2.3. Dynamic Controller Placement and Adaptation

2.4. Machine Learning and Artificial Intelligence Approaches

2.5. Multi-Objective Optimization in Controller Placement

2.6. Hybrid Approaches and Advanced Techniques

3. Methodology

3.1. Problem Formulation

- Each node $v_i \in V$ must be assigned to exactly one controller;

- Controller capacity: $\sum_{v_i \in S_j} d_i \le Cap_j$, where $S_j$ is the set of nodes assigned to controller $c_j$, $d_i$ is the demand of node $v_i$, and $Cap_j$ is the capacity of controller $c_j$;

- Latency bound: $l(v_i, c_j) \le L_{max}$ for all node-controller pairs.
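The three constraints can be checked mechanically for any candidate solution. The Python sketch below is illustrative only: the helper name `is_feasible`, the array layout (an N × K node-to-controller latency matrix plus one controller index per node) and the reading of the latency bound as applying to each node and its assigned controller are assumptions, not the authors' implementation.

```python
import numpy as np


def is_feasible(assign, demand, capacity, latency, l_max):
    """Check the Section 3.1 constraints for one candidate solution (hypothetical helper).

    assign[i]:     index j of the controller serving node v_i (one entry per node,
                   so every node has exactly one controller by construction)
    demand[i]:     demand d_i of node v_i
    capacity[j]:   capacity Cap_j of controller c_j
    latency[i, j]: latency l(v_i, c_j), an N x K matrix
    l_max:         latency bound L_max
    """
    assign = np.asarray(assign, dtype=int)
    demand = np.asarray(demand, dtype=float)
    capacity = np.asarray(capacity, dtype=float)
    latency = np.asarray(latency, dtype=float)

    # Single assignment: exactly one valid controller index per node.
    if assign.shape != demand.shape or assign.min() < 0 or assign.max() >= len(capacity):
        return False

    # Capacity: the total demand of S_j must not exceed Cap_j.
    load = np.bincount(assign, weights=demand, minlength=len(capacity))
    if np.any(load > capacity):
        return False

    # Latency bound (assumption: checked between each node and its assigned controller).
    served = latency[np.arange(len(assign)), assign]
    return bool(np.all(served <= l_max))
```

For example, with three unit-demand nodes, two controllers of capacity 2 and `l_max = 2`, the assignment `[0, 0, 1]` is feasible, whereas `[0, 0, 0]` overloads controller 0.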

3.2. Network-Aware Hybrid Distance Metric

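The network-aware hybrid distance between nodes $i$ and $j$ combines four normalized components (primes denote normalized values, as in Algorithm 1):

$$d_{NA}(i,j) = \alpha\, d'_{geo}(i,j) + \beta\, d'_{lat}(i,j) + \gamma\, d'_{topo}(i,j) - \delta\, R'(i,j)$$

where:

- $\alpha, \beta, \gamma, \delta$ are adaptive weight parameters satisfying $\alpha + \beta + \gamma + \delta = 1$;

- $d_{geo}(i,j)$ is the geodesic distance between nodes $i$ and $j$, calculated using the Haversine formula;

- $d_{lat}(i,j)$ is the propagation latency, i.e., the physical distance divided by the signal propagation speed;

- $d_{topo}(i,j)$ is the topological distance, measured as the minimum hop count between nodes $i$ and $j$ in the network graph;

- $R(i,j)$ is the reliability factor ($0 \le R(i,j) \le 1$) measuring link quality based on historical performance metrics; it enters with a negative sign, so more reliable node pairs appear closer.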
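A minimal Python sketch of the hybrid distance follows, assuming min-max scaling as the normalization behind the primed terms, a propagation speed of about 200 km/ms (roughly two thirds of the speed of light, typical of fibre), a connected topology given as an adjacency list, and a precomputed reliability matrix. All function names and both constants are illustrative assumptions rather than the authors' code.

```python
import math
from collections import deque

import numpy as np

EARTH_RADIUS_KM = 6371.0   # mean Earth radius
SPEED_KM_PER_MS = 200.0    # assumption: ~2/3 of c, typical for optical fibre


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle (geodesic) distance between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2.0 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def hop_counts(adj, src):
    """Minimum hop count from src to every node via breadth-first search."""
    dist = [math.inf] * len(adj)
    dist[src] = 0
    queue = deque([src])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if dist[v] == math.inf:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def min_max(m):
    """Min-max scale a matrix to [0, 1]; a constant matrix becomes all zeros."""
    lo, hi = m.min(), m.max()
    return np.zeros_like(m) if hi == lo else (m - lo) / (hi - lo)


def hybrid_distance(coords, adj, reliability, weights=(0.25, 0.25, 0.25, 0.25)):
    """d_NA = a*d'_geo + b*d'_lat + g*d'_topo - d*R' for a connected graph."""
    a, b, g, d = weights
    n = len(coords)
    d_geo = np.array([[haversine_km(*coords[i], *coords[j]) for j in range(n)]
                      for i in range(n)])
    d_lat = d_geo / SPEED_KM_PER_MS                      # propagation delay in ms
    d_topo = np.array([hop_counts(adj, i) for i in range(n)], dtype=float)
    r = np.asarray(reliability, dtype=float)             # R(i, j) in [0, 1]
    return (a * min_max(d_geo) + b * min_max(d_lat)
            + g * min_max(d_topo) - d * min_max(r))
```

The default weights match the equal 0.25 values listed in Appendix A. Note that when latency is derived purely from distance, $d'_{lat}$ coincides with $d'_{geo}$ after min-max scaling; measured latencies would make the two terms differ. The result can also be negative for highly reliable pairs because of the subtracted reliability term.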

3.3. Gaussian Mixture Model Framework

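The GMM models the distribution of node feature vectors $x_i$ as $p(x_i) = \sum_{k=1}^{K} \pi_k \, \mathcal{N}(x_i \mid \mu_k, \Sigma_k)$, where:

- $\pi_k$ is the mixing coefficient for component $k$, satisfying $\sum_{k=1}^{K} \pi_k = 1$ and $0 \le \pi_k \le 1$;

- $\mu_k$ is the mean vector (centre) of the $k$-th Gaussian distribution;

- $\Sigma_k$ is the covariance matrix capturing the spread of component $k$;

- $\mathcal{N}(x \mid \mu_k, \Sigma_k)$ denotes the multivariate normal distribution.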

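1. E-step: compute the responsibility $\gamma_{ik}$, the probability that node $i$ belongs to controller cluster $k$, as in (3):

$$\gamma_{ik} = \frac{\pi_k \, \mathcal{N}(x_i \mid \mu_k, \Sigma_k)}{\sum_{j=1}^{K} \pi_j \, \mathcal{N}(x_i \mid \mu_j, \Sigma_j)}$$

2. M-step: update the model parameters from the computed responsibilities, as in (4)–(6), with $N_k = \sum_{i=1}^{N} \gamma_{ik}$:

$$\mu_k = \frac{1}{N_k} \sum_{i=1}^{N} \gamma_{ik} x_i, \qquad \Sigma_k = \frac{1}{N_k} \sum_{i=1}^{N} \gamma_{ik} (x_i - \mu_k)(x_i - \mu_k)^\top, \qquad \pi_k = \frac{N_k}{N}$$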

Algorithm 1: Network-Aware GMM Controller Placement

Input: node coordinates {φi, λi} for i = 1, …, N
Input: weight parameters α, β, γ, δ
Input: Earth radius r, propagation speed v, base cost c0, cost factor c1, decay factor κ
Input: number of clusters K, distance matrix DNA ∈ ℝ^(N×N)
Output: controller positions μk and node-cluster assignments γik
1: Initialize environment:
2: for each node pair (i, j) do
3:   DNA(i,j) ← α·d′geo(i,j) + β·d′lat(i,j) + γ·d′topo(i,j) − δ·R′(i,j)
4: end for
5: Initialize GMM parameters: initial means μk, Σk ← I, πk ← 1/K
6: repeat
7:   E-step:
8:   for each node i and cluster k do
9:     Compute responsibility: γik ← πk·N(xi | μk, Σk) / Σ_{j=1}^{K} πj·N(xi | μj, Σj)
10:  end for
11:  M-step:
12:  for each cluster k do
13:    Nk ← Σ_{i=1}^{N} γik
14:    Update mean: μk ← (1/Nk)·Σ_{i=1}^{N} γik·xi
15:    Update covariance: Σk ← (1/Nk)·Σ_{i=1}^{N} γik·(xi − μk)(xi − μk)^T
16:    Update mixing coefficient: πk ← Nk/N
17:  end for
18:  Evaluate log-likelihood L(θ) and check convergence
19: until change in L(θ) < ε
20: Assign each node i to cluster k = arg max_k γik
21: return controller positions μk and assignments γik
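For readers who want to reproduce the EM loop of Algorithm 1, a minimal NumPy sketch follows. It clusters raw feature vectors $x_i$ (for example, node coordinates) and does not show how the hybrid distance matrix DNA enters the model. The farthest-first initialisation of the means, the small diagonal regulariser `reg`, and the names `fit_gmm` and `gaussian_logpdf` are assumptions made for illustration; the defaults `eps = 0.001` and `max_iter = 100` follow Appendix A.

```python
import numpy as np


def gaussian_logpdf(X, mean, cov):
    """Log-density of N(x | mean, cov) for every row of X (via Cholesky)."""
    d = X.shape[1]
    L = np.linalg.cholesky(cov)
    z = np.linalg.solve(L, (X - mean).T)               # whitened residuals, shape (d, n)
    log_det = 2.0 * np.sum(np.log(np.diag(L)))
    return -0.5 * (d * np.log(2.0 * np.pi) + log_det + np.sum(z ** 2, axis=0))


def fit_gmm(X, K, eps=1e-3, max_iter=100, reg=1e-6):
    """EM for a full-covariance GMM; returns means, responsibilities, hard labels."""
    X = np.asarray(X, dtype=float)
    n, d = X.shape
    # Initialisation (assumption): farthest-first choice of K points as means.
    idx = [0]
    for _ in range(1, K):
        d2 = ((X[:, None, :] - X[idx][None, :, :]) ** 2).sum(axis=-1).min(axis=1)
        idx.append(int(d2.argmax()))
    mu = X[idx].copy()
    sigma = np.stack([np.eye(d) for _ in range(K)])    # Sigma_k <- I
    pi = np.full(K, 1.0 / K)                           # pi_k <- 1/K
    prev_ll = -np.inf
    for _ in range(max_iter):
        # E-step: responsibilities gamma_ik, normalised in log space for stability.
        log_w = np.column_stack([np.log(pi[k]) + gaussian_logpdf(X, mu[k], sigma[k])
                                 for k in range(K)])
        log_norm = np.logaddexp.reduce(log_w, axis=1)
        resp = np.exp(log_w - log_norm[:, None])
        ll = log_norm.sum()                            # log-likelihood L(theta)
        # M-step: N_k, means, covariances and mixing coefficients.
        Nk = resp.sum(axis=0) + 1e-12                  # guard against empty components
        mu = (resp.T @ X) / Nk[:, None]
        for k in range(K):
            diff = X - mu[k]
            sigma[k] = (resp[:, k, None] * diff).T @ diff / Nk[k] + reg * np.eye(d)
        pi = Nk / n
        if abs(ll - prev_ll) < eps:                    # until change in L(theta) < eps
            break
        prev_ll = ll
    return mu, resp, resp.argmax(axis=1)
```

The responsibilities are normalised with `logaddexp` rather than by dividing raw densities, because densities of far-away nodes underflow to zero in double precision.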

3.4. Performance Metrics

- Average Control Latency (ACL): quantifies the mean communication delay within controller domains, serving as a primary indicator of network responsiveness, as in (8):

- Worst-case Control Latency (WCL): captures the maximum delay scenarios, ensuring that performance optimization addresses edge cases that could impact critical applications, as seen in (9):

- Inter-Controller Latency (ICL): measures coordination overhead between controllers, directly affecting the system’s ability to maintain consistent network state and implement coordinated policies, as in (10):

- Node Distribution Ratio (NDR): evaluates load balancing effectiveness, preventing controller overload and ensuring scalable resource utilization, see (11):
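A minimal Python sketch of the four metrics follows. It assumes plausible definitions that may differ in detail from (8)–(11): ACL and WCL as the mean and maximum latency from each node to its assigned controller, ICL as the mean latency over all controller pairs, and NDR as the ratio of the largest to the smallest controller domain. These definitions and the names `placement_metrics`, `controllers` and `assign` are assumptions for illustration.

```python
import itertools

import numpy as np


def placement_metrics(lat, controllers, assign):
    """ACL, WCL, ICL and NDR for one placement (assumed definitions).

    lat[u, v]:   latency between nodes u and v (e.g. in microseconds)
    controllers: node index hosting each controller
    assign[i]:   position in `controllers` of the controller serving node i
    """
    lat = np.asarray(lat, dtype=float)
    ctrl = np.asarray(controllers)
    assign = np.asarray(assign)
    # Latency from every node to the controller it is assigned to.
    to_ctrl = lat[np.arange(len(assign)), ctrl[assign]]
    acl = float(to_ctrl.mean())          # average control latency
    wcl = float(to_ctrl.max())           # worst-case control latency
    # Mean latency over all unordered controller pairs (0 for a single controller).
    pairs = list(itertools.combinations(ctrl.tolist(), 2))
    icl = float(np.mean([lat[a, b] for a, b in pairs])) if pairs else 0.0
    # Largest over smallest domain size; 1.0 means perfectly balanced.
    sizes = np.bincount(assign, minlength=len(ctrl))
    ndr = float(sizes.max() / sizes.min()) if sizes.min() > 0 else float("inf")
    return {"ACL": acl, "WCL": wcl, "ICL": icl, "NDR": ndr}
```

For a four-node line with 2 units of latency per hop, controllers at nodes 0 and 3, and assignment `[0, 0, 1, 1]`, this gives ACL = 1.0, WCL = 2.0, ICL = 6.0 and NDR = 1.0.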

3.5. CRITIC-Based Weight Assignment

- Step 1: For each metric $j$, compute the normalized value $x'_{ij}$, as in (12).

- Step 2: Calculate the standard deviation $\sigma_j$ of each normalized metric, as in (13).

- Step 3: Construct the correlation matrix $R = [r_{jk}]$ between metrics, as in (14).

- Step 4: Compute the unnormalized weights $C_j$, as in (15).

- Step 5: Normalize the weights so that they sum to 1, as in (16).
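As an illustration, the sketch below follows the standard CRITIC procedure of Diakoulaki et al.: min-max normalization, standard deviation, Pearson correlation, information content $C_j = \sigma_j \sum_k (1 - r_{jk})$, and $W_j = C_j / \sum_k C_k$. Treating all four metrics as lower-is-better, using a decision matrix whose rows are observed placements, and the name `critic_weights` are assumptions; (12)–(16) may differ in detail.

```python
import numpy as np


def critic_weights(X, lower_is_better):
    """CRITIC weights for the columns (metrics) of decision matrix X.

    X[i, j]:            value of metric j for alternative/observation i
    lower_is_better[j]: True for cost metrics such as ACL, WCL, ICL and NDR
    Every column must vary, otherwise its correlation is undefined.
    """
    X = np.asarray(X, dtype=float)
    lo, hi = X.min(axis=0), X.max(axis=0)
    cost = np.asarray(lower_is_better, dtype=bool)
    # Step 1: min-max normalisation, flipped for cost metrics so that 1 is best.
    Xn = np.where(cost, (hi - X) / (hi - lo), (X - lo) / (hi - lo))
    # Step 2: contrast intensity = standard deviation of each normalised metric.
    sigma = Xn.std(axis=0)
    # Step 3: Pearson correlation matrix between metrics (columns).
    R = np.corrcoef(Xn, rowvar=False)
    # Step 4: information content C_j = sigma_j * sum_k (1 - r_jk).
    C = sigma * (1.0 - R).sum(axis=1)
    # Step 5: normalise to weights that sum to 1.
    return C / C.sum()
```

Because $1 - r_{jk} \ge 0$, every weight is non-negative. A metric that is strongly correlated with the others carries redundant information and receives a smaller weight, which is the intended behaviour of CRITIC.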

3.6. MARL-Based Dynamic Optimization

- Position coordinates (xi, yi) in the network topology;

- Traffic demand measured in Mbps;

- Connectivity status indicating reachability to controllers;

- Processing capacity for flow rule installation.

3.6.1. Observation and Action Spaces

3.6.2. Reward Engineering with CRITIC Weights

3.6.3. Learning Algorithm: Deep Q-Network with Experience Replay

3.6.4. Coordination Mechanism

3.6.5. Dynamic Adaptation Mechanisms

3.6.6. Convergence and Stability

Algorithm 2: MARL-Based Dynamic Controller Placement for SD-WANs

Input: network topology G = (V, E), number of controllers k, learning parameters (α, γ, ε)
Output: optimized controller placement C* and node assignments A*
1: Initialize GMM clustering with k components
2: Obtain initial placement C0 ← GMM cluster centroids
3: Calculate initial metrics M0 = {ACL, WCL, ICL, NDR}
4: Compute CRITIC weights W = {W1, W2, W3, W4} from M0
5: Initialize k MARL agents with Q-networks Qθi and replay buffers Bi
6: Initialize target networks Qθi− ← Qθi for each agent i
7: // Training phase: centralized learning
8: for episode = 1 to max_episodes do
9:   Reset environment to initial state S0 = (N0, C0, L0, U0)
10:  for t = 1 to max_timesteps do
11:    for each agent i ∈ {1, …, k} do
12:      Observe local state Oi^t = {Ni^t, Ci^t, Li^t, Ui^t}
13:      if random() < εt then
14:        Select random action ai^t // exploration
15:      else
16:        ai^t ← argmax_a Qθi(Oi^t, a) // exploitation
17:      end if
18:    end for
19:    Execute joint actions {a1^t, …, ak^t} in environment
20:    Update controller positions and node assignments
21:    Calculate new metrics Mt = {ACLt, WCLt, ICLt, NDRt}
22:    Compute reward Rt = Σm Wm · (1 − X̄m^t) − λ · Pt
23:    Observe next state St+1 = (Nt+1, Ct+1, Lt+1, Ut+1)
24:    for each agent i do
25:      Store transition (Oi^t, ai^t, Rt, Oi^(t+1)) in Bi
26:      Sample mini-batch from Bi
27:      Compute target values: yj = Rj + γ · max_a′ Qθi−(Oj′, a′)
28:      Update Q-network: θi ← θi − α∇θi L(θi)
29:    end for
30:    if t mod target_update_freq == 0 then
31:      Update target networks: θi− ← τθi + (1 − τ)θi−
32:    end if
33:  end for
34:  Decay exploration: εt ← max(ε_min, εt · decay_factor)
35: end for
36: // Execution phase: decentralized deployment
37: while network is operational do
38:   for each agent i in parallel do
39:     Observe current local state Oi
40:     Select optimal action: ai* ← argmax_a Qθi(Oi, a)
41:     Execute action and update local controller placement
42:   end for
43:   Monitor network changes (node additions/removals)
44:   Trigger rebalancing if utilization exceeds threshold
45: end while
46: return final controller placement C* and assignments A*

In step 22, X̄m^t is the normalized value of metric m ∈ {ACL, WCL, ICL, NDR} at step t, and Pt is a penalty term weighted by λ.
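The per-step arithmetic of Algorithm 2 can be sketched in plain NumPy while leaving the Q-network itself abstract. The sketch covers the CRITIC-weighted reward of step 22, a bounded replay buffer, ε-greedy selection (steps 13–16), the TD target of step 27, the soft target update of step 31, and the floored ε decay of step 34. The function names, the soft-update rate `tau` (not listed in the hyperparameter tables), and the assumption that metrics arrive already normalised to [0, 1] are illustrative choices, not the authors' code. The defaults for γ, buffer size, batch size, ε decay and ε_min follow Appendix A.

```python
import random
from collections import deque

import numpy as np


def critic_reward(weights, norm_metrics, penalty, lam):
    """Step 22: R_t = sum_m W_m * (1 - X_m^t) - lam * P_t (metrics in [0, 1], lower is better)."""
    w = np.asarray(weights, dtype=float)
    x = np.asarray(norm_metrics, dtype=float)
    return float(np.dot(w, 1.0 - x) - lam * penalty)


class ReplayBuffer:
    """Bounded FIFO store of (O_i^t, a_i^t, R_t, O_i^{t+1}) transitions."""

    def __init__(self, capacity=10_000):
        self.data = deque(maxlen=capacity)   # oldest transitions are evicted first

    def push(self, obs, action, reward, next_obs):
        self.data.append((obs, action, reward, next_obs))

    def sample(self, batch_size=32):
        batch = random.sample(list(self.data), min(batch_size, len(self.data)))
        obs, act, rew, nxt = map(np.array, zip(*batch))
        return obs, act, rew, nxt

    def __len__(self):
        return len(self.data)


def epsilon_greedy(q_values, eps, rng):
    """Steps 13-16: random action with probability eps, otherwise argmax_a Q."""
    if rng.random() < eps:
        return int(rng.integers(len(q_values)))
    return int(np.argmax(q_values))


def td_targets(rewards, next_q_target, gamma=0.95):
    """Step 27: y_j = R_j + gamma * max_a' Q_target(O'_j, a') for a mini-batch."""
    return np.asarray(rewards, dtype=float) + gamma * np.asarray(next_q_target).max(axis=1)


def soft_update(target_params, online_params, tau=0.01):
    """Step 31: theta^- <- tau * theta + (1 - tau) * theta^-, parameter by parameter."""
    return [tau * p + (1.0 - tau) * tp for tp, p in zip(target_params, online_params)]


def decay_epsilon(eps, decay=0.995, eps_min=0.01):
    """Step 34: multiplicative decay, floored at eps_min."""
    return max(eps_min, eps * decay)
```

Keeping these pieces separate from any particular deep-learning framework makes each step easy to test in isolation; in a full implementation `next_q_target` would come from each agent's target network $Q_{\theta_i^-}$.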

4. Results and Performance Evaluation

4.1. Experimental Setup

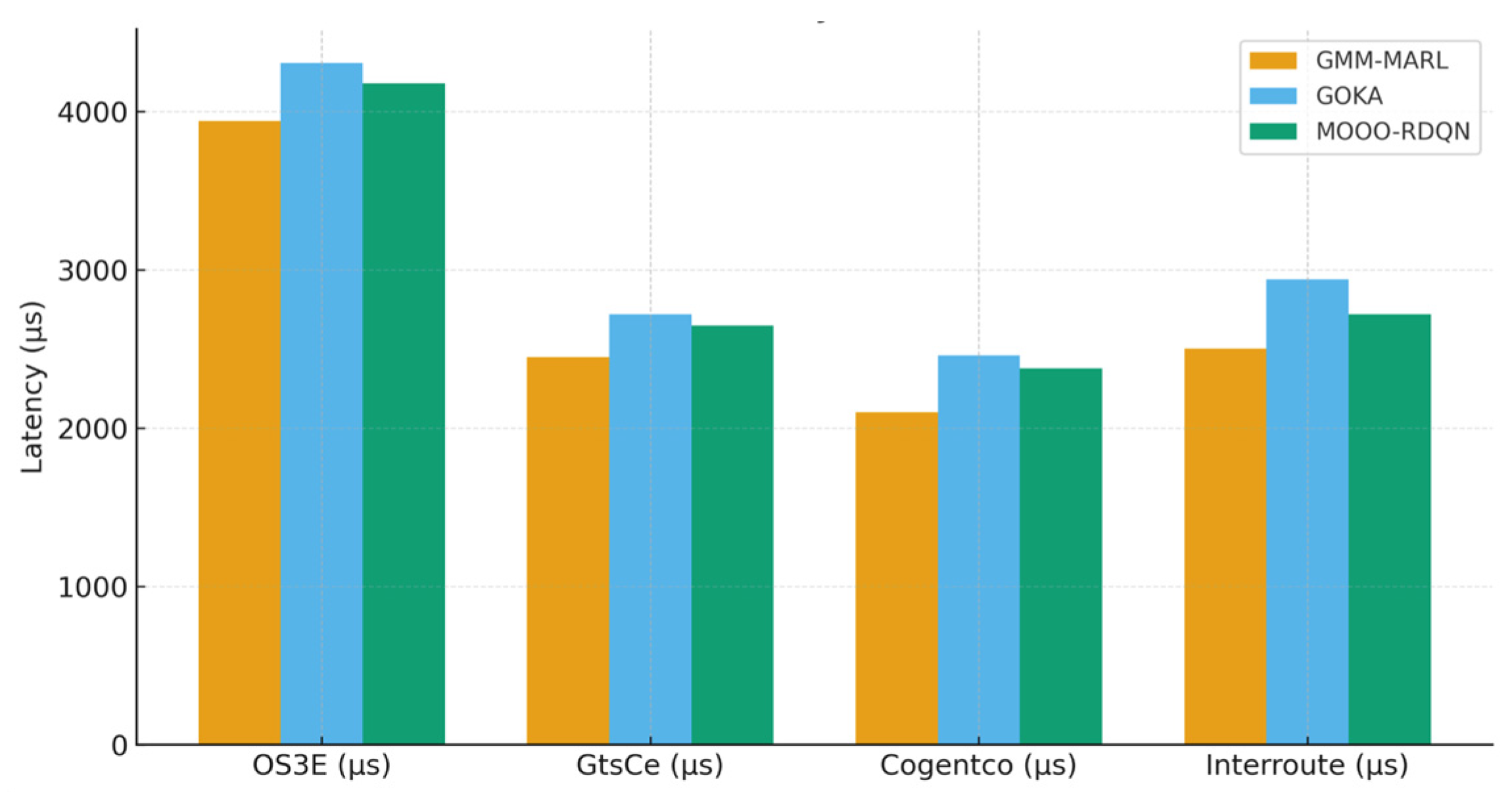

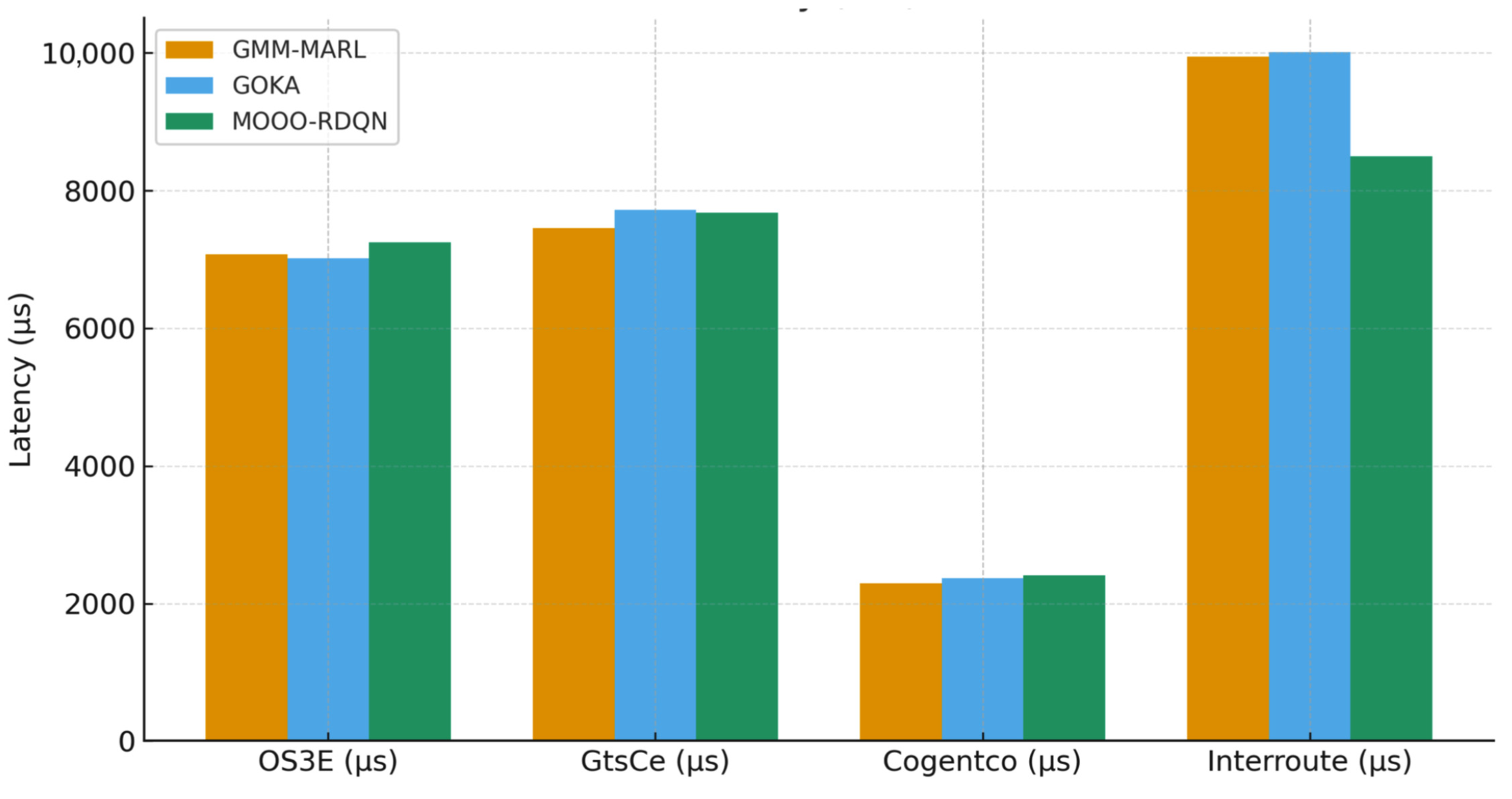

4.2. Static Performance Analysis

4.2.1. Latency Performance

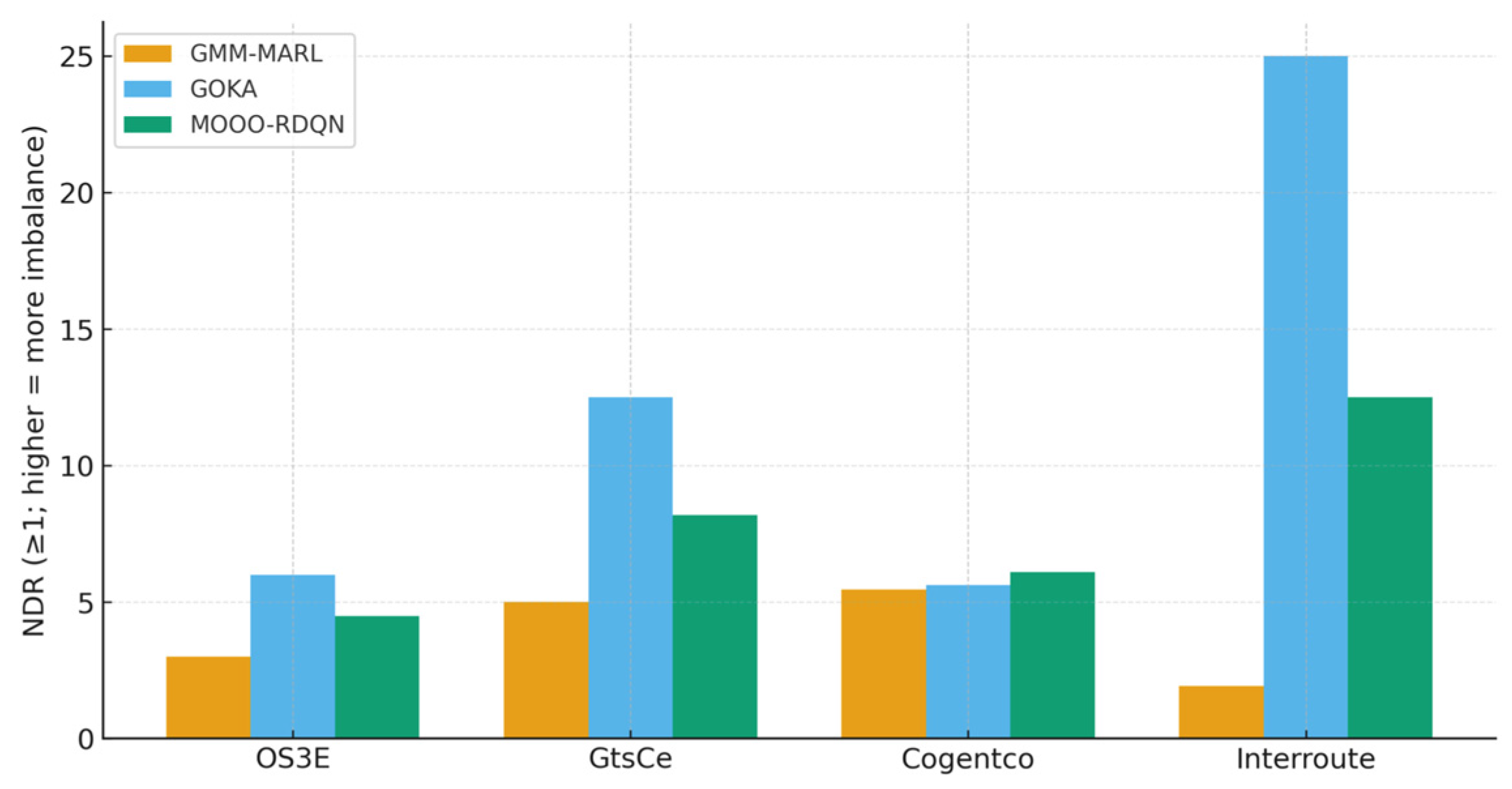

4.2.2. Load Balancing Performance

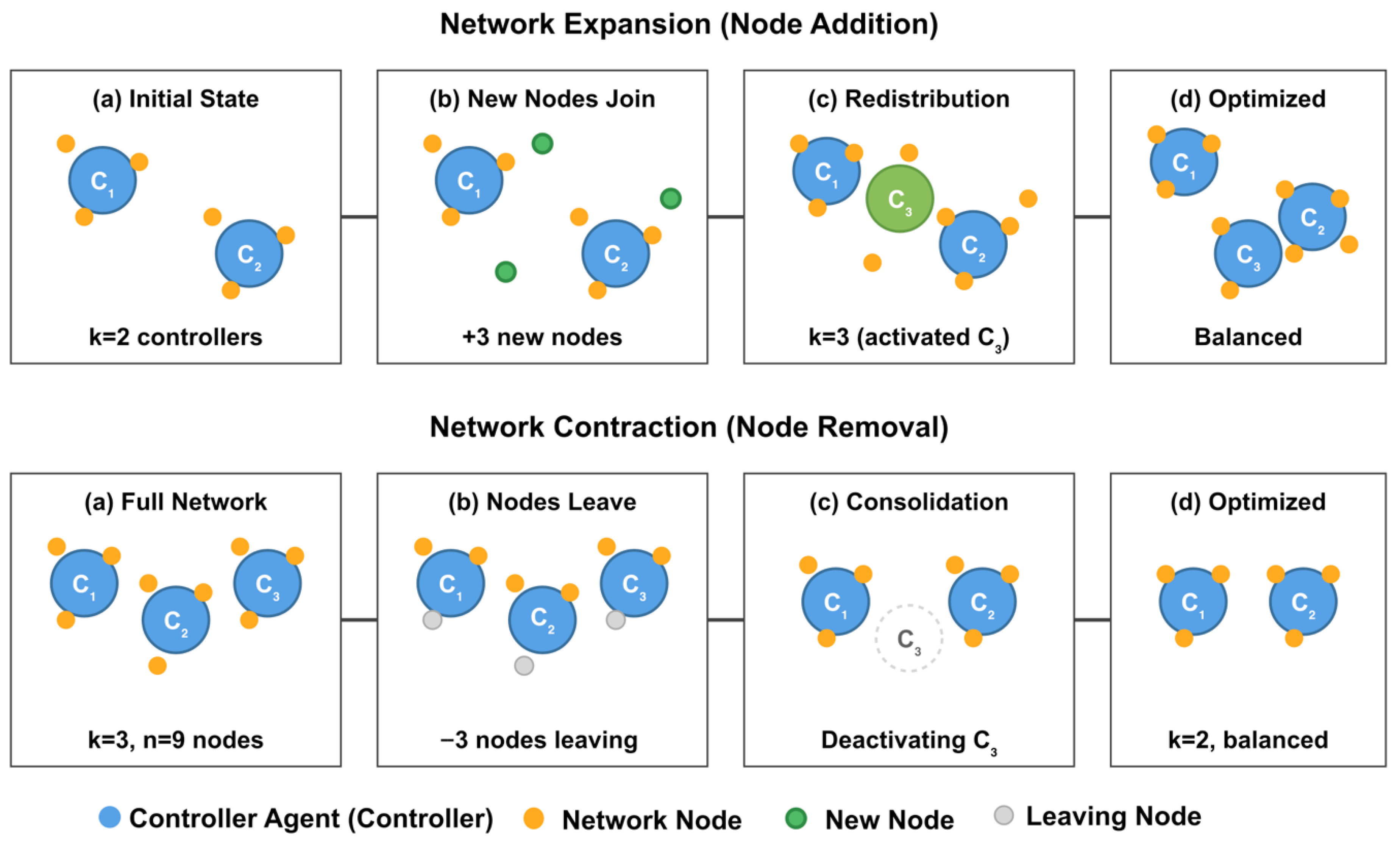

4.3. Dynamic Adaptation Analysis

4.3.1. Network Expansion Scenario

4.3.2. Network Contraction Scenario

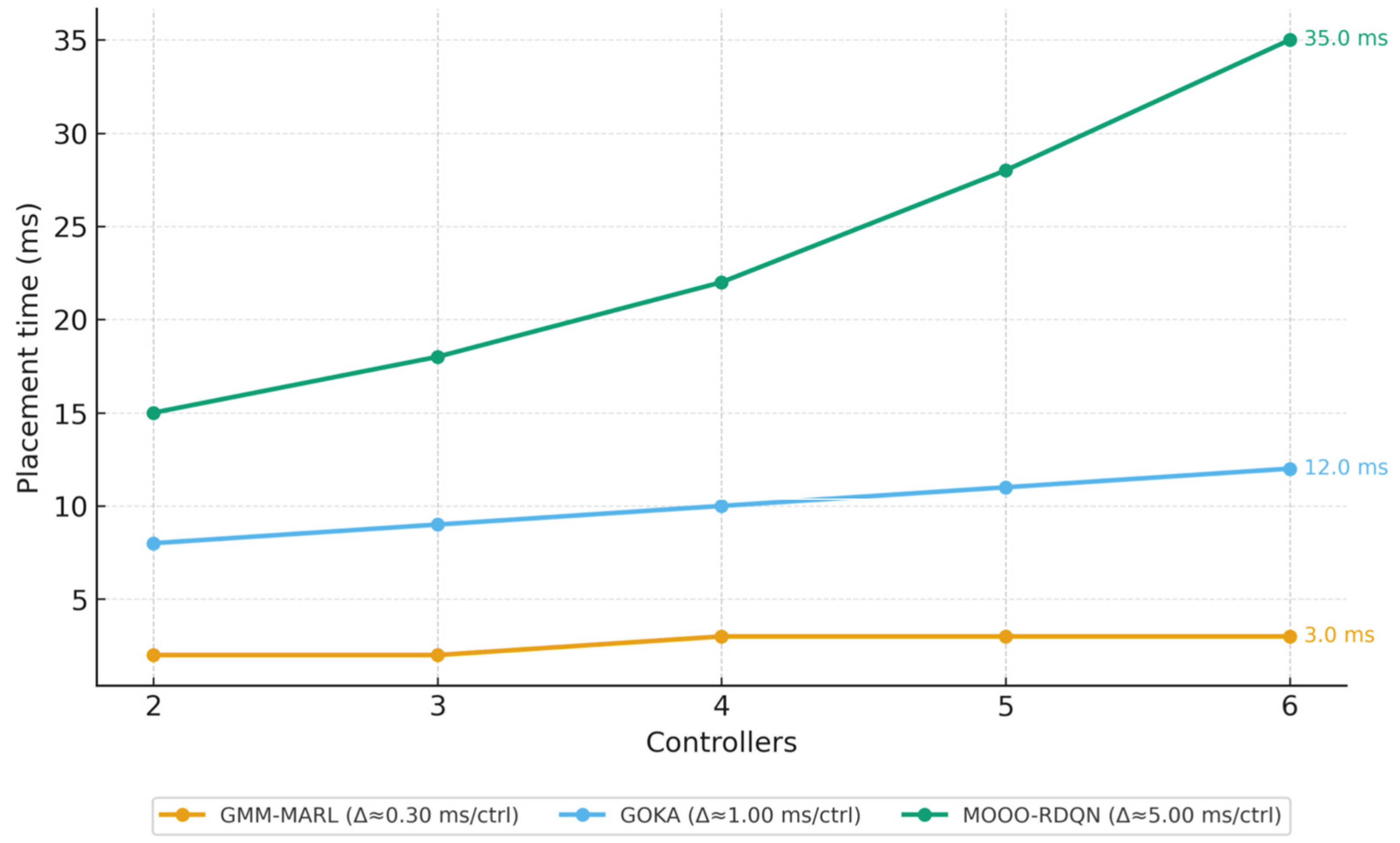

4.4. Computational Efficiency Analysis

4.4.1. Training Convergence and Placement Time Performance

4.4.2. Scalability Analysis

4.4.3. Ablation Study

4.5. Comprehensive Performance Analysis and Discussion

4.5.1. Multi-Objective Optimization Effectiveness

4.5.2. Theoretical Justification of Hybrid Architecture

- Initialization Problem Resolution: Traditional RL approaches suffer from the cold-start problem, requiring extensive exploration to discover viable controller placement strategies. GMM-MARL addresses this through probabilistic clustering that provides near-optimal initial placements, reducing exploration requirements by approximately 60% compared to the random initialization used in MOOO-RDQN. The ablation study is consistent with this estimate: removing GMM initialization increases training from 180 to 425 episodes, so GMM initialization saves about 58% of the training episodes.

- Multi-Modal Objective Handling: The CRITIC method provides a principled approach to multi-objective optimization that automatically adapts to network characteristics, unlike the fixed weighting schemes used in conventional approaches. This adaptive weighting balances competing objectives without requiring manual parameter tuning.

- Scalability Through Decomposition: The hybrid architecture achieves scalability through problem decomposition: GMM handles spatial clustering, while each MARL agent operates on a reduced local state space with limited interaction complexity. This decomposition enables linear scaling characteristics, in contrast to GOKA’s quadratic clustering complexity and MOOO-RDQN’s exponential state space growth.

4.5.3. Convergence and Stability Analysis

4.5.4. Practical Deployment Implications

- Real-time Adaptation: Placement times of 2–3 ms (0.002–0.003 s for two to six controllers) enable real-time network adaptation, supporting dynamic use cases such as traffic-aware controller migration and failure recovery.

- Operational Reliability: The improved stability and convergence characteristics reduce the risk of performance degradation during network transitions, which is critical for maintaining service level agreements in production environments.

5. Limitations and Future Work

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| ACL | Average Control Latency |

| API | Application Programming Interface |

| CRITIC | Criteria Importance Through Intercriteria Correlation |

| CPP | Controller Placement Problem |

| CTDE | Centralized Training with Decentralized Execution |

| DQN | Deep Q-Network |

| EM | Expectation-Maximization |

| GMM | Gaussian Mixture Model |

| GOKA | Greedy Optimized K-means Algorithm |

| ICL | Inter-Controller Latency |

| ILP | Integer Linear Programming |

| ITZ | Internet Topology Zoo |

| MARL | Multi-Agent Reinforcement Learning |

| MIP | Mixed-Integer Programming |

| MOOO-RDQN | Multi-Objective Optimization Oriented Rainbow Deep Q-Network |

| NDR | Node Distribution Ratio |

| QoS | Quality of Service |

| RL | Reinforcement Learning |

| SD-WAN | Software-Defined Wide Area Network |

| SDN | Software-Defined Networking |

| SLA | Service Level Agreement |

| TSN | Time Sensitive Networking |

| WCL | Worst-case Control Latency |

Appendix A

| Category | Parameter | Symbol | Value | Description |
|---|---|---|---|---|
| Network Architecture | Input Layer Size | dim(O_i^t) | Variable | Network state dimension |
| | Hidden Layer 1 | H1 | 256 neurons | First hidden layer |
| | Hidden Layer 2 | H2 | 128 neurons | Second hidden layer |
| | Output Layer Size | dim(A_i) | Variable | Action space dimension |
| | Activation Function | - | ReLU | Hidden layer activation |
| | Output Activation | - | Linear | Q-value output |
| Learning Hyperparameters | Learning Rate | α | 0.001 | Adam optimizer rate |
| | Discount Factor | γ | 0.95 | Future reward weight |
| | Initial Exploration | ε_max | 1.0 | Starting exploration |
| | Final Exploration | ε_min | 0.01 | Minimum exploration |
| | Exploration Decay | λ_ε | 0.995 | Exponential decay rate |
| | Optimizer | - | Adam | Parameter optimizer |
| Training Configuration | Batch Size | - | 32 | Mini-batch size |
| | Experience Buffer Size | B_i | 10,000 | Replay buffer capacity |
| | Target Update Frequency | - | 100 | Target network sync |
| | Maximum Episodes | - | 1000 | Training episodes |
| | Maximum Timesteps | T_max | 500 | Episode length |
| | Convergence Threshold | ε_conv | 0.001 | Training termination |
| Reward Engineering | Immediate Weight | β_1 | 0.3 | Immediate reward weight |
| | Global Weight | β_2 | 0.7 | Global reward weight |
| | Capacity Penalty | λ_1 | 10.0 | Controller overload penalty |
| | Latency Penalty | λ_2 | 5.0 | SLA violation penalty |
| | Balance Penalty | λ_3 | 2.0 | Load imbalance penalty |
| GMM Clustering | Number of Components | K | Variable | Controller count |
| | Convergence Threshold | ε | 0.001 | EM algorithm threshold |
| | Maximum Iterations | - | 100 | EM iteration limit |
| | Covariance Type | - | Full | Covariance matrix type |
| Distance Metric Weights | Geographical Weight | α | 0.25 | Geographic distance |
| | Latency Weight | β | 0.25 | Network latency |
| | Topological Weight | γ | 0.25 | Hop count distance |
| | Reliability Weight | δ | 0.25 | Connection reliability |
| Environment Parameters | Communication Radius | r_comm | Variable | Agent interaction range |
| | Adaptation Rate | η | 0.1 | Traffic response rate |
| | Rebalancing Threshold | - | 0.8 | Load redistribution trigger |
| | Minimum Nodes per Controller | threshold_min | 5 | Consolidation limit |

References

- Abdulghani, A.M.; Abdullah, A.; Rahiman, A.R.; Hamid, N.A.W.A.; Akram, B.O.; Raissouli, H. Navigating the Complexities of Controller Placement in SD-WANs: A Multi-Objective Perspective on Current Trends and Future Challenges. Comput. Syst. Sci. Eng. 2025, 49, 123–157.

- Lu, J.; Tang, C.; Ma, W.; Xing, W. Graph-based reinforcement learning for software-defined networking traffic engineering. J. King Saud Univ. Comput. Inf. Sci. 2025, 37, 119.

- Cunha, J.; Ferreira, P.; Castro, E.M.; Oliveira, P.C.; Nicolau, M.J.; Núñez, I.; Sousa, X.R.; Serôdio, C. Enhancing Network Slicing Security: Machine Learning, Software-Defined Networking, and Network Functions Virtualization-Driven Strategies. Future Internet 2024, 16, 226.

- Sapkota, B.; Dawadi, B.R.; Joshi, S.R.; Karn, G. Traffic-Driven Controller-Load-Balancing over Multi-Controller Software-Defined Networking Environment. Network 2024, 4, 523–544.

- Wang, G.; Zhao, Y.; Huang, J.; Wu, Y. An Effective Approach to Controller Placement in Software Defined Wide Area Networks. IEEE Trans. Netw. Serv. Manag. 2018, 15, 344–355.

- Chang, Y.; Guo, Z. FPGA-accelerated VXLAN chaining for partially reconfigurable VNFs in heterogeneous data centers. IEICE Trans. Commun. 2025, 108, 1179–1189.

- Abdulghani, A.M.; Abdullah, A.; Rahiman, A.R.; Hamid, N.A.; Akram, B.O. Enhancing Healthcare Network Effectiveness Through SD-WAN Innovations. In Tech Fusion in Business and Society; Springer: Cham, Switzerland, 2025; pp. 117–130.

- Abdulghani, A.M.; Abdullah, A.; Rahiman, A.R.; Abdul Hamid, N.A.W.; Akram, B.O. Network-Aware Gaussian Mixture Models for Multi-Objective SD-WAN Controller Placement. Electronics 2025, 14, 3044.

- Xiao, C.; Chen, J.; Qiu, X.; He, D.; Yin, H. GOKA: A network partition and cluster fusion algorithm for controller placement problem in SDN. J. Circuits Syst. Comput. 2023, 32, 2350143.

- Wang, S.; Zhang, C.; Wu, Y.; Liu, L.; Long, J. Adaptive Real-Time Transmission in Large-Scale Satellite Networks Through Software-Defined-Networking-Based Domain Clustering and Random Linear Network Coding. Mathematics 2025, 13, 1069.

- Comer, D.; Rastegarnia, A. Toward Disaggregating the SDN Control Plane. IEEE Commun. Mag. 2019, 57, 70–75.

- Singh, A.K.; Srivastava, S.; Banerjea, S. Evaluating heuristic techniques as a solution of controller placement problem in SDN. J. Ambient. Intell. Humaniz. Comput. 2023, 14, 11729–11746.

- Singh, G.D.; Tripathi, V.; Dumka, A.; Rathore, R.S.; Bajaj, M.; Escorcia-Gutierrez, J.; Aljehane, N.O.; Blazek, V.; Prokop, L. A novel framework for capacitated SDN controller placement: Balancing Latency and reliability with PSO algorithm. Alex. Eng. J. 2024, 87, 77–92.

- Adekoya, O.; Aneiba, A. A stochastic computational graph with ensemble learning model for solving controller placement problem in software-defined wide area networks. J. Netw. Comput. Appl. 2024, 225, 103869.

- Karakus, M.; Durresi, A. Quality of service (QoS) in software defined networking (SDN): A survey. J. Netw. Comput. Appl. 2022, 80, 200–218.

- Wu, Y.; Zhou, S.; Wei, Y.; Leng, S. Deep Reinforcement Learning for Controller Placement in Software Defined Network. In Proceedings of the IEEE INFOCOM 2020–IEEE Conference on Computer Communications Workshops (INFOCOM WKSHPS), Toronto, ON, Canada, 6–9 July 2020; pp. 1254–1259.

- Chen, J.; Ma, Y.; Lv, W.; Qiu, X.; Wu, J. MOOO-RDQN: A deep reinforcement learning based method for multi-objective optimization of controller placement and traffic monitoring in SDN. J. Netw. Comput. Appl. 2025, 242, 104253.

- Li, C.; Liu, J.; Ma, N.; Zhang, Q.; Zhong, Z.; Jiang, L.; Jia, G. Deep reinforcement learning based controller placement and optimal edge selection in SDN-based multi-access edge computing environments. J. Parallel Distrib. Comput. 2024, 193, 104948.

- Yuan, T.; da Rocha Neto, W.; Rothenberg, C.E.; Obraczka, K.; Barakat, C.; Turletti, T. Dynamic Controller Assignment in Software Defined Internet of Vehicles Through Multi-Agent Deep Reinforcement Learning. IEEE Trans. Netw. Serv. Manag. 2021, 18, 585–596.

- Bagha, M.A.; Majidzadeh, K.; Masdari, M.; Farhang, Y. ELA-RCP: An energy-efficient and load balanced algorithm for reliable controller placement in software-defined networks. J. Netw. Comput. Appl. 2024, 225, 103855.

- Ma, Y.; Chen, J.; Lv, W.; Qiu, X.; Zhang, Y.; Liu, W. An improved artificial bee colony algorithm to minimum propagation latency and balanced load for controller placement in software defined network. Comput. Netw. 2024, 250, 110600.

- Yahyaoui, H.; Zhani, M.F.; Bouachir, O.; Aloqaily, M. On minimizing flow monitoring costs in large-scale software-defined network networks. Int. J. Netw. Manag. 2023, 33, e2220.

- Tohidi, E.; Parsaeefard, S.; Maddah-Ali, M.A.; Khalaj, B.H.; Leon-Garcia, A. Near-optimal robust virtual controller placement in 5G software defined networks. IEEE Trans. Netw. Sci. Eng. 2021, 8, 1687–1697.

- Benoudifa, O.; Ait Wakrime, A.; Benaini, R. Autonomous solution for controller placement problem of software-defined networking using MuZero based intelligent agents. J. King Saud Univ. Comput. Inf. Sci. 2023, 35, 101842.

- Huang, M.; Yuan, X.; Wu, L.; Sun, P. Research on multi-controller deployment strategy based on latency and load in software defined network. J. Electron. Inf. Technol. 2022, 44, 288–294.

- Obaida, T.; Salman, H. A novel method to find the best path in SDN using firefly algorithm. J. Intell. Syst. 2022, 31, 902–914.

- Gogebakan, M. A Novel Approach for Gaussian Mixture Model Clustering Based on Soft Computing Method. IEEE Access 2021, 9, 159987–160003.

- Ismael, S.F.; Alias, A.H.; Haron, N.A.; Zaidan, B.B.; Abdulghani, A.M. Mitigating Urban Heat Island Effects: A Review of Innovative Pavement Technologies and Integrated Solutions. Struct. Durab. Health Monit. 2024, 18, 525–551.

- Abdulghani, A.M.; Abdulghani, M.M.; Walters, W.L.; Abed, K.H. Cyber-physical system based data mining and processing toward Autonomous Agricultural Systems. In Proceedings of the 2022 International Conference on Computational Science and Computational Intelligence (CSCI), Las Vegas, NV, USA, 14–16 December 2022; pp. 719–723.

- Bouzidi, E.H.; Outtagarts, A.; Langar, R.; Boutaba, R. Dynamic clustering of software defined network switches and controller placement using deep reinforcement learning. Comput. Netw. 2022, 207, 108852.

- Diakoulaki, D.; Mavrotas, G.; Papayannakis, L. Determining objective weights in multiple criteria problems: The CRITIC method. Comput. Oper. Res. 1995, 22, 763–770.

- Amato, C. An Introduction to Centralized Training for Decentralized Execution in Cooperative Multi-Agent Reinforcement Learning. arXiv 2024, arXiv:2409.03052. Available online: https://arxiv.org/abs/2409.03052 (accessed on 1 September 2025).

- Knight, S.; Nguyen, H.X.; Falkner, N.; Bowden, R.; Roughan, M. The internet topology zoo. IEEE J. Sel. Areas Commun. 2011, 29, 1765–1775.

- Akram, B.O.; Noordin, N.K.; Hashim, F.; Rasid, M.A.; Salman, M.I.; Abdulghani, A.M. Enhancing reliability of time-triggered traffic in joint scheduling and routing optimization within time-sensitive networks. IEEE Access 2024, 12, 78379–78396.

- Akram, B.O.; Noordin, N.K.; Hashim, F.; Rasid, M.F.; Salman, M.I.; Abdulghani, A.M. Joint scheduling and routing optimization for deterministic hybrid traffic in time-sensitive networks using constraint programming. IEEE Access 2023, 11, 142764–142779.

- Hong, S.; Yue, T.; You, Y.; Lv, Z.; Tang, X.; Hu, J.; Yin, H. A Resilience Recovery Method for Complex Traffic Network Security Based on Trend Forecasting. Int. J. Intell. Syst. 2025, 2025, 3715086.

(a)

| Symbol | Description | Domain |
|---|---|---|
| G(t) | Time-varying network graph at time t | Graph |
| N(t) | Set of network nodes at time t | Set |
| K | Total number of clusters/controllers | ℕ+ |
| C(t) | Set of controller locations at time t | Set |
| E(t) | Set of network links at time t | Set |
| dNA(i,j) | Network-aware hybrid distance between nodes i, j | ℝ+ |
| ACL | Average Control Latency | ℝ+ [ms] |
| WCL | Worst-case Control Latency | ℝ+ [ms] |
| ICL | Inter-Controller Latency | ℝ+ [ms] |
| NDR | Node Distribution Ratio | ℝ+ |
| Wm | CRITIC-based weight for metric m | [0,1] |
| α, β, γ, δ | Hybrid distance metric weights | [0,1], Σ = 1 |
| μk, Σk | GMM cluster parameters (mean, covariance) | ℝ^d, ℝ^(d×d) |
| πk | GMM mixing coefficients | [0,1] |
| k | Cluster/controller index | {1, …, K} |
| γik | GMM responsibility values | [0,1] |
| Qt(s,a) | Q-value function at time t | ℝ |
| rt | Reward at time step t | ℝ |
| st | Network state at time t | State space |
| at | Agent action at time t | Action space |
| ε | Convergence threshold | ℝ+ |

(b)

| Category | Parameter | Symbol | Value | Description |
|---|---|---|---|---|
| GMM | Max Iterations | – | 100 | EM algorithm iterations |
| GMM | Threshold | ε | 0.001 | EM convergence criterion |
| Q-Network | Learning Rate | α | 0.001 | Adam optimizer learning rate |
| Q-Network | Discount Factor | γ | 0.95 | Future reward discount |
| Q-Network | Batch Size | – | 32 | Mini-batch for training |
| Q-Network | Replay Buffer | B_i | 10,000 | Experience buffer capacity |
| Exploration | Initial ε | ε_max | 1.0 | Starting exploration rate |
| Exploration | Final ε | ε_min | 0.01 | Minimum exploration rate |
| Exploration | Decay Rate | λ_ε | 0.995 | Exponential decay factor |
| Network | Hidden Layer 1 | H1 | 256 | First layer neurons |
| Network | Hidden Layer 2 | H2 | 128 | Second layer neurons |
| Network | Target Update | – | 100 | Target network sync frequency |
| Training | Max Episodes | – | 1000 | Training episode limit |
| Training | Convergence | ε_conv | 0.001 | Convergence threshold |

| Algorithm | OS3E (μs) | GtsCe (μs) | Cogentco (μs) | Interroute (μs) |

|---|---|---|---|---|

| GMM-MARL | 3741 | 2155 | 7013 | 3180 |

| GOKA | 4009 | 2721 | 6553 | 3462 |

| MOOO-RDQN | 3820 | 2390 | 7250 | 3310 |

| Algorithm | OS3E (μs) | GtsCe (μs) | Cogentco (μs) | Interroute (μs) |

|---|---|---|---|---|

| GMM-MARL | 3942 | 2451 | 2101 | 2503 |

| GOKA | 4306 | 2722 | 2461 | 2942 |

| MOOO-RDQN | 4180 | 2650 | 2380 | 2720 |

| Algorithm | OS3E (μs) | GtsCe (μs) | Cogentco (μs) | Interroute (μs) |

|---|---|---|---|---|

| GMM-MARL | 7071 | 7459 | 2293 | 9942 |

| GOKA | 7017 | 7720 | 2373 | 10,007 |

| MOOO-RDQN | 7250 | 7680 | 2410 | 8500 |

| Algorithm | OS3E | GtsCe | Cogentco | Interroute |

|---|---|---|---|---|

| GMM-MARL | 3.00 | 5.00 | 5.46 | 1.94 |

| GOKA | 6.00 | 12.50 | 5.62 | 25.00 |

| MOOO-RDQN | 4.50 | 8.20 | 6.10 | 12.50 |

| Network Configuration | Algorithm | ACL (μs) | WCL (μs) | ICL (μs) | NDR |
|---|---|---|---|---|---|
| OS3E + Darkstrand | GMM-MARL | 3817 | 4086 | 7009 | 2.36 |
| | GOKA | 3992 | 4200 | 7117 | 4.10 |
| | MOOO-RDQN | 3890 | 4150 | 7080 | 3.20 |
| OS3E + Darkstrand + CRL1 | GMM-MARL | 3788 | 4127 | 6931 | 2.11 |
| | GOKA | 3984 | 4122 | 7088 | 5.08 |
| | MOOO-RDQN | 3850 | 4180 | 7020 | 3.80 |

| Network Configuration | Algorithm | ACL (μs) | WCL (μs) | ICL (μs) | NDR |
|---|---|---|---|---|---|
| Darkstrand + CRL1 | GMM-MARL | 3967 | 4913 | 6728 | 3.08 |
| | GOKA | 3962 | 5004 | 7247 | 3.10 |
| | MOOO-RDQN | 4020 | 5100 | 6980 | 3.50 |
| CRL1 Only | GMM-MARL | 2983 | 4627 | 7300 | 4.33 |
| | GOKA | 3194 | 4705 | 7288 | 4.92 |
| | MOOO-RDQN | 3150 | 4680 | 7350 | 4.80 |

| Controllers | GMM-MARL placement time (s) | GOKA placement time (s) | MOOO-RDQN placement time (s) |
|---|---|---|---|
| 2 | 0.002 | 0.008 | 0.015 |
| 3 | 0.002 | 0.009 | 0.018 |
| 4 | 0.003 | 0.010 | 0.022 |
| 5 | 0.003 | 0.011 | 0.028 |
| 6 | 0.003 | 0.012 | 0.035 |

| Configuration | ACL (μs) | Training Episodes | Adaptation Time in Seconds |

|---|---|---|---|

| Full GMM-MARL | 3741 | 180 | 0.003 |

| Without GMM Init | 4824 (+29%) | 425 (+136%) | 0.003 |

| Without CRITIC Weighting | 4378 (+17%) | 310 (+72%) | 0.008 (+167%) |

| Without CTDE | 4381 (+17%) | 310 (+72%) | 0.008 (+167%) |

| Algorithm | ACL Score | WCL Score | ICL Score | NDR Score | Composite Score |

|---|---|---|---|---|---|

| GMM-MARL | 0.95 | 0.92 | 0.88 | 0.89 | 0.91 |

| GOKA | 0.85 | 0.81 | 0.90 | 0.65 | 0.80 |

| MOOO-RDQN | 0.88 | 0.85 | 0.84 | 0.75 | 0.83 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Abdulghani, A.M.; Abdullah, A.; Rahiman, A.R.; Abdul Hamid, N.A.W.; Akram, B.O. Dynamic Multi-Objective Controller Placement in SD-WAN: A GMM-MARL Hybrid Framework. Network 2025, 5, 52. https://doi.org/10.3390/network5040052


Chicago/Turabian Style: Abdulghani, Abdulrahman M., Azizol Abdullah, A. R. Rahiman, Nor Asilah Wati Abdul Hamid, and Bilal Omar Akram. 2025. "Dynamic Multi-Objective Controller Placement in SD-WAN: A GMM-MARL Hybrid Framework" Network 5, no. 4: 52. https://doi.org/10.3390/network5040052

