1. Introduction

This article considers the inverse dynamics problem, which involves forming dynamic systems with trajectories belonging to a given smooth manifold. Dynamic systems are assumed to be described by Itô or Stratonovich stochastic differential equations. Hereinafter, they are called invariant stochastic differential systems, and their trajectories are those of diffusion processes. The manifold is implicitly defined by a differentiable function that is a first integral of the system.

In the following, we give examples of invariant stochastic differential systems. The first example concerns the problem of spatial orientation control for a manned or unmanned aerial vehicle [1, 2]. Consider the system of linear ordinary differential equations describing the rotation of a rigid body in three-dimensional space [3, 4]:

where

,

is a given time interval of rotation;

is the quaternion of rotation;

is the angular velocity that can be treated as a control input; and

means transposition.

It is not difficult to see that

Thus, the modulus of the quaternion

is equal to one for the condition

, and for the system of differential equations under consideration, the following identity holds:

i.e., the system state belongs to a three-dimensional hypersphere centered at the origin and with unit radius (in four-dimensional space).
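The norm-preservation property can be checked numerically. The sketch below assumes the commonly used form of the quaternion kinematic equation, dλ/dt = ½ Ω(ω) λ, with a skew-symmetric 4×4 matrix Ω(ω). The function names and the sign convention in `omega_matrix` are illustrative assumptions and may differ from the system written above; only the skew symmetry matters for the conclusion.

```python
def omega_matrix(w):
    """Skew-symmetric 4x4 matrix built from the angular velocity (assumed convention)."""
    w1, w2, w3 = w
    return [
        [0.0, -w1, -w2, -w3],
        [w1, 0.0, w3, -w2],
        [w2, -w3, 0.0, w1],
        [w3, w2, -w1, 0.0],
    ]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def quaternion_rhs(q, w):
    """Right-hand side of dq/dt = 0.5 * Omega(w) * q."""
    m = omega_matrix(w)
    return [0.5 * dot(row, q) for row in m]


def norm_rate(q, w):
    """Time derivative of |q|^2 along the flow: 2 * <q, dq/dt>."""
    return 2.0 * dot(q, quaternion_rhs(q, w))


q = [0.5, -0.5, 0.5, 0.5]   # unit quaternion (hypothetical test point)
w = [0.3, -1.2, 0.7]        # arbitrary angular velocity
print(norm_rate(q, w))      # vanishes up to rounding, since Omega is skew-symmetric
```

Because the quadratic form of a skew-symmetric matrix is zero, the squared modulus has zero time derivative for any angular velocity, which is why the angular velocity can serve as a control input without leaving the hypersphere.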

By assuming that the system is affected by random disturbances, which lead to inaccurate control implementation, we obtain the following system of linear stochastic differential equations with multiplicative noise:

where

is the vector Gaussian white noise, and

is the vector of intensities of random disturbances. Random disturbances in spatial orientation control are typical, and their source is usually found in the measurement system (gyroscopes and accelerometers). In a control system, disturbances are typically generated by a shaping filter based on Gaussian white noise. In this example, a direct effect of Gaussian white noise is assumed to simplify the mathematical model.

Equation (3) should be understood as a system of Stratonovich stochastic differential equations [5]; then, the system state belongs to the same three-dimensional hypersphere.

The second example is of great importance for numerical methods for solving stochastic differential equations with multiplicative noise [6, 7, 8, 9], including Equation (3). Consider the system of Itô stochastic differential equations:

where

,

is a given time interval;

denotes the standard vector Wiener process, i.e.,

and

are independent standard Wiener processes.

Two equations from the system of Equation (4) have obvious solutions

and for the remaining equations, solutions are written as follows:

For a fixed

t, the random variables

, and

are used in numerical methods for solving stochastic differential equations, e.g., in the Milstein method [8] or in one of the Rosenbrock-type methods [10]. These random variables are essential in numerical methods that exhibit high convergence orders, provided that convergence is understood in the strong sense [6, 9, 11].

The random variables

and

are called iterated stochastic integrals of the second multiplicity. They can be represented as multiple stochastic integrals (t is not necessarily fixed):

where

is the unit step function:

Then

i.e., we have the identity

and therefore, the system state belongs to a hypercylinder over a hyperbolic paraboloid (in four-dimensional space).
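The identity can be illustrated with a discrete approximation. The sketch below is written under an assumption about the form of the system: two components are the Wiener processes themselves, and the other two are the Itô integrals of one Wiener process with respect to the other, so that the first integral reads x1·x2 − x3 − x4 = 0. The function name and variable names are hypothetical. In discrete time the left-point (Itô) sums satisfy the identity exactly once the accumulated products of increments are added; that extra term has zero mean and shrinks as the step decreases.

```python
import random


def simulate_iterated_integrals(n_steps=1000, t_end=1.0, seed=0):
    """Left-point (Ito) sums for the two iterated integrals of second multiplicity."""
    rng = random.Random(seed)
    h = t_end / n_steps
    w1 = w2 = 0.0
    int_w1_dw2 = 0.0   # approximates the integral of W1 with respect to W2
    int_w2_dw1 = 0.0   # approximates the integral of W2 with respect to W1
    cross = 0.0        # accumulated dW1 * dW2, the discretization residual
    for _ in range(n_steps):
        dw1 = rng.gauss(0.0, h ** 0.5)
        dw2 = rng.gauss(0.0, h ** 0.5)
        int_w1_dw2 += w1 * dw2
        int_w2_dw1 += w2 * dw1
        cross += dw1 * dw2
        w1 += dw1
        w2 += dw2
    return w1, w2, int_w1_dw2, int_w2_dw1, cross


w1, w2, i12, i21, cross = simulate_iterated_integrals()
# Deviation from the manifold x1*x2 - x3 - x4 = 0 equals the residual `cross`.
print(w1 * w2 - (i12 + i21), cross)
```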

The theory of invariant stochastic differential systems began to develop actively in the late 1970s [12, 13]. It generalizes the theory of invariant deterministic differential systems [14, 15, 16, 17, 18] that is utilized, e.g., in the design of aircraft control systems [19, 20, 21].

One of the problems in the theory of invariant stochastic differential systems is obtaining stochastic differential equations for a given manifold. This problem reduces to finding and then applying conditions on the coefficients of such equations [22, 23, 24]. For each manifold, there are infinitely many invariant differential systems, among which we can distinguish systems with additional properties, e.g., stability [25]. As for deterministic differential systems, we can consider the synthesis of control for stochastic differential systems that ensures invariance [23, 26]. Applied problems include epidemic spread analysis [26], ecosystem evolution [26], and financial mathematics [27].

Conditions on coefficients of equations that ensure the existence of a first integral are usually written using a vector product in the space whose dimension coincides with the order of the dynamic system or is greater by one [22, 23, 26]. The set of coefficients of stochastic differential equations is formed using determinants of functional matrices. Some elements of such matrices depend on a given manifold (these elements can be assumed to be known), while others are chosen arbitrarily under the additional condition of existence of solutions to stochastic differential equations. The described approach allows one to find the entire set of invariant stochastic differential systems corresponding to a given first integral. However, it is characterized by both the complexity of the expressions describing coefficients of stochastic differential equations (this is especially evident as the order of the dynamic system increases) and the redundancy of the functions that can be chosen arbitrarily (some functions enter the coefficients of equations nonlinearly).

The purpose of this study is to describe and examine a method for obtaining stochastic differential equations for a given manifold. The proposed method has the following advantages:

(1) The method provides simple expressions for coefficients of stochastic differential equations.

(2) The method ensures a minimum number of functions required to determine the entire set of invariant stochastic differential systems associated with a given first integral (coefficients of equations depend on these functions linearly).

(3) The method allows one to obtain stochastic differential equations with a degenerate diffusion matrix relative to a part of the state components.

The proposed method is distinguished by a convenient implementation of the corresponding algorithm for forming invariant stochastic differential systems within symbolic computation environments. The method utilizes the construction of a basis related to a tangent hyperplane to the manifold. The article discusses the problem of basis degeneration and examines variants that ensure the simple construction of a basis that does not degenerate.

The results of this study can be applied to synthesize the control that guarantees invariance [23, 26] and to obtain stochastic differential equations to validate numerical methods primarily focused on systems of stochastic differential equations with first integrals [28, 29, 30, 31]. If a first integral exists for a system of stochastic differential equations but an analytical solution cannot be obtained, such a first integral can be utilized to estimate the accuracy of numerical methods whose convergence is understood in the strong sense. Furthermore, the proposed method ensures a simple transition from an invariant deterministic differential system to a stochastic one while preserving the first integral.

The remainder of the article is organized as follows. Section 2 describes invariant stochastic differential systems and specifies invariance conditions. The proposed method for forming invariant stochastic differential systems is presented in Section 3. Section 4 considers second-, fourth-, and eighth-order systems. Section 5 includes examples of invariant stochastic differential systems and the results of numerical simulations for them. Section 6 presents the conclusions of the article.

2. Invariant Stochastic Differential Systems

The term “invariant stochastic differential system” refers to a dynamic system whose mathematical model is represented by the Itô stochastic differential equation:

provided that the solution to this equation

almost surely (with probability 1) satisfies the relation

We assume that the vector describes the state of dynamic system, ; denotes time; the moments and T are given; and are vector and matrix functions, respectively; and represents the standard vector Wiener process. The Wiener process , which models disturbances that act on dynamic system, and the initial state are independent.

Components

of the vector function

and elements

of the matrix function

satisfy conditions for the existence and uniqueness of solutions to stochastic differential equations,

and

. This means the Lipschitz condition and the linear growth condition with respect to x [5, 6], namely

and

where

is a constant, and

and

are the vector modulus and the Frobenius norm of the matrix, respectively. Note that the above conditions can be weakened [32, 33]. For instance, for many problems, it is sufficient to satisfy these conditions locally rather than globally. The additional condition is the continuous differentiability of functions

with respect to

x.

The nonconstant function

is continuously differentiable with respect to t and twice continuously differentiable with respect to x. According to [12], such a function is called the first integral for Equation (5). Another definition of the first integral is formulated in [13].

Necessary and sufficient conditions for the function

to be a first integral of Equation (5) are written in the following way [22]:

and these equalities should be satisfied on trajectories of the random process

.

The invariant stochastic differential system can be defined by the equivalent Stratonovich stochastic differential equation:

for which, in addition to the previously introduced notations,

is the vector function. Taking into account the well-known equation that relates drift coefficients in Equations (5) and (9) [6], we can rewrite equality (8) as follows:

where conditions on components

of the vector function

are determined through conditions on functions

and

,

and

.

It is not difficult to see that the equality of the Itô differential for the random process

to zero is equivalent to relations (7) and (8). Similarly, the equality of the Stratonovich differential for the same random process to zero is equivalent to relations (7) and (10) [34, 35].
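For reference, the equivalence can be traced through the Itô formula. The display below is a sketch in assumed notation: the symbols $f$, $\sigma$, $M$, $n$, and $s$ stand for the drift vector, the diffusion matrix, the candidate first integral, the state dimension, and the dimension of the Wiener process, and they may differ from the notation of the article.

```latex
\begin{aligned}
dM\bigl(t, X(t)\bigr) ={}& \Biggl( \frac{\partial M}{\partial t}
  + \sum_{i=1}^{n} f_i \,\frac{\partial M}{\partial x_i}
  + \frac{1}{2} \sum_{i,j=1}^{n} \sum_{l=1}^{s} \sigma_{il}\, \sigma_{jl}\,
    \frac{\partial^2 M}{\partial x_i\, \partial x_j} \Biggr) dt \\
&+ \sum_{l=1}^{s} \Biggl( \sum_{i=1}^{n} \sigma_{il}\,
    \frac{\partial M}{\partial x_i} \Biggr) dW_l(t).
\end{aligned}
```

The differential vanishes identically when every bracket is zero: the coefficients of $dW_l$ give one orthogonality condition per noise component, and the coefficient of $dt$ gives the condition on the drift. In the Stratonovich case the ordinary chain rule applies, so the second-order term is absent and the drift condition involves only first derivatives.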

Equation (6) defines a smooth manifold in

, and trajectories of the random process

with the initial condition

belong to this manifold. If

, then the term “dynamic manifold” may be used.

Necessary and sufficient conditions for the function

to be a first integral of Equation (9) have a simple and clear geometric meaning. Equality (7) is the condition that each column of the matrix

and the gradient

are orthogonal in

. For

, equality (10) is the orthogonality condition for the vector

and the gradient

in

, and for

, it is the orthogonality condition for the vector

and the generalized gradient

.

Remark 1.

Using the notation for the lth column of the matrix and the notation for the inner product in , equalities (7), (8), and (10) can be rewritten as

where

For a nonautonomous dynamic system, we can convert it to an autonomous one using the additional equation with the solution by introducing the extended state (its components are numbered from zero). Then equalities similar to (8) and (10) will have a simpler form.

3. Forming Invariant Stochastic Differential Systems

In this section, we define the set of

linearly independent vectors orthogonal to the gradient

. To simplify notations, we introduce the vector

G:

Next, we define vectors

as follows:

or, in general,

,

, where

are columns of the identity matrix

E of size

. Vectors

are functions of a pair

with values in

. The arguments of these vector functions are not given for brevity.

By definition, vectors are orthogonal to vector G. Furthermore, vectors and are orthogonal if ; i.e., the corresponding Gram matrix for the set of vectors is tridiagonal in the general case.

Indeed, let . Then, the vector can only have nonzero components with indices j and , while the vector can only have nonzero components with indices k and . Consequently, the inner product of vectors and is equal to zero since . The same result holds if .
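The construction can be sketched in a few lines. The code below assumes that the jth vector has only two nonzero components, the (j+1)th component of G placed at position j and the negated jth component of G placed at position j+1. This sign convention and the names `chain_basis` and `dot` are assumptions made for illustration; the definition above may use a different sign.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def chain_basis(g):
    """Return n-1 vectors, each orthogonal to g and supported on two adjacent indices."""
    n = len(g)
    basis = []
    for j in range(n - 1):
        h = [0.0] * n
        h[j] = g[j + 1]       # component j takes the next component of g
        h[j + 1] = -g[j]      # component j+1 takes minus the current component of g
        basis.append(h)
    return basis


g = [1.0, -2.0, 0.5, 3.0, -1.5]   # hypothetical gradient values at some point
basis = chain_basis(g)
gram = [[dot(u, v) for v in basis] for u in basis]

print([dot(g, h) for h in basis])  # all zeros: every vector is orthogonal to g
for row in gram:
    print(row)                     # nonzero entries only on the three central diagonals
```

Vectors whose index supports do not overlap have zero inner product, which is what makes the Gram matrix tridiagonal.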

Proposition 1.

If and also , …, for , then vectors are linearly independent, and the determinant of the matrix formed by these vectors is equal to , where

Proof. First, we find the determinant of the

-matrix

whose columns are vectors

. The case

is trivial:

Consider the case

:

using the Laplace expansion along the last row, i.e.,

The first determinant on the right-hand side is equal to the product of diagonal elements, and the second one is similar in structure to the original determinant but for size

. Thus, by denoting

, we obtain the recurrence formula

Second, we show that

using mathematical induction.

This formula is valid for

, since

. Further, we assume that

Then, in accordance with the recurrence Formula (15), we have

Therefore, if and also ,…, for , then , and vectors are linearly independent. The proposition has been proven. □
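As a worked instance of the expansion along the last row, consider the third-order case. The display assumes the sign convention in which the two vectors orthogonal to $G$ are $(G_2, -G_1, 0)^{\mathrm{T}}$ and $(0, G_3, -G_2)^{\mathrm{T}}$; with the opposite signs the result can differ by a factor of $\pm 1$.

```latex
\begin{aligned}
\det \begin{pmatrix}
G_1 & G_2 & 0 \\
G_2 & -G_1 & G_3 \\
G_3 & 0 & -G_2
\end{pmatrix}
&= G_3 \det \begin{pmatrix} G_2 & 0 \\ -G_1 & G_3 \end{pmatrix}
 - G_2 \det \begin{pmatrix} G_1 & G_2 \\ G_2 & -G_1 \end{pmatrix} \\
&= G_2 G_3^2 + G_2 \bigl( G_1^2 + G_2^2 \bigr)
 = G_2 \, |G|^2 .
\end{aligned}
```

The first minor is triangular, so it equals the product of its diagonal elements, and the second minor is the second-order determinant of the same structure. The result is nonzero exactly when $|G| \neq 0$ and the interior component $G_2$ is nonzero.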

Similarly, we can determine the set of

n linearly independent vectors orthogonal to the generalized gradient

. For this, we introduce the following notations:

as well as

or, in general,

,

, where

are columns of the identity matrix

E of size

if these columns are numbered from zero. By definition, they are orthogonal to vector

. Vectors

are functions of a pair

with values in

. As before, the arguments of these vector functions are omitted for brevity.

Proposition 2.

If and , …, for , then vectors are linearly independent, and the determinant of the matrix formed by these vectors is equal to , where is given by Formula (14).

Proof. The determinant of the -matrix, whose columns are vectors , is equal to . The proof of this statement is the same as the proof of Proposition 1. According to the property of determinants, if all elements of column are divided by , then should also be divided by .

Thus, the determinant of the matrix, whose columns are vectors , is equal to . Consequently, if and also , …, for , then vectors are linearly independent. The proposition has been proven. □

Sets of linearly independent vectors orthogonal to the gradient or the generalized gradient can certainly be constructed in a different way. However, the proposed approach is quite sufficient due to the simplicity of implementation of the corresponding algorithm. If the conditions of Propositions 1 and 2 are satisfied, then any other set of linearly independent vectors is expressed through vectors defined above.

Additional orthogonalization, e.g., using the Gram–Schmidt process, is not assumed here, since vectors are functions of the point in the general case. This complicates the implementation of the corresponding algorithm and entails the complexity of expressions describing components of orthogonal vectors.

Remark 2.

For , according to Proposition 1, vectors are linearly independent if , and , , …, ; i.e., the function may not depend on components and of the vector x.

If , , …, , i.e., the function does not depend on components (), then as the set of linearly independent vectors, we can take from the set (13), supplementing them with unit vectors , columns of the identity matrix E of size . The determinant of the matrix, whose columns are vectors , is equal to , where is given by Formula (14). The independence of the function from components does not limit the generality of reasoning, since components under the condition can always be ordered in this way.

The same arguments are valid for the set of vectors . According to Proposition 2, they are linearly independent if and , , …, ; i.e., the function may not depend on the last component of the vector x. Let , …, , i.e., the function does not depend on components (). Then, as the set of linearly independent vectors, we can take from the set (17), supplementing them with unit vectors , columns of the identity matrix E of size , provided that these columns are numbered from zero.

Let

be the linear span of vectors

, the linear subspace of dimension

:

and let

and

be linear manifolds

and

, respectively:

where vectors

and

are functions of a pair

with values in

. The first vector is defined by the formula

and the second one is determined by relation (11).

The set

is the orthogonal complement of the set

; i.e., an arbitrary linear combination of vectors

is orthogonal to the gradient

. Therefore, condition (7) can be rewritten as

or

where functions

, …,

can be chosen arbitrarily under the additional condition of existence of a solution to Equation (5). They represent expansion coefficients of columns

relative to the set of linearly independent vectors

, the basis of the linear subspace

.

Conditions (8) and (10), taking into account the above notations, can be rewritten as follows:

or

where functions

, …,

, like the previously introduced functions

, …,

, can be chosen arbitrarily under the additional condition of existence of a solution to Equation (5).

The choice of functions for and specifies deterministic and stochastic components of a dynamic system (drift and diffusion coefficients). It influences such properties of invariant stochastic differential systems as stability (or partial stability) and optimality. The study of such properties requires additional conditions, e.g., stability and optimality criteria.
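The way the arbitrary functions enter the coefficients can be sketched for a time-independent first integral. The example below is an illustration under stated assumptions: the first integral is taken to be the sum of squares of three state components (a sphere), the basis uses the two-nonzero-component construction with an assumed sign convention, and the arbitrary functions are replaced by random constants at one point. All names (`gradient`, `chain_basis`, `combine`) are hypothetical.

```python
import random


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def gradient(x):
    """Gradient of the assumed first integral M(x) = x1^2 + x2^2 + x3^2."""
    return [2.0 * xi for xi in x]


def chain_basis(g):
    """n-1 vectors orthogonal to g, each with two adjacent nonzero components."""
    n = len(g)
    basis = []
    for j in range(n - 1):
        h = [0.0] * n
        h[j], h[j + 1] = g[j + 1], -g[j]
        basis.append(h)
    return basis


def combine(coeffs, basis):
    """Linear combination of basis vectors with the given expansion coefficients."""
    n = len(basis[0])
    return [sum(c * h[i] for c, h in zip(coeffs, basis)) for i in range(n)]


rng = random.Random(1)
x = [0.6, -0.8, 1.1]                       # state at which the coefficients are formed
g = gradient(x)
basis = chain_basis(g)

s = 2                                      # number of noise components (assumed)
sigma_cols = [combine([rng.uniform(-1, 1) for _ in basis], basis) for _ in range(s)]
drift = combine([rng.uniform(-1, 1) for _ in basis], basis)  # Stratonovich drift

residuals = [dot(g, col) for col in sigma_cols] + [dot(g, drift)]
print(residuals)   # all vanish up to rounding for any choice of coefficients
```

The coefficients enter linearly, so any choice that keeps the resulting equation solvable yields a system whose diffusion columns and Stratonovich drift are tangent to the manifold.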

Remark 3.

The proposed approach has several advantages. First, it provides a minimum number of functions that define the entire set of invariant stochastic differential systems with a given first integral. Second, coefficients of Equations (5) and (9) depend on these functions linearly. If we consider such functions as the control inputs and formulate an optimal control problem for the system, then the linearity of coefficients ensures a simpler optimal control structure. Third, the definition of vectors allows one to ensure a degenerate diffusion matrix relative to some components, which is often necessary in applied problems such as motion control.

Remark 4.

If , then the vector is equal to zero; therefore, and coincide, and is the linear manifold . In a particular case, the vector Σ can also be zero, then . For example, this statement is valid for the system of Equation (4).

Next, we formulate invariance conditions that follow from the above reasoning.

Theorem 1.

Let the conditions of Proposition 1 be satisfied if , or the conditions of Proposition 2 be satisfied if . Then,

(1) For the invariance of a stochastic differential system defined by the Itô stochastic differential Equation (5), it is necessary and sufficient that conditions (19) and (20) hold on trajectories of the random process ;

(2) For the invariance of a stochastic differential system defined by the Stratonovich stochastic differential Equation (9), it is necessary and sufficient that conditions (19) and (21) hold on trajectories of the random process .

The conditions of Theorem 1 can be weakened, taking into account Remark 2. The described approach can be applied when

and conditions

,

, …,

used in Propositions 1 and 2 are violated on some subset in

. On such a subset, vectors

are not linearly independent; i.e., the basis of the linear subspace

degenerates. When the basis degenerates, condition (6) holds, and conditions (19), (20), and (21) are only sufficient but not necessary.

For example, consider the invariant stochastic differential system for

and

with the first integral

. The difference from Formula (2) is only in the numerical coefficient (see also the system of Equation (3) describing the rotation of a rigid body in three-dimensional space):

Here, the basis degenerates if

or

. In this problem, it is better to use vectors

, and

, where

instead of vectors

, and

. This follows from the system of Equation (3), which can be rewritten in the form (9):

where

is the standard vector Wiener process corresponding to the vector Gaussian white noise

.

At the same time, the first integral (2) corresponds to infinitely many invariant stochastic differential systems, and the system of Equation (22) describes only one of them.

If

and

, then vectors

, and

are related to vectors

, and

by expressions

but such a basis does not degenerate. Moreover, it is the orthonormal basis—

—and the determinant of the matrix formed by vectors

is

(this is easy to verify). However, in the general case, it is difficult to propose a basis that is defined by the same simple formulae and has similar properties (the goal of the article is to propose precisely a simple method for forming invariant stochastic differential systems).

Consider again the system of Equation (4), defining iterated stochastic integrals of the second multiplicity. Here,

,

, and

. It is easy to show that such a system of equations can be obtained by the described method. Indeed,

hence,

In this case,

and this corresponds to conditions (19), (20), and (21).
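The invariance conditions for this example can be checked pointwise. The sketch below rests on assumptions about the omitted formulas: the first integral is taken as M(x) = x1·x2 − x3 − x4, the drift is zero, and the two diffusion columns correspond to the equations dX1 = dW1, dX2 = dW2, dX3 = X1 dW2, dX4 = X2 dW1. The function names are hypothetical.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def grad_m(x):
    """Gradient of the assumed first integral M(x) = x1*x2 - x3 - x4."""
    x1, x2, _, _ = x
    return [x2, x1, -1.0, -1.0]


# Hessian of M: the only nonzero second derivatives are the mixed x1, x2 ones.
HESSIAN = [
    [0.0, 1.0, 0.0, 0.0],
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]


def sigma_columns(x):
    """Diffusion columns of the assumed system, one per Wiener process."""
    x1, x2, _, _ = x
    return [
        [1.0, 0.0, 0.0, x2],   # column multiplying dW1
        [0.0, 1.0, x1, 0.0],   # column multiplying dW2
    ]


def ito_second_order_term(x):
    """0.5 * sum over columns of col^T * Hessian * col (the drift is zero here)."""
    total = 0.0
    for col in sigma_columns(x):
        total += sum(col[i] * HESSIAN[i][j] * col[j] for i in range(4) for j in range(4))
    return 0.5 * total


x = [0.7, -1.3, 2.0, 0.4]     # arbitrary test point
orthogonality = [dot(grad_m(x), col) for col in sigma_columns(x)]
print(orthogonality, ito_second_order_term(x))   # all zeros
```

Both the orthogonality residuals and the second-order term vanish at every point, so under these assumptions the Itô and Stratonovich forms of the invariance conditions coincide for this system.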

4. Invariant Stochastic Differential Systems of the Second, Fourth, and Eighth Orders

In the previous section, we noted that the basis (13) of the linear subspace

can degenerate. This section examines, in turn, invariant stochastic differential systems of the second, fourth, and eighth orders. Here, a basis of the linear subspace

is related to the definition of the multiplication of complex numbers (

), quaternions (

), and octonions (

).

The following proposition is formulated for the case . Although trivial, it is important in the general context.

Proposition 3.

Let and , where G is given by Formula (12). Then, vectors G and , where

are orthogonal, and the determinant of the matrix formed by these vectors is equal to .

Proof. Indeed,

i.e., vectors G and are orthogonal, and

The proposition has been proven. □

The vector

in Proposition 3 differs in sign from the corresponding vector in set (13); therefore, Proposition 3 can be considered a corollary of Proposition 1.

A classic example of a second-order invariant stochastic differential system is the Kubo oscillator [36]. Trajectories of such a system almost surely belong to a circular cylinder, and the phase trajectories lie on the circle (the cylinder projection onto the phase plane). Proposition 3 covers all invariant second-order stochastic differential systems, e.g., those with first integrals that correspond to elliptic, parabolic, and hyperbolic cylinders [37].
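A first integral gives a direct accuracy check for a numerical scheme. The sketch below simulates a commonly used form of the Kubo oscillator, written here as an assumption: in the Stratonovich sense, dX1 = −ωX2 dt − σX2 ∘ dW and dX2 = ωX1 dt + σX1 ∘ dW, with first integral x1² + x2². The scheme is a Milstein step applied to the equivalent Itô form; the function name and the parameter values are hypothetical.

```python
import random


def kubo_milstein(omega=1.0, sigma=0.5, h=1e-3, n_steps=1000, seed=0):
    """Milstein scheme for the Ito form of the assumed Kubo oscillator."""
    rng = random.Random(seed)
    x1, x2 = 1.0, 0.0                      # start on the unit circle
    for _ in range(n_steps):
        dw = rng.gauss(0.0, h ** 0.5)
        # Ito drift: rotation plus the -sigma^2/2 correction from the Stratonovich form.
        f1 = -omega * x2 - 0.5 * sigma ** 2 * x1
        f2 = omega * x1 - 0.5 * sigma ** 2 * x2
        # Diffusion vector: sigma times the state rotated by a right angle.
        g1 = -sigma * x2
        g2 = sigma * x1
        # Milstein correction 0.5 * (dg/dx * g) * (dw^2 - h); here dg/dx * g = -sigma^2 * x.
        m = 0.5 * (dw * dw - h)
        x1, x2 = (
            x1 + f1 * h + g1 * dw - sigma ** 2 * x1 * m,
            x2 + f2 * h + g2 * dw - sigma ** 2 * x2 * m,
        )
    return x1, x2


x1, x2 = kubo_milstein()
print(abs(x1 * x1 + x2 * x2 - 1.0))   # deviation of the numerical state from the circle
```

The exact solution stays on the circle almost surely, so the printed deviation is entirely the error of the scheme; it shrinks as the step `h` is reduced.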

For

, the system of Equation (3) describing the rotation of a rigid body in three-dimensional space uses the non-degenerate basis. In this example, the first integral defines a three-dimensional hypersphere centered at the origin and with unit radius. However, a similar result can be formulated for more general invariant stochastic differential systems, namely those with arbitrary first integrals.

Proposition 4.

Let and , where G is given by Formula (12). Then, vectors subject to

are orthogonal, and the determinant of the matrix formed by these vectors is equal to .

Proof. Any of the vectors , and can be obtained from vector G using the following transformation. Components of vector G are divided into pairs; then, elements in each pair are permuted, and the sign of one element in the pair changes after the permutation. Geometrically, such a transformation corresponds to rotating the points of the plane by a right angle.

This transformation ensures pairwise orthogonality of vectors . It can be verified by directly calculating the pairwise inner products (they are equal to zero).

Next, we find the determinant of the matrix formed by such vectors:

The proposition has been proven. □
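The pair-swapping transformation can be checked numerically. The sketch below uses one sign pattern that follows the quaternion multiplication table; it is an assumption, and the signs in the formula of the proposition may be arranged differently. The names `quaternion_basis` and `dot` are hypothetical.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def quaternion_basis(g):
    """Three vectors obtained from g by swapping components in pairs with one sign change."""
    g1, g2, g3, g4 = g
    return [
        [-g2, g1, -g4, g3],    # pairs (1,2) and (3,4)
        [-g3, g4, g1, -g2],    # pairs (1,3) and (2,4)
        [-g4, -g3, g2, g1],    # pairs (1,4) and (2,3)
    ]


g = [1.0, 2.0, -3.0, 0.5]                 # hypothetical gradient values
vectors = [g] + quaternion_basis(g)
gram = [[dot(u, v) for v in vectors] for u in vectors]
for row in gram:
    print(row)    # diagonal entries equal |g|^2, all other entries are zero
```

Every vector has the same length as G, so dividing by the modulus of G gives an orthonormal set whenever G is nonzero, with no additional conditions on individual components.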

Remark 5.

In the context of this work, it is sufficient to show that the matrix formed by vectors is non-singular.

It is easy to see that

i.e., vectors are orthonormal. The matrix formed by these vectors is orthogonal and, therefore, non-singular. This implies that the matrix is non-singular.

Next, we consider the case .

Proposition 5.

Let and , where G is given by Formula (12). Then, vectors subject to

are orthogonal, and the matrix formed by these vectors is non-singular.

The proof of this proposition is based on the reasoning used in both the proof of Proposition 4 and Remark 5. Additionally, it can be shown that the determinant of the matrix formed by vectors is equal to .

It is impossible to construct an orthogonal basis in a space of arbitrary dimension in the same way, i.e., by partitioning components of vector G into pairs, then permuting elements and changing the sign of one element in all pairs. The properties of the algebras of complex numbers, quaternions, and octonions are essential here [38]. However, we can use the orthogonal basis corresponding to cases

or

in spaces of lower dimensions.

For example, consider the basis (24) corresponding to the case

, setting

and not taking into account the last component (the projection of the basis in

):

Vectors

, and

are orthogonal to the vector

but the determinant of the matrix formed by vectors

, and

is equal to zero. However, the rank of such a matrix is equal to two provided that

, i.e., there exist two linearly independent vectors. As an example, we can consider diffusion on a sphere, namely a problem that involves a third-order invariant stochastic differential system [35]. Its state belongs to a sphere in three-dimensional space centered at the origin and with a radius determined by the initial state. This is precisely the set of vectors used in such a problem.
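The rank statement can be made explicit. The display below assumes one sign convention for the four-dimensional basis (the signs in Formula (24) may differ); setting the fourth component of $G$ to zero and dropping the fourth coordinate gives three vectors with an explicit linear dependence.

```latex
\begin{gathered}
H_1 = (-G_2,\; G_1,\; 0)^{\mathrm{T}}, \qquad
H_2 = (-G_3,\; 0,\; G_1)^{\mathrm{T}}, \qquad
H_3 = (0,\; -G_3,\; G_2)^{\mathrm{T}}, \\
G^{\mathrm{T}} H_1 = G^{\mathrm{T}} H_2 = G^{\mathrm{T}} H_3 = 0, \qquad
G_3 H_1 - G_2 H_2 + G_1 H_3 = 0 .
\end{gathered}
```

The nontrivial linear relation forces the determinant to vanish. At the same time, two of the vectors are linearly independent as soon as one component of $G$ is nonzero; for instance, $H_1$ and $H_2$ are independent when $G_1 \neq 0$.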

As another example, consider the basis (25) for the case

, setting

and ignoring the last two components (the projection of the basis in

):

Vectors are orthogonal to the vector . The rank of the matrix formed by vectors is equal to five provided that , i.e., we can choose five linearly independent vectors from seven vectors. Projections of the basis in and are constructed similarly.

Next, we restrict ourselves to the case

(see Remark 4) and rewrite conditions (7), (8), and (10) as

where

In this case, vectors

are determined by Formulae (23), (24), or (25) for

,

, or

, respectively. The vector

, as before, is defined by relation (11).

Now we can formulate weaker invariance conditions compared to Theorem 1.

Theorem 2.

Let , , and , where G is given by Formula (12). Then,

(1) For the invariance of a stochastic differential system defined by the Itô stochastic differential Equation (5), it is necessary and sufficient that conditions (26) and (27) hold on trajectories of the random process ;

(2) For the invariance of a stochastic differential system defined by the Stratonovich stochastic differential Equation (9), it is necessary and sufficient that conditions (26) and (28) hold on trajectories of the random process .

For example, consider the case

and

(see also the system of Equation (4) defining iterated stochastic integrals of the second multiplicity). We restrict ourselves to the simplest condition (26) for brevity. Formula (24) defines the following vectors:

since

Then, condition (26) can be rewritten in the form

where functions

,

, and

can be chosen arbitrarily under the additional condition of existence of a solution to the corresponding stochastic differential equation.

For condition (27), vector

should be found, while condition (28) does not require any additions.

The approach described in [22, 23, 26] yields the following condition:

where the determinant is understood formally as for a vector product. Its first row is formed by unit vectors

, columns of the identity matrix

E of size

. The second row contains components of vector

G, and the remaining rows contain functions

, …,

and

, …,

. The choice of these functions along with the function

is limited by the existence condition for a solution to the corresponding stochastic differential equation.

According to [22, 23, 26], conditions for coefficients

and

are specified by the determinant of the

-matrix similar in structure to the above determinant of the

-matrix.

This example demonstrates that the proposed method provides simpler expressions for coefficients of stochastic differential equations and a minimum number of functions required to determine the entire set of invariant stochastic differential systems with a given first integral. All such functions are included in coefficients of equations linearly.

6. Conclusions

This study presents the solution to the inverse dynamics problem, specifically the method for forming invariant stochastic differential systems associated with a given first integral. The main advantages of this method are as follows:

(1) The method provides simple expressions for coefficients of stochastic differential equations.

(2) The method ensures a minimum number of functions required to determine the entire set of invariant stochastic differential systems associated with a given first integral (coefficients of equations depend on these functions linearly).

(3) The method allows one to obtain stochastic differential equations with a degenerate diffusion matrix relative to a part of the state components.

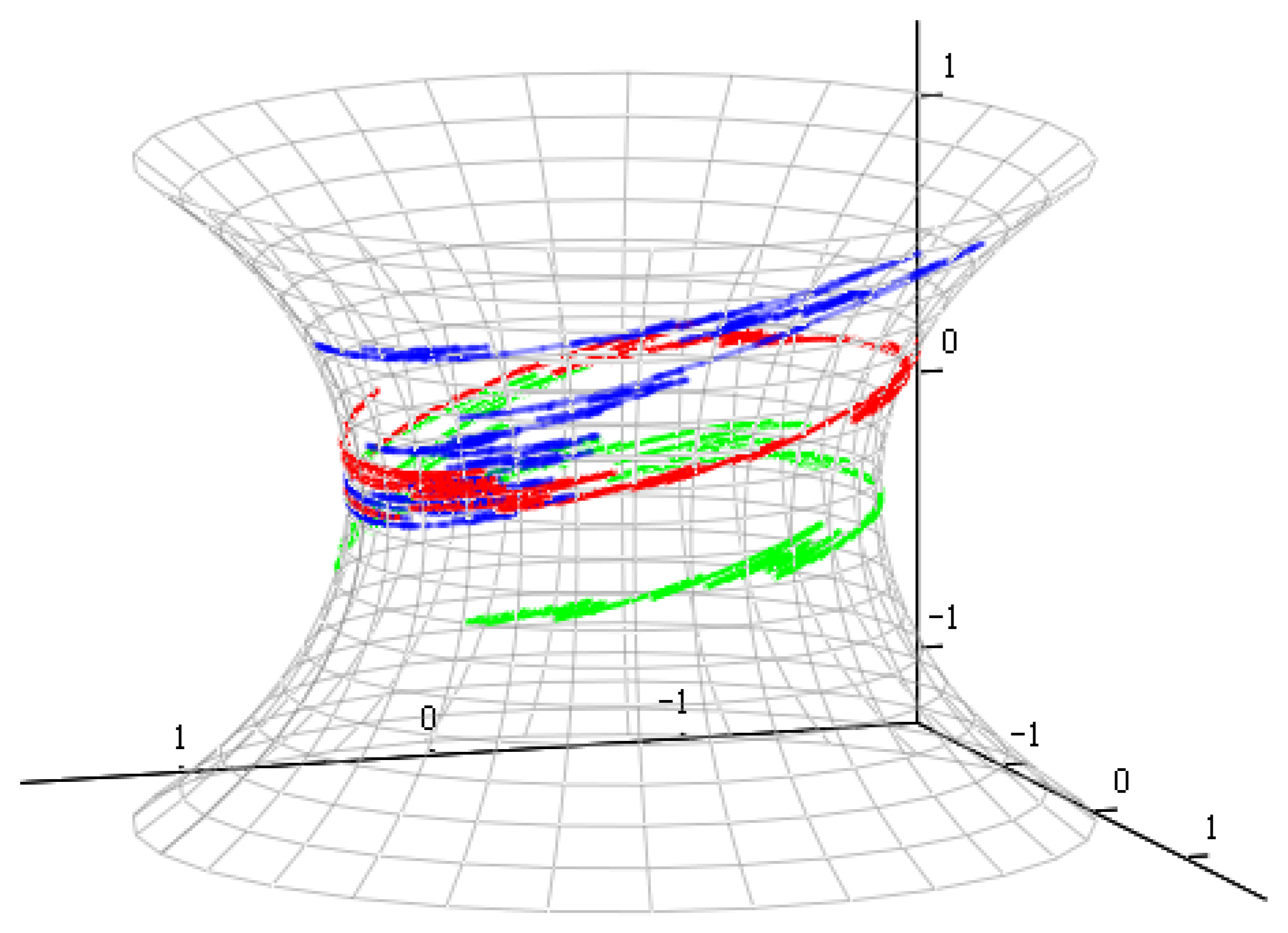

In addition to the theoretical results, the article includes numerical simulations of three invariant stochastic differential systems. For the first system (a third-order system), the state belongs to a catenoid. For the second system (a second-order system), the state belongs to a time-dependent parabola (a dynamic manifold). For the third system (a third-order system), the state belongs to a sphere.

For numerical simulations, the Milstein method [8] and the Artemiev method [7], which have first-order convergence, are used in the first two examples. The numerical solutions do not belong to the specified manifolds due to the error in the numerical methods; however, this error is small and fully corresponds to the indicated order of convergence. The third example uses an analytical solution.

The described method can be applied to derive invariant deterministic differential systems. It can also be utilized to form more complex invariant stochastic differential systems, namely those characterized by stochastic differential equations that include Wiener and Poisson components [23, 26], as well as those with variable or random right-hand sides [40].