Abstract

Generative adversarial networks (GANs) are increasingly used for image enhancement tasks like denoising, super-resolution, and artifact removal. In this study, we propose a robust GAN-based architecture featuring a U-Net-style generator and DCNN-based discriminator, optimized for real-time enhancement. Key contributions include multi-task image refinement using residual and attention modules. Our method is tested on paired datasets comprising low-light medical and artificially degraded images. Results show significant improvements in visual clarity, reduced noise, and improved resolution compared to traditional methods. Evaluation metrics such as inception score (IS) and Fréchet inception distance (FID) confirm the system’s performance. The optimal learning rate (0.0008) was empirically selected for training stability. These results validate the proposed GAN’s efficiency for practical deployment in domains requiring high-quality imaging.

1. Introduction

Traditional methods for image enhancement present challenges to computer vision because they fail to handle various forms of noise along with blurring and resolution problems in diverse imaging situations [1,2]. Real-time processing against various types of degradation remains a significant challenge along with preserving visual quality while achieving result generalization. Artificial intelligence [3,4], especially the field of machine learning [5,6] and deep learning [7,8,9], have successfully revolutionized several applications of computer vision [10,11,12] and natural language processing [13,14,15], e.g., in healthcare [16,17], cyber security [18,19], and other fields [20]. The study employs GANs to develop adaptable and strong methods that advance image enhancement capabilities across different conditions found in dynamic environments [21]. Image enhancement systems serve essential functions in medical areas and satellite analyst tools and low-light camera systems since better image clarity enhances analytical accuracy and decision quality. The research implements a specific GAN design to execute real-time image improvement while evaluating performance against complex image collections. The system applies a standardized GAN for initial image enhancement that leads to an image enhancement module (IEM) to generate refined results while it reduces noise levels while preserving details and adjusting image contrast. Existing image enhancement algorithms work well in controlled conditions but struggle when dealing with complex images under low-light and noise-dense conditions. Advanced methods that improve capabilities tend to present higher computational complexities. The proposed research delivers an adaptable real-time picture enhancement system that achieves high quality while optimizing computer processing requirements [22]. During training, the GAN model achieved stability with its optimal learning rate set to 0.0008 because training and validation loss decreased across epochs. A qualitative assessment showed clearer image details with enhanced edges alongside decreased noise, which also presented artifacts that caused blurring and over-sharpening in specific textures. Image quality assessment should include additional quantitative measurements drawn from the inception score (IS) and Fréchet inception distance (FID). The follow-up research should examine new loss functions together with hyperparameter optimization methods for maximizing performance according to [23].

2. Literature Review

GANs have enabled breakthroughs in low-light imaging, medical image enhancement, and even satellite image processing. Lu et al. [24] provided a systematic review on GAN use in agriculture, revealing their effectiveness in constrained environments. Alhulayil et al. [25] explored adversarial learning in cognitive relay networks, emphasizing the importance of computational efficiency. Further, attention mechanisms, residual blocks, and perceptual losses have proven valuable in improving GAN-based outputs. Comprehensive summaries of various image enhancement methodologies using generative adversarial networks (GANs) underscore significant progress in recent years, emphasizing their pivotal role in advancing the field of computer vision by providing innovative solutions for image restoration, super-resolution, and noise reduction.

Additionally, the literature highlights the use of GANs for specific image enhancement tasks such as denoising and deblurring. For instance, the incorporation of cycle-consistent adversarial networks (CycleGAN) [26] has proven effective in transforming noisy or blurry images into clear and sharp versions. This method capitalizes on unpaired training data, allowing the network to learn mappings between noisy and clean images without requiring paired examples.

2.1. Research Questions

- 1.

- Enhancing Robustness: How can GANs perform under low light, motion blur, and heavy noise?

- 2.

- Multi-Task Learning: Can a single model handle super-resolution, denoising, and artifact reduction?

- 3.

- Real-time Processing: How to balance model accuracy and speed on limited hardware?

- 4.

- Adaptive Learning: Can models adapt to new distortions without retraining from scratch?

- 5.

- Domain Adaptation: How do we improve generalization across datasets?

- 6.

- Privacy and Security: How can privacy-preserving techniques be integrated into GAN pipelines?

2.2. Problem Statement

Image enhancement remains a large challenge in computer vision. Traditional methods fall short under complex conditions such as low light, blur, or diverse noise patterns. Recent advances in AI, particularly deep learning and GANs, offer adaptive techniques for enhancement tasks. GANs enable end-to-end learning from degraded to high-quality images through adversarial training. This study aims to utilize GANs’ capabilities in learning complex image mappings, offering robustness and generalization across diverse environments.

2.3. Novelty of Study

- 1.

- Advanced Robustness Techniques: Developing novel GAN architectures that enhance image quality under challenging conditions such as low light, motion blur, and varying noise levels;

- 2.

- Multi-Task Learning Strategies: Introducing multi-task learning frameworks that enable GANs to perform various enhancement tasks (super-resolution, denoising, deblurring) simultaneously;

- 3.

- Real-time Processing Optimization: Proposing efficient algorithms and optimizations for real-time GAN-based image enhancement, particularly in resource-constrained environments. Advanced privacy-preservation systems must integrate into GAN-based systems in order to maintain ethical security standards for image enhancements. User-centric design principles should be adopted to make GAN-based image enhancement systems more usable and acceptable for users according to [21].

2.4. Significance of Our Work

The enhanced image quality in difficult conditions better serves user needs in both photography and medical as well as surveillance imaging applications.

- 1.

- Adaptability to Diverse Environments: Ensuring the effectiveness of image enhancement across various scenarios, from low-light environments to high-motion settings;

- 2.

- User Acceptance: Enhancing the user experience and acceptance of image enhancement technologies through human-centric design and robust performance.

The use of enhanced images needs strict privacy and security measures to handle ethical elements and responsible and secure deployment. Overall, this research aims to significantly advance the field of image enhancement using GANs, addressing key challenges and paving the way for new applications and improved performance in real-world scenarios.

3. Background

GANs function as the core technology in this project to convert low-res images into high-resolution versions. The GAN setup unites two distinct neural networks called the Generator together with the Discriminator (Critic). The Generator accepts input from low-quality images to transform them into enhanced outputs closely similar to high-quality originals, and the Discriminator functions as an evaluator to differentiate between true high-quality samples and Generator-created fakes. During successive training sessions, the Generator and Discriminator fight against each other to gain proficiency, which leads to better image quality in the outputs.

4. Methodology

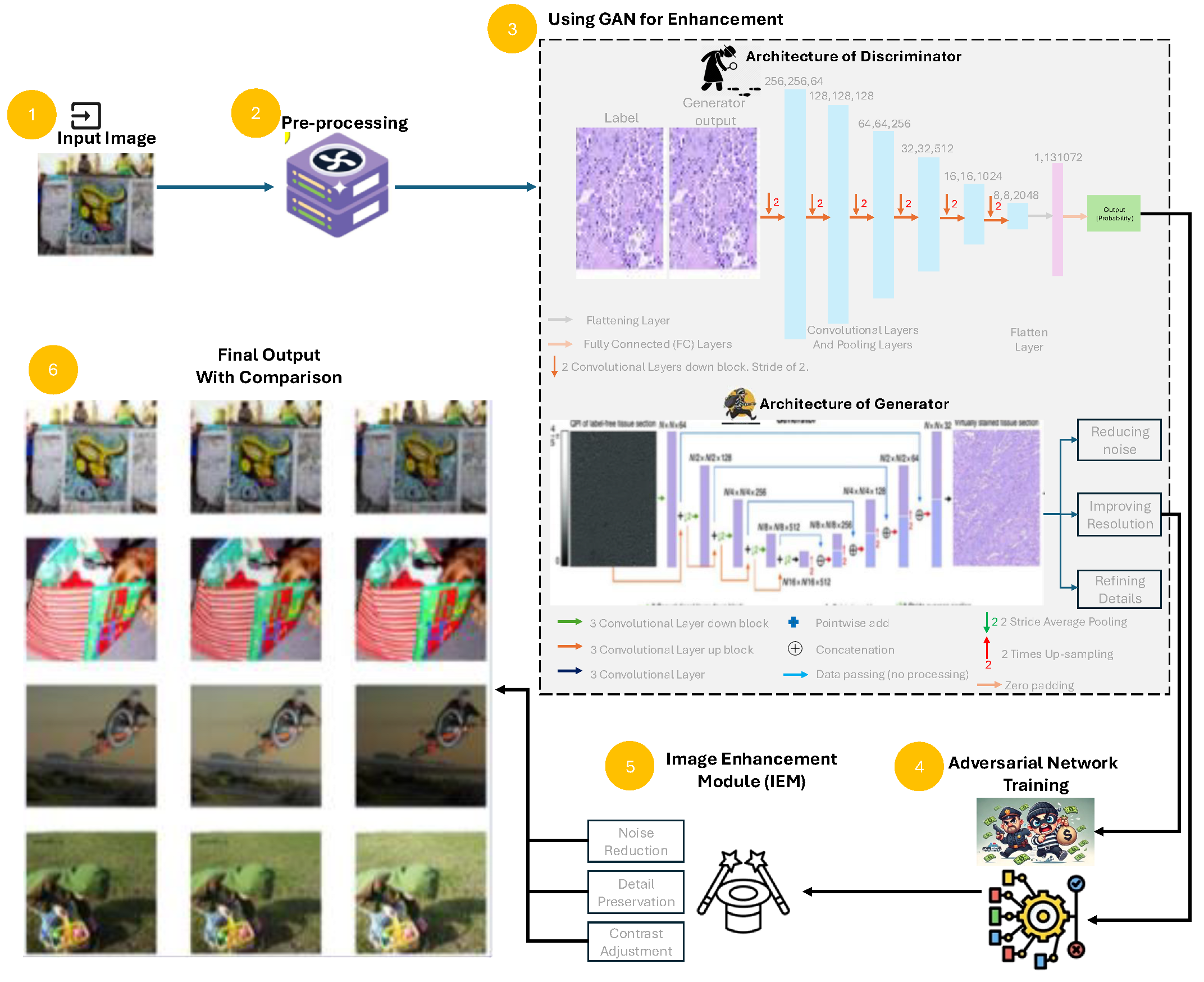

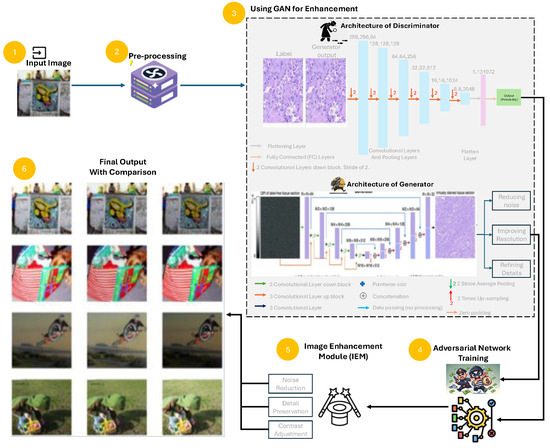

The methodology used for this study is graphically depicted in Figure 1.

Figure 1.

Workflow of the proposed GAN-based image enhancement system, which starts from data collection (Step 1), Pre-processing (Step 2), GAN model (Step 3), Adversarial training (Step 4), Image enhancement module (Step 5), and final output with comparison (Step 6). All substeps are mentioned in dotted boxes. Figure legend shows the different types of layers for generator and discriminator.

4.1. Dataset

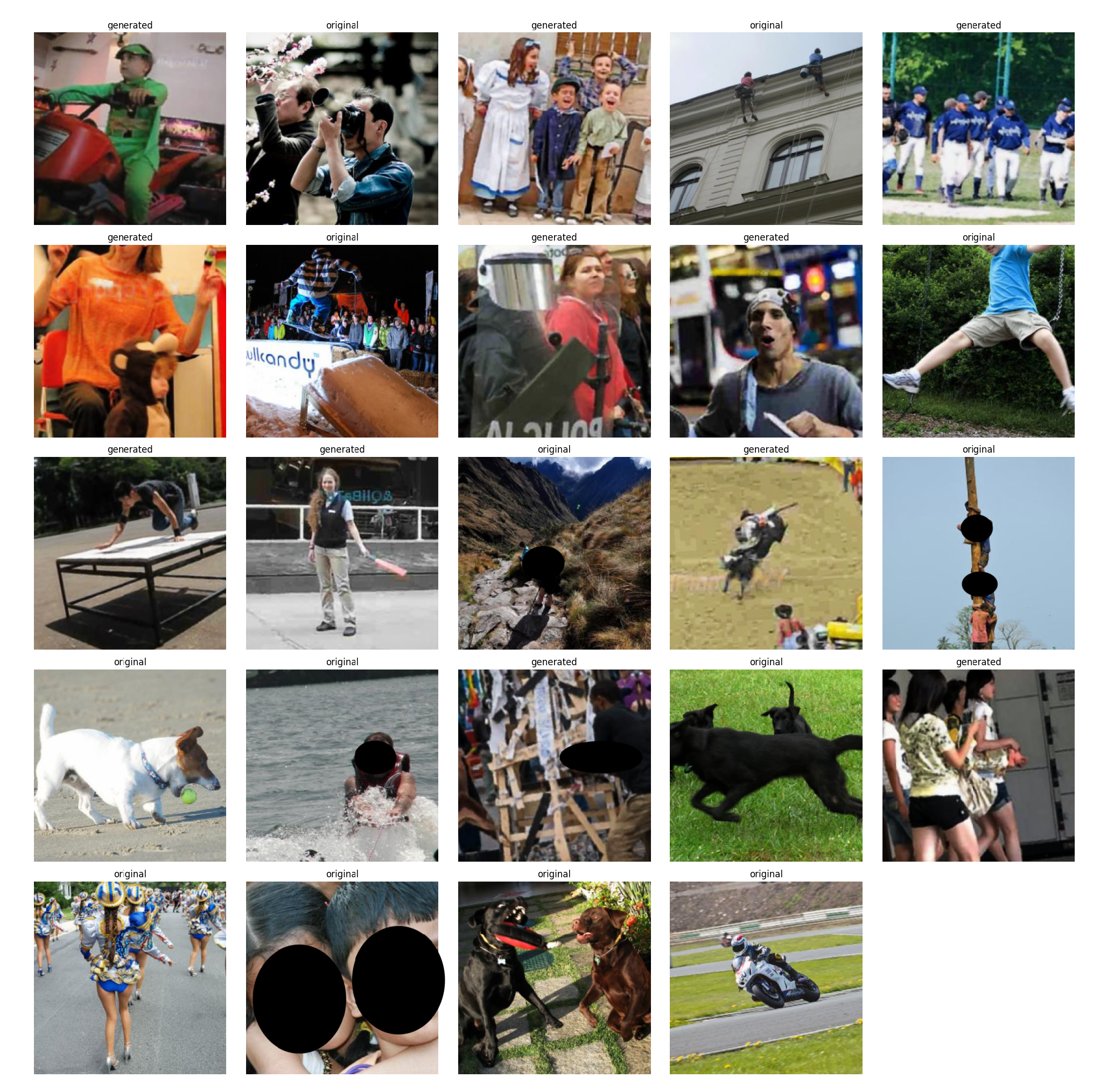

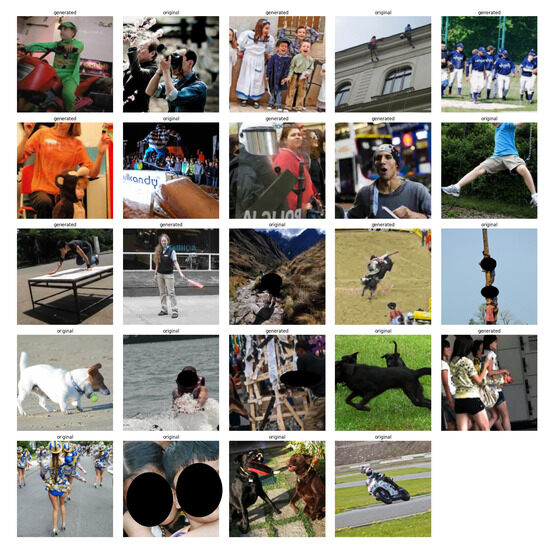

The training process of the GAN requires pairs of high-quality images alongside their corresponding low-quality counterparts. The low-resolution images present on the dataset show purposeful degradation of original high-resolution images. The generator receives essential training through structured pairs because they enable it to develop the needed relationships between lower and higher quality image representations. Training and validation sets divide the dataset so that monitoring through the validation set enables the model to avoid overfitting. The low-quality images were synthetically generated by downsampling and adding Gaussian noise to high-resolution images. No real-world degraded datasets were used. Some sample images from the dataset are shown in Figure 2.

Figure 2.

Comparison of real vs. generated images used to train the Discriminator. Real images are high-resolution originals with faces hidden to hide identity; fake images are Generator outputs.

4.2. Data Loading and Visualization

The “ImageList.from_df” function allows data loading by building an image dataset from CSV file and folder paths. Assessment of data quality together with image enhancement process understanding requires visualization methods to work properly. Visual comparison between original images and low-quality images is achieved through “plot_multi” and “plot_one” functions, which help evaluation of the GAN performance.

4.3. Data Transformations and Batching

The model achieves robustness while resisting overfitting through the application of data augmentation techniques by means of transform function operations on flipping, rotation, and zooming. The “get_data” function prepares image data along with a split that divides the dataset between training and validation sets by using “split_by_idx”. Data loading efficiency during training becomes possible through the DataBunch object, which is created by the “databunch” function. To ensure consistent training behavior across different datasets, the image pixel values receive normalization treatment through ImageNet statistics.

4.4. Generator Network

The GAN architecture functions with a main component called Generator that transforms ill-defined images to high-resolution outputs. A U-Net-based architecture functions as the Generator because it demonstrates optimal performance in image-to-image conversion. A U-Net architecture uses systematic pathways with skip paths that maintain small details as the network performs upsampling operations. The ResNet-34 model serves as the foundation for U-Net to extract features from different levels through its pre-training stage, thus enabling the Generator to interpret lower and higher image details effectively. The U-Net generator balances performance and efficiency. It is computationally light compared to deeper models, making it suitable for low-power applications in agriculture and wireless networks, as supported by Lu et al. [24] and Alhulayil et al. [25].

4.5. Increasing Image Size and Continuing Training

During training the Generator receives images of progressively higher resolution in incremental repetitions. The model develops enhanced image enhancement abilities through a learning process where it starts with minimal image sizes until it trains on progressively larger sizes. Through curriculum learning methods the approach achieves rapid convergence with stable training dynamics that produces outstanding image results.

4.6. Saving and Generating Enhanced Images

After the training process is finished, Generator saves its model for later application. A deployed trained model provides convenient access to improve new batches of poor-quality images without needing additional training. The save_preds function speeds up the creation of improved images from poor-quality source materials through an automated image enhancement system.

4.7. Discriminator (Critic) Network and Data

The Discriminator function serves as an critic that distinguishes actual high-quality pictures from those produced by the Generator. During training the Discriminator gets to study original high-quality images together with their matched generated images. The adversarial training procedure pushes the Generator to create images that match the quality of authentic ones.

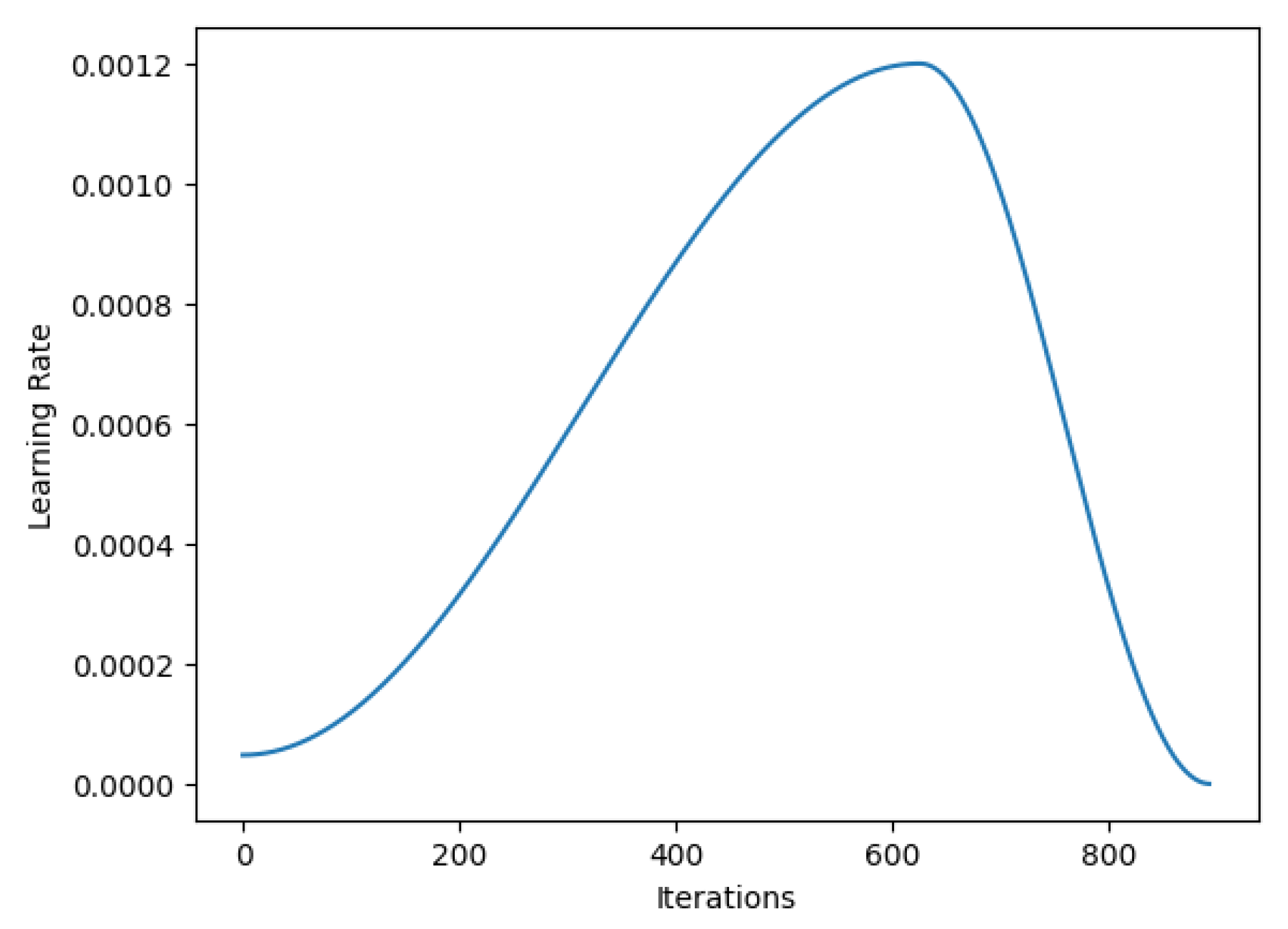

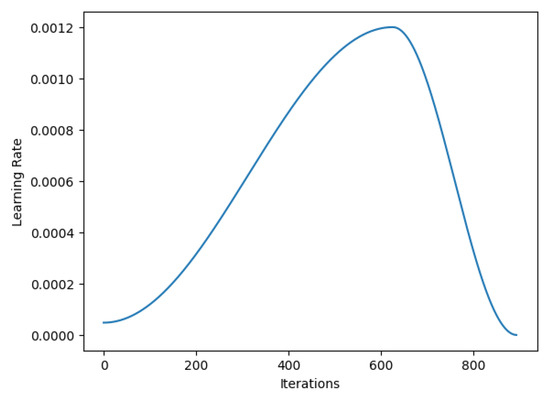

4.8. Discriminator Training and GAN Learner

We empirically selected 0.0008 as the optimal learning rate using the learning rate finder plot (shown in Figure 3), balancing training speed and generalization. While we did not implement a formal hyperparameter optimization routine, future work can explore grid search, random search, or Bayesian optimization for parameter tuning. Evaluation used IS and FID metrics, confirming image quality improvements.

Figure 3.

Figure displaying the changes in learning rate over various iterations.

4.9. Evaluation

According to the learning rate finder plot, the most suitable learning rate exists at 0.001 because it achieves a desirable combination of fast training speed and generalized functionality. The model’s performance can be improved by using different loss functions as well as adding the inception score and Fréchet inception distance evaluation metrics while adjusting hyperparameters. The practical use of GAN training requires solutions to minimize computational costs of working with larger images by applying transfer learning methods and model compression strategies.

5. Results and Discussion

The training of the GAN model proceeded according to the stated methodology. The learning rate finder plot indicated 0.001 as the optimal learning rate value; however, 0.0008 was finally selected for maximizing training stability. The training process and validation process decreased loss measurements across successive epochs, thus showing proof of the model’s capacity to transform low-resolution into high-resolution inputs. The artificial images produced through the assessment process displayed upgraded visual quality compared to the initial low-resolution source images. The created images displayed superior features together with crisp boundaries while also showing decreased distortions. The system generated artifacts, which appeared as blurring or over-sharpening, mostly affected regions that contained complex textures along with fine details. To generate a more objective assessment of the generated image quality, we must use quantitative evaluation metrics that include inception score (IS) or Fréchet inception distance (FID). Future studies must evaluate how different loss functions, particularly perceptual loss and feature matching loss, affect the quality of picture generation. Additional performance optimization of the model should involve both batch size optimization and research into various regularization techniques.

Our approach is applicable in low-light photography, medical imaging, and real-time surveillance. Compared to classical filters or histogram-based methods, our GAN-based solution shows superior adaptability. However, it struggles with extreme degradations and is sensitive to training data quality. Future work will investigate real-time deployment using model compression and explore continual learning for evolving imaging needs.

6. Conclusions

The research described a strategy to boost low-resolution images through generative adversarial network technology. A successive training process on different image resolutions enabled the model to achieve notable success in visual quality enhancement. The model produced better details and sharpness, but further research needs to adopt quantitative metrics such as IS and FID for measurement. The study contributes to developing GAN-based image enhancement technology that shows potential applications across various domains, namely medical imaging along with satellite imagery and digital photography.

Author Contributions

Conceptualization, A.W.P.; Methodology, S.F.A.K.; Software, M.A.; Writing—original draft preparation, H.A.; Writing—review and editing, A.W.P.; Supervision, R.H.A. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The training data used in this study is taken from “StyleGan-StyleGan2 Deepfake Face Images [Data set]” by Kshitiz Bhargava, Manvendra Singh, and Abeer Mathur (2024) on Kaggle and can be downloaded from the link https://doi.org/10.34740/KAGGLE/DSV/8063014.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- Karunanithi, M.; Chatasawapreeda, P.; Khan, T.A. A predictive analytics approach for forecasting bike rental demand. Decis. Anal. J. 2024, 11, 100482. [Google Scholar] [CrossRef]

- Karunanithi, M.; Braitea, A.A.S.; Rizvi, A.A.; Khan, T.A. Forecasting solar irradiance using machine learning methods. In Proceedings of the 2023 IEEE 64th International Scientific Conference on Information Technology and Management Science of Riga Technical University (ITMS), Riga, Latvia, 5–6 October 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 1–4. [Google Scholar] [CrossRef]

- Siddique, A.B.; Bakar, M.A.; Ali, R.H.; Arshad, U.; Ali, N.; ul Abideen, Z.; Khan, T.A.; Ijaz, A.Z.; Imad, M. Studying the Effects of Feature Selection Approaches on Machine Learning Techniques for Mushroom Classification Problem. In Proceedings of the 2023 International Conference on IT and Industrial Technologies (ICIT), Chiniot, Pakistan, 9–10 October 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 1–6. [Google Scholar] [CrossRef]

- Rizvi, A.A.; Yang, D.; Khan, T.A. Optimization of biomimetic heliostat field using heuristic optimization algorithms. Knowl.-Based Syst. 2022, 258, 110048. [Google Scholar] [CrossRef]

- Khan, T.A.; Ling, S.H.; Rizvi, A.A. Optimisation of electrical Impedance tomography image reconstruction error using heuristic algorithms. Artif. Intell. Rev. 2023, 56, 15079–15099. [Google Scholar] [CrossRef]

- Ahmad, I.; Alqarni, M.A.; Almazroi, A.A.; Tariq, A. Experimental evaluation of clickbait detection using machine learning models. Intell. Autom. Soft Comput. 2020, 26, 1335–1344. [Google Scholar] [CrossRef]

- Bhardwaj, S.; Salim, S.M.; Khan, T.A.; JavadiMasoudian, S. Automated music generation using deep learning. In Proceedings of the 2022 International Conference Automatics and Informatics (ICAI), Varna, Bulgaria, 6–8 October 2022; IEEE: Piscataway, NJ, USA, 2022; pp. 193–198. [Google Scholar] [CrossRef]

- De Mello, D.P.M.; Assunção, R.M.; Murai, F. Top-down deep clustering with multi-generator GANs. In Proceedings of the AAAI Conference on Artificial Intelligence, Vancouver, BC, Canada, 22–28 February 2022; Volume 36, pp. 7770–7778. [Google Scholar]

- Chaurasia, D.; Chhikara, P. Sea-Pix-GAN: Underwater image enhancement using adversarial neural network. J. Vis. Commun. Image Represent. 2024, 98, 104021. [Google Scholar] [CrossRef]

- Asif, S.; Zhao, M.; Tang, F.; Zhu, Y. DCDS-Net: Deep transfer network based on depth-wise separable convolution with residual connection for diagnosing gastrointestinal diseases. Biomed. Signal Process. Control 2024, 90, 105866. [Google Scholar] [CrossRef]

- Khan, T.A.; Ling, S.H.; Mohan, A.S. Advanced gravitational search algorithm with modified exploitation strategy. In Proceedings of the 2019 IEEE International Conference on Systems, Man and Cybernetics (SMC), Bari, Italy, 6–9 October 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 1056–1061. [Google Scholar] [CrossRef]

- Muhammad, D.; Ahmed, I.; Naveed, K.; Bendechache, M. An explainable deep learning approach for stock market trend prediction. Heliyon 2024, 10, e40095. [Google Scholar] [CrossRef] [PubMed]

- Chicco, D.; Warrens, M.J.; Jurman, G. The coefficient of determination R-squared is more informative than SMAPE, MAE, MAPE, MSE and RMSE in regression analysis evaluation. PeerJ Comput. Sci. 2021, 7, e623. [Google Scholar] [CrossRef] [PubMed]

- Li, T.; Zhang, Z.; Hou, R.; Liu, B.; Zheng, K.; Ren, D. A Dual-Discriminator Conditional GAN for rapid compressive sensing of structural vibration data. Mech. Syst. Signal Process. 2025, 224, 112062. [Google Scholar] [CrossRef]

- Ahmad, I.; Yousaf, M.; Yousaf, S.; Ahmad, M.O. Fake news detection using machine learning ensemble methods. Complexity 2020, 2020, 8885861. [Google Scholar] [CrossRef]

- Ahmad, I.; Akhtar, M.U.; Noor, S.; Shahnaz, A. Missing link prediction using common neighbor and centrality based parameterized algorithm. Sci. Rep. 2020, 10, 364. [Google Scholar] [CrossRef] [PubMed]

- Ishaq, M.H.; Mustafa, R.; Arshad, U.; ul Abideen, Z.; Ali, R.H.; Habib, A. Deciphering Faces: Enhancing Emotion Detection with Machine Learning Techniques. In Proceedings of the 2023 18th International Conference on Emerging Technologies (ICET), Peshawar, Pakistan, 6–7 November 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 310–314. [Google Scholar] [CrossRef]

- Ahmad, I.; Ahmad, M.O.; Alqarni, M.A.; Almazroi, A.A.; Khalil, M.I.K. Using algorithmic trading to analyze short term profitability of Bitcoin. PeerJ Comput. Sci. 2021, 7, e337. [Google Scholar] [CrossRef] [PubMed]

- Haider, A.; Siddique, A.B.; Ali, R.H.; Imad, M.; Ijaz, A.Z.; Arshad, U.; Ali, N.; Saleem, M.; Shahzadi, N. Detecting Cyberbullying Using Machine Learning Approaches. In Proceedings of the 2023 International Conference on IT and Industrial Technologies (ICIT), Chiniot, Pakistan, 9–10 October 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 1–6. [Google Scholar] [CrossRef]

- Mashhood, A.; ul Abideen, Z.; Arshad, U.; Ali, R.H.; Khan, A.A.; Khan, B. Innovative Poverty Estimation through Machine Learning Approaches. In Proceedings of the 2023 18th International Conference on Emerging Technologies (ICET), Peshawar, Pakistan, 6–7 November 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 154–158. [Google Scholar] [CrossRef]

- Ystgaard, K.F.; Atzori, L.; Palma, D.; Heegaard, P.E.; Bertheussen, L.E.; Jensen, M.R.; De Moor, K. Review of the theory, principles, and design requirements of human-centric Internet of Things (IoT). J. Ambient Intell. Humaniz. Comput. 2023, 14, 2827–2859. [Google Scholar] [CrossRef]

- Khan, W.A.; Khan, T.A.; Ali, M.A.; Abbas, S. High level modeling of an ultra wide-band baseband transmitter in MATLAB. In Proceedings of the 2009 International Conference on Emerging Technologies (ICET09), Islamabad, Pakistan, 19–20 October 2009; IEEE: Piscataway, NJ, USA, 2009; pp. 194–199. [Google Scholar] [CrossRef]

- Khan, T.A.; Ling, S.H. A novel hybrid gravitational search particle swarm optimization algorithm. Eng. Appl. Artif. Intell. 2021, 102, 104263. [Google Scholar] [CrossRef]

- Lu, Y.; Chen, D.; Olaniyi, E.; Huang, Y. Generative adversarial networks (GANs) for image augmentation in agriculture: A systematic review. Comput. Electron. Agric. 2022, 200, 107208. [Google Scholar] [CrossRef]

- Alhulayil, M.; Al-Mistarihi, M.F.; Shurman, M.M. Performance analysis of dual-hop AF cognitive relay networks with best selection and interference constraints. Electronics 2022, 12, 124. [Google Scholar] [CrossRef]

- Zhou, L.; Schaefferkoetter, J.D.; Tham, I.W.; Huang, G.; Yan, J. Supervised learning with CycleGAN for low-dose FDG PET image denoising. Med. Image Anal. 2020, 65, 101770. [Google Scholar] [CrossRef] [PubMed]

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).