A Novel Data-Driven Framework for Automated Migraines Classification Using Ensemble Learning

Abstract

1. Introduction

2. Literature Review

3. Methodology

3.1. Machine Learning

3.1.1. K-Nearest Neighbors (KNN)

3.1.2. Naïve Bayes (NB)

3.1.3. Decision Tree (DT)

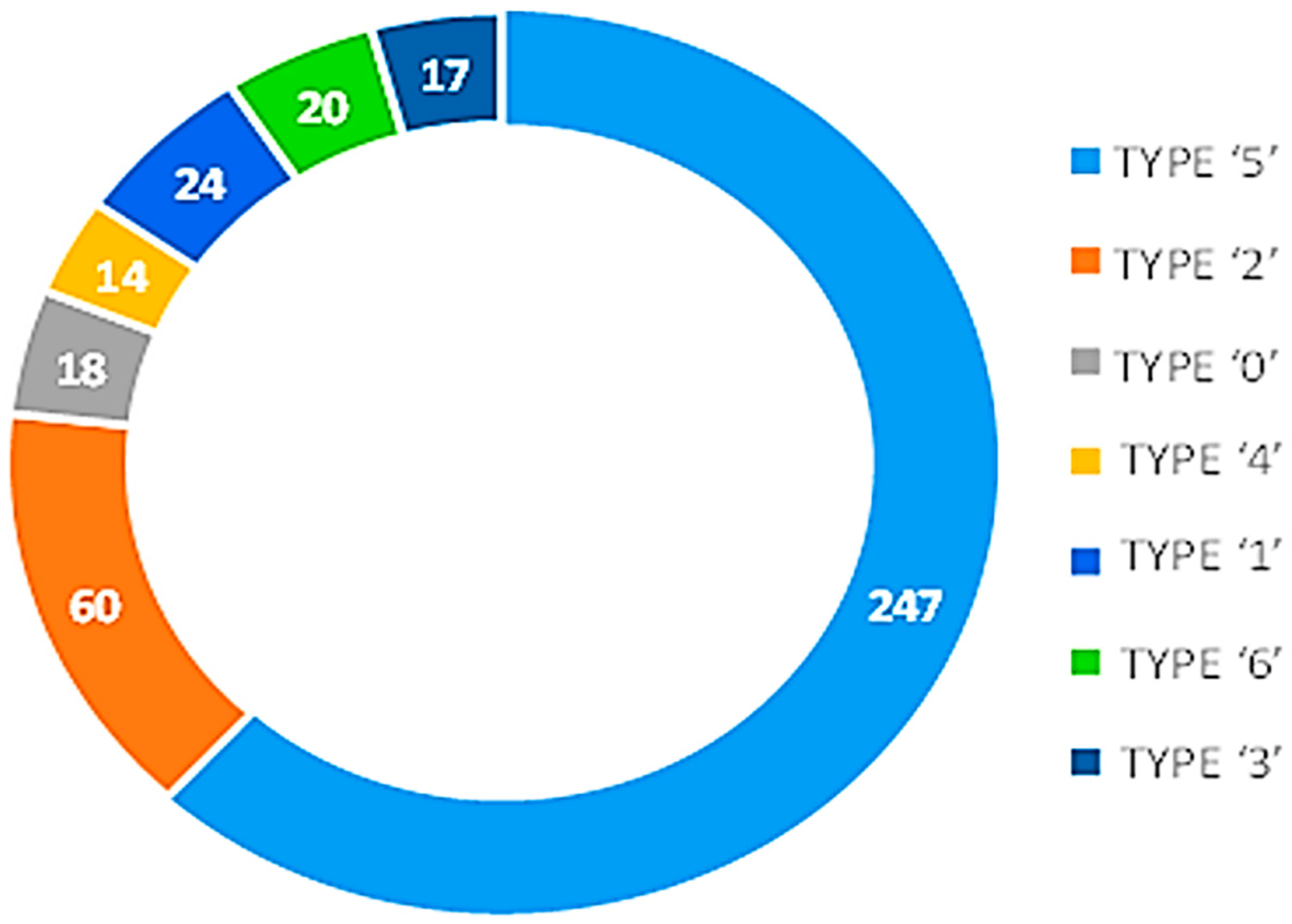

3.1.4. Data Gathering

3.1.5. Random Forest (RF)

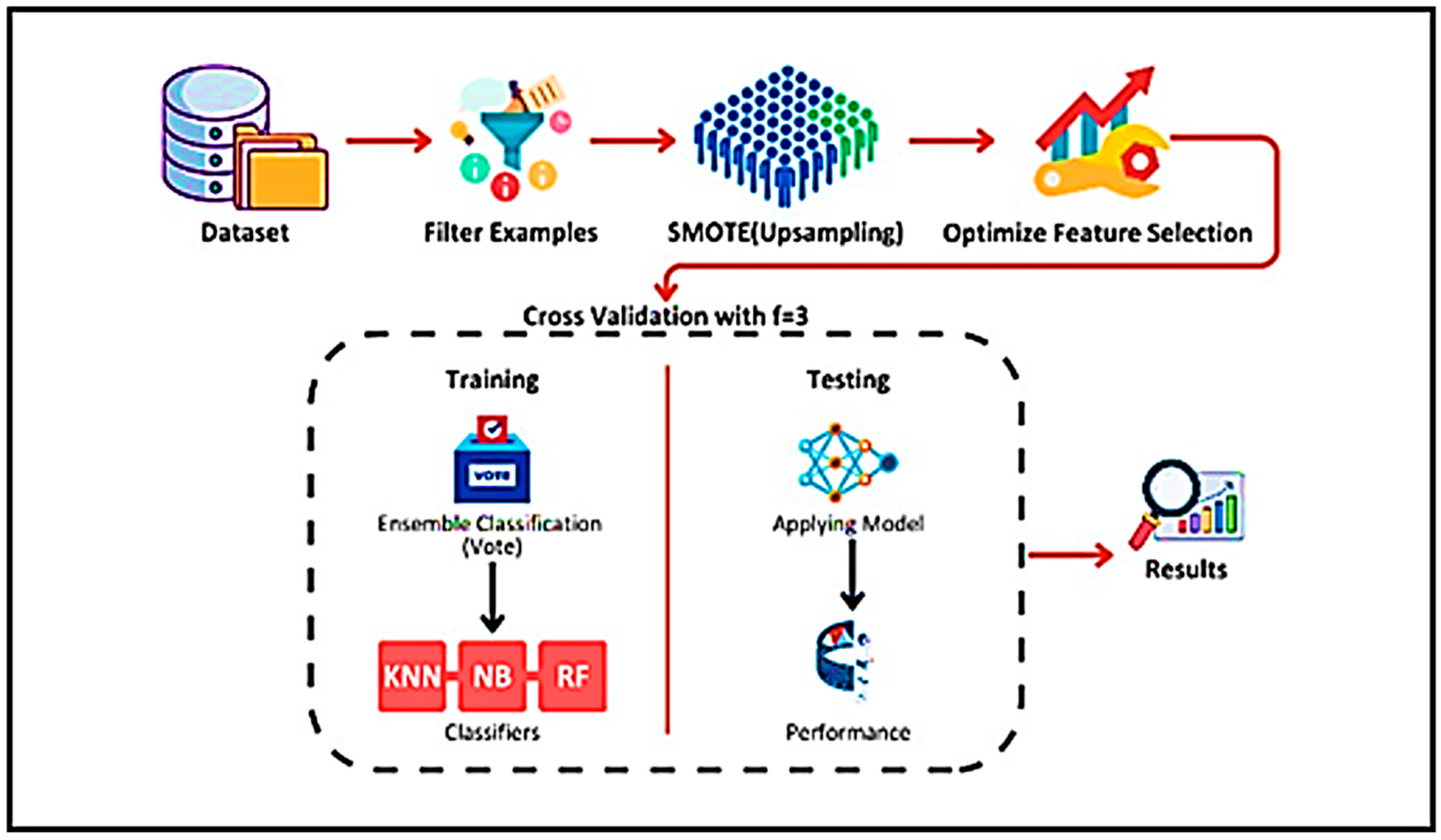

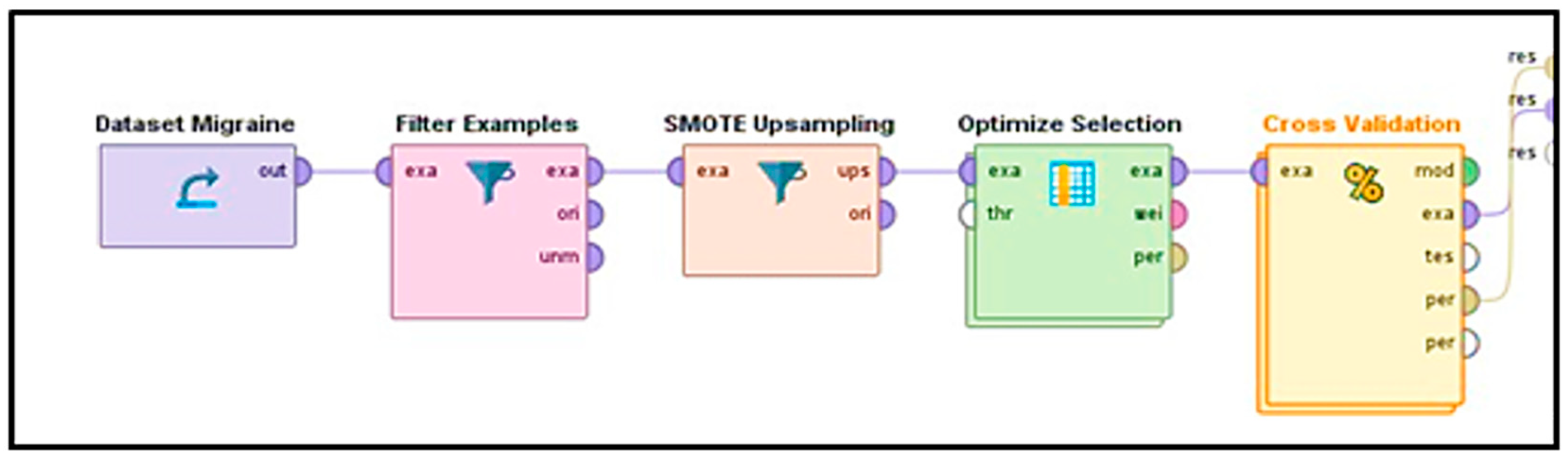

3.2. Framework

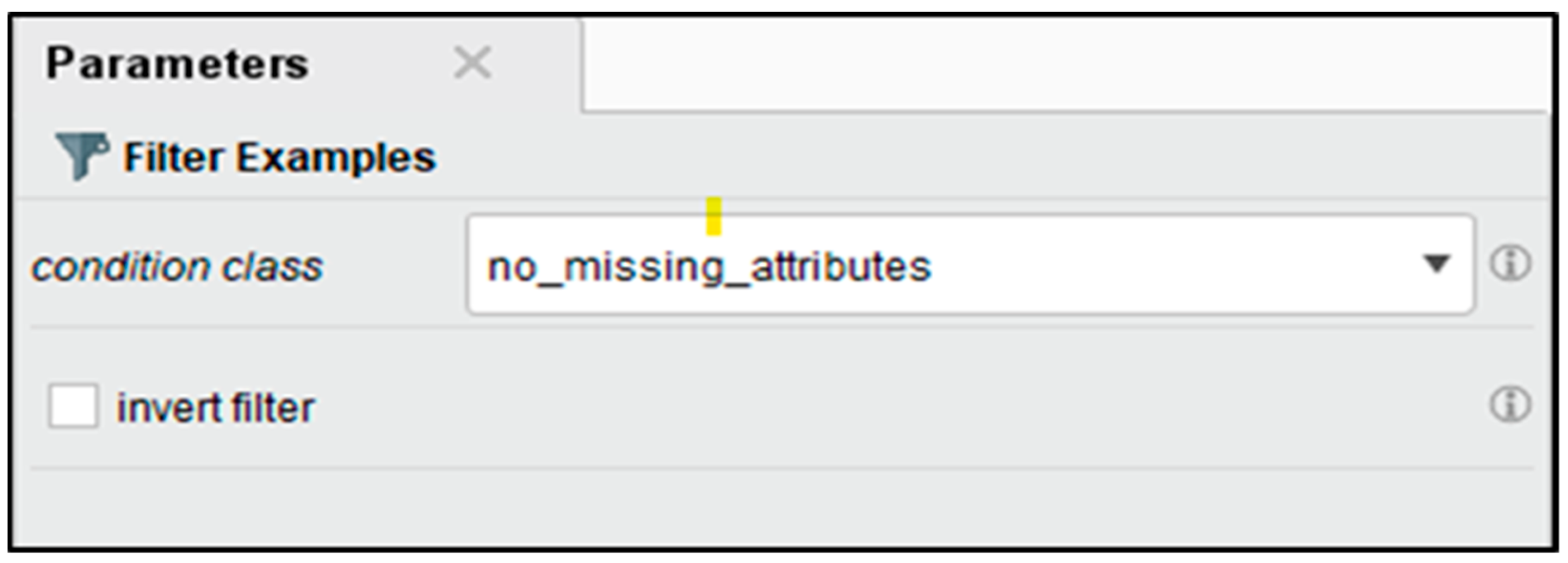

3.3. Data Preprocessing and Filter Examples

3.4. SMOTE (Upsampling)

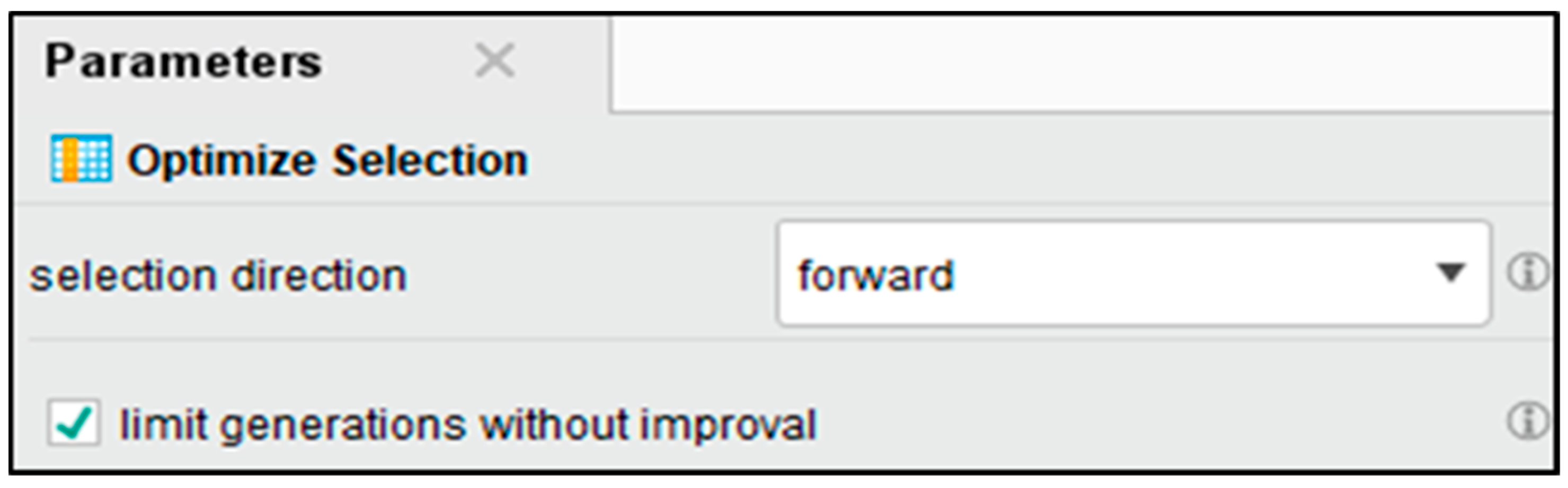

3.5. Optimize Feature Selection

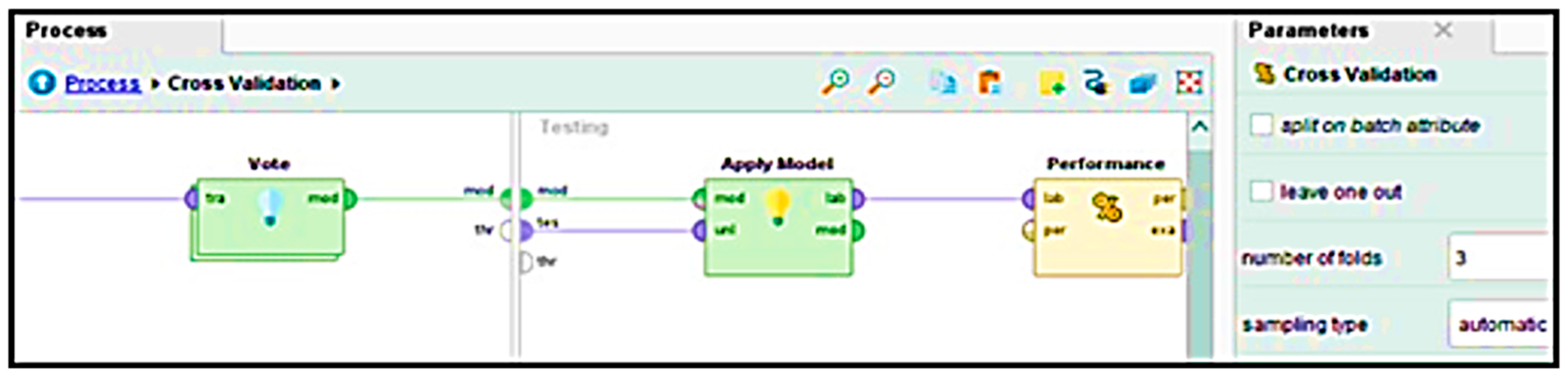

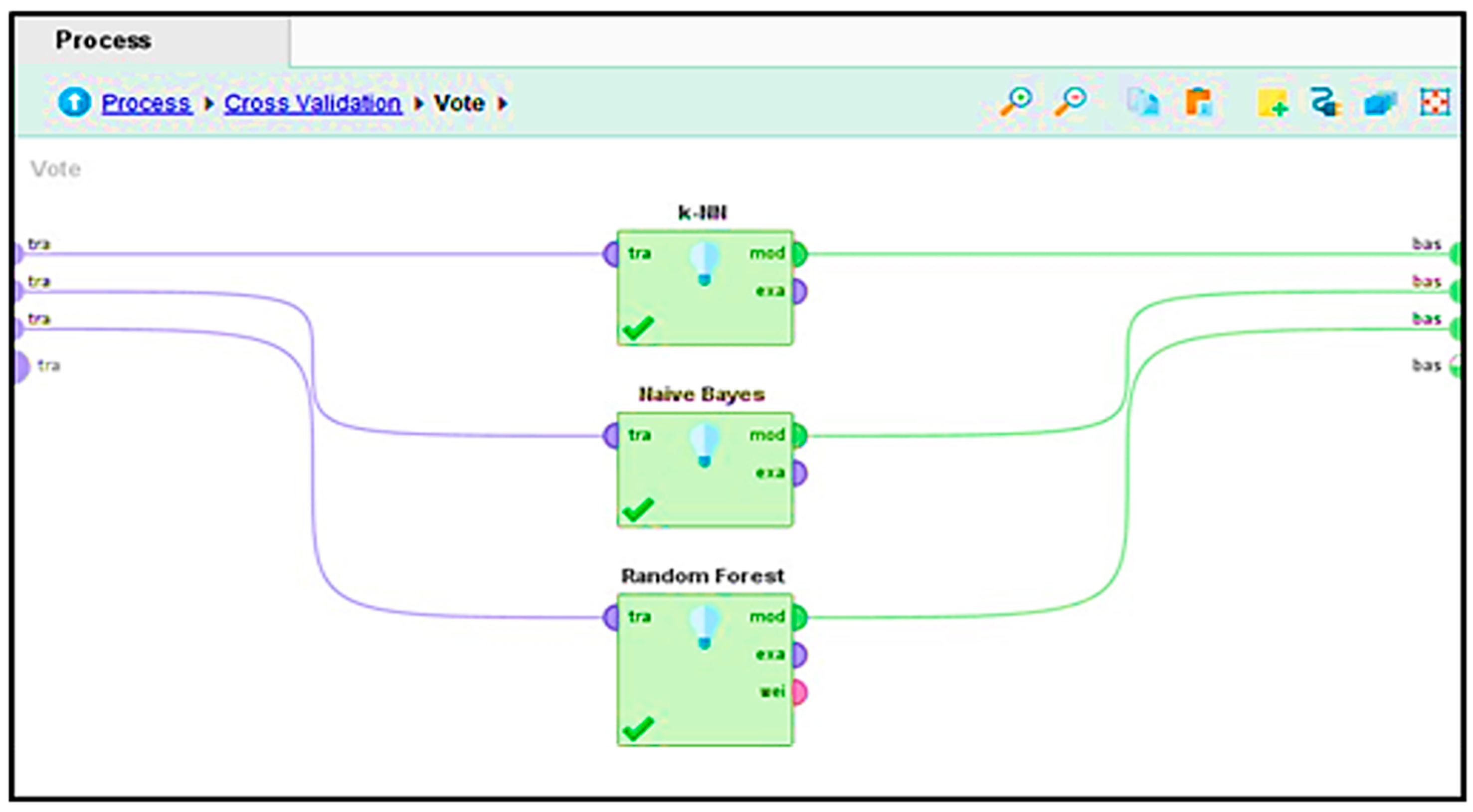

3.6. Cross-Validation and Ensemble Classification

4. Results

4.1. Performance Vector

4.2. Precision (P)

4.3. Recall (R)

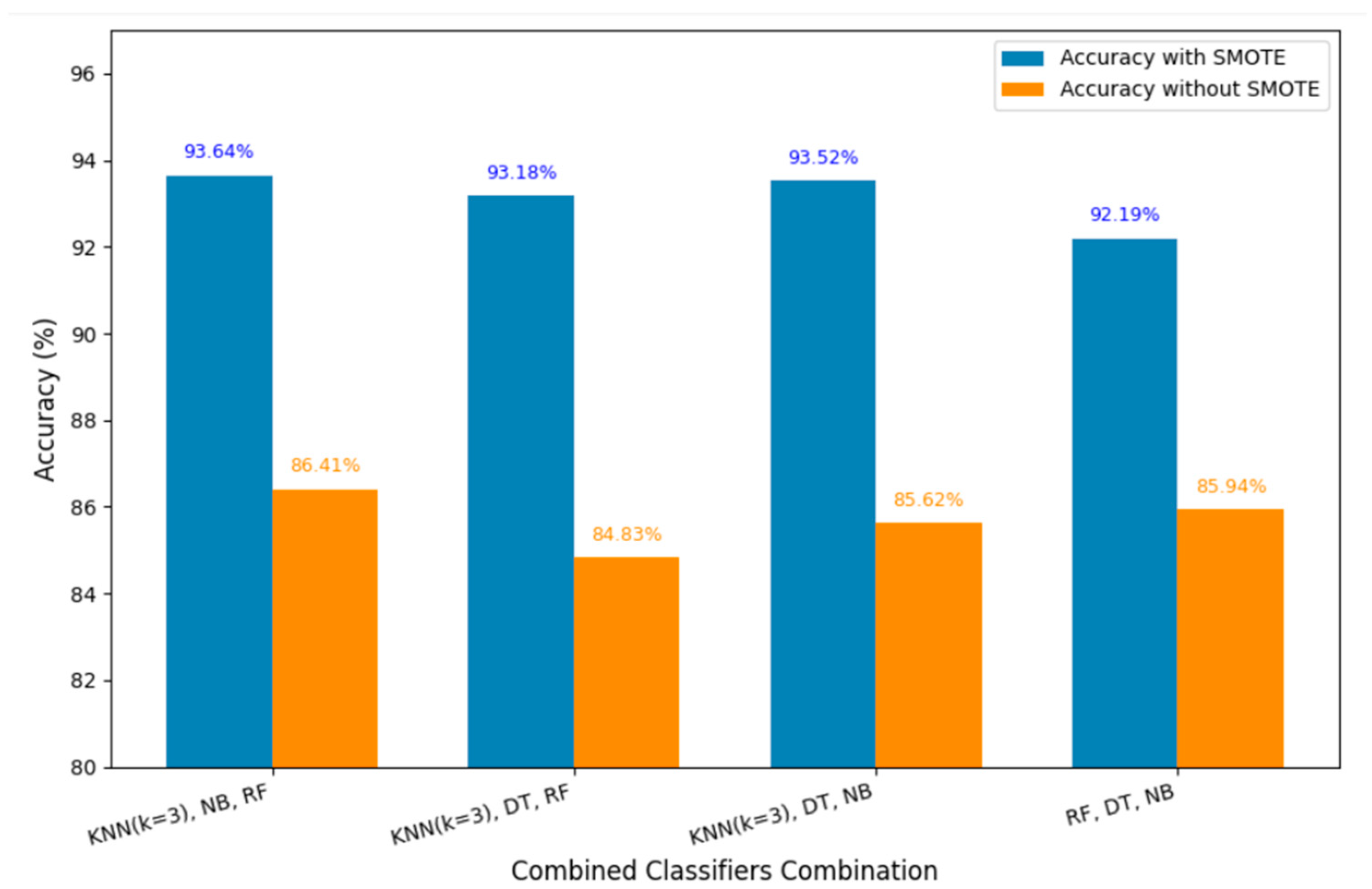

4.4. Accuracy

4.5. Accuracy with Supervised ML Models

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Attribute | Description | Coding/Values |
|---|---|---|
| Age | Age of the patient | Numeric value (years) |
| Duration | Duration of symptoms during the most recent episode | Numeric value (days) |
| Frequency | Monthly frequency of migraine episodes | Numeric value (episodes/month) |
| Location | Pain location during migraine | 0: None, 1: Unilateral, 2: Bilateral |
| Character | Nature of pain experienced | 0: None, 1: Throbbing, 2: Constant |
| Intensity | Intensity of pain | 0: None, 1: Mild, 2: Medium, 3: Severe |
| Nausea | Occurrence of nausea | 0: No, 1: Yes |
| Vomit | Occurrence of vomiting | 0: No, 1: Yes |
| Phonophobia | Sensitivity to sound | 0: No, 1: Yes |
| Photophobia | Sensitivity to light | 0: No, 1: Yes |
| Visual | Count of temporary visual symptoms | Numeric count |
| Sensory | Count of temporary sensory symptoms | Numeric count |
| Dysphasia | Speech coordination impairment | 0: No, 1: Yes |
| Dysarthria | Instances of incoherent speech | 0: No, 1: Yes |
| Vertigo | Experience of dizziness | 0: No, 1: Yes |
| Tinnitus | Presence of ear ringing | 0: No, 1: Yes |
| Hypoacusis | Loss of hearing | 0: No, 1: Yes |
| Diplopia | Occurrence of double vision | 0: No, 1: Yes |
| Visual defect | Simultaneous frontal and nasal field defect in both eyes | 0: No, 1: Yes |
| Ataxia | Muscle control deficiency | 0: No, 1: Yes |
| Conscience | Compromised or altered level of consciousness | 0: No, 1: Yes |
| Paresthesia | Presence of simultaneous bilateral abnormal sensations (tingling, numbness) | 0: No, 1: Yes |
| DPF | Family history of migraine (diagnostic relevance of family background) | 0: No, 1: Yes |
| Type | Migraine classification based on symptoms | 0: Basilar-type aura, 1: Familial hemiplegic migraine, 2: Migraine without aura, 3: Other, 4: Sporadic hemiplegic migraine, 5: Typical aura with migraine, 6: Typical aura without migraine |
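
The coding scheme in the table above can be read as a set of lookup maps. The following is a minimal sketch, assuming records arrive as plain dictionaries keyed by attribute name; the names `TYPE_LABELS`, `INTENSITY_LABELS`, `LOCATION_LABELS` and `decode_record`, as well as the example record, are hypothetical and not taken from the paper.

```python
# Code-to-label maps copied from the attribute table above.
# All identifiers here are illustrative, not from the paper.
TYPE_LABELS = {
    0: "Basilar-type aura",
    1: "Familial hemiplegic migraine",
    2: "Migraine without aura",
    3: "Other",
    4: "Sporadic hemiplegic migraine",
    5: "Typical aura with migraine",
    6: "Typical aura without migraine",
}
INTENSITY_LABELS = {0: "None", 1: "Mild", 2: "Medium", 3: "Severe"}
LOCATION_LABELS = {0: "None", 1: "Unilateral", 2: "Bilateral"}

FIELD_MAPS = {
    "Type": TYPE_LABELS,
    "Intensity": INTENSITY_LABELS,
    "Location": LOCATION_LABELS,
}


def decode_record(record):
    """Return a copy of record with coded fields replaced by their labels."""
    decoded = dict(record)
    for field, labels in FIELD_MAPS.items():
        if field in decoded:
            code = decoded[field]
            if code not in labels:
                # Reject codes that the table does not define.
                raise ValueError(f"{field}: unexpected code {code!r}")
            decoded[field] = labels[code]
    return decoded


# Hypothetical record, invented for illustration only.
example = {"Age": 30, "Intensity": 2, "Location": 1, "Type": 5}
print(decode_record(example))
```

Rejecting undefined codes early is a design choice of this sketch; it surfaces data-entry errors before they reach a classifier.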

| Combined Algorithms | Accuracy without SMOTE | Accuracy with SMOTE |
|---|---|---|
| KNN (k = 3), NB, RF | 86.41% | 93.64% |
| KNN (k = 3), DT, RF | 84.83% | 93.18% |
| KNN (k = 3), DT, NB | 85.62% | 93.52% |
| RF, DT, NB | 85.94% | 92.19% |
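
To make the effect of SMOTE easier to compare across ensembles, the accuracies in the table above can be turned into gains in percentage points. This is a small sketch over the reported numbers only; the variable names are illustrative.

```python
# (accuracy without SMOTE, accuracy with SMOTE) in percent, copied from the table.
results = {
    "KNN (k = 3), NB, RF": (86.41, 93.64),
    "KNN (k = 3), DT, RF": (84.83, 93.18),
    "KNN (k = 3), DT, NB": (85.62, 93.52),
    "RF, DT, NB": (85.94, 92.19),
}

# Gain in percentage points for each ensemble.
gains = {
    name: round(with_smote - without_smote, 2)
    for name, (without_smote, with_smote) in results.items()
}

# Ensemble with the highest reported accuracy after SMOTE.
best = max(results, key=lambda name: results[name][1])

for name, gain in gains.items():
    print(f"{name}: +{gain:.2f} percentage points")
print("Highest accuracy with SMOTE:", best)
```

On the reported figures, every combination gains between roughly 6 and 8.5 percentage points, and the KNN, NB, RF ensemble has the highest accuracy in both settings.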

Confusion matrix of KNN (k = 3), NB, RF without SMOTE (accuracy 86.41%). Rows are estimated classes (Est.); columns are actual classes (Act.).

| | Act. 5 | Act. 2 | Act. 0 | Act. 4 | Act. 1 | Act. 3 | Act. 6 | Class precision |
|---|---|---|---|---|---|---|---|---|
| Est. 5 | 212 | 0 | 6 | 10 | 11 | 2 | 0 | 87.97% |
| Est. 2 | 0 | 58 | 0 | 2 | 2 | 2 | 0 | 90.62% |
| Est. 0 | 1 | 0 | 9 | 0 | 0 | 0 | 0 | 90.00% |
| Est. 4 | 34 | 2 | 2 | 235 | 11 | 0 | 0 | 82.75% |
| Est. 1 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0.00% |
| Est. 3 | 0 | 0 | 0 | 0 | 0 | 13 | 0 | 100.00% |
| Est. 6 | 0 | 0 | 0 | 0 | 0 | 0 | 20 | 100.00% |
| Class recall | 85.83% | 96.67% | 50.00% | 95.14% | 0.00% | 76.47% | 100.00% | |
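
The class precision, class recall and overall accuracy reported with this confusion matrix follow directly from its counts. The sketch below recomputes them, assuming rows are estimated classes and columns are actual classes as labelled in the table; the function and variable names are illustrative.

```python
# Class order as it appears in the table (both rows and columns).
LABELS = [5, 2, 0, 4, 1, 3, 6]

# Rows: estimated class, columns: actual class (counts copied from the table).
MATRIX = [
    [212, 0, 6, 10, 11, 2, 0],
    [0, 58, 0, 2, 2, 2, 0],
    [1, 0, 9, 0, 0, 0, 0],
    [34, 2, 2, 235, 11, 0, 0],
    [0, 0, 1, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 13, 0],
    [0, 0, 0, 0, 0, 0, 20],
]


def ratio(numerator, denominator):
    """Safe division: an empty row or column yields 0.0."""
    return numerator / denominator if denominator else 0.0


def class_metrics(matrix):
    """Return (precision per class, recall per class, overall accuracy)."""
    n = len(matrix)
    # Precision: correct predictions divided by the row total (all estimates of that class).
    precision = [ratio(matrix[i][i], sum(matrix[i])) for i in range(n)]
    # Recall: correct predictions divided by the column total (all actual members of that class).
    recall = [ratio(matrix[i][i], sum(row[i] for row in matrix)) for i in range(n)]
    correct = sum(matrix[i][i] for i in range(n))
    total = sum(sum(row) for row in matrix)
    return precision, recall, ratio(correct, total)


precision, recall, accuracy = class_metrics(MATRIX)
for label, p, r in zip(LABELS, precision, recall):
    print(f"class {label}: precision {p:.2%}, recall {r:.2%}")
print(f"accuracy: {accuracy:.2%}")
```

The diagonal sums to 547 of 633 instances, which reproduces the reported 86.41% accuracy. Class 1 has zero recall because none of its 24 actual instances lies on the diagonal.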

Confusion matrix of KNN (k = 3), NB, RF with SMOTE (accuracy 93.64%). Rows are estimated classes (Est.); columns are actual classes (Act.).

| | Act. 5 | Act. 2 | Act. 0 | Act. 4 | Act. 1 | Act. 3 | Act. 6 | Class precision |
|---|---|---|---|---|---|---|---|---|
| Est. 5 | 202 | 0 | 15 | 3 | 6 | 0 | 0 | 89.38% |
| Est. 2 | 0 | 247 | 17 | 0 | 0 | 5 | 0 | 91.82% |
| Est. 0 | 2 | 0 | 203 | 4 | 3 | 0 | 0 | 95.75% |
| Est. 4 | 28 | 0 | 5 | 240 | 0 | 0 | 0 | 87.91% |
| Est. 1 | 15 | 0 | 7 | 0 | 238 | 0 | 0 | 91.54% |
| Est. 3 | 0 | 0 | 0 | 0 | 0 | 242 | 0 | 100.00% |
| Est. 6 | 0 | 0 | 0 | 0 | 0 | 0 | 247 | 100.00% |
| Class recall | 81.78% | 100.00% | 82.19% | 97.17% | 96.36% | 97.98% | 100.00% | |
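
Summing each column of a confusion matrix gives the number of actual instances per class, which shows how the class distribution differs between the two experiments. The sketch below computes these supports from the two matrices reported for the KNN, NB, RF ensemble. Reading the equal supports as the result of SMOTE oversampling every minority class up to the majority-class size is an inference from the counts, not a statement quoted from the paper; the names are illustrative.

```python
# Class order shared by both matrices (rows: estimated, columns: actual).
LABELS = [5, 2, 0, 4, 1, 3, 6]

WITHOUT_SMOTE = [
    [212, 0, 6, 10, 11, 2, 0],
    [0, 58, 0, 2, 2, 2, 0],
    [1, 0, 9, 0, 0, 0, 0],
    [34, 2, 2, 235, 11, 0, 0],
    [0, 0, 1, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 13, 0],
    [0, 0, 0, 0, 0, 0, 20],
]

WITH_SMOTE = [
    [202, 0, 15, 3, 6, 0, 0],
    [0, 247, 17, 0, 0, 5, 0],
    [2, 0, 203, 4, 3, 0, 0],
    [28, 0, 5, 240, 0, 0, 0],
    [15, 0, 7, 0, 238, 0, 0],
    [0, 0, 0, 0, 0, 242, 0],
    [0, 0, 0, 0, 0, 0, 247],
]


def class_support(matrix):
    """Number of actual instances per class, i.e. the column sums."""
    return [sum(row[j] for row in matrix) for j in range(len(matrix))]


support_before = class_support(WITHOUT_SMOTE)
support_after = class_support(WITH_SMOTE)

for label, before, after in zip(LABELS, support_before, support_after):
    print(f"class {label}: {before} actual instances without SMOTE, {after} with SMOTE")
print("totals:", sum(support_before), "->", sum(support_after))
```

Without SMOTE the supports range from 17 to 247 instances; with SMOTE every class has 247, so the second matrix is evaluated on a balanced set of 1729 instances rather than 633.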


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Butt, M.O.; Mir, A.; Sujjada, A. A Novel Data-Driven Framework for Automated Migraines Classification Using Ensemble Learning. Eng. Proc. 2025, 107, 25. https://doi.org/10.3390/engproc2025107025
