1. Introduction

The primary motivation behind parallel processing is to execute computations faster and more efficiently than with a single-core processor. In a parallel system, multiple processors or cores operate simultaneously to divide and conquer large tasks. Applications that demand such optimized computation include real-time cryptocurrency price prediction [1], autonomous vehicles, weather forecasting systems, and mobile devices [2].

Parallel processing works by splitting tasks into smaller “subtasks” [3], which are then executed concurrently across multiple processing units. This approach maximizes CPU utilization and accelerates data processing. Two models dominate this architecture: single instruction, multiple data (SIMD) and multiple instruction, multiple data (MIMD). Both process large-scale data efficiently across multiple units. However, parallelism is not without challenges: maintaining task coordination, minimizing overheads, and achieving balanced load distribution across processors remain complex [3].
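The idea of splitting a task into subtasks can be sketched in a few lines of Python. The example below is only an illustration, not code from the cited works: the helper names `split_into_chunks` and `parallel_sum` and the default of four workers are assumptions made here. It uses a thread pool to stay portable; in standard CPython builds, CPU-bound work would normally use `ProcessPoolExecutor` instead, which offers the same `map` interface.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(data, n_chunks):
    """Split data into at most n_chunks contiguous, near-equal slices."""
    size = max(1, -(-len(data) // n_chunks))  # ceiling division
    return [data[i:i + size] for i in range(0, len(data), size)]


def parallel_sum(data, n_workers=4):
    """Sum data by handing one chunk (subtask) to each worker."""
    chunks = split_into_chunks(data, n_workers)
    if not chunks:
        return 0
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        # Each chunk is an independent subtask executed concurrently.
        partial_sums = list(pool.map(sum, chunks))
    # Combine step: merge the partial results into the final answer.
    return sum(partial_sums)


if __name__ == "__main__":
    numbers = list(range(1_000_000))
    print(parallel_sum(numbers) == sum(numbers))
```

The split, compute, and combine steps correspond to the coordination and load-distribution concerns mentioned above: uneven chunks or an expensive combine step would reduce the benefit.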

Sequential programs, originally designed for single-core CPUs, cannot easily leverage modern multi-core systems without reengineering. Translators or compilers are generally incapable of automatically converting sequential code into efficient parallel versions. Therefore, understanding how to develop and optimize parallel programs has become increasingly valuable for engineers and researchers alike.

There are three main reasons why parallel processing remains in demand:

- Hardware cost is low, which makes it affordable to build systems with many processors.
- Circuit technology has scaled to the point where complicated circuits can be designed on a single chip.
- The cost of improving sequential performance has risen sharply as single-processor speeds have been pushed higher.

According to Pacheco [3], we have been enjoying the benefits of ever-faster processors for a long time. However, the rate at which the performance of traditional Central Processing Units (CPUs) improves is slowing down due to physical constraints. Sequential programs (those created for a traditional single-core processor) usually cannot take advantage of multiple cores. Translation programs are unlikely to be able to handle the burden of parallelizing serial programs, that is, of turning them into parallel programs that are guaranteed to work correctly on multiple cores. That is why understanding how to implement parallel programs is valuable.

2. Background

Computer architecture specifies how processors run, interact, and organize memory within a fully functional computer. One or two computing models are typically adequate to explain a given computer architecture. Note that computer architecture does not aim to implement an existing computing model; rather, the reverse holds: computing models try to simulate real computer designs. The aim of computer architecture is to maximize the speed and efficiency with which a computer runs programs. In the past, the hardware industry increased CPU clock frequency, so existing implementations automatically obtained higher performance.

Few changes have been made to the architecture since then. Single-CPU microprocessors have reached a performance plateau, which is why computer designers have turned to parallel computers. The most well-known architectures are Graphics Processing Units (GPUs), which are well suited to parallel processing, and CPUs, considered the brain of the computer, which now usually rely on multi-core processors. Unfortunately, simply buying better hardware will not make sequential implementations run faster. To benefit when more processors become available, they need to be redesigned as parallel algorithms. Factors such as processor communication, memory organization, and the kind of instruction/data streams all contribute to a better implementation. In the subsections below, we refer to Flynn’s taxonomy and, more importantly, to the structures used for parallel processing, namely SIMD and MIMD.

Recent works have addressed the integration of parallel programming in mobile devices [2], high-speed media analytics [4], and cloud-native scheduling [5]. These studies highlight the growing relevance of lightweight parallelism models in distributed and constrained environments.

2.1. SIMD and MIMD

Flynn’s taxonomy classifies computer architectures according to two types of information streams: instructions and data. Under this taxonomy, computer architectures fall into four primary structures: single instruction, single data (SISD); single instruction, multiple data (SIMD); multiple instruction, single data (MISD); and multiple instruction, multiple data (MIMD).

Figure 1 shows all four structures and their specifics. In SISD, a single CPU handles tasks sequentially, so no parallel processing is involved; it is used for basic functions (e.g., simple calculations). SIMD sends one instruction to multiple processors, which process different pieces of data in parallel [6]; it is mostly used for parallel and vector processing. MISD uses multiple processors to apply different instructions to a single piece of data, where “redundancy” becomes the problem. MIMD uses multiple processors or cores to execute different instructions on different data, and such systems tend to be very efficient.

Table 1 shows all four architectures with their possible use cases and example technologies where they are deployed [6].

As mentioned previously, SIMD and MIMD are used for parallel processing. Their characteristics are as follows:

SIMD architecture is the parallel model in which all units receive the same instruction from the control unit and then apply it in parallel to multiple data streams. These processors operate on arrays, which makes them well suited to large datasets [6]. The most common applications of SIMD are image processing, machine learning, artificial intelligence, and encryption.

MIMD architecture executes multiple instructions on multiple data streams, with each unit working on its own data, so that several data flows proceed simultaneously. Tasks can therefore run in parallel, which maximizes resource utilization and shortens completion time, no matter how convoluted the tasks might be [6]. Common uses are server clusters, where large volumes of data are handled across a network, and distributed computing, where nodes (which perform the tasks) are connected through a high-speed network.
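The contrast between the two models can be illustrated with a small software analogy in Python. This is only a sketch: real SIMD happens at the hardware level (for example, vector instructions), whereas the code below merely mimics the programming pattern with a thread pool. The function names `simd_style` and `mimd_style` are hypothetical and introduced here for illustration.

```python
from concurrent.futures import ThreadPoolExecutor


def simd_style(values):
    """SIMD-like pattern: one instruction (x * 2) applied to every data element."""
    with ThreadPoolExecutor() as pool:
        return list(pool.map(lambda x: x * 2, values))


def mimd_style(tasks):
    """MIMD-like pattern: independent (function, data) pairs run side by side."""
    with ThreadPoolExecutor() as pool:
        futures = [pool.submit(func, data) for func, data in tasks]
        # Results are collected in submission order.
        return [future.result() for future in futures]


if __name__ == "__main__":
    print(simd_style([1, 2, 3]))
    print(mimd_style([(sum, [1, 2, 3]), (max, [4, 9, 2]), (sorted, [3, 1, 2])]))
```

In the first function every worker performs the same operation on different data; in the second, each worker runs a different operation on its own data, which is the defining feature of MIMD.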

2.2. Parallel Programming—Real-Time Systems

Parallel programming in real-time systems is important but also challenging. Depending on how strictly deadlines must be met, systems are categorized as hard real time (where missing a deadline means failure) or soft real time (where a late result is still acceptable). The areas of usage range from industrial automation to the Internet of Things.

According to Pinho [7], parallel programming in real-time systems can follow two approaches: using the Open Multi-Processing (OpenMP) API and its tasking model, or using the Ada programming language. OpenMP is used in high-performance computing, for example in video processing for autonomous vehicles. Version 3.0 improved the handling of workloads that do not fit into loops or regular data structures. Version 4.0 added the ability to define dependencies between tasks, which is crucial in real-time systems. The Ada 2022 language supports hard real-time systems. It does so by breaking loops into chunks and reducing variables using the keyword parallel [7]. In other words, it does what OpenMP does but in a more controlled way.

Parallel processing applications span a wide range of domains. In scientific computing, parallel processing is used for climate modeling and astrophysical simulations. In artificial intelligence (AI), it is used in models such as convolutional neural networks (CNNs), which handle large volumes of data [8]. Another area is multimedia processing, where it handles tasks such as video encoding/decoding and image transformation [6].

For example, Ada’s Ravenscar profile is widely used in aerospace applications, ensuring timing guarantees and safe concurrency. OpenMP is used in autonomous driving software to parallelize video frame analysis across multiple cores.

3. Methodology

In this section, we illustrate a parallel merge-sort algorithm and compare its performance to a traditional serial merge-sort algorithm. Both algorithms were implemented in Python, with pseudocode representations provided for clarity. The dataset used was randomly generated and contained 1 million integer elements. Importantly, both algorithms were tested under identical conditions to ensure a fair comparison.

The test environment consisted of a machine running Windows 10, with an Intel Core i5-1135G7 quad-core processor, 8 GB of RAM, and Python 3.11. Parallel execution was managed using Python’s multiprocessing library, with inter-process communication handled via pipes.
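A benchmark of this kind can be sketched as follows. The harness below is an assumption about how such a measurement could be set up, not the authors' actual script: the seed, the value range, the number of repeats, and the names `make_dataset` and `time_sort` are all hypothetical. It fixes the random seed so that every algorithm sees the same input and copies the data before each run so that earlier runs cannot influence later ones.

```python
import random
import time


def make_dataset(n, seed=42):
    """Generate n pseudo-random integers; a fixed seed keeps runs comparable."""
    rng = random.Random(seed)
    return [rng.randint(0, 1_000_000) for _ in range(n)]


def time_sort(sort_fn, data, repeats=3):
    """Return the best wall-clock time (in seconds) of sort_fn over several runs."""
    best = float("inf")
    for _ in range(repeats):
        sample = list(data)  # fresh copy so every run starts from the same input
        start = time.perf_counter()
        sort_fn(sample)
        best = min(best, time.perf_counter() - start)
    return best


if __name__ == "__main__":
    data = make_dataset(1_000_000)
    # The built-in sorted() stands in for the algorithm under test.
    print(f"sorted(): {time_sort(sorted, data):.3f} s")
```

Any sorting function that accepts a list can be passed as `sort_fn`, which makes it straightforward to time a serial and a parallel implementation on identical data.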

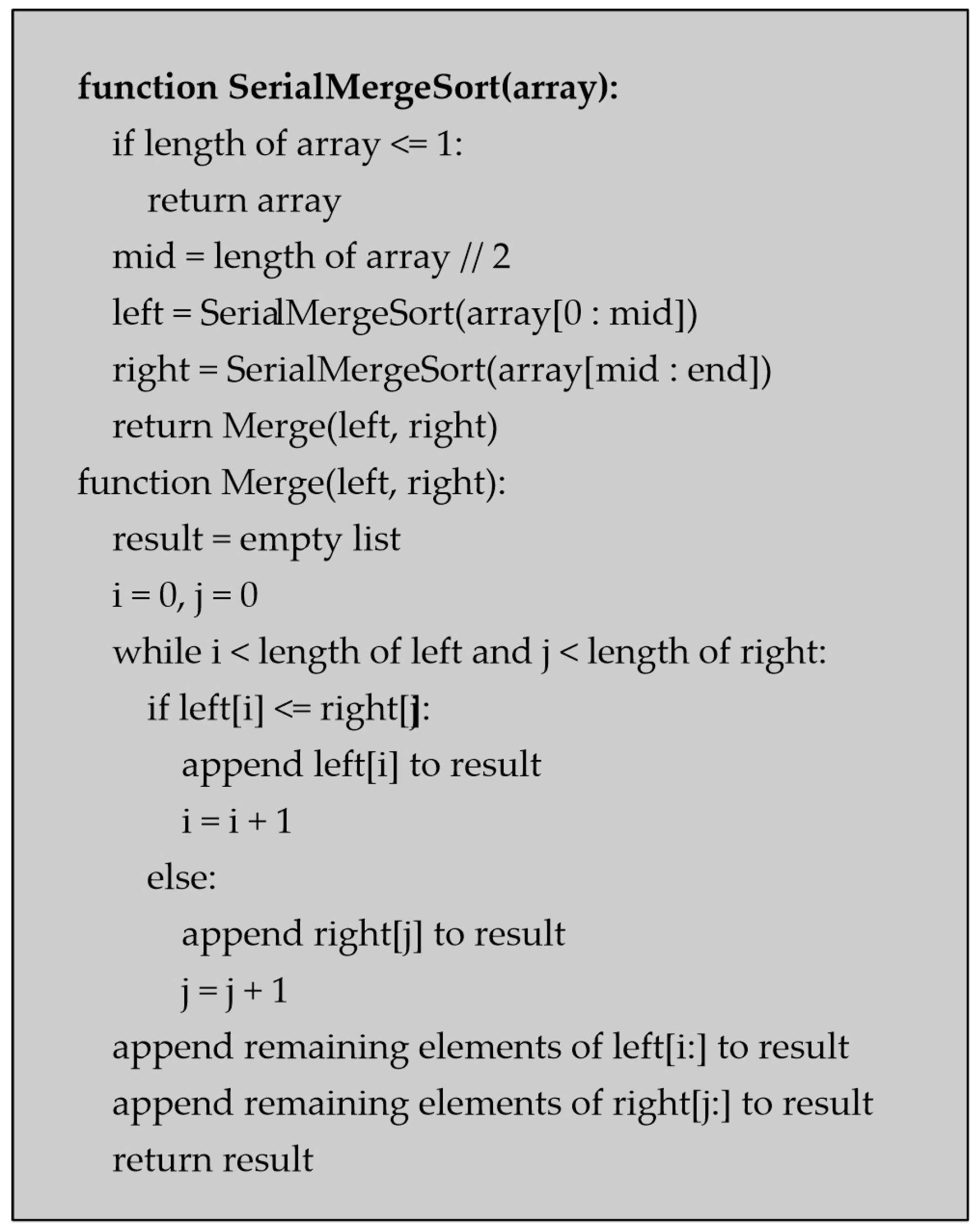

The serial version (Figure 2) uses a standard divide-and-conquer approach. If the input array is not already sorted, it is split into two halves (left and right), each of which is recursively sorted and then merged using a two-pointer method. The merge operation sequentially compares and combines the subarrays into a sorted result.
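For readers without access to the figure, a serial merge sort along these lines might look as follows in Python. This is a minimal sketch rather than the exact code used in the experiments; in particular, it assumes the usual base case (a list of zero or one elements is already sorted), and the function names are chosen here for illustration.

```python
def merge(left, right):
    """Merge two sorted lists into one sorted list using two pointers."""
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:  # <= keeps equal elements in their original order
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    # At most one of the two lists still has elements left; append them.
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


def merge_sort(data):
    """Recursively sort a list by splitting it in half and merging the results."""
    if len(data) <= 1:  # assumed base case: nothing left to split
        return list(data)
    mid = len(data) // 2
    return merge(merge_sort(data[:mid]), merge_sort(data[mid:]))


if __name__ == "__main__":
    print(merge_sort([5, 2, 9, 1, 5, 6]))
```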

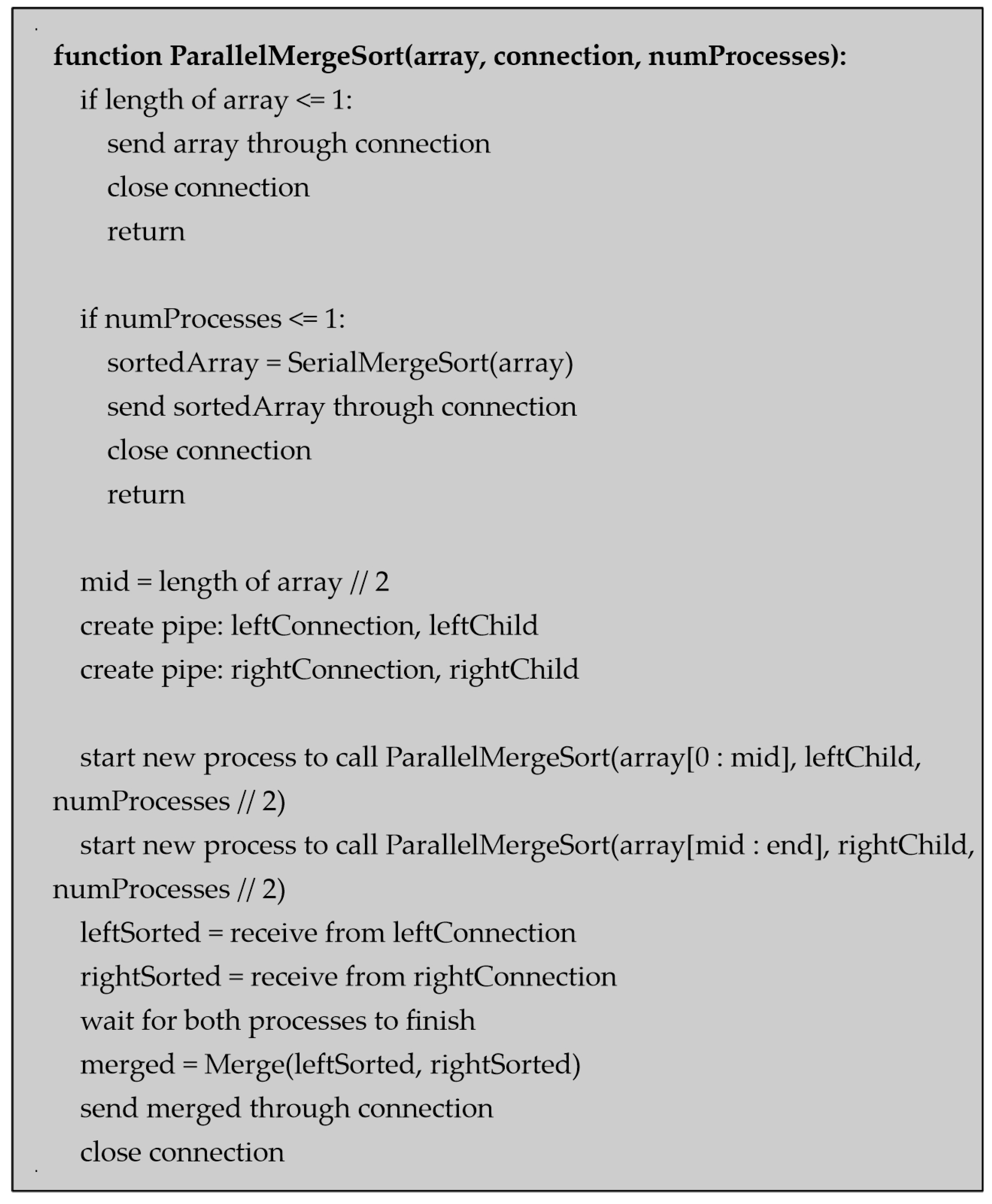

The parallel version (Figure 3) also relies on the divide-and-conquer strategy, but spawns two new processes at each recursive level, allowing each half of the array to be sorted concurrently. Once sorting is complete, the results from each process are merged. Communication is achieved through pipes, and care is taken to halve the number of processes with each recursion step to prevent resource exhaustion.
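The scheme described above can be approximated with Python’s `multiprocessing` module. The sketch below is one plausible reading of the description, not the authors’ implementation: the parameter `n_procs`, the helper `_sort_worker`, the use of the built-in `sorted` as the serial fallback, and `heapq.merge` for the final merge are all assumptions made for brevity.

```python
import heapq
from multiprocessing import Pipe, Process


def _sort_worker(data, n_procs, conn):
    """Child-process entry point: sort data and send the result to the parent."""
    conn.send(parallel_merge_sort(data, n_procs))
    conn.close()


def parallel_merge_sort(data, n_procs):
    """Sort data, spawning two child processes per level while n_procs > 1."""
    if n_procs <= 1 or len(data) <= 1:
        return sorted(data)  # serial fallback once the process budget is used up
    mid = len(data) // 2
    children = []
    for half in (data[:mid], data[mid:]):
        recv_conn, send_conn = Pipe(duplex=False)
        # Halving n_procs at every level bounds the total number of processes.
        proc = Process(target=_sort_worker, args=(half, n_procs // 2, send_conn))
        proc.start()
        send_conn.close()  # the parent only needs the receiving end
        children.append((proc, recv_conn))
    # Receive before joining: a child blocked on a full pipe would never exit.
    halves = [conn.recv() for _, conn in children]
    for proc, _ in children:
        proc.join()
    return list(heapq.merge(*halves))


if __name__ == "__main__":
    import random

    sample = [random.randint(0, 10**6) for _ in range(200_000)]
    print(parallel_merge_sort(sample, 4) == sorted(sample))
```

The `if __name__ == "__main__":` guard matters on Windows, where child processes are started by re-importing the main module. Sending each sorted half back through a pipe involves serializing the data, which is one source of the overhead discussed in the next section.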

4. Discussion

Parallel processing offers gains in performance, scalability, and efficiency. The domains in which it has expanded range from big data analytics to artificial intelligence. Advancements in parallel processing are reflected in the release of new paradigms, for example, heterogeneous computing, quantum computing, and neuromorphic computing. Their aim is to satisfy the need for efficiency in processing large sets of data and carrying out complex computations [9]. Despite these advancements, challenges remain in achieving load balance and managing resources. Hussain et al. proposed the DE-RALBA algorithm to enhance load balancing while improving performance and reducing job execution time [5]. In addition, frameworks like OpenMP, MPI, and CUDA are being used to ensure ease of use. Despite these gains, parallel algorithms require careful management of data synchronization. In systems like parallel databases or GPU workloads, improper coordination can lead to race conditions or inefficient cache usage.
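A race condition of the kind mentioned above arises whenever several workers update shared state without coordination. The following Python sketch, with the hypothetical names `Counter` and `run_workers`, shows the standard remedy: guarding the read-modify-write step with a lock. Without the lock, the increment may interleave between threads and updates can be lost.

```python
import threading


class Counter:
    """A shared counter whose increments are protected by a lock."""

    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        with self._lock:  # only one thread at a time may read, add, and write back
            self.value += 1


def run_workers(counter, n_threads=8, n_increments=10_000):
    """Increment the shared counter from several threads and return its value."""
    def work():
        for _ in range(n_increments):
            counter.increment()

    threads = [threading.Thread(target=work) for _ in range(n_threads)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return counter.value


if __name__ == "__main__":
    print(run_workers(Counter()))
```

The lock guarantees correctness but serializes the critical section, which is exactly the synchronization overhead that parallel designs try to keep small.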

Table 2 illustrates the execution time of merge-sort using both serial and parallel implementations across varying input sizes (from 100,000 to 1,000,000 elements) and processor configurations (one, two, four, and eight cores). As expected, the serial version exhibits a linear increase in execution time as the dataset grows, taking approximately 2.6 s for 1 million elements. The parallel version using a single core is slightly slower than its serial counterpart due to the additional overhead involved in managing process creation and inter-process communication, which becomes evident especially on smaller datasets.

As we move from two to eight cores, the performance improvement becomes increasingly significant. For instance, on a dataset of 1 million elements, execution time falls from 2.6 s (serial) to 0.90 s (four cores) and further to 0.61 s (eight cores), a reduction in execution time of over 60% in both cases (speedups of roughly 2.9× and 4.3×, respectively). This trend is consistent across all input sizes but becomes more pronounced with larger datasets, as the benefits of parallel processing outweigh coordination overheads. Smaller datasets (e.g., 100K) still see performance gains, though they are less dramatic because the overhead is larger relative to the workload. These results validate the practical effectiveness of divide-and-conquer parallelism, especially in CPU-bound tasks such as sorting. The efficiency gains highlight the scalability of parallel approaches, particularly in multi-core environments. However, the data also suggest diminishing returns beyond four cores for moderately sized datasets, pointing to the importance of balancing parallelization depth with workload size and system capabilities. Future implementations may explore dynamic core allocation or hybrid approaches (e.g., CPU-GPU offloading) to further enhance performance.
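The timings quoted above for 1 million elements can be turned into the usual parallel performance metrics. The short Python sketch below uses the standard definitions (speedup as serial time divided by parallel time, efficiency as speedup divided by the number of cores); the function names are chosen here for illustration.

```python
def speedup(t_serial, t_parallel):
    """How many times faster the parallel run is than the serial run."""
    return t_serial / t_parallel


def efficiency(t_serial, t_parallel, cores):
    """Speedup per core; 1.0 would mean perfect linear scaling."""
    return speedup(t_serial, t_parallel) / cores


def time_reduction(t_serial, t_parallel):
    """Fraction of the serial execution time that was saved."""
    return 1 - t_parallel / t_serial


if __name__ == "__main__":
    T_SERIAL = 2.6  # seconds, serial run on 1 million elements
    for cores, t_par in ((4, 0.90), (8, 0.61)):
        print(
            f"{cores} cores: speedup {speedup(T_SERIAL, t_par):.2f}, "
            f"efficiency {efficiency(T_SERIAL, t_par, cores):.2f}, "
            f"time saved {time_reduction(T_SERIAL, t_par):.0%}"
        )
```

With these inputs the speedup is about 2.9 on four cores and about 4.3 on eight, while efficiency drops from roughly 0.72 to roughly 0.53, which quantifies the diminishing returns noted in the text.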

Table 2 compares the execution times of five dataset sizes across varying numbers of CPU cores.

Table 3 shows that parallel processing only improves performance significantly for large datasets. For small (10K) and medium (100K) data sizes, increasing the number of cores leads to little or no performance gain and, in some cases, even increases execution time due to overhead from managing parallel tasks. In contrast, the large dataset (1000K) demonstrates clear benefits from parallelism, with average execution time decreasing from 2.4 s on one core to 0.94 s on four cores. This indicates that parallelization is most effective when the workload is substantial enough to outweigh the overhead it introduces.
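One way to quantify how much of the run does not benefit from extra cores is the Karp-Flatt metric, which estimates the effective serial fraction from a measured speedup; Amdahl’s law then gives the speedup implied by that fraction. The Python sketch below applies both to the large-dataset figures quoted above (2.4 s on one core, 0.94 s on four cores). This analysis is an illustration added here, not part of the original experiments, and the function names are hypothetical.

```python
def karp_flatt(measured_speedup, cores):
    """Experimentally determined serial fraction (Karp-Flatt metric)."""
    return (1 / measured_speedup - 1 / cores) / (1 - 1 / cores)


def amdahl_speedup(serial_fraction, cores):
    """Speedup predicted by Amdahl's law for a given serial fraction."""
    return 1 / (serial_fraction + (1 - serial_fraction) / cores)


if __name__ == "__main__":
    measured = 2.4 / 0.94  # one-core time divided by four-core time
    fraction = karp_flatt(measured, 4)
    print(f"measured speedup on 4 cores: {measured:.2f}")
    print(f"estimated serial fraction:   {fraction:.2f}")
    print(f"Amdahl prediction, 8 cores:  {amdahl_speedup(fraction, 8):.2f}")
```

For these figures the estimated serial fraction is about 0.19, meaning that roughly a fifth of the run behaves as if it were not parallelized (process start-up, pipe communication, and the final merge are likely contributors). For smaller datasets this share grows, which is consistent with the lack of gains reported for the 10K and 100K cases.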

5. Conclusions

Parallel processing is shifting towards heterogeneous and distributed systems. This shift is supported by algorithms that provide load balancing and advanced scheduling to optimize performance and increase scalability. Alongside these advancements, several challenges in parallelism call for continued improvement, such as workload imbalance, synchronization overhead, and programming complexity.

It is important to emphasize the role machine learning can play in further optimizing parallel systems. Intelligent algorithms can predict workload patterns and automate complex scheduling, which improves efficiency and responsiveness.

In particular, exploring parallel execution models in edge computing devices (e.g., Raspberry Pi clusters) could bring real-time analytics closer to IoT endpoints. Furthermore, cloud platforms are beginning to leverage parallelism via serverless and GPU-based computing environments, enabling scalable deployment of AI models.

Looking ahead, future research should focus on user-friendly and energy-efficient parallel processing frameworks. Special research focus should be directed even more at architectures like quantum and neuromorphic systems.

Author Contributions

Conceptualization, M.S. and E.D.; methodology, M.S. and E.D.; software, M.S. and E.D.; validation, M.S. and E.D.; formal analysis, M.S. and E.D.; investigation, M.S. and E.D.; resources, M.S. and E.D.; data curation, M.S. and E.D.; writing—original draft preparation, M.S. and E.D.; writing—review and editing, M.S. and E.D.; visualization, M.S. and E.D.; supervision, M.S. and E.D.; project administration, M.S. and E.D.; funding acquisition, M.S. and E.D. All authors contributed equally to this work. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Datasets are available upon request from the authors.

Conflicts of Interest

The authors declare no conflicts of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
| --- | --- |
| SIMD | Single Instruction, Multiple Data |
| SISD | Single Instruction, Single Data |
| MIMD | Multiple Instruction, Multiple Data |
| MISD | Multiple Instruction, Single Data |
| CPU | Central Processing Unit |
| GPU | Graphics Processing Unit |
| OpenMP | Open Multi-Processing |
| MPI | Message Passing Interface |
| CUDA | Compute Unified Device Architecture |
| IoT | Internet of Things |
| AI | Artificial Intelligence |
| DE-RALBA | Dynamic Enhanced Resource-Aware Load Balancing Algorithm |

References

1. Premarathne, A.; Samarakody, R.; Nirmalathas, A. Real-time cryptocurrency price prediction by exploiting IOT concept and Beyond: Cloud computing, Data Parallelism and deep learning. Int. J. Adv. Comput. Sci. Appl. 2020, 11.
2. Afonso, S.; Gómez-Cárdenes, Ó.; Expósito, P.; Blanco, V.; Almeida, F. Parallel programming in mobile devices with FancyJCL. J. Supercomput. 2024, 80, 12891–12909.
3. Pacheco, P.; Malensek, M. An Introduction to Parallel Programming; Morgan Kaufmann; Elsevier: Cambridge, MA, USA, 2022.
4. Can Cakmak, M.; Agarwal, N. High-Speed Transcript Collection on Multimedia Platforms: Advancing Social Media Research through Parallel Processing. In Proceedings of the 2024 IEEE International Parallel and Distributed Processing Symposium Workshops (IPDPSW), San Francisco, CA, USA, 27–31 May 2024.
5. Hussain, A.; Aleem, M.; Ur Rehman, A.; Arshad, U. DE-RALBA: Dynamic enhanced resource aware load balancing algorithm for cloud. PeerJ Comput. Sci. 2025, 11, e2739.
6. Badshah, A. Available online: https://afzalbadshah.medium.com/parallel-programming-models-simd-and-mimd-a66372faa0d2 (accessed on 14 May 2024).
7. Pinho, L.M. Real-Time Parallel Programming: State of Play and Open Issues. arXiv 2024, arXiv:2303.11018.
8. Fegaras, L.; Noor, M.H. Translation of Array-Based Loops to Distributed. arXiv 2020, arXiv:2003.09769.
9. Dai, F.; Hossain, M.A.; Wang, Y. State of the Art in Parallel and Distributed Systems: Emerging Trends and Challenges. Electronics 2025, 14, 677.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).