1. Introduction

The 17 Sustainable Development Goals (SDGs) of the United Nations serve as the guide and benchmark for sustainable development in all spheres of modern society and for securing a fair life for both current and future generations. To achieve SDG 4, “Quality Education”, our society must “ensure inclusive and equitable quality education for all” [1]. Ensuring quality and easily accessible education at all educational levels requires solving a number of administrative, methodological, and technical challenges. Achieving inclusive and sustainable education entails removing barriers to education for all, which in turn requires overcoming further problems.

The purpose of this article is to present an approach for achieving quality, accessible, inclusive, and sustainable education, together with a software tool that overcomes the language localization (i.e., the fixed source language) of educational resources and thereby supports the attainment of sustainable quality education. On the one hand, ensuring quality education makes it far more meaningful to draw on educational resources from around the world than to limit the search to resources in the learners’ own language. The current generation of students prefers video-based learning materials, which are abundant on the internet. On the other hand, a large share of quality resources cannot be used because of language barriers, either because learners do not know English or because the resources are in a language that is not widely spoken. For example, in Bulgaria, according to the Bulgarian National Statistical Institute [2], only 37.8% of people with secondary education (some of whom would continue to university education) use at least one foreign language, and this figure for all educational levels is 47.9%.

The article presents an approach based on the use of reusable learning objects (RLOs) and a software tool, AI Subtitle Translator, which offers a specific solution for achieving the aforementioned goal. Here, RLOs refer to educational videos with multimedia content used as learning resources. AI Subtitle Translator translates subtitles, making an educational resource such as a video reusable in different languages: RLOs in any given language can be repurposed by translating their subtitles for learners in their native or other target languages. To this end, we created the approach and developed AI Subtitle Translator for specialized subtitle translation of educational video lectures, with the aim of turning a monolingual lecture into a multilingual one. The tool is integrated into a web environment, uses AI models from OpenAI, and automates both subtitle processing and translation.

To assess the quality of the product and the complexity of its development, and to compare it with alternative solutions, we briefly review the technologies and tools that leading translation providers offer for specialized subtitle translation. Specifically, we discuss providers offering API integration services, such as Google Translate, DeepL, Microsoft Translator, and OpenAI.

Section 2 briefly outlines the current state of automated text translation from the perspective of AI integration. Section 3 examines the conceptual model and advantages of the implemented approach, while Section 4 discusses the workflow, architecture, implementation, and operational scenario of the software. Section 5 discusses the approach and the applications of the AI Subtitle Translator tool. The paper ends with conclusions in Section 6.

2. Application of AI in Specialized Translation

Translation tools have undergone significant changes in recent years due to the use of software applications and artificial intelligence [3,4,5]. The continuous development of technologies in this field supports and optimizes translation processes. According to recent studies, software translation has reached very high levels of automation. However, specialized translation is not fully automated and still relies on human post-editing [6,7]. The term “specialized translation” has different meanings depending on the context in which it is used. In our work, specialized translation refers to the translation of subtitles in various standards, where the specifics are both technical, due to the specialized symbols in the subtitle file, and linguistic, due to the semantic and contextual content of the text. The emerging trend in translation is a reduced role for the human translator [8]. Modern practice in specialized translation involves software applications, particularly those based on artificial intelligence models. While the benefits of using software in translation services have been well known for years, the advantages of integrating artificial intelligence into translation software are still being explored. However, given that AI-based software, which predicts events and states, increasingly outperforms software based on explicit program logic, the trend in translation can be expected to move toward ever greater integration of AI into software applications.

Computer-Assisted Translation (CAT) tools are software platforms that assist translators by automating part of the translation process. CAT tools have both advantages and disadvantages [9,10]. Their strengths show in situations where translation memory is applied, i.e., where previously translated segments are reused. However, although these tools support subtitle file formats, the processing of such files is not automated, which makes the work labor-intensive for the translator and, in practice, inefficient. A major drawback of popular software in the field, such as SDL Trados Studio, memoQ, Smartcat, OmegaT, Wordfast, and others, is the need for additional processing of the translated file to synchronize the subtitles with the audio in the video. This limitation makes them impractical for subtitle translation.

With the advancement of neural networks and Natural Language Processing (NLP), machine translation has become much more accurate [11,12,13,14,15], especially for text of a general nature. Over the past decade, Neural Machine Translation (NMT) has emerged as the leading translation technology [7,16]. This type of translation is based on artificial neural networks and deep learning; examples include Google Translate (since 2016), DeepL, Microsoft Translator, and others. NMT aims at a more precise understanding of context, combined with the translation of whole phrases, sentences, and passages [17,18]. For subtitle translation, however, both client-side software built on the APIs of Google Translate, DeepL, and Microsoft Translator and the freely accessible user services of these providers yield noticeably less accurate results than OpenAI’s text-processing models.

In our experience, the superiority of translations produced with OpenAI models through API access is currently clear. In our work, applying the proprietary tool AI Subtitle Translator discussed in this article to video content covering various topics, with a total duration of several hundred hours, has drastically reduced the need for human editing: the translator’s editorial work, measured in person-hours, decreased more than tenfold after OpenAI models were integrated for subtitle translation. Moreover, the software is considerably easier to develop with the OpenAI text-model API than with the APIs of Google Translate, DeepL, and Microsoft Translator. This reduced complexity comes from the ability to format the subtitle timing flexibly without programming complex specialized logic into the software. In AI-based subtitle translation tools, the basic timing formatting required by specific subtitle standards, such as *.srt and *.vtt, is set through a text template rather than through programming logic.
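To illustrate the point about templates, the following minimal Python sketch renders the same cues as *.srt or *.vtt purely by switching a text template. It is an illustration under stated assumptions, not the tool’s code: in AI Subtitle Translator the template is part of a GPT prompt, whereas here a plain string template is filled in directly, and the names `FORMAT_TEMPLATES`, `fmt_time`, and `render` are hypothetical. In this sketch the two formats differ only in the `WEBVTT` header, the numeric cue index used by SubRip, and the millisecond separator (comma in *.srt, period in *.vtt).

```python
# Hypothetical per-format text templates: switching the output standard
# means switching the template, not rewriting formatting logic.
FORMAT_TEMPLATES = {
    "srt": {"header": "", "cue": "{index}\n{start} --> {end}\n{text}\n", "ms_sep": ","},
    "vtt": {"header": "WEBVTT\n\n", "cue": "{start} --> {end}\n{text}\n", "ms_sep": "."},
}


def fmt_time(ms: int, ms_sep: str) -> str:
    """Format a time in milliseconds as HH:MM:SS<sep>mmm."""
    hours, rem = divmod(ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    seconds, millis = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}{ms_sep}{millis:03d}"


def render(cues: list[tuple[int, int, str]], fmt: str) -> str:
    """Render (start_ms, end_ms, text) cues with the template for `fmt`."""
    tpl = FORMAT_TEMPLATES[fmt]
    blocks = [
        # str.format ignores unused keywords, so the VTT template simply
        # does not print the index.
        tpl["cue"].format(
            index=i,
            start=fmt_time(start, tpl["ms_sep"]),
            end=fmt_time(end, tpl["ms_sep"]),
            text=text,
        )
        for i, (start, end, text) in enumerate(cues, start=1)
    ]
    # Consecutive cues are separated by a blank line in both standards.
    return tpl["header"] + "\n".join(blocks)
```

For example, `render([(1000, 3500, "Hello")], "vtt")` yields a `WEBVTT` header followed by the cue `00:00:01.000 --> 00:00:03.500`. Supporting another standard would mean adding a dictionary entry rather than new control flow.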

In terms of linguistic quality, the comparison between translations produced by OpenAI’s AI model and by the API services of Google Translate, DeepL, and Microsoft Translator also favors the AI model. The reason is that OpenAI uses a Large Language Model (LLM) [19,20,21], which better captures the meaning of complex linguistic structures and generates translations with higher accuracy. As [22] states, even for the earlier GPT-3: “GPT-3 achieves strong performance on many NLP datasets, including translation, question-answering, and cloze tasks, as well as several tasks that require on-the-fly reasoning or domain adaptation, such as unscrambling words, using a novel word in a sentence, or performing 3-digit arithmetic” [22].

For the reasons above, to integrate automated subtitle translation into reusable learning objects (RLOs) such as educational videos with multimedia content, we developed a web application based on OpenAI’s GPT-4o model and later migrated it to the newer GPT-4.5. We expect even more reliable performance from the next version, GPT-5.

3. Learning Approach Based on RLOs

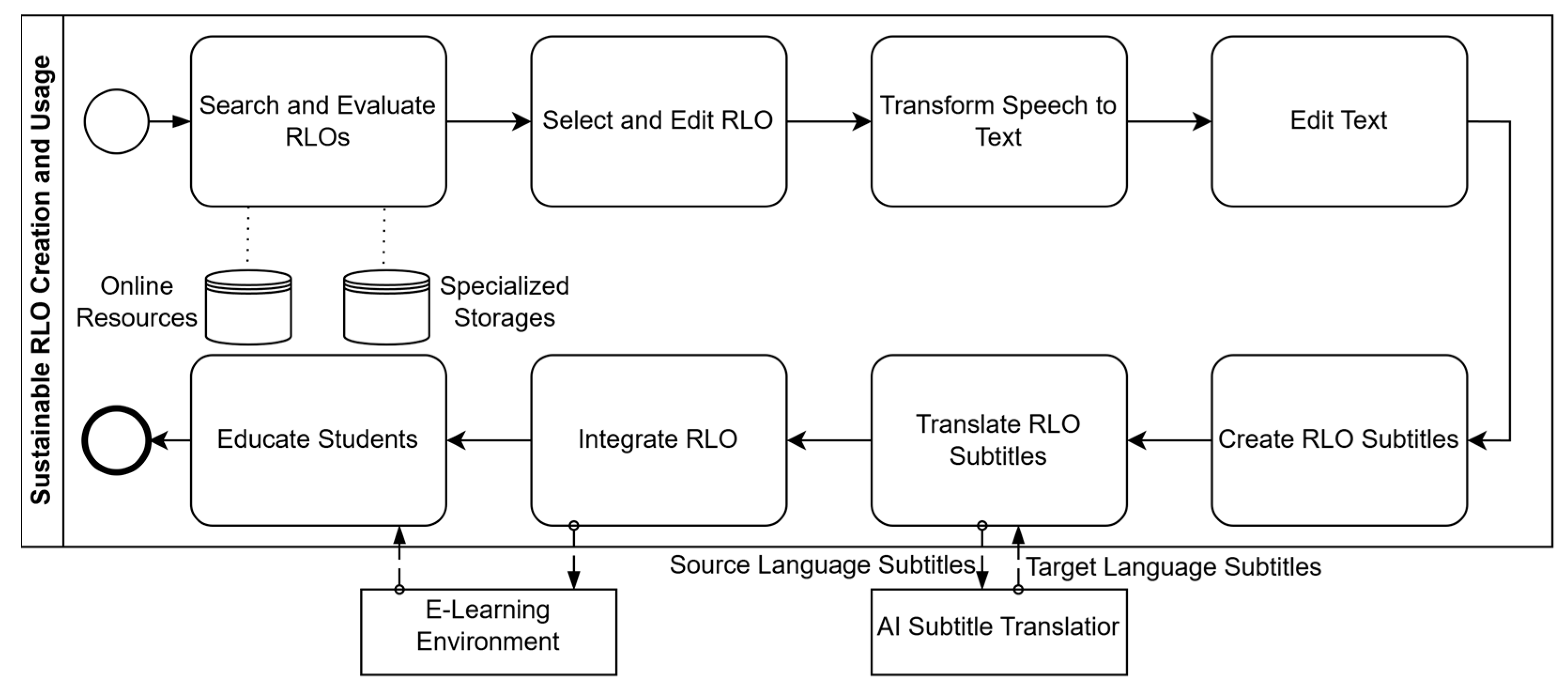

The article presents an approach to education based on discovering quality learning resources, regardless of their language, and integrating them into courses and educational processes, irrespective of the learners’ language proficiency. The workflow of the approach is shown in Figure 1 below. The approach uses the concept of reusable learning objects: the instructor finds existing educational video content, evaluates its quality, edits and adapts it to the educational needs, and then provides it to the learners. Learning resources (RLOs) in the source language are translated into the learners’ target language to overcome the language barriers in the video content. The research focuses on video resources for the reasons given in Section 1. Video resources are linguistically localized for specific learners by translating their subtitles, regardless of the source and target languages. We define language localization as the process of linguistically adapting content by integrating subtitles based on the user’s preferences, not necessarily linked to their geographic region.

Instructors can find resources, regardless of language, online or in a specialized repository. These resources are then selected, evaluated, and, if necessary, edited (e.g., by cutting parts of the video or adding animations and references). The primary subtitles are created by automatically transcribing the audio content in the source language into text. The resulting text is edited for greater accuracy and clarity, and the primary subtitles are then created and synchronized with the video resources. Next, the subtitles are translated into the target language using the developed software tool. Finally, the localized RLOs are integrated into an e-course within an e-learning environment or provided directly to the learners.

The positive aspects for students are:

access to the most current quality materials (accessibility);

overcoming language barriers in education (openness);

content understandable to learners outside the majority group, e.g., international students (inclusiveness).

The positive aspects for the teachers are:

immediate access to the most current quality materials available worldwide (quality);

independence from the resource language (language independence);

rapid development of educational content (rapidity);

reusability of existing RLOs (reusability).

4. Software Application AI Subtitle Translator

The approach under consideration for overcoming language barriers can be fully automated, from the selection and editing of RLOs to the translation and integration of subtitles in the target language (see Figure 2). At present, the team at the Human–Computer Interaction and Simulations Laboratory (HSL) and colleagues from the Faculty of Mathematics and Informatics at Plovdiv University are developing such a system, consisting of several software components. In this article, we discuss the software component AI Subtitle Translator for the automated translation of subtitles. It can be used on its own, without technical dependence on the other components of the system.

The subtitle translation application, which overcomes the language localization of educational resources, uses OpenAI’s AI models and is integrated into a web environment. The input to the application consists of existing or specifically created subtitles in the *.srt and *.vtt formats. The output of the translation process is one or more subtitle files in the learners’ target languages. The application offers translation for the wide range of languages supported by the GPT-4.5 model. The software component uses a client-server architecture in which the web interface, developed specifically for the application, is accessed via a browser. The interface provides simple controls for uploading subtitle files (*.srt and *.vtt), selecting the source language of the subtitles, and choosing the target languages into which the subtitles should be translated.
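Since the input may be either *.srt or *.vtt, the server has to split it into cues before any text is sent to a model. A minimal Python sketch of such a parser follows; the `Cue` class and `parse_subtitles` function are hypothetical names, and the sketch assumes well-formed files in which cues are separated by blank lines. It keeps timestamps verbatim and drops SubRip indices, WebVTT cue identifiers and cue settings, and the `WEBVTT` header block.

```python
import re
from dataclasses import dataclass

# Timing line of both formats, e.g. "00:00:01,000 --> 00:00:03,500" (SRT)
# or "00:01.000 --> 00:03.500 align:start" (VTT, with optional cue settings).
TIMING_RE = re.compile(r"^\s*(\S+)\s+-->\s+(\S+)(.*)$")


@dataclass
class Cue:
    start: str
    end: str
    text: list[str]  # one entry per displayed subtitle line


def parse_subtitles(content: str) -> list[Cue]:
    """Parse *.srt or *.vtt text into cues, keeping timestamps verbatim."""
    content = content.replace("\r\n", "\n").lstrip("\ufeff")
    cues: list[Cue] = []
    for block in re.split(r"\n\s*\n", content.strip()):
        lines = block.split("\n")
        # Locate the timing line; lines before it (SRT index, VTT cue id)
        # are dropped, and blocks without one (e.g. "WEBVTT") are skipped.
        timing_idx = next((i for i, line in enumerate(lines) if "-->" in line), None)
        if timing_idx is None:
            continue
        match = TIMING_RE.match(lines[timing_idx])
        if match is None:
            continue
        # group(3) holds VTT cue settings, which this sketch discards.
        cues.append(Cue(match.group(1), match.group(2), lines[timing_idx + 1:]))
    return cues
```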

The main functionality of the application is implemented in four components:

Web user interface;

Text translation module with API integration with GPT-4o and GPT-4.5;

Collection of GPT prompt templates;

Program logic for processing of subtitles, text and GPT prompts.
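The collection of GPT prompt templates can be pictured as a small set of parameterized strings. In the sketch below, the prompt wording, `PROMPT_TEMPLATES`, and `build_messages` are assumptions made for illustration, since the article does not give the tool’s actual prompts. The output uses the role/content message structure accepted by OpenAI’s Chat Completions API, but the API call itself is left out.

```python
from string import Template

# Hypothetical prompt templates; the wording used by the real tool is not
# published, so these strings are illustrative only.
PROMPT_TEMPLATES = {
    "system": Template(
        "You are a professional subtitle translator. Translate each numbered "
        "line from $source into $target. Keep the numbering, return exactly "
        "one translated line per input line, and add no commentary."
    ),
    "user": Template("$numbered_text"),
}


def build_messages(source: str, target: str, lines: list[str]) -> list[dict[str, str]]:
    """Fill the templates and return chat-style role/content messages."""
    numbered = "\n".join(f"{i}. {line}" for i, line in enumerate(lines, start=1))
    system = PROMPT_TEMPLATES["system"].substitute(source=source, target=target)
    user = PROMPT_TEMPLATES["user"].substitute(numbered_text=numbered)
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]
```

Keeping the wording in templates rather than in code means a prompt can be tuned, for example for a new model version, without touching the processing logic.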

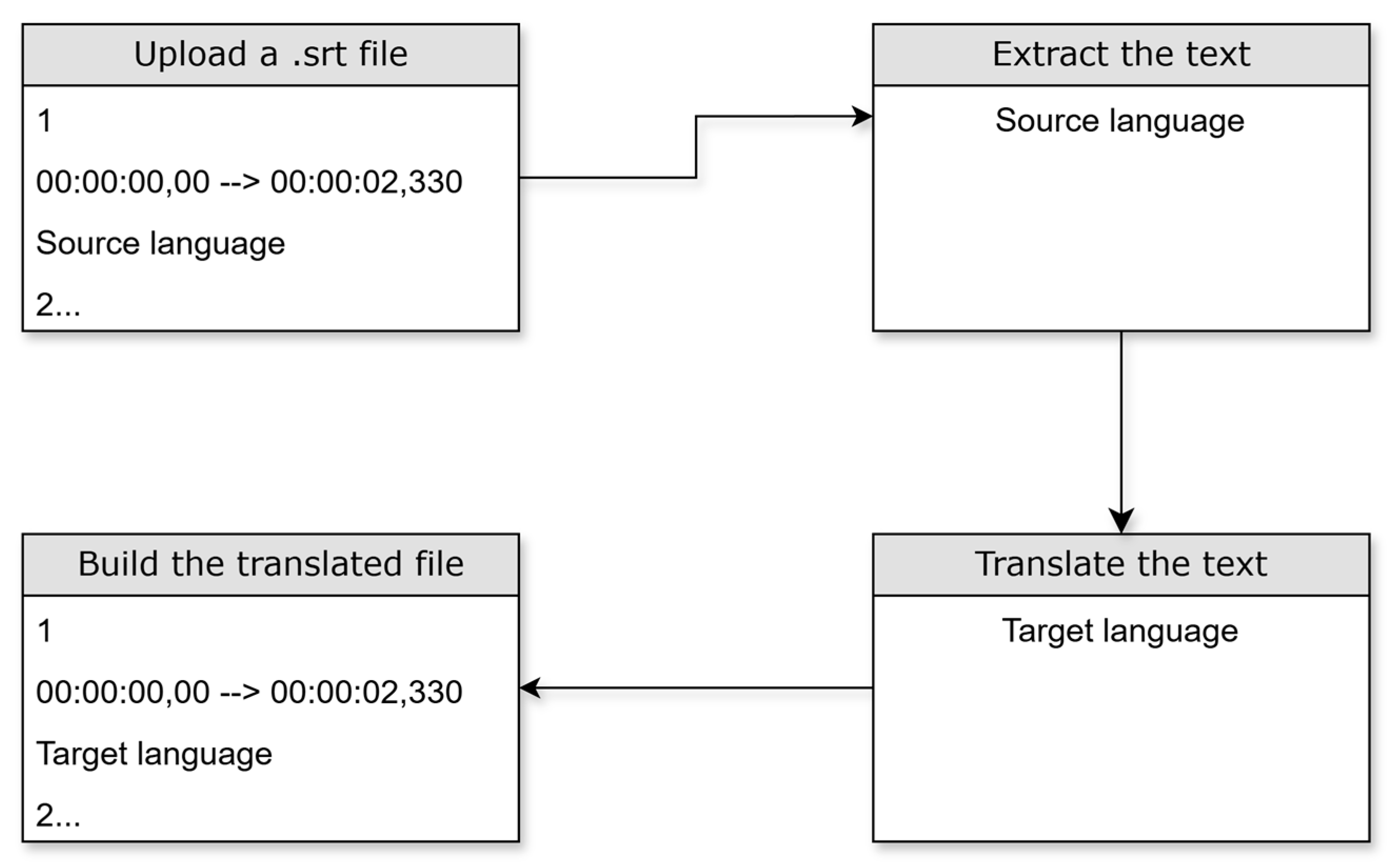

The program logic of the web application supports one scenario with two use cases. The use cases depend on the choice of the source language and on whether one or more target languages are selected. In both use cases, three parameters are provided to the server: a subtitle file, a parameter specifying the source language of the subtitles, and parameters for the target languages. The module’s processing logic uses pre-made GPT prompts to process the provided subtitle file. It first extracts the text from the subtitles without their timing; the text is then sent to the GPT model together with the language parameters and translated, as shown in Figure 3. The final stage assembles the translated text into an output subtitle file according to a GPT prompt template for the subtitle format standard in use. The application logic does not directly affect translation quality, because the translation is performed by a GPT model operating on plain text rather than directly on the subtitle file. The main role of the internal logic is to segment and reconstruct the subtitle file for processing. The quality of the translations therefore depends entirely on the capabilities of the underlying GPT-4o and GPT-4.5 models. When used as an expert-level tool, GPT-4 occasionally produces so-called “hallucinations”, a phenomenon documented in current research [23,24]. Studies in the field indicate that GPT-4 achieves results comparable to those of entry-level human translators in terms of overall error rate, but still lags behind intermediate and advanced professional translators in quality and accuracy [25].
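The separation of text from timing described above can be sketched as a pair of functions: one that drops the timestamps and numbers each cue’s text, and one that reattaches the model’s numbered output to the original timings. This is a hypothetical Python sketch (`extract_text` and `reassemble` are illustrative names) that assumes one line of text per cue and a model that answers with the same `N. text` numbering it received. Note that the article performs the final assembly through a GPT prompt; this sketch swaps in a deterministic reassembly in code. The coverage check guards against a model that merges or drops lines, a practical failure mode when model output is used directly.

```python
import re

# (start, end, text) with timestamps kept as the original strings.
CueT = tuple[str, str, str]


def extract_text(cues: list[CueT]) -> str:
    """Drop the timing and return one numbered line of text per cue."""
    return "\n".join(f"{i}. {text}" for i, (_, _, text) in enumerate(cues, start=1))


def reassemble(cues: list[CueT], translated: str) -> list[CueT]:
    """Reattach numbered translated lines to the original timings."""
    by_number: dict[int, str] = {}
    for line in translated.splitlines():
        match = re.match(r"^\s*(\d+)\.\s*(.*)$", line)
        if match:
            by_number[int(match.group(1))] = match.group(2).strip()
    # Every cue number must be present, and no unexpected numbers may appear.
    if sorted(by_number) != list(range(1, len(cues) + 1)):
        raise ValueError("translation is missing cues or has unexpected numbers")
    return [(start, end, by_number[i]) for i, (start, end, _) in enumerate(cues, start=1)]
```

Because the lines are matched by number rather than by position, the reassembly also tolerates a reply whose lines come back out of order.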

The application is implemented with the Next.js framework and OpenAI’s GPT-4o and GPT-4.5 models, accessed via an OpenAI API key. The application is available at https://apps.davincilab.bg/ (accessed on 31 May 2025).

Working scenario of the program logic for processing subtitles, text, and GPT prompts:

The user uploads a subtitle file via the browser;

The user selects the source language of the subtitles;

The user selects the target languages into which the subtitles should be translated;

The user sends a request to the server-side application;

The server-side application receives the request from the client, consisting of a subtitle file and translation parameters;

The server-side application extracts the text content of the subtitles from the file without timing and special characters;

The server-side application creates a system prompt that clearly defines the translation task and sends the request through the AI model API;

The AI model translates the text;

The server-side application receives the translated text and integrates it into a subtitle file according to a specified standard;

The server-side application returns the translated subtitles back to the client.
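The server-side steps of the scenario can be condensed into a single request handler. The Python sketch below is hypothetical: `handle_request` and the `translate` callback stand in for the tool’s server code and its GPT call. Text lines are identified with a simple heuristic (anything that is not blank, a bare number, a timing line, or the `WEBVTT` header). Because structural lines are copied unchanged, the translated file keeps the original timing, and one output document is produced per selected target language.

```python
import re
from typing import Callable

# A translator maps (lines, source, target) to translated lines. In the
# described tool this role is played by a GPT call; here it is a parameter.
Translator = Callable[[list[str], str, str], list[str]]

# Lines kept verbatim: WEBVTT header, SRT cue numbers, timing lines, blanks.
_STRUCTURAL = re.compile(r"^(WEBVTT.*|\d+|.*-->.*|\s*)$")


def handle_request(content: str, source: str, targets: list[str],
                   translate: Translator) -> dict[str, str]:
    """Return one translated subtitle document per target language."""
    lines = content.replace("\r\n", "\n").split("\n")
    # Positions of the lines that carry subtitle text.
    text_idx = [i for i, line in enumerate(lines) if not _STRUCTURAL.match(line)]
    texts = [lines[i] for i in text_idx]
    results: dict[str, str] = {}
    for target in targets:
        translated = translate(texts, source, target)
        if len(translated) != len(texts):
            raise ValueError(f"{target}: expected {len(texts)} lines, got {len(translated)}")
        out = list(lines)
        for i, new_text in zip(text_idx, translated):
            out[i] = new_text
        results[target] = "\n".join(out)
    return results
```

A test double such as `lambda lines, src, tgt: [f"[{tgt}] {s}" for s in lines]` is enough to exercise this flow without network access. The heuristic would misclassify a subtitle line consisting only of digits, and WebVTT `NOTE` blocks would be passed to the translator, so a production parser would need to be stricter.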

5. Discussion and Applications

The AI Subtitle Translator software can find wide application across various fields, overcoming language barriers and expanding access to high-quality video resources. The integration of artificial intelligence allows for automated, precise, and efficient localization of content, which can facilitate both educational and communication processes in various professional areas. The proposed approach holds enormous potential for overcoming language barriers when using RLOs from different sources. In particular, AI Subtitle Translator offers a scalable and effective solution to improve access to education, while simultaneously reducing the time and costs associated with localizing educational resources. Since the software utilizes OpenAI models, its advantage lies in the higher quality of translation compared to traditional tools. This makes it especially suitable for applications where both technical translation and the preservation of meaning and context are important. Specific examples of possible applications of the approach and subtitle translation software include e-learning and blended learning, ensuring accessibility, use by media and entertainment industries, and application in scientific conferences and seminars by international corporations, non-governmental organizations, etc.

Modern educational institutions are increasingly using online video platforms for learning, which raises the need for multilingual access. Universities and schools can implement subtitle translation software to integrate global educational resources into the learning process.

Through subtitle translation, learners with special educational needs can benefit from more comprehensive education. By integrating multilingual subtitles created with AI Subtitle Translator, accessibility can be improved according to many of the criteria set by the WCAG (Web Content Accessibility Guidelines) standard, which provides guidelines for creating web content.

The software offers significant benefits to the film and media industries by providing automated subtitle translation for movies, TV shows, and online videos. For example, YouTube creators and video producers can leverage this tool to expand their global reach by offering content with translated subtitles for international audiences. This technology has the potential to help creators overcome language barriers, making their content accessible to a wider audience. Automated subtitle translation not only increases accessibility for non-native speakers but also enhances accessibility for individuals with disabilities, such as those who are deaf or hard of hearing.

This can lead to increased viewership and engagement from international audiences, which is crucial in the globalized digital world. By using AI Subtitle Translator, video content creators can reach global markets without the need for manual translation of subtitles into multiple languages. This enables them to focus more on the creative aspects of content creation while the software handles the localization of the material.

These advantages make the software especially valuable for media and entertainment companies, as it reduces the costs and efforts associated with manual subtitle processing, thus improving overall efficiency.

One of the most significant advantages of this tool is demonstrated in its integration with location-based automated recognition systems. These systems enable the inclusion of a single video with multiple sets of subtitles in different languages. When accessing the content, the device’s location is automatically recognized, leading to the dynamic delivery of the video with the corresponding subtitles based on the geographic location or the technical settings of the user’s device and software. Well-known examples of such systems include YouTube, Netflix, Amazon Prime Video, among others.
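Delivering the matching subtitle track from a set of translated files reduces to a language-negotiation step. The following Python sketch is an assumption, not a description of how YouTube, Netflix, or Amazon Prime Video actually select tracks. The hypothetical `pick_track` takes an ordered list of BCP 47 language tags (as might come from device or browser settings), tries an exact match, then the primary language subtag, and finally falls back to a default track.

```python
def pick_track(preferred: list[str], available: dict[str, str], default: str) -> str:
    """Return the subtitle file for the best-matching language.

    `preferred` is an ordered list of BCP 47 tags, and `available` maps
    language tags to subtitle file names (both hypothetical inputs).
    """
    normalized = {tag.lower(): path for tag, path in available.items()}
    for tag in preferred:
        tag = tag.lower()
        if tag in normalized:  # exact match, e.g. "pt-br"
            return normalized[tag]
        primary = tag.split("-")[0]
        if primary in normalized:  # primary language only, e.g. "bg" for "bg-BG"
            return normalized[primary]
    return available[default]
```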

At present, AI Subtitle Translator is being used as a test tool by the development team and by lecturers of the Faculty of Mathematics and Informatics (FMI) at Plovdiv University. Although subtitle translation via the application is fully automated, human intervention remains essential for complex literary texts. The application proves particularly useful in the entertainment industry and on online platforms, especially YouTube, where it is common practice to publish subtitles without thorough human post-editing. For example, the so-called “long videos” on the YouTube channels Talks on the Way of Wisdom and Mindset for Success were subtitled entirely by AI Subtitle Translator without any human editing.

As the quality of the subtitles in these videos shows, translations produced before January 2025, when AI Subtitle Translator was powered by the GPT-3.5 model, performed poorly in stylistic adaptation and contextual understanding. In contrast, videos subtitled afterwards with GPT-4o show improved idiomatic usage, stylistic consistency, naturalness, and overall suitability for professional translation [26,27,28].

For authoritative, high-quality video materials, however, we conclude that while GPT-4o and AI Subtitle Translator can provide automated translation, professional post-editing is essential to ensure stylistic and qualitative adequacy. With the release of GPT-4.5, available through the OpenAI API for developers since March 2025, significant progress has been achieved in machine translation, combining improved naturalness, accuracy, and multilingual capability. These characteristics make the model particularly well suited to professional translation tasks that require high quality and consistency [29].

In this paper, we support and align with the conclusions of recent empirical research highlighting the significant advances of the GPT-4.5 language model in machine translation. After this model was integrated into AI Subtitle Translator, the tool was applied professionally to subtitle translation for a film adaptation of selected excerpts from the book [30]. The resulting translations show a high degree of linguistic naturalness, accuracy, and stylistic consistency, meeting the requirements for cultural and conceptual precision. We therefore argue that AI Subtitle Translator, powered by GPT-4.5, provides a professionally viable solution for translating content of high linguistic, cultural, and stylistic complexity. The observed results support the model’s effectiveness in subtitling, literary adaptation, and multimedia localization in real-world translation workflows.

Analyses, empirical data, and accompanying evaluations confirm that the proposed approach for overcoming language barriers in education, through the integration of subtitle translation technologies into video resources via specialized software, proves to be methodologically sound and applicable within educational practices. The approach demonstrates clear relevance to modern educational environments characterized by linguistic heterogeneity.

The team at the Human–Computer Interaction and Simulations Laboratory, in collaboration with colleagues from the FMI at Plovdiv University, is actively developing an integrated platform for educational purposes. In this context, the proposed approach is planned for practical implementation, and the subtitle translation software tool has been successfully integrated as a functional component of the system. The platform is aimed at overcoming language barriers and ensuring equal access to multimedia educational content in today’s multilingual educational landscape. Its modules include the generation of specialized text based on pre-defined instructional scenarios, the generation of scenario-relevant images, grammar and stylistic correction tools to ensure textual accuracy, automatic generation of tags and keywords, text query extraction from images, and the creation of presentation-ready descriptions, among others.

6. Conclusions

The article discusses an approach that combines the concept of Reusable Learning Objects with a software tool for automated AI-based subtitle translation. By implementing this approach, access to educational resources is ensured regardless of language barriers. Instead of learners being restricted by the language of the original content, the approach localizes video resources into the target language, making them accessible to a broader audience. Through AI Subtitle Translator, subtitles for video content are automatically translated and synchronized using OpenAI models. This process minimizes human intervention, accelerates processing, and ensures high translation accuracy. By enabling large-scale localization of content and the integration of video resources into e-learning platforms, the approach improves the accessibility, quality, speed, reusability, openness, and inclusiveness of learning.

The software can find wide applications in various fields, such as education, media, the corporate sector, and more, by ensuring more effective communication and facilitating access to quality content. Through this innovative approach, new opportunities for global learning and information dissemination are created, contributing to a more integrated and accessible digital society.